
RELIABILITY AND VALIDITY

RELIABILITY AND VALIDITY These important concepts relate to any measure or instrument. 1. Validity of a measure: the degree to which a measure actually measures what we think it measures. 2. Reliability of a measure: the consistency of a measure over time or under similar situations. In social or business research we cannot expect any measure to be perfectly valid or perfectly reliable. The best we can do is to design our measures to be as valid and reliable as possible and, where feasible, run statistical tests to evaluate their levels of reliability and validity.

THREE APPROACHES TO RELIABILITY 1. The first approach asks, 'Is the data or observation I have just obtained for employee J, material K, product L or equipment M consistent with the data that would be obtained if the test were repeated on each succeeding day?' This concept of reliability considers whether the obtained value or observation really is a stable indication of the employee’s, product’s, material’s or equipment’s performance. It defines reliability in terms of stability, dependability and predictability over time, and is the definition most often given.

THREE APPROACHES TO RELIABILITY 2. Another approach asks, 'Is this value I have just obtained for employee J, material K, product L or equipment M an accurate indication of their 'true' performance?' This question really asks whether the measurements are accurate. It links to approach (1): if performance or measurement is stable and predictable across occasions, it is most likely an accurate reflection of performance. If values change across occasions, accuracy is unlikely, because we can never know which value is the ‘correct’ one.

THREE APPROACHES TO RELIABILITY 3. The third approach incorporates the previous two and asks how much error there is in the values obtained. A personality scale of employability, a survey of customer preferences or a measurement of the accuracy of a sophisticated piece of technology is usually administered only once. Our goal, of course, is to hit the 'true' value on this one occasion. To the extent that we miss the 'true' scores, our measuring instrument is unreliable and contains error. In determining the level of accuracy or stability in a measure, a researcher therefore needs to identify the amount of error that exists and the causes of that error.

SOURCES OF ERROR • There are two sources of variability in measurement: (a) variability induced by real differences between individual employees, or between pieces of parallel equipment, in the ability to perform the requirements; (b) error variability, which is a combination of error from two sources.

SOURCES OF ERROR (i) Random fluctuation error: subtle day-to-day variations in individual and equipment performance, such as an unreliable worker who produces exceptional work one day and poor-quality work the next.

SOURCES OF ERROR (ii) Systematic or constant error: the result of one or more variables continually influencing a performance level or measurement in a particular direction, a constant bias pushing values up all the time or pulling them down all the time. An example is the practice effect, where repeated testing steadily raises scores.

COMPONENTS OF A VALUE OR SCORE • There are three components of a score or value: 1. the true observation or score, 2. the actual observed or obtained score, and 3. the error score. • A simple equation links these three scores: observed score (what we got) = true score (what we are after) ± error score, or $X_{obs} = X_{true} \pm \text{error}$.

RELIABILITY AND ERROR • ‘Error’ does not imply ‘wrong’. It means that uncontrolled variables are having some influence on the data. • The error may be positive or negative, depending on whether the values are 'over' or 'under' the ‘true’ value. • The smaller the error score, the more closely the observed score approximates the true score and the greater the reliability. Where there is no error component, $X_{obs} = X_{true} + 0$, i.e. perfect reliability.

RELIABILITY AND ERROR • As the error component increases, the true score accounts for a smaller share of the observed score, and the observed X departs further from the true score. • In parallel with the individual score formula, the total variance in the obtained data equals the sum of the 'true' variance and the error variance: $SD^2_{obs} = SD^2_{true} + SD^2_{error}$.

RELIABILITY AND ERROR • We can now define the reliability of any set of measurements as the proportion of their variance that is true variance, i.e. the ratio of true variance to observed variance. • When the true variance equals the observed variance, i.e. when there is no error variance, this ratio has a value of +1.0. This is the 'perfect reliability' value: $r_{tt} = \frac{SD^2_{true}}{SD^2_{obs}} = +1.0$ ($r_{tt}$ symbolizes reliability and is in fact a correlation coefficient: the correlation between two parallel measurements of the same thing).

RELIABILITY AND ERROR • Rearranging the formulae above, reliability equals 1 minus the error variance divided by the observed variance: $r_{tt} = 1 - \frac{SD^2_{error}}{SD^2_{obs}}$. Reliability is therefore the degree to which measurement error is absent.
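The variance decomposition and both forms of the reliability ratio can be checked with a small simulation. This is a minimal sketch under assumed values, not real data: true scores with SD 15, independent normal errors with SD 5, so the theoretical reliability is 225 / (225 + 25) = 0.9. It also correlates two simulated parallel administrations to illustrate why $r_{tt}$ can be read as a correlation coefficient. The names `TRUE_SD`, `ERROR_SD` and `pearson` are illustrative.

```python
import math
import random
import statistics

def pearson(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(42)
n = 20_000
TRUE_SD, ERROR_SD = 15.0, 5.0  # assumed values: theoretical r_tt = 225 / 250 = 0.9

true = [rng.gauss(100, TRUE_SD) for _ in range(n)]
obs1 = [t + rng.gauss(0, ERROR_SD) for t in true]  # X_obs = X_true + error (occasion 1)
obs2 = [t + rng.gauss(0, ERROR_SD) for t in true]  # parallel measurement (occasion 2)

var_true = statistics.pvariance(true)
var_obs = statistics.pvariance(obs1)
var_err = statistics.pvariance([o - t for o, t in zip(obs1, true)])

r_ratio = var_true / var_obs     # true variance / observed variance
r_error = 1 - var_err / var_obs  # 1 - error variance / observed variance
r_retest = pearson(obs1, obs2)   # correlation between two parallel measurements

print(f"{r_ratio:.3f} {r_error:.3f} {r_retest:.3f}")  # all three should be close to 0.9
```

The three estimates differ only slightly, because in a finite sample the true scores and errors are not exactly uncorrelated.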

DETERMINING RELIABILITY 1. Test-retest reliability: the degree to which the measure returns the same values from the same respondents on a second occasion. This is also known as temporal reliability, or stability over time. 2. Parallel-form reliability: when two equivalent (but different) forms of a measure are developed (e.g. two equivalent forms of a single personality scale), it is the degree to which the same values are returned from the same respondents on the two forms.
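Both coefficients above are usually reported as a correlation between two sets of scores from the same respondents. The sketch below computes a Pearson correlation on a small set of hypothetical test-retest scores (the numbers are invented for illustration); for parallel-form reliability the same function would be applied to form A and form B scores instead of time 1 and time 2.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences of scores."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical scores for the same eight respondents on two occasions
time1 = [12, 15, 11, 18, 14, 16, 10, 17]
time2 = [13, 14, 11, 17, 15, 16, 9, 18]

r_test_retest = pearson(time1, time2)
print(f"test-retest r = {r_test_retest:.2f}")  # → test-retest r = 0.95
```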

DETERMINING RELIABILITY 3. Split-Half or Inter-Item Method: a measure of reliability reflecting the degree to which scores on one half of the items agree with scores on the other half (like test-retest, but it can be performed on a single occasion). Problem: how do we decide which items go in each half? A common solution is to split the scale or test into odd-numbered and even-numbered items. 4. Internal Consistency Method (Cronbach’s Alpha): a measure of reliability that is equivalent to the average of all possible split-half combinations.
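As a sketch of methods 3 and 4, the code below computes an odd-even split-half coefficient and Cronbach's alpha for a small hypothetical set of Likert responses (rows are respondents, columns are items). Two assumptions go beyond the text: the half-test correlation is stepped up with the Spearman-Brown correction, the usual adjustment because each half is only half as long as the full scale; and alpha uses the standard formula $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum SD^2_{item}}{SD^2_{total}}\right)$.

```python
import math
import statistics

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def split_half_reliability(rows):
    """Odd-even split: correlate the two half totals, then apply Spearman-Brown."""
    odd = [sum(r[0::2]) for r in rows]   # items 1, 3, 5, ...
    even = [sum(r[1::2]) for r in rows]  # items 2, 4, 6, ...
    r_half = pearson(odd, even)
    return 2 * r_half / (1 + r_half)     # step up to full-length reliability

def cronbach_alpha(rows):
    """alpha = k/(k-1) * (1 - sum of item variances / variance of total scores)."""
    k = len(rows[0])
    item_vars = [statistics.variance([r[j] for r in rows]) for j in range(k)]
    total_var = statistics.variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical 1-5 ratings: 6 respondents x 6 items
responses = [
    [4, 5, 4, 4, 5, 4],
    [2, 2, 3, 2, 2, 3],
    [3, 3, 3, 4, 3, 3],
    [5, 5, 4, 5, 5, 5],
    [1, 2, 1, 2, 1, 2],
    [3, 4, 3, 3, 4, 3],
]

split_half = split_half_reliability(responses)
alpha = cronbach_alpha(responses)
print(f"split-half = {split_half:.3f}, alpha = {alpha:.3f}")
```

Both values come out high here because the invented items move closely together; real scales will typically be lower.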

CRONBACH ALPHA INTERNAL RELIABILITY ANALYSIS • Select Analyze, choose Scale and click on Reliability Analysis to open the Reliability Analysis dialogue box. • Transfer all the scale or subtest items to the Items box. • Ensure Alpha is chosen in the Model box. • Click on Statistics to open the Reliability Analysis Statistics dialogue box. • In the Descriptives for area, select Item, Scale and Scale if item deleted. • In the Inter-Item area, choose Correlations. • Select Continue, then OK, to produce the output.

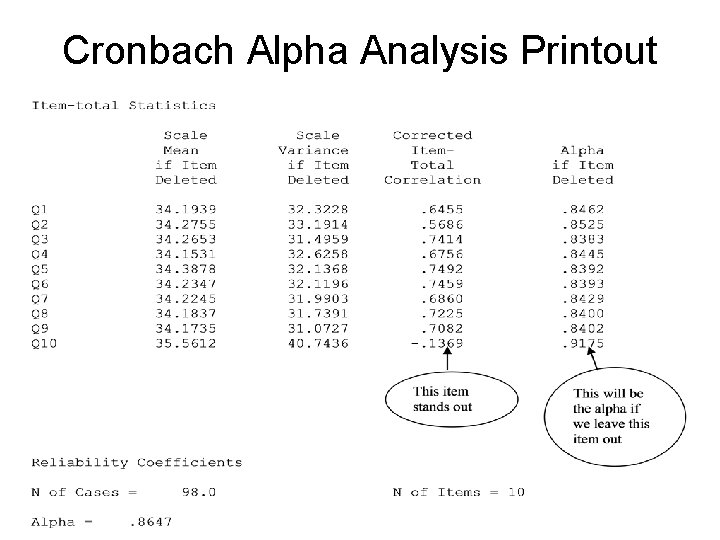

Cronbach Alpha Analysis Printout

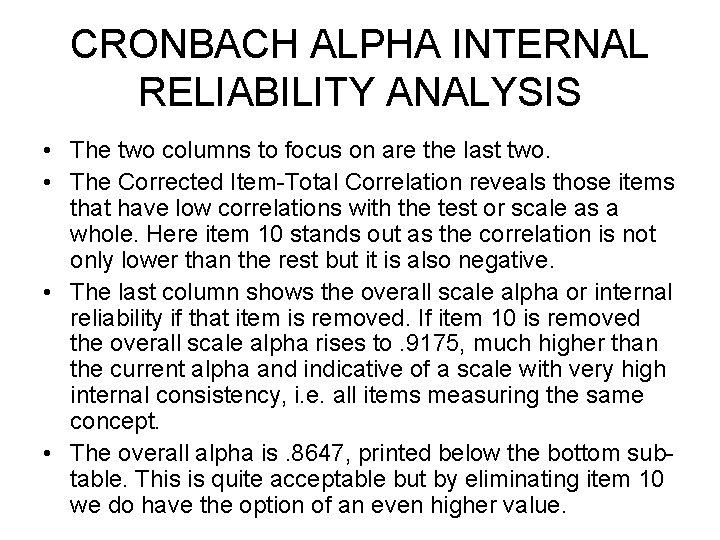

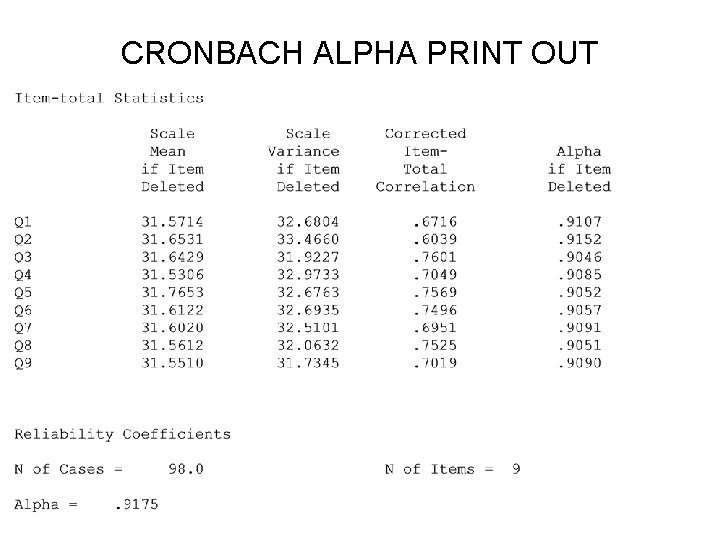

CRONBACH ALPHA INTERNAL RELIABILITY ANALYSIS • The two columns to focus on are the last two. • The Corrected Item-Total Correlation reveals items that have low correlations with the test or scale as a whole. Here item 10 stands out: its correlation is not only lower than the rest but also negative. • The last column shows the overall scale alpha (internal reliability) if that item is removed. If item 10 is removed, the overall scale alpha rises to .9175, much higher than the current alpha and indicative of a scale with very high internal consistency, i.e. all items measuring the same concept. • The overall alpha is .8647, printed below the bottom subtable. This is quite acceptable, but eliminating item 10 gives us the option of an even higher value.
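The two output columns discussed above can be reproduced by hand, which makes their meaning concrete. This is a sketch, not SPSS's implementation: the corrected item-total correlation is computed here as the Pearson correlation between an item and the total of the *other* items, and alpha-if-deleted is alpha recomputed without that item. The data are hypothetical, with item 5 deliberately written to run against the others (like item 10 in the printout).

```python
import math
import statistics

def pearson(x, y):
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def cronbach_alpha(rows):
    k = len(rows[0])
    item_vars = [statistics.variance([r[j] for r in rows]) for j in range(k)]
    total_var = statistics.variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

def item_analysis(rows):
    """Return (item number, corrected item-total r, alpha if item deleted) per item."""
    results = []
    for j in range(len(rows[0])):
        item = [r[j] for r in rows]
        rest_total = [sum(r) - r[j] for r in rows]      # total of the other items
        reduced = [r[:j] + r[j + 1:] for r in rows]     # scale without item j
        results.append((j + 1, pearson(item, rest_total), cronbach_alpha(reduced)))
    return results

# Hypothetical data: 6 respondents x 5 items; item 5 runs against the others
responses = [
    [4, 5, 4, 4, 2],
    [2, 2, 3, 2, 4],
    [3, 3, 3, 4, 3],
    [5, 5, 4, 5, 1],
    [1, 2, 1, 2, 5],
    [3, 4, 3, 3, 3],
]

results = item_analysis(responses)
print(f"overall alpha = {cronbach_alpha(responses):.3f}")
for number, r_corrected, alpha_deleted in results:
    print(f"item {number}: corrected r = {r_corrected:+.3f}, alpha if deleted = {alpha_deleted:.3f}")
```

As in the printout, the item with a negative corrected correlation is the one whose removal raises alpha the most.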

CRONBACH ALPHA PRINT OUT

STANDARD ERROR OF MEASUREMENT The Standard Error of Measurement (SEM) is used to determine the range of uncertainty around a reported score or value. It estimates the range of scores an individual respondent might obtain if they were to take the same test over and over (assuming no benefit from repeated practice).

STANDARD ERROR OF MEASUREMENT - THEORY: a measure of the range of error around any known score or value. • Repeated administration of a single test to derive a series of data from an individual, a piece of equipment, etc. is assumed to produce an approximately normal distribution. • The spread of this distribution is another way of conceptualizing the reliability of the assessment. The smaller the spread, the greater the confidence with which any one observed value can be taken to represent the 'true' score. • The SD of this distribution is the index of its spread, or error distribution, and is known as the standard error of measurement, or SEM.

STANDARD ERROR OF MEASUREMENT • The standard error of measurement (SEM) is an estimate of error in interpreting an individual’s score. A score is only an estimate of a person’s “true” performance. Using the reliability coefficient and the test’s standard deviation, we can calculate the range of error.

STANDARD ERROR OF THE MEASURE • The formula is $SEM = SD\sqrt{1 - r}$, where SD is the standard deviation of the data and r is the reliability coefficient of the measure. • The standard error of measurement is interpreted like the SD of a set of raw values. E.g. there is a probability of 0.68 that the obtained value does not deviate from the ‘true’ value by more than ±1 SEM. The other conventional probabilities are 0.95 for $X_{obs} \pm 1.96\,SEM$ and 0.99 for $X_{obs} \pm 2.57\,SEM$.

STANDARD ERROR OF MEASUREMENT EXAMPLE • A test with a reliability coefficient of .96 and a standard deviation of 15 yields an SEM of 3: $SEM = SD\sqrt{1 - r} = 15\sqrt{1 - .96} = 15\sqrt{.04} = 15 \times .2 = 3$. • Example: Joe took the test and received a score of 100. • Let’s build a “band of error” around Joe’s test score of 100, using a 68% interval, which corresponds to approximately ±1 SEM. • For a 68% interval: test score ± 1(SEM) = 100 ± (1 × 3) = 100 ± 3. • Why the “±1”? Adopting the normal distribution as our theoretical distribution of error, 68% of the values lie within ±1 SD of the mean. • Chances are 68 out of 100 that Joe’s true score falls between 97 and 103. • A similar argument can be employed for the 95% range.

Standard Error of Measurement • Using the same data, what is the band of error if we use the 95% confidence interval? • Test score ± 1.96(SEM) = 100 ± (1.96 × 3) = 100 ± 5.88. • Chances are 95 out of 100 that Joe’s true score falls between 94.12 and 105.88. • The higher a test’s reliability coefficient, the smaller the test’s SEM; the larger the SEM, the less reliable the test.
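The worked example for Joe can be reproduced in a few lines. This sketch simply applies $SEM = SD\sqrt{1 - r}$ and the conventional z multipliers (1, 1.96, 2.57) from the slides; the function names are illustrative.

```python
import math

def standard_error_of_measurement(sd, reliability):
    """SEM = SD * sqrt(1 - r)."""
    return sd * math.sqrt(1 - reliability)

def error_band(score, sd, reliability, z):
    """Observed score plus/minus z standard errors of measurement."""
    margin = z * standard_error_of_measurement(sd, reliability)
    return score - margin, score + margin

sem = standard_error_of_measurement(15, 0.96)
print(f"SEM = {sem:.2f}")  # → SEM = 3.00
for label, z in [("68%", 1.0), ("95%", 1.96), ("99%", 2.57)]:
    low, high = error_band(100, 15, 0.96, z)
    print(f"{label}: {low:.2f} to {high:.2f}")
# → 68%: 97.00 to 103.00
# → 95%: 94.12 to 105.88
# → 99%: 92.29 to 107.71
```

Changing `reliability` to, say, 0.80 shows how quickly the band widens as reliability falls.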

STANDARD ERROR OF THE MEASURE • The SEM assumes that errors of measurement are equally distributed throughout the range of test scores. • The ranges calculated above are revealing: they show that even with a highly reliable test, there is still a considerable band of error around an obtained score.

FACTORS THAT INFLUENCE RELIABILITY • Length of assessment: reliability increases with length. • Method of estimating reliability: test-retest with a short time interval can give higher reliability than with a long interval; internal consistency can give high reliability if poor items are removed. • Type of measurement: subjective response measures (questionnaires, personality scales, etc.) give lower reliabilities than objective measures (academic tests, machine production runs). • Standardization and administration: controlling possible error sources such as instructions, timing and distractions will increase reliability.
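The effect of test length can be quantified with the Spearman-Brown prophecy formula, $r_{new} = \frac{n\,r}{1 + (n - 1)\,r}$, where n is the factor by which the test is lengthened. This formula is not given in the slides; it is the standard result and assumes the added items are comparable (parallel) to the existing ones. The starting reliability of .70 below is hypothetical.

```python
def spearman_brown(reliability, length_factor):
    """Predicted reliability when a test is lengthened by `length_factor`
    using items comparable to the existing ones (Spearman-Brown prophecy)."""
    return length_factor * reliability / (1 + (length_factor - 1) * reliability)

base = 0.70  # hypothetical reliability of the current test
for factor in (1, 2, 3):
    print(factor, round(spearman_brown(base, factor), 3))
# → 1 0.7
# → 2 0.824
# → 3 0.875
```

The gains shrink as the test grows, so lengthening helps most when the starting reliability is modest.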

RELIABILITY VERSUS VALIDITY • A reliable measure will produce a consistent result when measurements are taken at different points in time or under similar situations. • Validity concerns whether the testing instrument actually measures the construct/concept/variable it purports to measure. • You can have a reliable method or instrument that is not valid: a car speedometer that always reads 10 kph too fast is reliable, but it is not a valid measure of the real speed.
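The speedometer example can be simulated to separate the two ideas: consistency (a small spread of readings) versus accuracy (no bias relative to the true value). The values below are assumptions chosen to match the example: a true speed of 100 kph, a constant +10 kph systematic error, and a small random error with SD 0.2 kph.

```python
import random
import statistics

rng = random.Random(7)
TRUE_SPEED = 100.0  # kph, hypothetical
BIAS = 10.0         # systematic error: always reads 10 kph too fast
NOISE_SD = 0.2      # small random error, so readings are very consistent

readings = [TRUE_SPEED + BIAS + rng.gauss(0, NOISE_SD) for _ in range(1000)]

spread = statistics.stdev(readings)                # small spread -> reliable
bias = statistics.fmean(readings) - TRUE_SPEED     # large bias -> not valid
print(f"spread = {spread:.2f} kph, bias = {bias:.2f} kph")
```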

VALIDITY Two types of Validity. 1. External validity – capacity to generalize from sample result to population 2. Internal validity - can the research design actually provide the answer to the question asked

TYPES OF INTERNAL VALIDITY The degree to which the results are valid within the confines of the study. – Content Validity: • The extent to which an assessment truly samples the universe of content. – Face Validity: • A validity of appearances: how genuine and relevant the assessment looks to those taking it, which may differ from (and even disguise) its real intent; largely a matter of public relations. – Predictive Validity: • The extent to which the measurement will predict future performance or level on a criterion variable. – Concurrent Validity: • The extent to which the measurement truly represents the current status of performance or level on a criterion variable.

Types of Internal Validity – Construct Validity: • The extent to which the measure represents the underlying construct.

TYPES OF CONSTRUCT VALIDITY There are two types of construct validity. a. Convergent Validity: the degree of correspondence between two different measures that both purport to measure the same thing. b. Discriminant Validity: the degree of lack of correspondence between two different measures that purport to measure different things.
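Convergent and discriminant validity are usually examined through correlations between measures. The simulation below is a minimal sketch under invented assumptions: two independent, normally distributed constructs (A and B), two noisy measures of construct A, and one noisy measure of construct B. With measurement-error SD 0.5, the two measures of A should correlate at about 1 / 1.25 = 0.8, while the measures of A and B should correlate near zero.

```python
import math
import random
import statistics

def pearson(x, y):
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(1)
n = 5000
construct_a = [rng.gauss(0, 1) for _ in range(n)]  # hypothetical construct A
construct_b = [rng.gauss(0, 1) for _ in range(n)]  # unrelated construct B

measure_a1 = [a + rng.gauss(0, 0.5) for a in construct_a]  # first measure of A
measure_a2 = [a + rng.gauss(0, 0.5) for a in construct_a]  # second measure of A
measure_b = [b + rng.gauss(0, 0.5) for b in construct_b]   # measure of B

convergent = pearson(measure_a1, measure_a2)   # should be high (about 0.8)
discriminant = pearson(measure_a1, measure_b)  # should be near zero
print(f"convergent r = {convergent:.2f}, discriminant r = {discriminant:.2f}")
```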

THREATS TO INTERNAL VALIDITY • External events: events outside the researcher’s control. • Regression: movement of outliers towards the mean under repeated measurement. • Dropout or mortality: the loss of subjects on follow-up often occurs in longitudinal studies and may confound the effects of the experimental variables, because even if the groups were initially randomly selected, the residue that stays the course is likely to differ from the unbiased sample that began it. • Unreliable measures/tests/scales.

THREATS TO INTERNAL VALIDITY • Sample size: larger, properly selected samples are more representative and more likely to demonstrate real differences. • Violating statistical assumptions such as normality. • Fishing, i.e. carrying out many tests in the hope that one will be significant; unfortunately, at the 0.05 significance level, about 1 in 20 tests will be significant by chance alone.
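The "1 in 20" figure can be demonstrated by running many tests where no real difference exists. In this sketch both groups are always drawn from the same normal population with a known SD of 1, so a two-sided z-test at 0.05 (critical value 1.96) is used; with real data and an estimated SD a t-test would be the usual choice. Group sizes and the number of tests are arbitrary assumptions.

```python
import math
import random
import statistics

rng = random.Random(2024)
N_TESTS, GROUP_SIZE, CRITICAL_Z = 2000, 30, 1.96

false_positives = 0
for _ in range(N_TESTS):
    # Both groups come from the SAME population, so any "significant" result is chance.
    g1 = [rng.gauss(0, 1) for _ in range(GROUP_SIZE)]
    g2 = [rng.gauss(0, 1) for _ in range(GROUP_SIZE)]
    z = (statistics.fmean(g1) - statistics.fmean(g2)) / math.sqrt(2 / GROUP_SIZE)
    if abs(z) > CRITICAL_Z:
        false_positives += 1

rate = false_positives / N_TESTS
print(f"share of 'significant' tests: {rate:.3f}")  # close to 0.05
print(f"P(at least one of 20 tests significant): {1 - 0.95 ** 20:.2f}")  # → 0.64
```

The second line shows why fishing is risky: across 20 independent null tests, the chance of at least one spurious "finding" is about 64%.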

THREATS TO INTERNAL VALIDITY • When participants vary considerably on factors other than those being measured, the amount of variance increases. E.g. gross differences in intelligence, rather than the supposed independent variable (IV), may cause the differences in the dependent variable (DV). • History: events other than the experimental treatments occur naturally between the pre-test and post-test measurements. Such events are often impossible to eliminate or prevent, but they produce effects that can be mistakenly attributed to differences in treatment.

THREATS TO INTERNAL VALIDITY • Maturation: the psychological or physical changes that occur naturally within the participant or equipment over time. A person often improves in performance with experience, and equipment runs less well with age. • Regression to the mean: subjects or equipment scoring highest on a pre-test are likely to score relatively lower on a post-test; conversely, those scoring lowest on a pre-test are likely to score relatively higher on a post-test.
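Regression to the mean follows directly from the $X_{obs} = X_{true} \pm \text{error}$ model: extreme pre-test scores are partly extreme because of favourable or unfavourable error, which does not repeat. The simulation below assumes true scores with mean 100 and SD 10, independent errors with SD 10 on each occasion, and no treatment at all between pre-test and post-test.

```python
import random
import statistics

rng = random.Random(3)
n = 10_000
true_scores = [rng.gauss(100, 10) for _ in range(n)]
pre = [t + rng.gauss(0, 10) for t in true_scores]   # pre-test = true score + error
post = [t + rng.gauss(0, 10) for t in true_scores]  # post-test, no treatment given

ranked = sorted(range(n), key=lambda i: pre[i])
bottom, top = ranked[: n // 10], ranked[-(n // 10):]  # lowest and highest 10% on pre-test

pre_top = statistics.fmean([pre[i] for i in top])
post_top = statistics.fmean([post[i] for i in top])
pre_bottom = statistics.fmean([pre[i] for i in bottom])
post_bottom = statistics.fmean([post[i] for i in bottom])

print(f"top 10%:    pre {pre_top:.1f} -> post {post_top:.1f}")
print(f"bottom 10%: pre {pre_bottom:.1f} -> post {post_bottom:.1f}")
```

Both extreme groups move towards 100 on the post-test even though nothing was done to them, which is exactly the effect that can be mistaken for a treatment effect.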

EXTERNAL VALIDITY Refers to the extent to which the results from a sample are transferable to a population (generalizability). – Population Validity: • whether the responses of a sample of participants are an accurate assessment of the target population. – Ecological Validity: • whether a study’s findings generalize to other environmental contexts.

THREATS TO EXTERNAL VALIDITY 1. Failure to describe and operationalize the independent and dependent variables explicitly. Unless variables and methodologies are adequately described, future replications of the experimental conditions are virtually impossible. 2. Selection bias or lack of representativeness, e.g. use of opportunity samples. 3. Reactive bias, e.g. participants behave differently because they are aware that they are participants.
