Relationship between two variables: two quantitative variables, correlation

• Two quantitative variables: correlation and regression methods
• Two qualitative variables: contingency table methods
• One quantitative, one qualitative: two-sample methods (already considered)

We'll begin with two quantitative variables, usually continuous measurement variables, X and Y. There are usually two situations giving rise to X and Y:

1. bivariate sampling: we select (X, Y) pairs at random from a bivariate population
2. fixed-X sampling: an experiment is performed where the X's are fixed in advance and we observe the values of Y that correspond to those X's

• In either of these cases we may use the correlation coefficient and regression to look for an association between X and Y. Think of Y as the response and X as the explanatory variable (though in the first case above, we may not have an explanatory/response situation).

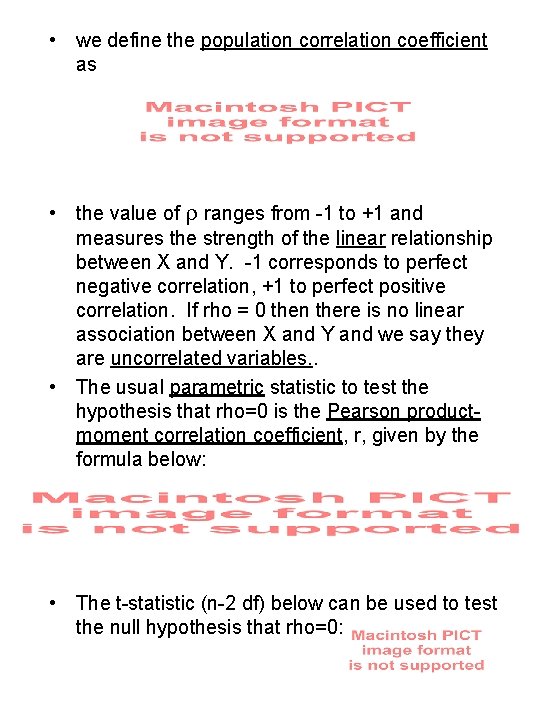
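The two sampling situations can be made concrete with a small simulation. This is only an illustrative sketch in Python (the slides themselves use SAS and R); the function names, the normal model, and all parameter values are assumptions chosen for the example, not taken from the course text.

```python
import random

random.seed(1)  # fixed seed so the sketch is reproducible


def bivariate_sample(n, rho):
    """Bivariate sampling: both X and Y are random.

    Draws n pairs from a standard bivariate normal with correlation rho
    (an assumed population, used only for illustration).
    """
    pairs = []
    for _ in range(n):
        z1 = random.gauss(0, 1)
        z2 = random.gauss(0, 1)
        x = z1
        y = rho * z1 + (1 - rho ** 2) ** 0.5 * z2
        pairs.append((x, y))
    return pairs


def fixed_x_sample(xs, intercept, slope, sigma):
    """Fixed-X sampling: the X's are set in advance, only Y is random."""
    return [(x, intercept + slope * x + random.gauss(0, sigma)) for x in xs]


pairs_a = bivariate_sample(10, rho=0.6)
pairs_b = fixed_x_sample([1, 2, 3, 4, 5] * 2, intercept=2.0, slope=0.5, sigma=1.0)

print("bivariate sampling, first pair:", pairs_a[0])
print("fixed-X sampling, X values:", [x for x, _ in pairs_b])
```

In the first scheme the X values differ from run to run; in the second they are exactly the design points chosen by the experimenter.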

• We define the population correlation coefficient as

$$\rho = \frac{\operatorname{Cov}(X, Y)}{\sigma_X \, \sigma_Y}$$

• The value of rho ranges from -1 to +1 and measures the strength of the linear relationship between X and Y: -1 corresponds to perfect negative correlation, +1 to perfect positive correlation. If rho = 0 then there is no linear association between X and Y and we say they are uncorrelated variables.
• The usual parametric statistic used to test the hypothesis that rho = 0 is the Pearson product-moment correlation coefficient, r, given by the formula below:

$$r = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2 \, \sum_{i=1}^{n} (y_i - \bar{y})^2}}$$

• The t-statistic below (n-2 df) can be used to test the null hypothesis that rho = 0:

$$t = \frac{r \sqrt{n-2}}{\sqrt{1 - r^2}}$$

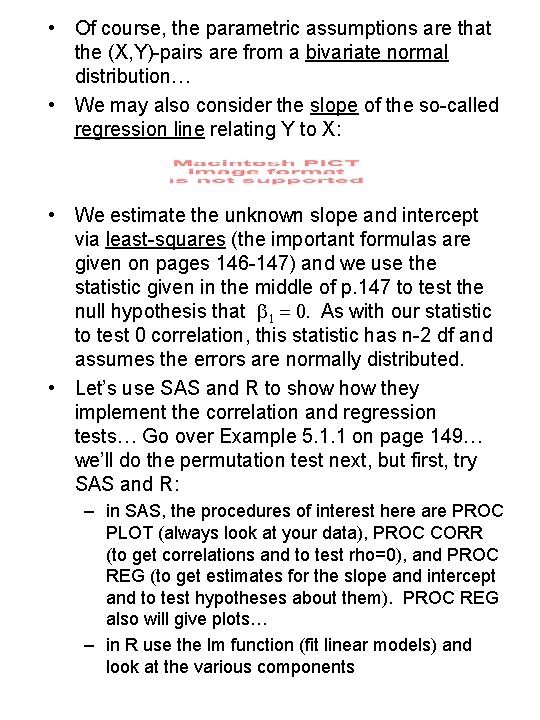
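As a quick check on the arithmetic, the sample correlation and its t-statistic can be computed directly from their standard definitions. The sketch below is in Python rather than SAS or R, the helper names `pearson_r` and `t_for_rho_zero` are hypothetical, and the data are made up for illustration.

```python
import math


def pearson_r(xs, ys):
    """Pearson product-moment correlation from sums of squares and cross-products."""
    n = len(xs)
    xbar = sum(xs) / n
    ybar = sum(ys) / n
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    sxx = sum((x - xbar) ** 2 for x in xs)
    syy = sum((y - ybar) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def t_for_rho_zero(r, n):
    """t-statistic with n-2 df for testing rho = 0 (requires |r| < 1)."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)


# Made-up data, not from the textbook example.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [2.1, 2.9, 3.2, 4.8, 5.1, 5.9]

r = pearson_r(x, y)
t = t_for_rho_zero(r, len(x))
print(f"r = {r:.4f}, t = {t:.3f} on {len(x) - 2} df")
```

The t value would then be compared with a t distribution on n-2 df, which is where the bivariate normal assumption enters.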

• Of course, the parametric assumption is that the (X, Y) pairs come from a bivariate normal distribution.
• We may also consider the slope of the so-called regression line relating Y to X:

$$Y = \beta_0 + \beta_1 X + \varepsilon$$

• We estimate the unknown slope and intercept via least squares (the important formulas are given on pages 146-147) and we use the statistic given in the middle of p. 147 to test the null hypothesis that $\beta_1 = 0$. As with our statistic to test zero correlation, this statistic has n-2 df and assumes the errors are normally distributed.
• Let's use SAS and R to see how they implement the correlation and regression tests. Go over Example 5.1.1 on page 149. We'll do the permutation test next, but first, try SAS and R:
– in SAS, the procedures of interest here are PROC PLOT (always look at your data), PROC CORR (to get correlations and to test rho = 0), and PROC REG (to get estimates for the slope and intercept and to test hypotheses about them). PROC REG will also give plots.
– in R, use the lm function (fits linear models) and look at the various components.
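Without SAS or R at hand, the least-squares estimates and the slope test can be sketched by hand. The Python below assumes the test statistic is the usual ratio of the estimated slope to its standard error (the textbook page is not reproduced here, so that is an assumption); `fit_line` is a hypothetical helper and the data are made up.

```python
import math


def fit_line(xs, ys):
    """Least-squares fit of y = b0 + b1 * x, plus the t-ratio for the slope."""
    n = len(xs)
    xbar = sum(xs) / n
    ybar = sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    b1 = sxy / sxx                 # estimated slope
    b0 = ybar - b1 * xbar          # estimated intercept
    # Residual sum of squares and error variance estimate on n-2 df.
    sse = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys))
    s2 = sse / (n - 2)
    se_b1 = math.sqrt(s2 / sxx)    # standard error of the slope
    return b0, b1, se_b1, b1 / se_b1


# Made-up data, not from the textbook example.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [2.1, 2.9, 3.2, 4.8, 5.1, 5.9]

b0, b1, se_b1, t_slope = fit_line(x, y)
print(f"intercept = {b0:.4f}, slope = {b1:.4f}")
print(f"SE(slope) = {se_b1:.4f}, t = {t_slope:.3f} on {len(x) - 2} df")
```

In simple linear regression this slope t-ratio is algebraically the same as the t-statistic for testing zero correlation, which is why both tests carry n-2 df.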

• Let's try to reproduce the empirical distribution of the correlation coefficient, r, as given in Figure 5.1.3 on page 153, using R (see R#9, page 2). Note the correspondence between the percentiles of the transformed r, Z = r·sqrt(n-1), and the standard normal percentiles (see Table 5.1.3 on page 152).
• HW: Read Chapter 5, through page 153. Do problems #1, 3 and 4 on page 189. For next time, we'll begin our discussion of Spearman's rank correlation coefficient.
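The empirical distribution of r mentioned above can be approximated by simulation. The sketch below assumes it is a permutation distribution, obtained by shuffling the Y values against the fixed X values; it is written in Python rather than R, it uses made-up data, and the number of shuffles is arbitrary. It then compares a few percentiles of Z = r·sqrt(n-1) with the standard normal ones.

```python
import math
import random

random.seed(2)  # fixed seed so the sketch is reproducible


def pearson_r(xs, ys):
    """Pearson product-moment correlation coefficient."""
    n = len(xs)
    xbar = sum(xs) / n
    ybar = sum(ys) / n
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    sxx = sum((x - xbar) ** 2 for x in xs)
    syy = sum((y - ybar) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Made-up data, not from the textbook.
x = [1.2, 2.3, 2.9, 3.8, 4.1, 5.0, 5.6, 6.7, 7.3, 8.4]
y = [2.0, 1.1, 3.5, 2.8, 4.9, 3.7, 6.0, 5.2, 7.1, 6.4]
n = len(x)
n_perm = 5000  # random shuffles, a sample from all n! permutations

z_values = []
y_perm = list(y)
for _ in range(n_perm):
    random.shuffle(y_perm)  # break any X-Y association
    z_values.append(pearson_r(x, y_perm) * math.sqrt(n - 1))
z_values.sort()


def percentile(sorted_vals, p):
    """Simple empirical percentile: value at position p in the sorted list."""
    idx = min(len(sorted_vals) - 1, int(p * len(sorted_vals)))
    return sorted_vals[idx]


# Standard normal upper percentiles for comparison.
normal_percentiles = {0.90: 1.282, 0.95: 1.645, 0.975: 1.960}
for p, z_norm in normal_percentiles.items():
    print(f"{p:.3f}: empirical Z = {percentile(z_values, p):.3f}, normal = {z_norm:.3f}")
```

The scaling by sqrt(n-1) is used because, over all permutations, r has mean 0 and variance 1/(n-1), so Z has mean 0 and variance 1.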

- Slides: 4