Relational Data Mining

Donato Malerba
Dipartimento di Informatica, Università degli studi di Bari
malerba@di.uniba.it
http://www.di.uniba.it/~malerba/

Overview
• Single-table assumption
• (Multi-)relational data mining and ILP
• FO representations
• Upgrading propositional DM systems to FOL
• A case study: Mining Association rules
• Conclusions

MRDM – Prof. D. Malerba 2

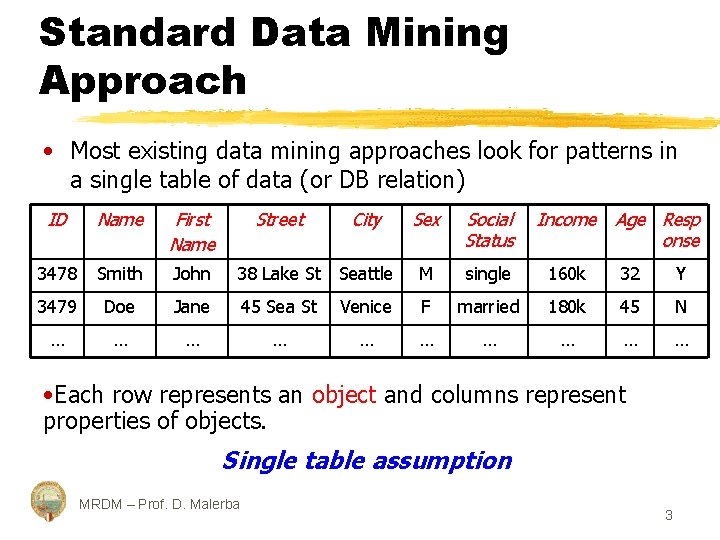

Standard Data Mining Approach
• Most existing data mining approaches look for patterns in a single table of data (or DB relation).

| ID | Name | First Name | Street | City | Sex | Social Status | Income | Age | Response |
|---|---|---|---|---|---|---|---|---|---|
| 3478 | Smith | John | 38 Lake St | Seattle | M | single | 160k | 32 | Y |
| 3479 | Doe | Jane | 45 Sea St | Venice | F | married | 180k | 45 | N |
| … | … | … | … | … | … | … | … | … | … |

• Each row represents an object and columns represent properties of objects.
• This is the single-table assumption.

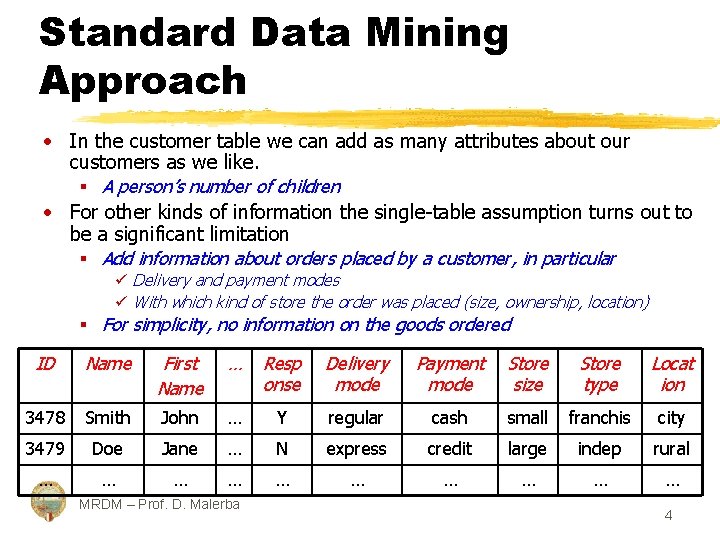

Standard Data Mining Approach
• In the customer table we can add as many attributes about our customers as we like.
 § A person's number of children
• For other kinds of information the single-table assumption turns out to be a significant limitation.
 § Add information about orders placed by a customer, in particular
  ◦ Delivery and payment modes
  ◦ With which kind of store the order was placed (size, ownership, location)
 § For simplicity, no information on the goods ordered

| ID | Name | First Name | … | Response | Delivery mode | Payment mode | Store size | Store type | Location |
|---|---|---|---|---|---|---|---|---|---|
| 3478 | Smith | John | … | Y | regular | cash | small | franchise | city |
| 3479 | Doe | Jane | … | N | express | credit | large | indep | rural |
| … | … | … | … | … | … | … | … | … | … |

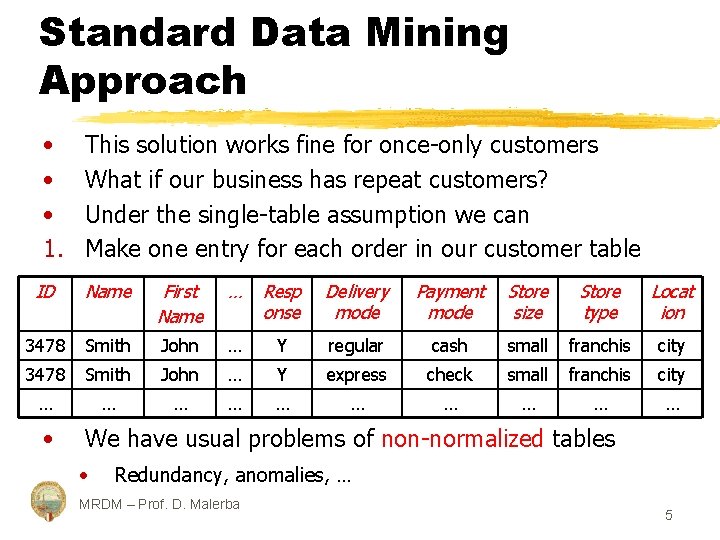

Standard Data Mining Approach
• This solution works fine for once-only customers.
• What if our business has repeat customers?
• Under the single-table assumption we can:
1. Make one entry for each order in our customer table.

| ID | Name | First Name | … | Response | Delivery mode | Payment mode | Store size | Store type | Location |
|---|---|---|---|---|---|---|---|---|---|
| 3478 | Smith | John | … | Y | regular | cash | small | franchise | city |
| 3478 | Smith | John | … | Y | express | check | small | franchise | city |
| … | … | … | … | … | … | … | … | … | … |

• We have the usual problems of non-normalized tables:
• Redundancy, anomalies, …

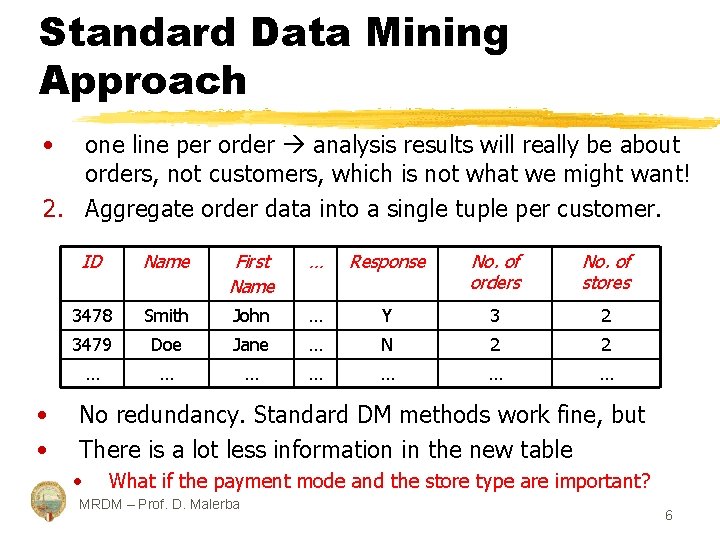

Standard Data Mining Approach
• With one line per order, the analysis results will really be about orders, not customers, which is not what we might want!
2. Aggregate order data into a single tuple per customer.

| ID | Name | First Name | … | Response | No. of orders | No. of stores |
|---|---|---|---|---|---|---|
| 3478 | Smith | John | … | Y | 3 | 2 |
| 3479 | Doe | Jane | … | N | 2 | 2 |
| … | … | … | … | … | … | … |

• No redundancy. Standard DM methods work fine, but
• there is a lot less information in the new table.
• What if the payment mode and the store type are important?
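As a minimal sketch of option 2, the Python below collapses the three orders of customer 3478 into one tuple. The orders come from the order table shown under "Relational Data". The tuple layout and the helper name `aggregate_orders` are illustrative assumptions, not part of the original material.

```python
from collections import defaultdict

# Order tuples (CustID, OrderID, StoreID, DeliveryMode, PaymentMode)
# copied from the order table of customer 3478.
orders = [
    (3478, 213444, 12, "regular", "cash"),
    (3478, 372347, 19, "regular", "cash"),
    (3478, 334555, 12, "express", "check"),
]

def aggregate_orders(orders):
    """Collapse each customer's orders into (no. of orders, no. of stores)."""
    order_ids = defaultdict(set)
    store_ids = defaultdict(set)
    for cust_id, order_id, store_id, _delivery, _payment in orders:
        order_ids[cust_id].add(order_id)
        store_ids[cust_id].add(store_id)
    # Delivery and payment modes are dropped here: this is the information loss
    # the slide warns about.
    return {c: (len(order_ids[c]), len(store_ids[c])) for c in order_ids}

print(aggregate_orders(orders))  # {3478: (3, 2)}
```

The result matches the "No. of orders" and "No. of stores" columns for customer 3478. No trace of the cash/check payments survives.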

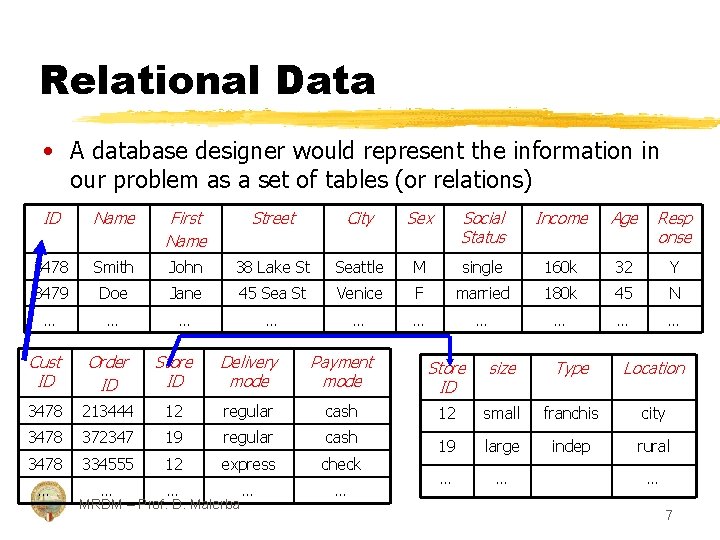

Relational Data
• A database designer would represent the information in our problem as a set of tables (or relations).

| ID | Name | First Name | Street | City | Sex | Social Status | Income | Age | Response |
|---|---|---|---|---|---|---|---|---|---|
| 3478 | Smith | John | 38 Lake St | Seattle | M | single | 160k | 32 | Y |
| 3479 | Doe | Jane | 45 Sea St | Venice | F | married | 180k | 45 | N |
| … | … | … | … | … | … | … | … | … | … |

| Cust ID | Order ID | Store ID | Delivery mode | Payment mode |
|---|---|---|---|---|
| 3478 | 213444 | 12 | regular | cash |
| 3478 | 372347 | 19 | regular | cash |
| 3478 | 334555 | 12 | express | check |
| … | … | … | … | … |

| Store ID | Size | Type | Location |
|---|---|---|---|
| 12 | small | franchise | city |
| 19 | large | indep | rural |
| … | … | … | … |
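Joining these relations back together reproduces the one-row-per-order table of option 1, with the customer's attributes repeated for every order. The Python sketch below uses the rows above, with the customer columns trimmed to three for brevity. The in-memory layout and the function `denormalize` are hypothetical.

```python
# Rows copied from the tables above; customer attributes trimmed to
# (Name, First Name, Response) for brevity.
customers = {3478: ("Smith", "John", "Y"), 3479: ("Doe", "Jane", "N")}
orders = [
    (3478, 213444, 12, "regular", "cash"),
    (3478, 372347, 19, "regular", "cash"),
    (3478, 334555, 12, "express", "check"),
]
stores = {12: ("small", "franchise", "city"), 19: ("large", "indep", "rural")}

def denormalize(customers, orders, stores):
    """Inner join of customer, order and store data: one flat row per order."""
    rows = []
    for cust_id, _order_id, store_id, delivery, payment in orders:
        name, first_name, response = customers[cust_id]
        size, store_type, location = stores[store_id]
        rows.append((cust_id, name, first_name, response,
                     delivery, payment, size, store_type, location))
    return rows

for row in denormalize(customers, orders, stores):
    print(row)
```

Smith's name and response are now stored three times, which is the redundancy problem of option 1. Customer 3479 drops out of the inner join entirely, because Jane Doe's orders are not listed here.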

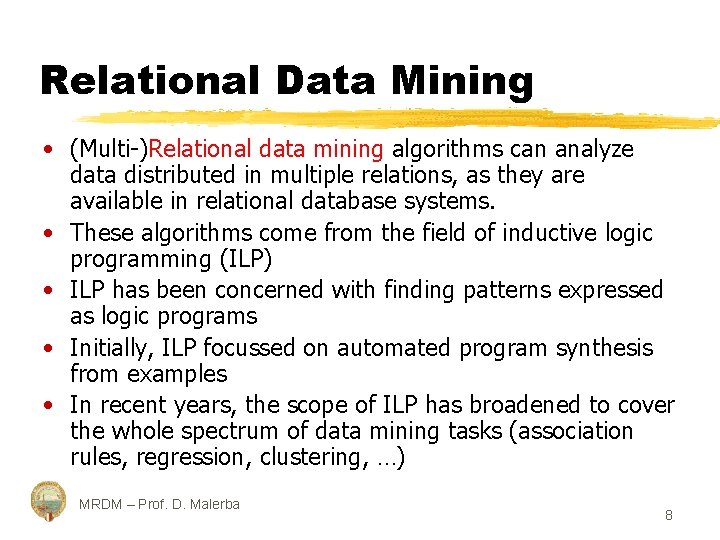

Relational Data Mining
• (Multi-)relational data mining algorithms can analyze data distributed in multiple relations, as they are available in relational database systems.
• These algorithms come from the field of inductive logic programming (ILP).
• ILP has been concerned with finding patterns expressed as logic programs.
• Initially, ILP focussed on automated program synthesis from examples.
• In recent years, the scope of ILP has broadened to cover the whole spectrum of data mining tasks (association rules, regression, clustering, …).

ILP successes in scientific fields
• In the field of chemistry/biology
 § Toxicology
 § Prediction of diterpene classes from nuclear magnetic resonance (NMR) spectra
• Analysis of traffic accident data
• Analysis of survey data in medicine
• Prediction of ecological biodegradation rates

The first commercial data mining systems with ILP technology are becoming available.

Relational patterns
• Relational patterns involve multiple relations from a relational database.
• They are typically stated in a more expressive language than patterns defined on a single data table.
 § Relational classification rules
 § Relational regression trees
 § Relational association rules

IF Customer(C1, N1, FN1, Str1, City1, Zip1, Sex1, SoSt1, In1, Age1, Resp1)
AND order(C1, O1, S1, Deliv1, Pay1)
AND Pay1 = credit_card
AND In1 ≥ 108000
THEN Resp1 = Yes

Relational patterns

IF Customer(C1, N1, FN1, Str1, City1, Zip1, Sex1, SoSt1, In1, Age1, Resp1)
AND order(C1, O1, S1, Deliv1, Pay1)
AND Pay1 = credit_card
AND In1 ≥ 108000
THEN Resp1 = Yes

good_customer(C1) ← customer(C1, N1, FN1, Str1, City1, Zip1, Sex1, SoSt1, In1, Age1, Resp1) ∧ order(C1, O1, S1, Deliv1, credit_card) ∧ In1 ≥ 108000

• This relational pattern is expressed in a subset of first-order logic!
• A relation in a relational database corresponds to a predicate in predicate logic (see deductive databases).
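What makes the pattern relational is the existential reading of the order variables O1, S1 and Deliv1. A customer qualifies if *some* order of theirs is paid by credit card. The Python sketch below evaluates the rule with the income test read as In1 ≥ 108000. The three customers and their orders are hypothetical data, not taken from the slides.

```python
# Hypothetical data: customer ID -> (income, response), and order tuples
# (CustID, OrderID, StoreID, Delivery, Pay).
customers = {1: (160_000, "Y"), 2: (90_000, "N"), 3: (180_000, "N")}
orders = [
    (1, 101, 12, "regular", "credit_card"),
    (2, 102, 19, "express", "credit_card"),
    (3, 103, 12, "regular", "cash"),
]

def good_customer(c, customers, orders, threshold=108_000):
    """good_customer(C1) <- customer(C1, ..., In1, ...), order(C1, O1, S1, Deliv1, credit_card), In1 >= threshold."""
    income, _response = customers[c]
    # The existentially quantified O1, S1, Deliv1 become a search over c's orders.
    return income >= threshold and any(
        cust == c and pay == "credit_card" for cust, _o, _s, _d, pay in orders
    )

print([c for c in customers if good_customer(c, customers, orders)])  # [1]
```

Customer 2 has a credit-card order but too low an income. Customer 3 has the income but pays only in cash.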

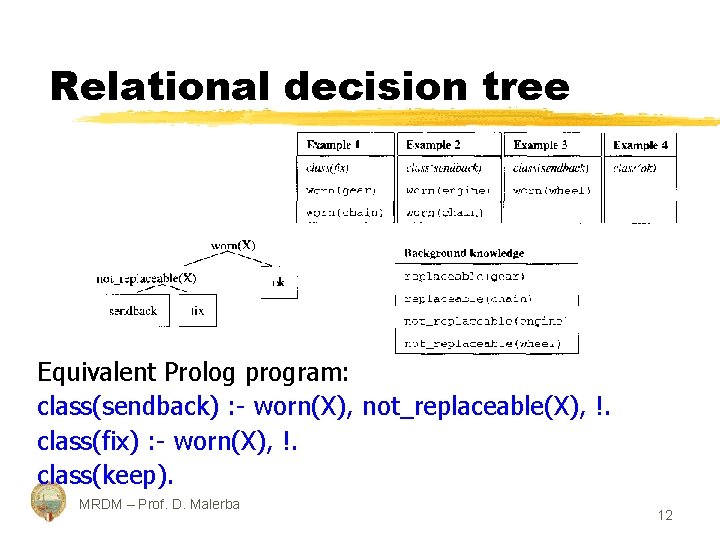

Relational decision tree

Equivalent Prolog program:

    class(sendback) :- worn(X), not_replaceable(X), !.
    class(fix) :- worn(X), !.
    class(keep).
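The cuts turn the three clauses into an ordered decision list: the first clause whose body succeeds decides the class. The Python sketch below reproduces that behaviour. Modelling a machine as two sets of part names is an assumption, and the part names in the usage lines are hypothetical.

```python
def classify(worn, not_replaceable):
    """Decision-list reading of the Prolog program: the first clause that succeeds wins."""
    # class(sendback) :- worn(X), not_replaceable(X), !.
    if any(x in not_replaceable for x in worn):
        return "sendback"
    # class(fix) :- worn(X), !.
    if worn:
        return "fix"
    # class(keep).
    return "keep"

print(classify({"gear"}, {"engine"}))    # fix
print(classify({"engine"}, {"engine"}))  # sendback
print(classify(set(), {"engine"}))       # keep
```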

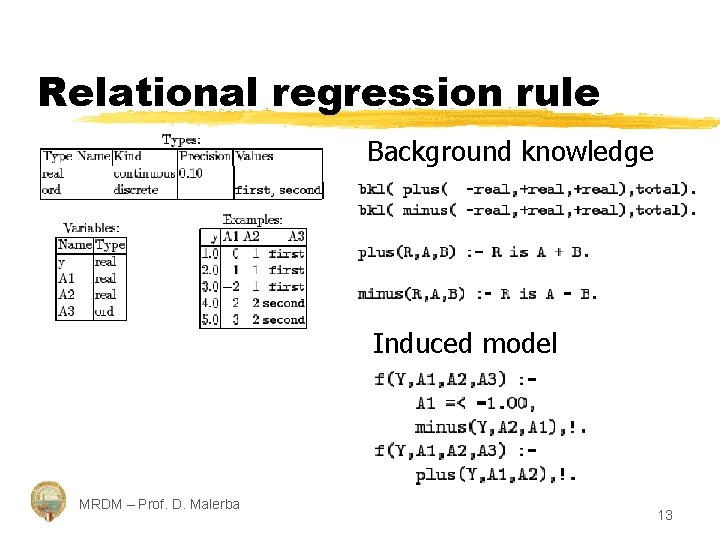

Relational regression rule

(Figure panels: Background knowledge; Induced model)

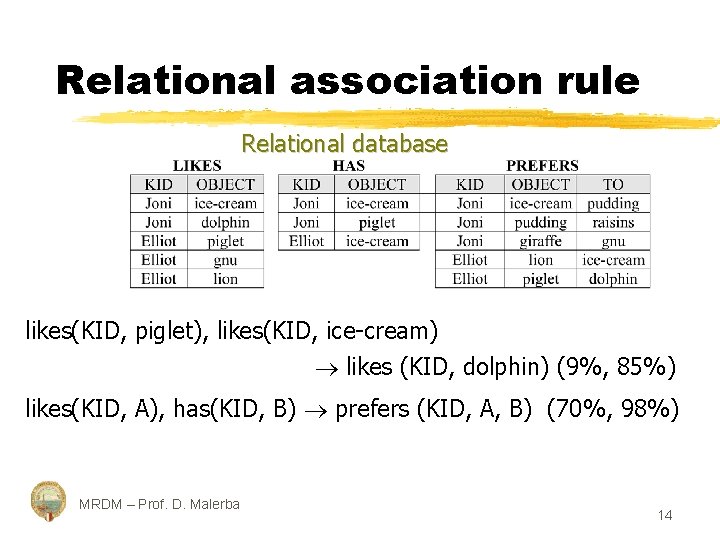

Relational association rule

(Figure: relational database)

likes(KID, piglet), likes(KID, ice-cream) ⇒ likes(KID, dolphin) (9%, 85%)
likes(KID, A), has(KID, B) ⇒ prefers(KID, A, B) (70%, 98%)
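Support and confidence of such a rule count kids (the key variable KID), not individual facts. The Python sketch below checks this on hypothetical `likes/2` facts, since the slide's database exists only as a figure. It handles only rules whose atoms differ in a constant argument, like the first rule. The second rule, with variables A and B, would need a general matcher.

```python
# Hypothetical likes/2 facts (KID, object).
likes = {
    ("ann", "piglet"), ("ann", "ice-cream"), ("ann", "dolphin"),
    ("bob", "piglet"), ("bob", "ice-cream"),
    ("cid", "ice-cream"), ("cid", "dolphin"),
    ("dan", "piglet"),
}
kids = {kid for kid, _ in likes}

def holds(kid, objects):
    """True if likes(kid, o) is a fact for every o in objects."""
    return all((kid, o) in likes for o in objects)

def rule_stats(body, head):
    """(support, confidence) of body => head, counting kids rather than facts."""
    n_body = sum(holds(k, body) for k in kids)
    n_both = sum(holds(k, body + head) for k in kids)
    return n_both / len(kids), (n_both / n_body if n_body else 0.0)

print(rule_stats(["piglet", "ice-cream"], ["dolphin"]))  # (0.25, 0.5)
```

Two of the four kids like both piglet and ice-cream, and one of those two also likes dolphin. That gives 25% support and 50% confidence.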

First-order representations
• An example is a set of ground facts, that is, a set of tuples in a relational database.
• From the logical point of view this is called a (Herbrand) interpretation, because the facts represent all atoms which are true for the example; all facts not in the example are assumed to be false.
• From the computational point of view each example is a small relational database or a Prolog knowledge base.
• A Prolog interpreter can be used for querying an example.

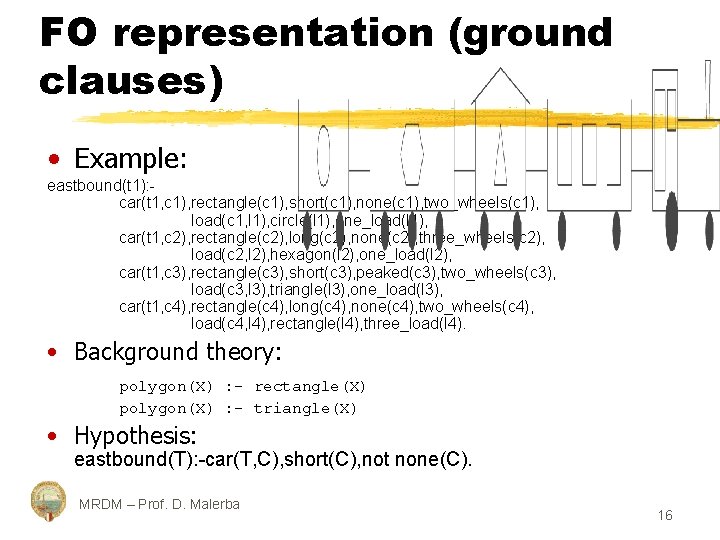

FO representation (ground clauses)
• Example:
eastbound(t1) :- car(t1, c1), rectangle(c1), short(c1), none(c1), two_wheels(c1), load(c1, l1), circle(l1), one_load(l1), car(t1, c2), rectangle(c2), long(c2), none(c2), three_wheels(c2), load(c2, l2), hexagon(l2), one_load(l2), car(t1, c3), rectangle(c3), short(c3), peaked(c3), two_wheels(c3), load(c3, l3), triangle(l3), one_load(l3), car(t1, c4), rectangle(c4), long(c4), none(c4), two_wheels(c4), load(c4, l4), rectangle(l4), three_load(l4).
• Background theory:
polygon(X) :- rectangle(X).
polygon(X) :- triangle(X).
• Hypothesis:
eastbound(T) :- car(T, C), short(C), not none(C).
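Because an example is a Herbrand interpretation, testing the hypothesis amounts to querying one small set of facts. The `not none(C)` literal is read under the closed-world assumption: a fact is false if it is not in the set. The Python sketch below encodes only the facts of t1 that the hypothesis needs; the tuple encoding is an assumption.

```python
# Part of example t1 as a set of ground facts (predicate, args...).
# Anything not listed is assumed false (closed world).
t1 = {
    ("car", "t1", "c1"), ("short", "c1"), ("none", "c1"),
    ("car", "t1", "c2"), ("long", "c2"), ("none", "c2"),
    ("car", "t1", "c3"), ("short", "c3"), ("peaked", "c3"),
    ("car", "t1", "c4"), ("long", "c4"), ("none", "c4"),
}

def eastbound(train, example):
    """eastbound(T) :- car(T, C), short(C), not none(C)."""
    cars = [f[2] for f in example if f[0] == "car" and f[1] == train]
    # "not none(C)" succeeds when none(C) is absent from the example.
    return any(("short", c) in example and ("none", c) not in example for c in cars)

print(eastbound("t1", t1))  # True: car c3 is short and its roof is peaked
```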

Background knowledge
• As background knowledge is visible for each example, all the facts that can be derived from the background knowledge and an example are part of the extended example.
• Formally, an extended example is the minimal Herbrand model of the example and the background theory.
• When querying an example, it suffices to assert the background knowledge and the example; the Prolog interpreter will do the necessary derivations.
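For definite clauses, the minimal Herbrand model can be computed by forward chaining until no new fact appears. The Python sketch below does this for the two `polygon/1` rules of the background theory and the shape facts of t1. It deliberately handles only unary rules of the form head(X) :- body(X); that restriction and the function name `minimal_model` are assumptions of the sketch.

```python
def minimal_model(facts, rules):
    """Forward chaining to a fixpoint for rules head(X) :- body(X).

    facts: set of (predicate, argument) tuples; rules: list of (head, body) pairs.
    """
    model = set(facts)
    changed = True
    while changed:
        changed = False
        for head, body in rules:
            for pred, *args in list(model):
                if pred == body and (head, *args) not in model:
                    model.add((head, *args))
                    changed = True
    return model

# Shape facts of train t1 plus the background theory of the slide.
facts = {("rectangle", "c1"), ("rectangle", "c2"), ("rectangle", "c3"), ("rectangle", "c4"),
         ("circle", "l1"), ("hexagon", "l2"), ("triangle", "l3"), ("rectangle", "l4")}
rules = [("polygon", "rectangle"),  # polygon(X) :- rectangle(X).
         ("polygon", "triangle")]   # polygon(X) :- triangle(X).

extended = minimal_model(facts, rules)
print(sorted(f for f in extended if f[0] == "polygon"))
```

The extended example gains polygon(c1) to polygon(c4), polygon(l3) and polygon(l4). The circle and hexagon loads are not polygons under this background theory.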

Learning from interpretations
• The ground-clause representation is specific to an ILP setting called learning from interpretations.
• It is similar to older work on structural matching.
• It is common to several relational data mining systems, such as
 § CLAUDIEN: searches for a set of clausal regularities that hold on the set of examples
 § TILDE: top-down induction of logical decision trees
 § ICL: Inductive Classification Logic (an upgrade of CN2)
• It contrasts with the classical ILP setting employed by the systems PROGOL and FOIL.

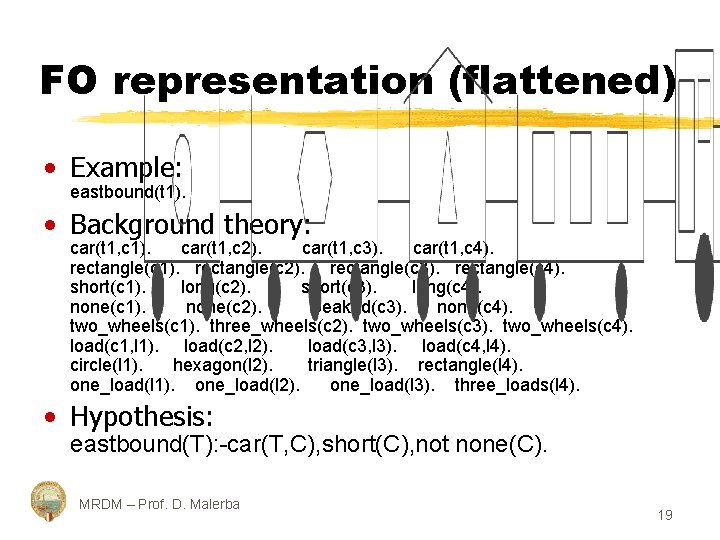

FO representation (flattened)
• Example:
eastbound(t1).
• Background theory:
car(t1, c1). car(t1, c2). car(t1, c3). car(t1, c4).
rectangle(c1). rectangle(c2). rectangle(c3). rectangle(c4).
short(c1). long(c2). short(c3). long(c4).
none(c1). none(c2). peaked(c3). none(c4).
two_wheels(c1). three_wheels(c2). two_wheels(c3). two_wheels(c4).
load(c1, l1). load(c2, l2). load(c3, l3). load(c4, l4).
circle(l1). hexagon(l2). triangle(l3). rectangle(l4).
one_load(l1). one_load(l2). one_load(l3). three_loads(l4).
• Hypothesis:
eastbound(T) :- car(T, C), short(C), not none(C).

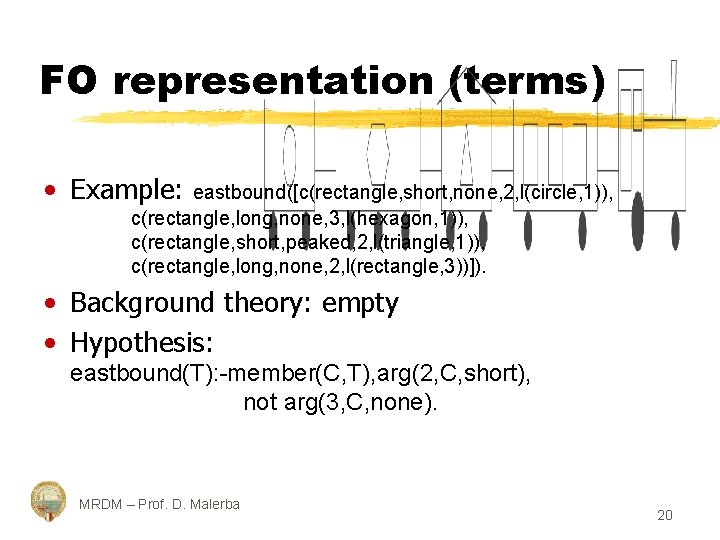

FO representation (terms)
• Example:
eastbound([c(rectangle, short, none, 2, l(circle, 1)), c(rectangle, long, none, 3, l(hexagon, 1)), c(rectangle, short, peaked, 2, l(triangle, 1)), c(rectangle, long, none, 2, l(rectangle, 3))]).
• Background theory: empty
• Hypothesis:
eastbound(T) :- member(C, T), arg(2, C, short), not arg(3, C, none).
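In the term representation the whole train is one structured value, so the hypothesis just walks the list of car terms. The Python sketch below encodes each `c(...)` term as a tuple, which is an assumption of the sketch. Prolog's `arg/3` counts arguments from 1, so `arg(2, C, short)` corresponds to index 1.

```python
# Train t1 as a list of car terms c(Shape, Length, Roof, Wheels, Load);
# load terms l(Object, Number) become nested tuples.
t1 = [
    ("rectangle", "short", "none", 2, ("circle", 1)),
    ("rectangle", "long", "none", 3, ("hexagon", 1)),
    ("rectangle", "short", "peaked", 2, ("triangle", 1)),
    ("rectangle", "long", "none", 2, ("rectangle", 3)),
]

def eastbound(train):
    """eastbound(T) :- member(C, T), arg(2, C, short), not arg(3, C, none)."""
    # arg/3 is 1-based, Python indexing is 0-based: arg(2, ...) -> car[1], arg(3, ...) -> car[2].
    return any(car[1] == "short" and car[2] != "none" for car in train)

print(eastbound(t1))  # True
```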

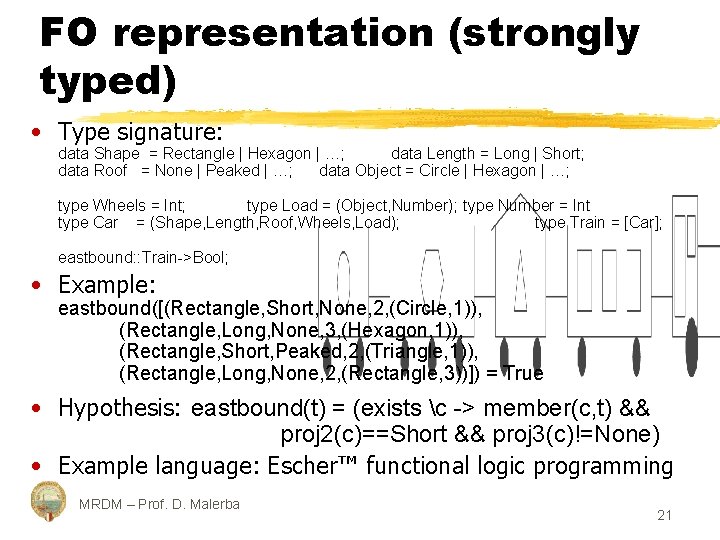

FO representation (strongly typed)
• Type signature:
data Shape = Rectangle | Hexagon | …;
data Length = Long | Short;
data Roof = None | Peaked | …;
data Object = Circle | Hexagon | …;
type Wheels = Int;
type Load = (Object, Number);
type Number = Int;
type Car = (Shape, Length, Roof, Wheels, Load);
type Train = [Car];
eastbound :: Train -> Bool;
• Example:
eastbound([(Rectangle, Short, None, 2, (Circle, 1)), (Rectangle, Long, None, 3, (Hexagon, 1)), (Rectangle, Short, Peaked, 2, (Triangle, 1)), (Rectangle, Long, None, 2, (Rectangle, 3))]) = True
• Hypothesis:
eastbound(t) = (exists c -> member(c, t) && proj2(c) == Short && proj3(c) != None)
• Example language: Escher™ functional logic programming
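A rough Python analogue of the typed signature is shown below, using `Enum` for the data types and a `NamedTuple` for `Car`. This is only an illustration. Python type hints are checked by an external static checker (for example mypy), not enforced the way Escher's types are. The name `Obj` replaces `Object` to avoid confusion with Python's built-in `object`, and only the constructors needed for t1 are listed, since the slide elides the rest.

```python
from enum import Enum
from typing import List, NamedTuple, Tuple

class Shape(Enum):
    RECTANGLE = "rectangle"
    HEXAGON = "hexagon"

class Length(Enum):
    LONG = "long"
    SHORT = "short"

class Roof(Enum):
    NONE = "none"
    PEAKED = "peaked"

class Obj(Enum):  # "Object" in the Escher signature
    CIRCLE = "circle"
    HEXAGON = "hexagon"
    TRIANGLE = "triangle"
    RECTANGLE = "rectangle"

Load = Tuple[Obj, int]  # (Object, Number)

class Car(NamedTuple):
    shape: Shape
    length: Length
    roof: Roof
    wheels: int
    load: Load

Train = List[Car]

def eastbound(t: Train) -> bool:
    """exists c -> member(c, t) && proj2(c) == Short && proj3(c) != None."""
    return any(c.length is Length.SHORT and c.roof is not Roof.NONE for c in t)

t1: Train = [
    Car(Shape.RECTANGLE, Length.SHORT, Roof.NONE, 2, (Obj.CIRCLE, 1)),
    Car(Shape.RECTANGLE, Length.LONG, Roof.NONE, 3, (Obj.HEXAGON, 1)),
    Car(Shape.RECTANGLE, Length.SHORT, Roof.PEAKED, 2, (Obj.TRIANGLE, 1)),
    Car(Shape.RECTANGLE, Length.LONG, Roof.NONE, 2, (Obj.RECTANGLE, 3)),
]
print(eastbound(t1))  # True
```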

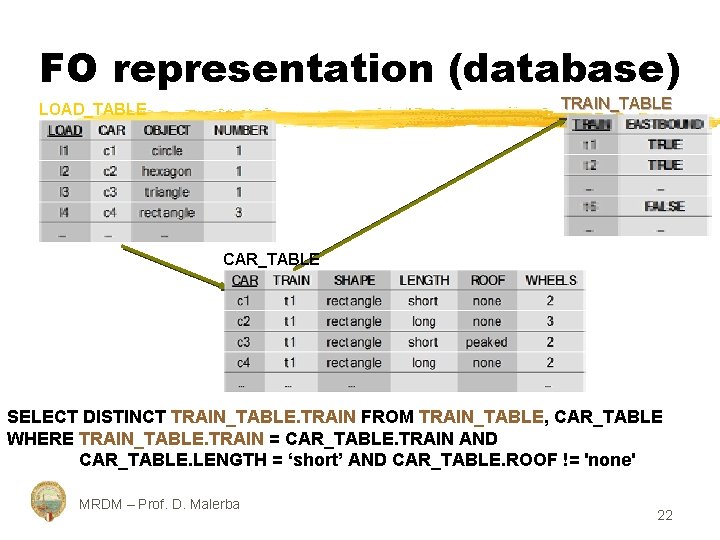

FO representation (database)

Tables: TRAIN_TABLE, CAR_TABLE, LOAD_TABLE

    SELECT DISTINCT TRAIN_TABLE.TRAIN
    FROM TRAIN_TABLE, CAR_TABLE
    WHERE TRAIN_TABLE.TRAIN = CAR_TABLE.TRAIN
      AND CAR_TABLE.LENGTH = 'short'
      AND CAR_TABLE.ROOF != 'none'
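The query can be run unchanged on an in-memory SQLite database through Python's standard `sqlite3` module. The slide shows only the column names used in the query, so the table schemas, the EASTBOUND column and the westbound train t2 below are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical minimal schemas; only TRAIN, LENGTH and ROOF come from the query.
conn.executescript("""
    CREATE TABLE TRAIN_TABLE (TRAIN TEXT PRIMARY KEY, EASTBOUND TEXT);
    CREATE TABLE CAR_TABLE (CAR TEXT PRIMARY KEY, TRAIN TEXT, LENGTH TEXT, ROOF TEXT);
""")
conn.executemany("INSERT INTO TRAIN_TABLE VALUES (?, ?)", [("t1", "true"), ("t2", "false")])
conn.executemany(
    "INSERT INTO CAR_TABLE VALUES (?, ?, ?, ?)",
    [("c1", "t1", "short", "none"), ("c2", "t1", "long", "none"),
     ("c3", "t1", "short", "peaked"), ("c4", "t1", "long", "none"),
     ("c5", "t2", "short", "none")],
)

query = """
    SELECT DISTINCT TRAIN_TABLE.TRAIN
    FROM TRAIN_TABLE, CAR_TABLE
    WHERE TRAIN_TABLE.TRAIN = CAR_TABLE.TRAIN
      AND CAR_TABLE.LENGTH = 'short'
      AND CAR_TABLE.ROOF != 'none'
"""
print(conn.execute(query).fetchall())  # [('t1',)]
```

The join over TRAIN plays the role of the shared variable T in `car(T, C)`. `DISTINCT` corresponds to asking only whether some car C exists.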

Individual-centered representation
• The database contains information on a number of trains.
• Each train is an individual.
• The database can be partitioned according to individual to obtain a ground-clause representation.
• Problem: sometimes individuals share common parts.
• Example: we want to discriminate black and white figures on the basis of their position. Each geometric figure is an individual.
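Partitioning by individual means assigning every fact to the train it describes. The Python sketch below does this for a hypothetical flat database with two trains. It relies on each car belonging to exactly one train, so that `owner` is a function. When individuals share parts, as with the neighbouring figures above, no such mapping exists, and that is the problem the slide points out.

```python
from collections import defaultdict

# Hypothetical flat database: car/2 links trains to cars; short/1, peaked/1 describe cars.
car = [("t1", "c1"), ("t1", "c3"), ("t2", "c5")]
short = ["c1", "c3", "c5"]
peaked = ["c3"]

def partition_by_train(car, short, peaked):
    """Split the flat database into one set of ground facts per train (individual)."""
    owner = {c: t for t, c in car}  # valid only because no car is shared by two trains
    examples = defaultdict(set)
    for t, c in car:
        examples[t].add(("car", t, c))
    for c in short:
        examples[owner[c]].add(("short", c))
    for c in peaked:
        examples[owner[c]].add(("peaked", c))
    return dict(examples)

for train, facts in sorted(partition_by_train(car, short, peaked).items()):
    print(train, sorted(facts))
```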

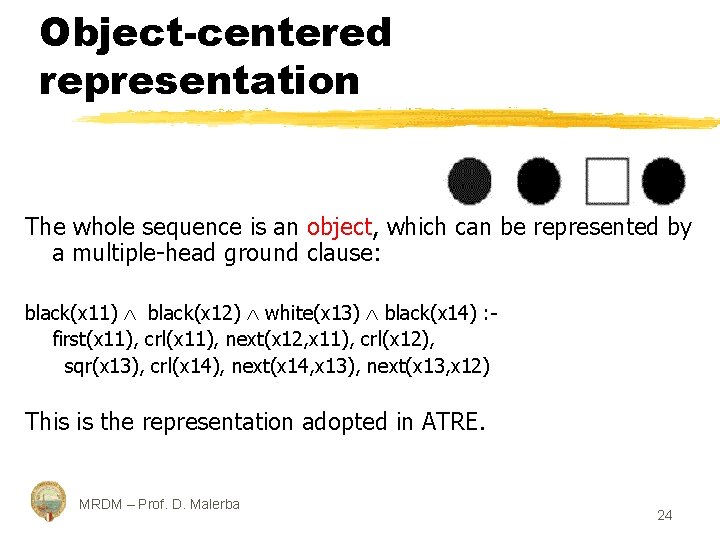

Object-centered representation
• The whole sequence is an object, which can be represented by a multiple-head ground clause:
black(x11), black(x12), white(x13), black(x14) :- first(x11), crl(x11), next(x12, x11), crl(x12), sqr(x13), crl(x14), next(x14, x13), next(x13, x12)
• This is the representation adopted in ATRE.

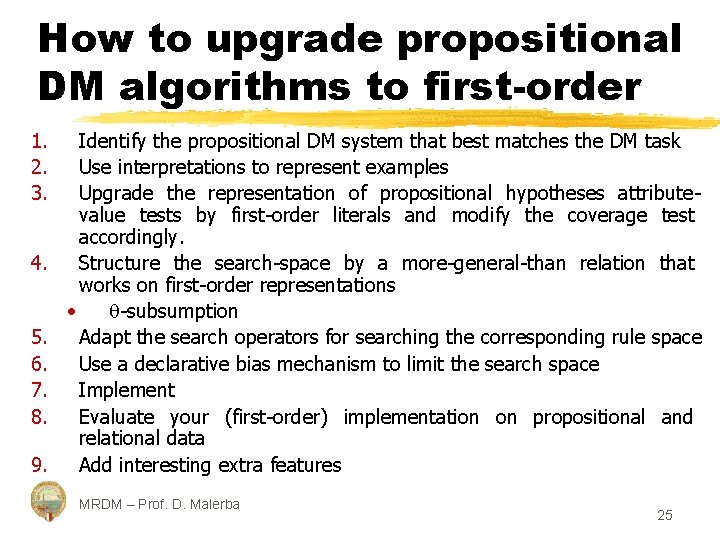

How to upgrade propositional DM algorithms to first-order
1. Identify the propositional DM system that best matches the DM task
2. Use interpretations to represent examples
3. Upgrade the representation of propositional hypotheses: replace attribute-value tests by first-order literals and modify the coverage test accordingly
4. Structure the search space by a more-general-than relation that works on first-order representations
 • θ-subsumption
5. Adapt the search operators for searching the corresponding rule space
6. Use a declarative bias mechanism to limit the search space
7. Implement
8. Evaluate your (first-order) implementation on propositional and relational data
9. Add interesting extra features
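Step 4 relies on θ-subsumption: a clause C θ-subsumes D if some substitution θ makes every literal of Cθ a literal of D. The brute-force Python sketch below searches for θ by backtracking. It follows the Prolog convention that capitalised names are variables and treats D's terms as constants, as in the usual test. The function names are hypothetical. The usage checks the patterns Q1 and Q3 of the spatial example later in this case study.

```python
def is_var(term):
    """Prolog convention: a name starting with an uppercase letter is a variable."""
    return term[:1].isupper()

def theta_subsumes(c, d, theta=None):
    """True if some substitution theta maps every literal of c onto a literal of d.

    Literals are tuples (predicate, arg1, ..., argn); terms of d act as constants.
    """
    theta = theta or {}
    if not c:
        return True
    first, rest = c[0], c[1:]
    for lit in d:
        if lit[0] != first[0] or len(lit) != len(first):
            continue
        binding = dict(theta)  # try this literal with a copy of the bindings so far
        if all(binding.setdefault(a, b) == b if is_var(a) else a == b
               for a, b in zip(first[1:], lit[1:])):
            if theta_subsumes(rest, d, binding):
                return True
    return False

q3 = [("is_a", "X", "large_town")]
q1 = [("is_a", "X", "large_town"), ("intersects", "X", "R"), ("is_a", "R", "road")]
print(theta_subsumes(q3, q1))  # True: Q3 is more general than Q1
print(theta_subsumes(q1, q3))  # False
```

The search is exponential in the worst case, since θ-subsumption is NP-complete. Real systems therefore constrain it with the declarative bias of step 6.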

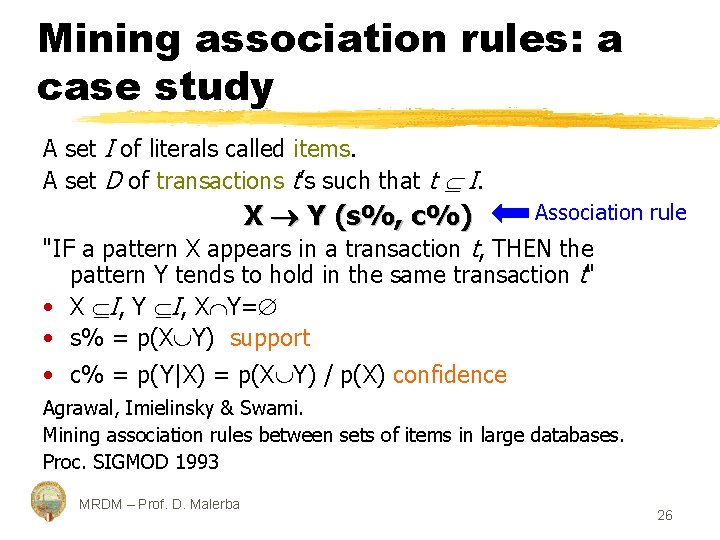

Mining association rules: a case study
• A set $I$ of literals called items.
• A set $D$ of transactions $t$ such that $t \subseteq I$.

Association rule: $X \Rightarrow Y\ (s\%, c\%)$
"IF a pattern X appears in a transaction t, THEN the pattern Y tends to hold in the same transaction t"
• $X \subseteq I$, $Y \subseteq I$, $X \cap Y = \emptyset$
• $s\% = p(X \cup Y)$: support
• $c\% = p(Y \mid X) = p(X \cup Y) / p(X)$: confidence

Agrawal, Imielinski & Swami. Mining association rules between sets of items in large databases. Proc. SIGMOD 1993

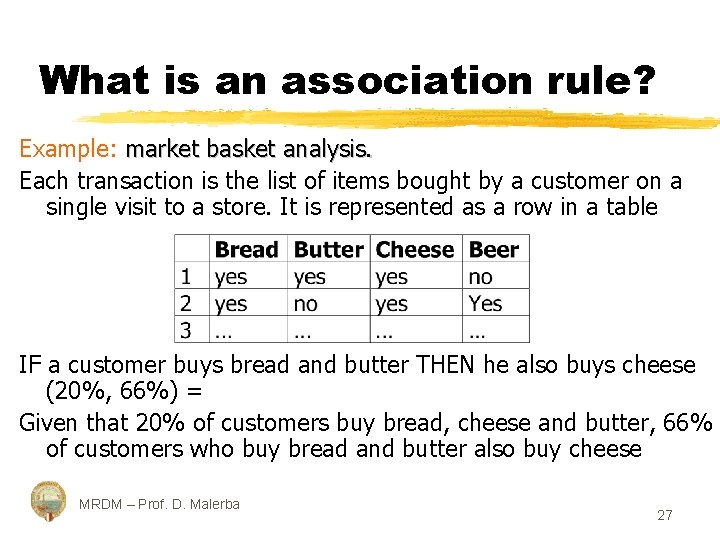

What is an association rule?
Example: market basket analysis.
• Each transaction is the list of items bought by a customer on a single visit to a store. It is represented as a row in a table.

IF a customer buys bread and butter THEN he also buys cheese (20%, 66%)

means that 20% of customers buy bread, cheese and butter, and 66% of the customers who buy bread and butter also buy cheese.
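The two measures can be checked on a toy basket file. The ten transactions below are hypothetical, chosen so that the rule bread, butter ⇒ cheese has 20% support and about 66% confidence, as in the example. Support is the fraction of transactions containing X ∪ Y. Confidence divides that by the fraction containing X.

```python
# Hypothetical basket data: 10 transactions, each a set of items.
transactions = [
    {"bread", "butter", "cheese"},
    {"bread", "butter", "cheese", "milk"},
    {"bread", "butter"},
    {"bread", "milk"},
    {"milk"},
    {"cheese"},
    {"bread"},
    {"butter", "milk"},
    {"cheese", "milk"},
    {"bread", "cheese"},
]

def support(itemset, transactions):
    """Fraction of transactions t with itemset a subset of t."""
    return sum(itemset <= t for t in transactions) / len(transactions)

def confidence(x, y, transactions):
    """p(Y | X) = support(X u Y) / support(X)."""
    return support(x | y, transactions) / support(x, transactions)

x, y = {"bread", "butter"}, {"cheese"}
print(support(x | y, transactions))               # 0.2
print(round(confidence(x, y, transactions), 3))   # 0.667
```

Three transactions contain bread and butter, and two of them also contain cheese.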

Mining association rules: the propositional approach
Problem statement
Given:
• a set of transactions D
• a pair of thresholds, minsup and minconf
Find all association rules that have support and confidence greater than minsup and minconf respectively.

Mining association rules: the propositional approach
Problem decomposition
• Find large (or frequent) itemsets
• Generate highly-confident association rules
Representation issues
• The transaction set D may be a data file, a relational table or the result of a relational expression
• Each transaction is a binary vector

Mining association rules: the propositional approach
Solution to the first sub-problem: the APRIORI algorithm (Agrawal & Srikant, 1999)
• Find large 1-itemsets
• Cycle on the size (k > 1) of the itemsets
 § APRIORI-gen: generate candidate k-itemsets from large (k-1)-itemsets
 § Generate large k-itemsets from candidate k-itemsets (cycle on the transactions in D)
• until no more large itemsets are found.
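The level-wise loop can be sketched in a few lines of Python. This is a simplified textbook version, not Agrawal & Srikant's implementation. The join step unites any two large (k-1)-itemsets that differ in one item, rather than using the lexicographic prefix join. Support is computed by a scan per candidate, rather than one scan counting all candidates. The threshold is applied as support ≥ minsup.

```python
from itertools import combinations

def apriori(transactions, minsup):
    """Return {itemset: support} for every itemset with support >= minsup."""
    n = len(transactions)

    def sup(itemset):
        return sum(itemset <= t for t in transactions) / n

    # Large 1-itemsets.
    items = {i for t in transactions for i in t}
    large = {frozenset([i]) for i in items if sup(frozenset([i])) >= minsup}
    result = {s: sup(s) for s in large}
    k = 2
    while large:
        # APRIORI-gen, join: unions of two large (k-1)-itemsets that have k items.
        candidates = {a | b for a in large for b in large if len(a | b) == k}
        # APRIORI-gen, prune: every (k-1)-subset of a candidate must be large.
        candidates = {c for c in candidates
                      if all(frozenset(s) in large for s in combinations(c, k - 1))}
        # Keep the candidates whose support reaches the threshold.
        counts = {c: sup(c) for c in candidates}
        large = {c for c, s in counts.items() if s >= minsup}
        result.update((c, counts[c]) for c in large)
        k += 1
    return result

transactions = [{"bread", "butter", "cheese"}, {"bread", "butter"},
                {"bread", "cheese"}, {"butter", "milk"}]
for itemset, s in sorted(apriori(transactions, 0.5).items(),
                         key=lambda p: (len(p[0]), sorted(p[0]))):
    print(sorted(itemset), s)
```

With minsup = 0.5 the candidate {bread, butter, cheese} is pruned without any counting, because its subset {butter, cheese} is not large.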

Mining association rules: the propositional approach
Solution to the second sub-problem
• For every large itemset Z, find all non-empty proper subsets X of Z
• For every subset X, output a rule of the form X ⇒ (Z − X) if support(Z)/support(X) ≥ minconf.

Relevant work
Agrawal & Srikant (1999). Fast Algorithms for Mining Association Rules, in Readings in Database Systems, Morgan Kaufmann Publishers.
Han & Fu (1995). Discovery of Multiple-Level Association Rules from Large Databases, in Proc. 21st VLDB Conference.
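Given the supports of all large itemsets, rule generation needs no further pass over the data. Every subset X of a large itemset Z is itself large, so its support is already known. The Python sketch below takes a support dictionary of the kind an APRIORI-style search would return. The numbers in the usage line are hypothetical.

```python
from itertools import combinations

def generate_rules(support, minconf):
    """Emit X => Z - X for each large Z and non-empty proper subset X
    with support(Z) / support(X) >= minconf."""
    rules = []
    for z, sup_z in support.items():
        for r in range(1, len(z)):  # proper subsets only: Z - X must be non-empty
            for x in map(frozenset, combinations(z, r)):
                conf = sup_z / support[x]  # X is large too (anti-monotonicity)
                if conf >= minconf:
                    rules.append((set(x), set(z - x), sup_z, conf))
    return rules

support = {frozenset({"bread"}): 0.75, frozenset({"cheese"}): 0.5,
           frozenset({"bread", "cheese"}): 0.5}
for x, y, s, c in generate_rules(support, 0.7):
    print(sorted(x), "=>", sorted(y), (s, c))  # ['cheese'] => ['bread'] (0.5, 1.0)
```

The reverse rule bread ⇒ cheese has confidence 0.5/0.75 ≈ 0.67, below the 0.7 threshold, so it is not emitted.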

Mining association rules: the ILP approach
Problem statement
Given:
• a deductive relational database D
• a pair of thresholds, minsup and minconf
Find all association rules that have support and confidence greater than minsup and minconf respectively.

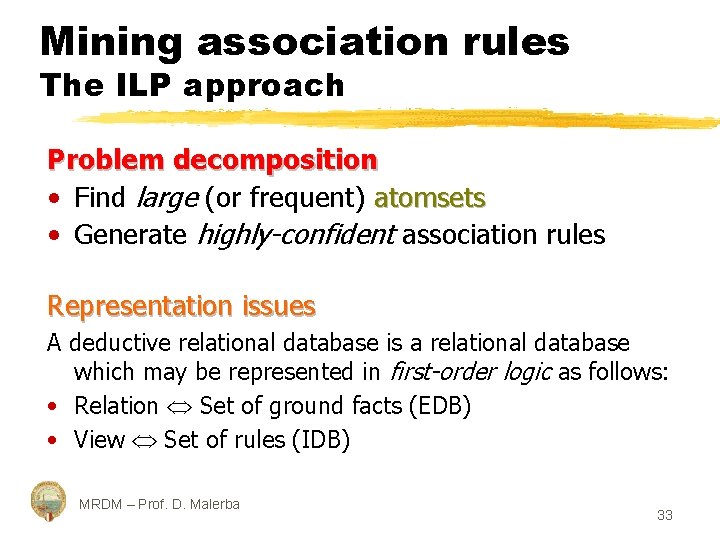

Mining association rules: the ILP approach
Problem decomposition
• Find large (or frequent) atomsets
• Generate highly-confident association rules
Representation issues
A deductive relational database is a relational database which may be represented in first-order logic as follows:
• Relation → set of ground facts (EDB)
• View → set of rules (IDB)

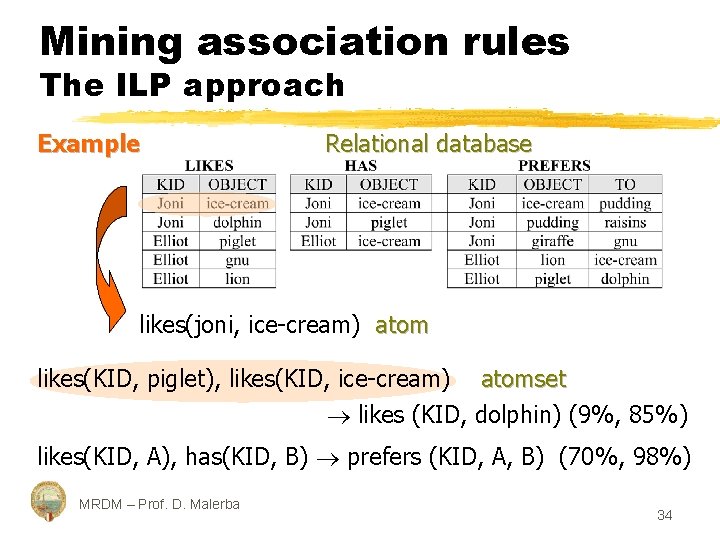

Mining association rules: the ILP approach
Example (relational database in the figure)
• likes(joni, ice-cream) is an atom
• likes(KID, piglet), likes(KID, ice-cream) is an atomset
• Relational association rules:
likes(KID, piglet), likes(KID, ice-cream) ⇒ likes(KID, dolphin) (9%, 85%)
likes(KID, A), has(KID, B) ⇒ prefers(KID, A, B) (70%, 98%)

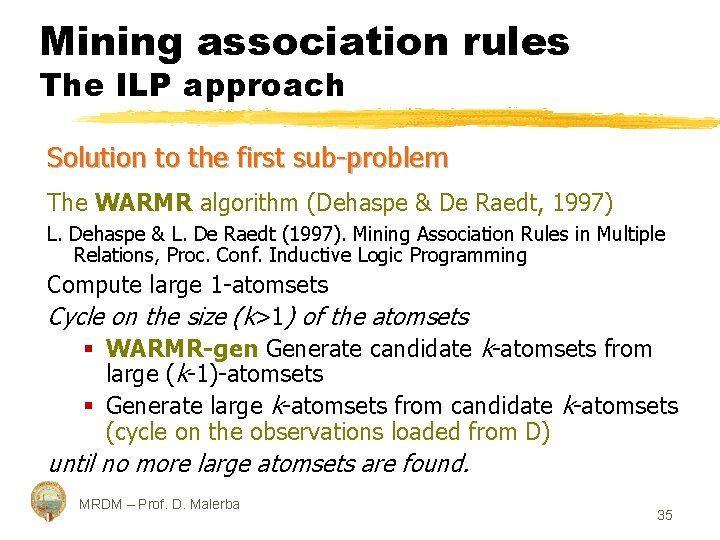

Mining association rules: the ILP approach
Solution to the first sub-problem: the WARMR algorithm (Dehaspe & De Raedt, 1997)
• Compute large 1-atomsets
• Cycle on the size (k > 1) of the atomsets
 § WARMR-gen: generate candidate k-atomsets from large (k-1)-atomsets
 § Generate large k-atomsets from candidate k-atomsets (cycle on the observations loaded from D)
• until no more large atomsets are found.

L. Dehaspe & L. De Raedt (1997). Mining Association Rules in Multiple Relations, Proc. Conf. Inductive Logic Programming

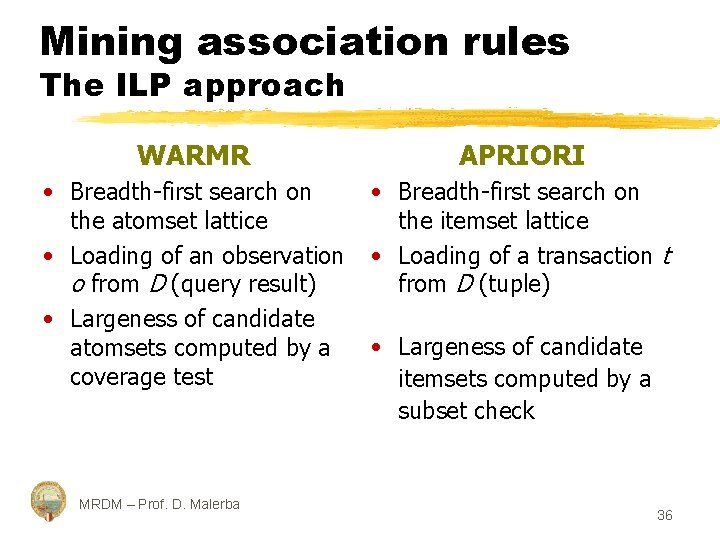

Mining association rules: the ILP approach

| WARMR | APRIORI |
|---|---|
| Breadth-first search on the atomset lattice | Breadth-first search on the itemset lattice |
| Loading of an observation o from D (query result) | Loading of a transaction t from D (tuple) |
| Largeness of candidate atomsets computed by a coverage test | Largeness of candidate itemsets computed by a subset check |

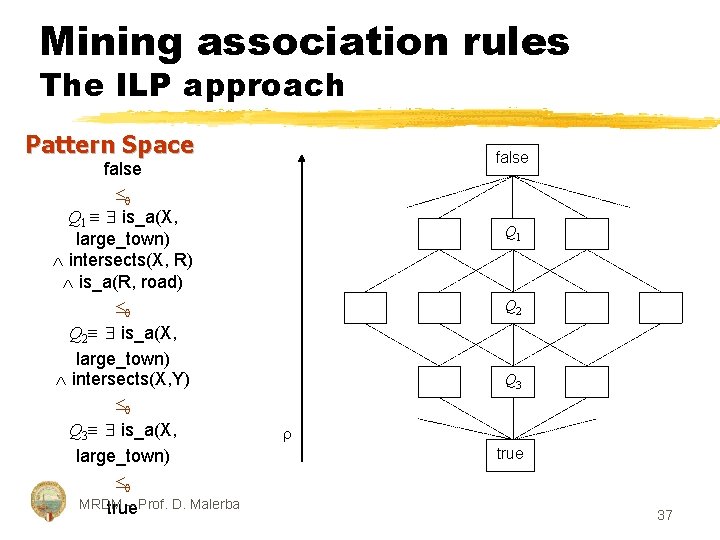

Mining association rules: the ILP approach
Pattern space, from the most specific pattern (false) to the most general (true):
• false
• Q1: is_a(X, large_town), intersects(X, R), is_a(R, road)
• Q2: is_a(X, large_town), intersects(X, Y)
• Q3: is_a(X, large_town)
• true

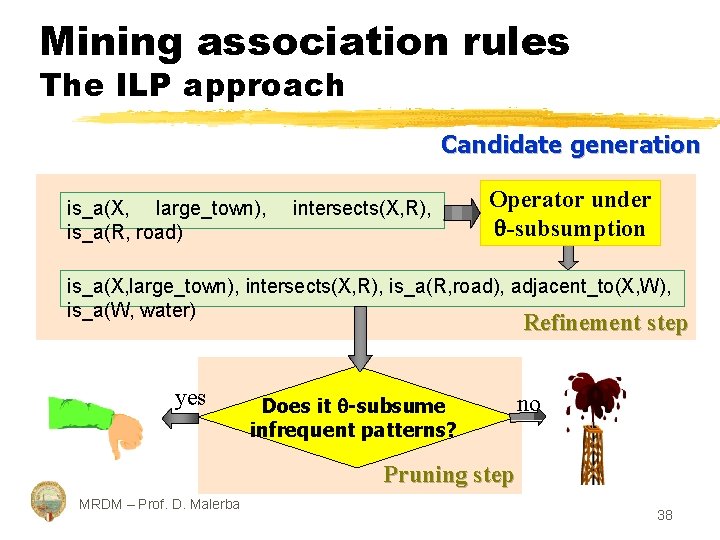

Mining association rules: the ILP approach
Candidate generation
• Refinement step (refinement operator under θ-subsumption):
is_a(X, large_town), intersects(X, R), is_a(R, road)
is refined into
is_a(X, large_town), intersects(X, R), is_a(R, road), adjacent_to(X, W), is_a(W, water)
• Pruning step: is the refined pattern θ-subsumed by (i.e. more specific than) an infrequent pattern? If yes, it is discarded; if no, it becomes a candidate.

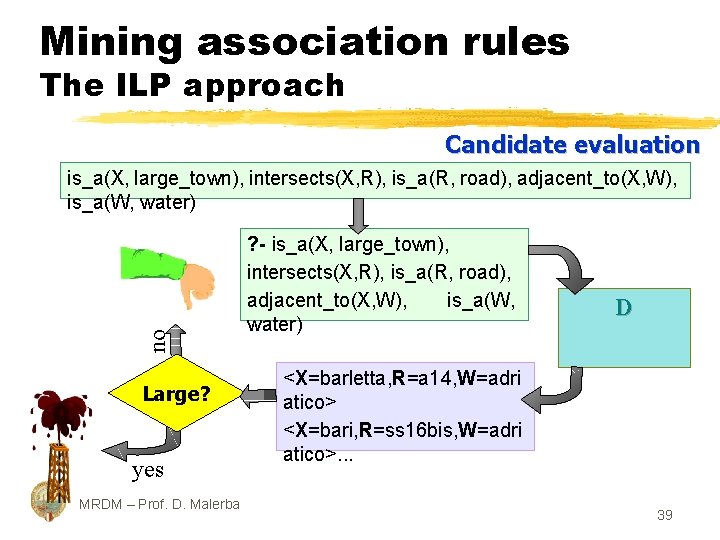

Mining association rules: the ILP approach
Candidate evaluation
• Is the candidate is_a(X, large_town), intersects(X, R), is_a(R, road), adjacent_to(X, W), is_a(W, water) large?
• It is evaluated by running it as a query against D:
?- is_a(X, large_town), intersects(X, R), is_a(R, road), adjacent_to(X, W), is_a(W, water).
Answer substitutions: <X=barletta, R=a14, W=adriatico>, <X=bari, R=ss16bis, W=adriatico>, …
• Large? yes / no
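The coverage test is query answering: find every substitution that makes all atoms of the candidate true in D. The Python sketch below does this by backtracking over a hypothetical fragment of D. Only barletta, bari, a14, ss16bis and adriatico come from the slide; altamura and ss96 are invented so that one town is not covered. Treating X as the key and computing largeness as covered towns over all large towns is an assumption, in the spirit of WARMR's key-based support.

```python
def is_var(term):
    """Prolog convention: names starting with an uppercase letter are variables."""
    return term[:1].isupper()

def answers(query, facts, theta=None):
    """Yield every substitution that makes all atoms of the query true in facts."""
    theta = theta or {}
    if not query:
        yield dict(theta)
        return
    first, rest = query[0], query[1:]
    for fact in facts:
        if fact[0] != first[0] or len(fact) != len(first):
            continue
        binding = dict(theta)
        if all(binding.setdefault(a, b) == b if is_var(a) else a == b
               for a, b in zip(first[1:], fact[1:])):
            yield from answers(rest, facts, binding)

# Hypothetical fragment of D.
facts = [
    ("is_a", "barletta", "large_town"), ("is_a", "bari", "large_town"),
    ("is_a", "altamura", "large_town"),
    ("intersects", "barletta", "a14"), ("intersects", "bari", "ss16bis"),
    ("intersects", "altamura", "ss96"),
    ("is_a", "a14", "road"), ("is_a", "ss16bis", "road"), ("is_a", "ss96", "road"),
    ("adjacent_to", "barletta", "adriatico"), ("adjacent_to", "bari", "adriatico"),
    ("is_a", "adriatico", "water"),
]
query = [("is_a", "X", "large_town"), ("intersects", "X", "R"), ("is_a", "R", "road"),
         ("adjacent_to", "X", "W"), ("is_a", "W", "water")]

subs = list(answers(query, facts))
for s in subs:
    print(s)
# Assumed key: the town X. Support = covered towns / all large towns.
towns = {f[1] for f in facts if f[0] == "is_a" and f[2] == "large_town"}
covered = {s["X"] for s in subs}
print(len(covered) / len(towns))
```

The atomset would then count as large if this ratio reaches minsup.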

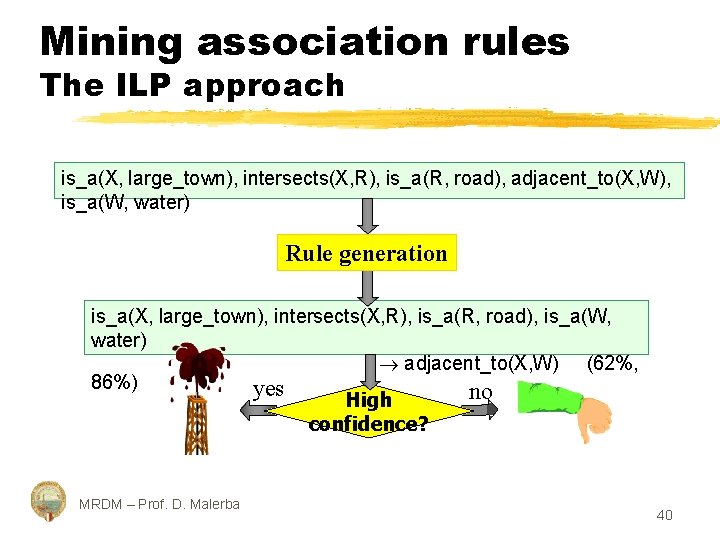

Mining association rules: the ILP approach
Rule generation
From the large atomset is_a(X, large_town), intersects(X, R), is_a(R, road), adjacent_to(X, W), is_a(W, water):
is_a(X, large_town), intersects(X, R), is_a(R, road), is_a(W, water) ⇒ adjacent_to(X, W) (62%, 86%)
• High confidence? yes / no

Conclusions and future work
• Multi-relational data mining: more data mining than logic program synthesis
 § choice of representation formalisms
 § input format more important than output format
 § data modelling, e.g. object-oriented data mining
 § new learning tasks and evaluation measures

Reference
Saso Dzeroski and Nada Lavrac, editors, Relational Data Mining, Springer-Verlag, September 2001
