Reinforcing Reachable Routes

Muralidhar H Sortur, Gujar Sujit Prakash, Debmalya Panigrahi

4/9/2005, COMPUTER COMMUNICATION NETWORKS

Agenda

- Routing
  - Multi-path routing
  - Reachability routing
- Reinforcement Learning in Routing
  - Q-routing
  - Ant-based routing
  - Modified Ant-based routing
- Experimental Results
- Suggestions for improvement

Routing

- Objectives
  - Minimize delay
  - Maximize throughput
- Obvious solution
  - Shortest (single) path routing!!!
  - Shortest with respect to some cost
    - Static costs
    - Dynamic costs

Single Path Routing

- Is this always a good choice?
- NO!!!
  - Imposes a routing tree on the available graph structure
  - Not capable of meeting multiple performance objectives
  - Severe oscillations in dynamic cost setting
  - Failure of optimal link is costly

Multi-Path Routing

- How to overcome these shortcomings?
- MULTI-PATH ROUTING
  - Multiple active paths between nodes
  - Loop detection required
- Design space: connection-oriented or connectionless, single metric or multi metric

Reachability Routing

- All loop-free paths between source and destination used
  - Exploration and exploitation
  - Hard reachability
    - All and only loop-free paths used
    - Does not have a practically viable solution
  - Soft reachability
    - All loop-free paths used

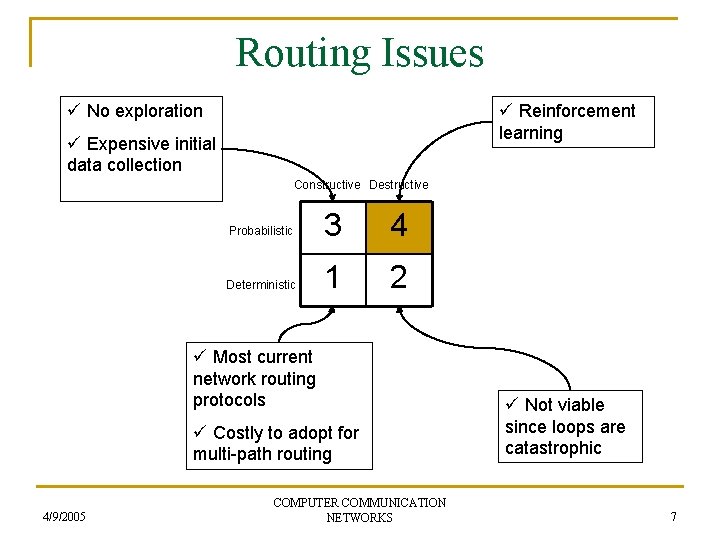

Routing Issues

| | Constructive | Destructive |
|---|---|---|
| Probabilistic | 3 | 4 |
| Deterministic | 1 | 2 |

Notes on the quadrants:

- No exploration
- Reinforcement learning
- Expensive initial data collection
- Most current network routing protocols
- Costly to adopt for multi-path routing
- Not viable since loops are catastrophic

Reinforcement Learning

- Populating routing tables is viewed as a problem of learning the entries
  - Agents = Routers
  - Action = Exploration by control packets
  - Reinforcement = Probabilities tweaked according to response from environment
  - Concurrent learning at all routers: multi-agent learning
- Probabilistic nature of routing table entries
  - Suitable for single path and multi-path routing


Q-Routing [Boyan-Littman, '94]

- One of the first RL algorithms for routing
- Each router x maintains $Q_x(d, i_s)$, a metric denoting the estimated time for delivery of a packet to destination d on interface $i_s$
  - Deterministic or probabilistic routing possible

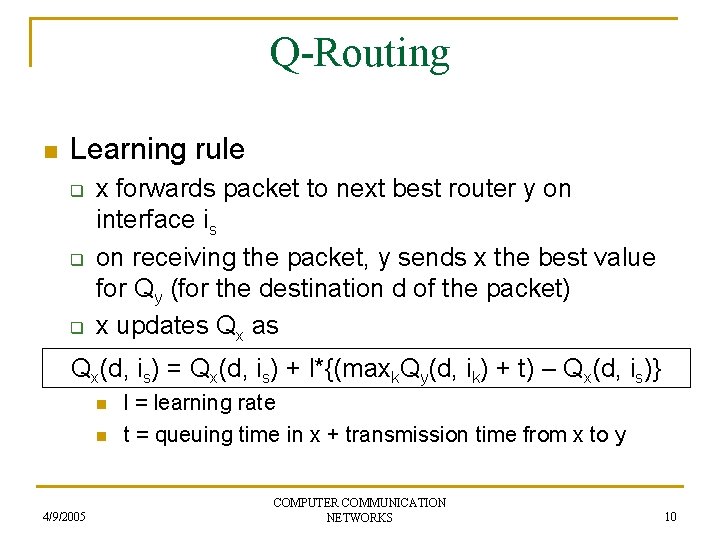

Q-Routing

- Learning rule
  - x forwards the packet to the next best router y on interface $i_s$
  - On receiving the packet, y sends x its best (smallest) value of $Q_y$ for the destination d of the packet
  - x updates $Q_x$ as

$$Q_x(d, i_s) \leftarrow Q_x(d, i_s) + l \left[ \left( \min_k Q_y(d, i_k) + t \right) - Q_x(d, i_s) \right]$$

- where
  - l = learning rate
  - t = queuing time in x + transmission time from x to y
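The learning rule can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name `QRouter`, the interface labels and the initial estimates are hypothetical, and the "best" value reported by y is taken to be its smallest estimate, since Q is an estimated delivery time.

```python
from collections import defaultdict


class QRouter:
    """One router x holding Q_x(d, i): estimated delivery time to d via interface i."""

    def __init__(self, interfaces, learning_rate=0.5, initial_estimate=0.0):
        self.interfaces = list(interfaces)
        self.l = learning_rate  # "l" in the update rule
        # Rows are created lazily, one per destination (an assumption of this sketch).
        self.q = defaultdict(
            lambda: {i: initial_estimate for i in self.interfaces}
        )

    def best_interface(self, dest):
        """Deterministic routing: interface with the smallest estimated time."""
        row = self.q[dest]
        return min(row, key=row.get)

    def best_estimate(self, dest):
        """Value y sends back to x: its own smallest estimate for dest."""
        return min(self.q[dest].values())

    def update(self, dest, interface, neighbor_best, t):
        """Apply Q_x(d, i_s) += l * ((neighbor_best + t) - Q_x(d, i_s))."""
        old = self.q[dest][interface]
        self.q[dest][interface] = old + self.l * ((neighbor_best + t) - old)
        return self.q[dest][interface]


# Hypothetical numbers: x believes 10.0, y reports 4.0, hop delay t = 2.0.
x = QRouter(["i1", "i2"], learning_rate=0.5, initial_estimate=10.0)
y = QRouter(["j1"], initial_estimate=4.0)
new_value = x.update("d", "i1", y.best_estimate("d"), t=2.0)
print(new_value)  # 10 + 0.5 * ((4 + 2) - 10) = 8.0
```

Note that only the entry for the interface actually used is updated, which is why an improvement on an unused interface goes unnoticed.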

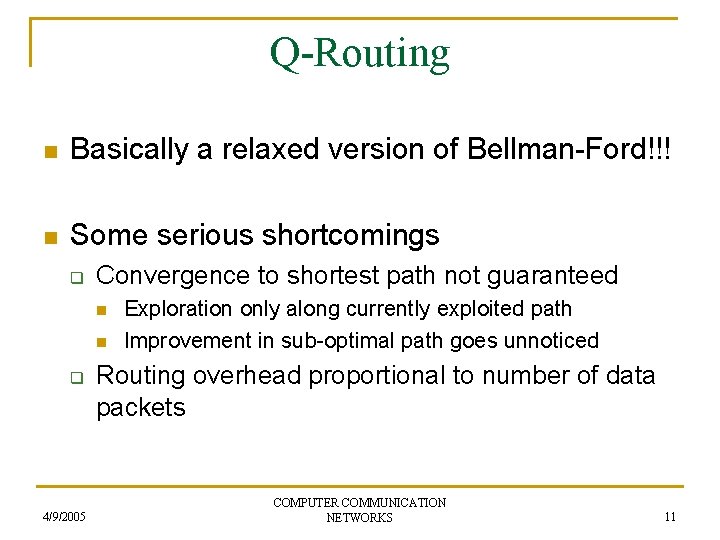

Q-Routing

- Basically a relaxed version of Bellman-Ford!!!
- Some serious shortcomings
  - Convergence to shortest path not guaranteed
    - Exploration only along currently exploited path
    - Improvement in sub-optimal path goes unnoticed
  - Routing overhead proportional to number of data packets


Ant-based Routing [Subramanian et al, '97]

- Exploration and exploitation decoupled
  - Exploration by small control packets called ants, generated by hosts to randomly chosen destinations
  - Exploitation by data packets

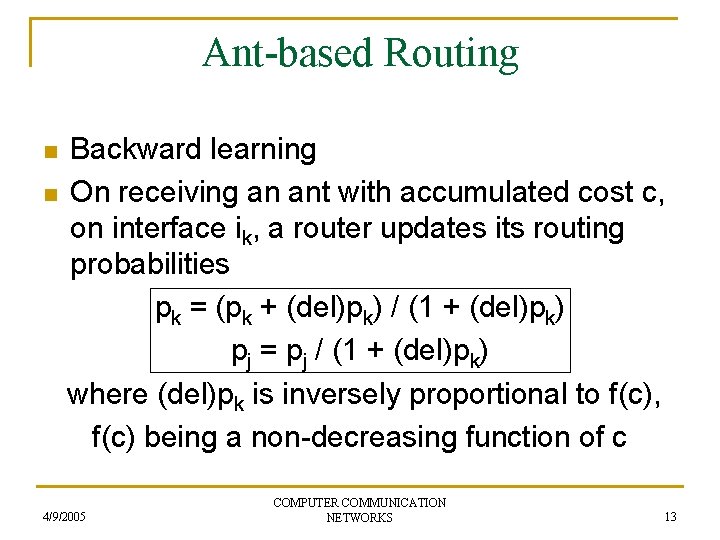

Ant-based Routing

- Backward learning
- On receiving an ant with accumulated cost c on interface $i_k$, a router updates its routing probabilities

$$p_k \leftarrow \frac{p_k + \Delta p_k}{1 + \Delta p_k}, \qquad p_j \leftarrow \frac{p_j}{1 + \Delta p_k} \quad (j \neq k)$$

- where $\Delta p_k$ is inversely proportional to $f(c)$, $f(c)$ being a non-decreasing function of c
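A minimal sketch of this update, to show that the probabilities stay normalized. The function name `reinforce`, the proportionality constant `gain` and the default choice f(c) = c are assumptions made for illustration only.

```python
def reinforce(probs, k, cost, gain=1.0, f=lambda c: c):
    """Return updated routing probabilities after an ant arrives on interface k.

    probs: dict mapping interface -> probability for one destination (sums to 1).
    delta = gain / f(cost) plays the role of (del)p_k; `gain` and the default
    f(c) = c are illustrative assumptions, not values from the slides.
    """
    delta = gain / f(cost)
    return {
        i: (p + delta) / (1 + delta) if i == k else p / (1 + delta)
        for i, p in probs.items()
    }


# An ant with accumulated cost 2 arrives on interface "a": delta = 0.5.
updated = reinforce({"a": 0.5, "b": 0.5}, k="a", cost=2.0)
print(updated)                # "a" rises to 2/3, "b" falls to 1/3
print(sum(updated.values()))  # still sums to 1 (up to rounding)
```

Because every entry is divided by the same factor 1 + delta, the sum of the probabilities is unchanged, and a cheaper path (smaller c) gives a larger boost.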

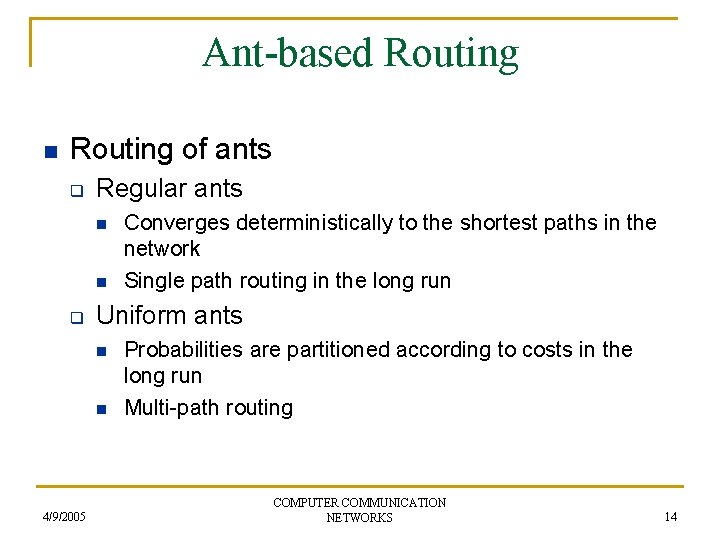

Ant-based Routing

- Routing of ants
  - Regular ants
    - Converges deterministically to the shortest paths in the network
    - Single path routing in the long run
  - Uniform ants
    - Probabilities are partitioned according to costs in the long run
    - Multi-path routing
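The two kinds of ants differ only in how the next hop is picked. The sketch below assumes the usual reading of Subramanian et al.: regular ants are forwarded according to the current routing probabilities, while uniform ants pick any interface with equal probability. The function name `next_hop` and the interface labels are hypothetical.

```python
import random


def next_hop(probs, kind, rng=random):
    """Pick the outgoing interface for an ant.

    probs: dict mapping interface -> routing probability for the ant's destination.
    kind:  "regular" follows the routing table, "uniform" ignores it.
    """
    interfaces = list(probs)
    if kind == "uniform":
        return rng.choice(interfaces)
    if kind == "regular":
        weights = [probs[i] for i in interfaces]
        return rng.choices(interfaces, weights=weights, k=1)[0]
    raise ValueError(f"unknown ant kind: {kind!r}")


table = {"a": 0.9, "b": 0.1}
print(next_hop(table, "regular"))  # mostly "a"
print(next_hop(table, "uniform"))  # "a" or "b" with equal chance
```

Regular ants reinforce what is already preferred, which pushes the table toward a single path; uniform ants keep sampling every interface, which is what keeps multiple paths alive.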

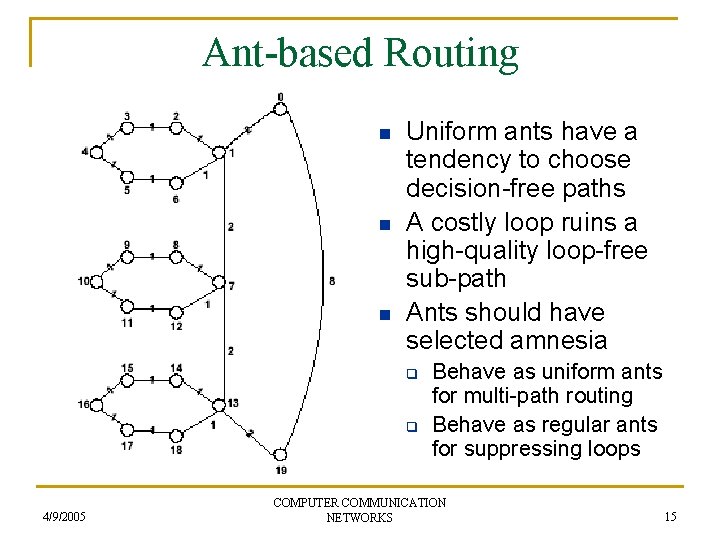

Ant-based Routing

- Uniform ants have a tendency to choose decision-free paths
- A costly loop ruins a high-quality loop-free sub-path
- Ants should have selective amnesia
  - Behave as uniform ants for multi-path routing
  - Behave as regular ants for suppressing loops


Modified Ant-based Routing [Varadarajan et al, '03]

- Introduce statistics table for each node
  - Remembers the number of ants generated and those that returned without delivery for each destination
  - Discard, for each destination, all interfaces that had a 100% delivery failure
  - Effectively tries to detect loops and discard interfaces leading to them
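One way to picture the statistics table is as a pair of counters per (destination, interface). The sketch below is an assumption about the bookkeeping, not the paper's data structure: the class name `StatsTable`, its method names, and the choice to keep interfaces that have no samples yet are all hypothetical.

```python
from collections import defaultdict


class StatsTable:
    """Per-node counters of ants sent and ants returned undelivered."""

    def __init__(self):
        # (destination, interface) -> [ants sent, ants returned without delivery]
        self.counts = defaultdict(lambda: [0, 0])

    def record_sent(self, dest, interface):
        self.counts[(dest, interface)][0] += 1

    def record_returned(self, dest, interface):
        self.counts[(dest, interface)][1] += 1

    def usable(self, dest, interfaces):
        """Drop interfaces whose ants for `dest` failed 100% of the time."""
        keep = []
        for i in interfaces:
            sent, returned = self.counts.get((dest, i), (0, 0))
            # Assumption: an interface with no samples is kept.
            if sent == 0 or returned < sent:
                keep.append(i)
        return keep


stats = StatsTable()
for _ in range(3):
    stats.record_sent("d", "i1")
    stats.record_returned("d", "i1")  # every ant on i1 came back
    stats.record_sent("d", "i2")
stats.record_returned("d", "i2")      # one of three ants on i2 came back
print(stats.usable("d", ["i1", "i2", "i3"]))  # ['i2', 'i3']
```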

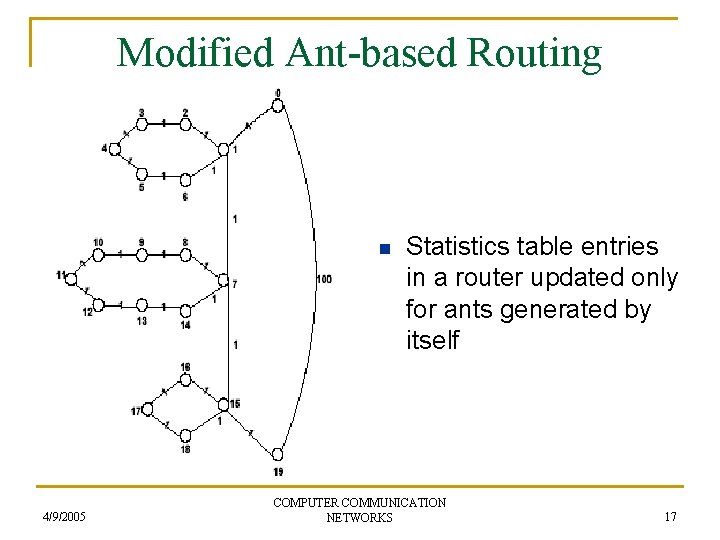

Modified Ant-based Routing

- Statistics table entries in a router updated only for ants generated by itself

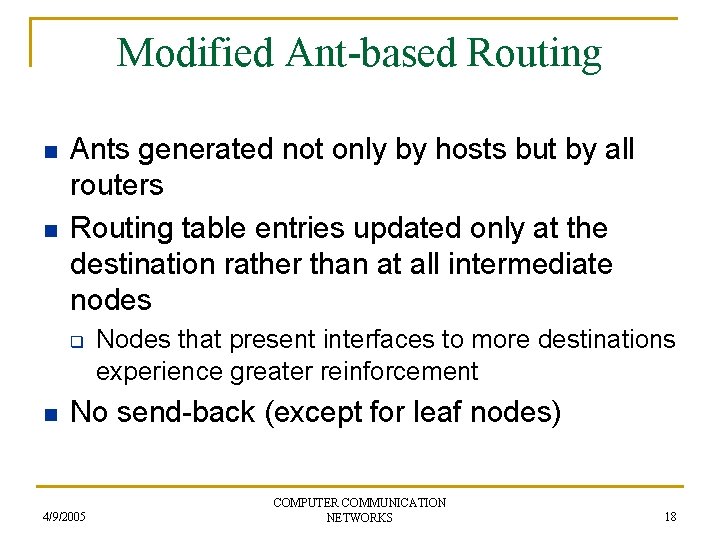

Modified Ant-based Routing

- Ants generated not only by hosts but by all routers
- Routing table entries updated only at the destination rather than at all intermediate nodes
  - Nodes that present interfaces to more destinations experience greater reinforcement
- No send-back (except for leaf nodes)

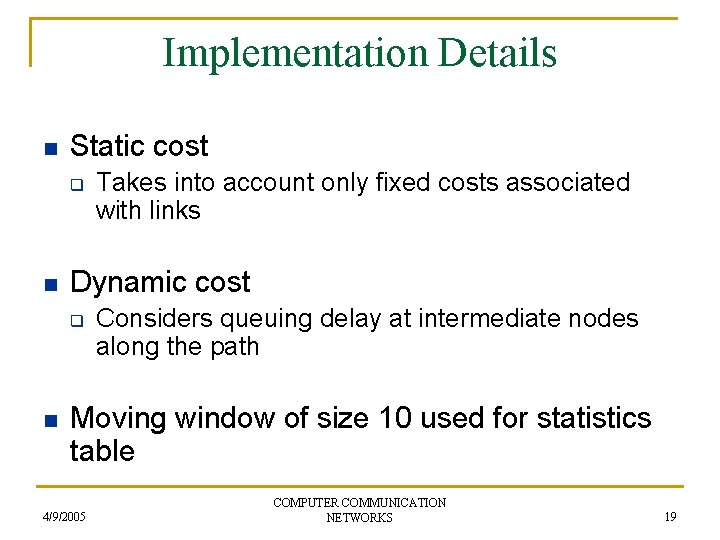

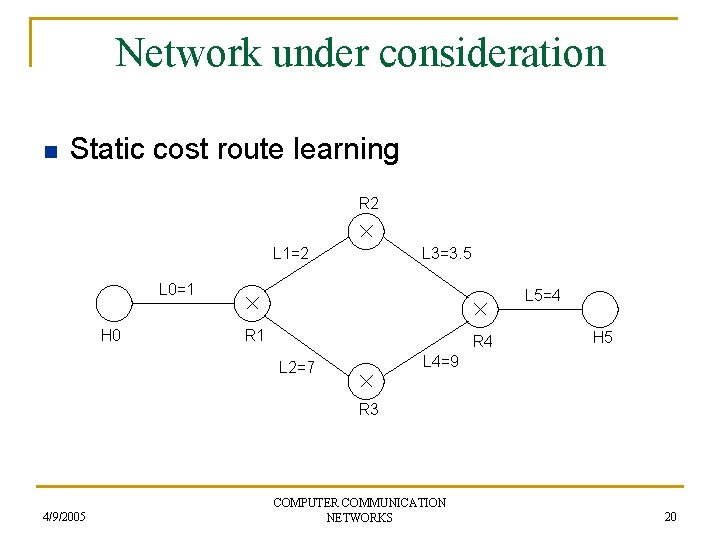

Implementation Details

- Static cost
  - Takes into account only fixed costs associated with links
- Dynamic cost
  - Considers queuing delay at intermediate nodes along the path
- Moving window of size 10 used for statistics table

Network under consideration

- Static cost route learning
- Nodes: hosts H0 and H5; routers R1, R2, R3 and R4
- Link costs: L0 = 1, L1 = 2, L2 = 7, L3 = 3.5, L4 = 9, L5 = 4

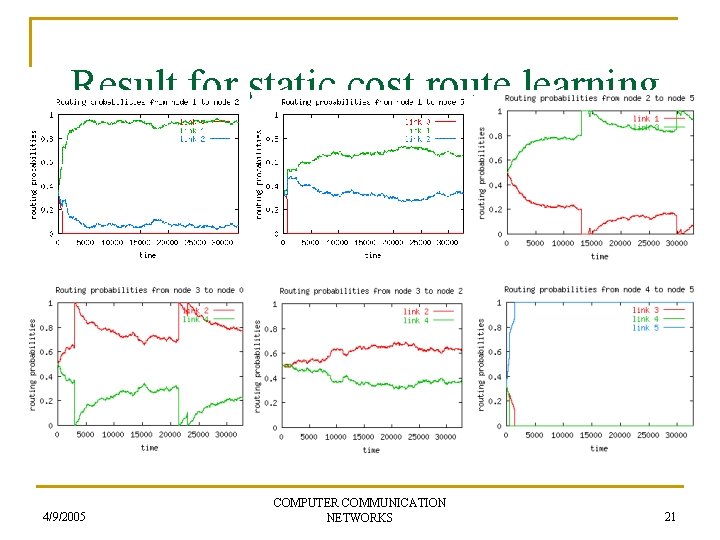

Result for static cost route learning

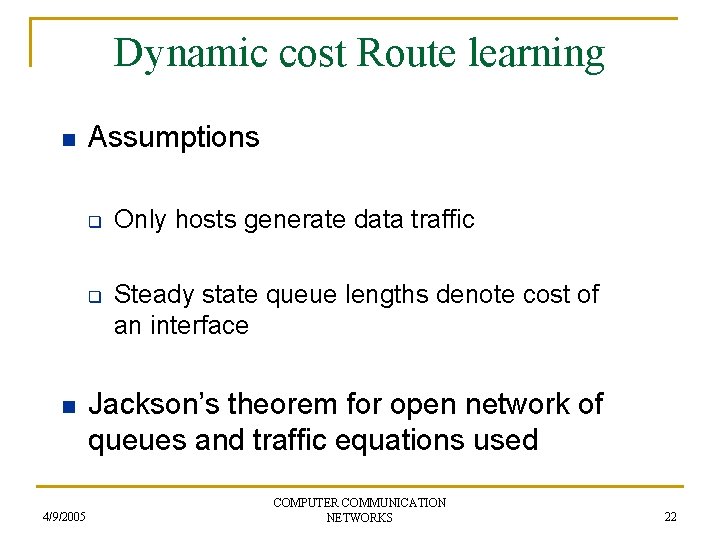

Dynamic cost route learning

- Assumptions
  - Only hosts generate data traffic
  - Steady state queue lengths denote cost of an interface
- Jackson's theorem for open network of queues and traffic equations used

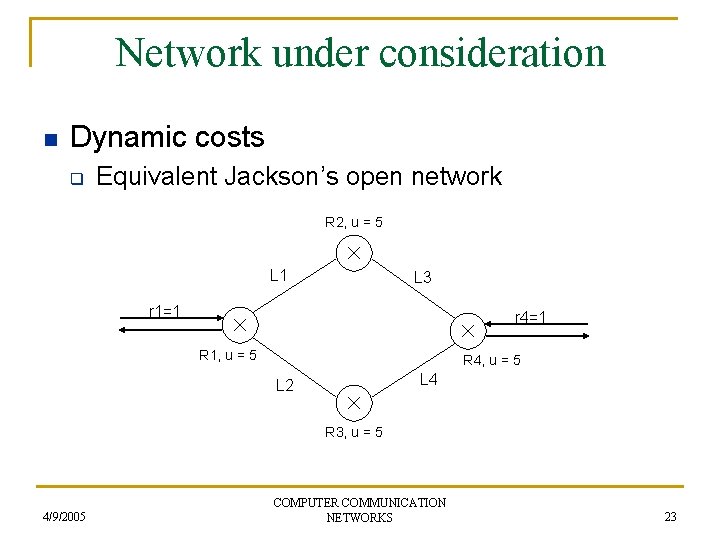

Network under consideration

- Dynamic costs
  - Equivalent Jackson's open network
- Routers R1, R2, R3 and R4, each with u = 5
- External arrivals: r1 = 1 at R1, r4 = 1 at R4
- Links: L1, L2, L3, L4
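To make the use of Jackson's theorem concrete, the sketch below solves the traffic equations $\lambda_i = r_i + \sum_j \lambda_j P_{ji}$ by fixed-point iteration and then treats each node as an M/M/1 queue with mean occupancy $\rho_i / (1 - \rho_i)$, $\rho_i = \lambda_i / u_i$. Several things here are assumptions and not taken from the slides: u is read as the service rate, only the flow entering at R1 is modelled, and the routing matrix (R1 splits evenly between R2 and R3, both of which forward to R4) is a hypothetical example.

```python
def solve_traffic(external, routing, iterations=1000, tol=1e-12):
    """Solve lambda_i = r_i + sum_j lambda_j * P[j][i] by fixed-point iteration."""
    nodes = list(external)
    lam = dict(external)
    for _ in range(iterations):
        new = {
            i: external[i]
            + sum(lam[j] * routing.get(j, {}).get(i, 0.0) for j in nodes)
            for i in nodes
        }
        if max(abs(new[i] - lam[i]) for i in nodes) < tol:
            return new
        lam = new
    raise RuntimeError("traffic equations did not converge")


def mean_occupancy(lam, service_rate):
    """M/M/1 mean number in system per node: rho / (1 - rho)."""
    out = {}
    for node, rate in lam.items():
        rho = rate / service_rate[node]
        if rho >= 1:
            raise ValueError(f"node {node} is unstable (rho = {rho})")
        out[node] = rho / (1 - rho)
    return out


# Hypothetical one-directional example: traffic enters at R1 and leaves at R4.
external = {"R1": 1.0, "R2": 0.0, "R3": 0.0, "R4": 0.0}
routing = {
    "R1": {"R2": 0.5, "R3": 0.5},  # assumed even split
    "R2": {"R4": 1.0},
    "R3": {"R4": 1.0},
}
service = {node: 5.0 for node in external}  # u = 5, read as a service rate

lam = solve_traffic(external, routing)
costs = mean_occupancy(lam, service)
print(lam)    # R1: 1.0, R2: 0.5, R3: 0.5, R4: 1.0
print(costs)  # R1 and R4: 0.25, R2 and R3: about 0.111
```

Shifting more of R1's traffic onto one branch raises that branch's occupancy, which is what makes the cost dynamic: the routing probabilities being learned feed back into the costs that the ants measure.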

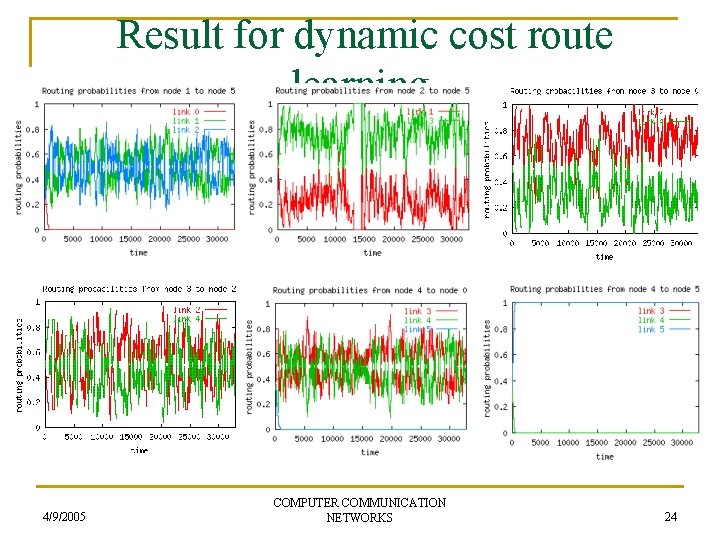

Result for dynamic cost route learning

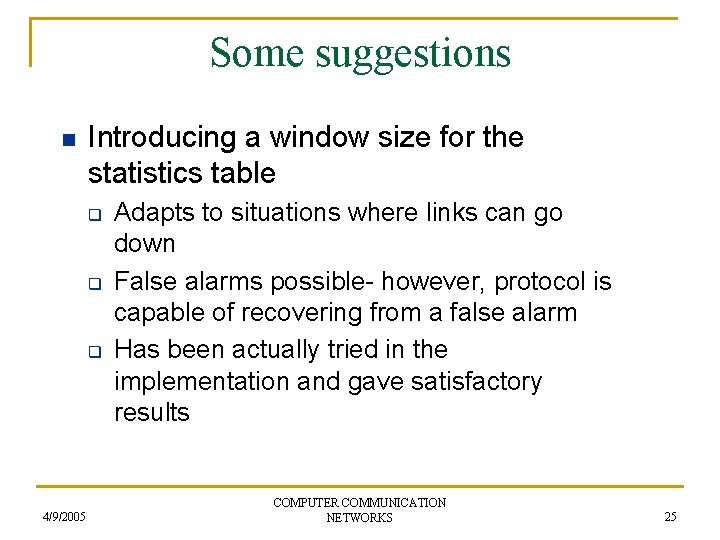

Some suggestions

- Introducing a window size for the statistics table
  - Adapts to situations where links can go down
  - False alarms possible; however, the protocol is capable of recovering from a false alarm
  - Has actually been tried in the implementation and gave satisfactory results
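A moving window can be sketched with a bounded deque that remembers only the most recent ant outcomes for one (destination, interface) pair. The class name `WindowedFailures` and the rule of discarding only once the window is full of failures are assumptions made for this illustration; the window size of 10 is the value quoted in the implementation details.

```python
from collections import deque


class WindowedFailures:
    """Recent ant outcomes for one (destination, interface) pair."""

    def __init__(self, window=10):
        # True means the ant returned without delivery; old entries fall off.
        self.outcomes = deque(maxlen=window)

    def record(self, failed):
        self.outcomes.append(bool(failed))

    def discarded(self):
        """Assumed rule: discard only when the whole window is failures."""
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and all(self.outcomes)


link = WindowedFailures(window=10)
for _ in range(10):
    link.record(failed=True)
print(link.discarded())  # True: ten failures in a row (link down, or a false alarm)
link.record(failed=False)
print(link.discarded())  # False: one delivery inside the window lifts the discard
```

With cumulative counters a single early success would mask a link that later goes down; with a window, old successes age out, and a false alarm is cleared by the next delivered ant.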

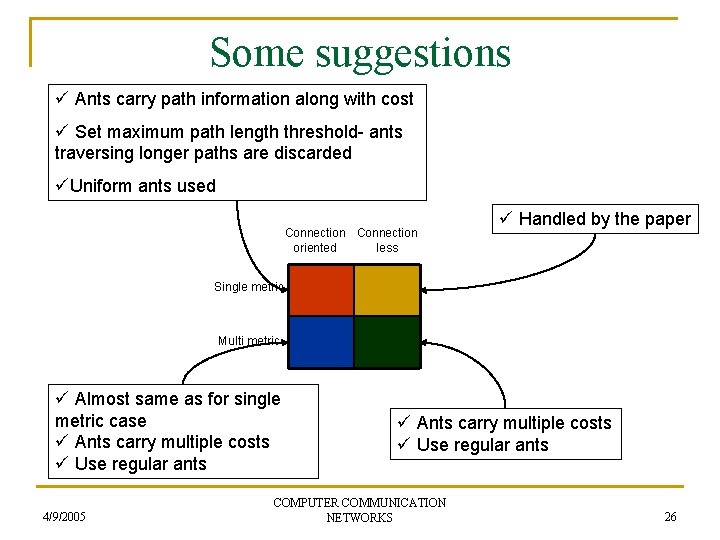

Some suggestions

Cases considered: connection-oriented or connectionless, single metric or multi metric.

- Ants carry path information along with cost; set a maximum path length threshold (ants traversing longer paths are discarded); uniform ants used
- Handled by the paper
- Almost same as for single metric case; ants carry multiple costs; use regular ants
- Ants carry multiple costs; use regular ants

References

- Srinidhi Varadarajan, Naren Ramakrishnan and Muthukumar Thirunavukkarasu, Reinforcing Reachable Routes. In Computer Networks, Vol. 43, No. 3, pages 389-416, Oct 2003
- Devika Subramanian, Johnny Chen and Peter Druschel, Ants and Reinforcement Learning: A Case Study in Routing in Dynamic Networks. In Proceedings of the 15th International Joint Conference on Artificial Intelligence (IJCAI '97), pages 832-839. Morgan Kaufmann, San Francisco, CA, 1997
- Justin A. Boyan and Michael L. Littman, Packet Routing in Dynamically Changing Networks: A Reinforcement Learning Approach. In Advances in Neural Information Processing Systems 6 (NIPS 6), pages 671-678. Morgan Kaufmann, San Francisco, CA, 1994

THANK YOU
