Reinforcement Learning Presented by: Kyle Feuz

Outline Motivation MDPs RL Model-Based Model-Free Challenges Q-Learning SARSA

Examples Pac-Man Spider

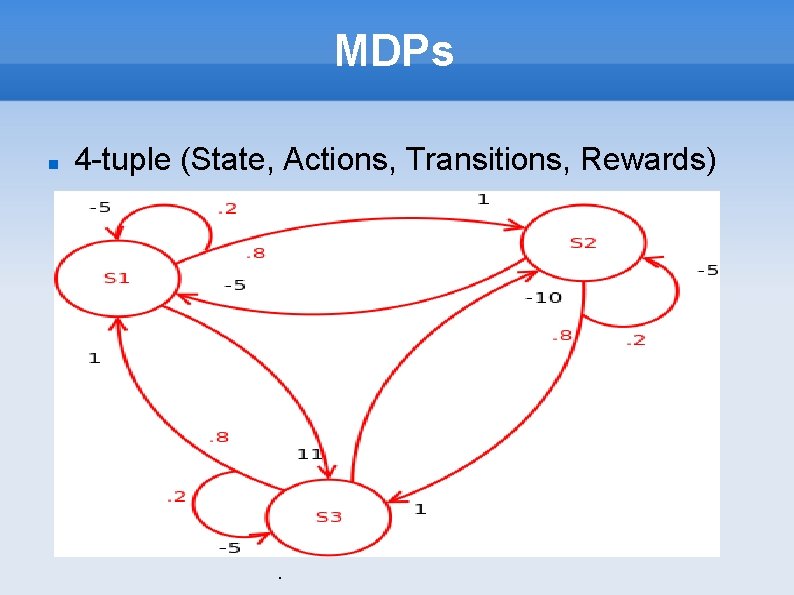

MDPs 4-tuple (States, Actions, Transitions, Rewards).
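The 4-tuple can be written down directly as a small data structure. The sketch below is only an illustration: the class name `MDP`, the dictionary layouts, and the two-state toy problem are assumptions made for this example, not something taken from the slides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MDP:
    """Hypothetical container for the (States, Actions, Transitions, Rewards) tuple."""
    states: tuple
    actions: tuple
    transitions: dict  # (s, a) -> {s_next: probability}
    rewards: dict      # (s, a, s_next) -> reward; missing entries mean 0

    def is_valid(self, tol=1e-9):
        """Check that every (s, a) transition distribution sums to 1."""
        return all(
            abs(sum(dist.values()) - 1.0) <= tol
            for dist in self.transitions.values()
        )


# Made-up two-state example: moving from "left" to "right" pays 1.
toy = MDP(
    states=("left", "right"),
    actions=("stay", "move"),
    transitions={
        ("left", "stay"): {"left": 1.0},
        ("left", "move"): {"right": 0.9, "left": 0.1},
        ("right", "stay"): {"right": 1.0},
        ("right", "move"): {"left": 0.9, "right": 0.1},
    },
    rewards={("left", "move", "right"): 1.0},
)
```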

Important Terms Policy Reward Function Value Function Model

Model-Based RL Learn transition function Learn expected rewards Compute the optimal policy
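The three steps above can be sketched as follows, assuming a small tabular problem. Transition probabilities and expected rewards are estimated from counts over observed `(s, a, r, s_next)` samples, and the policy is then computed with value iteration. The function names, the discount `gamma`, the fixed number of sweeps, and the sample data are all assumptions for illustration.

```python
from collections import defaultdict


def estimate_model(experience):
    """Estimate T(s, a, s') and R(s, a) from (s, a, r, s_next) samples."""
    counts = defaultdict(lambda: defaultdict(int))
    reward_sums = defaultdict(float)
    for s, a, r, s_next in experience:
        counts[(s, a)][s_next] += 1
        reward_sums[(s, a)] += r
    T, R = {}, {}
    for sa, nexts in counts.items():
        n = sum(nexts.values())
        T[sa] = {s2: c / n for s2, c in nexts.items()}  # empirical frequencies
        R[sa] = reward_sums[sa] / n                     # mean observed reward
    return T, R


def value_iteration(states, actions, T, R, gamma=0.9, sweeps=100):
    """Compute state values and a greedy policy from the learned model."""
    V = {s: 0.0 for s in states}

    def q(s, a):
        if (s, a) not in T:
            return 0.0  # assumption: untried pairs are worth 0
        return R[(s, a)] + gamma * sum(p * V[s2] for s2, p in T[(s, a)].items())

    for _ in range(sweeps):
        V = {s: max(q(s, a) for a in actions) for s in states}
    policy = {s: max(actions, key=lambda a: q(s, a)) for s in states}
    return V, policy


# Made-up experience: "go" from A reaches B, and "go" in B pays 1.
experience = [
    ("A", "go", 0.0, "B"), ("B", "go", 1.0, "B"),
    ("A", "wait", 0.0, "A"), ("B", "wait", 0.0, "B"),
]
T, R = estimate_model(experience)
V, policy = value_iteration(("A", "B"), ("go", "wait"), T, R)
```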

Model-Free RL Learn expected rewards/values Skip learning transition function Trade-offs?

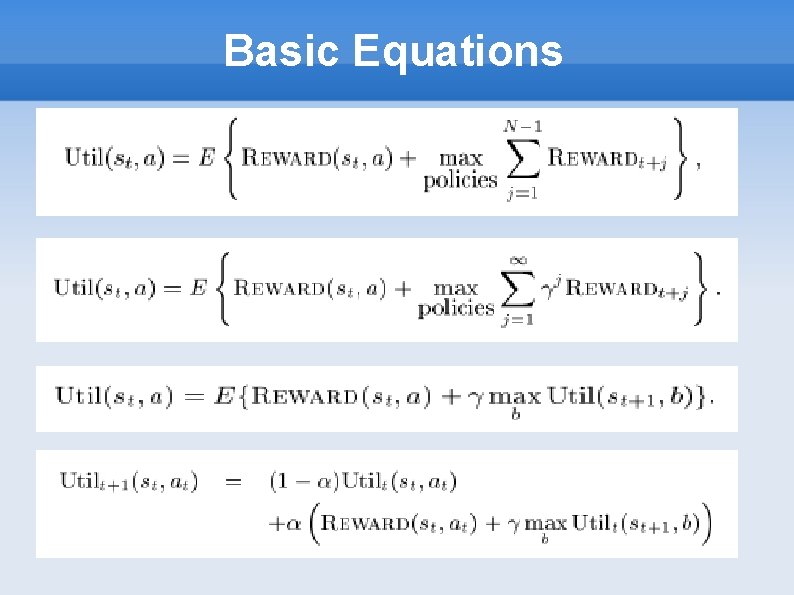

Basic Equations

Examples Pac-Man Spider Mario

Q-Learning Demo Video

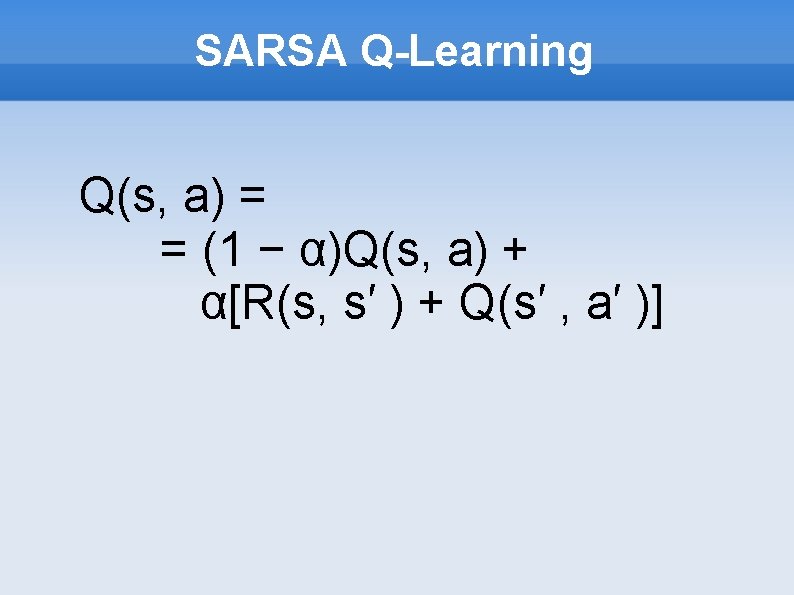

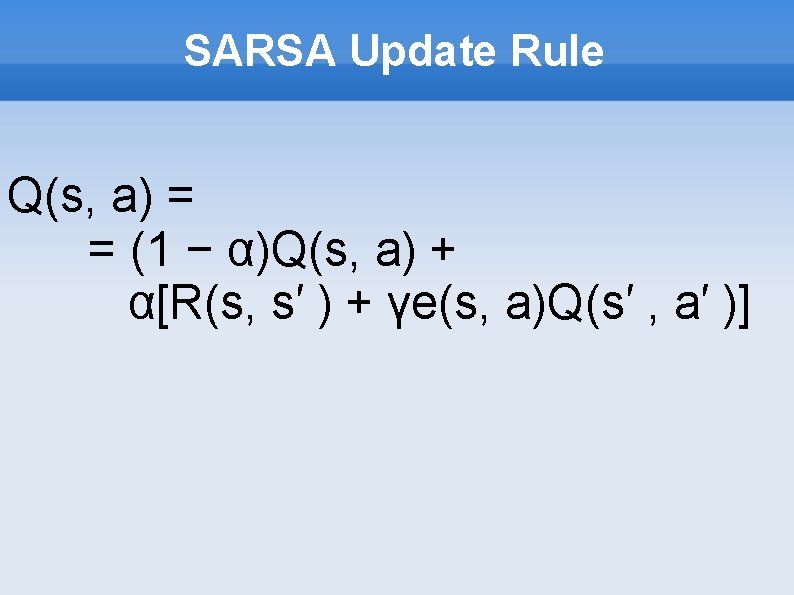

SARSA Q-Learning $Q(s, a) = (1 - \alpha) Q(s, a) + \alpha [R(s, s') + Q(s', a')]$
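A minimal tabular sketch of the two updates, assuming Q is stored in a dictionary keyed by `(state, action)`. SARSA bootstraps from the action actually taken next, while Q-learning bootstraps from the best next action. The function names and the `gamma` parameter are assumptions; `gamma` defaults to 1.0 so the SARSA function matches the undiscounted rule as written on the slide.

```python
from collections import defaultdict


def sarsa_update(Q, s, a, r, s_next, a_next, alpha=0.1, gamma=1.0):
    """On-policy update: the target uses the next action actually chosen."""
    target = r + gamma * Q[(s_next, a_next)]
    Q[(s, a)] = (1 - alpha) * Q[(s, a)] + alpha * target


def q_learning_update(Q, s, a, r, s_next, actions, alpha=0.1, gamma=1.0):
    """Off-policy update: the target uses the greedy next action."""
    target = r + gamma * max(Q[(s_next, b)] for b in actions)
    Q[(s, a)] = (1 - alpha) * Q[(s, a)] + alpha * target


def fresh_table():
    """Made-up starting values for the comparison below."""
    Q = defaultdict(float)
    Q[("s1", "left")] = 1.0
    Q[("s1", "right")] = 2.0
    return Q


# Same transition, two update rules: the agent will go "left" next,
# although "right" currently has the higher estimate.
Q_sarsa, Q_qlearn = fresh_table(), fresh_table()
sarsa_update(Q_sarsa, "s0", "right", 0.5, "s1", "left", alpha=0.5)
q_learning_update(Q_qlearn, "s0", "right", 0.5, "s1", ("left", "right"), alpha=0.5)
```

With these made-up numbers the SARSA estimate for `("s0", "right")` ends up lower than the Q-learning one, because SARSA accounts for the non-greedy action the agent is about to take.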

Challenges Explore vs. Exploit State Space representation Training Time Multiagent Learning Moving Target Competitive or Cooperative
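One common way to handle the explore vs. exploit trade-off is epsilon-greedy action selection, sketched below. The slides do not say which exploration strategy was used, so this is an illustrative choice; the function name, the dictionary-based Q table, and the default `epsilon` are assumptions.

```python
import random


def epsilon_greedy(Q, state, actions, epsilon=0.1, rng=random):
    """With probability epsilon explore at random, otherwise exploit Q."""
    if rng.random() < epsilon:
        return rng.choice(actions)  # explore
    # exploit: pick the action with the highest current estimate
    return max(actions, key=lambda a: Q.get((state, a), 0.0))


# Made-up Q table for a single state.
Q = {("s0", "left"): 0.2, ("s0", "right"): 0.7}
greedy_choice = epsilon_greedy(Q, "s0", ["left", "right"], epsilon=0.0)
random_choice = epsilon_greedy(Q, "s0", ["left", "right"], epsilon=1.0)
```

Setting `epsilon` to 0 gives pure exploitation and 1 gives pure exploration; in practice the value is often decayed over training.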

Transfer Learning for Reinforcement Learning on a Physical Robot Applied TL and RL on Nao robot TL using the Q-value reuse approach RL uses SARSA variant State space is represented via CMAC Neural Network inspired by the cerebellum Acts as an associative memory Allows agents to generalize the state space
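To show how a CMAC generalizes over the state space, here is a minimal tile-coding sketch: several offset grids (tilings) cover the input, a value is the sum of the weights of the tiles that contain the input, and nearby inputs share some of those tiles. This is not the configuration used on the robot; the class name `TinyCMAC`, the number of tilings, the tile width, and the learning rate are all assumptions.

```python
from collections import defaultdict


class TinyCMAC:
    """Hypothetical minimal CMAC: offset tilings with one weight per tile."""

    def __init__(self, n_tilings=4, tile_width=1.0):
        self.n_tilings = n_tilings
        self.tile_width = tile_width
        self.weights = defaultdict(float)

    def active_tiles(self, x):
        """Return one tile per tiling for the input vector x."""
        tiles = []
        for t in range(self.n_tilings):
            offset = t * self.tile_width / self.n_tilings  # shift each tiling
            coords = tuple(int((xi + offset) // self.tile_width) for xi in x)
            tiles.append((t, coords))
        return tiles

    def predict(self, x):
        return sum(self.weights[tile] for tile in self.active_tiles(x))

    def update(self, x, target, alpha=0.5):
        """Spread a fraction alpha of the prediction error over the active tiles."""
        error = target - self.predict(x)
        step = alpha * error / self.n_tilings
        for tile in self.active_tiles(x):
            self.weights[tile] += step


cmac = TinyCMAC()
cmac.update((0.5, 0.5), target=1.0, alpha=1.0)
near = cmac.predict((0.3, 0.3))  # shares some tiles with the trained point
far = cmac.predict((5.0, 5.0))   # shares none
```

After a single update, the nearby point receives part of the learned value while the distant point is unaffected, which is the generalization property the slide refers to.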

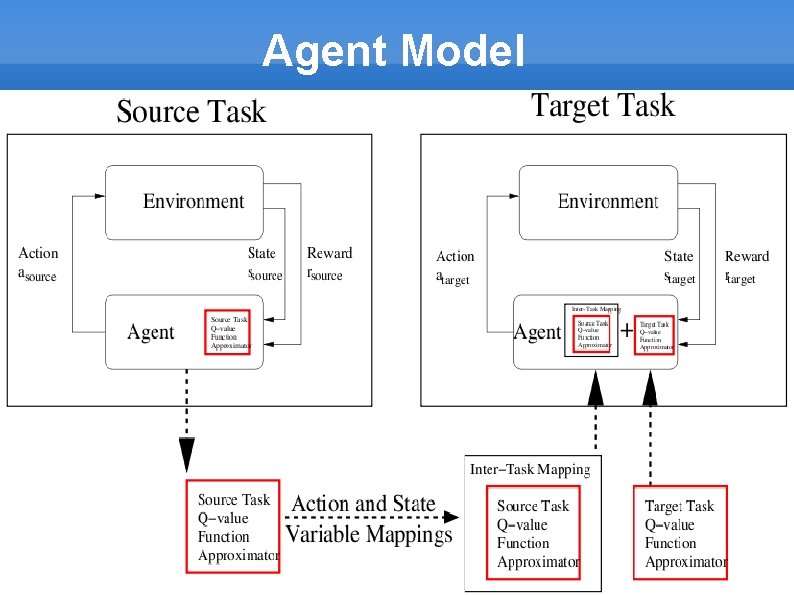

Agent Model

SARSA Update Rule $Q(s, a) = (1 - \alpha) Q(s, a) + \alpha [R(s, s') + \gamma e(s, a) Q(s', a')]$

Q-Value Reuse $Q(s, a) = Q_{\text{source}}(\chi_X(s), \chi_A(a)) + Q_{\text{target}}(s, a)$
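The reuse equation can be sketched as a function that adds a source-task lookup, reached through the inter-task mappings, to the target-task table. The helper name `make_reuse_q`, the dictionary tables, and the toy mappings below are assumptions for illustration; in this sketch the source table is treated as fixed and only the target table would be updated during learning.

```python
def make_reuse_q(q_source, q_target, chi_x, chi_a):
    """Return Q(s, a) = Q_source(chi_X(s), chi_A(a)) + Q_target(s, a)."""
    def q(s, a):
        source_value = q_source.get((chi_x(s), chi_a(a)), 0.0)
        return source_value + q_target.get((s, a), 0.0)
    return q


# Made-up mappings: the target state carries an extra feature that the
# source task lacks, and the target action "hit_hard" maps to source "hit".
chi_x = lambda s: s[0]
chi_a = lambda a: {"hit_hard": "hit", "hit_soft": "hit"}.get(a, a)

q_source = {("near", "hit"): 2.0}                 # learned in the source task
q_target = {(("near", "windy"), "hit_hard"): 0.5}  # learned in the target task

q = make_reuse_q(q_source, q_target, chi_x, chi_a)
combined = q(("near", "windy"), "hit_hard")
source_only = q(("near", "windy"), "hit_soft")
```

Before any target learning has happened, the combined value equals the mapped source value, so the agent starts from the source task's knowledge.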

Experimental Setup Seated Nao robot Hit the ball at a 45° angle 5 Actions in Source – 9 Actions in Target

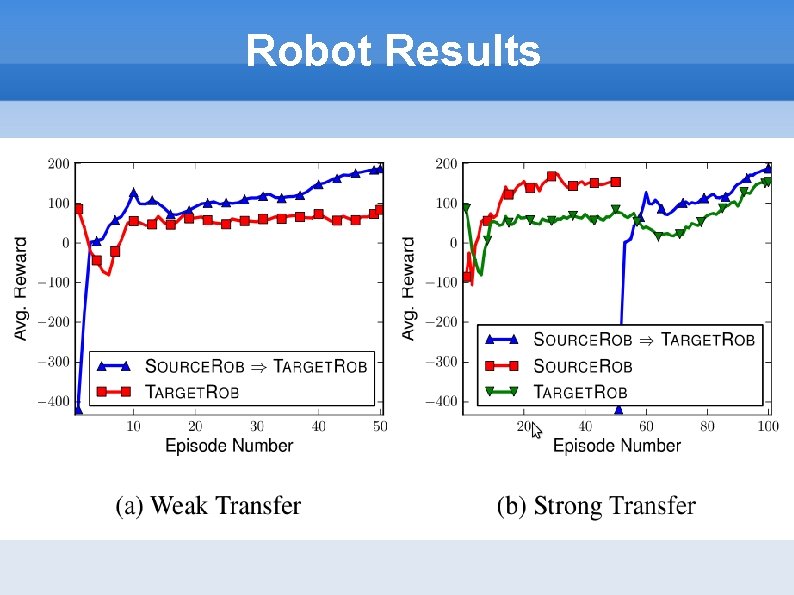

Robot Results

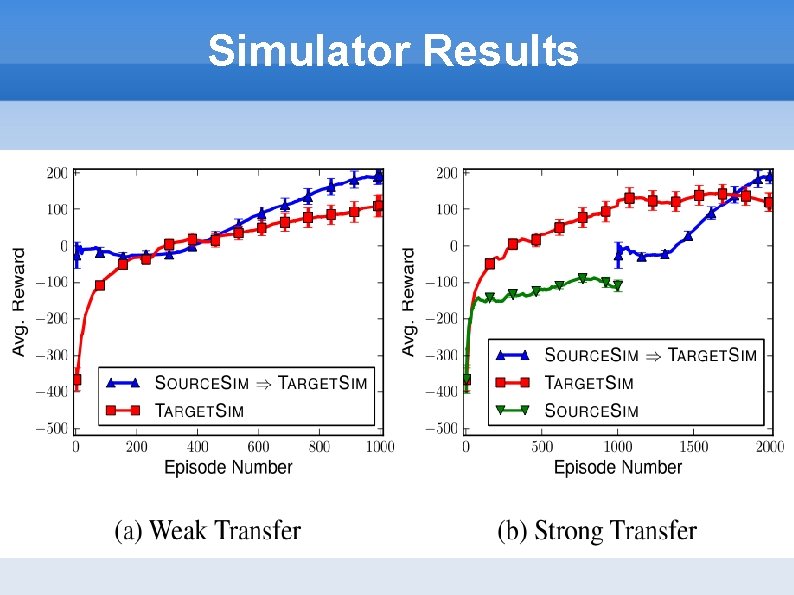

Simulator Results

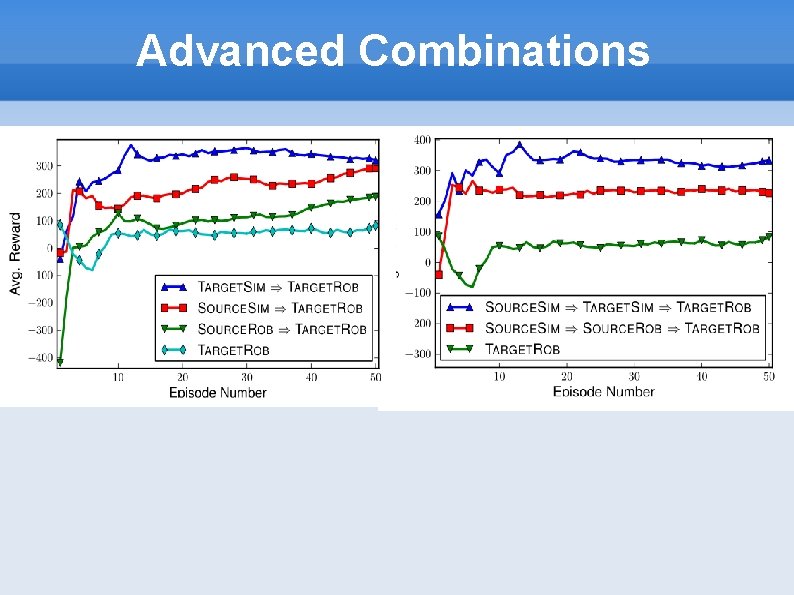

Advanced Combinations

Examples Pac-Man Spider Mario Q-Learning Penalty Kick Others

References and Resources rl repository rl-community rl on PBWorks rl warehouse Reinforcement Learning: An Introduction Artificial Intelligence: A Modern Approach How to Make Software Agents do the Right Thing

Questions?

- Slides: 25