
Reinforcement Learning • Michael Roberts • With material from: Reinforcement Learning: An Introduction, Sutton & Barto (1998)

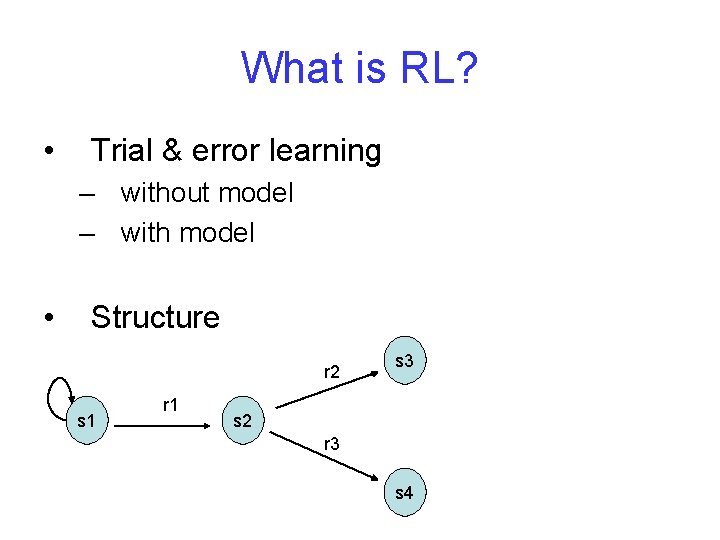

What is RL? • Trial & error learning – without model – with model • Structure: a sequence of states and rewards, $s_1, r_1, s_2, r_2, s_3, r_3, s_4$

RL vs. Supervised Learning • Evaluative vs. Instructional feedback • Role of exploration • On-line performance

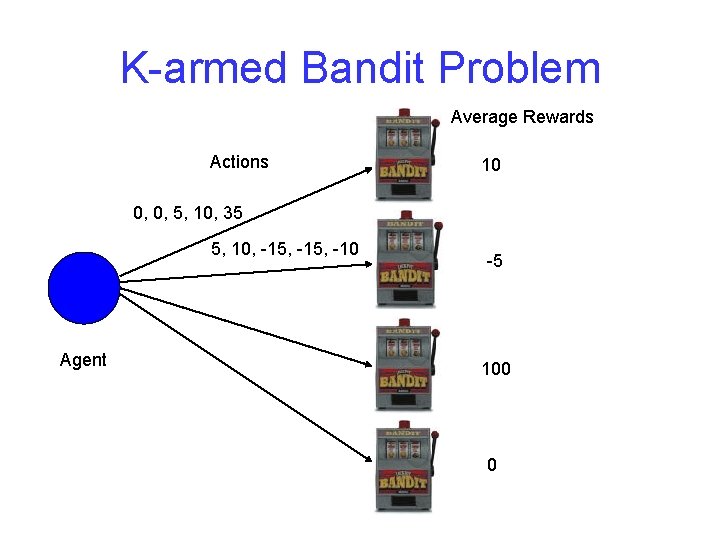

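K-armed Bandit Problem • [Diagram: an Agent choosing among Actions, each with a list of observed rewards and an Average Reward. Reward lists shown: 0, 0, 5, 10, 35 and 5, 10, -15, -10. Average rewards shown: 10, -5, 100, 0.]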

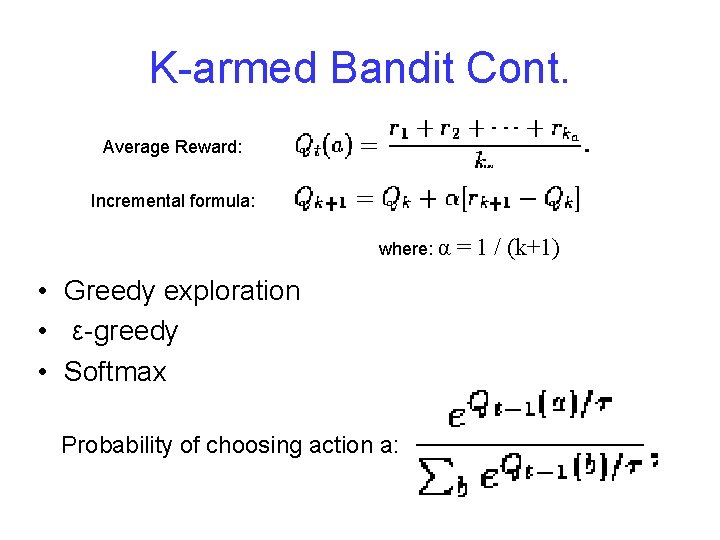

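K-armed Bandit Cont. • Average reward: $Q_k = \frac{r_1 + r_2 + \cdots + r_k}{k}$ • Incremental formula: $Q_{k+1} = Q_k + \alpha \, [r_{k+1} - Q_k]$, where $\alpha = 1 / (k+1)$ • Greedy exploration • ε-greedy • Softmax: probability of choosing action $a$: $P(a) = \frac{e^{Q(a)/\tau}}{\sum_b e^{Q(b)/\tau}}$, where $\tau$ is a temperature parameter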
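The incremental formula and the exploration rules on this slide can be sketched in a few lines of Python. This is a minimal illustration, not material from the slides: the Gaussian reward model, the function names, and the parameter defaults are all assumptions chosen for the example.

```python
import math
import random


def update_average(q, k, reward):
    """Incremental mean: q averages k rewards; return the average of k + 1."""
    return q + (reward - q) / (k + 1)


def epsilon_greedy(q_values, epsilon, rng):
    """With probability epsilon pick a random arm, otherwise the best-looking arm."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def softmax_probs(q_values, temperature):
    """Gibbs/Boltzmann probabilities; a higher temperature explores more."""
    top = max(q_values)  # subtract the max for numerical stability
    weights = [math.exp((q - top) / temperature) for q in q_values]
    total = sum(weights)
    return [w / total for w in weights]


def run_bandit(true_means, steps=2000, epsilon=0.1, seed=0):
    """Play a bandit with Gaussian rewards (an assumed reward model)."""
    rng = random.Random(seed)
    q = [0.0] * len(true_means)
    counts = [0] * len(true_means)
    for _ in range(steps):
        a = epsilon_greedy(q, epsilon, rng)
        reward = rng.gauss(true_means[a], 1.0)
        q[a] = update_average(q[a], counts[a], reward)
        counts[a] += 1
    return q, counts
```

Feeding the rewards 0, 0, 5, 10, 35 through `update_average` one at a time yields 10, the same value as averaging them all at once, which is the point of the incremental form: no reward history needs to be stored.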

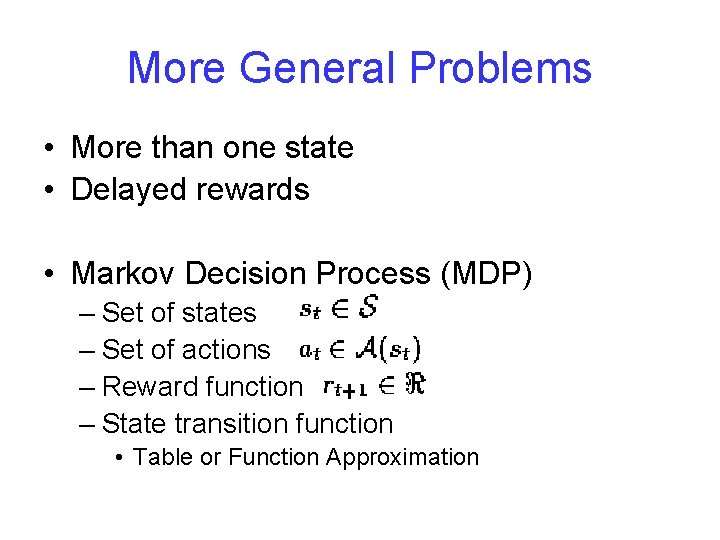

More General Problems • More than one state • Delayed rewards • Markov Decision Process (MDP) – Set of states – Set of actions – Reward function – State transition function • Table or Function Approximation

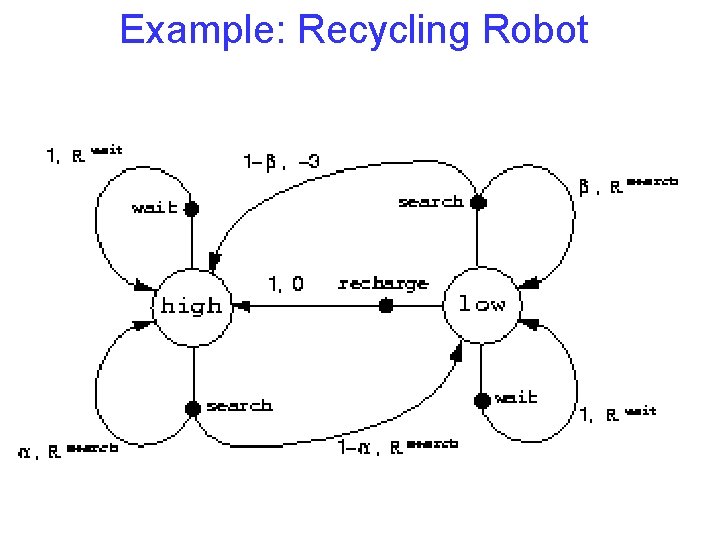

Example: Recycling Robot

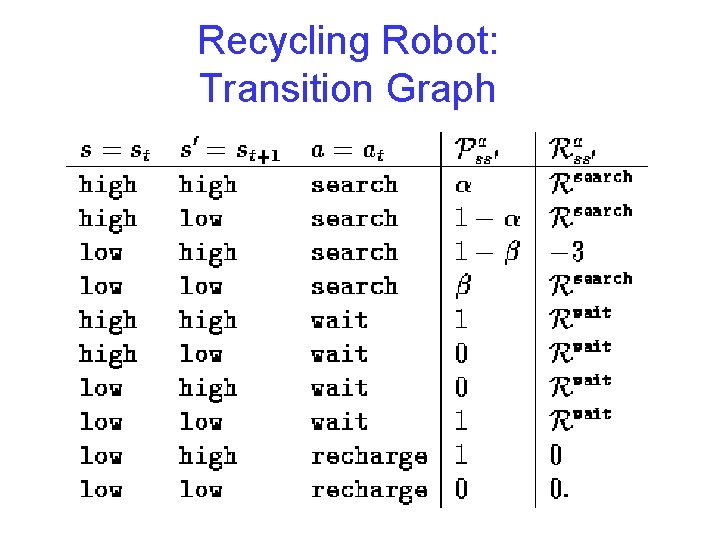

Recycling Robot: Transition Graph

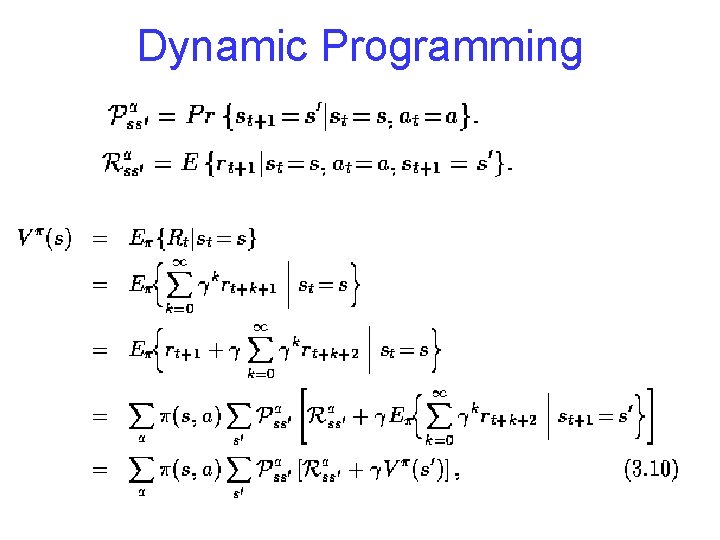

Dynamic Programming

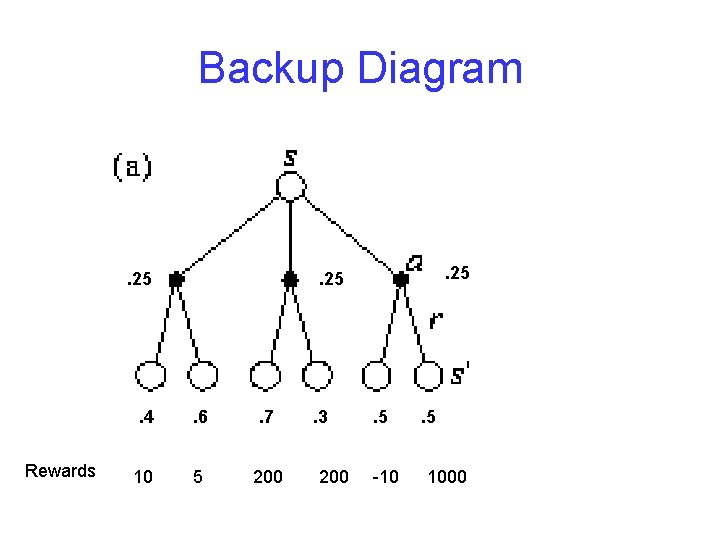

Backup Diagram • [Diagram: a backup tree with Rewards at the leaves. Branch labels shown: .25, .25, .4, .6, .7, .3, .5, .5. Reward labels shown: 10, 5, 200, 200, -10, 1000.]

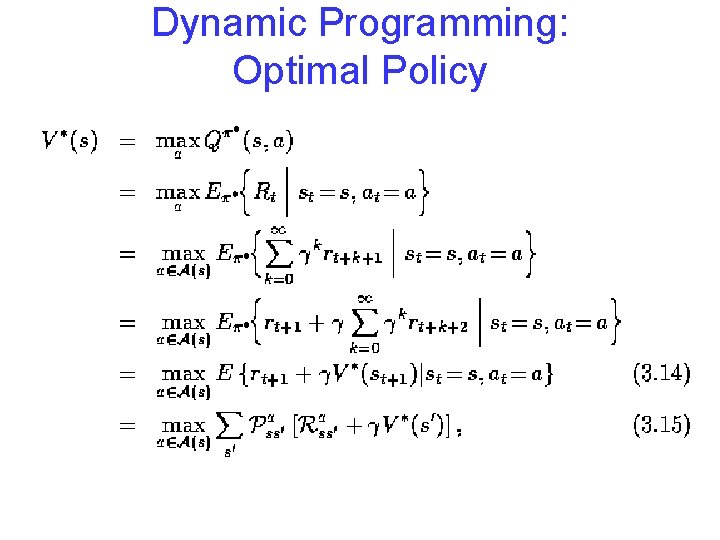

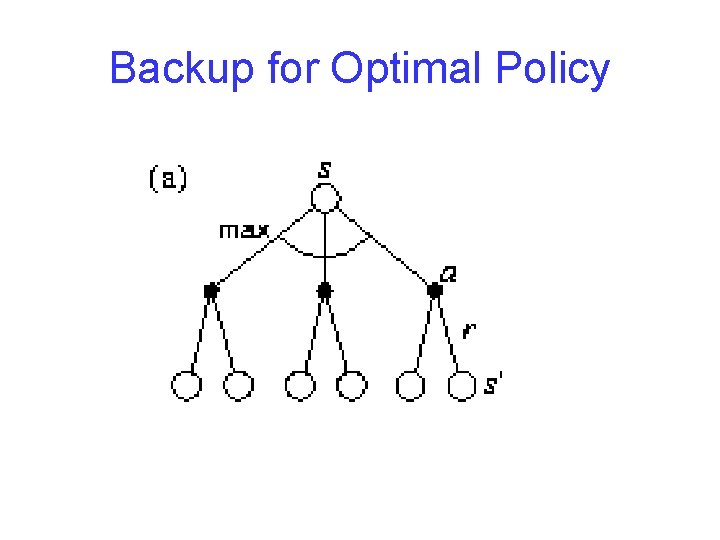

Dynamic Programming: Optimal Policy

Backup for Optimal Policy
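For reference, the backup for the optimal policy is usually written as the Bellman optimality equation. The form below follows the notation of Sutton & Barto (1998) and is an assumption about what the slide showed: $P^a_{ss'}$ is the probability of moving from state $s$ to $s'$ under action $a$, $R^a_{ss'}$ is the expected reward on that transition, and $\gamma$ is the discount factor.

```latex
V^*(s) = \max_a \sum_{s'} P^a_{ss'} \left[ R^a_{ss'} + \gamma V^*(s') \right]
```

The maximum over actions is what separates this from the backup for a fixed policy, which instead averages over the actions the policy would take.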

Performance Metrics • Eventual convergence to optimality • Speed of convergence to optimality • Regret (Kaelbling, L., Littman, M., & Moore, A., 1996)

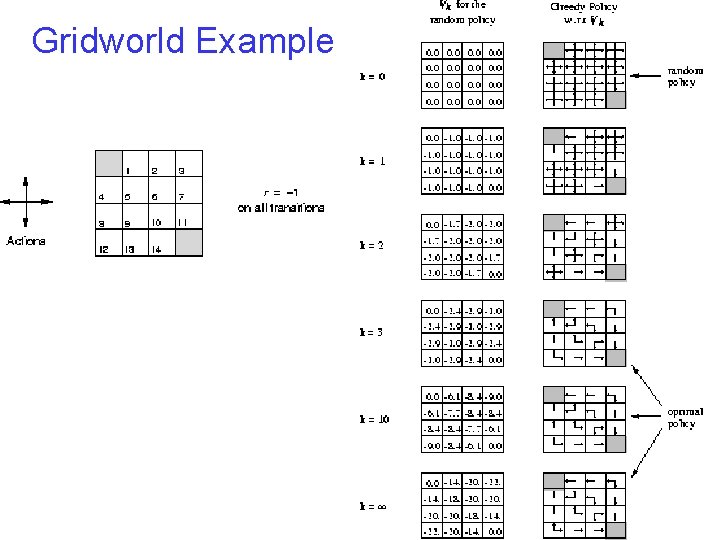

Gridworld Example

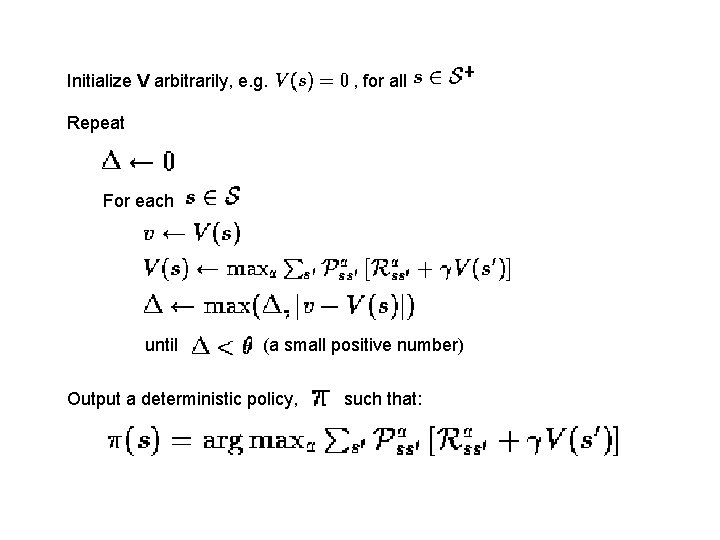

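Initialize $V$ arbitrarily, e.g., $V(s) = 0$ for all $s \in S^+$
Repeat
  $\Delta \leftarrow 0$
  For each $s \in S$:
    $v \leftarrow V(s)$
    $V(s) \leftarrow \max_a \sum_{s'} P^a_{ss'} [R^a_{ss'} + \gamma V(s')]$
    $\Delta \leftarrow \max(\Delta, |v - V(s)|)$
until $\Delta < \theta$ (a small positive number)
Output a deterministic policy $\pi$, such that: $\pi(s) = \arg\max_a \sum_{s'} P^a_{ss'} [R^a_{ss'} + \gamma V(s')]$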
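The loop above (initialize V, sweep the states until the largest change is small, then read off a deterministic policy) matches value iteration as given in Sutton & Barto. A minimal Python sketch follows; the table layout `P[s][a]`, the three-state example, and the default `gamma` and `theta` are assumptions made for illustration, not values from the slides.

```python
def value_iteration(P, gamma=0.9, theta=1e-8):
    """P[s][a] is a list of (probability, next_state, reward) triples.

    A state with an empty action table is treated as terminal.
    """
    V = {s: 0.0 for s in P}

    def backup(s, a):
        # Expected one-step return of taking action a in state s.
        return sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a])

    while True:
        delta = 0.0
        for s in P:
            if not P[s]:  # terminal state: value stays 0
                continue
            v = V[s]
            V[s] = max(backup(s, a) for a in P[s])
            delta = max(delta, abs(v - V[s]))
        if delta < theta:
            break

    # Greedy (deterministic) policy with respect to the final values.
    policy = {s: max(P[s], key=lambda a: backup(s, a)) for s in P if P[s]}
    return V, policy


# Hypothetical three-state chain used only to exercise the function.
example_mdp = {
    "A": {"go": [(1.0, "B", 0.0)], "quit": [(1.0, "end", 1.0)]},
    "B": {"go": [(1.0, "end", 10.0)]},
    "end": {},
}
values, policy = value_iteration(example_mdp)
```

In this example the agent should prefer "go" in state A: the delayed reward of 10, discounted once, is worth 9, which beats the immediate reward of 1 for quitting.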

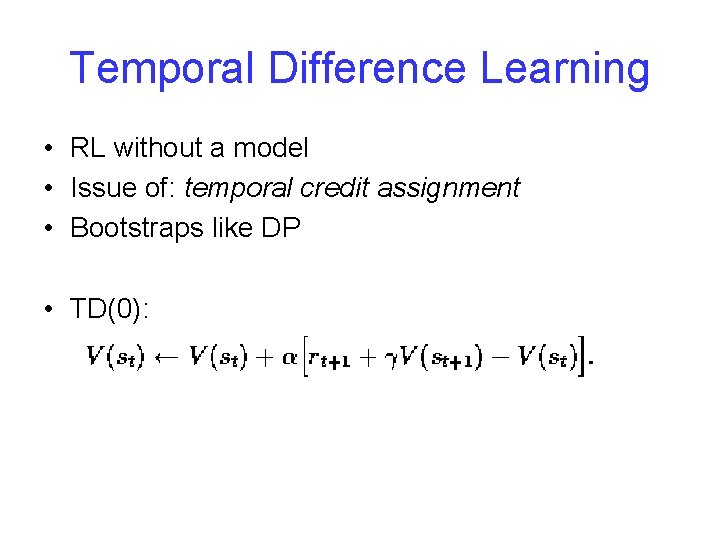

Temporal Difference Learning • RL without a model • Issue of: temporal credit assignment • Bootstraps like DP • TD(0): $V(s_t) \leftarrow V(s_t) + \alpha \, [r_{t+1} + \gamma V(s_{t+1}) - V(s_t)]$
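A short Python sketch of TD(0) may help make the "without a model" point concrete: the update uses only the sampled transition, never the transition probabilities. The five-state random walk used here is a standard textbook test problem and is an assumption for illustration, as are the step size and episode count.

```python
import random


def td0_update(V, s, reward, s_next, alpha, gamma):
    """One TD(0) backup: move V[s] toward reward + gamma * V[s_next]."""
    V[s] += alpha * (reward + gamma * V[s_next] - V[s])


def random_walk_td0(episodes=5000, alpha=0.05, gamma=1.0, seed=0):
    """Estimate state values of a 5-state random walk under a random policy."""
    rng = random.Random(seed)
    # States 1..5 are non-terminal; 0 and 6 are terminal and keep value 0.
    V = [0.0] * 7
    for _ in range(episodes):
        s = 3  # every episode starts in the middle state
        while s not in (0, 6):
            s_next = s + rng.choice((-1, 1))
            reward = 1.0 if s_next == 6 else 0.0  # reward only on the right exit
            td0_update(V, s, reward, s_next, alpha, gamma)
            s = s_next
    return V[1:6]
```

For this walk the true values are 1/6, 2/6, ..., 5/6, so the returned estimates should land near those numbers. With a constant step size they keep fluctuating around the true values rather than settling exactly.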

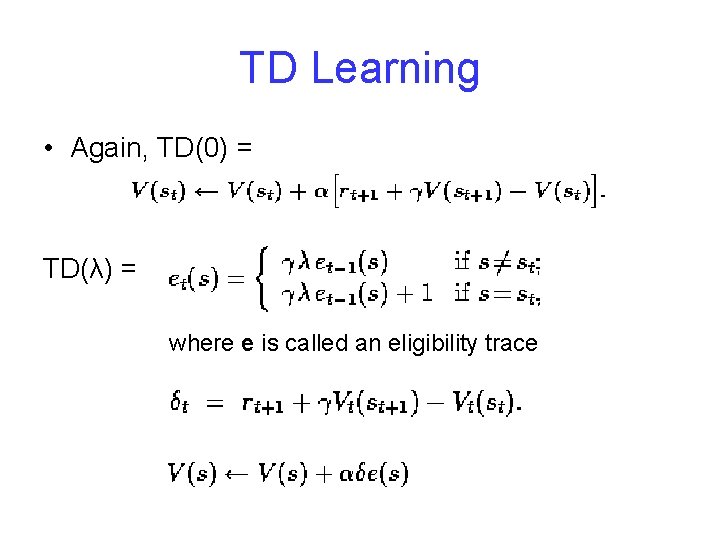

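TD Learning • Again, TD(0): $V(s_t) \leftarrow V(s_t) + \alpha \, [r_{t+1} + \gamma V(s_{t+1}) - V(s_t)]$ • TD(λ): $V(s) \leftarrow V(s) + \alpha \, [r_{t+1} + \gamma V(s_{t+1}) - V(s_t)] \, e(s)$ for every state $s$, where $e$ is called an eligibility trace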
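The role of the eligibility trace can be sketched with the backward view of TD(λ). The version below uses accumulating traces, which is one common choice and an assumption here; the function name, the dictionary layout, and the two-step episode are hypothetical.

```python
def td_lambda_episode(V, transitions, alpha=0.1, gamma=1.0, lam=0.8):
    """Apply backward-view TD(lambda) with accumulating traces to one episode.

    V maps every state (terminals included) to its current value estimate.
    transitions is a list of (state, reward, next_state) tuples.
    """
    e = {s: 0.0 for s in V}  # eligibility trace per state
    for s, reward, s_next in transitions:
        delta = reward + gamma * V[s_next] - V[s]  # the TD(0) error
        e[s] += 1.0  # mark the state just visited
        for x in V:
            V[x] += alpha * delta * e[x]  # credit states by their trace
            e[x] *= gamma * lam  # traces decay every step
    return V


# Hypothetical episode: A -> B with reward 0, then B -> T with reward 1.
V = td_lambda_episode(
    {"A": 0.0, "B": 0.0, "T": 0.0},
    [("A", 0.0, "B"), ("B", 1.0, "T")],
    alpha=0.5, gamma=1.0, lam=0.5,
)
# V is now {"A": 0.25, "B": 0.5, "T": 0.0}
```

With `lam=0` the trace vanishes after one step, only B is updated, and the method reduces to TD(0). With `lam > 0` the earlier state A also receives a share of the final reward, which is how traces address temporal credit assignment.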

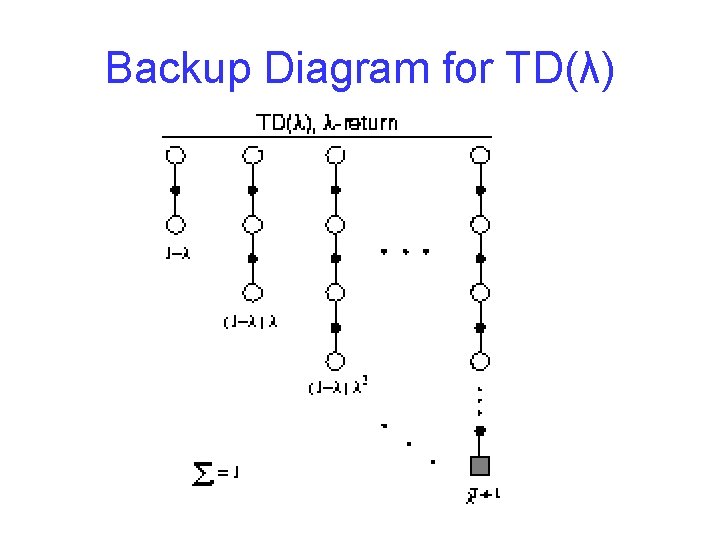

Backup Diagram for TD(λ)

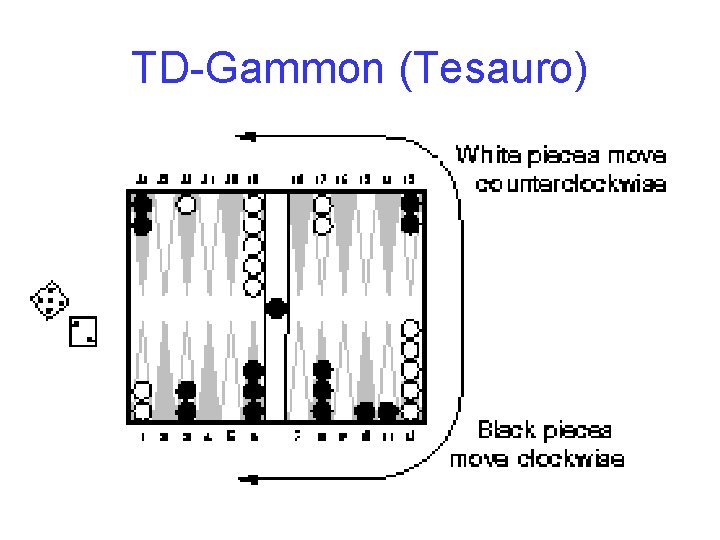

TD-Gammon (Tesauro)

Additional Work • POMDPs • Macros • Multi-agent RL • Multiple reward structures
