Reinforcement Learning Learning algorithms Function Approximation Yishay Mansour

- Slides: 23

Reinforcement Learning: Learning algorithms Function Approximation Yishay Mansour Tel-Aviv University

Outline • Week I: Basics – Mathematical Model (MDP) – Planning • Value iteration • Policy iteration • Week II: Learning Algorithms – Model based – Model Free • Week III: Large state space

Learning Algorithms Given access only to actions perform: 1. policy evaluation. 2. control - find optimal policy. Two approaches: 1. Model based (Dynamic Programming). 2. Model free (Q-Learning, SARSA).

Learning: Policy improvement • Assume that we can compute: – Given a policy π, – The V and Q functions of π • Can perform policy improvement: – π = Greedy(Q) • Process converges if the estimates are accurate.
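A minimal sketch of the improvement step, assuming a tabular Q stored as a dictionary from state to per-action values. The name `greedy_policy` and the toy states and actions are hypothetical, chosen only for illustration.

```python
def greedy_policy(Q):
    """Return the greedy policy: for each state, the action with the largest Q value.

    Q is assumed to be a dict mapping state -> {action: estimated value}.
    """
    return {s: max(actions, key=actions.get) for s, actions in Q.items()}


# Toy Q estimates for some policy (made-up numbers).
Q = {
    "s0": {"left": 0.2, "right": 0.7},
    "s1": {"left": 1.0, "right": -0.5},
}
policy = greedy_policy(Q)
```

If the Q estimates are inaccurate, the greedy step can pick a worse action, which is why convergence depends on the quality of the estimates.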

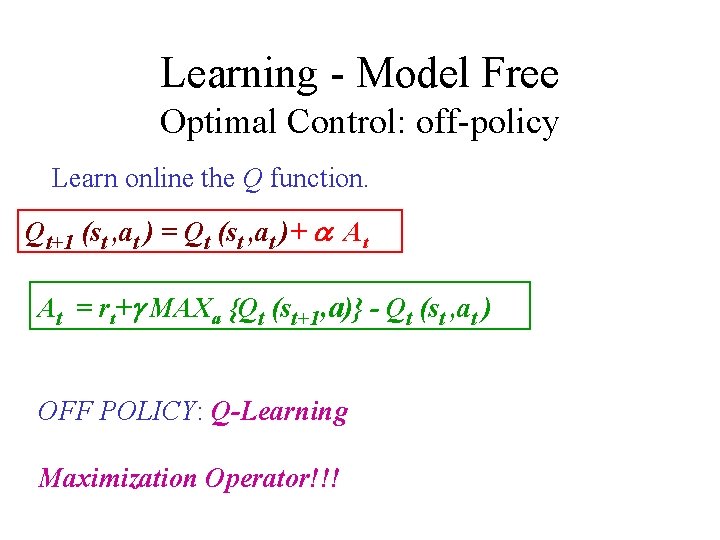

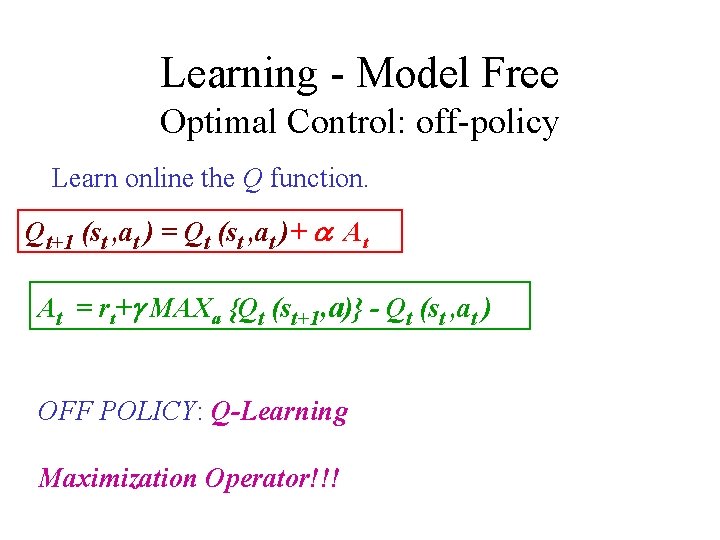

Learning - Model Free Optimal Control: off-policy Learn online the Q function. $Q_{t+1}(s_t, a_t) = Q_t(s_t, a_t) + \alpha \Delta_t$, where $\Delta_t = r_t + \gamma \max_a Q_t(s_{t+1}, a) - Q_t(s_t, a_t)$. OFF POLICY: Q-Learning. Maximization Operator!!!
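The Q-Learning update can be sketched as a single function, assuming a tabular Q keyed by (state, action) pairs, a step size `alpha` and a discount factor `gamma`. The function name, the default parameter values, and the toy transition are assumptions made for this example.

```python
from collections import defaultdict


def q_learning_update(Q, s, a, r, s_next, actions, alpha=0.1, gamma=0.9):
    """Apply one off-policy Q-Learning update and return the estimation error."""
    # Maximization over next actions, independent of the action actually taken next.
    best_next = max(Q[(s_next, b)] for b in actions)
    delta = r + gamma * best_next - Q[(s, a)]
    Q[(s, a)] += alpha * delta
    return delta


Q = defaultdict(float)          # unseen (state, action) pairs start at 0
Q[("s1", "right")] = 2.0
delta = q_learning_update(Q, "s0", "left", 1.0, "s1", ["left", "right"])
```

Here the error is 1.0 + 0.9 * 2.0 - 0 = 2.8, so the entry for ("s0", "left") moves from 0 to 0.28.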

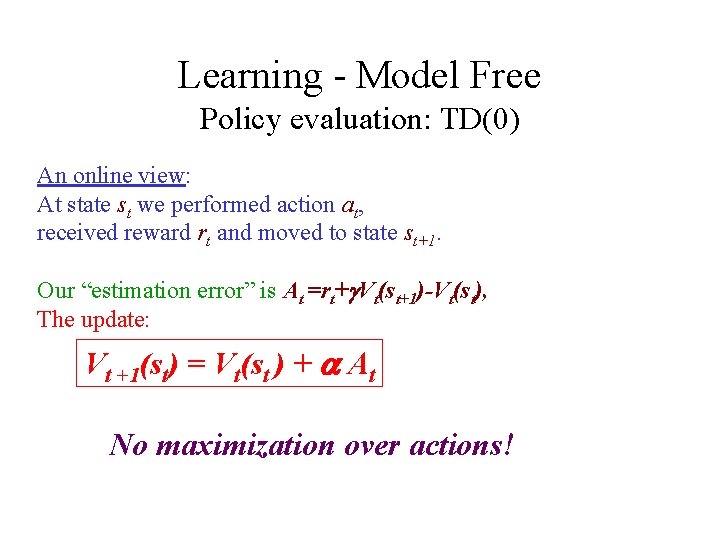

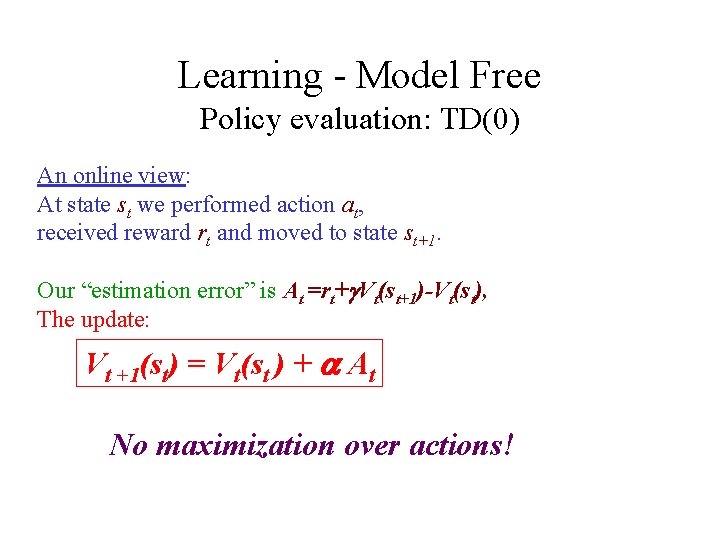

Learning - Model Free Policy evaluation: TD(0) An online view: At state $s_t$ we performed action $a_t$, received reward $r_t$ and moved to state $s_{t+1}$. Our “estimation error” is $\Delta_t = r_t + \gamma V_t(s_{t+1}) - V_t(s_t)$. The update: $V_{t+1}(s_t) = V_t(s_t) + \alpha \Delta_t$. No maximization over actions!
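A minimal sketch of the TD(0) update for policy evaluation, assuming a tabular V stored as a dictionary where unseen states default to 0. The function name and the default step size and discount are illustrative assumptions.

```python
def td0_update(V, s, r, s_next, alpha=0.1, gamma=0.9):
    """Apply one TD(0) update to V in place and return the estimation error."""
    delta = r + gamma * V.get(s_next, 0.0) - V.get(s, 0.0)
    V[s] = V.get(s, 0.0) + alpha * delta
    return delta


V = {"s1": 1.0}
delta = td0_update(V, "s0", 0.5, "s1")
```

The error here is 0.5 + 0.9 * 1.0 - 0 = 1.4, so V("s0") becomes 0.14. The action taken does not appear in the update, only the observed reward and next state.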

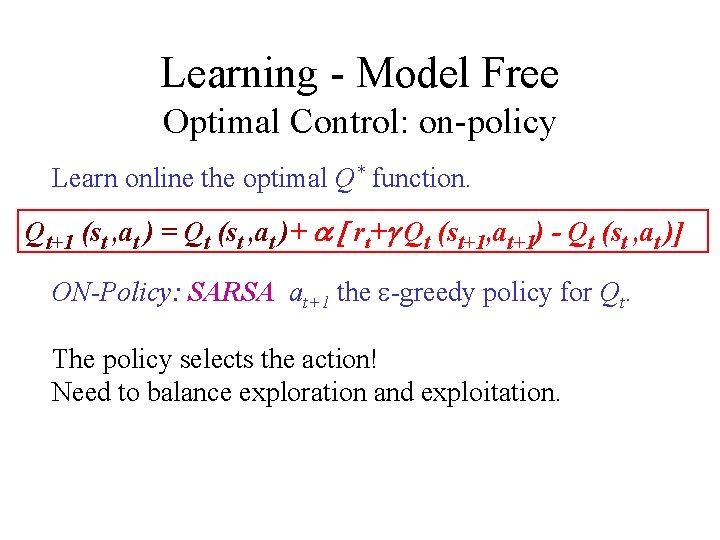

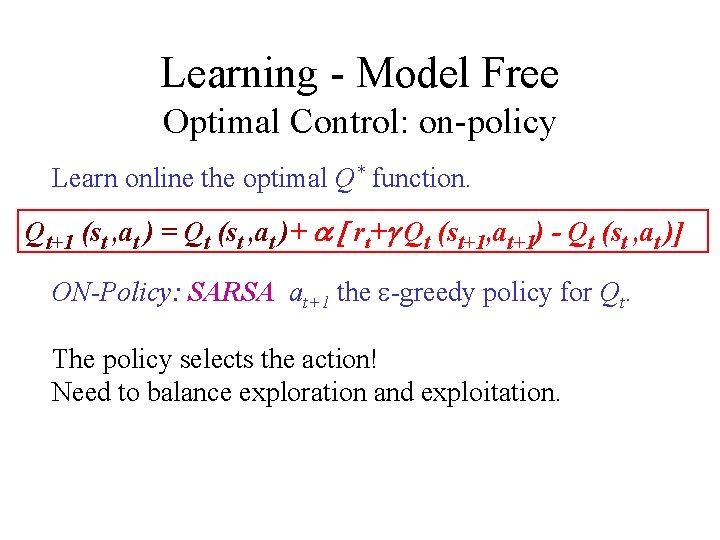

Learning - Model Free Optimal Control: on-policy Learn online the optimal Q* function. $Q_{t+1}(s_t, a_t) = Q_t(s_t, a_t) + \alpha [r_t + \gamma Q_t(s_{t+1}, a_{t+1}) - Q_t(s_t, a_t)]$ ON-Policy: SARSA. $a_{t+1}$ is chosen by the $\epsilon$-greedy policy for $Q_t$. The policy selects the action! Need to balance exploration and exploitation.
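The on-policy variant can be sketched as follows, assuming a tabular Q keyed by (state, action) pairs. The helper names `epsilon_greedy` and `sarsa_update`, the parameter defaults, and the toy transition are assumptions for illustration only.

```python
import random
from collections import defaultdict


def epsilon_greedy(Q, s, actions, epsilon, rng):
    """With probability epsilon explore uniformly, otherwise exploit the current Q."""
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: Q[(s, a)])


def sarsa_update(Q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.9):
    """Apply one SARSA update using the action the policy actually selected next."""
    delta = r + gamma * Q[(s_next, a_next)] - Q[(s, a)]
    Q[(s, a)] += alpha * delta
    return delta


rng = random.Random(0)
Q = defaultdict(float)
Q[("s1", "right")] = 2.0
actions = ["left", "right"]
# epsilon = 0 means pure exploitation, which makes this example deterministic.
a_next = epsilon_greedy(Q, "s1", actions, 0.0, rng)
delta = sarsa_update(Q, "s0", "left", 1.0, "s1", a_next)
```

The only difference from the off-policy update is that the target uses the value of the selected next action rather than a maximum over actions. A larger `epsilon` means more exploration.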

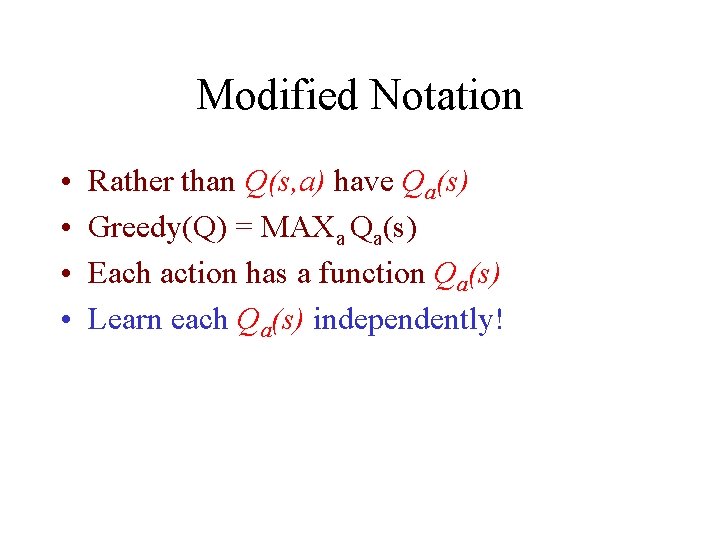

Modified Notation • Rather than $Q(s, a)$ have $Q_a(s)$ • Greedy(Q) = $\arg\max_a Q_a(s)$ • Each action has a function $Q_a(s)$ • Learn each $Q_a(s)$ independently!

Large state space • Reduce number of states – Symmetries (x-o) – Cluster states • Define attributes • Limited number of attributes • Some states will be identical – Action view of a state

Example X-O • For each action (square) – Consider row/diagonal/column through it – The state will encode the status of “rows”: • Two X’s • Two O’s • Mixed (both X and O) • One X • One O • Empty – Only three types of squares/actions
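One possible reading of this encoding, sketched under assumptions: the board is a 3x3 grid holding "X", "O" or `None`, and the status of a line is judged from the two squares other than the candidate square (which must be empty to be a legal move). The names `line_status` and `action_view` are hypothetical.

```python
# All eight lines of the board: three rows, three columns, two diagonals.
LINES = (
    [[(r, c) for c in range(3)] for r in range(3)]
    + [[(r, c) for r in range(3)] for c in range(3)]
    + [[(i, i) for i in range(3)], [(i, 2 - i) for i in range(3)]]
)


def line_status(board, line, square):
    """Classify a line by the two squares other than the candidate square."""
    others = [board[r][c] for (r, c) in line if (r, c) != square]
    xs, os_ = others.count("X"), others.count("O")
    if xs == 2:
        return "two X"
    if os_ == 2:
        return "two O"
    if xs == 1 and os_ == 1:
        return "mixed"
    if xs == 1:
        return "one X"
    if os_ == 1:
        return "one O"
    return "empty"


def action_view(board, square):
    """Sorted statuses of every line through the square: the 'action view' of the state."""
    return sorted(line_status(board, line, square) for line in LINES if square in line)


board = [
    ["X", None, "O"],
    [None, None, None],
    [None, None, "X"],
]
center_view = action_view(board, (1, 1))
```

Sorting the statuses makes boards that differ only by a rotation or reflection produce the same view for the corresponding square, which is one way to exploit the symmetries mentioned earlier.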

Clustering states • Need to create attributes • Attributes should be “game dependent” • Different “real” states - same representation • How do we differentiate states? – We estimate action value. – Consider only legal actions. – Play “best” action.

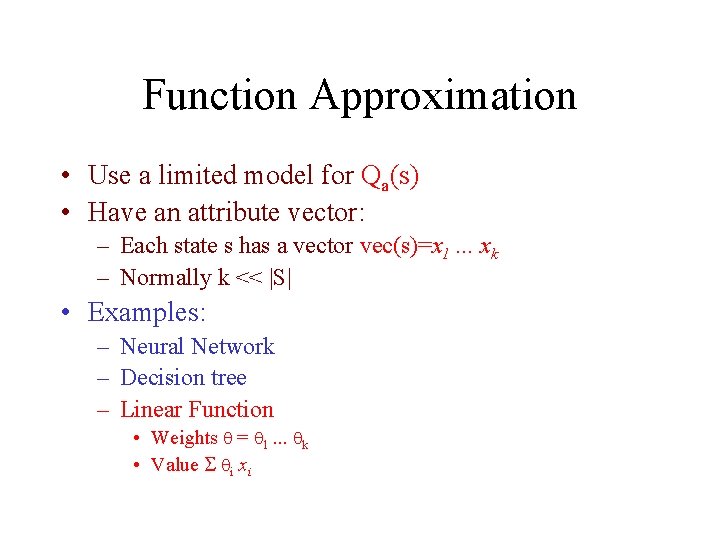

Function Approximation • Use a limited model for $Q_a(s)$ • Have an attribute vector: – Each state s has a vector vec(s) = $(x_1, \dots, x_k)$ – Normally k << |S| • Examples: – Neural Network – Decision tree – Linear Function • Weights $\theta = (\theta_1, \dots, \theta_k)$ • Value $\sum_i \theta_i x_i$
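A minimal sketch of the linear case with one weight vector per action, assuming the attribute vector is a plain list of numbers. The names `q_value`, `greedy_action` and `theta`, and the toy numbers, are assumptions for this example.

```python
def q_value(theta_a, x):
    """Linear estimate for one action: the inner product of its weights with vec(s)."""
    return sum(w * xi for w, xi in zip(theta_a, x))


def greedy_action(theta, x):
    """Pick the action whose linear estimate is largest for attribute vector x."""
    return max(theta, key=lambda a: q_value(theta[a], x))


# One weight vector per action, each of length k = 3 (made-up values).
theta = {
    "left": [0.5, -1.0, 0.0],
    "right": [0.1, 0.2, 0.3],
}
x = [1.0, 0.5, 2.0]  # vec(s) for some state s
best = greedy_action(theta, x)
```

Storage is k weights per action rather than one entry per state, which is the point of the approach when k is much smaller than |S|.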

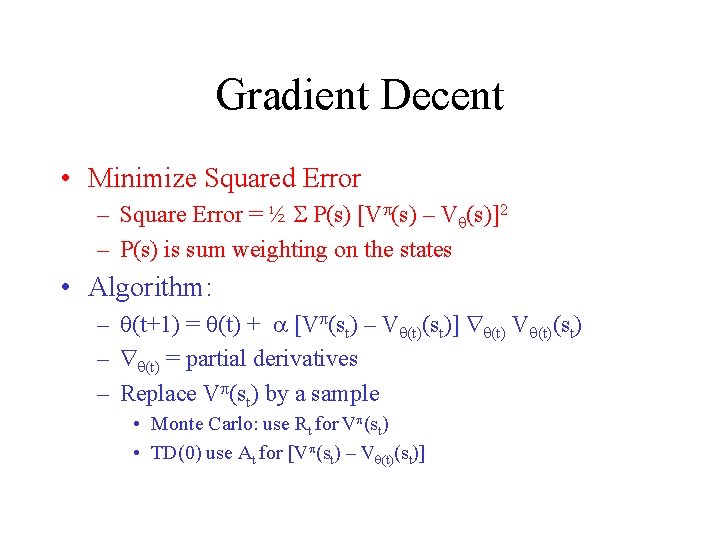

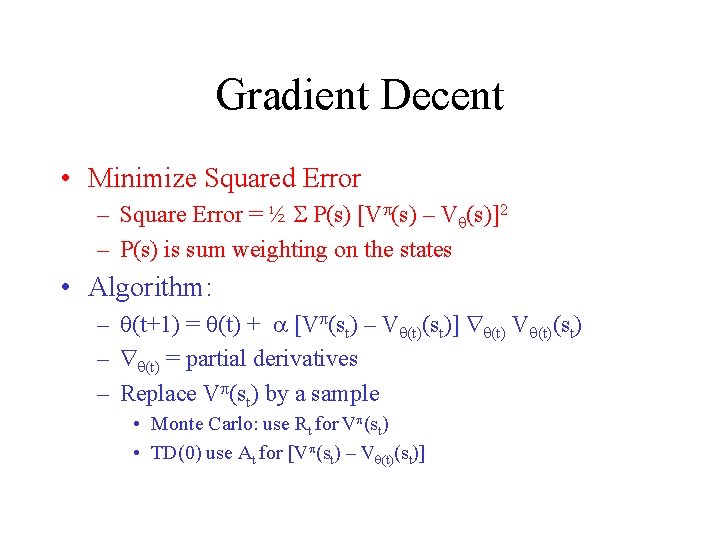

Gradient Descent • Minimize Squared Error – Squared Error = $\frac{1}{2} \sum_s P(s) [V^\pi(s) - V^\theta(s)]^2$ – P(s) is some weighting on the states • Algorithm: – $\theta(t+1) = \theta(t) + \alpha [V^\pi(s_t) - V^{\theta(t)}(s_t)] \nabla_{\theta(t)} V^{\theta(t)}(s_t)$ – $\nabla_{\theta(t)}$ = partial derivatives – Replace $V^\pi(s_t)$ by a sample • Monte Carlo: use $R_t$ for $V^\pi(s_t)$ • TD(0): use $\Delta_t$ for $[V^\pi(s_t) - V^{\theta(t)}(s_t)]$
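The sign of the update follows from differentiating the squared error. A short derivation, writing θ for the weight vector, α for the step size and V^π for the true value function (symbol names assumed here), and taking the state s_t to be sampled according to the weighting P:

```latex
\begin{align*}
E(\theta) &= \tfrac{1}{2} \sum_s P(s)\,\bigl[V^\pi(s) - V^\theta(s)\bigr]^2 \\
\nabla_\theta E(\theta) &= -\sum_s P(s)\,\bigl[V^\pi(s) - V^\theta(s)\bigr]\,\nabla_\theta V^\theta(s) \\
\theta(t+1) &= \theta(t) + \alpha\,\bigl[V^\pi(s_t) - V^{\theta(t)}(s_t)\bigr]\,\nabla_\theta V^{\theta(t)}(s_t)
\end{align*}
```

The last line is a step of size α against a single-sample estimate of the gradient, so the two minus signs cancel and the correction is added.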

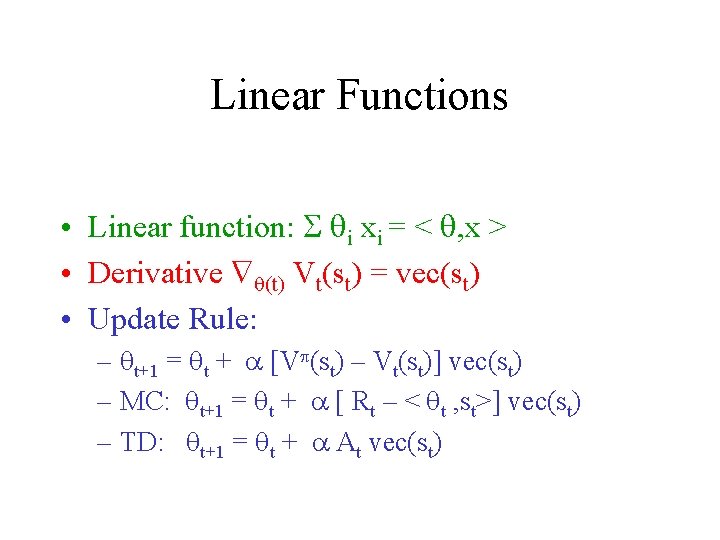

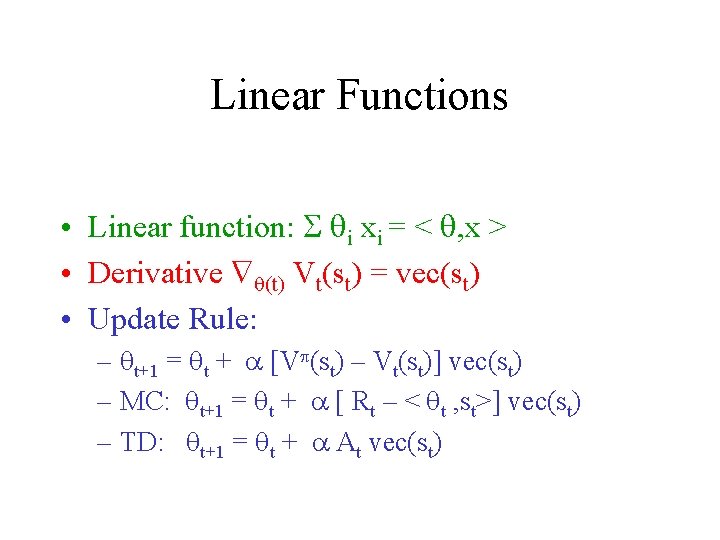

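Linear Functions • Linear function: $\sum_i \theta_i x_i = \langle \theta, x \rangle$ • Derivative: $\nabla_{\theta_t} V_t(s_t) = \mathrm{vec}(s_t)$ • Update Rule: – $\theta_{t+1} = \theta_t + \alpha [V^\pi(s_t) - V_t(s_t)]\, \mathrm{vec}(s_t)$ – MC: $\theta_{t+1} = \theta_t + \alpha [R_t - \langle \theta_t, \mathrm{vec}(s_t) \rangle]\, \mathrm{vec}(s_t)$ – TD: $\theta_{t+1} = \theta_t + \alpha \Delta_t\, \mathrm{vec}(s_t)$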
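The two linear update rules can be sketched in plain Python, assuming attribute vectors and weights are lists of floats. The function names, the step size `alpha` and the discount `gamma` are assumptions for this example; the TD error is computed from the current linear estimates.

```python
def dot(theta, x):
    """Inner product of the weights with an attribute vector."""
    return sum(t * xi for t, xi in zip(theta, x))


def mc_update(theta, x, ret, alpha):
    """Monte Carlo: move the estimate at x toward the observed return."""
    error = ret - dot(theta, x)
    return [t + alpha * error * xi for t, xi in zip(theta, x)]


def td0_linear_update(theta, x, r, x_next, alpha, gamma):
    """TD(0): use the one-step estimation error in place of the true value gap."""
    delta = r + gamma * dot(theta, x_next) - dot(theta, x)
    return [t + alpha * delta * xi for t, xi in zip(theta, x)]


theta0 = [0.0, 0.0]
theta1 = mc_update(theta0, x=[1.0, 2.0], ret=5.0, alpha=0.1)
theta2 = td0_linear_update(theta1, x=[1.0, 0.0], r=1.0, x_next=[0.0, 1.0],
                           alpha=0.1, gamma=0.9)
```

In both cases only the weights of attributes that are non-zero in the visited state change, because the gradient of a linear function is the attribute vector itself.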

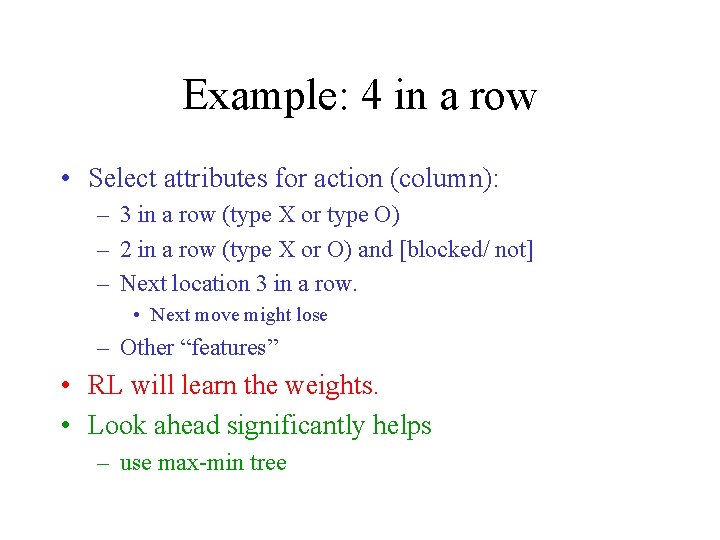

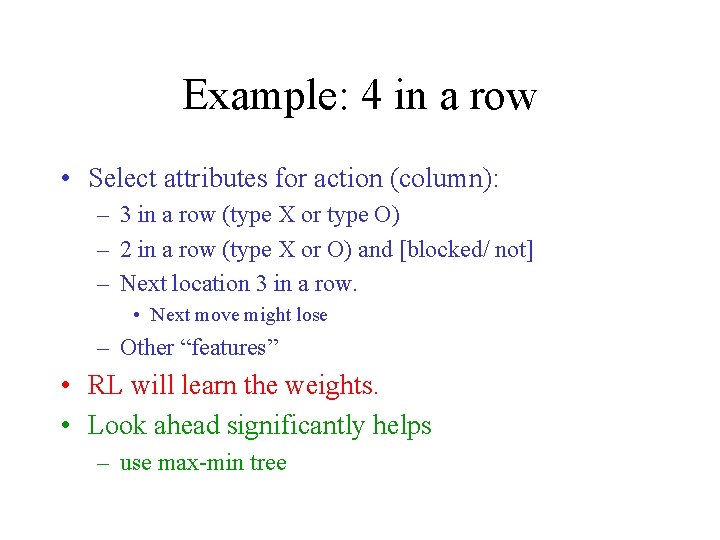

Example: 4 in a row • Select attributes for action (column): – 3 in a row (type X or type O) – 2 in a row (type X or O) and [blocked/ not] – Next location 3 in a row. • Next move might lose – Other “features” • RL will learn the weights. • Look ahead significantly helps – use max-min tree

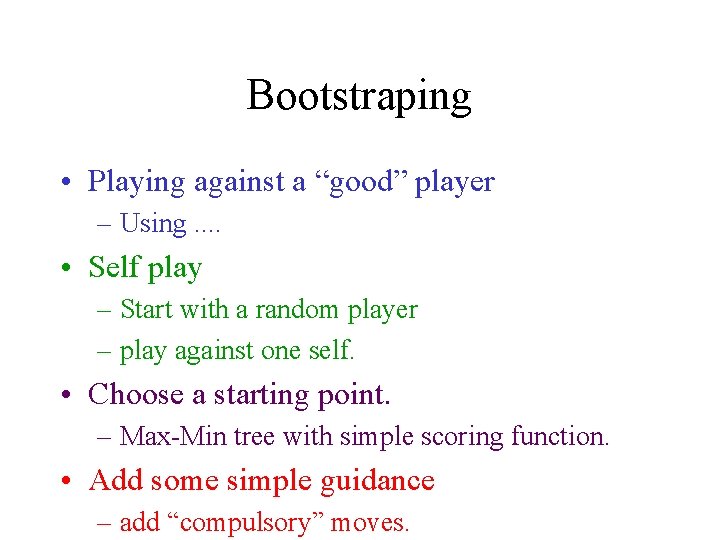

Bootstrapping • Playing against a “good” player – Using. . • Self play – Start with a random player – play against oneself. • Choose a starting point. – Max-Min tree with simple scoring function. • Add some simple guidance – add “compulsory” moves.

Scoring Function • Checkers: – Number of pieces – Number of Queens • Chess – Weighted sum of pieces • Othello/Reversi – Difference in number of pieces • Can be used with Max-Min Tree – $(\alpha, \beta)$ pruning

Example: Reversi (Othello) • Use a simple score function: – difference in pieces – edge pieces – corner pieces • Use Max-Min Tree • RL: optimize weights.
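A minimal sketch of such a score function, assuming each of the three features is measured as a difference between the two players and that corners are counted separately from the other edge squares. The board encoding, the function names and the weights are hypothetical; in the RL setting the weights would be learned rather than fixed by hand.

```python
def reversi_features(board, me, opp):
    """Return [piece difference, edge difference, corner difference] for player `me`."""
    n = len(board)
    corners = {(0, 0), (0, n - 1), (n - 1, 0), (n - 1, n - 1)}
    diff = edge = corner = 0
    for r in range(n):
        for c in range(n):
            cell = board[r][c]
            if cell not in (me, opp):
                continue  # empty square
            sign = 1 if cell == me else -1
            diff += sign
            if (r, c) in corners:
                corner += sign
            elif r in (0, n - 1) or c in (0, n - 1):
                edge += sign
    return [diff, edge, corner]


def score(board, me, opp, weights):
    """Weighted sum of the features: the linear scoring function."""
    return sum(w * f for w, f in zip(weights, reversi_features(board, me, opp)))


board = [["."] * 8 for _ in range(8)]
board[0][0] = "B"   # corner
board[0][3] = "W"   # edge
board[3][3] = "B"
board[3][4] = "W"
board[4][4] = "B"
features = reversi_features(board, "B", "W")
value = score(board, "B", "W", weights=[1.0, 2.0, 5.0])
```

The same `score` can serve as the leaf evaluation of a Max-Min tree.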

Advanced issues • Time constraints – fast and slow modes • Opening – can help • End game – many cases: few pieces, – can be solved efficiently • Train on a specific state – might be helpful / not sure that it's worth the effort.

What is Next? • Create teams: – at least 2 students at most 3 students • Group size will influence our expectations! – Choose a game! – Give the names and game • GUI for game – Deadline Dec. 17, 2006

Schedule (more) • System specification – Project outline – High level components planning – Jan. 21, 2007 • Build system • Project completion – April 29, 2007 • All supporting documents in html!

Next week • GUI interface (using C++) • Afterwards: – Each group works by itself