
Reinforcement Learning in the Multi-Robot Domain
10/26/2020

Source
- "Reinforcement Learning in the Multi-Robot Domain", Maja J Mataric

Introduction
- Mataric describes a method for real-time learning in an autonomous agent
- Reinforcement learning (RL) is used
- The agent learns from rewards and punishments
- The method is validated experimentally on a group of four robots learning a foraging task

Why?
- A successful learning algorithm would allow autonomous agents to exhibit complex behaviors with little (or no) extra programming

Two Main Challenges
- The state space is prohibitively large
  – Building a predictive model is very slow
  – It may be more efficient to learn a policy directly
- Structuring and assigning reinforcement
  – The environment does not provide a direct source of immediate reinforcement
  – Credit for an action is delayed

Addressing Challenges
- Because the state space is prohibitively large, it is reduced using behaviors and conditions
  – Behaviors
    • Homing / wall-following
    • Abstract away low-level controllers
  – Conditions
    • Have-puck? / at-home?
    • Abstract away details of the state space
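As an illustration of how conditions abstract away the state space, the sketch below collapses a continuous sensor snapshot into the four boolean conditions used in the foraging task (have-puck?, at-home?, near-intruder?, night-time?). The `SensorSnapshot` fields, their units, and all three thresholds are hypothetical; the slides do not say how the robots computed their conditions.

```python
from dataclasses import dataclass

# Hypothetical raw sensor snapshot; field names and units are assumptions,
# not taken from the original robots.
@dataclass
class SensorSnapshot:
    gripper_has_object: bool
    distance_to_home_m: float
    nearest_robot_m: float
    light_level: float  # 0.0 (dark) to 1.0 (bright)

# Hypothetical thresholds used only for this illustration.
HOME_RADIUS_M = 0.5
INTRUDER_RADIUS_M = 0.3
NIGHT_LIGHT_LEVEL = 0.2

def conditions(s: SensorSnapshot) -> tuple:
    """Collapse continuous readings into four booleans:
    (have-puck?, at-home?, near-intruder?, night-time?)."""
    return (
        s.gripper_has_object,
        s.distance_to_home_m <= HOME_RADIUS_M,
        s.nearest_robot_m <= INTRUDER_RADIUS_M,
        s.light_level < NIGHT_LIGHT_LEVEL,
    )
```

However fine-grained the raw readings are, the learner only ever sees one of 2^4 = 16 condition tuples.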

Addressing Challenges
- Reinforcement is difficult to assign because an event that induces reinforcement may be caused by past actions, such as
  – Attempts to reach a goal
  – Reactions to another robot
- To address this, Mataric uses shaped reinforcement in the form of
  – Heterogeneous reward functions
  – Progress estimators

Reward Functions
- Heterogeneous reward functions combine multi-modal feedback from
  – External (sensory) modalities
  – Internal (state) modalities
- Each behavior has an associated goal that provides a reinforcement signal
- More subgoals lead to more frequent reinforcement, which leads to faster convergence
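To make "more subgoals lead to more frequent reinforcement" concrete, the sketch below counts how many steps of a short event trace carry any reward under a monolithic single-goal reward versus a heterogeneous reward with one signal per subgoal. The trace and the unit reward magnitudes are invented for illustration; only the event names come from the foraging task.

```python
# Hypothetical event trace for one robot (None = no event on that step).
TRACE = [
    None, "grasped-puck", None, None, "dropped-puck-at-home",
    None, "woke-up-away-from-home", None, "grasped-puck", None,
]

def monolithic_reward(event):
    # Single goal: only puck delivery to home is rewarded.
    return 1.0 if event == "dropped-puck-at-home" else 0.0

# Hypothetical magnitudes: one positive or negative signal per subgoal event.
SUBGOAL_REWARDS = {
    "grasped-puck": 1.0,
    "dropped-puck-at-home": 1.0,
    "woke-up-at-home": 1.0,
    "dropped-puck-away-from-home": -1.0,
    "woke-up-away-from-home": -1.0,
}

def heterogeneous_reward(event):
    return SUBGOAL_REWARDS.get(event, 0.0)

def signal_count(reward_fn, trace):
    """Number of steps on which the learner receives any reinforcement."""
    return sum(1 for e in trace if reward_fn(e) != 0.0)
```

On this trace the monolithic reward fires once, while the heterogeneous reward fires four times, giving the learner four chances to update instead of one.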

Reward Functions
- Progress estimators (PEs) provide positive or negative reinforcement with respect to the current goal
- PEs decrease sensitivity to noise
  – Noise-induced events are not consistently supported
- PEs encourage exploration
  – Non-productive behaviors are terminated
- PEs decrease fortuitous rewards
  – Over time, less reward is given to fortuitous successes
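A generic progress estimator can be sketched as follows, assuming the goal has a scalar progress metric where lower is better (for example, distance to home). Each step it returns +1 for progress, -1 for regress, and 0 otherwise, and it flags the behavior for termination after a run of non-productive steps. The `patience` parameter and the counting rule are hypothetical; the slides do not say how long a behavior may go without progress.

```python
class ProgressEstimator:
    """Hypothetical progress estimator for one goal (lower metric = better)."""

    def __init__(self, patience=3):
        self.patience = patience  # assumed: non-productive steps before termination
        self.previous = None
        self.steps_without_progress = 0

    def update(self, metric):
        """Return (signal, terminate) for the latest metric value."""
        if self.previous is None:
            # First observation: nothing to compare against yet.
            self.previous = metric
            return 0, False
        if metric < self.previous:
            signal = 1    # moving toward the goal
        elif metric > self.previous:
            signal = -1   # moving away from the goal
        else:
            signal = 0    # no change
        self.previous = metric
        if signal > 0:
            self.steps_without_progress = 0
        else:
            self.steps_without_progress += 1
        return signal, self.steps_without_progress >= self.patience
```

With `patience=2`, the metric sequence 5.0, 4.0, 4.0, 4.5 yields one positive signal, then a stall, then a regress that triggers termination.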

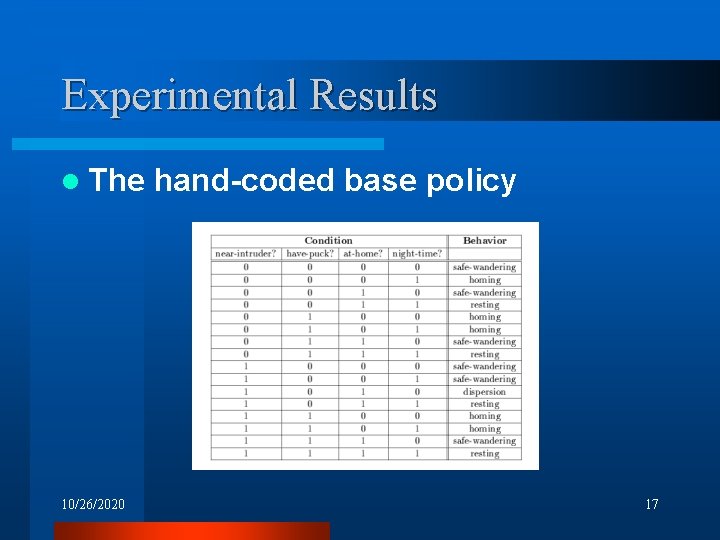

The Learning Task
- The learning task consists of finding a mapping from conditions
  – Have-puck?
  – At-home?
  – Near-intruder?
  – Night-time?
- to behaviors
  – Safe-wandering
  – Dispersion
  – Resting
  – Homing
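The size of the resulting learning problem can be checked directly. This sketch assumes every combination of the four boolean conditions is a distinct state; the slides do not say whether impossible combinations were excluded.

```python
from itertools import product

CONDITIONS = ("have-puck?", "at-home?", "near-intruder?", "night-time?")
BEHAVIORS = ("safe-wandering", "dispersion", "resting", "homing")

# Each combination of the four boolean conditions is one abstract state.
CONDITION_STATES = list(product((False, True), repeat=len(CONDITIONS)))

# The learner ranks behaviors per condition state, so it only has to
# estimate values for 16 x 4 = 64 condition-behavior pairs.
PAIRS = [(c, b) for c in CONDITION_STATES for b in BEHAVIORS]
```

Sixty-four pairs is small enough to learn online on real robots, which is the point of abstracting sensing into conditions and control into behaviors.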

Learning Algorithm
- Matrix A(c, b) is a normalized sum of the reinforcement R received by each condition-behavior pair over time t:
  $A(c, b) = \sum_{t} R(c, t)$
- Learning is continuous
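A minimal sketch of the accumulation A(c, b) = Σ_t R(c, t) is shown below. The slide says the sum is normalized but not how, so the normalization here (dividing by the largest absolute sum within a condition) is a hypothetical choice.

```python
class ReinforcementMatrix:
    """Accumulates A(c, b) = sum over t of R(c, t) per condition-behavior pair."""

    def __init__(self):
        self.totals = {}  # raw sums keyed by (condition, behavior)

    def reinforce(self, condition, behavior, reward):
        # Learning is continuous: each reinforcement is added as it arrives.
        key = (condition, behavior)
        self.totals[key] = self.totals.get(key, 0.0) + reward

    def normalized(self, condition, behaviors):
        """Hypothetical normalization: scale this condition's row into [-1, 1]."""
        values = {b: self.totals.get((condition, b), 0.0) for b in behaviors}
        scale = max((abs(v) for v in values.values()), default=0.0)
        if scale == 0.0:
            return values
        return {b: v / scale for b, v in values.items()}
```

Normalizing per condition keeps behaviors comparable within a row without changing which behavior ranks highest.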

Immediate Reinforcement
- Positive
  – E_p: grasped-puck
  – E_gd: dropped-puck-at-home
  – E_gw: woke-up-at-home
- Negative
  – E_bd: dropped-puck-away-from-home
  – E_bw: woke-up-away-from-home
- The events are merged into one heterogeneous reinforcement function R_E(c)
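Merging the five events into a single function R_E(c) can be sketched as a lookup that returns zero when no event fired. The slide gives each event's sign but not its magnitude, so the values below are hypothetical.

```python
# Hypothetical magnitudes; only the signs come from the slide.
EVENT_REWARDS = {
    "E_p": 1.0,    # grasped-puck
    "E_gd": 3.0,   # dropped-puck-at-home
    "E_gw": 1.0,   # woke-up-at-home
    "E_bd": -3.0,  # dropped-puck-away-from-home
    "E_bw": -1.0,  # woke-up-away-from-home
}

def immediate_reinforcement(event):
    """R_E(c): reward for the event detected in condition c (0.0 if none)."""
    return EVENT_REWARDS.get(event, 0.0)
```

Because every subgoal event maps to a signal, the robot is reinforced for grasping a puck long before it completes a delivery.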

Progress Estimators
- R_I(c, t): minimizing interference
  – Positive for increasing distance from other robots
  – Negative for decreasing distance
- R_H(c, t): homing (with puck)
  – Positive for moving nearer to home
  – Negative for moving farther from home
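The two estimators can be sketched as functions of consecutive distance readings. The magnitudes `i`, `d`, `n`, `f` are hypothetical, and combining the signals by simple addition, R = R_E + R_I + R_H, is an assumption about how the reward components are merged.

```python
def interference_estimator(prev_dist, curr_dist, i=1.0, d=1.0):
    """R_I(c, t): reward growing distance from the nearest other robot,
    punish shrinking distance. i and d are hypothetical magnitudes."""
    if curr_dist > prev_dist:
        return i
    if curr_dist < prev_dist:
        return -d
    return 0.0

def homing_estimator(have_puck, prev_home_dist, curr_home_dist, n=1.0, f=1.0):
    """R_H(c, t): active only while carrying a puck; reward moving nearer
    to home, punish moving farther. n and f are hypothetical magnitudes."""
    if not have_puck:
        return 0.0
    if curr_home_dist < prev_home_dist:
        return n
    if curr_home_dist > prev_home_dist:
        return -f
    return 0.0

def total_reinforcement(r_e, r_i, r_h):
    # Assumption: the event reward and both estimators are simply summed.
    return r_e + r_i + r_h
```

Because the estimators fire on every step of a behavior, a robot carrying a puck is rewarded for homing progress well before the delayed delivery event occurs.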

Control Algorithm
- Behavior selection is induced by events
- Events are triggered
  – Externally
  – Internally
  – By progress estimators

Control Algorithm
When an event is detected, the following control sequence is executed:
1. The current (c, b) pair is reinforced
2. The current behavior is terminated
3. A new behavior is selected
   – Choose an untried behavior
   – Otherwise, choose the "best" behavior
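The event-driven control sequence can be sketched as follows. This is a minimal sketch, not Mataric's implementation: the class and method names are hypothetical, choosing randomly among untried behaviors is an assumption, "best" is taken to mean the highest summed reinforcement (unnormalized), and termination is modeled simply as replacing the current behavior.

```python
import random
from collections import defaultdict

BEHAVIORS = ("safe-wandering", "dispersion", "resting", "homing")

class EventDrivenController:
    """Hypothetical controller: on each event, reinforce, terminate, select."""

    def __init__(self, behaviors=BEHAVIORS, seed=0):
        self.behaviors = list(behaviors)
        self.A = defaultdict(float)    # summed reinforcement per (c, b)
        self.tried = defaultdict(set)  # behaviors already tried per condition
        self.current = None            # active (condition, behavior) pair
        self.rng = random.Random(seed)

    def select(self, condition):
        untried = [b for b in self.behaviors if b not in self.tried[condition]]
        if untried:
            # Prefer a behavior not yet tried in this condition.
            behavior = self.rng.choice(untried)
        else:
            # Otherwise pick the behavior with the highest accumulated reinforcement.
            behavior = max(self.behaviors, key=lambda b: self.A[(condition, b)])
        self.tried[condition].add(behavior)
        return behavior

    def on_event(self, new_condition, reward):
        # 1. Reinforce the pair that was active when the event occurred.
        if self.current is not None:
            self.A[self.current] += reward
        # 2. Terminate the current behavior (here: it is simply replaced).
        # 3. Select a new behavior for the condition now in effect.
        behavior = self.select(new_condition)
        self.current = (new_condition, behavior)
        return behavior
```

In a single condition, the first four events cycle through every behavior once; after that, the controller settles on whichever behavior has earned the most reinforcement.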

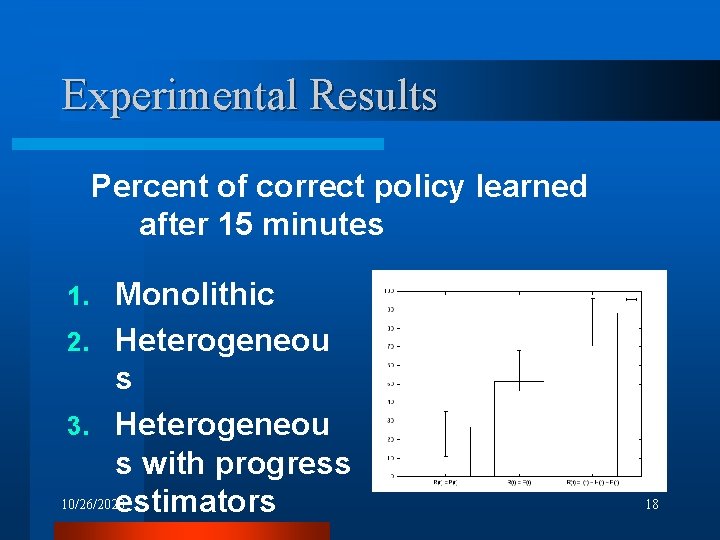

Experimental Results
Three approaches were compared:
1. Monolithic single-goal reward
   • Puck delivery to home
2. Heterogeneous reward function
3. Heterogeneous reward function with two progress estimators

Experimental Results
- The hand-coded base policy

Experimental Results
[Chart: percent of correct policy learned after 15 minutes]
1. Monolithic
2. Heterogeneous
3. Heterogeneous with progress estimators

Evaluation
- Monolithic
  – Does not provide enough feedback
- Heterogeneous reward
  – Certain behaviors are pursued too long
  – Behaviors with delayed reward (homing) are ignored
- Heterogeneous with progress estimators
  – Eliminates thrashing
  – Minimizes the impact of fortuitous rewards

Conclusions
- Mataric's heterogeneous reward functions with progress estimators can improve learning performance by exploiting domain knowledge

Critique
- Multi-robot learning?
- The techniques converge to a handcrafted policy
  – What is the optimal policy?

Questions?
