
Reinforcement Learning
Ata Kaban, A.Kaban@cs.bham.ac.uk
School of Computer Science, University of Birmingham

Learning by reinforcement
• Examples:
  – Learning to play Backgammon
  – Robot learning to dock on battery charger
• Characteristics:
  – No direct training examples; delayed rewards instead
  – Need for exploration & exploitation
  – The environment is stochastic and unknown
  – The actions of the learner affect future rewards

Brief history & successes
• Minsky’s Ph.D. thesis (1954): Stochastic Neural-Analog Reinforcement Computer
• Analogies with animal learning and psychology
• TD-Gammon (Tesauro, 1992): big success story
• Job-shop scheduling for NASA space missions (Zhang and Dietterich, 1997)
• Robotic soccer (Stone and Veloso, 1998): part of the world-champion approach
• ‘An approximate solution to a complex problem can be better than a perfect solution to a simplified problem’

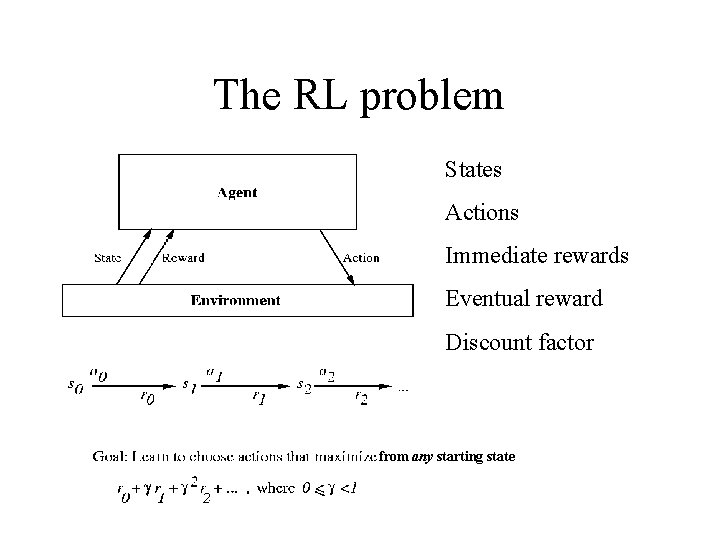

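The RL problem
• States $s_t$, actions $a_t$, immediate rewards $r_t$
• Goal: choose actions that maximise the eventual reward $r_0 + \gamma r_1 + \gamma^2 r_2 + \dots$ from any starting state
• Discount factor: $0 \le \gamma < 1$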

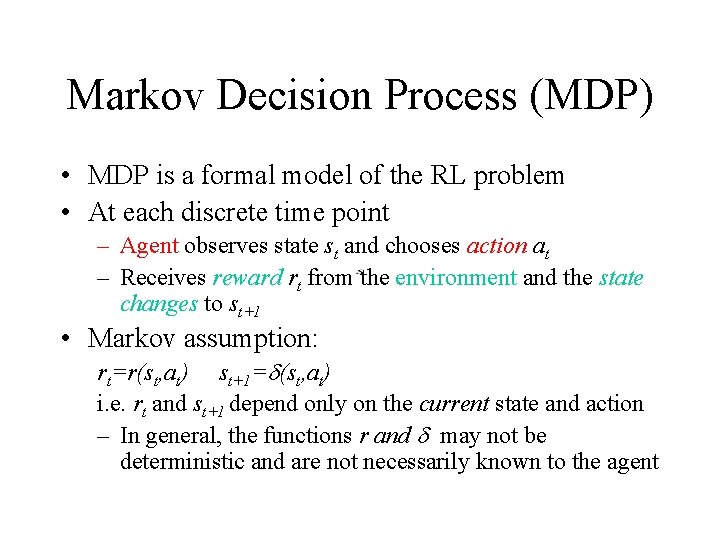

Markov Decision Process (MDP)
• MDP is a formal model of the RL problem
• At each discrete time point:
  – Agent observes state $s_t$ and chooses action $a_t$
  – Receives reward $r_t$ from the environment, and the state changes to $s_{t+1}$
• Markov assumption: $r_t = r(s_t, a_t)$ and $s_{t+1} = \delta(s_t, a_t)$, i.e. $r_t$ and $s_{t+1}$ depend only on the current state and action
  – In general, the functions $r$ and $\delta$ may not be deterministic and are not necessarily known to the agent

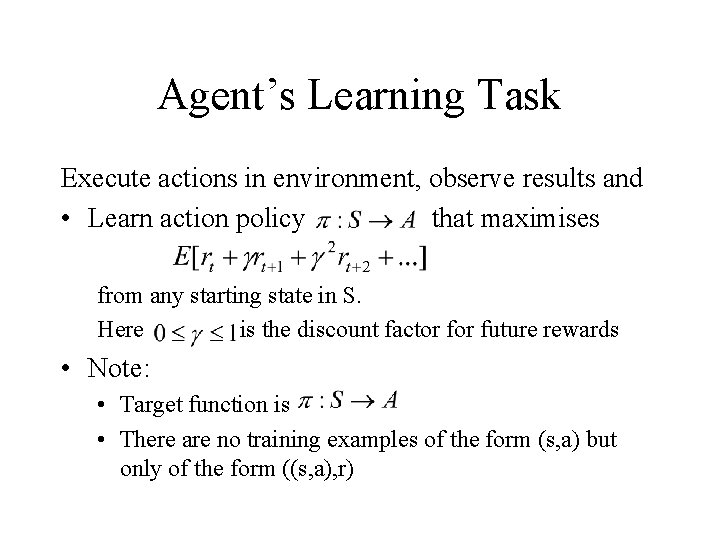

Agent’s Learning Task
Execute actions in the environment, observe the results, and
• Learn an action policy $\pi : S \to A$ that maximises $r_t + \gamma r_{t+1} + \gamma^2 r_{t+2} + \dots$ from any starting state in $S$. Here $0 \le \gamma < 1$ is the discount factor for future rewards
• Note:
  – The target function is $\pi : S \to A$
  – There are no training examples of the form $(s, a)$, only of the form $((s, a), r)$
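The discounted sum of rewards that the policy should maximise can be computed for a finite reward sequence as in the sketch below. The function name `discounted_return` and the example rewards are made up for illustration; they are not from the slides.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float) -> float:
    """Return r_0 + gamma*r_1 + gamma^2*r_2 + ... for a finite reward sequence."""
    if not 0.0 <= gamma < 1.0:
        raise ValueError("gamma must satisfy 0 <= gamma < 1")
    total = 0.0
    # Work backwards through the sequence: G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


# Hypothetical episode: a single delayed reward after two zero-reward steps.
print(discounted_return([0.0, 0.0, 100.0], gamma=0.9))
```

With a smaller discount factor, the same delayed reward contributes less to the total, which is how the discount factor expresses a preference for earlier rewards.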

Example: TD-Gammon
• Immediate reward:
  – +100 if win
  – -100 if lose
  – 0 for all other states
• Trained by playing 1.5 million games against itself
• Now approximately equal to the best human player

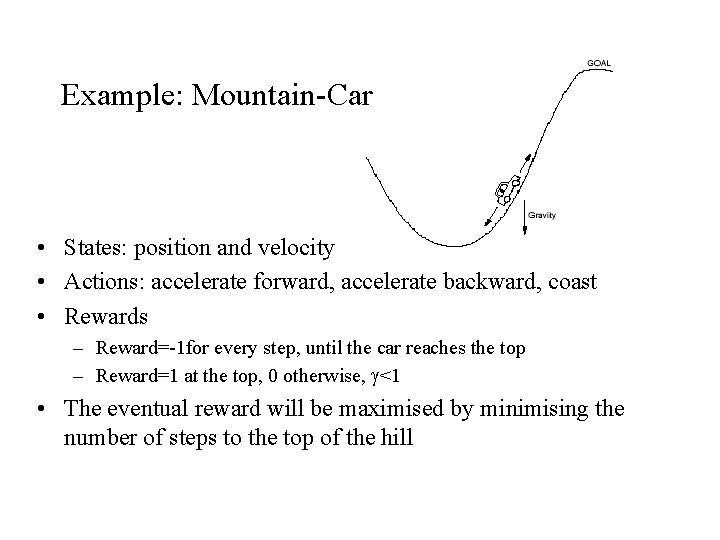

Example: Mountain-Car
• States: position and velocity
• Actions: accelerate forward, accelerate backward, coast
• Rewards (two alternative schemes):
  – Reward = -1 for every step, until the car reaches the top
  – Reward = 1 at the top, 0 otherwise, with $\gamma < 1$
• The eventual reward will be maximised by minimising the number of steps to the top of the hill
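A short worked calculation shows why fewer steps give a larger eventual reward under either reward scheme. It assumes the car reaches the top on its $N$-th step and that rewards are indexed from 0; this indexing is a convention chosen here, not one stated on the slide.

```latex
% Scheme 1: reward -1 on each of the N steps taken to reach the top
G_1(N) = -\sum_{i=0}^{N-1} \gamma^{i} = -\frac{1-\gamma^{N}}{1-\gamma}

% Scheme 2: reward 1 on the step that reaches the top, 0 on all earlier steps
G_2(N) = \gamma^{N-1}
```

For $0 < \gamma < 1$, both $G_1(N)$ and $G_2(N)$ strictly decrease as $N$ grows, so the policy with the largest eventual reward is the one that reaches the top in the fewest steps.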

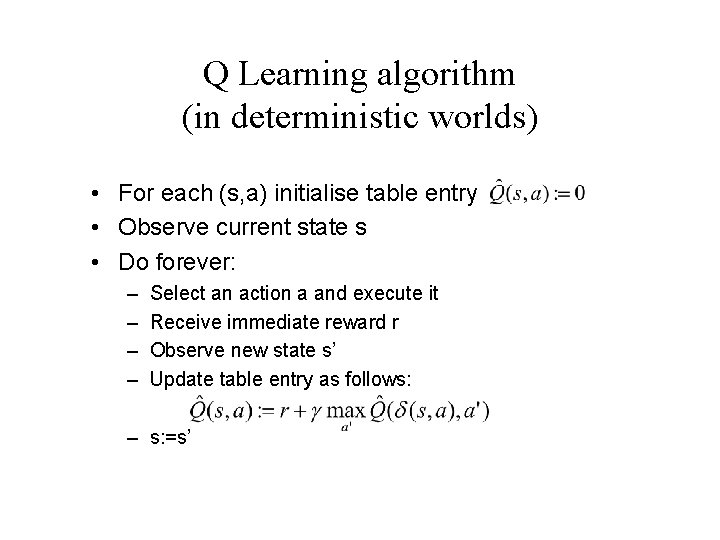

Q Learning algorithm (in deterministic worlds)
• For each $(s, a)$ initialise table entry $\hat{Q}(s, a) \leftarrow 0$
• Observe current state $s$
• Do forever:
  – Select an action $a$ and execute it
  – Receive immediate reward $r$
  – Observe new state $s'$
  – Update table entry as follows: $\hat{Q}(s, a) \leftarrow r + \gamma \max_{a'} \hat{Q}(s', a')$
  – $s := s'$
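The loop above can be sketched in Python on a toy problem. Everything specific here is an assumption made for illustration and is not from the slides: the five-state corridor, the reward of 100 for entering the goal, the discount factor 0.9, purely random action selection, and splitting the "do forever" loop into episodes that restart from a random state.

```python
import random

N_STATES = 5          # hypothetical corridor: states 0..4, state 4 is the goal
ACTIONS = (-1, +1)    # move left or move right
GOAL = N_STATES - 1
GAMMA = 0.9           # assumed discount factor


def step(s, a):
    """Deterministic world: return (delta(s, a), r(s, a))."""
    s_next = min(max(s + a, 0), GOAL)            # walls at both ends
    reward = 100.0 if s_next == GOAL else 0.0    # reward only on entering the goal
    return s_next, reward


def q_learning(episodes=200, seed=0):
    rng = random.Random(seed)
    # Initialise every table entry Q(s, a) to zero.
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        s = rng.randrange(GOAL)                  # random non-goal start state
        while s != GOAL:
            a = rng.choice(ACTIONS)              # explore uniformly at random
            s_next, r = step(s, a)
            # Deterministic Q-learning update: Q(s,a) <- r + gamma * max_a' Q(s',a')
            q[(s, a)] = r + GAMMA * max(q[(s_next, b)] for b in ACTIONS)
            s = s_next
    return q


q = q_learning()
for s in range(GOAL):
    best = max(ACTIONS, key=lambda a: q[(s, a)])
    print(s, best, round(q[(s, best)], 2))
```

Random exploration is used only to keep the sketch short. The update itself is independent of how actions are chosen, provided every state-action pair keeps being visited.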

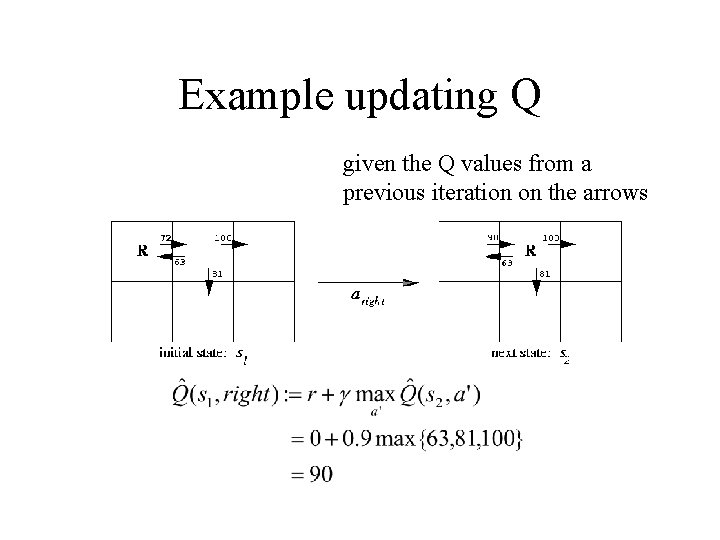

Example: updating Q, given the Q values from a previous iteration (shown on the arrows)

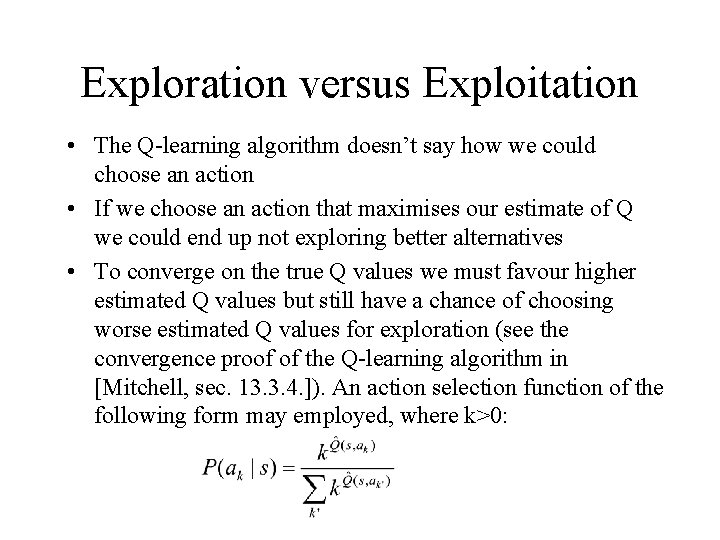

Exploration versus Exploitation
• The Q-learning algorithm doesn’t say how we should choose an action
• If we always choose the action that maximises our current estimate of Q, we could end up never exploring better alternatives
• To converge on the true Q values we must favour actions with higher estimated Q values but still have a chance of choosing actions with lower estimated Q values, for exploration (see the convergence proof of the Q-learning algorithm in [Mitchell, sec. 13.3.4]). An action selection function of the following form may be employed, where $k > 0$:

$P(a_i \mid s) = \dfrac{k^{\hat{Q}(s, a_i)}}{\sum_j k^{\hat{Q}(s, a_j)}}$
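One common selection rule of this kind, given in Mitchell's textbook, picks action $a_i$ with probability proportional to $k^{\hat{Q}(s, a_i)}$. The sketch below implements that rule. The function names are hypothetical, and the direct use of `k ** q` is numerically naive: it can overflow for large Q values.

```python
import random


def selection_probabilities(q_values, k):
    """P(a_i | s) = k**Q(s, a_i) / sum_j k**Q(s, a_j), for k > 0."""
    if k <= 0:
        raise ValueError("k must be positive")
    weights = [k ** q for q in q_values]
    total = sum(weights)
    return [w / total for w in weights]


def choose_action(q_values, k, rng=random):
    """Sample an action index according to the probabilities above."""
    probs = selection_probabilities(q_values, k)
    return rng.choices(range(len(q_values)), weights=probs, k=1)[0]


# Made-up Q estimates for three actions in some state.
q_estimates = [0.0, 1.0, 2.0]
print(selection_probabilities(q_estimates, k=1.0))   # k = 1: uniform exploration
print(selection_probabilities(q_estimates, k=10.0))  # larger k: favours high Q
print(choose_action(q_estimates, k=2.0, rng=random.Random(0)))
```

With $k = 1$ every action is equally likely. As $k$ grows, the choice concentrates on the action with the highest estimated Q, so $k$ sets the balance between exploration and exploitation.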

Summary
• Reinforcement learning is suitable for learning in uncertain environments where rewards may be delayed and subject to chance
• The goal of a reinforcement learning program is to maximise the eventual reward
• Q-learning is a form of reinforcement learning that doesn’t require the learner to have prior knowledge of how its actions affect the environment

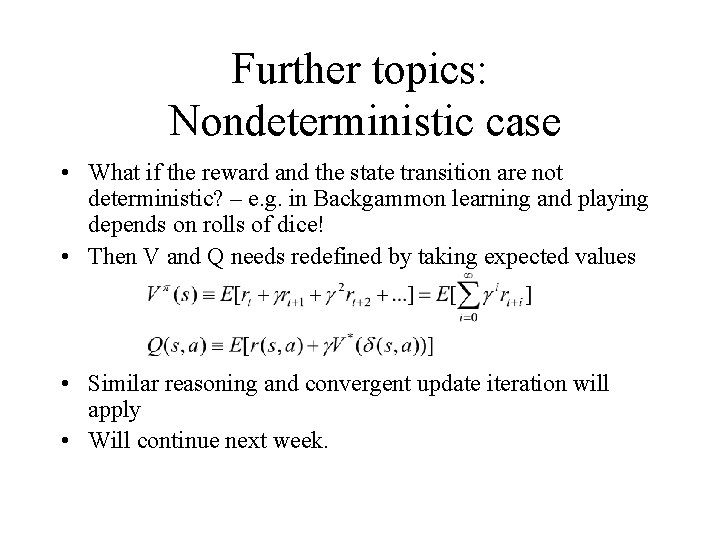

Further topics: Nondeterministic case
• What if the reward and the state transition are not deterministic?
  – e.g. in Backgammon, learning and playing depend on rolls of dice!
• Then V and Q need to be redefined by taking expected values
• Similar reasoning and a convergent update iteration will apply
• Will continue next week.
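As a preview, the standard textbook form of these redefinitions (following Mitchell, sec. 13.4) is sketched below. The notation is an assumption carried over from that text and may differ from what next week's lecture uses: $P(s' \mid s, a)$ is the transition probability and $\alpha_n$ is a learning rate that decays with the number of visits to $(s, a)$.

```latex
% Value of a policy: expected discounted sum of rewards
V^{\pi}(s_t) \equiv E\Big[\sum_{i=0}^{\infty} \gamma^{i} r_{t+i}\Big]

% Q function: expected immediate reward plus discounted expected value of the successor state
Q(s, a) \equiv E[r(s, a)] + \gamma \sum_{s'} P(s' \mid s, a) \max_{a'} Q(s', a')

% Update rule: a decaying weighted average instead of a plain overwrite
\hat{Q}_n(s, a) \leftarrow (1 - \alpha_n)\,\hat{Q}_{n-1}(s, a)
    + \alpha_n \Big[ r + \gamma \max_{a'} \hat{Q}_{n-1}(s', a') \Big],
\qquad \alpha_n = \frac{1}{1 + \mathrm{visits}_n(s, a)}
```

The averaging matters because a single observed reward or transition is now only one sample of a random outcome, so overwriting the table entry with it would make the estimate jump around instead of settling.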
