Refresher in inferential statistics, tim.bates@ed.ac.uk

+ Refresher in inferential statistics
  - tim.bates@ed.ac.uk
  - http://www.psy.ed.ac.uk/events/research_seminars/psychstats

+ Resources
  - http://www.statmethods.net

+ Our basic question…
  - Did something occur?
  - Importantly, did what we predicted would occur actually transpire? I.e., is the world as we predicted?
  - Why does this require statistics?

+ Is Breastfeeding good for Baby’s brains?

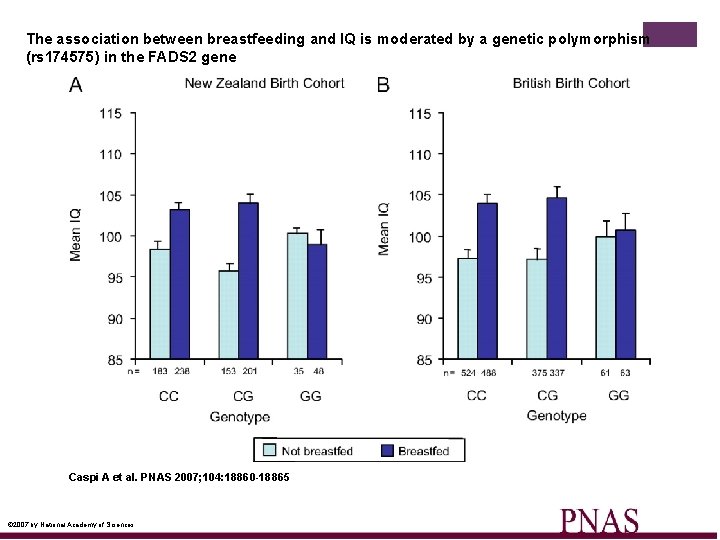

The association between breastfeeding and IQ is moderated by a genetic polymorphism (rs174575) in the FADS2 gene. Caspi A et al. PNAS 2007; 104: 18860-18865. © 2007 by National Academy of Sciences

+ Overview
  - Hypothesis testing
  - p-values
  - Type I vs. Type II errors
  - Power
  - Correlation
  - Fisher’s exact test
  - T-test
  - Linear regression
  - Non-parametric statistics (mostly for you to go over in your own time)

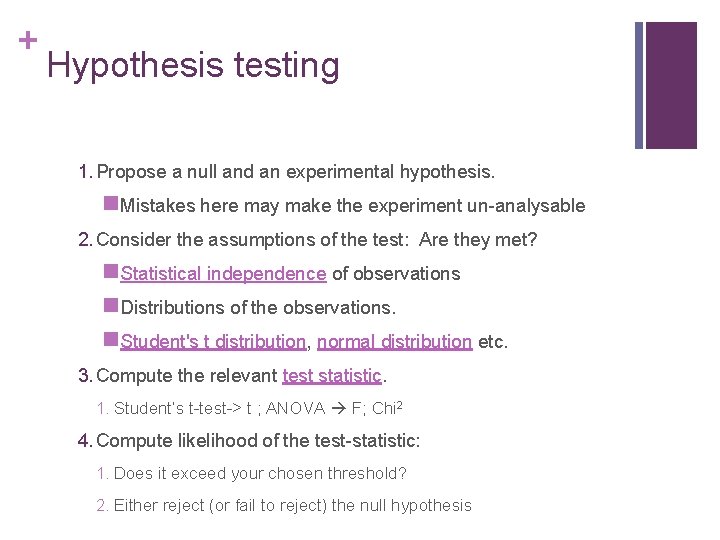

+ Hypothesis testing
  1. Propose a null and an experimental hypothesis.
     - Mistakes here may make the experiment un-analysable.
  2. Consider the assumptions of the test: are they met?
     - Statistical independence of observations
     - Distributions of the observations
       - Student's t distribution, normal distribution, etc.
  3. Compute the relevant test statistic.
     - Student’s t-test → t; ANOVA → F; chi-squared → χ²
  4. Compute the likelihood of the test statistic.
     - Does it exceed your chosen threshold?
     - Either reject or fail to reject the null hypothesis.

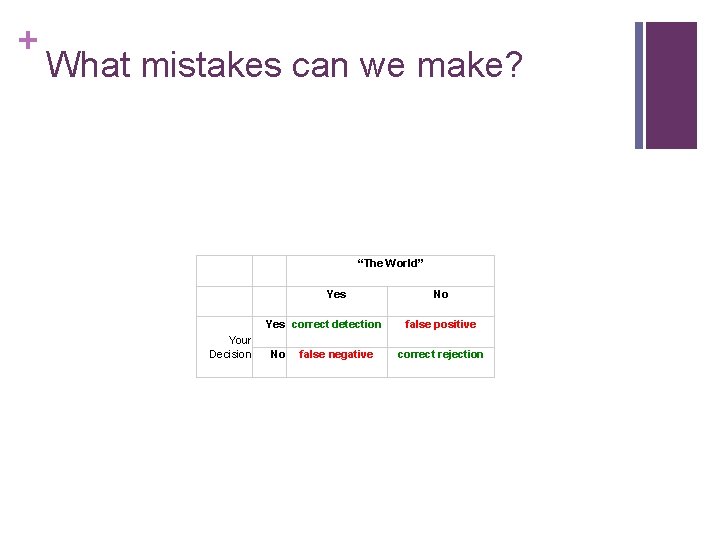

+ What mistakes can we make?

| Your decision | “The World”: Yes | “The World”: No |
|---|---|---|
| Yes | correct detection | false positive |
| No | false negative | correct rejection |

+ Starting to make inferences… the Binomial
  - Toss a coin

+ Dropping lots of coins...
  - Pachinko

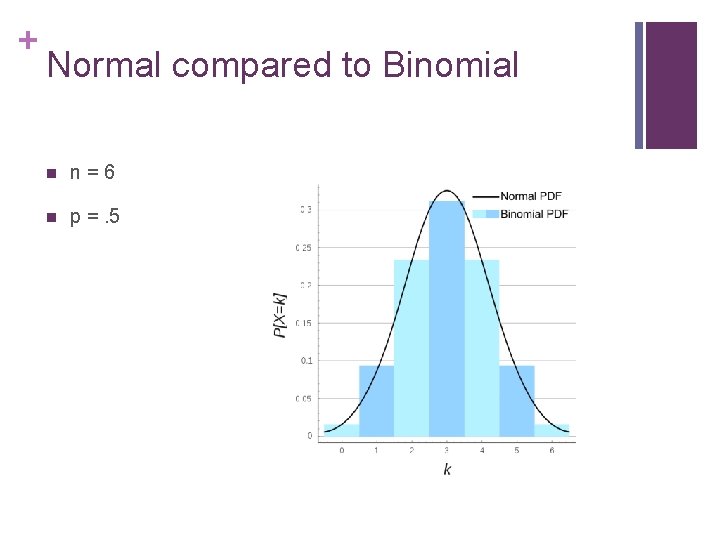

+ Normal compared to Binomial
  - n = 6
  - p = .5

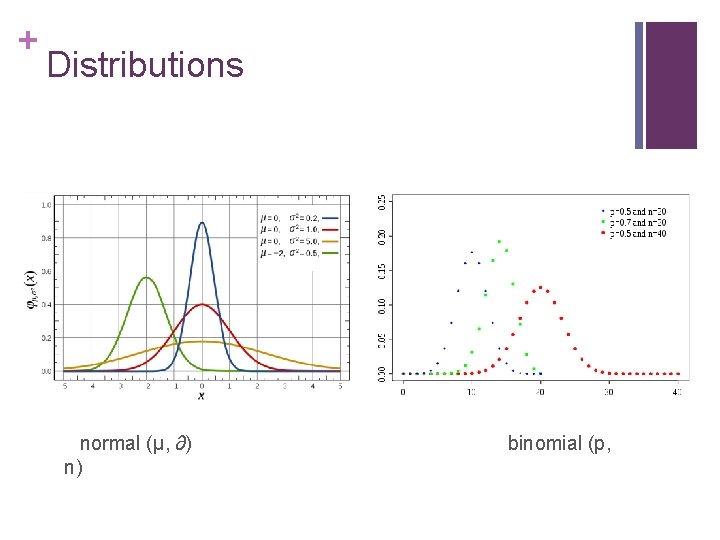

+ Distributions
  - normal (µ, σ)
  - binomial (p, n)

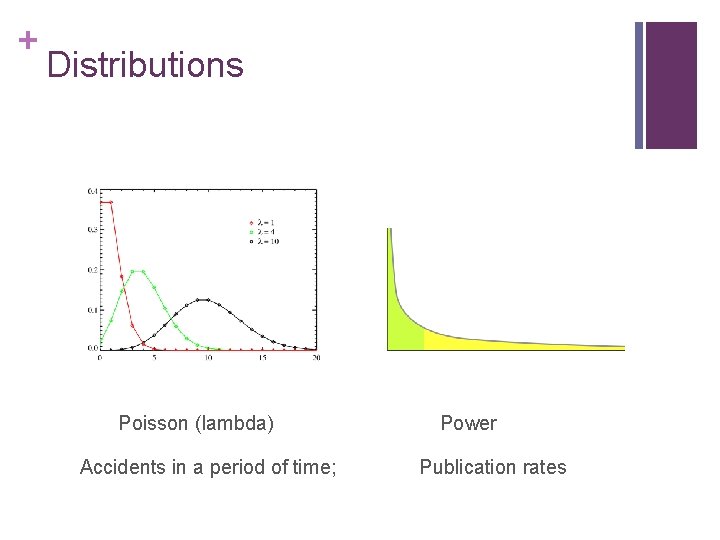

+ Distributions
  - Poisson (lambda): accidents in a period of time
  - Power: publication rates

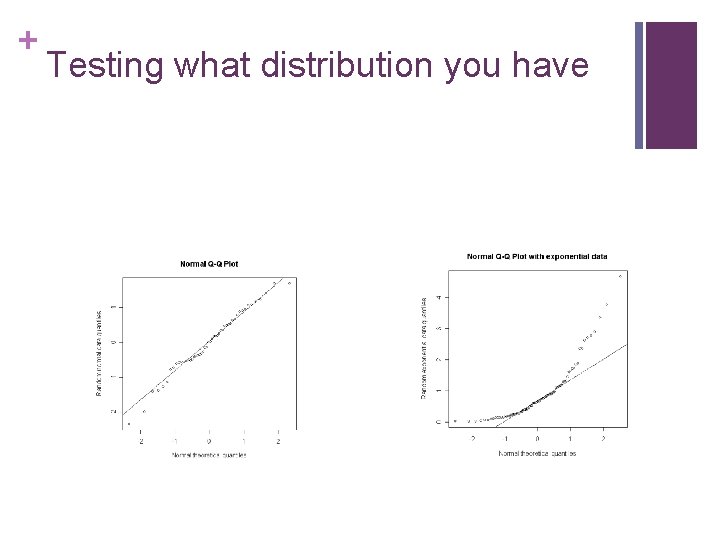

+ Testing what distribution you have

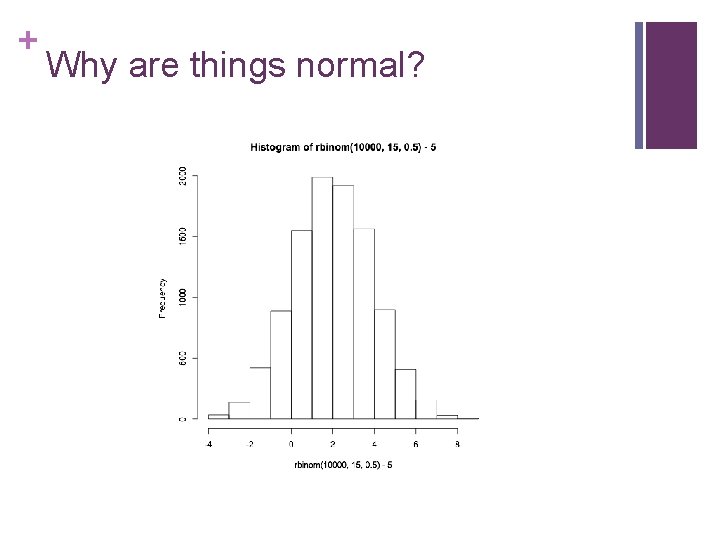

+ Why are things normal?

+ Central limit theorem
  - The mean of a large number of independent random variables is distributed approximately normally.
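
The theorem can be checked by simulation. The following is a minimal sketch, not part of the original slides: the exponential source distribution, the sample size of 50, and the 5000 replications are all arbitrary assumptions chosen for illustration.

```r
# Sketch: means of samples from a skewed (exponential) distribution
# behave approximately like a normal variable.
set.seed(1)                      # arbitrary seed, for reproducibility only
n_per_sample <- 50               # observations averaged in each sample (assumed)
n_samples    <- 5000             # number of sample means to simulate (assumed)

sample_means <- replicate(n_samples, mean(rexp(n_per_sample, rate = 1)))

mean(sample_means)               # close to the population mean, 1
sd(sample_means)                 # close to 1 / sqrt(n_per_sample)
```

Plotting `hist(sample_means)` should show a roughly bell-shaped histogram even though the individual observations are strongly skewed.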

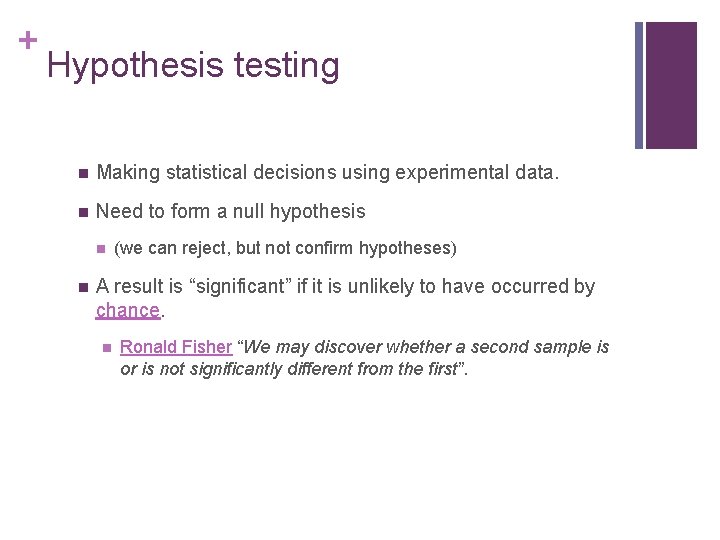

+ Hypothesis testing
  - Making statistical decisions using experimental data.
  - Need to form a null hypothesis
    - (we can reject, but not confirm, hypotheses)
  - A result is “significant” if it is unlikely to have occurred by chance.
  - Ronald Fisher: “We may discover whether a second sample is or is not significantly different from the first”.

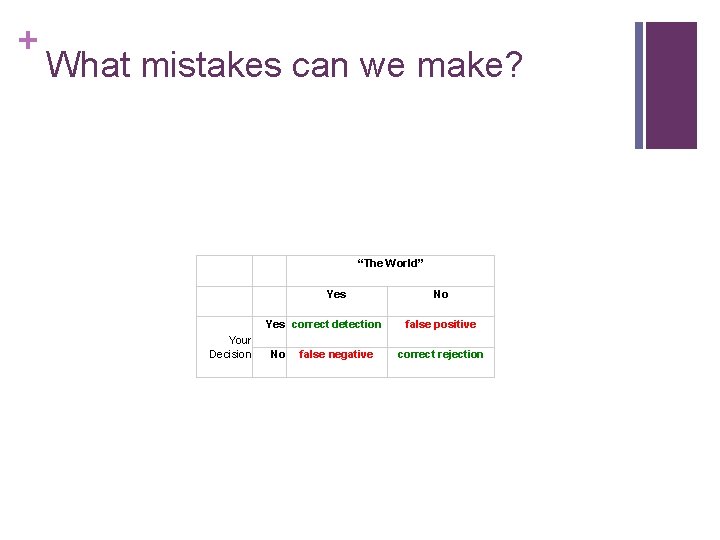

+ What mistakes can we make?

| Your decision | “The World”: Yes | “The World”: No |
|---|---|---|
| Yes | correct detection | false positive |
| No | false negative | correct rejection |

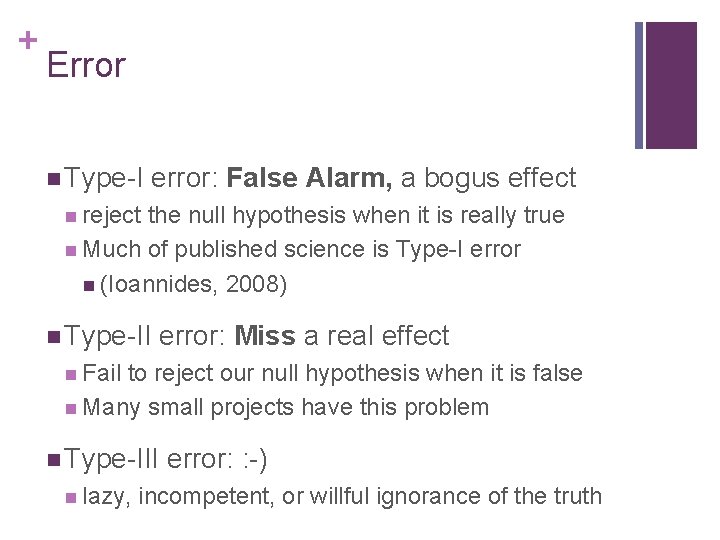

+ Error
  - Type-I error: false alarm, a bogus effect
    - Reject the null hypothesis when it is really true
    - Much of published science is Type-I error (Ioannidis, 2008)
  - Type-II error: miss a real effect
    - Fail to reject our null hypothesis when it is false
    - Many small projects have this problem
  - Type-III error :-)
    - Lazy, incompetent, or willful ignorance of the truth

+ p-values
  - Almost any difference (a count, a difference in means, a difference in variances) can be found with some probability, irrespective of the true situation.
  - All we can do is set a threshold likelihood for deciding that an event occurred by chance.
  - p = .05: 1 time in 20, the result would be as large by chance when the null hypothesis is true.
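
The "1 time in 20" reading can be illustrated by simulation. This is a sketch under assumed settings (two groups of 20 normal observations with no true difference, 4000 simulated experiments); none of these numbers come from the slides.

```r
# Sketch: when the null hypothesis is true, about 5% of experiments
# still give p < .05 (the Type-I error rate).
set.seed(2)                      # arbitrary seed
n_experiments <- 4000            # number of simulated experiments (assumed)

# Each experiment compares two samples drawn from the SAME population.
p_values <- replicate(n_experiments,
                      t.test(rnorm(20), rnorm(20))$p.value)

false_alarm_rate <- mean(p_values < 0.05)
false_alarm_rate                 # should land near 0.05
```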

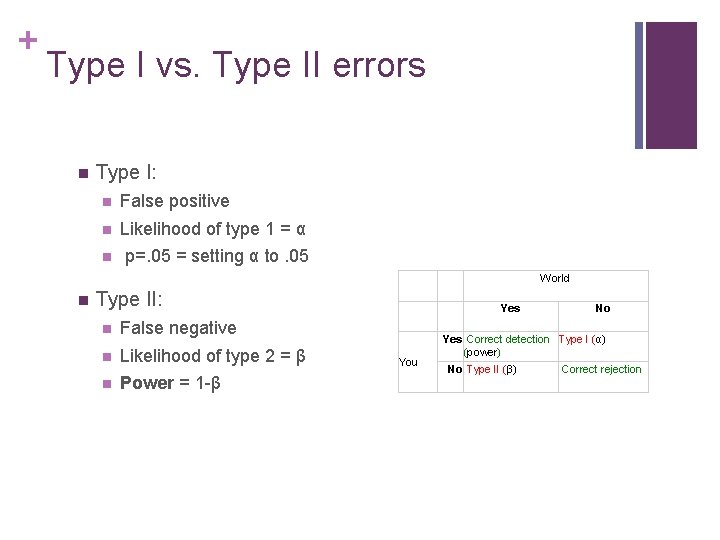

+ Type I vs. Type II errors
  - Type I:
    - False positive
    - Likelihood of a Type I error = α
    - p = .05 means setting α to .05
  - Type II:
    - False negative
    - Likelihood of a Type II error = β
    - Power = 1 − β

| You | World: Yes | World: No |
|---|---|---|
| Yes | Correct detection (power) | Type I (α) |
| No | Type II (β) | Correct rejection |

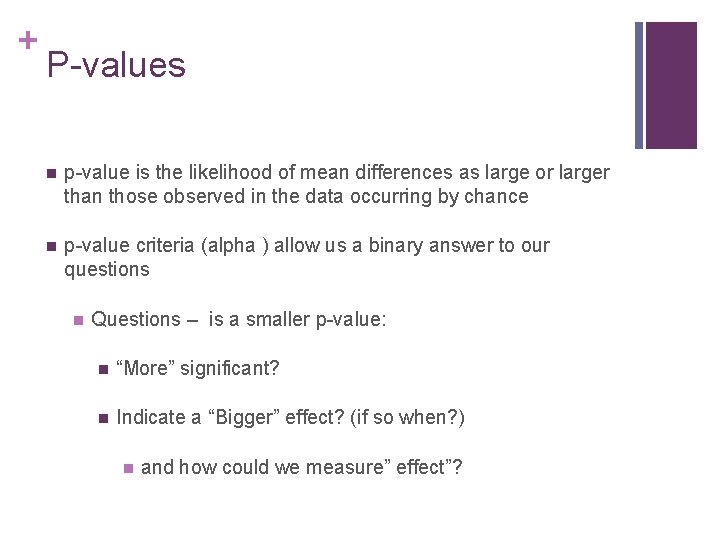

+ P-values
  - The p-value is the likelihood of mean differences as large as or larger than those observed in the data occurring by chance when the null hypothesis is true
  - p-value criteria (alpha) allow us a binary answer to our questions
  - Questions: is a smaller p-value
    - “More” significant?
    - An indication of a “bigger” effect? (If so, when?)
    - And how could we measure “effect”?

+ Compare these two statements
  - It’s ‘significant’, but how big is the effect?
  - I can see it’s big: but what is the p-value?

+ Confidence Intervals
  - Range of values within a given likelihood threshold (for instance 95%)
  - Closely related to p-values
    - p threshold (α) = 1 − CI level
    - i.e., if p < .05, the 95% CI will not include 0 (no difference)
  - Would you rather have a CI or a p-value?
  - Why? What is an effect size?

+ P and CI
  - You can’t go from p to CI!
  - You can go from CI to p
  - At p = .05, 95% CIs will overlap less than 25%
  - At p = .01, the 95% CI bars just touch

+ Units of a Confidence Interval
  - Unlike p, CIs are given in the units of the DV
  - Cumming and Finch (2005)
  - BMI in people on a low carb diet might be 19-23 kg/m²

Cumming, G. and Finch, S. (2005). Inference by eye: confidence intervals and how to read pictures of data. American Psychologist, 60: 170-80. PMID: 15740449

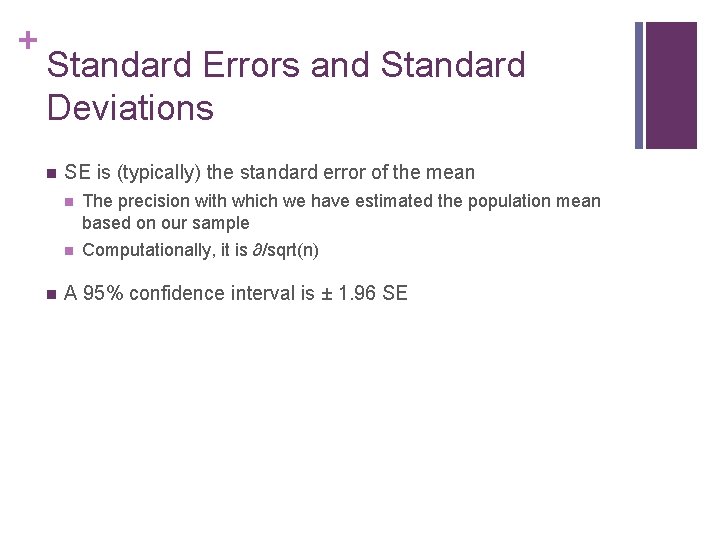

+ Standard Errors and Standard Deviations
  - SE is (typically) the standard error of the mean
  - The precision with which we have estimated the population mean based on our sample
  - Computationally, it is σ/sqrt(n)
  - A 95% confidence interval is the mean ± 1.96 SE
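
A short sketch of the computation, using made-up data (the scores in `x` are hypothetical and simulated only for illustration). It also shows the t-based interval, which is slightly wider than the ± 1.96 SE approximation because σ is estimated from the sample.

```r
# Sketch: standard error of the mean and a 95% confidence interval.
set.seed(3)                              # arbitrary seed
x  <- rnorm(100, mean = 50, sd = 10)     # hypothetical scores
n  <- length(x)

se <- sd(x) / sqrt(n)                    # standard error of the mean

# Normal approximation: mean +/- 1.96 SE
ci_normal <- mean(x) + c(-1, 1) * 1.96 * se

# t-based interval: uses the t quantile with n - 1 degrees of freedom
ci_t <- mean(x) + c(-1, 1) * qt(0.975, df = n - 1) * se

ci_normal
ci_t                                     # matches t.test(x)$conf.int
```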

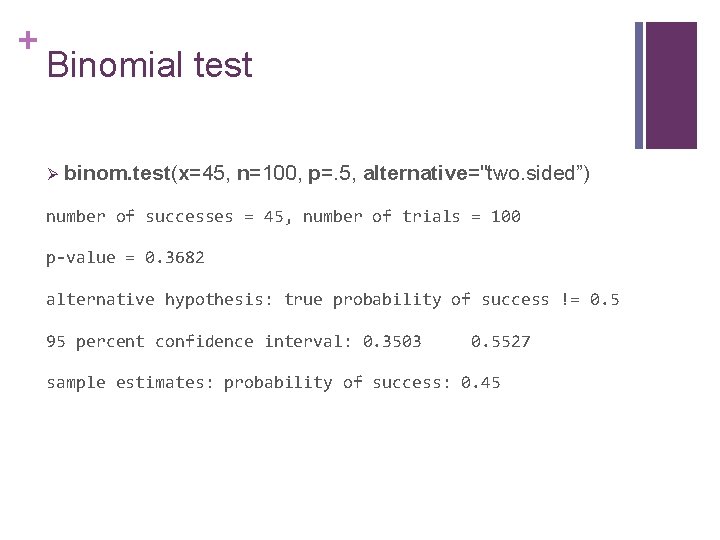

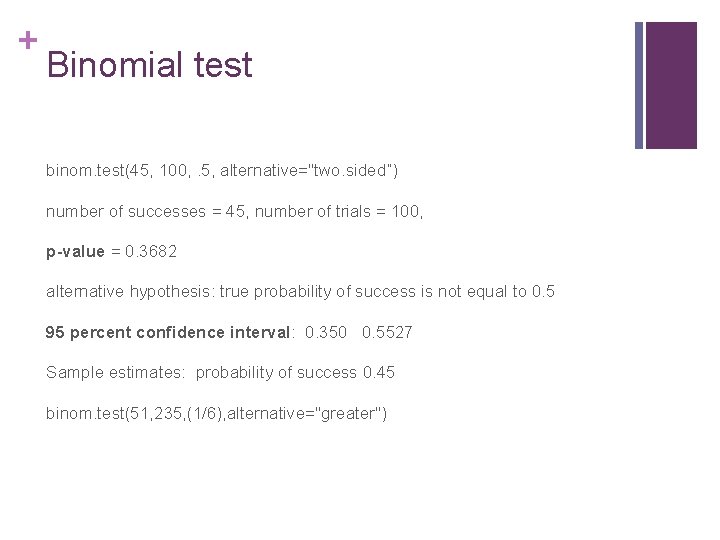

+ Example: coin toss
  - Random sample of 100 coin tosses, of a coin believed to be fair
  - We observed 45 heads and 55 tails: is the coin fair?

+ Binomial test

      > binom.test(x = 45, n = 100, p = .5, alternative = "two.sided")
      number of successes = 45, number of trials = 100, p-value = 0.3682
      alternative hypothesis: true probability of success != 0.5
      95 percent confidence interval: 0.3503 0.5527
      sample estimates: probability of success: 0.45
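
To see where that p-value comes from, the sketch below sums the probabilities of every outcome that is no more likely than the observed one under a fair coin. The variable names are illustrative, and the small tolerance is an assumption added only to guard against floating-point ties.

```r
# Sketch: exact two-sided binomial p-value for 45 heads in 100 tosses.
n_tosses <- 100
heads    <- 45

# Probability of every possible number of heads (0..100) under a fair coin
probs    <- dbinom(0:n_tosses, size = n_tosses, prob = 0.5)
observed <- dbinom(heads, size = n_tosses, prob = 0.5)

# Sum the probabilities of all outcomes at most as likely as the observed one
p_two_sided <- sum(probs[probs <= observed * (1 + 1e-7)])
round(p_two_sided, 4)            # 0.3682, as reported by binom.test()
```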

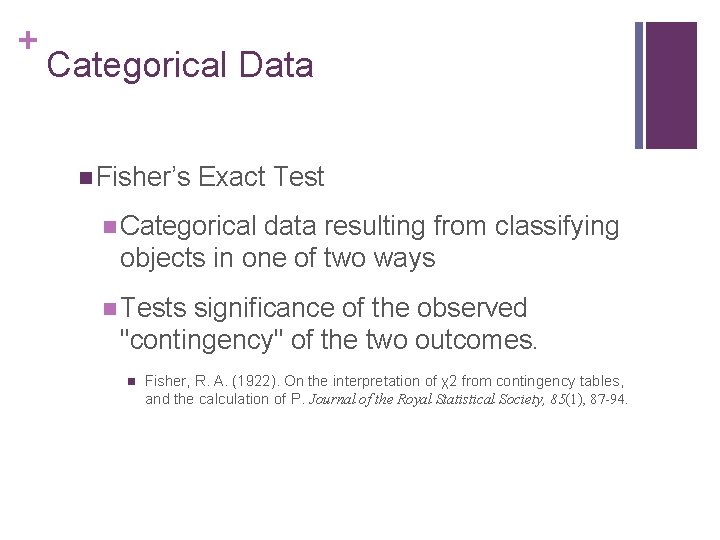

+ Categorical Data
  - Fisher’s Exact Test
  - Categorical data resulting from classifying objects in one of two ways
  - Tests significance of the observed "contingency" of the two outcomes.
  - Fisher, R. A. (1922). On the interpretation of χ² from contingency tables, and the calculation of P. Journal of the Royal Statistical Society, 85(1), 87-94.

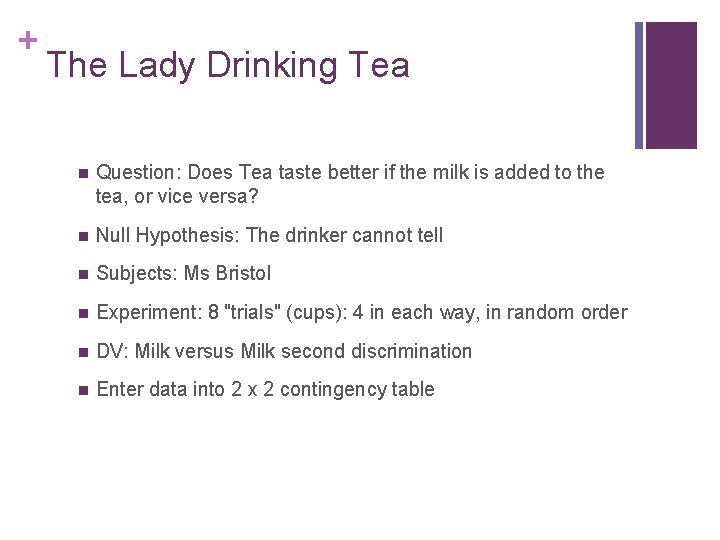

+ The Lady Drinking Tea
  - Question: Does tea taste better if the milk is added to the tea, or vice versa?
  - Null hypothesis: The drinker cannot tell
  - Subjects: Ms Bristol
  - Experiment: 8 "trials" (cups): 4 in each way, in random order
  - DV: Milk first versus milk second discrimination
  - Enter data into a 2 x 2 contingency table

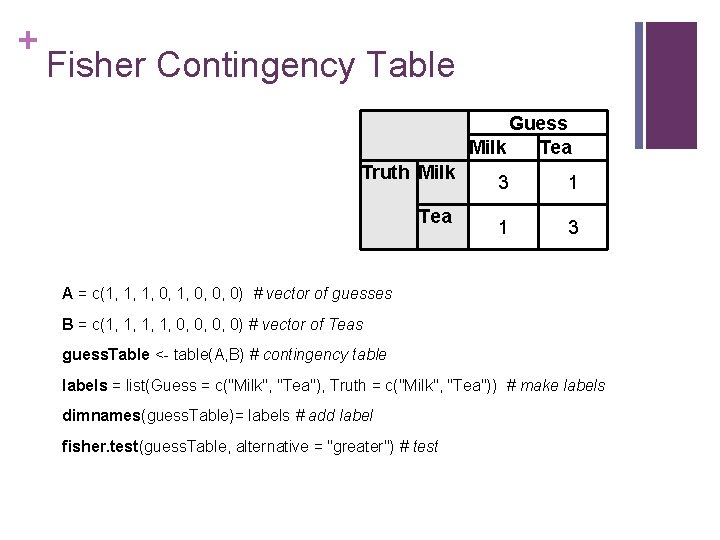

+ Fisher Contingency Table

| Guess | Truth: Milk | Truth: Tea |
|---|---|---|
| Milk | 3 | 1 |
| Tea | 1 | 3 |

    # 8 cups coded 0 = milk first, 1 = tea first, chosen to reproduce the table above
    A = c(0, 0, 0, 1, 0, 1, 1, 1)  # vector of guesses
    B = c(0, 0, 0, 0, 1, 1, 1, 1)  # vector of teas (truth)
    guess.Table <- table(A, B)  # contingency table
    labels = list(Guess = c("Milk", "Tea"), Truth = c("Milk", "Tea"))  # make labels
    dimnames(guess.Table) = labels  # add labels
    fisher.test(guess.Table, alternative = "greater")  # test

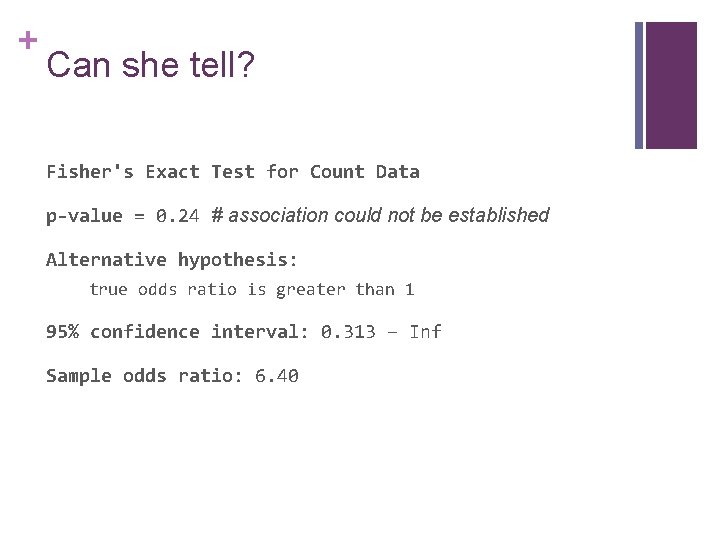

+ Can she tell?

      Fisher's Exact Test for Count Data
      p-value = 0.24  # association could not be established
      Alternative hypothesis: true odds ratio is greater than 1
      95% confidence interval: 0.313 – Inf
      Sample odds ratio: 6.40
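
The one-sided p-value can also be worked out directly from the hypergeometric distribution: with 4 milk-first cups among 8 and 4 cups labelled "milk first", it is the chance of getting 3 or 4 of them right by guessing. The sketch below assumes the 3/1/1/3 table from the previous slide; the object names are illustrative.

```r
# Sketch: Fisher's exact p-value as a hypergeometric tail probability.
# 4 milk-first cups (m), 4 tea-first cups (n), 4 cups called "milk first" (k).
p_by_hand <- sum(dhyper(3:4, m = 4, n = 4, k = 4))   # P(3 or 4 correct) = 17/70

tea <- matrix(c(3, 1, 1, 3), nrow = 2,
              dimnames = list(Guess = c("Milk", "Tea"),
                              Truth = c("Milk", "Tea")))
p_fisher <- fisher.test(tea, alternative = "greater")$p.value

p_by_hand    # about 0.243
p_fisher     # same value
```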

+ What if we have two continuous variables? Are they related?
  - Q: If you have continuous depression scores and cut-off scores, which is more powerful?

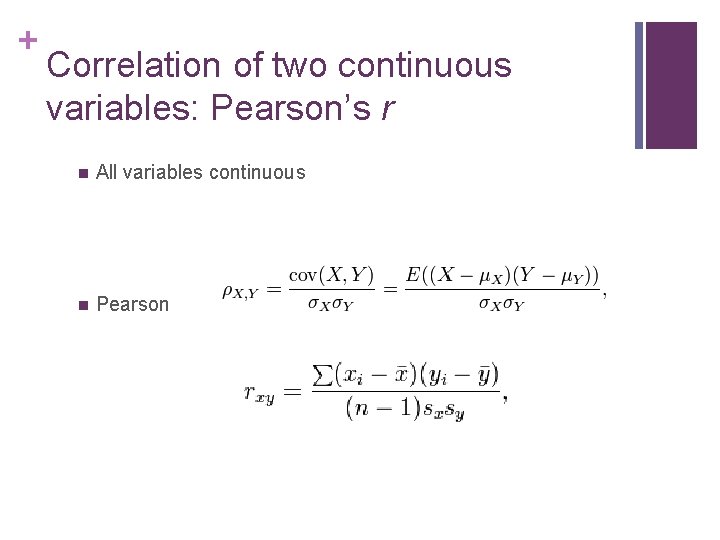

+ Correlation of two continuous variables: Pearson’s r
  - All variables continuous
  - Pearson
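
As a sketch of what Pearson’s r computes, the code below uses the standard definition (covariation of x and y divided by the product of their spreads) and compares it with R's `cor()`. The data are simulated and purely hypothetical.

```r
# Sketch: Pearson's r from its definition, compared with cor().
set.seed(4)                      # arbitrary seed
x <- rnorm(50)                   # hypothetical variable 1
y <- 0.5 * x + rnorm(50)         # hypothetical variable 2, related to x

dx <- x - mean(x)                # deviations from the mean
dy <- y - mean(y)

r_by_hand <- sum(dx * dy) / sqrt(sum(dx^2) * sum(dy^2))
r_builtin <- cor(x, y)           # method = "pearson" is the default

c(r_by_hand, r_builtin)          # identical up to rounding
```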

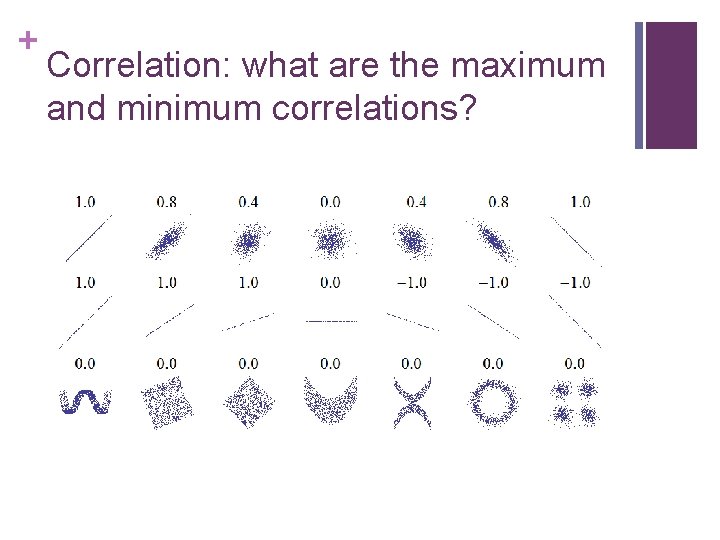

+ Correlation: what are the maximum and minimum correlations?

+ Power (1 − β)
  - Probability that a test will correctly reject the null hypothesis.
  - Complement of the false negative rate, β
    - False negative = missing a real effect
  - 1 − β = p(correctly reject a false null hypothesis)

+ Power and how to get it
  - Probability of rejecting the null hypothesis when it is false
  - Whence comes power?

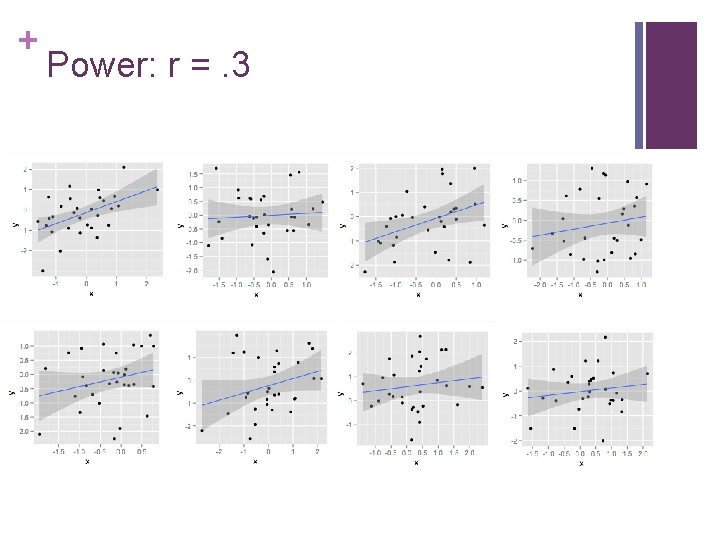

+ Power applied to a correlation
  - Samples of n = 30 from a population in which two normal traits correlate 0.3

      library(MASS)     # provides mvrnorm()
      library(ggplot2)  # provides qplot()
      r = 0.3
      xy = mvrnorm(n = 30, mu = rep(0, 2), Sigma = matrix(c(1, r, r, 1), nrow = 2, ncol = 2))
      xy = data.frame(xy)
      names(xy) <- c("x", "y")
      qplot(x, y, data = xy, geom = c("point", "smooth"), method = lm)

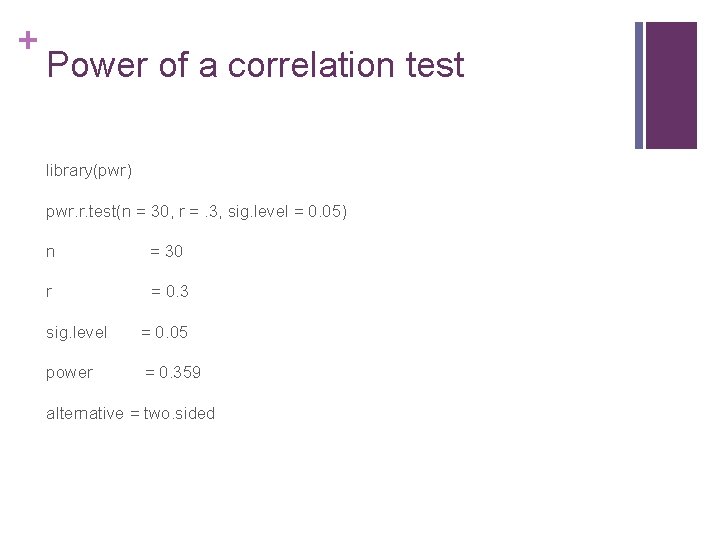

+ Power of a correlation test

      library(pwr)
      pwr.r.test(n = 30, r = .3, sig.level = 0.05)

      n = 30
      r = 0.3
      sig.level = 0.05
      power = 0.359
      alternative = two.sided
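
Power can also be estimated by brute force: simulate many samples from a population with a true correlation of 0.3 and count how often the test rejects. This is a rough sketch in base R only (it does not use `pwr` or `MASS`); the number of simulations and the helper name `one_p_value` are assumptions, and the estimate will differ slightly from the analytic figure.

```r
# Sketch: simulation-based power for a correlation test, n = 30, true r = 0.3.
set.seed(5)                      # arbitrary seed
r      <- 0.3                    # population correlation
n      <- 30                     # sample size per study
n_sims <- 2000                   # number of simulated studies (assumed)

# Hypothetical helper: one simulated study, returning its p-value
one_p_value <- function() {
  x <- rnorm(n)
  y <- r * x + sqrt(1 - r^2) * rnorm(n)   # gives cor(x, y) = r in the population
  cor.test(x, y)$p.value
}

p_values  <- replicate(n_sims, one_p_value())
power_hat <- mean(p_values < 0.05)        # proportion of studies that reject
power_hat
```

Most simulated studies fail to reach significance even though the effect is real, which is the point of the slide: n = 30 is underpowered for r = .3.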

+ Power: r = .3

+ t-test
  - When we wish to compare means in a sample, we must estimate the standard deviation from the sample
  - Student's t-distribution describes the standardized mean of a small sample from a normally distributed population when the standard deviation is estimated from that sample

+ t-distribution function
  - t is defined as the ratio t = Z / sqrt(V/ν)
  - Z is normally distributed with expected value 0 and variance 1
  - V has a chi-square distribution with ν degrees of freedom
  - Z and V are independent
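
The ratio definition can be checked numerically. In this sketch the degrees of freedom (5) and the number of draws are arbitrary choices; the simulated 97.5th percentile should sit close to the theoretical one from `qt()`.

```r
# Sketch: build t-distributed values from Z / sqrt(V / nu) and compare with qt().
set.seed(6)                      # arbitrary seed
nu      <- 5                     # degrees of freedom (assumed for illustration)
n_draws <- 100000                # number of simulated values (assumed)

Z <- rnorm(n_draws)              # standard normal
V <- rchisq(n_draws, df = nu)    # chi-square with nu df, independent of Z

t_sim <- Z / sqrt(V / nu)

q_sim    <- unname(quantile(t_sim, 0.975))   # simulated 97.5th percentile
q_theory <- qt(0.975, df = nu)               # theoretical value
c(q_sim, q_theory)
```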

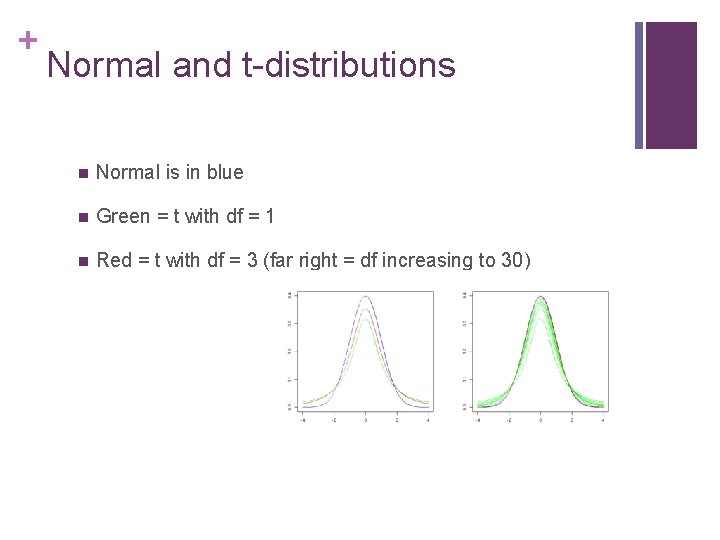

+ Normal and t-distributions
  - Normal is in blue
  - Green = t with df = 1
  - Red = t with df = 3
  - (far right = df increasing to 30)

+ Power of t-test

      power.t.test(n = 15, delta = .5)

      Two-sample t test power calculation
      n = 15; delta = 0.5; sd = 1; sig.level = 0.05
      power = 0.26
      alternative = two.sided
      NOTE: n is number in *each* group

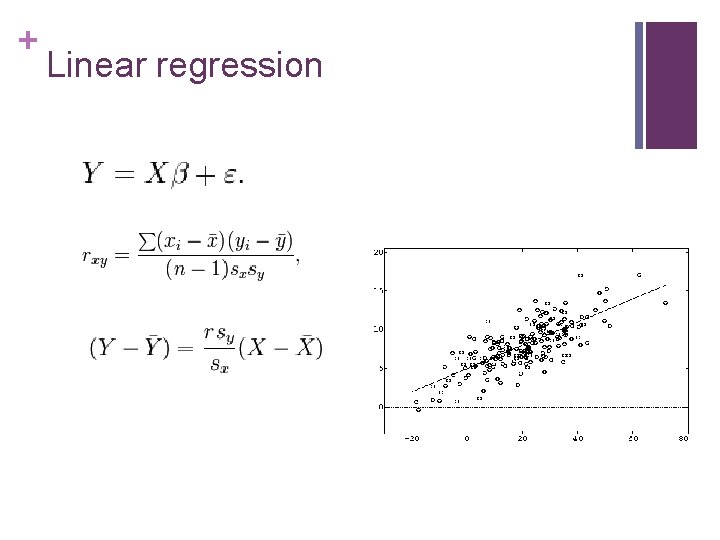

+ Linear regression

+ Linear regression

      fit = lm(y ~ x1 + x2 + x3, data = mydata)
      summary(fit)                 # show results
      anova(fit)                   # anova table
      coefficients(fit)            # model coefficients
      confint(fit, level = 0.95)   # CIs for model parameters
      fitted(fit)                  # predicted values
      residuals(fit)               # residuals
      influence(fit)               # regression diagnostics
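
The calls above need a data frame to run on. The sketch below simulates a hypothetical `mydata` with known coefficients (intercept 1, slopes 2, −1, and 0; all invented for illustration) so that the fitted values can be compared with the truth.

```r
# Sketch: a runnable version of the regression calls on simulated data.
set.seed(7)                      # arbitrary seed
n <- 200                         # hypothetical sample size

mydata <- data.frame(x1 = rnorm(n), x2 = rnorm(n), x3 = rnorm(n))
# True model (assumed): y = 1 + 2*x1 - 1*x2 + 0*x3 + noise
mydata$y <- 1 + 2 * mydata$x1 - 1 * mydata$x2 + rnorm(n)

fit <- lm(y ~ x1 + x2 + x3, data = mydata)

summary(fit)                     # coefficients, standard errors, R-squared
confint(fit, level = 0.95)       # the CI for x3 should usually include 0
```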

+ Nonparametric Statistics
  - Timothy C. Bates, tim.bates@ed.ac.uk

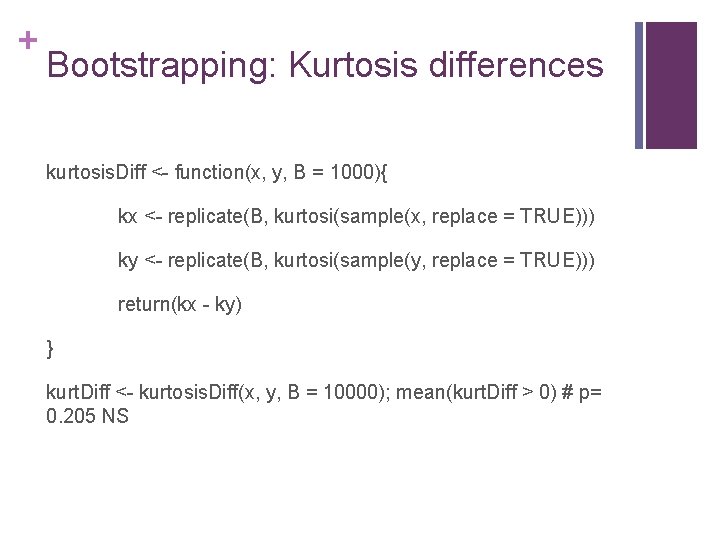

+ Bootstrapping: Kurtosis differences

      library(psych)  # provides kurtosi()
      # Bootstrap the difference in kurtosis between samples x and y
      kurtosis.Diff <- function(x, y, B = 1000) {
        kx <- replicate(B, kurtosi(sample(x, replace = TRUE)))
        ky <- replicate(B, kurtosi(sample(y, replace = TRUE)))
        return(kx - ky)
      }
      kurt.Diff <- kurtosis.Diff(x, y, B = 10000)
      mean(kurt.Diff > 0)  # p = 0.205, NS

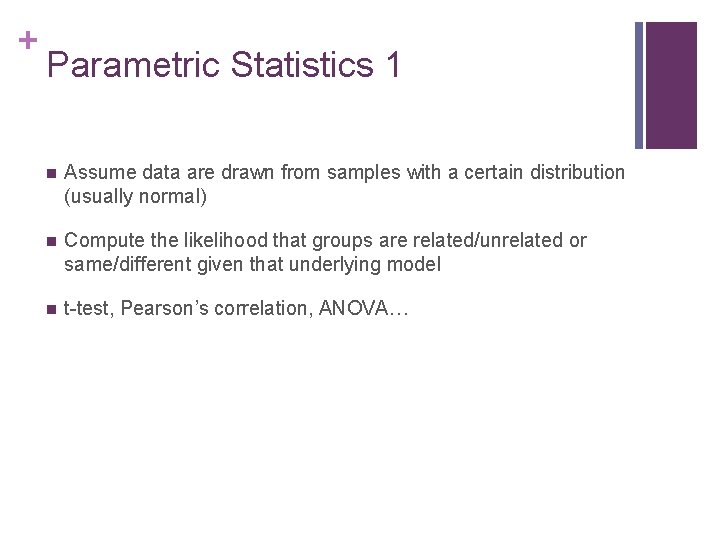

+ Parametric Statistics 1
  - Assume data are drawn from populations with a certain distribution (usually normal)
  - Compute the likelihood that groups are related/unrelated or same/different given that underlying model
  - t-test, Pearson’s correlation, ANOVA…

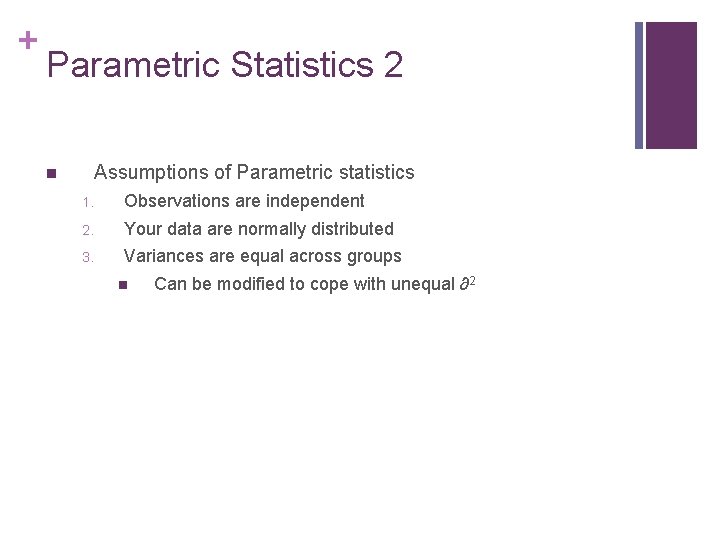

+ Parametric Statistics 2
  - Assumptions of parametric statistics:
    1. Observations are independent
    2. Your data are normally distributed
    3. Variances are equal across groups
       - Can be modified to cope with unequal σ²

+ Non-parametric Statistics?
  - Non-parametric statistics do not assume any underlying distribution
  - They compute the likelihood that your groups are the same or different by comparing the ranks of subjects across the range of scores.

+ Non-parametric Statistics
  - Assumptions of non-parametric statistics:
    1. Observations are independent

+ Non-parametric Statistics?
  - Non-parametric statistics do not assume any underlying distribution
  - Not estimating or modeling this distribution reduces their power to detect effects…
  - So don’t use them unless you have to

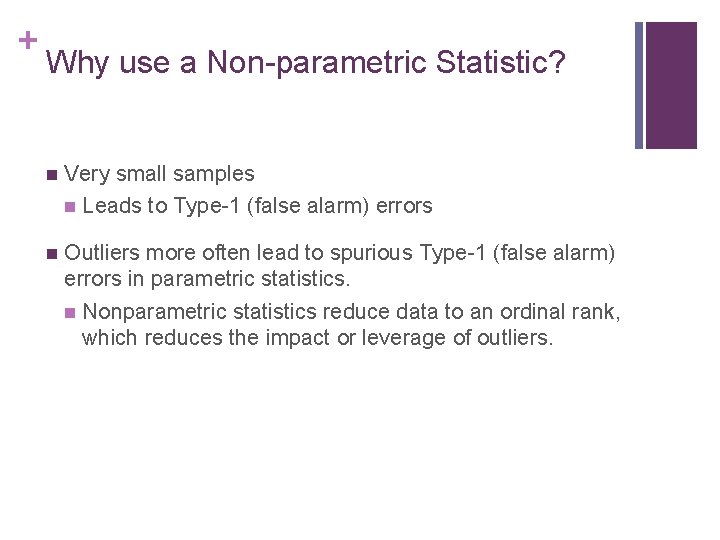

+ Why use a Non-parametric Statistic?
  - Very small samples
    - Lead to Type-1 (false alarm) errors
  - Outliers more often lead to spurious Type-1 (false alarm) errors in parametric statistics.
  - Nonparametric statistics reduce data to an ordinal rank, which reduces the impact or leverage of outliers.

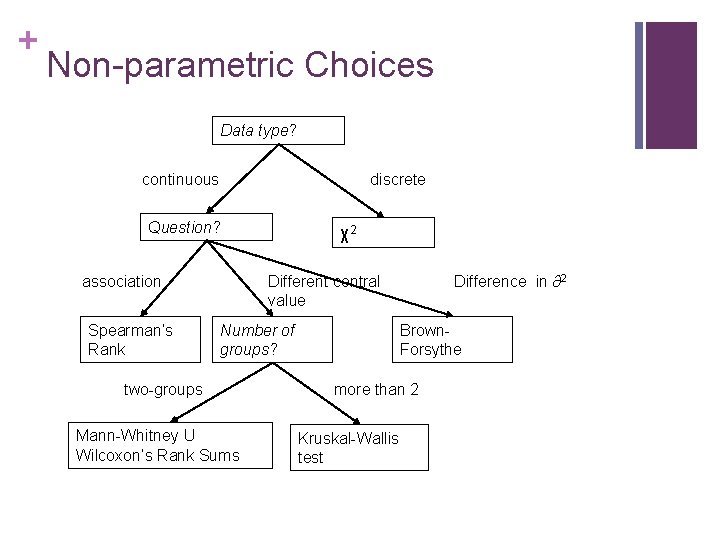

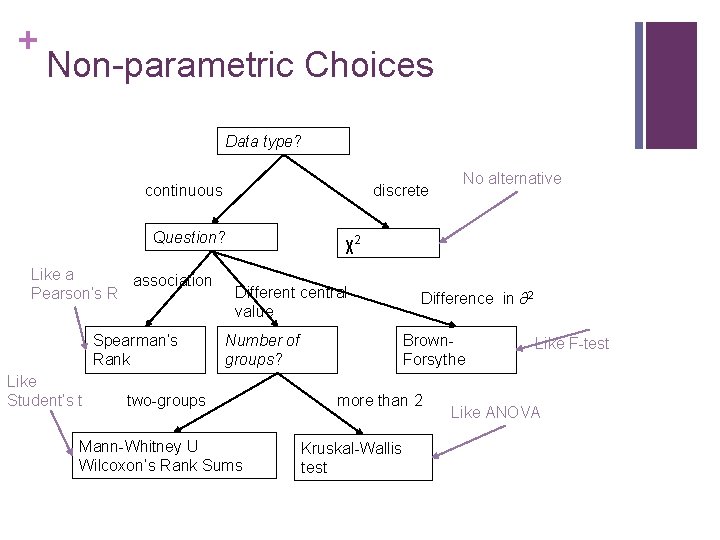

+ Non-parametric Choices
  - Data type? Continuous or discrete
  - Question?
    - Association: Spearman’s Rank (continuous); χ² (discrete)
    - Different central value: number of groups?
      - Two groups: Mann-Whitney U / Wilcoxon’s Rank Sums
      - More than 2: Kruskal-Wallis test
    - Difference in σ²: Brown-Forsythe

+ Non-parametric Choices

| Question | Non-parametric test | Like (parametric) |
|---|---|---|
| Association, continuous data | Spearman’s Rank | Pearson’s r |
| Association, discrete data | χ² | No alternative |
| Different central value, two groups | Mann-Whitney U / Wilcoxon’s Rank Sums | Student’s t |
| Different central value, more than 2 groups | Kruskal-Wallis test | ANOVA |
| Difference in σ² | Brown-Forsythe | F-test |

+ Binomial test

      binom.test(45, 100, .5, alternative = "two.sided")

      number of successes = 45, number of trials = 100, p-value = 0.3682
      alternative hypothesis: true probability of success is not equal to 0.5
      95 percent confidence interval: 0.350 0.5527
      sample estimates: probability of success 0.45

      binom.test(51, 235, (1/6), alternative = "greater")

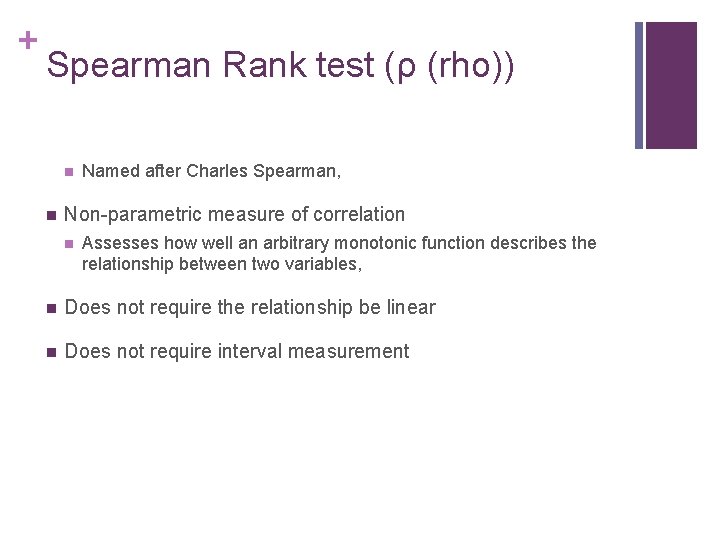

+ Spearman Rank test (ρ, rho)
  - Named after Charles Spearman
  - Non-parametric measure of correlation
  - Assesses how well an arbitrary monotonic function describes the relationship between two variables
  - Does not require the relationship to be linear
  - Does not require interval measurement

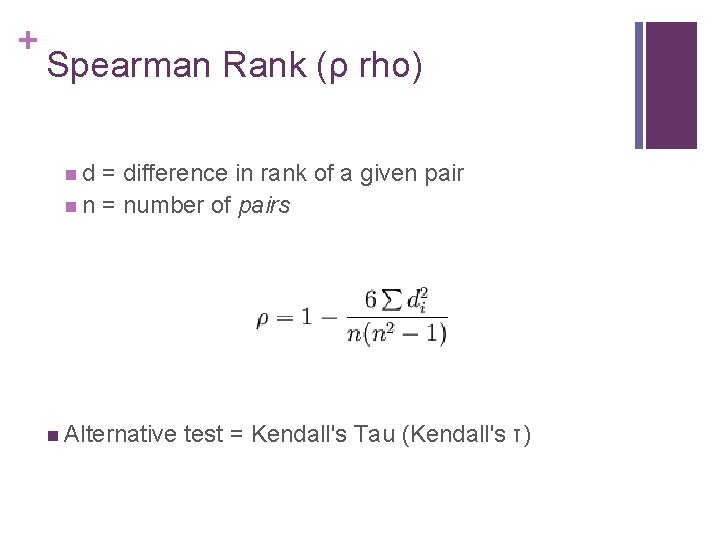

+ Spearman Rank (ρ, rho)
  - d = difference in rank of a given pair
  - n = number of pairs
  - Alternative test = Kendall's Tau (Kendall's τ)
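
The formula on this slide did not survive extraction. Assuming it is the standard no-ties form, ρ = 1 − 6·Σd² / (n(n² − 1)), the sketch below applies it to a small made-up data set and checks it against R's built-in Spearman correlation.

```r
# Sketch: Spearman's rho from rank differences (valid when there are no ties).
x <- c(10, 20, 30, 40, 50, 60)   # hypothetical scores on variable 1
y <- c(12, 25, 21, 48, 43, 70)   # hypothetical scores on variable 2

d <- rank(x) - rank(y)           # difference in rank for each pair
n <- length(x)                   # number of pairs

rho_formula <- 1 - 6 * sum(d^2) / (n * (n^2 - 1))
rho_builtin <- cor(x, y, method = "spearman")

c(rho_formula, rho_builtin)      # both equal 1 - 24/210
```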

+ Mann-Whitney U
  - AKA the “Wilcoxon rank-sum test”
    - Mann & Whitney, 1947; Wilcoxon, 1945
  - Non-parametric test for a difference in the medians of two independent samples
  - Assumptions:
    - Samples are independent
    - Observations can be ranked (ordinal or better)

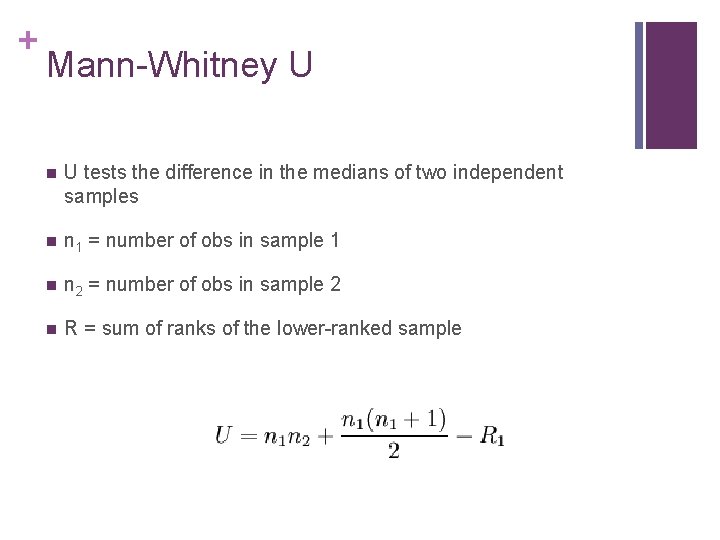

+ Mann-Whitney U
  - U tests the difference in the medians of two independent samples
  - n1 = number of obs in sample 1
  - n2 = number of obs in sample 2
  - R = sum of ranks of the lower-ranked sample

+ Mann-Whitney U or t?
  - Should you use it over the t-test?
    - Yes, if you have a very small sample (<20)
      - (central limit assumptions not met)
    - Yes, if your data are really ordinal
    - Otherwise, probably not.
  - It is less prone to type-I error (spurious significance) due to outliers.
  - But it does not in fact handle comparisons of samples whose variances differ very well
    - (Use unequal variance t-test with rank data)

+ Wilcoxon signed-rank test (related samples)
  - Same idea as Mann-Whitney U, generalized to matched samples
  - Non-parametric counterpart of the non-independent (paired) sample t-test

+ Kruskal-Wallis
  - Non-parametric one-way analysis of variance by ranks (named after William Kruskal and W. Allen Wallis)
  - Tests equality of medians across groups.
  - It is an extension of the Mann-Whitney U test to 3 or more groups.
  - Does not assume a normal population
  - Assumes population variances among groups are equal.

+ Aesop: Mann-Whitney U Example
  - Suppose that Aesop is dissatisfied with his classic experiment in which one tortoise was found to beat one hare in a race.
  - He decides to carry out a significance test to discover whether the results could be extended to tortoises and hares in general…

+ Aesop 2: Mann-Whitney U
  - He collects a sample of 6 tortoises and 6 hares, and makes them all run his race. The order in which they reach the finishing post (their rank order) is as follows:
    - tort = c(1, 7, 8, 9, 10, 11)
    - hare = c(2, 3, 4, 5, 6, 12)
  - Original tortoise still goes at warp speed, original hare is still lazy, but the others run truer to stereotype.

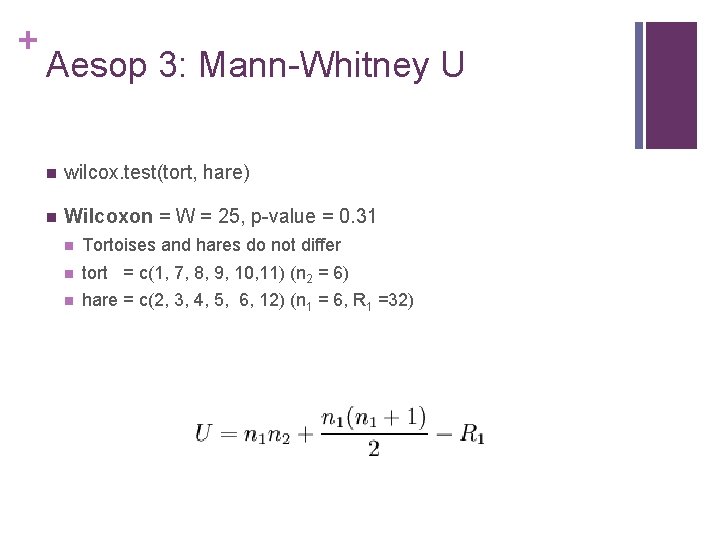

+ Aesop 3: Mann-Whitney U
  - wilcox.test(tort, hare)
  - Wilcoxon W = 25, p-value = 0.31
  - Tortoises and hares do not differ significantly
  - tort = c(1, 7, 8, 9, 10, 11) (n2 = 6)
  - hare = c(2, 3, 4, 5, 6, 12) (n1 = 6, R1 = 32)
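
The statistic can be reproduced by hand from the rank sums. The slide's own U formula is not recoverable from the text, so this sketch assumes the standard form U = R − n(n + 1)/2 for each sample; the object names are illustrative.

```r
# Sketch: Mann-Whitney U for the Aesop data, by hand and with wilcox.test().
tort <- c(1, 7, 8, 9, 10, 11)    # finishing positions of the tortoises
hare <- c(2, 3, 4, 5, 6, 12)     # finishing positions of the hares

n_tort <- length(tort)
n_hare <- length(hare)

ranks  <- rank(c(tort, hare))              # ranks in the pooled sample
R_tort <- sum(ranks[seq_len(n_tort)])      # rank sum for tortoises: 46
R_hare <- sum(ranks[-seq_len(n_tort)])     # rank sum for hares: 32

U_tort <- R_tort - n_tort * (n_tort + 1) / 2   # 25, the W that R reports
U_hare <- R_hare - n_hare * (n_hare + 1) / 2   # 11

result <- wilcox.test(tort, hare)          # exact test (small n, no ties)
result$statistic                           # W = 25
result$p.value                             # about 0.31
```

The two U values always sum to n1 × n2 (here 36), so either one carries the same information.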
