Reconstructing the Future: Capacity Planning with data that’s gone “Troppo”


- Slides: 43

Reconstructing the Future: Capacity Planning with data that’s gone “Troppo”

Steve Jenkin (Info Tech), Neil Gunther (Performance Dynamics)

Overview

- Background
- Process: acquire data, investigate, analyse/model
  - The detail
  - Sifting through the lumps
  - Data analysis and modeling
- Summary

Aims

- Using relic data, we wanted to write up the analysis in performance-modeling terms.
- We believed the techniques that made the project succeed would be useful to designers and practitioners.

Background

- ATO project: one of many hosted sites
  - 12-18 month project
  - Replacements I & II followed
- “Complex Environment”
  - System Diagram
  - Software Contractor
- Our bad days
  - Runaway failure in late February
  - Anzac Day: security pinhole

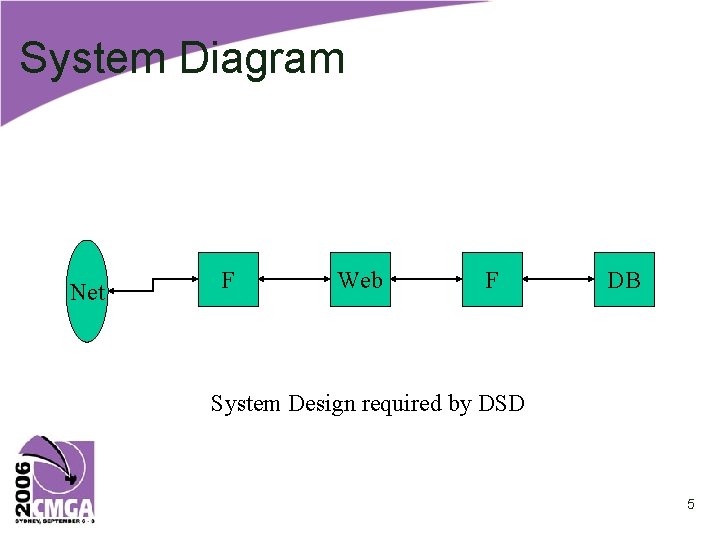

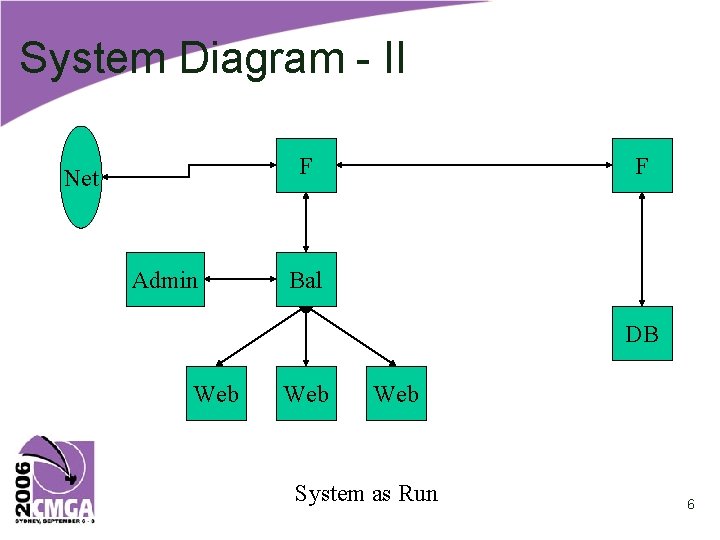

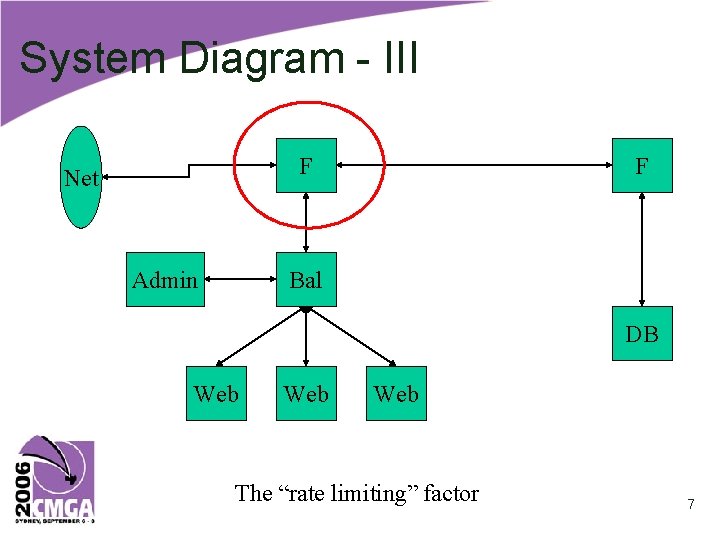

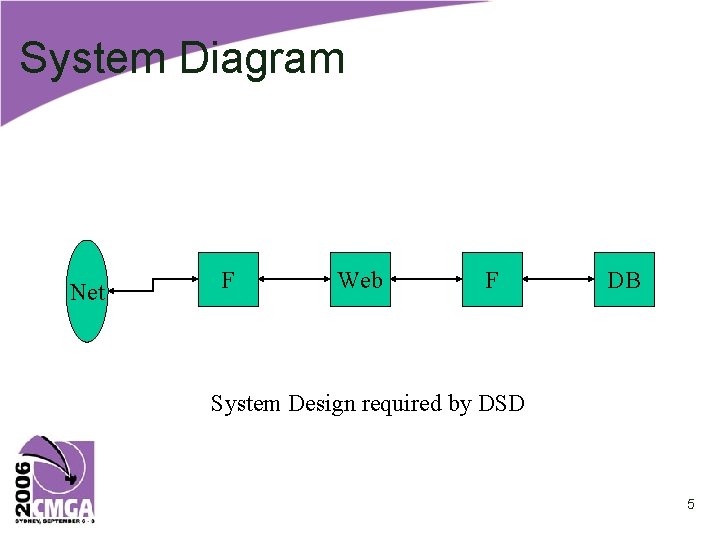

System Diagram

System Design required by DSD (diagram labels: Net, F, Web, F, DB)

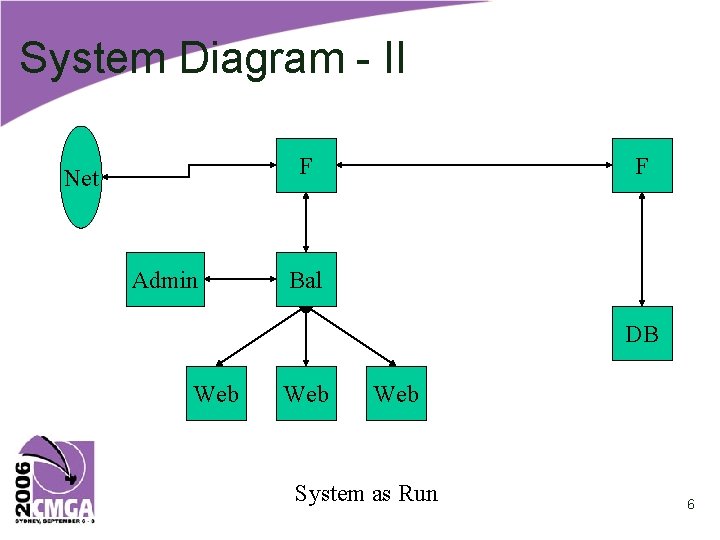

System Diagram - II

System as Run (diagram labels: F, Net, Admin, F, Bal, DB, Web, Web)

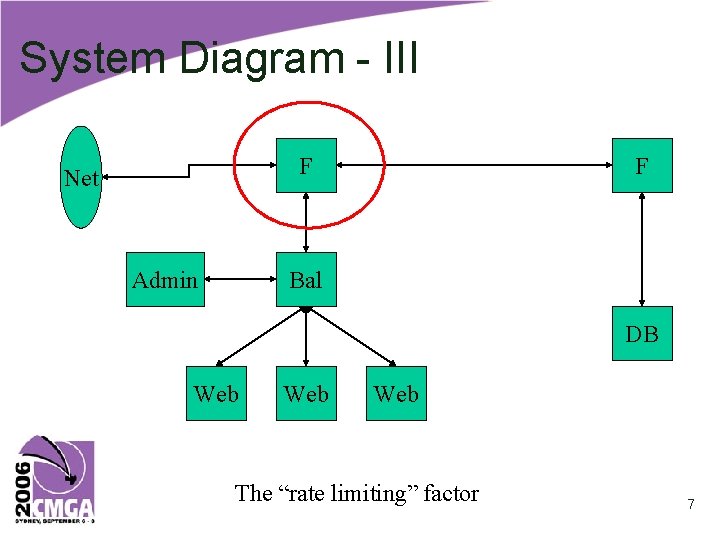

System Diagram - III

The “rate limiting” factor (diagram labels: F, Net, Admin, F, Bal, DB, Web, Web)

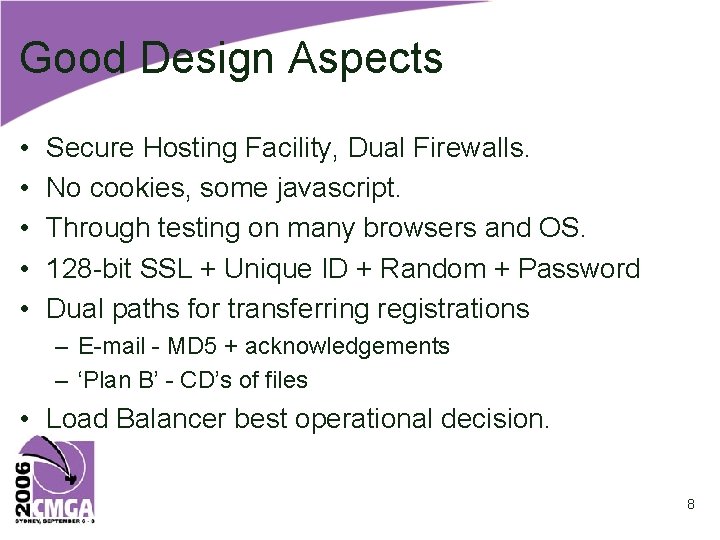

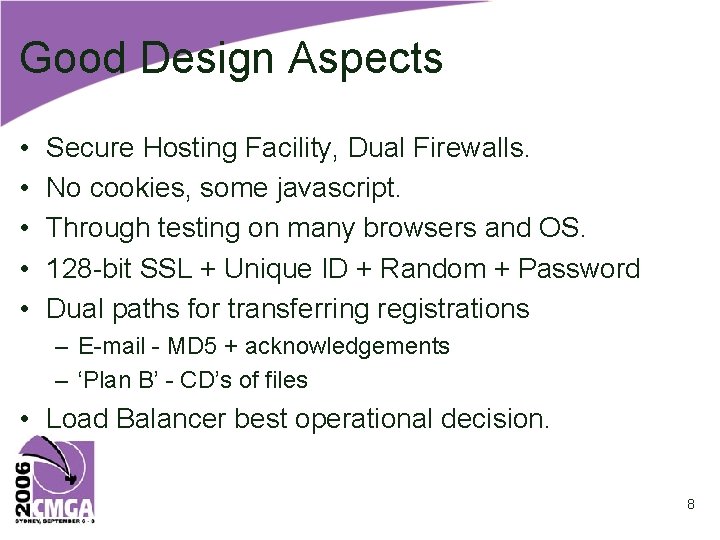

Good Design Aspects

- Secure Hosting Facility, dual firewalls.
- No cookies, some JavaScript.
- Thorough testing on many browsers and OSes.
- 128-bit SSL + unique ID + random + password
- Dual paths for transferring registrations:
  - E-mail with MD5 + acknowledgements
  - ‘Plan B’: CDs of files
- The load balancer was the best operational decision.

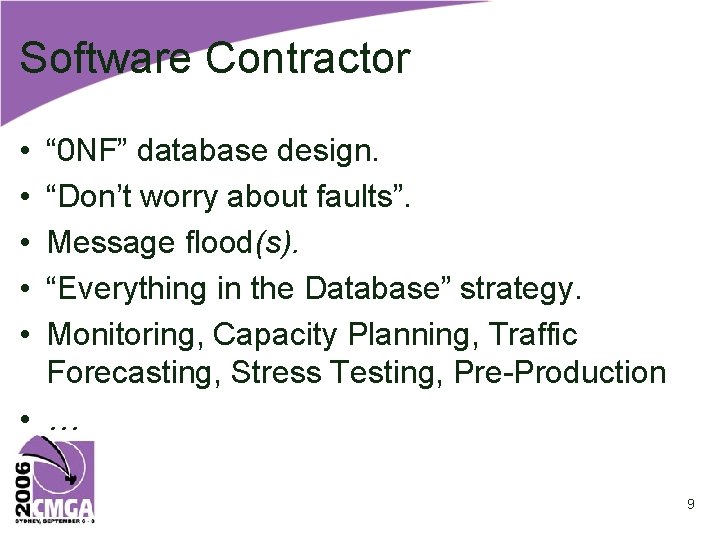

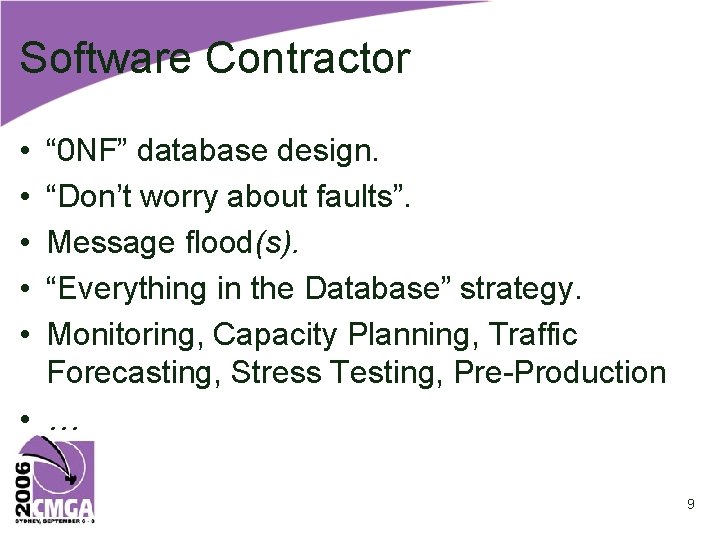

Software Contractor

- “0NF” database design.
- “Don’t worry about faults”.
- Message flood(s).
- “Everything in the Database” strategy.
- Monitoring, Capacity Planning, Traffic Forecasting, Stress Testing, Pre-Production
- …

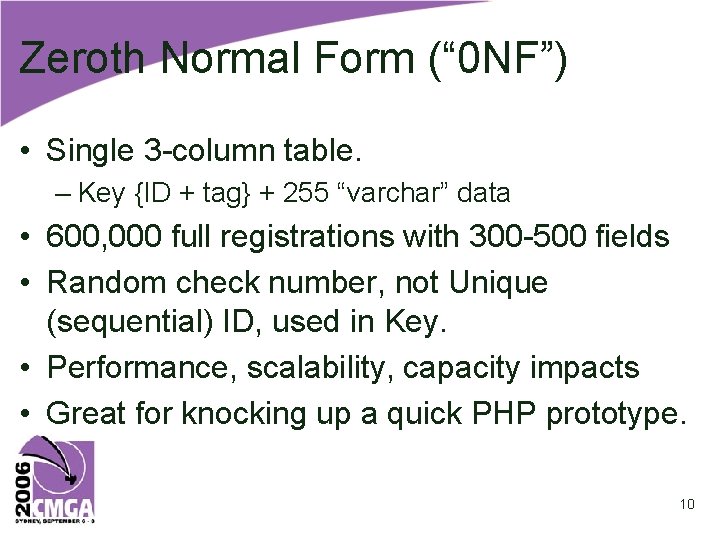

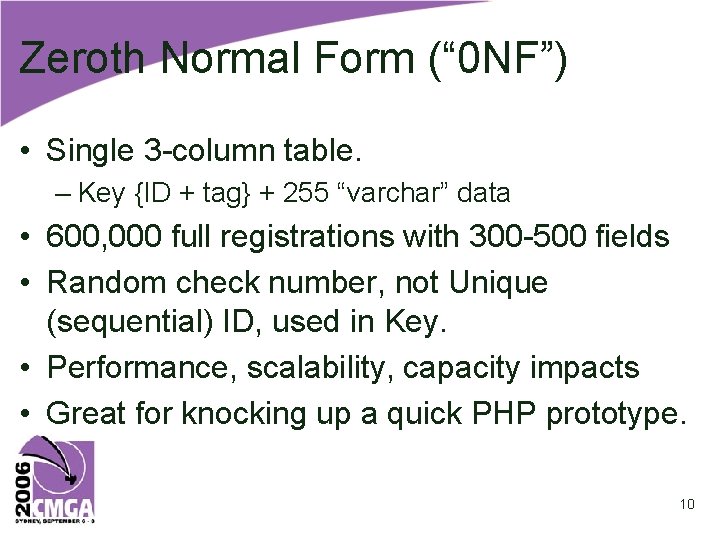

Zeroth Normal Form (“0NF”)

- Single 3-column table.
  - Key {ID + tag} + 255-character “varchar” data
- 600,000 full registrations with 300-500 fields each
- A random check number, not the unique (sequential) ID, was used in the key.
- Performance, scalability and capacity impacts
- Great for knocking up a quick PHP prototype.

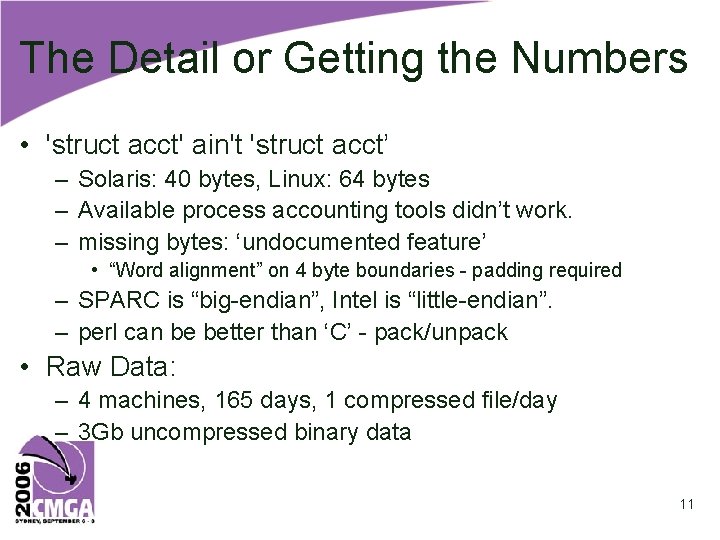

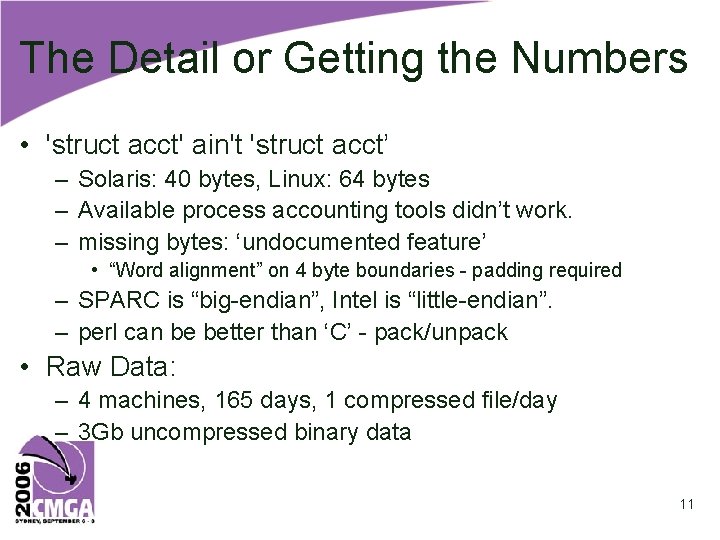

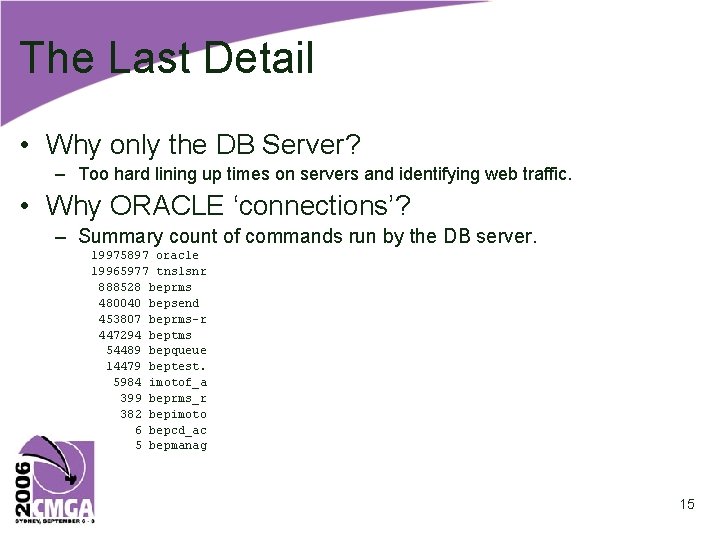

The Detail, or Getting the Numbers

- ‘struct acct’ ain’t ‘struct acct’
  - Solaris: 40 bytes, Linux: 64 bytes
  - Available process accounting tools didn’t work.
  - Missing bytes: an ‘undocumented feature’
- “Word alignment” on 4-byte boundaries: padding required
  - SPARC is “big-endian”, Intel is “little-endian”.
  - Perl can be better than C here: pack/unpack
- Raw data:
  - 4 machines, 165 days, 1 compressed file/day
  - 3 GB of uncompressed binary data

![struct acct - Solaris](https://slidetodoc.com/presentation_image_h2/f2e315aca9c6eb17ae91d4969fd17458/image-12.jpg)

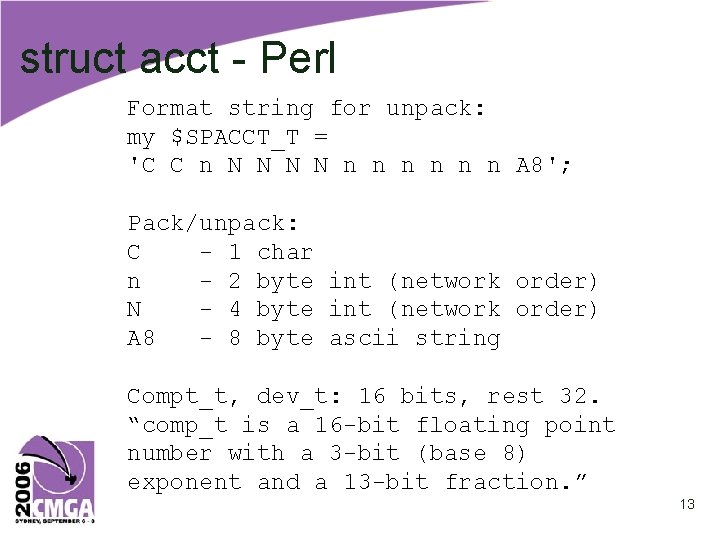

struct acct - Solaris

```c
struct acct {
    char    ac_flag;     /* Accounting flag */
    char    ac_stat;     /* Exit status */
    char    ac_pad[2];   /* PADDING */
    uid_t   ac_uid;      /* Accounting user ID */
    gid_t   ac_gid;      /* Accounting group ID */
    dev_t   ac_tty;      /* control tty */
    time_t  ac_btime;    /* Beginning time */
    comp_t  ac_utime;    /* accounting user time in clock ticks */
    comp_t  ac_stime;    /* accounting system time in clock ticks */
    comp_t  ac_etime;    /* accounting total elapsed time in clock ticks */
    comp_t  ac_mem;      /* memory usage in clicks (pages) */
    comp_t  ac_io;       /* chars transferred by read/write */
    comp_t  ac_rw;       /* number of block reads/writes */
    char    ac_comm[8];  /* command name */
};
```

`sizeof` is 40 bytes, but 1 + 1 + 2 + (2 × 4) + 2 + 4 + (6 × 2) + 8 = 38. Huh?

- How long is a ‘clock tick’?
- What’s a ‘comp_t’, ‘uid_t’, …?

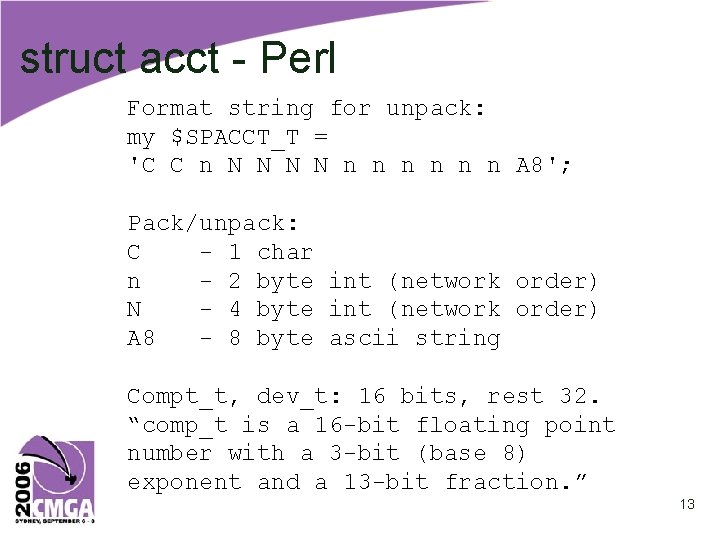

struct acct - Perl

- Format string for unpack: `my $SPACCT_T = 'C C n N N n n N n n n n n n A8';`
  - The second `n` after `ac_tty` reads the 2 bytes of alignment padding.
- Pack/unpack codes:
  - `C`: 1-byte char
  - `n`: 2-byte int (network order)
  - `N`: 4-byte int (network order)
  - `A8`: 8-byte ASCII string
- `comp_t`, `dev_t`: 16 bits; the rest are 32.
- “comp_t is a 16-bit floating point number with a 3-bit (base 8) exponent and a 13-bit fraction.”

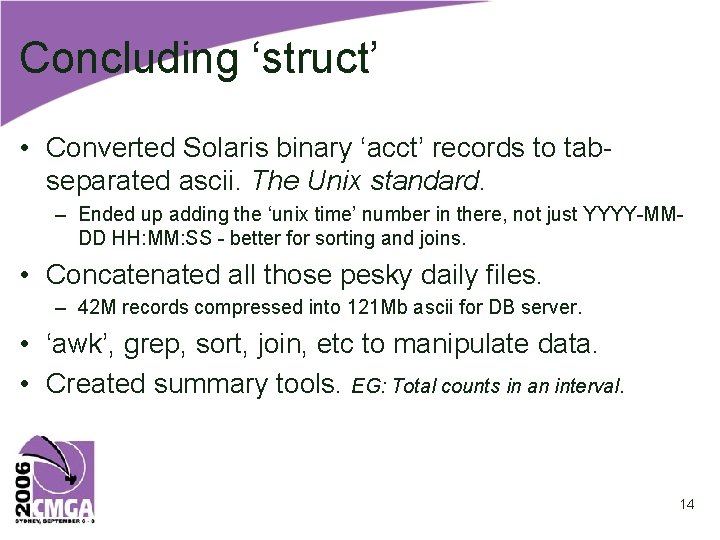

Concluding ‘struct’

- Converted Solaris binary ‘acct’ records to tab-separated ASCII, the Unix standard.
  - Ended up adding the ‘unix time’ number as well as YYYY-MM-DD HH:MM:SS: better for sorting and joins.
- Concatenated all those pesky daily files.
  - 42M records for the DB server, compressed into 121 MB of ASCII.
- awk, grep, sort, join, etc. to manipulate the data.
- Created summary tools, e.g. total counts in an interval.

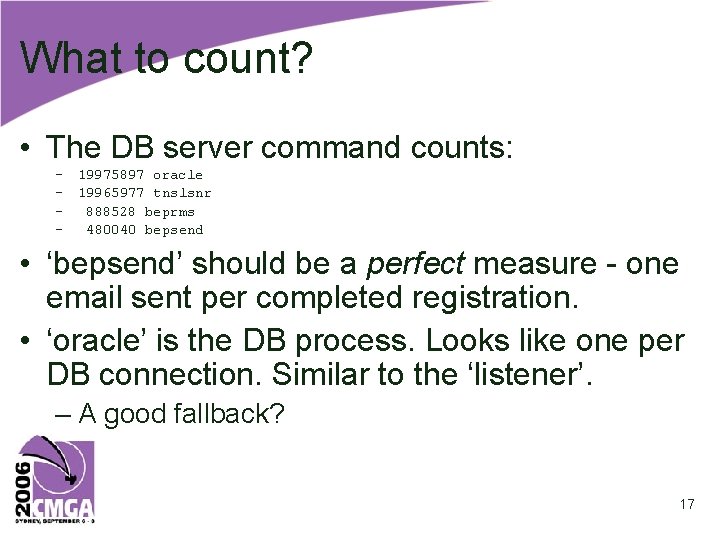

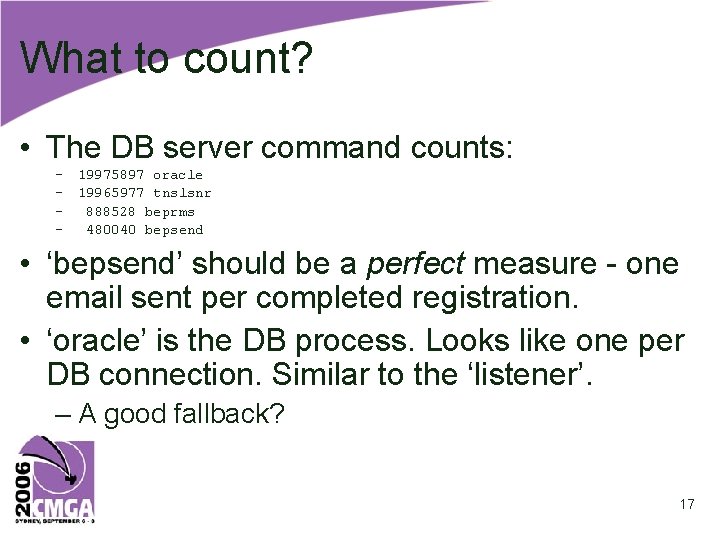

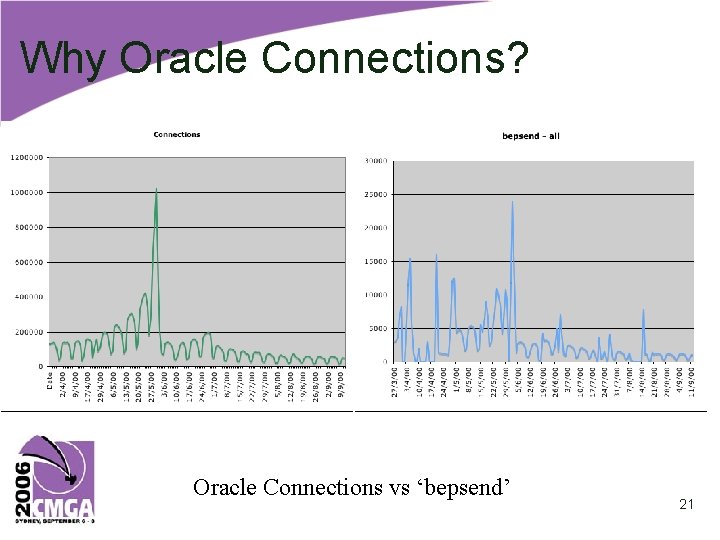

The Last Detail

- Why only the DB server?
  - It was too hard to line up times across servers and identify web traffic.
- Why Oracle ‘connections’?
  - Summary count of commands run by the DB server:

| Count | Command |
|---:|---|
| 19975897 | oracle |
| 19965977 | tnslsnr |
| 888528 | beprms |
| 480040 | bepsend |
| 453807 | beprms-r |
| 447294 | beptms |
| 54489 | bepqueue |
| 14479 | beptest |
| 5984 | imotof_a |
| 399 | beprms_r |
| 382 | bepimoto |
| 6 | bepcd_ac |
| 5 | bepmanag |

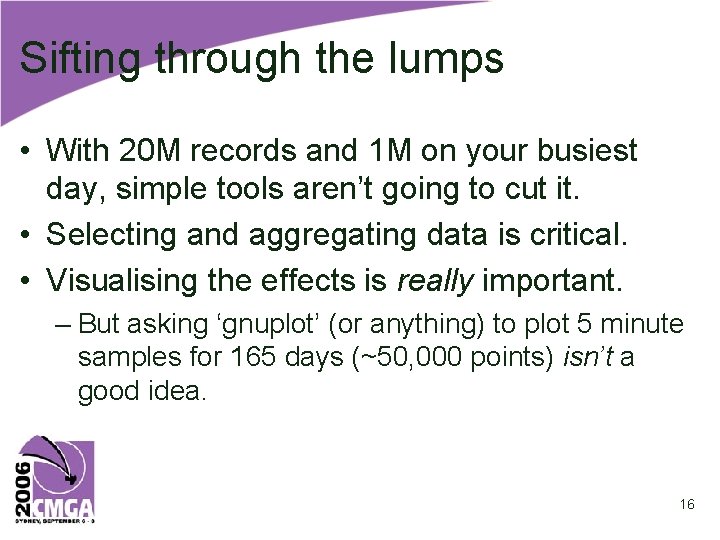

Sifting through the lumps

- With 20M records, and 1M on your busiest day, simple tools aren’t going to cut it.
- Selecting and aggregating data is critical.
- Visualising the effects is really important.
  - But asking ‘gnuplot’ (or anything) to plot 5-minute samples for 165 days (~50,000 points) isn’t a good idea.

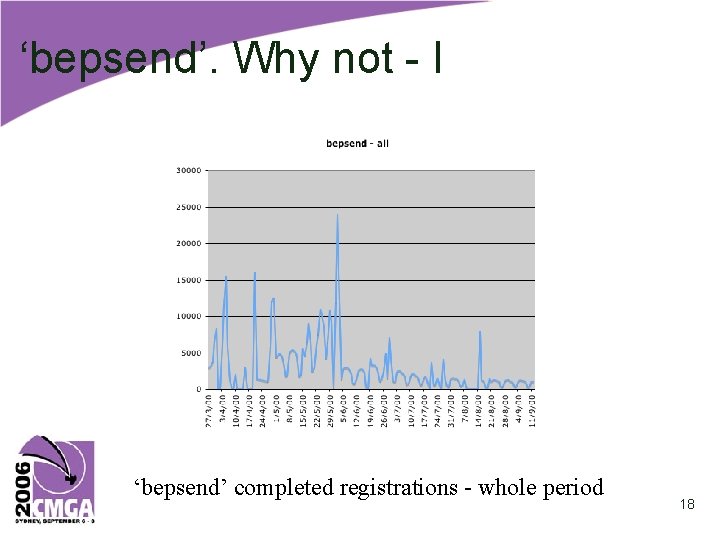

What to count?

- The DB server command counts:
  - 19975897 oracle
  - 19965977 tnslsnr
  - 888528 beprms
  - 480040 bepsend
- ‘bepsend’ should be a perfect measure: one e-mail sent per completed registration.
- ‘oracle’ is the DB process. It looks like one runs per DB connection, similar to the listener (‘tnslsnr’).
  - A good fallback?

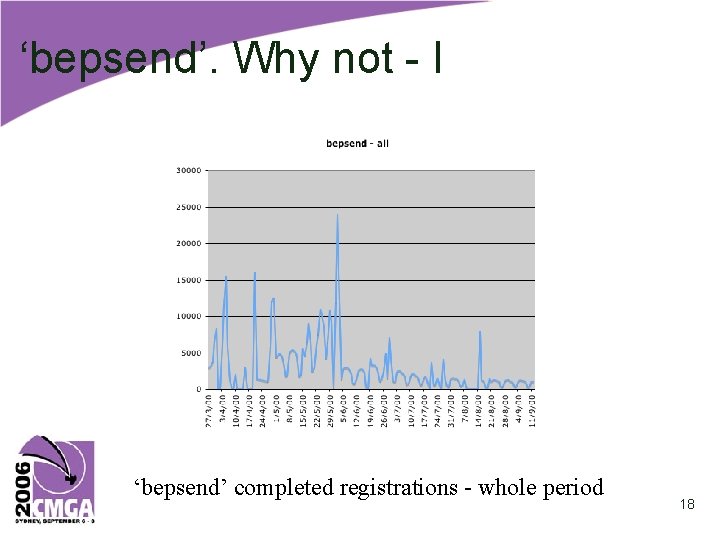

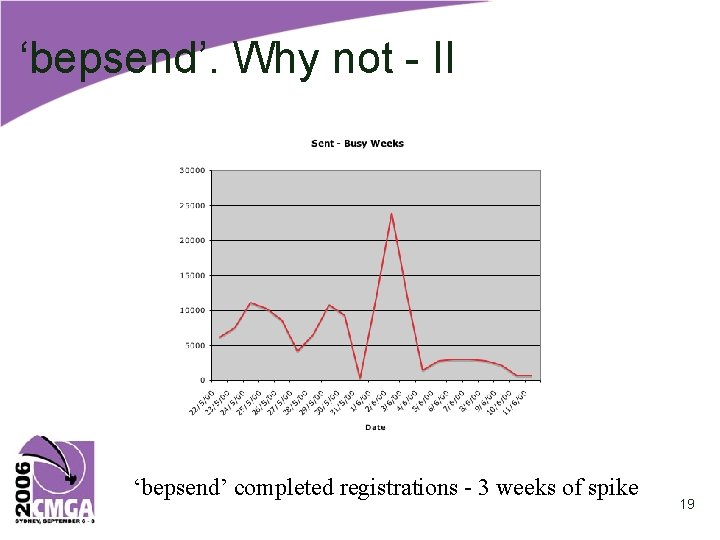

‘bepsend’. Why not - I

‘bepsend’ completed registrations: whole period

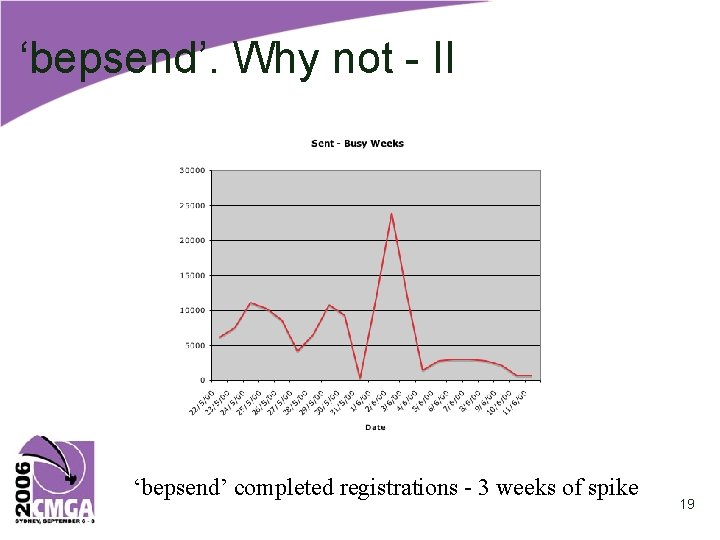

‘bepsend’. Why not - II

‘bepsend’ completed registrations: 3 weeks of the spike

‘bepsend’. Why not - III

- E-mails were not always sent.
- There were floods after ‘stoppages’.
- The rate was capped, first at 600/hr, then at 1000/hr.
- ‘bepsend’ was turned off for the busiest day.
  - We spent the next 3 days clearing the backlog.
- Close, but no cigar.

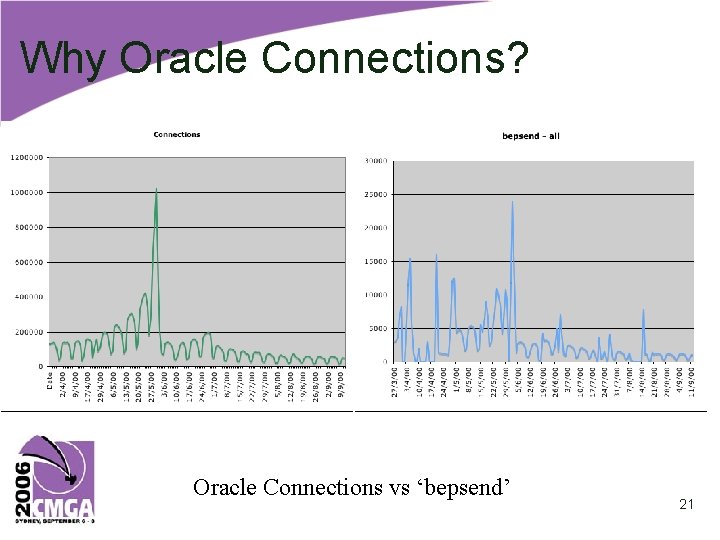

Why Oracle Connections?

Oracle connections vs ‘bepsend’

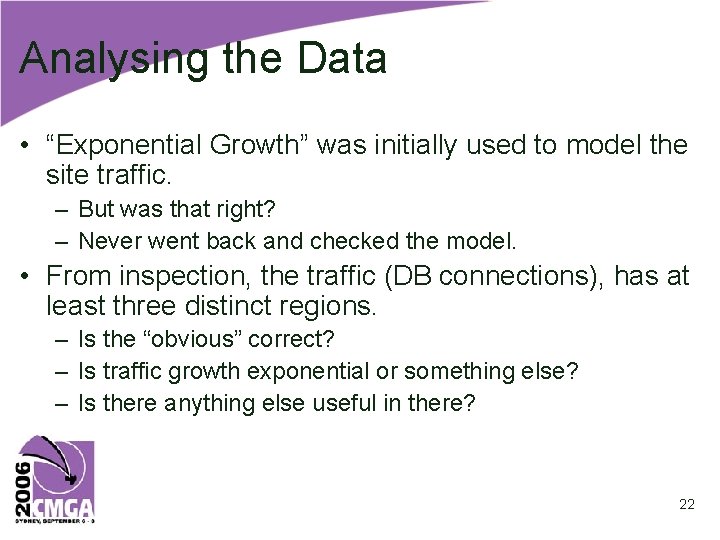

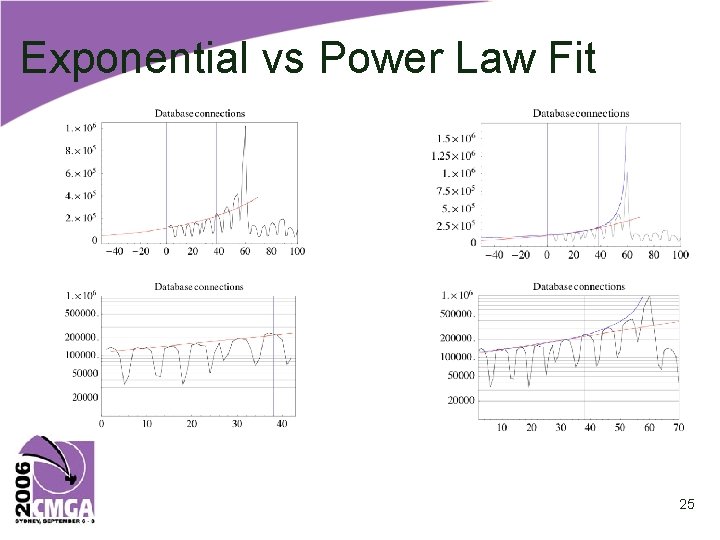

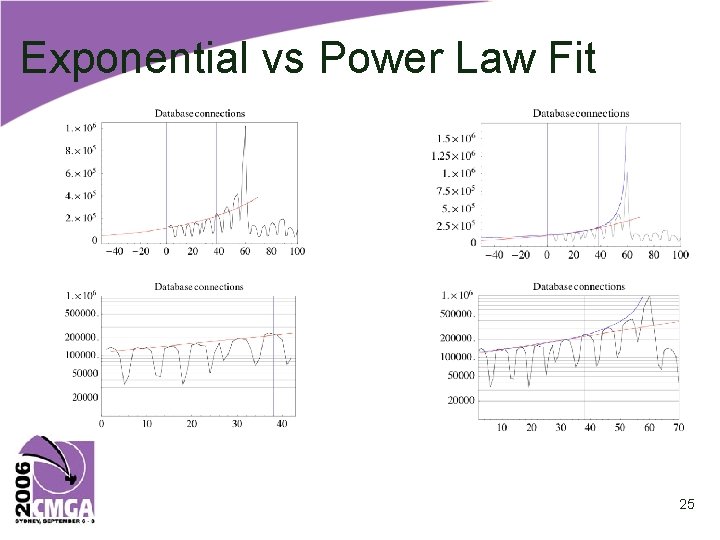

Analysing the Data

- “Exponential growth” was initially used to model the site traffic.
  - But was that right?
  - We never went back and checked the model.
- From inspection, the traffic (DB connections) has at least three distinct regions.
  - Is the “obvious” correct?
  - Is traffic growth exponential or something else?
  - Is there anything else useful in there?

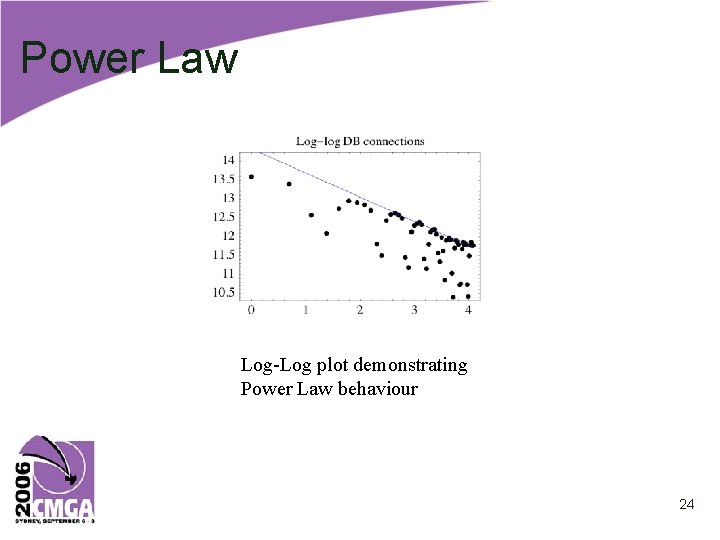

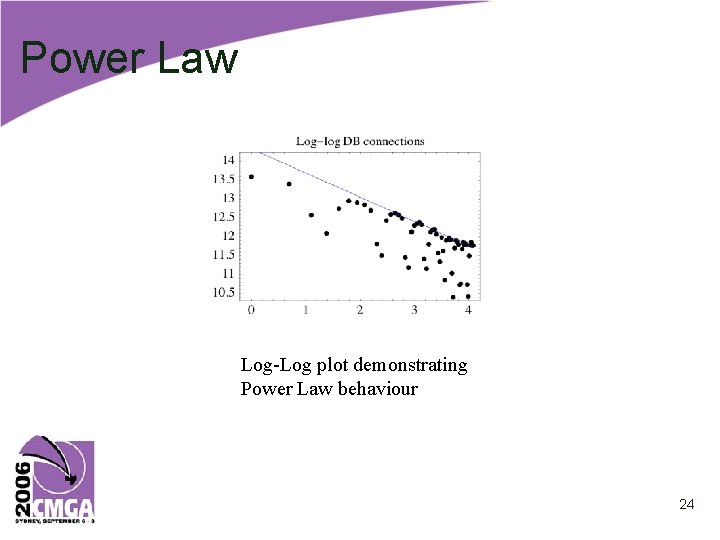

Mathematica Marvels

- Reconstructing the original exponential model
  - Doubling period ~6 weeks
  - But significantly low around the spike
- Enter the power law
  - “Highly correlated behaviour” as a cause
  - “The system is primarily doing just one thing for a longer than average time” [Gun 06]

Power Law

Log-log plot demonstrating power-law behaviour

Exponential vs Power Law Fit

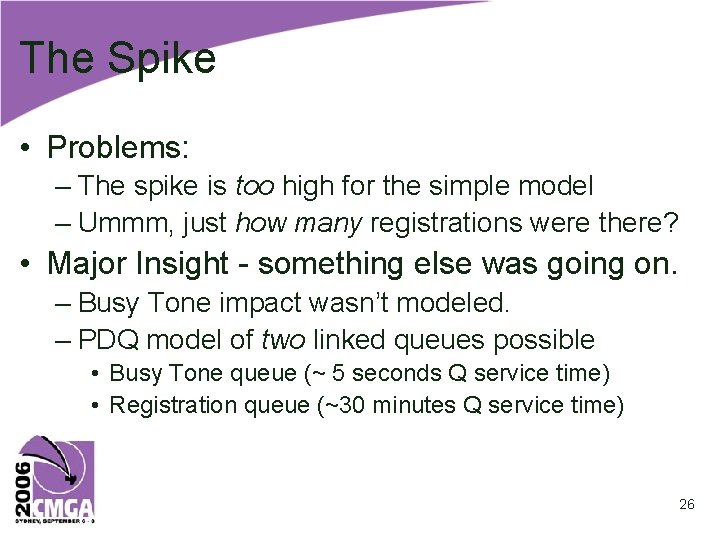

The Spike

- Problems:
  - The spike is too high for the simple model.
  - Ummm, just how many registrations were there?
- Major insight: something else was going on.
  - The Busy Tone impact wasn’t modeled.
  - A PDQ model of two linked queues is possible:
    - Busy Tone queue (~5 s service time)
    - Registration queue (~30 min service time)

Other time-series models

- Simpoint
- Irregular Sampling
- Holt-Winters, for seasonal (repeating) data
- From the paper:
  - Neil Gunther’s expert area.
  - The other techniques are appropriate when many chunks of data are missing.
  - That wasn’t the problem here, but we know what to do in those cases.

Getting Expert help

- With my trusty Excel and modest arsenal of techniques, surprising results were unlikely.
- Having someone to talk the problems through was invaluable.
- Especially when they have much better maths, a bunch of powerful tools and lots of experience using them.

Runaway Failures

- As web server response slows, users ‘click’ again and again. CGIs have to run to completion, but more keep being started.
  - “Positive feedback” rather than negative/self-limiting feedback.
- System demand increases precisely because response is slow, and response is slow because of high system load.
- Correlation of load and demand, as noted earlier, leads to super-exponential growth.
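One standard way to see why correlated load and demand can outrun an exponential uses an illustrative model chosen here, not the one fitted in the paper. A constant per-capita growth rate $k$ gives exponential growth. If the growth rate itself rises with load, say as $kN^{\epsilon}$ for some assumed $\epsilon > 0$, the solution diverges in finite time:

$$
\frac{dN}{dt} = kN \;\Rightarrow\; N(t) = N_0\, e^{kt}
\qquad\text{vs.}\qquad
\frac{dN}{dt} = kN^{1+\epsilon} \;\Rightarrow\; N(t) = N_0 \left(1 - \frac{t}{t^{*}}\right)^{-1/\epsilon},
\quad t^{*} = \frac{1}{\epsilon k N_0^{\epsilon}}
$$

Separating variables gives $N^{-\epsilon} = N_0^{-\epsilon} - \epsilon k t$, which reaches zero at $t^{*}$. Close to $t^{*}$, $N$ grows as a power law in $(t^{*} - t)$.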

Busy Tone

- A response to a system ‘meltdown’ caused by a sudden increase in demand when the site was first advertised.
- Load average rose from ~1 to 150 during the meltdown.
- Response time was not measured: “off the chart”.
- Busy Tone (BT) implementation weakness: DB access.
- BT consumed a large fraction of the system on Busy Day.
  - The system averaged 1250-1500 registrations/hr.
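Little's law ($N = XR$) gives a rough sense of scale. Suppose the ~30-minute registration time from The Spike slide is taken as the mean time a user spends in the system. This is an approximation, since the slide calls it a service time. On that assumption, the Busy Day throughput implies several hundred registrations in progress at once:

$$
N = X R \approx 1250\ \text{hr}^{-1} \times 0.5\ \text{hr} = 625
\quad\text{to}\quad
1500\ \text{hr}^{-1} \times 0.5\ \text{hr} = 750
$$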

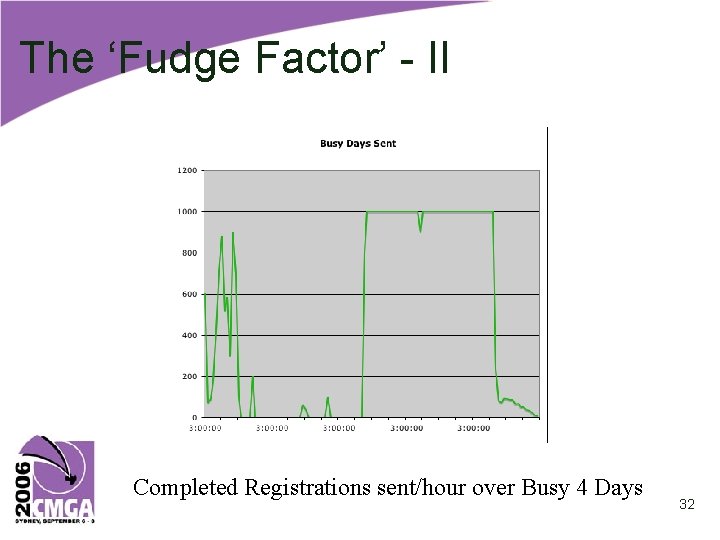

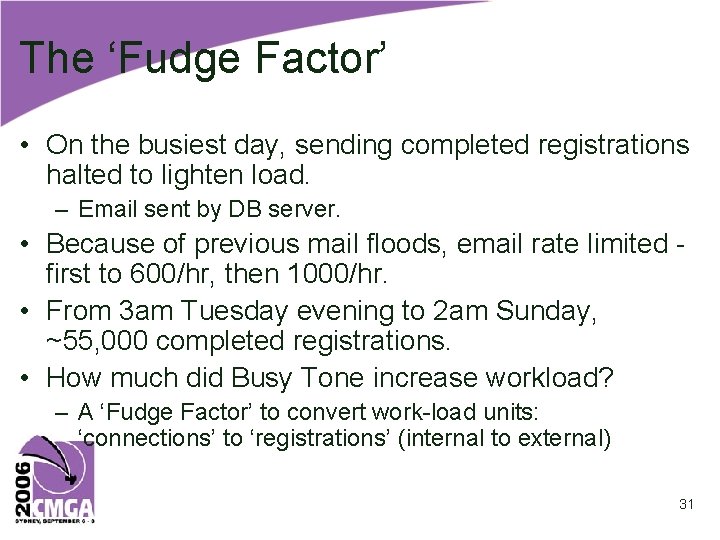

The ‘Fudge Factor’

- On the busiest day, sending completed registrations was halted to lighten the load.
  - E-mail was sent by the DB server.
- Because of previous mail floods, the e-mail rate was limited, first to 600/hr, then to 1000/hr.
- From 3 am Tuesday to 2 am Sunday, ~55,000 registrations were completed.
- How much did Busy Tone increase the workload?
  - A ‘Fudge Factor’ converts workload units: ‘connections’ to ‘registrations’ (internal to external).

The ‘Fudge Factor’ - II

Completed registrations sent/hour over the busy 4 days

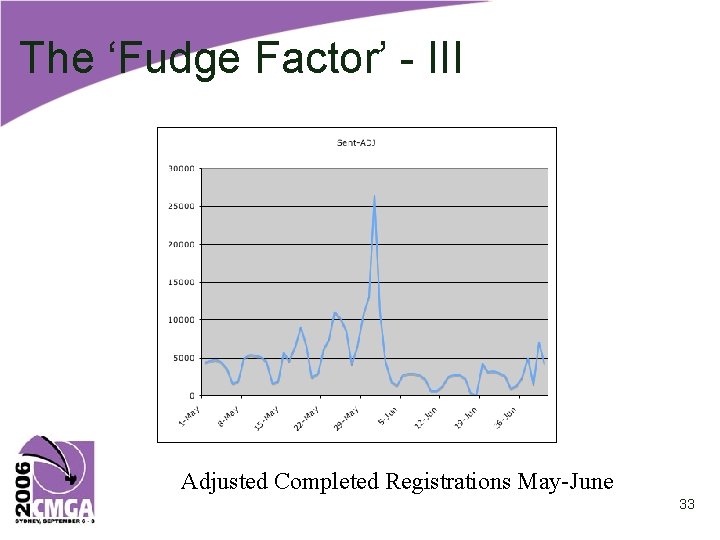

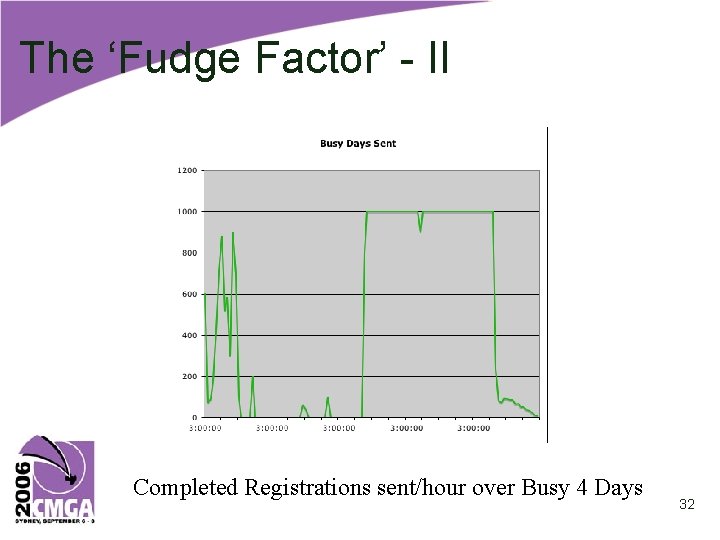

The ‘Fudge Factor’ - III

Adjusted completed registrations, May-June

The ‘Fudge Factor’ - IV

- For the whole period:
  - 19,965,976 connections and 480,076 registrations: a ratio of 41.5
- For the ‘busiest 4 days’ (3 am Tue 30/5 to 2 am Sun 04/06):
  - 2,517,219 Oracle connections
  - 54,245 registrations completed (12.5% of total)
  - 46.4 connections/registration
- Rough effect of Busy Day:
  - ~55,000 registrations at the ‘average rate’ = 2,256,006 connections, an excess of 261,213 connections.
  - 10% for the busy 4 days.
  - 20% if all on the day of the spike.
  - Averaged 12-13 ‘retries’ for all users.

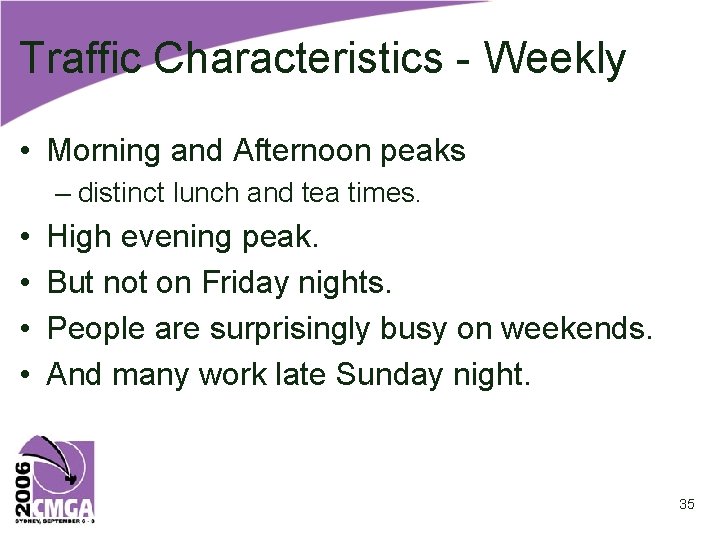

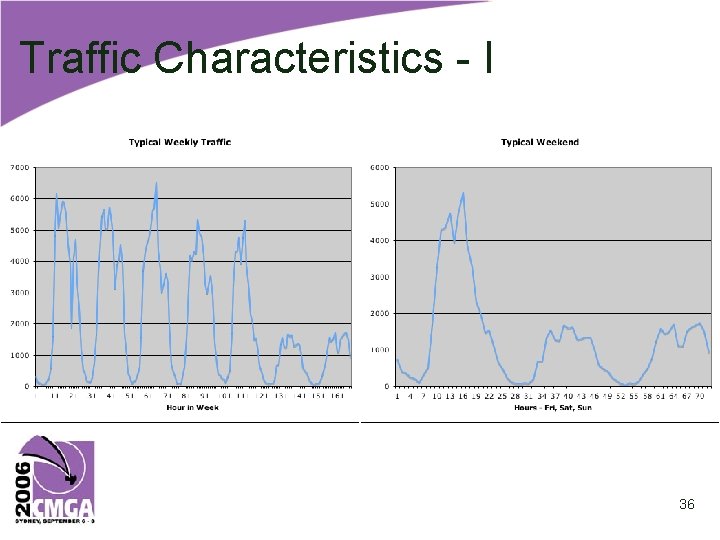

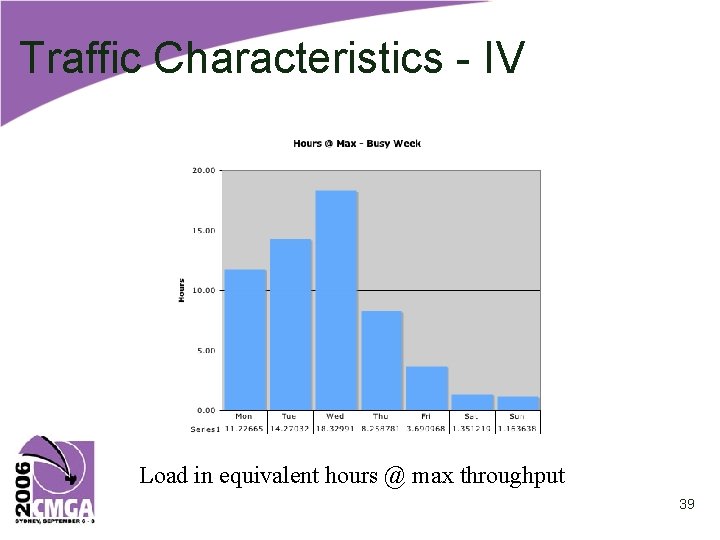

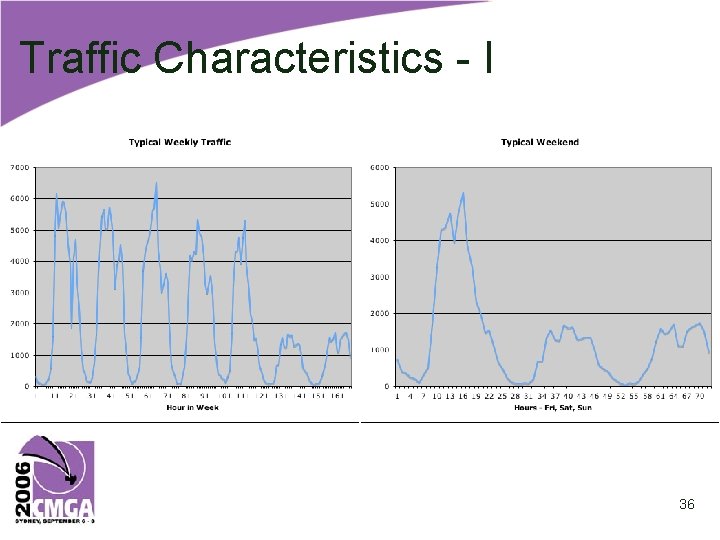

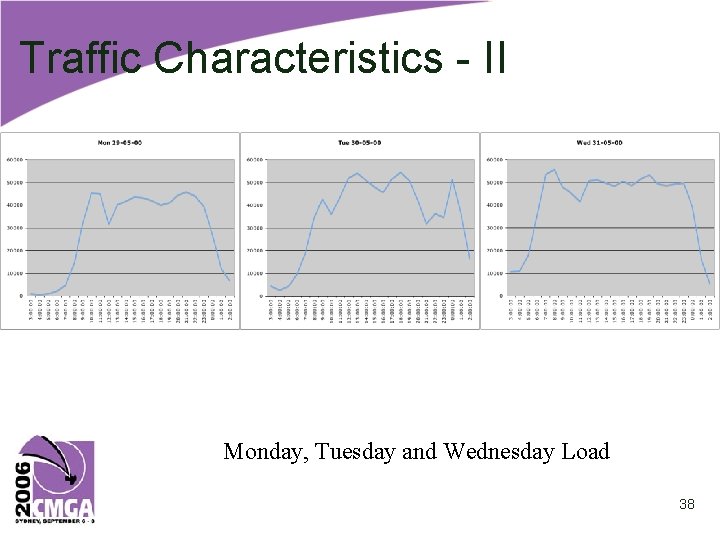

Traffic Characteristics - Weekly

- Morning and afternoon peaks
  - Distinct lunch and tea times.
- High evening peak, but not on Friday nights.
- People are surprisingly busy on weekends, and many work late on Sunday night.

Traffic Characteristics - I

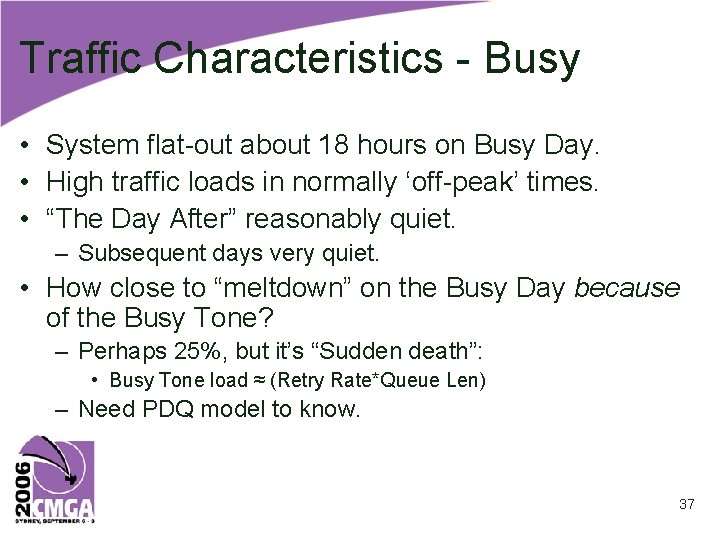

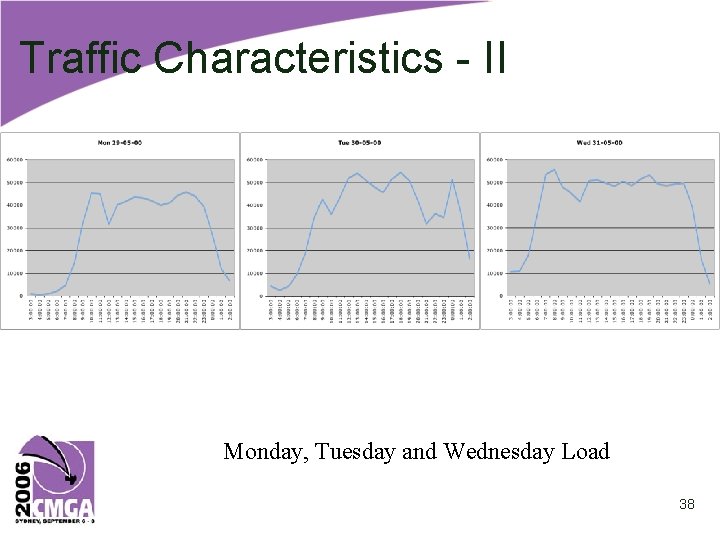

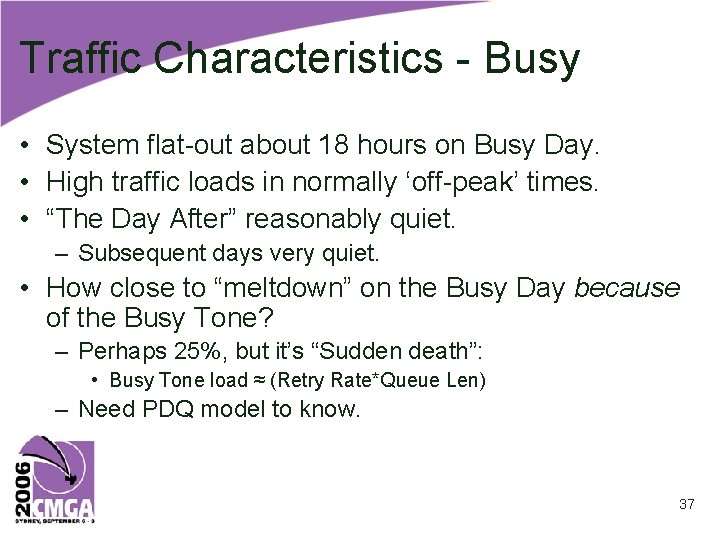

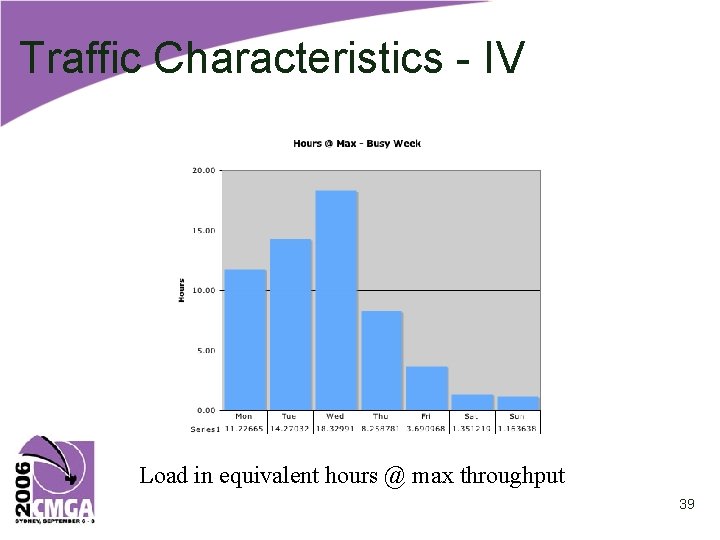

Traffic Characteristics - Busy

- The system ran flat-out for about 18 hours on Busy Day.
- High traffic loads at normally ‘off-peak’ times.
- “The Day After” was reasonably quiet.
  - Subsequent days were very quiet.
- How close to “meltdown” was the system on Busy Day because of the Busy Tone?
  - Perhaps 25%, but it’s “sudden death”:
    - Busy Tone load ≈ retry rate × queue length
  - We need a PDQ model to know.

Traffic Characteristics - II

Monday, Tuesday and Wednesday load

Traffic Characteristics - IV

Load in equivalent hours at maximum throughput

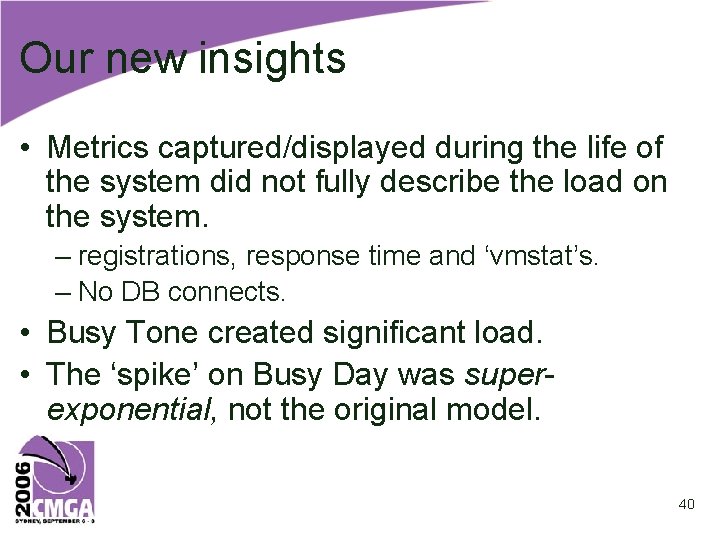

Our new insights

- Metrics captured/displayed during the life of the system did not fully describe its load.
  - Registrations, response time and ‘vmstat’ output were tracked.
  - DB connections were not.
- Busy Tone created significant load.
- The ‘spike’ on Busy Day was super-exponential, not exponential as the original model assumed.

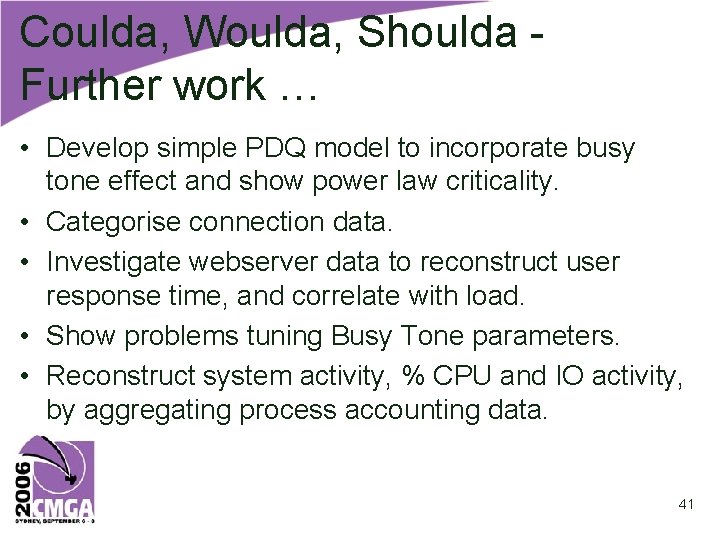

Coulda, Woulda, Shoulda: Further work …

- Develop a simple PDQ model to incorporate the Busy Tone effect and show power-law criticality.
- Categorise the connection data.
- Investigate web server data to reconstruct user response time, and correlate it with load.
- Show the problems of tuning Busy Tone parameters.
- Reconstruct system activity (% CPU and I/O) by aggregating process accounting data.

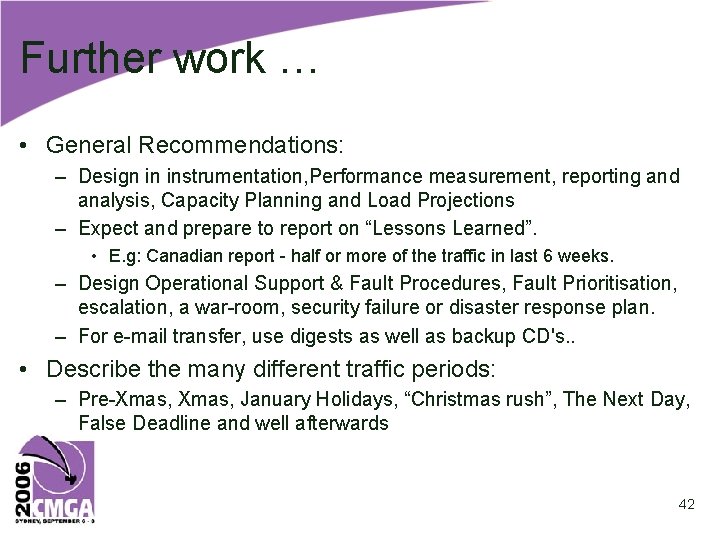

Further work …

- General recommendations:
  - Design in instrumentation, performance measurement, reporting and analysis, capacity planning and load projections.
  - Expect and prepare to report on “Lessons Learned”.
    - E.g. the Canadian report: half or more of the traffic in the last 6 weeks.
  - Design operational support and fault procedures: fault prioritisation, escalation, a war-room, and a security-failure or disaster-response plan.
  - For e-mail transfer, use digests as well as backup CDs.
- Describe the many different traffic periods:
  - Pre-Xmas, January holidays, “Christmas rush”, The Next Day, False Deadline and well afterwards

Summary

- We banged on some different system data and were able to verify some important system effects.
- And learnt a few new things along the way:
  - About the system.
  - About Busy Tone and more runaway failures.
  - About handling and processing large datasets.
  - About performance analysis and modeling.