Recognition of biological cells: the beginning of study

Marcin Skoczylas
Bordeaux, February 2006

- Since 1990: participating in various computer programming competitions
- 1998 – 2003: Technical University, Computer Science (Politechnika Białostocka, Poland)
- 2003 – 2005: Movie and Television Academy, director diploma (hobby)

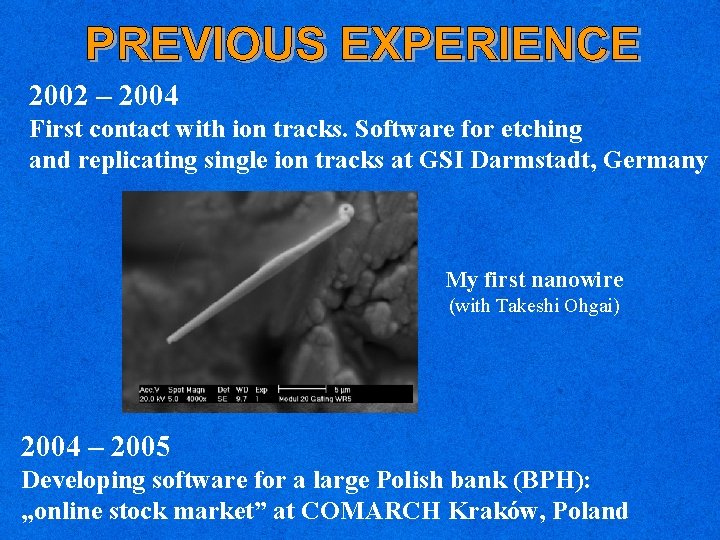

- 2002 – 2004: First contact with ion tracks. Software for etching and replicating single ion tracks at GSI Darmstadt, Germany. My first nanowire (with Takeshi Ohgai)
- 2004 – 2005: Developing software for a large Polish bank (BPH): "online stock market" at COMARCH, Kraków, Poland

Development:
- Create software for automatic cell positioning
- Resolve typical recognition problems such as overlapping cells or cells with shape variations
- Build fast algorithms

Study:
- Image processing techniques
- Neural networks
- Parallel programming

Advanced image processing can be time consuming:
- Increase processing speed by CPU clustering (parallel programming)
- Use neural networks for recognition and classification

Note: Neural networks are common practice in optical character recognition (OCR), stained-cell recognition, and fingerprint recognition.

- 1892: Santiago Ramón y Cajal determined that the nervous system is built from discrete neurons. They communicate by sending electrical signals along axons, which touch the dendrites of other neurons and transmit the signal through synapses.
- 1943: McCulloch and Pitts proposed the first computational model of a neuron. Its output was 0 or 1.
- 1949: Donald Olding Hebb proposed a learning rule: the strength of a synaptic connection increases with the repeated activation of one neuron by another.

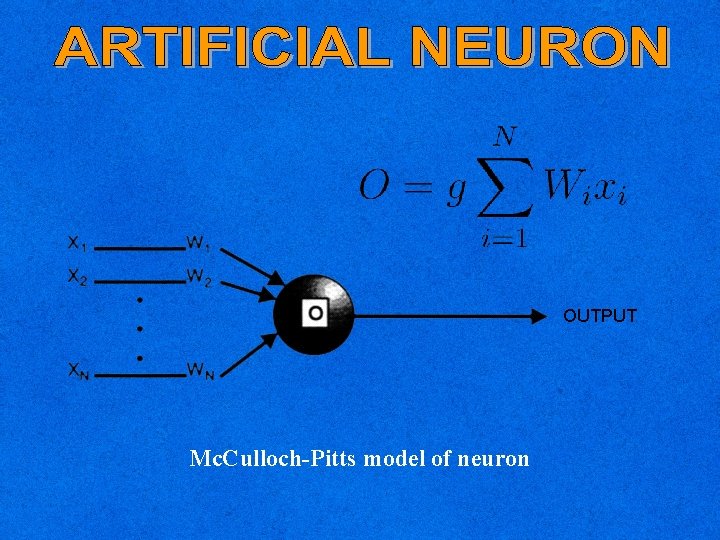

McCulloch-Pitts model of a neuron (figure label: OUTPUT)
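A neuron with a 0-or-1 output, as in the McCulloch-Pitts model, can be sketched in a few lines of Java. This is only an illustration: the class name `ThresholdNeuron`, the weights and the threshold are assumptions, and the weighted sum is the common textbook form of the model rather than anything taken from the slides.

```java
// Minimal sketch of a threshold neuron with a binary output.
// Class name, weights and threshold are illustrative assumptions.
final class ThresholdNeuron {
    private final double[] weights;
    private final double threshold;

    ThresholdNeuron(double[] weights, double threshold) {
        this.weights = weights.clone();
        this.threshold = threshold;
    }

    /** Returns 1 if the weighted sum of the inputs reaches the threshold, otherwise 0. */
    int fire(int... inputs) {
        if (inputs.length != weights.length) {
            throw new IllegalArgumentException("expected " + weights.length + " inputs");
        }
        double sum = 0.0;
        for (int i = 0; i < inputs.length; i++) {
            sum += weights[i] * inputs[i];
        }
        return sum >= threshold ? 1 : 0;
    }

    public static void main(String[] args) {
        // With weights (1, 1) and threshold 2 the neuron behaves like a logical AND.
        ThresholdNeuron and = new ThresholdNeuron(new double[] {1, 1}, 2);
        System.out.println(and.fire(1, 1));
        System.out.println(and.fire(1, 0));
    }
}
```

With these assumed values the neuron fires only when both inputs are 1. Lowering the threshold to 1 would turn the same neuron into a logical OR.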

- 1962: Frank Rosenblatt introduced the first iterative learning procedure for the single-layer perceptron, revealing great potential.
- 1969: Marvin Minsky and Seymour Papert showed that the single-layer perceptron is very limited: it cannot learn simple functions such as XOR.
- 1982: Hopfield suggested that a neural network can be analyzed in terms of an energy function.
- 1985: Ackley, Hinton and Sejnowski: Boltzmann machine.
- 1986: David Rumelhart: back-propagation learning.

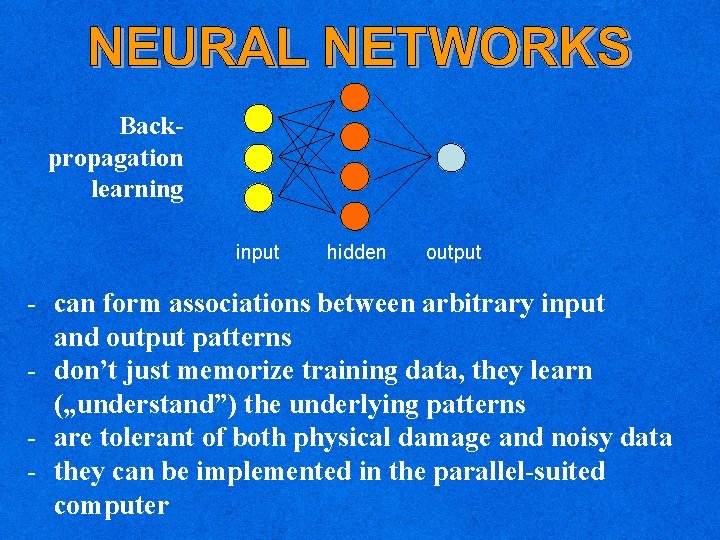

Backpropagation learning (layers: input, hidden, output). Networks trained this way:
- can form associations between arbitrary input and output patterns
- do not just memorize training data; they learn ("understand") the underlying patterns
- are tolerant of both physical damage and noisy data
- can be implemented on parallel computers

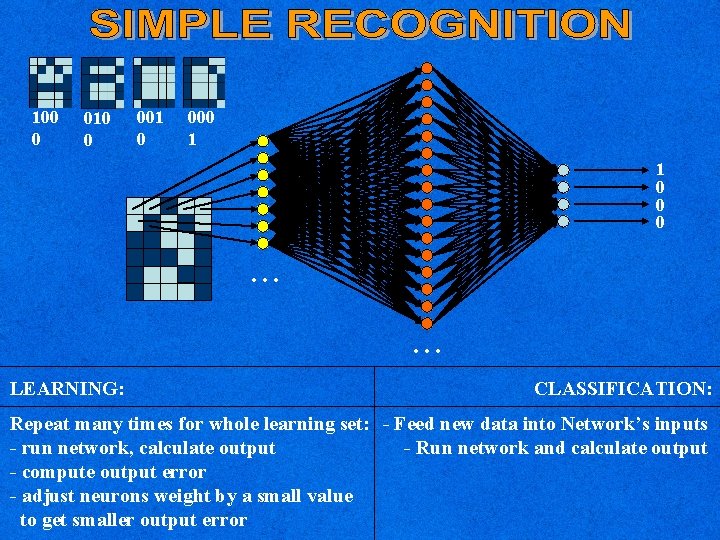

Example patterns (figure): 100 0 010 0 001 0 000 1 1 0 0 0 ...

LEARNING:
Repeat many times for the whole learning set:
- Run the network and calculate the output
- Compute the output error
- Adjust the neuron weights by a small value to get a smaller output error

CLASSIFICATION:
- Feed new data into the network's inputs
- Run the network and calculate the output
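The learning loop above (run, compute the error, adjust the weights slightly, repeat) can be sketched for the simplest case, a single neuron trained with the perceptron rule. This is not backpropagation through a hidden layer, only the same loop in miniature. The class name `PerceptronTrainer`, the learning rate and the OR training set are assumptions made for the example.

```java
// Minimal sketch: one neuron trained with the perceptron learning rule.
// This is a simplification of the slide's learning loop, not full backpropagation.
final class PerceptronTrainer {
    private final double[] weights;
    private double bias;
    private final double learningRate;

    PerceptronTrainer(int inputCount, double learningRate) {
        this.weights = new double[inputCount]; // all weights start at zero
        this.learningRate = learningRate;
    }

    /** Classification: run the neuron and return its 0-or-1 output. */
    int run(double[] input) {
        double sum = bias;
        for (int i = 0; i < weights.length; i++) {
            sum += weights[i] * input[i];
        }
        return sum > 0 ? 1 : 0;
    }

    /** Learning: repeat over the whole set until an epoch has no errors. Returns epochs used. */
    int train(double[][] inputs, int[] targets, int maxEpochs) {
        for (int epoch = 1; epoch <= maxEpochs; epoch++) {
            int errors = 0;
            for (int p = 0; p < inputs.length; p++) {
                int error = targets[p] - run(inputs[p]); // compute output error
                if (error != 0) {
                    errors++;
                    // adjust each weight by a small step that reduces the error
                    for (int i = 0; i < weights.length; i++) {
                        weights[i] += learningRate * error * inputs[p][i];
                    }
                    bias += learningRate * error;
                }
            }
            if (errors == 0) {
                return epoch;
            }
        }
        return maxEpochs;
    }

    public static void main(String[] args) {
        double[][] inputs = {{0, 0}, {0, 1}, {1, 0}, {1, 1}};
        int[] targets = {0, 1, 1, 1}; // logical OR, an assumed toy learning set
        PerceptronTrainer neuron = new PerceptronTrainer(2, 0.1);
        neuron.train(inputs, targets, 100);
        for (double[] input : inputs) {
            System.out.println(neuron.run(input));
        }
    }
}
```

OR is linearly separable, so the loop settles after a few passes. XOR would never converge with a single neuron, which is the limitation Minsky and Papert pointed out and the reason a hidden layer is needed.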

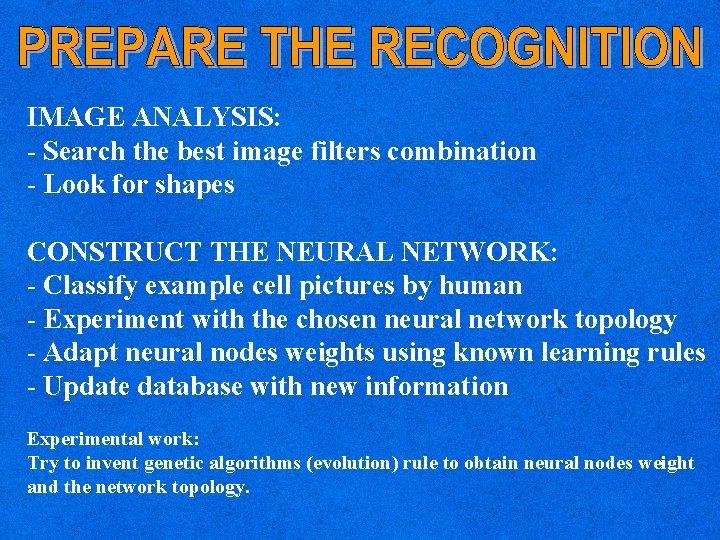

IMAGE ANALYSIS:
- Search for the best combination of image filters
- Look for shapes

CONSTRUCT THE NEURAL NETWORK:
- Have a human classify example cell pictures
- Experiment with the chosen neural network topology
- Adapt the neuron weights using known learning rules
- Update the database with new information

Experimental work: Try to devise a genetic-algorithm (evolutionary) rule to obtain the neuron weights and the network topology.
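As a rough illustration of the "look for shapes" step, the sketch below thresholds a grayscale image and counts 4-connected bright regions with a flood fill. The slides do not name a specific filter or shape-search method, so the fixed threshold, the class name `ShapeFinder` and the pixel values are all assumptions.

```java
import java.util.ArrayDeque;

// Minimal sketch: threshold filter followed by connected-region counting.
// The method choice and all names are illustrative assumptions.
final class ShapeFinder {
    /** Simple filter: marks every pixel brighter than the given level. */
    static boolean[][] threshold(int[][] gray, int level) {
        boolean[][] mask = new boolean[gray.length][];
        for (int r = 0; r < gray.length; r++) {
            mask[r] = new boolean[gray[r].length];
            for (int c = 0; c < gray[r].length; c++) {
                mask[r][c] = gray[r][c] > level;
            }
        }
        return mask;
    }

    /** Counts groups of marked pixels that touch horizontally or vertically. */
    static int countShapes(boolean[][] mask) {
        int rows = mask.length;
        int cols = rows == 0 ? 0 : mask[0].length;
        boolean[][] seen = new boolean[rows][cols];
        int[][] steps = {{1, 0}, {-1, 0}, {0, 1}, {0, -1}};
        int count = 0;
        for (int r = 0; r < rows; r++) {
            for (int c = 0; c < cols; c++) {
                if (!mask[r][c] || seen[r][c]) {
                    continue;
                }
                count++; // found a pixel of a shape not visited yet
                ArrayDeque<int[]> stack = new ArrayDeque<>();
                stack.push(new int[] {r, c});
                seen[r][c] = true;
                while (!stack.isEmpty()) {
                    int[] p = stack.pop();
                    for (int[] s : steps) {
                        int nr = p[0] + s[0];
                        int nc = p[1] + s[1];
                        if (nr >= 0 && nr < rows && nc >= 0 && nc < cols
                                && mask[nr][nc] && !seen[nr][nc]) {
                            seen[nr][nc] = true;
                            stack.push(new int[] {nr, nc});
                        }
                    }
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        int[][] gray = {
            {200, 200, 10, 10, 10},
            {200, 200, 10, 10, 10},
            {10, 10, 10, 180, 10},
            {10, 10, 10, 180, 10}
        };
        System.out.println(countShapes(threshold(gray, 128)));
    }
}
```

Two touching or overlapping cells would be counted as one region here, which is exactly the kind of recognition problem a plain threshold cannot resolve on its own.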

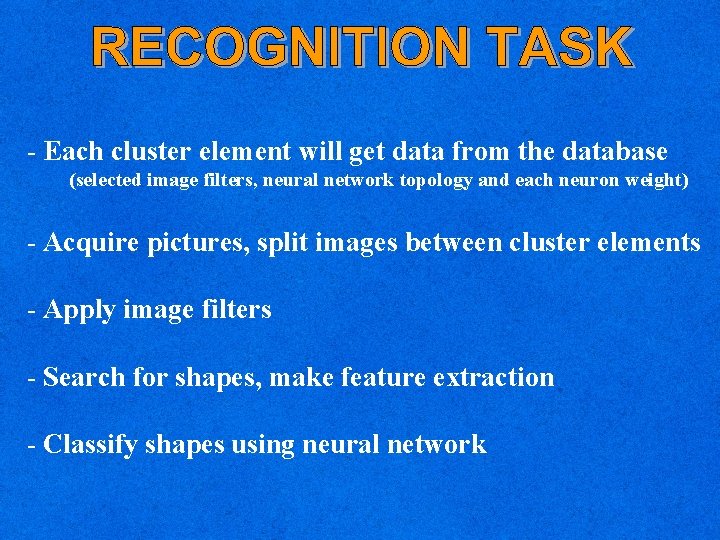

- Each cluster element will get data from the database (selected image filters, neural network topology and each neuron weight)
- Acquire pictures, split images between cluster elements
- Apply image filters
- Search for shapes, perform feature extraction
- Classify shapes using the neural network
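The idea of splitting images between cluster elements can be tried out on a single machine before any network code exists. In the sketch below, worker threads in a fixed pool stand in for cluster elements; it does not use Java RMI. The class name `LocalCluster` and the per-image work (counting bright pixels) are placeholders assumed for the example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Minimal sketch: threads in one JVM stand in for cluster elements.
// All names and the per-image work are illustrative assumptions.
final class LocalCluster {
    /** Placeholder for filter + shape search + classification: counts bright pixels. */
    static int processImage(int[][] gray, int level) {
        int bright = 0;
        for (int[] row : gray) {
            for (int value : row) {
                if (value > level) {
                    bright++;
                }
            }
        }
        return bright;
    }

    /** Hands one task per image to the pool and collects results in input order. */
    static int[] processAll(List<int[][]> images, int level, int workers) {
        ExecutorService pool = Executors.newFixedThreadPool(workers);
        try {
            List<Future<Integer>> pending = new ArrayList<>();
            for (int[][] image : images) {
                Callable<Integer> task = () -> processImage(image, level);
                pending.add(pool.submit(task));
            }
            int[] results = new int[images.size()];
            for (int i = 0; i < results.length; i++) {
                results[i] = pending.get(i).get(); // blocks until that image is done
            }
            return results;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("a worker failed", e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        List<int[][]> images = new ArrayList<>();
        images.add(new int[][] {{200, 10}, {10, 10}});
        images.add(new int[][] {{200, 200}, {200, 10}});
        for (int count : processAll(images, 128, 2)) {
            System.out.println(count);
        }
    }
}
```

Keeping the per-image work in one self-contained method is a deliberate choice: the same method could later sit behind a remote interface on each cluster element without changing the splitting logic.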

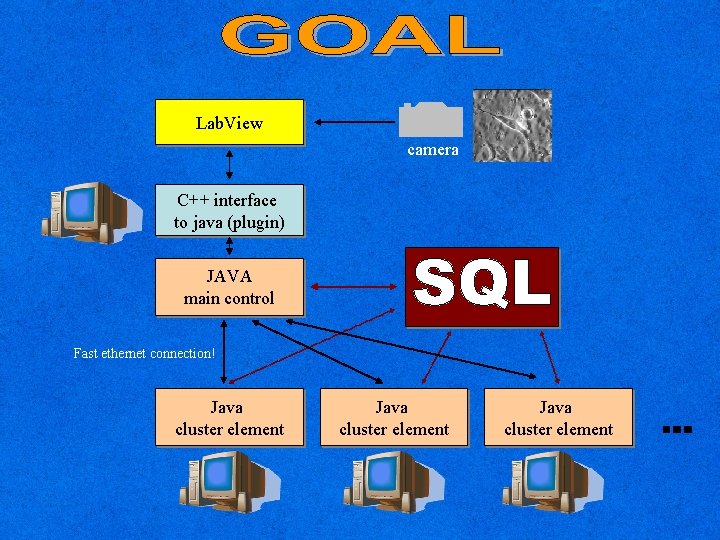

Diagram labels: LabVIEW camera; C++ interface to Java (plugin); Java main control; Fast Ethernet connection; Java cluster element

Tools: Java RMI, Eclipse

mars@demon.pl
http://slajerek.demon.pl

Literature:
- Rafael Gonzalez, Richard Woods: Digital Image Processing
- Robert Pandya, B. Macy: Pattern Recognition with Neural Networks
- Joe Tebelskis: Speech Recognition using Neural Networks, Ph.D. thesis

The image of the neuron comes from www.mhhe.com/biosci/ap/histology_mh/page1.html
