
- Slides: 38

Recap • Low Level Vision – Input: pixel values from the imaging device – Data structure: 2D array, homogeneous – Processing: • 2D neighborhood operations • Histogram-based operations • Image enhancements • Feature extraction – Edges – Regions

Recap • Mid Level Vision – Input: features from low level processing – Data structures: lists, arrays, heterogeneous – Processing: • Pixel/feature grouping operations • Model based operations • Object descriptions – Lines – orientation, location – Regions – central moments – Relationships amongst objects

What’s Left To Do? • High Level Vision – Input: • Symbolic objects from mid level processing • Models of the objects to be recognized (a priori knowledge) – Data structures: lists, arrays, heterogeneous – Processing: • Object correspondence – Local correspondences represent individual object (or component) recognitions – Global correspondences represent recognition of an object in the context of the scene • Search problem – Typified by the “NP-Complete” question

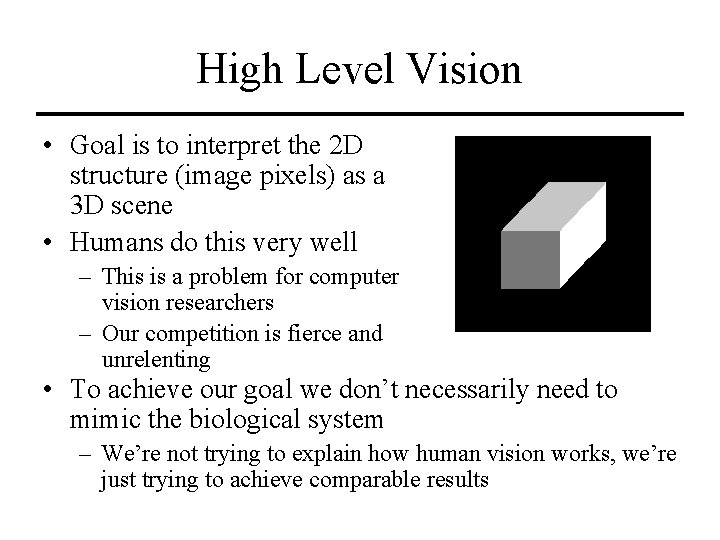

High Level Vision • Goal is to interpret the 2D structure (image pixels) as a 3D scene • Humans do this very well – This is a problem for computer vision researchers – Our competition is fierce and unrelenting • To achieve our goal we don’t necessarily need to mimic the biological system – We’re not trying to explain how human vision works, we’re just trying to achieve comparable results

High Level Vision • The 3D scene is made up of – Objects – Illumination due to light sources • The appearance of boundaries between object surfaces is dependent on their orientation relative to the light source (and surface material, sensing device…) – This is where we get edges from

Labeling Edges • In a 3D scene, edges have very specific meanings – They separate objects from one another • Occlusion – They mark sudden changes in surface orientation within a single object – They mark sudden changes in surface albedo (light reflectance) – They mark a shadow cast by an object

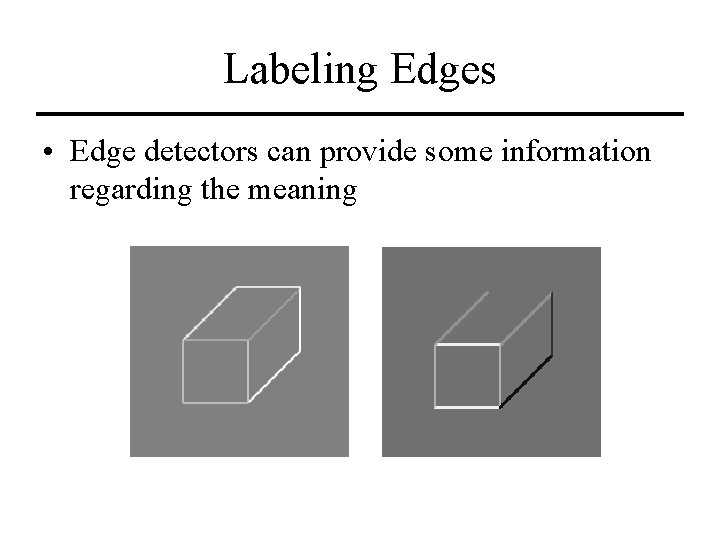

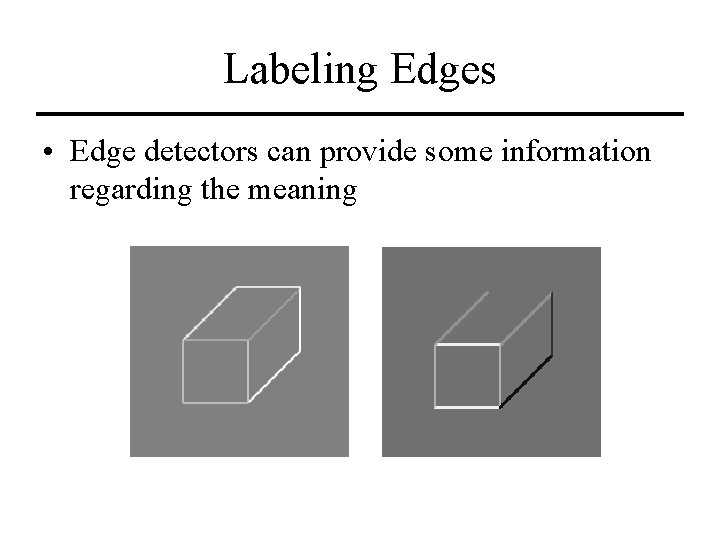

Labeling Edges • Edge detectors can provide some information regarding the meaning

Labeling Edges • But additional information must be provided through logic and reasoning • Under some constrained “worlds” we can identify all possible edge and vertex types – “Blocks World” assumption • Toy blocks • Trihedral vertices – Sounds simple but much has been learned from studying such worlds

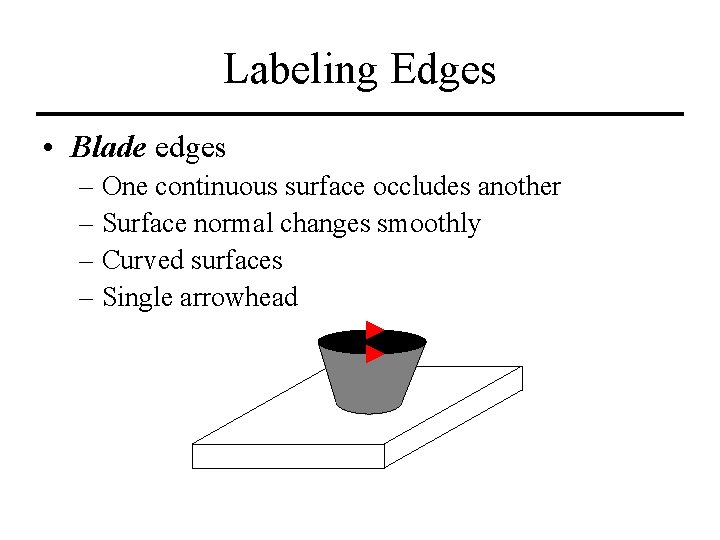

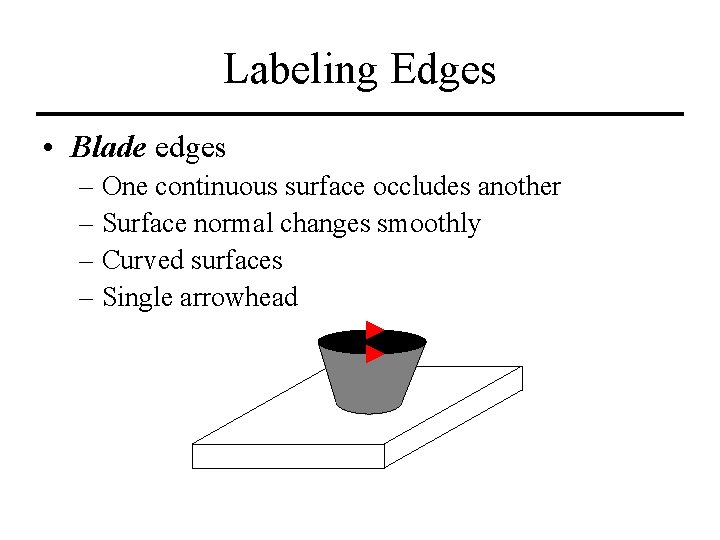

Labeling Edges • Blade edges – One continuous surface occludes another – Surface normal changes smoothly – Curved surfaces – Single arrowhead

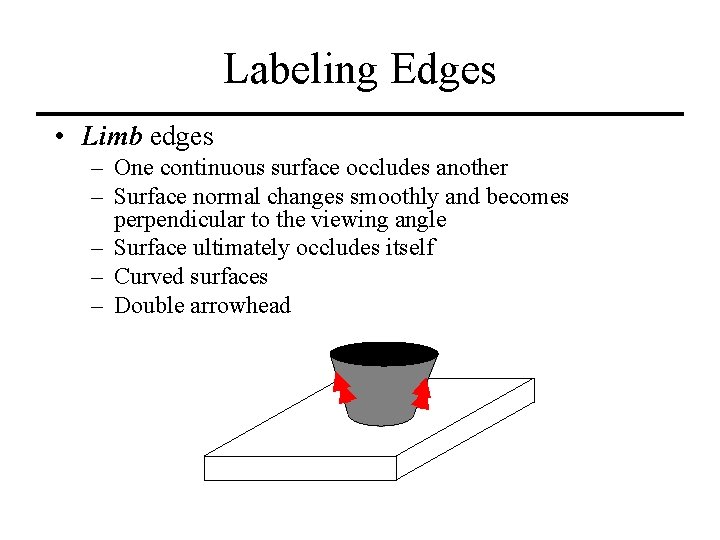

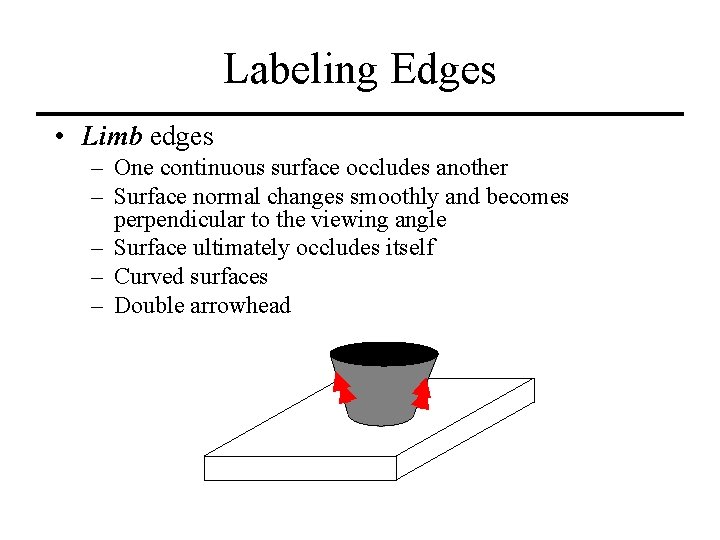

Labeling Edges • Limb edges – One continuous surface occludes another – Surface normal changes smoothly and becomes perpendicular to the viewing direction – Surface ultimately occludes itself – Curved surfaces – Double arrowhead

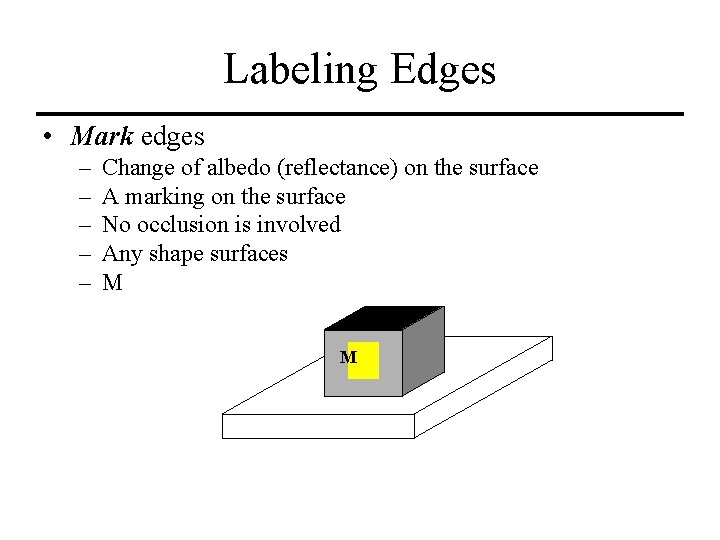

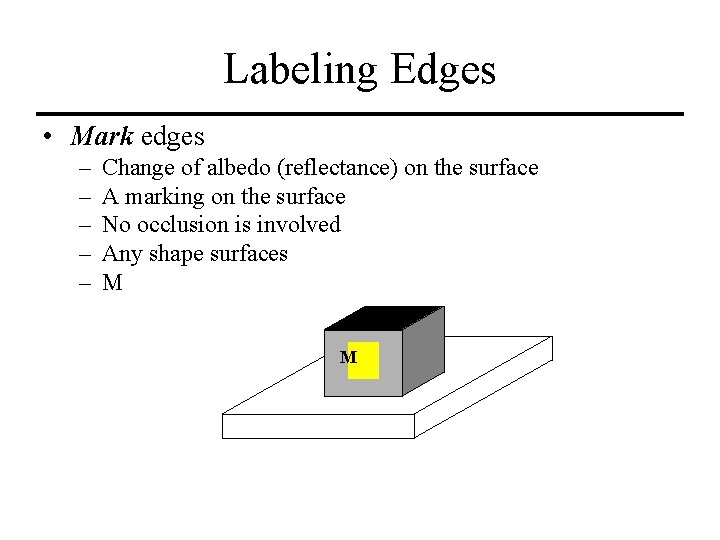

Labeling Edges • Mark edges – Change of albedo (reflectance) on the surface – A marking on the surface – No occlusion is involved – Surfaces of any shape – Labeled M

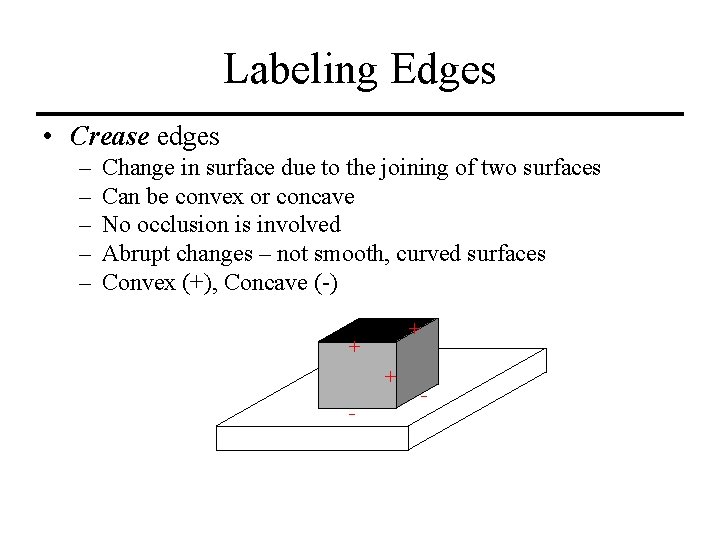

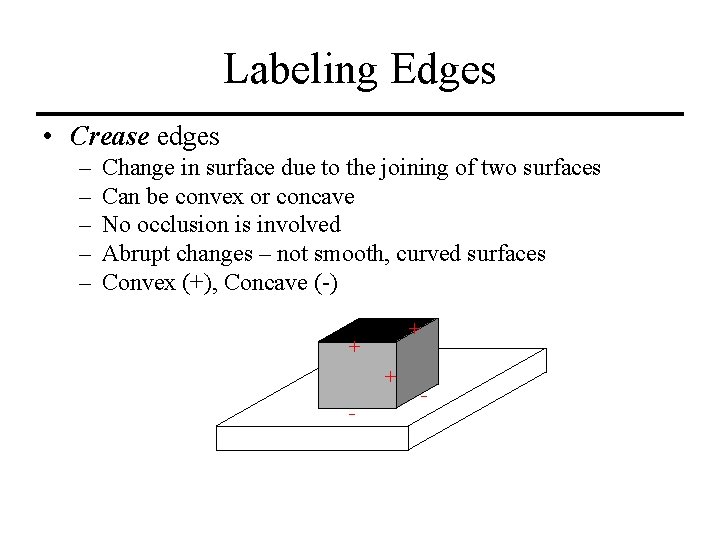

Labeling Edges • Crease edges – Change in surface due to the joining of two surfaces – Can be convex or concave – No occlusion is involved – Abrupt changes (not smooth, curved surfaces) – Labeled convex (+) or concave (-)

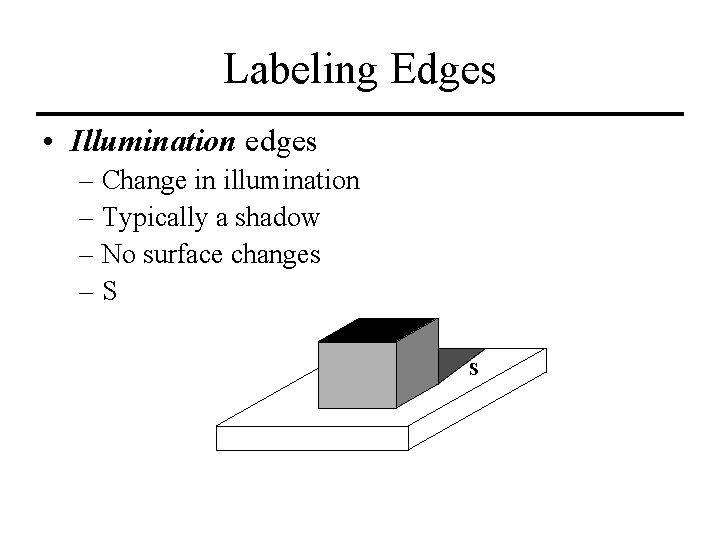

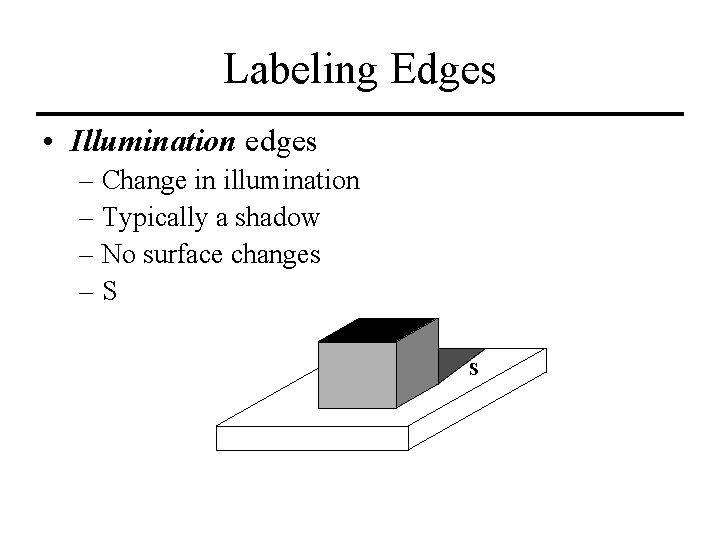

Labeling Edges • Illumination edges – Change in illumination – Typically a shadow – No surface changes – Labeled S

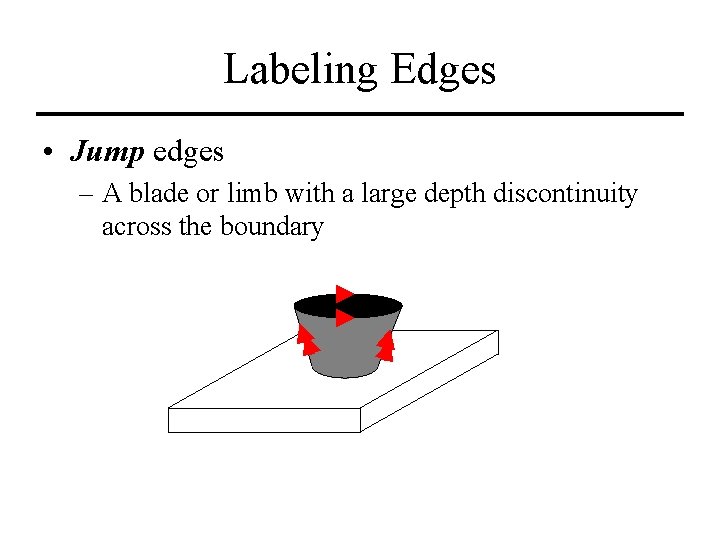

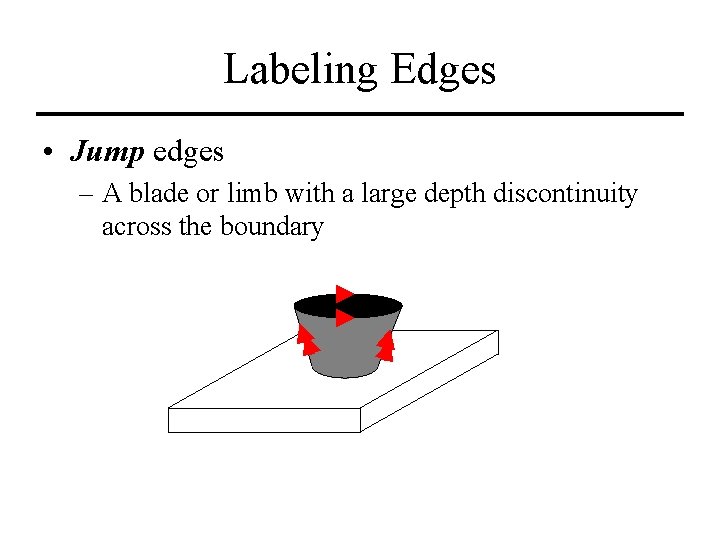

Labeling Edges • Jump edges – A blade or limb with a large depth discontinuity across the boundary

Labeling Edges • This edge labeling scheme is proposed by a few researchers • There are variations • You don’t have to do it this way if it doesn’t suit the needs of your application • Choose whatever scheme you want – Just make sure you are consistent

Vertices • A vertex is the place where two or more lines intersect • Observations have been made regarding the types of vertices possible when mapping 3D objects into a 2D space • Assumes trihedral corners – Restricted to a “blocks world” but may be useful elsewhere

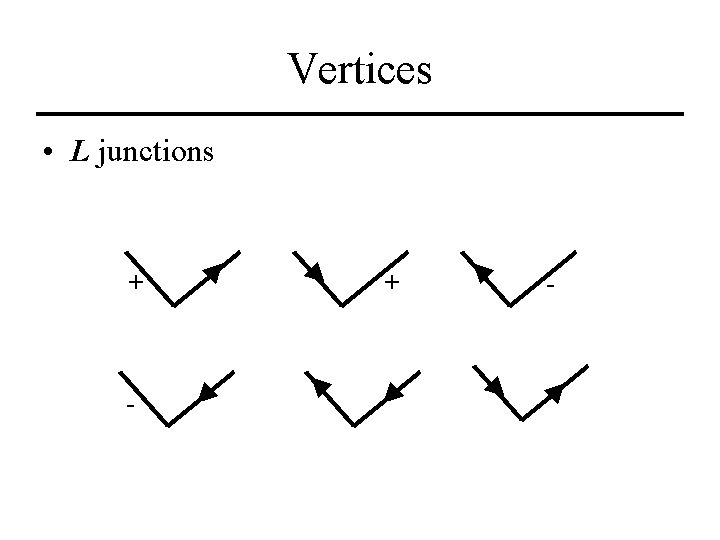

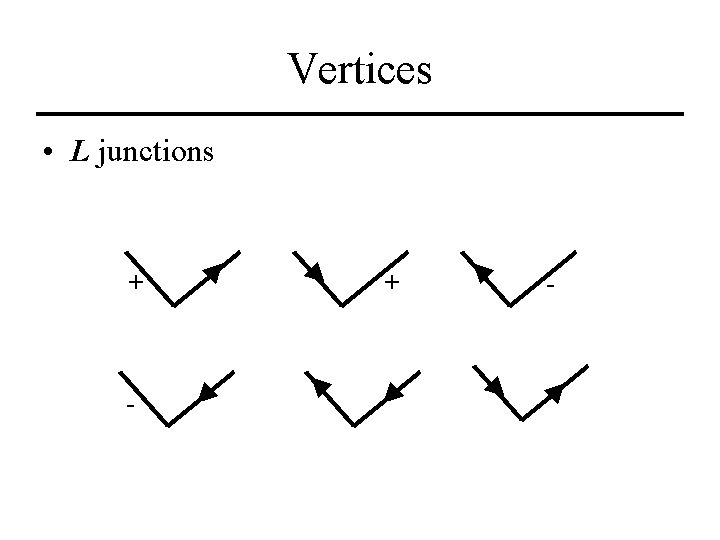

Vertices • L junctions (figure edge labels: + and -)

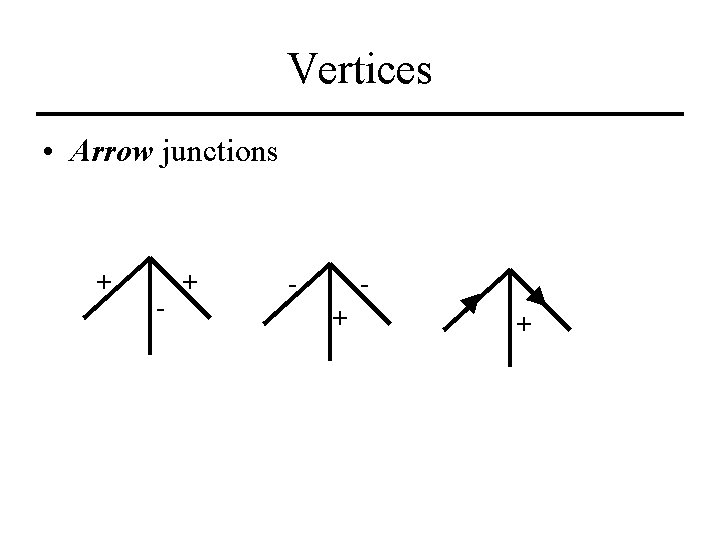

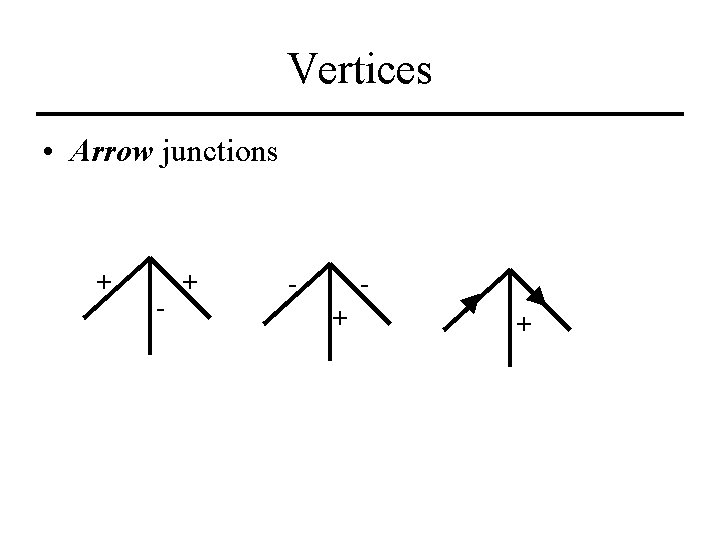

Vertices • Arrow junctions (figure edge labels: + and -)

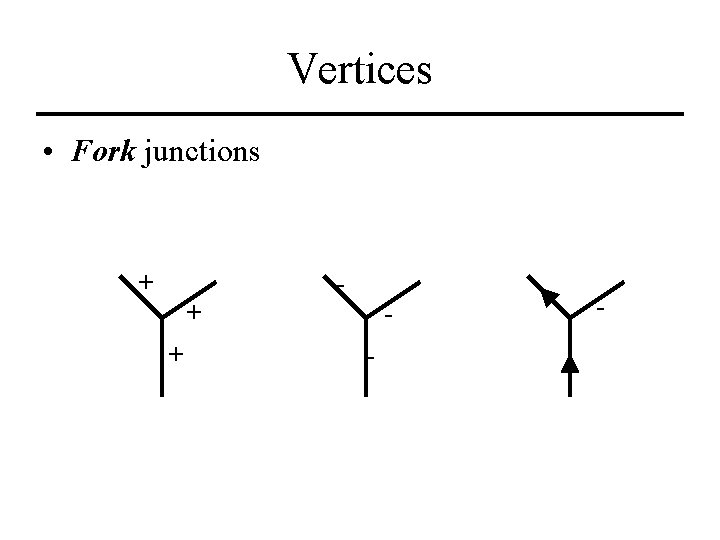

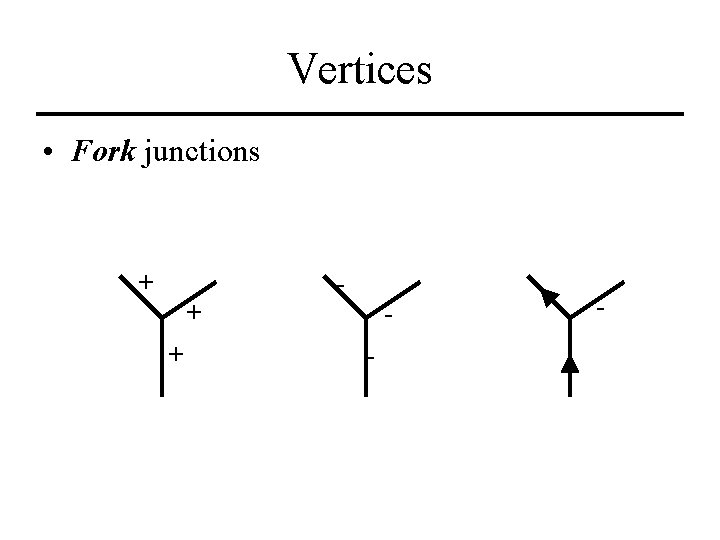

Vertices • Fork junctions (figure edge labels: + and -)

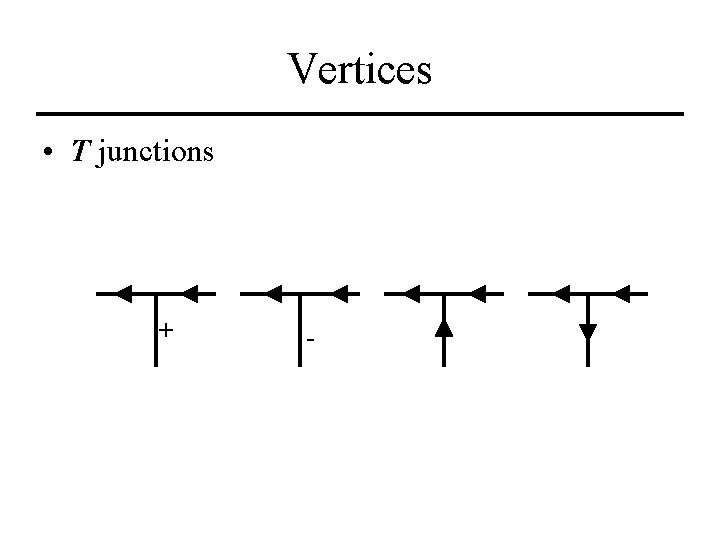

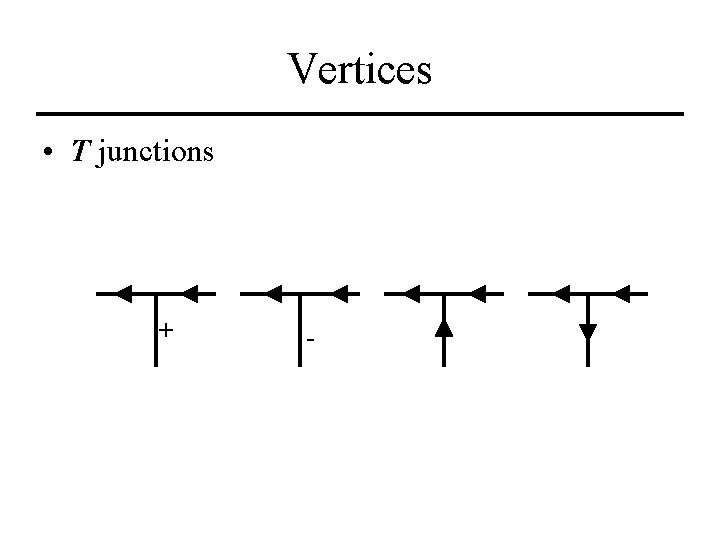

Vertices • T junctions (figure edge labels: + and -)

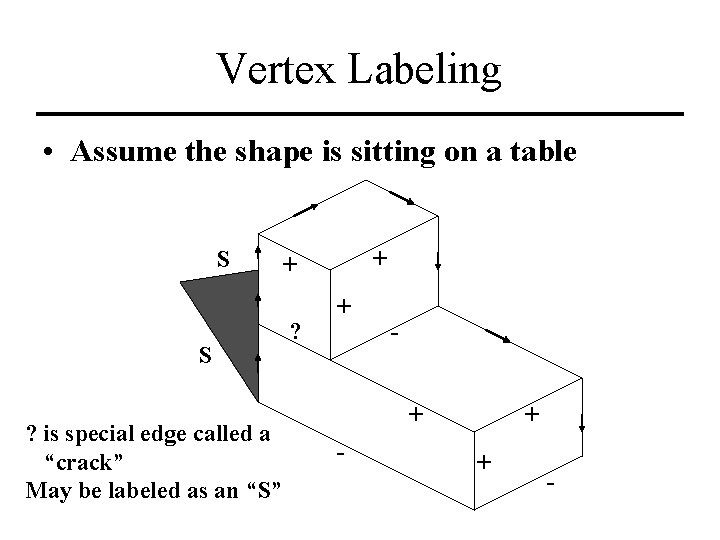

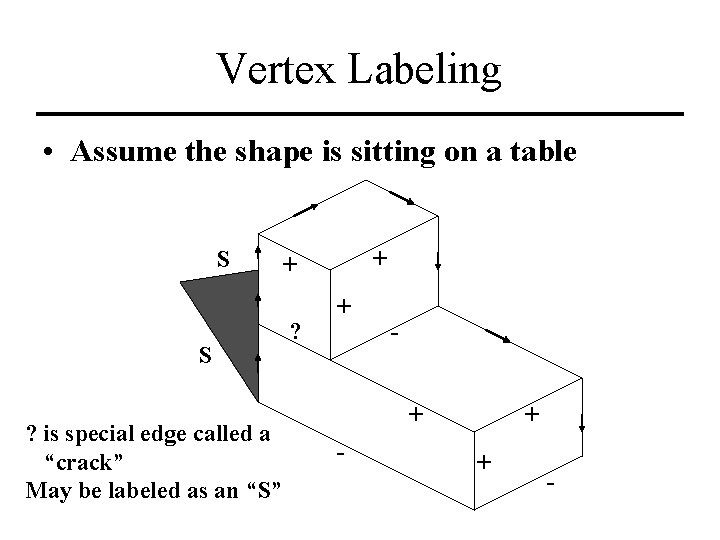

Vertex Labeling • Assume the shape is sitting on a table • The edge marked “?” is a special edge called a “crack” – May be labeled as an “S” (figure edge labels: S, +, -, ?)

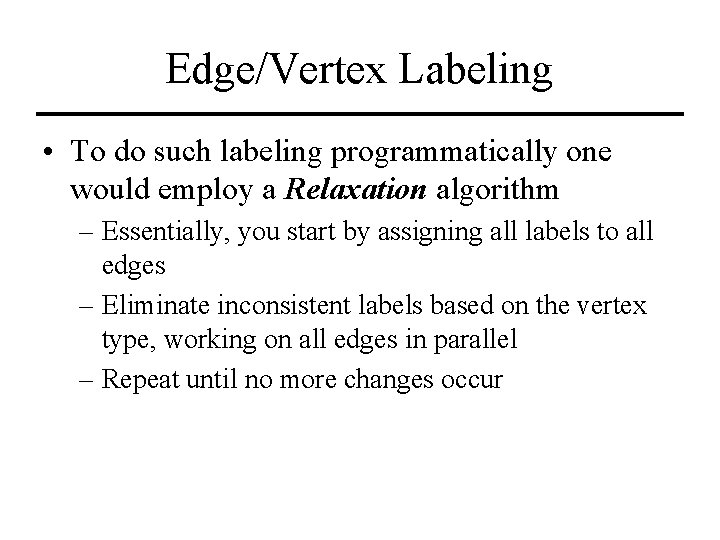

Edge/Vertex Labeling • To do such labeling programmatically one would employ a Relaxation algorithm – Essentially, you start by assigning all labels to all edges – Eliminate inconsistent labels based on the vertex type, working on all edges in parallel – Repeat until no more changes occur
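The elimination loop described above can be sketched as a small discrete relaxation routine. This is a minimal sketch, not the lecture's implementation: the function name `relax`, the edge names, and the junction catalog below are all hypothetical (a toy catalog, not the real trihedral one), and the edges are visited one vertex at a time rather than truly in parallel.

```python
def relax(edge_labels, vertices):
    """Discrete relaxation: prune edge labels until nothing changes.

    edge_labels: dict edge name -> set of candidate labels
    vertices: list of (edges, allowed) pairs, where `edges` is a tuple of
              edge names meeting at the vertex and `allowed` is a set of
              label tuples permitted for that vertex type.
    """
    labels = {e: set(ls) for e, ls in edge_labels.items()}
    changed = True
    while changed:
        changed = False
        for edges, allowed in vertices:
            # Keep only the catalog entries still possible at this vertex.
            alive = [combo for combo in allowed
                     if all(lab in labels[e] for e, lab in zip(edges, combo))]
            for i, e in enumerate(edges):
                # Labels for edge e that some surviving entry still supports.
                supported = {combo[i] for combo in alive}
                if supported != labels[e]:
                    labels[e] = supported
                    changed = True
    return labels


# Hypothetical toy problem: three edges, each starting with every label.
all_labels = {"+", "-", "occ"}
edges = {"a": all_labels, "b": all_labels, "c": all_labels}
toy_vertices = [
    (("a", "b"), {("+", "+"), ("occ", "occ")}),
    (("b", "c"), {("+", "-"), ("+", "+")}),
    (("c", "a"), {("-", "+")}),
]
result = relax(edges, toy_vertices)
print(result)
```

Each pass can only shrink a label set, so the loop always terminates. An edge that ends up with an empty set signals that no consistent labeling exists under the given catalog.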

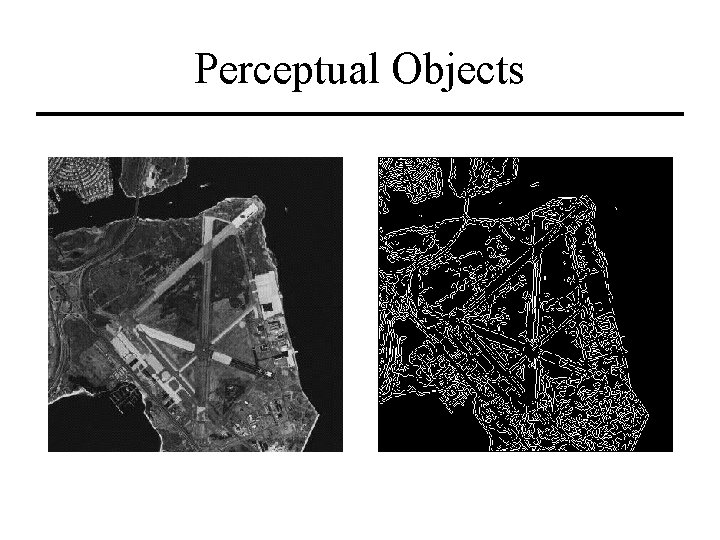

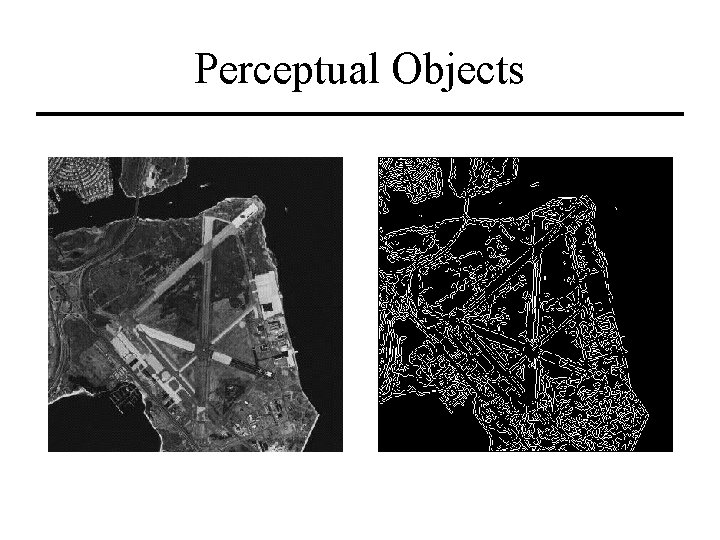

Perceptual Objects • Outside of the blocks world – Long, thin ribbon-like objects • Made of [nearly] parallel lines • Straight or Curved – Region objects • Homogeneous intensity • Homogeneous texture • Bounded by well defined edges

Perceptual Objects

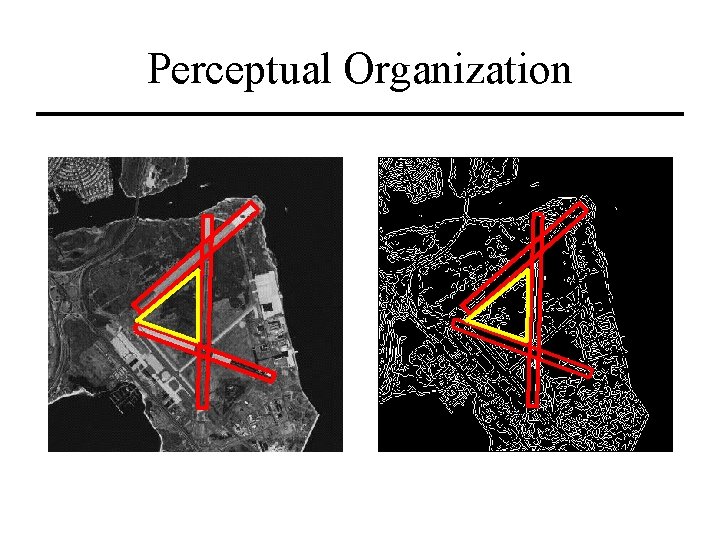

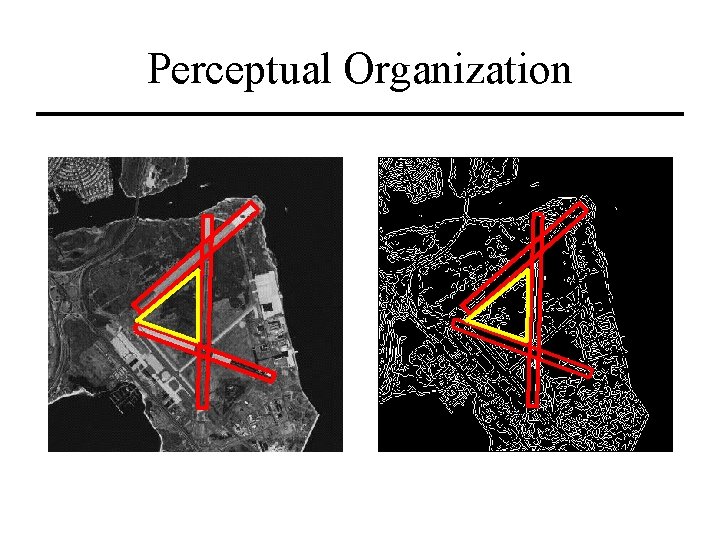

Perceptual Organization

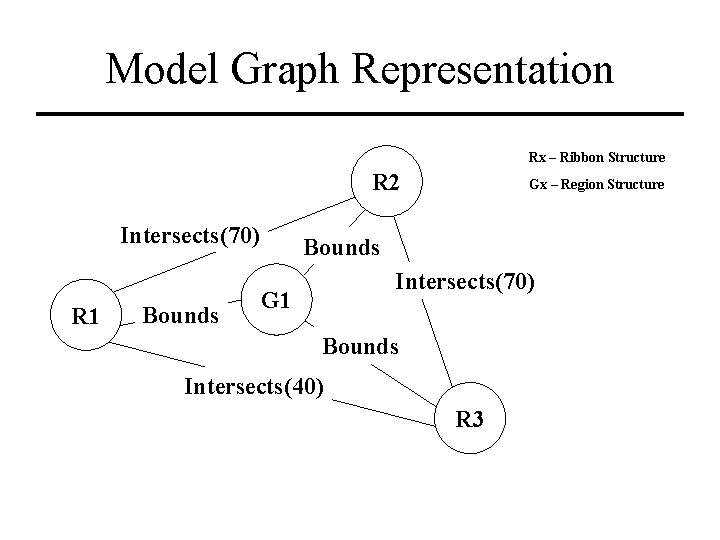

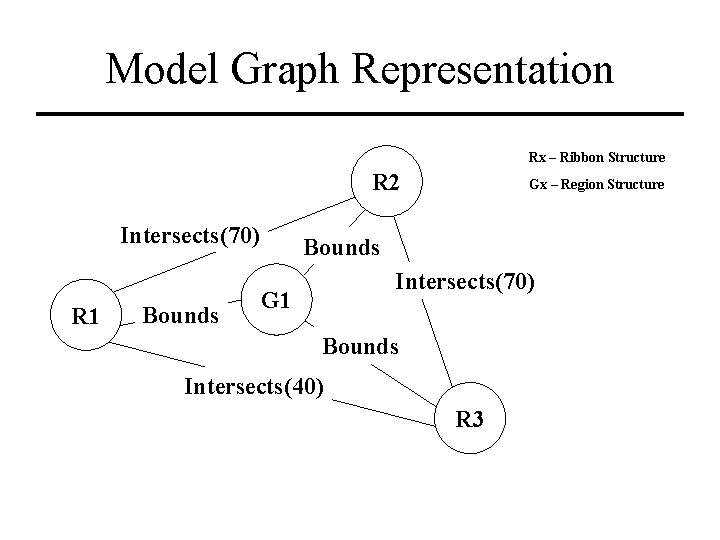

Model Graph Representation • Rx – Ribbon structure • Gx – Region structure • Figure: ribbon nodes R1, R2, R3 and region node G1, joined by arcs labeled Intersects(70), Intersects(40), and Bounds

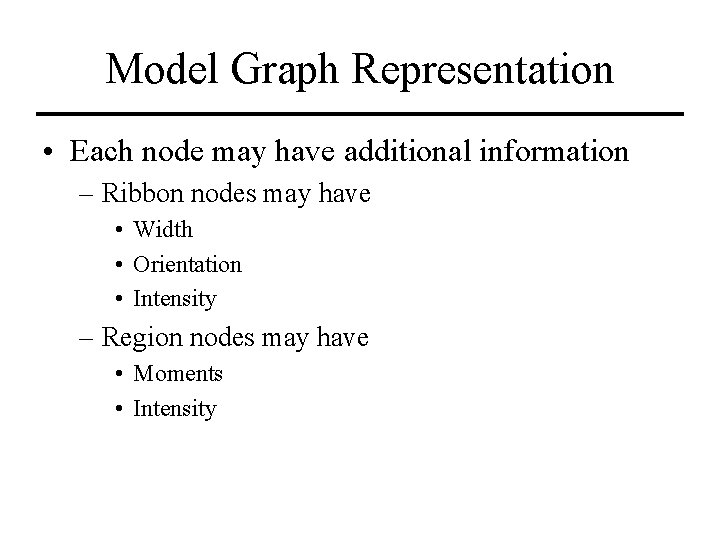

Model Graph Representation • Each node may have additional information – Ribbon nodes may have • Width • Orientation • Intensity – Region nodes may have • Moments • Intensity
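One way to hold such a graph in memory is a pair of small record types. This is a sketch under stated assumptions: the class names `Node` and `ModelGraph`, every attribute value, and the choice of which nodes each arc connects are hypothetical, since the figure's layout is not given in the text.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    kind: str                                  # "ribbon" or "region"
    attrs: dict = field(default_factory=dict)  # e.g. width, orientation, moments


@dataclass
class ModelGraph:
    nodes: dict = field(default_factory=dict)  # name -> Node
    arcs: list = field(default_factory=list)   # (src, relation, dst, value)

    def add_node(self, name, kind, **attrs):
        self.nodes[name] = Node(name, kind, attrs)

    def add_arc(self, src, relation, dst, value=None):
        self.arcs.append((src, relation, dst, value))

    def neighbors(self, name):
        """Names of nodes sharing any arc with `name`, in sorted order."""
        out = {d for s, _, d, _ in self.arcs if s == name}
        out |= {s for s, _, d, _ in self.arcs if d == name}
        return sorted(out)


# Hypothetical values; only the node names and relation names echo the slides.
g = ModelGraph()
g.add_node("R1", "ribbon", width=4.0, orientation=90.0, intensity=200)
g.add_node("R2", "ribbon", width=4.0, orientation=20.0, intensity=200)
g.add_node("G1", "region", moments=(1200.0, 35.0), intensity=80)
g.add_arc("R1", "intersects", "R2", 70)   # assumed endpoints
g.add_arc("R1", "bounds", "G1")           # assumed endpoints
print(g.neighbors("R1"))
```

Keeping the attributes in a per-node dictionary reflects the heterogeneous data structures mentioned earlier: ribbon and region nodes carry different fields.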

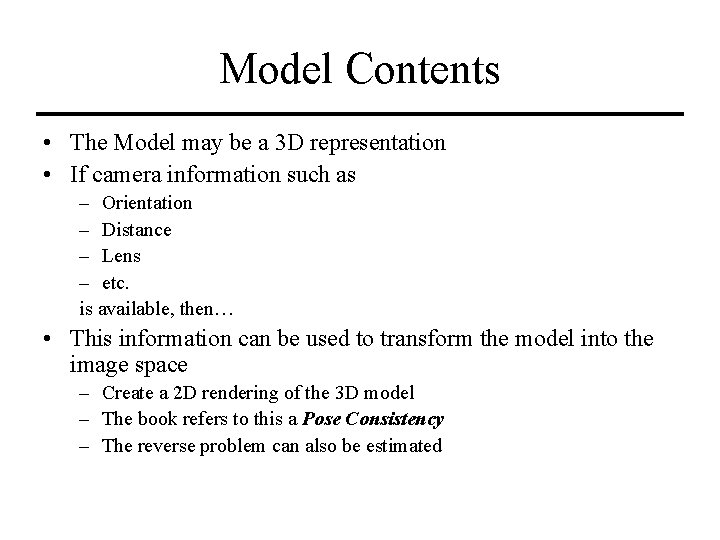

Model Contents • The model may be a 3D representation • If camera information such as – Orientation – Distance – Lens – etc. is available, then… • This information can be used to transform the model into the image space – Create a 2D rendering of the 3D model – The book refers to this as Pose Consistency – The reverse problem can also be estimated
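Transforming a 3D model into image space can be illustrated with a simple pinhole camera. This is a sketch only: the function name `project`, the single rotation about the vertical axis, and the parameters `yaw_deg`, `distance`, and `focal_length` are assumptions chosen for illustration, and every point is assumed to end up in front of the camera.

```python
import math


def project(points, yaw_deg, distance, focal_length):
    """Project 3D model points to 2D image coordinates (pinhole model)."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    image_points = []
    for x, y, z in points:
        # Rotate the model about the vertical (y) axis.
        xr = c * x + s * z
        zr = -s * x + c * z
        # Place the model `distance` units in front of the camera.
        zc = zr + distance
        # Perspective divide: farther points land closer to the image center.
        image_points.append((focal_length * xr / zc, focal_length * y / zc))
    return image_points


# Two corners of a hypothetical unit cube: one near the camera, one far.
near, far = project([(1, 1, -1), (1, 1, 1)], yaw_deg=0, distance=5,
                    focal_length=1)
print(near, far)
```

The near corner projects farther from the image center than the far corner, which is the foreshortening a 2D rendering of the model has to reproduce before it can be compared with image features.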

Model Matching • Matching can proceed after feature extraction – Extract features from the image – Create the scene graph – Match the scene graph to the model graph using graph theoretic methods • Matching can proceed during feature extraction – Guide the areas of concentration for the feature detectors

Model Matching • Matching that proceeds after feature extraction – Depth first tree search – Can be a very, very slow process – Heuristics may help • Anchor to the most important/likely objects • Matching that proceeds during feature extraction – Set up processing windows within the image – System may hallucinate (see things that aren’t really there)
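A depth-first search that matches a model graph against a scene graph might look like the following. This is a minimal sketch, assuming directed arcs stored as triples and using node degree as a stand-in for "most important" when choosing anchors. The function name `match` and the example graphs are hypothetical.

```python
def match(model_nodes, model_arcs, scene_nodes, scene_arcs):
    """Depth-first search for a mapping model node -> scene node.

    model_nodes / scene_nodes: dict name -> kind ("ribbon" or "region")
    model_arcs / scene_arcs: set of (src, relation, dst) triples
    Returns one consistent mapping as a dict, or None if there is none.
    """
    # Heuristic: visit the most connected model nodes first (the anchors).
    def degree(n):
        return sum(n in (s, d) for s, _, d in model_arcs)

    order = sorted(model_nodes, key=degree, reverse=True)

    def consistent(mapping):
        # Every model arc with both endpoints mapped must exist in the scene.
        return all((mapping[s], r, mapping[d]) in scene_arcs
                   for s, r, d in model_arcs
                   if s in mapping and d in mapping)

    def search(i, mapping):
        if i == len(order):
            return dict(mapping)
        m = order[i]
        for s, kind in scene_nodes.items():
            if kind != model_nodes[m] or s in mapping.values():
                continue
            mapping[m] = s
            if consistent(mapping):
                found = search(i + 1, mapping)
                if found is not None:
                    return found
            del mapping[m]          # backtrack and try the next scene node
        return None

    return search(0, {})


# Hypothetical model and scene; the scene has one extra ribbon ("a").
model_nodes = {"R1": "ribbon", "R2": "ribbon", "G1": "region"}
model_arcs = {("R1", "intersects", "R2"), ("R1", "bounds", "G1")}
scene_nodes = {"a": "ribbon", "b": "ribbon", "c": "region", "d": "ribbon"}
scene_arcs = {("b", "intersects", "d"), ("b", "bounds", "c")}
mapping = match(model_nodes, model_arcs, scene_nodes, scene_arcs)
print(mapping)
```

In the worst case the search tries every assignment of scene nodes to model nodes, which is why it can be very slow. Checking consistency after each assignment prunes a branch as soon as one arc fails.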

Model Matching • Difficult to make the system… – Orientation independent – Illumination independent • “Difficult” doesn’t mean “impossible” – Just means it’ll take more time to perform the search

Relaxation Labeling • Formally stated – An iterative process that attempts to assign labels to objects based on local constraints (based on an object’s description) and global constraints (how the assignment affects the labeling of other objects) • The technique has been used for many matching applications – Object labeling – Stereo correspondence – Motion correspondence – Model matching
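One common way to make this iterative process concrete is a probabilistic update, in which each object keeps a probability per label and its neighbors raise or lower those probabilities. The slides do not specify a formula, so the sketch below swaps in the classic update in the style of Rosenfeld, Hummel, and Zucker as an assumption. The function name `relaxation_step`, the compatibility function `same`, and the two-object example are hypothetical. It assumes every object has at least one neighbor and compatibilities lie in [-1, 1].

```python
def relaxation_step(p, neighbors, r):
    """One synchronous update of label probabilities.

    p: dict object -> dict label -> probability (the local evidence)
    neighbors: dict object -> list of neighboring objects
    r: function (label, other_label) -> compatibility in [-1, 1]
    """
    new_p = {}
    for i, probs in p.items():
        nbrs = neighbors[i]
        scores = {}
        for lab, prob in probs.items():
            # Support for `lab` at object i, averaged over its neighbors.
            q = sum(r(lab, other) * p[j][other]
                    for j in nbrs for other in p[j]) / len(nbrs)
            scores[lab] = prob * (1.0 + q)
        total = sum(scores.values())
        # Renormalize so the probabilities at object i sum to one.
        new_p[i] = {lab: sc / total for lab, sc in scores.items()}
    return new_p


def same(a, b):
    """Hypothetical constraint: neighboring objects prefer the same label."""
    return 1.0 if a == b else -1.0


p = {"obj0": {"A": 0.9, "B": 0.1}, "obj1": {"A": 0.4, "B": 0.6}}
neighbors = {"obj0": ["obj1"], "obj1": ["obj0"]}
for _ in range(10):
    p = relaxation_step(p, neighbors, same)
best = {i: max(probs, key=probs.get) for i, probs in p.items()}
print(best)
```

Here the strong local evidence at `obj0` pulls the weakly labeled `obj1` toward label A, which is the interplay of local and global constraints that the definition describes.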

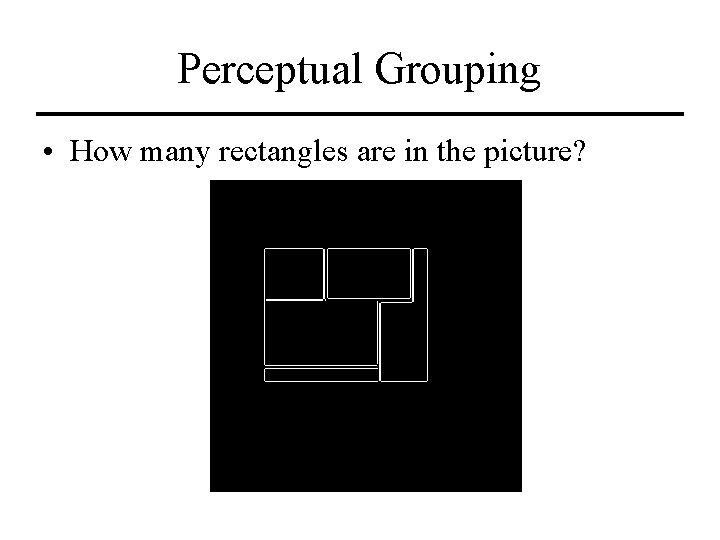

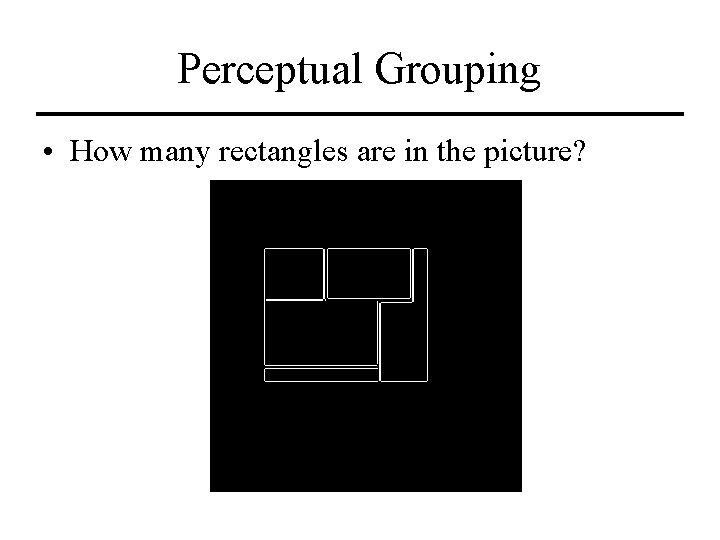

Perceptual Grouping • How many rectangles are in the picture?

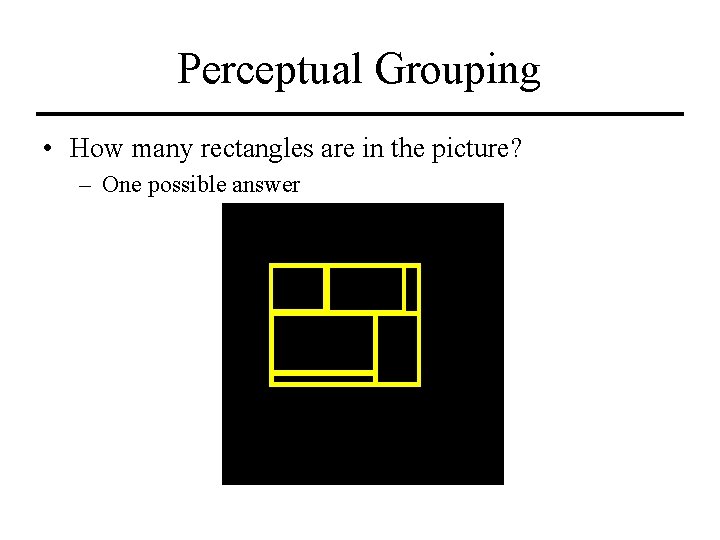

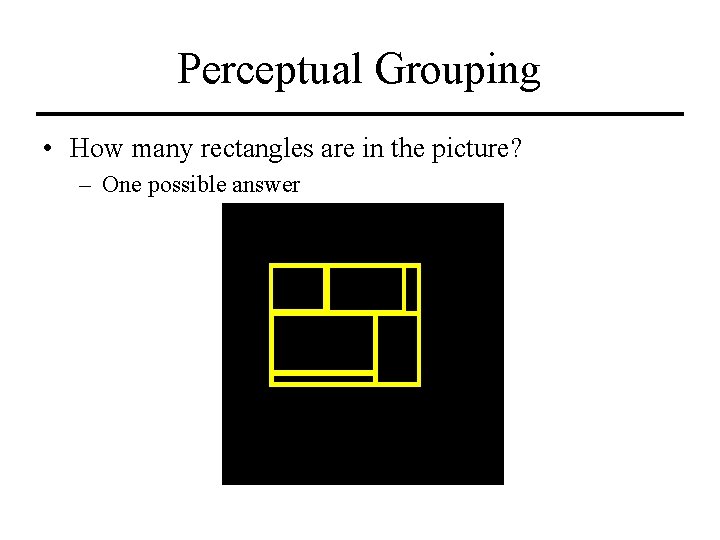

Perceptual Grouping • How many rectangles are in the picture? – One possible answer

Perceptual Grouping • It depends on what the picture represents – What is the underlying model? • Toy blocks? • Projective aerial view of a building complex? • Rectangles drawn on a flat piece of paper? – Was the image sensor noisy? (long lines got broken up) – Depending on the answer, you may solve the problem with • Relaxation labeling • Graph matching • Neural network based training/learning

Summary • High level vision is probably the least understood – It requires more than just an understanding of detectors – It requires understanding of the data structures used to represent the objects and the logic structures for reasoning about the objects • This is where computer vision moves from the highly mathematical field of image processing into the symbolic field of artificial intelligence

Things To Do • Reading for Next Week – Multi-Frame Processing • Chapter 10 – The Geometry of Multiple Views • Chapter 11 – Stereopsis

Final Exam • See handout