Re-active Learning: Active Learning with Re-labeling. Christopher H. Lin (University of Washington), Mausam (IIT Delhi), Daniel S. Weld (University of Washington) 1

*Speaker not paid by Oracle Corporation 2

CROWDSOURCING 3

(Labeling) Mistakes Were Made Human 4

Majority Vote Parrot Parakeet Parrot 5
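The majority-vote slide can be sketched in a few lines of Python. This is only an illustration: the function name `majority_vote` and the tie-breaking rule (first label seen wins) are assumptions, not something the slides specify.

```python
from collections import Counter

def majority_vote(labels):
    """Return the most common label; ties go to the label seen first."""
    if not labels:
        raise ValueError("need at least one label")
    return Counter(labels).most_common(1)[0][0]

# The three crowd labels from the slide.
print(majority_vote(["Parrot", "Parakeet", "Parrot"]))  # Parrot
```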

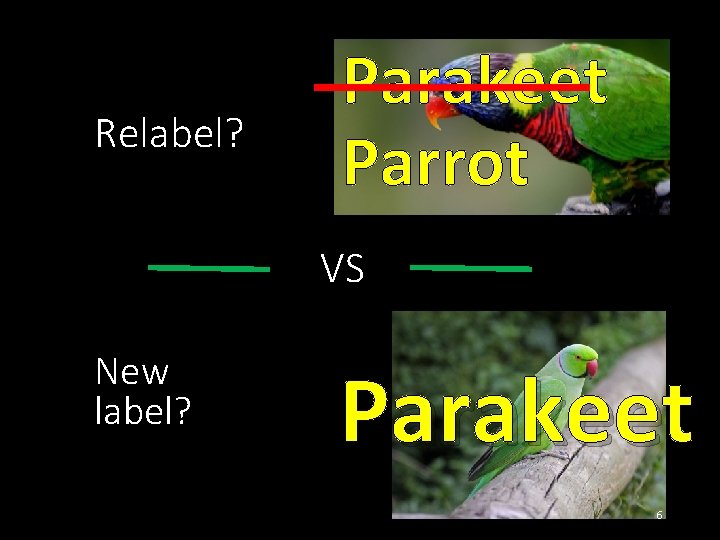

Relabel? Parakeet Parrot VS New label? Parakeet 6

MORE NOISY DATA vs. LESS BETTER DATA 7

![MORE NOISY DATA LESS BETTER DATA [Sheng et al. 2008, Lin et al. 2014]](http://slidetodoc.com/presentation_image_h/83c72af2c93dca539d40ae1c640763e1/image-8.jpg)

MORE NOISY DATA vs. LESS BETTER DATA [Sheng et al. 2008, Lin et al. 2014] 8

![Re-active Learning Contributions](http://slidetodoc.com/presentation_image_h/83c72af2c93dca539d40ae1c640763e1/image-9.jpg)

Re-active Learning Contributions 9

- Standard Active Learning Algorithms Fail
  - Uncertainty Sampling [Lewis and Catlett 1994]
  - Expected Error Reduction [Roy and McCallum 2001]
- Re-active Learning Algorithms
  - Extensions of Uncertainty Sampling
  - Impact Sampling

Standard active learning algorithms fail! 10

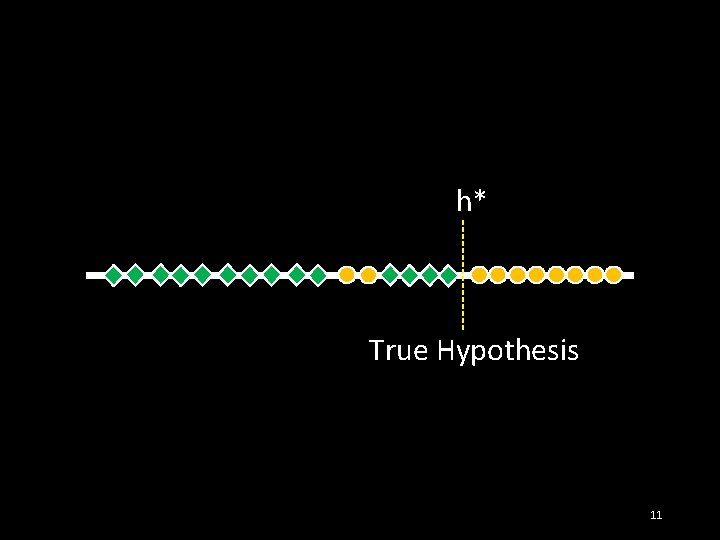

h* True Hypothesis 11

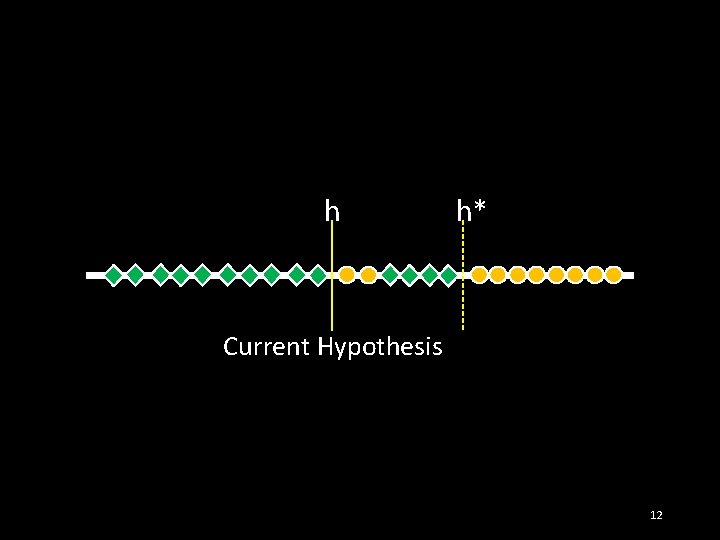

h h* Current Hypothesis 12

![h h* Uncertainty Sampling [Lewis and Catlett (1994)]](http://slidetodoc.com/presentation_image_h/83c72af2c93dca539d40ae1c640763e1/image-13.jpg)

h h* Uncertainty Sampling [Lewis and Catlett (1994)] 13

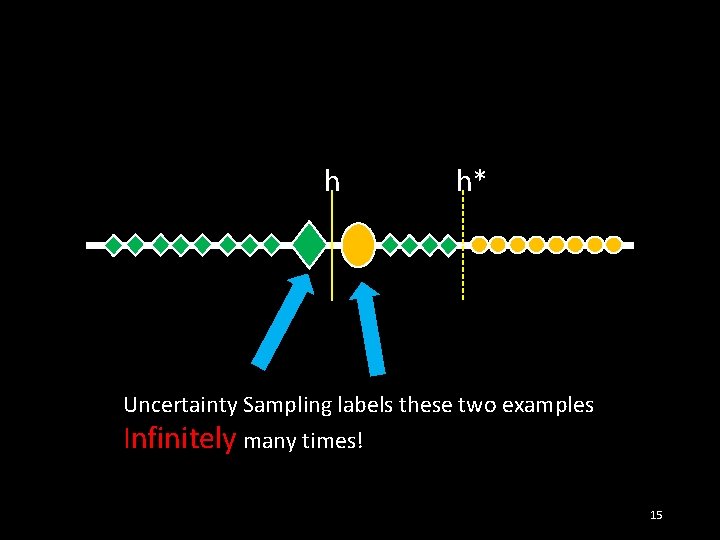

h h* Suppose labeled many times already! 14

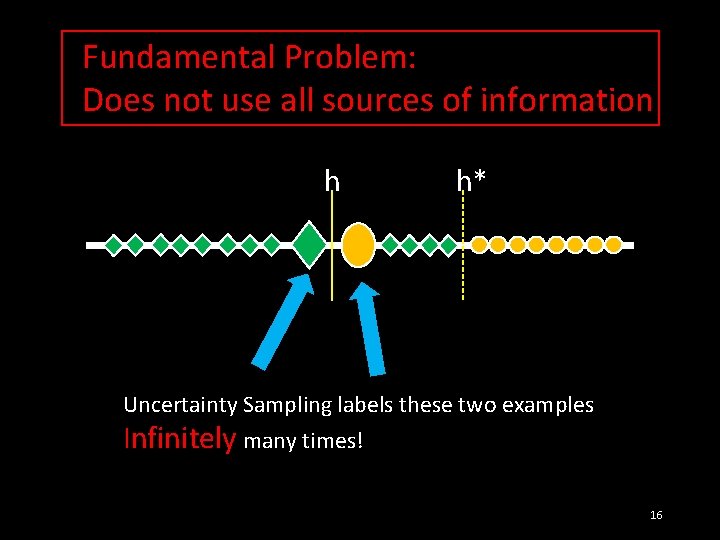

h h* Uncertainty Sampling labels these two examples Infinitely many times! 15

Fundamental Problem: Does not use all sources of information h h* Uncertainty Sampling labels these two examples Infinitely many times! 16
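A toy sketch of the looping behavior described above. It assumes, for illustration only, that the classifier's predicted probabilities no longer move once an example's aggregate label has settled; the probabilities, counts, and function names are hypothetical.

```python
def uncertainty(p_positive):
    """Margin-based uncertainty: 1.0 at p = 0.5, 0.0 at p = 0 or 1."""
    return 1.0 - abs(2.0 * p_positive - 1.0)

def pick_most_uncertain(probs):
    """Index of the example the classifier is least sure about."""
    return max(range(len(probs)), key=lambda i: uncertainty(probs[i]))

# Hypothetical classifier beliefs and annotation counts for four examples.
probs = [0.95, 0.51, 0.10, 0.47]
annotation_counts = [1, 50, 1, 50]

picks = []
for _ in range(5):
    i = pick_most_uncertain(probs)   # ignores annotation_counts entirely
    annotation_counts[i] += 1        # one more label, but probs stay fixed (assumption)
    picks.append(i)

print(picks)  # [1, 1, 1, 1, 1]
```

The selection rule never consults `annotation_counts`, which is the unused source of information the slide points to.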

![Expected Error Reduction (EER) [Roy and McCallum (2001)] Also suffers from infinite looping!](http://slidetodoc.com/presentation_image_h/83c72af2c93dca539d40ae1c640763e1/image-18.jpg)

Expected Error Reduction (EER) [Roy and McCallum (2001)] Also suffers from infinite looping! 18

How to fix? Consider the aggregate label uncertainty! 20

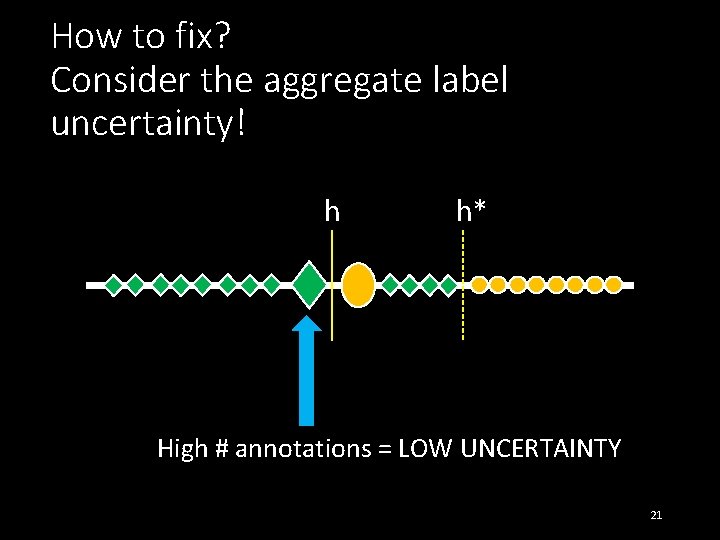

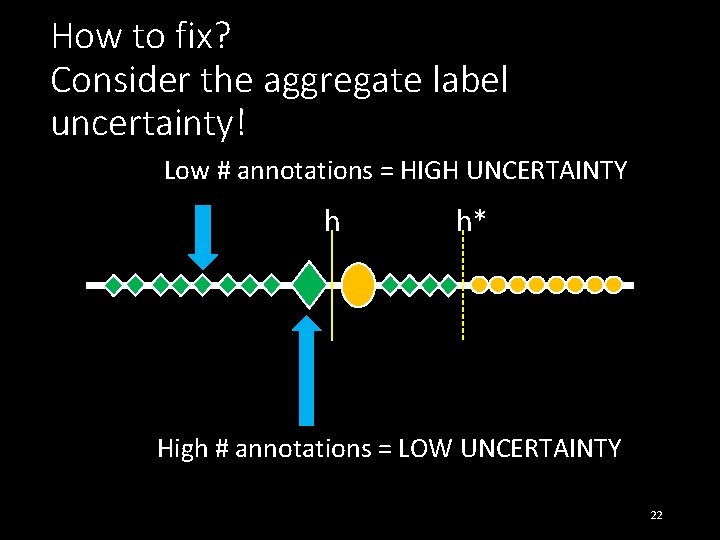

How to fix? Consider the aggregate label uncertainty! h h* High # annotations = LOW UNCERTAINTY 21

How to fix? Consider the aggregate label uncertainty! Low # annotations = HIGH UNCERTAINTY h h* High # annotations = LOW UNCERTAINTY 22

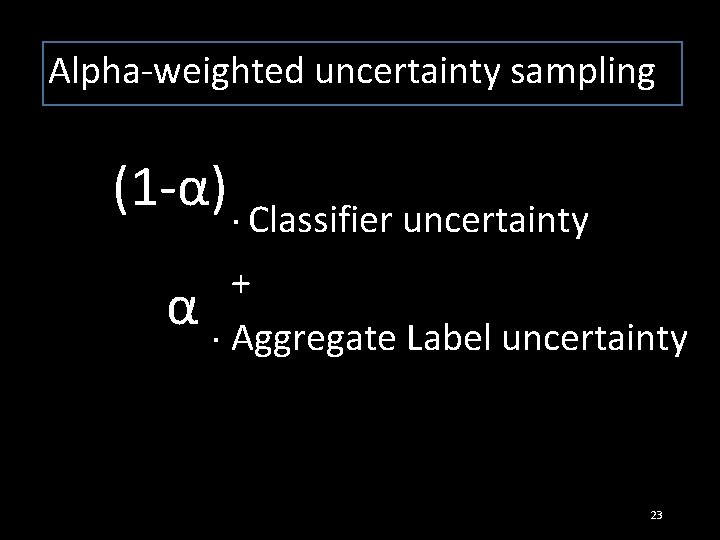

Alpha-weighted uncertainty sampling: (1 - α) · classifier uncertainty + α · aggregate label uncertainty 23
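A minimal sketch of the alpha-weighted score. The slides do not say how either uncertainty is measured, so the choices here are assumptions: binary entropy for both terms, a uniform prior, and a single known annotator accuracy. All function and parameter names are hypothetical.

```python
import math

def binary_entropy(p):
    """Entropy in bits of a Bernoulli(p) variable; 0 at p = 0 or 1."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def label_posterior(n_pos, n_neg, accuracy=0.75, prior=0.5):
    """P(true label is positive | annotations), assuming independent annotators."""
    like_pos = prior * accuracy**n_pos * (1 - accuracy)**n_neg
    like_neg = (1 - prior) * (1 - accuracy)**n_pos * accuracy**n_neg
    return like_pos / (like_pos + like_neg)

def alpha_weighted_score(p_classifier, n_pos, n_neg, alpha=0.5, accuracy=0.75):
    """(1 - alpha) * classifier uncertainty + alpha * aggregate label uncertainty."""
    classifier_unc = binary_entropy(p_classifier)
    label_unc = binary_entropy(label_posterior(n_pos, n_neg, accuracy))
    return (1 - alpha) * classifier_unc + alpha * label_unc

# Same classifier belief, different annotation histories.
print(alpha_weighted_score(0.5, n_pos=0, n_neg=0))   # never labeled
print(alpha_weighted_score(0.5, n_pos=10, n_neg=0))  # labeled many times
```

With α = 0 this reduces to plain uncertainty sampling; larger α steers the budget away from examples whose aggregate label is already settled.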

Fixed-Relabeling Uncertainty Sampling 24

1) Pick a new unlabeled example using classifier uncertainty
2) Get a fixed number of labels for that example
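The two steps can be sketched as a single function. This assumes binary labels, a margin-based uncertainty measure, and majority-vote aggregation; `annotate` is a hypothetical callable standing in for a crowd worker.

```python
from collections import Counter

def fixed_relabeling_step(unlabeled_probs, annotate, num_labels=3):
    """One round of fixed-relabeling uncertainty sampling.

    unlabeled_probs: dict mapping example id -> classifier's P(positive).
    annotate: callable taking an example id and returning a noisy 0/1 label.
    num_labels: fixed number of labels to request (odd avoids ties).
    """
    # Step 1: pick the unlabeled example the classifier is least sure about.
    chosen = max(unlabeled_probs,
                 key=lambda x: 1.0 - abs(2.0 * unlabeled_probs[x] - 1.0))
    # Step 2: request a fixed number of labels and aggregate them.
    votes = [annotate(chosen) for _ in range(num_labels)]
    aggregate = Counter(votes).most_common(1)[0][0]
    return chosen, votes, aggregate

# Usage with a stand-in annotator that always answers 1.
chosen, votes, aggregate = fixed_relabeling_step(
    {"a": 0.9, "b": 0.55, "c": 0.2}, annotate=lambda x: 1)
print(chosen, votes, aggregate)  # b [1, 1, 1] 1
```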

Impact (ψ) Sampling 26

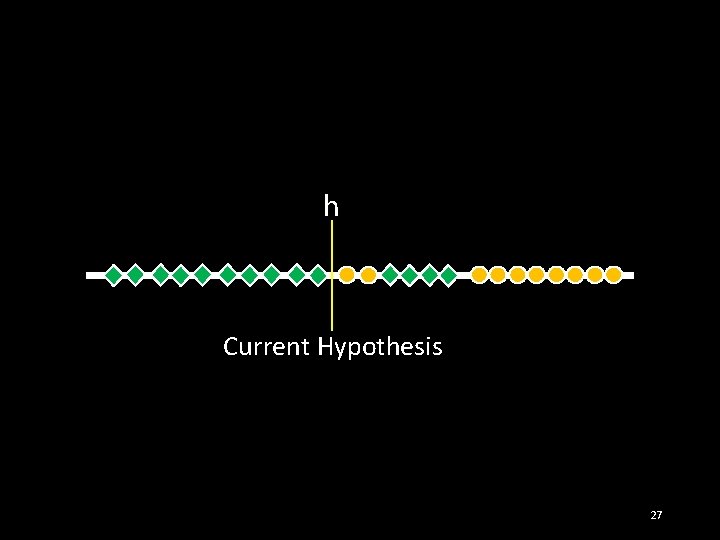

h Current Hypothesis 27

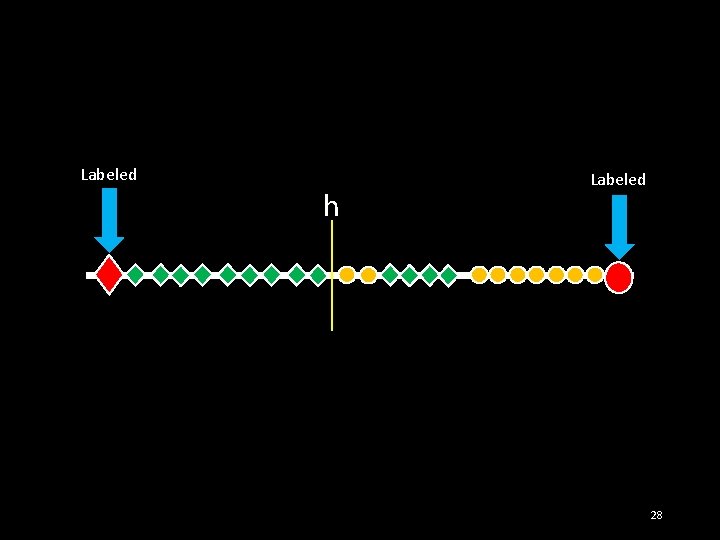

Labeled h Labeled 28

Labeled h Labeled What is the impact of labeling this example? 29

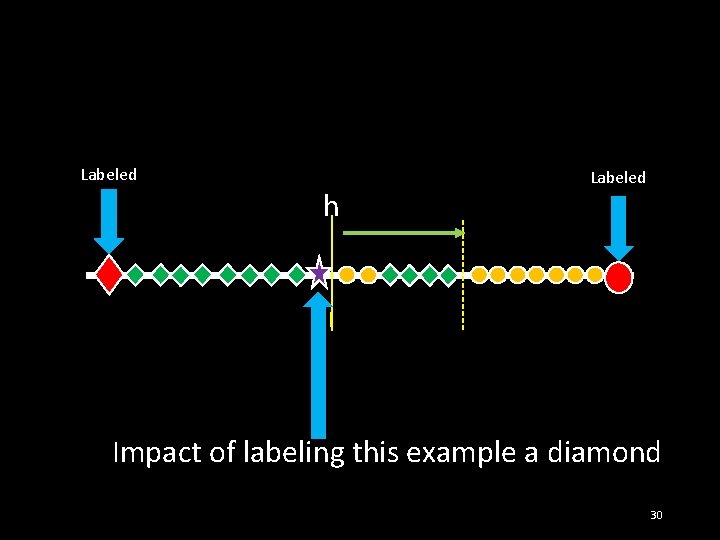

Labeled h Labeled Impact of labeling this example a diamond 30

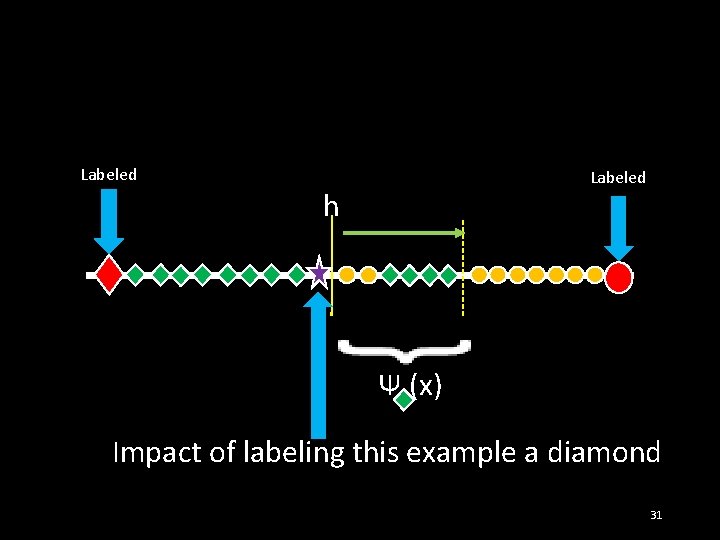

Labeled h Ψ (x) Impact of labeling this example a diamond 31

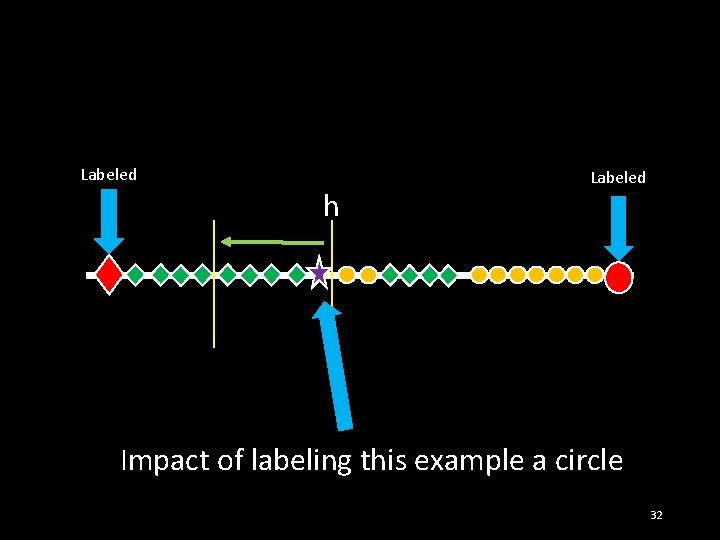

Labeled h Labeled Impact of labeling this example a circle 32

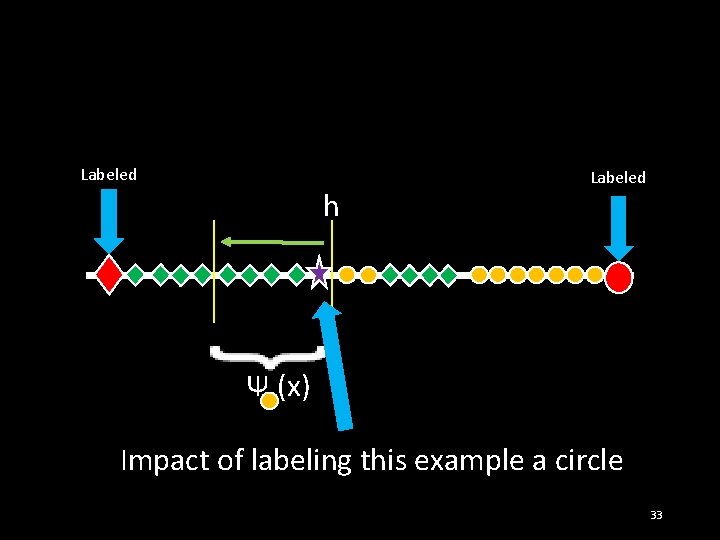

Labeled h Labeled Ψ (x) Impact of labeling this example a circle 33

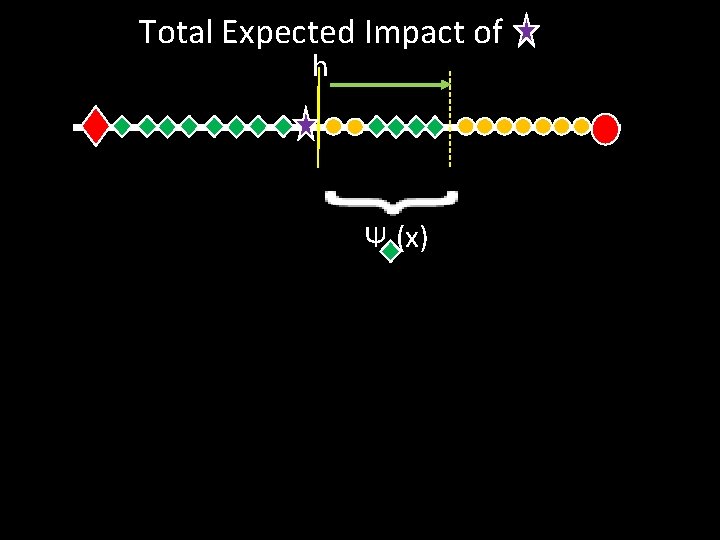

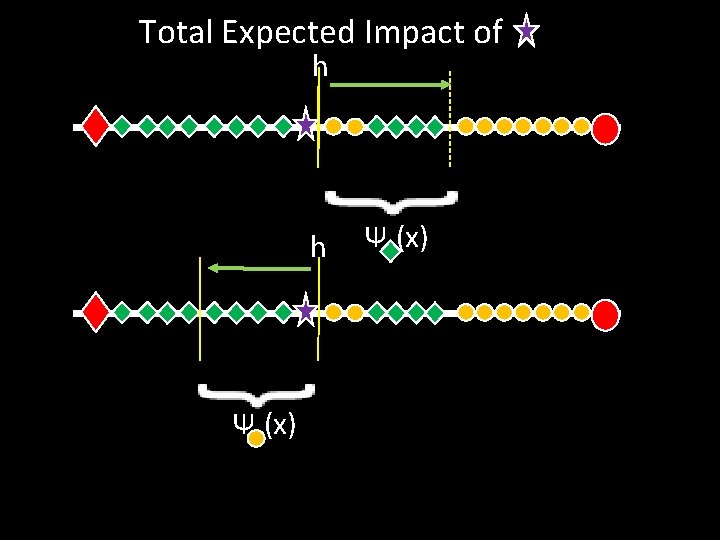

Total Expected Impact of labeling this example: Ψ(x)

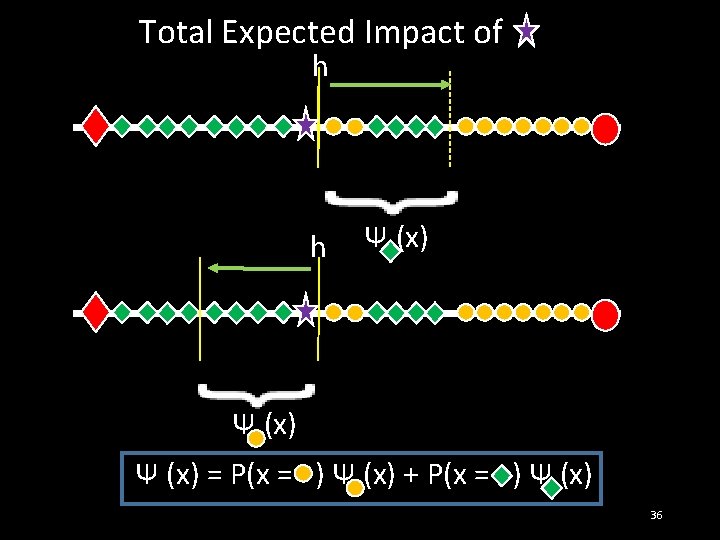

Total Expected Impact of labeling this example: Ψ(x) = P(x = diamond) Ψ_diamond(x) + P(x = circle) Ψ_circle(x) 36

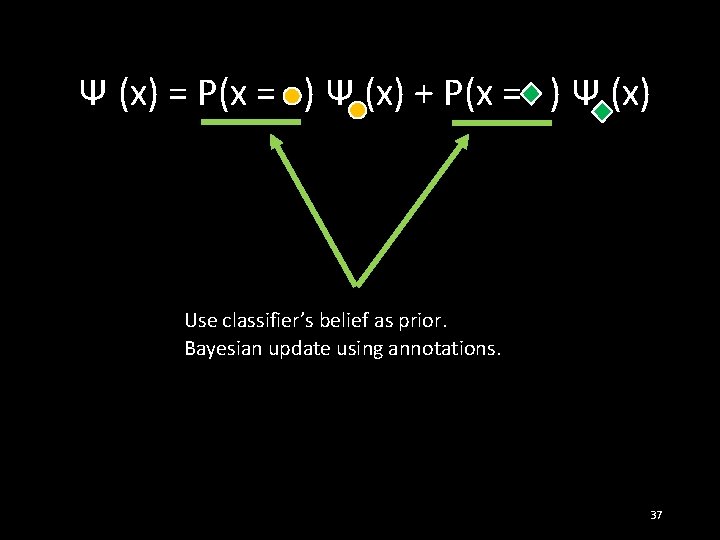

Ψ(x) = P(x = diamond) Ψ_diamond(x) + P(x = circle) Ψ_circle(x). Use the classifier's belief as the prior, then apply a Bayesian update using the annotations. 37
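A small sketch of this computation. The per-label impacts are taken as given inputs, since how they are measured is not spelled out here. The annotator model (independent annotators with one known accuracy) and all names are assumptions for illustration.

```python
def posterior_diamond(prior_diamond, n_diamond, n_circle, accuracy=0.75):
    """Bayesian update of P(x = diamond).

    prior_diamond: the classifier's current belief that x is a diamond.
    n_diamond, n_circle: annotations received so far for each label.
    """
    like_d = prior_diamond * accuracy**n_diamond * (1 - accuracy)**n_circle
    like_c = (1 - prior_diamond) * (1 - accuracy)**n_diamond * accuracy**n_circle
    return like_d / (like_d + like_c)

def total_expected_impact(p_diamond, impact_if_diamond, impact_if_circle):
    """Probability-weighted sum of the two per-label impacts."""
    return p_diamond * impact_if_diamond + (1 - p_diamond) * impact_if_circle

# Classifier is undecided (0.5); one annotator has said "diamond".
p = posterior_diamond(0.5, n_diamond=1, n_circle=0)
print(p)                                   # 0.75
print(total_expected_impact(p, 4.0, 0.0))  # 3.0
```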

Assuming annotation accuracy > 0.5: as the number of annotations of x goes to infinity, Ψ(x) goes to 0. 38
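One way to build intuition for this limit, offered as an illustration rather than the argument behind the claim: when annotators are better than chance, the posterior over the true label hardens as annotations accumulate, so one more (even dissenting) annotation moves it less and less. The sketch assumes a uniform prior, accuracy 0.75, and a 3:1 split of votes; the names are hypothetical.

```python
import math

def posterior_positive(n_pos, n_neg, accuracy=0.75, prior=0.5):
    """P(true label is positive | annotations), computed via log-odds."""
    log_odds = (math.log(prior / (1 - prior))
                + (n_pos - n_neg) * math.log(accuracy / (1 - accuracy)))
    return 1.0 / (1.0 + math.exp(-log_odds))

def shift_from_one_dissent(k):
    """How far one extra negative vote moves the posterior after 3k vs k votes."""
    return posterior_positive(3 * k, k) - posterior_positive(3 * k, k + 1)

shifts = [shift_from_one_dissent(k) for k in (1, 2, 5, 10)]
for k, s in zip((1, 2, 5, 10), shifts):
    print(f"{4 * k:3d} annotations: posterior shift = {s:.2e}")
```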

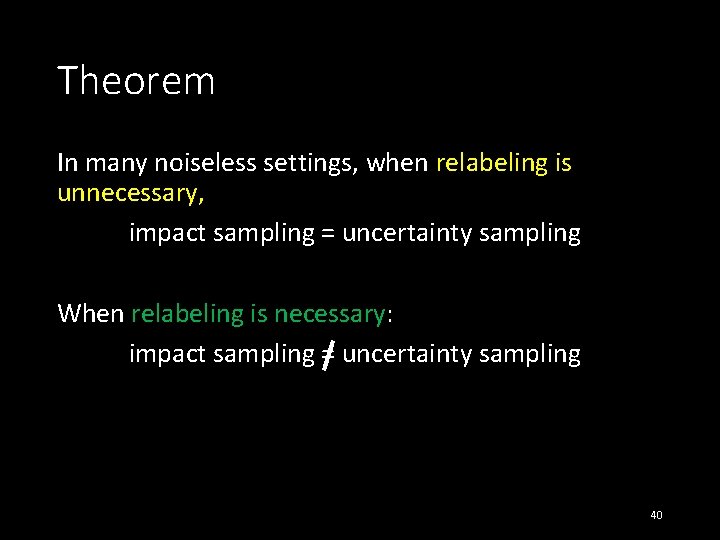

Theorem In many noiseless settings, when relabeling is unnecessary, impact sampling = uncertainty sampling 39

Theorem: In many noiseless settings, when relabeling is unnecessary, impact sampling = uncertainty sampling. When relabeling is necessary: impact sampling ≠ uncertainty sampling 40

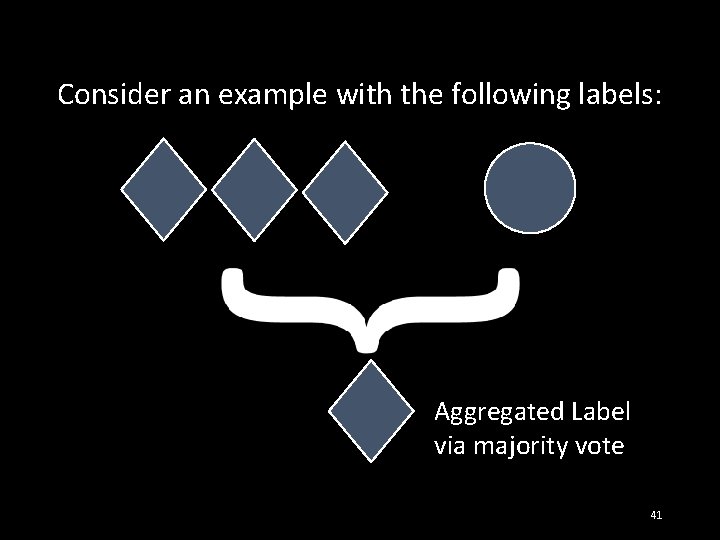

Consider an example with the following labels: Aggregated Label via majority vote 41

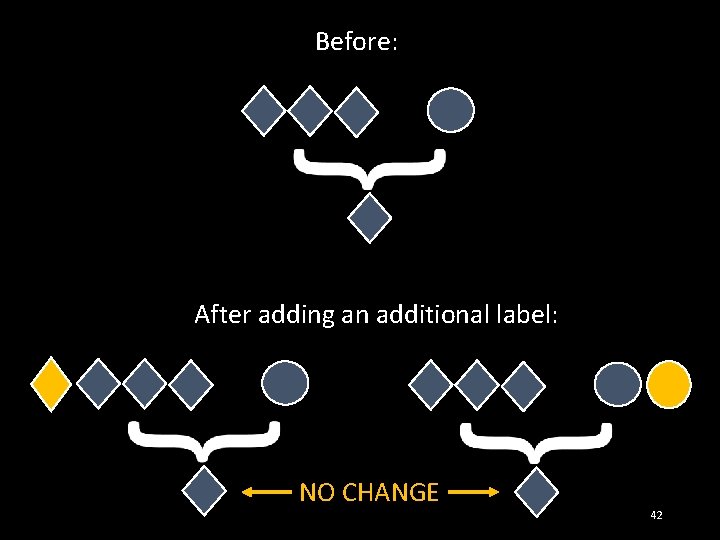

Before: After adding an additional label: NO CHANGE 42

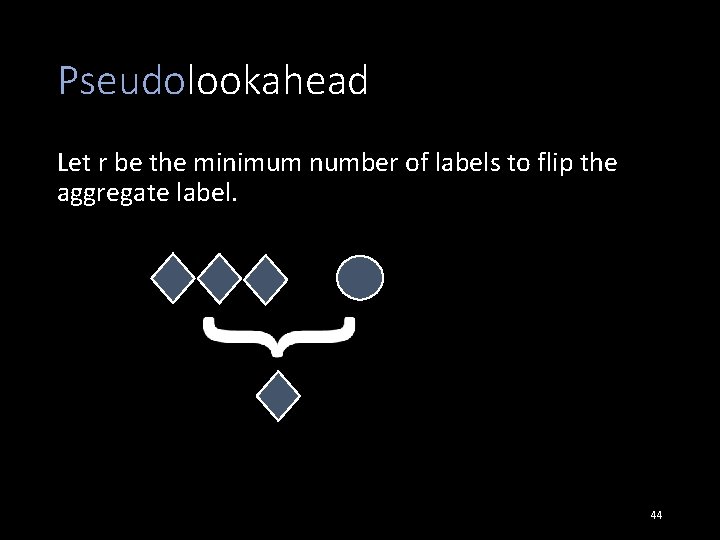

Pseudolookahead Let r be the minimum number of labels to flip the aggregate label. 43

Pseudolookahead Let r be the minimum number of labels to flip the aggregate label. 44

Pseudolookahead Let r be the minimum number of labels to flip the aggregate label. r=3 45

Pseudolookahead: Redefine Ψ(x) = Ψ_r(x) / r 46

Pseudolookahead: Redefine Ψ(x) = Ψ_r(x) / r. Careful Optimism! 47
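A sketch of the pseudolookahead arithmetic, assuming majority-vote aggregation where a flip requires a strict majority for the other label. The function names are hypothetical, and the impact after r labels is passed in as a given number.

```python
def labels_needed_to_flip(n_majority, n_minority):
    """Minimum number r of extra minority-side labels that flips a majority vote.

    Assumes a tie does not count as a flip, so the minority must end up
    strictly ahead of the current majority.
    """
    if n_minority > n_majority:
        raise ValueError("n_majority must be at least n_minority")
    return n_majority - n_minority + 1

def pseudolookahead_impact(impact_after_r_labels, r):
    """Redefined impact: the impact of r labels, averaged per label."""
    return impact_after_r_labels / r

# Two labels agree so far: a single new label cannot change the aggregate,
# but three dissenting labels would.
r = labels_needed_to_flip(n_majority=2, n_minority=0)
print(r)                               # 3
print(pseudolookahead_impact(6.0, r))  # 2.0
```

Dividing by r keeps the optimism in check: the impact is credited only in proportion to the number of labels it would cost.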

Experimental setup: budget = 1000; label accuracy = 75%; 10, 30, 50, 70, or 90 features 48
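A rough sketch of how such a setup could be simulated: a noisy annotator with 75% accuracy and a budget of 1000 annotations. Everything beyond those two numbers is an assumption made for illustration (binary labels, 200 examples, random "passive" selection, the seed), not the experimental code behind the slides.

```python
import random

def noisy_label(true_label, accuracy, rng):
    """Return the true 0/1 label with probability `accuracy`, else its flip."""
    return true_label if rng.random() < accuracy else 1 - true_label

rng = random.Random(0)                    # fixed seed, arbitrary choice
true_labels = [rng.randint(0, 1) for _ in range(200)]
budget = 1000
annotations = {}                          # example index -> list of noisy labels
correct = 0

for _ in range(budget):
    i = rng.randrange(len(true_labels))   # passive: choose examples at random
    label = noisy_label(true_labels[i], 0.75, rng)
    annotations.setdefault(i, []).append(label)
    correct += int(label == true_labels[i])

print(f"empirical annotation accuracy: {correct / budget:.3f}")
```

An active or re-active strategy would replace the random choice of `i` with its own selection rule while spending the same budget.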

Gaussian (num features = 90). Curves: EER, impact, alpha-uncertainty, fixed-uncertainty, passive 49

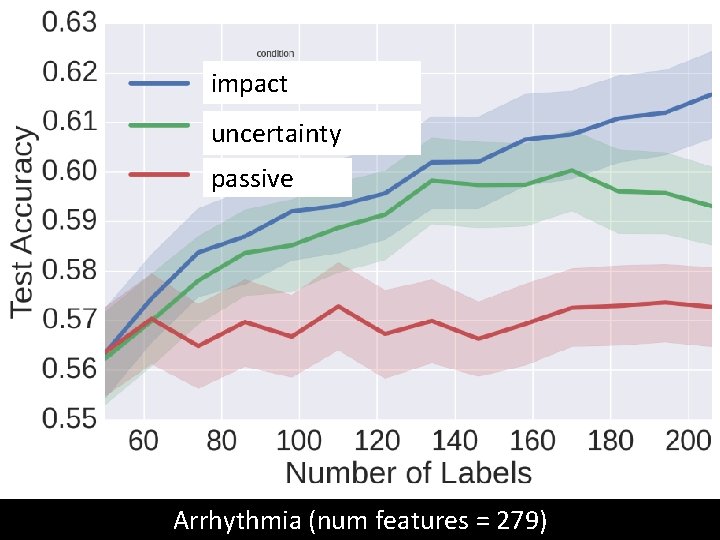

Arrhythmia (num features = 279). Curves: impact, uncertainty, passive

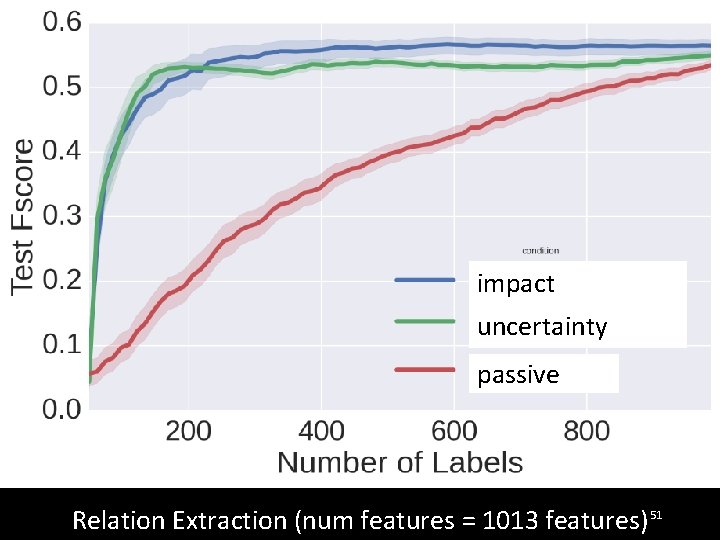

Relation Extraction (num features = 1013). Curves: impact, uncertainty, passive 51

![Re-active Learning Contributions](http://slidetodoc.com/presentation_image_h/83c72af2c93dca539d40ae1c640763e1/image-52.jpg)

Re-active Learning Contributions 52

- Standard Active Learning Algorithms Fail
  - Uncertainty Sampling [Lewis and Catlett 1994]
  - Expected Error Reduction [Roy and McCallum 2001]
- Re-active Learning Algorithms
  - Extensions of Uncertainty Sampling
  - Impact Sampling
