RDMS CMS distributed Tier 2 plans and estimates

O. Kodolova (SINP MSU), E. Tikhonenko (JINR)

Meeting of the Russia-CERN JWG on Computing & SW for LHC, CERN, March 08, 2005

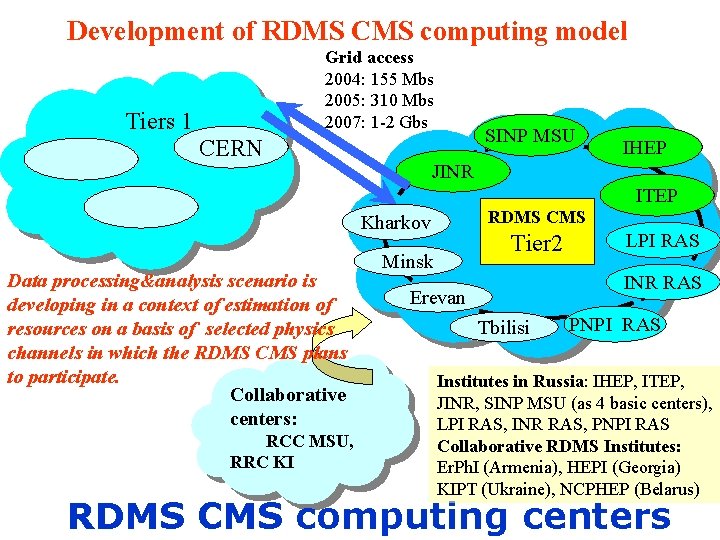

Development of the RDMS CMS computing model

- Grid access: 155 Mbps (2004), 310 Mbps (2005), 1-2 Gbps (2007)
- Tier 1: CERN
- Tier 2 (RDMS CMS): SINP MSU, IHEP, JINR, ITEP, Kharkov, Minsk, LPI RAS, INR RAS, Erevan, Tbilisi, PNPI RAS
- Collaborative centers: RCC MSU, RRC KI

The data processing and analysis scenario is being developed in the context of estimating the resources needed for the selected physics channels in which RDMS CMS plans to participate.

RDMS CMS computing centers:

- Institutes in Russia: IHEP, ITEP, JINR, SINP MSU (the 4 basic centers), LPI RAS, INR RAS, PNPI RAS
- Collaborating RDMS institutes: ErPhI (Armenia), HEPI (Georgia), KIPT (Ukraine), NCPHEP (Belarus)

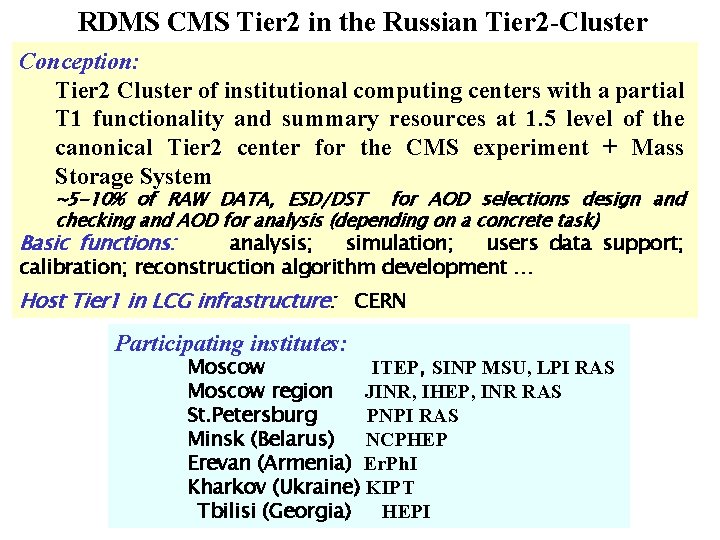

RDMS CMS Tier 2 in the Russian Tier 2 Cluster

Conception: a Tier 2 cluster of institutional computing centers with partial T1 functionality and combined resources at 1.5 times the level of a canonical Tier 2 center for the CMS experiment, plus a Mass Storage System.

- Data held: ~5-10% of RAW data; ESD/DST for the design and checking of AOD selections; AOD for analysis (depending on the concrete task)
- Basic functions: analysis; simulation; user data support; calibration; reconstruction algorithm development …
- Host Tier 1 in the LCG infrastructure: CERN

Participating institutes:

- Moscow: ITEP, SINP MSU, LPI RAS
- Moscow region: JINR, IHEP, INR RAS
- St. Petersburg: PNPI RAS
- Minsk (Belarus): NCPHEP
- Erevan (Armenia): ErPhI
- Kharkov (Ukraine): KIPT
- Tbilisi (Georgia): HEPI

Steps to estimate the resources:

- A questionnaire was distributed to all the RDMS CMS institutes.
- To coordinate these activities, a special RDMS CMS Working Group on Computing (12 members) was created in December 2004.

The questionnaire in use: an example for dimuon production

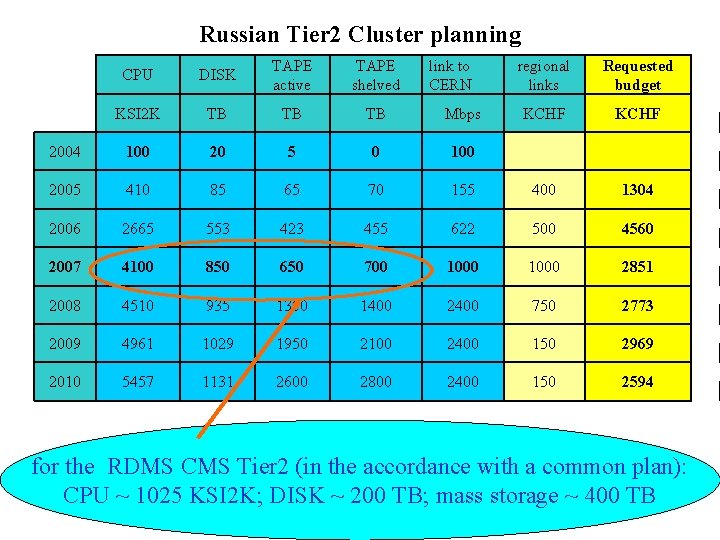

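Russian Tier 2 Cluster planning

| Year | CPU (KSI2K) | Disk (TB) | Tape, active (TB) | Tape, shelved (TB) | Link to CERN (Mbps) | Regional links | Requested budget (KCHF) |
|------|-------------|-----------|-------------------|--------------------|---------------------|----------------|-------------------------|
| 2004 | 100 | 20 | 5 | 0 | 100 | | |
| 2005 | 410 | 85 | 65 | 70 | 155 | 400 | 1304 |
| 2006 | 2665 | 553 | 423 | 455 | 622 | 500 | 4560 |
| 2007 | 4100 | 850 | 650 | 700 | 1000 | | 2851 |
| 2008 | 4510 | 935 | 1300 | 1400 | 2400 | 750 | 2773 |
| 2009 | 4961 | 1029 | 1950 | 2100 | 2400 | 150 | 2969 |
| 2010 | 5457 | 1131 | 2600 | 2800 | 2400 | 150 | 2594 |

For the RDMS CMS Tier 2 (in accordance with the common plan): CPU ~1025 KSI2K; disk ~200 TB; mass storage ~400 TB.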
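The RDMS CMS figures quoted above can be set against the whole-cluster plan. The slide does not state which year they refer to, so the following minimal sketch simply computes the RDMS CMS fraction of the planned cluster CPU and disk for every year. The names `CLUSTER_PLAN` and `rdms_fractions` are illustrative and do not come from the original.

```python
# Planned Russian Tier 2 cluster resources, copied from the planning table:
# year -> (CPU in KSI2K, disk in TB).
CLUSTER_PLAN = {
    2004: (100, 20),
    2005: (410, 85),
    2006: (2665, 553),
    2007: (4100, 850),
    2008: (4510, 935),
    2009: (4961, 1029),
    2010: (5457, 1131),
}

# RDMS CMS Tier 2 figures quoted on the same slide (approximate values).
RDMS_CPU_KSI2K = 1025
RDMS_DISK_TB = 200


def rdms_fractions(plan=CLUSTER_PLAN):
    """Return year -> (CPU fraction, disk fraction) of the cluster plan."""
    return {
        year: (RDMS_CPU_KSI2K / cpu, RDMS_DISK_TB / disk)
        for year, (cpu, disk) in plan.items()
    }


if __name__ == "__main__":
    for year, (cpu_frac, disk_frac) in sorted(rdms_fractions().items()):
        print(f"{year}: CPU {cpu_frac:.2f}, disk {disk_frac:.2f}")
```

Against the 2007 row the CPU fraction is exactly 0.25 and the disk fraction about 0.24, which suggests, but does not prove, that the RDMS CMS figures correspond to roughly a quarter of the 2007 cluster plan.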

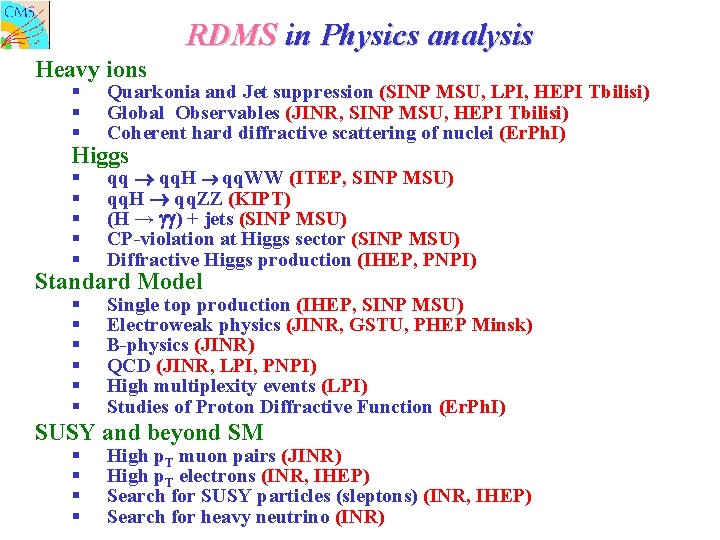

RDMS in Physics analysis

Heavy ions

- Quarkonia and jet suppression (SINP MSU, LPI, HEPI Tbilisi)
- Global observables (JINR, SINP MSU, HEPI Tbilisi)
- Coherent hard diffractive scattering of nuclei (ErPhI)

Higgs

- qq → qqH → qqWW (ITEP, SINP MSU)
- qq → qqH → qqZZ (KIPT)
- (H → ) + jets (SINP MSU)
- CP-violation in the Higgs sector (SINP MSU)
- Diffractive Higgs production (IHEP, PNPI)

Standard Model

- Single top production (IHEP, SINP MSU)
- Electroweak physics (JINR, GSTU, PHEP Minsk)
- B-physics (JINR)
- QCD (JINR, LPI, PNPI)
- High multiplicity events (LPI)
- Studies of Proton Diffractive Function (ErPhI)

SUSY and beyond SM

- High pT muon pairs (JINR)
- High pT electrons (INR, IHEP)
- Search for SUSY particles (sleptons) (INR, IHEP)
- Search for heavy neutrino (INR)

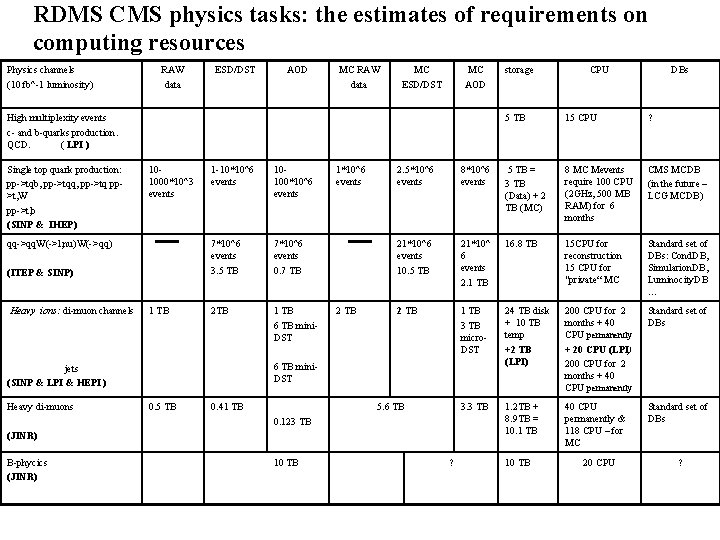

RDMS CMS physics tasks: estimates of the computing resource requirements

Table columns: Physics channels (10 fb^-1 luminosity); RAW data; ESD/DST; AOD; MC RAW data; MC ESD/DST; MC AOD

High multiplicity events, c- and b-quark production, QCD (LPI) Single top quark production: pp->tqb, pp->tqq, pp->tq, pp->t, W, pp->t, b (SINP & IHEP) 101000*10^3 events qq->qqW(->l nu)W(->qq) (ITEP & SINP) Heavy ions: di-muon channels 1 TB 1-10*10^6 events 10100*10^6 events 7*10^6 events 3.5 TB 7*10^6 events 0.7 TB 2 TB 1 TB 6 TB miniDST 2 TB 0.5 TB 0.41 TB 5 TB 15 CPU ? 5 TB = 3 TB (Data) + 2 TB (MC) 8 MC Mevents require 100 CPU (2 GHz, 500 MB RAM) for 6 months CMS MCDB (in the future, LCG MCDB) 21*10^6 events 10.5 TB 21*10^6 events 2.1 TB 16.8 TB 15 CPU for reconstruction 15 CPU for "private" MC Standard set of DBs: CondDB, SimulationDB, LuminosityDB … 2 TB 1 TB 3 TB microDST 24 TB disk + 10 TB temp + 2 TB (LPI) 200 CPU for 2 months + 40 CPU permanently + 20 CPU (LPI) 200 CPU for 2 months + 40 CPU permanently Standard set of DBs 3.3 TB 1.2 TB + 8.9 TB = 10.1 TB 40 CPU permanently & 118 CPU for MC Standard set of DBs 5.6 TB 0.123 TB 10 TB DBs 8*10^6 events (JINR) B-physics (JINR) CPU 2.5*10^6 events 6 TB miniDST jets (SINP & LPI & HEPI) Heavy di-muons 1*10^6 events storage ? 10 TB 20 CPU ?
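Two of the figures above lend themselves to a quick back-of-the-envelope check: the statement that 8 million MC events require 100 CPUs for 6 months, and the event counts quoted next to data volumes. The sketch below is an illustration only. It assumes 30-day months, fully utilized CPUs, 1 TB = 10^6 MB, and that each event count belongs to the volume quoted directly after it; the slide states none of these, and the function names are hypothetical.

```python
SECONDS_PER_DAY = 86_400
DAYS_PER_MONTH = 30      # assumption: the slide only says "6 months"
MB_PER_TB = 1_000_000    # assumption: decimal terabytes


def cpu_seconds_per_event(n_cpus, months, n_events):
    """CPU time per event if n_cpus run flat out for the given months."""
    total_cpu_seconds = n_cpus * months * DAYS_PER_MONTH * SECONDS_PER_DAY
    return total_cpu_seconds / n_events


def mb_per_event(volume_tb, n_events):
    """Average event size in MB for a sample of n_events filling volume_tb."""
    return volume_tb * MB_PER_TB / n_events


if __name__ == "__main__":
    # "8 MC Mevents require 100 CPU (2 GHz, 500 MB RAM) for 6 months"
    print(f"MC cost: {cpu_seconds_per_event(100, 6, 8_000_000):.1f} CPU-s/event")
    # "7*10^6 events 3.5 TB" and "21*10^6 events 10.5 TB"
    print(f"Event size: {mb_per_event(3.5, 7_000_000):.2f} MB")
    print(f"Event size: {mb_per_event(10.5, 21_000_000):.2f} MB")
```

Under these assumptions the MC production works out to roughly 194 CPU-seconds per event on a 2 GHz CPU, and both event-count and volume pairs correspond to about 0.5 MB per event.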

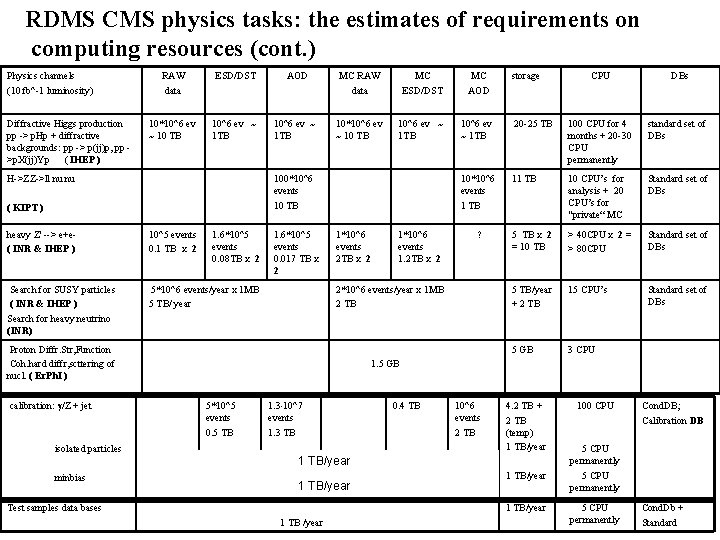

RDMS CMS physics tasks: estimates of the computing resource requirements (cont.)

Table columns: Physics channels (10 fb^-1 luminosity); RAW data; ESD/DST; AOD; MC RAW data; MC ESD/DST; MC AOD; storage; CPU; DBs

Diffractive Higgs production pp -> pHp + diffractive backgrounds: pp -> p(jj)p, pp -> pX(jj)Yp (IHEP) 10*10^6 ev ~ 10 TB 10^6 ev ~ 1 TB 20-25 TB 100 CPU for 4 months + 20-30 CPU permanently Standard set of DBs 10*10^6 events 1 TB 10 CPUs for analysis + 20 CPUs for "private" MC Standard set of DBs 5 TB x 2 = 10 TB > 40 CPU x 2 = > 80 CPU Standard set of DBs 5 TB/year + 2 TB 15 CPUs Standard set of DBs 5 GB 3 CPU H->ZZ->ll nu nu 100*10^6 events 10 TB (KIPT) heavy Z' -> e+e- (INR & IHEP) 10^5 events 0.1 TB x 2 1.6*10^5 events 0.08 TB x 2 Search for SUSY particles (INR & IHEP) Search for heavy neutrino (INR) 5*10^6 events/year x 1 MB 5 TB/year 1.6*10^5 events 0.017 TB x 2 1*10^6 events 2 TB x 2 isolated particles minbias ? 2*10^6 events/year x 1 MB 2 TB Proton Diffr. Str. Function; Coh. hard diffr. scattering of nucl. (ErPhI) calibration: gamma/Z + jet 1*10^6 events 1.2 TB x 2 1.5 GB 5*10^5 events 0.5 TB 1.3*10^7 events 1.3 TB 0.4 TB 10^6 events 2 TB 4.2 TB + 2 TB (temp) 1 TB/year Test samples data bases 1 TB/year 1 TB/year 100 CPU CondDB; Calibration DB 5 CPU permanently CondDB + Standard

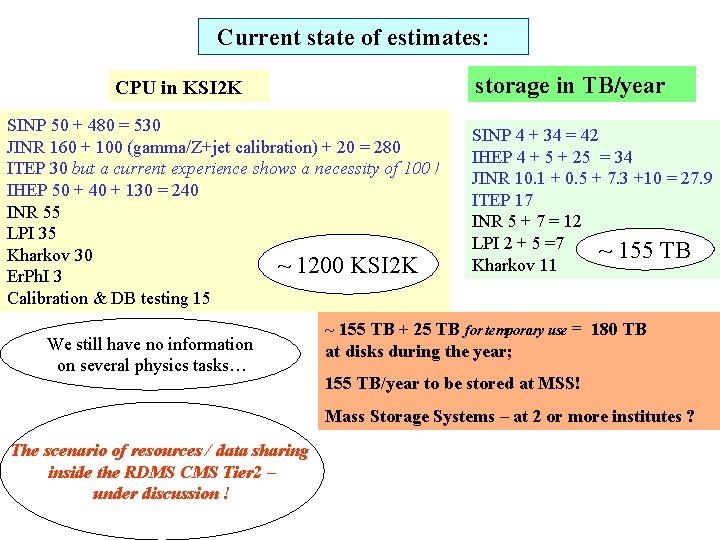

Current state of estimates

CPU in KSI2K:

- SINP: 50 + 480 = 530
- JINR: 160 + 100 (gamma/Z+jet calibration) + 20 = 280
- ITEP: 30, but current experience shows a necessity of 100!
- IHEP: 50 + 40 + 130 = 240
- INR: 55
- LPI: 35
- Kharkov: 30
- ErPhI: 3
- Calibration & DB testing: 15
- Total: ~1200 KSI2K

We still have no information on several physics tasks…

Storage in TB/year:

- SINP: 4 + 34 = 42
- IHEP: 4 + 5 + 25 = 34
- JINR: 10.1 + 0.5 + 7.3 + 10 = 27.9
- ITEP: 17
- INR: 5 + 7 = 12
- LPI: 2 + 5 = 7
- Kharkov: 11
- Total: ~155 TB

~155 TB + 25 TB for temporary use = 180 TB on disk during the year; 155 TB/year to be stored at MSS!

Mass Storage Systems at 2 or more institutes?

The scenario for resource and data sharing inside the RDMS CMS Tier 2 is under discussion!
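The per-institute sums and the overall totals above can be cross-checked with a few lines of arithmetic. The following sketch is only an illustration: each entry pairs the components as printed on the slide with the total stated next to them, and the helper names `mismatches` and `stated_total` are hypothetical.

```python
# institute -> (components as printed on the slide, total stated on the slide)
CPU_KSI2K = {
    "SINP": ([50, 480], 530),
    "JINR": ([160, 100, 20], 280),
    "ITEP": ([30], 30),
    "IHEP": ([50, 40, 130], 240),
    "INR": ([55], 55),
    "LPI": ([35], 35),
    "Kharkov": ([30], 30),
    "ErPhI": ([3], 3),
    "Calibration & DB testing": ([15], 15),
}

STORAGE_TB_PER_YEAR = {
    "SINP": ([4, 34], 42),
    "IHEP": ([4, 5, 25], 34),
    "JINR": ([10.1, 0.5, 7.3, 10], 27.9),
    "ITEP": ([17], 17),
    "INR": ([5, 7], 12),
    "LPI": ([2, 5], 7),
    "Kharkov": ([11], 11),
}


def mismatches(rows, tol=1e-6):
    """Names whose printed components do not add up to the stated total."""
    return [
        name for name, (parts, stated) in rows.items()
        if abs(sum(parts) - stated) > tol
    ]


def stated_total(rows):
    """Sum of the totals as stated on the slide."""
    return sum(stated for _, stated in rows.values())


if __name__ == "__main__":
    print("CPU total (KSI2K):", stated_total(CPU_KSI2K))
    print("Storage total (TB/year):", round(stated_total(STORAGE_TB_PER_YEAR), 1))
    print("CPU rows to recheck:", mismatches(CPU_KSI2K))
    print("Storage rows to recheck:", mismatches(STORAGE_TB_PER_YEAR))
```

With the stated per-institute totals, the CPU sum is 1218 KSI2K and the storage sum is about 151 TB/year, consistent with the rounded ~1200 KSI2K and ~155 TB. The check also flags the IHEP CPU row and the SINP storage row, whose printed components do not add up to the printed totals; the slide text alone does not show which number is off.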
