
Rank Aggregation

Rank Aggregation: Settings

• Multiple items
  – Web pages, cars, apartments, …
• Multiple scores for each item
  – By different reviewers or users, or according to different features, …
• Some aggregation function on the scores
  – Sum, average, max, …
• Goal: compute the top-k items

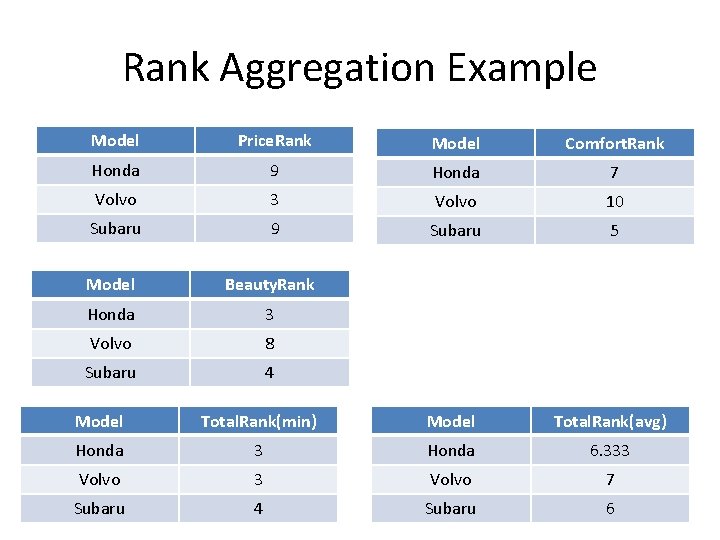

Rank Aggregation Example

| Model | Price rank | Comfort rank | Beauty rank | Total rank (min) | Total rank (avg) |
|---|---|---|---|---|---|
| Honda | 9 | 7 | 3 | 3 | 6.333 |
| Volvo | 3 | 10 | 8 | 3 | 7 |
| Subaru | 9 | 5 | 4 | 4 | 6 |
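The two "Total" columns of the example can be recomputed with a few lines of Python. This is only a sketch: the dictionary layout and the helper names `aggregate` and `avg` are assumptions made for illustration, not part of the slides.

```python
# Per-criterion ranks copied from the example (layout is an assumption).
ranks = {
    "Honda": {"price": 9, "comfort": 7, "beauty": 3},
    "Volvo": {"price": 3, "comfort": 10, "beauty": 8},
    "Subaru": {"price": 9, "comfort": 5, "beauty": 4},
}


def avg(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def aggregate(ranks, agg):
    """Apply the aggregation function `agg` to each item's scores."""
    return {item: agg(list(scores.values())) for item, scores in ranks.items()}


total_min = aggregate(ranks, min)
total_avg = aggregate(ranks, avg)

print(total_min)
print({item: round(value, 3) for item, value in total_avg.items()})
```

Swapping `min` or `avg` for `sum` or `max` gives the other aggregation functions mentioned in the settings.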

Naïve Algorithm

• Compute the aggregated rank for all items
• Find the best one, then the second best one, …, up to the k-th best one
• Good for small-scale problems
• Still not feasible at web scale
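A minimal sketch of the naïve approach, assuming that higher scores are better and that all scores of every item fit in memory. The function name `naive_top_k` and the input layout are hypothetical.

```python
import heapq


def avg(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def naive_top_k(scores, agg, k):
    """Aggregate every item, then pick the k best.

    scores: dict mapping item -> list of its individual scores.
    Every item is touched, which is what makes this infeasible at web scale.
    """
    totals = {item: agg(values) for item, values in scores.items()}
    return heapq.nlargest(k, totals.items(), key=lambda pair: pair[1])


# Car data from the example; treating higher as better is an assumption.
car_scores = {
    "Honda": [9, 7, 3],
    "Volvo": [3, 10, 8],
    "Subaru": [9, 5, 4],
}

top_two = naive_top_k(car_scores, avg, 2)
print(top_two)
```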

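Can we do any better?

• An assumption to help us: each individual list comes sorted
  – Reasonable for search engines, user rankings, …
• Another assumption: monotonicity of the aggregation function
  – If an item scores at least as high as another item in every list, its aggregated score is at least as high
• Now can we do any better?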

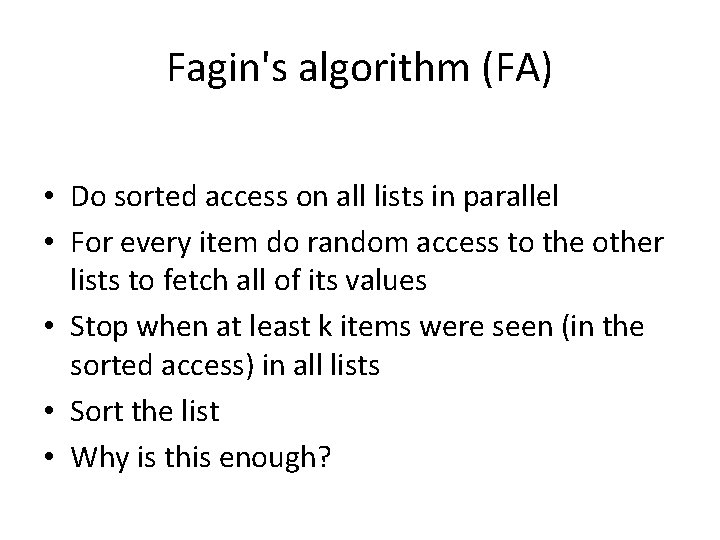

Fagin's algorithm (FA)

• Do sorted access on all lists in parallel
• For every item seen, do random access to the other lists to fetch all of its values
• Stop when at least k items have been seen (in the sorted access) in all lists
• Sort the seen items by their aggregated score
• Why is this enough?
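The steps of FA can be sketched as follows. This is an illustrative sketch, not a reference implementation: it assumes every item appears in every list, all lists have the same length and are sorted by descending score, and random access is simulated with a dictionary per list. The name `fagin_top_k` is made up for this example.

```python
def avg(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def fagin_top_k(lists, agg, k):
    """Sketch of Fagin's algorithm.

    lists: one list of (item, score) pairs per criterion, sorted descending.
    Returns (top-k pairs, sorted-access depth reached).
    """
    lookup = [dict(lst) for lst in lists]   # simulated random access
    seen_in = [set() for _ in lists]        # items seen by sorted access, per list
    depth = 0
    while depth < len(lists[0]):
        # One round of parallel sorted access: next row of every list.
        for i, lst in enumerate(lists):
            seen_in[i].add(lst[depth][0])
        depth += 1
        # Stop once at least k items were seen in *all* lists.
        if len(set.intersection(*seen_in)) >= k:
            break
    # Random access: fetch the missing scores of every item seen anywhere.
    candidates = set.union(*seen_in)
    totals = {x: agg([table[x] for table in lookup]) for x in candidates}
    ranked = sorted(totals.items(), key=lambda pair: pair[1], reverse=True)
    return ranked[:k], depth


# Beauty and Comfort lists from the example slides.
beauty = [("A", 9), ("B", 9), ("C", 3), ("D", 3)]
comfort = [("B", 10), ("C", 5), ("A", 4), ("D", 1)]

top3, depth3 = fagin_top_k([beauty, comfort], avg, 3)
print(top3, depth3)
```

With a monotone aggregation function, any item never seen under sorted access scores no higher in every list than the k items seen in all lists, so it cannot beat them.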

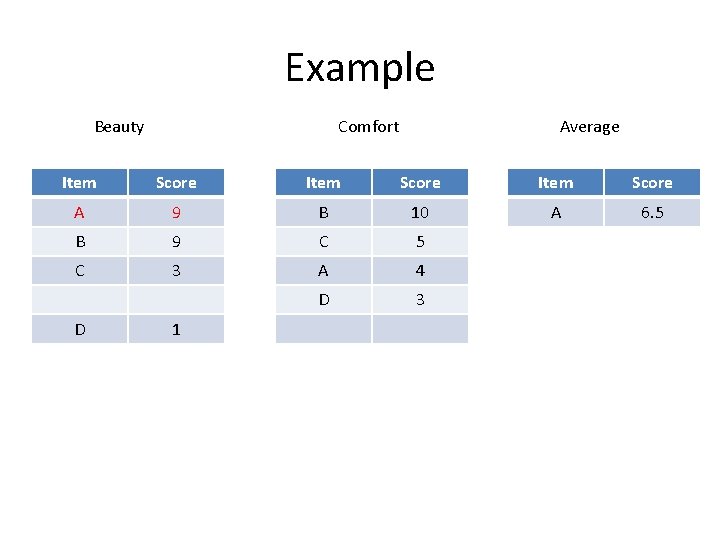

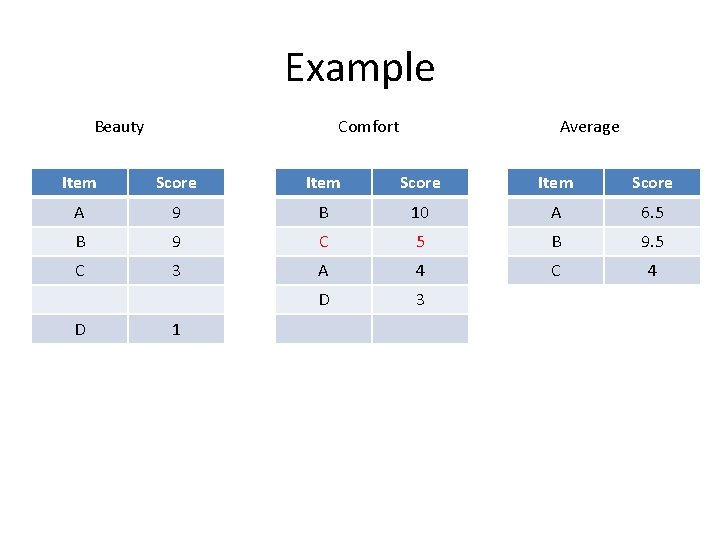

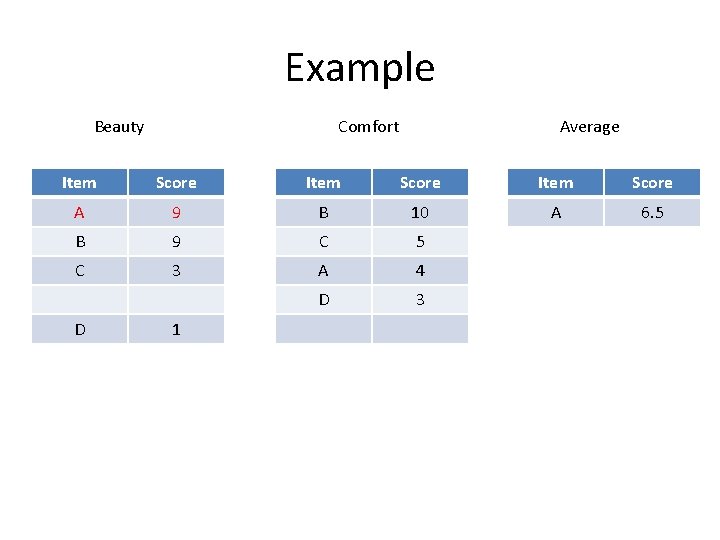

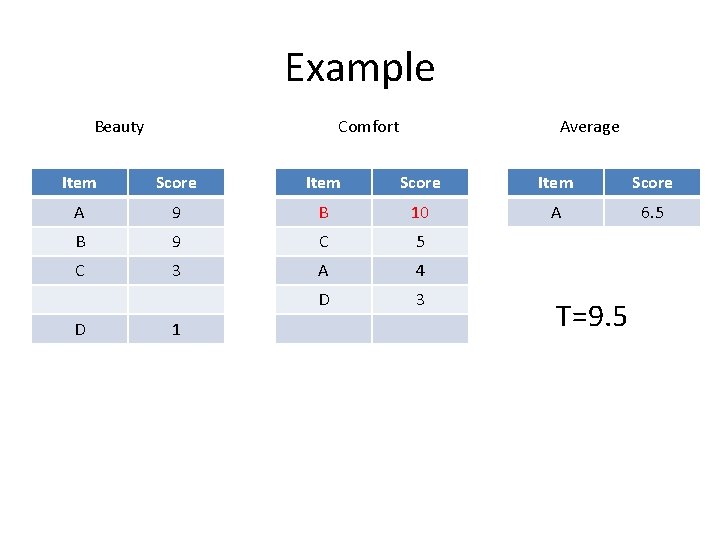

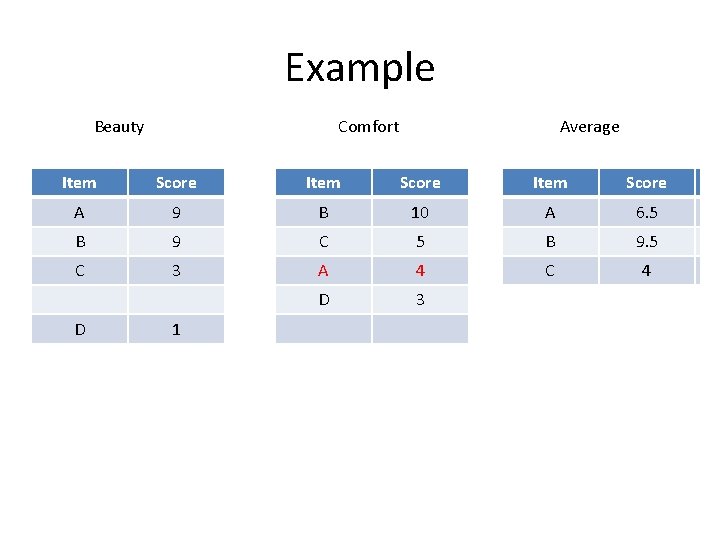

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | |
| C 3 | A 4 | |
| D 3 | D 1 | |

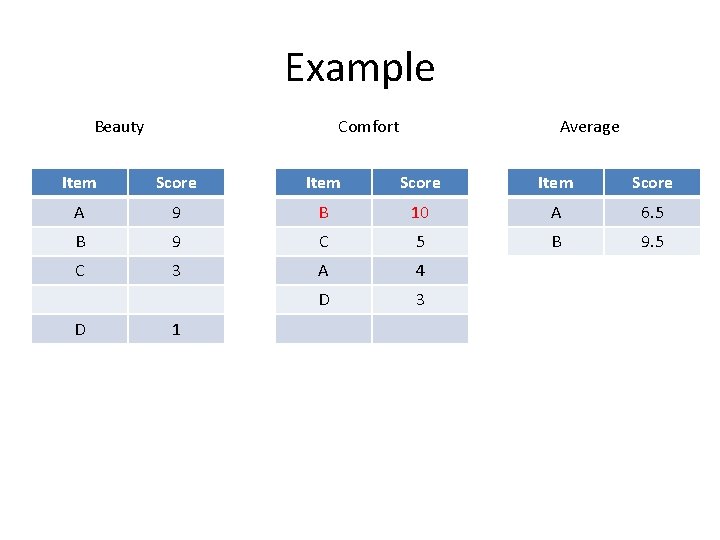

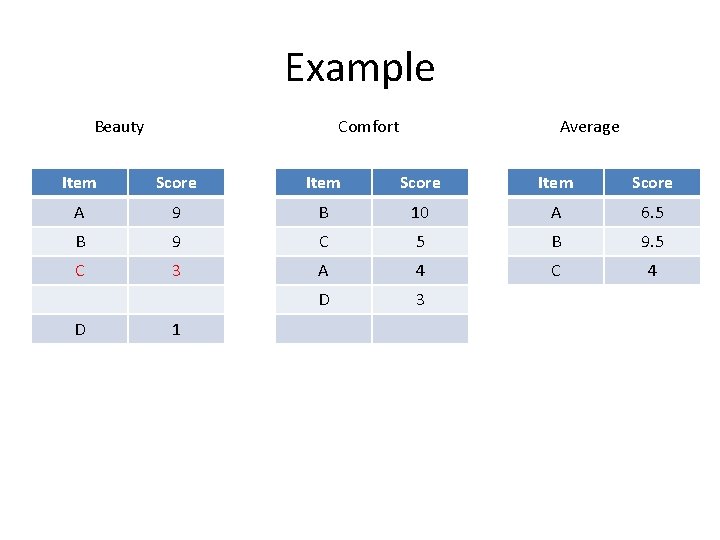

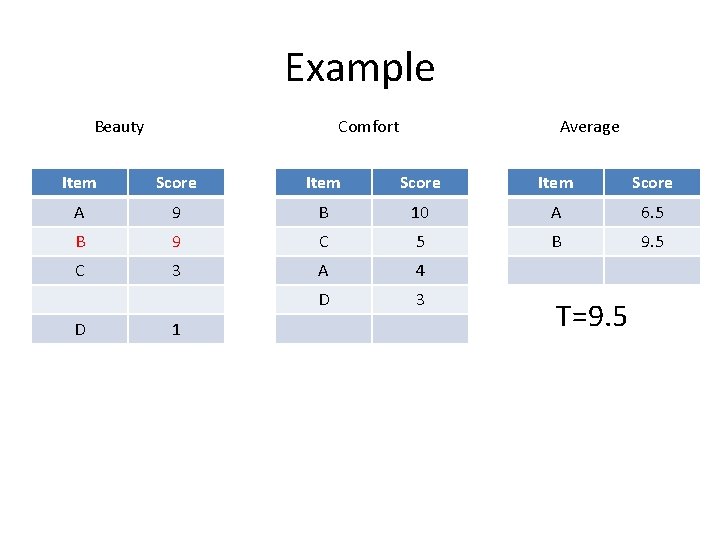

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | |
| D 3 | D 1 | |

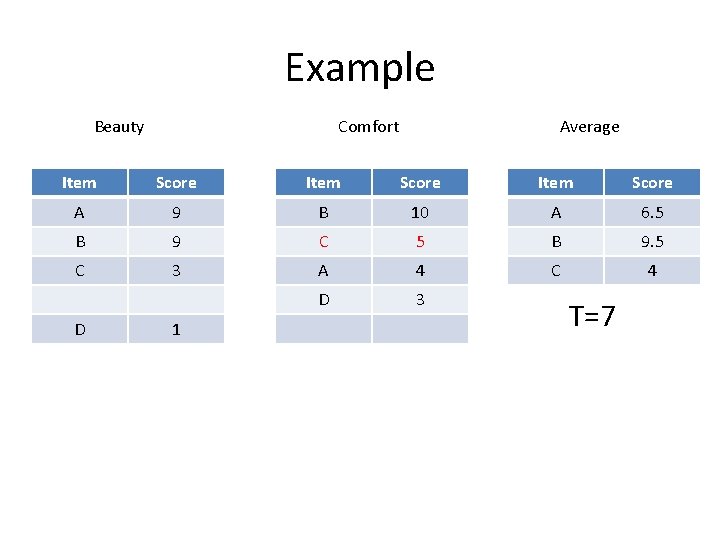


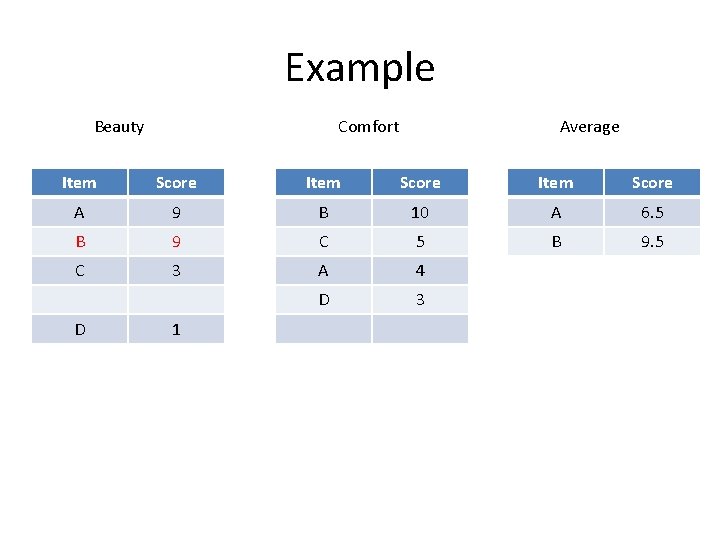

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

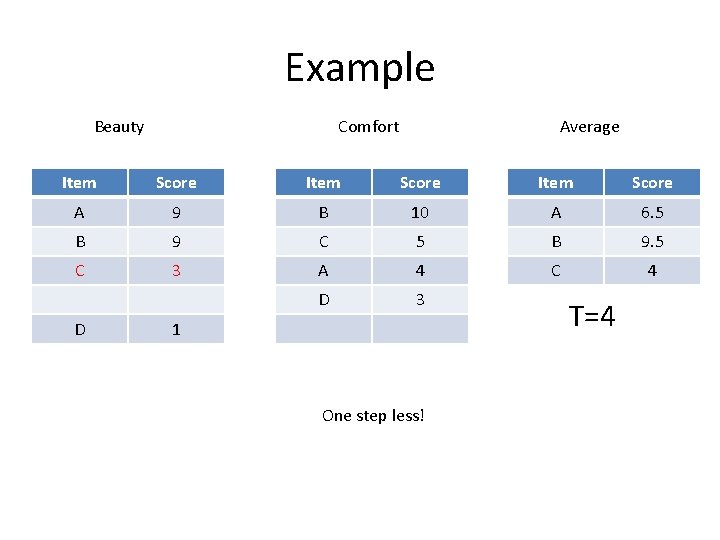


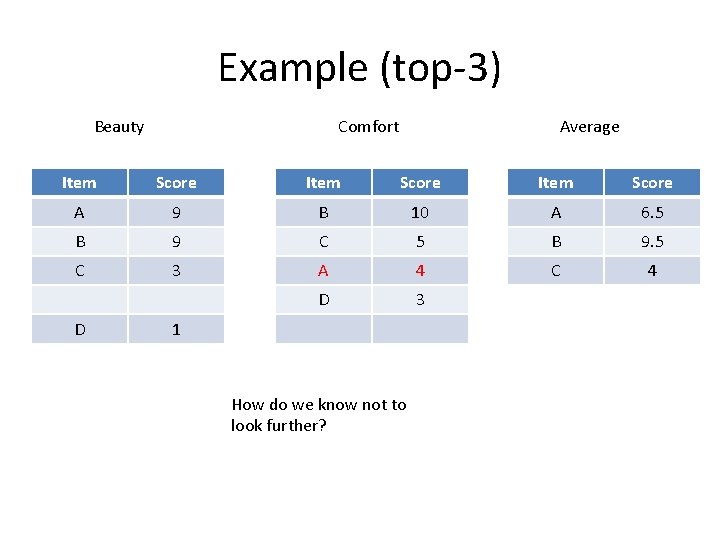

Example (top-3)

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

How do we know not to look further?

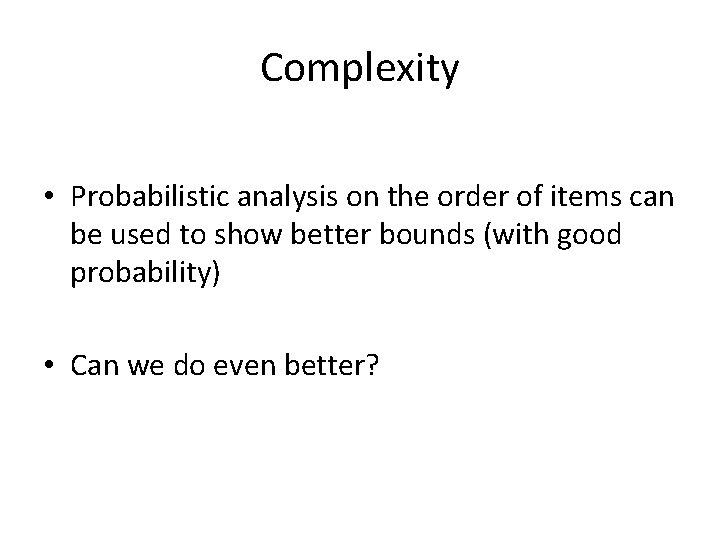

Complexity

• Probabilistic analysis on the order of items can be used to show better bounds (with good probability)
• Can we do even better?

Cost model

• This is a very simple setting, so we can define a finer cost model than worst-case complexity
• In a web context it is important to do so
  – Since the scale is huge
• We associate some cost Cs with every sorted access, and some cost Cr with every random access
• Denote the cost of algorithm A on input instance I by cost(A, I)
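As a small illustration of this cost model, the cost of one run can be computed from its access counts. The function name `access_cost`, its parameter names, and the counts and unit costs in the usage line are all hypothetical values chosen for the example.

```python
def access_cost(sorted_accesses, random_accesses, c_s, c_r):
    """Total cost of a run: each sorted access costs c_s, each random access c_r."""
    return sorted_accesses * c_s + random_accesses * c_r


# Hypothetical run: 6 sorted accesses and 3 random accesses,
# with random access assumed to be five times as expensive.
example_cost = access_cost(sorted_accesses=6, random_accesses=3, c_s=1, c_r=5)
print(example_cost)  # 21
```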

Instance-optimality

• An algorithm A is instance-optimal if for every input instance I, cost(A, I) = O(cost(A', I)) for every algorithm A'
• A very strong notion
• But we can realize it here!
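Unpacking the O-notation in this definition gives an explicit inequality. The constant names $c$ and $c'$ are introduced here for illustration; they must not depend on the instance $I$ or on the competing algorithm $A'$.

$$
\mathrm{cost}(A, I) \le c \cdot \mathrm{cost}(A', I) + c' \quad \text{for every instance } I \text{ and every algorithm } A'
$$

The comparison is made instance by instance, not only on worst-case inputs, which is why the notion is so strong.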

Threshold Algorithm (TA)

• Idea: sometimes we can stop before seeing k objects in every list
• Use a threshold on how good the score of an unseen object can be
• The threshold is the aggregation of the lowest score seen so far (under sorted access) in each list
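The idea can be sketched in Python as below. This sketch advances all lists one row at a time and recomputes the threshold after each row, which is one possible access schedule and may differ from the one animated in the slides. It assumes every item appears in every list, lists are sorted by descending score, and random access is simulated with dictionaries. The name `threshold_top_k` is hypothetical.

```python
import heapq


def avg(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def threshold_top_k(lists, agg, k):
    """Sketch of the Threshold Algorithm.

    lists: one list of (item, score) pairs per criterion, sorted descending.
    Returns (top-k pairs, sorted-access depth reached).
    """
    lookup = [dict(lst) for lst in lists]   # simulated random access
    totals = {}                             # exact aggregated score of seen items
    n = len(lists[0])
    for depth in range(n):
        last_seen = []
        for lst in lists:
            item, score = lst[depth]        # sorted access
            last_seen.append(score)
            if item not in totals:          # random access to the other lists
                totals[item] = agg([table[item] for table in lookup])
        # No unseen item can score above the aggregate of the last seen scores.
        threshold = agg(last_seen)
        top = heapq.nlargest(k, totals.items(), key=lambda pair: pair[1])
        if len(top) == k and top[-1][1] >= threshold:
            return top, depth + 1
    return heapq.nlargest(k, totals.items(), key=lambda pair: pair[1]), n


# Beauty and Comfort lists from the example slides.
beauty = [("A", 9), ("B", 9), ("C", 3), ("D", 3)]
comfort = [("B", 10), ("C", 5), ("A", 4), ("D", 1)]

best1, depth1 = threshold_top_k([beauty, comfort], avg, 1)
print(best1, depth1)
```

For k = 1 the first row already yields B with score 9.5 and a threshold of 9.5, so this sketch stops after one row, before any item has been seen in both lists under sorted access.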

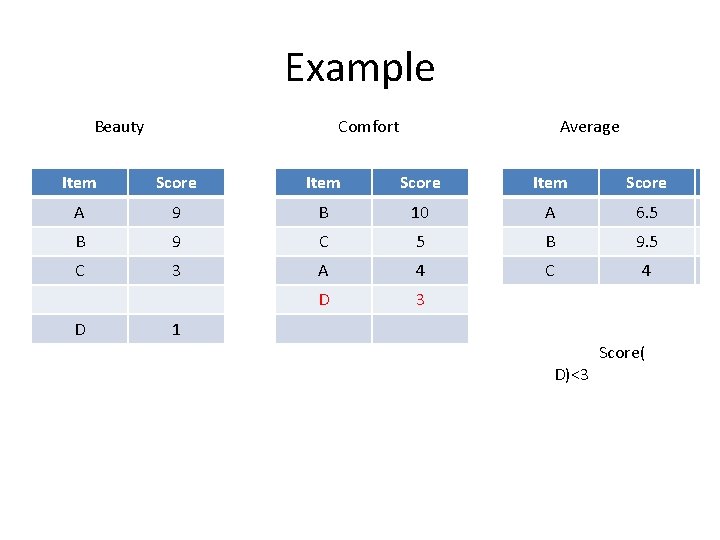

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | |
| C 3 | A 4 | |
| D 3 | D 1 | |

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | |
| C 3 | A 4 | |
| D 3 | D 1 | |

T = 9.5

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | |
| D 3 | D 1 | |

T = 9.5

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

T = 7

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

T = 4

One step less!

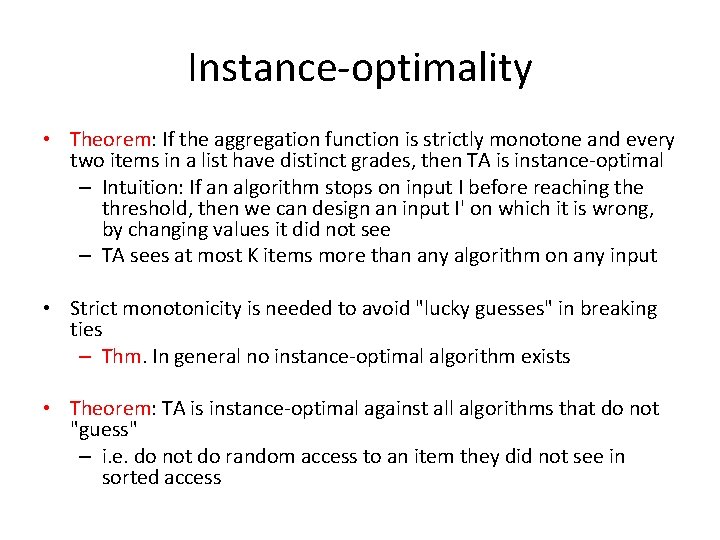

Instance-optimality

• Theorem: If the aggregation function is strictly monotone and every two items in a list have distinct grades, then TA is instance-optimal
  – Intuition: if an algorithm stops on input I before reaching the threshold, then we can design an input I' on which it is wrong, by changing values it did not see
  – TA sees at most k items more than any algorithm on any input
• Strict monotonicity is needed to avoid "lucky guesses" in breaking ties
  – Theorem: in general, no instance-optimal algorithm exists
• Theorem: TA is instance-optimal against all algorithms that do not "guess"
  – i.e. that do not do random access to an item they did not see in sorted access

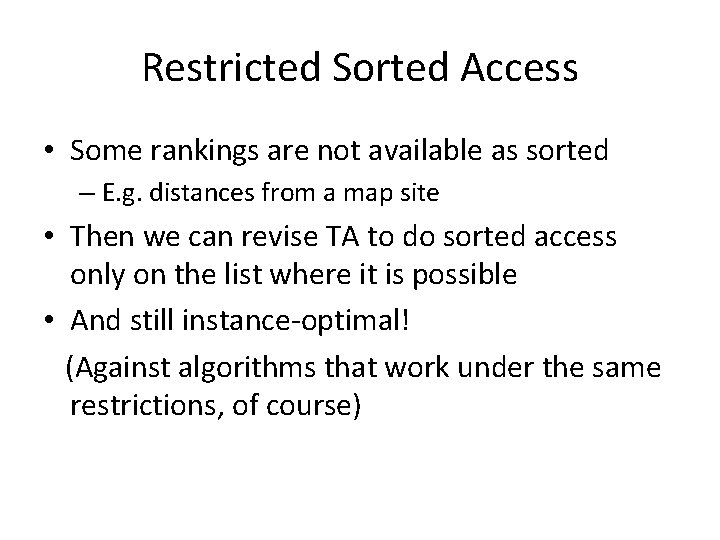

Restricted Sorted Access

• Some rankings are not available as sorted lists
  – E.g. distances from a map site
• Then we can revise TA to do sorted access only on the lists where it is possible
• And it is still instance-optimal! (Against algorithms that work under the same restrictions, of course)

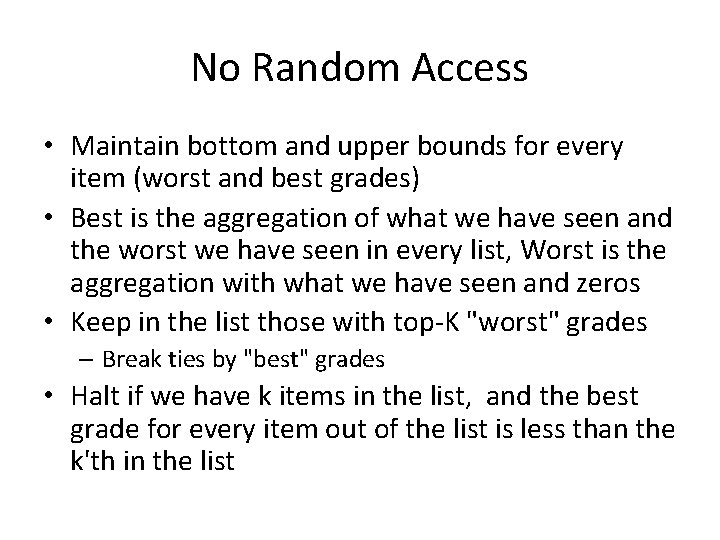

No Random Access

• Maintain lower and upper bounds for every item (its worst and best possible grades)
• Best is the aggregation of the scores we have seen for the item, with each missing score replaced by the lowest score seen so far in that list; worst is the aggregation of the scores we have seen, with each missing score replaced by zero
• Keep in the list the items with the top-k "worst" grades
  – Break ties by "best" grades
• Halt if we have k items in the list and the best grade of every item outside the list is less than the k-th "worst" grade in the list
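A sketch of this bound-keeping scheme follows, under several assumptions: scores are non-negative (so zero is a valid lower bound), every item appears in every list, lists are sorted by descending score, and the halting test uses "at most" instead of "less than" so that ties do not force extra rounds. The name `nra_top_k` and the helper names are hypothetical, and the bounds it computes follow the definition above, so they need not match the intervals drawn on the example slides.

```python
def avg(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def nra_top_k(lists, agg, k):
    """Sketch of top-k with sorted access only (no random access).

    Returns (list of (item, worst, best) for the top k, depth reached).
    """
    m, n = len(lists), len(lists[0])
    known = {}                 # item -> {list index: score seen so far}
    last_seen = [None] * m     # lowest score seen so far in each list

    def worst(item):
        # Missing scores replaced by zero.
        return agg([known[item].get(i, 0) for i in range(m)])

    def best(item):
        # Missing scores replaced by the lowest score seen in that list.
        return agg([known[item].get(i, last_seen[i]) for i in range(m)])

    ranked = []
    for depth in range(n):
        for i, lst in enumerate(lists):
            item, score = lst[depth]            # sorted access only
            known.setdefault(item, {})[i] = score
            last_seen[i] = score
        # Order by worst grade, breaking ties by best grade.
        ranked = sorted(known, key=lambda x: (worst(x), best(x)), reverse=True)
        top, rest = ranked[:k], ranked[k:]
        if len(top) == k:
            kth_worst = worst(top[-1])
            unseen_best = agg(last_seen)        # bound for items never seen
            if unseen_best <= kth_worst and all(best(x) <= kth_worst for x in rest):
                return [(x, worst(x), best(x)) for x in top], depth + 1
    return [(x, worst(x), best(x)) for x in ranked[:k]], n


# Beauty and Comfort lists from the example slides.
beauty = [("A", 9), ("B", 9), ("C", 3), ("D", 3)]
comfort = [("B", 10), ("C", 5), ("A", 4), ("D", 1)]

result, depth = nra_top_k([beauty, comfort], avg, 1)
print(result, depth)
```

Note that the returned items may still carry an interval instead of an exact score, since the scheme only guarantees the identity of the top k.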

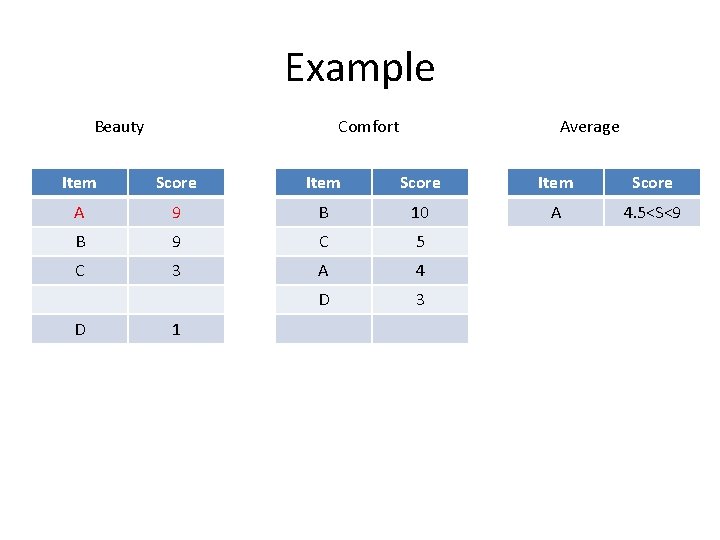

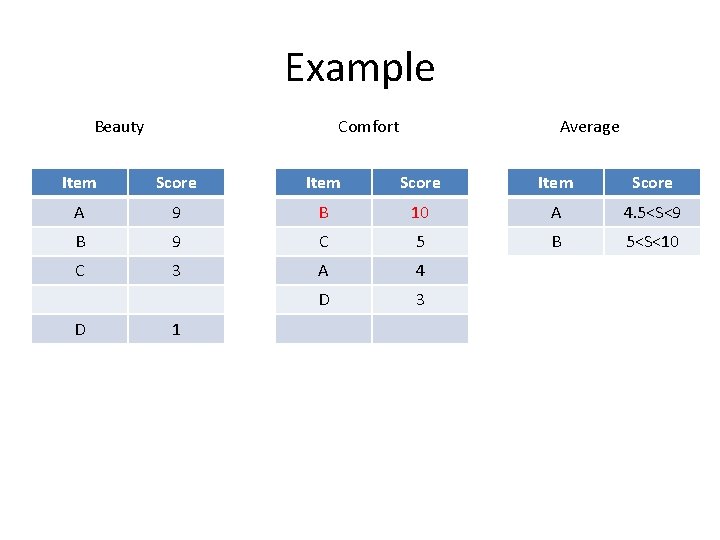

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 4.5 < S < 9 |
| B 9 | C 5 | |
| C 3 | A 4 | |
| D 3 | D 1 | |

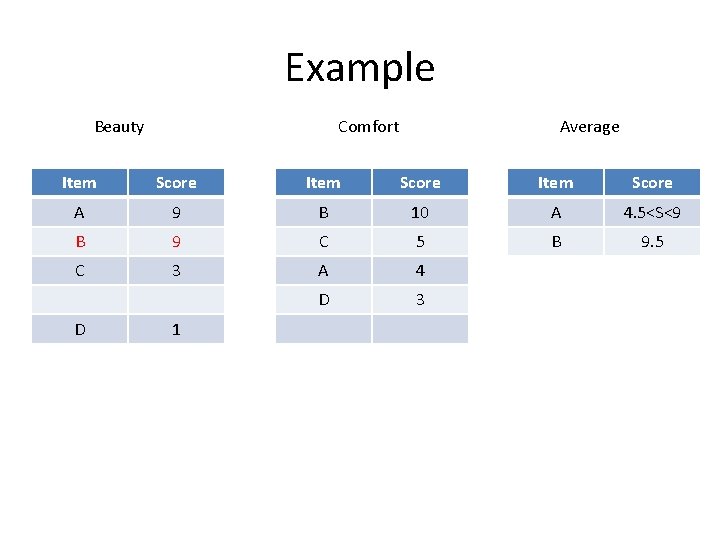

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 4.5 < S < 9 |
| B 9 | C 5 | B 5 < S < 10 |
| C 3 | A 4 | |
| D 3 | D 1 | |

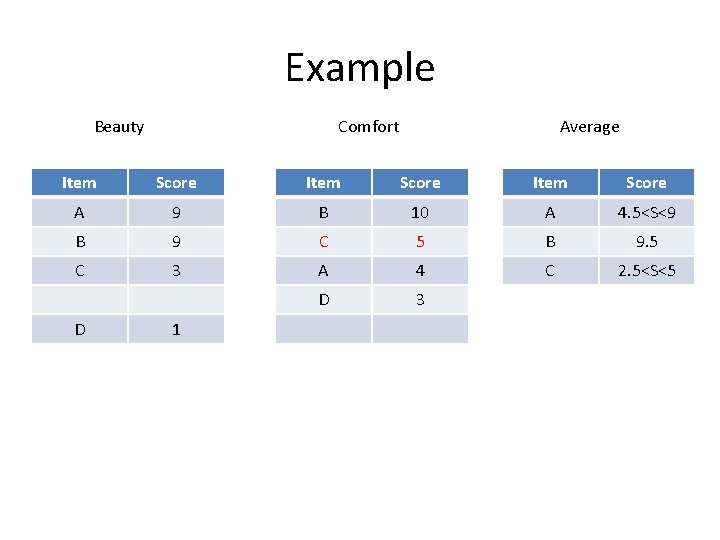

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 4.5 < S < 9 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | |
| D 3 | D 1 | |

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 4.5 < S < 9 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 2.5 < S < 5 |
| D 3 | D 1 | |

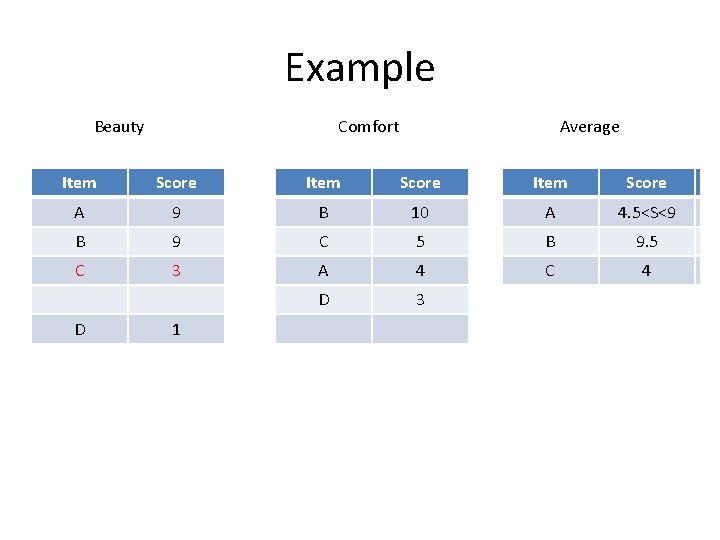

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 (was 4.5 < S < 9) |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

Example

| Beauty | Comfort | Average (item, score) |
|---|---|---|
| A 9 | B 10 | A 6.5 |
| B 9 | C 5 | B 9.5 |
| C 3 | A 4 | C 4 |
| D 3 | D 1 | |

Score(D) < 3
