Randomized Algorithms

Upper Bounds

We’d like to say: “Algorithm A never takes more than f(n) steps for an input of size n.” Big-O notation gives worst-case (i.e., maximum) running times. A correct algorithm is a constructive upper bound on the complexity of the problem that it solves.

Lower Bounds

Establish the minimum amount of time needed to solve a given computational problem:
- Searching a sorted list takes at least Ω(log N) time.

Note that such arguments also classify the problem, and they need not be constructive!

Randomized vs Deterministic Algorithms

Deterministic algorithms:
- Take at most x steps.
- Assuming an input distribution, we can analyze the expected running time.

Randomized algorithms:
- Take x steps on average.
- Take x steps with high probability.
- Output the “correct” answer with probability p.

Randomized Quicksort

- Always outputs the correct answer.
- Takes O(N log N) time on average.
- How likely is an O(N log N) running time? The running time is O(N log N) with probability 1 - N^(-6).
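As a minimal sketch of the idea (not the slide's own code), the version below picks the pivot uniformly at random and builds new lists instead of partitioning in place. The function name `randomized_quicksort` and the optional `rng` parameter are assumptions made for illustration.

```python
import random


def randomized_quicksort(items, rng=random):
    """Return a new sorted list; the pivot is chosen uniformly at random."""
    if len(items) <= 1:
        return list(items)
    pivot = rng.choice(items)
    # Three-way split so that repeated keys do not cause extra recursion.
    smaller = [x for x in items if x < pivot]
    equal = [x for x in items if x == pivot]
    larger = [x for x in items if x > pivot]
    return (randomized_quicksort(smaller, rng)
            + equal
            + randomized_quicksort(larger, rng))
```

The output is always correct regardless of the random choices; only the running time varies from run to run.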

Order Statistics

The ith order statistic in a set of n elements is the ith smallest element.
- The minimum is the 1st order statistic.
- The maximum is the nth order statistic.
- The median is the (n/2)th order statistic; if n is even, there are 2 medians.

How can we calculate order statistics? What is the running time?

Order Statistics

How many comparisons are needed to find the minimum element in a set? The maximum? Can we find the minimum and maximum with less than twice the cost? Yes:
- Walk through the elements in pairs.
  - Compare the two elements of each pair to each other.
  - Compare the larger to the current maximum and the smaller to the current minimum.
- Total cost: 3 comparisons per 2 elements, about 3n/2 comparisons in total.
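The pairwise walk can be sketched as follows. This is an illustrative version that also counts element comparisons so the 3n/2 figure can be checked; the name `min_and_max` and the returned counter are assumptions, not part of the slides.

```python
def min_and_max(values):
    """Return (minimum, maximum, comparisons) using about 3n/2 comparisons."""
    n = len(values)
    if n == 0:
        raise ValueError("values must be non-empty")
    comparisons = 0
    if n % 2 == 1:
        # Odd length: seed both extremes with the first element.
        lo = hi = values[0]
        start = 1
    else:
        # Even length: seed with the first pair (one comparison).
        comparisons += 1
        if values[0] < values[1]:
            lo, hi = values[0], values[1]
        else:
            lo, hi = values[1], values[0]
        start = 2
    for j in range(start, n, 2):
        a, b = values[j], values[j + 1]
        # One comparison inside the pair, then one each against lo and hi.
        comparisons += 3
        small, large = (a, b) if a < b else (b, a)
        if small < lo:
            lo = small
        if large > hi:
            hi = large
    return lo, hi, comparisons
```

For example, `min_and_max([5, 2, 9, 1, 7, 3, 8, 6])` returns `(1, 9, 10)`: ten comparisons for eight elements, compared with fourteen for two separate scans.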

Finding Order Statistics: The Selection Problem

A more interesting problem is selection: finding the ith smallest element of a set. We will show:
- A practical randomized algorithm with O(n) expected running time.
- A cool algorithm of theoretical interest only, with O(n) worst-case running time.

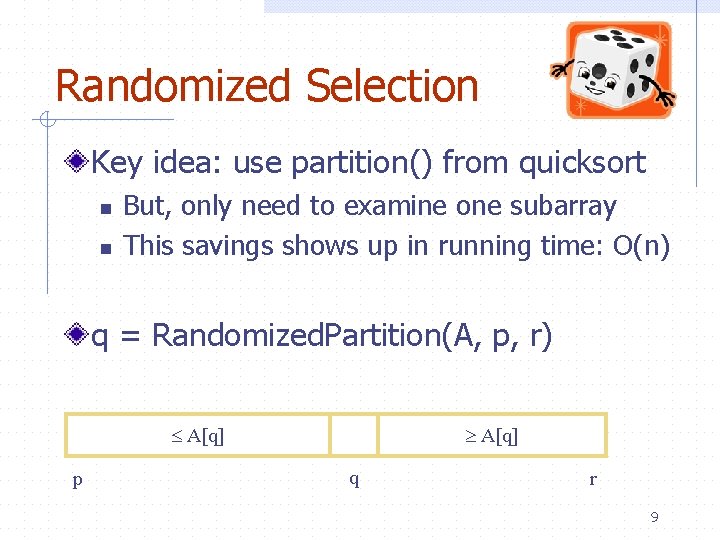

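Randomized Selection

Key idea: use partition() from quicksort.
- But we only need to examine one subarray.
- This savings shows up in the running time: O(n).

q = RandomizedPartition(A, p, r)

[Figure: array A[p..r] after partitioning; elements left of index q are ≤ A[q], elements right of q are ≥ A[q].]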

Randomized Selection

RandomizedSelect(A, p, r, i)
    if (p == r) then return A[p];
    q = RandomizedPartition(A, p, r)
    k = q - p + 1;
    if (i == k) then return A[q];
    if (i < k) then
        return RandomizedSelect(A, p, q-1, i);
    else
        return RandomizedSelect(A, q+1, r, i-k);

[Figure: partitioned array A[p..r]; the k = q - p + 1 elements A[p..q] are ≤ A[q], the remaining elements are ≥ A[q].]
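A runnable Python rendering of the pseudocode might look like the sketch below. The partition routine is a Lomuto-style partition with a random pivot, which is one common choice and an assumption here, since the slides do not spell out RandomizedPartition. Indices are 0-based and inclusive, while the rank `i` stays 1-based as in the pseudocode.

```python
import random


def randomized_partition(a, p, r, rng=random):
    """Partition a[p..r] around a random pivot; return the pivot's final index."""
    pivot_index = rng.randint(p, r)  # inclusive on both ends
    a[pivot_index], a[r] = a[r], a[pivot_index]
    pivot = a[r]
    i = p - 1
    for j in range(p, r):
        if a[j] <= pivot:
            i += 1
            a[i], a[j] = a[j], a[i]
    a[i + 1], a[r] = a[r], a[i + 1]
    return i + 1


def randomized_select(a, p, r, i, rng=random):
    """Return the i-th smallest (1-based) element of a[p..r]; reorders a."""
    if p == r:
        return a[p]
    q = randomized_partition(a, p, r, rng)
    k = q - p + 1  # rank of the pivot within a[p..r]
    if i == k:
        return a[q]
    if i < k:
        return randomized_select(a, p, q - 1, i, rng)
    return randomized_select(a, q + 1, r, i - k, rng)
```

For example, `randomized_select([7, 2, 9, 4, 1, 8, 3], 0, 6, 4)` returns the median, 4. Note that the input list is rearranged in place; pass a copy if the original order matters.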

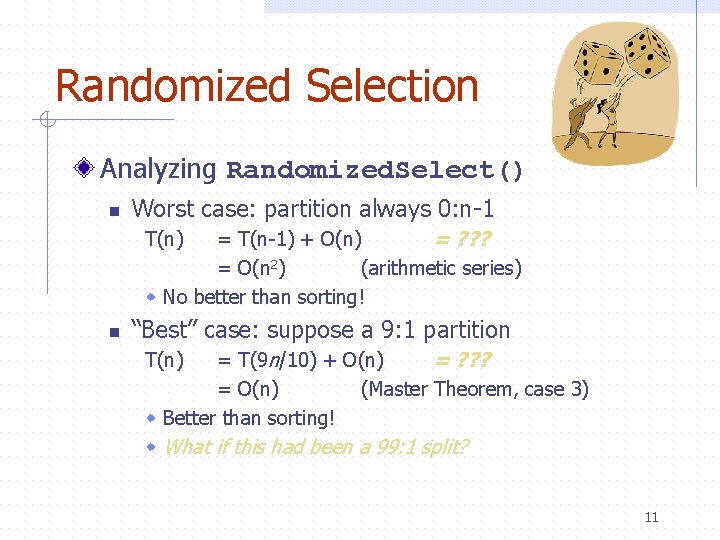

Randomized Selection

Analyzing RandomizedSelect():
- Worst case: the partition is always 0 : n-1.
  $T(n) = T(n-1) + O(n) = O(n^2)$ (arithmetic series)
  - No better than sorting!
- “Best” case: suppose a 9:1 partition.
  $T(n) = T(9n/10) + O(n) = O(n)$ (Master Theorem, case 3)
  - Better than sorting!
  - What if this had been a 99:1 split?
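One way to answer the 99:1 question is to unroll the recurrence. The sketch below assumes the partitioning cost on m elements is at most $am$ for some constant $a$ (a symbol introduced here, not on the slide), so the costs along the recursion form a geometric series:

$$
T(n) \le T\!\left(\tfrac{99}{100}n\right) + an \le an \sum_{j \ge 0} \left(\tfrac{99}{100}\right)^{j} = \frac{an}{1 - 99/100} = 100\,an = O(n)
$$

Any constant split ratio gives the same conclusion; only the hidden constant grows (10 for a 9:1 split, 100 for a 99:1 split).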

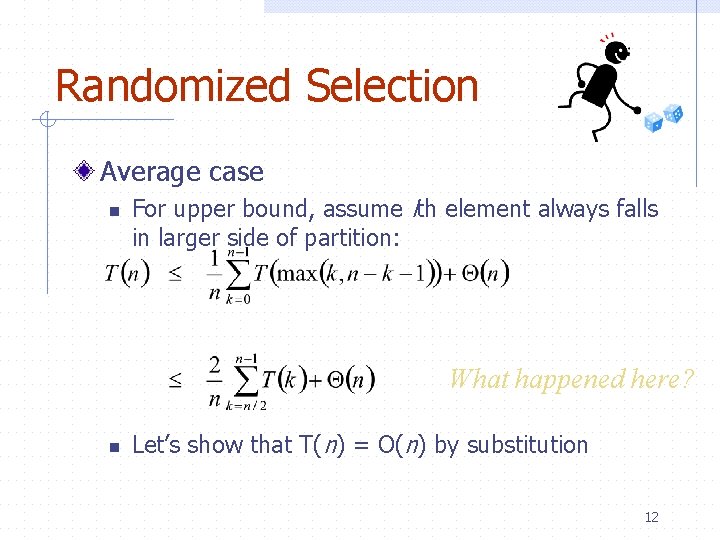

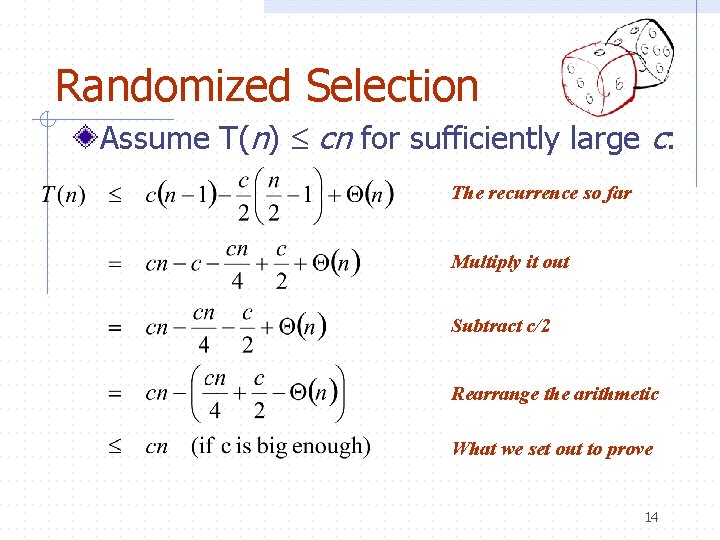

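Randomized Selection

Average case:
- For an upper bound, assume the ith element always falls in the larger side of the partition:

$$
T(n) \le \frac{1}{n}\sum_{k=0}^{n-1} T(\max(k,\, n-k-1)) + \Theta(n) \le \frac{2}{n}\sum_{k=n/2}^{n-1} T(k) + \Theta(n)
$$

  What happened here? Each term T(k) with k ≥ n/2 appears at most twice in the first sum.
- Let’s show that T(n) = O(n) by substitution.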

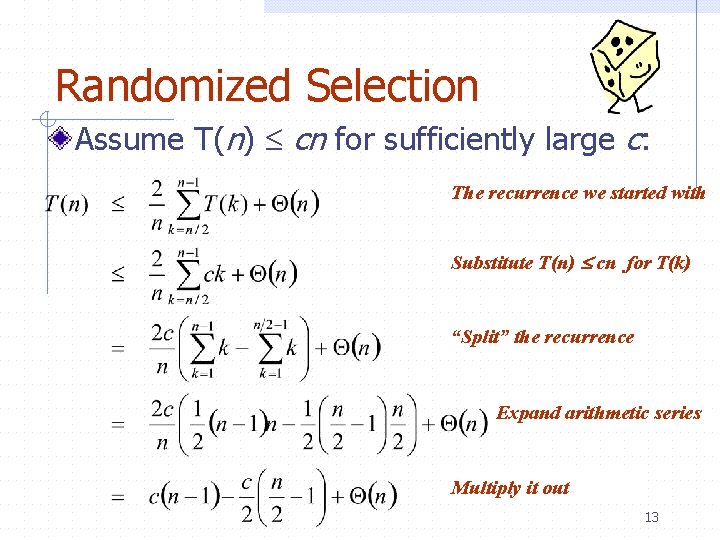

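Randomized Selection

Assume $T(n) \le cn$ for sufficiently large c:

$$
\begin{aligned}
T(n) &\le \frac{2}{n}\sum_{k=n/2}^{n-1} T(k) + \Theta(n) && \text{the recurrence we started with} \\
&\le \frac{2}{n}\sum_{k=n/2}^{n-1} ck + \Theta(n) && \text{substitute } T(n) \le cn \text{ for } T(k) \\
&= \frac{2c}{n}\left(\sum_{k=1}^{n-1} k - \sum_{k=1}^{n/2-1} k\right) + \Theta(n) && \text{“split” the recurrence} \\
&= \frac{2c}{n}\left(\frac{1}{2}(n-1)n - \frac{1}{2}\left(\frac{n}{2}-1\right)\frac{n}{2}\right) + \Theta(n) && \text{expand arithmetic series} \\
&= c(n-1) - \frac{c}{2}\left(\frac{n}{2}-1\right) + \Theta(n) && \text{multiply it out}
\end{aligned}
$$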

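Randomized Selection

Assume $T(n) \le cn$ for sufficiently large c:

$$
\begin{aligned}
T(n) &\le c(n-1) - \frac{c}{2}\left(\frac{n}{2}-1\right) + \Theta(n) && \text{the recurrence so far} \\
&= cn - c - \frac{cn}{4} + \frac{c}{2} + \Theta(n) && \text{multiply it out} \\
&= cn - \frac{cn}{4} - \frac{c}{2} + \Theta(n) && \text{subtract } c/2 \\
&= cn - \left(\frac{cn}{4} + \frac{c}{2} - \Theta(n)\right) && \text{rearrange the arithmetic} \\
&\le cn \quad (\text{if } c \text{ is big enough}) && \text{what we set out to prove}
\end{aligned}
$$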

Linear-Time Selection: Deterministic Algorithms

The randomized algorithm works well in practice. What follows is a deterministic linear-time algorithm, really of theoretical interest only. Basic idea:
- Generate a good partitioning element.
- Call this element x.

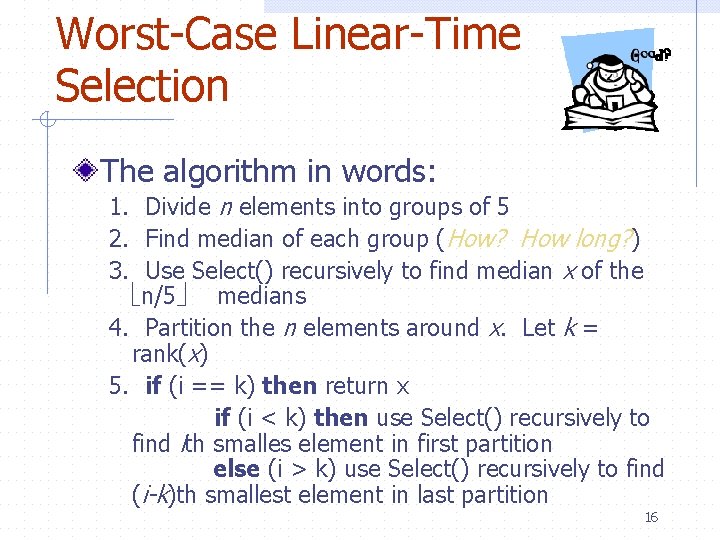

Worst-Case Linear-Time Selection

The algorithm in words:
1. Divide the n elements into groups of 5.
2. Find the median of each group. (How? How long?)
3. Use Select() recursively to find the median x of the n/5 medians.
4. Partition the n elements around x. Let k = rank(x).
5. if (i == k) then return x;
   if (i < k) then use Select() recursively to find the ith smallest element in the first partition;
   else (i > k) use Select() recursively to find the (i-k)th smallest element in the last partition.
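The five steps above can be sketched in Python as follows. This is an illustrative version, not the slides' code: it builds new lists rather than partitioning in place, it keeps a final group of fewer than 5 elements instead of discarding leftovers, and it uses a three-way partition so that duplicate keys are handled. Those three choices, and the name `select`, are assumptions.

```python
def select(items, i):
    """Return the i-th smallest (1-based) element of items."""
    if not 1 <= i <= len(items):
        raise ValueError("rank out of range")
    if len(items) <= 5:
        return sorted(items)[i - 1]

    # Steps 1-2: split into groups of 5 and take each group's median.
    # Sorting a group of at most 5 elements costs O(1).
    groups = [items[j:j + 5] for j in range(0, len(items), 5)]
    medians = [sorted(g)[(len(g) - 1) // 2] for g in groups]

    # Step 3: recursively find the median x of the medians.
    x = select(medians, (len(medians) + 1) // 2)

    # Step 4: partition around x (three-way, to cope with duplicates).
    smaller = [v for v in items if v < x]
    equal = [v for v in items if v == x]
    larger = [v for v in items if v > x]

    # Step 5: return x or recurse into the side that contains rank i.
    if i <= len(smaller):
        return select(smaller, i)
    if i <= len(smaller) + len(equal):
        return x
    return select(larger, i - len(smaller) - len(equal))
```

For example, `select(list(range(100, 0, -1)), 10)` returns 10. Sorting each 5-element group is one possible answer to the "How? How long?" question in step 2: each group takes constant time, so the step is O(n) overall.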

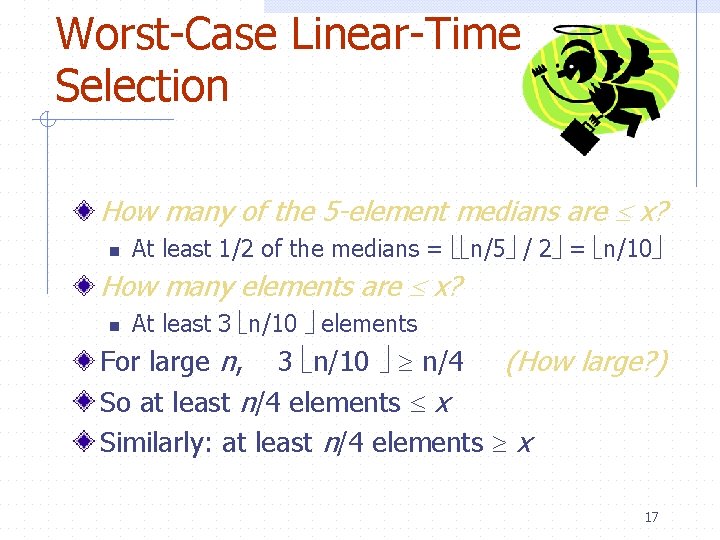

Worst-Case Linear-Time Selection

How many of the 5-element medians are ≤ x?
- At least 1/2 of the medians: ⌊⌊n/5⌋ / 2⌋ = ⌊n/10⌋.

How many elements are ≤ x?
- At least 3⌊n/10⌋ elements.

For large n, 3⌊n/10⌋ ≥ n/4. (How large?) So at least n/4 elements are ≤ x. Similarly, at least n/4 elements are ≥ x.
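The "How large?" question can be explored numerically. The small check below tests the inequality 3⌊n/10⌋ ≥ n/4 in integer arithmetic (multiplying both sides by 4) over an arbitrarily chosen range of n up to 1000; the helper name `bound_holds` is hypothetical.

```python
def bound_holds(n):
    """True when 3 * floor(n / 10) >= n / 4, checked without floating point."""
    return 12 * (n // 10) >= n


# Values of n in 1..1000 for which the bound fails.
failures = [n for n in range(1, 1001) if not bound_holds(n)]
largest_failure = max(failures)
```

Writing n = 10q + r with 0 ≤ r ≤ 9, the inequality reduces to 2q ≥ r, which is guaranteed once q ≥ 5. So the bound holds for every n ≥ 50, and `largest_failure` is 49.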

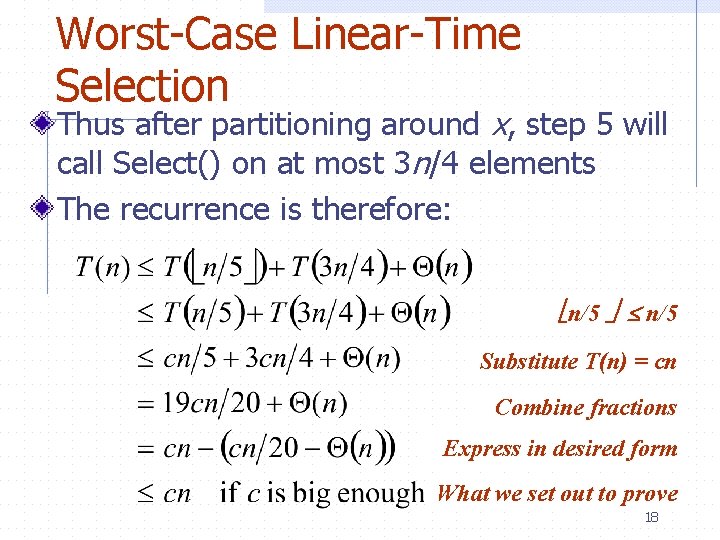

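Worst-Case Linear-Time Selection

Thus after partitioning around x, step 5 will call Select() on at most 3n/4 elements. The recurrence is therefore:

$$
\begin{aligned}
T(n) &\le T(\lfloor n/5 \rfloor) + T(3n/4) + \Theta(n) \\
&\le T(n/5) + T(3n/4) + \Theta(n) && \lfloor n/5 \rfloor \le n/5 \\
&\le \frac{cn}{5} + \frac{3cn}{4} + \Theta(n) && \text{substitute } T(n) \le cn \\
&= \frac{19cn}{20} + \Theta(n) && \text{combine fractions} \\
&= cn - \left(\frac{cn}{20} - \Theta(n)\right) && \text{express in desired form} \\
&\le cn \quad (\text{if } c \text{ is big enough}) && \text{what we set out to prove}
\end{aligned}
$$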

Worst-Case Linear-Time Selection

Intuitively:
- The work at each level of the recursion is a constant fraction (19/20) of the work at the level above.
  - Geometric progression!
- Thus the O(n) work at the root dominates.
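The geometric progression can be written out explicitly. As a sketch, assume the non-recursive work on m elements is at most $am$ for a constant $a$ (a symbol introduced here for illustration). The subproblem sizes at each level sum to at most $\tfrac{1}{5} + \tfrac{3}{4} = \tfrac{19}{20}$ of the sizes one level up, so level $j$ of the recursion tree costs at most $(19/20)^j\,an$:

$$
T(n) \le \sum_{j \ge 0} \left(\frac{19}{20}\right)^{j} an = \frac{an}{1 - 19/20} = 20\,an = O(n)
$$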

Selection Algorithm

- Using sorting: takes O(n log n) time.
- A randomized algorithm:
  - Takes O(n) time on average.
  - Takes O(n) time with high probability.
- A deterministic algorithm:
  - Takes O(n) time.

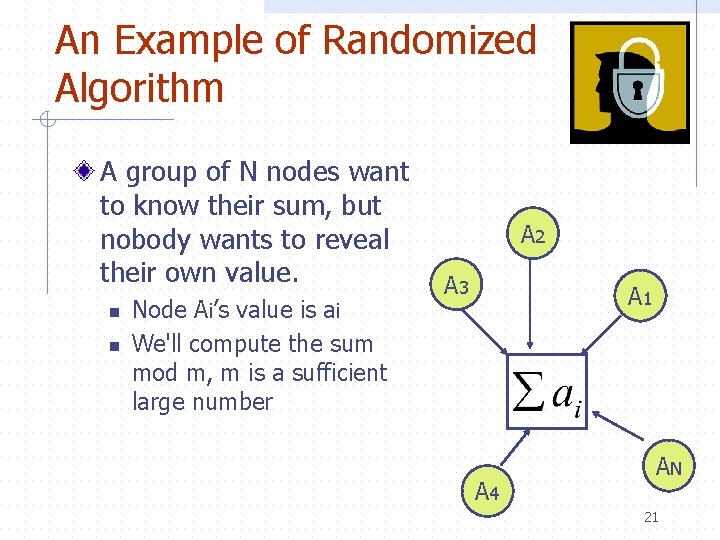

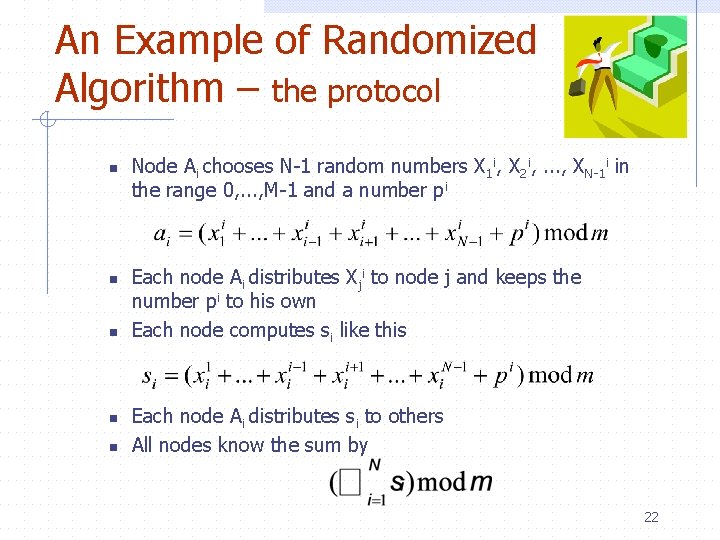

An Example of a Randomized Algorithm

A group of N nodes want to know the sum of their values, but no node wants to reveal its own value.
- Node Ai’s value is ai.
- We’ll compute the sum mod M, where M is a sufficiently large number.

[Figure: nodes A1, A2, A3, A4, ..., AN.]

An Example of a Randomized Algorithm: the protocol

- Node Ai chooses N-1 random numbers X1i, X2i, ..., X(N-1)i in the range 0, ..., M-1 and a number pi.
- Each node Ai distributes Xji to node j and keeps the number pi to itself.
- Each node computes si from its own pi and the numbers it received.
- Each node Ai distributes si to the others.
- All nodes then learn the sum by combining the si values.
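The protocol can be simulated in a single process as sketched below. The slides' formulas for pi and si are not available in the text, so this sketch ASSUMES the usual additive-sharing choice: pi is picked so that pi plus the numbers Ai sends out equals ai mod M, and si is pi plus the numbers Ai receives, mod M. The function name `secure_sum` and its parameters are hypothetical.

```python
import random


def secure_sum(values, modulus, rng=random):
    """Simulate the sum protocol; return the sum of values mod modulus."""
    n = len(values)
    # sent[i][j] is the random number node i sends to node j (j != i).
    sent = [[0] * n for _ in range(n)]
    kept = [0] * n  # kept[i] plays the role of p_i
    for i, a_i in enumerate(values):
        total_sent = 0
        for j in range(n):
            if j != i:
                sent[i][j] = rng.randrange(modulus)  # uniform in 0..M-1
                total_sent += sent[i][j]
        # ASSUMPTION: p_i makes node i's shares add up to a_i mod M.
        kept[i] = (a_i - total_sent) % modulus

    # ASSUMPTION: s_j = p_j + (numbers received by node j) mod M.
    partial = [
        (kept[j] + sum(sent[i][j] for i in range(n) if i != j)) % modulus
        for j in range(n)
    ]
    # Every node broadcasts s_j; adding them up yields the sum mod M.
    return sum(partial) % modulus
```

For example, `secure_sum([5, 11, 7, 20], 1000)` returns 43 whatever random numbers are drawn, because every random share is added exactly once and subtracted exactly once. Under these assumptions each broadcast si is masked by random numbers, so it does not reveal ai on its own.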

Many Other Randomized Algorithms

- Hashing: universal hash functions
- Resource sharing: Ethernet collision avoidance
- Load balancing
- Packet routing
- Primality testing

- Slides: 23