Randomization in Graph Optimization Problems
David Karger, MIT
http://theory.lcs.mit.edu/~karger

Randomized Algorithms
- Flip coins to decide what to do next
- Avoid hard work of making the “right” choice
- Often faster and simpler than deterministic algorithms
- Different from average-case analysis
  - Input is worst case
  - Algorithm adds randomness

Methods
- Random selection
  - if most candidate choices are “good”, then a random choice is probably good
- Random sampling
  - generate a small random subproblem
  - solve, extrapolate to whole problem
- Monte Carlo simulation
  - simulations estimate event likelihoods
- Randomized rounding for approximation

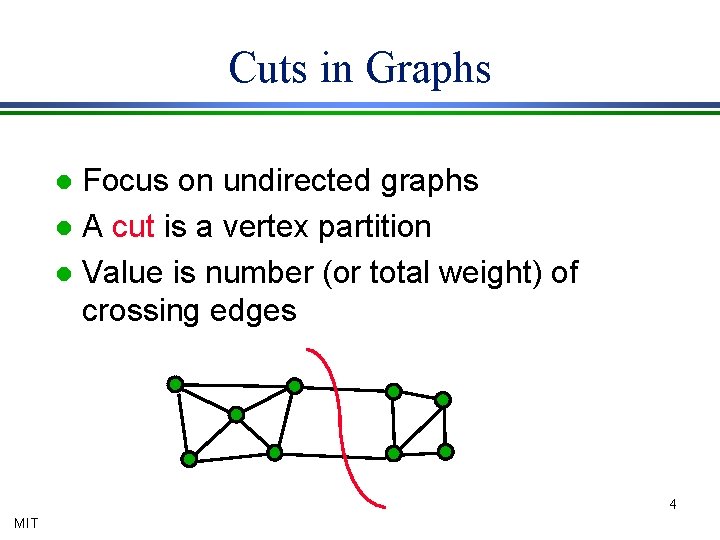

Cuts in Graphs
- Focus on undirected graphs
- A cut is a vertex partition
- Value is number (or total weight) of crossing edges

Optimization with Cuts
- Cut values determine solution of many graph optimization problems:
  - min-cut / max-flow
  - multicommodity flow (sort-of)
  - bisection / separator
  - network reliability
  - network design
- Randomization helps solve these problems

Presentation Assumption
- For entire presentation, we consider unweighted graphs (all edges have weight/capacity one)
- All results apply unchanged to arbitrarily weighted graphs
  - Integer weights = parallel edges
  - Rational weights scale to integers
  - Analysis unaffected
  - Some implementation details

Basic Probability
- Conditional probability
  - $\Pr[A \cap B] = \Pr[A] \times \Pr[B \mid A]$
- Independent events multiply:
  - $\Pr[A \cap B] = \Pr[A] \times \Pr[B]$
- Union bound
  - $\Pr[X \cup Y] \le \Pr[X] + \Pr[Y]$
- Linearity of expectation:
  - $E[X + Y] = E[X] + E[Y]$

Random Selection for Minimum Cuts
Random choices are good when problems are rare

Minimum Cut
- Smallest cut of graph
- Cheapest way to separate into 2 parts
- Various applications:
  - network reliability (small cuts are weakest)
  - subtour elimination constraints for TSP
  - separation oracle for network design
- Not s-t min-cut

Max-flow/Min-cut
- s-t flow: edge-disjoint packing of s-t paths
- s-t cut: a cut separating s and t
- [FF]: s-t max-flow = s-t min-cut
  - max-flow saturates all s-t min-cuts
  - most efficient way to find s-t min-cuts
- [GH]: min-cut is “all-pairs” s-t min-cut
  - find using n flow computations

Flow Algorithms
- Push-relabel [GT]:
  - push “excess” around graph till it’s gone
  - max-flow in $O^*(mn)$ (note: $O^*$ hides logs)
    - recent $O^*(m^{3/2})$ [GR]
  - min-cut in $O^*(mn^2)$: “harder” than flow
- Pipelining [HO]:
  - save push/relabel data between flows
  - min-cut in $O^*(mn)$: “as easy” as flow

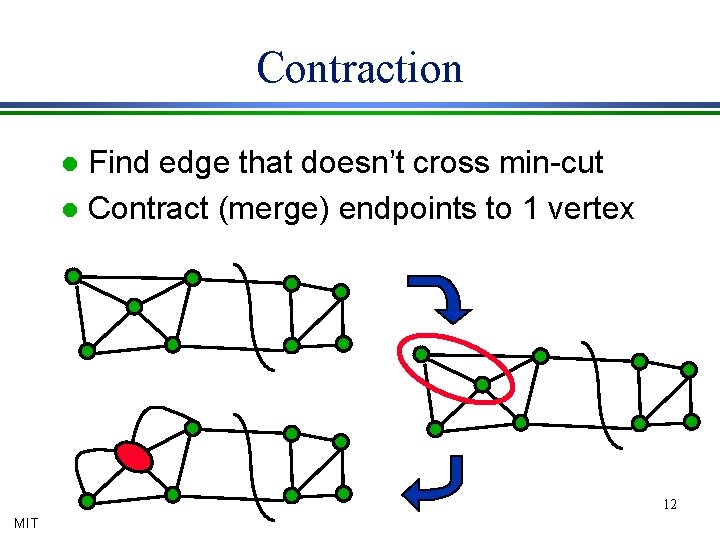

Contraction
- Find edge that doesn’t cross min-cut
- Contract (merge) endpoints to 1 vertex

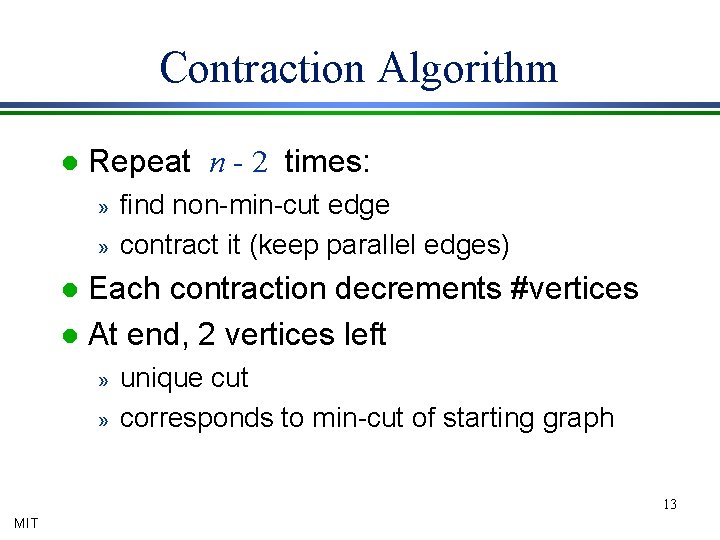

Contraction Algorithm
- Repeat $n - 2$ times:
  - find non-min-cut edge
  - contract it (keep parallel edges)
- Each contraction decrements #vertices
- At end, 2 vertices left
  - unique cut
  - corresponds to min-cut of starting graph


Picking an Edge
- Must contract non-min-cut edges
- [NI]: $O(m)$ time algorithm to pick edge
  - n contractions: $O(mn)$ time for min-cut
  - slightly faster than flows
- If only could find edge faster…
- Idea: min-cut edges are few

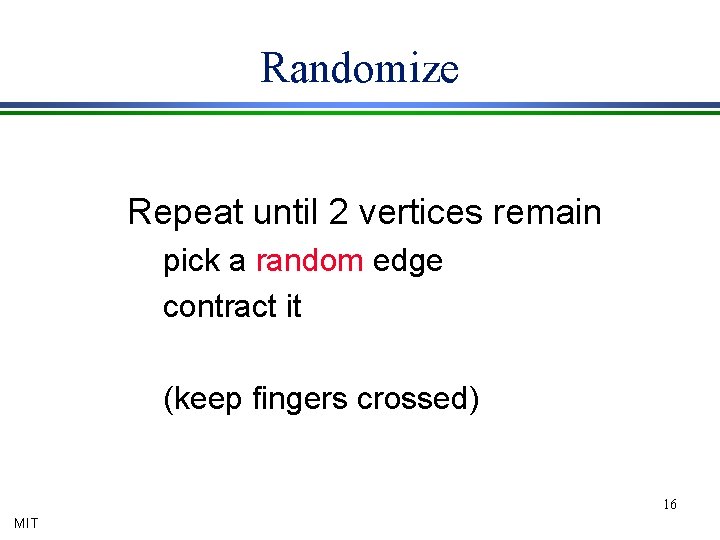

Randomize
- Repeat until 2 vertices remain
  - pick a random edge
  - contract it
- (keep fingers crossed)
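The loop above can be sketched in Python. This is a minimal illustration, not the author's implementation: the names `contract_once` and `min_cut_by_repetition` are made up here, the graph is assumed to be connected and given as a list of `(u, v)` pairs over vertices `0..n-1`, and a union-find structure stands in for explicit vertex merging. Processing the edges in a random permutation and skipping self-loops is used as a stand-in for repeatedly picking a uniformly random remaining edge.

```python
import random

def contract_once(n, edges, rng):
    """One trial of random contraction; returns the size of the cut it ends with."""
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    order = edges[:]
    rng.shuffle(order)              # random order of edges to contract
    remaining = n
    for u, v in order:
        if remaining == 2:
            break
        ru, rv = find(u), find(v)
        if ru != rv:                # skip self-loops of the contracted graph
            parent[ru] = rv         # contract: merge the two endpoints
            remaining -= 1
    # Edges whose endpoints ended up in different super-vertices form the cut.
    return sum(1 for u, v in edges if find(u) != find(v))

def min_cut_by_repetition(n, edges, trials, seed=0):
    """Repeat independent trials and keep the smallest cut seen."""
    rng = random.Random(seed)
    return min(contract_once(n, edges, rng) for _ in range(trials))
```

Every trial returns the value of some real cut, so the minimum over trials never undershoots the true min-cut; repetition only has to make it likely that at least one trial hits it.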

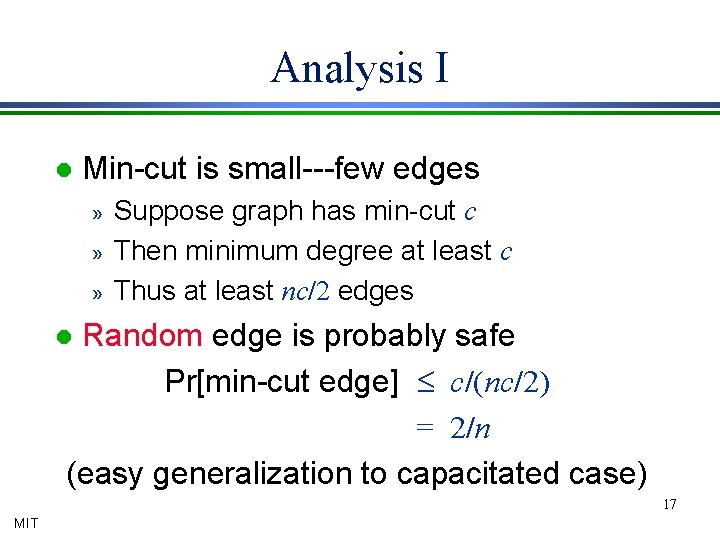

Analysis I
- Min-cut is small: few edges
  - Suppose graph has min-cut c
  - Then minimum degree at least c
  - Thus at least $nc/2$ edges
- Random edge is probably safe
  - $\Pr[\text{min-cut edge}] \le c/(nc/2) = 2/n$
  - (easy generalization to capacitated case)

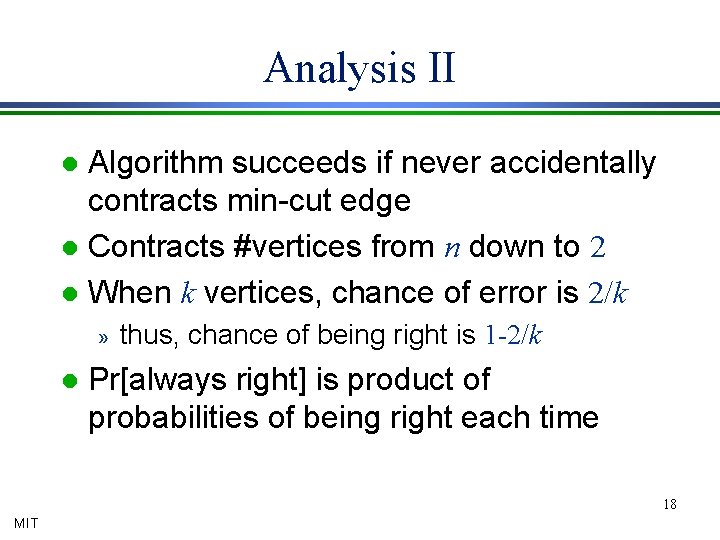

Analysis II
- Algorithm succeeds if it never accidentally contracts a min-cut edge
- Contracts #vertices from n down to 2
- When k vertices, chance of error is $2/k$
  - thus, chance of being right is $1 - 2/k$
- Pr[always right] is product of probabilities of being right each time

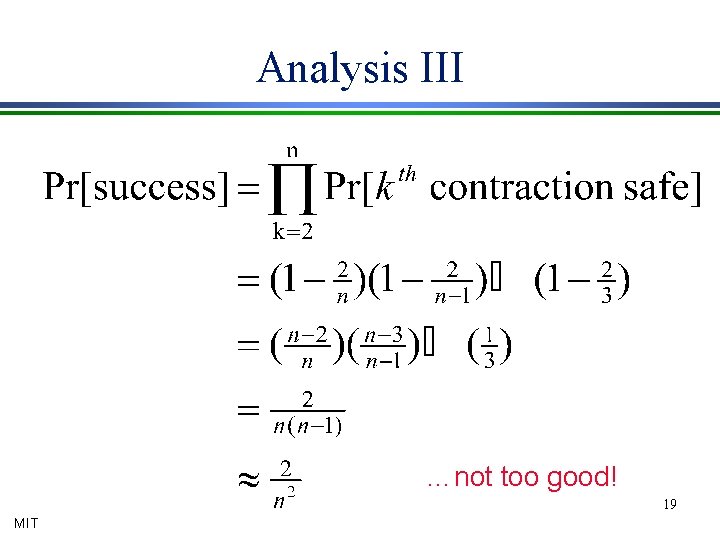

Analysis III
…not too good!
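The product described in Analysis II can be worked out explicitly. The derivation below is a reconstruction from the per-step bound $1 - 2/k$ stated on the previous slide, not a transcription of the slide's figure; it telescopes to roughly $2/n^2$, which is why a single trial is "not too good".

```latex
\Pr[\text{never contract a min-cut edge}]
  \;\ge\; \prod_{k=3}^{n}\left(1 - \frac{2}{k}\right)
  \;=\; \prod_{k=3}^{n}\frac{k-2}{k}
  \;=\; \frac{2\,(n-2)!}{n!}
  \;=\; \frac{2}{n(n-1)}
  \;\ge\; \frac{2}{n^{2}}
```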

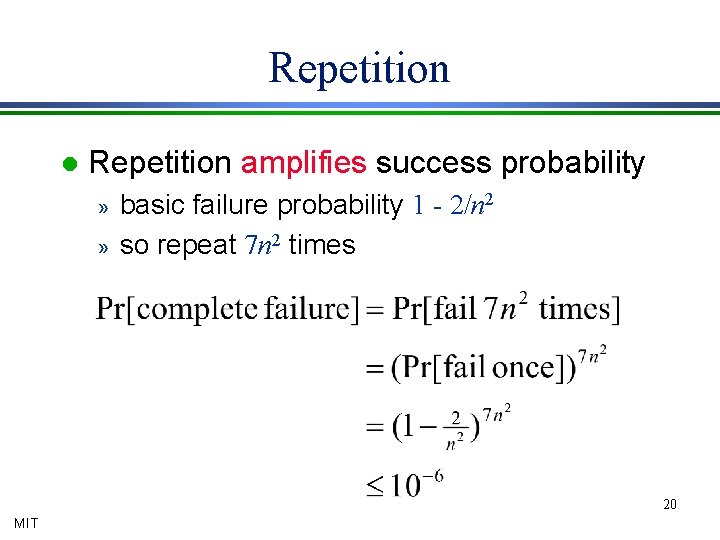

Repetition
- Repetition amplifies success probability
  - basic failure probability $1 - 2/n^2$
  - so repeat $7n^2$ times

How fast?
- Easy to perform 1 trial in $O(m)$ time
  - just use array of edges, no data structures
- But need $n^2$ trials: $O(mn^2)$ time
- Simpler than flows, but slower


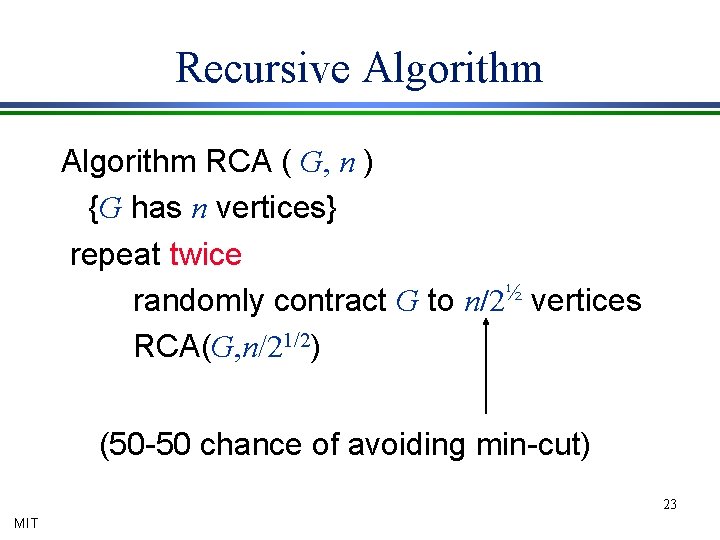

An improvement [KS]
- When k vertices, error probability $2/k$
  - big when k small
- Idea: once k small, change algorithm
  - algorithm needs to be safer
  - but can afford to be slower
- Amplify by repetition!
  - Repeat base algorithm many times

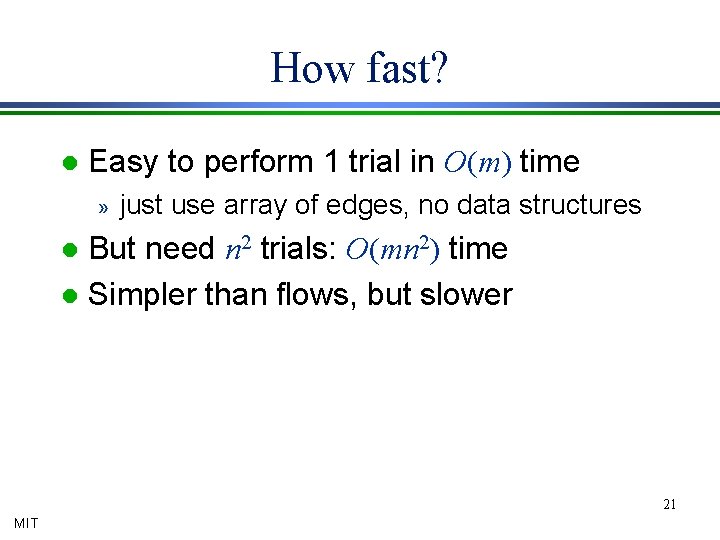

Recursive Algorithm
RCA(G, n) {G has n vertices}
- repeat twice
  - randomly contract G to $n/\sqrt{2}$ vertices (50-50 chance of avoiding min-cut)
  - RCA(G, $n/\sqrt{2}$)
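A hedged Python sketch of the recursion follows. The slide does not specify a base case or rounding, so two choices here are assumptions made only so that the recursion terminates: the contraction target is `ceil(n / sqrt(2)) + 1`, and graphs with at most 6 vertices are solved by brute force. The function names are illustrative, and the input is assumed to be a connected multigraph given as `(u, v)` pairs over vertices `0..n-1`.

```python
import math
import random

def contract_to(n, edges, target, rng):
    """Contract random edges until `target` super-vertices remain.
    Returns (new_n, new_edges) with vertices relabelled 0..new_n-1."""
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    order = edges[:]
    rng.shuffle(order)
    remaining = n
    for u, v in order:
        if remaining <= target:
            break
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
            remaining -= 1
    label, new_edges = {}, []
    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru != rv:  # drop self-loops, keep parallel edges
            new_edges.append((label.setdefault(ru, len(label)),
                              label.setdefault(rv, len(label))))
    return remaining, new_edges

def brute_force_min_cut(n, edges):
    """Exact min-cut for tiny graphs (assumed base case, not from the slide)."""
    return min(sum(1 for u, v in edges if ((mask >> u) & 1) != ((mask >> v) & 1))
               for mask in range(1, 2 ** (n - 1)))

def rca(n, edges, rng):
    """Recursive contraction: two independent half-contractions, each recursed on."""
    if n <= 6:
        return brute_force_min_cut(n, edges)
    target = math.ceil(n / math.sqrt(2)) + 1
    return min(rca(*contract_to(n, edges, target, rng), rng) for _ in range(2))
```

As with the basic algorithm, one call can miss the min-cut, so in practice the result is the minimum over several independent calls.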

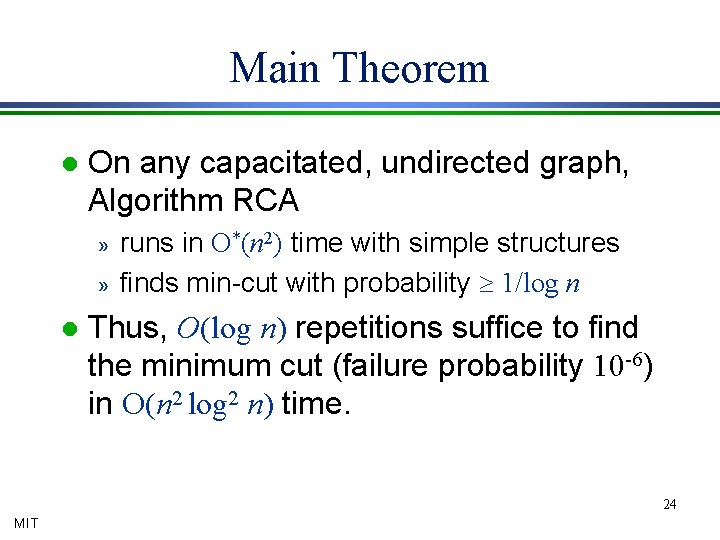

Main Theorem
- On any capacitated, undirected graph, Algorithm RCA
  - runs in $O^*(n^2)$ time with simple structures
  - finds min-cut with probability $\ge 1/\log n$
- Thus, $O(\log n)$ repetitions suffice to find the minimum cut (failure probability $10^{-6}$) in $O(n^2 \log^2 n)$ time.

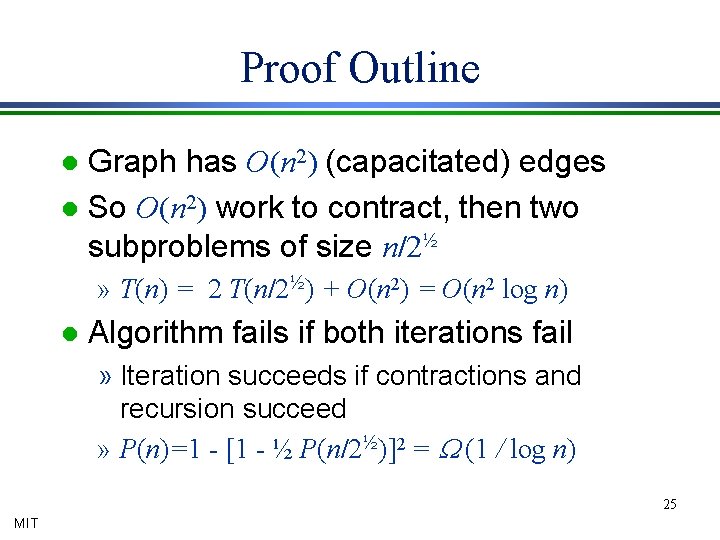

Proof Outline
- Graph has $O(n^2)$ (capacitated) edges
- So $O(n^2)$ work to contract, then two subproblems of size $n/\sqrt{2}$
  - $T(n) = 2T(n/\sqrt{2}) + O(n^2) = O(n^2 \log n)$
- Algorithm fails if both iterations fail
  - Iteration succeeds if contractions and recursion succeed
  - $P(n) = 1 - [1 - \tfrac{1}{2} P(n/\sqrt{2})]^2 = \Omega(1/\log n)$
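The success recurrence can be checked numerically. The sketch below iterates $P(n) = 1 - [1 - \tfrac{1}{2}P(n/\sqrt{2})]^2$ with an assumed base case $P = 1$ once $n \le 2$ (the slide does not state one); the function name is illustrative. The values decay like $1/\log n$ rather than polynomially.

```python
import math

def rca_success_bound(n):
    """Iterate P(n) = 1 - (1 - P(n/sqrt(2))/2)^2, assuming P = 1 once n <= 2."""
    depth = 0
    size = float(n)
    while size > 2:
        size /= math.sqrt(2)
        depth += 1
    p = 1.0
    for _ in range(depth):          # unwind the recursion from the base case up
        p = 1.0 - (1.0 - p / 2.0) ** 2
    return p

for n in (4, 64, 1024, 10 ** 6):
    print(n, rca_success_bound(n), 1.0 / math.log2(n))
```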

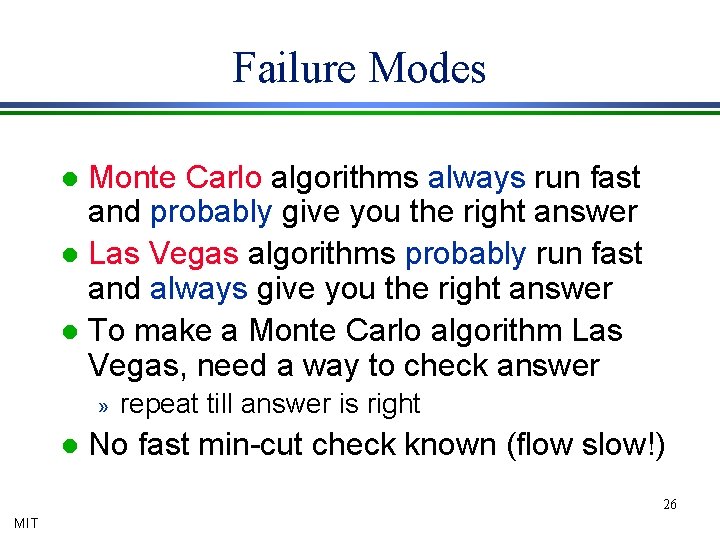

Failure Modes
- Monte Carlo algorithms always run fast and probably give you the right answer
- Las Vegas algorithms probably run fast and always give you the right answer
- To make a Monte Carlo algorithm Las Vegas, need a way to check answer
  - repeat till answer is right
- No fast min-cut check known (flow slow!)

How do we verify a minimum cut?

Enumerating Cuts
The probabilistic method, backwards

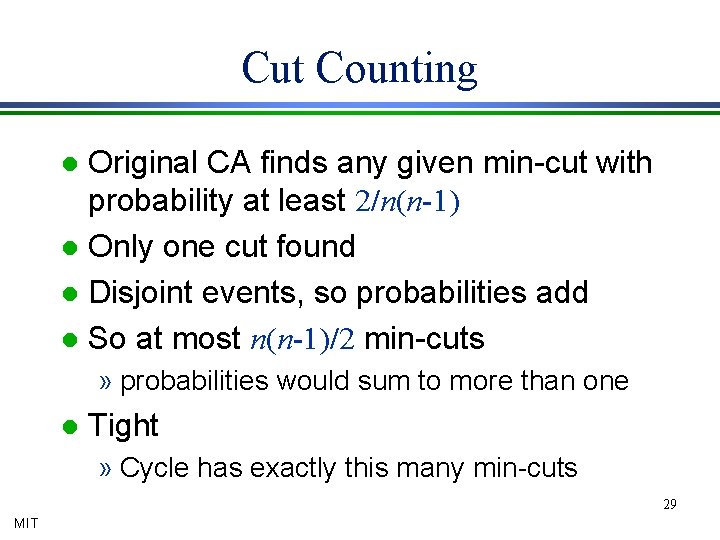

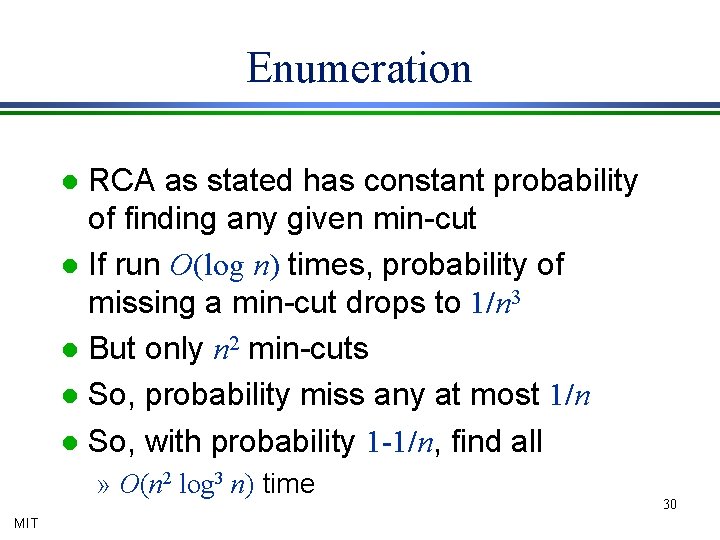

Cut Counting
- Original CA finds any given min-cut with probability at least $2/n(n-1)$
- Only one cut found
- Disjoint events, so probabilities add
- So at most $n(n-1)/2$ min-cuts
  - otherwise probabilities would sum to more than one
- Tight
  - Cycle has exactly this many min-cuts
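The tightness claim is easy to check by brute force on small cycles. The helper below is an illustrative sketch (its name and the bitmask encoding of cuts are choices made here): it enumerates every cut once and counts those of minimum value.

```python
def count_min_cuts(n, edges):
    """Enumerate all cuts of an n-vertex graph; return (min value, number of min-cuts)."""
    best, count = None, 0
    # Vertex n-1 is always on side 0, so each cut {S, V \ S} is counted once.
    for mask in range(1, 2 ** (n - 1)):
        value = sum(1 for u, v in edges if ((mask >> u) & 1) != ((mask >> v) & 1))
        if best is None or value < best:
            best, count = value, 1
        elif value == best:
            count += 1
    return best, count

n = 6
cycle = [(i, (i + 1) % n) for i in range(n)]
print(count_min_cuts(n, cycle), n * (n - 1) // 2)
```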

Enumeration
- RCA as stated has probability $\Omega(1/\log n)$ of finding any given min-cut
- If run $O(\log^2 n)$ times, probability of missing a given min-cut drops to $1/n^3$
- But only $n^2$ min-cuts
- So, probability of missing any is at most $1/n$
- So, with probability $1 - 1/n$, find all
  - $O(n^2 \log^3 n)$ time

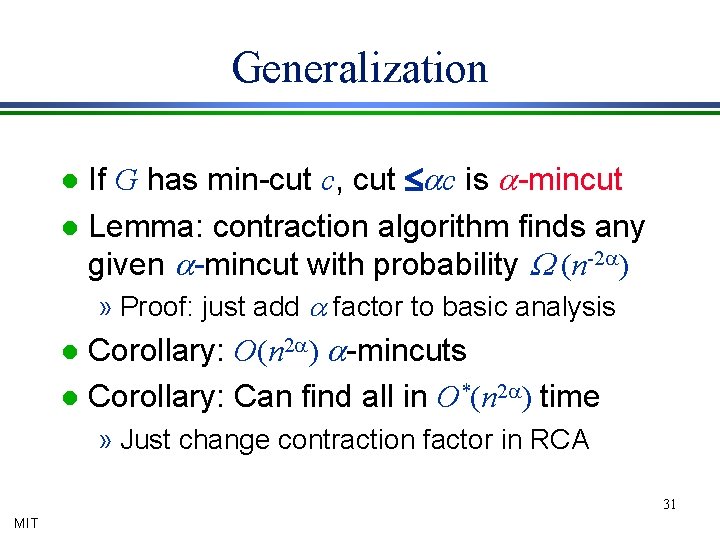

Generalization
- If G has min-cut c, a cut of value $\le \alpha c$ is an $\alpha$-mincut
- Lemma: contraction algorithm finds any given $\alpha$-mincut with probability $\Omega(n^{-2\alpha})$
  - Proof: just add a factor to basic analysis
- Corollary: $O(n^{2\alpha})$ $\alpha$-mincuts
- Corollary: Can find all in $O^*(n^{2\alpha})$ time
  - Just change contraction factor in RCA

Summary
- A simple fast min-cut algorithm
  - Random selection avoids rare problems
- Generalization to near-minimum cuts
- Bound on number of small cuts
  - Probabilistic method, backwards

Random Sampling

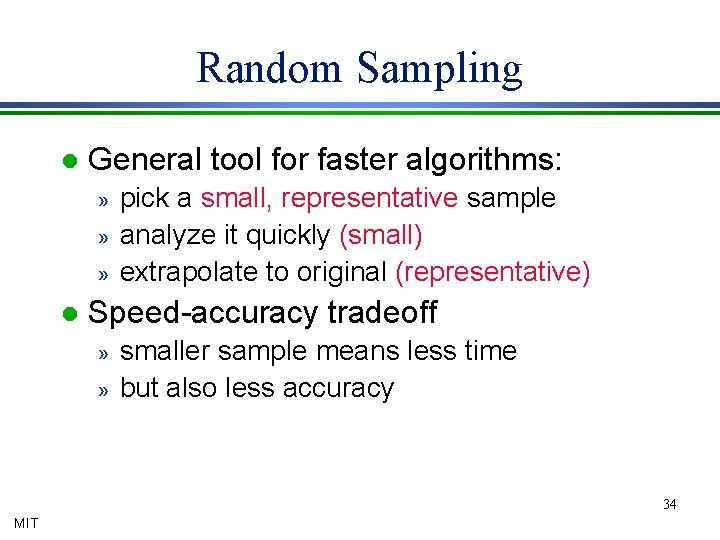

Random Sampling
- General tool for faster algorithms:
  - pick a small, representative sample
  - analyze it quickly (small)
  - extrapolate to original (representative)
- Speed-accuracy tradeoff
  - smaller sample means less time
  - but also less accuracy

A Polling Problem
- Population of size m
- Subset of c red members
- Goal: estimate c
- Naïve method: check whole population
- Faster method: sampling
  - Choose random subset of population
  - Use relative frequency in sample as estimate for frequency in population

Analysis: Chernoff Bound
- Random variables $X_i \in [0, 1]$
- Sum $X = \sum X_i$
- Bound deviation from expectation
  - $\Pr[\,|X - E[X]| \ge \varepsilon E[X]\,] < \exp(-\varepsilon^2 E[X] / 4)$
- “Probably, $X \in (1 \pm \varepsilon) E[X]$”
- If $E[X] \ge 4(\ln n)/\varepsilon^2$, “tight concentration”
  - Deviation by $\varepsilon$ has probability $< 1/n$
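The "tight concentration" threshold follows by substituting the lower bound on $E[X]$ into the exponent. This is a one-line working of the bound as stated on the slide, using the same symbols:

```latex
\Pr\bigl[\,|X - E[X]| \ge \varepsilon E[X]\,\bigr]
  < \exp\!\left(-\frac{\varepsilon^{2} E[X]}{4}\right)
  \le \exp\!\left(-\frac{\varepsilon^{2}}{4}\cdot\frac{4\ln n}{\varepsilon^{2}}\right)
  = e^{-\ln n}
  = \frac{1}{n}
  \qquad\text{whenever } E[X] \ge \frac{4\ln n}{\varepsilon^{2}}
```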

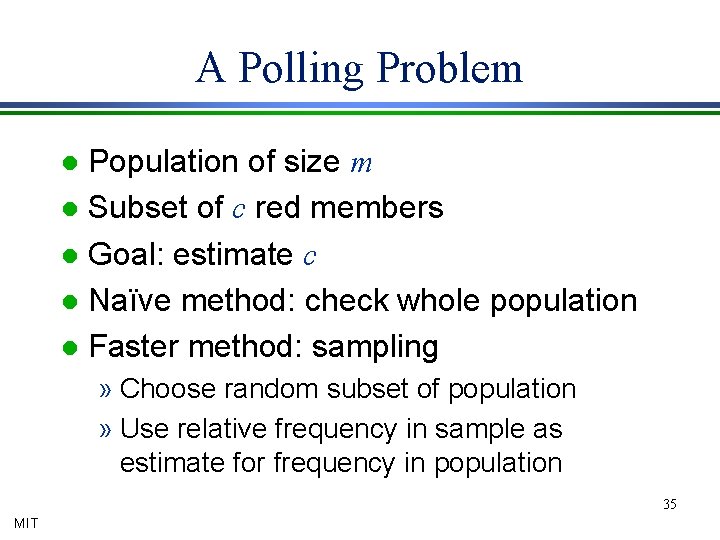

Application to Polling
- Choose each member with probability p
- Let X be total number of reds seen
- Then $E[X] = pc$
- So estimate c by $\hat{c} = X/p$
- Note $\hat{c}$ is accurate to within $1 \pm \varepsilon$ iff X is within $1 \pm \varepsilon$ of its expectation:
  - $\hat{c} = X/p \in (1 \pm \varepsilon) E[X]/p = (1 \pm \varepsilon) c$

Analysis
- Let $X_i = 1$ if ith red item chosen, else 0
- Then $X = \sum X_i$
- Chernoff bound applies
  - $\Pr[\text{deviation by } \varepsilon] < \exp(-\varepsilon^2 pc / 4)$
  - $< 1/n$ if $pc > 4(\log n)/\varepsilon^2$
- Pretty tight
  - if $pc < 1$, likely no red samples
  - so no meaningful estimate
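The polling estimator is short enough to write out. The sketch below is illustrative only: the population is modelled as a list of booleans (`True` for red), and the function name and the example sizes are assumptions chosen so that $pc$ is comfortably large.

```python
import random

def estimate_red_count(population, p, rng):
    """Sample each member with probability p; scale the red count seen by 1/p."""
    reds_seen = sum(1 for is_red in population if rng.random() < p and is_red)
    return reds_seen / p

population = [True] * 20_000 + [False] * 80_000   # m = 100000, c = 20000
rng = random.Random(0)
print(estimate_red_count(population, 0.05, rng))  # here pc = 1000
```

With $pc = 1000$ the Chernoff bound makes a 20% relative error very unlikely; shrinking p until $pc$ is near 1 makes the estimate meaningless, as the slide notes.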

Sampling for Min-Cuts


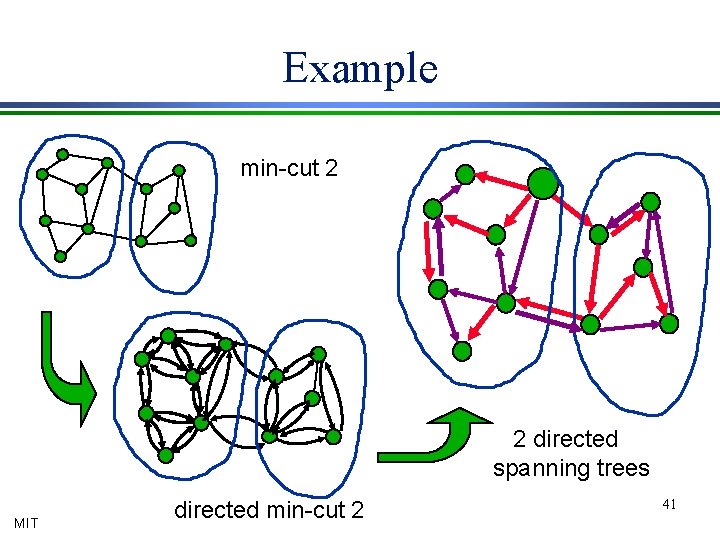

Min-cut Duality
- [Edmonds]: min-cut = max tree packing
  - convert to directed graph
  - “source” vertex s (doesn’t matter which)
  - spanning trees directed away from s
- [Gabow] “augmenting trees”
  - add a tree in $O^*(m)$ time
  - min-cut c (via max packing) in $O^*(mc)$
  - great if m and c are small…

Example
- min-cut 2
- 2 directed spanning trees
- directed min-cut 2

Random Sampling
- Gabow’s algorithm great if m, c small
- Random sampling
  - reduces m, c
  - scales cut values (in expectation)
  - if pick half the edges, get half of each cut
- So find tree packings, cuts in samples
- Problem: maybe some large deviations

Sampling Theorem
- Given graph G, build a sample $G(p)$ by including each edge with probability p
- Cut of value v in G has expected value $pv$ in $G(p)$
- Definition: “constant” $\rho = 8(\ln n)/\varepsilon^2$
- Theorem: With high probability, all exponentially many cuts in $G(\rho/c)$ have $(1 \pm \varepsilon)$ times their expected values.
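The theorem can be observed on a small instance by checking every cut by brute force. The sketch below is an illustration under stated assumptions: the graph is a complete multigraph (so the min-cut is large enough for $\rho/c < 1$), the function names are made up here, and the exhaustive check only works for small n.

```python
import math
import random
from itertools import combinations

def sample_multigraph(n, multiplicity, p, rng):
    """G(p) for a complete multigraph: each parallel copy is kept independently w.p. p."""
    return {pair: sum(rng.random() < p for _ in range(multiplicity))
            for pair in combinations(range(n), 2)}

def worst_cut_deviation(n, multiplicity, p, rng):
    """Largest relative deviation |sampled - expected| / expected over all cuts."""
    kept = sample_multigraph(n, multiplicity, p, rng)
    worst = 0.0
    for mask in range(1, 2 ** (n - 1)):            # vertex n-1 stays on side 0
        crossing = [(u, v) for (u, v) in kept
                    if ((mask >> u) & 1) != ((mask >> v) & 1)]
        expected = p * multiplicity * len(crossing)
        sampled = sum(kept[e] for e in crossing)
        worst = max(worst, abs(sampled - expected) / expected)
    return worst

n, multiplicity, eps = 10, 40, 0.5
c = (n - 1) * multiplicity                         # min-cut: isolate one vertex
rho = 8 * math.log(n) / eps ** 2
print(worst_cut_deviation(n, multiplicity, rho / c, random.Random(1)))
```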

A Simple Application
- [Gabow] packs trees in $O^*(mc)$ time
- Build $G(\rho/c)$
  - minimum expected cut $\rho$
  - by theorem, min-cut probably near $\rho$
  - find min-cut in $O^*(\rho m)$ time using [Gabow]
  - corresponds to near-min-cut in G
- Result: $(1+\varepsilon)$ times min-cut in $O^*(m/\varepsilon^2)$ time

Proof of Sampling: Idea
- Chernoff bound says probability of large deviation in cut value is small
- Problem: exponentially many cuts. Perhaps some deviate a great deal
- Solution: showed few small cuts
  - only small cuts likely to deviate much
  - but few, so Chernoff bound applies

Proof of Sampling
- Sampled with probability $\rho/c$,
  - a cut of value $\alpha c$ has mean $\alpha\rho$
  - [Chernoff]: deviates from expected size by more than $\varepsilon$ with probability at most $n^{-3\alpha}$
- At most $n^{2\alpha}$ cuts have value $\alpha c$
- $\Pr[\text{any cut of value } \alpha c \text{ deviates}] = O(n^{-\alpha})$
- Sum over all $\alpha \ge 1$

Las Vegas Algorithms
Finding Good Certificates

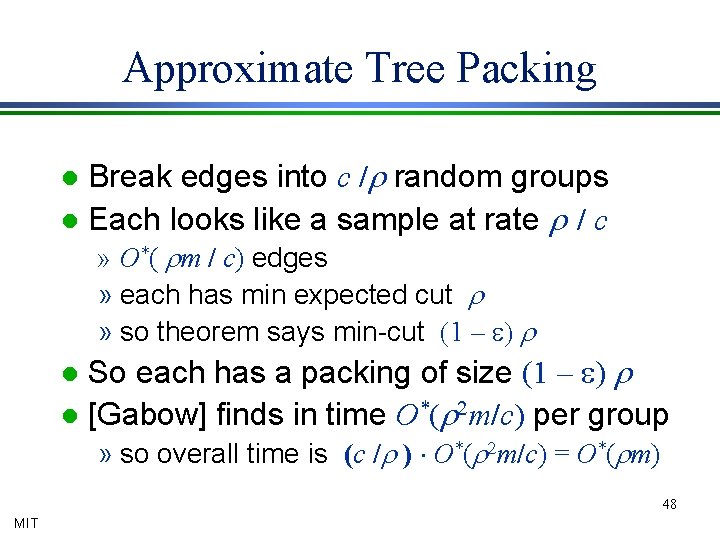

Approximate Tree Packing
- Break edges into $c/\rho$ random groups
- Each looks like a sample at rate $\rho/c$
  - $O^*(\rho m/c)$ edges
  - each has min expected cut $\rho$
  - so theorem says min-cut $(1 - \varepsilon)\rho$
- So each has a packing of size $(1 - \varepsilon)\rho$
- [Gabow] finds in time $O^*(\rho^2 m/c)$ per group
  - so overall time is $(c/\rho) \times O^*(\rho^2 m/c) = O^*(\rho m)$

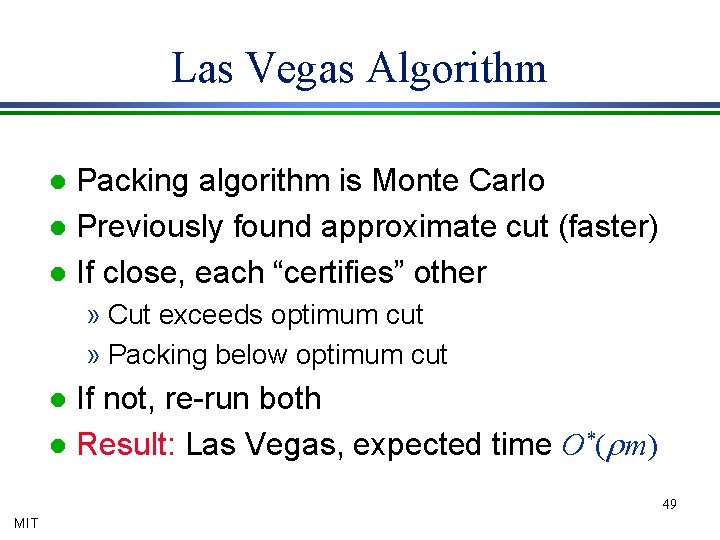

Las Vegas Algorithm
- Packing algorithm is Monte Carlo
- Previously found approximate cut (faster)
- If close, each “certifies” other
  - Cut exceeds optimum cut
  - Packing below optimum cut
- If not, re-run both
- Result: Las Vegas, expected time $O^*(\rho m)$

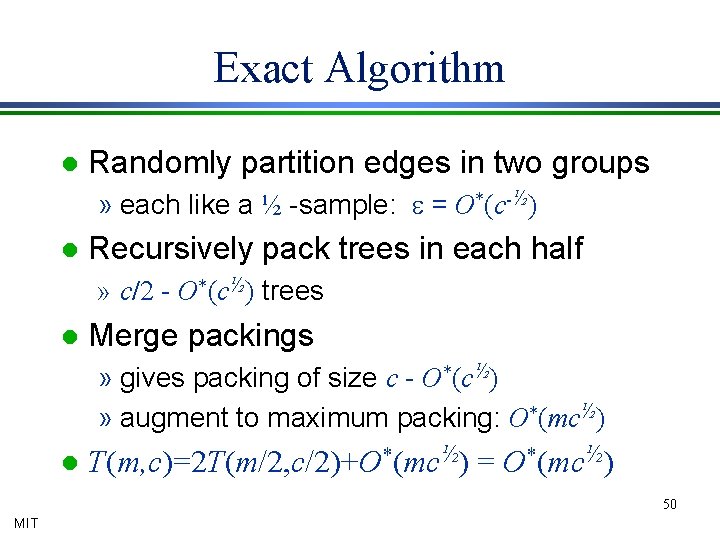

Exact Algorithm
- Randomly partition edges in two groups
  - each like a ½-sample: $\varepsilon = O^*(c^{-1/2})$
- Recursively pack trees in each half
  - $c/2 - O^*(c^{1/2})$ trees
- Merge packings
  - gives packing of size $c - O^*(c^{1/2})$
  - augment to maximum packing: $O^*(mc^{1/2})$
- $T(m, c) = 2T(m/2, c/2) + O^*(mc^{1/2}) = O^*(mc^{1/2})$

Nearly Linear Time

Analyze Trees
- Recall: [G] packs c (directed)-edge disjoint spanning trees
- Corollary: in such a packing, some tree crosses min-cut only twice
- To find min-cut:
  - find tree packing
  - find smallest cut with 2 tree edges crossing

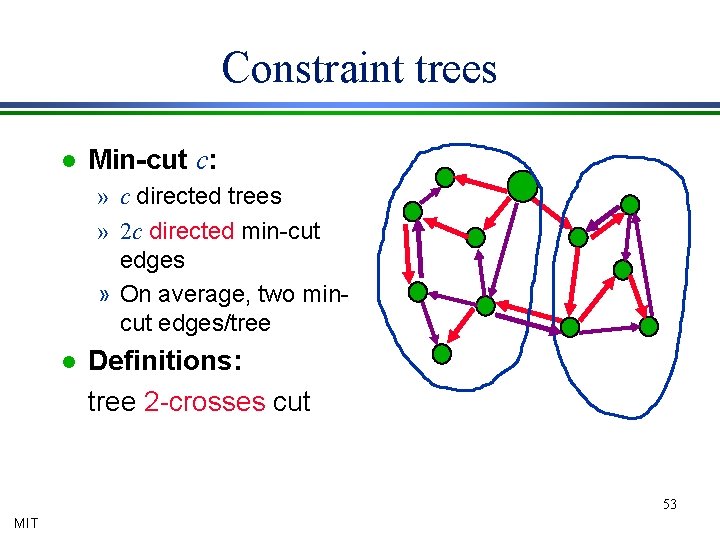

Constraint trees
- Min-cut c:
  - c directed trees
  - $2c$ directed min-cut edges
  - On average, two min-cut edges/tree
- Definitions: tree 2-crosses cut

Finding the Cut
- From crossing tree edges, deduce cut
- Remove tree edges
- No other edges cross
- So each component is on one side
- And opposite its “neighbor’s” side

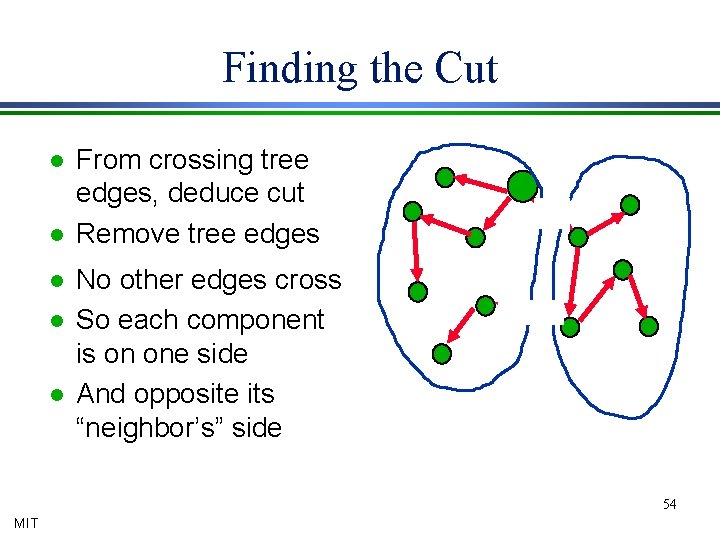

Two Problems
- Packing trees takes too long
  - Gabow runtime is $O^*(mc)$
- Too many trees to check
  - Only claimed that one (of c) is good
- Solution: sampling

Sampling
- Use $G(\rho/c)$ with $\varepsilon = 1/8$
  - pack $O^*(\rho)$ trees in $O^*(m)$ time
  - original min-cut has $(1+\varepsilon)\rho$ edges in $G(\rho/c)$
  - some tree 2-crosses it in $G(\rho/c)$
  - …and thus 2-crosses it in G
- Analyze $O^*(\rho)$ trees in G
  - time $O^*(m)$ per tree
- Monte Carlo

Simple First Step
Discuss case where one tree edge crosses min-cut

Analyzing a tree
- Root tree, so cut subtree
- Use dynamic program up from leaves to determine subtree cuts efficiently
- Given cuts at children of a node, compute cut at parent
- Definitions:
  - $v^{\downarrow}$ are nodes below v
  - $C(v^{\downarrow})$ is value of cut at subtree $v^{\downarrow}$

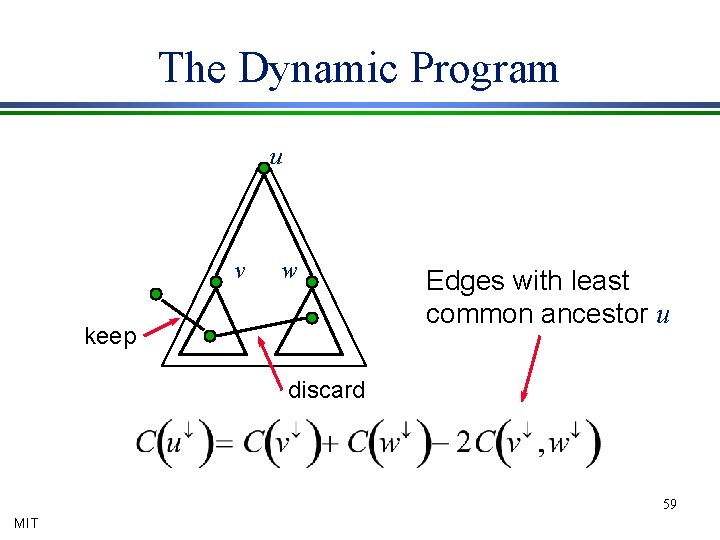

The Dynamic Program
[Figure: node u with children v and w; labels: keep, discard, edges with least common ancestor u]

Algorithm: 1-Crossing Trees
- Compute edges’ LCAs: $O(m)$
- Compute “cuts” at leaves
  - Cut values = degrees
  - each edge incident on at most two leaves
  - total time $O(m)$
- Dynamic program upwards: $O(n)$
- Total: $O(m+n)$
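The dynamic program can be sketched as follows. This is a simplified illustration, not the linear-time version from the slides: LCAs are found by walking parent pointers (so the running time depends on tree depth), vertices are assumed to be numbered so that `parent[v] < v` with vertex 0 as the root, and the function names are made up here. The identity used is that $C(v^{\downarrow})$ equals the sum over the subtree of (degree minus twice the number of edges whose LCA is that node).

```python
def lca(u, v, parent, depth):
    """Least common ancestor by lifting the deeper endpoint (simple, not O(1))."""
    while u != v:
        if depth[u] < depth[v]:
            u, v = v, u
        u = parent[u]
    return u

def subtree_cuts(parent, edges):
    """parent[v] is v's tree parent (parent[0] = -1, and parent[v] < v is assumed).
    Returns cut[v] = number of graph edges leaving the subtree rooted at v."""
    n = len(parent)
    depth = [0] * n
    for v in range(1, n):
        depth[v] = depth[parent[v]] + 1
    score = [0] * n                  # degree minus 2 * (#edges whose LCA is this node)
    for u, v in edges:
        score[u] += 1
        score[v] += 1
        score[lca(u, v, parent, depth)] -= 2   # edge stays inside the LCA's subtree
    cut = score[:]
    for v in range(n - 1, 0, -1):    # children are processed before their parents
        cut[parent[v]] += cut[v]
    return cut
```

The smallest entry of `cut` over non-root vertices is the best cut that the tree crosses exactly once.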

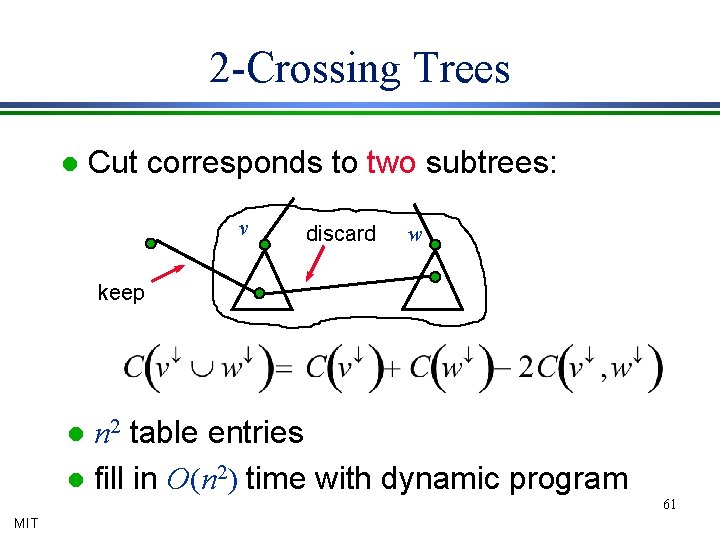

2-Crossing Trees
- Cut corresponds to two subtrees:
  - [Figure: subtrees at v and w; labels: keep, discard]
- $n^2$ table entries
- fill in $O(n^2)$ time with dynamic program

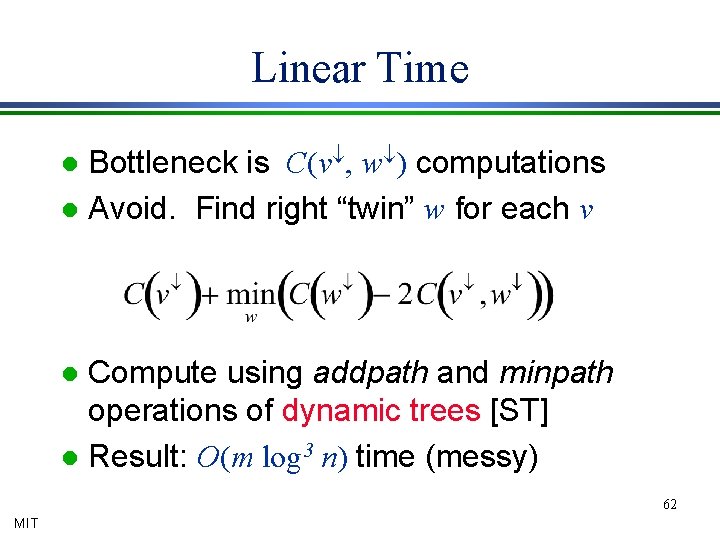

Linear Time
- Bottleneck is $C(v^{\downarrow}, w^{\downarrow})$ computations
- Avoid. Find right “twin” w for each v
- Compute using addpath and minpath operations of dynamic trees [ST]
- Result: $O(m \log^3 n)$ time (messy)

How do we verify a minimum cut?

Network Design
Randomized Rounding

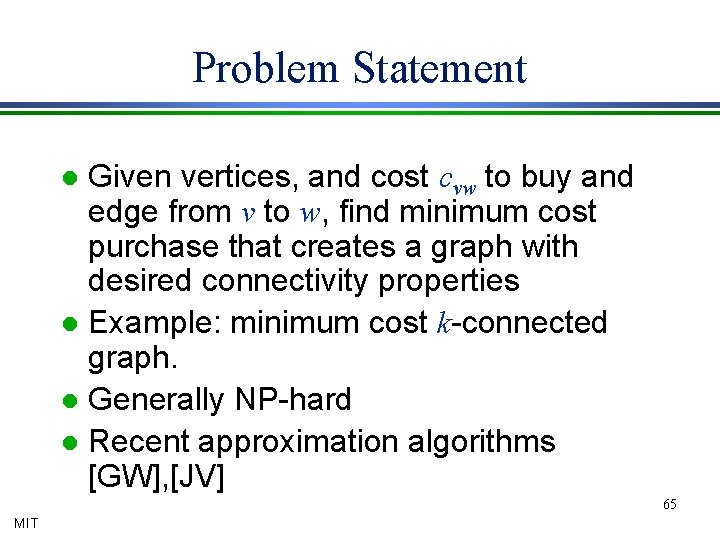

Problem Statement
- Given vertices, and cost $c_{vw}$ to buy an edge from v to w, find minimum cost purchase that creates a graph with desired connectivity properties
- Example: minimum cost k-connected graph.
- Generally NP-hard
- Recent approximation algorithms [GW], [JV]

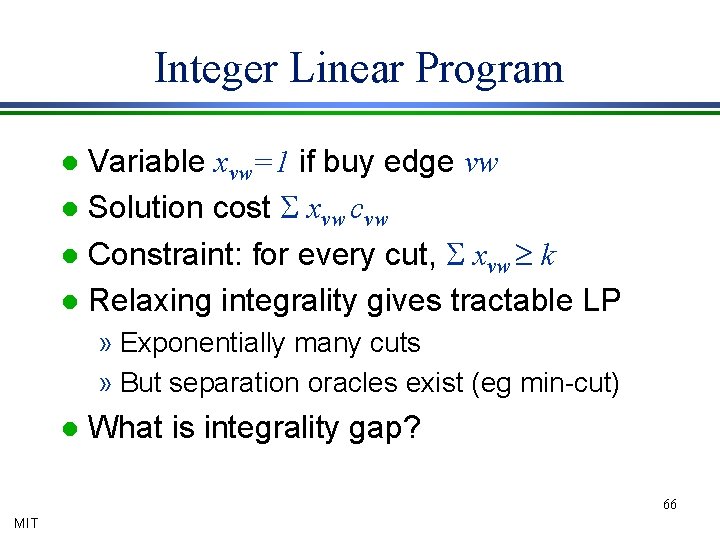

Integer Linear Program
- Variable $x_{vw} = 1$ if buy edge vw
- Solution cost $\sum x_{vw} c_{vw}$
- Constraint: for every cut, $\sum x_{vw} \ge k$
- Relaxing integrality gives tractable LP
  - Exponentially many cuts
  - But separation oracles exist (e.g. min-cut)
- What is integrality gap?

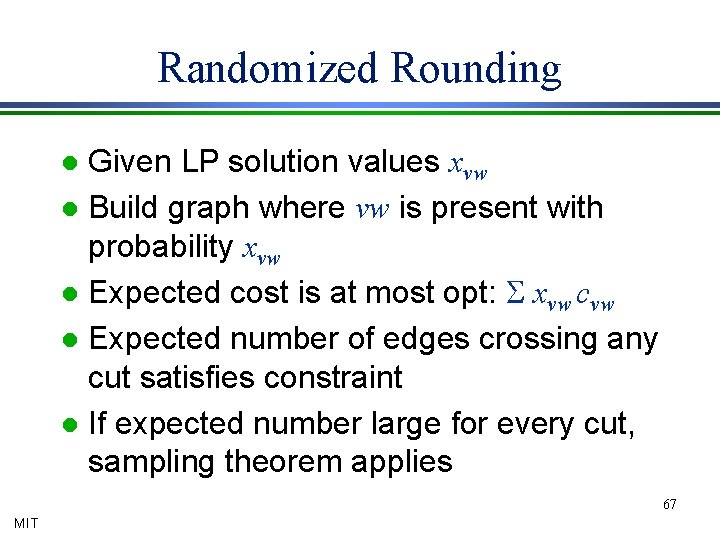

Randomized Rounding
- Given LP solution values $x_{vw}$
- Build graph where vw is present with probability $x_{vw}$
- Expected cost is at most opt: $\sum x_{vw} c_{vw}$
- Expected number of edges crossing any cut satisfies constraint
- If expected number large for every cut, sampling theorem applies
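The rounding step itself is a one-liner; the sketch below shows it together with the cost bookkeeping. The dictionary representation of the fractional solution and all function names are assumptions for illustration, and the fractional values are taken as given (no LP is solved here).

```python
import random

def round_solution(x, rng):
    """Buy edge e independently with probability x[e] (x is a fractional LP solution)."""
    return [e for e, xe in x.items() if rng.random() < xe]

def purchase_cost(bought, cost):
    """Total cost of the edges actually bought."""
    return sum(cost[e] for e in bought)

def lp_cost(x, cost):
    """Fractional objective, which equals the expected cost of the rounded solution."""
    return sum(xe * cost[e] for e, xe in x.items())

x = {(0, 1): 1.0, (1, 2): 0.0, (0, 2): 0.5}        # hypothetical LP values
cost = {(0, 1): 3.0, (1, 2): 5.0, (0, 2): 4.0}     # hypothetical edge costs
rng = random.Random(0)
print(lp_cost(x, cost), purchase_cost(round_solution(x, rng), cost))
```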

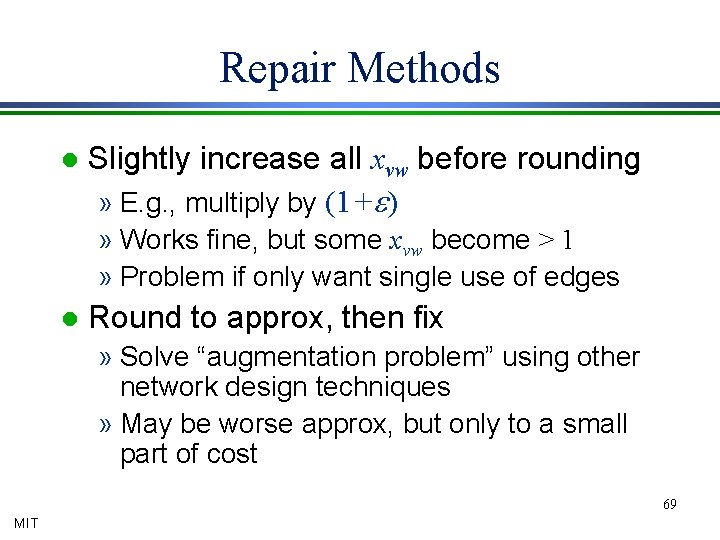

k-connected subgraph
- Fractional solution is k-connected
- So every cut has (expected) k edges crossing in rounded solution
- Sampling theorem says every cut has at least $k - (k \log n)^{1/2}$ edges
- Close approximation for large k
- Can often repair: e.g., get k-connected subgraph at cost $1 + ((\log n)/k)^{1/2}$ times min

Repair Methods
- Slightly increase all $x_{vw}$ before rounding
  - E.g., multiply by $(1+\varepsilon)$
  - Works fine, but some $x_{vw}$ become $> 1$
  - Problem if only want single use of edges
- Round to approx, then fix
  - Solve “augmentation problem” using other network design techniques
  - May be worse approx, but only to a small part of cost

Nonuniform Sampling
Concentrate on the important things
[Benczur-Karger, Karger-Levine]

s-t Min-Cuts
- Recall: if G has min-cut c, then in $G(\rho/c)$ all cuts approximate their expected values to within $\varepsilon$.
- Applications:
  - Min-cut in $O^*(mc)$ time [G]
  - Approximate/exact in $O^*((m/c)\,c) = O^*(m)$
  - s-t min-cut of value v in $O^*(mv)$
  - Approximate in $O^*(mv/c)$ time
- Trouble if c is small and v large.

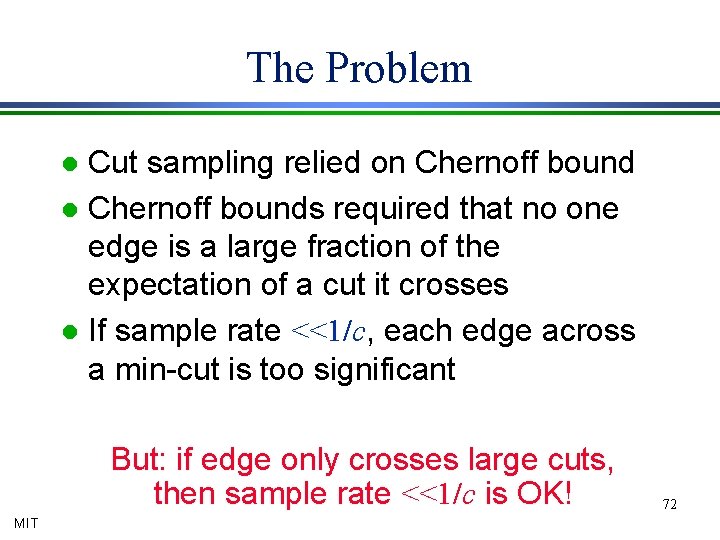

The Problem
- Cut sampling relied on Chernoff bound
- Chernoff bounds required that no one edge is a large fraction of the expectation of a cut it crosses
- If sample rate $\ll 1/c$, each edge across a min-cut is too significant
- But: if edge only crosses large cuts, then sample rate $\ll 1/c$ is OK!

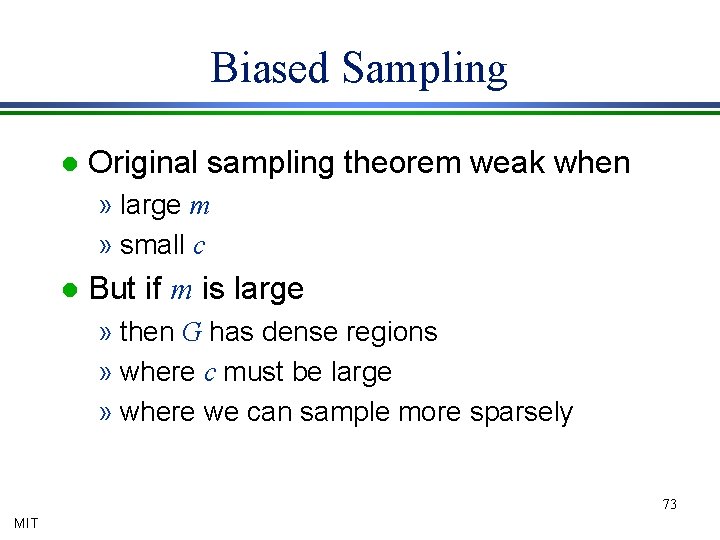

Biased Sampling
- Original sampling theorem weak when
  - large m
  - small c
- But if m is large
  - then G has dense regions
  - where c must be large
  - where we can sample more sparsely

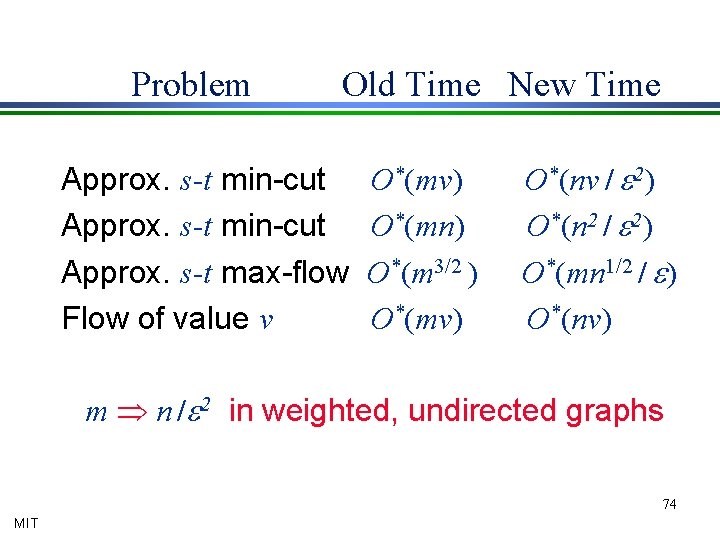

Old Time → New Time
- Problems: approx. s-t min-cut, approx. s-t max-flow, flow of value v
- $O^*(mv) \to O^*(nv/\varepsilon^2)$
- $O^*(mn) \to O^*(n^2/\varepsilon^2)$
- $O^*(m^{3/2}) \to O^*(mn^{1/2}/\varepsilon)$
- $O^*(mv) \to O^*(nv)$
- $m \Rightarrow n/\varepsilon^2$ in weighted, undirected graphs

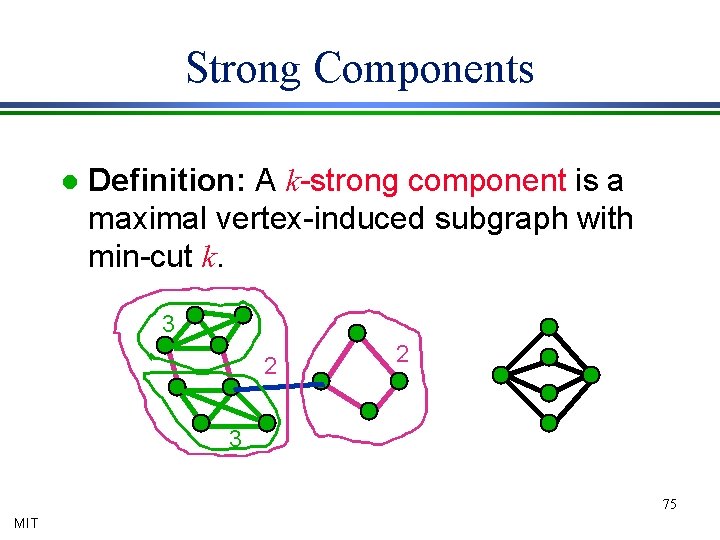

Strong Components
- Definition: A k-strong component is a maximal vertex-induced subgraph with min-cut k.
- [Figure: example graph with labels 3, 2, 2, 3]

Nonuniform Sampling
- Definition: An edge is k-strong if its endpoints are in the same k-strong component.
- Stricter than k-connected endpoints.
- Definition: The strong connectivity $c_e$ for edge e is the largest k for which e is k-strong.
- Plan: sample dense regions lightly

Nonuniform Sampling
- Idea: if an edge is k-strong, then it is in a k-connected graph
- So “safe” to sample with probability $1/k$
- Problem: if sample edges with different probabilities, E[cut value] gets messy
- Solution: if sample e with probability $p_e$, give it weight $1/p_e$
- Then E[cut value] = original cut value

Compression Theorem
- Definition: Given compression probabilities $p_e$, compressed graph $G[p_e]$
  - includes edge e with probability $p_e$ and
  - gives it weight $1/p_e$ if included
- Note $E[G[p_e]] = G$
- Theorem: $G[\rho/c_e]$
  - approximates all cuts by $\varepsilon$
  - has $O(\rho n)$ edges
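The reweighting that keeps expectations unchanged can be sketched directly. In the illustration below the compression probabilities are simply given as a list (computing edge strengths $c_e$ is not attempted), and the function names are assumptions.

```python
import random

def compress(edges, probs, rng):
    """Keep edge i with probability probs[i]; a kept edge gets weight 1 / probs[i]."""
    return [(u, v, 1.0 / p) for (u, v), p in zip(edges, probs) if rng.random() < p]

def cut_weight(weighted_edges, side):
    """Total weight of edges with exactly one endpoint in the vertex set `side`."""
    return sum(w for u, v, w in weighted_edges if (u in side) != (v in side))

edges = [(0, 1), (0, 2), (1, 2), (2, 3), (3, 4), (2, 4)]
probs = [0.5, 0.25, 1.0, 0.5, 0.8, 0.5]            # hypothetical p_e values
rng = random.Random(0)
trials = 20_000
mean = sum(cut_weight(compress(edges, probs, rng), {0, 1}) for _ in range(trials)) / trials
print(mean)   # the original cut ({0, 1} versus the rest) has value 2
```

Averaging over many compressed graphs recovers the original cut value, which is the content of the note $E[G[p_e]] = G$; the theorem's stronger claim is that a single sample with $p_e = \rho/c_e$ is already close on every cut.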

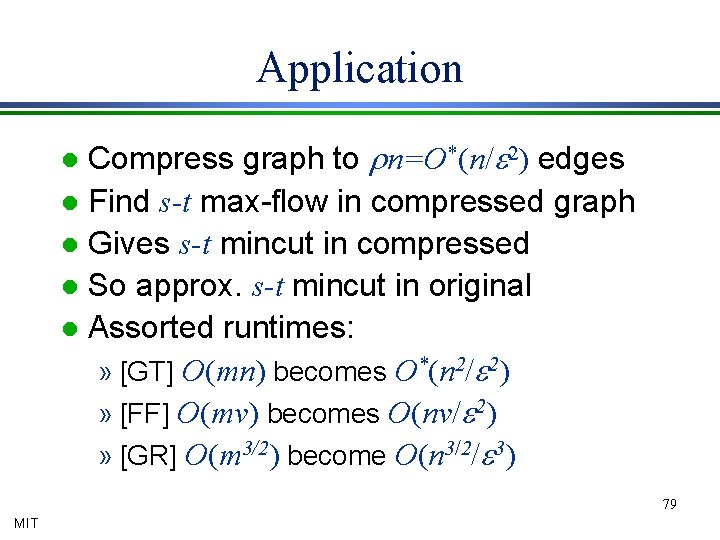

Application
- Compress graph to $\rho n = O^*(n/\varepsilon^2)$ edges
- Find s-t max-flow in compressed graph
- Gives s-t mincut in compressed
- So approx. s-t mincut in original
- Assorted runtimes:
  - [GT] $O(mn)$ becomes $O^*(n^2/\varepsilon^2)$
  - [FF] $O(mv)$ becomes $O(nv/\varepsilon^2)$
  - [GR] $O(m^{3/2})$ becomes $O(n^{3/2}/\varepsilon^3)$

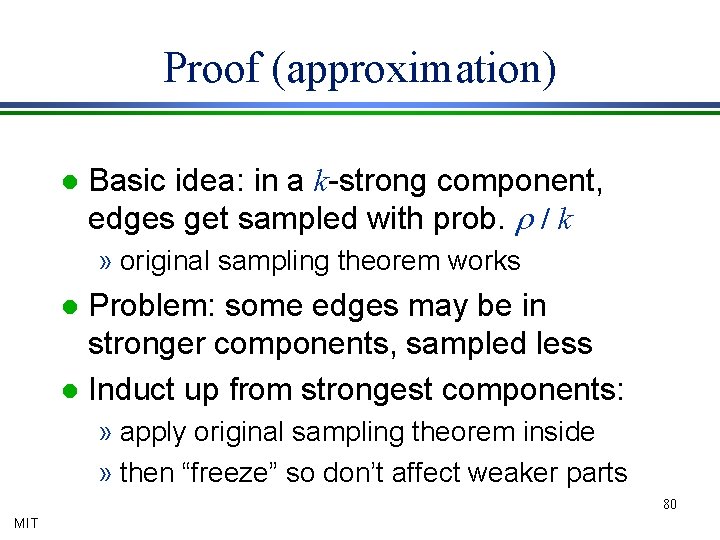

Proof (approximation)
- Basic idea: in a k-strong component, edges get sampled with prob. $\rho/k$
  - original sampling theorem works
- Problem: some edges may be in stronger components, sampled less
- Induct up from strongest components:
  - apply original sampling theorem inside
  - then “freeze” so don’t affect weaker parts

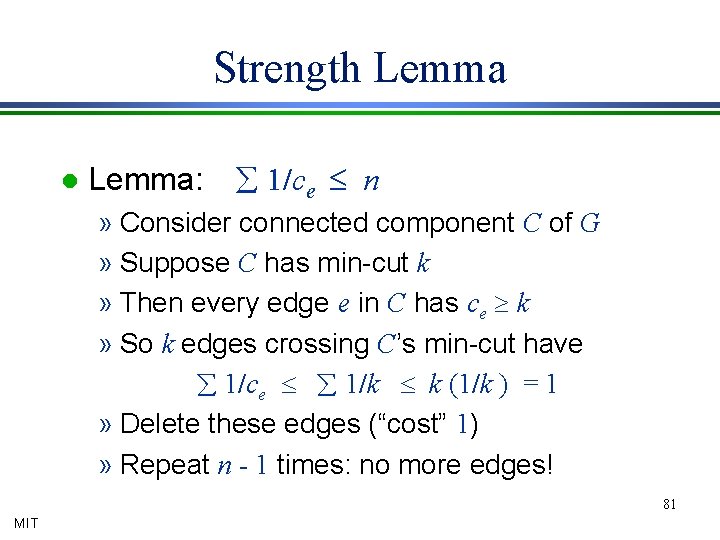

Strength Lemma
- Lemma: $\sum 1/c_e \le n$
  - Consider connected component C of G
  - Suppose C has min-cut k
  - Then every edge e in C has $c_e \ge k$
  - So k edges crossing C’s min-cut have $\sum 1/c_e \le \sum 1/k \le k(1/k) = 1$
  - Delete these edges (“cost” 1)
  - Repeat $n - 1$ times: no more edges!

Proof (edge count)
- Edge e included with probability $\rho/c_e$
- So expected number is $\sum \rho/c_e$
- We saw $\sum 1/c_e \le n$
- So expected number at most $\rho n$

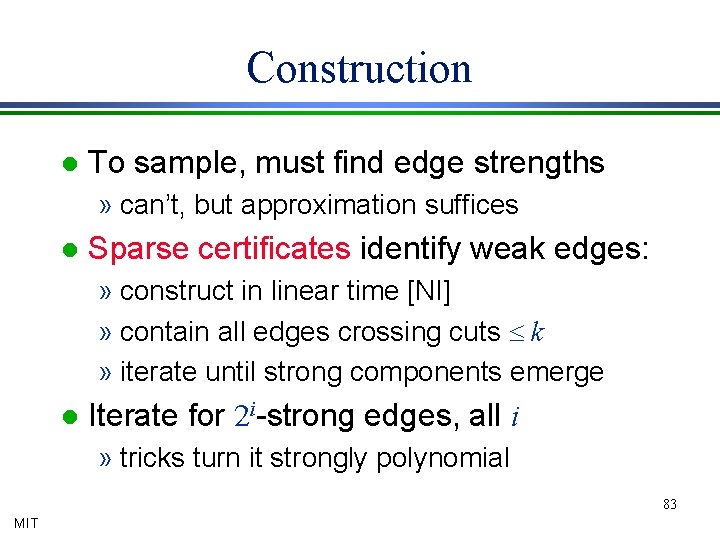

Construction
- To sample, must find edge strengths
  - can’t, but approximation suffices
- Sparse certificates identify weak edges:
  - construct in linear time [NI]
  - contain all edges crossing cuts $\le k$
  - iterate until strong components emerge
- Iterate for $2^i$-strong edges, all i
  - tricks turn it strongly polynomial

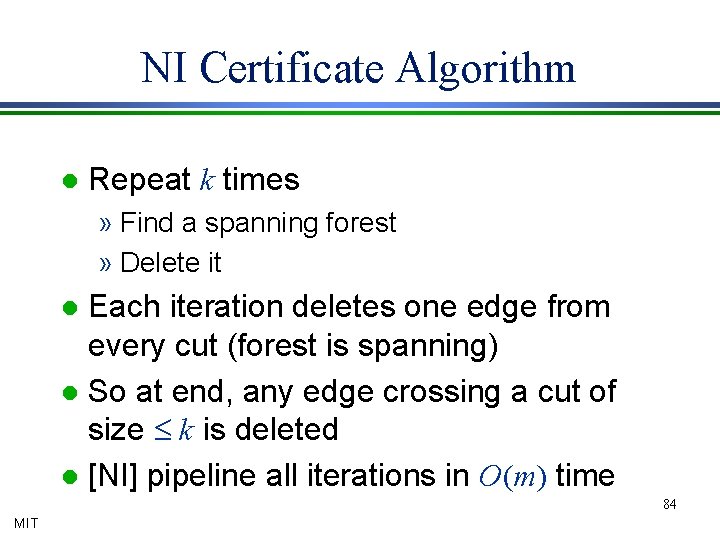

NI Certificate Algorithm
- Repeat k times
  - Find a spanning forest
  - Delete it
- Each iteration deletes one edge from every cut (forest is spanning)
- So at end, any edge crossing a cut of size $\le k$ is deleted
- [NI] pipeline all iterations in $O(m)$ time
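The unpipelined version of this procedure is simple to write down. The sketch below peels k spanning forests one at a time with union-find, so it takes roughly k passes over the edges rather than the single $O(m)$ pass attributed to [NI]; the function names are illustrative.

```python
def _find(parent, x):
    while parent[x] != x:
        parent[x] = parent[parent[x]]
        x = parent[x]
    return x

def sparse_certificate(n, edges, k):
    """Return indices of the edges in k successively peeled spanning forests."""
    remaining = list(range(len(edges)))
    certificate = []
    for _ in range(k):
        parent = list(range(n))
        leftover = []
        for i in remaining:
            u, v = edges[i]
            ru, rv = _find(parent, u), _find(parent, v)
            if ru != rv:             # edge joins two components: it is in this forest
                parent[ru] = rv
                certificate.append(i)
            else:
                leftover.append(i)
        remaining = leftover         # "delete" the forest before the next round
    return certificate
```

The result has at most $k(n-1)$ edges, and every cut keeps at least $\min(k, \text{its value})$ of its edges, so all edges of cuts of size at most k are in the certificate.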

Approximate Flows
- Uniform sampling led to tree algorithms
  - Randomly partition edges
  - Merge trees from each partition element
- Compression problematic for flow
  - Edge capacities changed
  - So flow path capacities distorted
  - Flow in compressed graph doesn’t fit in original graph

Smoothing
- If edge has strength $c_e$, divide into $\beta\rho/c_e$ edges of capacity $c_e/\beta\rho$
  - Creates $\beta\rho \sum 1/c_e = \beta\rho n$ edges
- Now each edge is only $1/\beta\rho$ fraction of any cut of its strong component
- So sampling a $1/\beta$ fraction works
- So dividing into $\beta$ groups works
- Yields $(1-\varepsilon)$ max-flow in $O^*(mn^{1/2}/\varepsilon)$ time

Exact Flow Algorithms
Sampling from residual graphs

Residual Graphs
- Sampling can be used to approximate cuts and flows
- A non-maximum flow can be made maximum by augmenting paths
- But residual graph is directed.
- Can sampling help?
  - Yes, to a limited extent

First Try
- Suppose current flow value f
  - residual flow value $v - f$
- Lemma: if all edges sampled with probability $\rho v / c(v-f)$ then, w.h.p., all directed cuts within $\varepsilon$ of expectations
  - Original undirected sampling used $\rho/c$
- Expectations nonzero, so no empty cut
- So, some augmenting path exists

Application
- When residual flow is i, seek augmenting path in a sample of $m\rho v/ic$ edges. Time $O(m\rho v/ic)$.
- Sum over all i from v down to 1
- Total $O(m\rho v (\log v)/c)$ since $\sum 1/i = O(\log v)$
- Here, $\varepsilon$ can be any constant $< 1$ (say ½)
- So $\rho = O(\log n)$
- Overall runtime $O^*(mv/c)$

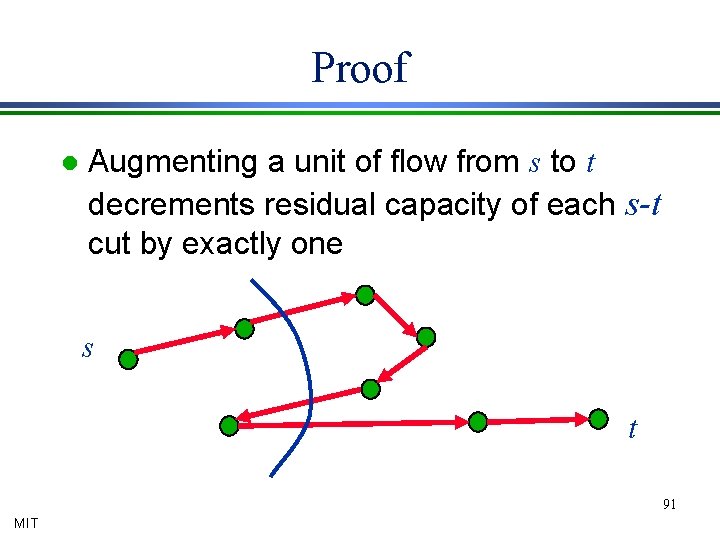

Proof
- Augmenting a unit of flow from s to t decrements residual capacity of each s-t cut by exactly one
- [Figure labels: s, t]

Analysis
- Each s-t cut loses f edges, had at least v
- So, has at least $(v-f)/v$ times as many edges as before
- But we increase sampling probability by a factor of $v/(v-f)$
- So expected number of sampled edges no worse than before
- So Chernoff and union bound as before

Strong Connectivity
- Drawback of previous: dependence on minimum cut c
- Solution: use strong connectivities
- Initialize $\alpha = 1$
- Repeat until done
  - Sample edges with probabilities $\alpha\rho/k_e$
  - Look for augmenting path
  - If don’t find, double $\alpha$

Analysis
- Theorem: if sample with probabilities $\alpha\rho/k_e$, and $\alpha > v/(v-f)$, then will find augmenting path w.h.p.
- Runtime:
  - $\alpha$ always within a factor of 2 of “right” $v/(v-f)$
  - As in compression, edge count $O(\alpha\rho n)$
  - So runtime $O(\rho n \sum_i v/i) = O^*(nv)$

Summary
- Nonuniform sampling for cuts and flows
- Approximate cuts in $O(n^2)$ time
  - for arbitrary flow value
- Max flow in $O(nv)$ time
  - only useful for “small” flow value
  - but does work for weighted graphs
  - large flow open

Network Reliability
Monte Carlo estimation

The Problem
- Input:
  - Graph G with n vertices
  - Edge failure probabilities
    - For exposition, fix a single p
- Output:
  - FAIL(p): probability G is disconnected by edge failures

Approximation Algorithms
- Computing FAIL(p) is #P complete [V]
- Exact algorithm seems unlikely
- Approximation scheme
  - Given G, p, $\varepsilon$, outputs $\varepsilon$-approximation
  - May be randomized:
    - succeed with high probability
  - Fully polynomial (FPRAS) if runtime is polynomial in n, $1/\varepsilon$

Monte Carlo Simulation
- Flip a coin for each edge, test graph
- k failures in t trials $\Rightarrow$ FAIL(p) $\approx k/t$
- $E[k/t] = \text{FAIL}(p)$
- How many trials needed for confidence?
  - “bad luck” on trials can yield bad estimate
  - clearly need at least $1/\text{FAIL}(p)$
- Chernoff bound: $O^*(1/\varepsilon^2\,\text{FAIL}(p))$ suffice to give probable accuracy within $\varepsilon$
  - Time $O^*(m/\varepsilon^2\,\text{FAIL}(p))$
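The naive simulation can be sketched as follows, assuming a single failure probability p for every edge as in the exposition. The function names are illustrative, and connectivity is tested with a small union-find.

```python
import random

def is_connected(n, edges):
    """True if the n-vertex graph with the given edges is connected."""
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    components = n
    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
            components -= 1
    return components == 1

def estimate_fail(n, edges, p, trials, rng):
    """Fraction of trials in which independent edge failures disconnect the graph."""
    failures = 0
    for _ in range(trials):
        surviving = [e for e in edges if rng.random() >= p]   # each edge fails w.p. p
        if not is_connected(n, surviving):
            failures += 1
    return failures / trials

triangle = [(0, 1), (1, 2), (0, 2)]
print(estimate_fail(3, triangle, 0.5, 20_000, random.Random(0)))
```

For the triangle the exact answer is $3p^2(1-p) + p^3$, which is 0.5 at $p = 0.5$, so the estimate is easy to sanity-check; the difficulty the following slides address is that the number of trials must grow like $1/\text{FAIL}(p)$.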

Chernoff Bound
- Random variables $X_i \in [0, 1]$
- Sum $X = \sum X_i$
- Bound deviation from expectation
  - $\Pr[\,|X - E[X]| \ge \varepsilon E[X]\,] < \exp(-\varepsilon^2 E[X] / 4)$
- If $E[X] \ge 4(\log n)/\varepsilon^2$, “tight concentration”
  - Deviation by $\varepsilon$ has probability $< 1/n$
- No one variable is a big part of $E[X]$

Application
- Let $X_i = 1$ if trial i is a failure, else 0
- Let $X = X_1 + \dots + X_t$
- Then $E[X] = t \cdot \text{FAIL}(p)$
- Chernoff says X is within relative $\varepsilon$ of $E[X]$ with probability $1 - \exp(-\varepsilon^2 t\,\text{FAIL}(p)/4)$
- So choose t to cancel other terms
  - “High probability”: $t = O(\log n / \varepsilon^2\,\text{FAIL}(p))$
  - Deviation by $\varepsilon$ has probability $< 1/n$

For network reliability
- Random edge failures
  - Estimate FAIL(p) = Pr[graph disconnects]
- Naïve Monte Carlo simulation
  - Chernoff bound, “tight concentration”: $\Pr[\,|X - E[X]| \ge \varepsilon E[X]\,] < \exp(-\varepsilon^2 E[X] / 4)$
  - $O(\log n / \varepsilon^2\,\text{FAIL}(p))$ trials expect $O(\log n / \varepsilon^2)$ network failures, which is sufficient for Chernoff
  - So estimate within $\varepsilon$ in $O^*(m/\varepsilon^2\,\text{FAIL}(p))$ time

Rare Events
- When FAIL(p) is too small, it takes too long to collect sufficient statistics
- Solution: skew trials to make interesting event more likely
- But in a way that lets you recover original probability

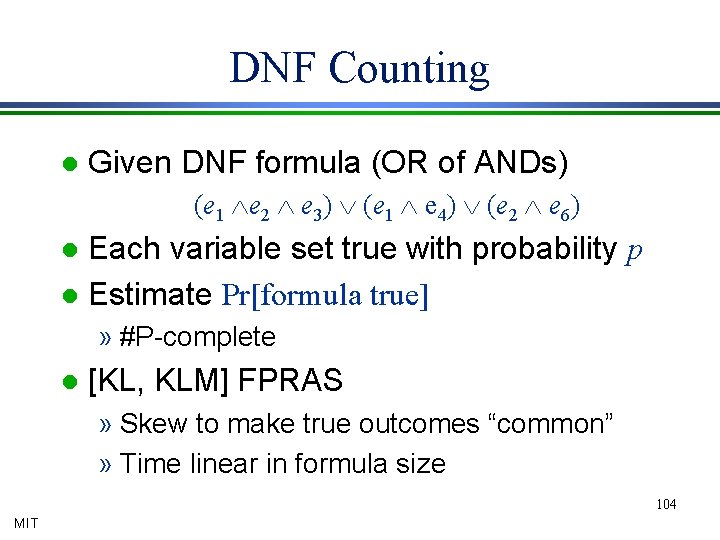

DNF Counting
- Given DNF formula (OR of ANDs): $(e_1 \wedge e_2 \wedge e_3) \vee (e_1 \wedge e_4) \vee (e_2 \wedge e_6)$
- Each variable set true with probability p
- Estimate Pr[formula true]
  - #P-complete
- [KL, KLM] FPRAS
  - Skew to make true outcomes “common”
  - Time linear in formula size

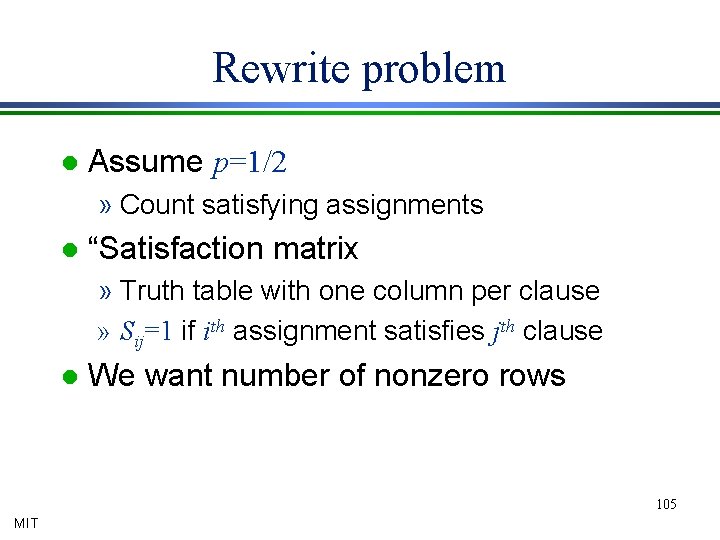

Rewrite problem
- Assume $p = 1/2$
  - Count satisfying assignments
- “Satisfaction matrix”
  - Truth table with one column per clause
  - $S_{ij} = 1$ if ith assignment satisfies jth clause
- We want number of nonzero rows

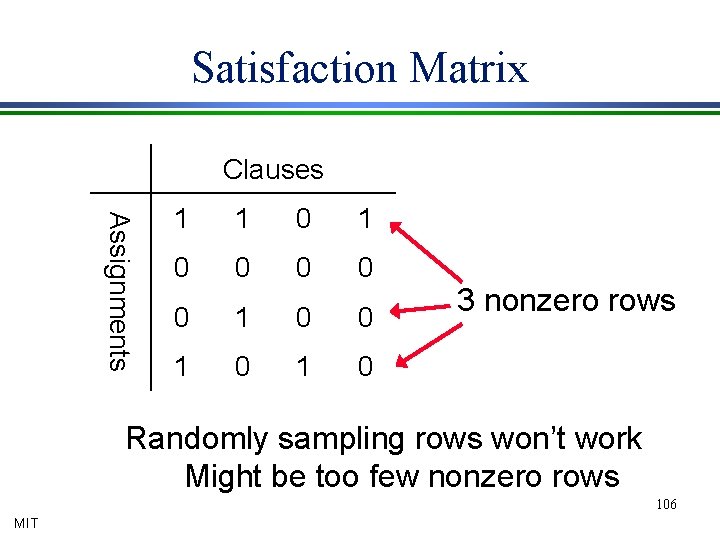

Satisfaction Matrix
- [Figure: 0/1 matrix with clauses as columns and assignments as rows; 3 nonzero rows]
- Randomly sampling rows won’t work
- Might be too few nonzero rows

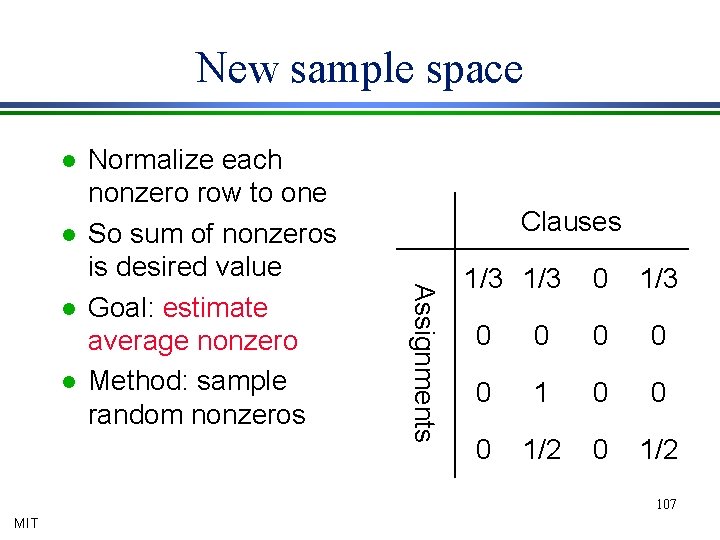

New sample space
- Normalize each nonzero row to one
- So sum of nonzeros is desired value
- Goal: estimate average nonzero
- Method: sample random nonzeros
- [Figure: normalized matrix with clauses as columns and assignments as rows; nonzero entries such as 1/3, 1, 1/2]

Sampling Nonzeros
- We know number of nonzeros/column
  - If satisfy given clause, all variables in clause must be true
  - All other variables unconstrained
- Estimate average by random sampling
  - Know number of nonzeros/column
  - So can pick random column
  - Then pick random true-for-column assignment

Few Samples Needed
- Suppose k clauses
- Then $E[\text{sample}] > 1/k$
  - $1 \le \text{satisfied clauses} \le k$
  - $1 \ge \text{sample value} \ge 1/k$
- Adding $O(k \log n / \varepsilon^2)$ samples gives “large” mean
- So Chernoff says sample mean is probably good estimate
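The sampling scheme from the last few slides can be sketched for monotone DNF formulas (all literals positive, as in the reliability application), with each variable true independently with probability p. This is an illustrative reading of the [KL, KLM] approach rather than their exact procedure: clauses are Python sets of variable indices, the function name is made up, and a clause is picked with probability proportional to $p^{|\text{clause}|}$, which generalizes "pick a random column" beyond $p = 1/2$.

```python
import random

def karp_luby_estimate(clauses, num_vars, p, samples, rng):
    """Estimate Pr[monotone DNF is true] when each variable is true w.p. p."""
    weights = [p ** len(c) for c in clauses]        # Pr[clause j is true]
    total = sum(weights)
    acc = 0.0
    for _ in range(samples):
        # Pick a clause (column) proportionally to its probability of being true...
        j = rng.choices(range(len(clauses)), weights=weights)[0]
        # ...then a random assignment conditioned on that clause being true.
        true_vars = {v for v in range(num_vars)
                     if v in clauses[j] or rng.random() < p}
        satisfied = sum(1 for c in clauses if c <= true_vars)
        acc += 1.0 / satisfied                      # the normalized matrix entry
    return total * acc / samples

# The formula from the DNF Counting slide, with e1..e6 mapped to indices 0..5.
formula = [{0, 1, 2}, {0, 3}, {1, 5}]
print(karp_luby_estimate(formula, 6, 0.1, 20_000, random.Random(0)))
```

Each sample lies between $1/k$ and 1 for k clauses, so the sample mean concentrates after a number of samples that does not depend on how rare the event is.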

Reliability Connection
- Reliability as DNF counting:
  - Variable per edge, true if edge fails
  - Cut fails if all edges do (AND of edge vars)
  - Graph fails if some cut does (OR of cuts)
  - FAIL(p) = Pr[formula true]
- Problem: the DNF has $2^n$ clauses

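Focus on Small Cuts
- Fact: $\text{FAIL}(p) > p^c$
- Theorem: if $p^c = 1/n^{2+\delta}$ then $\Pr[\text{some cut larger than an } \alpha\text{-mincut fails}] < n^{-\alpha\delta}$
- Corollary: $\text{FAIL}(p) \approx \Pr[\text{some } \alpha\text{-mincut fails}]$, where $\alpha = 1 + 2/\delta$
- Recall: $O(n^{2\alpha})$ $\alpha$-mincuts
- Enumerate with RCA, run DNF counting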

Review
- Contraction Algorithm
  - $O(n^{2\alpha})$ $\alpha$-mincuts
  - Enumerate in $O^*(n^{2\alpha})$ time

Proof of Theorem
- Given $p^c = 1/n^{2+\delta}$
- At most $n^{2\alpha}$ cuts have value $\alpha c$
- Each fails with probability $p^{\alpha c} = 1/n^{\alpha(2+\delta)}$
- $\Pr[\text{any cut of value } \alpha c \text{ fails}] = O(n^{-\alpha\delta})$
- Sum over all $\alpha > 1$

Algorithm
- RCA can enumerate all $\alpha$-minimum cuts with high probability in $O(n^{2\alpha})$ time.
- Given $\alpha$-minimum cuts, can $\varepsilon$-estimate probability one fails via Monte Carlo simulation for DNF-counting (formula size $O(n^{2\alpha})$)
- Corollary: when $\text{FAIL}(p) < n^{-(2+\delta)}$, can $\varepsilon$-approximate it in $O(cn^{2+4/\delta})$ time

Combine
- For large FAIL(p), naïve Monte Carlo
- For small FAIL(p), RCA/DNF counting
- Balance: $\varepsilon$-approx. in $O(mn^{3.5}/\varepsilon^2)$ time
- Implementations show practical for hundreds of nodes
- Again, no way to verify correct

Summary
- Naïve Monte Carlo simulation works well for common events
- Need to adapt for rare events
- Cut structure and DNF counting lets us do this for network reliability

Conclusions

Conclusion
- Randomization is a crucial tool for algorithm design
- Often yields algorithms that are faster or simpler than traditional counterparts
- In particular, gives significant improvements for core problems in graph algorithms

Randomized Methods
- Random selection
  - if most candidate choices are “good”, then a random choice is probably good
- Monte Carlo simulation
  - simulations estimate event likelihoods
- Random sampling
  - generate a small random subproblem
  - solve, extrapolate to whole problem
- Randomized rounding for approximation

Random Selection
- When most choices good, do one at random
- Recursive contraction algorithm for minimum cuts
  - Extremely simple (also to implement)
  - Fast in theory and in practice [CGKLS]

Monte Carlo
- To estimate event likelihood, run trials
- Slow for very rare events
- Bias samples to reveal rare event
- FPRAS for network reliability

Random Sampling
- Generate representative subproblem
- Use it to estimate solution to whole
  - Gives approximate solution
  - May be quickly repaired to exact solution
- Bias sample toward “important” or “sensitive” parts of problem
- New max-flow and min-cut algorithms

Randomized Rounding
- Convert fractional to integral solutions
- Get approximation algorithms for integer programs
- “Sampling” from a well designed sample space of feasible solutions
- Good approximations for network design.

Generalization
- Our techniques work because undirected graphs are matroids
- All our results extend/are special cases
  - Packing bases
  - Finding minimum “quotients”
  - Matroid optimization (MST)

Directed Graphs?
- Directed graphs are not matroids
- Directed graphs can have lots of minimum cuts
- Sampling doesn’t appear to work

Open problems
- Flow in $O(n^2)$ time
  - Eliminate v dependence
  - Apply to weighted graphs with large flows
  - Flow in $O(m)$ time?
- Las Vegas algorithms
  - Finding good certificates
- Deterministic algorithms
  - Deterministic construction of “samples”
  - Deterministically compress a graph

Randomization in Graph Optimization Problems
David Karger, MIT
http://theory.lcs.mit.edu/~karger@mit.edu
