
RANDOM VARIABLES, EXPECTATIONS, VARIANCES ETC.

Variable
Recall:
• Variable: A characteristic of a population or sample that is of interest to us.
• Random variable: A function defined on the sample space S that associates a real number with each outcome in S.

Random Variables
• If X is a function that assigns a real number to every possible outcome in a sample space of interest, X is called a random variable.
• Random variables are denoted by capital letters such as X, Y and Z.
• The realized value of the random variable is unknown until the experimental outcome is observed.

EXAMPLES
• The experiment of flipping a fair coin: S = {H, T}. A random variable codes the outcome of the flip as a number: X(H) = 1 and X(T) = 0.
• Select a student at random from all registered students at METU. We want to know the weight of the selected student. X = the weight of the selected student, S = {x : 45 kg ≤ x ≤ 300 kg}.

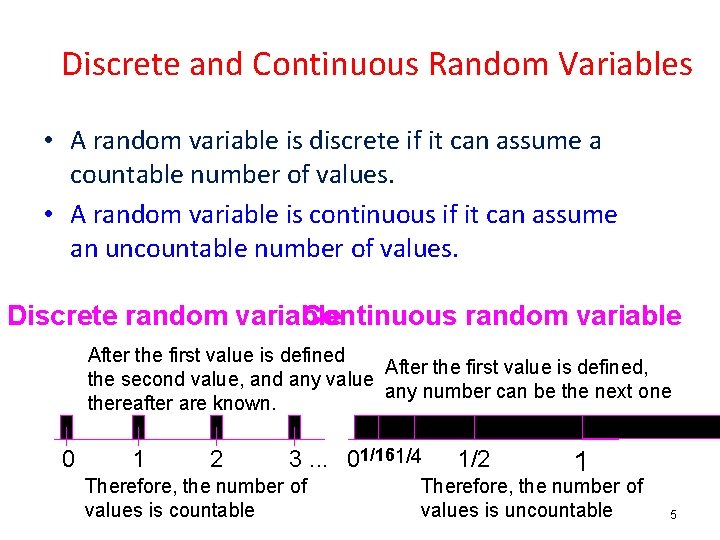

Discrete and Continuous Random Variables
• A random variable is discrete if it can assume a countable number of values.
• A random variable is continuous if it can assume an uncountable number of values.
• Discrete random variable (e.g. 0, 1, 2, 3, ...): after the first value is defined, the second value and any value thereafter are known. Therefore, the number of values is countable.
• Continuous random variable (e.g. 0, 1/16, 1/4, 1/2, 1): after the first value is defined, any number can be the next one. Therefore, the number of values is uncountable.

DISCRETE RANDOM VARIABLES
• If the set of all possible values of a r.v. X is a countable set, then X is called a discrete r.v.
• The function $f(x) = P(X = x)$ for $x = x_1, x_2, \dots$ that assigns a probability to each value x is called the probability mass function (p.m.f.).

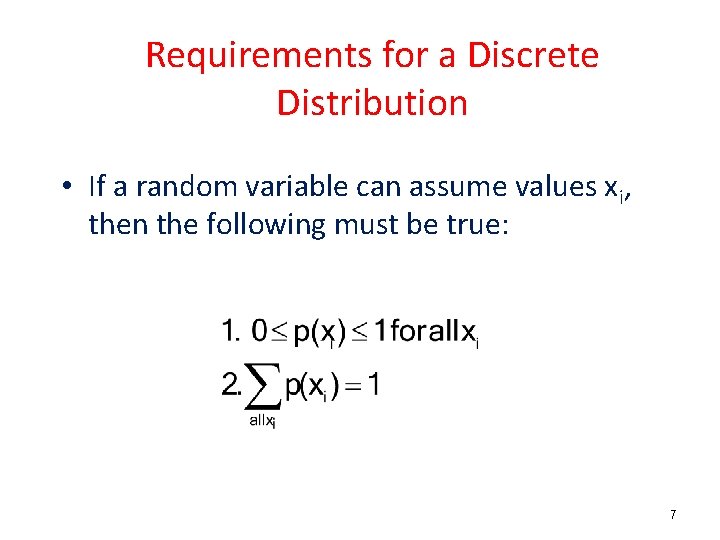

Requirements for a Discrete Distribution
• If a random variable can assume values $x_i$, then the following must be true:
$$0 \le p(x_i) \le 1 \ \text{for all } x_i, \qquad \sum_{\text{all } x_i} p(x_i) = 1$$

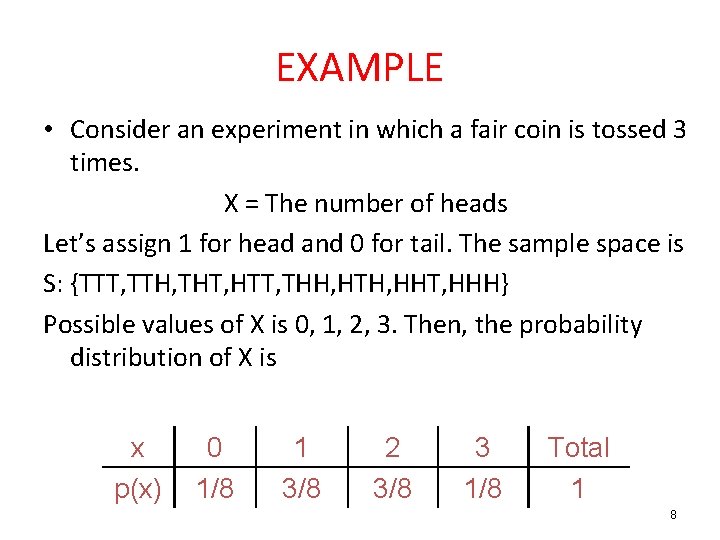

EXAMPLE
• Consider an experiment in which a fair coin is tossed 3 times. X = the number of heads. Let's assign 1 for head and 0 for tail.
• The sample space is S = {TTT, TTH, THT, HTT, THH, HTH, HHT, HHH}. The possible values of X are 0, 1, 2, 3.
• Then, the probability distribution of X is

| x | p(x) |
|---|---|
| 0 | 1/8 |
| 1 | 3/8 |
| 2 | 3/8 |
| 3 | 1/8 |
| Total | 1 |

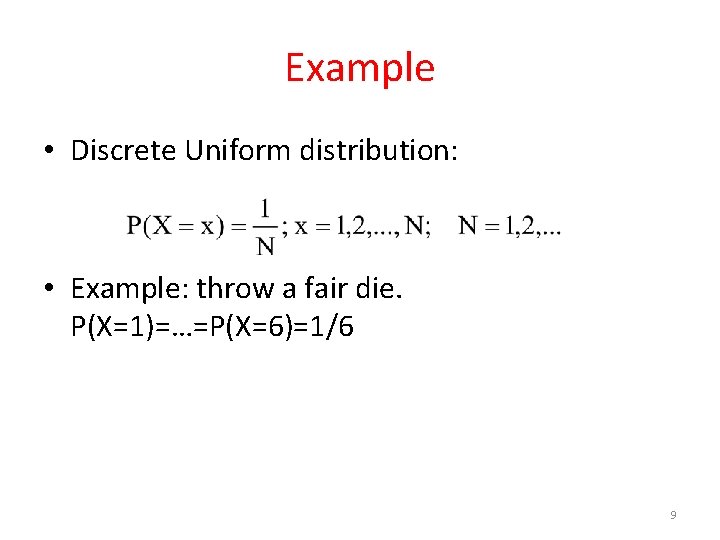
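The pmf in the coin-tossing example can be checked by brute-force enumeration. The sketch below is only an illustration: it lists the 8 equally likely outcomes, counts heads in each, and builds p(x) with exact fractions. The variable names are chosen here and are not part of the slides.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Enumerate the 8 equally likely outcomes of three fair-coin tosses.
outcomes = ["".join(t) for t in product("HT", repeat=3)]

# X maps each outcome to its number of heads.
counts = Counter(outcome.count("H") for outcome in outcomes)

# p(x) = (number of outcomes with x heads) / (total number of outcomes)
pmf = {x: Fraction(n, len(outcomes)) for x, n in sorted(counts.items())}

for x, p in pmf.items():
    print(x, p)
print("total:", sum(pmf.values()))
```

Using `Fraction` keeps the probabilities exact, so the "sums to 1" requirement can be checked without rounding error.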

Example
• Discrete Uniform distribution
• Example: throw a fair die. P(X=1) = … = P(X=6) = 1/6

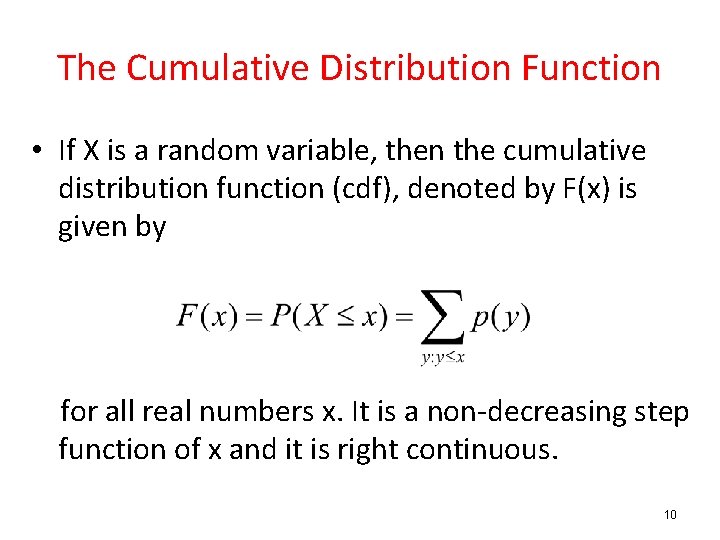

The Cumulative Distribution Function
• If X is a random variable, then the cumulative distribution function (cdf), denoted by F(x), is given by
$$F(x) = P(X \le x)$$
for all real numbers x. For a discrete random variable it is a non-decreasing step function of x and it is right continuous.

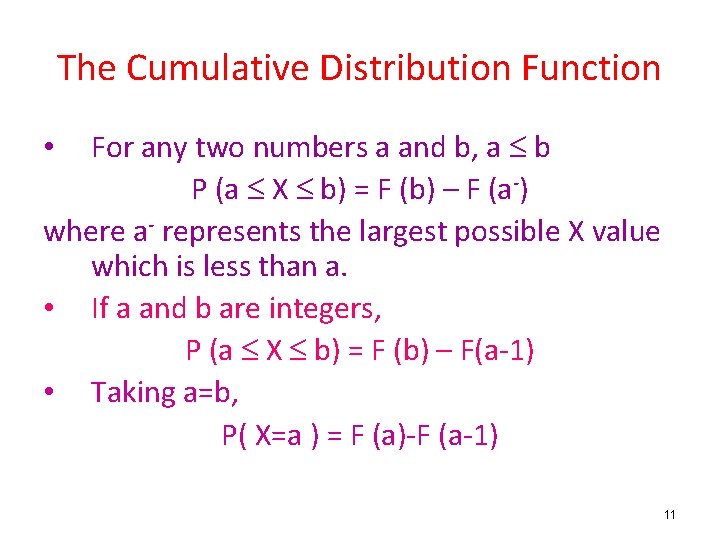

The Cumulative Distribution Function
• For any two numbers a and b with a ≤ b, P(a ≤ X ≤ b) = F(b) − F(a−), where a− represents the largest possible X value which is less than a.
• If a and b are integers (and X is integer valued), P(a ≤ X ≤ b) = F(b) − F(a − 1).
• Taking a = b, P(X = a) = F(a) − F(a − 1).

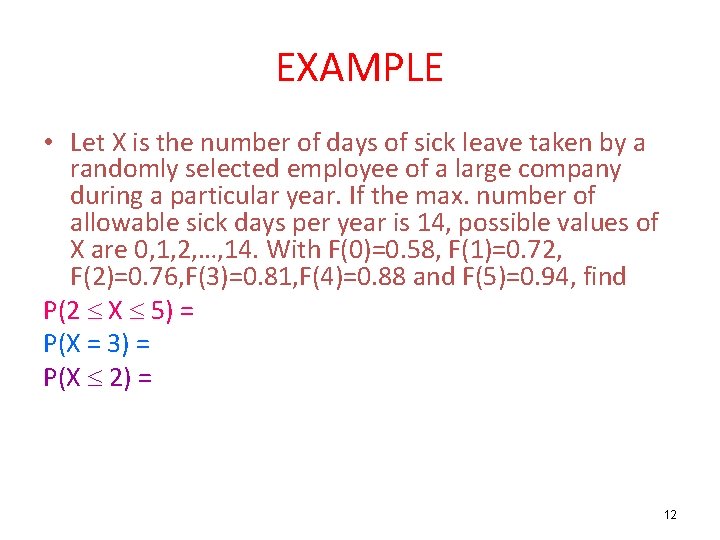

EXAMPLE
• Let X be the number of days of sick leave taken by a randomly selected employee of a large company during a particular year. If the maximum number of allowable sick days per year is 14, the possible values of X are 0, 1, 2, …, 14.
• With F(0) = 0.58, F(1) = 0.72, F(2) = 0.76, F(3) = 0.81, F(4) = 0.88 and F(5) = 0.94, find
P(2 ≤ X ≤ 5) =
P(X = 3) =
P(X ≥ 2) =

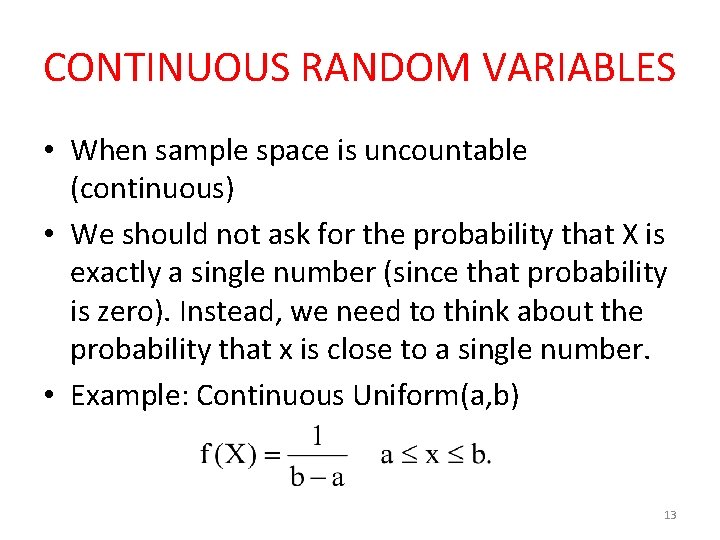
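A minimal sketch of how the sick-leave questions can be answered from the cdf values alone, assuming the first probability is meant as the inclusive interval P(2 ≤ X ≤ 5). It applies the integer rule P(a ≤ X ≤ b) = F(b) − F(a − 1); the helper name `prob_between` is hypothetical.

```python
from fractions import Fraction

# cdf values quoted in the example; F(6), ..., F(14) are not given in the text.
F = {k: Fraction(v) for k, v in
     {0: "0.58", 1: "0.72", 2: "0.76", 3: "0.81", 4: "0.88", 5: "0.94"}.items()}

def prob_between(a, b):
    """P(a <= X <= b) = F(b) - F(a - 1) for integers 1 <= a <= b <= 5."""
    return F[b] - F[a - 1]

p_2_to_5 = prob_between(2, 5)  # F(5) - F(1)
p_eq_3 = prob_between(3, 3)    # F(3) - F(2)

print(float(p_2_to_5), float(p_eq_3))
```

Parsing the decimals as fractions avoids floating-point noise in the subtractions.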

CONTINUOUS RANDOM VARIABLES
• These arise when the sample space is uncountable (continuous).
• We should not ask for the probability that X is exactly a single number (since that probability is zero). Instead, we need to think about the probability that X is close to a single number.
• Example: Continuous Uniform(a, b)

Probability Density Function (pdf)
• If the probability density around a point x is large, that means the random variable X is likely to be close to x. If, on the other hand, f(x) = 0 in some interval, then X won't be in that interval.
• Probabilities for continuous distributions are measured over ranges of values rather than single points. A probability indicates the likelihood that a value will fall within an interval.

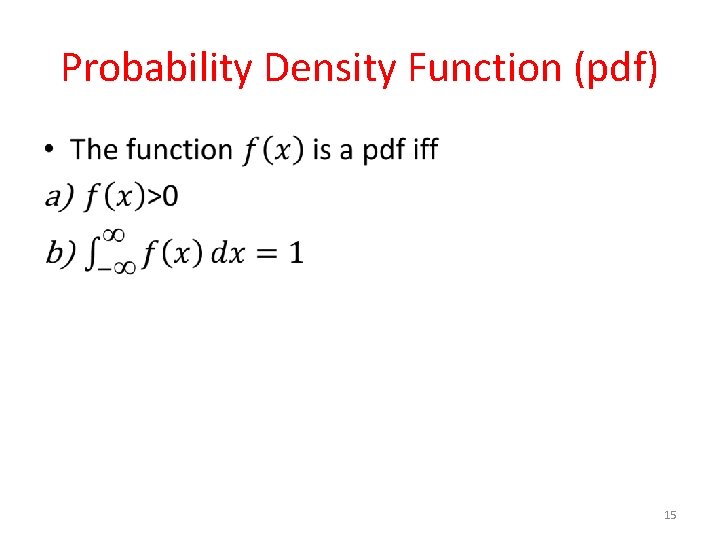

Probability Density Function (pdf)

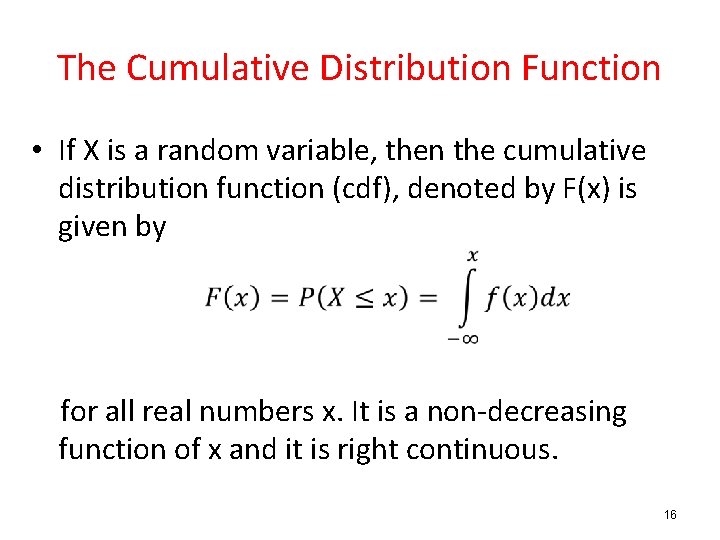

The Cumulative Distribution Function
• If X is a continuous random variable with pdf f, then the cumulative distribution function (cdf), denoted by F(x), is given by
$$F(x) = P(X \le x) = \int_{-\infty}^{x} f(t)\,dt$$
for all real numbers x. It is a non-decreasing function of x and it is right continuous.

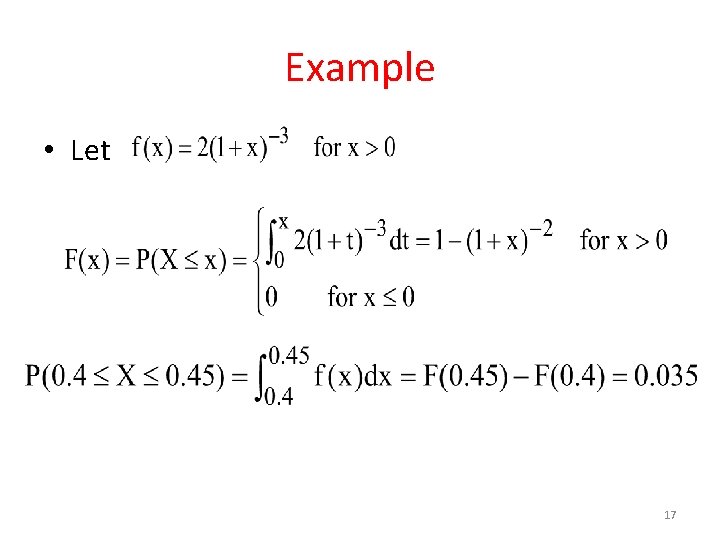

Example
• Let

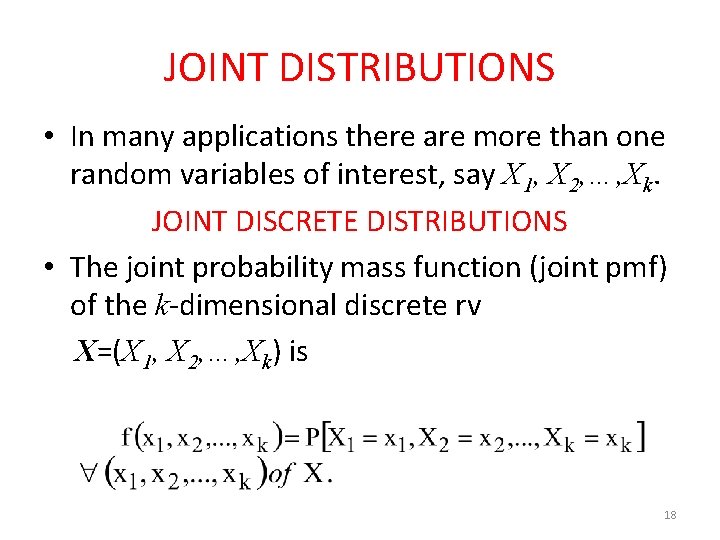

JOINT DISTRIBUTIONS
• In many applications there is more than one random variable of interest, say $X_1, X_2, \dots, X_k$.
JOINT DISCRETE DISTRIBUTIONS
• The joint probability mass function (joint pmf) of the k-dimensional discrete rv $X = (X_1, X_2, \dots, X_k)$ is
$$f(x_1, x_2, \dots, x_k) = P(X_1 = x_1, X_2 = x_2, \dots, X_k = x_k)$$

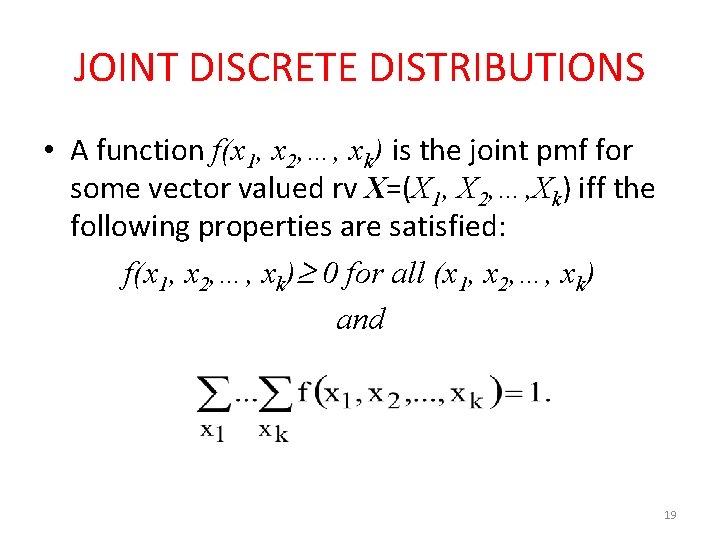

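JOINT DISCRETE DISTRIBUTIONS
• A function $f(x_1, x_2, \dots, x_k)$ is the joint pmf for some vector valued rv $X = (X_1, X_2, \dots, X_k)$ iff the following properties are satisfied:
$$f(x_1, x_2, \dots, x_k) \ge 0 \ \text{for all } (x_1, x_2, \dots, x_k) \quad\text{and}\quad \sum_{x_1} \cdots \sum_{x_k} f(x_1, x_2, \dots, x_k) = 1$$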

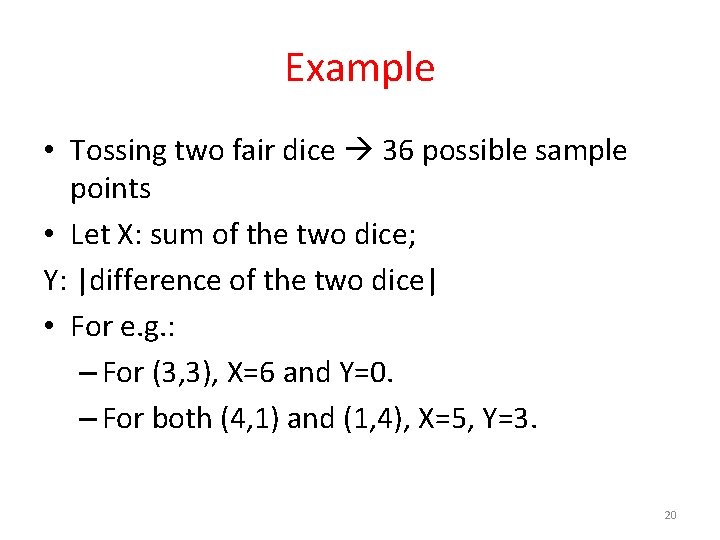

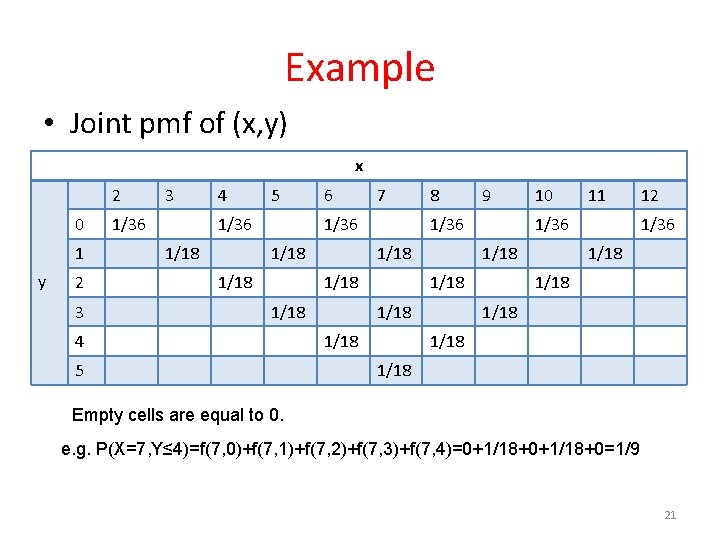

Example
• Tossing two fair dice gives 36 possible sample points.
• Let X: sum of the two dice; Y: |difference of the two dice|
• For example:
– For (3, 3), X=6 and Y=0.
– For both (4, 1) and (1, 4), X=5, Y=3.

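Example
• Joint pmf of (X, Y), with x across the columns and y down the rows:

| y \ x | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 1/36 | | 1/36 | | 1/36 | | 1/36 | | 1/36 | | 1/36 |
| 1 | | 1/18 | | 1/18 | | 1/18 | | 1/18 | | 1/18 | |
| 2 | | | 1/18 | | 1/18 | | 1/18 | | 1/18 | | |
| 3 | | | | 1/18 | | 1/18 | | 1/18 | | | |
| 4 | | | | | 1/18 | | 1/18 | | | | |
| 5 | | | | | | 1/18 | | | | | |

Empty cells are equal to 0.
• E.g. P(X=7, Y≤4) = f(7, 0) + f(7, 1) + f(7, 2) + f(7, 3) + f(7, 4) = 0 + 1/18 + 0 + 1/18 + 0 = 1/9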

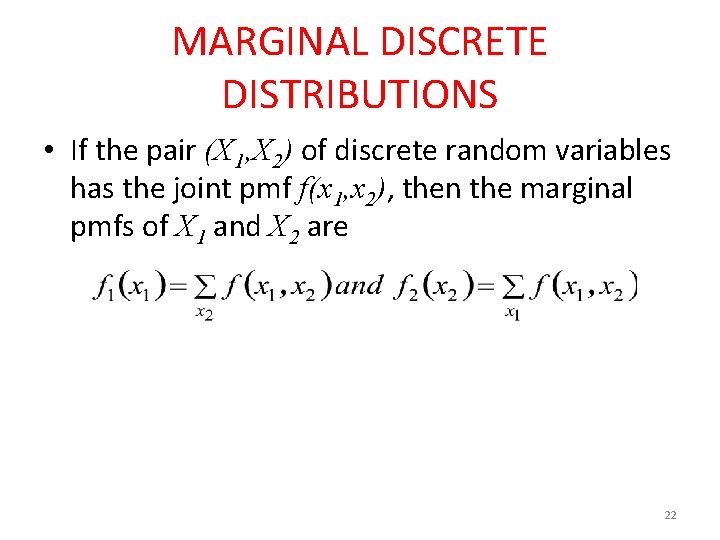
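The joint pmf of the sum and absolute difference of two fair dice can be rebuilt by enumerating the 36 sample points. The following is a sketch of that enumeration, not the slide author's own computation; `joint` and `p_x7_y_le_4` are names introduced here.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

# joint[(x, y)] = P(X = x, Y = y), X = sum, Y = |difference| of two fair dice.
joint = defaultdict(Fraction)  # Fraction() is 0, so unseen cells start at 0
for d1, d2 in product(range(1, 7), repeat=2):
    joint[(d1 + d2, abs(d1 - d2))] += Fraction(1, 36)

# P(X = 7, Y <= 4): add the cells f(7, 0), ..., f(7, 4); empty cells count as 0.
p_x7_y_le_4 = sum(joint.get((7, y), Fraction(0)) for y in range(5))

print(p_x7_y_le_4)
```

Only f(7, 1) and f(7, 3) are non-zero among the five cells, which is why the sum reduces to 1/18 + 1/18.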

MARGINAL DISCRETE DISTRIBUTIONS
• If the pair $(X_1, X_2)$ of discrete random variables has the joint pmf $f(x_1, x_2)$, then the marginal pmfs of $X_1$ and $X_2$ are
$$f_1(x_1) = \sum_{x_2} f(x_1, x_2) \quad\text{and}\quad f_2(x_2) = \sum_{x_1} f(x_1, x_2)$$

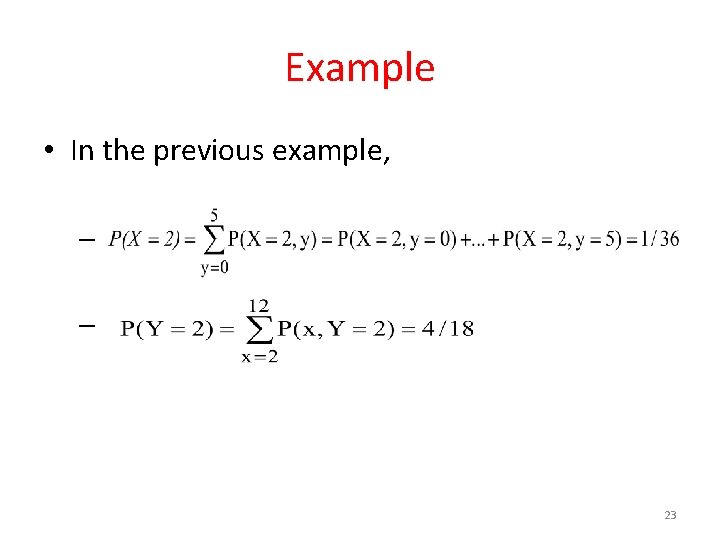

Example
• In the previous example,

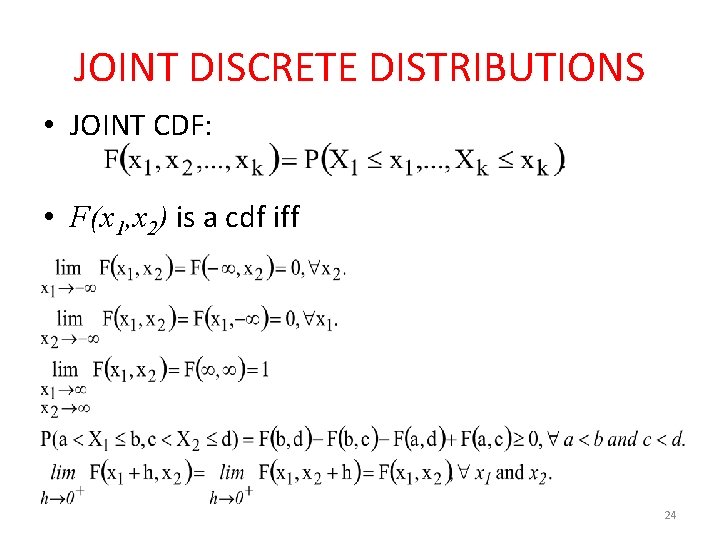
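As an illustration of marginalization in the two-dice example, the sketch below sums the joint pmf over one coordinate to get each marginal. It rebuilds the joint pmf so that it runs on its own; all names are chosen here for illustration.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

# Joint pmf of X = sum and Y = |difference| for two fair dice.
joint = defaultdict(Fraction)
for d1, d2 in product(range(1, 7), repeat=2):
    joint[(d1 + d2, abs(d1 - d2))] += Fraction(1, 36)

# Marginal pmfs: sum the joint pmf over the other variable.
marginal_x = defaultdict(Fraction)
marginal_y = defaultdict(Fraction)
for (x, y), p in joint.items():
    marginal_x[x] += p
    marginal_y[y] += p

for y in sorted(marginal_y):
    print("P(Y = %d) = %s" % (y, marginal_y[y]))
```

Each marginal is again a valid pmf, so its values should add up to 1.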

JOINT DISCRETE DISTRIBUTIONS
• JOINT CDF: $F(x_1, x_2, \dots, x_k) = P(X_1 \le x_1, X_2 \le x_2, \dots, X_k \le x_k)$
• $F(x_1, x_2)$ is a cdf iff

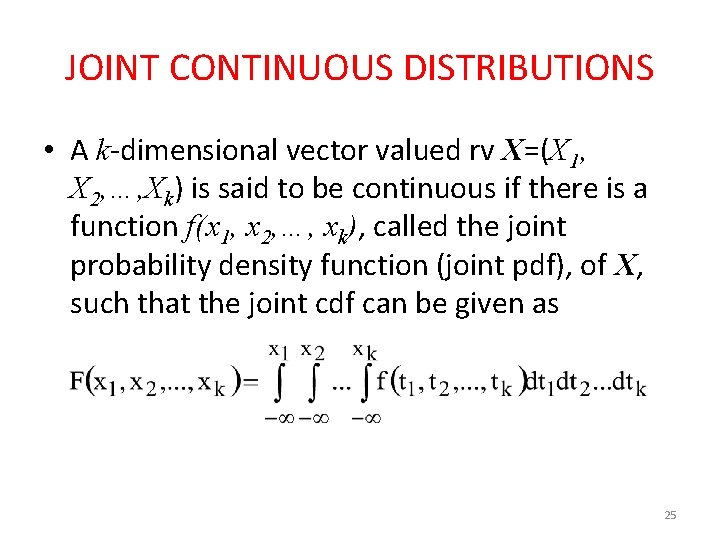

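JOINT CONTINUOUS DISTRIBUTIONS
• A k-dimensional vector valued rv $X = (X_1, X_2, \dots, X_k)$ is said to be continuous if there is a function $f(x_1, x_2, \dots, x_k)$, called the joint probability density function (joint pdf) of X, such that the joint cdf can be given as
$$F(x_1, x_2, \dots, x_k) = \int_{-\infty}^{x_k} \cdots \int_{-\infty}^{x_1} f(t_1, t_2, \dots, t_k)\,dt_1 \cdots dt_k$$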

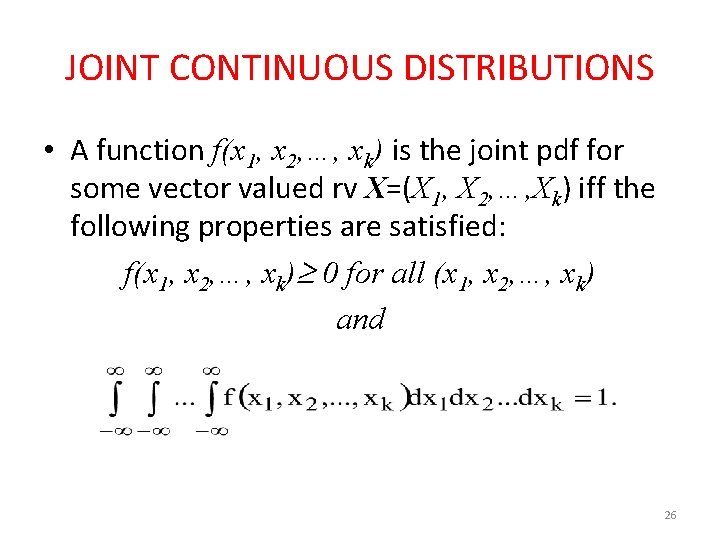

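JOINT CONTINUOUS DISTRIBUTIONS
• A function $f(x_1, x_2, \dots, x_k)$ is the joint pdf for some vector valued rv $X = (X_1, X_2, \dots, X_k)$ iff the following properties are satisfied:
$$f(x_1, x_2, \dots, x_k) \ge 0 \ \text{for all } (x_1, x_2, \dots, x_k) \quad\text{and}\quad \int_{-\infty}^{\infty} \cdots \int_{-\infty}^{\infty} f(x_1, x_2, \dots, x_k)\,dx_1 \cdots dx_k = 1$$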

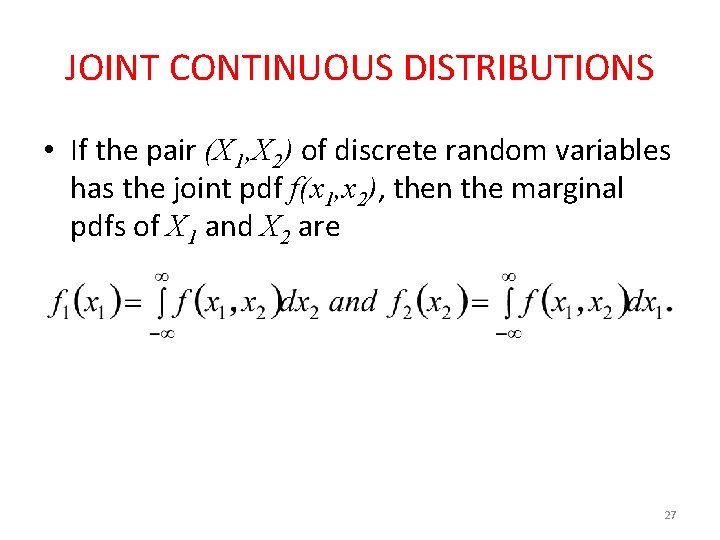

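JOINT CONTINUOUS DISTRIBUTIONS
• If the pair $(X_1, X_2)$ of continuous random variables has the joint pdf $f(x_1, x_2)$, then the marginal pdfs of $X_1$ and $X_2$ are
$$f_1(x_1) = \int_{-\infty}^{\infty} f(x_1, x_2)\,dx_2 \quad\text{and}\quad f_2(x_2) = \int_{-\infty}^{\infty} f(x_1, x_2)\,dx_1$$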

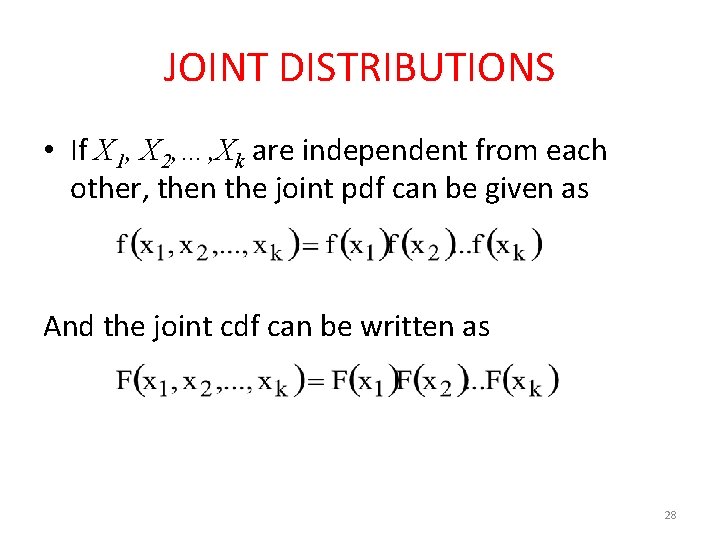

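JOINT DISTRIBUTIONS
• If $X_1, X_2, \dots, X_k$ are independent of each other, then the joint pdf can be given as
$$f(x_1, x_2, \dots, x_k) = f_1(x_1)\, f_2(x_2) \cdots f_k(x_k)$$
and the joint cdf can be written as
$$F(x_1, x_2, \dots, x_k) = F_1(x_1)\, F_2(x_2) \cdots F_k(x_k)$$
where $f_i$ and $F_i$ are the marginal pdf and cdf of $X_i$.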

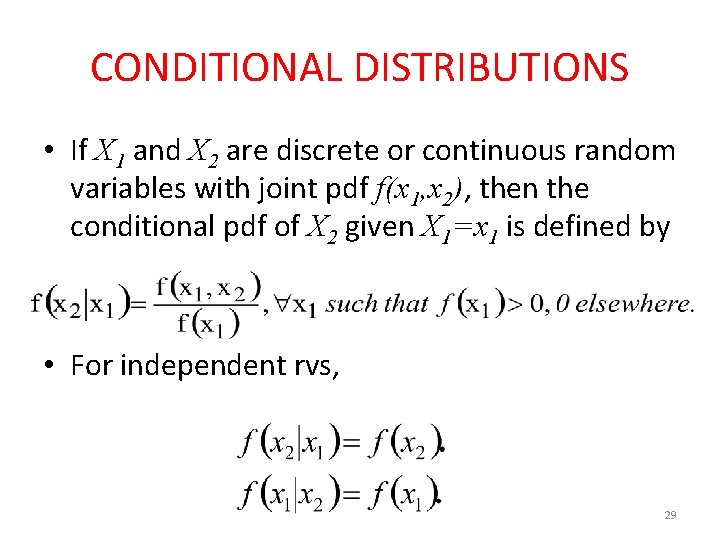

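CONDITIONAL DISTRIBUTIONS
• If $X_1$ and $X_2$ are discrete or continuous random variables with joint pdf $f(x_1, x_2)$, then the conditional pdf of $X_2$ given $X_1 = x_1$ is defined by
$$f(x_2 \mid x_1) = \frac{f(x_1, x_2)}{f_1(x_1)} \quad \text{for } x_1 \text{ such that } f_1(x_1) > 0,$$
where $f_1$ is the marginal pdf of $X_1$.
• For independent rvs, $f(x_2 \mid x_1) = f_2(x_2)$, the marginal pdf of $X_2$.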

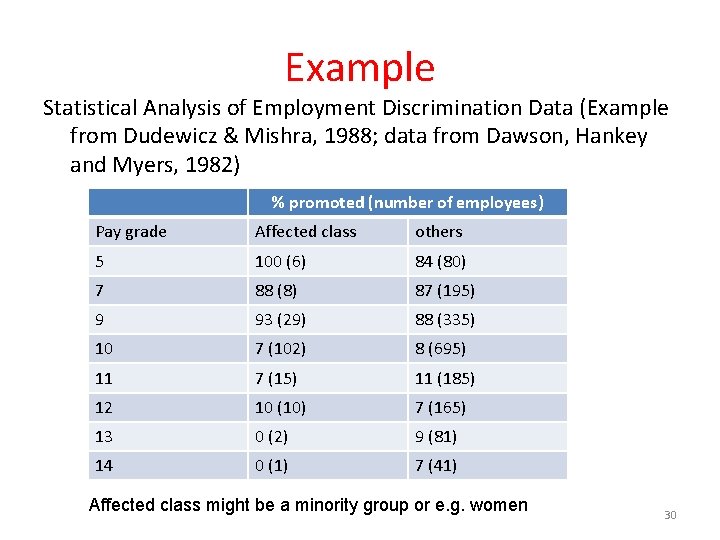

Example
Statistical Analysis of Employment Discrimination Data (Example from Dudewicz & Mishra, 1988; data from Dawson, Hankey and Myers, 1982)

% promoted (number of employees):

| Pay grade | Affected class | Others |
|---|---|---|
| 5 | 100 (6) | 84 (80) |
| 7 | 88 (8) | 87 (195) |
| 9 | 93 (29) | 88 (335) |
| 10 | 7 (102) | 8 (695) |
| 11 | 7 (15) | 11 (185) |
| 12 | 10 (10) | 7 (165) |
| 13 | 0 (2) | 9 (81) |
| 14 | 0 (1) | 7 (41) |

The affected class might be a minority group or, e.g., women.

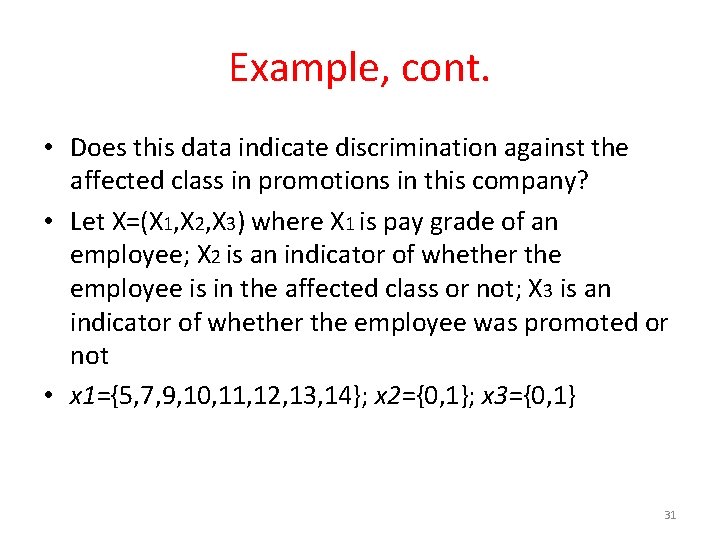

Example, cont.
• Does this data indicate discrimination against the affected class in promotions in this company?
• Let $X = (X_1, X_2, X_3)$ where $X_1$ is the pay grade of an employee; $X_2$ is an indicator of whether the employee is in the affected class or not; $X_3$ is an indicator of whether the employee was promoted or not.
• Possible values: $x_1 \in \{5, 7, 9, 10, 11, 12, 13, 14\}$; $x_2 \in \{0, 1\}$; $x_3 \in \{0, 1\}$

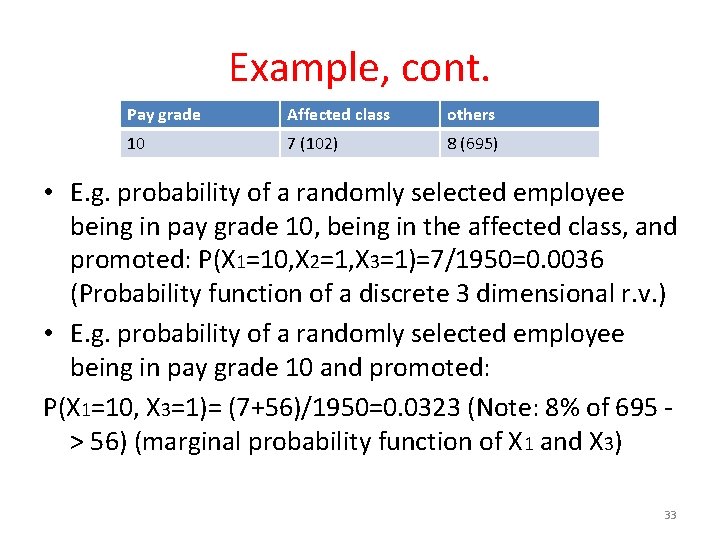

Example, cont.

| Pay grade | Affected class | Others |
|---|---|---|
| 10 | 7 (102) | 8 (695) |

• E.g., in pay grade 10 of this occupation ($X_1 = 10$) there were 102 members of the affected class and 695 members of the other classes. Seven percent of the affected class in pay grade 10 had been promoted, that is, (102)(0.07) ≈ 7 individuals out of 102 had been promoted.
• Out of 1950 employees, only 173 are in the affected class; this is not atypical in such studies.

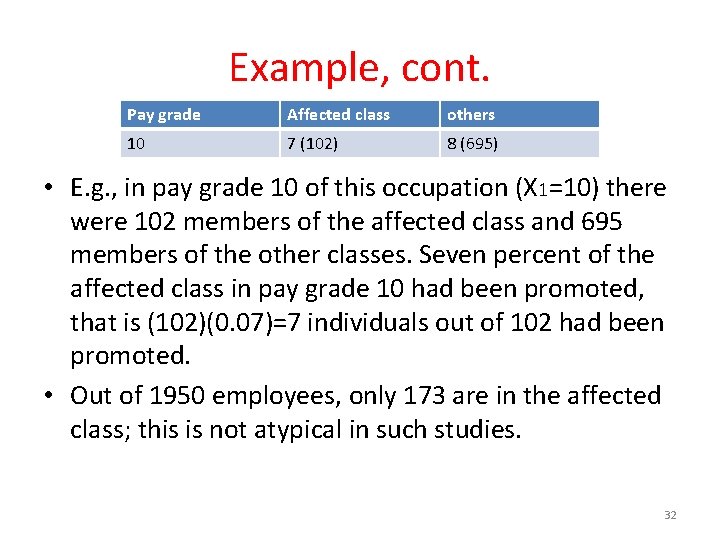

Example, cont.

| Pay grade | Affected class | Others |
|---|---|---|
| 10 | 7 (102) | 8 (695) |

• E.g. the probability of a randomly selected employee being in pay grade 10, being in the affected class, and promoted: $P(X_1 = 10, X_2 = 1, X_3 = 1) = 7/1950 = 0.0036$ (probability function of a discrete 3-dimensional r.v.)
• E.g. the probability of a randomly selected employee being in pay grade 10 and promoted: $P(X_1 = 10, X_3 = 1) = (7 + 56)/1950 = 0.0323$ (Note: 8% of 695 ≈ 56) (marginal probability function of $X_1$ and $X_3$)

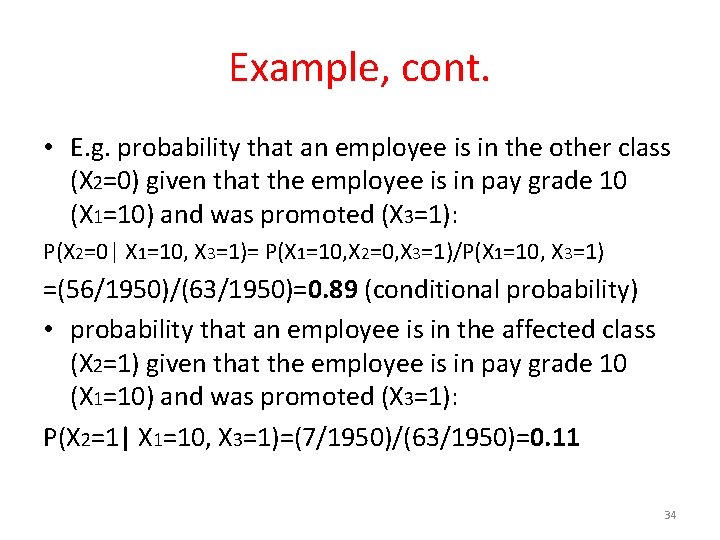

Example, cont.
• E.g. the probability that an employee is in the other class ($X_2 = 0$) given that the employee is in pay grade 10 ($X_1 = 10$) and was promoted ($X_3 = 1$): $P(X_2 = 0 \mid X_1 = 10, X_3 = 1) = P(X_1 = 10, X_2 = 0, X_3 = 1)/P(X_1 = 10, X_3 = 1) = (56/1950)/(63/1950) = 0.89$ (conditional probability)
• The probability that an employee is in the affected class ($X_2 = 1$) given that the employee is in pay grade 10 ($X_1 = 10$) and was promoted ($X_3 = 1$): $P(X_2 = 1 \mid X_1 = 10, X_3 = 1) = (7/1950)/(63/1950) = 0.11$
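The pay grade 10 calculations can be reproduced in a few lines. This sketch assumes the rounded head counts used in the example (7 promoted in the affected class, 56 promoted among the others, 1950 employees in total); the variable names are illustrative only.

```python
from fractions import Fraction

total_employees = 1950
promoted_affected = 7   # about 7% of 102, as rounded in the example
promoted_others = 56    # about 8% of 695, as rounded in the example

# Joint probability P(X1=10, X2=1, X3=1)
p_joint = Fraction(promoted_affected, total_employees)

# Marginal probability P(X1=10, X3=1): sum over both classes
p_grade10_promoted = Fraction(promoted_affected + promoted_others, total_employees)

# Conditional probabilities given pay grade 10 and promoted
p_others_given = Fraction(promoted_others, total_employees) / p_grade10_promoted
p_affected_given = p_joint / p_grade10_promoted

print(round(float(p_joint), 4), round(float(p_grade10_promoted), 4))
print(round(float(p_others_given), 2), round(float(p_affected_given), 2))
```

The two conditional probabilities are 56/63 and 7/63, so they sum to 1, as conditional probabilities over a complete partition must.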

Describing the Population
• We're interested in describing the population by computing various parameters.
• For instance, we calculate the population mean and population variance.

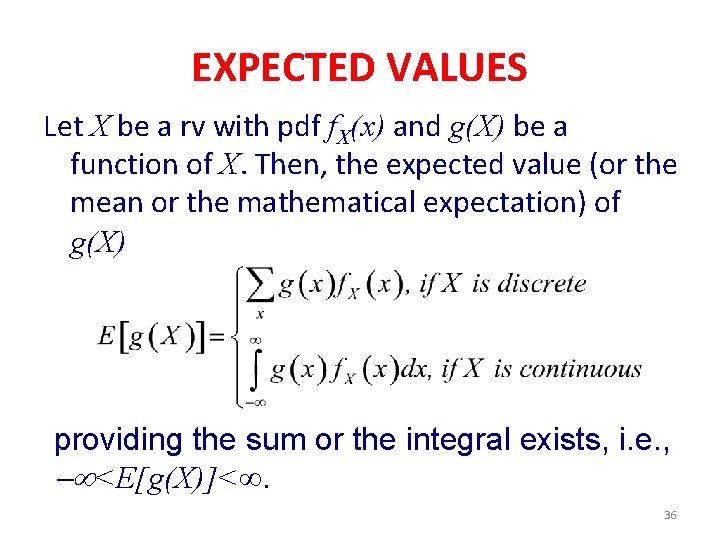

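EXPECTED VALUES
Let X be a rv with pdf (or pmf) $f_X(x)$ and g(X) be a function of X. Then the expected value (or the mean, or the mathematical expectation) of g(X) is
$$E[g(X)] = \begin{cases} \sum_x g(x) f_X(x), & \text{if } X \text{ is discrete} \\ \int_{-\infty}^{\infty} g(x) f_X(x)\,dx, & \text{if } X \text{ is continuous} \end{cases}$$
provided the sum or the integral exists, i.e., $-\infty < E[g(X)] < \infty$.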


EXPECTED VALUES
• E[g(X)] is finite if E[|g(X)|] is finite.

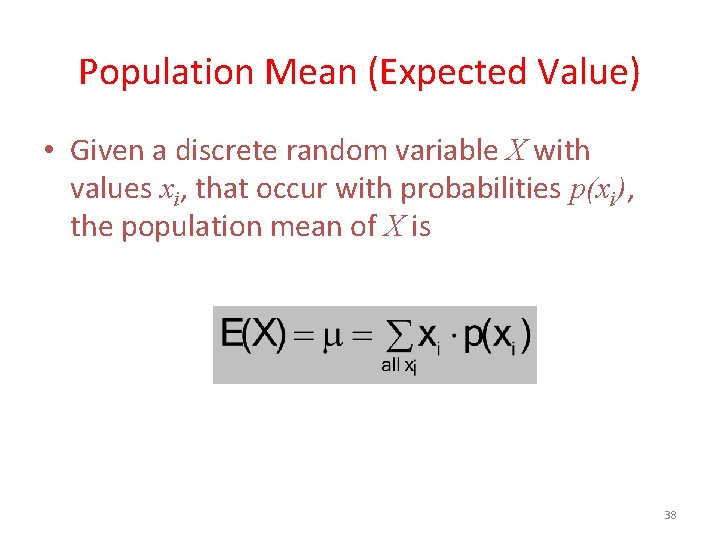

Population Mean (Expected Value)
• Given a discrete random variable X with values $x_i$ that occur with probabilities $p(x_i)$, the population mean of X is
$$\mu = E(X) = \sum_{\text{all } x_i} x_i\, p(x_i)$$

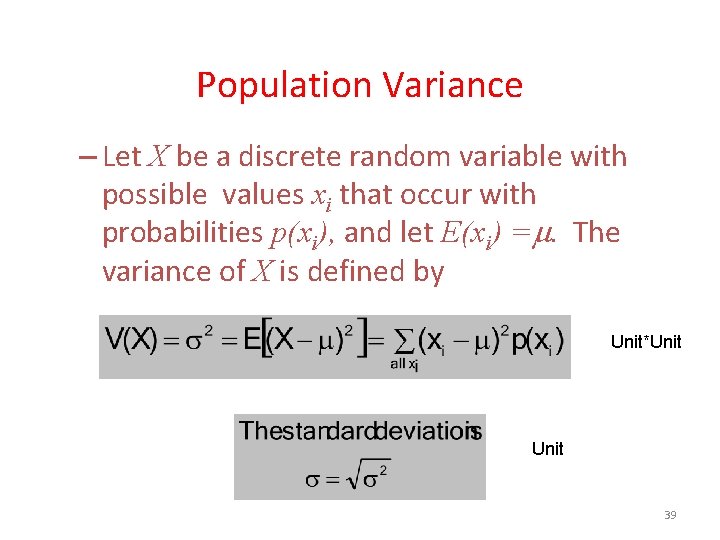

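Population Variance
• Let X be a discrete random variable with possible values $x_i$ that occur with probabilities $p(x_i)$, and let $E(X) = \mu$. The variance of X is defined by
$$\sigma^2 = V(X) = E[(X - \mu)^2] = \sum_{\text{all } x_i} (x_i - \mu)^2\, p(x_i)$$
• The variance is measured in squared units (unit × unit) of X.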

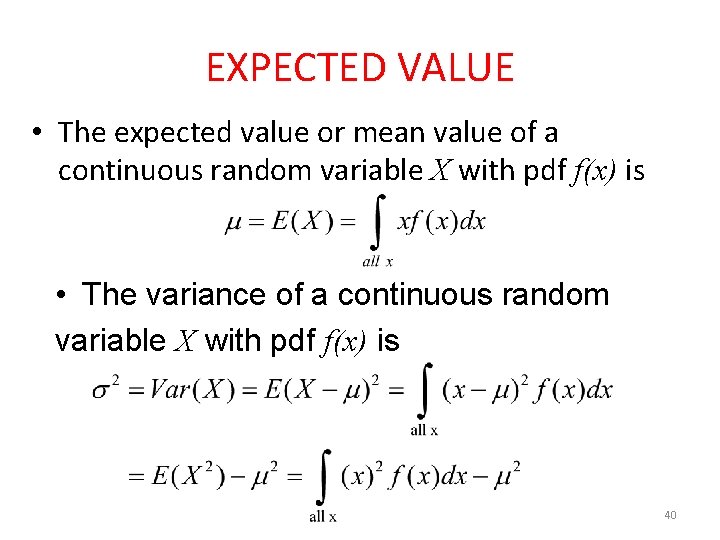

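EXPECTED VALUE
• The expected value or mean value of a continuous random variable X with pdf f(x) is
$$\mu = E(X) = \int_{-\infty}^{\infty} x f(x)\,dx$$
• The variance of a continuous random variable X with pdf f(x) is
$$\sigma^2 = V(X) = E[(X - \mu)^2] = \int_{-\infty}^{\infty} (x - \mu)^2 f(x)\,dx$$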

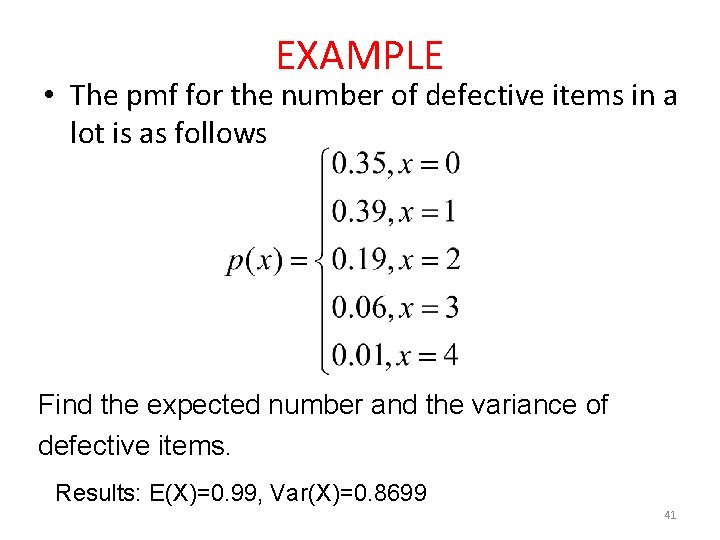

EXAMPLE
• The pmf for the number of defective items in a lot is as follows. Find the expected number and the variance of defective items.
• Results: E(X) = 0.99, Var(X) = 0.8699

EXAMPLE
• Let X be a random variable with pdf f(x) = 2(1 − x), 0 < x < 1. Find E(X) and Var(X).
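One way to work this example, sketched here using the standard shortcut Var(X) = E(X²) − [E(X)]² rather than any solution given on the slide:

```latex
\begin{align*}
E(X)   &= \int_0^1 x \cdot 2(1-x)\,dx   = 2\left(\tfrac12 - \tfrac13\right) = \tfrac13, \\
E(X^2) &= \int_0^1 x^2 \cdot 2(1-x)\,dx = 2\left(\tfrac13 - \tfrac14\right) = \tfrac16, \\
\operatorname{Var}(X) &= E(X^2) - [E(X)]^2 = \tfrac16 - \tfrac19 = \tfrac1{18}.
\end{align*}
```

As a sanity check, the pdf integrates to 2(1 − 1/2) = 1 over (0, 1), so it is a valid density.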

EXAMPLE
• What is the mathematical expectation if we win $10 when a die comes up 1 or 6 and lose $5 when it comes up 2, 3, 4 or 5? X = amount of profit

EXAMPLE
• A grab bag contains 6 packages worth $2 each, 11 packages worth $3 each, and 8 packages worth $4 each. Is it reasonable to pay $3.50 for the option of selecting one of these packages at random? X = worth of the selected package
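Both the die game and the grab-bag question reduce to computing E(X) = Σ x p(x) for a small pmf. A minimal sketch, assuming a fair die and an equally likely choice among the 25 packages; the helper `expected_value` is a name introduced here.

```python
from fractions import Fraction

def expected_value(pmf):
    """E(X) = sum of x * p(x) over a pmf given as {value: probability}."""
    return sum(x * p for x, p in pmf.items())

# Die game: win $10 on {1, 6}, lose $5 on {2, 3, 4, 5}.
die_game = {10: Fraction(2, 6), -5: Fraction(4, 6)}

# Grab bag: 6 packages worth $2, 11 worth $3, 8 worth $4 (25 in total).
grab_bag = {2: Fraction(6, 25), 3: Fraction(11, 25), 4: Fraction(8, 25)}

e_die = expected_value(die_game)
e_bag = expected_value(grab_bag)

print("die game:", e_die)
print("grab bag:", e_bag, "=", float(e_bag))
```

The die game is fair (expected profit 0), while the expected worth of a package is 77/25 = $3.08, which is below the $3.50 price.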

EXAMPLE
• Let X be the life length of a light bulb, with pdf f(x) = 2(1 − x), 0 < x < 1. Find E(X) and Var(X).

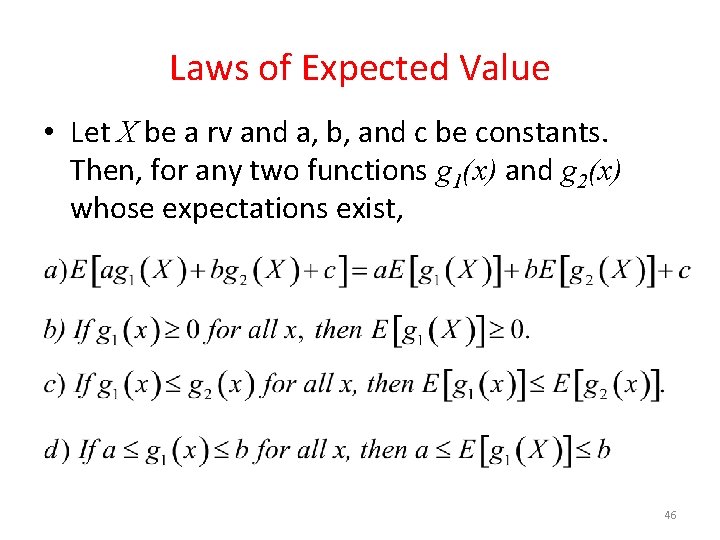

Laws of Expected Value
• Let X be a rv and a, b, and c be constants. Then, for any two functions $g_1(x)$ and $g_2(x)$ whose expectations exist,

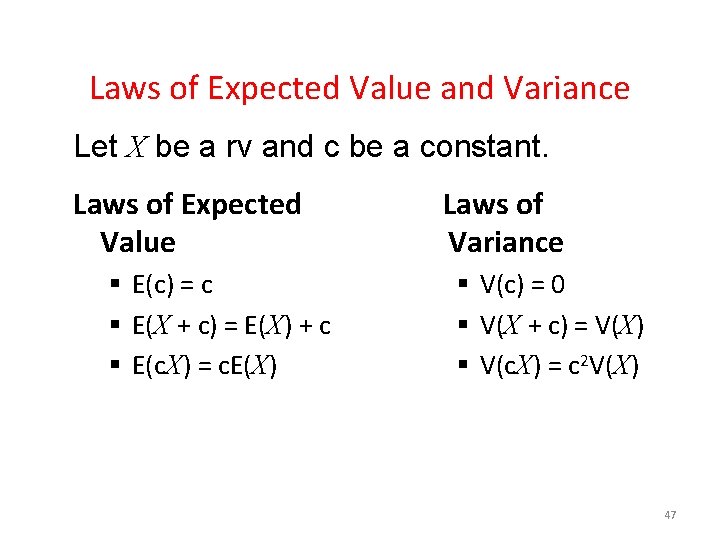

Laws of Expected Value and Variance
Let X be a rv and c be a constant.
Laws of Expected Value
§ E(c) = c
§ E(X + c) = E(X) + c
§ E(cX) = cE(X)
Laws of Variance
§ V(c) = 0
§ V(X + c) = V(X)
§ V(cX) = c² V(X)

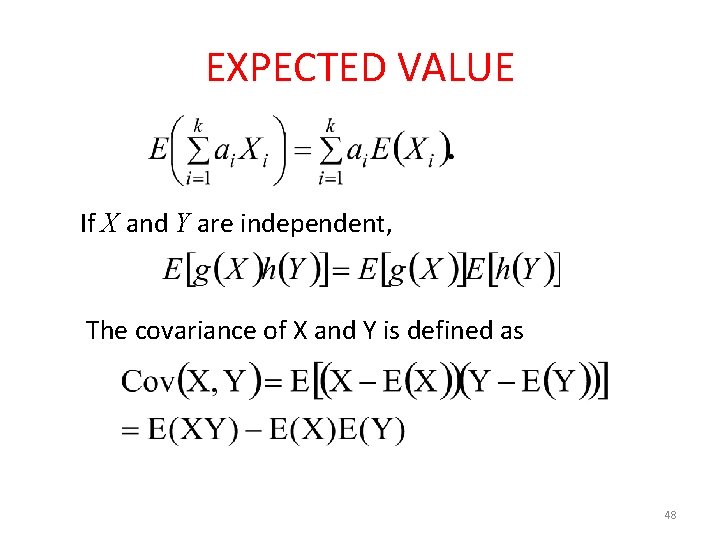
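The laws of expected value and variance can be spot-checked numerically on any small pmf. The sketch below uses a fair die and c = 3 as arbitrary illustrative choices; `mean` and `var` are helper names introduced here.

```python
from fractions import Fraction

pmf = {x: Fraction(1, 6) for x in range(1, 7)}  # fair die, chosen for illustration

def mean(pmf, g=lambda x: x):
    """E[g(X)] for a discrete pmf."""
    return sum(g(x) * p for x, p in pmf.items())

def var(pmf, g=lambda x: x):
    """V[g(X)] = E[(g(X) - E[g(X)])^2] for a discrete pmf."""
    m = mean(pmf, g)
    return sum((g(x) - m) ** 2 * p for x, p in pmf.items())

c = 3
print(mean(pmf, lambda x: x + c) == mean(pmf) + c)   # E(X + c) = E(X) + c
print(mean(pmf, lambda x: c * x) == c * mean(pmf))   # E(cX) = cE(X)
print(var(pmf, lambda x: x + c) == var(pmf))         # V(X + c) = V(X)
print(var(pmf, lambda x: c * x) == c ** 2 * var(pmf))  # V(cX) = c^2 V(X)
```

A check on one distribution is not a proof, but it shows how a shift leaves the spread unchanged while a scale factor enters the variance squared.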

EXPECTED VALUE
If X and Y are independent,
$$E[g(X)\,h(Y)] = E[g(X)]\; E[h(Y)]$$
The covariance of X and Y is defined as
$$Cov(X, Y) = E[(X - E(X))(Y - E(Y))] = E(XY) - E(X)E(Y)$$

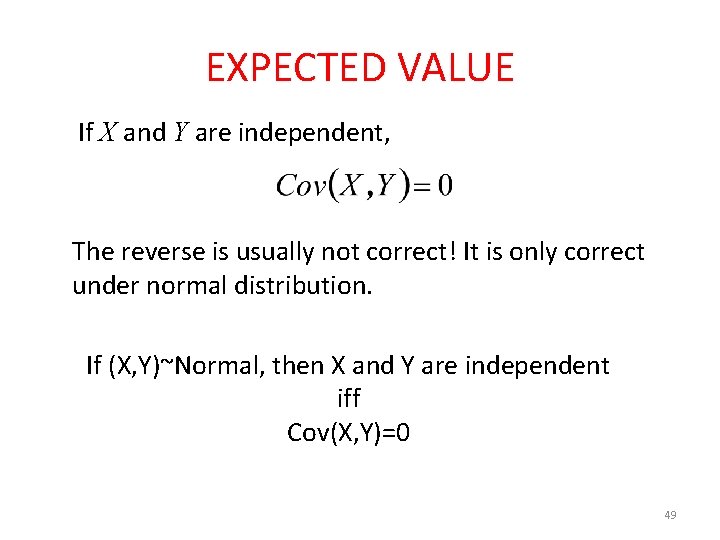

EXPECTED VALUE
If X and Y are independent, Cov(X, Y) = 0. The converse is not true in general: zero covariance does not imply independence. It does hold under joint normality: if (X, Y) is bivariate normal, then X and Y are independent iff Cov(X, Y) = 0.

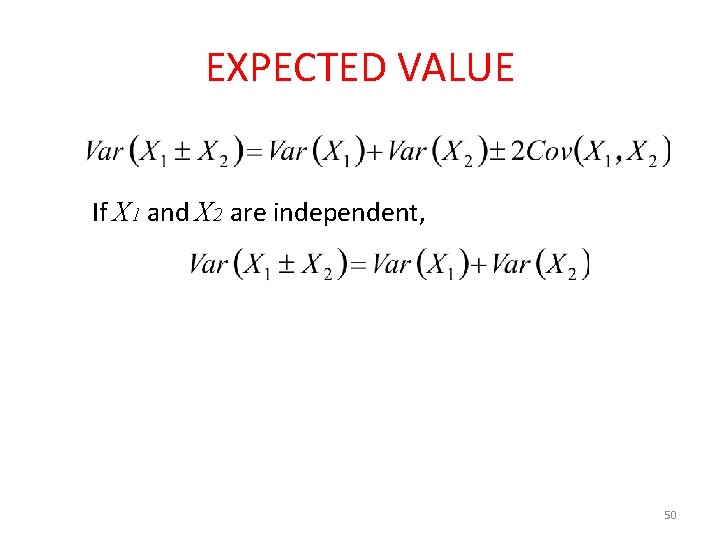
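A standard counterexample shows why zero covariance does not imply independence. In this sketch (not taken from the slides), X is uniform on {−1, 0, 1} and Y = X², so Y is completely determined by X even though the covariance is 0.

```python
from fractions import Fraction

# Joint pmf of (X, Y) with X uniform on {-1, 0, 1} and Y = X**2.
joint = {(x, x ** 2): Fraction(1, 3) for x in (-1, 0, 1)}

e_x = sum(x * p for (x, y), p in joint.items())
e_y = sum(y * p for (x, y), p in joint.items())
e_xy = sum(x * y * p for (x, y), p in joint.items())
cov = e_xy - e_x * e_y

# Independence would require P(X=0, Y=0) = P(X=0) * P(Y=0).
p_x0 = sum(p for (x, y), p in joint.items() if x == 0)
p_y0 = sum(p for (x, y), p in joint.items() if y == 0)
p_x0_y0 = joint.get((0, 0), Fraction(0))

print("Cov(X, Y) =", cov)
print("P(X=0, Y=0) =", p_x0_y0, "but P(X=0) P(Y=0) =", p_x0 * p_y0)
```

Covariance only measures linear association, and the relationship here is purely quadratic.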

EXPECTED VALUE
If $X_1$ and $X_2$ are independent,

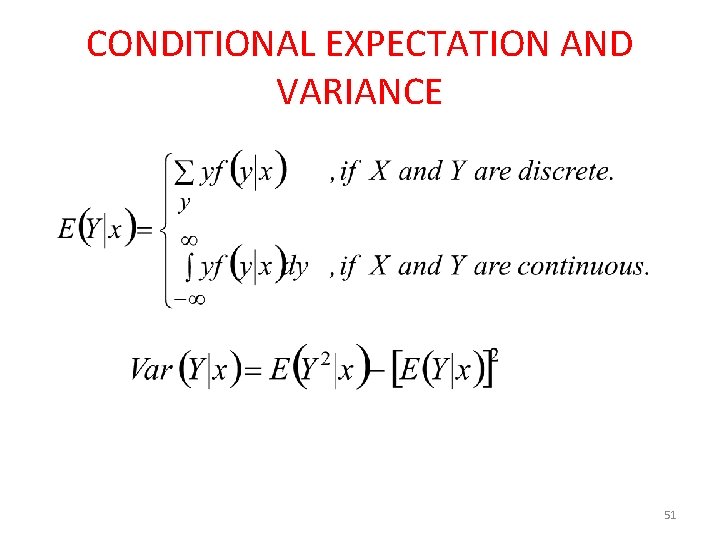

CONDITIONAL EXPECTATION AND VARIANCE

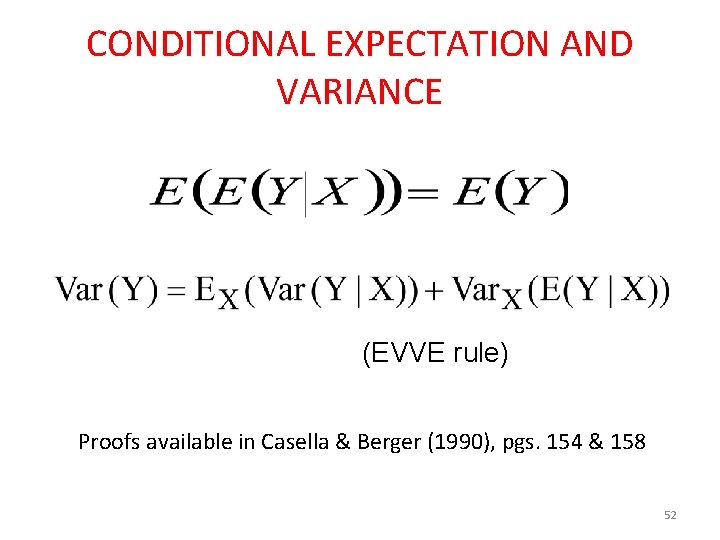

CONDITIONAL EXPECTATION AND VARIANCE
$$E(X) = E[E(X \mid Y)]$$
$$Var(X) = E[Var(X \mid Y)] + Var[E(X \mid Y)] \quad \text{(EVVE rule)}$$
Proofs available in Casella & Berger (1990), pgs. 154 & 158

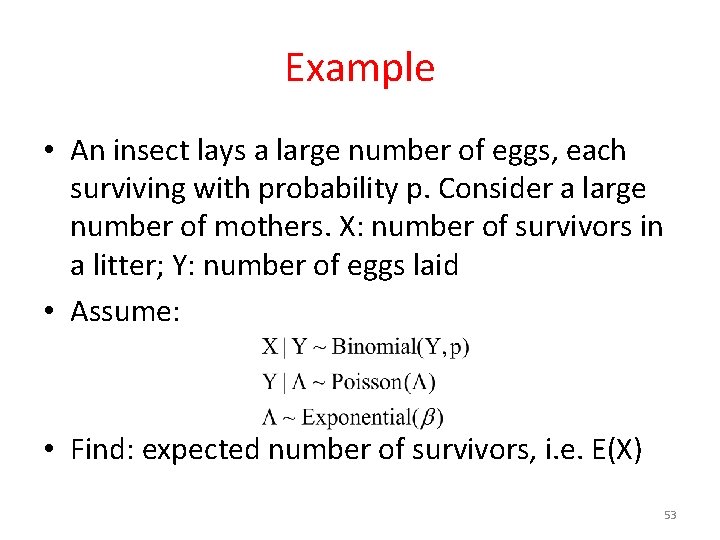

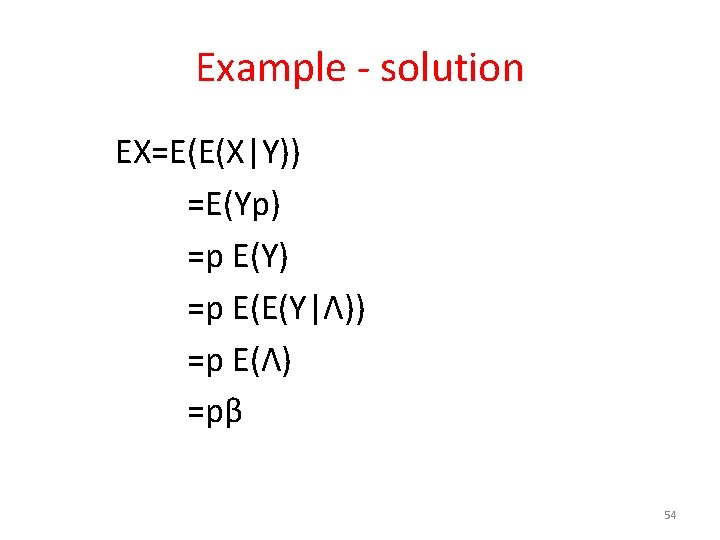

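Example
• An insect lays a large number of eggs, each surviving with probability p. Consider a large number of mothers. X: number of survivors in a litter; Y: number of eggs laid
• Assume: $X \mid Y \sim \text{Binomial}(Y, p)$, $Y \mid \Lambda \sim \text{Poisson}(\Lambda)$, $\Lambda \sim \text{Exponential}(\beta)$ with mean β
• Find: expected number of survivors, i.e. E(X)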

Example - solution
$$E(X) = E[E(X \mid Y)] = E(Yp) = p\,E(Y) = p\,E[E(Y \mid \Lambda)] = p\,E(\Lambda) = p\beta$$

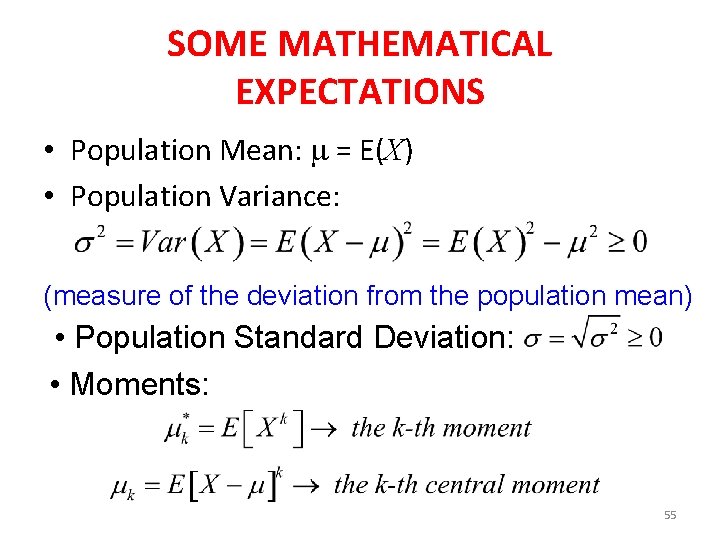
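The result E(X) = pβ can be sanity-checked by simulation. The sketch below assumes the hierarchy implied by the solution steps: Λ exponential with mean β, Y given Λ Poisson(Λ), and X given Y Binomial(Y, p). The parameter values, seed, and function names are arbitrary choices made here, and the Poisson sampler is Knuth's simple multiplication method, adequate only for small rates.

```python
import math
import random

def sample_poisson(lam, rng):
    """Draw from Poisson(lam) with Knuth's multiplication method (small lam only)."""
    threshold = math.exp(-lam)
    k, prod = 0, rng.random()
    while prod > threshold:
        k += 1
        prod *= rng.random()
    return k

def simulate_mean_survivors(p, beta, n, seed=0):
    """Monte Carlo estimate of E(X) under the assumed three-level hierarchy."""
    rng = random.Random(seed)
    total = 0
    for _ in range(n):
        lam = rng.expovariate(1 / beta)   # Lambda has mean beta
        eggs = sample_poisson(lam, rng)   # Y | Lambda ~ Poisson(Lambda)
        # X | Y ~ Binomial(Y, p): each egg survives independently with prob. p
        total += sum(rng.random() < p for _ in range(eggs))
    return total / n

p, beta = 0.5, 4.0
estimate = simulate_mean_survivors(p, beta, n=20000)
print("simulated:", estimate, "theory p*beta:", p * beta)
```

The simulated mean should land close to pβ, with the gap shrinking as n grows.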

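SOME MATHEMATICAL EXPECTATIONS
• Population Mean: $\mu = E(X)$
• Population Variance: $\sigma^2 = E[(X - \mu)^2] = E(X^2) - \mu^2$ (measure of the deviation from the population mean)
• Population Standard Deviation: $\sigma = \sqrt{\sigma^2}$
• Moments: $\mu_k' = E(X^k)$ is the k-th moment; $\mu_k = E[(X - \mu)^k]$ is the k-th central moment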

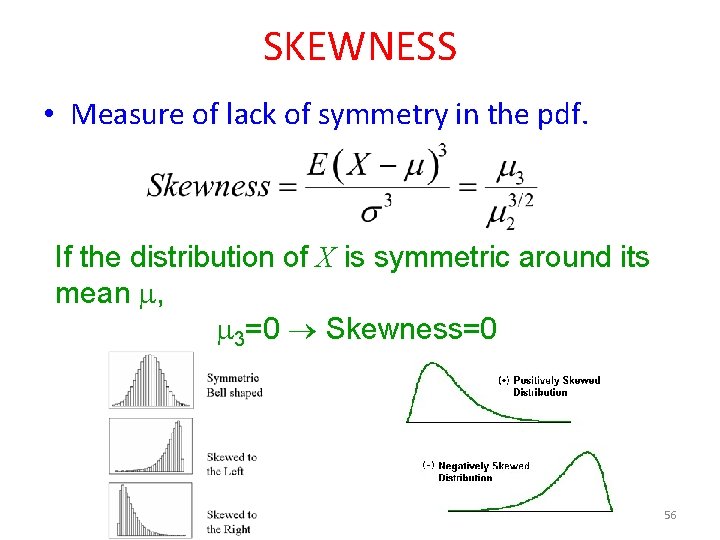

SKEWNESS
• Measure of lack of symmetry in the pdf: Skewness $= \mu_3 / \sigma^3$, where $\mu_3 = E[(X - \mu)^3]$.
• If the distribution of X is symmetric around its mean μ, then $\mu_3 = 0$ and Skewness = 0.

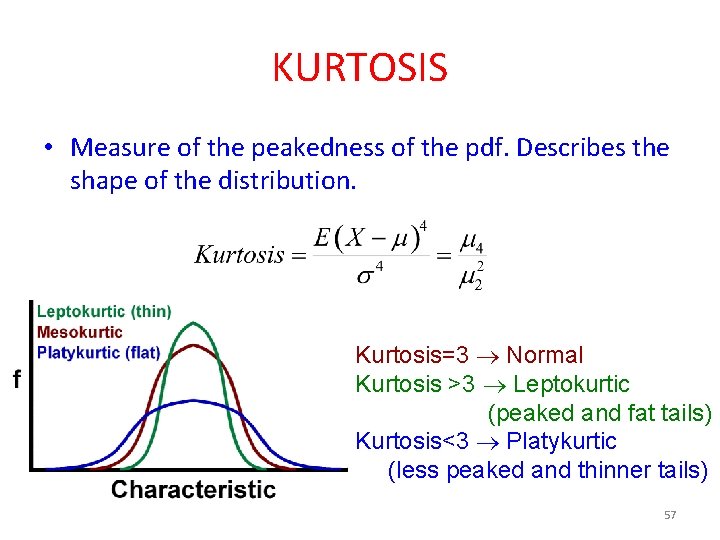

KURTOSIS
• Measure of the peakedness of the pdf; describes the shape of the distribution: Kurtosis $= \mu_4 / \sigma^4$, where $\mu_4 = E[(X - \mu)^4]$.
• Kurtosis = 3: Normal
• Kurtosis > 3: Leptokurtic (peaked and fat tails)
• Kurtosis < 3: Platykurtic (less peaked and thinner tails)

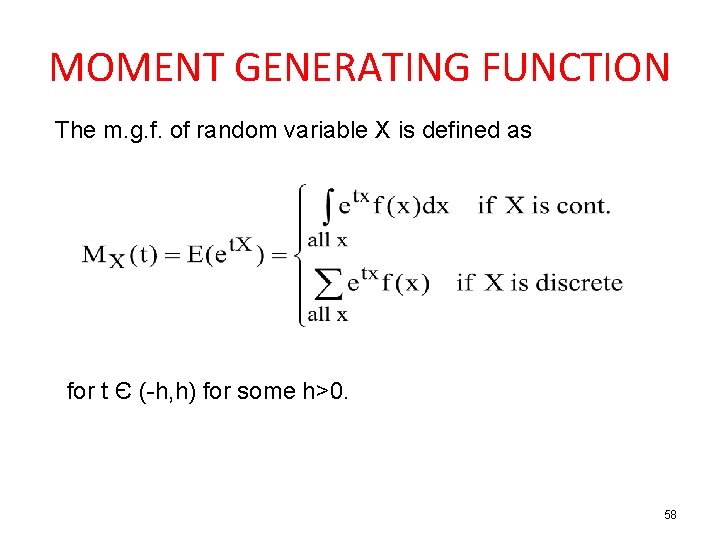

MOMENT GENERATING FUNCTION
The m.g.f. of random variable X is defined as
$$M_X(t) = E(e^{tX})$$
for t ∈ (−h, h) for some h > 0.


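Properties of m.g.f.
• M(0) = E[1] = 1
• If a r.v. X has m.g.f. $M_X(t)$, then Y = aX + b has m.g.f. $M_Y(t) = e^{bt} M_X(at)$
• $E(X^k) = M_X^{(k)}(0)$, the k-th derivative of the m.g.f. evaluated at t = 0
• The m.g.f. does not always exist (e.g. Cauchy distribution)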

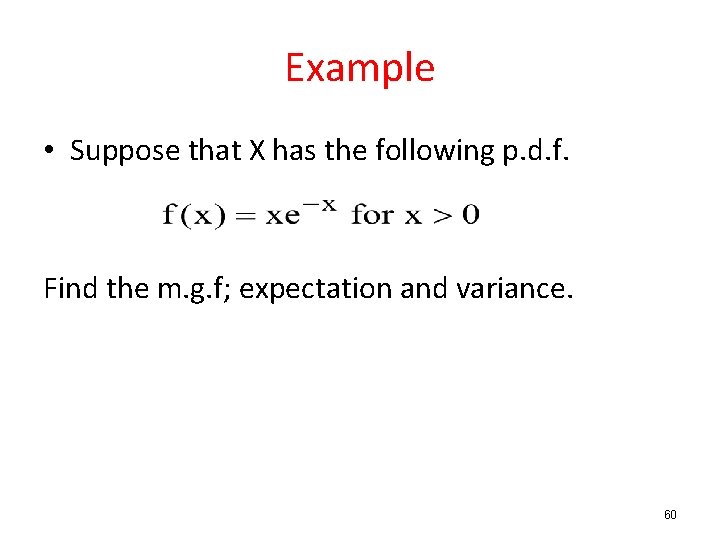
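The moment-generating property (derivatives of M at 0 give the moments, so M'(0) = E(X) and M''(0) = E(X²)) can be illustrated numerically. This sketch uses a fair die as an arbitrary example and approximates the derivatives by central finite differences; the step size `h` is a tuning choice made here.

```python
import math

def mgf(t):
    """M(t) = E(exp(tX)) for a fair six-sided die."""
    return sum(math.exp(t * x) for x in range(1, 7)) / 6

h = 1e-4  # finite-difference step (an illustrative choice)

first_moment = (mgf(h) - mgf(-h)) / (2 * h)                # approximates M'(0)
second_moment = (mgf(h) - 2 * mgf(0.0) + mgf(-h)) / h**2   # approximates M''(0)
variance = second_moment - first_moment ** 2

print("M(0) =", mgf(0.0))
print("E(X) ~", first_moment)
print("Var(X) ~", variance)
```

For the die the exact values are E(X) = 7/2 and Var(X) = 35/12, so the finite-difference estimates should agree with them up to a small discretization error.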

Example
• Suppose that X has the following p.d.f. Find the m.g.f., the expectation and the variance.

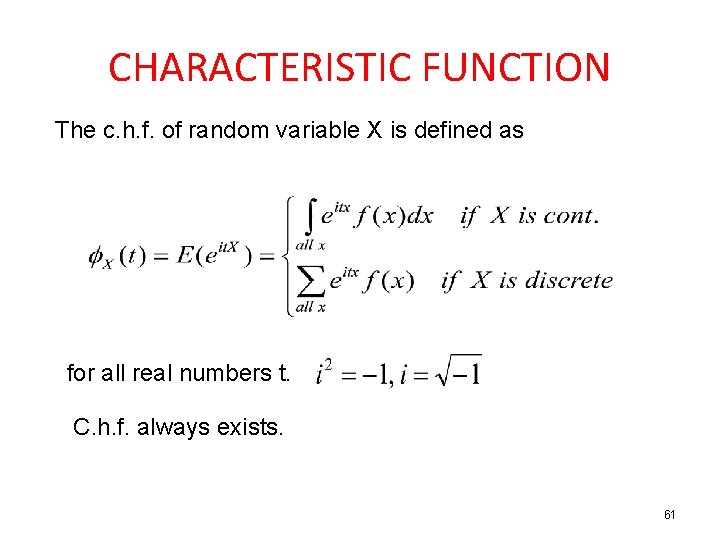

CHARACTERISTIC FUNCTION
The c.h.f. of random variable X is defined as
$$\phi_X(t) = E(e^{itX})$$
for all real numbers t. The c.h.f. always exists.

Uniqueness Theorem:
1. If two r.v.s have m.g.f.s that exist and are equal, then they have the same distribution.
2. If two r.v.s have the same distribution, then they have the same m.g.f. (if it exists).
Similar statements are true for the c.h.f.
