RAMP Models and Platforms

Krste Asanovic, UC Berkeley

RAMP Retreat, Berkeley, CA, January 15, 2009

Much confusion about RAMP

Frequently asked questions:
- When will RAMP be finished/usable?
- What ISA does RAMP use?
- Can RAMP model my new feature “X”?
- How accurate is RAMP?
- Why so many different RAMP projects?
- Why is there not more sharing among projects?

Not much confusion about software simulators

Rarely asked questions:
- When will software simulation be finished/usable?
- What ISA do software simulators use?
- Can a software simulator model my new feature “X”?
- How accurate is software simulation?
- Why so many software simulators?
- Why is there not more sharing among software simulators?

RAMP is a consortium, not a project
- Many projects with different goals
  - Sometimes multiple per site
- So far, much sharing of ideas and techniques
  - Very healthy and active community
- Some sharing of low-level infrastructure
  - Boards + platform-level interfaces to DRAM, Ethernet, etc.
- Not a single complete infrastructure that everyone uses
  - And that's been OK, and might continue to be OK

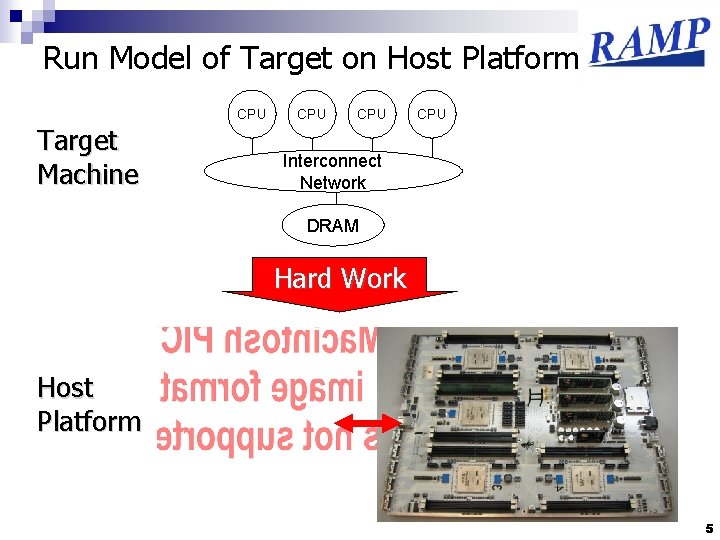

Run Model of Target on Host Platform

[Figure: a target machine (CPUs, interconnect network, DRAM) is modeled on a host platform; building that model is the "hard work".]

RAMP Projects’ Goals
- Model some target machine, trading off:
  - Fidelity
  - Model design effort
  - Emulation speed (and capacity)

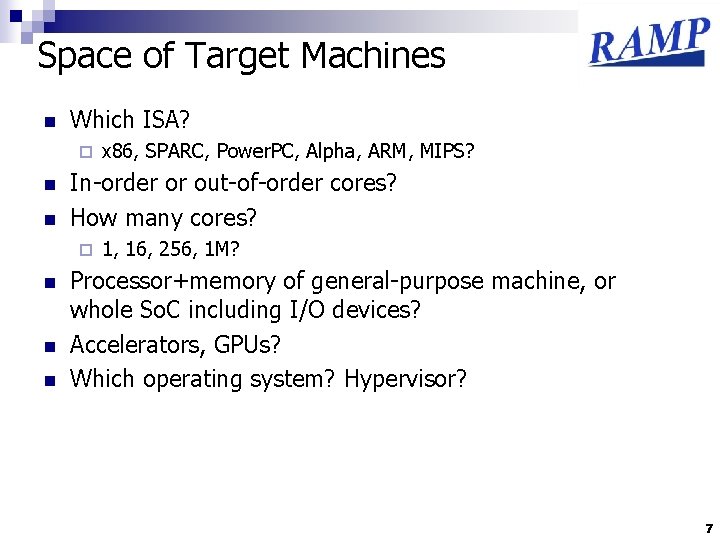

Space of Target Machines
- Which ISA?
  - x86, SPARC, PowerPC, Alpha, ARM, MIPS?
- In-order or out-of-order cores?
- How many cores?
  - 1, 16, 256, 1M?
- Processor+memory of a general-purpose machine, or a whole SoC including I/O devices?
- Accelerators, GPUs?
- Which operating system? Hypervisor?

ISA Wars
- Original pick to standardize around was SPARC
  - Open standard
  - Available verification suite
  - Simplest ISA with extensive general-purpose software support (i.e., desktop/server development environment available)
    - SGI/MIPS sorely missed…
  - Leon implementation for FPGA
  - Simics
- But the intent was always to support multiple ISAs

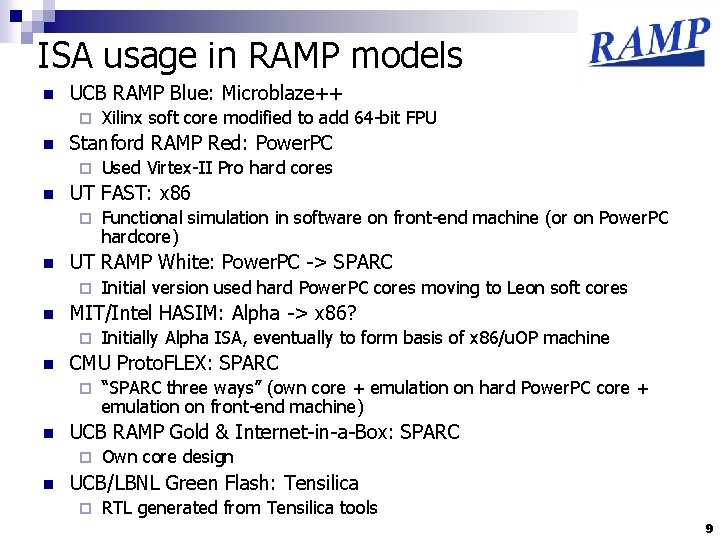

ISA usage in RAMP models
- UCB RAMP Blue: MicroBlaze++
  - Xilinx soft core modified to add 64-bit FPU
- Stanford RAMP Red: PowerPC
  - Used Virtex-II Pro hard cores
- UCB RAMP Gold & Internet-in-a-Box: SPARC
  - Own core design
- CMU ProtoFLEX: SPARC
  - "SPARC three ways" (own core + emulation on hard PowerPC core + emulation on front-end machine)
- MIT/Intel HASIM: Alpha -> x86?
  - Initially Alpha ISA, eventually to form basis of x86/uOP machine
- UT RAMP White: PowerPC -> SPARC
  - Initial version used hard PowerPC cores, moving to Leon soft cores
- UT FAST: x86
  - Functional simulation in software on front-end machine (or on PowerPC hard core)
- UCB/LBNL Green Flash: Tensilica
  - RTL generated from Tensilica tools

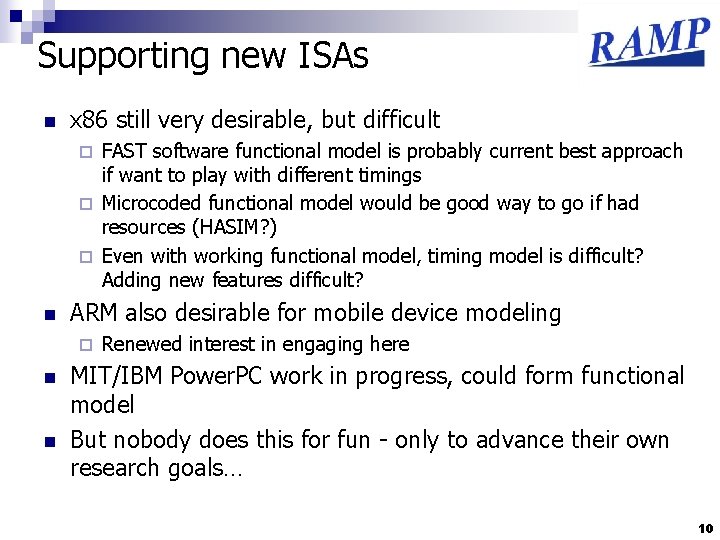

Supporting new ISAs
- x86 still very desirable, but difficult
  - The FAST software functional model is probably the current best approach if you want to play with different timings
  - A microcoded functional model would be a good way to go if we had the resources (HASIM?)
  - Even with a working functional model, is the timing model difficult? Is adding new features difficult?
- ARM also desirable for mobile device modeling
  - Renewed interest in engaging here
- MIT/IBM PowerPC work in progress, could form a functional model
- But nobody does this for fun, only to advance their own research goals…

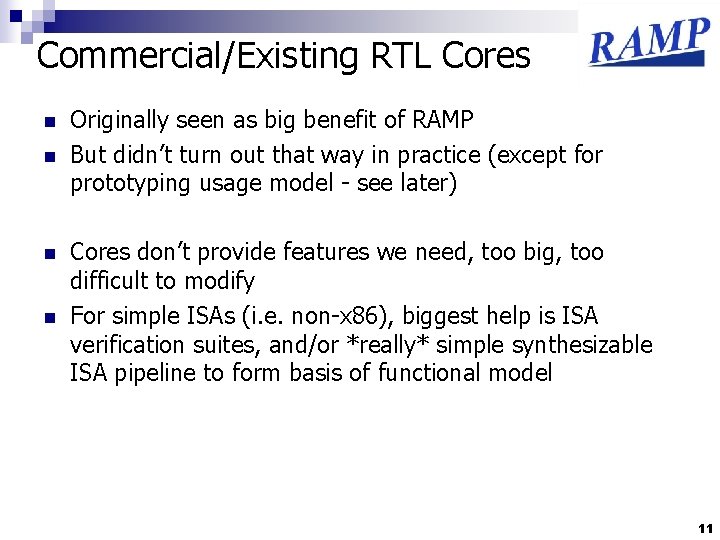

Commercial/Existing RTL Cores
- Originally seen as a big benefit of RAMP
- But didn't turn out that way in practice (except for the prototyping usage model, see later)
  - Cores don't provide the features we need, are too big, and are too difficult to modify
- For simple ISAs (i.e., non-x86), the biggest help is ISA verification suites and/or a *really* simple synthesizable ISA pipeline to form the basis of a functional model

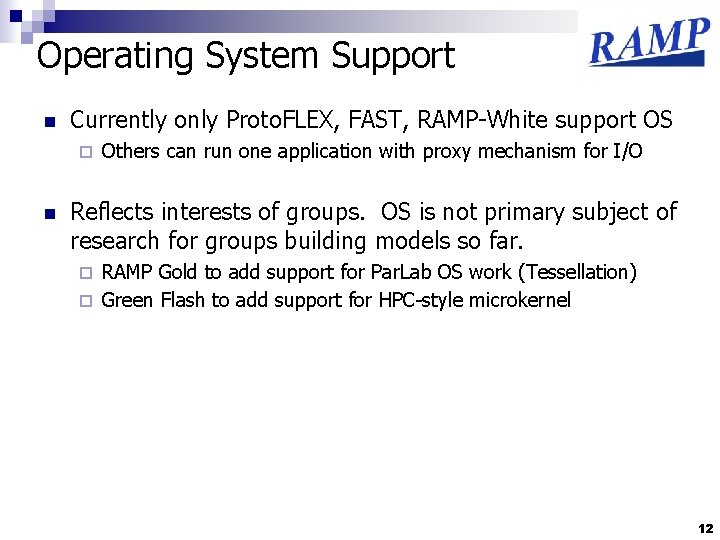

Operating System Support
- Currently only ProtoFLEX, FAST, and RAMP White support an OS
  - Others can run one application, with a proxy mechanism for I/O
- Reflects the interests of the groups: the OS has not been a primary research subject for the groups building models so far
- RAMP Gold to add support for Par Lab OS work (Tessellation)
- Green Flash to add support for an HPC-style microkernel

Target systems
- From a few to millions of cores
  - Scaling simulation to 100s of cores was a shared goal
  - But smaller core counts (16-128) are also very interesting
  - Huge core counts (>1E6) also of interest
- Single node versus clusters
  - RAMP Blue & Internet-in-a-Box are message-passing clusters
  - Rest are shared-memory systems
- Memory hierarchy and cache coherence protocols
  - Wide variety of possibilities
- Desktop/Laptop/Server versus Handheld or SoC
  - What is important to model for a given research topic?
- Accelerators/GPUs
  - Even wider variety than CPU ISAs/microarchitectures

Wide variety, how to reuse?

Proposal:
- ISA functional models
  - Also share the FPU across ISAs
  - Perhaps even a common uOP engine across all ISAs?
- CPU microarchitecture timing models
  - E.g., in-order superscalar, out-of-order with unified physical register file
- Memory functional model
  - Host-level caches + memory interleaving
- Memory hierarchy timing models
  - On-chip network types as a subset
- I/O bus shims
  - To allow arbitrary RTL to be attached for I/O devices and non-GPU accelerators

This won't be easy: we have to agree on interfaces between these components, and might need further specialization. We definitely need more experience doing all of the above.

Simulator Types
- Functional model only (no timing)
- RTL models (functional model includes timing)
  - Also used for chip prototyping
- Split functional and timing models
- Hybrids of the above

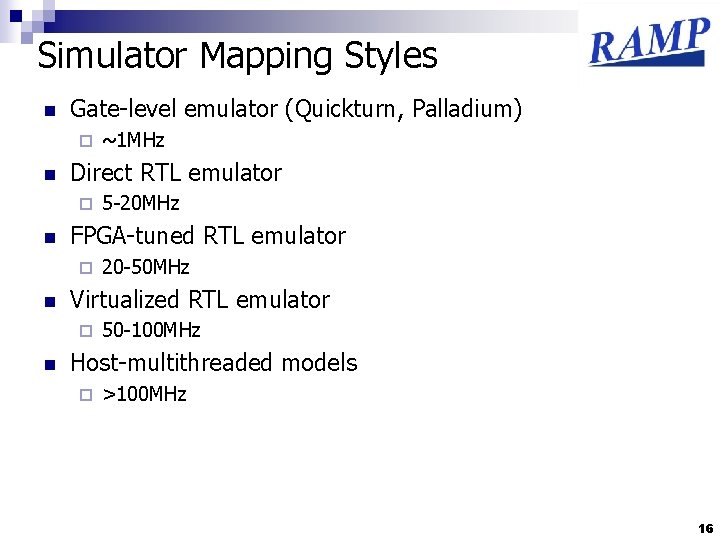

Simulator Mapping Styles
- Gate-level emulator (Quickturn, Palladium)
  - ~1 MHz
- Direct RTL emulator
  - 5-20 MHz
- Virtualized RTL emulator
  - 20-50 MHz
- FPGA-tuned RTL emulator
  - 50-100 MHz
- Host-multithreaded models
  - >100 MHz
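To put these rates in perspective, the sketch below converts each one into wall-clock time per second of target execution. It assumes a hypothetical 1 GHz target and treats each figure as one target cycle advanced per emulator cycle; in virtualized and host-multithreaded designs several host cycles can make up one target cycle, so per-core throughput may be lower than this estimate.

```python
# Emulation rates from the "Simulator Mapping Styles" list, in MHz, as
# (low, high). ">100 MHz" is open-ended, so 100 MHz is used for both bounds.
RATES_MHZ = {
    "gate-level emulator": (1.0, 1.0),
    "direct RTL emulator": (5.0, 20.0),
    "virtualized RTL emulator": (20.0, 50.0),
    "FPGA-tuned RTL emulator": (50.0, 100.0),
    "host-multithreaded model": (100.0, 100.0),
}

# Hypothetical target clock, chosen only for illustration.
TARGET_CLOCK_MHZ = 1000.0


def wall_clock_seconds(target_seconds: float, rate_mhz: float) -> float:
    """Host time to emulate `target_seconds` of target execution, assuming
    the emulator advances one target cycle per emulator cycle at `rate_mhz`."""
    target_cycles = target_seconds * TARGET_CLOCK_MHZ * 1e6
    return target_cycles / (rate_mhz * 1e6)


if __name__ == "__main__":
    for style, (low, high) in RATES_MHZ.items():
        fastest = wall_clock_seconds(1.0, high)
        slowest = wall_clock_seconds(1.0, low)
        print(f"{style:26s} {fastest:7.1f} to {slowest:7.1f} s per target second")
```

Under these assumptions a gate-level emulator needs about 1000 s per target second, while a >100 MHz host-multithreaded model needs at most about 10 s.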


RAMP Blue Release 2/25/2008: design available from the RAMP website, ramp.eecs.berkeley.edu

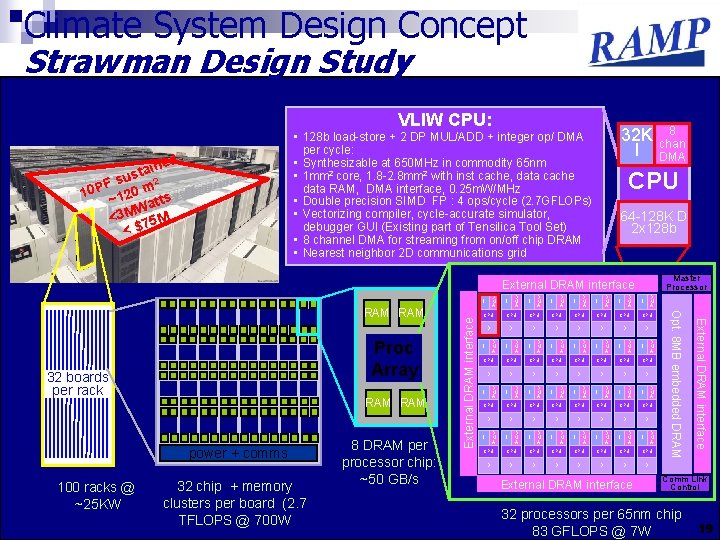

Climate System Design Concept

Strawman Design Study
- VLIW CPU:
  - 128b load-store + 2 DP MUL/ADD + integer op/DMA per cycle
  - Synthesizable at 650 MHz in commodity 65 nm
  - 1 mm² core, 1.8-2.8 mm² with instruction cache, data cache, data RAM, and DMA interface; 0.25 mW/MHz
  - Double-precision SIMD FP: 4 ops/cycle (2.7 GFLOPS)
  - Vectorizing compiler, cycle-accurate simulator, debugger GUI (existing part of the Tensilica tool set)
  - 8-channel DMA for streaming from on/off-chip DRAM
  - Nearest-neighbor 2D communications grid
- 10 PF sustained, ~120 m², <3 MWatts, <$75M

[Figure: system hierarchy. Each CPU has a 32K instruction cache, a 64-128K data cache (2 x 128b), and an 8-channel DMA engine; 32 processors per 65 nm chip (83 GFLOPS @ 7 W) in a processor array with a master processor, comm link control, external DRAM interfaces, and optional 8 MB embedded DRAM; 8 DRAM per processor chip (~50 GB/s); 32 chip + memory clusters per board (2.7 TFLOPS @ 700 W); 32 boards per rack; 100 racks @ ~25 kW (power + comms).]
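The strawman figures can be cross-checked with a few multiplications. This sketch uses only numbers from the slide (650 MHz, 4 DP ops/cycle, 0.25 mW/MHz, 32 cores per chip, 32 chips per board, 32 boards per rack, 100 racks, 700 W per board); the function name is illustrative. At 650 MHz it gives 2.6 GFLOPS per core, whose 32-core total (83.2 GFLOPS) matches the 83 GFLOPS chip figure; the slide's 2.7 GFLOPS per core would correspond to a clock near 675 MHz.

```python
# Strawman Green Flash figures, copied from the slide.
CLOCK_HZ = 650e6
FLOPS_PER_CYCLE = 4        # double-precision SIMD ops per cycle
CORES_PER_CHIP = 32
CHIPS_PER_BOARD = 32
BOARDS_PER_RACK = 32
RACKS = 100
CORE_MW_PER_MHZ = 0.25     # core power efficiency
BOARD_WATTS = 700


def rollup() -> dict:
    """Roll per-core figures up to chip, board, rack, and system level."""
    core_gflops = FLOPS_PER_CYCLE * CLOCK_HZ / 1e9
    chip_gflops = core_gflops * CORES_PER_CHIP
    board_tflops = chip_gflops * CHIPS_PER_BOARD / 1e3
    core_watts = CORE_MW_PER_MHZ * (CLOCK_HZ / 1e6) / 1e3
    return {
        "core_gflops": core_gflops,                       # about 2.6
        "chip_gflops": chip_gflops,                       # about 83.2 (slide: 83)
        "board_tflops": board_tflops,                     # about 2.66 (slide: 2.7)
        "core_watts_per_chip": core_watts * CORES_PER_CHIP,  # about 5.2 of the 7 W chip
        "rack_kw": BOARD_WATTS * BOARDS_PER_RACK / 1e3,   # about 22.4 (slide: ~25)
        "system_mw": BOARD_WATTS * BOARDS_PER_RACK * RACKS / 1e6,  # about 2.24 (< 3 MW)
        "total_cores": CORES_PER_CHIP * CHIPS_PER_BOARD * BOARDS_PER_RACK * RACKS,
    }


if __name__ == "__main__":
    for name, value in rollup().items():
        print(f"{name:20s} {value}")
```

The roll-up also shows why the design sits in the ">1E6 cores" regime mentioned earlier: 100 racks of this hierarchy hold 3,276,800 cores.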

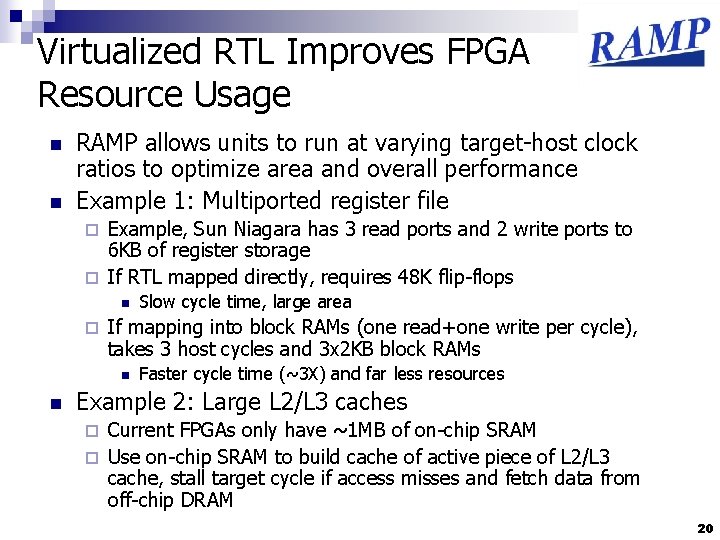

Virtualized RTL Improves FPGA Resource Usage
- RAMP allows units to run at varying target-host clock ratios to optimize area and overall performance
- Example 1: Multiported register file
  - For example, Sun Niagara has 3 read ports and 2 write ports to 6 KB of register storage
  - If the RTL is mapped directly, it requires 48K flip-flops
    - Slow cycle time, large area
  - If mapped into block RAMs (one read + one write per cycle), it takes 3 host cycles and 3 x 2 KB block RAMs
    - Faster cycle time (~3x) and far fewer resources
- Example 2: Large L2/L3 caches
  - Current FPGAs only have ~1 MB of on-chip SRAM
  - Use on-chip SRAM to build a cache of the active piece of the L2/L3 cache; stall the target cycle if an access misses, and fetch the data from off-chip DRAM
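Both examples can be sketched in a few lines. The helper names, the LRU replacement policy, and the fixed off-chip latency in host cycles are illustrative assumptions, not details from the slide; the cache model only counts host cycles spent on host-level caching and leaves the target's own L2 timing untouched.

```python
import math
from collections import OrderedDict


def direct_flip_flops(storage_bytes: int) -> int:
    """Direct RTL mapping: one flip-flop per architectural register bit."""
    return storage_bytes * 8


def host_cycles_per_target_cycle(read_ports: int, write_ports: int,
                                 bram_reads: int = 1, bram_writes: int = 1) -> int:
    """Host cycles needed to serve every target port from a block RAM that
    offers `bram_reads` reads and `bram_writes` writes per host cycle."""
    return max(math.ceil(read_ports / bram_reads),
               math.ceil(write_ports / bram_writes))


class HostCachedL2:
    """Hypothetical model of Example 2: a small on-FPGA cache holds the active
    part of a large target L2/L3. A miss here costs extra host cycles (the
    target clock is stalled) but never adds target cycles."""

    def __init__(self, host_lines: int, dram_host_cycles: int):
        self.host_lines = host_lines              # on-chip SRAM capacity, in lines
        self.dram_host_cycles = dram_host_cycles  # assumed off-chip fetch cost
        self.lines = OrderedDict()                # LRU order: oldest entry first
        self.target_cycles = 0
        self.host_cycles = 0

    def access(self, line_addr: int) -> None:
        self.target_cycles += 1   # the target sees exactly one cycle
        self.host_cycles += 1
        if line_addr in self.lines:
            self.lines.move_to_end(line_addr)
        else:
            # Stall the target clock while the line comes from off-chip DRAM.
            self.host_cycles += self.dram_host_cycles
            self.lines[line_addr] = None
            if len(self.lines) > self.host_lines:
                self.lines.popitem(last=False)    # evict least recently used


if __name__ == "__main__":
    # Sun Niagara register file from the slide: 3R/2W ports, 6 KB of storage.
    print(direct_flip_flops(6 * 1024))           # 49152, i.e. 48K flip-flops
    print(host_cycles_per_target_cycle(3, 2))    # 3 host cycles per target cycle
    l2 = HostCachedL2(host_lines=2, dram_host_cycles=100)
    for addr in [0, 1, 0, 2, 1]:
        l2.access(addr)
    print(l2.target_cycles, l2.host_cycles)      # 5 405
```

In the usage run, five target accesses cost 405 host cycles because four of them miss in the (deliberately tiny) host cache; the target still observes exactly five cycles.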

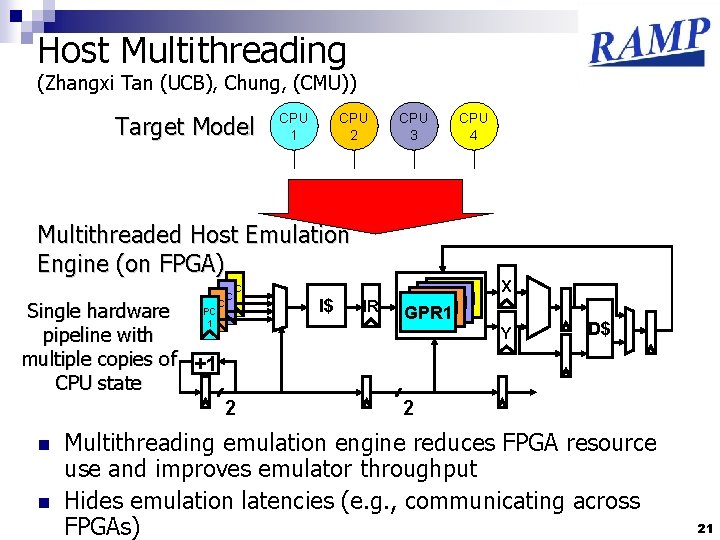

Host Multithreading (Zhangxi Tan (UCB), Chung (CMU))

[Figure: a target model of four CPUs (CPU 1-4) is mapped onto a multithreaded host emulation engine on the FPGA: a single hardware pipeline (PC, I$, IR, GPR, D$) with multiple copies of the CPU state.]
- Multithreading the emulation engine reduces FPGA resource use and improves emulator throughput
- Hides emulation latencies (e.g., communicating across FPGAs)
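A minimal functional sketch of the idea: one shared execution step and one copy of architectural state (PC and registers) per target CPU, selected round-robin on each host cycle. The two-instruction toy ISA and all names are hypothetical; a real engine would also pipeline the stages so that different threads occupy different stages at once.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class CpuState:
    """One copy of architectural state per target CPU."""
    pc: int = 0
    regs: List[int] = field(default_factory=lambda: [0] * 4)


class MultithreadedEngine:
    """Hypothetical sketch: one shared execution engine, many target CPUs.

    Toy ISA (assumption): ("addi", rd, rs, imm) or ("jmp", target_pc).
    """

    def __init__(self, program: List[Tuple], n_targets: int):
        self.program = program
        self.states = [CpuState() for _ in range(n_targets)]
        self.next_thread = 0
        self.host_cycles = 0

    def step(self) -> None:
        """One host cycle: execute one instruction for the next target CPU."""
        s = self.states[self.next_thread]
        op = self.program[s.pc]
        if op[0] == "addi":
            _, rd, rs, imm = op
            s.regs[rd] = s.regs[rs] + imm
            s.pc += 1
        elif op[0] == "jmp":
            s.pc = op[1]
        else:
            raise ValueError(f"unknown opcode {op[0]}")
        # Round-robin thread select: the next host cycle serves the next CPU.
        self.next_thread = (self.next_thread + 1) % len(self.states)
        self.host_cycles += 1


if __name__ == "__main__":
    loop = [("addi", 1, 1, 1), ("jmp", 0)]    # r1 += 1 forever
    engine = MultithreadedEngine(loop, n_targets=4)
    for _ in range(40):                        # 40 host cycles
        engine.step()
    # Each of the 4 target CPUs executed 10 instructions (5 loop iterations).
    print([s.regs[1] for s in engine.states])  # [5, 5, 5, 5]
```

With round-robin selection, consecutive instructions from the same target CPU are N host cycles apart, which is what lets a pipelined host engine avoid intra-thread hazards and overlap long emulation latencies with work from other threads.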

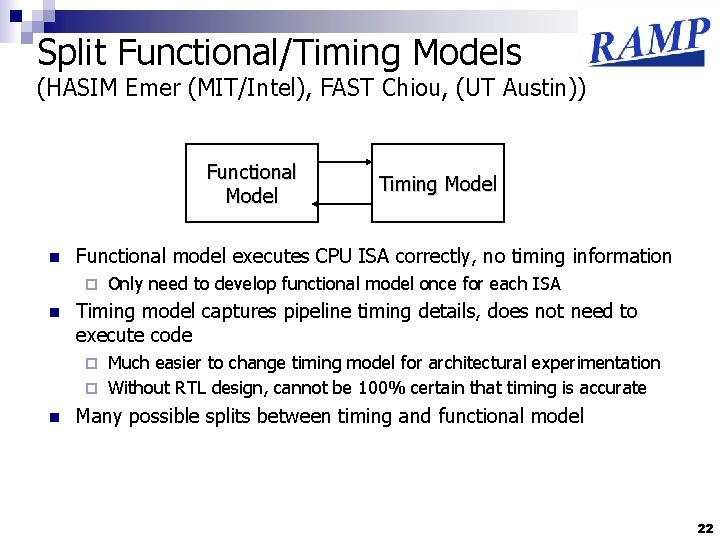

Split Functional/Timing Models (HASIM: Emer (MIT/Intel); FAST: Chiou (UT Austin))
- Functional model executes the CPU ISA correctly, with no timing information
  - Only need to develop the functional model once for each ISA
- Timing model captures pipeline timing details and does not need to execute code
  - Much easier to change the timing model for architectural experimentation
  - Without an RTL design, cannot be 100% certain that the timing is accurate
- Many possible splits between the timing and functional models
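The sketch below illustrates the simplest such split, a trace-driven one: a functional model executes a hypothetical toy ISA, and an in-order timing model assigns cycles to the resulting trace without computing any values. The ISA, latencies, and names are assumptions for illustration; real split simulators generally need some feedback between the two models (for example, around branch mispredictions), which this sketch omits.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Retired:
    """Per-instruction record the functional model hands to the timing model."""
    opcode: str
    dst: int
    srcs: Tuple[int, ...]


def functional_model(program) -> Tuple[List[int], List[Retired]]:
    """Executes a hypothetical toy ISA correctly, with no notion of time.
    Each instruction is (opcode, dst, srcs, imm)."""
    regs = [0] * 8
    trace = []
    for opcode, dst, srcs, imm in program:
        if opcode == "li":
            regs[dst] = imm
        elif opcode == "add":
            regs[dst] = regs[srcs[0]] + regs[srcs[1]]
        elif opcode == "mul":
            regs[dst] = regs[srcs[0]] * regs[srcs[1]]
        else:
            raise ValueError(f"unknown opcode {opcode}")
        trace.append(Retired(opcode, dst, tuple(srcs)))
    return regs, trace


def in_order_timing(trace: List[Retired], latency: Dict[str, int]) -> int:
    """In-order, single-issue timing model: counts cycles from the trace
    alone, delaying issue until source registers are ready. It never
    computes register values."""
    ready: Dict[int, int] = {}   # register -> cycle its value is available
    next_issue = 0
    finish = 0
    for ins in trace:
        issue = max([next_issue] + [ready.get(r, 0) for r in ins.srcs])
        ready[ins.dst] = issue + latency[ins.opcode]
        finish = max(finish, ready[ins.dst])
        next_issue = issue + 1
    return finish


if __name__ == "__main__":
    program = [("li", 1, (), 3), ("li", 2, (), 4),
               ("mul", 3, (1, 2), 0), ("add", 4, (3, 1), 0)]
    regs, trace = functional_model(program)
    print(regs[3], regs[4])                                       # 12 15
    # Same functional trace, two different timing experiments.
    print(in_order_timing(trace, {"li": 1, "add": 1, "mul": 3}))  # 6
    print(in_order_timing(trace, {"li": 1, "add": 1, "mul": 1}))  # 4
```

Changing the latency table changes the cycle count while the functional model and its trace stay exactly the same, which is the property that makes timing experiments cheap.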

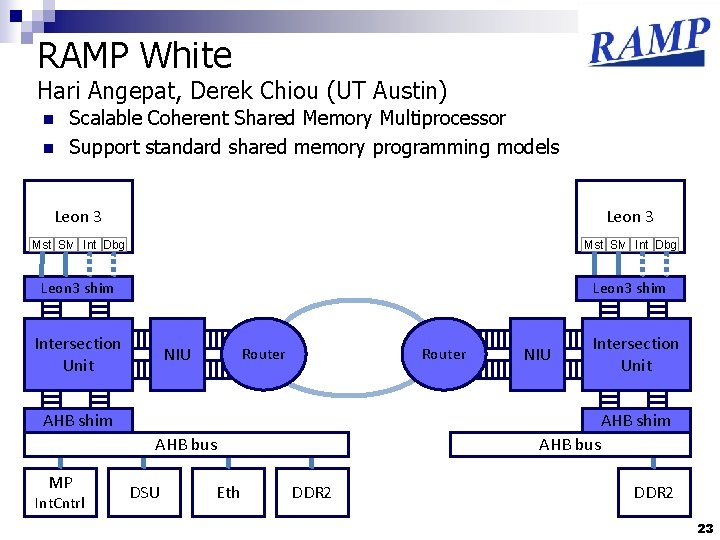

RAMP White: Hari Angepat, Derek Chiou (UT Austin)
- Scalable coherent shared-memory multiprocessor
- Supports standard shared-memory programming models

[Figure: RAMP White node block diagram: Leon3 core (master, slave, interrupt, and debug ports), Leon3 shim, intersection unit, NIU, router, AHB shim and AHB bus, multiprocessor interrupt controller, DSU, Ethernet, DDR2.]

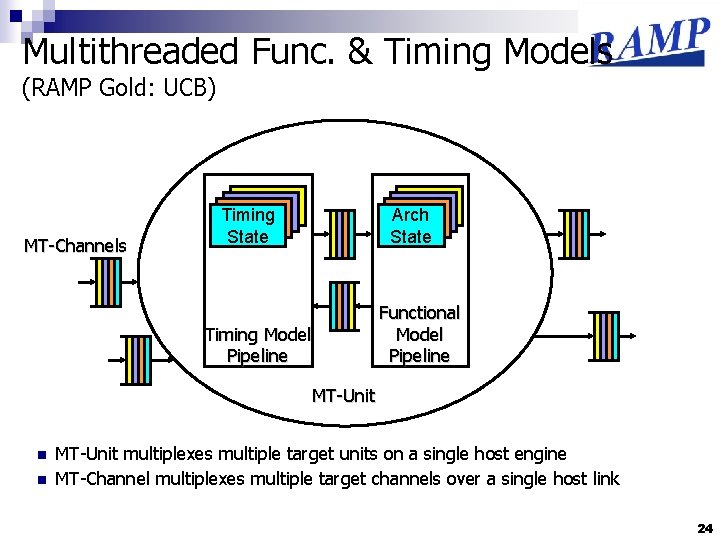

Multithreaded Functional & Timing Models (RAMP Gold: UCB)

[Figure: a functional model pipeline holding architectural state and a timing model pipeline holding timing state, each built as an MT-Unit and connected by MT-Channels.]
- MT-Unit multiplexes multiple target units on a single host engine
- MT-Channel multiplexes multiple target channels over a single host link
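As a rough illustration of the MT-Channel idea, the sketch below tags each message with its target-channel id so that many target channels can share one host link and be demultiplexed at the receiving end. The class and method names and the FIFO link are assumptions, not RAMP Gold's actual interface.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


class MTChannel:
    """Hypothetical sketch: several target channels share one host link."""

    def __init__(self, n_targets: int):
        self.n_targets = n_targets
        # The single host link: a FIFO of (target_id, payload) pairs.
        self.link: Deque[Tuple[int, object]] = deque()

    def send(self, target_id: int, payload: object) -> None:
        """Place a message from target channel `target_id` on the shared link."""
        if not 0 <= target_id < self.n_targets:
            raise ValueError(f"no such target channel: {target_id}")
        self.link.append((target_id, payload))

    def drain(self) -> Dict[int, List[object]]:
        """Receiver side: demultiplex the link back into per-target queues,
        preserving message order within each target channel."""
        per_target: Dict[int, List[object]] = {t: [] for t in range(self.n_targets)}
        while self.link:
            target_id, payload = self.link.popleft()
            per_target[target_id].append(payload)
        return per_target


if __name__ == "__main__":
    ch = MTChannel(n_targets=3)
    ch.send(0, "load A")
    ch.send(2, "store B")
    ch.send(0, "load C")
    print(ch.drain())
```

Because only the tag distinguishes targets, the host link's width and wiring stay the same however many target channels share it; the cost is that the link is time-shared among them.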

CMU Simics/RAMP Simulator
- 16-CPU shared-memory UltraSPARC III server (Sun Fire 3800)
- BEE2 platform

What Hardware Platforms?
- RTL mapping approaches
  - Need large amounts of logic
  - Selected BEE2, and then designed BEE3 for this emulation style
  - Observed that this style doesn't need much interconnect bandwidth (memory + inter-board links) because RTL cores are slow and latency sensitive
- Host multithreading allows large systems to be mapped to a small (one?) FPGA (e.g., 64-128 cores on ML505)
  - Logic gate count is not as critical; need to focus on on-chip capacity, off-chip memory bandwidth, and total memory capacity per FPGA (conventional processor memory hierarchy issues, multiplied by the multithreading factor)
  - One big FPGA with lots of fast memory channels would be ideal
- Software functional emulation (FAST) or transplant (ProtoFLEX)
  - Focus on a fast coherent connection to the front-end x86 CPU
  - HyperTransport, FSB, and QPI interfaces are better than PCI I/O connections

Summary
- Many reasons for great divergence in RAMP projects
  - Different ISAs, different target machines, different research topics, different emulation styles
- Sharing possible, but hard work and more experience needed
- Questions?
