Query Processing for the Semantic Sensor Web

Antonios Deligiannakis
Technical University of Crete

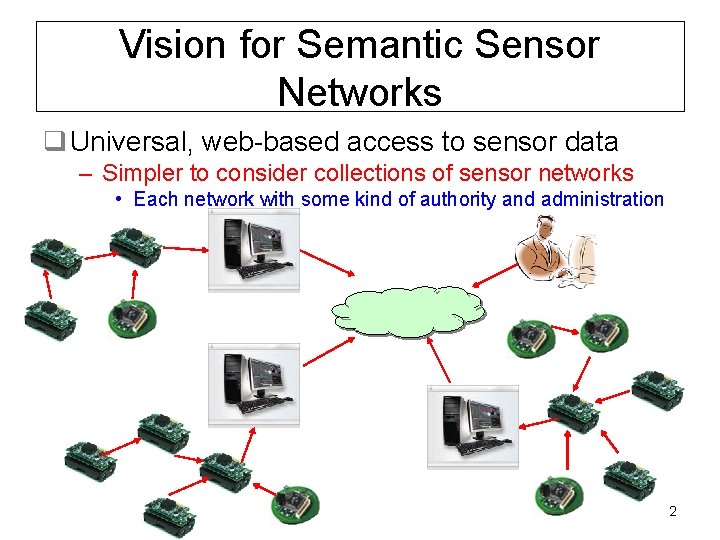

Vision for Semantic Sensor Networks

- Universal, web-based access to sensor data
  - Simpler to consider collections of sensor networks
    - Each network with some kind of authority and administration

Of Course, Nothing Comes Easy…

- Requires additional info for collected data
  - Location/orientation of sensor, time, authority, measured quantities, units, errors, etc.
    - Some of them are static, some may change (time, location…)
  - Additional info may significantly impact volume of transmitted data
- Requires proper languages for querying data
  - Query execution still needs to be optimized within each network

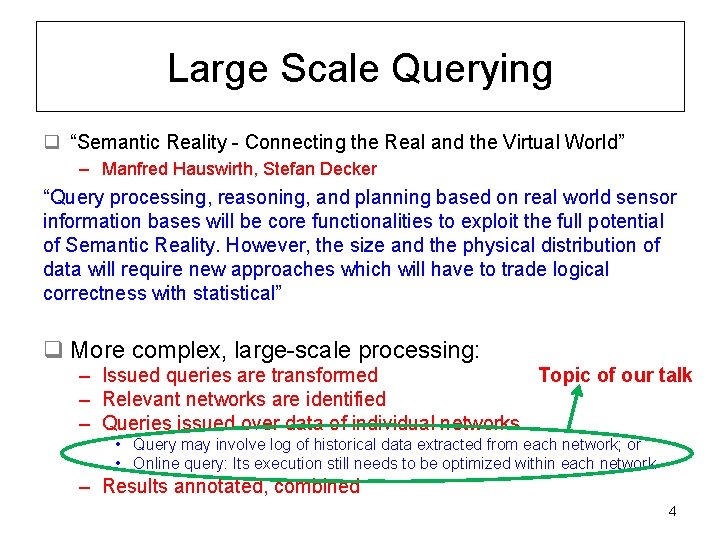

Large Scale Querying

- “Semantic Reality - Connecting the Real and the Virtual World”, Manfred Hauswirth, Stefan Decker:
  “Query processing, reasoning, and planning based on real world sensor information bases will be core functionalities to exploit the full potential of Semantic Reality. However, the size and the physical distribution of data will require new approaches which will have to trade logical correctness with statistical”
- More complex, large-scale processing: topic of our talk
  - Issued queries are transformed
  - Relevant networks are identified
  - Queries issued over data of individual networks
    - Query may involve log of historical data extracted from each network; or
    - Online query: its execution still needs to be optimized within each network
  - Results annotated, combined

Data Collection: Potential Queries

- Collecting all data (SELECT * queries)
  - Entire network or a subregion, periodically or frequently
- Collect aggregates of data
  - Report AVG, SUM, MAX quantities in an area/network
- Data reduction based on user-specified data quality
  - In all types of queries (historical, aggregate…)
  - Minimize bandwidth based on quality (or the dual problem)
- Detecting outliers
  - “Strange” readings: interesting phenomenon or malfunction?
- Joins
  - Report information based on combined readings of sensors (e.g., report when a lion is close to a deer)
  - Harder to optimize. The naïve solution of sending potential joining tuples (or projected attributes of them) to the base station is often not far from the best case

One-shot vs Continuous Queries

- One-shot queries
  - Ask a query, get results, DONE
- Continuous queries
  - Perform a query
    - Specify how OFTEN it should be executed (query epoch)
    - Specify until WHEN it should run (optional)
  - “Report avg temp per room every 30 sec, for the next hour”
  - “Report all measurements of sensor nodes in Room X every 1 min”
  - More typical for monitoring applications
  - More data, more chances of doing something clever…
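As a rough illustration of the two parameters of a continuous query (epoch and optional duration), the sketch below enumerates the execution times of the first example query. The `ContinuousQuery` class and its field names are hypothetical and are not taken from any sensor query system.

```python
from dataclasses import dataclass
from typing import Iterator, Optional


@dataclass
class ContinuousQuery:
    text: str                   # query text, treated as opaque here
    epoch_s: int                # how OFTEN the query runs (query epoch), in seconds
    duration_s: Optional[int]   # until WHEN it runs; None means "until cancelled"

    def epochs(self, limit: int = 1000) -> Iterator[int]:
        """Yield the start time (seconds from now) of each execution."""
        t, n = 0, 0
        while n < limit and (self.duration_s is None or t < self.duration_s):
            yield t
            t += self.epoch_s
            n += 1


# "Report avg temp per room every 30 sec, for the next hour"
q = ContinuousQuery("avg temp per room", epoch_s=30, duration_s=3600)
runs = list(q.epochs())
print(len(runs), runs[:3])
```

A one-shot query corresponds to a single execution; the `limit` argument only guards against enumerating an unbounded query.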

Data Collection: Types of Queries

- Pull vs push based queries
  - Pull-based queries: sensors transmit data only in response to queries that request them
  - Push-based queries: transmit data to a cache node proactively
    - If I know that someone will likely request it soon, transmit to avoid query propagation, organization, etc.
  - Tradeoff based on how often data is requested by different queries
    - Hybrid Push-Pull Query Processing for Sensor Networks, by Niki Trigoni, Yong Yao, Alan Demers, Johannes Gehrke, Rajmohan Rajaraman

Data Collection: Types of Queries

- Pull vs push based queries
  - Data are usually pulled based on user queries
  - Exceptions for queries to historical data
    - A node may need to push its batch of latest measurements if its memory becomes full
  - Metadata changes likely need to be pushed to some external directory
    - Allows queries to be performed based on “correct” knowledge
    - Is this overkill for metadata that change constantly?
      - E.g., the time of acquired measurements is a common case
      - Careful organization helps, e.g., time can sometimes be inferred
  - Possible to combine both approaches

Outline

- Sensor Nodes
  - Brief intro: parts of sensors, capabilities, constraints
- Techniques for Query Processing
  - Topologies for Data Collection
  - Data Reduction based on user-specified data quality
  - Detecting Outliers
- Conclusions

Parts of a Sensor

- Sensing equipment (sensor and data acquisition boards)
  - Internal (“built-in”) vs external sensing capabilities
- CPU
- Memory
- Battery
  - Some sensors may harvest energy from the sun, vibrations, etc.
- Radio to transmit/receive data from other sensors

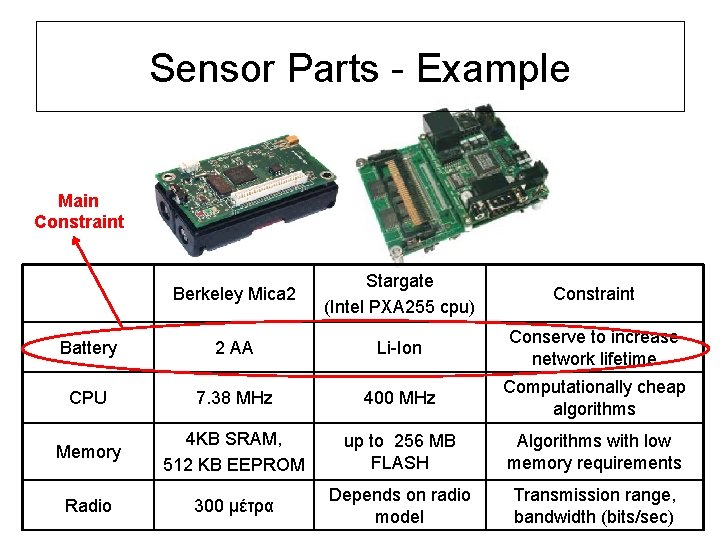

Sensor Parts - Example

| Part | Berkeley Mica 2 | Stargate (Intel PXA 255 CPU) | Main Constraint |
|---|---|---|---|
| Battery | 2 AA | Li-Ion | Conserve to increase network lifetime |
| CPU | 7.38 MHz | 400 MHz | Computationally cheap algorithms |
| Memory | 4 KB SRAM, 512 KB EEPROM | up to 256 MB FLASH | Algorithms with low memory requirements |
| Radio | 300 meters | Depends on radio model | Transmission range, bandwidth (bits/sec) |

Energy Constraints

- Battery capacity increases by only 3-5% per year
  - CPU speed increases much faster
    - However, energy per CPU instruction decreases
- Some applications: unattended deployment
  - E.g., disaster scenarios, military environments…
  - Often hard or impossible to replace batteries
- Maximizing network lifetime is the main target
  - Cost-effective only if sensor networks last long
  - Applications with sensors without power constraints are much easier to handle

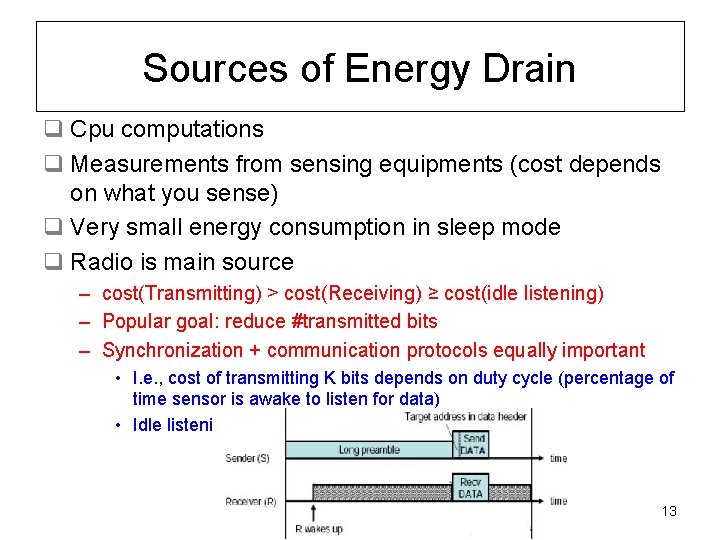

Sources of Energy Drain

- CPU computations
- Measurements from sensing equipment (cost depends on what you sense)
- Very small energy consumption in sleep mode
- Radio is the main source
  - cost(transmitting) > cost(receiving) ≥ cost(idle listening)
  - Popular goal: reduce the number of transmitted bits
  - Synchronization and communication protocols are equally important
    - E.g., the cost of transmitting K bits depends on the duty cycle (percentage of time the sensor is awake to listen for data)
    - Idle listening for too long is extremely costly

Assumptions and Goals in Subsequent Algorithms

- Research emphasis on more constrained environments
  - Wireless communication, short transmission ranges
    - One or more base stations with increased capabilities may exist
    - Candidates for gateways to the semantic sensor web
  - Energy limitations (battery-powered sensors)
- Goal of algorithms in all applications that follow:
  - Preserve energy
  - Organize sensors and their schedules
    - Good schedules allow sensors to power down their radios/CPUs and go into a sleep mode
  - Reduce size of transmitted data
- Processing (esp. aggregation) focuses on numeric measurements
- Implication of having a strict schedule on when to collect data: the base station knows when quantities are collected
  - Such metadata may not even need to be transmitted

Outline

- Sensor Nodes
  - Brief intro: parts of sensors, capabilities, constraints
- Techniques for Query Processing
  - Topologies for Data Collection
  - Data Reduction (SELECT * and aggregate queries)
  - Detecting Outliers
- Conclusions

June 1, 2009, Antonios Deligiannakis

1a. Data Collection: TAG

- TAG: a Tiny Aggregation Service for Ad-Hoc Sensor Networks
  - Samuel Madden, Michael Franklin, Joseph Hellerstein
- Goals
  - Specify SQL-like queries
  - Organize sensors and schedule them to reduce energy consumption
  - Emphasizes/uses IN-NETWORK query processing
  - Targets aggregate queries, but similar functionality can be used for SELECT * queries
- Results of the paper were incorporated into TinyDB
  - Data processing system built on top of TinyOS

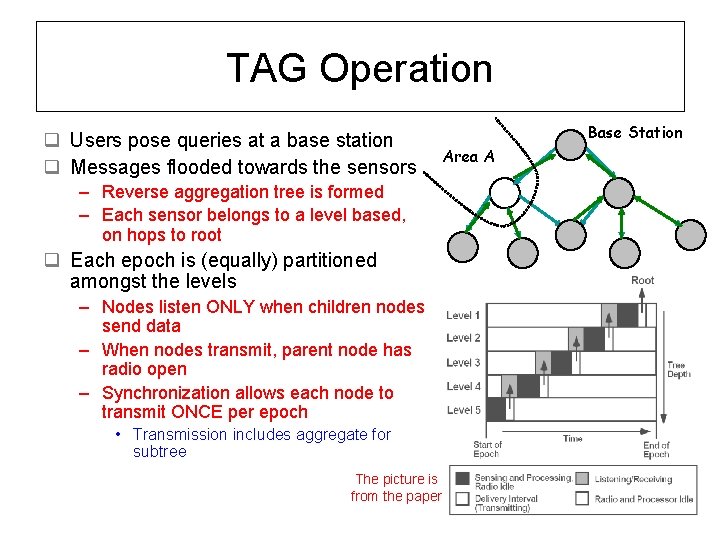

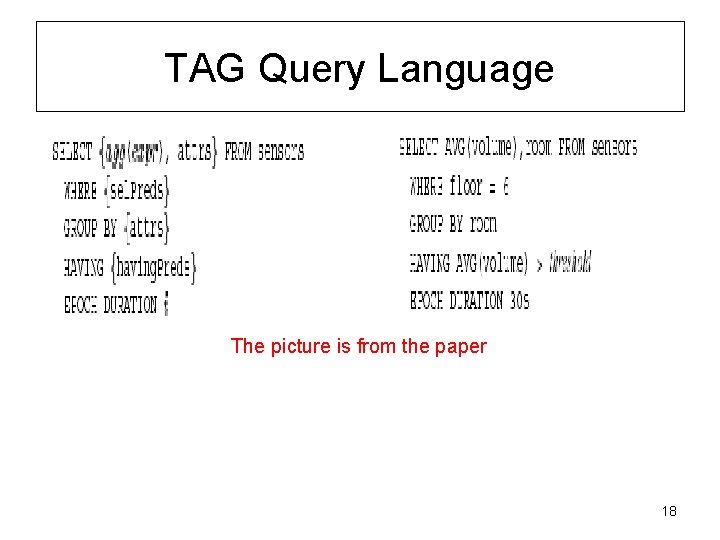

TAG Operation

- Users pose queries at a base station
- Messages are flooded towards the sensors
  - Reverse aggregation tree is formed
  - Each sensor belongs to a level, based on its hops to the root
- Each epoch is (equally) partitioned amongst the levels
  - Nodes listen ONLY when their children send data
  - When nodes transmit, the parent node has its radio open
  - Synchronization allows each node to transmit ONCE per epoch
    - Transmission includes the aggregate for the node's subtree

(Figure from the paper; labels: Base Station, Area A.)
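The level-by-level operation can be sketched in a few lines. This is a minimal simulation under stated assumptions, not TAG's implementation: the tree, the readings, and the function names (`level_of`, `run_epoch`) are made up for illustration, radio slots are reduced to a bottom-up ordering, and AVG is computed by forwarding a (sum, count) pair per subtree.

```python
from typing import Dict, List, Tuple

# Hypothetical routing tree: child lists per node; node 0 is the base station.
children: Dict[int, List[int]] = {0: [1, 2], 1: [3, 4], 2: [5], 3: [], 4: [], 5: []}
readings: Dict[int, float] = {1: 20.0, 2: 22.0, 3: 19.0, 4: 21.0, 5: 23.0}


def level_of(tree: Dict[int, List[int]], root: int = 0) -> Dict[int, int]:
    """Hop count from the root for every node (breadth-first)."""
    levels = {root: 0}
    frontier = [root]
    while frontier:
        nxt = []
        for n in frontier:
            for c in tree[n]:
                levels[c] = levels[n] + 1
                nxt.append(c)
        frontier = nxt
    return levels


def run_epoch(tree, values, root: int = 0) -> Tuple[float, int]:
    """One epoch: deepest level transmits first; each node sends ONE (sum, count)."""
    levels = level_of(tree, root)
    parent = {c: p for p, cs in tree.items() for c in cs}
    # Partial state per node: its own reading, if it has one.
    partial = {n: ((values[n], 1) if n in values else (0.0, 0)) for n in tree}
    messages = 0
    for n in sorted(parent, key=lambda n: -levels[n]):  # bottom-up slot order
        s, c = partial[n]
        ps, pc = partial[parent[n]]
        partial[parent[n]] = (ps + s, pc + c)  # parent merges the child's subtree
        messages += 1
    total, count = partial[root]
    return total / count, messages


avg, msgs = run_epoch(children, readings)
print(avg, msgs)
```

In this toy run every sensor sends exactly one message per epoch, regardless of the size of its subtree, which is the property the slide highlights.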

TAG Query Language

(The picture is from the paper.)

TAG Contributions

- Support of multiple aggregate functions AND group-by queries
  - Classification and behavior based on type of function
- In-network processing dramatically reduces transmitted data
- Synchronization allows sensors to sleep most of the time
- Further optimizations for monotonic aggregates
  - E.g., MAX aggregate: don't transmit your aggregate if you overhear a sibling that reports a larger aggregate
- Considerations for message loss, etc.
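The overhearing optimization for MAX can be illustrated as follows. This is a simplified sketch that assumes siblings transmit one after another and that every sibling overhears all earlier transmissions; the function name and the values are illustrative only.

```python
from typing import List, Optional, Tuple


def max_with_snooping(sibling_values: List[float]) -> Tuple[Optional[float], List[float]]:
    """Siblings transmit in turn; a node stays silent if it already
    overheard a value that is at least as large as its own."""
    best_heard: Optional[float] = None
    sent: List[float] = []
    for v in sibling_values:
        if best_heard is None or v > best_heard:
            sent.append(v)      # this value can still change the MAX
            best_heard = v
        # otherwise: suppress, the parent's MAX would not change
    return best_heard, sent


result, transmitted = max_with_snooping([18.0, 25.0, 21.0, 25.0, 30.0])
print(result, transmitted)
```

Here two of the five siblings stay silent, and the parent still obtains the correct maximum.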

1b. Data Collection: WaveScheduling

- WaveScheduling: Energy-Efficient Data Dissemination for Sensor Networks
  - Niki Trigoni, Yong Yao, Alan J. Demers, Johannes Gehrke, Rajmohan Rajaraman
  - Proposed in the Cougar system
- Observation: in TAG, nodes at the same level transmit at the same time
  - Many collisions, message losses
  - Many retransmissions, energy drain
- Goal: organize nodes in order to minimize message collisions

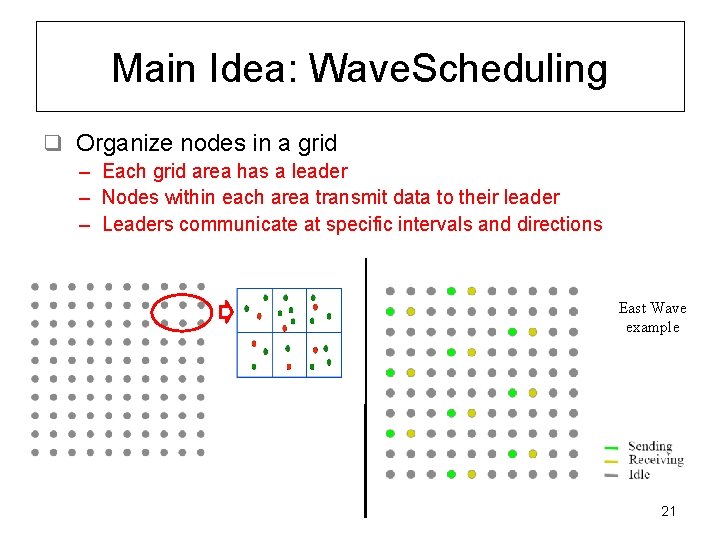

Main Idea: WaveScheduling

- Organize nodes in a grid
  - Each grid area has a leader
  - Nodes within each area transmit data to their leader
  - Leaders communicate at specific intervals and directions

(Figure: East wave example.)

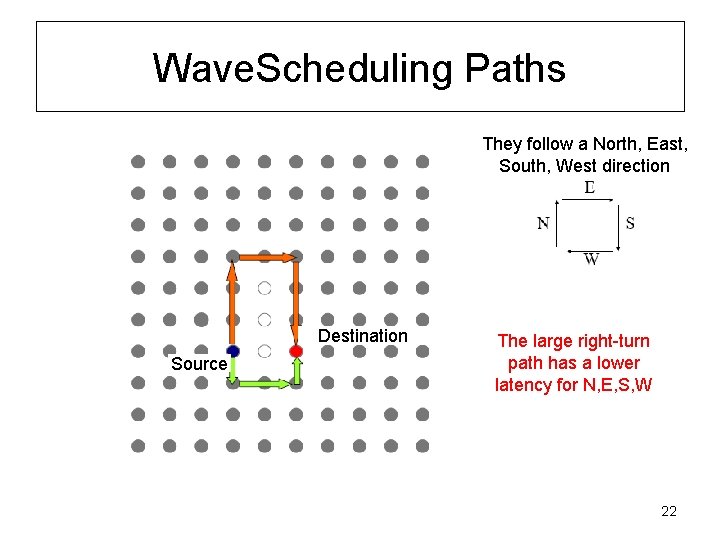

WaveScheduling Paths

- Paths follow a North, East, South, West direction
- The large right-turn path has a lower latency for N, E, S, W

(Figure labels: Source, Destination.)

WaveScheduling Contributions

- Transmissions without collisions
  - Much lower energy drain
  - But also, significantly larger delays
    - Few nodes transmit at each time, long paths to follow

Outline

- Sensor Nodes
  - Brief intro: parts of sensors, capabilities, constraints
- Techniques for Query Processing
  - Topologies for Data Collection
  - Data Reduction (SELECT * and aggregate queries)
  - Detecting Outliers
- Conclusions

2a. Compressing Historical Measurements

- Compressing Historical Information in Sensor Networks
  - A. Deligiannakis, Y. Kotidis, N. Roussopoulos
- Application: if past measurements are important, they should be periodically transmitted to the base station, before the memory is exhausted
- Transmitting all measurements is costly

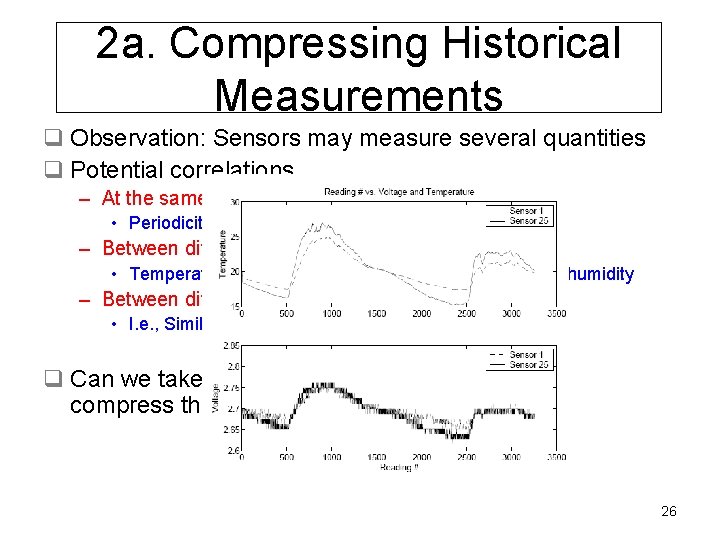

2a. Compressing Historical Measurements

- Observation: sensors may measure several quantities
- Potential correlations
  - Within the same quantity
    - Periodicity, similar trends
  - Between different quantities
    - Temperature and voltage [Deshpande 04], pressure and humidity
  - Between different sensors in an area
    - E.g., similar temperature and noise levels
- Can we take advantage of such correlations to compress the data?

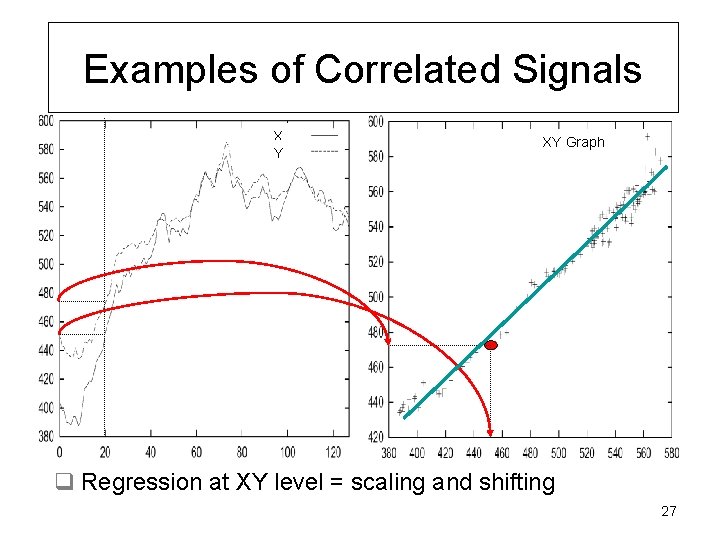

Examples of Correlated Signals

- Regression at the XY level = scaling and shifting

(Figure panels: X, Y, XY graph.)

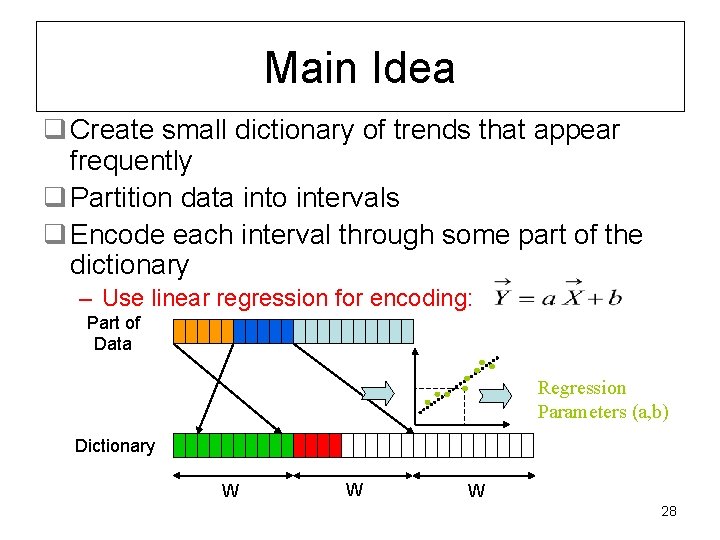

Main Idea

- Create a small dictionary of trends that appear frequently
- Partition data into intervals
- Encode each interval through some part of the dictionary
  - Use linear regression for encoding

(Figure: a part of the data of width W is mapped to a part of the dictionary of width W through regression parameters (a, b).)

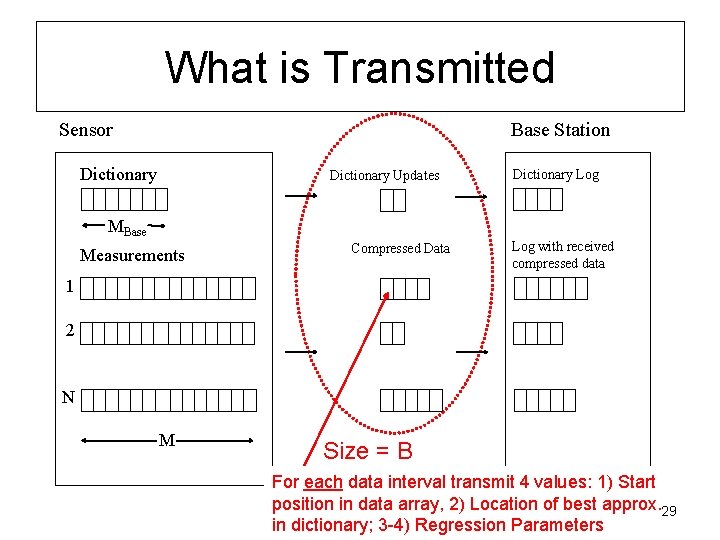

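What is Transmitted

- The sensor sends dictionary updates and compressed data to the base station
- The base station keeps the dictionary and a log with the received compressed data
- For each data interval transmit 4 values:
  1. Start position in the data array
  2. Location of the best approximation in the dictionary
  3. and 4. Regression parameters

(Figure labels: Sensor, Base Station, Dictionary Updates, Dictionary, Log, M_Base, Measurements, Compressed Data, 1 2 … N, M, Size = B.)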
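The four values per interval can be made concrete with a small sketch. It assumes fixed-width intervals, an already built dictionary, and an exhaustive search that minimizes the sum of squared errors; the paper's own dictionary construction and interval selection are not reproduced here, and all names (`fit`, `encode`, `decode`) and numbers are illustrative.

```python
from typing import List, Sequence, Tuple

Record = Tuple[int, int, float, float]  # (start, dictionary position, a, b)


def fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Least-squares a, b for y ~ a*x + b, plus the sum of squared errors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    a = sxy / sxx if sxx else 0.0
    b = my - a * mx
    sse = sum((yi - (a * xi + b)) ** 2 for xi, yi in zip(x, y))
    return a, b, sse


def encode(data: Sequence[float], dictionary: Sequence[float], w: int) -> List[Record]:
    """For each interval of width w, keep the dictionary window that fits best."""
    out: List[Record] = []
    for start in range(0, len(data) - w + 1, w):
        chunk = data[start:start + w]
        best = None
        for pos in range(len(dictionary) - w + 1):
            a, b, sse = fit(dictionary[pos:pos + w], chunk)
            if best is None or sse < best[0]:
                best = (sse, pos, a, b)
        out.append((start, best[1], best[2], best[3]))
    return out


def decode(records: List[Record], dictionary: Sequence[float], w: int) -> List[float]:
    """Base-station side: rebuild the approximation from the 4-value records."""
    approx: List[float] = []
    for _start, pos, a, b in records:
        approx.extend(a * d + b for d in dictionary[pos:pos + w])
    return approx


dictionary = [0.0, 1.0, 4.0, 9.0, 4.0, 1.0, 0.0, 1.0]
data = [7.0, 13.0, 23.0, 13.0, 1.0, -0.5, -1.0, -0.5]  # scaled/shifted dictionary parts
records = encode(data, dictionary, w=4)
approx = decode(records, dictionary, w=4)
print(records)
```

Each interval of four measurements is replaced by one 4-value record; the saving grows with the interval width, at the cost of whatever error the regression leaves.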

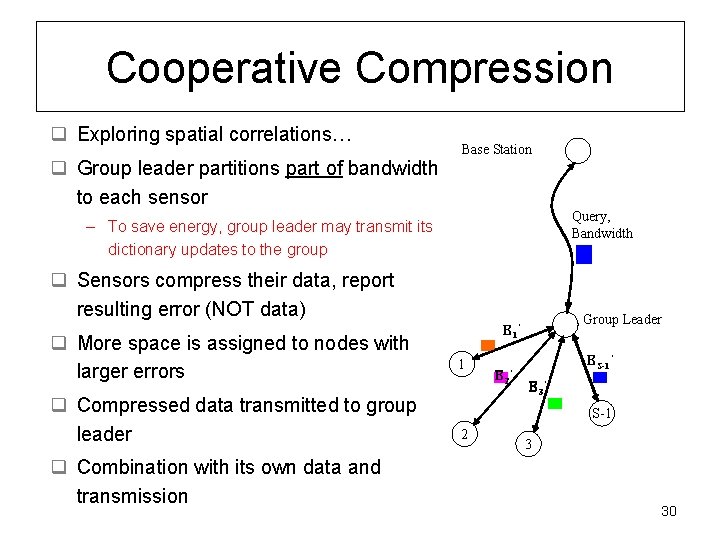

Cooperative Compression

- Exploring spatial correlations…
- Group leader assigns part of the bandwidth to each sensor
  - To save energy, the group leader may transmit its dictionary updates to the group
- Sensors compress their data and report the resulting error (NOT the data)
- More space is assigned to nodes with larger errors
- Compressed data are transmitted to the group leader
- The group leader combines them with its own data and transmits

(Figure labels: the base station sends the query and bandwidth B to the group leader; sensors 1 … S-1 report errors E_1', E_2', E_3', …, E_S-1' and are assigned bandwidths B_1 … B_S-1.)
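One simple way to picture "more space to nodes with larger errors" is a proportional split of the group's bandwidth. This is only an assumed illustration: the slide does not state the allocation rule, and the function `allocate`, the `minimum` share and the numbers below are hypothetical.

```python
from typing import Dict


def allocate(total: int, errors: Dict[str, float], minimum: int = 0) -> Dict[str, int]:
    """Give every sensor `minimum` units, then split the rest
    in proportion to the error each sensor reported."""
    rest = total - minimum * len(errors)
    if rest < 0:
        raise ValueError("not enough bandwidth for the minimum shares")
    total_err = sum(errors.values())
    shares: Dict[str, int] = {}
    for s, e in errors.items():
        if total_err > 0:
            extra = int(rest * e / total_err)
        else:
            extra = rest // len(errors)  # no errors reported: equal split
        shares[s] = minimum + extra
    # Hand any rounding leftovers to the sensors with the largest errors.
    leftover = total - sum(shares.values())
    for s in sorted(errors, key=errors.get, reverse=True)[:leftover]:
        shares[s] += 1
    return shares


shares = allocate(100, {"s1": 1.0, "s2": 3.0, "s3": 6.0}, minimum=10)
print(shares)
```

The leader would repeat such a step after each round of error reports, so that difficult signals gradually receive more space.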

Algorithm Benefits

- To minimize different error metrics, simply change the regression algorithm
  - E.g., SSE, SSRE and Max errors are simple to handle
- More space to difficult signals/sensors
- Group organization spares group members the need to compute the dictionary (the expensive part)
  - Need to rotate the group leader selection, to avoid draining its energy
  - Can apply the HEED protocol for group leader selection
    - Probability of becoming group leader is proportional to E_curr / E_init

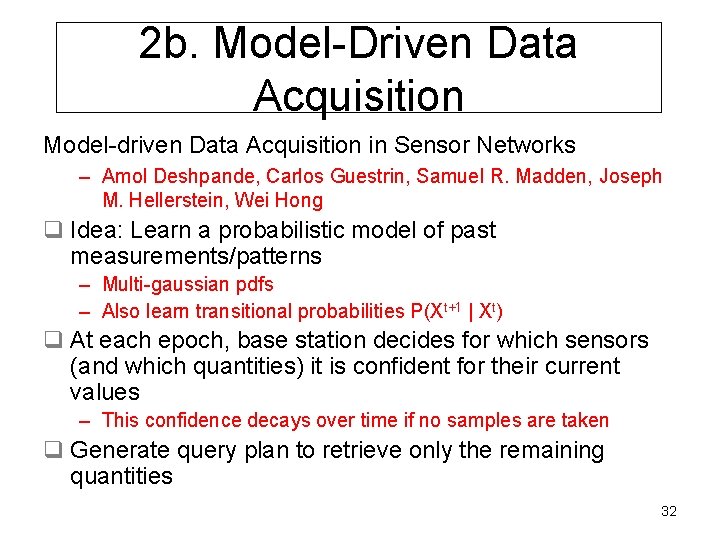

2b. Model-Driven Data Acquisition

- Model-driven Data Acquisition in Sensor Networks
  - Amol Deshpande, Carlos Guestrin, Samuel R. Madden, Joseph M. Hellerstein, Wei Hong
- Idea: learn a probabilistic model of past measurements/patterns
  - Multivariate Gaussian pdfs
  - Also learn transition probabilities P(X_{t+1} | X_t)
- At each epoch, the base station decides for which sensors (and which quantities) it is confident about their current values
  - This confidence decays over time if no samples are taken
- Generate a query plan to retrieve only the remaining quantities

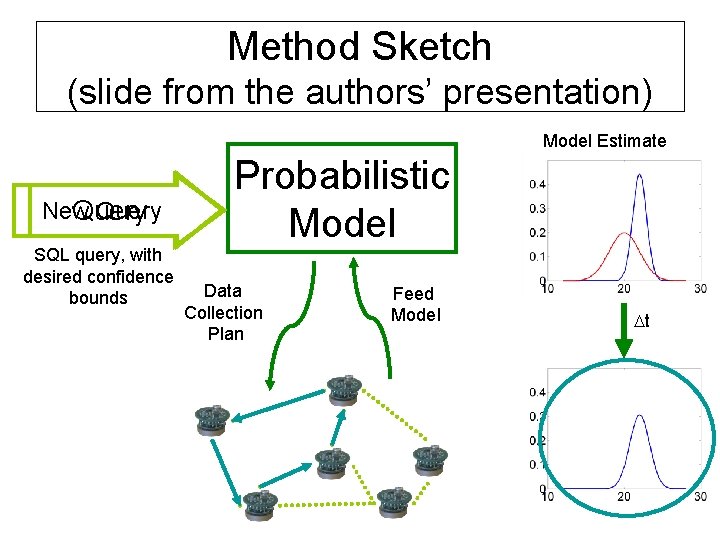

Method Sketch (slide from the authors’ presentation)

(Figure labels: New Query; SQL query, with desired confidence bounds; Probabilistic Model; Data Collection Plan; Feed Model; D_t; Model Estimate.)

Algorithm Characteristics

- Takes advantage of correlations
  - May decide to sample voltage instead of temperature, to decrease energy
- Model can be used for missing data (inaccessible sensors)
- Can handle point and range queries
- However, hard to handle previously unseen patterns
  - “Thus, for models to perform accurate predictions they must be trained in the kind of environment where they will be used”
- Centralized model, difficult to scale
  - Subsequent work by the same authors proposed a more distributed system (KEN)
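The idea of sampling voltage instead of temperature follows from standard Gaussian conditioning. The sketch below uses a two-variable Gaussian with made-up parameters; the function names, the tolerance `eps` and the confidence bound `delta` are assumptions for illustration and do not reproduce the authors' system.

```python
import math
from typing import Tuple


def condition_on_voltage(mu_t: float, mu_v: float, var_t: float, var_v: float,
                         cov_tv: float, v_obs: float) -> Tuple[float, float]:
    """Mean and variance of temperature given an observed voltage,
    for a bivariate Gaussian over (temperature, voltage)."""
    mean = mu_t + (cov_tv / var_v) * (v_obs - mu_v)
    var = var_t - cov_tv ** 2 / var_v
    return mean, var


def confidence(var: float, eps: float) -> float:
    """P(|T - mean| <= eps) for a Gaussian with the given variance."""
    return math.erf(eps / math.sqrt(2.0 * var))


# Hypothetical model: temperature ~ N(20, 4), voltage ~ N(2.7, 0.01), correlation 0.9.
mu_t, mu_v, var_t, var_v, cov_tv = 20.0, 2.7, 4.0, 0.01, 0.18
eps, delta = 2.0, 0.95           # user asks for +/- 2 degrees with 95% confidence

before = confidence(var_t, eps)  # without any sample
mean, var = condition_on_voltage(mu_t, mu_v, var_t, var_v, cov_tv, v_obs=2.8)
after = confidence(var, eps)     # after the cheap voltage sample
print(round(before, 3), round(mean, 2), round(after, 3))
```

With these numbers the prior alone does not meet the confidence bound, while the cheap voltage reading narrows the temperature estimate enough to answer without sampling temperature.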

2c. Snapshot Queries

- Snapshot Queries: Towards Data-Centric Sensor Networks
  - Yannis Kotidis
- Idea: nodes in proximity likely observe similar things
- Find if you are needed to answer queries
  - Does there exist a representative that can approximate your data accurately?
- Nodes that are needed (representatives) constitute the network snapshot
  - Can estimate and answer queries for the remaining nodes as well
- Completely decentralized approach
  - Representatives tested, change with few messages

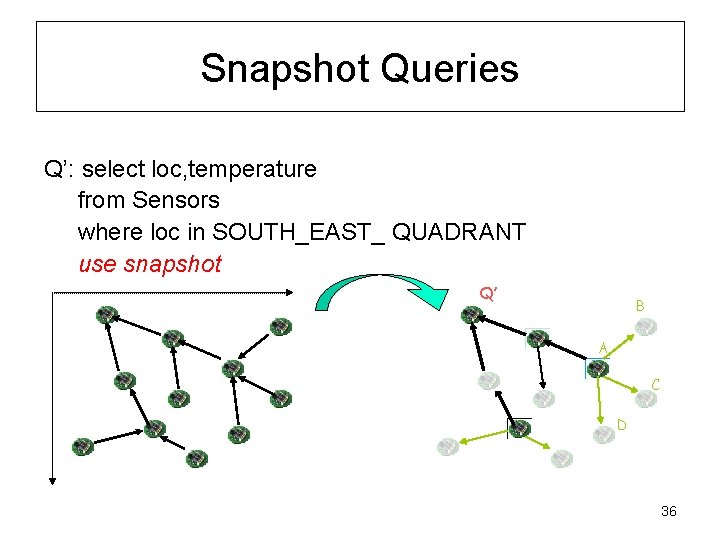

Snapshot Queries

Q’: select loc, temperature from Sensors where loc in SOUTH_EAST_QUADRANT use snapshot

(Figure: query Q’ over a network with nodes A, B, C, D.)

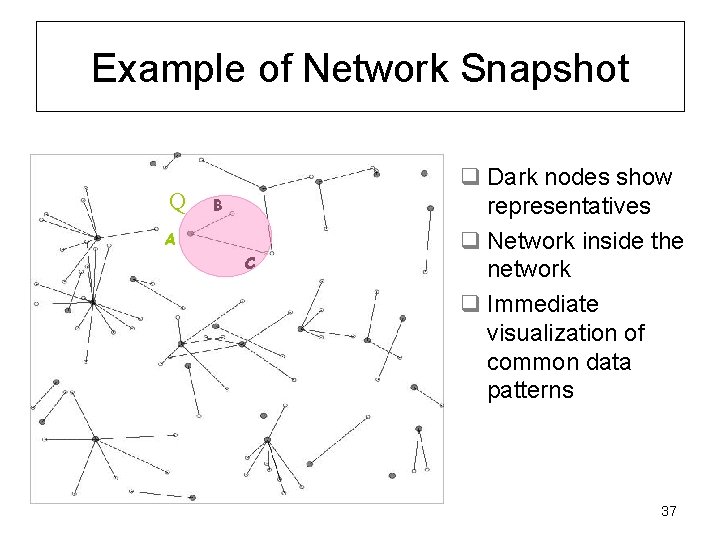

Example of Network Snapshot

- Dark nodes show representatives
- Network inside the network
- Immediate visualization of common data patterns

(Figure: query Q over a network with nodes A, B, C.)

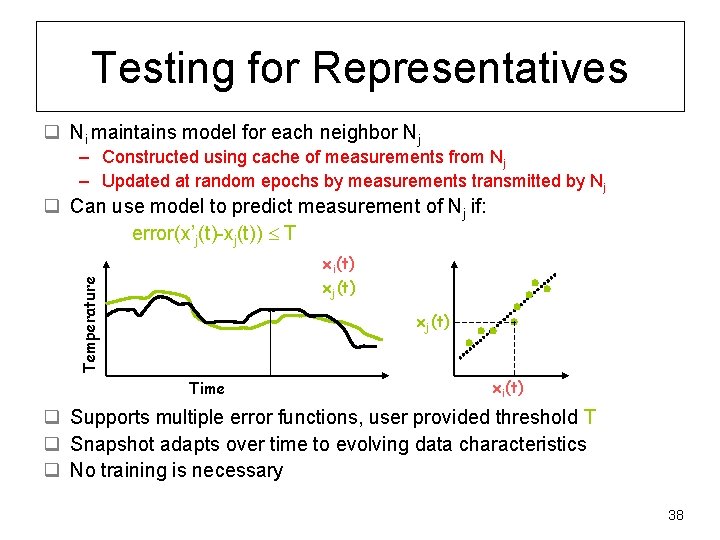

Testing for Representatives

- N_i maintains a model for each neighbor N_j
  - Constructed using a cache of measurements from N_j
  - Updated at random epochs by measurements transmitted by N_j
- Can use the model to predict the measurement of N_j if: error(x'_j(t) - x_j(t)) ≤ T
- Supports multiple error functions, user-provided threshold T
- Snapshot adapts over time to evolving data characteristics
- No training is necessary

(Figure: temperature over time for x_i(t) and x_j(t).)
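A representative test along these lines can be sketched as follows. The sketch assumes that N_i models its neighbor as a linear function of its own reading, fitted on cached pairs, and that the error function is the absolute difference; both choices, along with the names and numbers, are illustrative assumptions rather than the paper's exact procedure.

```python
from typing import List, Tuple


def fit_line(pairs: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Least-squares a, b such that x_j ~ a * x_i + b over the cached pairs."""
    n = len(pairs)
    mx = sum(p[0] for p in pairs) / n
    my = sum(p[1] for p in pairs) / n
    sxx = sum((xi - mx) ** 2 for xi, _ in pairs)
    sxy = sum((xi - mx) * (xj - my) for xi, xj in pairs)
    a = sxy / sxx if sxx else 0.0
    return a, my - a * mx


def can_represent(cache: List[Tuple[float, float]], x_i: float, x_j: float,
                  threshold: float) -> bool:
    """N_i can answer for N_j if its prediction is within the threshold T."""
    a, b = fit_line(cache)
    predicted = a * x_i + b
    return abs(predicted - x_j) <= threshold


# Cache kept at N_i: (own reading, neighbor's reading) at a few past epochs.
cache = [(20.0, 21.0), (21.0, 22.1), (22.0, 22.9), (23.0, 24.0)]
ok = can_represent(cache, x_i=24.0, x_j=25.1, threshold=0.5)
drifted = can_represent(cache, x_i=24.0, x_j=27.0, threshold=0.5)
print(ok, drifted)
```

When the test fails, as in the second call, N_j would have to answer for itself or look for another representative.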

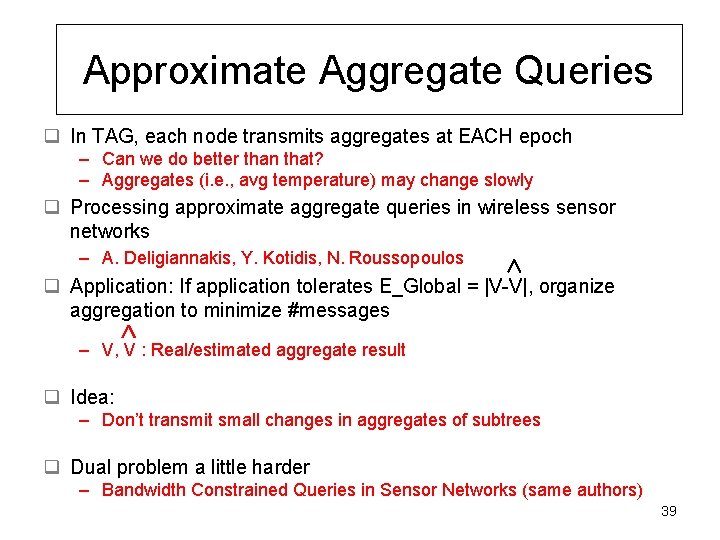

Approximate Aggregate Queries

- In TAG, each node transmits aggregates at EACH epoch
  - Can we do better than that?
  - Aggregates (e.g., avg temperature) may change slowly
- Processing approximate aggregate queries in wireless sensor networks
  - A. Deligiannakis, Y. Kotidis, N. Roussopoulos
- Application: if the application tolerates an error E_Global = |V - V̂|, organize aggregation to minimize the number of messages
  - V, V̂: real/estimated aggregate result
- Idea:
  - Don't transmit small changes in aggregates of subtrees
- Dual problem is a little harder
  - Bandwidth Constrained Queries in Sensor Networks (same authors)

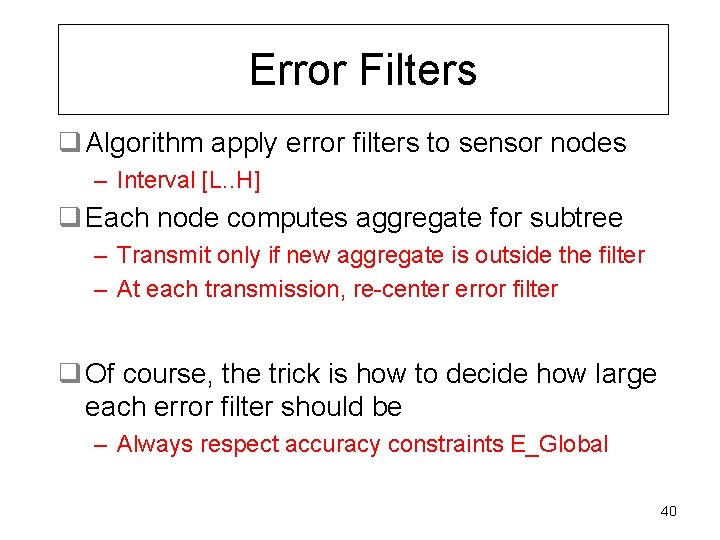

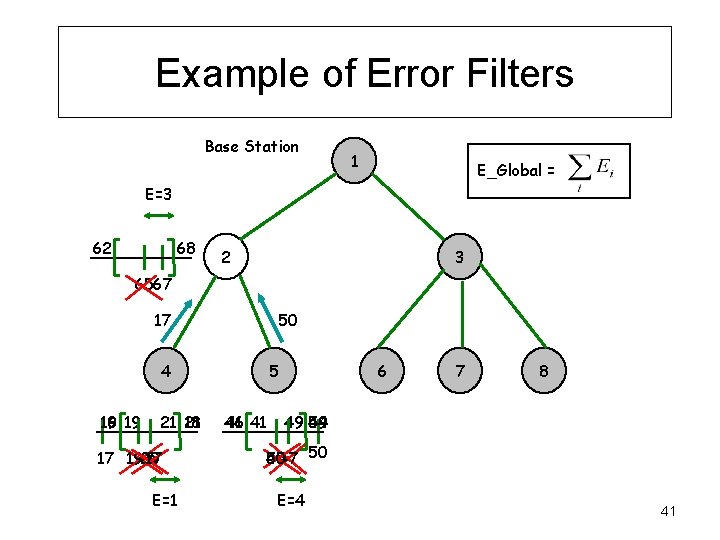

Error Filters

- The algorithm applies error filters to sensor nodes
  - Interval [L..H]
- Each node computes the aggregate for its subtree
  - Transmit only if the new aggregate is outside the filter
  - At each transmission, re-center the error filter
- Of course, the trick is how to decide how large each error filter should be
  - Always respect the accuracy constraint E_Global
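The per-node filter logic is small enough to sketch directly. The class below assumes a symmetric filter described by a center and a half-width; the class name and the sample values are illustrative.

```python
class ErrorFilter:
    """Interval [L..H] around the last transmitted aggregate."""

    def __init__(self, center: float, half_width: float) -> None:
        self.center = center
        self.half_width = half_width

    @property
    def low(self) -> float:
        return self.center - self.half_width

    @property
    def high(self) -> float:
        return self.center + self.half_width

    def should_transmit(self, aggregate: float) -> bool:
        """Suppress values inside the filter; otherwise send and re-center."""
        if self.low <= aggregate <= self.high:
            return False
        self.center = aggregate  # re-center at each transmission
        return True


f = ErrorFilter(center=20.0, half_width=1.0)
sent = [v for v in [20.5, 21.2, 20.4, 19.9] if f.should_transmit(v)]
print(sent)
```

Only two of the four aggregates leave the node in this run; the parent keeps using the last value it received, which is off by at most the filter's half-width.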

Example of Error Filters

(Figure: aggregation tree of nodes 1-8 below the base station, annotated with successive aggregate values and per-node error filters, e.g. E=3, E=1, E=4, within the E_Global budget.)

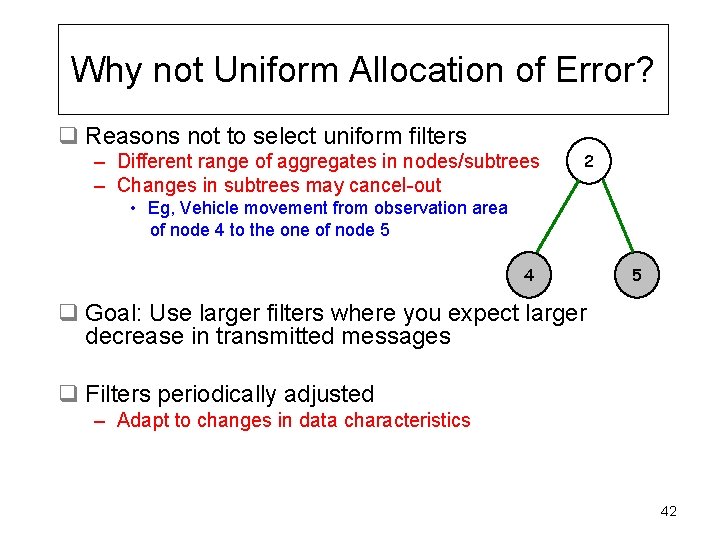

Why not Uniform Allocation of Error?

- Reasons not to select uniform filters
  - Different range of aggregates in nodes/subtrees
  - Changes in subtrees may cancel out
    - E.g., vehicle movement from the observation area of node 4 to that of node 5
- Goal: use larger filters where you expect a larger decrease in transmitted messages
- Filters periodically adjusted
  - Adapt to changes in data characteristics

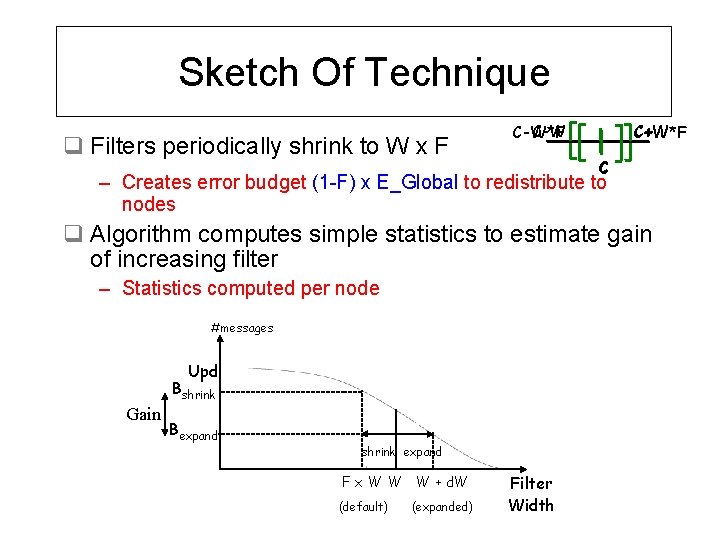

Sketch of Technique

- Filters periodically shrink to W x F, i.e. to [C - W*F, C + W*F] around center C
  - Creates an error budget (1 - F) x E_Global to redistribute to nodes
- Algorithm computes simple statistics to estimate the gain of increasing a filter
  - Statistics computed per node

(Figure: number of messages versus filter width at F x W (shrunk), W (default) and W + dW (expanded); labels: Upd, B_shrink, B_expand, Gain.)

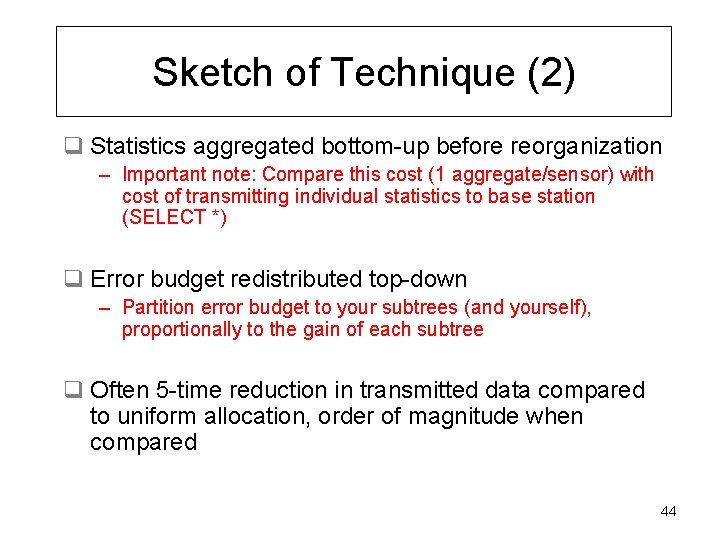

Sketch of Technique (2)

- Statistics aggregated bottom-up before reorganization
  - Important note: compare this cost (1 aggregate/sensor) with the cost of transmitting individual statistics to the base station (SELECT *)
- Error budget redistributed top-down
  - Partition the error budget to your subtrees (and yourself), proportionally to the gain of each subtree
- Often a 5-fold reduction in transmitted data compared to uniform allocation, order of magnitude when compared
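The shrink-and-redistribute step can be illustrated with a flat version of the procedure. This sketch assumes that the filter widths sum to E_Global (as for a SUM aggregate), that gains have already been estimated, and that redistribution happens in one step rather than top-down through the tree; the function name and the numbers are hypothetical.

```python
from typing import Dict


def redistribute(widths: Dict[int, float], gains: Dict[int, float],
                 shrink: float) -> Dict[int, float]:
    """Shrink every filter by factor `shrink` (F), then hand the freed
    error budget back in proportion to each node's estimated gain."""
    total = sum(widths.values())
    shrunk = {n: w * shrink for n, w in widths.items()}
    budget = total - sum(shrunk.values())      # equals (1 - F) * total
    total_gain = sum(gains.values())
    new_widths = {}
    for n, w in shrunk.items():
        if total_gain > 0:
            share = budget * gains[n] / total_gain
        else:
            share = budget / len(widths)       # no preference: equal split
        new_widths[n] = w + share
    return new_widths


# Node 5 expects the largest drop in messages from a wider filter.
widths = {4: 1.0, 5: 1.0, 6: 2.0}              # sums to E_Global = 4
gains = {4: 0.0, 5: 3.0, 6: 1.0}
new_widths = redistribute(widths, gains, shrink=0.5)
print(new_widths)
```

The total stays equal to E_Global, so the accuracy constraint is still respected while the budget moves to where it saves the most messages.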

Outline

- Sensor Nodes
  - Brief intro: parts of sensors, capabilities, constraints
- Techniques for Query Processing
  - Topologies for Data Collection
  - Data Reduction (SELECT * and aggregate queries)
  - Detecting Outliers
- Conclusions

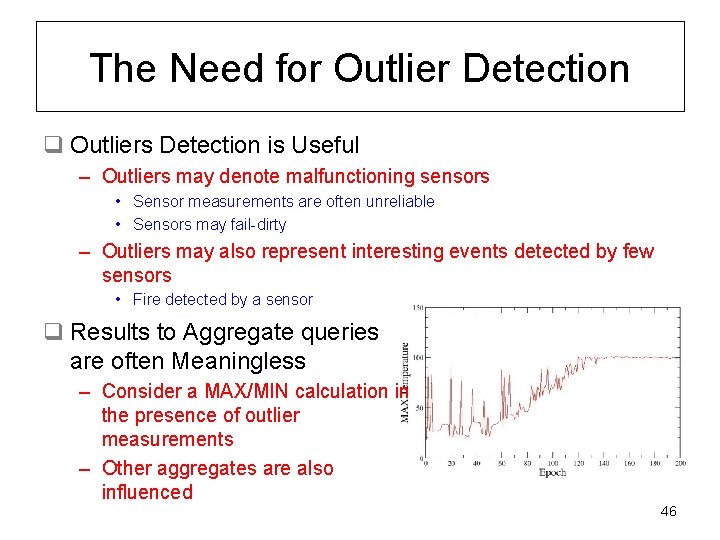

The Need for Outlier Detection

- Outlier detection is useful
  - Outliers may denote malfunctioning sensors
    - Sensor measurements are often unreliable
    - Sensors may fail-dirty
  - Outliers may also represent interesting events detected by few sensors
    - Fire detected by a sensor
- Results of aggregate queries are often meaningless
  - Consider a MAX/MIN calculation in the presence of outlier measurements
  - Other aggregates are also influenced

What do we Need?

- Goals for aggregate queries:
  - A “clean” aggregate
  - Reporting of outlier values
  - Both in a SINGLE, in-network framework

What to Consider as an Outlier?

- Need to support several similarity metrics
- Also consider characteristics of the monitored quantity
  - Measurements may depend on distance from the source (e.g., noise, heat)
  - Simply relying on values for testing similarity between sensors is not enough; comparing recent trends may be more appropriate
- Provide for a user-specified “minimum support”
  - How many other sensors need to be similar to you, so that you are not considered an outlier?

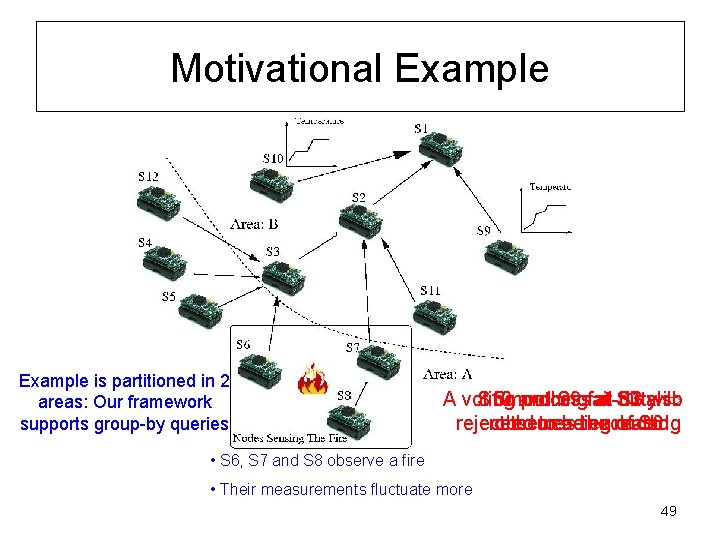

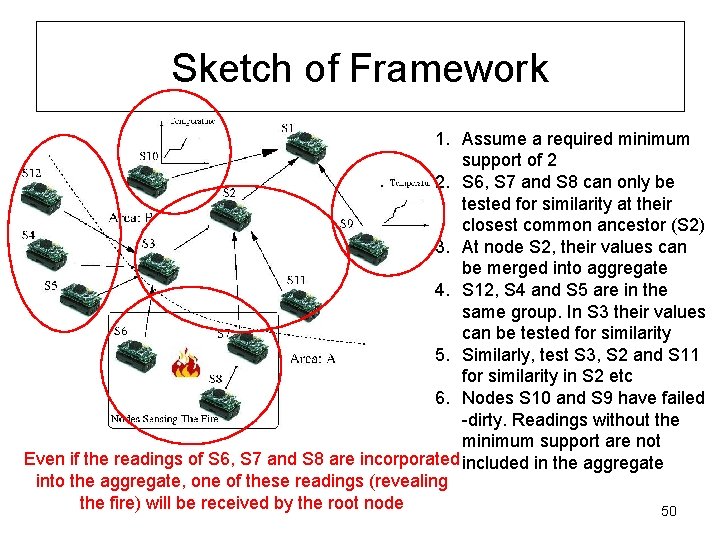

Motivational Example

- The network is partitioned in 2 areas: our framework supports group-by queries
- S10 and S9 fail-dirty: their readings will need to be excluded
- Smoothing obscures the reading of S6; a voting process at S3 will also reject the reading of S6
- S6, S7 and S8 observe a fire
  - Their measurements fluctuate more

Sketch of Framework

1. Assume a required minimum support of 2
2. S6, S7 and S8 can only be tested for similarity at their closest common ancestor (S2)
3. At node S2, their values can be merged into the aggregate
4. S12, S4 and S5 are in the same group. In S3 their values can be tested for similarity
5. Similarly, test S3, S2 and S11 for similarity in S2, etc.
6. Nodes S10 and S9 have failed-dirty. Readings without the minimum support are not included in the aggregate

Even if the readings of S6, S7 and S8 are incorporated into the aggregate, one of these readings (revealing the fire) will be received by the root node

Framework Features

- Performs similarity tests over the latest K readings of sensors
  - Can plug in similarity functions with minimal cost
- Allows for minimum support
- GROUP-BY support
  - Grouping based on latest measurement, OR static predicates (area, id, etc.)
- Can limit tests within each group using a CONSTRAIN TEST clause
  - Semantic information (e.g., location) could be useful here
  - E.g., only perform tests between sensors on the same floor
- Collection tree periodically reorganized
  - Move towards places where you will find witnesses, outliers
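The minimum-support rule can be sketched in a centralized form. The real framework runs these tests in-network at common ancestors; the version below only shows the decision rule, and the similarity function (mean absolute difference over the latest K readings), the threshold and the readings are assumptions made for the example.

```python
from typing import Callable, Dict, List, Sequence

Window = Sequence[float]  # latest K readings of one sensor


def mean_abs_diff_similar(a: Window, b: Window, threshold: float = 5.0) -> bool:
    """Pluggable similarity test; here: mean absolute difference <= threshold."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a) <= threshold


def find_outliers(windows: Dict[str, Window],
                  similar: Callable[[Window, Window], bool],
                  min_support: int) -> List[str]:
    """A sensor is an outlier if fewer than `min_support` other sensors
    (its witnesses) have similar recent readings."""
    outliers = []
    for s, w in windows.items():
        support = sum(1 for o, ow in windows.items() if o != s and similar(w, ow))
        if support < min_support:
            outliers.append(s)
    return outliers


windows = {
    "S4": [20.0, 21.0, 20.0], "S5": [21.0, 21.0, 19.0], "S12": [20.0, 22.0, 20.0],
    "S6": [60.0, 75.0, 68.0], "S7": [62.0, 73.0, 70.0], "S8": [58.0, 77.0, 66.0],
    "S9": [0.0, 0.0, 0.0], "S10": [95.0, 3.0, 40.0],   # fail-dirty sensors
}
outliers = find_outliers(windows, mean_abs_diff_similar, min_support=2)
print(outliers)
```

The three sensors that observe the fire support each other and are therefore kept, while the two fail-dirty sensors find no witnesses.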

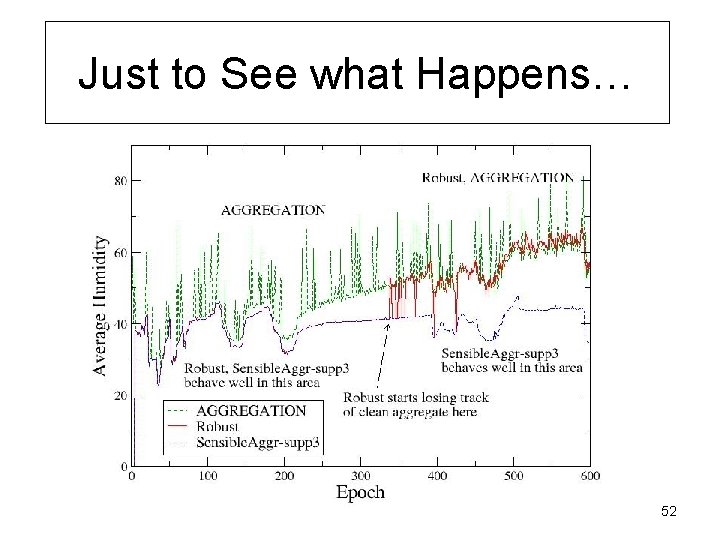

Just to See what Happens…

Some More Notable Approaches

- Cluster-based communication
  - LEACH, PEGASIS, HEED
  - Goal: organize sensors into clusters
  - Intra-cluster and inter-cluster communication
  - Overhead for clusterheads, so probabilistic election and rotation
- Optimizing scheduling
  - Nodes have different loads to transmit
  - Can determine minimal times to transmit/listen based on worst case estimates of time to transmit/link
  - See: “Workload-aware Optimization of Query Routing Trees in Wireless Sensor Networks” (MicroPulse) by P. Andreou, D. Zeinalipour-Yazti, P. Chrysanthis, G. Samaras

Some More Notable Approaches (2)

- Sensor localization
  - Few sensor nodes are actually GPS-enabled
    - The Crossbow catalog lists only 1 such data acquisition board (MTS 420)
  - Several algorithms for sensor localization
    - Option 1: sensors are not localized, but there exist landmarks with GPS knowledge of their own position
    - Option 2: mobile sensors can localize even without any infrastructure, if (a) the sensors are free to move, and (b) they have a common reference point (e.g., direction of North)
      - GPS-Free Node Localization in Mobile Wireless Sensor Networks, by Huseyin Akcan, Vassil Kriakov, Herve Bronnimann, Alex Delis
- Duplicate-insensitive sketches for aggregate queries
  - Minimize the impact of data loss
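As one example of a duplicate-insensitive sketch, the following is a minimal Flajolet-Martin style counting sketch with a single bitmap. It is a simplified illustration (practical systems average many bitmaps), and the choice of hash function, the 32-bit width and the function names are assumptions for the example.

```python
import hashlib
from typing import Iterable

BITS = 32
PHI = 0.77351  # Flajolet-Martin correction constant


def _lowest_set_bit(item: object) -> int:
    """Index of the lowest set bit of a 32-bit hash of the item."""
    digest = hashlib.sha256(repr(item).encode()).digest()
    h = int.from_bytes(digest[:4], "big")
    if h == 0:
        return BITS - 1
    return (h & -h).bit_length() - 1


def sketch(items: Iterable[object]) -> int:
    """Bitmap with one bit set per observed hash pattern."""
    bitmap = 0
    for it in items:
        bitmap |= 1 << _lowest_set_bit(it)
    return bitmap


def merge(a: int, b: int) -> int:
    """Bitwise OR: receiving the same data twice changes nothing."""
    return a | b


def estimate(bitmap: int) -> float:
    """Rough distinct count from the position of the first zero bit."""
    r = 0
    while bitmap & (1 << r):
        r += 1
    return 2 ** r / PHI


a = sketch(range(0, 100))      # readings seen along one path
b = sketch(range(50, 150))     # overlapping readings seen along another path
combined = merge(a, b)
print(estimate(combined))
```

Because merging is a bitwise OR, a partial result that reaches the base station over several paths is counted once, which is what makes multi-path routing safe against message loss.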

Conclusions

- Presented several query processing techniques
  - All aim to process data in-network
    - Crucial for network lifetime
  - Techniques for extraction of historical measurements, or online querying (point, range, aggregate, group-by)
  - All data reduction techniques presented are easily tunable
    - Given desired error/accuracy, minimize bandwidth consumption; or
    - Given bandwidth consumption, minimize error of produced results
    - Both useful to satisfy different user requirements
  - Important to properly schedule nodes
    - Increases available time to sleep, decreases the energy drain
    - If possible, avoid creating a different schedule for each query
      - Too many conflicts, sensor continuously working…
