Query Operations, Relevance Feedback, and Personalization
CSC 575 Intelligent Information Retrieval

Topics
- Query Expansion
  - Thesaurus based
  - Automatic global and local analysis
  - Word embeddings
- Relevance Feedback via Query Modification
- Information Filtering through Personalization
  - Collaborative and Content-Based Recommender Systems
  - Social Recommendation
  - Interface Agents and Agents for Information Filtering

Thesaurus-Based Query Expansion
- For each term t in a query, expand the query with synonyms and related words of t from the thesaurus.
- May weight added terms less than original query terms.
- Generally increases recall.
- May significantly decrease precision, particularly with ambiguous terms.
  - “interest rate” → “interest rate fascinate evaluate”
- WordNet
  - A more detailed database of semantic relationships between English words.
  - Developed by the cognitive psychologist George Miller and a team at Princeton University.
  - About 144,000 English words.
  - Nouns, adjectives, verbs, and adverbs grouped into about 109,000 synonym sets called synsets.

WordNet Synset Relationships
- Antonym: front → back
- Attribute: benevolence → good (noun to adjective)
- Pertainym: alphabetical → alphabet (adjective to noun)
- Similar: unquestioning → absolute
- Cause: kill → die
- Entailment: breathe → inhale
- Holonym: chapter → text (part-of)
- Meronym: computer → cpu (whole-of)
- Hyponym: tree → plant (specialization)
- Hypernym: fruit → apple (generalization)

WordNet Query Expansion
- Add synonyms in the same synset.
- Add hyponyms to add specialized terms.
- Add hypernyms to generalize a query.
- Add other related terms to expand the query.
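The expansion rule from the thesaurus slides (add related terms, weighted less than the original query terms) can be sketched as follows. This is a minimal illustration, not a WordNet client: the `THESAURUS` dictionary, the `expand` function, and the added-term weight of 0.5 are all assumptions made for the example.

```python
# Hypothetical toy thesaurus; a real system would look terms up in a
# resource such as WordNet instead of a hand-written dictionary.
THESAURUS = {
    "interest": ["fascinate"],
    "rate": ["evaluate"],
}


def expand(query_terms, thesaurus, added_weight=0.5):
    """Return {term: weight}: original terms get 1.0, added terms get less."""
    weights = {term: 1.0 for term in query_terms}
    for term in query_terms:
        for related in thesaurus.get(term, []):
            # setdefault keeps the weight of a term that is already present
            weights.setdefault(related, added_weight)
    return weights


expanded = expand(["interest", "rate"], THESAURUS)
print(expanded)
```

The example reproduces the "interest rate" case: both added terms come from the wrong sense of the query words, which is how ambiguous terms hurt precision.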

Statistical Thesaurus
- Problems with human-developed thesauri
  - Existing ones are not easily available in all languages.
  - Human thesauri are limited in the type and range of synonymy and semantic relations they represent.
  - Semantically related terms can be discovered from statistical analysis of corpora.
- Automatic Global Analysis
  - Determine term similarity through a pre-computed statistical analysis of the complete corpus.
  - Compute association matrices which quantify term correlations in terms of how frequently they co-occur.
  - Expand queries with the statistically most similar terms.

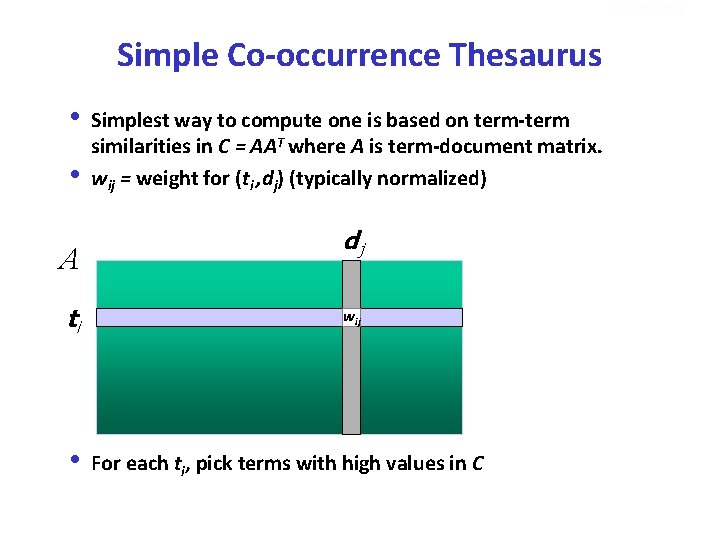

Simple Co-occurrence Thesaurus (Sec. 9.2.3)
- The simplest way to compute one is based on term-term similarities in $C = AA^T$, where $A$ is the term-document matrix.
- $w_{ij}$ = weight for $(t_i, d_j)$ (typically normalized).
- For each $t_i$, pick the terms with high values in $C$.

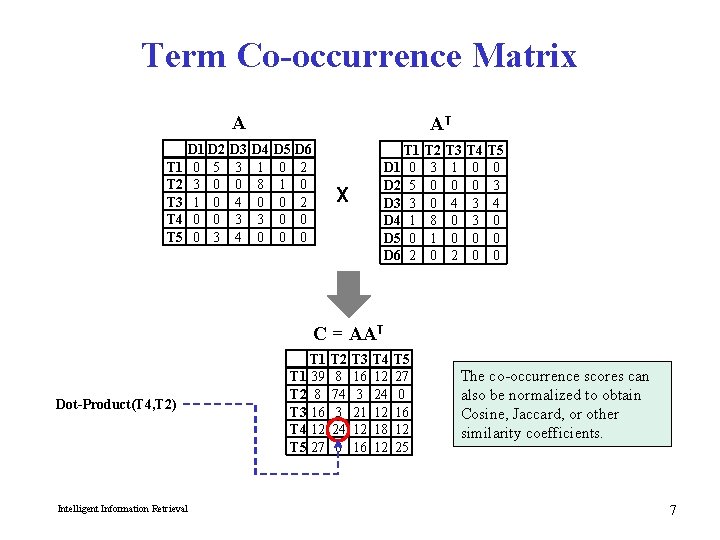

Term Co-occurrence Matrix

Term-document matrix $A$ (rows are terms, columns are documents):

|    | D1 | D2 | D3 | D4 | D5 | D6 |
|----|----|----|----|----|----|----|
| T1 | 0  | 5  | 3  | 1  | 0  | 2  |
| T2 | 3  | 0  | 0  | 8  | 1  | 0  |
| T3 | 1  | 0  | 4  | 0  | 0  | 2  |
| T4 | 0  | 0  | 3  | 3  | 0  | 0  |
| T5 | 0  | 3  | 4  | 0  | 0  | 0  |

$C = AA^T$ (each entry is the dot product of two term rows; for example, Dot-Product(T4, T2) = 24):

|    | T1 | T2 | T3 | T4 | T5 |
|----|----|----|----|----|----|
| T1 | 39 | 8  | 16 | 12 | 27 |
| T2 | 8  | 74 | 3  | 24 | 0  |
| T3 | 16 | 3  | 21 | 12 | 16 |
| T4 | 12 | 24 | 12 | 18 | 12 |
| T5 | 27 | 0  | 16 | 12 | 25 |

The co-occurrence scores can also be normalized to obtain Cosine, Jaccard, or other similarity coefficients.

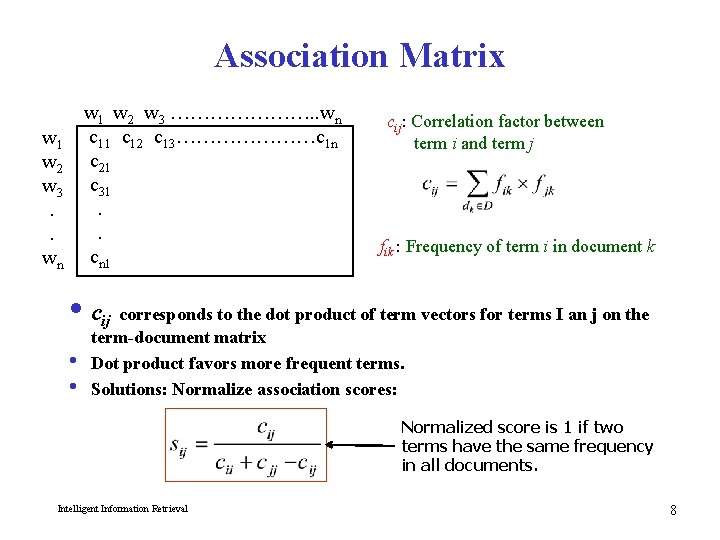

Association Matrix
- The matrix has one row and one column for each term $w_1, \dots, w_n$; entry $c_{ij}$ is the correlation factor between term $i$ and term $j$.
- $f_{ik}$: frequency of term $i$ in document $k$.
- $c_{ij}$ corresponds to the dot product of the term vectors for terms $i$ and $j$ in the term-document matrix: $c_{ij} = \sum_k f_{ik} f_{jk}$.
- The dot product favors more frequent terms.
- Solution: normalize the association scores. The normalized score is 1 if two terms have the same frequency in all documents.
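The slide's normalization formula sits in an image and is not recoverable from the text. A commonly used normalization that has the stated property (score 1 when two terms have identical frequencies in every document) is $s_{ij} = c_{ij} / (c_{ii} + c_{jj} - c_{ij})$; the sketch below assumes that form. The frequency table and function names are hypothetical.

```python
def association(freqs):
    """c_ij = sum over documents k of f_ik * f_jk (dot product of term rows)."""
    return [[sum(x * y for x, y in zip(fi, fj)) for fj in freqs] for fi in freqs]


def normalize(c):
    """s_ij = c_ij / (c_ii + c_jj - c_ij); an assumed normalization, see above."""
    n = len(c)
    return [[c[i][j] / (c[i][i] + c[j][j] - c[i][j]) for j in range(n)]
            for i in range(n)]


# Hypothetical term frequencies: rows are terms, columns are documents.
freqs = [
    [2, 0, 1],  # term 0
    [2, 0, 1],  # term 1, same frequencies as term 0
    [0, 3, 1],  # term 2
]
s = normalize(association(freqs))
print(s[0][1])            # terms with identical frequency profiles
print(round(s[0][2], 3))  # terms that rarely co-occur
```

Unlike the raw dot product, the normalized score does not grow just because a term is frequent, which is the problem the slide raises.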

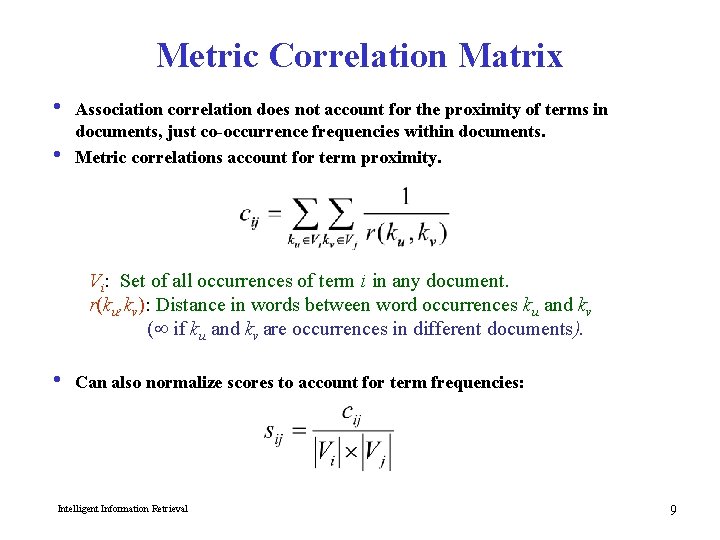

Metric Correlation Matrix
- Association correlation does not account for the proximity of terms in documents, only co-occurrence frequencies within documents.
- Metric correlations account for term proximity.
  - $V_i$: set of all occurrences of term $i$ in any document.
  - $r(k_u, k_v)$: distance in words between word occurrences $k_u$ and $k_v$ ($\infty$ if $k_u$ and $k_v$ are occurrences in different documents).
- Scores can also be normalized to account for term frequencies.
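The metric correlation formula itself is in an image. The sketch below assumes the usual definition, $c_{ij} = \sum_{k_u \in V_i} \sum_{k_v \in V_j} 1 / r(k_u, k_v)$, so that occurrences in different documents (distance $\infty$) contribute nothing. The tokenized documents and the function name are hypothetical.

```python
def metric_correlation(docs, term_i, term_j):
    """Sum 1/distance over all pairs of occurrences in the same document."""
    total = 0.0
    for tokens in docs:
        positions_i = [p for p, w in enumerate(tokens) if w == term_i]
        positions_j = [p for p, w in enumerate(tokens) if w == term_j]
        for pi in positions_i:
            for pj in positions_j:
                if pi != pj:  # skip an occurrence paired with itself
                    total += 1.0 / abs(pi - pj)
    return total


# Hypothetical tokenized documents.
docs = [
    ["apple", "computer", "store"],
    ["apple", "pie", "and", "computer"],
]
print(metric_correlation(docs, "apple", "computer"))  # 1/1 + 1/3
print(metric_correlation(docs, "apple", "pie"))       # 1/1
```

Pairs from different documents are never compared, which is the code equivalent of treating their distance as infinite.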

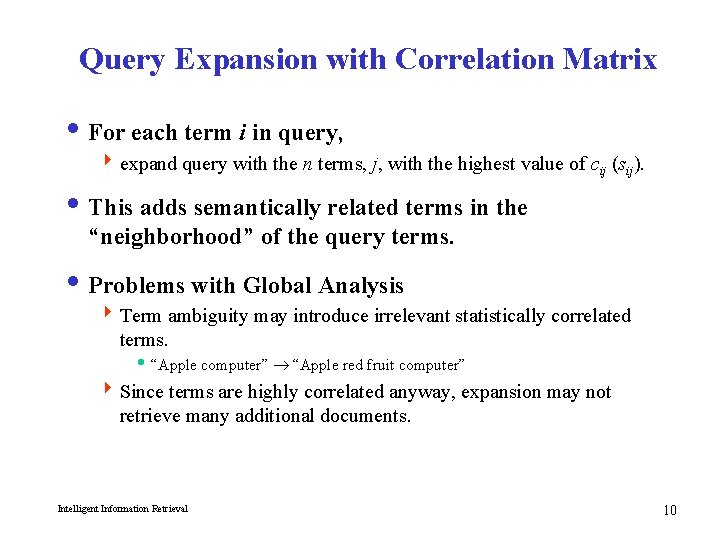

Query Expansion with Correlation Matrix
- For each term $i$ in the query, expand the query with the $n$ terms $j$ with the highest value of $c_{ij}$ ($s_{ij}$).
- This adds semantically related terms in the “neighborhood” of the query terms.
- Problems with Global Analysis
  - Term ambiguity may introduce irrelevant statistically correlated terms.
    - “Apple computer” → “Apple red fruit computer”
  - Since terms are highly correlated anyway, expansion may not retrieve many additional documents.

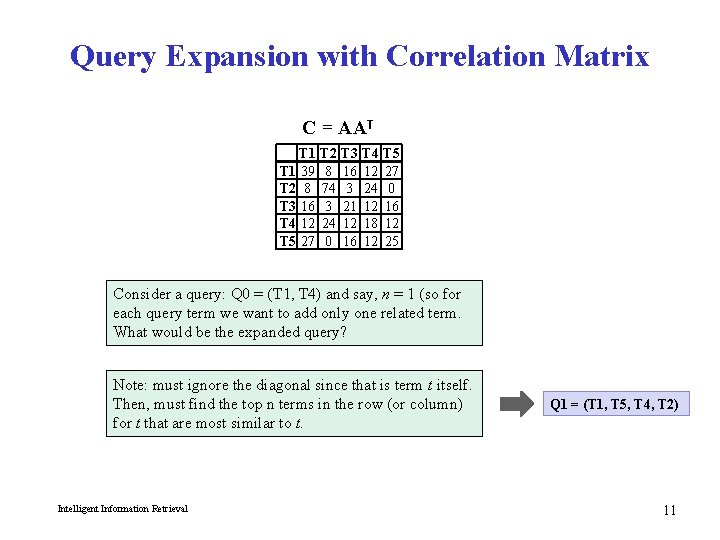

Query Expansion with Correlation Matrix

$C = AA^T$:

|    | T1 | T2 | T3 | T4 | T5 |
|----|----|----|----|----|----|
| T1 | 39 | 8  | 16 | 12 | 27 |
| T2 | 8  | 74 | 3  | 24 | 0  |
| T3 | 16 | 3  | 21 | 12 | 16 |
| T4 | 12 | 24 | 12 | 18 | 12 |
| T5 | 27 | 0  | 16 | 12 | 25 |

Consider a query $Q_0 = (T_1, T_4)$ and say $n = 1$ (so for each query term we want to add only one related term). What would be the expanded query?

Note: ignore the diagonal, since that is term t itself. Then find the top n terms in the row (or column) for t that are most similar to t.

$Q_1 = (T_1, T_5, T_4, T_2)$
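The worked example can be checked with a short script. This is a sketch using the term-document weights from the co-occurrence slide; the function names `cooccurrence` and `expand_query` are made up for the illustration.

```python
# Term-document weights from the slides (rows: T1..T5, columns: D1..D6).
A = [
    [0, 5, 3, 1, 0, 2],  # T1
    [3, 0, 0, 8, 1, 0],  # T2
    [1, 0, 4, 0, 0, 2],  # T3
    [0, 0, 3, 3, 0, 0],  # T4
    [0, 3, 4, 0, 0, 0],  # T5
]
TERMS = ["T1", "T2", "T3", "T4", "T5"]


def cooccurrence(a):
    """Return C = A A^T, the term-term dot-product matrix."""
    return [[sum(x * y for x, y in zip(ri, rj)) for rj in a] for ri in a]


def expand_query(query, c, terms, n=1):
    """After each query term, add its n most correlated terms (diagonal ignored)."""
    expanded = []
    for term in query:
        i = terms.index(term)
        candidates = [j for j in range(len(terms)) if j != i]
        candidates.sort(key=lambda j: c[i][j], reverse=True)
        for name in [term] + [terms[j] for j in candidates[:n]]:
            if name not in expanded:
                expanded.append(name)
    return expanded


C = cooccurrence(A)
Q1 = expand_query(["T1", "T4"], C, TERMS, n=1)
print(C[0])  # row of C for T1
print(Q1)    # expanded query
```

T5 has the largest off-diagonal value in the row for T1 (27) and T2 the largest in the row for T4 (24), which gives the expanded query shown on the slide.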

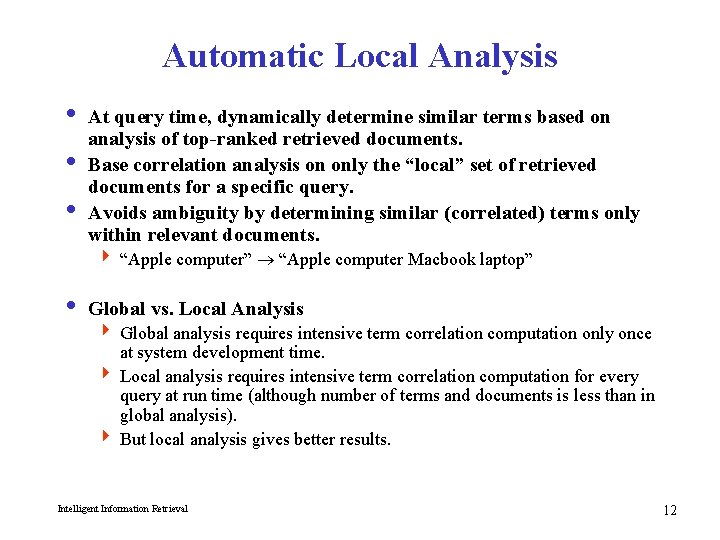

Automatic Local Analysis
- At query time, dynamically determine similar terms based on analysis of the top-ranked retrieved documents.
- Base the correlation analysis on only the “local” set of retrieved documents for a specific query.
- Avoids ambiguity by determining similar (correlated) terms only within relevant documents.
  - “Apple computer” → “Apple computer Macbook laptop”
- Global vs. Local Analysis
  - Global analysis requires intensive term correlation computation only once, at system development time.
  - Local analysis requires intensive term correlation computation for every query at run time (although the number of terms and documents is smaller than in global analysis).
  - But local analysis gives better results.

Global Analysis Refinements
- Only expand the query with terms that are similar to all terms in the query.
  - “fruit” is not added to “Apple computer” since it is far from “computer.”
  - “fruit” is added to “apple pie” since “fruit” is close to both “apple” and “pie.”
- Use more sophisticated term weights (instead of just frequency) when computing term correlations.

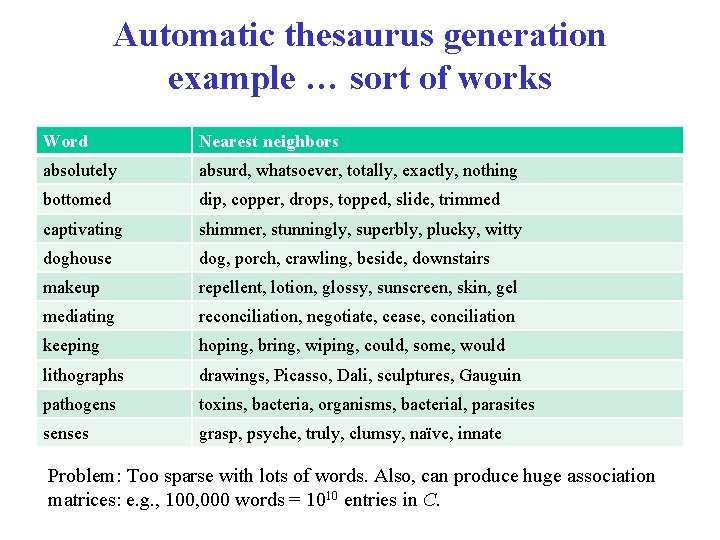

Automatic thesaurus generation example … sort of works

| Word | Nearest neighbors |
|------|-------------------|
| absolutely | absurd, whatsoever, totally, exactly, nothing |
| bottomed | dip, copper, drops, topped, slide, trimmed |
| captivating | shimmer, stunningly, superbly, plucky, witty |
| doghouse | dog, porch, crawling, beside, downstairs |
| makeup | repellent, lotion, glossy, sunscreen, skin, gel |
| mediating | reconciliation, negotiate, cease, conciliation |
| keeping | hoping, bring, wiping, could, some, would |
| lithographs | drawings, Picasso, Dali, sculptures, Gauguin |
| pathogens | toxins, bacteria, organisms, bacterial, parasites |
| senses | grasp, psyche, truly, clumsy, naïve, innate |

Problem: too sparse with lots of words. It can also produce huge association matrices: e.g., 100,000 words = $10^{10}$ entries in $C$.

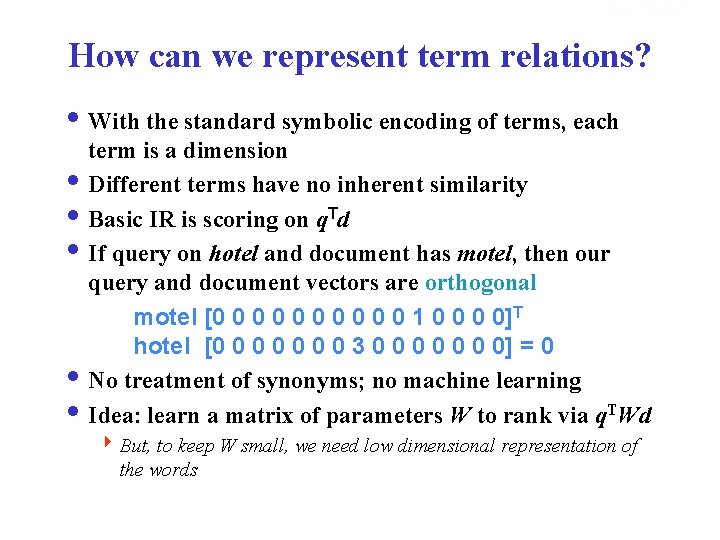

How can we represent term relations? (Sec. 9.2.2)
- With the standard symbolic encoding of terms, each term is a dimension.
- Different terms have no inherent similarity.
- Basic IR is scoring on $q^T d$.
- If the query is on hotel and the document has motel, then our query and document vectors are orthogonal:
  - motel $[0\ 0\ 0\ 0\ 0\ 1\ 0\ 0\ 0]^T$ · hotel $[0\ 0\ 0\ 0\ 3\ 0\ 0\ 0\ 0]$ = 0
- No treatment of synonyms; no machine learning.
- Idea: learn a matrix of parameters $W$ to rank via $q^T W d$.
  - But, to keep $W$ small, we need a low-dimensional representation of the words.

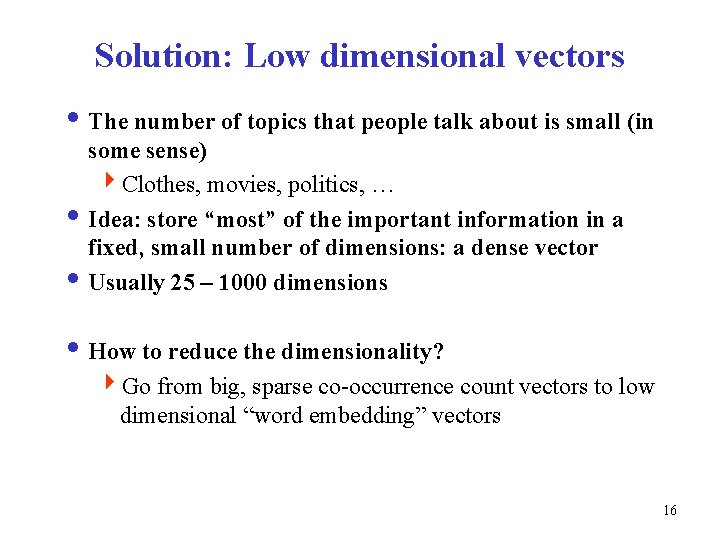

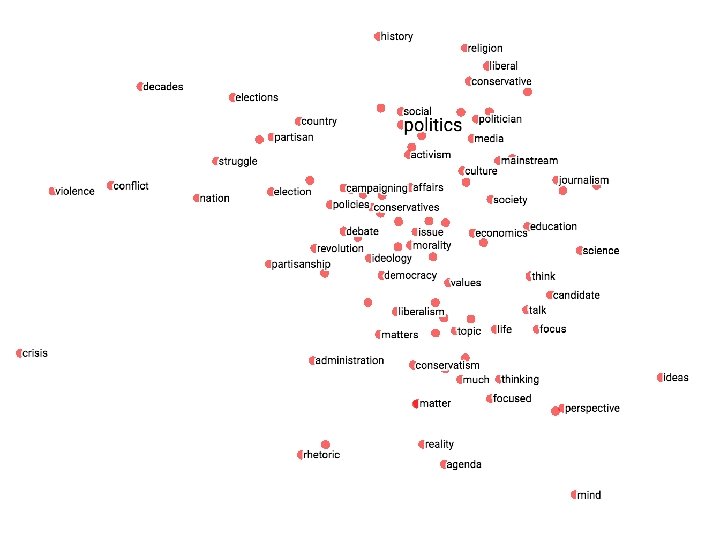

Solution: Low dimensional vectors
- The number of topics that people talk about is small (in some sense).
  - Clothes, movies, politics, …
- Idea: store “most” of the important information in a fixed, small number of dimensions: a dense vector.
- Usually 25 – 1000 dimensions.
- How to reduce the dimensionality?
  - Go from big, sparse co-occurrence count vectors to low dimensional “word embedding” vectors.

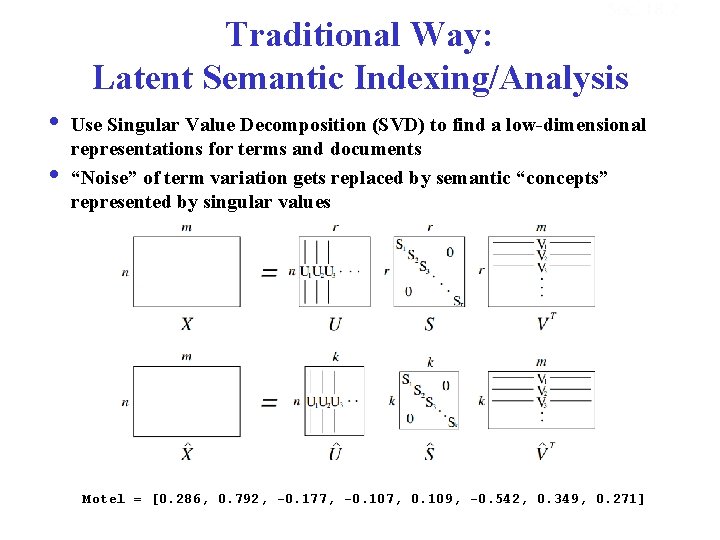

Traditional Way: Latent Semantic Indexing/Analysis (Sec. 18.2)
- Use Singular Value Decomposition (SVD) to find low-dimensional representations for terms and documents.
- The “noise” of term variation gets replaced by semantic “concepts” represented by singular values.
- Example: Motel = [0.286, 0.792, -0.177, -0.107, 0.109, -0.542, 0.349, 0.271]

How can we represent term relations? (Sec. 9.2.2)
- Alternatively, we can directly learn word relations.
- Learn a dense low-dimensional representation of a word in $d$ dimensions such that dot products $u^T v$ express word similarity.
- We could still include a “translation” matrix between vocabularies (e.g., cross-language): $u^T W v$.
  - But now $W$ is small!
- Supervised Semantic Indexing (Bai et al., Journal of Information Retrieval, 2009) shows successful use of learning $W$ for information retrieval.

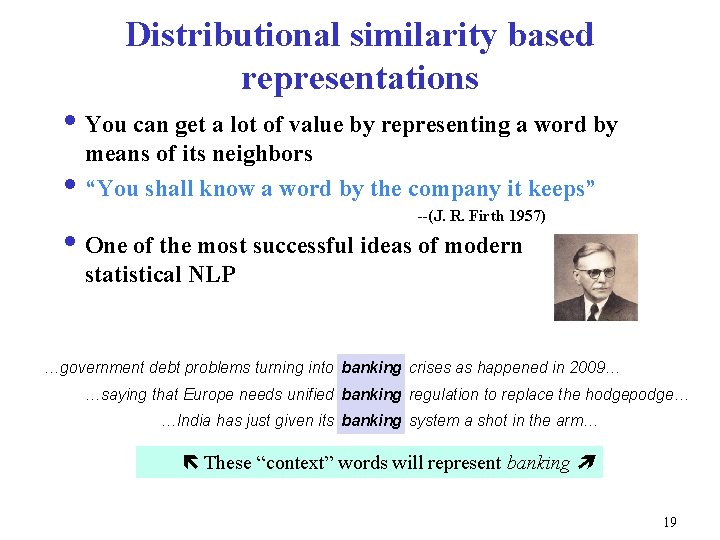

Distributional similarity based representations
- You can get a lot of value by representing a word by means of its neighbors.
- “You shall know a word by the company it keeps” (J. R. Firth 1957)
- One of the most successful ideas of modern statistical NLP.

Example contexts for the word banking:
- …government debt problems turning into banking crises as happened in 2009…
- …saying that Europe needs unified banking regulation to replace the hodgepodge…
- …India has just given its banking system a shot in the arm…

These “context” words will represent banking.

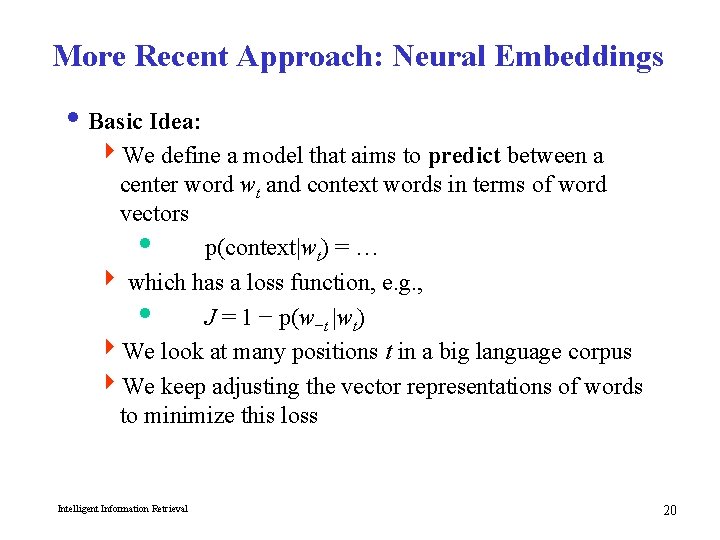

More Recent Approach: Neural Embeddings
- Basic idea:
  - We define a model that aims to predict between a center word $w_t$ and context words in terms of word vectors: $p(\text{context} \mid w_t) = \dots$
  - which has a loss function, e.g., $J = 1 - p(w_{-t} \mid w_t)$
  - We look at many positions $t$ in a big language corpus.
  - We keep adjusting the vector representations of words to minimize this loss.

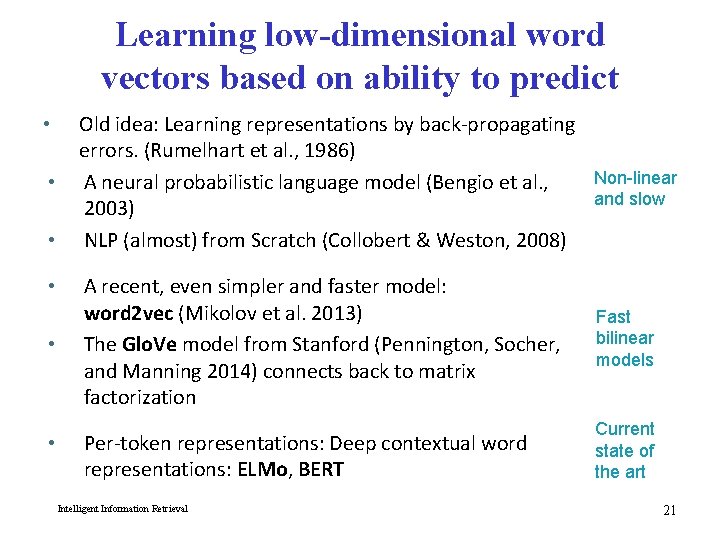

Learning low-dimensional word vectors based on ability to predict
- Old idea: Learning representations by back-propagating errors (Rumelhart et al., 1986)
- Non-linear and slow:
  - A neural probabilistic language model (Bengio et al., 2003)
  - NLP (almost) from Scratch (Collobert & Weston, 2008)
- Fast bilinear models:
  - A recent, even simpler and faster model: word2vec (Mikolov et al. 2013)
  - The GloVe model from Stanford (Pennington, Socher, and Manning 2014) connects back to matrix factorization
- Current state of the art:
  - Per-token representations: deep contextual word representations (ELMo, BERT)

![Word2vec is a family of algorithms (Mikolov et al. 2013)](http://slidetodoc.com/presentation_image_h2/4dfa42c673f692790182a81f061e2233/image-22.jpg)

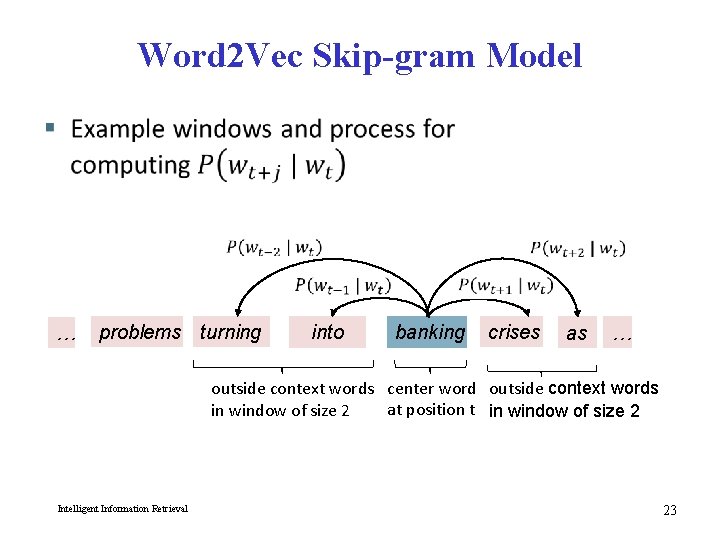

Word2vec is a family of algorithms [Mikolov et al. 2013]

Idea: predict between every word and its context words!

Two algorithms:
1. Skip-grams (SG): predict context words given the target (position independent)
2. Continuous Bag of Words (CBOW): predict the target word from a bag-of-words context

Word2Vec Skip-gram Model

… problems turning into banking crises as …

- Center word at position t: banking
- Outside context words in a window of size 2: “turning into” (left) and “crises as” (right)
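To make the window idea concrete, the sketch below lists the (center word, context word) pairs that a skip-gram model would be trained on for the slide's phrase. It only generates training pairs and does not train any vectors; the function name `skipgram_pairs` is an assumption for the example.

```python
def skipgram_pairs(tokens, window=2):
    """Return (center, context) pairs for every position t in the token list."""
    pairs = []
    for t, center in enumerate(tokens):
        lo = max(0, t - window)
        hi = min(len(tokens), t + window + 1)
        for pos in range(lo, hi):
            if pos != t:  # the center word is not its own context
                pairs.append((center, tokens[pos]))
    return pairs


sentence = "problems turning into banking crises as".split()
pairs = skipgram_pairs(sentence, window=2)
banking_contexts = [context for center, context in pairs if center == "banking"]
print(banking_contexts)
```

With a window of size 2, the center word "banking" is paired with the two words on each side, matching the slide's picture.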

![Word2vec is a family of algorithms (Mikolov et al. 2013)](http://slidetodoc.com/presentation_image_h2/4dfa42c673f692790182a81f061e2233/image-24.jpg)


Skip-gram

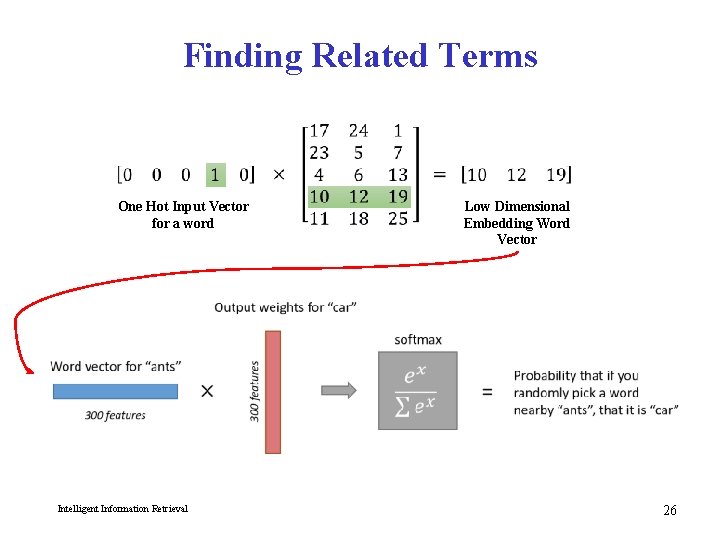

Finding Related Terms

[Figure: a one-hot input vector for a word is mapped to a low-dimensional embedding (word vector)]

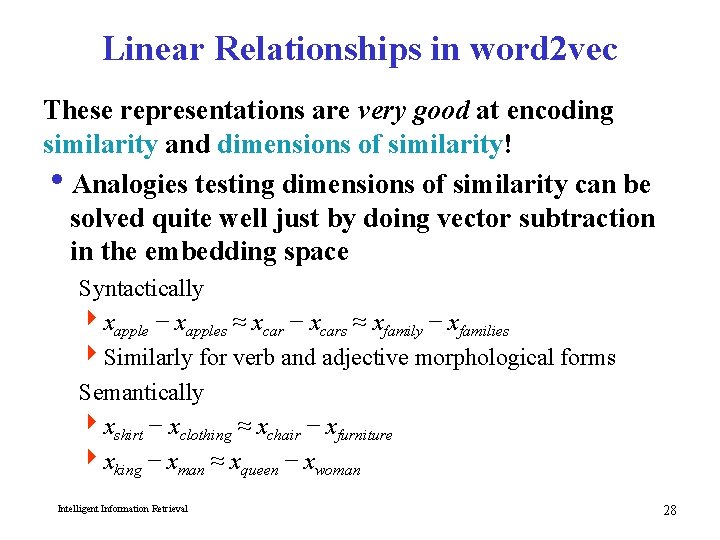

Linear Relationships in word2vec

These representations are very good at encoding similarity and dimensions of similarity!
- Analogies testing dimensions of similarity can be solved quite well just by doing vector subtraction in the embedding space.
- Syntactically
  - $x_{apple} - x_{apples} \approx x_{car} - x_{cars} \approx x_{family} - x_{families}$
  - Similarly for verb and adjective morphological forms
- Semantically
  - $x_{shirt} - x_{clothing} \approx x_{chair} - x_{furniture}$
  - $x_{king} - x_{man} \approx x_{queen} - x_{woman}$

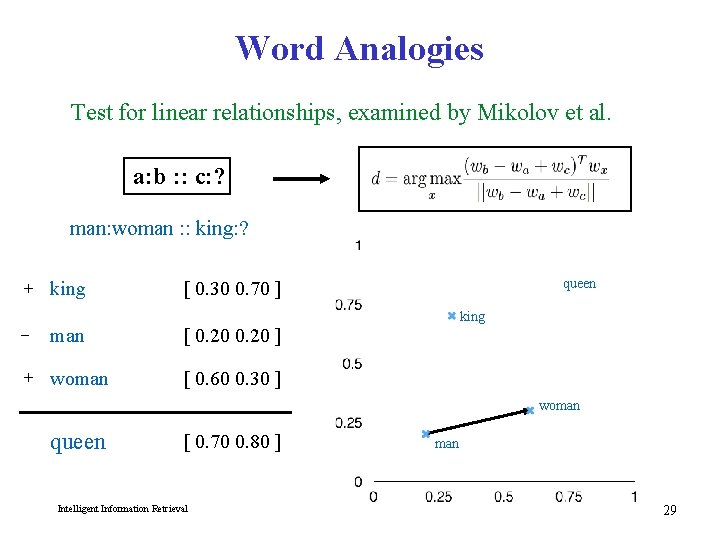

Word Analogies

Test for linear relationships, examined by Mikolov et al.
- a : b :: c : ?
- man : woman :: king : ?
- king [0.30 0.70] − man [0.20 0.20] + woman [0.60 0.30] = queen [0.70 0.80]
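The analogy arithmetic can be reproduced with the two-dimensional toy vectors from the slide. In this sketch the second component of `man` is taken to be 0.20, the value that makes the slide's sum come out to queen; the `prince` vector is a hypothetical distractor added so that the nearest-neighbor step has something to choose between.

```python
from math import sqrt


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v)))


vectors = {
    "king": [0.30, 0.70],
    "man": [0.20, 0.20],     # second component assumed, see above
    "woman": [0.60, 0.30],
    "queen": [0.70, 0.80],
    "prince": [0.35, 0.60],  # hypothetical distractor
}

# man : woman :: king : ?  is answered by  king - man + woman
target = [k - m + w for k, m, w in
          zip(vectors["king"], vectors["man"], vectors["woman"])]

# Pick the closest word that is not one of the three input words.
candidates = [w for w in vectors if w not in ("king", "man", "woman")]
answer = max(candidates, key=lambda w: cosine(target, vectors[w]))
print([round(x, 2) for x in target], answer)
```

Excluding the three input words from the candidates is the usual convention, since the result vector often stays close to one of them.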

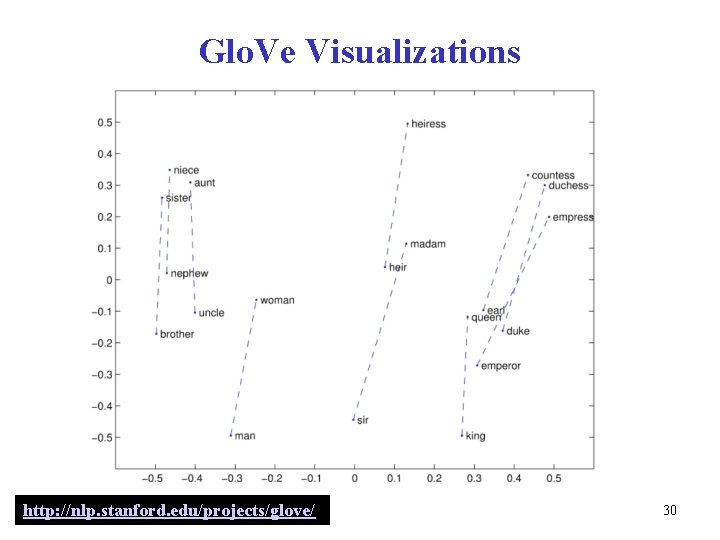

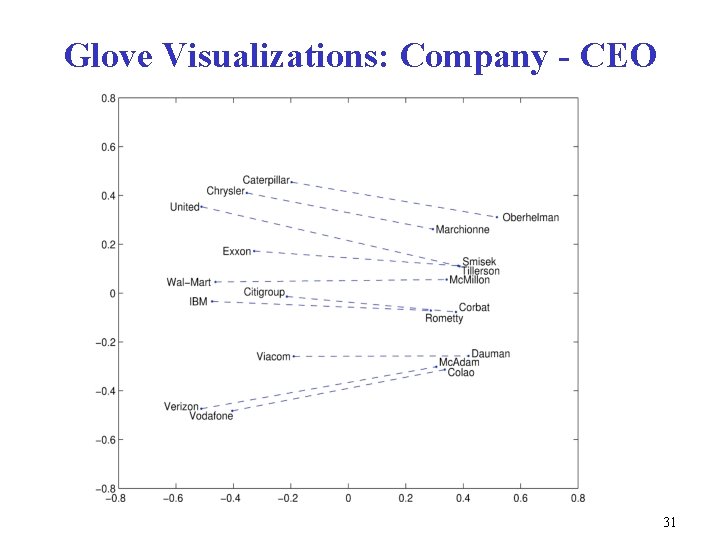

GloVe Visualizations: http://nlp.stanford.edu/projects/glove/

GloVe Visualizations: Company - CEO

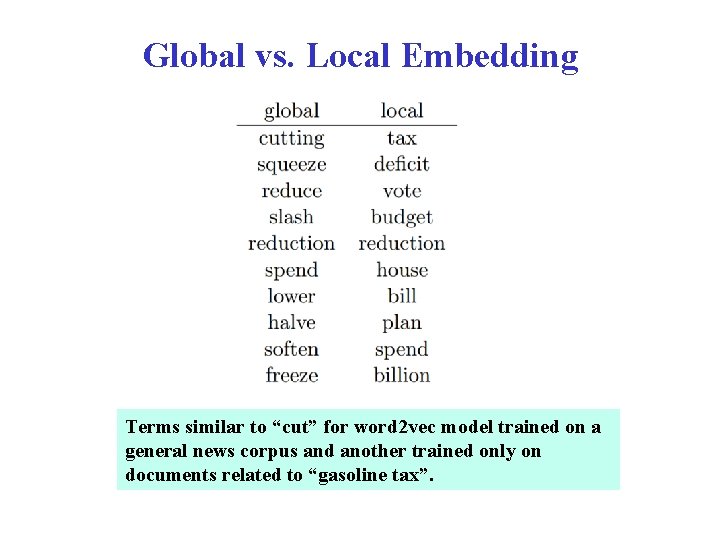

Global vs. Local Embedding

Terms similar to “cut” for a word2vec model trained on a general news corpus and another trained only on documents related to “gasoline tax”.

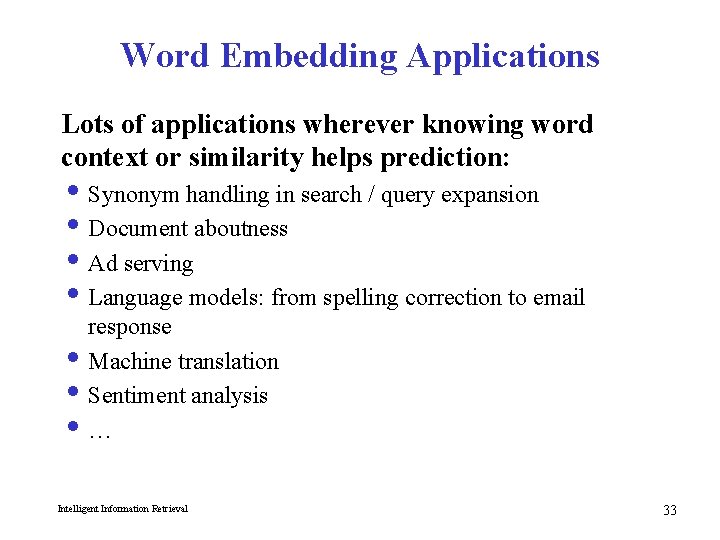

Word Embedding Applications

Lots of applications wherever knowing word context or similarity helps prediction:
- Synonym handling in search / query expansion
- Document aboutness
- Ad serving
- Language models: from spelling correction to email response
- Machine translation
- Sentiment analysis
- …

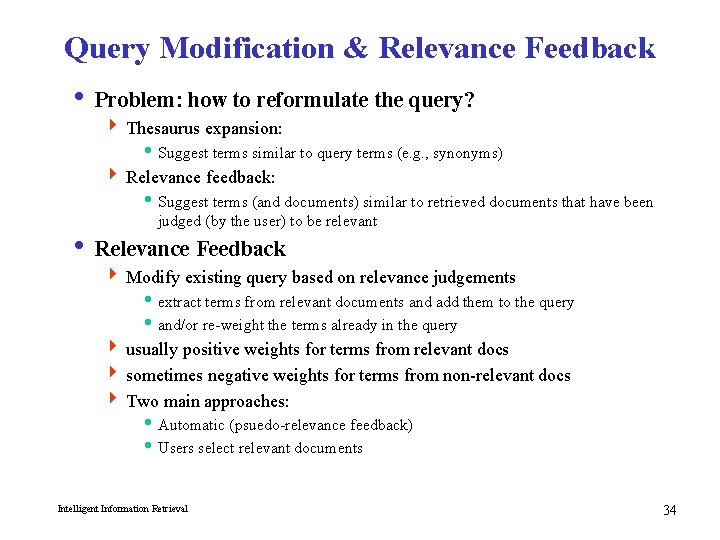

Query Modification & Relevance Feedback
- Problem: how to reformulate the query?
  - Thesaurus expansion: suggest terms similar to query terms (e.g., synonyms)
  - Relevance feedback: suggest terms (and documents) similar to retrieved documents that have been judged (by the user) to be relevant
- Relevance Feedback
  - Modify the existing query based on relevance judgements
    - extract terms from relevant documents and add them to the query
    - and/or re-weight the terms already in the query
  - usually positive weights for terms from relevant docs
  - sometimes negative weights for terms from non-relevant docs
  - Two main approaches:
    - Automatic (pseudo-relevance feedback)
    - Users select relevant documents

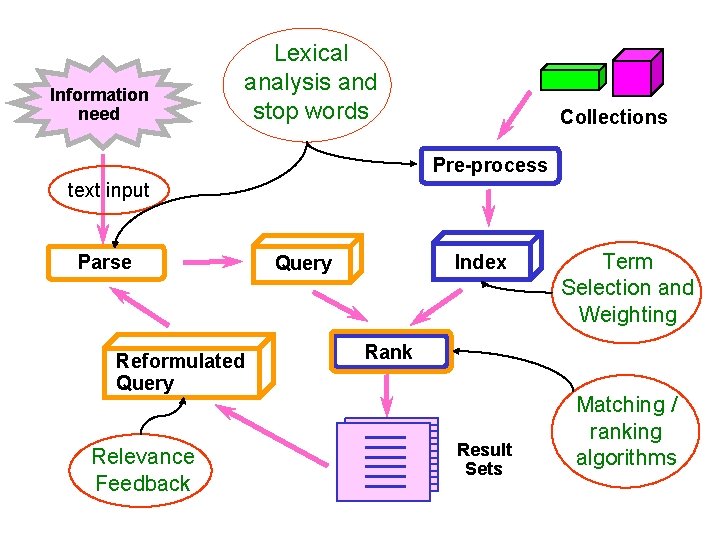

[Figure: retrieval pipeline with relevance feedback. Labels: information need; collections; pre-process text input (lexical analysis and stop words); parse; index; query; query term selection and weighting; matching / ranking algorithms; rank; result sets; relevance feedback; reformulated query]

Query Reformulation in Vector Space Model
- Change the query vector using vector algebra.
- Add the vectors for the relevant documents to the query vector.
- Subtract the vectors for the irrelevant docs from the query vector.
- This adds both positively and negatively weighted terms to the query, and also reweights the initial terms.

Rocchio’s Method (1971)

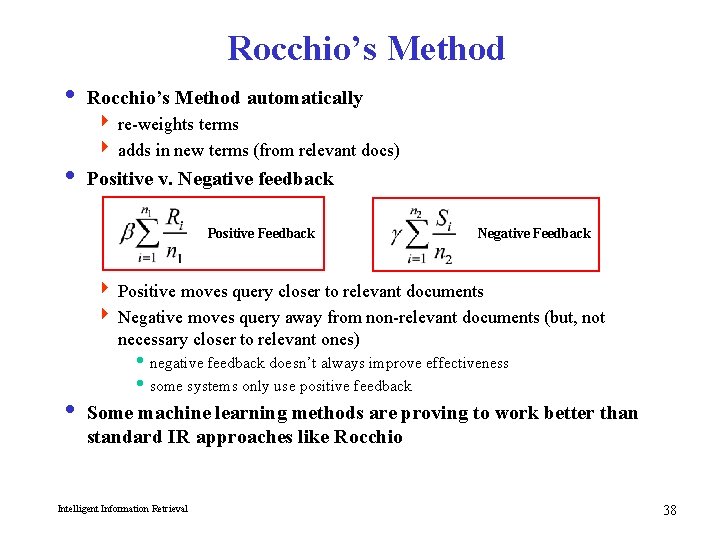

Rocchio’s Method
- Rocchio’s Method automatically
  - re-weights terms
  - adds in new terms (from relevant docs)
- Positive vs. negative feedback
  - Positive feedback moves the query closer to relevant documents.
  - Negative feedback moves the query away from non-relevant documents (but not necessarily closer to relevant ones).
    - negative feedback doesn’t always improve effectiveness
    - some systems only use positive feedback
- Some machine learning methods are proving to work better than standard IR approaches like Rocchio.

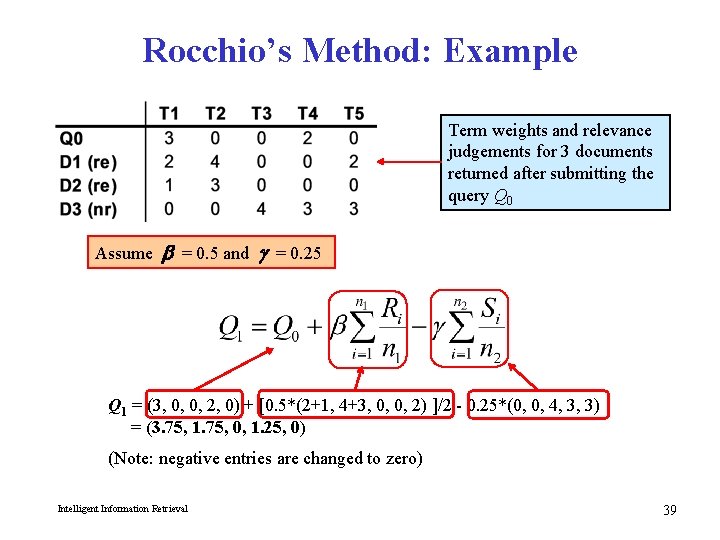

Rocchio’s Method: Example

Term weights and relevance judgements for 3 documents returned after submitting the query $Q_0$ are shown in the figure. Assume $\beta = 0.5$ and $\gamma = 0.25$.

$Q_1 = (3, 0, 0, 2, 0) + \frac{0.5 \times (2+1,\ 4+3,\ 0,\ 0,\ 2)}{2} - 0.25 \times (0, 0, 4, 3, 3) = (3.75, 1.75, 0, 1.25, 0)$

(Note: negative entries are changed to zero.)
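The arithmetic in this example matches the common form of Rocchio's update, $Q_1 = \alpha Q_0 + \frac{\beta}{|R|} \sum_{d \in R} d - \frac{\gamma}{|S|} \sum_{d \in S} d$, with $\alpha = 1$, relevant set $R$ and non-relevant set $S$. The sketch below assumes that form. The individual document vectors are in the slide's image, so only their sums are known from the text: how the fifth term's weight of 2 is split between D1 and D2 is an assumption, and it does not affect the result.

```python
def rocchio(q0, relevant, nonrelevant, alpha=1.0, beta=0.5, gamma=0.25):
    """Move the query toward the relevant centroid and away from the non-relevant one."""
    dims = len(q0)

    def centroid(docs):
        if not docs:
            return [0.0] * dims
        return [sum(d[k] for d in docs) / len(docs) for k in range(dims)]

    rel, non = centroid(relevant), centroid(nonrelevant)
    # Negative weights are clipped to zero, as in the slide's note.
    return [max(0.0, alpha * q0[k] + beta * rel[k] - gamma * non[k])
            for k in range(dims)]


q0 = [3, 0, 0, 2, 0]
d1 = [2, 4, 0, 0, 2]  # relevant; fifth weight assumed (only D1 + D2 is given)
d2 = [1, 3, 0, 0, 0]  # relevant
d3 = [0, 0, 4, 3, 3]  # non-relevant
q1 = rocchio(q0, [d1, d2], [d3])
print(q1)
```

Term 2 enters the query with a positive weight even though it was absent from $Q_0$, while terms 3 and 5 would be negative and are clipped.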

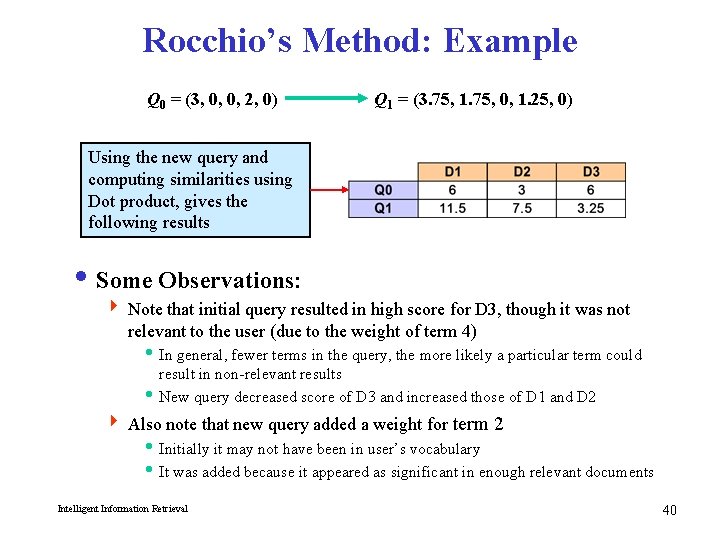

Rocchio’s Method: Example

$Q_0 = (3, 0, 0, 2, 0)$, $Q_1 = (3.75, 1.75, 0, 1.25, 0)$

Using the new query and computing similarities with the dot product gives the results shown in the figure.
- Some observations:
  - The initial query resulted in a high score for D3, though it was not relevant to the user (due to the weight of term 4).
    - In general, the fewer terms in the query, the more likely a particular term could result in non-relevant results.
    - The new query decreased the score of D3 and increased those of D1 and D2.
  - The new query also added a weight for term 2.
    - Initially it may not have been in the user’s vocabulary.
    - It was added because it appeared as significant in enough relevant documents.

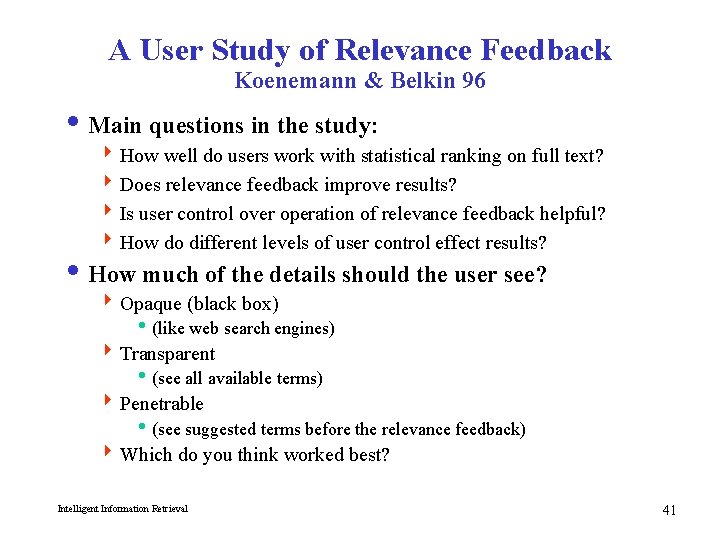

A User Study of Relevance Feedback (Koenemann & Belkin 96)
- Main questions in the study:
  - How well do users work with statistical ranking on full text?
  - Does relevance feedback improve results?
  - Is user control over the operation of relevance feedback helpful?
  - How do different levels of user control affect results?
- How much of the details should the user see?
  - Opaque (black box): like web search engines
  - Transparent: see all available terms
  - Penetrable: see suggested terms before the relevance feedback
  - Which do you think worked best?

Details of the User Study (Koenemann & Belkin 96)
- 64 novice searchers
  - 43 female, 21 male, native English speakers
- TREC test bed
  - Wall Street Journal subset
- Two search topics
  - Automobile Recalls
  - Tobacco Advertising and the Young
- Relevance judgements from TREC and the experimenter
- System was INQUERY (vector space with some bells and whistles)
- Goal was for users to keep modifying the query until they got one with high precision
  - They did not reweight query terms
  - Instead, only term expansion

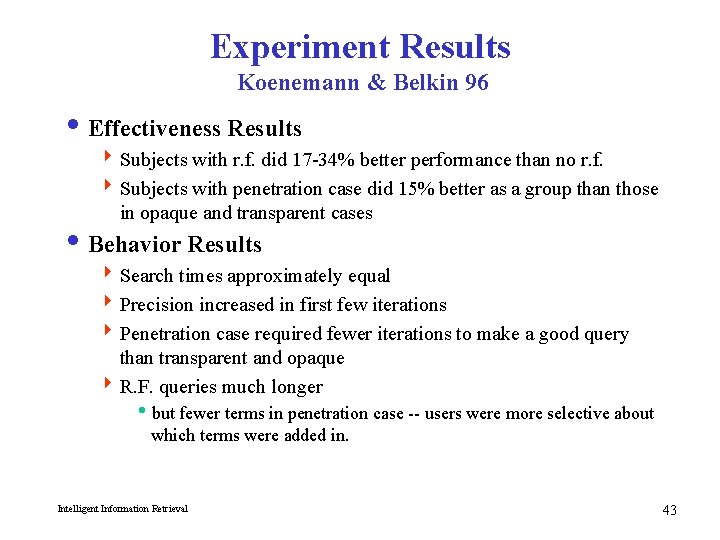

Experiment Results (Koenemann & Belkin 96)
- Effectiveness results
  - Subjects with relevance feedback (r.f.) performed 17-34% better than those without r.f.
  - Subjects in the penetrable case did 15% better as a group than those in the opaque and transparent cases.
- Behavior results
  - Search times were approximately equal.
  - Precision increased in the first few iterations.
  - The penetrable case required fewer iterations to make a good query than the transparent and opaque cases.
  - R.F. queries were much longer, but had fewer terms in the penetrable case: users were more selective about which terms were added.

Relevance Feedback Summary
- Iterative query modification can improve precision and recall for a standing query.
  - TREC results using SMART have shown consistent improvement.
  - Effects of negative feedback are not always predictable.
- In at least one study, users were able to make good choices by seeing which terms were suggested for r.f. and selecting among them.
  - So … “more like this” can be useful!
- Exercise: Which of the major Web search engines provide relevance feedback? Do a comparative evaluation.

Pseudo Feedback
- Use relevance feedback methods without explicit user input.
- Just assume the top m retrieved documents are relevant, and use them to reformulate the query.
- Allows for query expansion that includes terms that are correlated with the query terms.
- Found to improve performance on the TREC competition ad-hoc retrieval task.
- Works even better if the top documents must also satisfy additional Boolean constraints in order to be used in feedback.
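A minimal sketch of pseudo feedback under stated assumptions: documents are ranked by dot product with the query, the top m are treated as relevant, and their centroid (scaled by a weight `beta`) is added to the query. The document vectors, the value of `beta`, and the function names are hypothetical, and no negative feedback or Boolean filtering is applied.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def pseudo_feedback(query, docs, m=2, beta=0.5):
    """Reformulate the query using the top-m ranked documents as if relevant."""
    ranked = sorted(docs, key=lambda d: dot(query, d), reverse=True)
    top = ranked[:m]
    centroid = [sum(d[k] for d in top) / len(top) for k in range(len(query))]
    return [q + beta * c for q, c in zip(query, centroid)]


# Hypothetical term-weight vectors over four terms.
docs = [
    [2, 4, 0, 0],
    [1, 3, 0, 0],
    [0, 0, 4, 3],
    [0, 1, 0, 0],
]
query = [3, 0, 0, 0]
new_query = pseudo_feedback(query, docs, m=2)
print(new_query)
```

The second term gains weight because it co-occurs with the query term in the top-ranked documents, which is the correlated-term expansion effect described above.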

Alternative Notions of Relevance Feedback
- With the advent of the WWW, many alternate notions have been proposed.
  - Find people “similar” to you. Will you like what they like?
  - Follow the users’ actions in the background. Can this be used to predict what the user will want to see next?
  - Follow what lots of people are doing. Does this implicitly indicate what they think is good or not good?
- Several different criteria to consider:
  - Implicit vs. explicit judgements
  - Individual vs. group judgements
  - Standing vs. dynamic topics
  - Similarity of the items being judged vs. similarity of the judges themselves

The Recommendation Task
- Basic formulation as a prediction problem: given a profile $P_u$ for a user $u$, and a target item $i_t$, predict the preference score of user $u$ on item $i_t$.
- Typically, the profile $P_u$ contains preference scores by $u$ on some other items, $\{i_1, \dots, i_k\}$, different from $i_t$.
  - Preference scores on $i_1, \dots, i_k$ may have been obtained explicitly (e.g., movie ratings) or implicitly (e.g., time spent on a product page, news article, etc.).

User Preferences

[Figure: types of user preferences. Labels: ratings, binary feedback, reviews, behaviors; explicit, implicit]

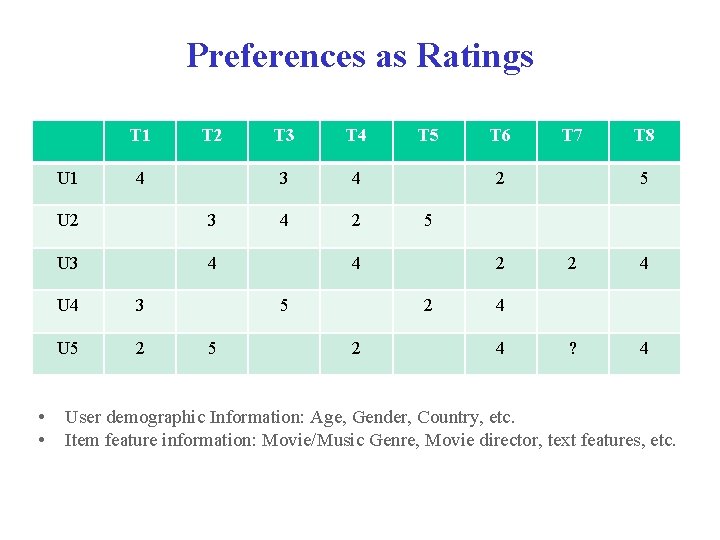

Preferences as Ratings

[Table: ratings (on a scale up to 5) given by users U1-U5 to items T1-T8, with many empty cells and one unknown rating marked “?”]

- User demographic information: age, gender, country, etc.
- Item feature information: movie/music genre, movie director, text features, etc.

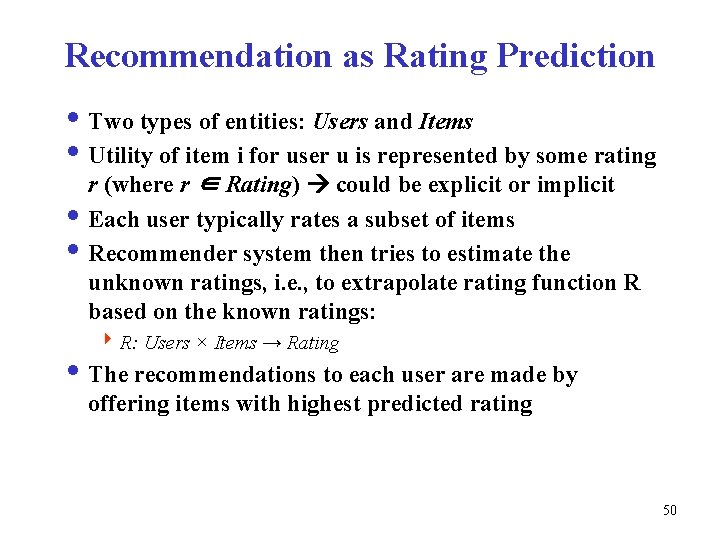

Recommendation as Rating Prediction
- Two types of entities: Users and Items.
- The utility of item $i$ for user $u$ is represented by some rating $r$ (where $r \in$ Rating), which could be explicit or implicit.
- Each user typically rates a subset of items.
- The recommender system then tries to estimate the unknown ratings, i.e., to extrapolate the rating function $R$ based on the known ratings:
  - $R$: Users × Items → Rating
- The recommendations to each user are made by offering the items with the highest predicted rating.

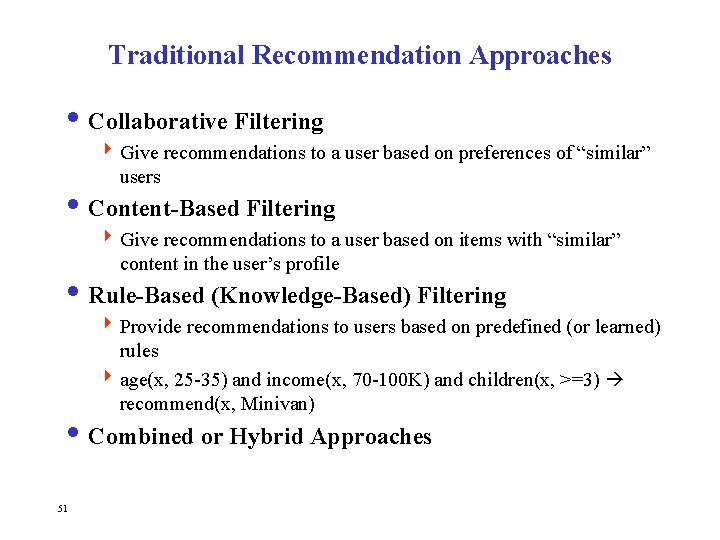

Traditional Recommendation Approaches
- Collaborative Filtering
  - Give recommendations to a user based on preferences of “similar” users.
- Content-Based Filtering
  - Give recommendations to a user based on items with “similar” content in the user’s profile.
- Rule-Based (Knowledge-Based) Filtering
  - Provide recommendations to users based on predefined (or learned) rules.
  - age(x, 25-35) and income(x, 70-100K) and children(x, >=3) → recommend(x, Minivan)
- Combined or Hybrid Approaches

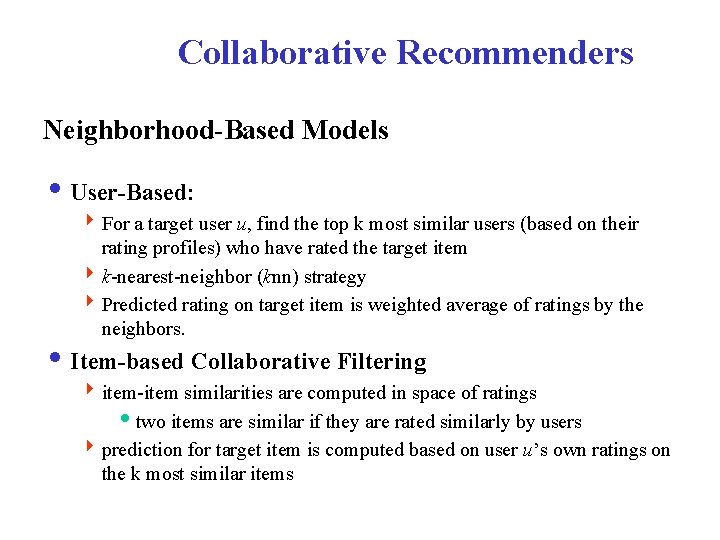

Collaborative Recommenders: Neighborhood-Based Models
- User-based:
  - For a target user u, find the top k most similar users (based on their rating profiles) who have rated the target item.
  - k-nearest-neighbor (kNN) strategy.
  - The predicted rating on the target item is a weighted average of the ratings by the neighbors.
- Item-based collaborative filtering:
  - Item-item similarities are computed in the space of ratings.
    - Two items are similar if they are rated similarly by users.
  - The prediction for the target item is computed based on user u’s own ratings on the k most similar items.

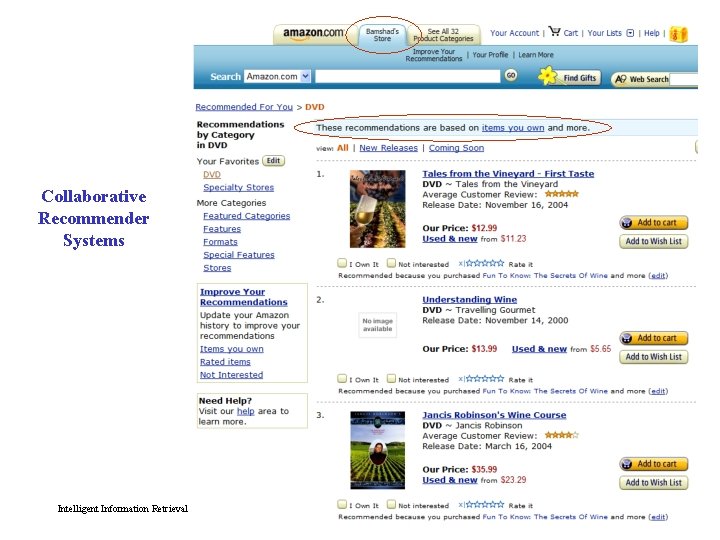

Collaborative Recommender Systems

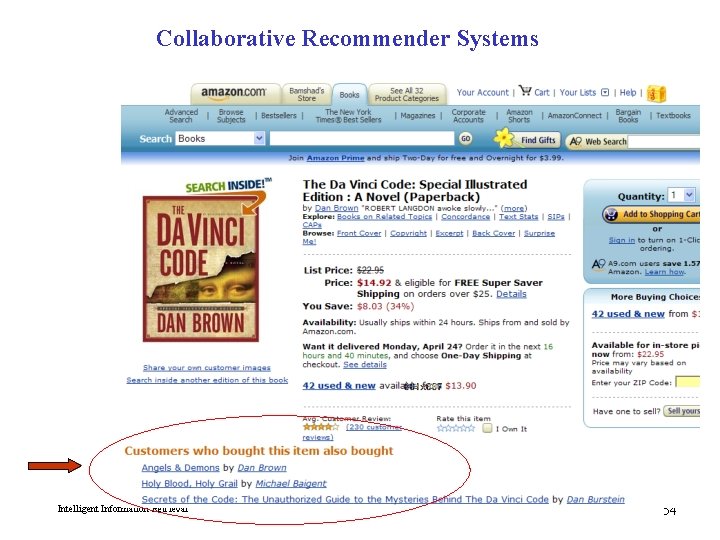


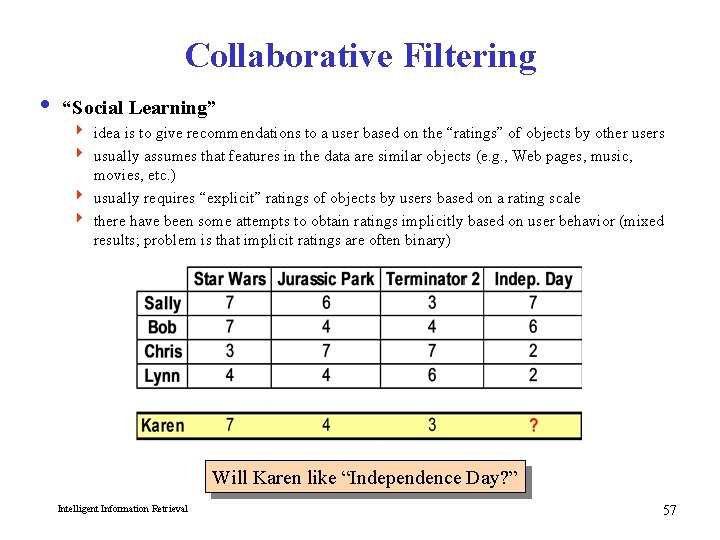

Collaborative Filtering
- “Social Learning”
  - The idea is to give recommendations to a user based on the “ratings” of objects by other users.
  - Usually assumes that features in the data are similar objects (e.g., Web pages, music, movies, etc.).
  - Usually requires “explicit” ratings of objects by users based on a rating scale.
  - There have been some attempts to obtain ratings implicitly based on user behavior (mixed results; the problem is that implicit ratings are often binary).
- Example question: Will Karen like “Independence Day”?

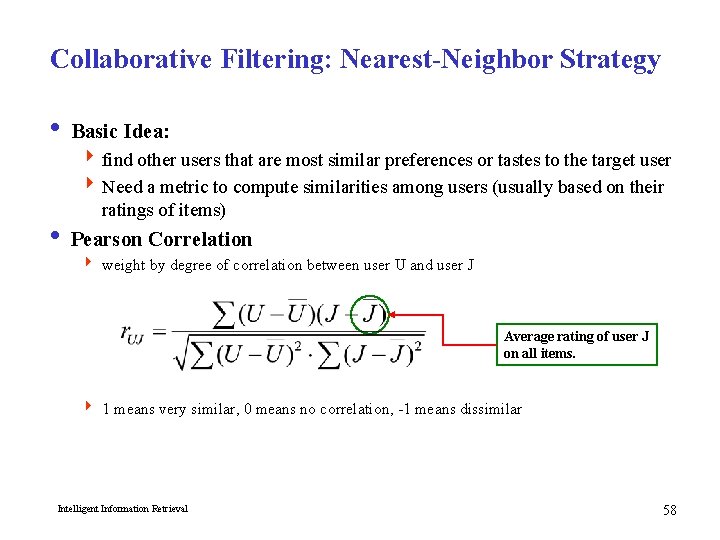

Collaborative Filtering: Nearest-Neighbor Strategy
- Basic idea:
  - Find other users whose preferences or tastes are most similar to those of the target user.
  - Need a metric to compute similarities among users (usually based on their ratings of items).
- Pearson correlation
  - Weight by the degree of correlation between user U and user J; the formula uses each user’s average rating on all items (e.g., the average rating of user J on all items).
  - 1 means very similar, 0 means no correlation, -1 means dissimilar.

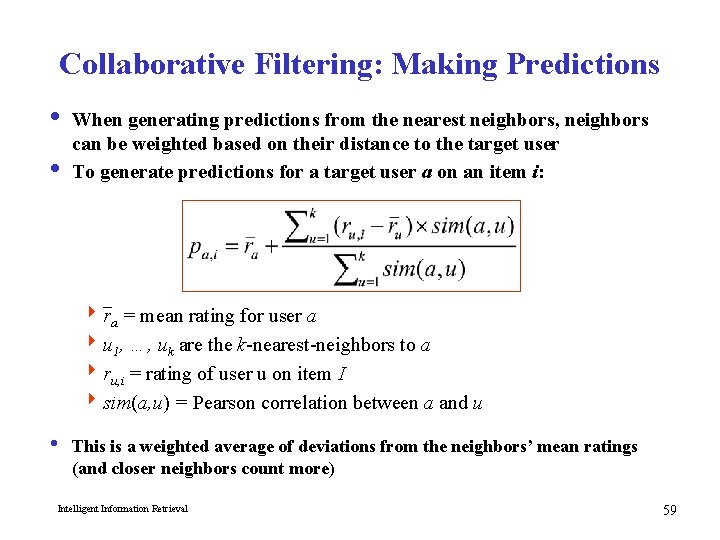

Collaborative Filtering: Making Predictions
- When generating predictions from the nearest neighbors, neighbors can be weighted based on their distance to the target user.
- To generate predictions for a target user $a$ on an item $i$:
  - $\bar{r}_a$ = mean rating for user $a$
  - $u_1, \dots, u_k$ are the k nearest neighbors to $a$
  - $r_{u,i}$ = rating of user $u$ on item $i$
  - $sim(a, u)$ = Pearson correlation between $a$ and $u$
- This is a weighted average of deviations from the neighbors’ mean ratings (and closer neighbors count more).
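The prediction formula is in an image; the sketch below assumes the common form $p_{a,i} = \bar{r}_a + \frac{\sum_{u} sim(a,u)\,(r_{u,i} - \bar{r}_u)}{\sum_{u} |sim(a,u)|}$, summed over the k nearest neighbors who rated item $i$, with Pearson correlation computed over co-rated items and each user's mean taken over all of that user's ratings. All ratings and names are hypothetical.

```python
from math import sqrt


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def pearson(ra, rb):
    """Pearson correlation over co-rated items; ra and rb map item -> rating."""
    common = [i for i in ra if i in rb]
    if not common:
        return 0.0
    ma, mb = mean(ra.values()), mean(rb.values())
    num = sum((ra[i] - ma) * (rb[i] - mb) for i in common)
    den = sqrt(sum((ra[i] - ma) ** 2 for i in common)) * \
        sqrt(sum((rb[i] - mb) ** 2 for i in common))
    return num / den if den else 0.0


def predict(target, others, item, k=1):
    """Target mean plus similarity-weighted deviations of the k nearest neighbors."""
    scored = [(pearson(target, r), r) for r in others if item in r]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    top = scored[:k]
    norm = sum(abs(s) for s, _ in top)
    base = mean(target.values())
    if norm == 0:
        return base
    return base + sum(s * (r[item] - mean(r.values())) for s, r in top) / norm


# Hypothetical rating profiles.
alice = {"i1": 5, "i2": 3, "i3": 4}
user1 = {"i1": 4, "i2": 2, "i3": 3, "i4": 4}
user2 = {"i1": 1, "i2": 5, "i3": 2, "i4": 1}
prediction = predict(alice, [user1, user2], "i4", k=1)
print(round(pearson(alice, user1), 3), round(prediction, 2))
```

Working with deviations from each neighbor's mean corrects for users who rate systematically high or low.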

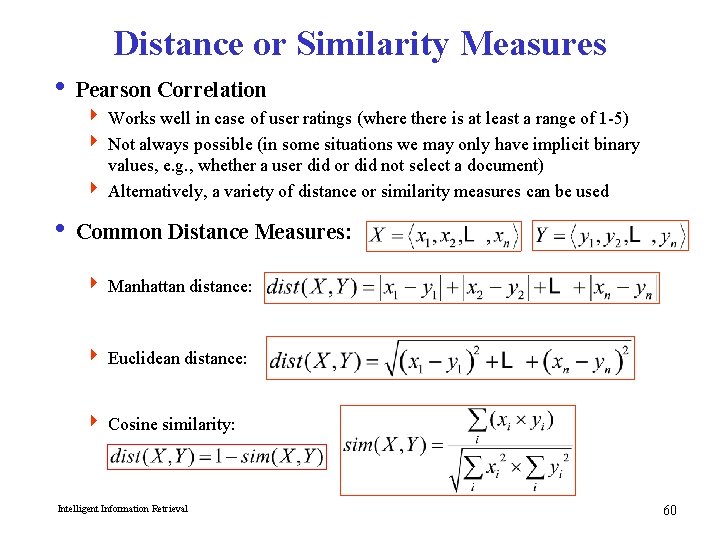

Distance or Similarity Measures
- Pearson correlation
  - Works well in the case of user ratings (where there is at least a range of 1-5).
  - Not always possible (in some situations we may only have implicit binary values, e.g., whether a user did or did not select a document).
  - Alternatively, a variety of distance or similarity measures can be used.
- Common measures:
  - Manhattan distance: $\sum_i |x_i - y_i|$
  - Euclidean distance: $\sqrt{\sum_i (x_i - y_i)^2}$
  - Cosine similarity: $\frac{\sum_i x_i y_i}{\sqrt{\sum_i x_i^2}\,\sqrt{\sum_i y_i^2}}$
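The three measures named above can be sketched in a few lines using their standard definitions. The two binary vectors are hypothetical and stand for implicit feedback, e.g. whether each of two users selected each of four documents.

```python
from math import sqrt


def manhattan(x, y):
    """Sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(x, y))


def euclidean(x, y):
    """Straight-line distance."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def cosine(x, y):
    """Cosine of the angle between the vectors (a similarity, not a distance)."""
    dot = sum(a * b for a, b in zip(x, y))
    return dot / (sqrt(sum(a * a for a in x)) * sqrt(sum(b * b for b in y)))


# Hypothetical implicit binary profiles over four documents.
x = [1, 0, 1, 1]
y = [1, 1, 0, 1]
print(manhattan(x, y), euclidean(x, y), cosine(x, y))
```

Note the direction: smaller is more similar for the two distances, while larger is more similar for cosine.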

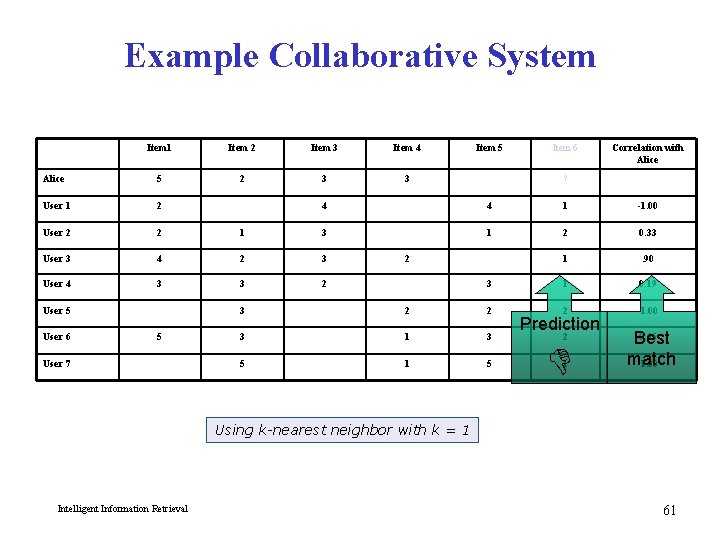

Example Collaborative System

[Table: ratings by Alice and Users 1-7 on Items 1-6, with each user’s correlation with Alice (values shown: -1.00, 0.33, 0.90, 0.19, -1.00, 0.65, -1.00). Alice’s rating on Item 6 is the value to predict; the user with the highest correlation is marked as the best match.]

Using k-nearest neighbor with k = 1.

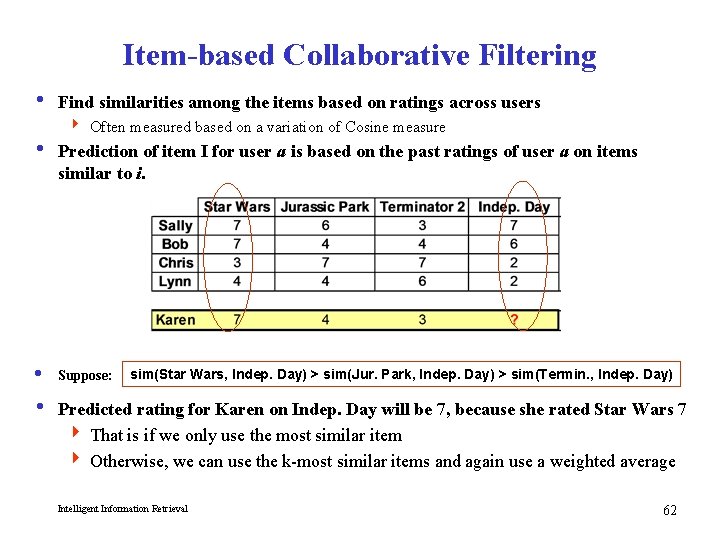

Item-based Collaborative Filtering
- Find similarities among the items based on ratings across users.
  - Often measured based on a variation of the Cosine measure.
- The prediction of item i for user a is based on the past ratings of user a on items similar to i.
- Suppose: sim(Star Wars, Indep. Day) > sim(Jur. Park, Indep. Day) > sim(Termin., Indep. Day)
- The predicted rating for Karen on Indep. Day will be 7, because she rated Star Wars 7.
  - That is, if we only use the most similar item.
  - Otherwise, we can use the k most similar items and again use a weighted average.

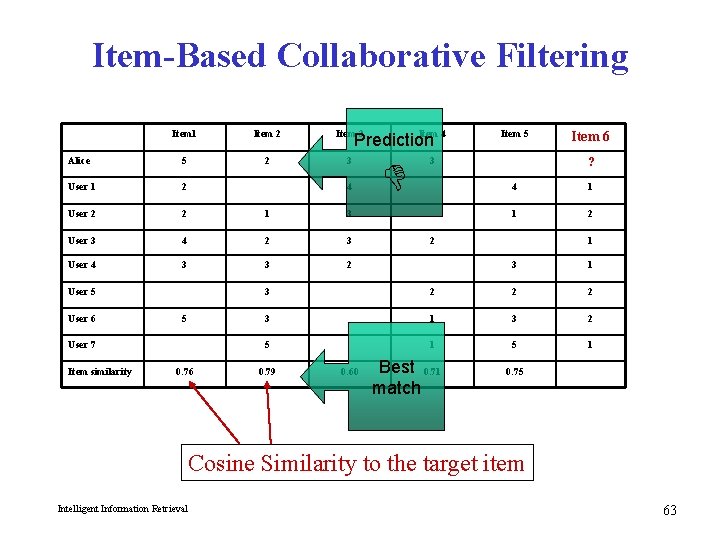

Item-Based Collaborative Filtering

[Table: ratings by Alice and Users 1-7 on Items 1-6, with a row of cosine similarities between each of the other items and the target item (values shown: 0.76, 0.79, 0.60, 0.71, 0.75). Alice’s rating on Item 6 is the value to predict; the item with the highest similarity is marked as the best match.]

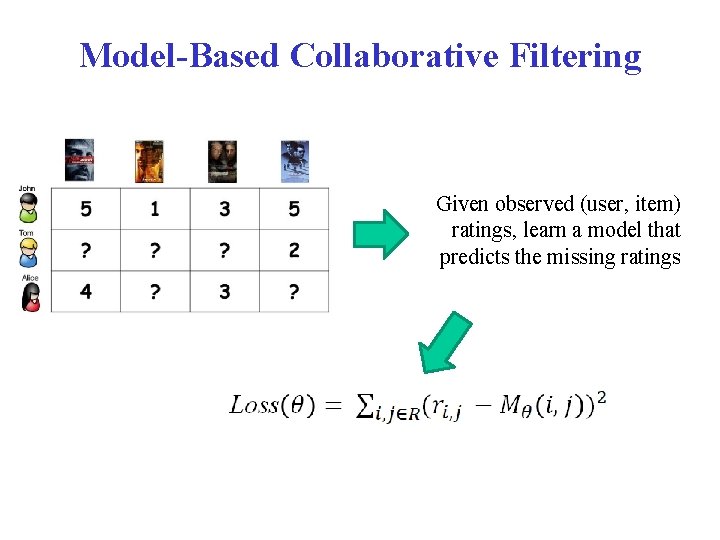

Model-Based Collaborative Filtering Given observed (user, item) ratings, learn a model that predicts the missing ratings

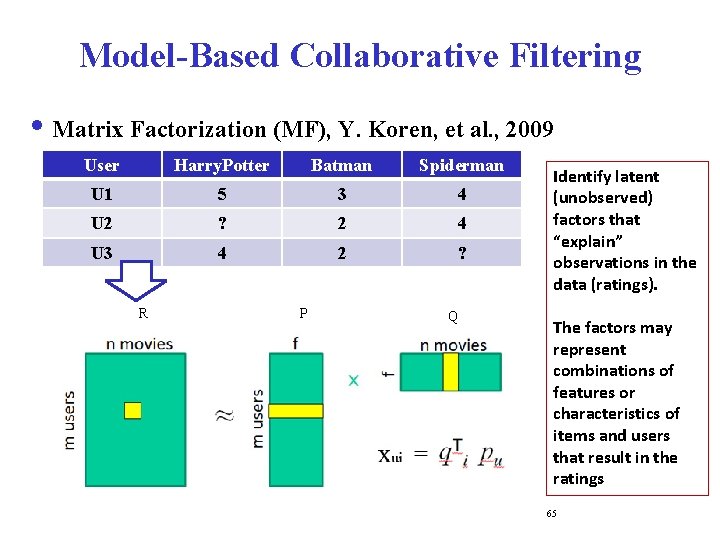

Model-Based Collaborative Filtering
- Matrix Factorization (MF), Y. Koren, et al., 2009

| User | HarryPotter | Batman | Spiderman |
|------|-------------|--------|-----------|
| U1   | 5           | 3      | 4         |
| U2   | ?           | 2      | 4         |
| U3   | 4           | 2      | ?         |

[Figure: rating matrix R factored into matrices P and Q]

Identify latent (unobserved) factors that “explain” observations in the data (ratings). The factors may represent combinations of features or characteristics of items and users that result in the ratings.

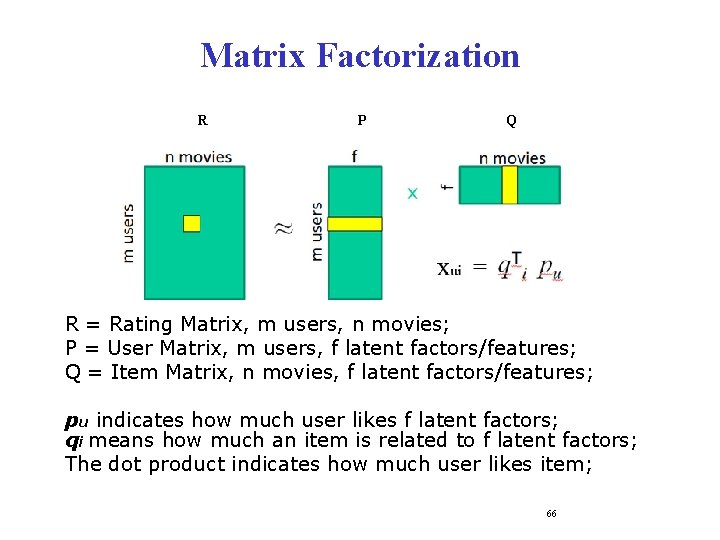

Matrix Factorization
- R = rating matrix: m users, n movies
- P = user matrix: m users, f latent factors/features
- Q = item matrix: n movies, f latent factors/features
- $p_u$ indicates how much the user likes each of the f latent factors
- $q_i$ indicates how much an item is related to each of the f latent factors
- The dot product $q_i^T p_u$ indicates how much the user likes the item

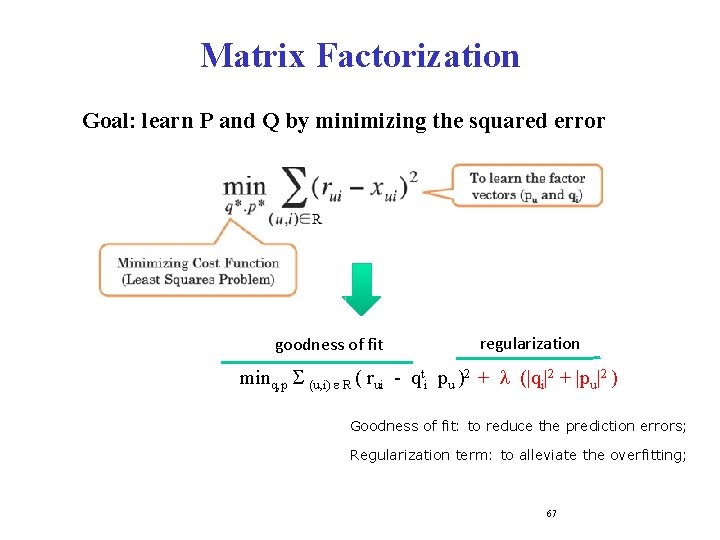

Matrix Factorization

Goal: learn P and Q by minimizing the squared error.

$\min_{q,p} \sum_{(u,i) \in R} (r_{ui} - q_i^T p_u)^2 + \lambda (\|q_i\|^2 + \|p_u\|^2)$

- Goodness of fit, $(r_{ui} - q_i^T p_u)^2$: reduces the prediction errors.
- Regularization term, $\lambda (\|q_i\|^2 + \|p_u\|^2)$: alleviates overfitting.

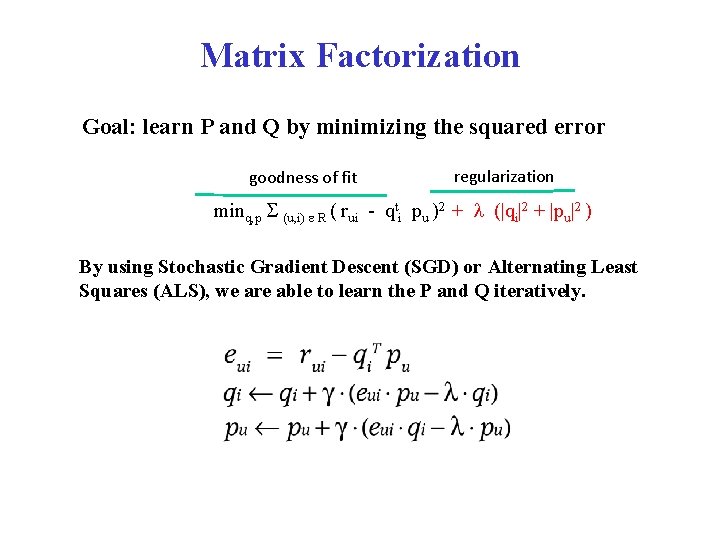

Matrix Factorization

Goal: learn P and Q by minimizing the squared error (goodness of fit plus regularization).

$\min_{q,p} \sum_{(u,i) \in R} (r_{ui} - q_i^T p_u)^2 + \lambda (\|q_i\|^2 + \|p_u\|^2)$

By using Stochastic Gradient Descent (SGD) or Alternating Least Squares (ALS), we are able to learn P and Q iteratively.
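A minimal SGD sketch for this objective, using the seven observed ratings from the HarryPotter/Batman/Spiderman table. The number of factors (f = 2), learning rate, regularization weight, epoch count, random seed, and function names are all assumptions chosen for illustration; the constant factor 2 from the gradient is folded into the learning rate.

```python
import random
from math import sqrt

# Observed ratings: (user index, item index, rating).
# Users: U1, U2, U3; items: HarryPotter, Batman, Spiderman. "?" cells are omitted.
RATINGS = [
    (0, 0, 5), (0, 1, 3), (0, 2, 4),
    (1, 1, 2), (1, 2, 4),
    (2, 0, 4), (2, 1, 2),
]


def rmse(ratings, P, Q):
    """Root mean squared error of q_i . p_u against the observed ratings."""
    total = sum((r - sum(a * b for a, b in zip(P[u], Q[i]))) ** 2
                for u, i, r in ratings)
    return sqrt(total / len(ratings))


def init_factors(n_rows, f, rng):
    """Random starting factors in [0, 1)."""
    return [[rng.random() for _ in range(f)] for _ in range(n_rows)]


def sgd(ratings, P, Q, lam=0.02, lr=0.02, epochs=2000):
    """Update P and Q in place, one observed rating at a time."""
    f = len(P[0])
    for _ in range(epochs):
        for u, i, r in ratings:
            err = r - sum(P[u][k] * Q[i][k] for k in range(f))
            for k in range(f):
                pu, qi = P[u][k], Q[i][k]
                P[u][k] += lr * (err * qi - lam * pu)
                Q[i][k] += lr * (err * pu - lam * qi)


rng = random.Random(0)       # fixed seed (assumption) so runs are repeatable
P = init_factors(3, 2, rng)  # 3 users, f = 2 latent factors
Q = init_factors(3, 2, rng)  # 3 items
before = rmse(RATINGS, P, Q)
sgd(RATINGS, P, Q)
after = rmse(RATINGS, P, Q)
u2_harrypotter = sum(a * b for a, b in zip(P[1], Q[0]))  # fills a "?" cell
print(before, after, u2_harrypotter)
```

Only observed cells drive the updates; the missing cells are then predicted from the learned factors as dot products.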

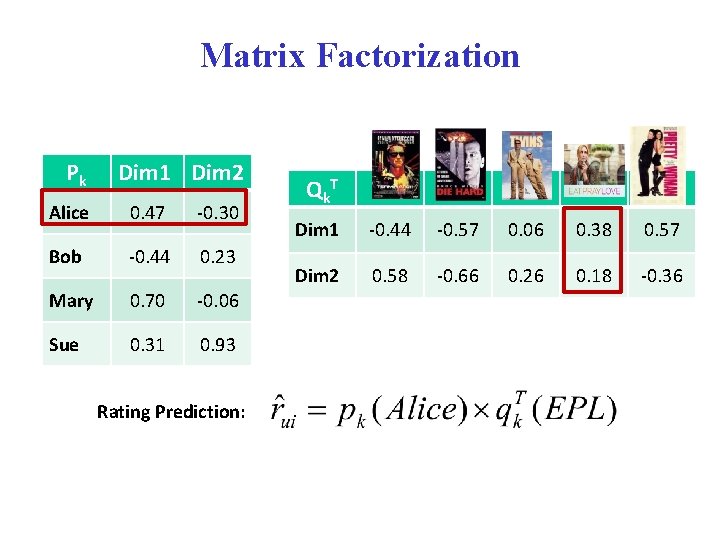

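Matrix Factorization

User factors $P_k$:

| User  | Dim1  | Dim2  |
|-------|-------|-------|
| Alice | 0.47  | -0.30 |
| Bob   | -0.44 | 0.23  |
| Mary  | 0.70  | -0.06 |
| Sue   | 0.31  | 0.93  |

Item factors $Q_k^T$ (one column per item):
- Dim1: -0.44, -0.57, 0.06, 0.38, 0.57
- Dim2: 0.58, -0.66, 0.26, 0.18, -0.36

Rating prediction: shown in the figure.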

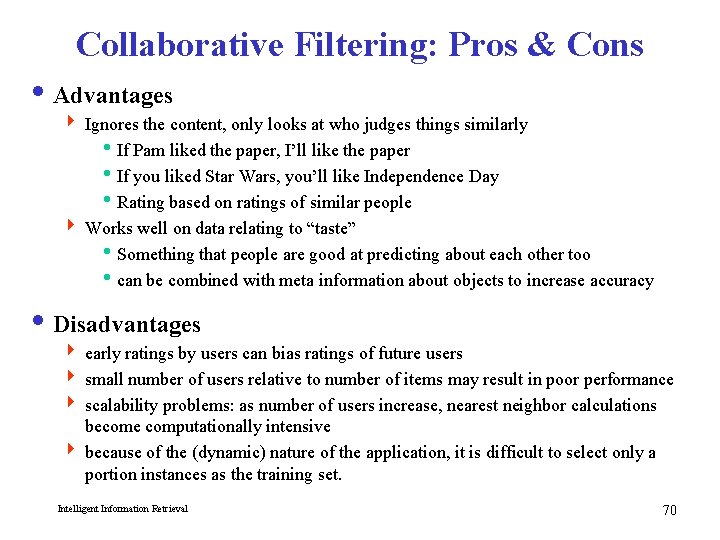

Collaborative Filtering: Pros & Cons
- Advantages
  - Ignores the content, only looks at who judges things similarly.
    - If Pam liked the paper, I’ll like the paper.
    - If you liked Star Wars, you’ll like Independence Day.
    - Rating based on ratings of similar people.
  - Works well on data relating to “taste”.
    - Something that people are good at predicting about each other too.
    - Can be combined with meta information about objects to increase accuracy.
- Disadvantages
  - Early ratings by users can bias ratings of future users.
  - A small number of users relative to the number of items may result in poor performance.
  - Scalability problems: as the number of users increases, nearest-neighbor calculations become computationally intensive.
  - Because of the (dynamic) nature of the application, it is difficult to select only a portion of the instances as the training set.

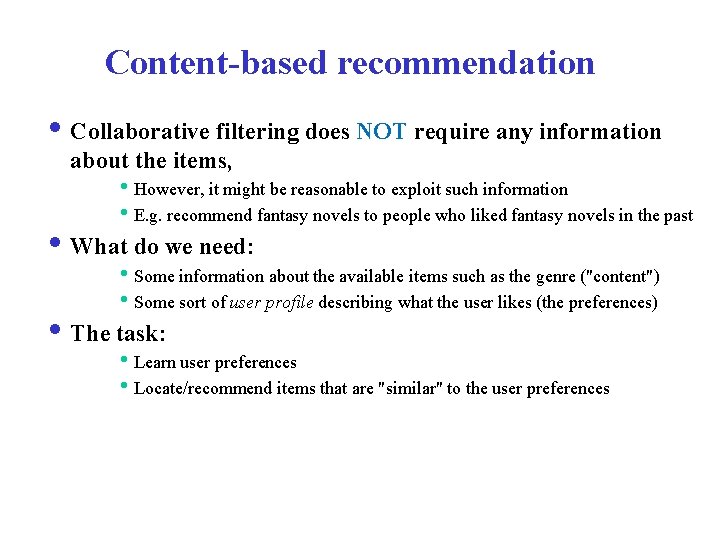

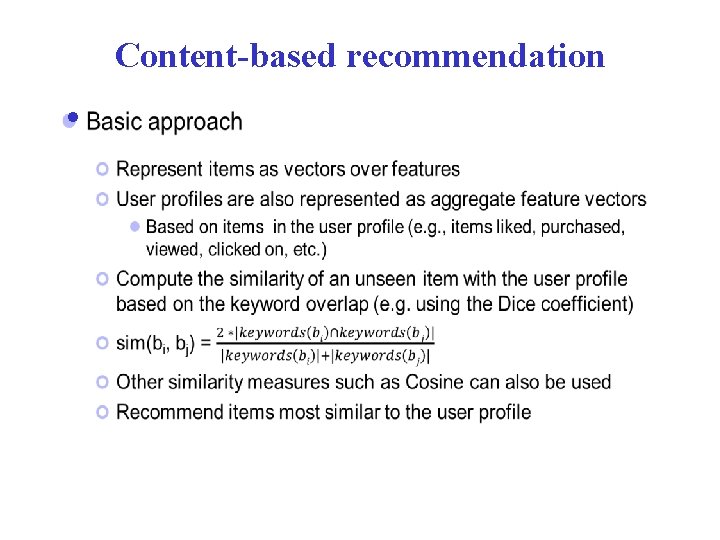

Content-based recommendation
- Collaborative filtering does NOT require any information about the items.
  - However, it might be reasonable to exploit such information.
  - E.g., recommend fantasy novels to people who liked fantasy novels in the past.
- What do we need:
  - Some information about the available items, such as the genre ("content").
  - Some sort of user profile describing what the user likes (the preferences).
- The task:
  - Learn user preferences.
  - Locate/recommend items that are "similar" to the user preferences.

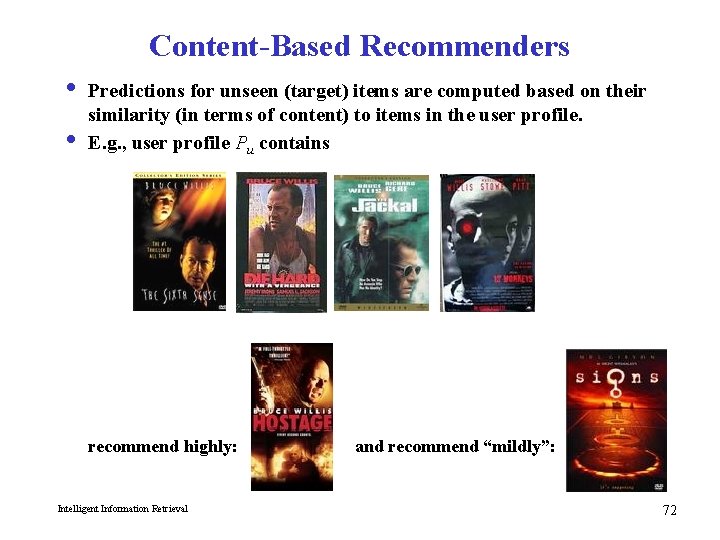

Content-Based Recommenders
- Predictions for unseen (target) items are computed based on their similarity (in terms of content) to items in the user profile.
- E.g., given the items in user profile $P_u$ (shown in the figure), some items are recommended highly and others “mildly”.

Content-based recommendation

Content-Based Recommender Systems

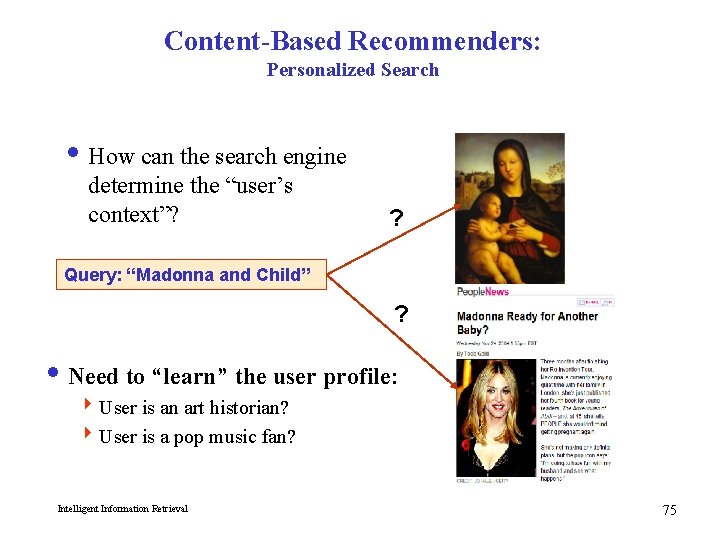

Content-Based Recommenders: Personalized Search
- How can the search engine determine the “user’s context”?
  - Query: “Madonna and Child”
- Need to “learn” the user profile:
  - User is an art historian?
  - User is a pop music fan?

Content-Based Recommenders
- Music recommendations
- Playlist generation
- Example: Pandora

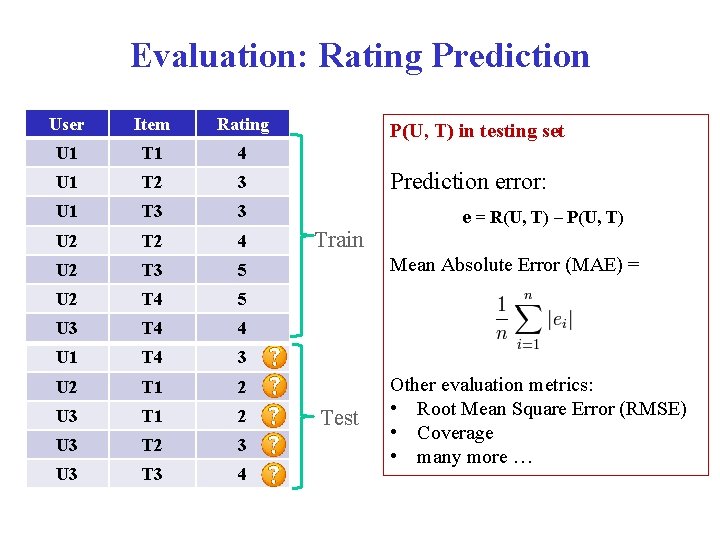

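Evaluation: Rating Prediction

| User | Item | Rating |
|------|------|--------|
| U1   | T1   | 4      |
| U1   | T2   | 3      |
| U1   | T3   | 3      |
| U2   | T2   | 4      |
| U2   | T3   | 5      |
| U2   | T4   | 5      |
| U3   | T4   | 4      |
| U1   | T4   | 3      |
| U2   | T1   | 2      |
| U3   | T2   | 3      |
| U3   | T3   | 4      |

The rating data are split into a training set (Train) and a testing set (Test). The model produces predictions P(U, T) for the (user, item) pairs in the testing set.

- Prediction error: e = R(U, T) – P(U, T)
- Mean Absolute Error (MAE): the average of |e| over the testing set, $\text{MAE} = \frac{1}{n} \sum |e|$

Other evaluation metrics:
- Root Mean Square Error (RMSE)
- Coverage
- many more …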
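MAE and RMSE can be computed directly from the prediction errors. In the sketch below, the actual ratings are the last four rows of the table, which are assumed here to be the test set (the train/test split is not recoverable from the text), and the predicted values are hypothetical model outputs.

```python
from math import sqrt


def mae(actual, predicted):
    """Mean absolute error: average of |R(U,T) - P(U,T)|."""
    return sum(abs(r - p) for r, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean square error: penalizes large errors more than MAE does."""
    return sqrt(sum((r - p) ** 2 for r, p in zip(actual, predicted)) / len(actual))


# Assumed test pairs: (U1,T4), (U2,T1), (U3,T2), (U3,T3).
actual = [3, 2, 3, 4]
predicted = [3.5, 2.0, 2.0, 4.5]  # hypothetical predictions P(U, T)
print(mae(actual, predicted), rmse(actual, predicted))
```

Because RMSE squares each error before averaging, the single error of 1.0 dominates it, so RMSE comes out larger than MAE on the same predictions.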

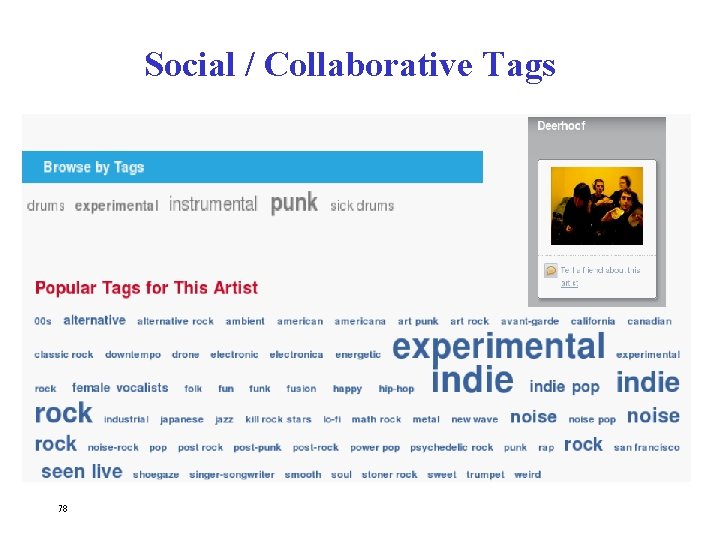

Social / Collaborative Tags

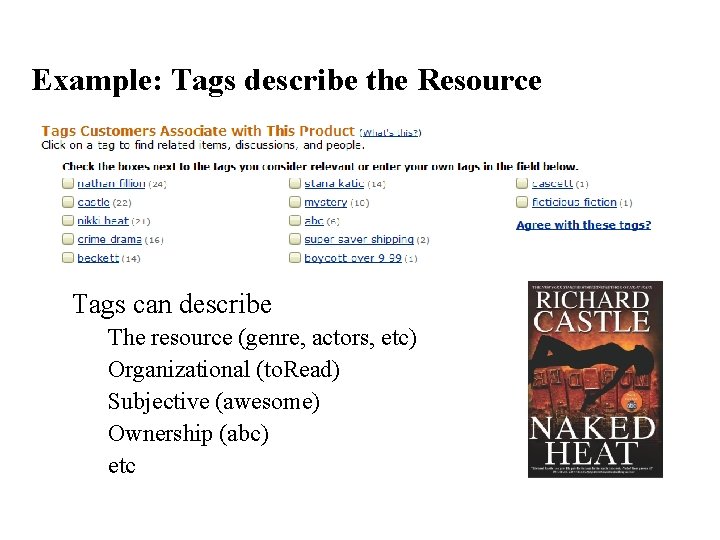

Example: Tags describe the Resource

Tags can describe:
- The resource (genre, actors, etc.)
- Organizational (toRead)
- Subjective (awesome)
- Ownership (abc)
- etc.

Tag Recommendation

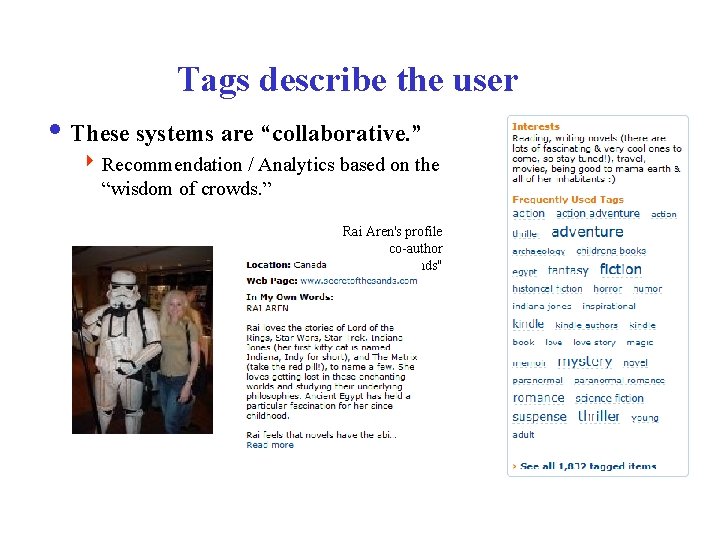

Tags describe the user
- These systems are “collaborative.”
  - Recommendation / analytics based on the “wisdom of crowds.”

[Figure: Rai Aren's profile, co-author of “Secret of the Sands”]

Social Recommendation
- A form of collaborative filtering using social network data.
  - User profiles are represented as sets of links to other nodes (users or items) in the network.
  - Prediction problem: infer a currently non-existent link in the network.

Example: Using Tags for Recommendation

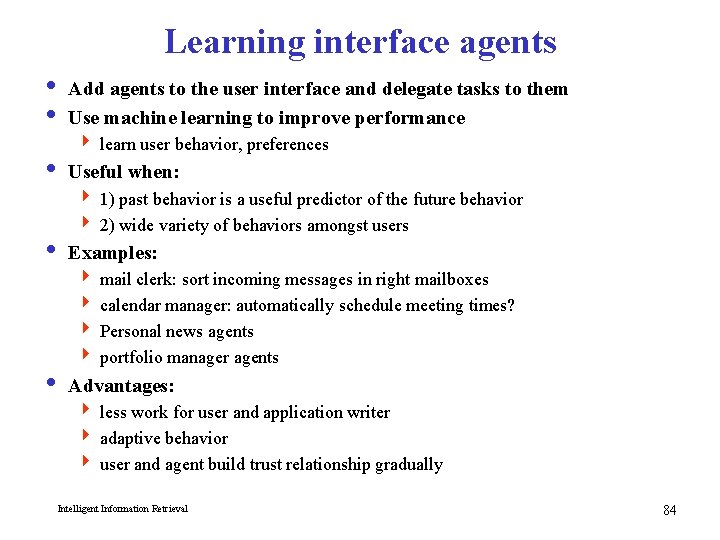

Learning interface agents
- Add agents to the user interface and delegate tasks to them.
- Use machine learning to improve performance.
  - learn user behavior, preferences
- Useful when:
  - 1) past behavior is a useful predictor of future behavior
  - 2) there is a wide variety of behaviors amongst users
- Examples:
  - mail clerk: sort incoming messages into the right mailboxes
  - calendar manager: automatically schedule meeting times?
  - personal news agents
  - portfolio manager agents
- Advantages:
  - less work for the user and the application writer
  - adaptive behavior
  - user and agent build a trust relationship gradually

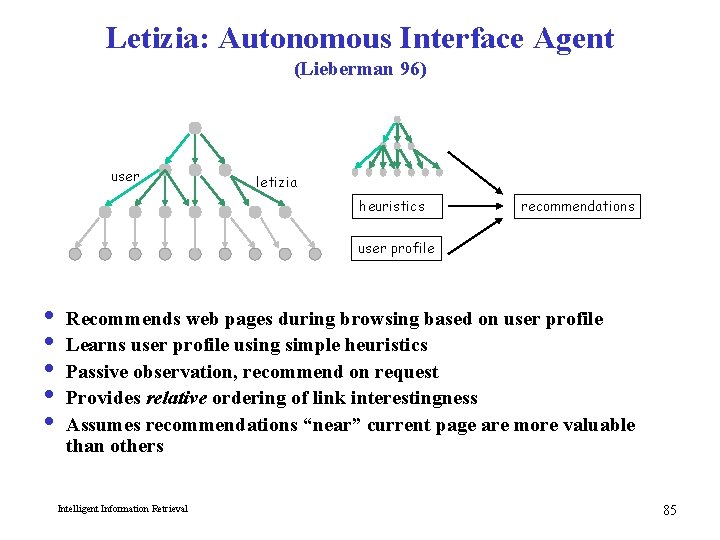

Letizia: Autonomous Interface Agent (Lieberman 96)
[Diagram: user, Letizia, heuristics, recommendations, user profile]
- Recommends web pages during browsing based on user profile
- Learns user profile using simple heuristics
- Passive observation, recommend on request
- Provides relative ordering of link interestingness
- Assumes recommendations “near” current page are more valuable than others

Letizia: Autonomous Interface Agent
- Infers user preferences from behavior
- Interesting pages
  - recording in hot list (save as a file)
  - following several links from a page
  - returning several times to a document
- Not interesting
  - spending a short time on a document
  - returning to the previous document without following links
  - passing over a link to a document (selecting links above and below it)
- Why is this useful?
  - tracks and learns user behavior, provides user “context” to the application (browsing)
  - completely passive: no work for the user
  - useful when user doesn’t know where to go
  - no modifications to application: Letizia interposes between the Web and browser
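The behavioral signals listed on this slide could be combined into a single interest score along the following lines. This is only a sketch under stated assumptions: the `PageObservation` fields, the numeric weights, and the 10-second "short time" cutoff are hypothetical choices for illustration and are not taken from Letizia itself.

```python
from dataclasses import dataclass

@dataclass
class PageObservation:
    """Passively observed behavior on one page (field names are hypothetical)."""
    url: str
    saved_to_hotlist: bool = False
    links_followed: int = 0
    visits: int = 1
    dwell_seconds: float = 0.0
    left_without_following_links: bool = False

def interest_score(obs, short_dwell=10.0):
    """Combine the slide's positive and negative signals into one number.

    The weights and the short-dwell cutoff are illustrative assumptions.
    """
    score = 0.0
    if obs.saved_to_hotlist:          # recorded in hot list
        score += 3.0
    if obs.links_followed >= 2:       # followed several links from the page
        score += 1.0
    if obs.visits >= 2:               # returned to the document
        score += 1.0
    if obs.dwell_seconds < short_dwell:     # spent a short time on the document
        score -= 1.0
    if obs.left_without_following_links:    # went back without following links
        score -= 1.0
    return score

liked = PageObservation("page-a", saved_to_hotlist=True, links_followed=3,
                        visits=2, dwell_seconds=120.0)
skipped = PageObservation("page-b", dwell_seconds=3.0,
                          left_without_following_links=True)

print(interest_score(liked), interest_score(skipped))
```

Because every input is observed passively, a score like this inherits the weaknesses of the signals themselves, for example a long dwell time that only reflects the user stepping away.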

Consequences of passiveness
- Weak heuristics
  - example: click through multiple uninteresting pages en route to interestingness
  - example: user browses to uninteresting page, then goes for a coffee
  - example: hierarchies tend to get more hits near root
- Cold start
- No ability to fine-tune profile or express interest without visiting “appropriate” pages
- Some possible alternatives/extensions to internally maintained profiles:
  - expose to the user (e.g. fine-tune profile)?
  - expose to other users/agents (e.g. collaborative filtering)?
  - expose to web server (e.g. cnn.com custom news)?

ARCH: Adaptive Agent for Retrieval Based on Concept Hierarchies (Mobasher, Sieg, Burke 2003-2007)
- ARCH supports users in formulating effective search queries, starting with users’ poorly designed keyword queries
- Essence of the system is to incorporate domain-specific concept hierarchies with interactive query formulation
- Query enhancement in ARCH uses two mutually supporting techniques:
  - Semantic: using a concept hierarchy to interactively disambiguate and expand queries
  - Behavioral: observing user’s past browsing behavior for user profiling and automatic query enhancement

Overview of ARCH
- The system consists of an offline and an online component
- Offline component:
  - Handles the learning of the concept hierarchy
  - Handles the learning of the user profiles
- Online component:
  - Displays the concept hierarchy to the user
  - Allows the user to select/deselect nodes
  - Generates the enhanced query based on the user’s interaction with the concept hierarchy

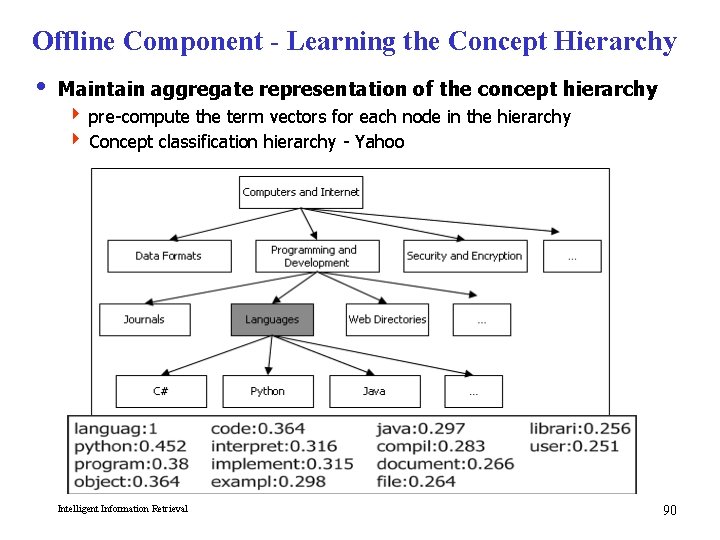

Offline Component - Learning the Concept Hierarchy
- Maintain aggregate representation of the concept hierarchy
  - pre-compute the term vectors for each node in the hierarchy
  - Concept classification hierarchy: Yahoo

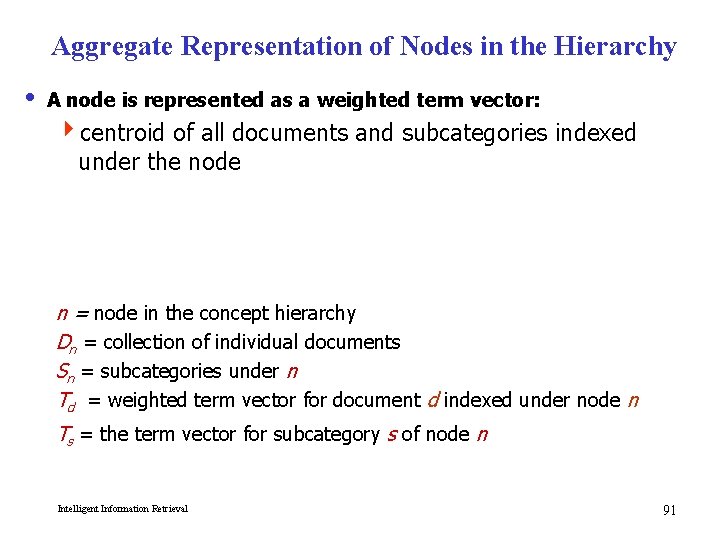

Aggregate Representation of Nodes in the Hierarchy
- A node is represented as a weighted term vector:
  - centroid of all documents and subcategories indexed under the node
- Notation:
  - $n$ = node in the concept hierarchy
  - $D_n$ = collection of individual documents indexed under $n$
  - $S_n$ = subcategories under $n$
  - $T_d$ = weighted term vector for document $d$ indexed under node $n$
  - $T_s$ = the term vector for subcategory $s$ of node $n$
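A centroid-style node vector can be sketched as follows. The slide's exact formula lives in the accompanying figure and may weight documents and subcategories differently, so this example assumes a plain unweighted mean over all document vectors T_d and subcategory vectors T_s; the function names and the sparse-dictionary representation are also assumptions.

```python
def centroid(vectors):
    """Unweighted mean of sparse term vectors (dicts mapping term -> weight)."""
    if not vectors:
        return {}
    totals = {}
    for vec in vectors:
        for term, weight in vec.items():
            totals[term] = totals.get(term, 0.0) + weight
    return {term: total / len(vectors) for term, total in totals.items()}

def node_vector(doc_vectors, subcategory_vectors):
    """Aggregate term vector for a node n.

    Assumption: documents in D_n and subcategories in S_n contribute
    equally; the original formula may combine them differently.
    """
    return centroid(list(doc_vectors) + list(subcategory_vectors))

# Hypothetical vectors for a "Jazz" node with two documents and one subcategory.
docs = [{"jazz": 1.0, "band": 0.5}, {"jazz": 0.5}]
subcategories = [{"dixieland": 0.9, "jazz": 0.3}]

print(node_vector(docs, subcategories))
```

Computing the vectors bottom-up (leaves first) lets each parent reuse the already aggregated vectors of its subcategories, which is what makes pre-computation in the offline component practical.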

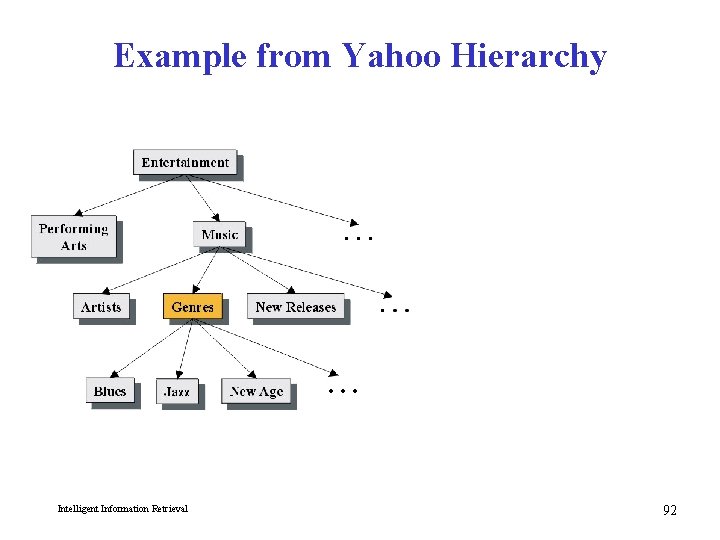

Example from Yahoo Hierarchy

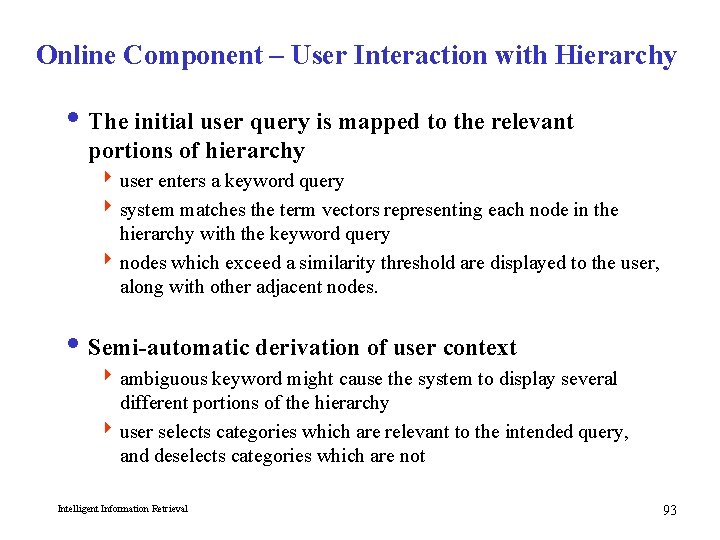

Online Component – User Interaction with Hierarchy
- The initial user query is mapped to the relevant portions of the hierarchy
  - user enters a keyword query
  - system matches the term vectors representing each node in the hierarchy with the keyword query
  - nodes which exceed a similarity threshold are displayed to the user, along with other adjacent nodes
- Semi-automatic derivation of user context
  - an ambiguous keyword might cause the system to display several different portions of the hierarchy
  - user selects categories which are relevant to the intended query, and deselects categories which are not
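The matching step can be sketched with cosine similarity between the query and each node's term vector. Cosine is an assumed similarity measure here (the slide only says "similarity threshold"), and the threshold value, node names, and weights below are hypothetical. Displaying adjacent nodes is left out of the sketch.

```python
import math

def cosine(a, b):
    """Cosine similarity between two sparse term vectors (dicts)."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def matching_nodes(query, node_vectors, threshold=0.3):
    """Return (node, score) pairs at or above the threshold, best first."""
    scored = [(name, cosine(query, vec)) for name, vec in node_vectors.items()]
    kept = [(name, score) for name, score in scored if score >= threshold]
    return sorted(kept, key=lambda pair: (-pair[1], pair[0]))

# Hypothetical pre-computed node vectors for part of a concept hierarchy.
nodes = {
    "Reptiles/Pythons": {"python": 0.8, "snake": 0.6},
    "Programming/Python": {"python": 0.7, "code": 0.7},
    "Music/Jazz": {"jazz": 1.0},
}
query = {"python": 1.0}

for name, score in matching_nodes(query, nodes):
    print(name, round(score, 3))
```

An ambiguous keyword such as "python" matches nodes in unrelated parts of the hierarchy, which is exactly the situation where the user's select and deselect choices supply the missing context.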

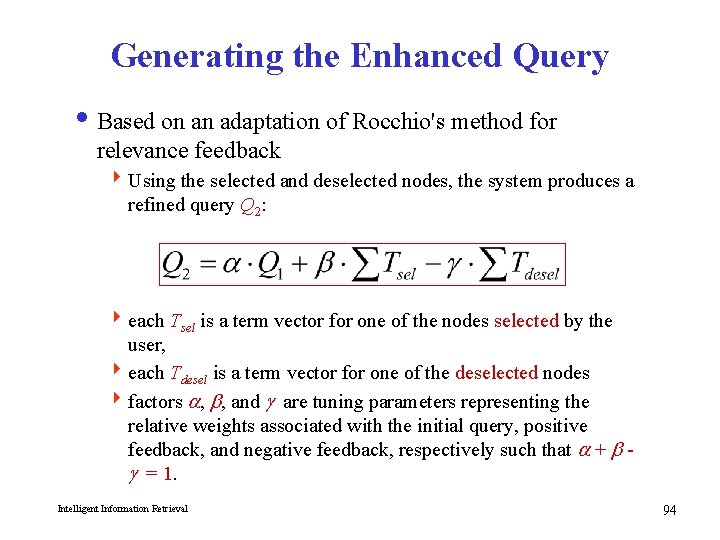

Generating the Enhanced Query
- Based on an adaptation of Rocchio's method for relevance feedback
  - Using the selected and deselected nodes, the system produces a refined query $Q_2$, where:
  - each $T_{sel}$ is a term vector for one of the nodes selected by the user
  - each $T_{desel}$ is a term vector for one of the deselected nodes
  - factors $\alpha$, $\beta$, and $\gamma$ are tuning parameters representing the relative weights associated with the initial query, positive feedback, and negative feedback, respectively, such that $\alpha + \beta - \gamma = 1$
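Assuming the adaptation keeps the usual Rocchio form, Q2 = α·Q1 + β·Σ T_sel − γ·Σ T_desel, the refinement step can be sketched as below. The slide's own formula is in the figure and is not reproduced in the text, so treat this form as an assumption; the default weights are illustrative, and dropping non-positive term weights is a common practice added here rather than something the slide states.

```python
def add_scaled(target, vectors, scale):
    """Add scale * vector to target for each sparse vector in vectors."""
    for vec in vectors:
        for term, weight in vec.items():
            target[term] = target.get(term, 0.0) + scale * weight

def refine_query(q1, selected, deselected, alpha=1.0, beta=0.5, gamma=0.5):
    """Rocchio-style refinement: alpha*Q1 + beta*sum(T_sel) - gamma*sum(T_desel).

    Terms whose final weight is not positive are dropped (an assumption).
    """
    q2 = {}
    add_scaled(q2, [q1], alpha)
    add_scaled(q2, selected, beta)
    add_scaled(q2, deselected, -gamma)
    return {term: weight for term, weight in q2.items() if weight > 0}

# Hypothetical term vectors for the initial query and the chosen nodes.
q1 = {"python": 1.0}
selected = [{"python": 0.8, "snake": 0.6}]      # node the user selected
deselected = [{"python": 0.7, "code": 0.7}]     # node the user deselected

print(refine_query(q1, selected, deselected))
```

Terms that appear only in deselected nodes (here `code`) end up with negative weight and are removed, while terms from selected nodes (here `snake`) enter the query even though the user never typed them.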

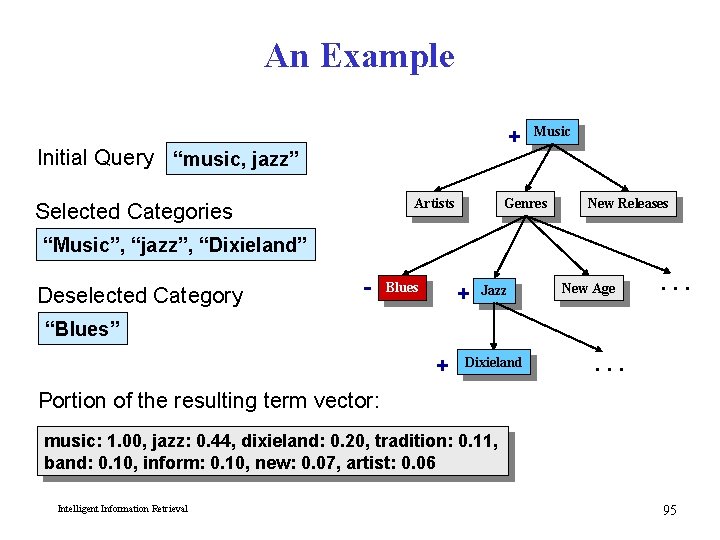

An Example
- Initial query: “music, jazz”
- Selected categories (+): “Music”, “jazz”, “Dixieland”
- Deselected category (-): “Blues”
- Hierarchy nodes shown in the figure: Music, Artists, Genres, New Releases, Blues, Jazz, New Age, Dixieland, ...
- Portion of the resulting term vector: music: 1.00, jazz: 0.44, dixieland: 0.20, tradition: 0.11, band: 0.10, inform: 0.10, new: 0.07, artist: 0.06

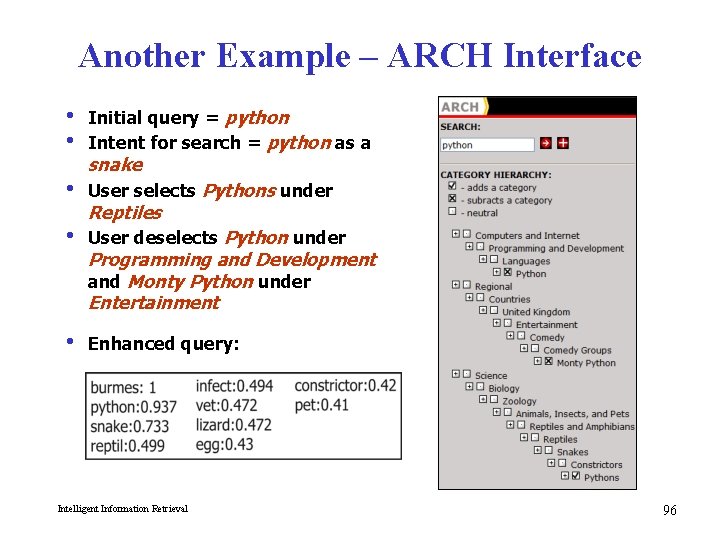

Another Example – ARCH Interface
- Initial query = python
- Intent for search = python as a snake
- User selects Pythons under Reptiles
- User deselects Python under Programming and Development and Monty Python under Entertainment
- Enhanced query:

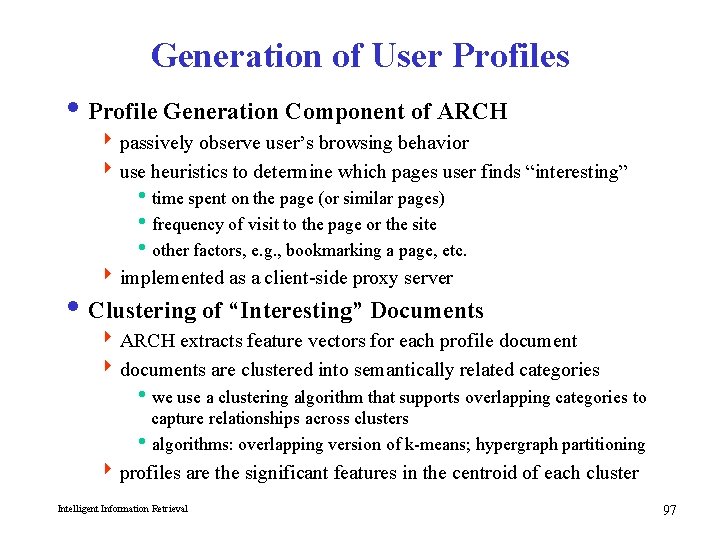

Generation of User Profiles
- Profile Generation Component of ARCH
  - passively observes user’s browsing behavior
  - uses heuristics to determine which pages the user finds “interesting”
    - time spent on the page (or similar pages)
    - frequency of visits to the page or the site
    - other factors, e.g., bookmarking a page, etc.
  - implemented as a client-side proxy server
- Clustering of “Interesting” Documents
  - ARCH extracts feature vectors for each profile document
  - documents are clustered into semantically related categories
    - we use a clustering algorithm that supports overlapping categories to capture relationships across clusters
    - algorithms: overlapping version of k-means; hypergraph partitioning
  - profiles are the significant features in the centroid of each cluster

User Profiles & Information Context
- Can user profiles replace the need for user interaction?
  - Instead of explicit user feedback, the user profiles are used for the selection and deselection of concepts
  - Each individual profile is compared to the original user query for similarity
  - Those profiles which satisfy a similarity threshold are then compared to the matching nodes in the concept hierarchy
    - matching nodes include those that exceeded a similarity threshold when compared to the user’s original keyword query
  - The node with the highest similarity score is used for automatic selection; nodes with relatively low similarity scores are used for automatic deselection
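The automatic selection and deselection described above can be sketched as a two-stage comparison. Everything numeric here is an assumption: cosine is used as the similarity measure, both thresholds are hypothetical, and a node's score is taken to be its best similarity to any relevant profile, which is one plausible reading of "highest similarity score".

```python
import math

def cosine(a, b):
    """Cosine similarity between two sparse term vectors (dicts)."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def auto_feedback(query, profiles, matching_nodes,
                  profile_threshold=0.2, deselect_below=0.4):
    """Pick one node to select and the nodes to deselect, using profiles only."""
    # Stage 1: keep the profiles that are similar enough to the query.
    relevant = [p for p in profiles if cosine(p, query) >= profile_threshold]
    if not relevant or not matching_nodes:
        return None, []
    # Stage 2: score each matching node by its best similarity to a kept profile.
    scores = {name: max(cosine(p, vec) for p in relevant)
              for name, vec in matching_nodes.items()}
    selected = max(scores, key=scores.get)
    deselected = sorted(name for name, score in scores.items()
                        if name != selected and score < deselect_below)
    return selected, deselected

# Hypothetical profile centroids and nodes that matched the keyword query.
query = {"python": 1.0}
profiles = [{"python": 0.5, "snake": 0.7, "reptile": 0.5}, {"jazz": 1.0}]
nodes = {
    "Reptiles/Pythons": {"python": 0.8, "snake": 0.6},
    "Programming/Python": {"python": 0.7, "code": 0.7},
    "Entertainment/Monty Python": {"python": 0.6, "comedy": 0.8},
}

print(auto_feedback(query, profiles, nodes))
```

The returned node names can then stand in for the user's manual choices when building the enhanced query, so a profile dominated by reptile-related pages resolves "python" without any interaction.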

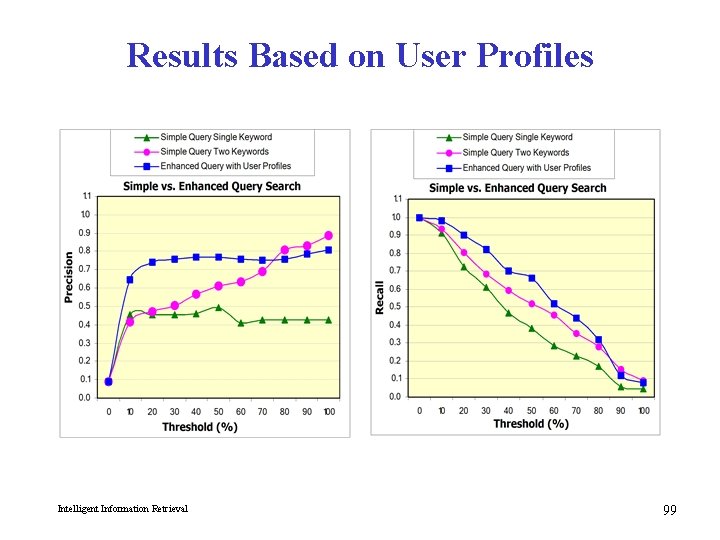

Results Based on User Profiles
