
QBSS Applications
Les Cottrell – SLAC
Presented at the Internet2 Working Group on QBone Scavenger Service (QBSS), October 2001
www.slac.stanford.edu/grp/scs/talk/qbss-i2-oct01.ppt
Partially funded by DOE/MICS Field Work Proposal on Internet End-to-end Performance Monitoring (IEPM), also supported by IUPAP

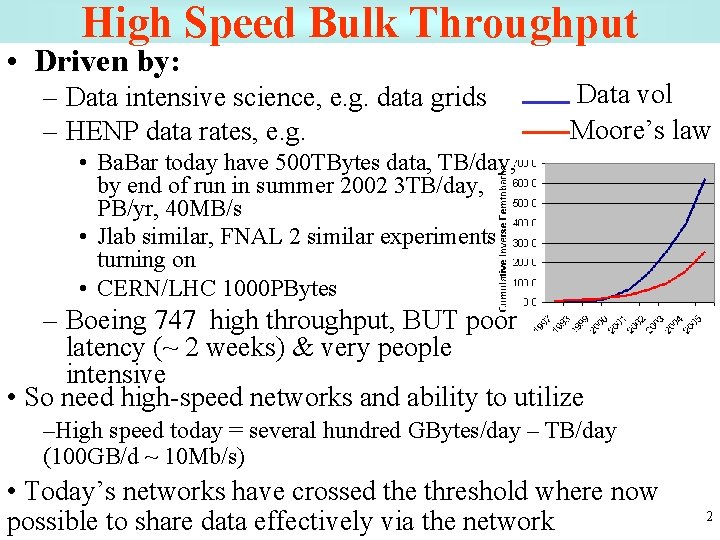

High Speed Bulk Throughput
• Driven by:
  – Data intensive science, e.g. data grids
  – HENP data rates, e.g. Data vol Moore’s law
    • BaBar today has 500 TBytes data, TB/day, by end of run in summer 2002 3 TB/day, PB/yr, 40 MB/s
    • JLab similar, FNAL 2 similar experiments turning on
    • CERN/LHC 1000 PBytes
  – Boeing 747 high throughput, BUT poor latency (~2 weeks) & very people intensive
• So need high-speed networks and ability to utilize
  – High speed today = several hundred GBytes/day to TB/day (100 GB/d ~ 10 Mb/s)
• Today’s networks have crossed the threshold where it is now possible to share data effectively via the network
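
The "100 GB/d ~ 10 Mb/s" conversion on this slide can be checked with a few lines of arithmetic. This is a minimal sketch assuming decimal units (1 GB = 10^9 bytes); the function name is illustrative and not from the talk.

```python
SECONDS_PER_DAY = 24 * 60 * 60
GB = 1e9  # decimal gigabyte, an assumption of this sketch
TB = 1e12


def bytes_per_day_to_mbps(bytes_per_day: float) -> float:
    """Average rate in megabits per second needed to move bytes_per_day."""
    return bytes_per_day * 8 / SECONDS_PER_DAY / 1e6


if __name__ == "__main__":
    # 100 GB/day works out to a little over 9 Mb/s, i.e. roughly 10 Mb/s.
    print(f"100 GB/day = {bytes_per_day_to_mbps(100 * GB):.1f} Mb/s")
    # 3 TB/day, the rate quoted for the end of the 2002 run.
    print(f"3 TB/day   = {bytes_per_day_to_mbps(3 * TB):.1f} Mb/s")
```

These are sustained averages; the peak rate needed is higher when transfers only run for part of the day.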

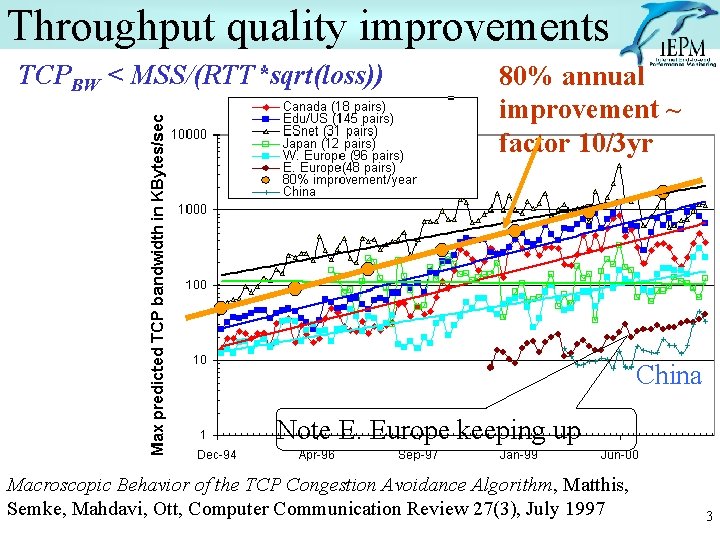

Throughput quality improvements
TCPBW < MSS/(RTT*sqrt(loss))
80% annual improvement ~ factor 10/3 yr
Plot annotations: China; note E. Europe keeping up
Macroscopic Behavior of the TCP Congestion Avoidance Algorithm, Mathis, Semke, Mahdavi, Ott, Computer Communication Review 27(3), July 1997
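
As a worked example of the bound TCPBW < MSS/(RTT*sqrt(loss)), the sketch below evaluates it in the form written on the slide, without the order-one constant that appears in the cited paper. The MSS, RTT and loss values are illustrative assumptions, not measurements from the talk.

```python
import math


def tcp_bw_bound_bps(mss_bytes: float, rtt_s: float, loss: float) -> float:
    """Upper bound on single-stream TCP throughput, in bits per second.

    Uses the slide's form: TCPBW < MSS / (RTT * sqrt(loss)).
    """
    if rtt_s <= 0:
        raise ValueError("rtt_s must be positive")
    if not 0 < loss <= 1:
        raise ValueError("loss must be a fraction in (0, 1]")
    return mss_bytes * 8 / (rtt_s * math.sqrt(loss))


if __name__ == "__main__":
    # Assumed values: 1460-byte MSS, 150 ms RTT, 0.1% packet loss.
    bound = tcp_bw_bound_bps(1460, 0.150, 0.001)
    print(f"bound = {bound / 1e6:.2f} Mb/s")
```

Because the bound scales as 1/sqrt(loss), the loss rate has to fall by a factor of four to double the achievable throughput of a single stream.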

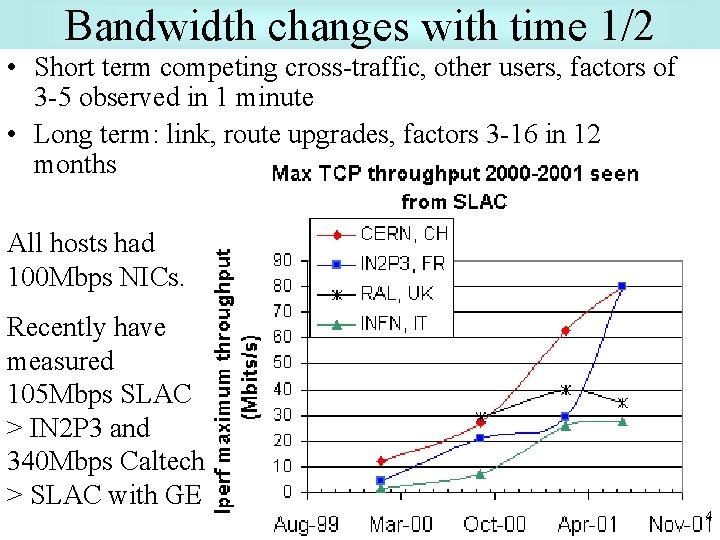

Bandwidth changes with time 1/2
• Short term: competing cross-traffic, other users, factors of 3-5 observed in 1 minute
• Long term: link, route upgrades, factors 3-16 in 12 months
All hosts had 100 Mbps NICs. Recently have measured 105 Mbps SLAC > IN2P3 and 340 Mbps Caltech > SLAC with GE

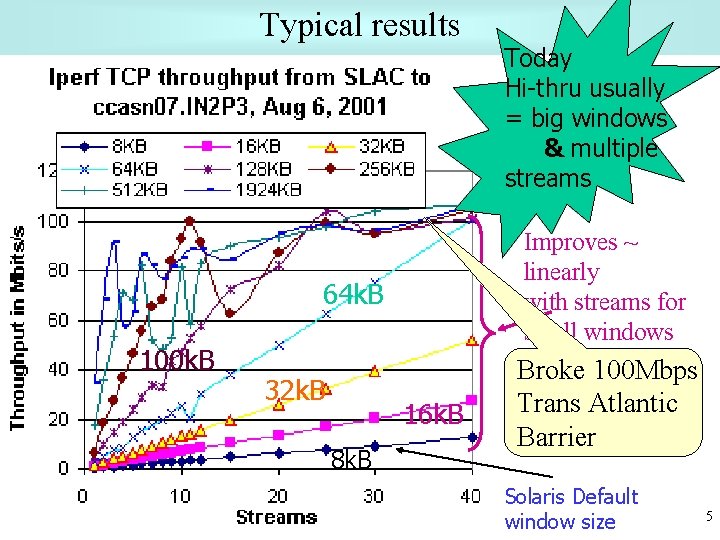

Typical results
• Improves ~ linearly with streams for small windows
• Today hi-thru usually = big windows & multiple streams
• Broke 100 Mbps trans-Atlantic barrier
Plot: throughput vs. number of streams, with curves for 8 kB, 16 kB, 32 kB, 64 kB and 100 kB windows; the Solaris default window size is marked
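
The roughly linear gain from extra streams at small window sizes follows from each stream being limited to about one window per round trip. The sketch below is an idealized model that ignores loss and host limits; the 150 ms RTT and 100 Mbps link are assumed values for illustration only.

```python
from typing import Optional


def window_limited_bps(window_bytes: float, rtt_s: float,
                       streams: int = 1,
                       link_bps: Optional[float] = None) -> float:
    """Idealized aggregate throughput: streams * window / RTT, capped by the link."""
    total = streams * window_bytes * 8 / rtt_s
    return total if link_bps is None else min(total, link_bps)


if __name__ == "__main__":
    rtt = 0.150   # assumed round-trip time in seconds
    link = 100e6  # assumed bottleneck of 100 Mbps
    for window_kb in (8, 64):
        for streams in (1, 8, 32):
            bps = window_limited_bps(window_kb * 1024, rtt, streams, link)
            print(f"{window_kb:3d} kB window, {streams:2d} streams: "
                  f"{bps / 1e6:6.2f} Mb/s")
```

In this model, throughput grows in proportion to the stream count until the link cap is reached, which is why big windows and multiple streams are combined.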

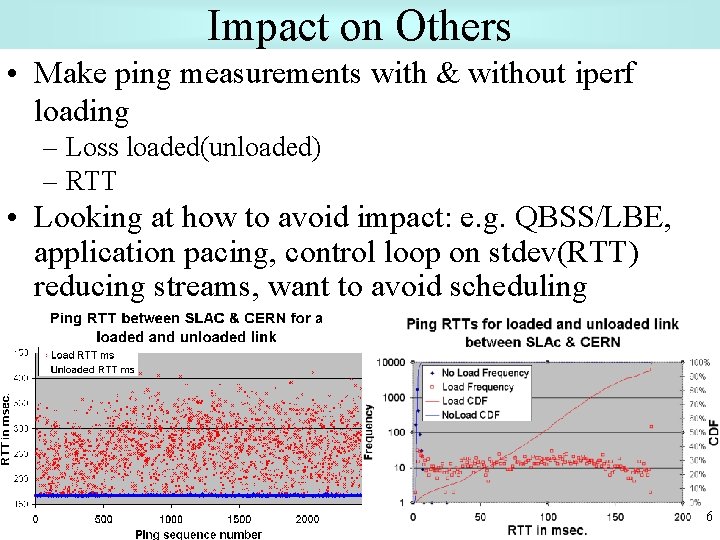

Impact on Others
• Make ping measurements with & without iperf loading
  – Loss loaded(unloaded)
  – RTT
• Looking at how to avoid impact: e.g. QBSS/LBE, application pacing, control loop on stdev(RTT) reducing streams, want to avoid scheduling
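
One way to picture the "control loop on stdev(RTT) reducing streams" idea is a simple back-off rule: halve the stream count when RTT jitter rises well above an unloaded baseline, otherwise add a stream. This is a hypothetical sketch, not the method used in the talk; the function name, the tolerance factor and the halving policy are all assumptions.

```python
import statistics
from typing import Sequence


def adjust_streams(current_streams: int,
                   rtt_samples_ms: Sequence[float],
                   baseline_stdev_ms: float,
                   tolerance: float = 2.0,
                   min_streams: int = 1,
                   max_streams: int = 64) -> int:
    """Return the stream count to use for the next measurement interval.

    Hypothetical policy: halve the streams when stdev(RTT) exceeds
    tolerance * baseline, otherwise probe upward by one stream.
    """
    if len(rtt_samples_ms) < 2:
        return current_streams  # not enough samples to estimate jitter
    jitter = statistics.stdev(rtt_samples_ms)
    if jitter > tolerance * baseline_stdev_ms:
        return max(min_streams, current_streams // 2)
    return min(max_streams, current_streams + 1)


if __name__ == "__main__":
    print(adjust_streams(32, [10, 50, 10, 60], baseline_stdev_ms=1.0))
    print(adjust_streams(32, [10.0, 10.1, 10.0, 10.1], baseline_stdev_ms=1.0))
```

The default cap of 64 mirrors the maximum stream count quoted for bbcp; the RTT samples would come from pings or similar probes taken while the transfer runs.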

HENP Experiment Model
• World wide collaborations necessary for large undertakings
• Regional computer centers in France, Italy, UK & US
  – Spending Euros on data center at SLAC not attractive
  – Leverage local equipment & expertise
• Resources available to all collaborators
• Requirements – bulk:
  – Bulk data replication (current goal > 100 MBytes/s)
  – Optimized cached read access to 10-100 GB from 1 PB data set
• Requirements – interactive:
  – Remote login, video conferencing, document sharing, joint code development, co-laboratory (remote operations, reduced travel, more humane shifts)
  – Modest bandwidth, often < 1 Mbps
  – Emphasis on quality of service & sub-second responses

Applications
• Main network application focus today is on replication at multiple sites worldwide (mainly N. America, Europe and Japan)
• Need fast, secure, easy to use, extendable way to copy data between sites
  – Need to support interactive and real-time use at the same time, e.g. experiment control, video & voice conferencing
• HEP community has developed 2 major (freely available) applications to meet replication need: bbftp and bbcp

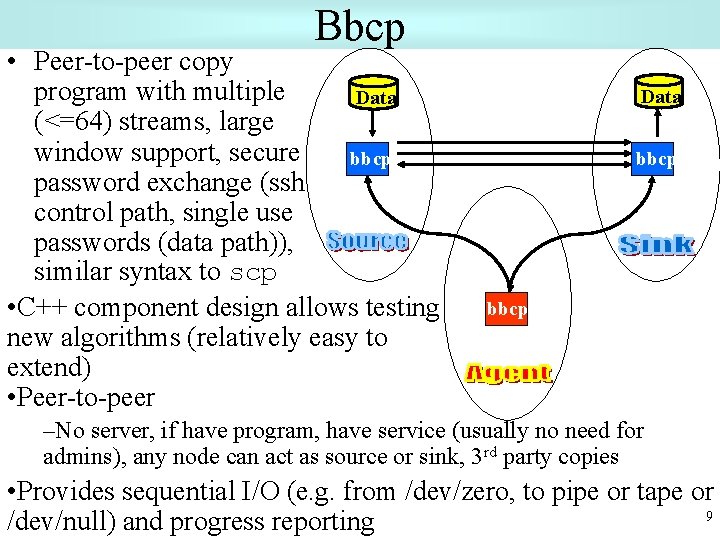

Bbcp
• Peer-to-peer copy program with multiple (<=64) streams, large window support, secure password exchange (ssh control path, single use passwords (data path)), similar syntax to scp
• C++ component design allows testing new algorithms (relatively easy to extend)
• Peer-to-peer
  – No server, if have program, have service (usually no need for admins), any node can act as source or sink, 3rd party copies
• Provides sequential I/O (e.g. from /dev/zero, to pipe or tape or /dev/null) and progress reporting

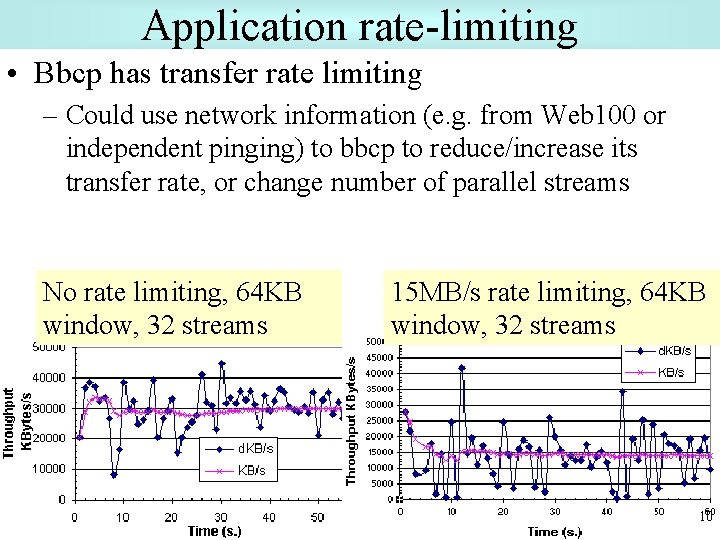

Application rate-limiting
• Bbcp has transfer rate limiting
  – Could feed network information (e.g. from Web100 or independent pinging) to bbcp so it can reduce/increase its transfer rate, or change number of parallel streams
Plots: no rate limiting, 64 KB window, 32 streams; 15 MB/s rate limiting, 64 KB window, 32 streams
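
Application-level rate limiting can be pictured as pacing: after each write, the sender waits long enough that its average rate stays under the cap. The sketch below illustrates that general idea and is not bbcp's actual implementation; the function name and the example numbers other than the 15 MB/s cap are assumptions.

```python
def pacing_delay(bytes_sent: float, elapsed_s: float,
                 limit_bytes_per_s: float) -> float:
    """Seconds to pause so the average rate does not exceed the limit.

    bytes_sent and elapsed_s are totals since the start of the transfer.
    """
    if limit_bytes_per_s <= 0:
        raise ValueError("limit_bytes_per_s must be positive")
    # Earliest time at which bytes_sent is allowed to have gone out.
    earliest_s = bytes_sent / limit_bytes_per_s
    return max(0.0, earliest_s - elapsed_s)


if __name__ == "__main__":
    limit = 15e6  # 15 MB/s cap, as in the rate-limited plot
    # 30 MB sent in the first second is ahead of schedule: pause.
    print(pacing_delay(30e6, 1.0, limit))
    # 10 MB sent in the first second is under the cap: no pause.
    print(pacing_delay(10e6, 1.0, limit))
```

A sender would call this after each block and sleep for the returned time. Changing the limit between calls is how external network information could raise or lower the rate.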

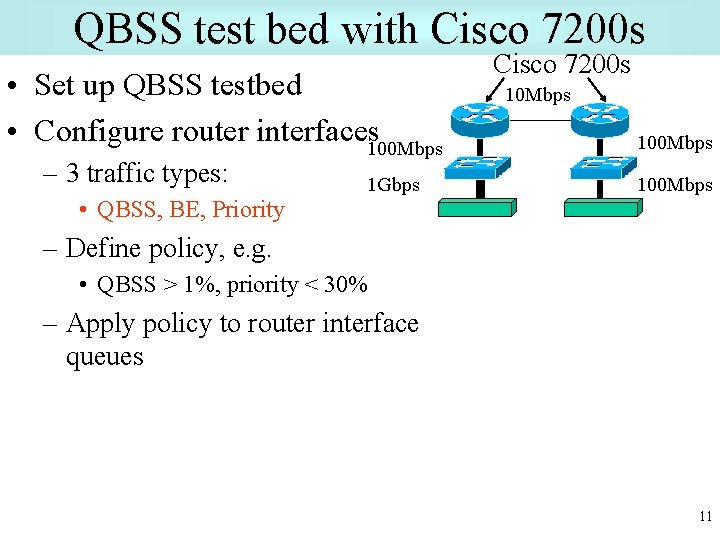

QBSS test bed with Cisco 7200s
• Set up QBSS testbed
• Configure router interfaces
  – 3 traffic types:
    • QBSS, BE, Priority
  – Define policy, e.g.
    • QBSS > 1%, priority < 30%
  – Apply policy to router interface queues
Diagram: Cisco 7200s with 10 Mbps, 100 Mbps and 1 Gbps links

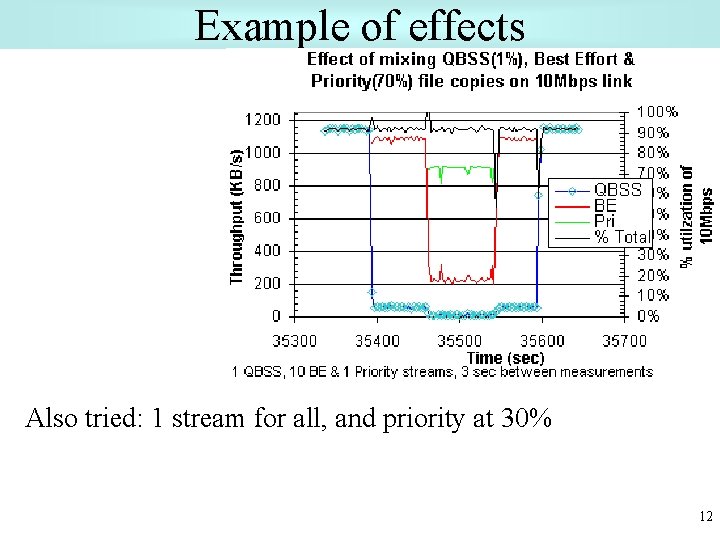

Example of effects
Also tried: 1 stream for all, and priority at 30%

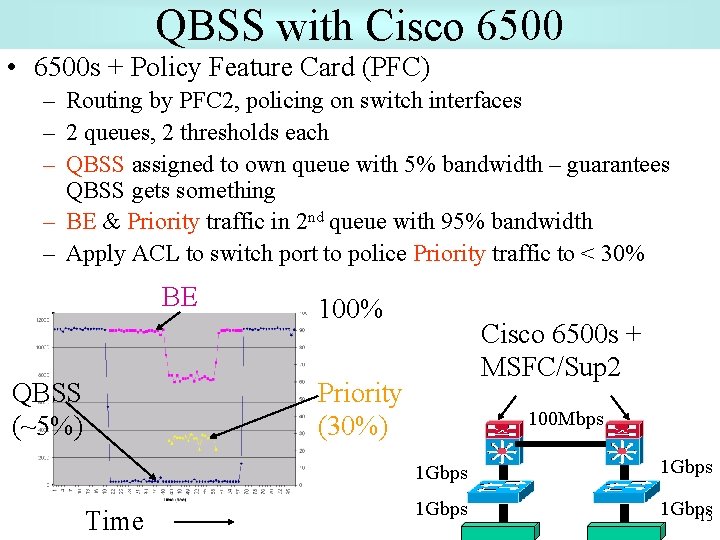

QBSS with Cisco 6500
• 6500s + Policy Feature Card (PFC)
  – Routing by PFC2, policing on switch interfaces
  – 2 queues, 2 thresholds each
  – QBSS assigned to own queue with 5% bandwidth, which guarantees QBSS gets something
  – BE & Priority traffic in 2nd queue with 95% bandwidth
  – Apply ACL to switch port to police Priority traffic to < 30%
Figure: bandwidth share (up to 100%) vs. time for BE, QBSS (~5%) and Priority (30%); testbed of Cisco 6500s + MSFC/Sup2 with 100 Mbps and 1 Gbps links
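
The intended effect of this policy can be approximated with a small allocation model: Priority is policed to 30% of the link, QBSS is guaranteed its 5% queue share, Best Effort takes what it needs from the remainder, and QBSS scavenges whatever is left. This is an idealized sketch of the policy as described on the slide, not a model of the actual 6500 queueing hardware; the function and its work-conserving assumption are illustrative.

```python
from typing import Dict


def share_link(capacity: float, priority_offered: float,
               be_offered: float, qbss_offered: float,
               priority_cap: float = 0.30,
               qbss_floor: float = 0.05) -> Dict[str, float]:
    """Idealized bandwidth split among Priority, Best Effort and QBSS."""
    # Priority traffic is policed to a fraction of the link.
    priority = min(priority_offered, priority_cap * capacity)
    # QBSS keeps a small guaranteed share so it is never starved.
    qbss_guaranteed = min(qbss_offered, qbss_floor * capacity)
    # Best Effort may use everything not taken by the two items above.
    best_effort = min(be_offered, capacity - priority - qbss_guaranteed)
    # QBSS scavenges any capacity the other classes leave idle.
    qbss = min(qbss_offered, capacity - priority - best_effort)
    return {"priority": priority, "be": best_effort, "qbss": qbss}


if __name__ == "__main__":
    # All three classes want more than the 100 Mbps link can carry.
    print(share_link(100.0, 50.0, 100.0, 100.0))
    # Best Effort goes idle: QBSS picks up the unused capacity.
    print(share_link(100.0, 50.0, 0.0, 100.0))
```

The second call shows the scavenger behaviour: with no Best Effort demand, QBSS expands from its guaranteed share to fill the rest of the link.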

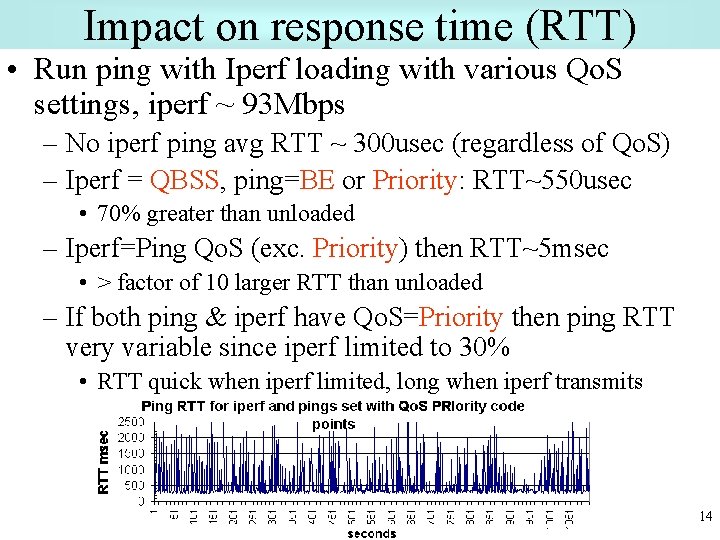

Impact on response time (RTT)
• Run ping with iperf loading with various QoS settings, iperf ~ 93 Mbps
  – No iperf: ping avg RTT ~ 300 usec (regardless of QoS)
  – Iperf = QBSS, ping = BE or Priority: RTT ~ 550 usec
    • 70% greater than unloaded
  – Iperf = ping QoS (exc. Priority): RTT ~ 5 msec
    • > factor of 10 larger RTT than unloaded
  – If both ping & iperf have QoS = Priority then ping RTT very variable since iperf limited to 30%
    • RTT quick when iperf limited, long when iperf transmits

Possible usage
• Apply priority to lower volume interactive voice/video-conferencing and real time control
• Apply QBSS to high volume data replication
• Leave the rest as Best Effort
• Since 40-65% of bytes to/from SLAC come from a single application, we have modified it to enable setting of TOS bits
• Need to identify bottlenecks and implement QBSS there
• Bottlenecks tend to be at edges so hope to try with a few HEP sites

SC2001 demo
• Send data from SLAC/FNAL booth computers (emulate a tier 0 or 1 HENP site) to over 20 other sites with good connections in about 6 countries
• Part of bandwidth challenge proposal
• Saturate 2 Gbps connection to floor network
• Apply QBSS to some sites, priority to a few and rest Best Effort
  – See how QBSS works at high speeds

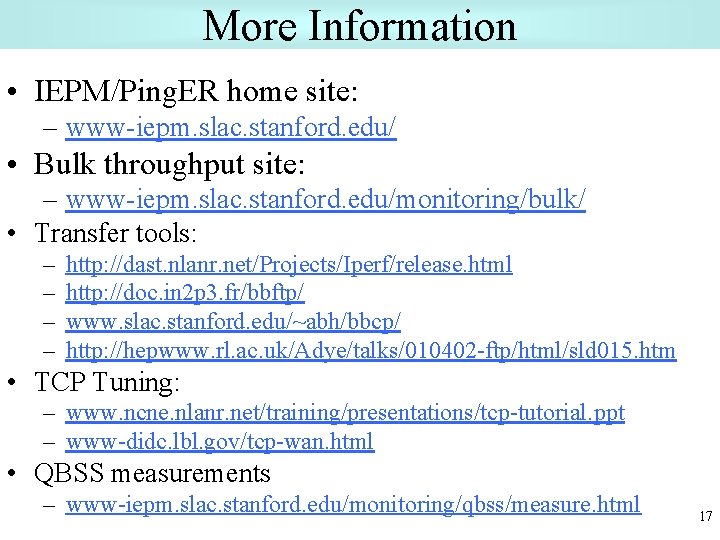

More Information
• IEPM/PingER home site:
  – www-iepm.slac.stanford.edu/
• Bulk throughput site:
  – www-iepm.slac.stanford.edu/monitoring/bulk/
• Transfer tools:
  – http://dast.nlanr.net/Projects/Iperf/release.html
  – http://doc.in2p3.fr/bbftp/
  – www.slac.stanford.edu/~abh/bbcp/
  – http://hepwww.rl.ac.uk/Adye/talks/010402-ftp/html/sld015.htm
• TCP Tuning:
  – www.ncne.nlanr.net/training/presentations/tcp-tutorial.ppt
  – www-didc.lbl.gov/tcp-wan.html
• QBSS measurements
  – www-iepm.slac.stanford.edu/monitoring/qbss/measure.html

Extra slides with more detail

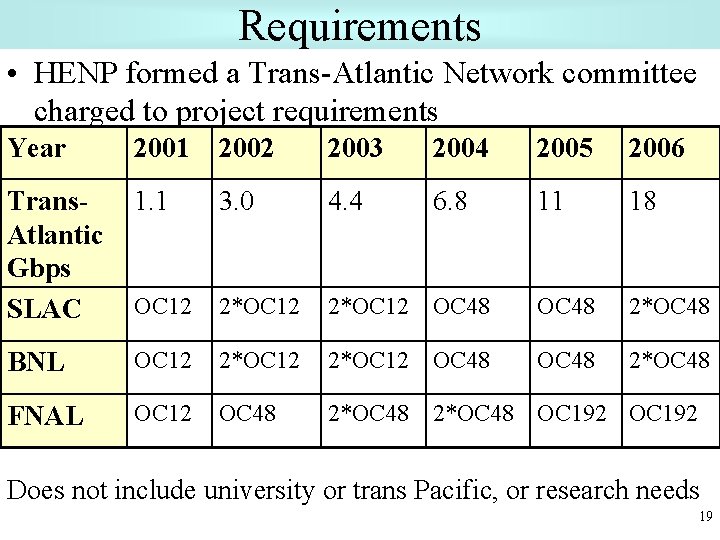

Requirements
• HENP formed a Trans-Atlantic Network committee charged to project requirements
Year: 2001, 2002, 2003, 2004, 2005, 2006
Trans-Atlantic (Gbps): 1.1, 3.0, 4.4, 6.8, 11, 18
SLAC: OC12, 2*OC12, OC48, 2*OC48
BNL: OC12, 2*OC12, OC48, 2*OC48
FNAL: OC12, OC48, 2*OC48, OC192
Does not include university or trans-Pacific, or research needs
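
To relate the OC circuit levels in this table to the Gbps figures, the sketch below uses the standard SONET line rate of 51.84 Mbps per OC-1. These are line rates; usable payload is somewhat lower. The helper name is illustrative.

```python
OC1_MBPS = 51.84  # SONET OC-1 line rate in Mbps


def oc_line_rate_gbps(level: int, links: int = 1) -> float:
    """Line rate in Gbps for `links` parallel OC-`level` circuits."""
    return level * links * OC1_MBPS / 1000


if __name__ == "__main__":
    for label, level, links in (("OC12", 12, 1), ("2*OC12", 12, 2),
                                ("OC48", 48, 1), ("2*OC48", 48, 2),
                                ("OC192", 192, 1)):
        print(f"{label:7s} {oc_line_rate_gbps(level, links):6.3f} Gbps")
```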

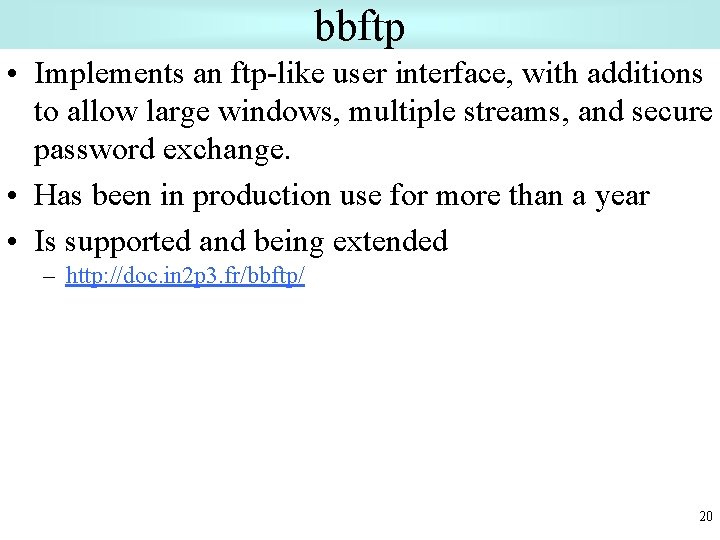

bbftp
• Implements an ftp-like user interface, with additions to allow large windows, multiple streams, and secure password exchange
• Has been in production use for more than a year
• Is supported and being extended
  – http://doc.in2p3.fr/bbftp/

Bbcp: algorithms
• Data pipelining
  – Multiple streams “simultaneously” pushed
• Automatically adapts to router traffic shaping
• Can control maximum rate
• Can write to tape, read from /dev/zero, write to /dev/null, pipe
• Check-pointing (resume failed transmission)
• Coordinated buffers
  – All buffers same-sized end-to-end
• Page aligned buffers
  – Allows direct I/O on many file-systems (e.g. Veritas)

Bbcp: Security
• Low cost, simple and effective security
  – Leveraging widely deployed infrastructure
    • If you can ssh there you can copy data
• Sensitive data is encrypted
  – One time passwords and control information
• Bulk data is not encrypted
  – Privacy sacrificed for speed
• Minimal sharing of information
  – Source and Sink do not reveal environment


Bbcp: user interface & features
• Familiar syntax
  – bbcp [ options ] source [ … ] ] target
• Sources and target can be anything
  – [[username@]hostname:]path
  – /dev/zero or /dev/null
• Easy but powerful
  – Can gather data from multiple hosts
  – Many usability and performance options
• Features: read from /dev/zero; write to: tape, /dev/null, pipe; check-pointing; MD5 checksums; compression; transfer rate limiting; progress reporting; mark QoS/TOS bits

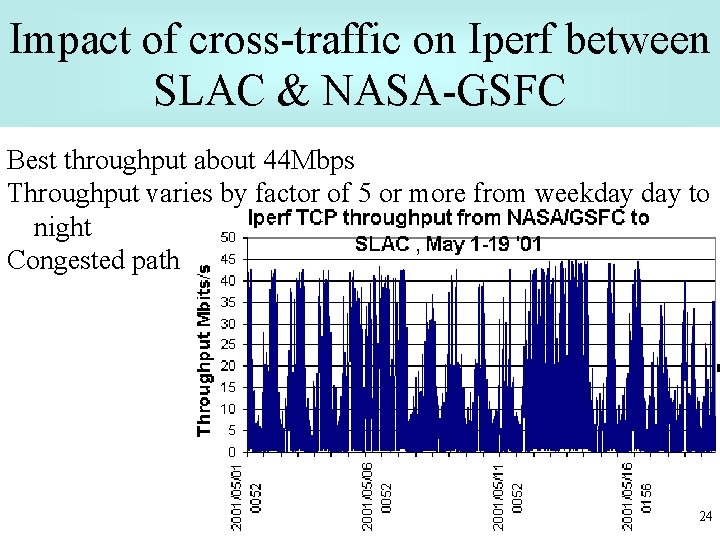

Impact of cross-traffic on Iperf between SLAC & NASA-GSFC
• Best throughput about 44 Mbps
• Throughput varies by factor of 5 or more from weekday to night
• Congested path

Using bbcp to make QBSS measurements
• Run bbcp with src=/dev/zero, dst=/dev/null, report throughput at 1 second intervals
  – Start with TOS=32 (QBSS)
  – After 20 s run bbcp with no TOS bits specified (BE)
  – After 20 more s run bbcp with TOS=40 (priority)
  – After 20 more s turn off Priority
  – After 20 more s turn off BE
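
For readers who want to see what marking traffic with TOS=32 or TOS=40 means at the socket level, the sketch below sets the IP TOS byte on a TCP socket and shows the corresponding DSCP value (the upper six bits of the TOS byte). It is a generic illustration, not how bbcp itself sets the bits; the constant and function names are assumptions, and `socket.IP_TOS` is not available on every platform.

```python
import socket

QBSS_TOS = 32      # TOS value used for QBSS in these tests
PRIORITY_TOS = 40  # TOS value used for Priority in these tests


def tos_to_dscp(tos: int) -> int:
    """DSCP code point carried in the upper six bits of the TOS byte."""
    return (tos & 0xFF) >> 2


def open_marked_socket(tos: int) -> socket.socket:
    """Create an unconnected TCP socket whose packets carry the given TOS."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, tos)
    return sock


if __name__ == "__main__":
    print("QBSS DSCP:", tos_to_dscp(QBSS_TOS))
    print("Priority DSCP:", tos_to_dscp(PRIORITY_TOS))
    sock = open_marked_socket(QBSS_TOS)
    sock.close()
```

Routers along the path must be configured to honor the marking; otherwise the packets are simply treated as Best Effort.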

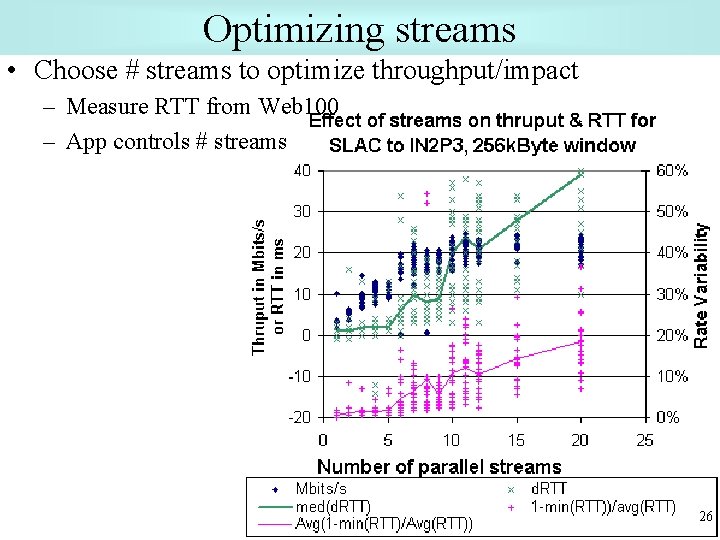

Optimizing streams
• Choose # streams to optimize throughput/impact
  – Measure RTT from Web100
  – App controls # streams
