
Runahead Execution: An Alternative to Very Large Instruction Windows for Out-of-order Processors

Published in HPCA 2003

Onur Mutlu §, Jared Stark †, Chris Wilkerson ‡, Yale N. Patt §

- § ECE Department, The University of Texas at Austin, {onur, patt}@ece.utexas.edu
- † Microprocessor Research, Intel Labs, jared.w.stark@intel.com
- ‡ Desktop Platforms Group, Intel Corporation, chris.wilkerson@intel.com

Presented by Silvan Niederer 21. 18

Background, Problem & Goal

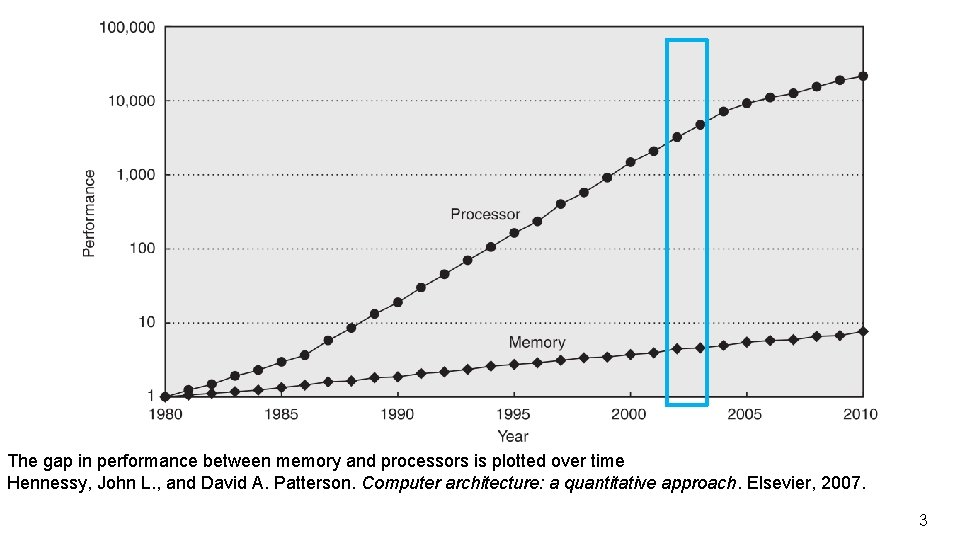

The performance gap between memory and processors, plotted over time. Source: Hennessy, John L., and David A. Patterson. Computer Architecture: A Quantitative Approach. Elsevier, 2007.

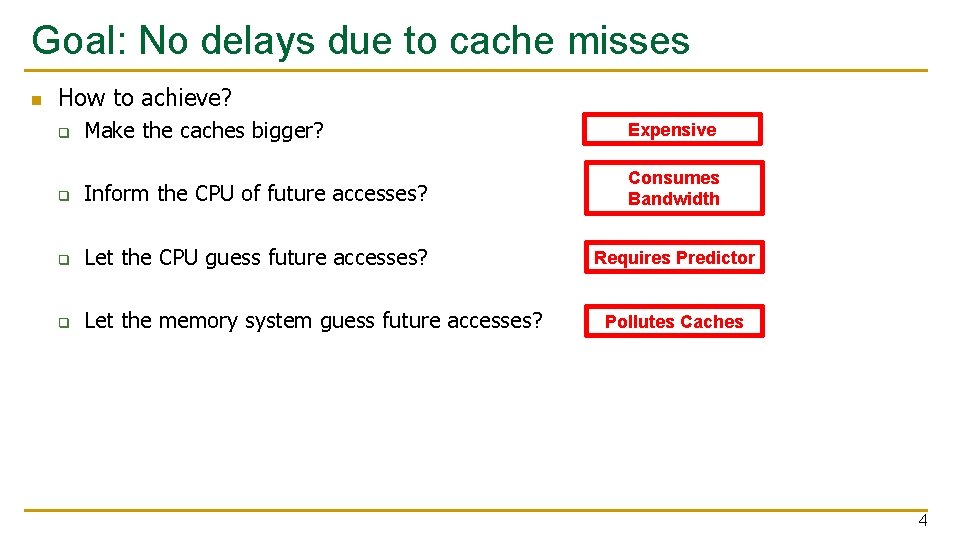

Goal: No delays due to cache misses

- How to achieve?
  - Make the caches bigger? Expensive
  - Inform the CPU of future accesses? Consumes bandwidth
  - Let the CPU guess future accesses? Requires predictor
  - Let the memory system guess future accesses? Pollutes caches

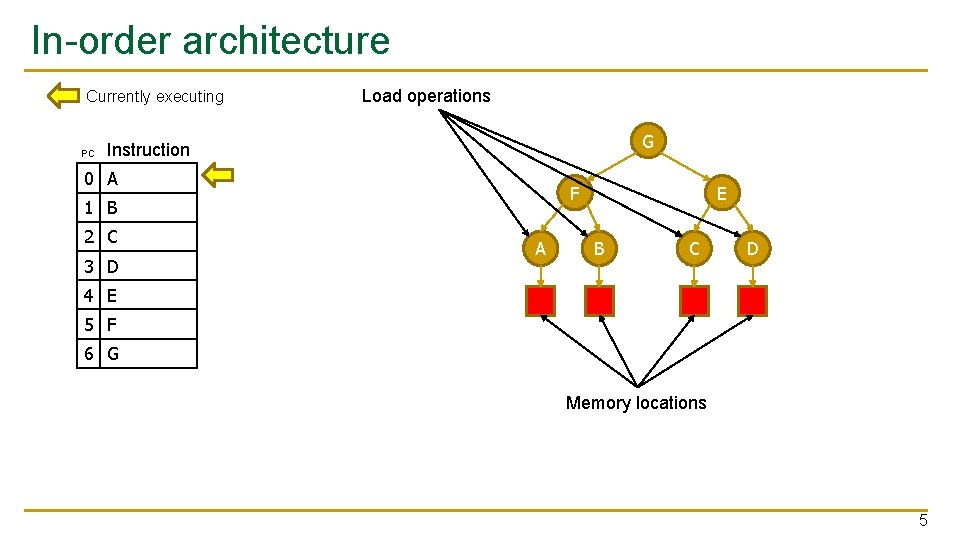

In-order architecture

[Figure: instructions 0 to 6 perform load operations on memory locations A to G; the PC marks the currently executing instruction.]

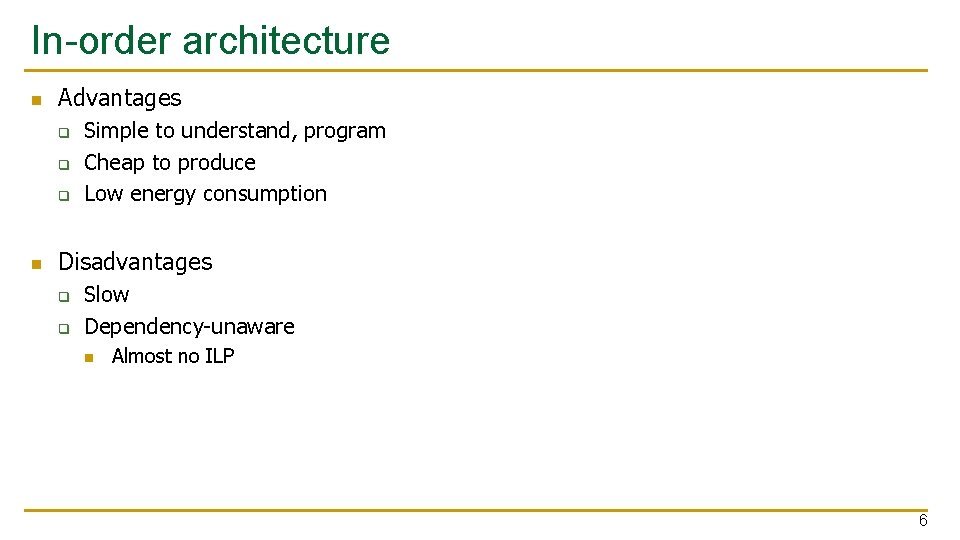

In-order architecture

- Advantages
  - Simple to understand, program
  - Cheap to produce
  - Low energy consumption
- Disadvantages
  - Slow
  - Dependency-unaware
    - Almost no ILP

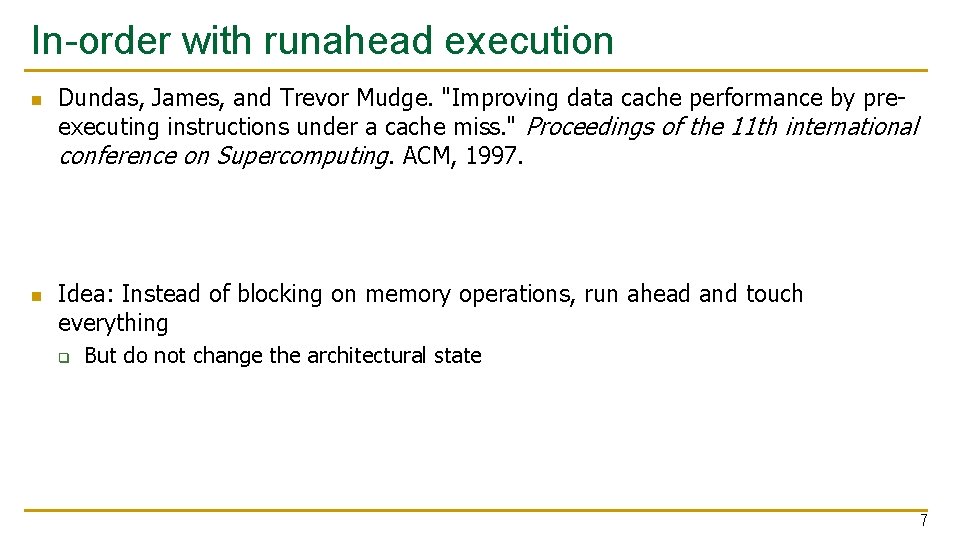

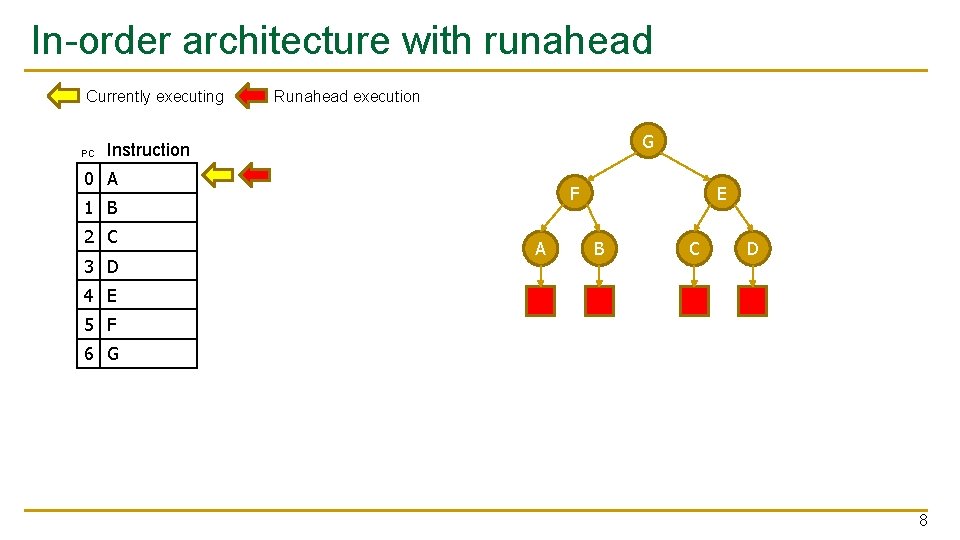

In-order with runahead execution

- Dundas, James, and Trevor Mudge. "Improving data cache performance by pre-executing instructions under a cache miss." Proceedings of the 11th International Conference on Supercomputing. ACM, 1997.
- Idea: Instead of blocking on memory operations, run ahead and touch everything
  - But do not change the architectural state

In-order architecture with runahead

[Figure: instructions 0 to 6 and memory locations A to G; while the currently executing instruction waits, runahead execution touches the locations of the following instructions.]

In-order architecture with runahead

- Advantages
  - Simple
  - MLP
- Disadvantages
  - Small additional cost
  - Some executed instructions are repeated
    - Results of runahead execution are not reused
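The gain in memory-level parallelism can be illustrated with a toy cycle count. This is a minimal sketch under stated assumptions, not the mechanism from Dundas and Mudge: the latency constants, the function names, and the premise that every instruction is an independent load that misses are all chosen for illustration.

```python
# Toy cycle model. Assumptions (not from the slides): every instruction is an
# independent load that misses, and memory can overlap any number of misses.
MISS_LATENCY = 100  # assumed cycles until a missing cache line arrives
ISSUE_COST = 1      # assumed cycles to issue one instruction


def blocking_in_order(num_loads):
    """Each load stalls the pipeline until its own miss has returned."""
    return num_loads * (ISSUE_COST + MISS_LATENCY)


def in_order_with_runahead(num_loads):
    """On a miss, later loads are pre-executed only to start their misses."""
    arrival = {}  # load index -> cycle at which its cache line arrives
    now = 0
    for i in range(num_loads):
        now += ISSUE_COST
        line_ready = arrival.get(i, now + MISS_LATENCY)
        if line_ready > now:
            # Runahead: issue following loads until the blocking miss returns.
            for k, j in enumerate(range(i + 1, num_loads), start=1):
                issue_time = now + k * ISSUE_COST
                if issue_time >= line_ready:
                    break
                arrival.setdefault(j, issue_time + MISS_LATENCY)
            # Normal mode resumes once the blocking line has arrived.
            now = line_ready
    return now
```

With seven independent loads, as in the A to G figure, the blocking model needs 707 cycles and the runahead model 107, because the seven misses overlap instead of being served one after another.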

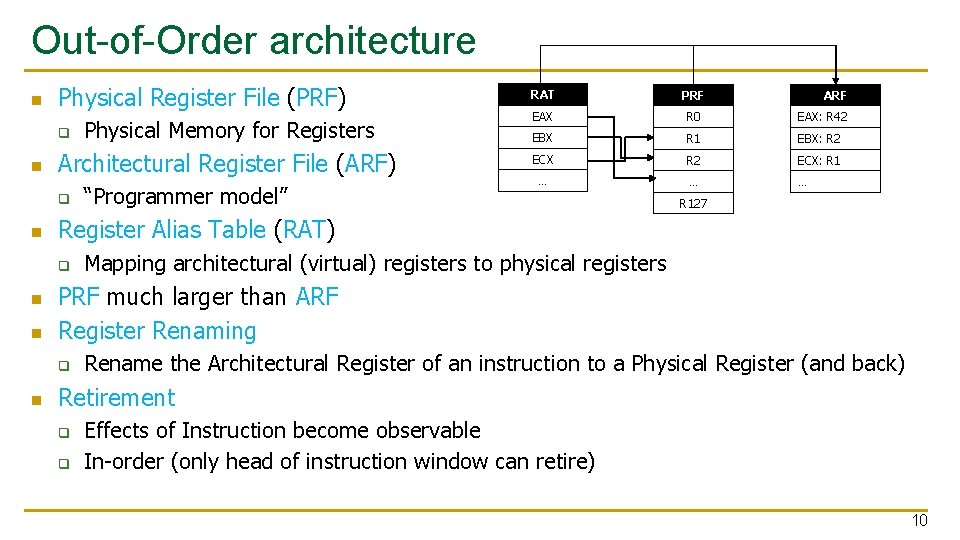

Out-of-Order architecture

- Physical Register File (PRF)
  - Physical memory for registers
- Architectural Register File (ARF)
  - "Programmer model"
- Register Alias Table (RAT)
  - Mapping architectural (virtual) registers to physical registers
  - PRF much larger than ARF
- Register Renaming
  - Rename the architectural register of an instruction to a physical register (and back)
- Retirement
  - Effects of instruction become observable
  - In-order (only head of instruction window can retire)

[Figure: ARF (EAX, EBX, ...), RAT (EAX: R42, EBX: R2, ECX: R1, ...), PRF (R0, R1, ..., R127).]
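Register renaming through the RAT can be sketched as follows. This is a simplified illustration, not a description of a real core: the class name, the free-list policy, and the omission of freeing physical registers at retirement are assumptions.

```python
class RegisterAliasTable:
    """Hypothetical RAT: maps architectural register names to physical indices."""

    def __init__(self, arch_regs, num_physical):
        # Initially each architectural register owns one physical register.
        self.mapping = {name: i for i, name in enumerate(arch_regs)}
        # The remaining physical registers are free for renaming.
        self.free = list(range(len(arch_regs), num_physical))

    def rename(self, dest, sources):
        # Sources are looked up first, so they see the previous producers.
        phys_sources = [self.mapping[s] for s in sources]
        # The destination gets a fresh physical register; the old one would be
        # freed when the instruction retires (not modeled here).
        phys_dest = self.free.pop(0)
        self.mapping[dest] = phys_dest
        return phys_dest, phys_sources
```

Renaming `eax = eax + ebx` on a fresh table with 128 physical registers reads the old mappings of `eax` and `ebx` and assigns a new physical register to `eax`, so a later reader of `eax` depends on the new producer only.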

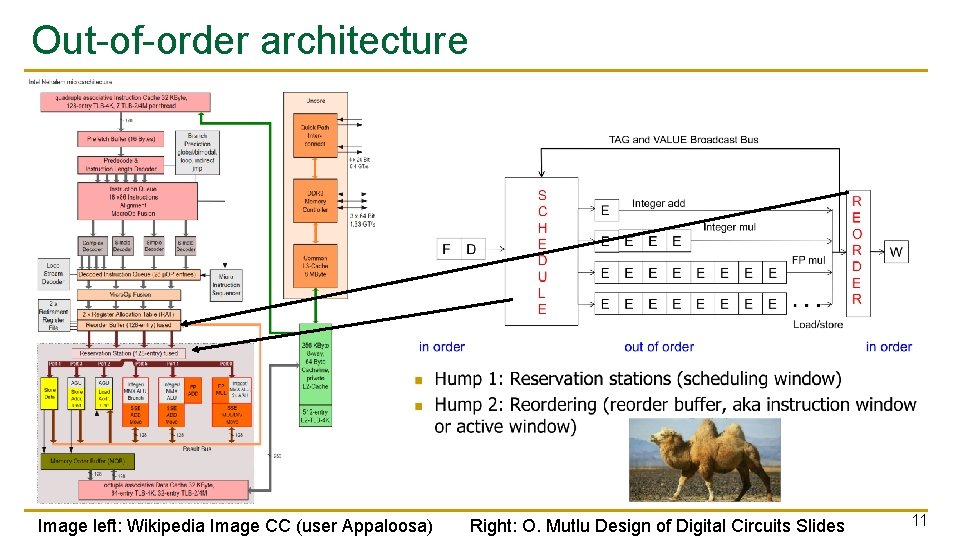

Out-of-order architecture. Image credits: left, Wikipedia (CC, user Appaloosa); right, O. Mutlu, Design of Digital Circuits slides.

Out-of-order architecture

- Scheduling Window
  - How many instructions are waiting for execution
  - Element on chip: Reservation Station
- Instruction Window
  - How many instructions are waiting to be retired
  - Element on chip: Reorder Buffer (ROB)
- In reality: Instruction Window larger than Scheduling Window
  - Sched. W. subset of Inst. W.
- For this presentation: Instruction Window = Scheduling Window

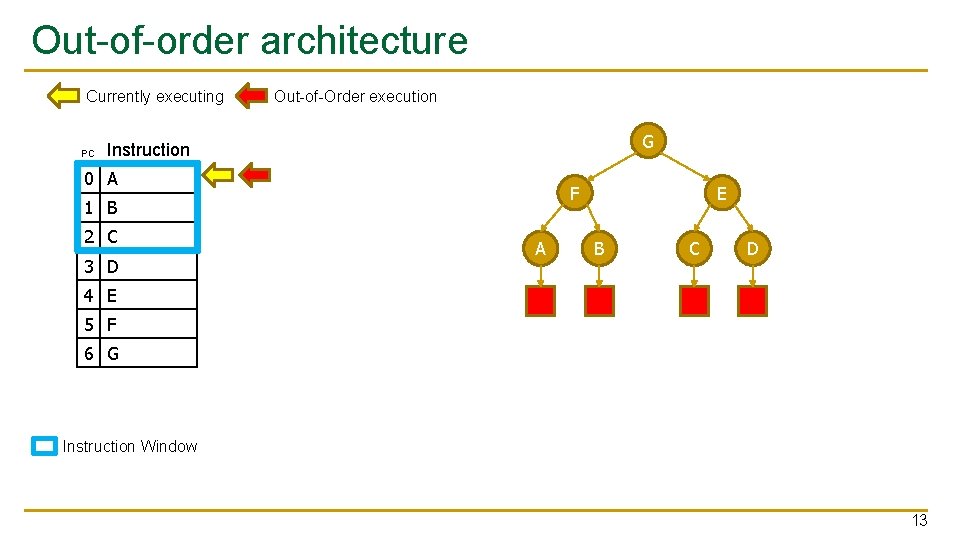

Out-of-order architecture

[Figure: instructions 0 to 6 and memory locations A to G; out-of-order execution covers the instructions inside the instruction window that follows the PC.]

Out-of-order architecture

- Advantages
  - Dependency-aware
  - Fast (ILP, MLP)
  - Instructions executed once
- Disadvantages
  - Expensive
  - Performance largely dependent on window size
  - Blocking

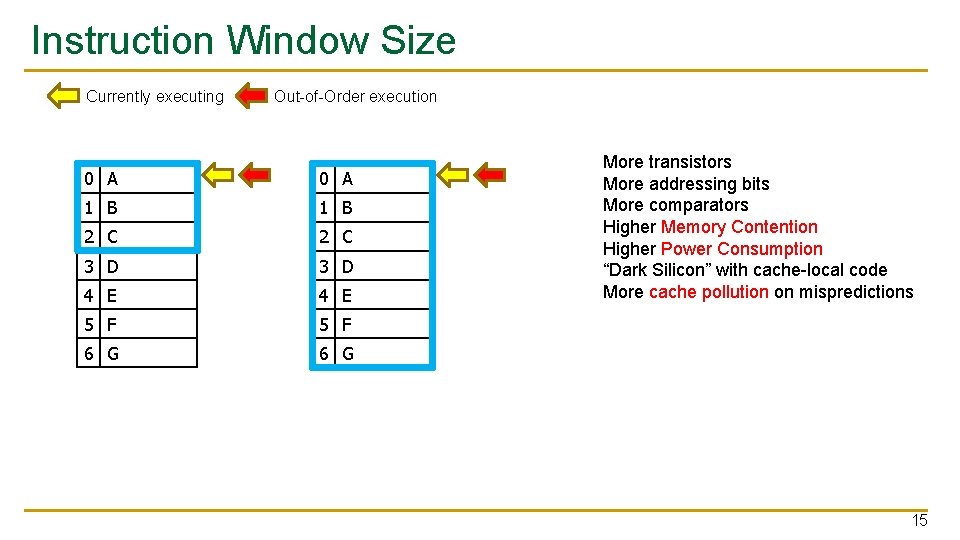

Instruction Window Size

[Figure: out-of-order execution over instructions 0 to 6 (A to G) with a growing instruction window.]

- More transistors
- More addressing bits
- More comparators
- Higher memory contention
- Higher power consumption
- "Dark Silicon" with cache-local code
- More cache pollution on mispredictions

Key Approach and Ideas

Make the window non-blocking

- A non-blocking window behaves like a bigger blocking window
  - But costs less
- Existing hardware can be used while otherwise idle

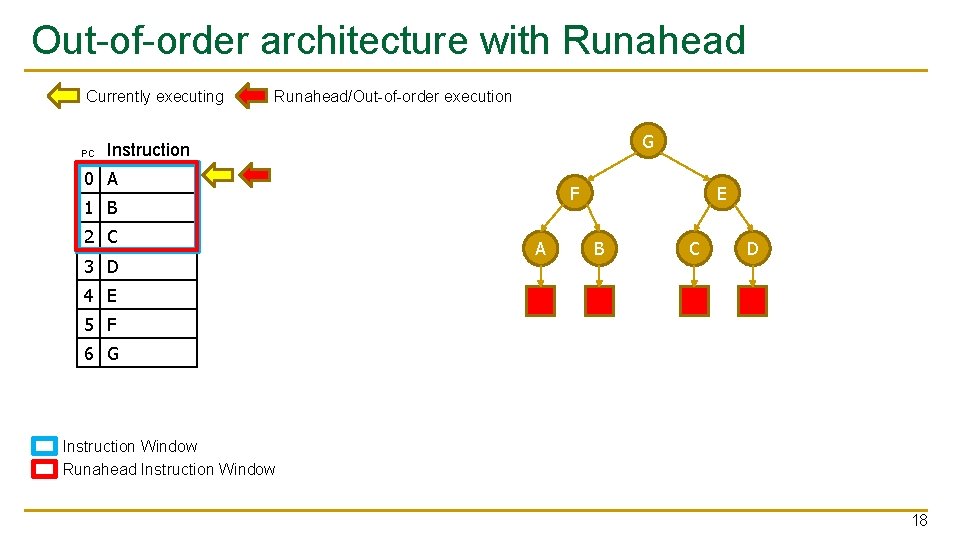

Out-of-order architecture with Runahead

[Figure: instructions 0 to 6 and memory locations A to G; the instruction window follows the PC, and the runahead instruction window extends beyond it.]

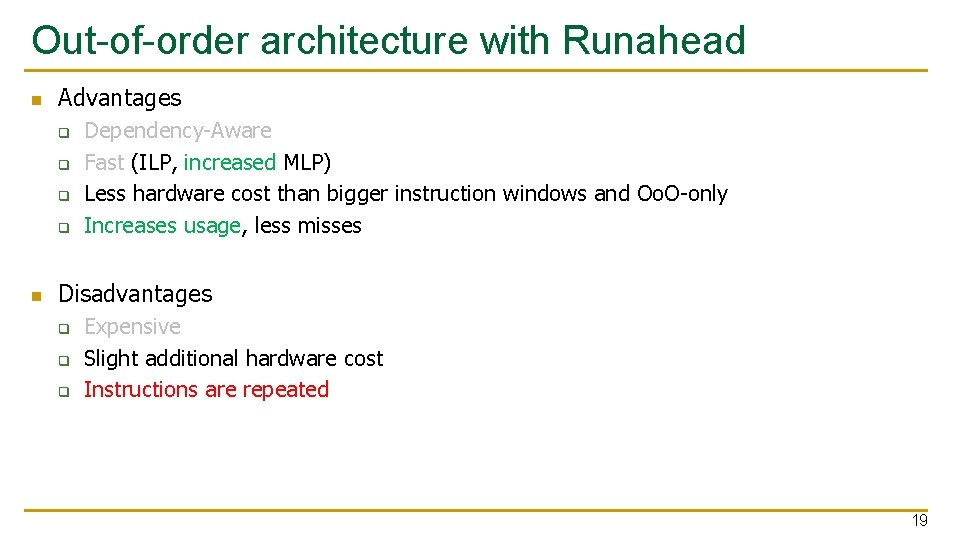

Out-of-order architecture with Runahead

- Advantages
  - Dependency-aware
  - Fast (ILP, increased MLP)
  - Less hardware cost than bigger instruction windows and OoO-only
  - Increases usage, fewer misses
- Disadvantages
  - Expensive
  - Slight additional hardware cost
  - Instructions are repeated

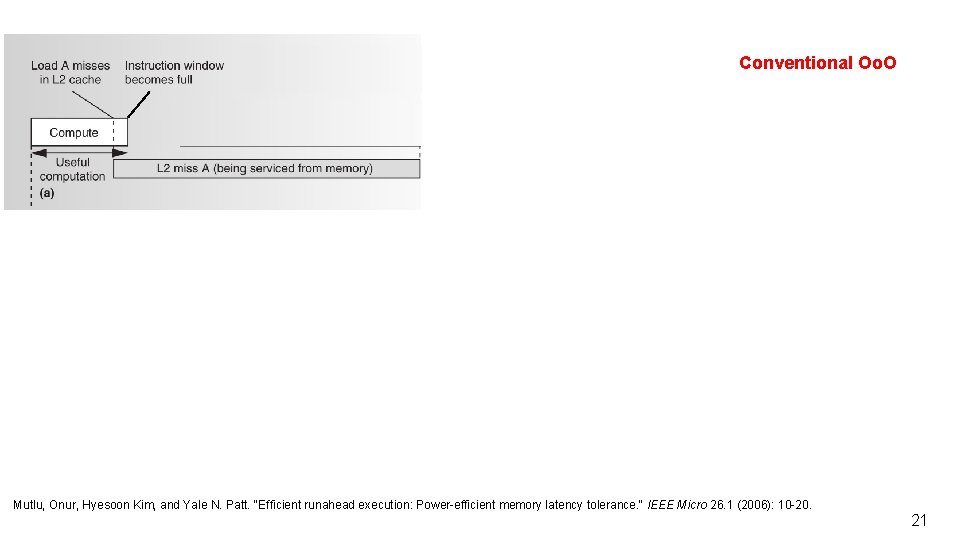

Conventional OoO. Figure source: Mutlu, Onur, Hyesoon Kim, and Yale N. Patt. "Efficient runahead execution: Power-efficient memory latency tolerance." IEEE Micro 26.1 (2006): 10-20.


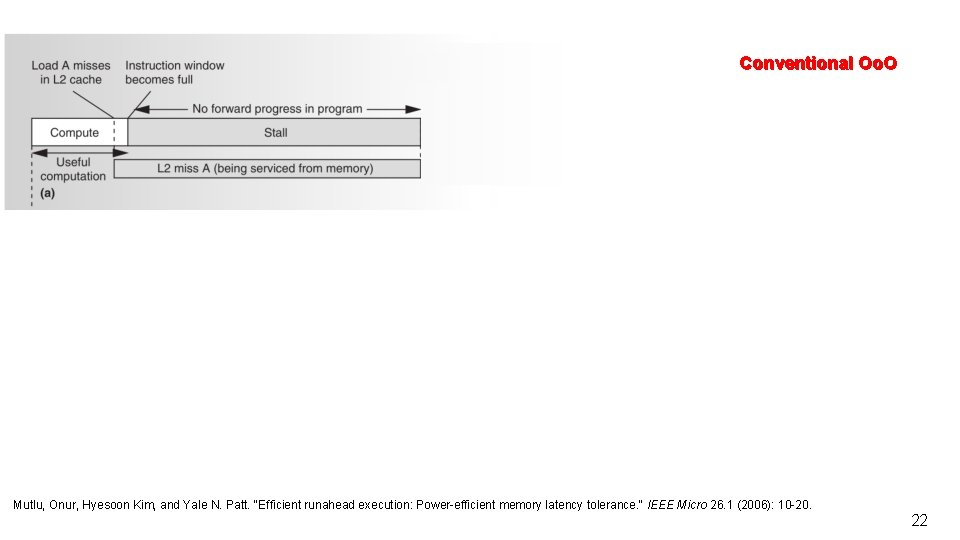


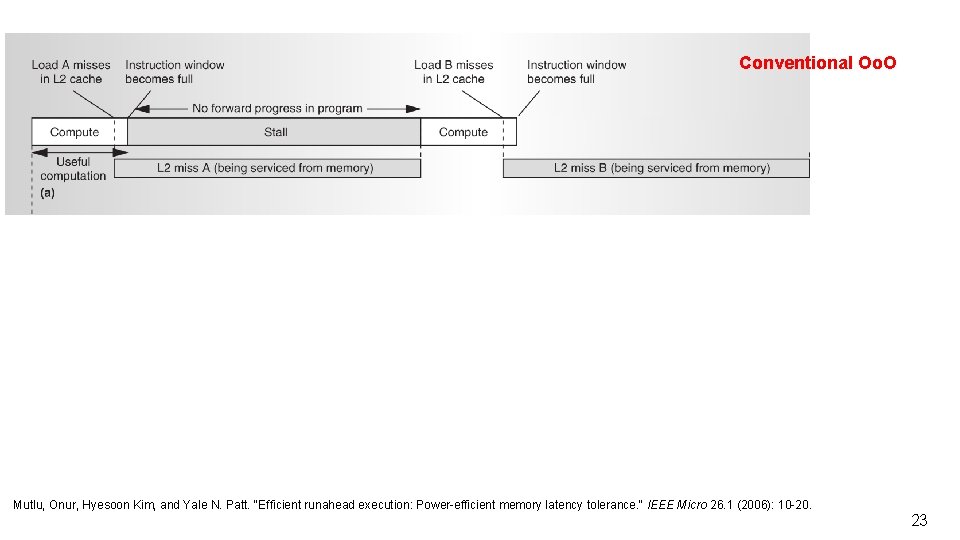


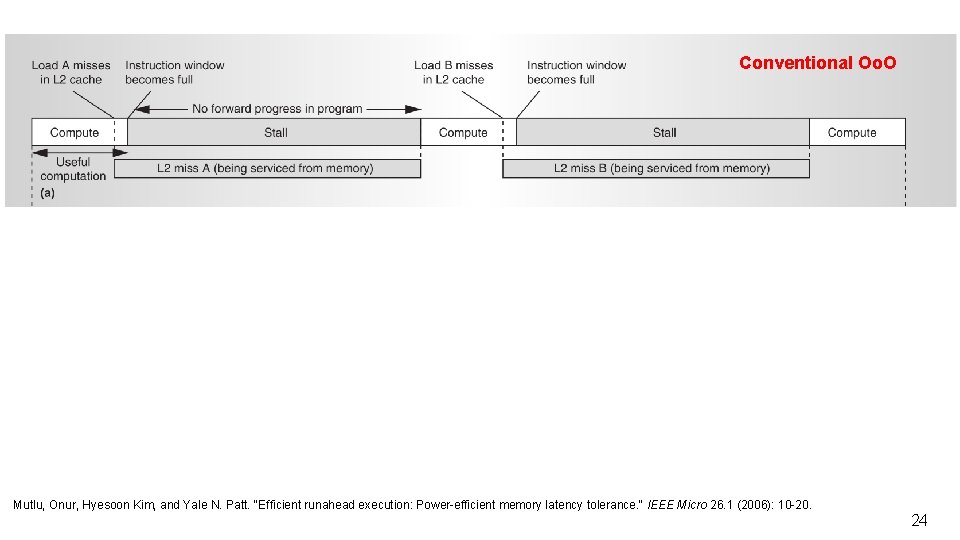


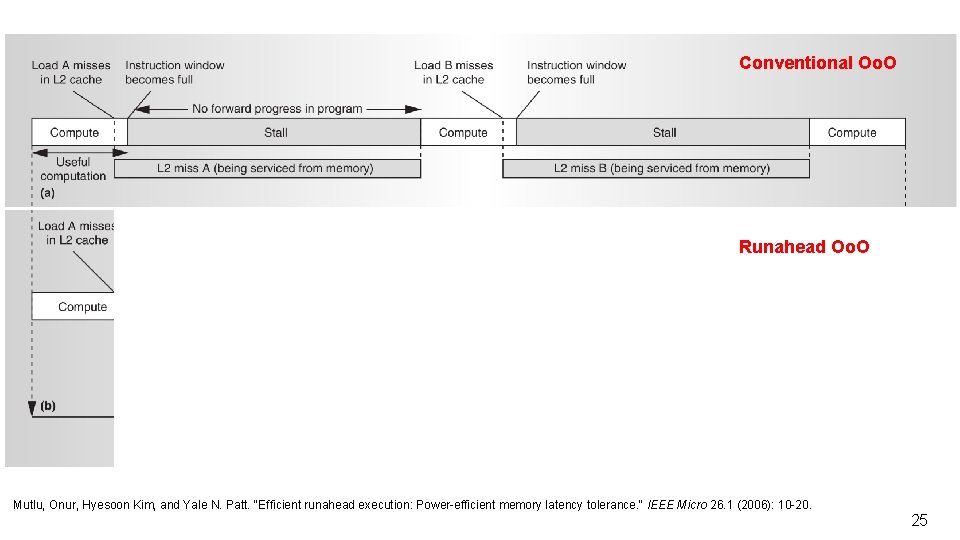

Conventional OoO and Runahead OoO. Figure source: Mutlu, Onur, Hyesoon Kim, and Yale N. Patt. "Efficient runahead execution: Power-efficient memory latency tolerance." IEEE Micro 26.1 (2006): 10-20.

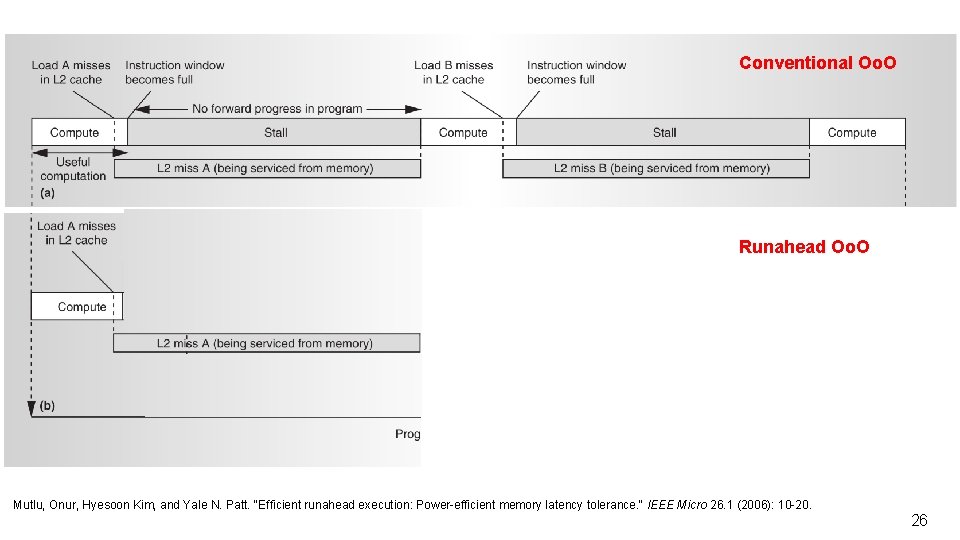


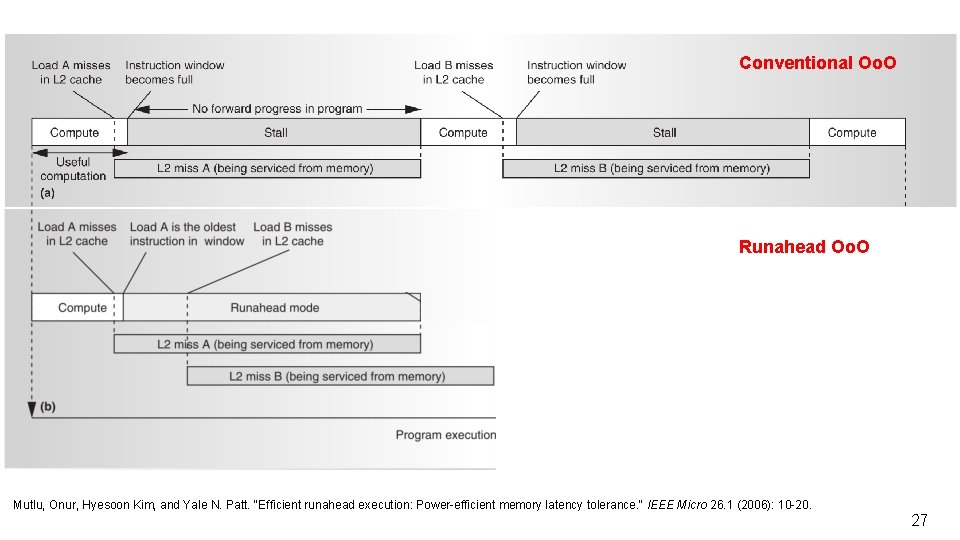


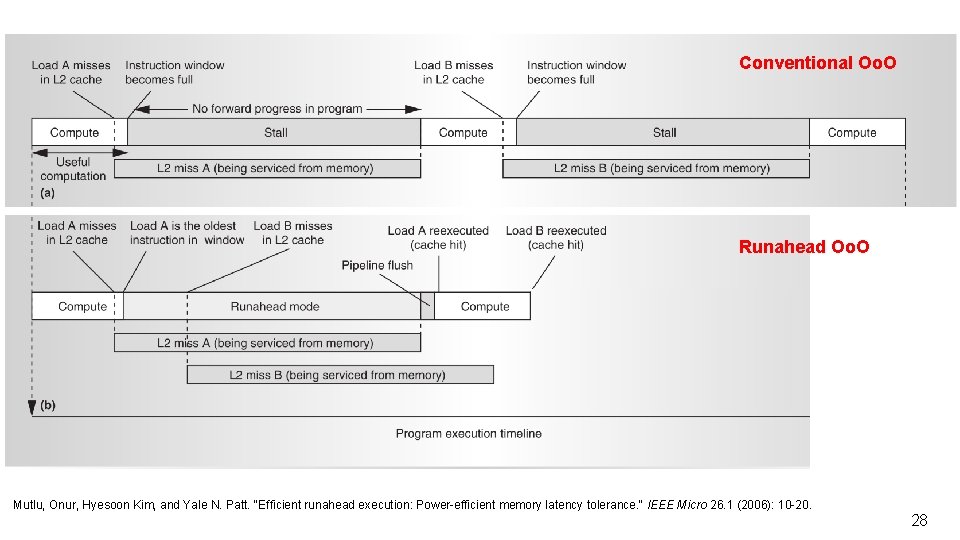


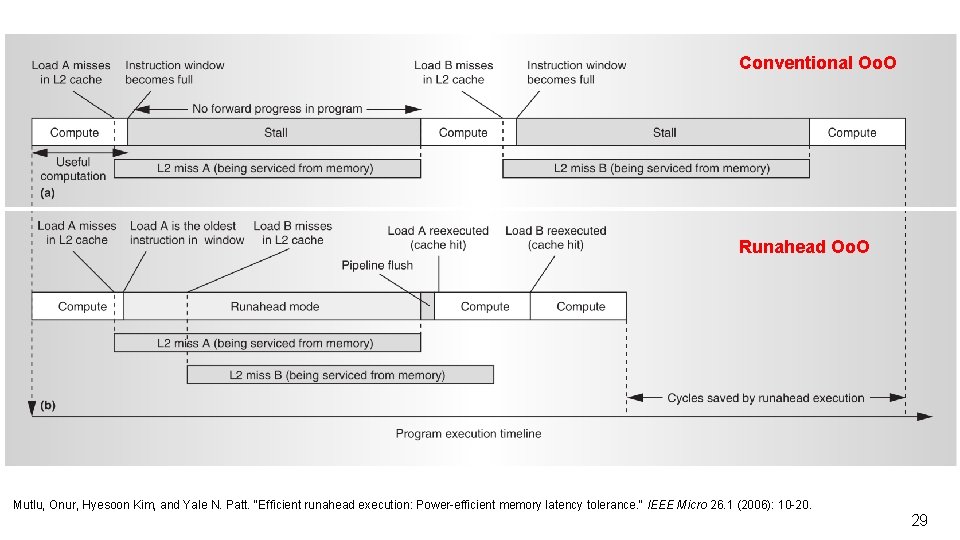


Mechanisms (in some detail)

Runahead Mode

- Turning the CPU into an expensive (and smart) prefetcher
- Everything runs the same as in "Normal Mode"
- Exceptions:
  - Interrupts
  - I/O accesses
  - Stores
- Has no effect on the architectural state
  - "Hidden from the programmer"

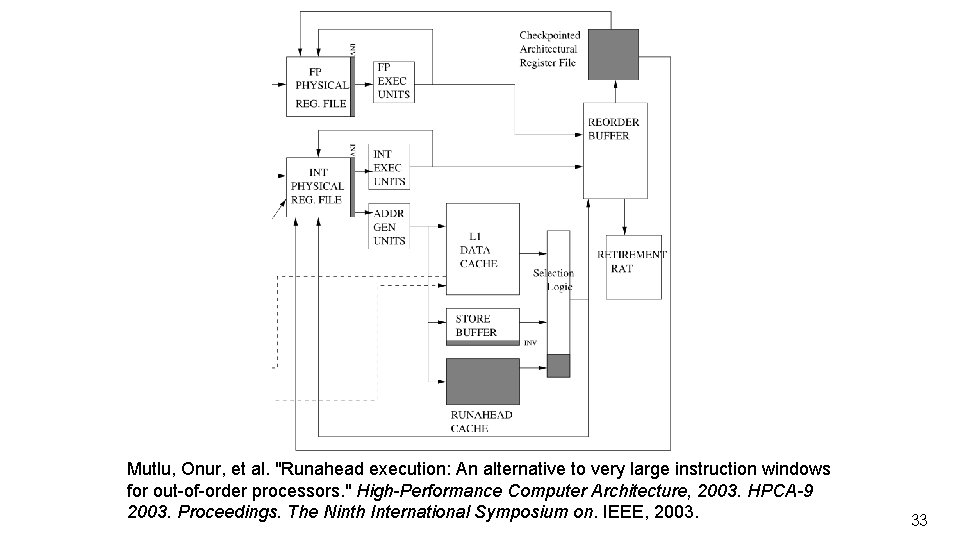

Figure source: Mutlu, Onur, et al. "Runahead execution: An alternative to very large instruction windows for out-of-order processors." High-Performance Computer Architecture, 2003. HPCA-9 2003. Proceedings. The Ninth International Symposium on. IEEE, 2003.


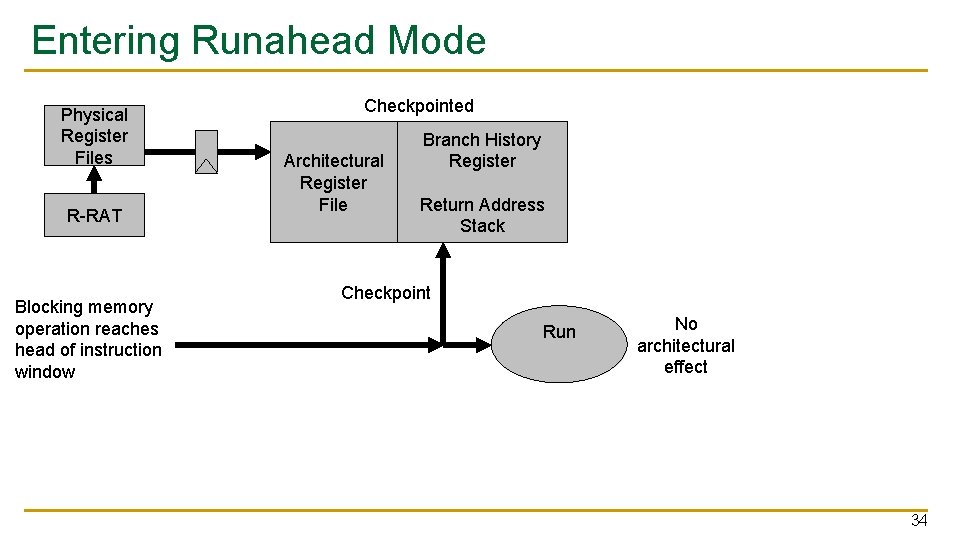

Entering Runahead Mode

- Trigger: blocking memory operation reaches head of instruction window
- Checkpoint: architectural register file, branch history register, return address stack
- Run: no architectural effect

[Figure labels: physical register files, R-RAT, checkpointed architectural register file.]

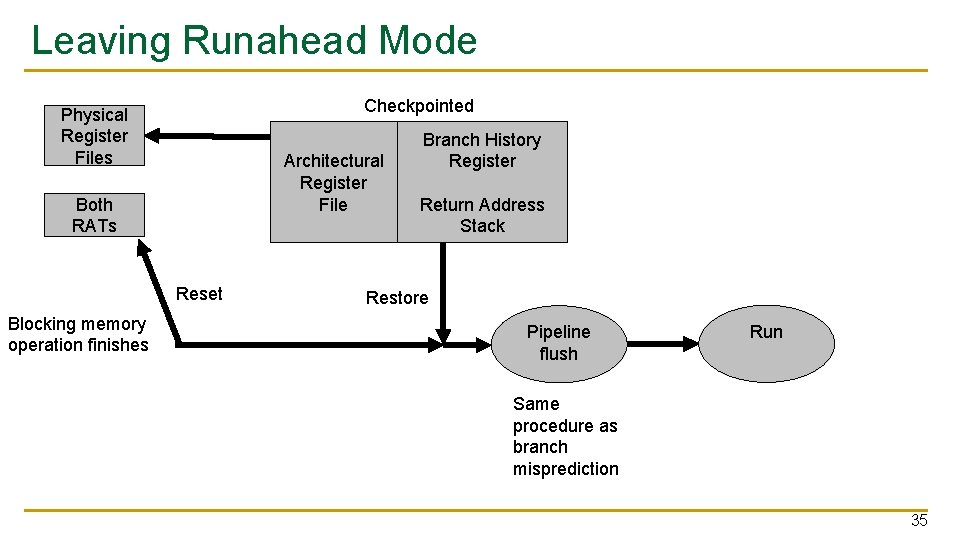

Leaving Runahead Mode

- Trigger: blocking memory operation finishes
- Pipeline flush, both RATs reset
- Restore: checkpointed architectural register file, branch history register, return address stack
- Run: same procedure as branch misprediction

[Figure labels: physical register files, checkpointed architectural register file.]
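Entry and exit can be pictured as a checkpoint followed by a restore. The sketch below is a software analogy only: the `Core` class, its fields, and the use of a deep copy are illustrative assumptions, and the pipeline flush and RAT reset are not modeled.

```python
import copy


class Core:
    """Hypothetical core state that is checkpointed around runahead mode."""

    def __init__(self):
        self.arch_regs = {"eax": 0, "ebx": 0}
        self.branch_history = 0
        self.return_stack = []
        self.runahead = False
        self._checkpoint = None

    def enter_runahead(self):
        # Called when a blocking memory operation reaches the window head.
        self._checkpoint = copy.deepcopy(
            (self.arch_regs, self.branch_history, self.return_stack)
        )
        self.runahead = True

    def exit_runahead(self):
        # Called when the blocking memory operation finishes: everything done
        # in runahead mode is discarded, as after a branch misprediction.
        self.arch_regs, self.branch_history, self.return_stack = self._checkpoint
        self._checkpoint = None
        self.runahead = False
```

Any register write, branch history update or return stack push performed between `enter_runahead()` and `exit_runahead()` disappears on exit, which is what keeps runahead mode invisible to the programmer.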


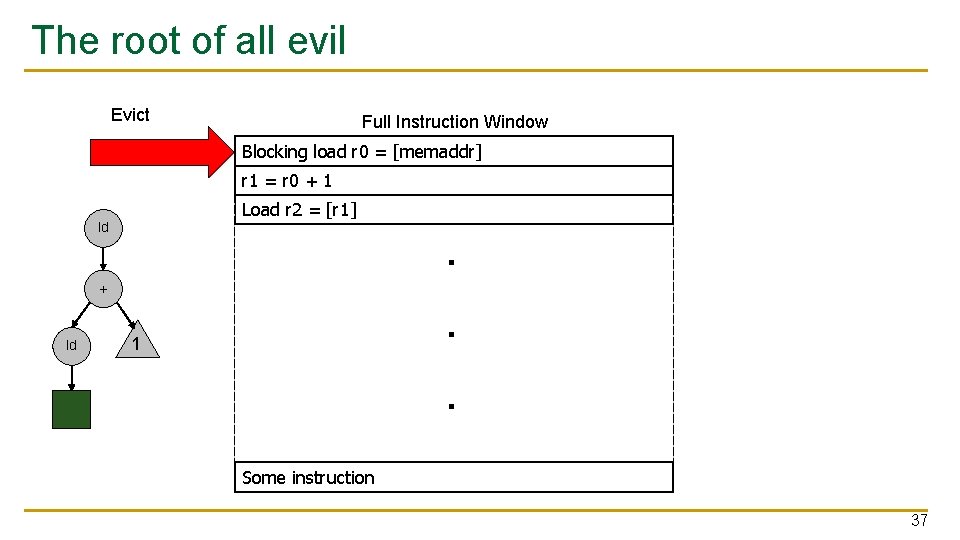

The root of all evil

[Figure: a full instruction window (ld, +1, ld, ..., some instruction); the blocking load at its head is evicted.]

- Blocking load: r0 = [memaddr]
- r1 = r0 + 1
- Load: r2 = [r1]

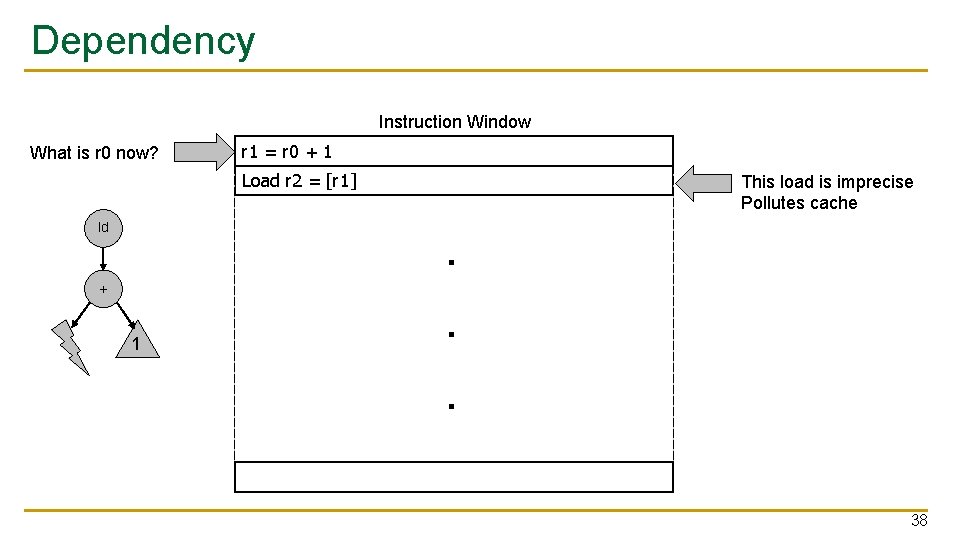

Dependency

[Figure: instruction window (+1, ld, ...) after the blocking load has left.]

- What is r0 now?
- r1 = r0 + 1
- Load: r2 = [r1]
  - This load is imprecise
  - Pollutes cache

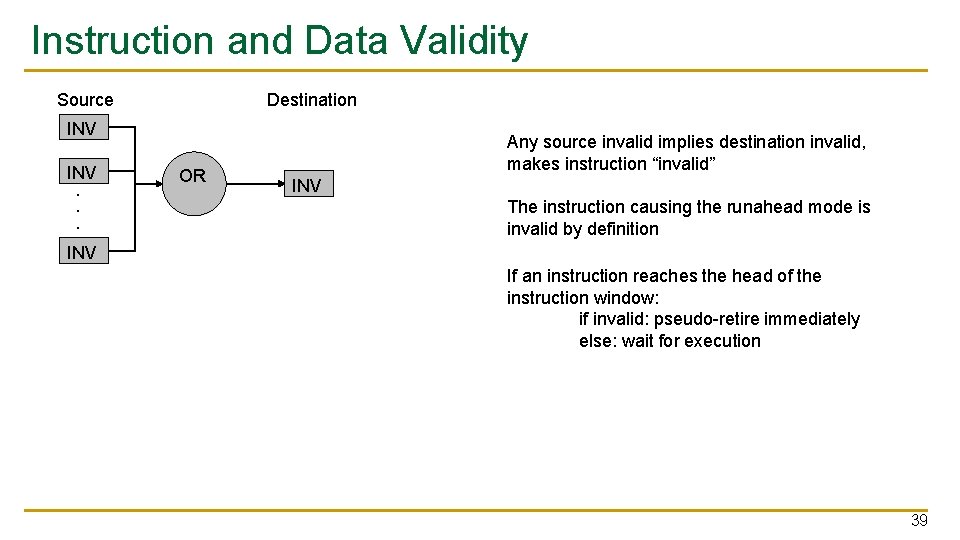

Instruction and Data Validity

[Figure: the INV bits of the sources are ORed into the INV bit of the destination.]

- Any source invalid implies destination invalid, makes instruction "invalid"
- The instruction causing the runahead mode is invalid by definition
- If an instruction reaches the head of the instruction window:
  - if invalid: pseudo-retire immediately
  - else: wait for execution
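The validity rules above can be sketched in a few lines. The sentinel `INV`, the function names and the string return values are assumptions made for illustration; in hardware this is a single bit per register combined with an OR.

```python
INV = object()  # hypothetical sentinel: value unknown during runahead mode


def execute(op, sources):
    """Any invalid source makes the destination invalid."""
    if any(s is INV for s in sources):
        return INV
    return op(*sources)


def head_action(result, executed):
    """What happens to the instruction at the head of the window."""
    if result is INV:
        return "pseudo-retire"  # invalid: leaves the window immediately
    return "pseudo-retire" if executed else "wait"
```

For the load chain `r0 = [memaddr]; r1 = r0 + 1; r2 = [r1]`, marking `r0` as `INV` makes `r1` invalid as well, so the dependent load is never issued with a bogus address.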

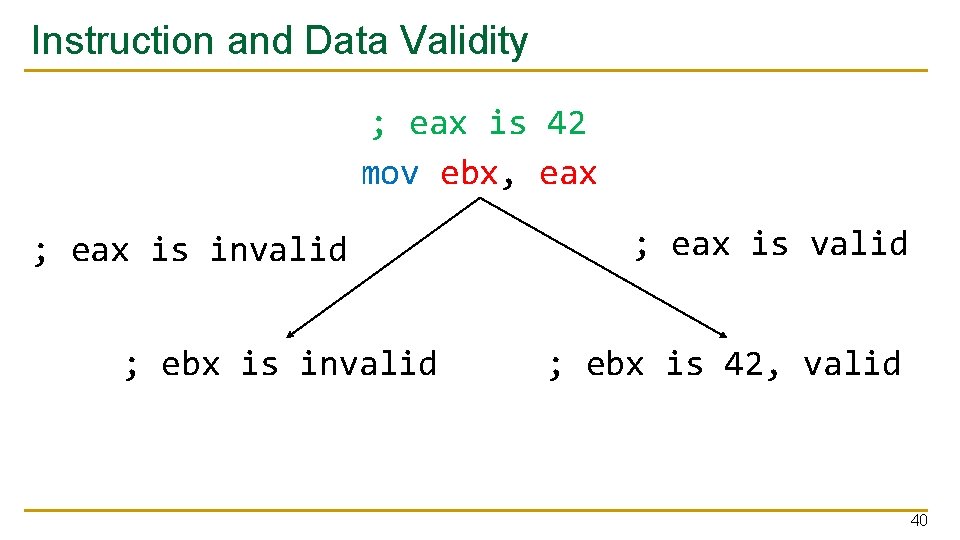

Instruction and Data Validity

- Valid source: eax is 42, valid
  - mov ebx, eax ; ebx is 42, valid
- Invalid source: eax is invalid
  - mov ebx, eax ; ebx is invalid

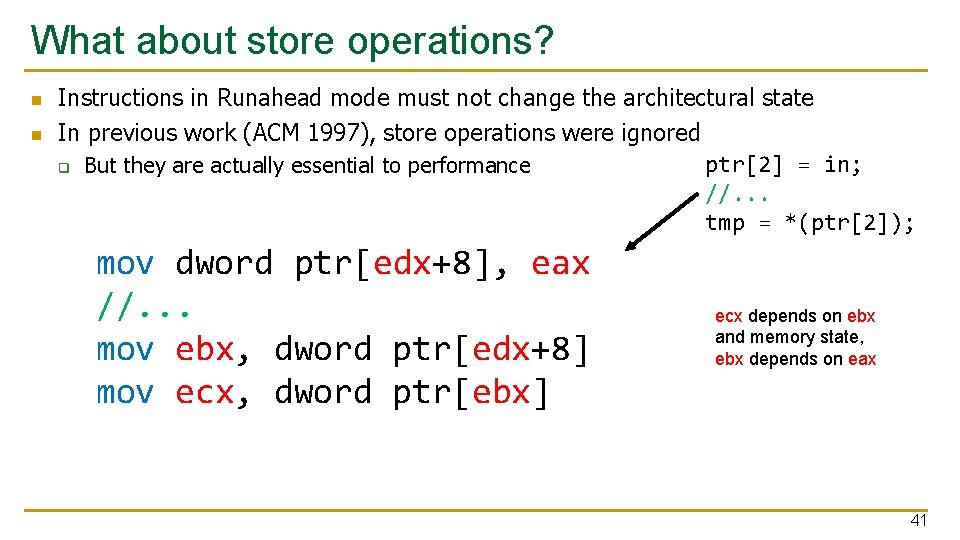

What about store operations?

- Instructions in runahead mode must not change the architectural state
- In previous work (ACM 1997), store operations were ignored
  - But they are actually essential to performance
- Example in C: ptr[2] = in; //... tmp = *(ptr[2]);
- The same example in assembly:
  - mov dword ptr[edx+8], eax
  - //...
  - mov ebx, dword ptr[edx+8]
  - mov ecx, dword ptr[ebx]
- ecx depends on ebx and memory state, ebx depends on eax

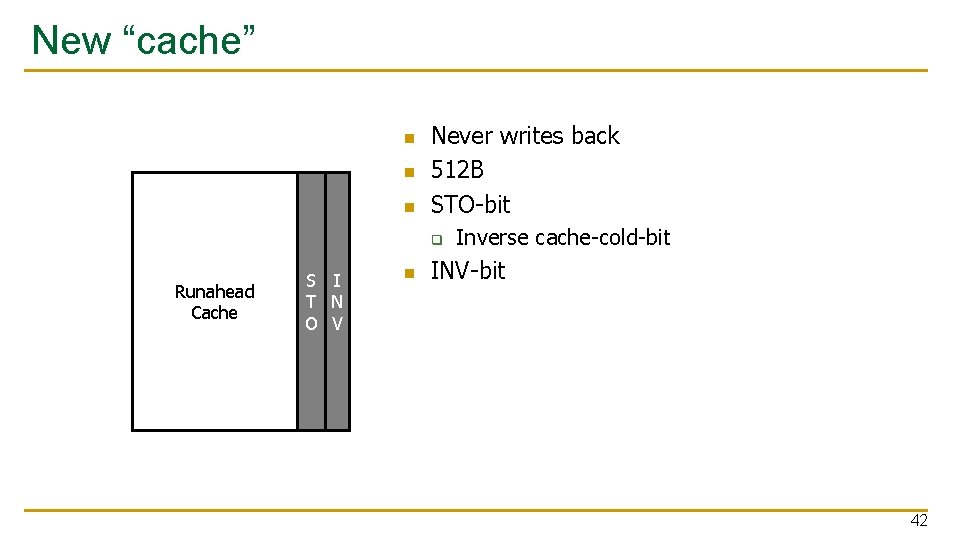

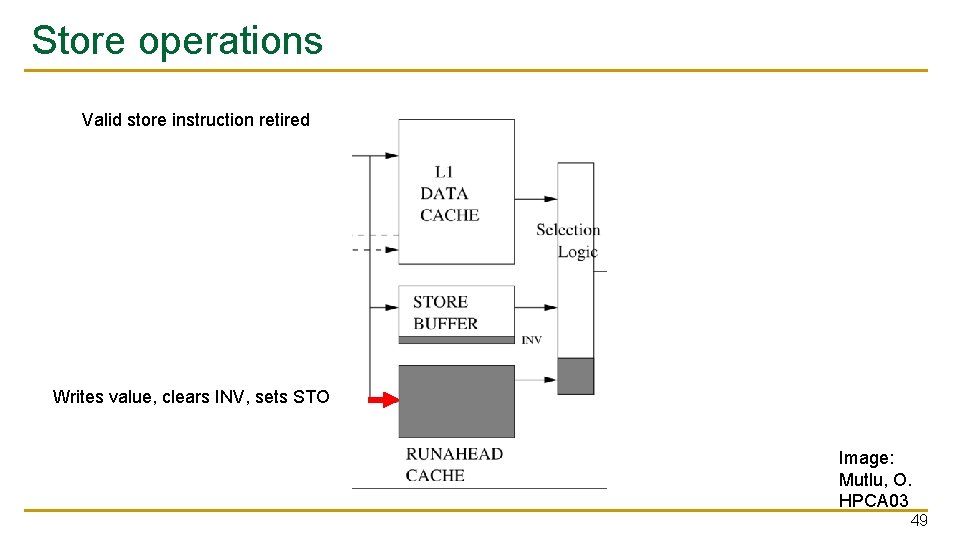

Runahead Cache: a new "cache"

- Never writes back
- 512 B
- STO-bit
  - Inverse cache-cold-bit
- INV-bit

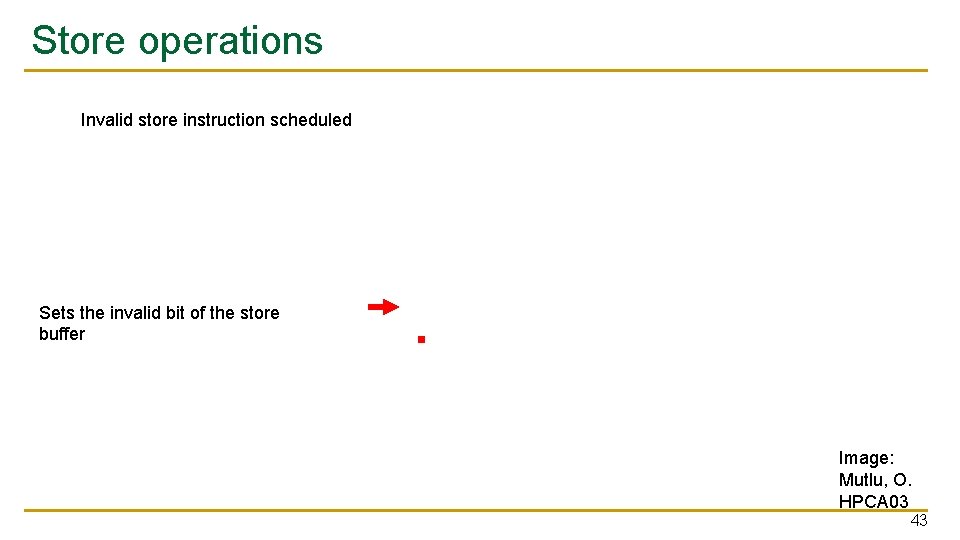

Store operations: invalid store instruction scheduled

- Sets the invalid bit of the store buffer

Image: Mutlu, O. HPCA 03

![Load operations: invalid store instruction scheduled](http://slidetodoc.com/presentation_image/2c402a0ad3cf663d8a17d588ba232a70/image-44.jpg)

Load operations: invalid store instruction scheduled

- The store sets the invalid bit of the store buffer
- mov dword ptr[esp+8], eax
- // few instructions
- mov ebx, dword ptr[esp+8] ; ebx is now INV
- mov ecx, dword ptr[ebx]

Image: Mutlu, O. HPCA 03

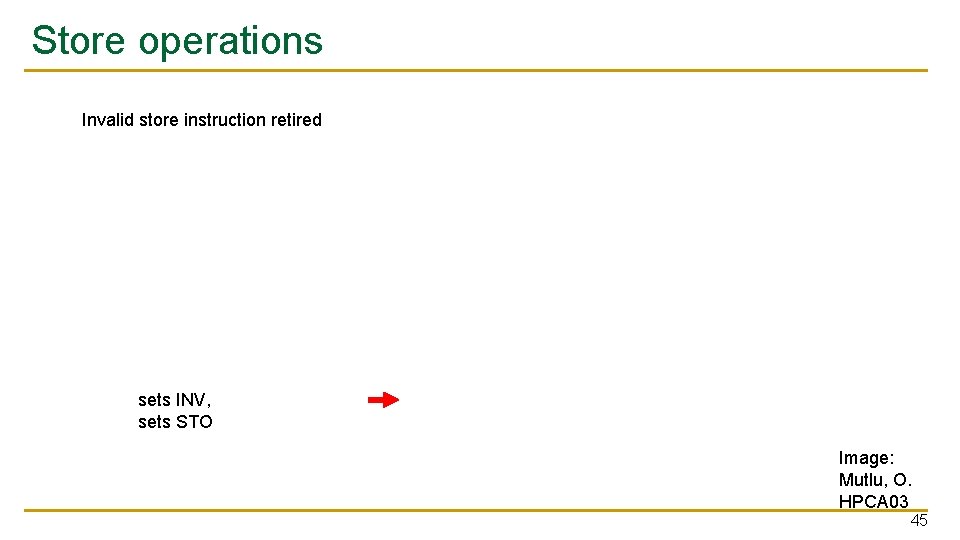

Store operations: invalid store instruction retired

- Sets INV, sets STO

Image: Mutlu, O. HPCA 03

![Load operations: invalid store instruction retired](http://slidetodoc.com/presentation_image/2c402a0ad3cf663d8a17d588ba232a70/image-46.jpg)

Load operations: invalid store instruction retired

- The store sets INV, sets STO
- mov dword ptr[esp+8], eax
- // many instructions
- mov ebx, dword ptr[esp+8] ; ebx is now INV
- mov ecx, dword ptr[ebx]

Image: Mutlu, O. HPCA 03

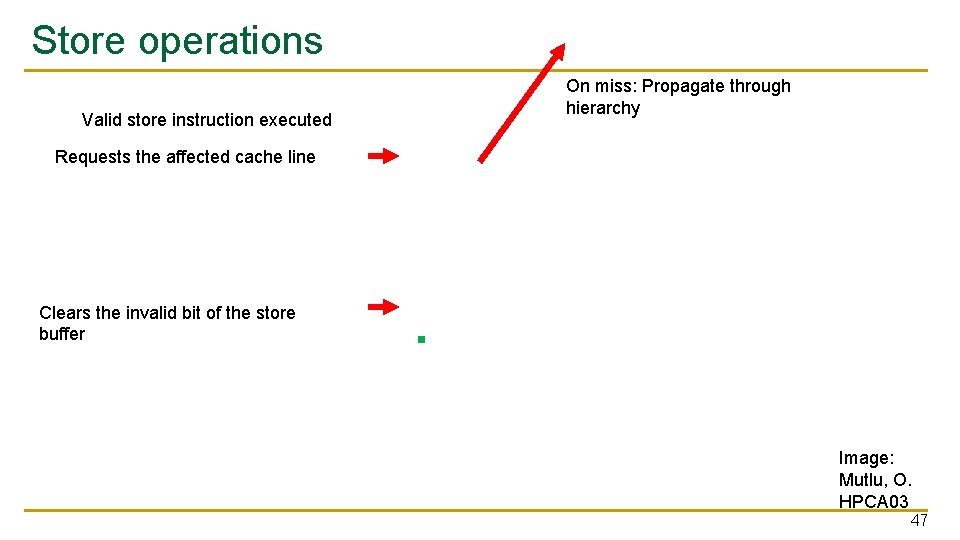

Store operations: valid store instruction executed

- Requests the affected cache line
  - On miss: propagate through hierarchy
- Clears the invalid bit of the store buffer

Image: Mutlu, O. HPCA 03

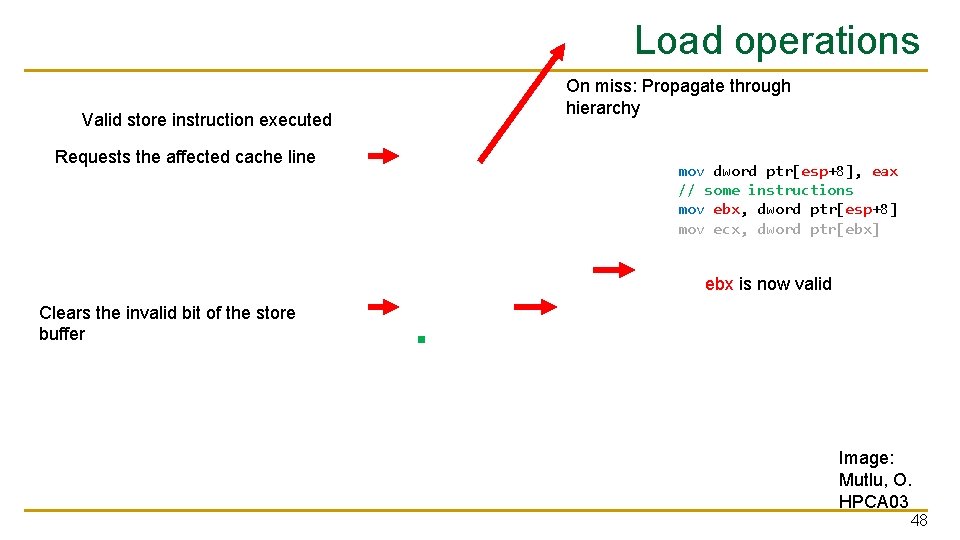

Load operations: valid store instruction executed

- The store requests the affected cache line
  - On miss: propagate through hierarchy
- The store clears the invalid bit of the store buffer
- mov dword ptr[esp+8], eax
- // some instructions
- mov ebx, dword ptr[esp+8] ; ebx is now valid
- mov ecx, dword ptr[ebx]

Image: Mutlu, O. HPCA 03

Store operations: valid store instruction retired

- Writes value, clears INV, sets STO

Image: Mutlu, O. HPCA 03

![Load operations: valid store instruction retired](http://slidetodoc.com/presentation_image/2c402a0ad3cf663d8a17d588ba232a70/image-50.jpg)

Load operations: valid store instruction retired

- The store writes value, clears INV, sets STO
- mov dword ptr[esp+8], eax
- // many instructions
- mov ebx, dword ptr[esp+8] ; ebx is now valid
- mov ecx, dword ptr[ebx]

Image: Mutlu, O. HPCA 03

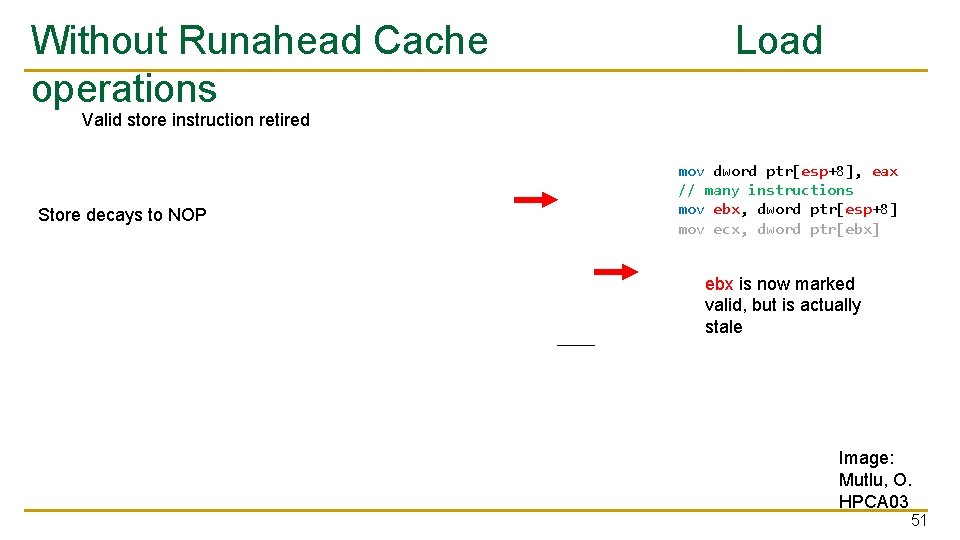

Load operations without Runahead Cache: valid store instruction retired

- Store decays to NOP
- mov dword ptr[esp+8], eax
- // many instructions
- mov ebx, dword ptr[esp+8] ; ebx is now marked valid, but is actually stale
- mov ecx, dword ptr[ebx]

Image: Mutlu, O. HPCA 03

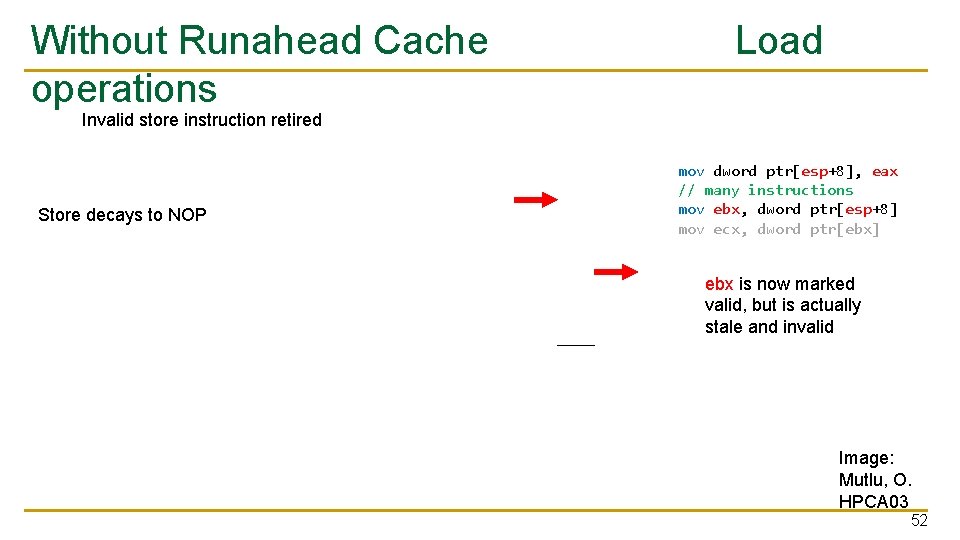

Load operations without Runahead Cache: invalid store instruction retired

- Store decays to NOP
- mov dword ptr[esp+8], eax
- // many instructions
- mov ebx, dword ptr[esp+8] ; ebx is now marked valid, but is actually stale and invalid
- mov ecx, dword ptr[ebx]

Image: Mutlu, O. HPCA 03

Load operations

- Lookup in store buffer, runahead cache and L1
- On miss: propagate through hierarchy

Image: Mutlu, O. HPCA 03
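The store and load slides can be condensed into one small model. This is a sketch under simplifying assumptions, not the hardware from the paper: the class and method names are hypothetical, dictionaries stand in for the 512 B structure, presence in `runahead_cache` plays the role of the STO bit, and every L1 miss is treated as returning INV while a prefetch is recorded.

```python
INV = object()  # hypothetical sentinel for invalid data


class RunaheadMemory:
    """Illustrative store/load path during runahead mode."""

    def __init__(self, l1):
        self.store_buffer = {}    # addr -> value or INV, stores still in the window
        self.runahead_cache = {}  # addr -> value or INV, presence models STO
        self.l1 = l1              # addr -> value, never modified in runahead mode
        self.prefetches = []      # addresses sent down the memory hierarchy

    def store_scheduled(self, addr, value):
        # An invalid store sets the invalid bit; a valid one carries its value.
        self.store_buffer[addr] = value

    def store_retired(self, addr):
        # Pseudo-retired stores move to the runahead cache and never write back.
        self.runahead_cache[addr] = self.store_buffer.pop(addr)

    def load(self, addr):
        # Youngest data first: store buffer, then runahead cache, then L1.
        for level in (self.store_buffer, self.runahead_cache, self.l1):
            if addr in level:
                return level[addr]
        self.prefetches.append(addr)  # miss: propagate through the hierarchy
        return INV
```

A load after an invalid store to the same address returns `INV` whether the store is still in the window or already retired, and a load after a valid store returns the stored value while L1 stays untouched. Without the runahead cache, the retired store would be lost and the load would read the stale L1 value.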

Key Results: Methodology and Evaluation

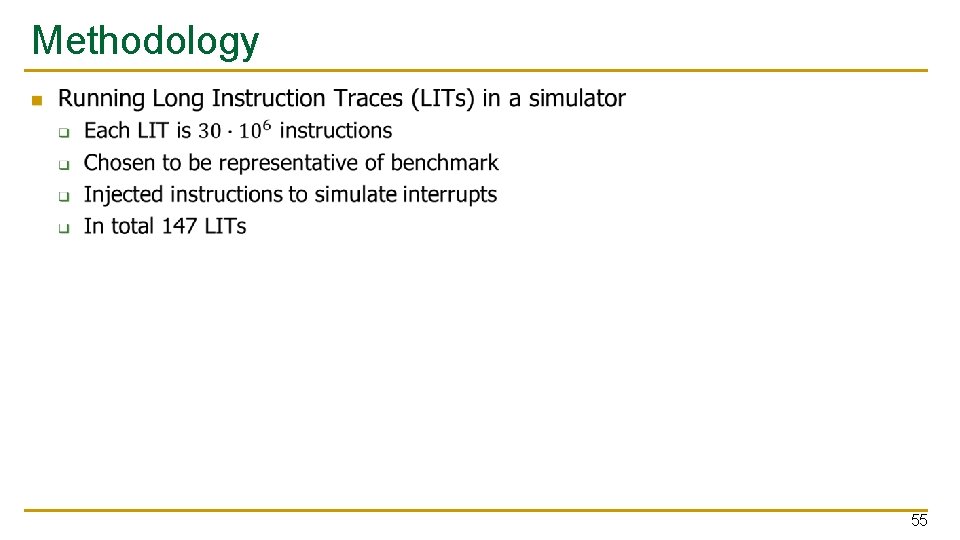

Methodology

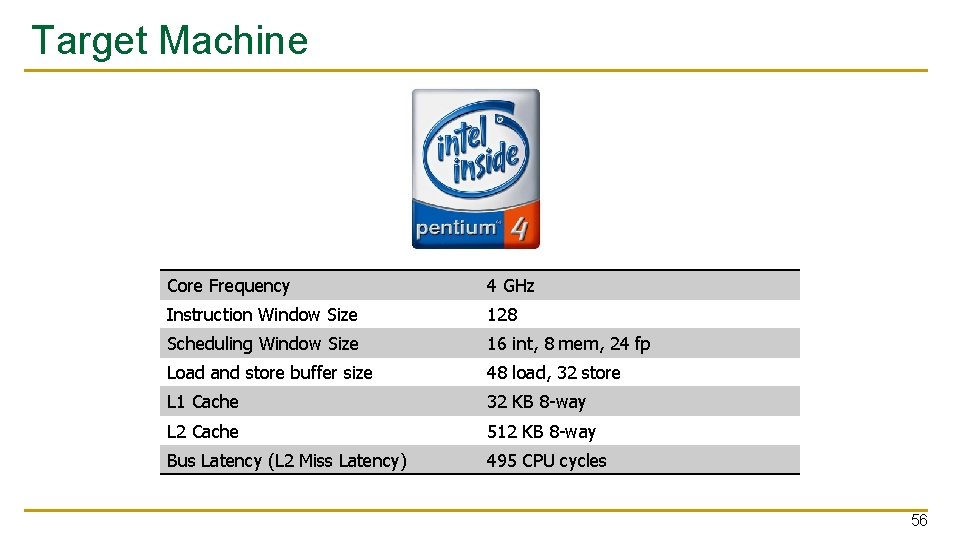

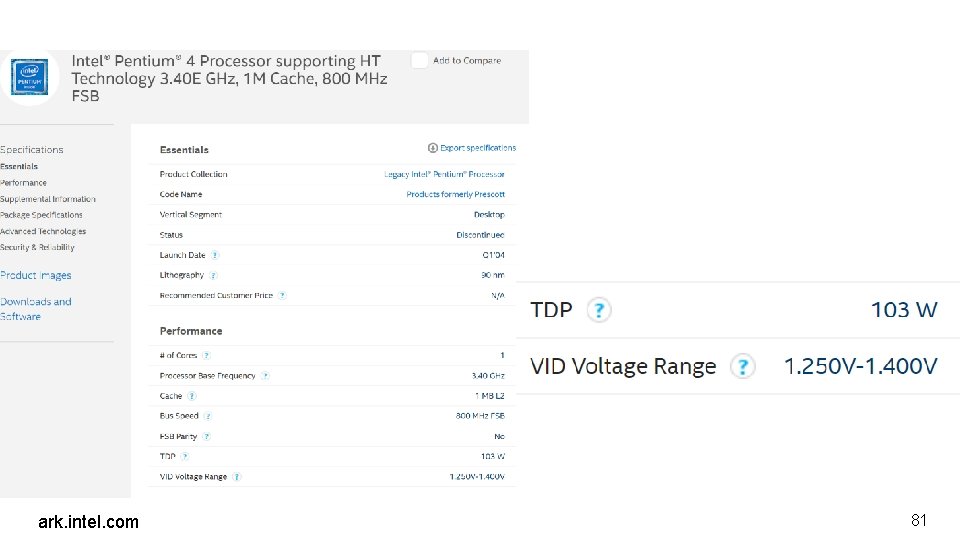

Target Machine

| Parameter | Value |
| --- | --- |
| Core frequency | 4 GHz |
| Instruction window size | 128 |
| Scheduling window size | 16 int, 8 mem, 24 fp |
| Load and store buffer size | 48 load, 32 store |
| L1 cache | 32 KB, 8-way |
| L2 cache | 512 KB, 8-way |
| Bus latency (L2 miss latency) | 495 CPU cycles |

Figure source: Mutlu, O. HPCA 03 (recolored)

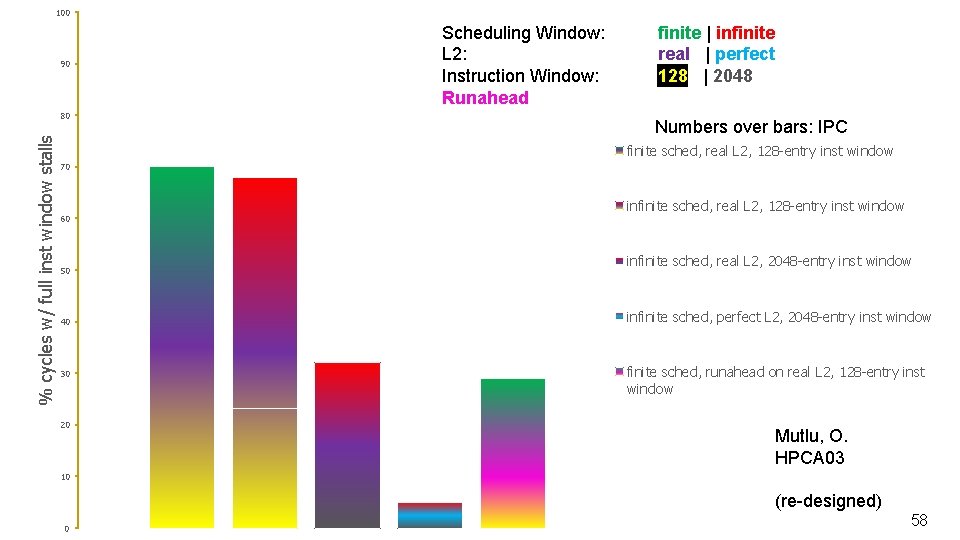

[Figure: % cycles with full instruction window stalls (y-axis, 0 to 100); numbers over bars: IPC. Source: Mutlu, O. HPCA 03 (re-designed).]

Legend dimensions: scheduling window (finite | infinite), L2 (real | perfect), instruction window (128 | 2048), runahead.

- finite sched, real L2, 128-entry inst window
- infinite sched, real L2, 128-entry inst window
- infinite sched, real L2, 2048-entry inst window
- infinite sched, perfect L2, 2048-entry inst window
- finite sched, runahead on real L2, 128-entry inst window

Figure source: Mutlu, O. HPCA 03 (recolored)

Image: Mutlu, O. Design of Digital Circuits slides

Figure source: Mutlu, Onur. Efficient runahead execution processors. Diss. 2006.

Summary

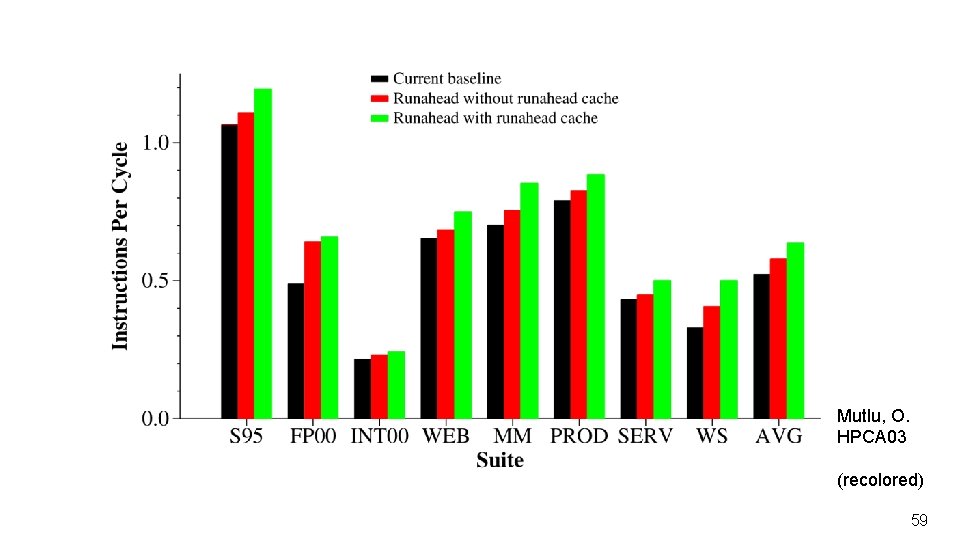

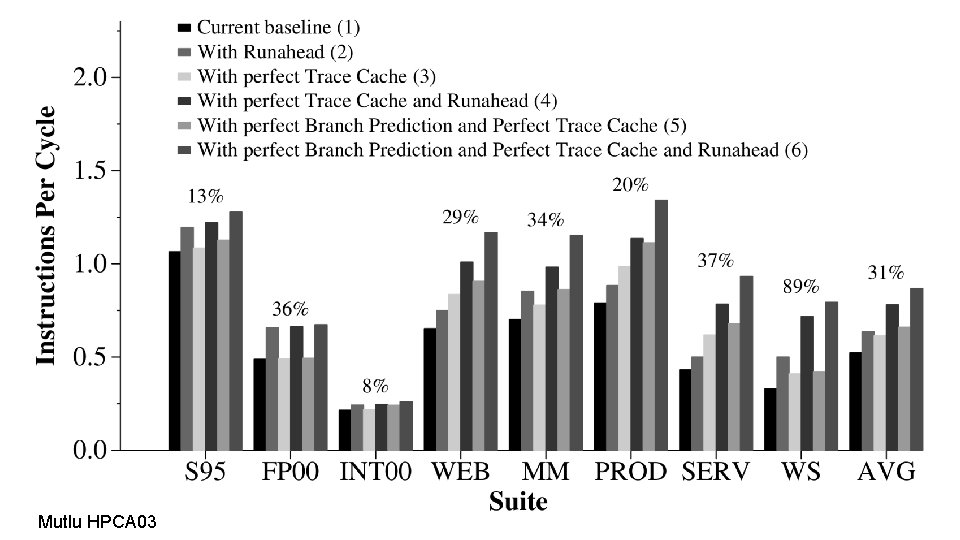

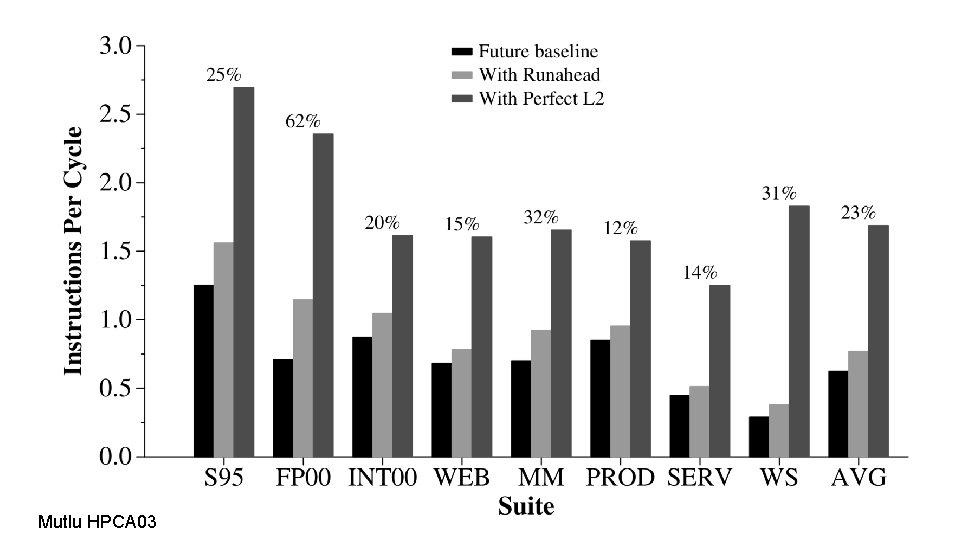

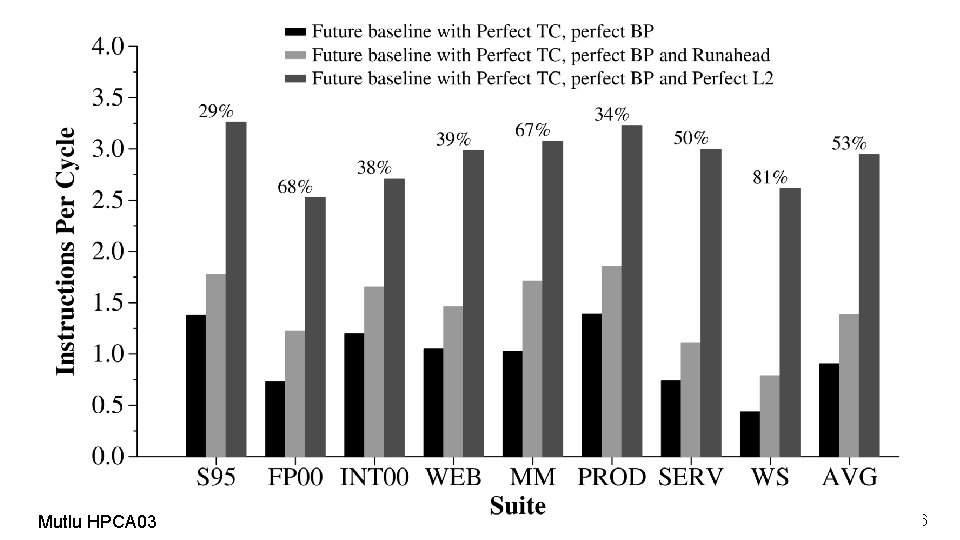

Summary

- Goal
  - Efficiently increase performance by removing the bottleneck of memory latency
- Mechanisms
  - Transform the blocking instruction window into a non-blocking instruction window
  - Add a runahead cache to delay the divergence point
- Results
  - Runahead itself gives a performance increase of 11% on the evaluated workload
  - When working with a runahead cache, this improvement is almost doubled, to 20%

Strengths

Strengths

- Small changes with big effects
- Allows for combination with other optimizations
- Successful adaptation and extension of in-order runahead
- Increases Memory Level Parallelism (MLP)
- Well-written

Weaknesses

Weaknesses

- Parts of the paper did not age well
- Missing/hidden information in the paper
  - e.g. What happens on a page fault?
- Limited by memory bandwidth
- Prefetch distance limited by memory speed
  - The faster a full window stall resolves, the fewer prefetch requests are generated

Future?

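Future?

| Parameter | Now |
| --- | --- |
| Core frequency | 5 GHz |
| Instruction window size | 224 |
| Scheduling window size | 97 unified |
| Load and store buffer size | 72 load, 56 store |
| L1 cache | 32 KB, 8-way, 64-byte line size, 5 cycles |
| L2 cache | 256 KB, 4-way, 64-byte line size, 12 cycles |
| Bus latency (L2 miss latency) | about 320 cycles (80 ns at 4 GHz) |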
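The cycle estimate follows from multiplying the latency in nanoseconds by the clock frequency in GHz, since 1 GHz corresponds to one cycle per nanosecond. A small sketch of that arithmetic; the function names are illustrative, and applying the same 80 ns to a 5 GHz clock is an extrapolation, not a figure from the slides.

```python
def ns_to_cycles(latency_ns, frequency_ghz):
    """1 GHz is one cycle per nanosecond, so cycles = ns * GHz."""
    return latency_ns * frequency_ghz


def cycles_to_ns(cycles, frequency_ghz):
    """Inverse conversion: nanoseconds = cycles / GHz."""
    return cycles / frequency_ghz
```

`ns_to_cycles(80, 4)` gives the 320 cycles quoted above, the same 80 ns would be 400 cycles at 5 GHz, and the 495-cycle L2 miss latency of the 4 GHz target machine corresponds to 123.75 ns.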

Thoughts and Ideas

Sun Rock

- https://arstechnica.com/gadgets/2008/02/sun-can-you-smell-what-the-rock-is-cookin/
- Magic Everything-CPU
  - Out-of-order retirement
  - Hardware scout
  - Hardware Transactional Memory
- Cancelled in 2010
- “This processor had two incredible virtues: It was incredibly slow and it consumed vast amounts of energy. It was so hot that they had to put about 12 inches of cooling fans on top of it to cool the processor,” said [Larry] Ellison. “It was just madness to continue that project.”

Sources:

- Chaudhry, Shailender, et al. "High-performance throughput computing." IEEE Micro 25.3 (2005): 32-45.
- https://www.reuters.com/article/us-oracle/special-report-can-that-guy-in-ironman-2-whip-ibm-in-real-life-idUSTRE64B5YX20100512, accessed 1. 18

Thoughts and ideas

- How to reuse the added structures?
  - Easier hardware debugging by having the architectural register file collected anyways
  - Adding instructions to use runahead cache as a scratch buffer?
    - As transactional memory?
  - Using the checkpointed architectural register file for context switches?
    - pushad

Takeaways

Takeaways

- It is easier to reuse resources
- Adapting existing techniques might work very well

Further reading

- Mutlu, Onur. Efficient runahead execution processors. Diss. 2006.
- Mutlu, Onur, Hyesoon Kim, and Yale N. Patt. "Efficient runahead execution: Power-efficient memory latency tolerance." IEEE Micro 26.1 (2006): 10-20.
- Mutlu, Onur, et al. "On reusing the results of pre-executed instructions in a runahead execution processor." IEEE Computer Architecture Letters 4.1 (2005): 2-2.
- Chappell, Robert S., et al. "Simultaneous subordinate microthreading (SSMT)." Computer Architecture, 1999. Proceedings of the 26th International Symposium on. IEEE, 1999.
- Hashemi, Milad, Onur Mutlu, and Yale N. Patt. "Continuous runahead: Transparent hardware acceleration for memory intensive workloads." The 49th Annual IEEE/ACM International Symposium on Microarchitecture. IEEE Press, 2016.
- Ramirez, Tanausu, et al. "Runahead threads to improve SMT performance." High Performance Computer Architecture, 2008. HPCA 2008. IEEE 14th International Symposium on. IEEE, 2008.
- Chaudhry, Shailender, et al. "High-performance throughput computing." IEEE Micro 25.3 (2005): 32-45.
- Cain, Harold W., and Priya Nagpurkar. "Runahead execution vs. conventional data prefetching in the IBM POWER6 microprocessor." Performance Analysis of Systems & Software (ISPASS), 2010 IEEE International Symposium on. IEEE, 2010.
- “Port Contention for Fun and Profit” (brand new, not published yet)

Questions


Open Discussion

- What’s a simple worst case where Runahead Execution would not give any benefits?
- Would it be beneficial to also catch and treat page faults in runahead mode?
- If you had to choose between SMT and Runahead Execution: Which one?
  - It is possible to combine them (at a small cost). Is there a reason you would not want to?
  - SMT leak: “Port Contention for Fun and Profit” (“PortSmash”), CVE-2018-5407
- Runahead Execution is implemented in in-order CPUs, but not in OoO CPUs
  - Why?
  - How does the addition of an L3 cache impact Runahead Execution?
    - What if instead of having an L3, the L2 was just bigger? What changes?

Open Discussion

- Intel Atom processors used to be in-order architectures, but did not feature runahead execution. Why?
- Other ideas for runahead execution?
  - Continuous Runahead Execution
  - Subordinate Simultaneous Multithreading
- Other ideas to overcome the memory wall?

Backup Slides

Source: ark.intel.com

[Figure: % cycles with full instruction window stalls (y-axis, 0 to 100); numbers over bars: IPC. Source: Mutlu, O. HPCA 03 (re-designed).]

Legend dimensions: scheduling window (finite | infinite), L2 (real | perfect), instruction window (128 | 2048), runahead.

- finite sched, real L2, 128-entry inst window
- infinite sched, real L2, 128-entry inst window
- finite sched, perfect L2, 128-entry inst window
- infinite sched, real L2, 2048-entry inst window
- infinite sched, perfect L2, 2048-entry inst window
- finite sched, runahead on real L2, 128-entry inst window

Figure source: Mutlu HPCA 03



