Prototype-Driven Grammar Induction, Aria Haghighi and Dan Klein

- Slides: 29

Prototype-Driven Grammar Induction. Aria Haghighi and Dan Klein, Computer Science Division, University of California, Berkeley

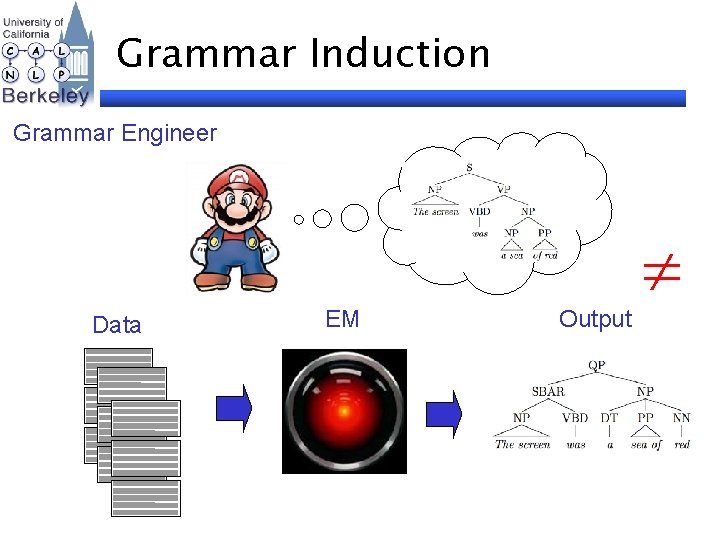

Grammar Induction. [Diagram: Grammar Engineer, Data, EM, Output]

Central Questions: How do we specify what we want to learn? ("NPs are things like DT NN.") How do we fix observed errors? ("That’s not quite it!")

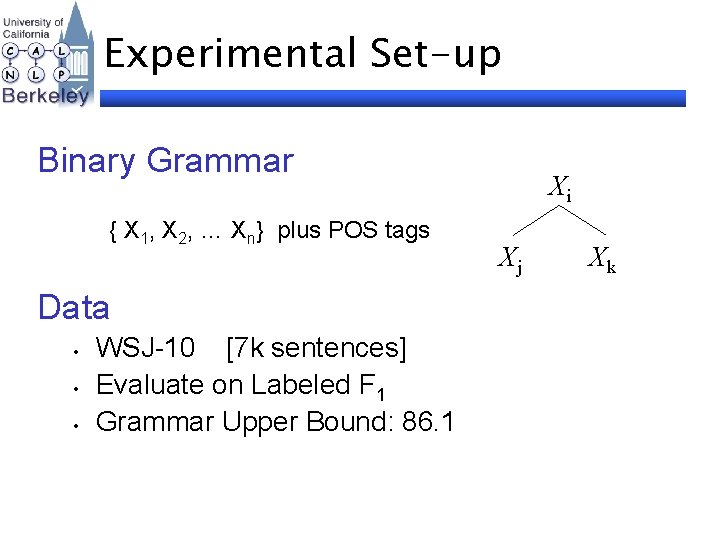

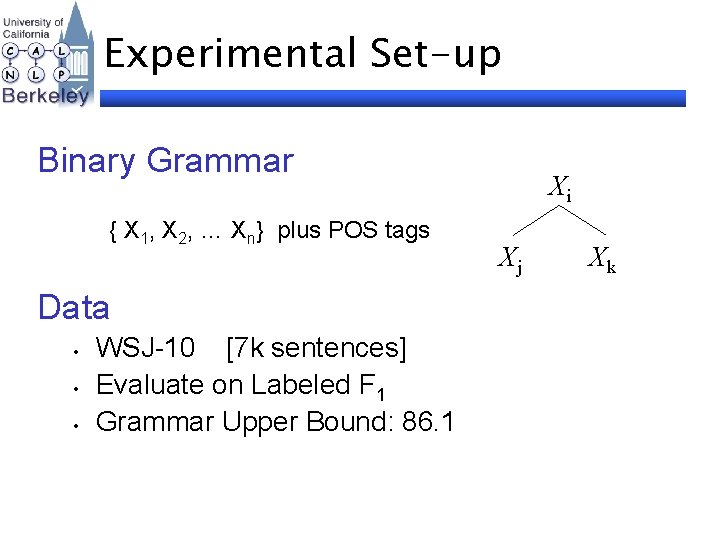

Experimental Set-up
- Binary grammar: nonterminals {X1, X2, …, Xn} plus POS tags, with rules of the form Xi → Xj Xk
- Data: WSJ-10 (7k sentences)
- Evaluation: labeled F1
- Grammar upper bound: 86.1
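Labeled F1 compares the labeled spans of a predicted tree with those of the gold tree. The following is a minimal sketch, assuming each tree has already been reduced to a set of (label, start, end) spans. The function name `labeled_f1` and the example spans are hypothetical, and any span-filtering conventions of the actual evaluation are not modeled.

```python
def labeled_f1(predicted, gold):
    """Labeled F1 between two collections of (label, start, end) spans.

    Spans are compared as sets, so a duplicated span is counted once.
    """
    pred, ref = set(predicted), set(gold)
    matched = len(pred & ref)
    if matched == 0:
        return 0.0
    precision = matched / len(pred)
    recall = matched / len(ref)
    return 2 * precision * recall / (precision + recall)


# Hypothetical spans over "The hungry koala sat in the tree" (positions 0-7).
gold = {("NP", 0, 3), ("VP", 3, 7), ("PP", 4, 7), ("NP", 5, 7)}
predicted = {("NP", 0, 3), ("VP", 3, 7), ("PP", 4, 7), ("NP", 4, 7)}
print(labeled_f1(predicted, gold))  # 0.75
```

A span only counts if both its boundaries and its label match. Here the mislabeled NP(4, 7) costs one point of precision and one of recall.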

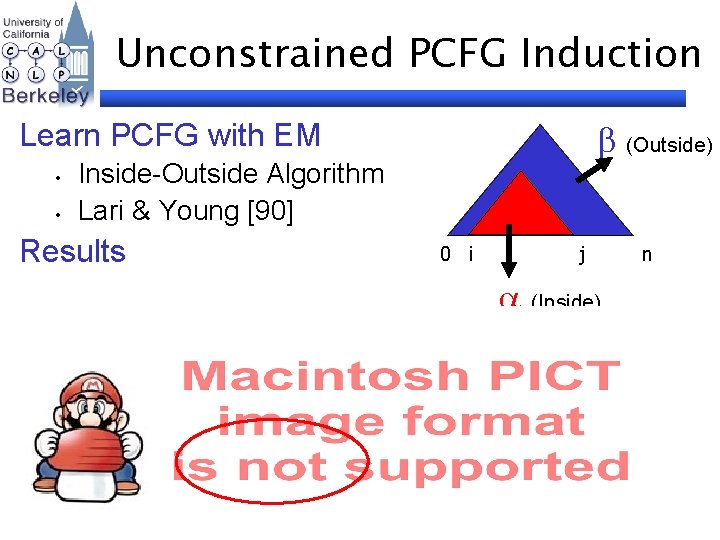

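Unconstrained PCFG Induction
- Learn a PCFG with EM
- Inside-Outside algorithm (Lari & Young '90): inside probabilities cover a span (i, j) of a sentence spanning positions 0 to n; outside probabilities cover everything around that span
- Results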
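The inside pass fills a chart bottom-up. For each span (i, j) and symbol X, it sums P(X → Y Z) · inside(i, k, Y) · inside(k, j, Z) over all rules and split points k. The following is a minimal sketch, assuming a binary grammar whose leaves are the POS tags themselves, as in the set-up above, and a hypothetical dict-of-rules encoding. The outside pass and the EM re-estimation step are omitted.

```python
from collections import defaultdict


def inside(tags, rules):
    """Inside pass for a binary PCFG whose leaves are POS tags.

    tags:  list of POS tags, e.g. ["DT", "NN", "VBD"]
    rules: dict mapping (parent, left, right) -> P(left right | parent)
    Returns chart with chart[(i, j)][X] = P(X derives tags[i:j]).
    """
    n = len(tags)
    chart = defaultdict(lambda: defaultdict(float))
    # Width-1 spans: the observed tag itself, with probability 1.
    for i, tag in enumerate(tags):
        chart[(i, i + 1)][tag] = 1.0
    # Wider spans: sum over every rule and every split point k.
    for width in range(2, n + 1):
        for i in range(n - width + 1):
            j = i + width
            for k in range(i + 1, j):
                left, right = chart[(i, k)], chart[(k, j)]
                for (parent, lsym, rsym), p in rules.items():
                    if lsym in left and rsym in right:
                        chart[(i, j)][parent] += p * left[lsym] * right[rsym]
    return chart


# Toy grammar with hypothetical probabilities.
rules = {
    ("S", "NP", "VBD"): 1.0,
    ("NP", "DT", "NN"): 0.5,
    ("NP", "NN", "VBD"): 0.5,
}
chart = inside(["DT", "NN", "VBD"], rules)
print(chart[(0, 3)]["S"])  # 0.5
```

In EM, these inside scores would be combined with outside scores to compute expected rule counts. That step is not shown here.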

Encoding Knowledge: What’s an NP? Semi-supervised learning

Encoding Knowledge: What’s an NP? For instance, DT NN, JJ NNS, NNP. Prototype learning

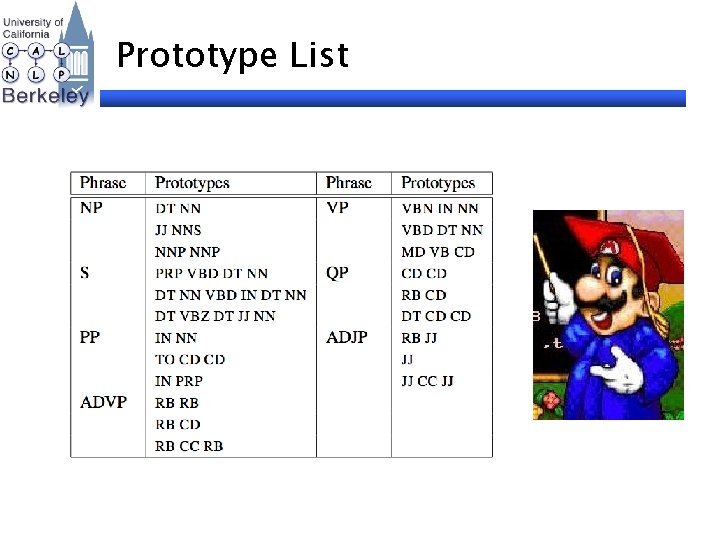

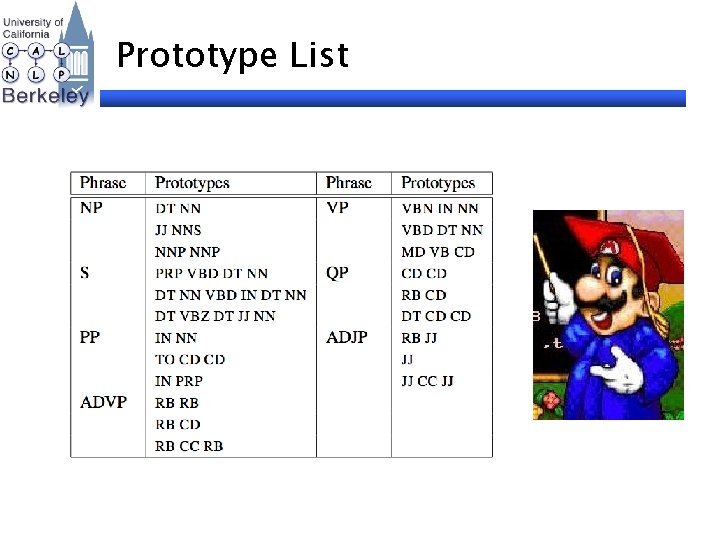

Prototype List

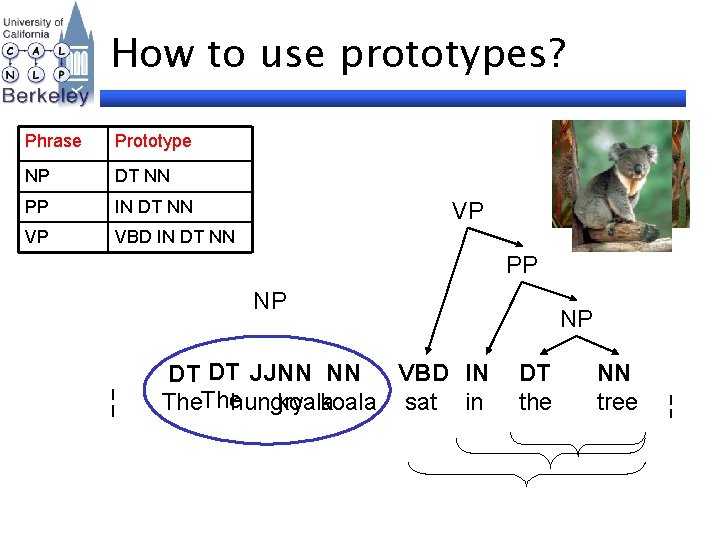

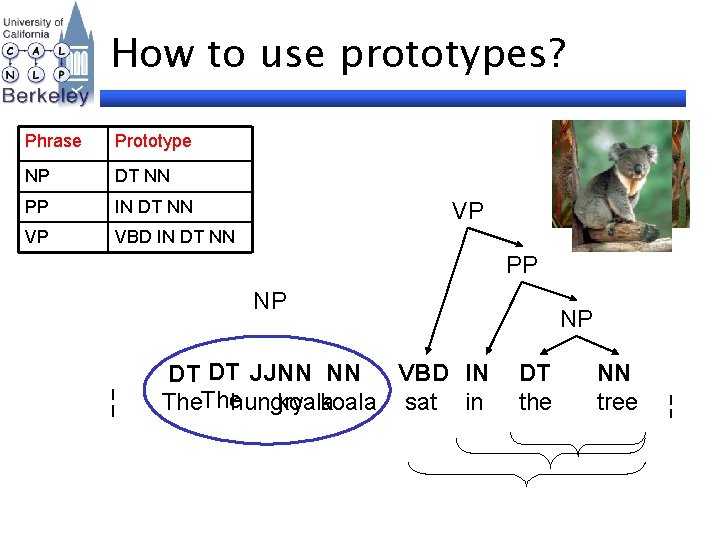

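How to use prototypes?

| Phrase | Prototype |
|---|---|
| NP | DT NN |
| PP | IN DT NN |
| VP | VBD IN DT NN |

Example sentence, with ¦ marking the sentence boundary: ¦ The/DT hungry/JJ koala/NN sat/VBD in/IN the/DT tree/NN ¦. Spans that match a prototype include the NP "the tree", the PP "in the tree", and the VP "sat in the tree".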
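Exact matching against the prototype list is the simplest way to use it: a span is linked to a phrase type only if its tag sequence is literally one of the prototypes. The following is a minimal sketch, assuming the prototype table is stored as a dict keyed on tag tuples. `PROTOTYPES` and `exact_prototype_spans` are hypothetical names.

```python
# Prototype list from the slide, keyed by tag sequence.
PROTOTYPES = {
    ("DT", "NN"): "NP",
    ("IN", "DT", "NN"): "PP",
    ("VBD", "IN", "DT", "NN"): "VP",
}


def exact_prototype_spans(tags, prototypes):
    """Return {(i, j): label} for every span whose tag yield is a prototype."""
    matches = {}
    for i in range(len(tags)):
        for j in range(i + 1, len(tags) + 1):
            label = prototypes.get(tuple(tags[i:j]))
            if label is not None:
                matches[(i, j)] = label
    return matches


# The hungry koala sat in the tree
tags = ["DT", "JJ", "NN", "VBD", "IN", "DT", "NN"]
print(exact_prototype_spans(tags, PROTOTYPES))
# {(3, 7): 'VP', (4, 7): 'PP', (5, 7): 'NP'}
```

The subject "The hungry koala" (DT JJ NN) matches no prototype exactly. Distributional similarity is what lets such phrases be linked to NP.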

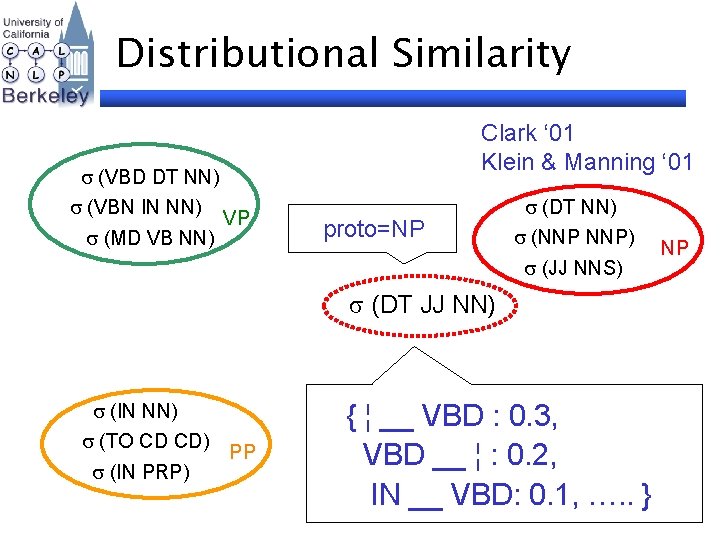

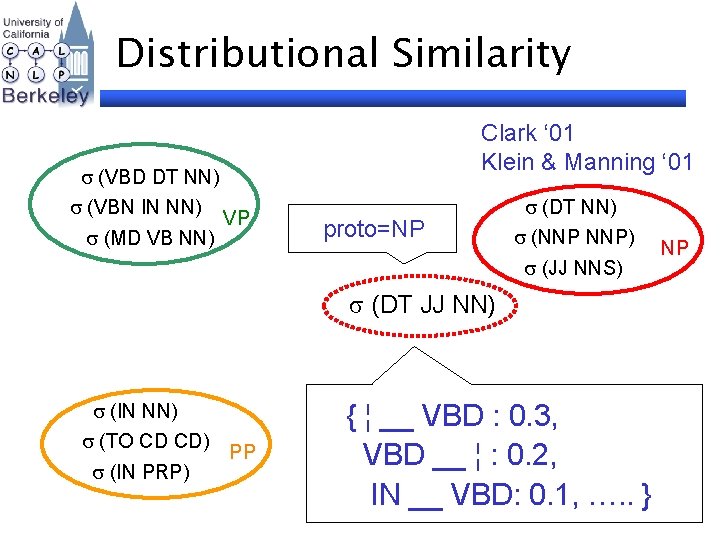

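Distributional Similarity (Clark '01; Klein & Manning '01)
- VP: (VBD DT NN), (VBN IN NN), (MD VB NN)
- NP: (DT NN), (NNP NNP), (JJ NNS)
- PP: (IN NN), (TO CD CD), (IN PRP)

A non-prototype sequence such as (DT JJ NN) is represented by its distribution over contexts, e.g., { ¦ __ VBD : 0.3, VBD __ ¦ : 0.2, IN __ VBD : 0.1, … }. It is most similar to the NP prototypes, so it gets the feature proto=NP.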
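A context distribution like the one above can be estimated by counting, for each tag sequence, the tag immediately to its left and the tag immediately to its right. The following is a minimal sketch, assuming single-tag contexts and a hypothetical `max_len` cutoff on span length. The toy corpus is invented, and the exact features and corpus used in this work are not reproduced.

```python
from collections import Counter, defaultdict

BOUNDARY = "¦"  # sentence-boundary marker, as on the slides


def context_distributions(sentences, max_len=4):
    """Map each tag sequence (span yield) to a normalized distribution
    over its (left tag, right tag) contexts."""
    counts = defaultdict(Counter)
    for tags in sentences:
        padded = [BOUNDARY] + list(tags) + [BOUNDARY]
        n = len(tags)
        for i in range(n):
            for j in range(i + 1, min(n, i + max_len) + 1):
                # tags[i:j] sits between padded[i] (left) and padded[j + 1] (right).
                counts[tuple(tags[i:j])][(padded[i], padded[j + 1])] += 1
    dists = {}
    for seq, ctxs in counts.items():
        total = sum(ctxs.values())
        dists[seq] = {ctx: c / total for ctx, c in ctxs.items()}
    return dists


sentences = [
    ["DT", "NN", "VBD"],
    ["DT", "JJ", "NN", "VBD", "IN", "DT", "NN"],
]
dists = context_distributions(sentences)
print(dists[("DT", "NN")])        # {('¦', 'VBD'): 0.5, ('IN', '¦'): 0.5}
print(dists[("DT", "JJ", "NN")])  # {('¦', 'VBD'): 1.0}
```

Even in this tiny corpus, (DT JJ NN) shares the context ¦ __ VBD with the prototype (DT NN). That overlap is the signal the similarity step exploits.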

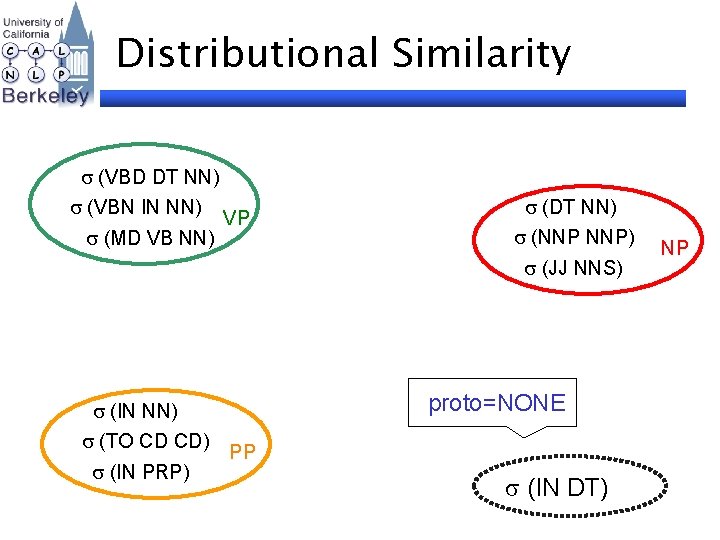

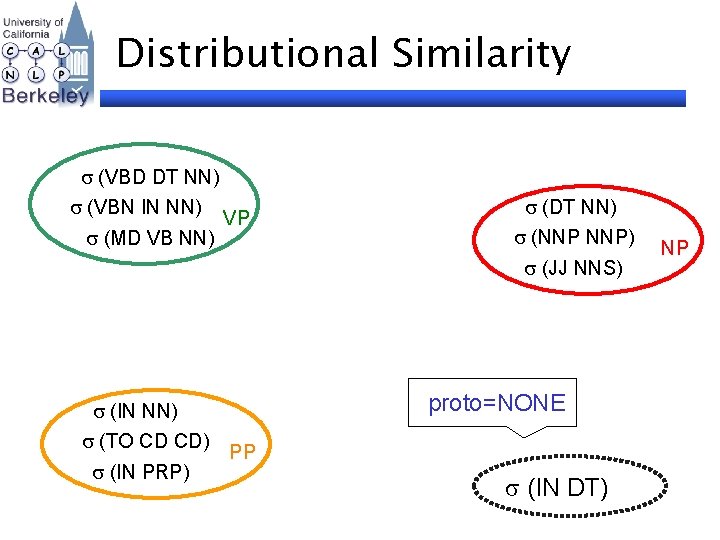

Distributional Similarity (continued): compared against the same VP, NP, and PP prototypes, the sequence (IN DT) is not similar enough to any of them, so it gets the feature proto=NONE.
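One way to make the proto=NONE decision is nearest-prototype assignment with a similarity cutoff. The following sketch makes several illustrative assumptions rather than reproducing this work's settings: cosine similarity over raw context distributions, an arbitrary threshold of 0.5, hypothetical function names, and made-up distributions.

```python
import math


def cosine(p, q):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(v * q.get(k, 0.0) for k, v in p.items())
    norm_p = math.sqrt(sum(v * v for v in p.values()))
    norm_q = math.sqrt(sum(v * v for v in q.values()))
    if norm_p == 0.0 or norm_q == 0.0:
        return 0.0
    return dot / (norm_p * norm_q)


def assign_prototype(dist, prototypes, threshold=0.5):
    """Label of the most similar prototype, or "NONE" if even the best
    match falls below the (hypothetical) threshold.

    prototypes: list of (label, context_distribution) pairs
    """
    best_label, best_sim = "NONE", -1.0
    for label, proto_dist in prototypes:
        sim = cosine(dist, proto_dist)
        if sim > best_sim:
            best_label, best_sim = label, sim
    return best_label if best_sim >= threshold else "NONE"


# Made-up context distributions for illustration.
prototypes = [
    ("NP", {("¦", "VBD"): 0.5, ("IN", "¦"): 0.5}),  # e.g. (DT NN)
    ("PP", {("NN", "¦"): 0.6, ("VBD", "¦"): 0.4}),  # e.g. (IN NN)
]
print(assign_prototype({("¦", "VBD"): 1.0}, prototypes))                      # NP
print(assign_prototype({("VBD", "JJ"): 0.7, ("NN", "NN"): 0.3}, prototypes))  # NONE
```

The second query plays the role of (IN DT). Its contexts overlap with no prototype, so it falls below the cutoff and is labeled NONE.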

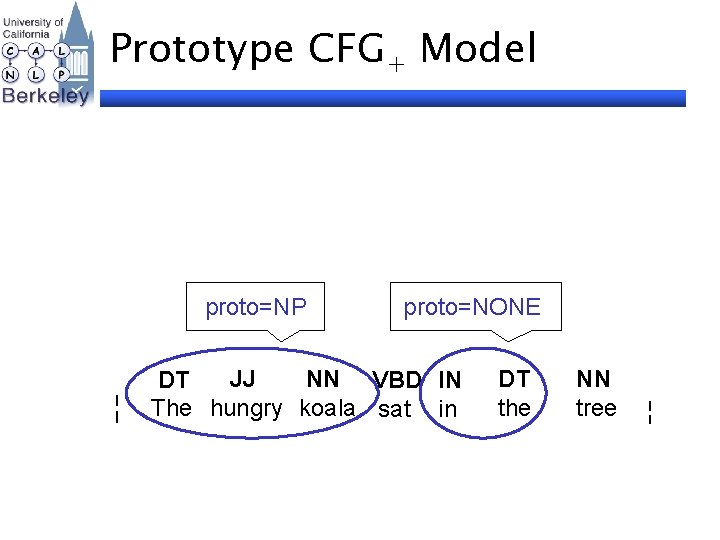

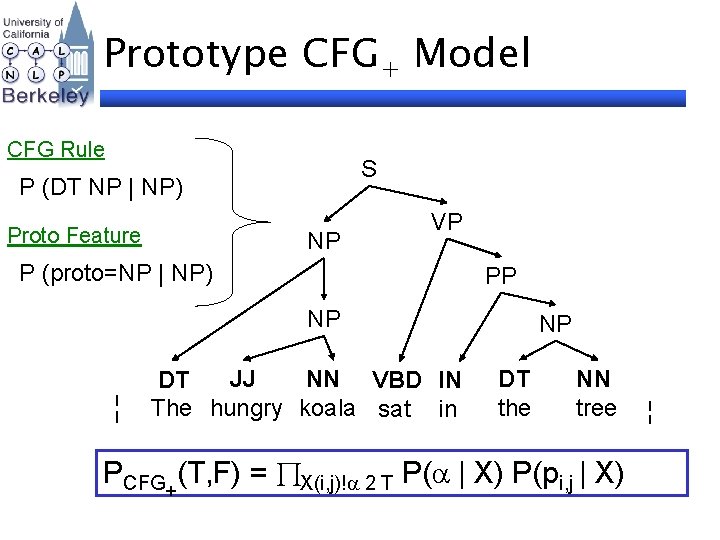

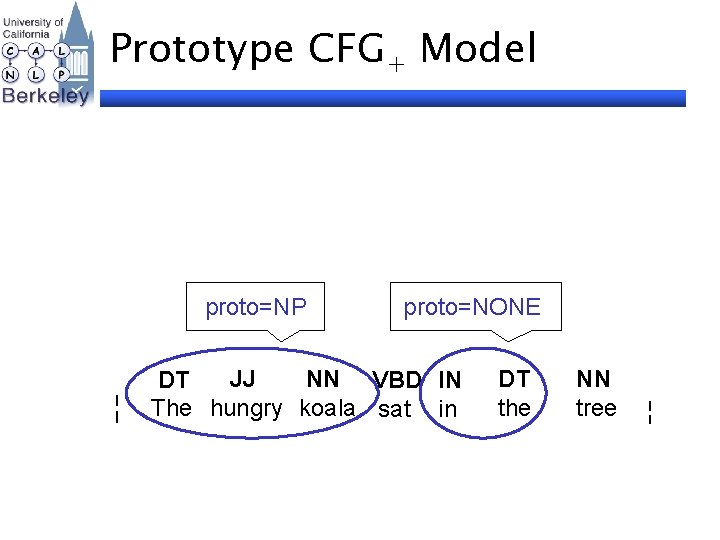

Prototype CFG+ Model: spans of the sentence ¦ The/DT hungry/JJ koala/NN sat/VBD in/IN the/DT tree/NN ¦ carry prototype features such as proto=NP or proto=NONE.

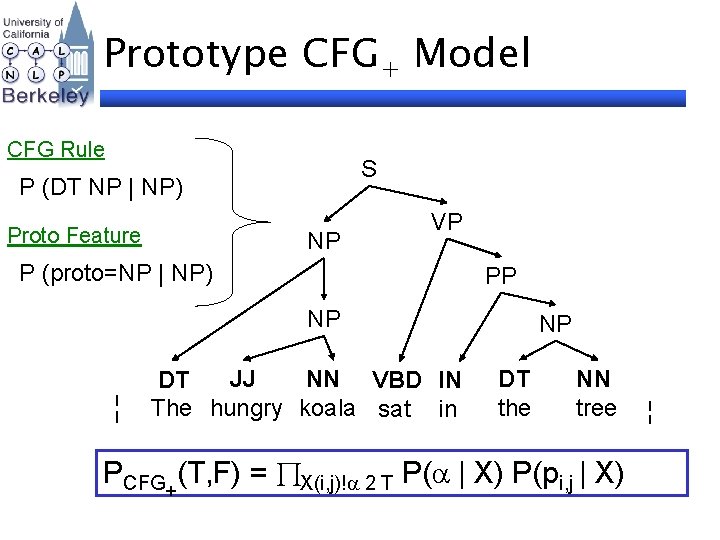

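Prototype CFG+ Model: each node X(i, j) → α of the tree generates its CFG rule, e.g., P(DT NP | NP), and the prototype feature of its span, e.g., P(proto=NP | NP). On the example sentence ¦ The hungry koala sat in the tree ¦, the tree has S, NP, VP, and PP nodes, and

$$P_{CFG+}(T, F) = \prod_{X(i,j) \to \alpha \,\in\, T} P(\alpha \mid X)\, P(p_{i,j} \mid X)$$

where p_{i,j} is the prototype feature of span (i, j).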
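The product formula for P_CFG+ translates directly into a loop over tree nodes. The following is a minimal sketch, assuming a hypothetical encoding of the tree as (X, i, j, α) tuples and of the parameters as plain dicts. The numbers are toy values, not learned parameters.

```python
def pcfg_plus_score(nodes, rule_prob, proto_prob, proto_of_span):
    """P_CFG+(T, F): product over nodes X(i, j) -> alpha of
    P(alpha | X) * P(p_ij | X).

    nodes:         list of (X, i, j, alpha), alpha a tuple of child symbols
    rule_prob:     dict (X, alpha) -> P(alpha | X)
    proto_prob:    dict (X, proto) -> P(proto | X)
    proto_of_span: dict (i, j) -> prototype feature p_ij, e.g. "NP" or "NONE"
    """
    score = 1.0
    for X, i, j, alpha in nodes:
        score *= rule_prob[(X, alpha)] * proto_prob[(X, proto_of_span[(i, j)])]
    return score


# "The hungry koala" as NP(0,3) -> DT NP and NP(1,3) -> JJ NN; toy numbers.
nodes = [("NP", 0, 3, ("DT", "NP")), ("NP", 1, 3, ("JJ", "NN"))]
rule_prob = {("NP", ("DT", "NP")): 0.2, ("NP", ("JJ", "NN")): 0.3}
proto_prob = {("NP", "NP"): 0.6, ("NP", "NONE"): 0.4}
proto_of_span = {(0, 3): "NP", (1, 3): "NP"}
print(pcfg_plus_score(nodes, rule_prob, proto_prob, proto_of_span))
```

Because each node generates its span's feature, a tree that labels a proto=NP span as NP is rewarded through P(proto=NP | NP).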

Prototype CFG+ Induction
- Experimental set-up: add prototypes; BLIPP corpus
- Results: unlabeled F1 66.9

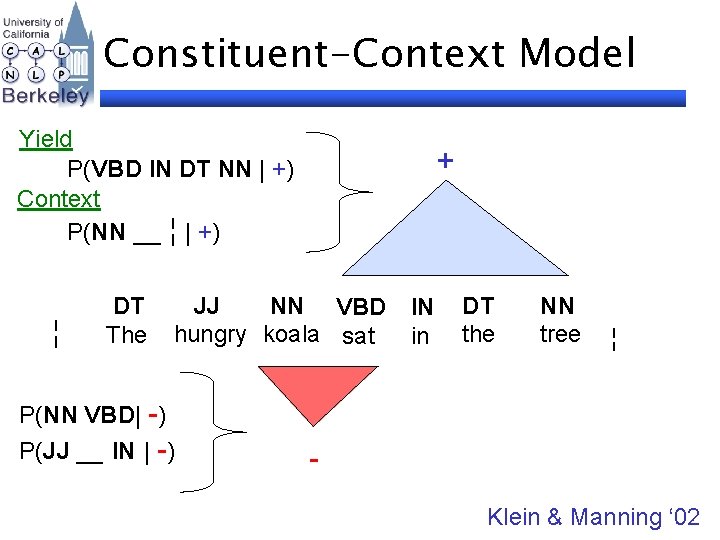

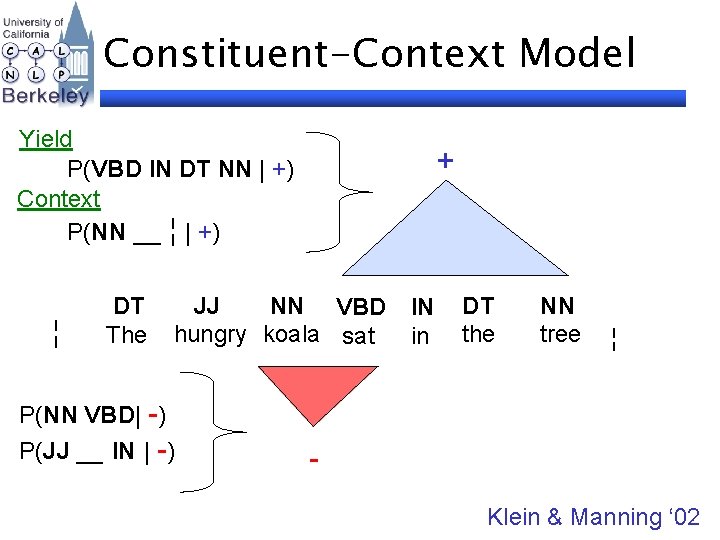

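Constituent-Context Model (Klein & Manning '02): in ¦ The/DT hungry/JJ koala/NN sat/VBD in/IN the/DT tree/NN ¦, every span is either a constituent (+) or a distituent (-) and generates its yield and its context. For the constituent "sat in the tree", the yield term is P(VBD IN DT NN | +) and the context term is P(NN __ ¦ | +). For the distituent "koala sat", the yield term is P(NN VBD | -) and the context term is P(JJ __ IN | -).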

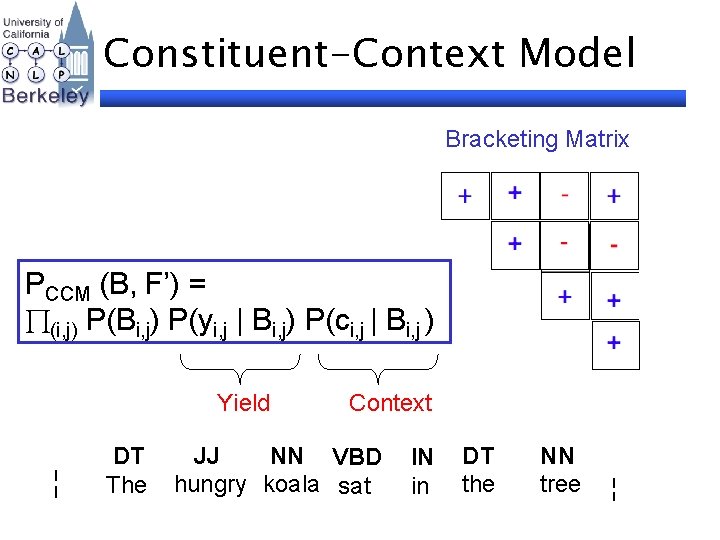

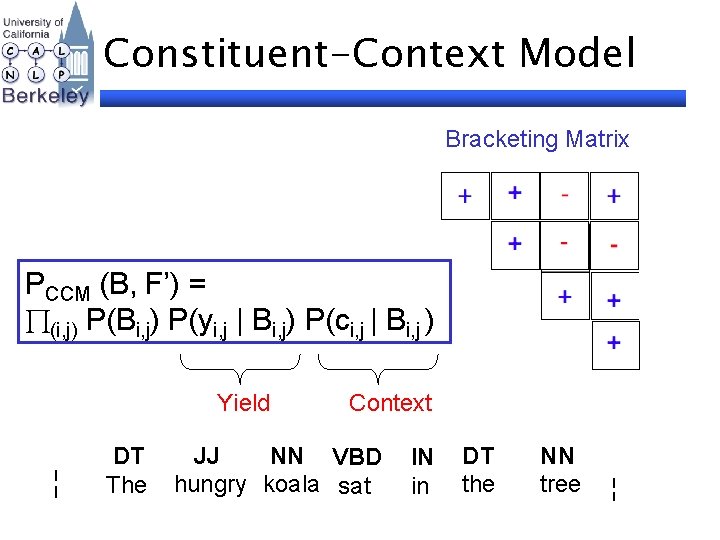

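Constituent-Context Model: a bracketing matrix B marks each span (i, j) as constituent or distituent, and

$$P_{CCM}(B, F') = \prod_{(i,j)} P(B_{i,j})\, P(y_{i,j} \mid B_{i,j})\, P(c_{i,j} \mid B_{i,j})$$

where y_{i,j} is the yield and c_{i,j} the context of span (i, j), as in ¦ The hungry koala sat in the tree ¦.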
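The CCM product runs over every span of the sentence, whether or not it is bracketed. The following is a minimal sketch that follows the formula above, assuming P(B_ij) is a fixed two-entry table and the yield and context models are passed in as functions. All names and toy probabilities are hypothetical, and no check is made that B forms a tree.

```python
BOUNDARY = "¦"


def ccm_score(tags, brackets, p_bracket, p_yield, p_context):
    """P_CCM(B, F') as a product over all spans (i, j) of
    P(B_ij) * P(y_ij | B_ij) * P(c_ij | B_ij).

    brackets:  set of (i, j) spans marked as constituents ("+");
               every other span is a distituent ("-")
    p_bracket: dict "+"/"-" -> P(B_ij)
    p_yield:   function (yield_tuple, b) -> P(y | b)
    p_context: function ((left, right), b) -> P(c | b)
    """
    padded = [BOUNDARY] + list(tags) + [BOUNDARY]
    score = 1.0
    for i in range(len(tags)):
        for j in range(i + 1, len(tags) + 1):
            b = "+" if (i, j) in brackets else "-"
            y = tuple(tags[i:j])
            c = (padded[i], padded[j + 1])
            score *= p_bracket[b] * p_yield(y, b) * p_context(c, b)
    return score


def p_yield(y, b):
    """Toy P(y | b): the yield DT NN is more likely as a constituent."""
    if y == ("DT", "NN"):
        return 0.4 if b == "+" else 0.1
    return 0.2


def p_context(c, b):
    """Toy P(c | b): uniform."""
    return 0.1


p_bracket = {"+": 0.5, "-": 0.5}
with_np = ccm_score(["DT", "NN"], {(0, 1), (1, 2), (0, 2)}, p_bracket, p_yield, p_context)
without_np = ccm_score(["DT", "NN"], {(0, 1), (1, 2)}, p_bracket, p_yield, p_context)
print(with_np > without_np)  # True
```

Unbracketed spans still contribute distituent factors. Because of this, the model can penalize a bracketing that leaves a constituent-like yield such as DT NN unbracketed.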

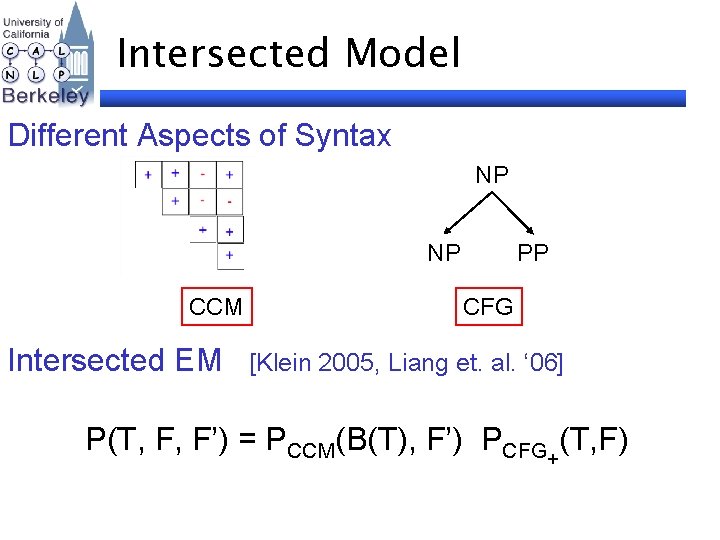

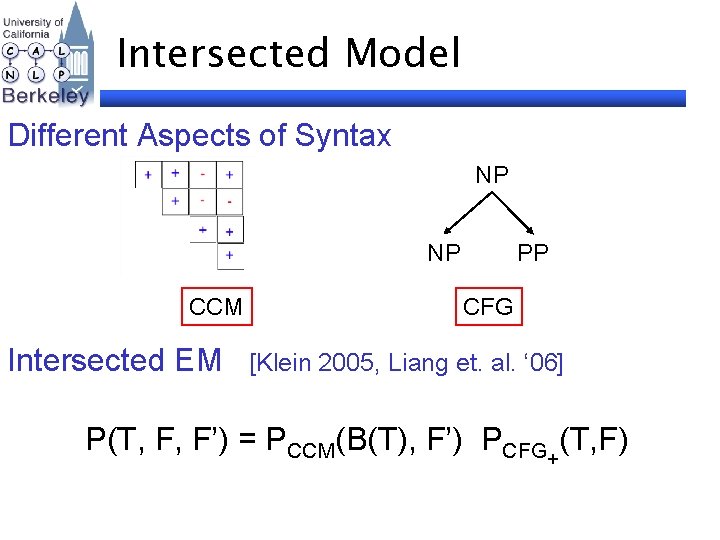

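Intersected Model: the CCM and the CFG capture different aspects of syntax, and the intersected model combining them is trained with EM [Klein 2005, Liang et al. '06]:

$$P(T, F, F') = P_{CCM}(B(T), F')\, P_{CFG+}(T, F)$$

where B(T) is the bracketing induced by the tree T.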
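The two models interact only through B(T): the CCM sees the tree's unlabeled spans, and the CFG+ sees the full tree. The following is a minimal sketch of scoring one tree under the intersected model, assuming the component scorers are supplied as functions. The names `brackets_of` and `intersected_score` and the stand-in scorers are hypothetical, and EM over the intersected model is not shown.

```python
def brackets_of(nodes):
    """B(T): the unlabeled spans of a tree, given its (label, i, j) nodes."""
    return {(i, j) for _label, i, j in nodes}


def intersected_score(nodes, ccm_score, cfg_plus_score):
    """P(T, F, F') = P_CCM(B(T), F') * P_CFG+(T, F).

    ccm_score:      function from a bracket set to P_CCM(B, F')
    cfg_plus_score: function from a list of tree nodes to P_CFG+(T, F)
    """
    nodes = list(nodes)
    return ccm_score(brackets_of(nodes)) * cfg_plus_score(nodes)


# Hypothetical stand-ins for the two component models.
tree = [("S", 0, 3), ("NP", 0, 2)]
score = intersected_score(
    tree,
    ccm_score=lambda brackets: 0.5 if (0, 2) in brackets else 0.1,
    cfg_plus_score=lambda nodes: 0.2,
)
print(score)  # 0.1
```

A tree scores well only if both models like it. Because of this, a CFG analysis whose brackets the CCM considers implausible is pulled down.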

Grammar Induction Experiments
- Intersected CFG+ and CCM
- Add CCM brackets
- Results

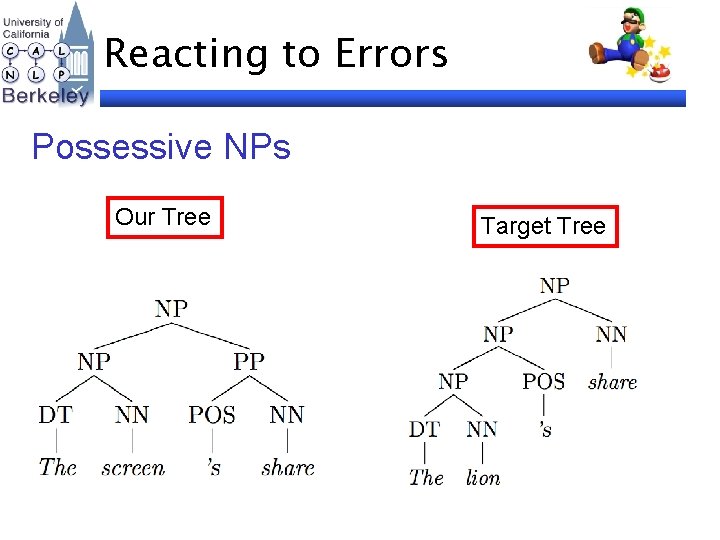

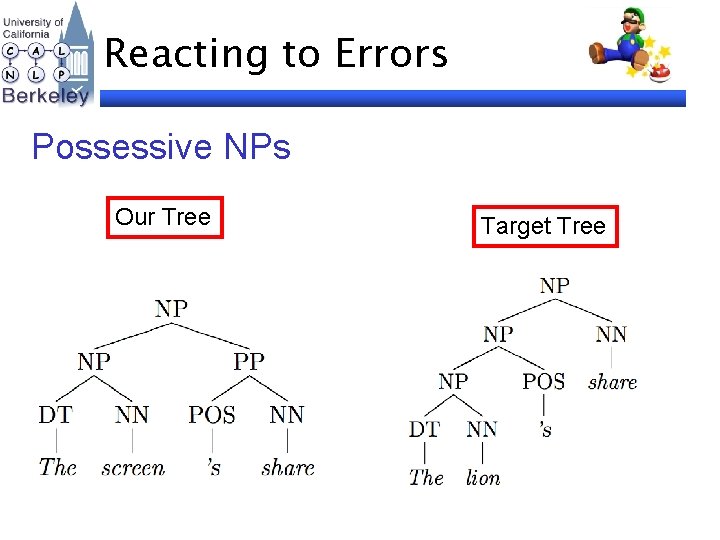

Reacting to Errors: Possessive NPs (our tree vs. target tree)

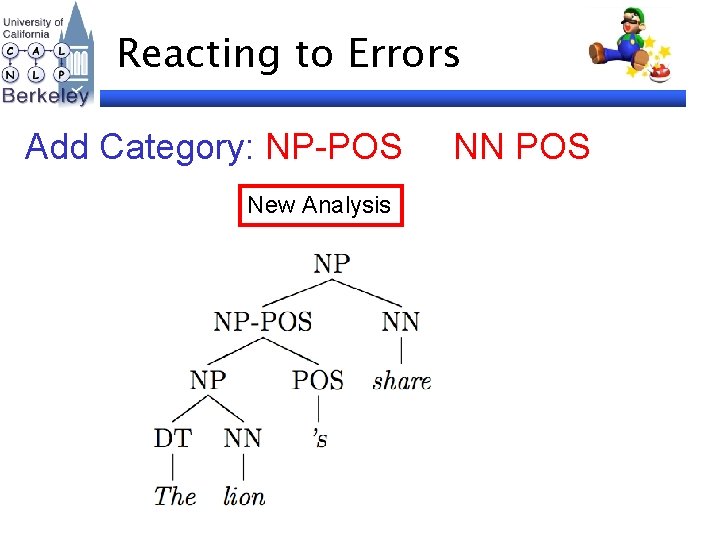

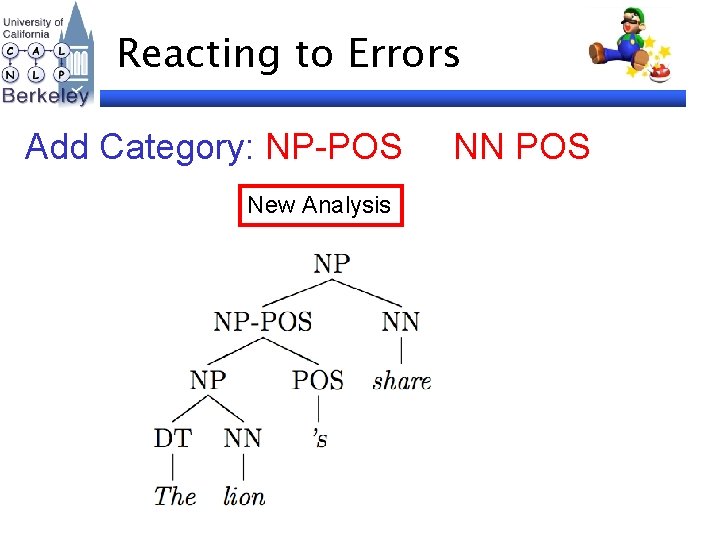

Reacting to Errors: Add category NP-POS (prototype: NN POS), which yields a new analysis.

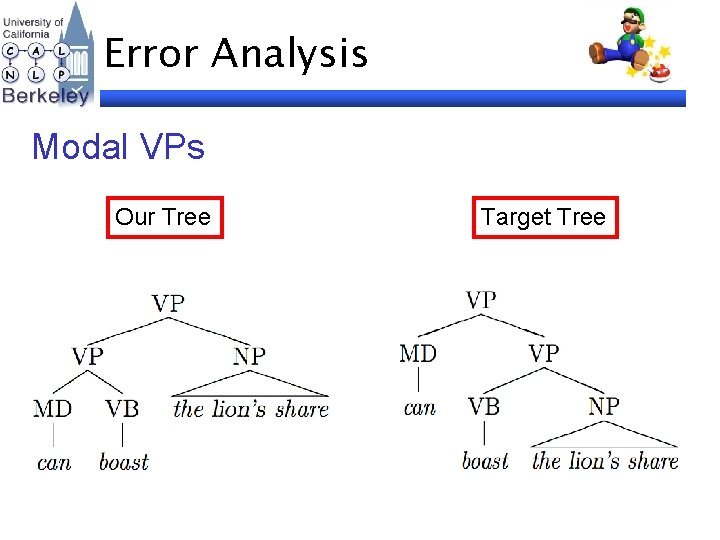

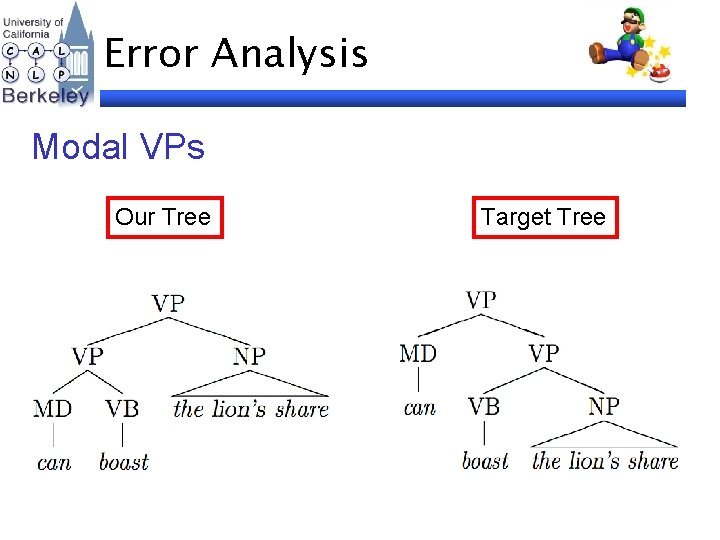

Error Analysis: Modal VPs (our tree vs. target tree)

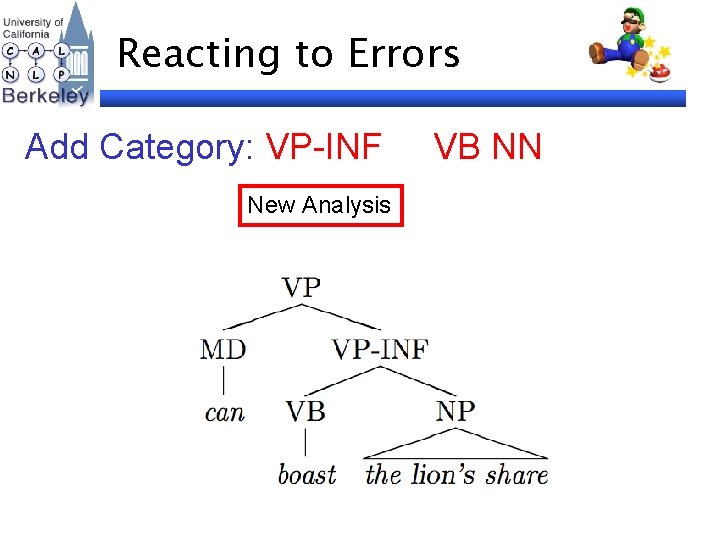

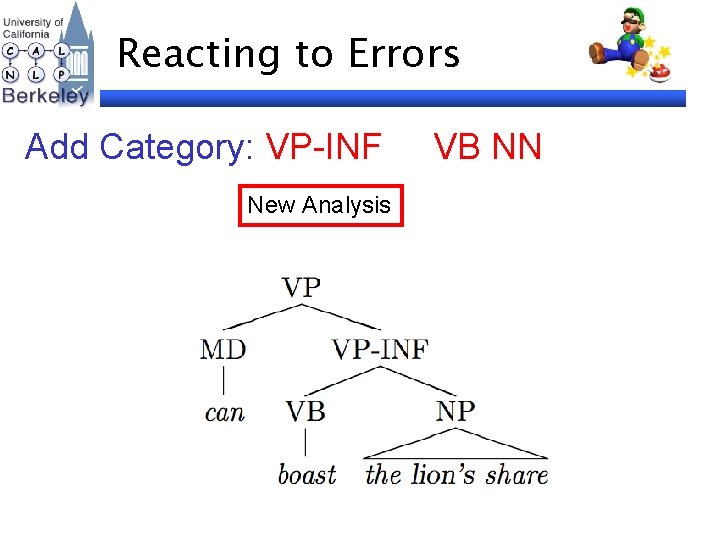

Reacting to Errors: Add category VP-INF (prototype: VB NN), which yields a new analysis.

Fixing Errors
- Supplement prototypes with NP-POS and VP-INF
- Results: unlabeled F1 78.2

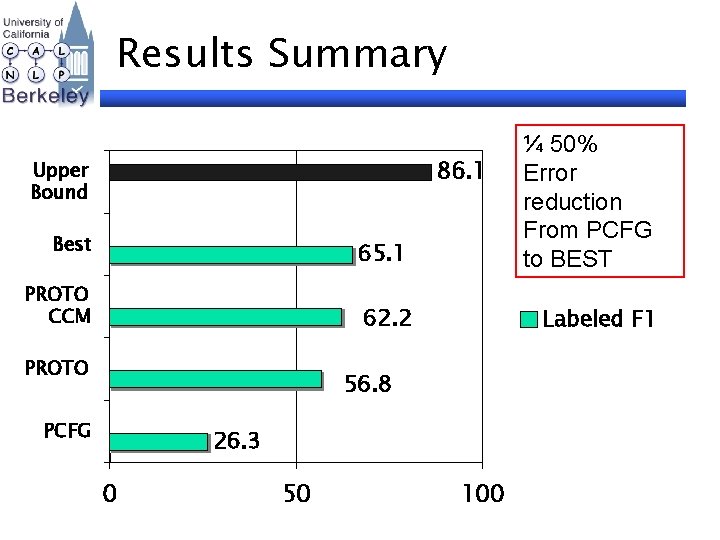

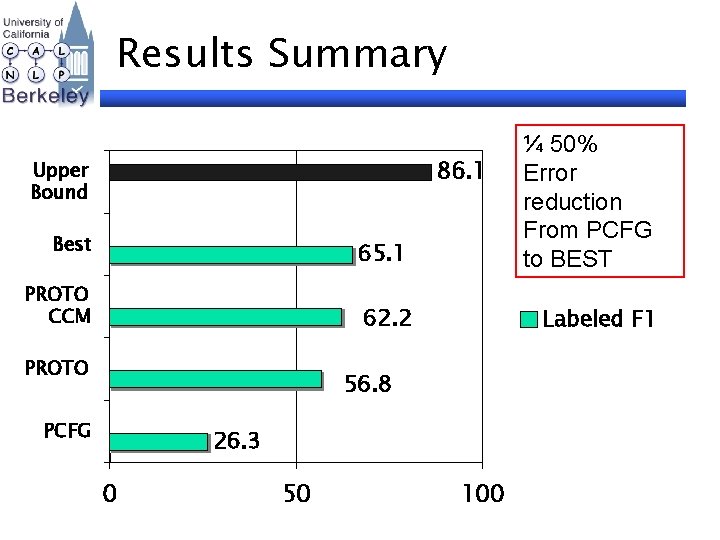

Results Summary

| Model | Labeled F1 |
|---|---|
| PCFG | 26.3 |
| PROTO | 56.8 |
| PROTO + CCM | 62.2 |
| Best | 65.1 |
| Upper bound | 86.1 |

From PCFG to Best: ≈50% error reduction.
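The error-reduction figure can be checked with a line of arithmetic. Measuring error as 100 − F1 gives about 53%, which is consistent with the slide's "≈50%". Measuring against the 86.1 upper bound instead would give about 65%. Which convention the slide intends is an inference from the numbers, not something it states. `error_reduction` is a hypothetical helper.

```python
def error_reduction(baseline_f1, new_f1, ceiling=100.0):
    """Fraction of the baseline's error removed, with error = ceiling - F1."""
    baseline_error = ceiling - baseline_f1
    new_error = ceiling - new_f1
    return (baseline_error - new_error) / baseline_error


print(round(error_reduction(26.3, 65.1), 3))                # 0.526
print(round(error_reduction(26.3, 65.1, ceiling=86.1), 3))  # 0.649
```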

Conclusion
- Prototype-driven learning: a flexible, weakly supervised framework
- Merged distributional clustering techniques with structured models

Thank You! http://www.cs.berkeley.edu/~aria

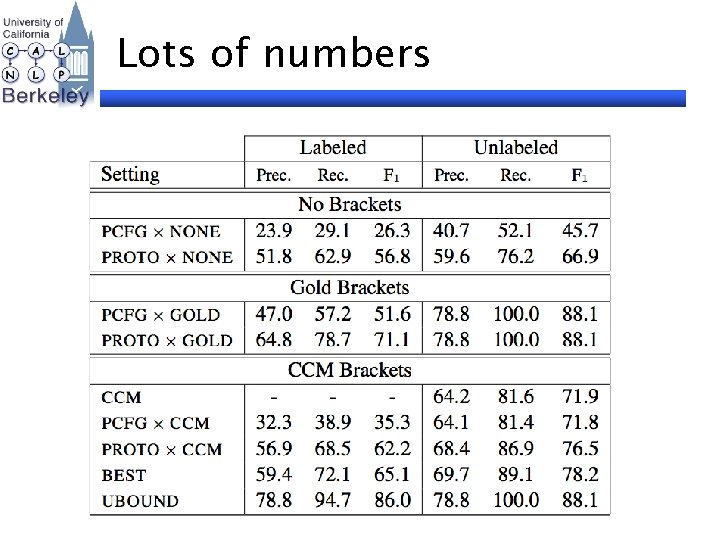

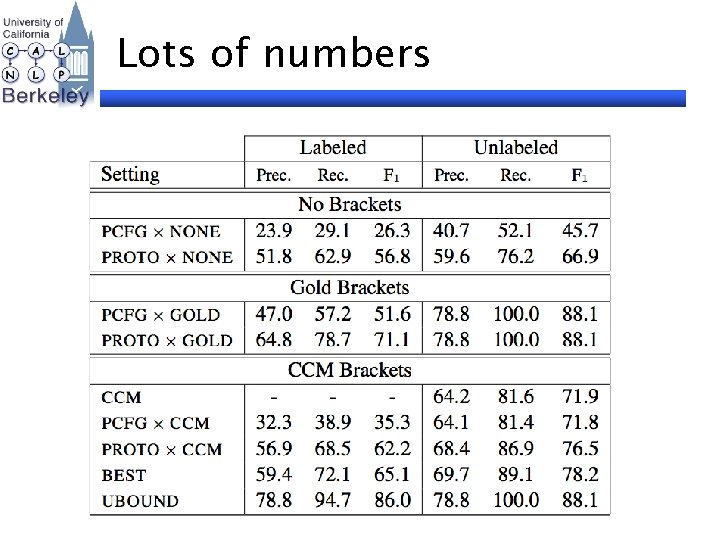

Lots of numbers

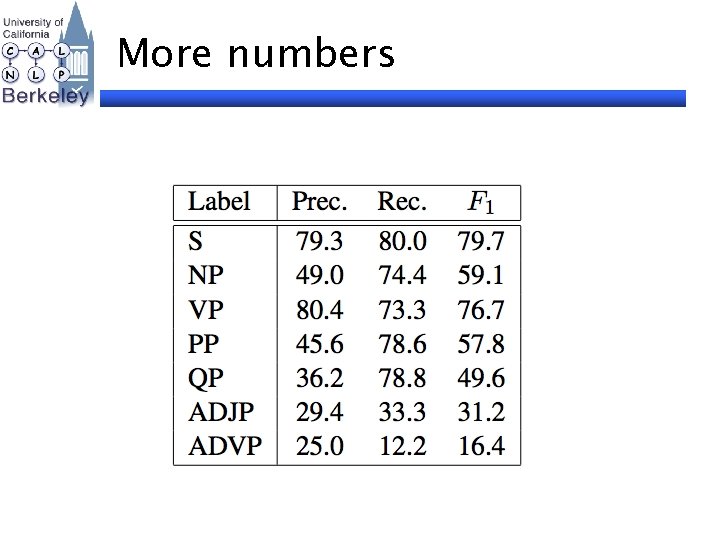

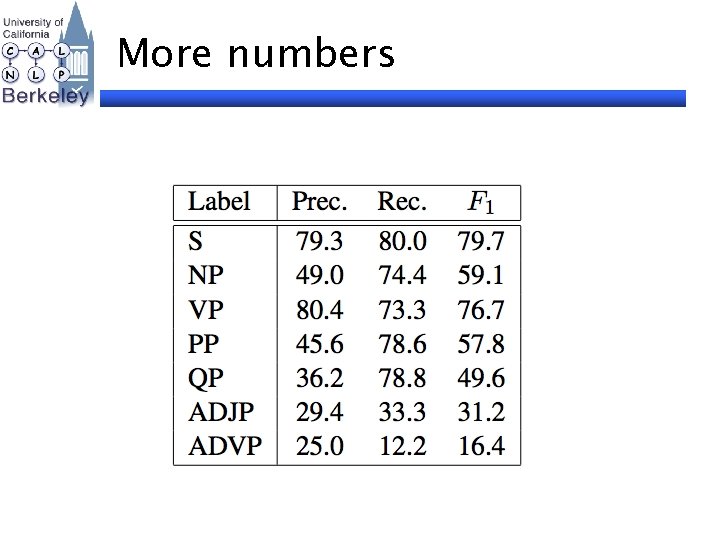

More numbers

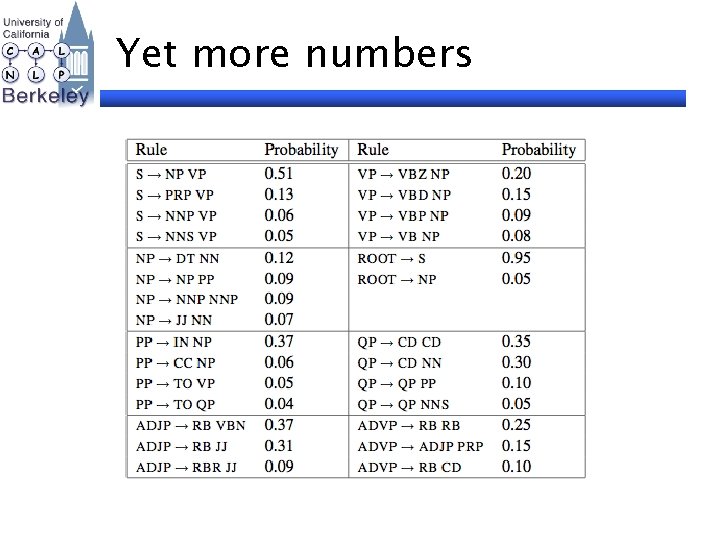

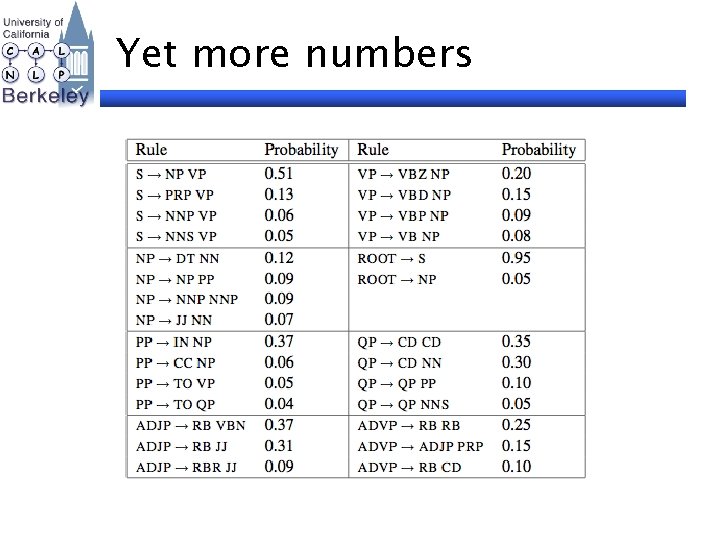

Yet more numbers