
Protocols Working with 10 Gigabit Ethernet

Richard Hughes-Jones, The University of Manchester
www.hep.man.ac.uk/~rich/ then “Talks”
CALICE, Mar 2007, R. Hughes-Jones Manchester

- Introduction to Measurements
- 10 GigE on SuperMicro X7DBE
- 10 GigE on SuperMicro X5DPE-G2
- 10 GigE and TCP – Monitor with web100 disk writes
- 10 GigE and Constant Bit Rate program
- UDP + memory access

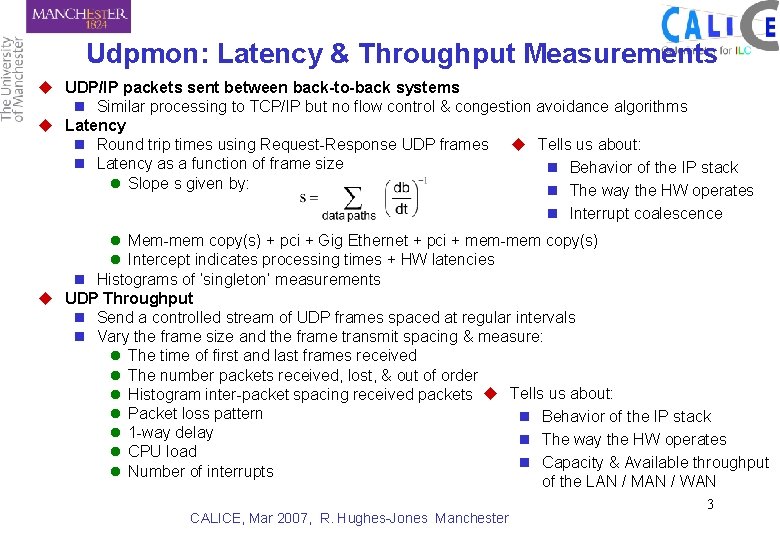

Udpmon: Latency & Throughput Measurements

- UDP/IP packets sent between back-to-back systems
  - Similar processing to TCP/IP but no flow control & congestion avoidance algorithms
- Latency
  - Round trip times using Request-Response UDP frames
  - Latency as a function of frame size
    - Slope s given by: mem-mem copy(s) + PCI + Gig Ethernet + PCI + mem-mem copy(s)
    - Intercept indicates processing times + HW latencies
  - Histograms of 'singleton' measurements
  - Tells us about:
    - Behavior of the IP stack
    - The way the HW operates
    - Interrupt coalescence
- UDP Throughput
  - Send a controlled stream of UDP frames spaced at regular intervals
  - Vary the frame size and the frame transmit spacing & measure:
    - The time of the first and last frames received
    - The number of packets received, lost, & out of order
    - Histogram of the inter-packet spacing of received packets
    - Packet loss pattern
    - 1-way delay
    - CPU load
    - Number of interrupts
  - Tells us about:
    - Behavior of the IP stack
    - The way the HW operates
    - Capacity & available throughput of the LAN / MAN / WAN
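
The slope and intercept described above come from fitting a straight line to latency versus frame size. A minimal sketch, assuming one latency value per frame size has already been extracted from the histograms (for example the minimum or the mean, a choice the slide does not specify); `fit_latency` is a hypothetical helper, not part of udpmon:

```python
def fit_latency(sizes_bytes, latencies_us):
    """Ordinary least-squares fit of latency = intercept + slope * size.

    Returns (slope in us/byte, intercept in us).
    """
    n = len(sizes_bytes)
    if n < 2 or n != len(latencies_us):
        raise ValueError("need at least two (size, latency) pairs of equal length")
    mean_x = sum(sizes_bytes) / n
    mean_y = sum(latencies_us) / n
    sxx = sum((x - mean_x) ** 2 for x in sizes_bytes)
    if sxx == 0:
        raise ValueError("frame sizes must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sizes_bytes, latencies_us))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Synthetic points on the line 20 us + 0.003 us/byte, only to exercise the function.
sizes = [64, 1000, 4000, 8972]
lat = [20.0 + 0.003 * s for s in sizes]
slope, intercept = fit_latency(sizes, lat)
```

The slope (µs/byte) is what gets compared with the sum of the per-byte costs of each element in the path, while the intercept collects the fixed per-packet costs.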

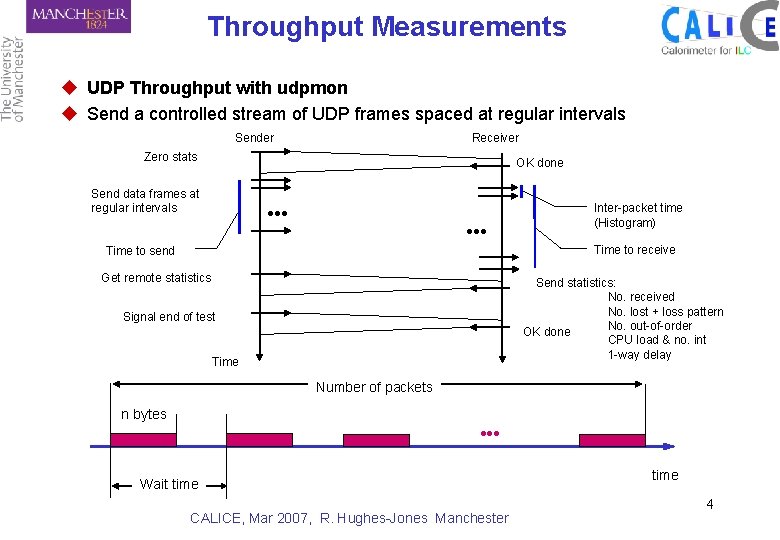

Throughput Measurements

- UDP throughput with udpmon
- Send a controlled stream of UDP frames spaced at regular intervals

Test sequence between sender and receiver, in time order:

- Sender: zero stats; receiver: OK done
- Sender: send data frames at regular intervals (number of packets, n bytes each, wait time between frames); record the time to send
- Receiver: record the time to receive and a histogram of the inter-packet time
- Sender: signal end of test
- Sender: get remote statistics; receiver sends statistics:
  - No. received
  - No. lost + loss pattern
  - No. out-of-order
  - CPU load & no. of interrupts
  - 1-way delay
- Receiver: OK done
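
The receiver-side statistics listed on this slide can be computed from the sequence numbers and arrival times of the frames. A sketch under stated assumptions: `receiver_stats` is a hypothetical helper rather than udpmon's code, each frame is assumed to carry a sequence number starting at 0, timestamps are in microseconds, and throughput is taken over the interval between the first and last frames received:

```python
from collections import Counter


def receiver_stats(seq_nums, arrival_us, n_sent, payload_bytes, bin_us=1.0):
    """Summarise one throughput test as seen by the receiver.

    seq_nums      -- sequence numbers (0 .. n_sent-1) in arrival order
    arrival_us    -- matching arrival timestamps in microseconds
    n_sent        -- number of frames the sender reports it sent
    payload_bytes -- UDP payload size of every frame
    bin_us        -- width of the inter-packet spacing histogram bins
    """
    received = len(seq_nums)
    lost = n_sent - len(set(seq_nums))

    # A frame is out of order if a higher sequence number arrived before it.
    out_of_order, highest = 0, -1
    for s in seq_nums:
        if s < highest:
            out_of_order += 1
        else:
            highest = s

    # (received - 1) payloads arrive between the first and last timestamps;
    # bits per microsecond divided by 1000 gives Gbit/s.
    span_us = arrival_us[-1] - arrival_us[0] if received > 1 else 0.0
    gbit_s = (received - 1) * payload_bytes * 8 / (span_us * 1e3) if span_us > 0 else 0.0

    spacing = [b - a for a, b in zip(arrival_us, arrival_us[1:])]
    hist = Counter(int(d // bin_us) for d in spacing)  # bin index -> count

    return {
        "received": received,
        "lost": lost,
        "out_of_order": out_of_order,
        "gbit_s": gbit_s,
        "spacing_hist": dict(hist),
    }
```

For example, `receiver_stats([0, 1, 3, 2, 5], [0, 8, 16, 24, 32], 6, 8972)` reports 5 frames received, 1 lost (sequence number 4), and 1 out of order (sequence number 2).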

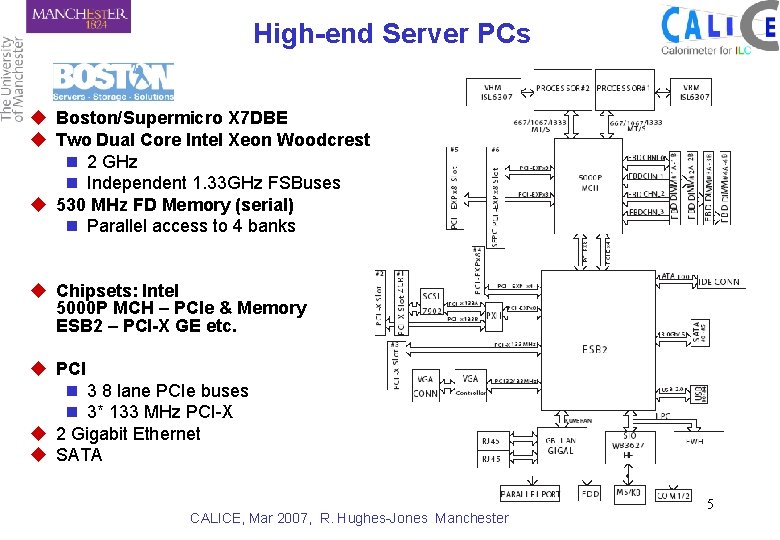

High-end Server PCs

- Boston/Supermicro X7DBE
- Two Dual Core Intel Xeon Woodcrest 5130
  - 2 GHz
  - Independent 1.33 GHz FSBuses
- 530 MHz FD Memory (serial)
  - Parallel access to 4 banks
- Chipsets:
  - Intel 5000P MCH – PCIe & Memory
  - ESB2 – PCI-X, GE etc.
- PCI
  - 3 8-lane PCIe buses
  - 3 × 133 MHz PCI-X
- 2 Gigabit Ethernet
- SATA

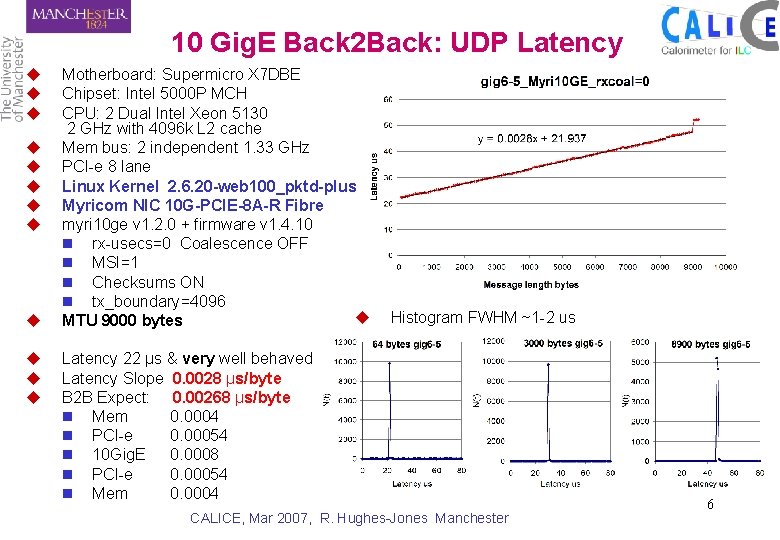

10 GigE Back-to-Back: UDP Latency

- Motherboard: Supermicro X7DBE
- Chipset: Intel 5000P MCH
- CPU: 2 Dual Intel Xeon 5130 2 GHz with 4096k L2 cache
- Mem bus: 2 independent 1.33 GHz
- PCI-e 8 lane
- Linux Kernel 2.6.20-web100_pktd-plus
- Myricom NIC 10G-PCIE-8A-R Fibre
- myri10ge v1.2.0 + firmware v1.4.10
  - rx-usecs=0 Coalescence OFF
  - MSI=1
  - Checksums ON
  - tx_boundary=4096
- MTU 9000 bytes
- Histogram FWHM ~1-2 µs
- Latency 22 µs & very well behaved
- Latency slope 0.0028 µs/byte
- B2B expect: 0.00268 µs/byte
  - Mem 0.0004
  - PCI-e 0.00054
  - 10 GigE 0.0008
  - PCI-e 0.00054
  - Mem 0.0004
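
The expected back-to-back slope is the sum of the per-byte costs of every stage a byte crosses. The sketch below adds up the slide's numbers and also converts each cost back into the transfer rate it implies; the sender/receiver labels are an assumption about the order in which the stages are listed:

```python
# Per-byte costs in us/byte, copied from the slide's "B2B expect" breakdown.
# Labelling the first two as sender side and the last two as receiver side is an assumption.
PATH = [
    ("memory copy, sender", 0.0004),
    ("PCI-e, sender", 0.00054),
    ("10 GigE link", 0.0008),
    ("PCI-e, receiver", 0.00054),
    ("memory copy, receiver", 0.0004),
]


def us_per_byte(gbit_per_s):
    """Time to move one byte (8 bits) at a rate in Gbit/s (1 Gbit/s = 1000 bits/us)."""
    return 8 / (gbit_per_s * 1e3)


def implied_gbyte_per_s(cost_us_per_byte):
    """Inverse view: the transfer rate in GB/s that a per-byte cost corresponds to."""
    return 1e-3 / cost_us_per_byte


expected_slope = sum(cost for _, cost in PATH)
for name, cost in PATH:
    print(f"{name:22s} {cost:.5f} us/byte  ~{implied_gbyte_per_s(cost):.2f} GB/s")
print(f"expected slope {expected_slope:.5f} us/byte")
```

The 10 GigE entry is simply 8 bits at 10 Gbit/s, i.e. `us_per_byte(10)`; the memory and PCI-e entries correspond to about 2.5 GB/s and 1.85 GB/s respectively. The sum, 0.00268 µs/byte, sits just below the measured 0.0028 µs/byte.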

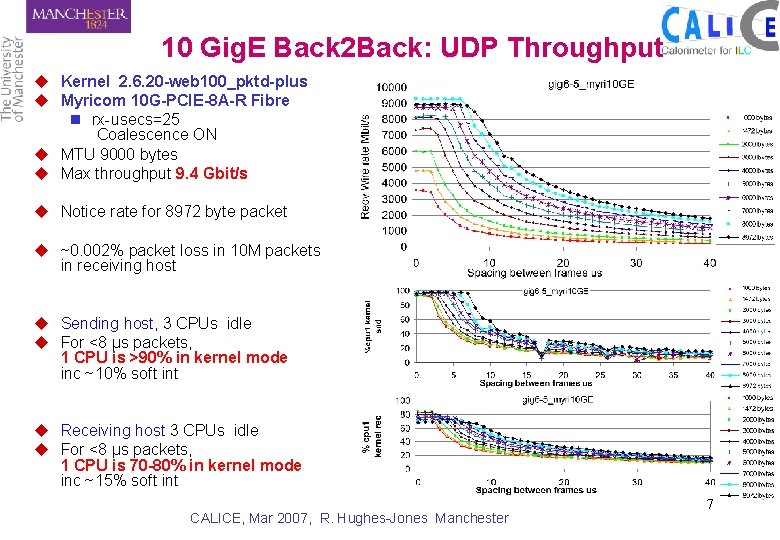

10 GigE Back-to-Back: UDP Throughput

- Kernel 2.6.20-web100_pktd-plus
- Myricom 10G-PCIE-8A-R Fibre
  - rx-usecs=25 Coalescence ON
- MTU 9000 bytes
- Max throughput 9.4 Gbit/s
- Notice rate for 8972 byte packet
- ~0.002% packet loss in 10M packets in receiving host
- Sending host: 3 CPUs idle
  - For packet spacing <8 µs, 1 CPU is >90% in kernel mode, including ~10% soft interrupt
- Receiving host: 3 CPUs idle
  - For packet spacing <8 µs, 1 CPU is 70-80% in kernel mode, including ~15% soft interrupt
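
The 8972-byte figure is the UDP payload that exactly fills a 9000-byte MTU, and the Ethernet framing overheads set a ceiling on the user-data rate. A worked version, assuming standard IPv4 and UDP headers without options and standard Ethernet framing (header, FCS, preamble and inter-frame gap), none of which the slide lists explicitly:

```python
# Per-packet overheads in bytes for UDP over IPv4 (no IP options) on Ethernet.
IP_HEADER = 20
UDP_HEADER = 8
ETH_HEADER = 14       # destination MAC, source MAC, EtherType
ETH_FCS = 4           # frame check sequence
PREAMBLE_SFD = 8      # preamble plus start-of-frame delimiter
INTER_FRAME_GAP = 12  # minimum idle time between frames, in byte times


def udp_payload_bytes(mtu):
    """Largest UDP payload that fits in one IP packet of size mtu."""
    return mtu - IP_HEADER - UDP_HEADER


def wire_bytes(mtu):
    """Byte times one full-size frame occupies on the wire, including the gap."""
    return mtu + ETH_HEADER + ETH_FCS + PREAMBLE_SFD + INTER_FRAME_GAP


def max_user_gbit(mtu, line_gbit=10.0):
    """Ceiling on the UDP user-data rate when frames are sent back to back."""
    return line_gbit * udp_payload_bytes(mtu) / wire_bytes(mtu)


def frame_time_us(mtu, line_gbit=10.0):
    """Time to serialise one frame plus gap at line rate (1 Gbit/s = 1000 bits/us)."""
    return wire_bytes(mtu) * 8 / (line_gbit * 1e3)


mtu = 9000
print(udp_payload_bytes(mtu))       # 8972
print(f"{max_user_gbit(mtu):.3f}")  # 9.927
print(f"{frame_time_us(mtu):.2f}")  # 7.23
```

A full-size frame therefore occupies about 7.23 µs of wire time at 10 Gbit/s, so packet spacings just under 8 µs are already close to line rate. The ~9.93 Gbit/s figure is a framing ceiling only, not a prediction of what the hosts can sustain.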

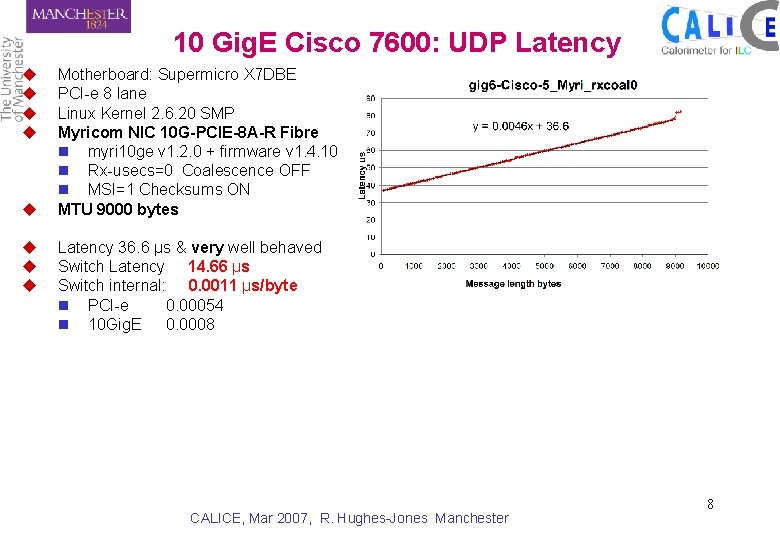

10 GigE Cisco 7600: UDP Latency

- Motherboard: Supermicro X7DBE
- PCI-e 8 lane
- Linux Kernel 2.6.20 SMP
- Myricom NIC 10G-PCIE-8A-R Fibre
  - myri10ge v1.2.0 + firmware v1.4.10
  - rx-usecs=0 Coalescence OFF
  - MSI=1
  - Checksums ON
- MTU 9000 bytes
- Latency 36.6 µs & very well behaved
- Switch latency 14.66 µs
- Switch internal: 0.0011 µs/byte
  - PCI-e 0.00054
  - 10 GigE 0.0008

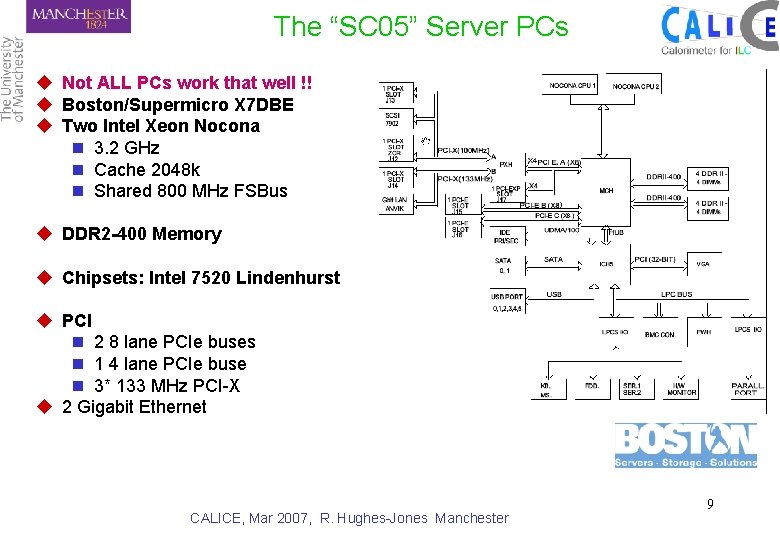

The “SC05” Server PCs

- Not ALL PCs work that well!!
- Boston/Supermicro X6DHE
- Two Intel Xeon Nocona
  - 3.2 GHz
  - Cache 2048k
  - Shared 800 MHz FSBus
- DDR2-400 Memory
- Chipset: Intel 7520 Lindenhurst
- PCI
  - 2 8-lane PCIe buses
  - 1 4-lane PCIe bus
  - 3 × 133 MHz PCI-X
- 2 Gigabit Ethernet
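
One visible difference from the X7DBE is that both Nocona CPUs share a single, slower front-side bus. The peak figures can be compared with a little arithmetic, assuming the quoted "800 MHz" and "1.33 GHz" are transfer rates (MT/s) on a 64-bit (8-byte) bus. These are peak numbers only, and on receive a byte may cross the memory system more than once (NIC DMA into kernel buffers, then a copy to user space), so this comparison does not by itself explain the poorer results:

```python
FSB_BYTES_PER_TRANSFER = 8  # assumption: 64-bit wide Xeon front-side bus


def fsb_peak_gbyte_s(mega_transfers_per_s):
    """Peak front-side-bus bandwidth in GB/s for a transfer rate in MT/s."""
    return mega_transfers_per_s * FSB_BYTES_PER_TRANSFER / 1e3


nocona_shared = fsb_peak_gbyte_s(800)    # one bus shared by both Nocona CPUs
woodcrest_each = fsb_peak_gbyte_s(1333)  # each of the two independent X7DBE buses
line_rate = 10 / 8                       # 10 Gbit/s expressed in GB/s

print(f"Nocona shared FSB: {nocona_shared:.1f} GB/s total")
print(f"Woodcrest FSBs:    {woodcrest_each:.1f} GB/s each, {2 * woodcrest_each:.1f} GB/s total")
print(f"10 GigE line rate: {line_rate:.2f} GB/s")
```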

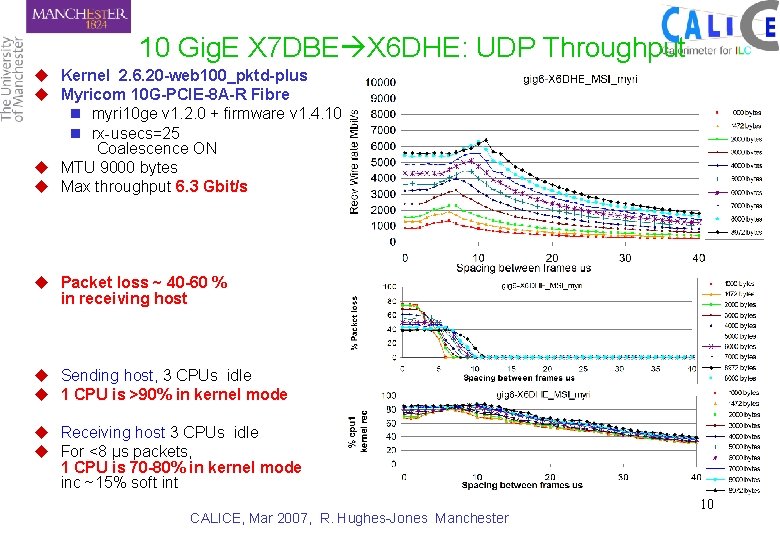

10 GigE X7DBE and X6DHE: UDP Throughput

- Kernel 2.6.20-web100_pktd-plus
- Myricom 10G-PCIE-8A-R Fibre
  - myri10ge v1.2.0 + firmware v1.4.10
  - rx-usecs=25 Coalescence ON
- MTU 9000 bytes
- Max throughput 6.3 Gbit/s
- Packet loss ~40-60% in receiving host
- Sending host: 3 CPUs idle
  - 1 CPU is >90% in kernel mode
- Receiving host: 3 CPUs idle
  - For packet spacing <8 µs, 1 CPU is 70-80% in kernel mode, including ~15% soft interrupt

So now we can run at 9.4 Gbit/s. Can we do any work?

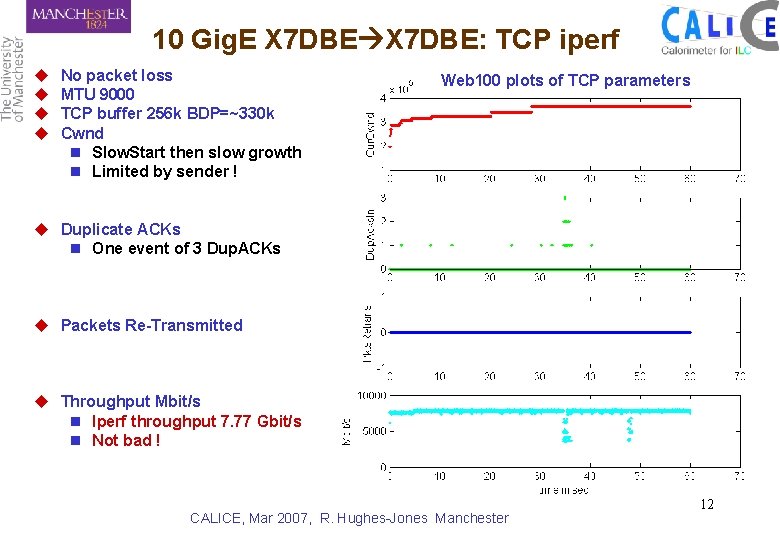

10 GigE X7DBE: TCP iperf

- No packet loss
- MTU 9000
- TCP buffer 256k, BDP = ~330k
- Web100 plots of TCP parameters:
  - Cwnd
    - SlowStart then slow growth
    - Limited by sender!
  - Duplicate ACKs
    - One event of 3 DupACKs
  - Packets re-transmitted
  - Throughput Mbit/s
    - Iperf throughput 7.77 Gbit/s
    - Not bad!
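
When the TCP buffer is smaller than the bandwidth-delay product, throughput is bounded by window / RTT, which equals line rate × window / BDP. Taking the slide's ~330k BDP at face value (and reading "k" the same way in both numbers), a back-of-envelope bound follows; `window_limited_gbit` is a hypothetical helper used only for this consistency check:

```python
def window_limited_gbit(window_bytes, bdp_bytes, line_rate_gbit=10.0):
    """Upper bound on TCP throughput for a given window.

    Throughput <= window / RTT and RTT = BDP / line_rate, so the bound is
    line_rate * window / BDP, capped at the line rate.
    """
    return line_rate_gbit * min(1.0, window_bytes / bdp_bytes)


print(f"{window_limited_gbit(256e3, 330e3):.2f} Gbit/s")  # 7.76 Gbit/s
```

The bound of about 7.76 Gbit/s is in the same range as the measured iperf rate, which fits the slide's note that the transfer is limited by the sender; it is a sanity check, not a model of the Web100 traces.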

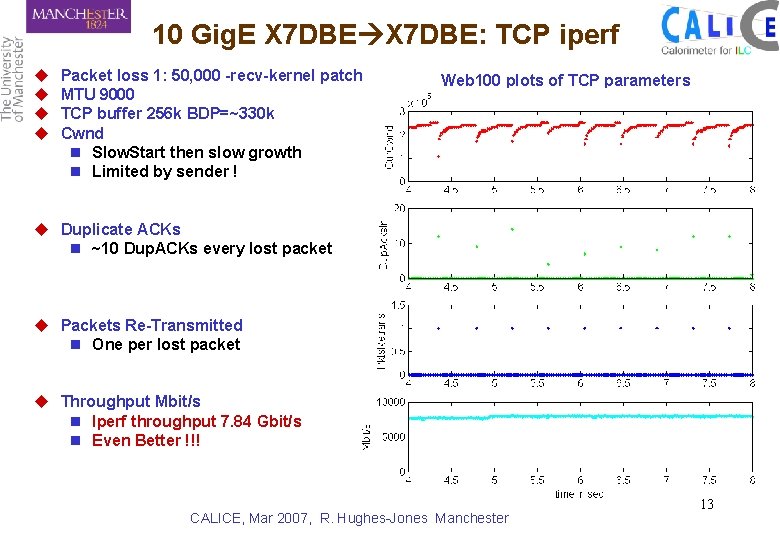

10 GigE X7DBE: TCP iperf

- Packet loss 1:50,000 (recv-kernel patch)
- MTU 9000
- TCP buffer 256k, BDP = ~330k
- Web100 plots of TCP parameters:
  - Cwnd
    - SlowStart then slow growth
    - Limited by sender!
  - Duplicate ACKs
    - ~10 DupACKs every lost packet
  - Packets re-transmitted
    - One per lost packet
  - Throughput Mbit/s
    - Iperf throughput 7.84 Gbit/s
    - Even better!!!

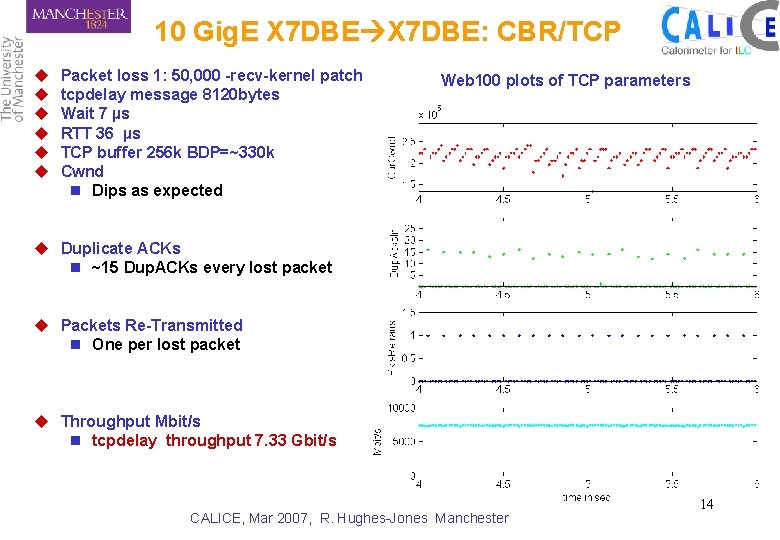

10 GigE X7DBE: CBR/TCP

- Packet loss 1:50,000 (recv-kernel patch)
- tcpdelay message 8120 bytes
- Wait 7 µs
- RTT 36 µs
- TCP buffer 256k, BDP = ~330k
- Web100 plots of TCP parameters:
  - Cwnd
    - Dips as expected
  - Duplicate ACKs
    - ~15 DupACKs every lost packet
  - Packets re-transmitted
    - One per lost packet
  - Throughput Mbit/s
    - tcpdelay throughput 7.33 Gbit/s
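
The constant-bit-rate idea behind tcpdelay (send a fixed-size message, then wait) can be sketched as a paced send loop. `paced_send` and `offered_rate_gbit` are hypothetical names, not tcpdelay's interface, and the slide does not say whether "Wait 7 µs" is the full interval between sends or an extra pause after each write; this sketch assumes a fixed start-to-start interval scheduled against an absolute clock:

```python
import time


def paced_send(send, message, count, interval_us):
    """Call send(message) count times, starting each call interval_us after the previous start.

    Targets are computed from one start time, so a late send does not push
    every later send back. Returns the elapsed time in microseconds.
    """
    interval_ns = int(interval_us * 1000)
    start = time.perf_counter_ns()
    for i in range(count):
        target = start + i * interval_ns
        while time.perf_counter_ns() < target:
            pass  # spin: sleep() granularity is far coarser than a few microseconds
        send(message)
    return (time.perf_counter_ns() - start) / 1000


def offered_rate_gbit(message_bytes, interval_us):
    """Offered load in Gbit/s for one message every interval_us (1 Gbit/s = 1000 bits/us)."""
    return message_bytes * 8 / (interval_us * 1e3)
```

With a connected TCP socket, `send` could be `sock.sendall`. If 7 µs were the whole interval, an 8120-byte message would offer `offered_rate_gbit(8120, 7)`, about 9.28 Gbit/s; any additional wait lowers that figure. An interpreted loop like this cannot hold microsecond timing reliably, so it only illustrates the scheduling logic.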

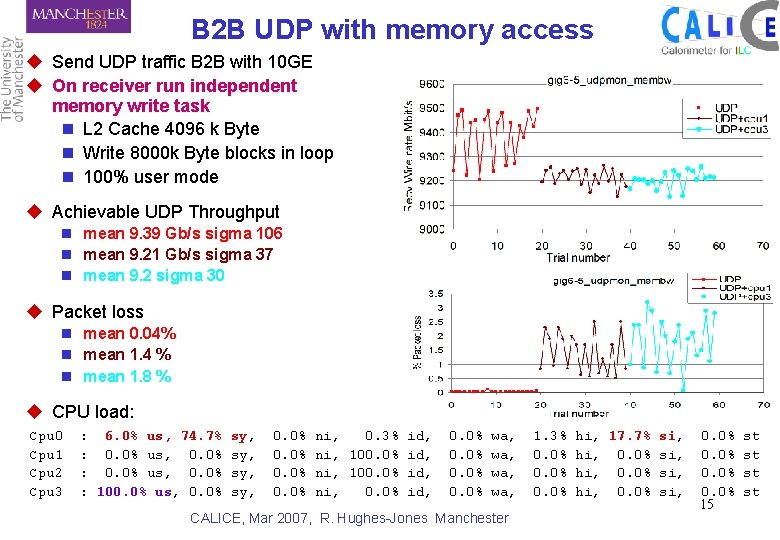

B2B UDP with memory access

- Send UDP traffic B2B with 10 GE
- On receiver run independent memory write task
  - L2 cache 4096 kByte
  - Write 8000 kByte blocks in loop
  - 100% user mode
- Achievable UDP throughput
  - mean 9.39 Gb/s, sigma 106
  - mean 9.21 Gb/s, sigma 37
  - mean 9.2 Gb/s, sigma 30
- Packet loss
  - mean 0.04%
  - mean 1.4%
  - mean 1.8%
- CPU load (top, Cpu 0 to Cpu 3):
  - 6.0% us, 74.7% sy, 0.3% id, 1.3% hi, 17.7% si
  - 100.0% id
  - 100.0% us
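
The receiver-side load in this test can be reproduced in outline by repeatedly writing a block about twice the size of the 4096 kByte L2 cache, so the writes cannot stay in cache. The sketch below is a hypothetical stand-in (`memory_write_task`), not the program used for these measurements; it takes k as 1024 and uses `ctypes.memset` so each fill runs as a C `memset` in user mode rather than as a Python byte loop:

```python
import ctypes
import time


def memory_write_task(block_kbytes=8000, iterations=10):
    """Repeatedly fill one block larger than the L2 cache.

    Returns (bytes written, elapsed seconds, the buffer) so the caller can
    check the contents or compute a write rate.
    """
    size = block_kbytes * 1024
    block = ctypes.create_string_buffer(size)  # zero-filled buffer of `size` bytes
    start = time.perf_counter()
    for i in range(iterations):
        # Change the fill value each pass so every pass really writes new data.
        ctypes.memset(block, i & 0xFF, size)
    elapsed = time.perf_counter() - start
    return size * iterations, elapsed, block
```

Running it on one core while the UDP test runs keeps that core in user mode while it draws on memory bandwidth; dividing the bytes written by the elapsed time gives a rough write rate for comparison.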

Backup Slides

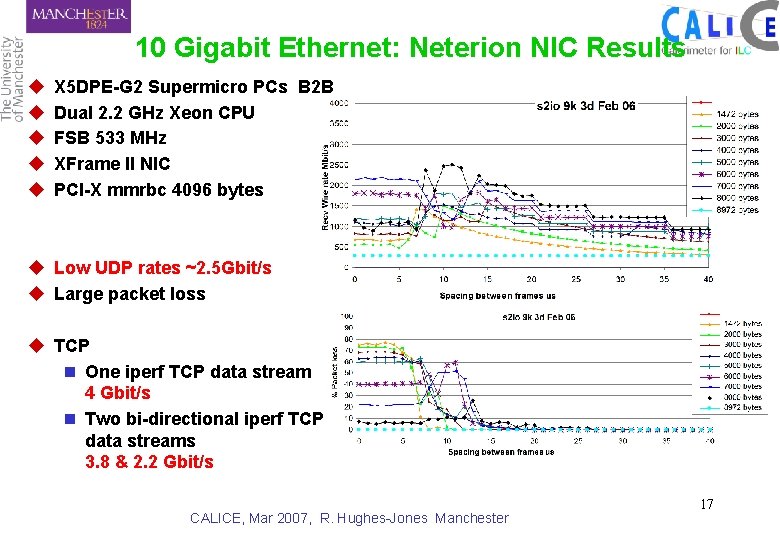

10 Gigabit Ethernet: Neterion NIC Results

- X5DPE-G2 Supermicro PCs B2B
- Dual 2.2 GHz Xeon CPU
- FSB 533 MHz
- XFrame II NIC
- PCI-X mmrbc 4096 bytes
- Low UDP rates ~2.5 Gbit/s
- Large packet loss
- TCP
  - One iperf TCP data stream 4 Gbit/s
  - Two bi-directional iperf TCP data streams 3.8 & 2.2 Gbit/s
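
A PCI-X NIC is capped by its bus before any protocol processing. Assuming the card sits in a 64-bit slot clocked at 133 MHz (the slide gives the mmrbc setting but not the bus clock), the raw peak is already below the 10 Gbit/s line rate, and real transfers lose more to bus protocol overhead. The measured UDP rates of ~2.5 Gbit/s are well under even this ceiling, so the bus peak alone does not account for them:

```python
def pci_x_peak_gbit(clock_mhz=133.33, width_bits=64):
    """Raw PCI-X peak in Gbit/s: one width_bits transfer per clock, no overheads."""
    return clock_mhz * width_bits / 1e3


peak = pci_x_peak_gbit()
print(f"PCI-X 64-bit/133 MHz peak: {peak:.2f} Gbit/s vs 10 Gbit/s line rate")
```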
