Proof-of-Concept Demonstrators and Other Evils of Application-Led Research

- Slides: 9

Proof-of-Concept Demonstrators and Other Evils of Application-Led Research

Nigel Davies

background
- MOST: collaborative tools for mobile workers
- Guide: building the Hitch-hiker's Guide to the Galaxy
- e-Campus: networks of pervasive public displays

deployment
- statement: “you can’t have ubicomp without deployment – that’s the ubiquitous bit in ubiquitous computing”

a position statement
- clarify the distinction between application-led research and application development.
  - can you get your requirements from elsewhere?
- stop too many people from developing applications.
  - community self-regulation.
- stop people from developing applications from scratch.
  - make components/concepts easier to use.
- develop metrics and benchmarks for (a) evaluating applications and (b) evaluating middleware.
  - enable comparisons and evaluations.

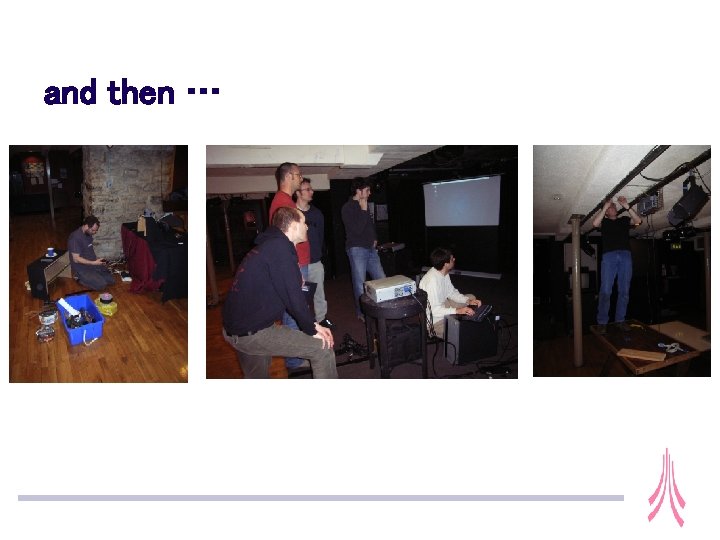

and then …

the Brewery Arts Centre
- two weeks of events themed around the 1940s
- our focus was “Evidence”
- test case for early e-Campus technologies
- designed to help us start to understand the practical issues of developing and deploying e-Campus systems
- this week and last week are the Brewery’s “Forties Fortnight”

evidence
- content is coming from:
  - the video diary system
  - digitised interviews with local residents
  - digitised old film footage
  - images (“art”) created using the Kirlian table
    - developed by: the pooch

deployment

a position statement
- clarify the distinction between application-led research and application development.
  - can you get your requirements from elsewhere?
  - clarify the distinction between application development for requirements capture, domain knowledge and inspiration, and application development for evaluation (proof!).
- stop too many people from developing applications.
  - community self-regulation.
  - “stop” people developing applications for the wrong reasons.
- stop people from developing applications from scratch.
  - make components/concepts easier to use.
  - share application experiences, domain knowledge (often done through seminars; can we do this through papers?) and test data.
- develop metrics and benchmarks for (a) evaluating applications and (b) evaluating middleware.
  - enable comparisons and evaluations.
  - can we generate these from applications, as standard problems?