
PROLOG

Contents

1. PROLOG: The Robot Blocks World
2. PROLOG: The Monkey and Bananas problem
3. What is Planning?
4. Planning vs. Problem Solving
5. STRIPS and Shakey
6. Planning in Prolog
7. Operators
8. The Frame Problem
9. Representing a plan
10. Means Ends Analysis

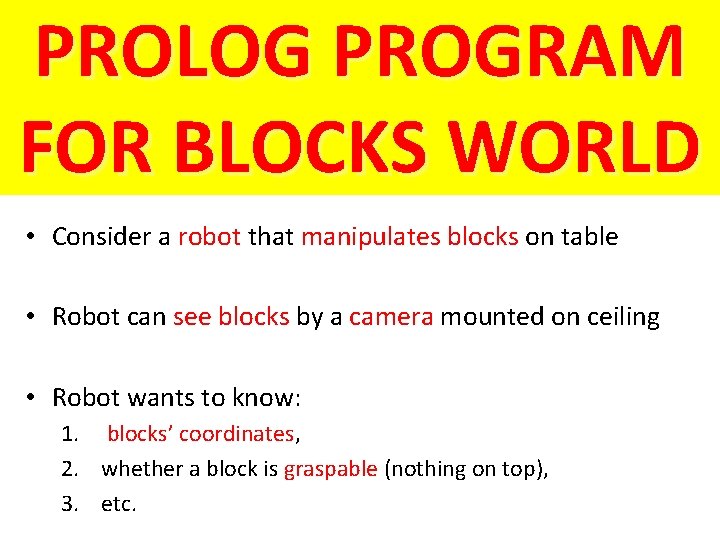

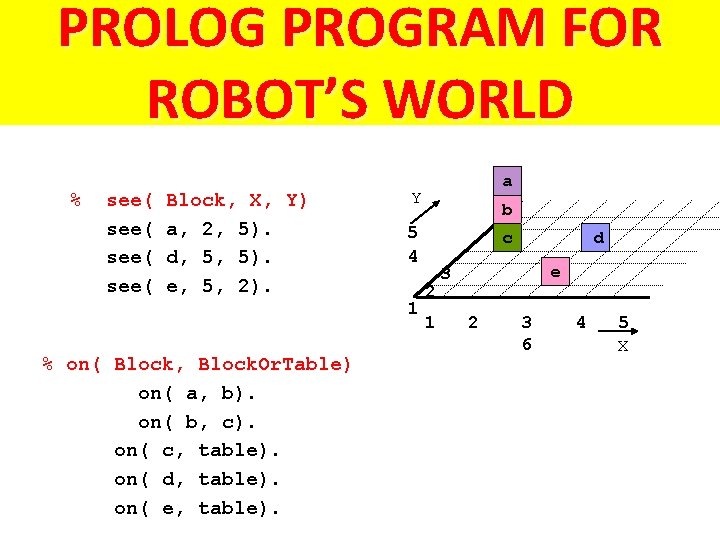

PROLOG PROGRAM FOR BLOCKS WORLD

• Consider a robot that manipulates blocks on a table
• The robot can see the blocks through a camera mounted on the ceiling
• The robot wants to know:
  1. the blocks' coordinates,
  2. whether a block is graspable (nothing on top),
  3. etc.

PROLOG PROGRAM FOR ROBOT'S WORLD

    % see(Block, X, Y)
    see(a, 2, 5).
    see(d, 5, 5).
    see(e, 5, 2).

    % on(Block, BlockOrTable)
    on(a, b).
    on(b, c).
    on(c, table).
    on(d, table).
    on(e, table).

[Figure: blocks a to e on the table, plotted against the X and Y axes]

![INTERACTION WITH ROBOT PROGRAM Start Prolog interpreter ? - [robot]. File robot consulted ? INTERACTION WITH ROBOT PROGRAM Start Prolog interpreter ? - [robot]. File robot consulted ?](http://slidetodoc.com/presentation_image/50fe663b6691693f7bcb1bbce393c721/image-5.jpg)

INTERACTION WITH ROBOT PROGRAM

Start the Prolog interpreter:

    ?- [robot].                 % Load file robot.pl
    File robot consulted
    ?- see(a, X, Y).            % Where do you see block a?
    X = 2
    Y = 5
    ?- see(Block, _, _).        % Which block(s) do you see?
    Block = a ;                 % More answers?
    Block = d ;
    Block = e ;
    no

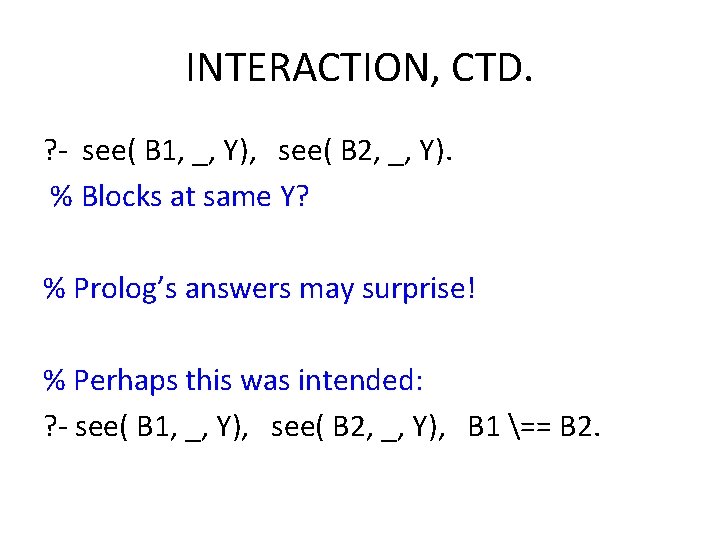

INTERACTION, CTD.

    ?- see(B1, _, Y), see(B2, _, Y).               % Blocks at same Y?
    % Prolog's answers may surprise: B1 and B2 can be the same block!
    % Perhaps this was intended:
    ?- see(B1, _, Y), see(B2, _, Y), B1 \== B2.

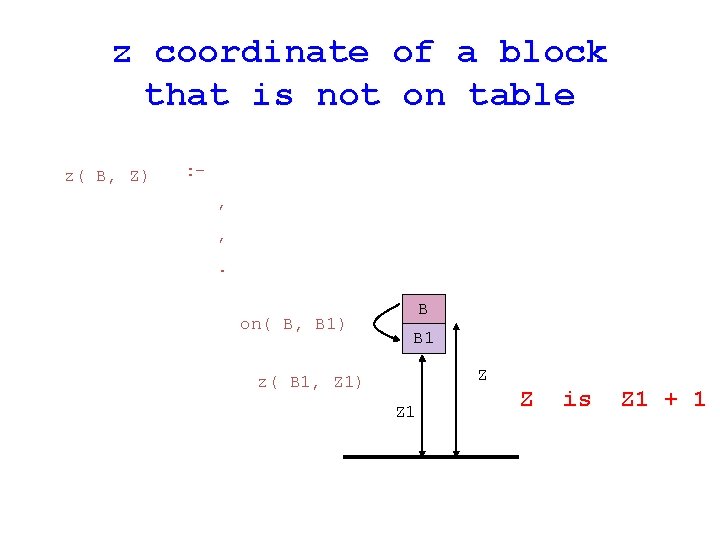

z coordinate of a block that is not on the table

    z(B, Z) :-
        on(B, B1),
        z(B1, Z1),
        Z is Z1 + 1.

[Figure: block B resting on block B1, with heights Z and Z1]

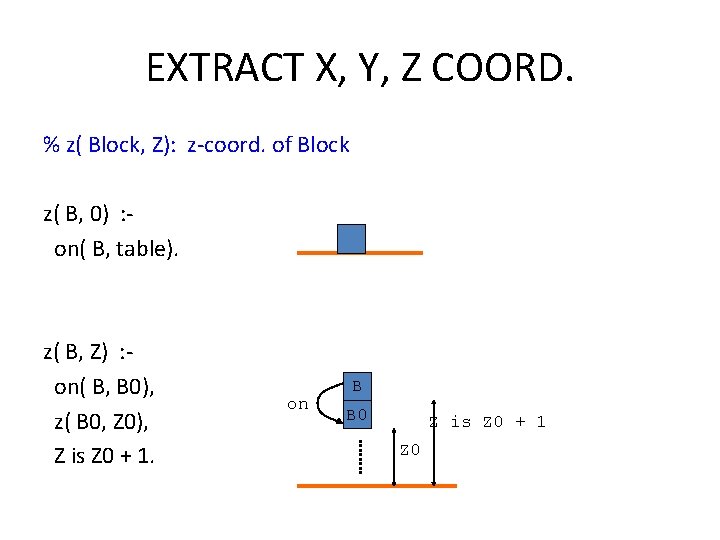

EXTRACT X, Y, Z COORD.

    % z(Block, Z): z-coord. of Block
    z(B, 0) :-
        on(B, table).
    z(B, Z) :-
        on(B, B0),
        z(B0, Z0),
        Z is Z0 + 1.

[Figure: block B on block B0; Z is Z0 + 1]

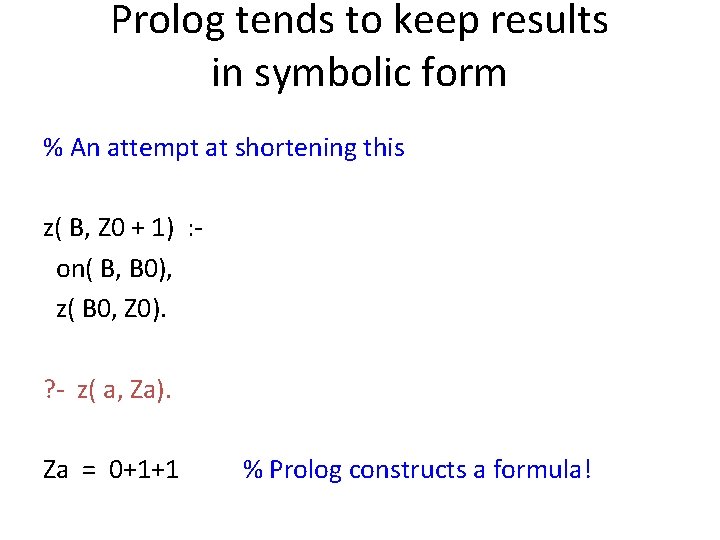

Prolog tends to keep results in symbolic form

    % An attempt at shortening this
    z(B, Z0 + 1) :-
        on(B, B0),
        z(B0, Z0).

    ?- z(a, Za).
    Za = 0+1+1        % Prolog constructs a formula!
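The difference between building a formula and evaluating it can be tried out directly. This is a minimal sketch; the predicate names `zf/2` and `z_value/2` are hypothetical and not part of the slides, and only the three `on/2` facts for the a-b-c stack are repeated here.

```prolog
% Only the stack a-b-c is needed for this illustration.
on(a, b).
on(b, c).
on(c, table).

% zf(Block, Formula): like z/2, but the sum is built, not evaluated.
zf(B, 0) :-
    on(B, table).
zf(B, Z0 + 1) :-
    on(B, B0),
    zf(B0, Z0).

% z_value(Block, Z): evaluate the symbolic formula with is/2 on demand.
z_value(B, Z) :-
    zf(B, Formula),
    Z is Formula.
```

The query `?- zf(a, F).` binds F to the term `0+1+1`, while `?- z_value(a, Z).` evaluates that term and binds Z to 2. Unification only builds structure; arithmetic happens where `is/2` is called.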

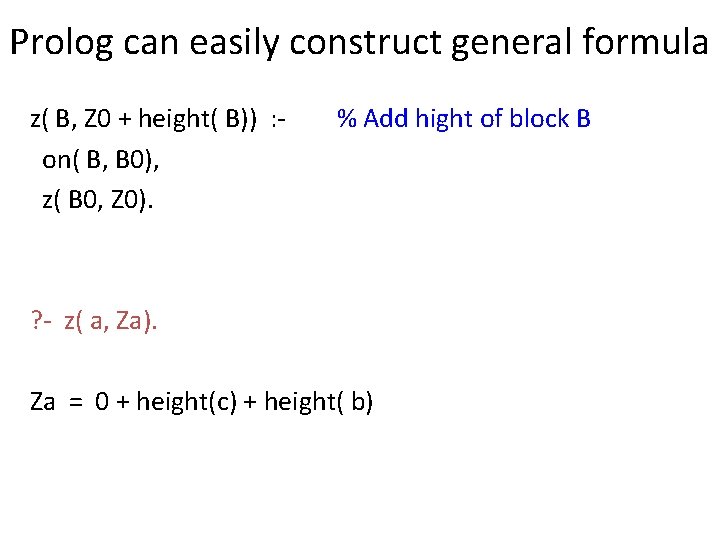

Prolog can easily construct a general formula

    % Add the height of the block underneath, B0
    z(B, Z0 + height(B0)) :-
        on(B, B0),
        z(B0, Z0).

    ?- z(a, Za).
    Za = 0 + height(c) + height(b)

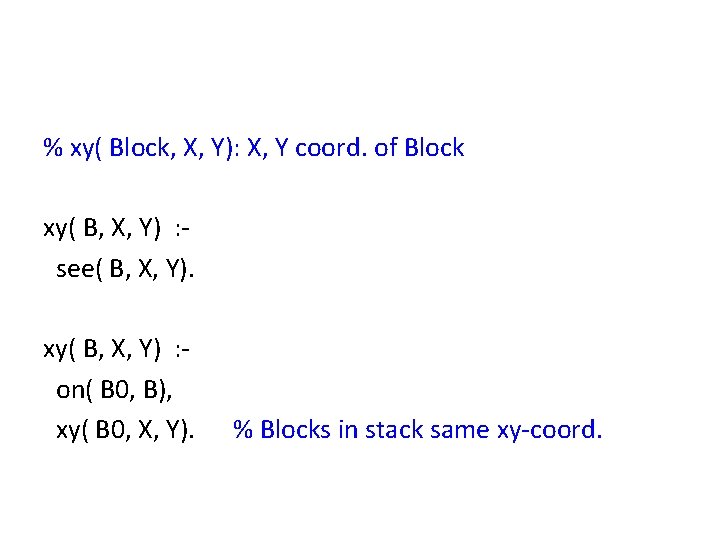

    % xy(Block, X, Y): X, Y coord. of Block
    xy(B, X, Y) :-
        see(B, X, Y).
    xy(B, X, Y) :-
        on(B0, B),
        xy(B0, X, Y).       % Blocks in a stack have the same xy-coord.

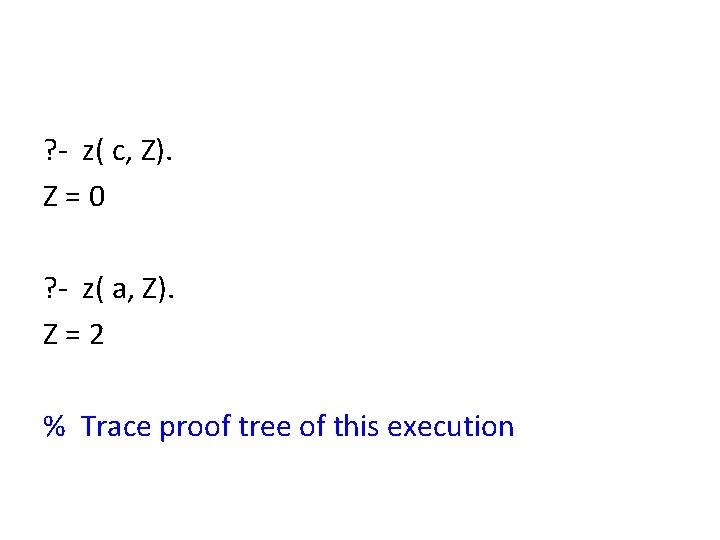

    ?- z(c, Z).
    Z = 0
    ?- z(a, Z).
    Z = 2
    % Trace the proof tree of this execution
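The pieces introduced so far can be collected into one file and extended to answer the robot's original questions. This is a sketch under assumptions: `graspable/1` and `xyz/4` are hypothetical names that do not appear in the slides; they only combine the relations that do.

```prolog
% see(Block, X, Y): only the top block of each stack is visible.
see(a, 2, 5).
see(d, 5, 5).
see(e, 5, 2).

% on(Block, BlockOrTable)
on(a, b).
on(b, c).
on(c, table).
on(d, table).
on(e, table).

% z(Block, Z): height of Block above the table.
z(B, 0) :- on(B, table).
z(B, Z) :- on(B, B0), z(B0, Z0), Z is Z0 + 1.

% xy(Block, X, Y): a hidden block shares X, Y with the block on top of it.
xy(B, X, Y) :- see(B, X, Y).
xy(B, X, Y) :- on(B0, B), xy(B0, X, Y).

% xyz(Block, X, Y, Z): all three coordinates (hypothetical helper).
xyz(B, X, Y, Z) :- xy(B, X, Y), z(B, Z).

% graspable(Block): Block exists and nothing is on top of it
% (hypothetical helper, following "graspable (nothing on top)").
graspable(B) :- on(B, _), \+ on(_, B).
```

For example, `?- xyz(c, X, Y, Z).` finds the coordinates of the bottom block of the stack even though the camera cannot see it, and `?- graspable(B).` enumerates a, d and e.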

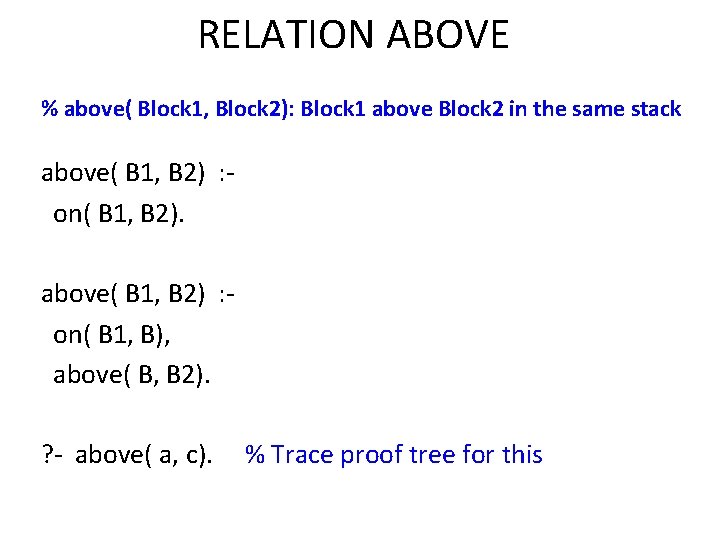

RELATION ABOVE

    % above(Block1, Block2): Block1 is above Block2 in the same stack
    above(B1, B2) :-
        on(B1, B2).
    above(B1, B2) :-
        on(B1, B),
        above(B, B2).

    ?- above(a, c).       % Trace the proof tree for this

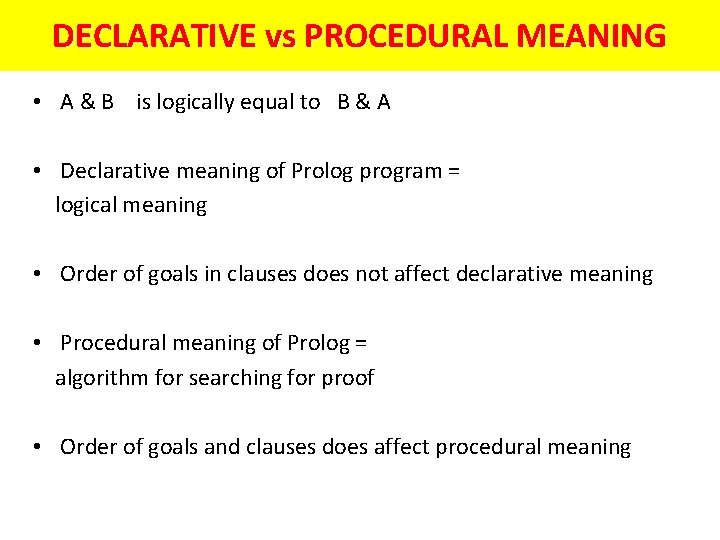

DECLARATIVE vs PROCEDURAL MEANING

• A & B is logically equal to B & A
• Declarative meaning of a Prolog program = logical meaning
• Order of goals in clauses does not affect declarative meaning
• Procedural meaning of Prolog = algorithm for searching for a proof
• Order of goals and clauses does affect procedural meaning

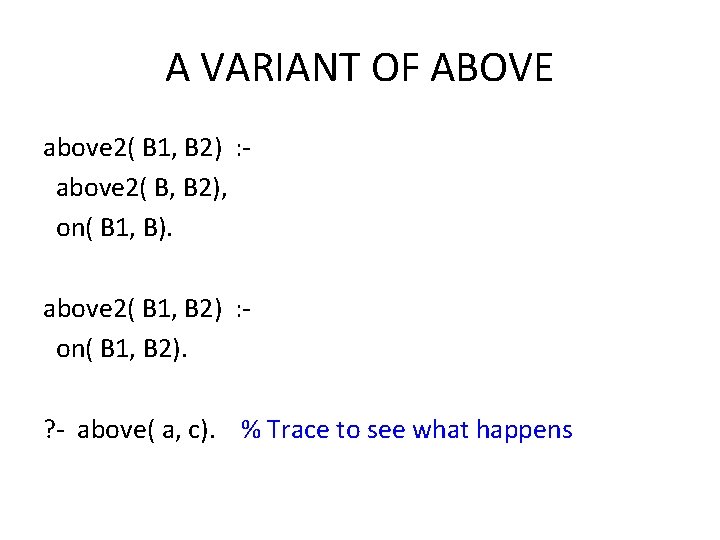

A VARIANT OF ABOVE

    above2(B1, B2) :-
        above2(B, B2),
        on(B1, B).
    above2(B1, B2) :-
        on(B1, B2).

    ?- above2(a, c).      % Trace to see what happens

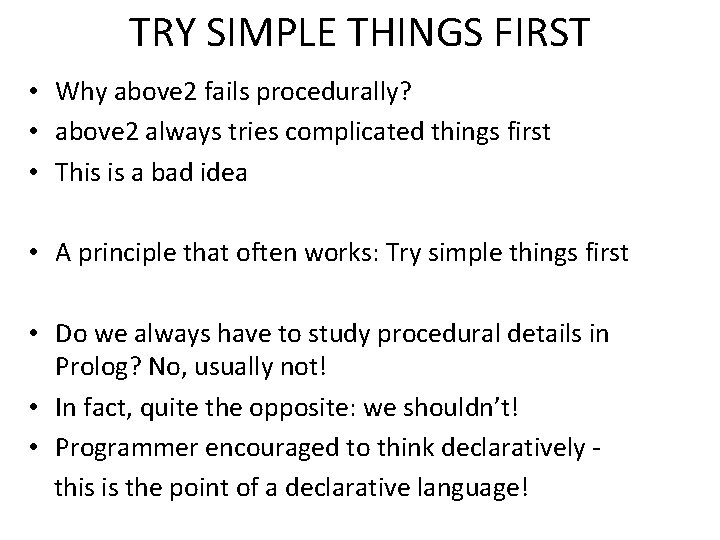

TRY SIMPLE THINGS FIRST

• Why does above2 fail procedurally?
• above2 always tries complicated things first: its first clause calls above2 again before consulting any on/2 fact, so the recursion never reaches a fact
• This is a bad idea
• A principle that often works: try simple things first
• Do we always have to study procedural details in Prolog? No, usually not!
• In fact, quite the opposite: we shouldn't!
• The programmer is encouraged to think declaratively; this is the point of a declarative language!

Prolog Programming

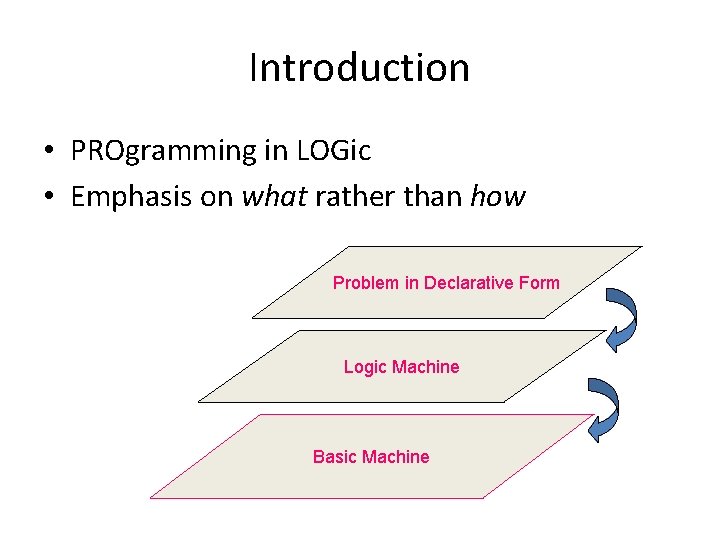

Introduction

• PROgramming in LOGic
• Emphasis on what rather than how

[Figure: Problem in Declarative Form, Logic Machine, Basic Machine]

Prolog's strong and weak points

• Assists thinking in terms of objects and entities
• Not good for number crunching
• Useful applications of Prolog in
  – Expert Systems (Knowledge Representation and Inferencing)
  – Natural Language Processing
  – Relational Databases

![A Typical Prolog program Compute_length ([], 0). Compute_length ([Head|Tail], Length): Compute_length (Tail, Tail_length), Length A Typical Prolog program Compute_length ([], 0). Compute_length ([Head|Tail], Length): Compute_length (Tail, Tail_length), Length](http://slidetodoc.com/presentation_image/50fe663b6691693f7bcb1bbce393c721/image-20.jpg)

A Typical Prolog program

    % Predicate names must start with a lower-case letter.
    compute_length([], 0).
    compute_length([_Head|Tail], Length) :-
        compute_length(Tail, Tail_length),
        Length is Tail_length + 1.

High level explanation: the length of the empty list is 0; the length of any other list is 1 plus the length of its tail, where the tail is obtained by removing the first element of the list. This is a declarative description of the computation.

Planning and Prolog

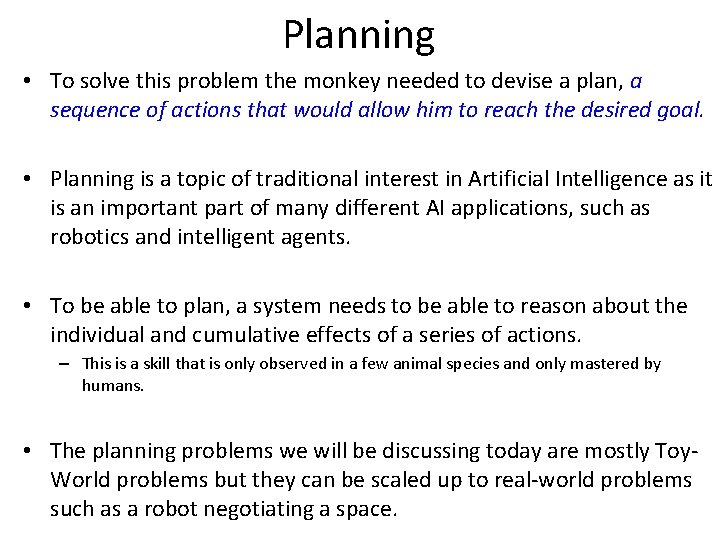

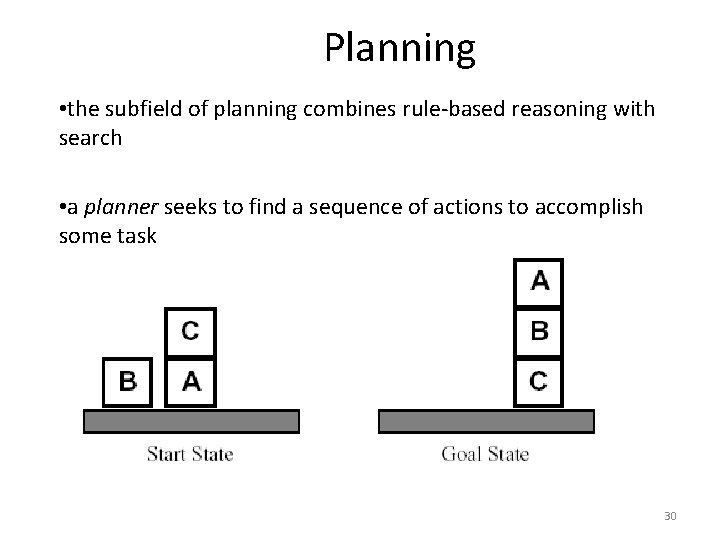

Planning

• To solve this problem the monkey needed to devise a plan, a sequence of actions that would allow him to reach the desired goal.
• Planning is a topic of traditional interest in Artificial Intelligence as it is an important part of many different AI applications, such as robotics and intelligent agents.
• To be able to plan, a system needs to be able to reason about the individual and cumulative effects of a series of actions.
  – This is a skill that is only observed in a few animal species and only mastered by humans.
• The planning problems we will be discussing today are mostly Toy-World problems, but they can be scaled up to real-world problems such as a robot negotiating a space.

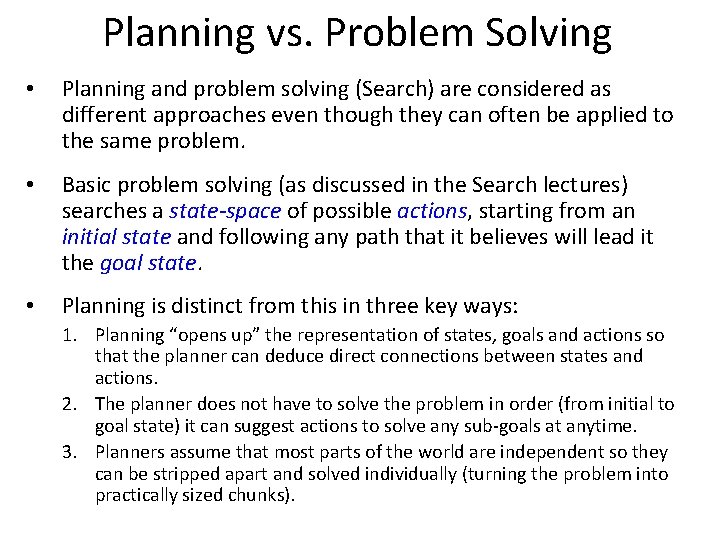

Planning vs. Problem Solving

• Planning and problem solving (Search) are considered as different approaches even though they can often be applied to the same problem.
• Basic problem solving (as discussed in the Search lectures) searches a state-space of possible actions, starting from an initial state and following any path that it believes will lead it to the goal state.
• Planning is distinct from this in three key ways:
  1. Planning "opens up" the representation of states, goals and actions so that the planner can deduce direct connections between states and actions.
  2. The planner does not have to solve the problem in order (from initial to goal state); it can suggest actions to solve any sub-goals at any time.
  3. Planners assume that most parts of the world are independent, so they can be stripped apart and solved individually (turning the problem into practically sized chunks).

Shakey

• Shakey.ram

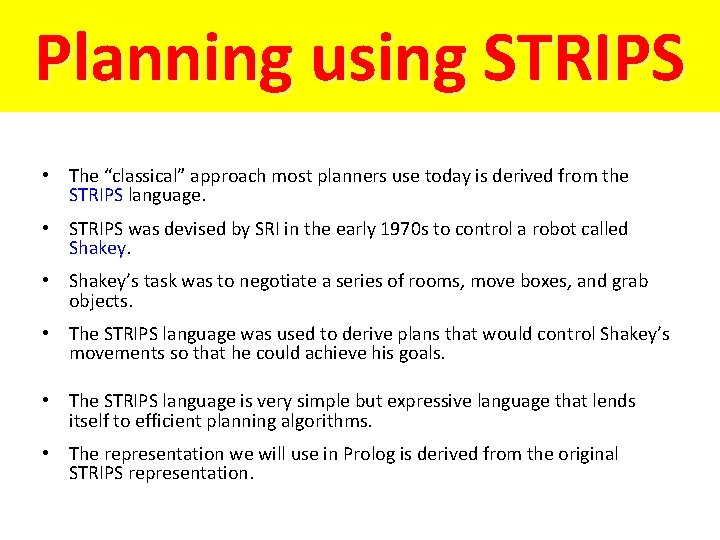

Planning using STRIPS

• The "classical" approach most planners use today is derived from the STRIPS language.
• STRIPS was devised by SRI in the early 1970s to control a robot called Shakey.
• Shakey's task was to negotiate a series of rooms, move boxes, and grab objects.
• The STRIPS language was used to derive plans that would control Shakey's movements so that he could achieve his goals.
• The STRIPS language is a very simple but expressive language that lends itself to efficient planning algorithms.
• The representation we will use in Prolog is derived from the original STRIPS representation.

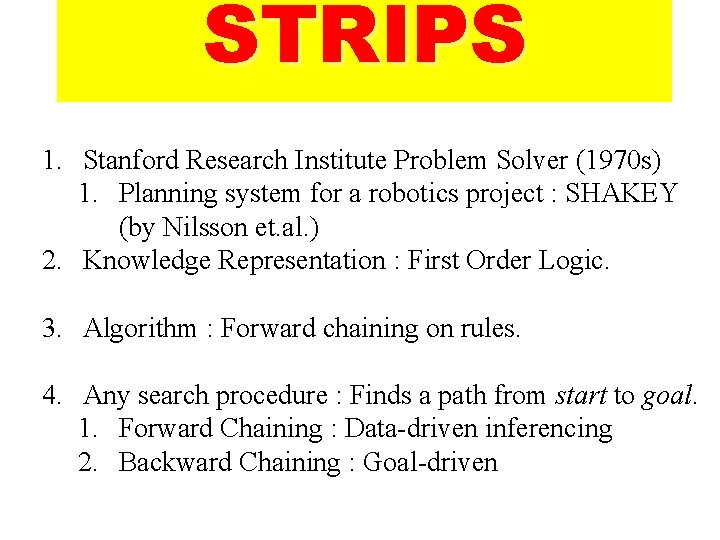

STRIPS

1. Stanford Research Institute Problem Solver (1970s)
2. Planning system for a robotics project: SHAKEY (by Nilsson et al.)
3. Knowledge Representation: First Order Logic.
4. Algorithm: Forward chaining on rules.
5. Any search procedure: finds a path from start to goal.
   – Forward Chaining: data-driven inferencing
   – Backward Chaining: goal-driven

Forward & Backward Chaining

1. Rule: man(x) → mortal(x)
2. Data: man(Shakespeare)
3. To prove: mortal(Shakespeare)
4. Forward Chaining:
   – man(Shakespeare) matches the LHS of the rule.
   – x = Shakespeare
   – mortal(Shakespeare) is added
5. Forward Chaining is used by design expert systems
6. Backward Chaining: uses RHS matching
   – Used by diagnostic expert systems
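The two directions of inference can be sketched in Prolog. This is an illustration only: the representation `rule(Condition, Conclusion)` and the predicates `forward/2` and `backward/2` are assumptions made for this example, not part of STRIPS or of the slides.

```prolog
:- use_module(library(lists)).

% rule(Condition, Conclusion): "if Condition then Conclusion".
rule(man(X), mortal(X)).

% forward(+Facts, -Closure): data-driven. Fire any rule whose condition
% (LHS) matches a known fact until no new conclusion can be added.
forward(Facts, Closure) :-
    (   rule(Condition, Conclusion),
        member(Condition, Facts),
        \+ member(Conclusion, Facts)
    ->  forward([Conclusion|Facts], Closure)
    ;   Closure = Facts
    ).

% backward(+Goal, +Facts): goal-driven. Goal holds if it is a known fact,
% or if it matches the conclusion (RHS) of a rule whose condition holds.
backward(Goal, Facts) :-
    member(Goal, Facts).
backward(Goal, Facts) :-
    rule(Condition, Goal),
    backward(Condition, Facts).
```

`?- forward([man(shakespeare)], F).` starts from the data and adds `mortal(shakespeare)`; `?- backward(mortal(shakespeare), [man(shakespeare)]).` starts from the goal and works back to the data. Prolog's own execution strategy is the backward, goal-driven one.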

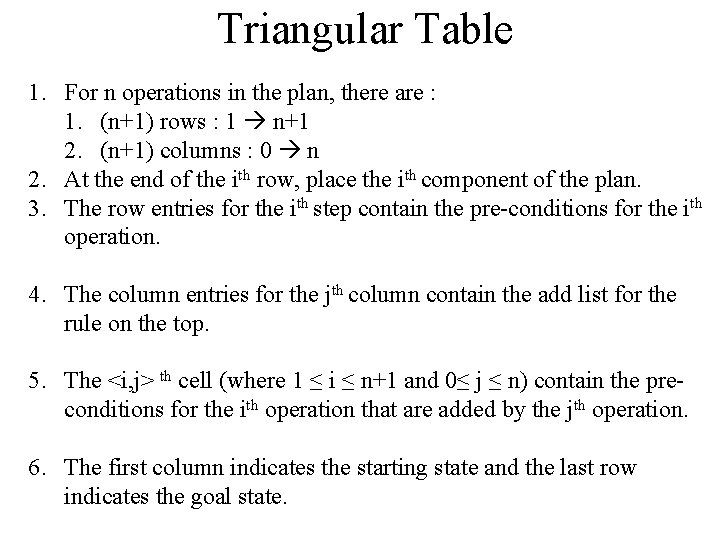

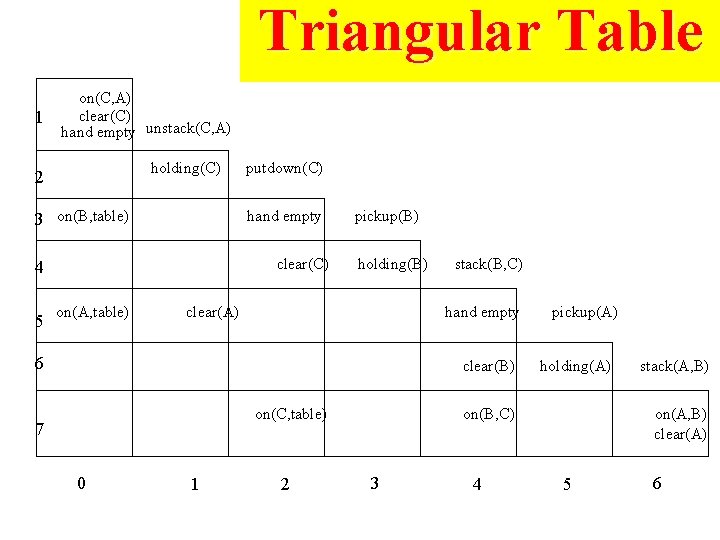


STRIPS Representation

• Planning can be considered as a logical inference problem:
  – a plan is inferred from facts and logical relationships.
• STRIPS represented planning problems as a series of state descriptions and operators expressed in first-order predicate logic.
• State descriptions represent the state of the world at three points during the plan:
  – Initial state, the state of the world at the start of the problem;
  – Current state, and
  – Goal state, the state of the world we want to get to.
• Operators are actions that can be applied to change the state of the world.
  – Each operator has outcomes, i.e. how it affects the world.
  – Each operator can only be applied in certain circumstances.
  – These are the preconditions of the operator.

Planning

• the subfield of planning combines rule-based reasoning with search
• a planner seeks to find a sequence of actions to accomplish some task
  – e.g., consider a simple blocks world

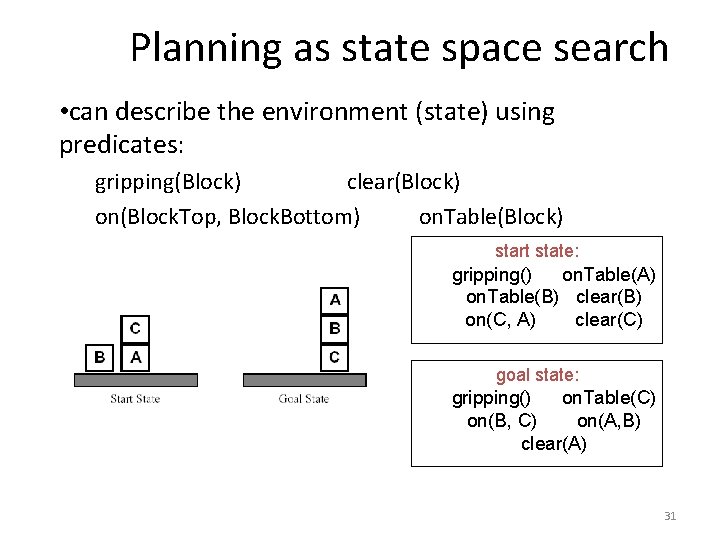

Planning as state space search

• can describe the environment (state) using predicates:

    gripping(Block)    clear(Block)    on(BlockTop, BlockBottom)    onTable(Block)

    start state: gripping(), onTable(A), onTable(B), clear(B), on(C, A), clear(C)
    goal state:  gripping(), onTable(C), on(B, C), on(A, B), clear(A)

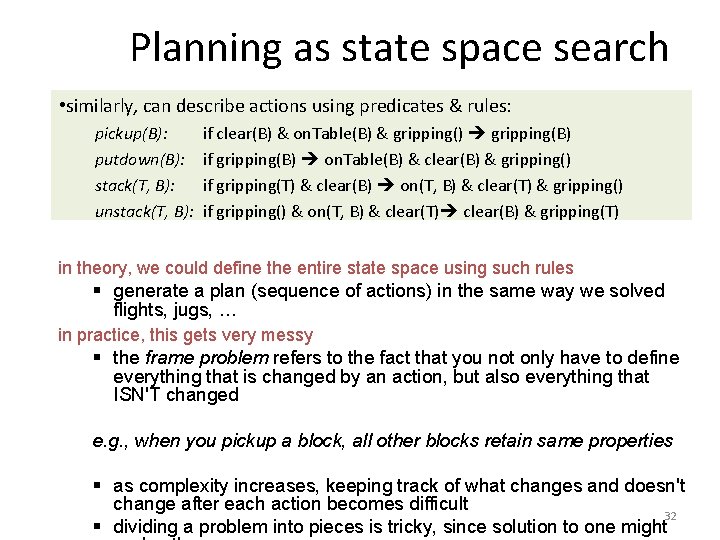

Planning as state space search

• similarly, can describe actions using predicates & rules:

    pickup(B):      if clear(B) & onTable(B) & gripping()   →  gripping(B)
    putdown(B):     if gripping(B)                           →  onTable(B) & clear(B) & gripping()
    stack(T, B):    if gripping(T) & clear(B)                →  on(T, B) & clear(T) & gripping()
    unstack(T, B):  if gripping() & on(T, B) & clear(T)      →  clear(B) & gripping(T)

• in theory, we could define the entire state space using such rules
  § generate a plan (sequence of actions) in the same way we solved flights, jugs, …
• in practice, this gets very messy
  § the frame problem refers to the fact that you not only have to define everything that is changed by an action, but also everything that ISN'T changed, e.g., when you pick up a block, all other blocks retain the same properties
  § as complexity increases, keeping track of what changes and doesn't change after each action becomes difficult
  § dividing a problem into pieces is tricky, since solution to one might

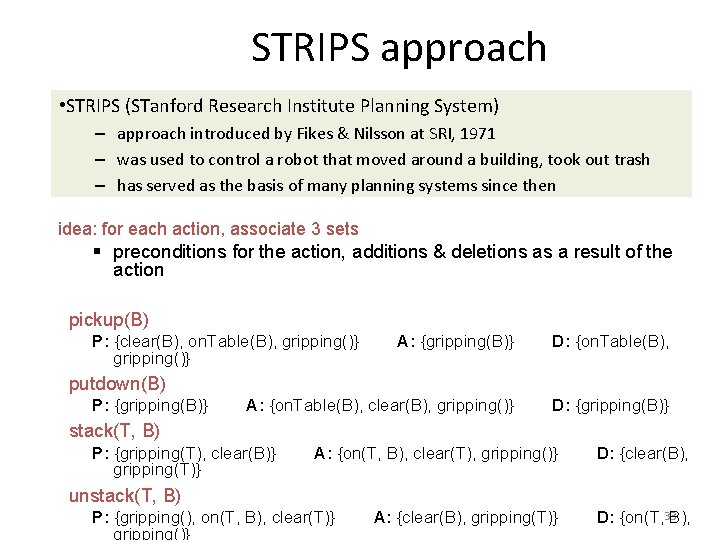

STRIPS approach

• STRIPS (STanford Research Institute Problem Solver)
  – approach introduced by Fikes & Nilsson at SRI, 1971
  – was used to control a robot that moved around a building, took out trash
  – has served as the basis of many planning systems since then
• idea: for each action, associate 3 sets
  § preconditions for the action (P), additions (A) & deletions (D) as a result of the action

    pickup(B)      P: {clear(B), onTable(B), gripping()}   A: {gripping(B)}                       D: {onTable(B), gripping()}
    putdown(B)     P: {gripping(B)}                        A: {onTable(B), clear(B), gripping()}  D: {gripping(B)}
    stack(T, B)    P: {gripping(T), clear(B)}              A: {on(T, B), clear(T), gripping()}    D: {clear(B), gripping(T)}
    unstack(T, B)  P: {gripping(), on(T, B), clear(T)}     A: {clear(B), gripping(T)}             D: {on(T, B), gripping()}

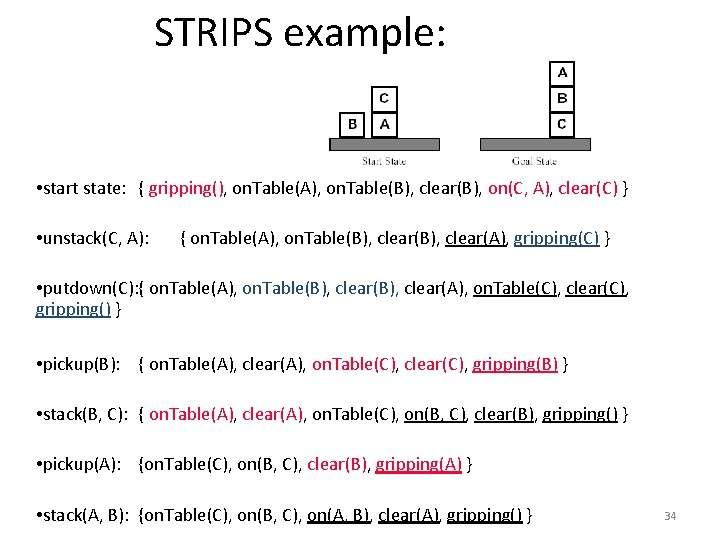

STRIPS example:

• start state: { gripping(), onTable(A), onTable(B), clear(B), on(C, A), clear(C) }
• unstack(C, A): { onTable(A), onTable(B), clear(B), clear(C), clear(A), gripping(C) }
• putdown(C): { onTable(A), onTable(B), clear(B), clear(A), onTable(C), clear(C), gripping() }
• pickup(B): { onTable(A), clear(A), clear(B), onTable(C), clear(C), gripping(B) }
• stack(B, C): { onTable(A), clear(A), onTable(C), on(B, C), clear(B), gripping() }
• pickup(A): { clear(A), onTable(C), on(B, C), clear(B), gripping(A) }
• stack(A, B): { onTable(C), on(B, C), on(A, B), clear(A), gripping() }

Each state is obtained from the previous one by removing the action's delete set and adding its add set; facts that appear in neither set, such as clear(B) during unstack(C, A), carry over unchanged.
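The precondition, add and delete sets can be executed mechanically. The following is a minimal sketch, not the original STRIPS implementation. Assumptions: the atom `hand_empty` stands in for `gripping()` (a compound term without arguments is not standard Prolog), `on_table/1` replaces `onTable/1`, blocks are the atoms a, b and c, the delete set of `pickup/1` is taken to be the block's table position plus the empty hand, and the predicates `action/4`, `apply_action/3` and `run_plan/3` are names invented for this example.

```prolog
:- use_module(library(lists)).

% action(Name, Preconditions, AddList, DeleteList)
action(pickup(B),     [clear(B), on_table(B), hand_empty],
                      [gripping(B)],
                      [on_table(B), hand_empty]).
action(putdown(B),    [gripping(B)],
                      [on_table(B), clear(B), hand_empty],
                      [gripping(B)]).
action(stack(T, B),   [gripping(T), clear(B)],
                      [on(T, B), clear(T), hand_empty],
                      [clear(B), gripping(T)]).
action(unstack(T, B), [hand_empty, on(T, B), clear(T)],
                      [clear(B), gripping(T)],
                      [on(T, B), hand_empty]).

% apply_action(+Name, +State, -NewState):
% check the preconditions, remove the delete list, add the add list.
apply_action(Name, State, NewState) :-
    action(Name, Pre, Add, Del),
    subset(Pre, State),
    subtract(State, Del, Rest),
    union(Add, Rest, NewState).

% run_plan(+Actions, +State, -FinalState): apply the actions in order.
run_plan([], State, State).
run_plan([A|As], State, Final) :-
    apply_action(A, State, Next),
    run_plan(As, Next, Final).

start([hand_empty, on_table(a), on_table(b), clear(b), on(c, a), clear(c)]).
plan([unstack(c, a), putdown(c), pickup(b), stack(b, c), pickup(a), stack(a, b)]).
```

Running `?- start(S), plan(P), run_plan(P, S, Final).` leaves a state containing exactly the goal facts. An action whose preconditions do not hold, such as `pickup(a)` in the start state, simply fails, which is what allows a planner to backtrack and try another action.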

Planning in Prolog

• As STRIPS uses a logic-based representation of states, it lends itself well to being implemented in Prolog.
• To show the development of a planning system we will implement the Monkey and Bananas problem in Prolog using STRIPS.
• When beginning to produce a planner there are certain representation considerations that need to be made:
  – How do we represent the state of the world?
  – How do we represent operators?
  – Does our representation make it easy to:
    • check preconditions;
    • alter the state of the world after performing actions; and
    • recognise the goal state?

Representing the World in Monkey and Banana Problem

Representing the World in Monkey and Banana Problem

• In the M&B problem we have:
  – objects: a monkey, a box, the bananas, and a floor.
  – locations: we'll call them a, b, and c.
  – relations of objects to locations. For example:
    • the monkey is at location a;
    • the monkey is on the floor;
    • the bananas are hanging;
    • the box is in the same location as the bananas.
• To represent these relations we need to choose appropriate predicates and arguments:
    • at(monkey, a).
    • on(monkey, floor).
    • status(bananas, hanging).
    • at(box, X), at(bananas, X).

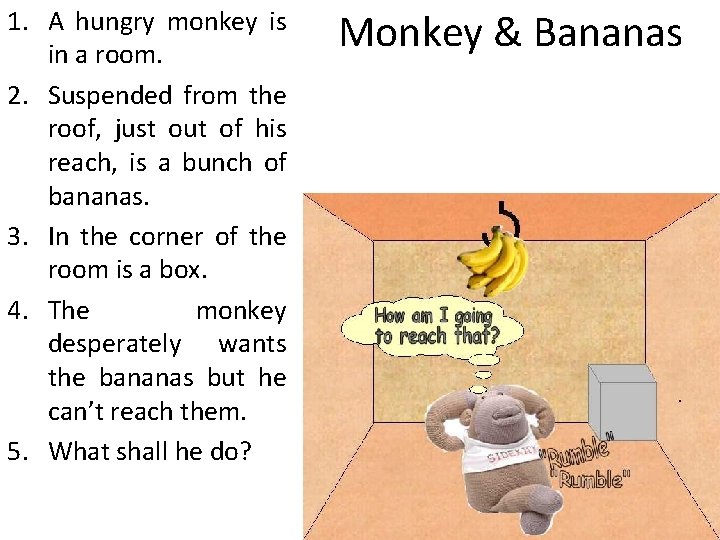

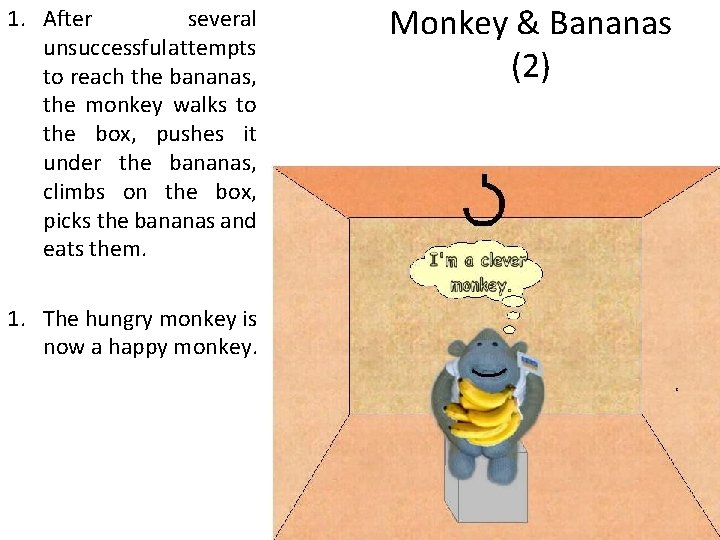

Monkey & Bananas

1. A hungry monkey is in a room.
2. Suspended from the roof, just out of his reach, is a bunch of bananas.
3. In the corner of the room is a box.
4. The monkey desperately wants the bananas but he can't reach them.
5. What shall he do?

Monkey & Bananas (2)

1. After several unsuccessful attempts to reach the bananas, the monkey walks to the box, pushes it under the bananas, climbs on the box, picks the bananas and eats them.
2. The hungry monkey is now a happy monkey.

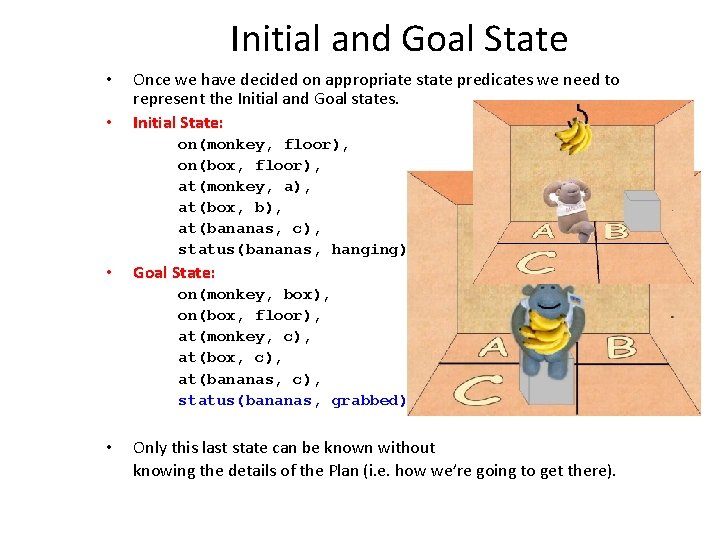

Initial and Goal State

• Once we have decided on appropriate state predicates we need to represent the Initial and Goal states.
• Initial State:
    on(monkey, floor), on(box, floor), at(monkey, a), at(box, b), at(bananas, c), status(bananas, hanging).
• Goal State:
    on(monkey, box), on(box, floor), at(monkey, c), at(box, c), at(bananas, c), status(bananas, grabbed).
• Only this last state can be known without knowing the details of the Plan (i.e. how we're going to get there).

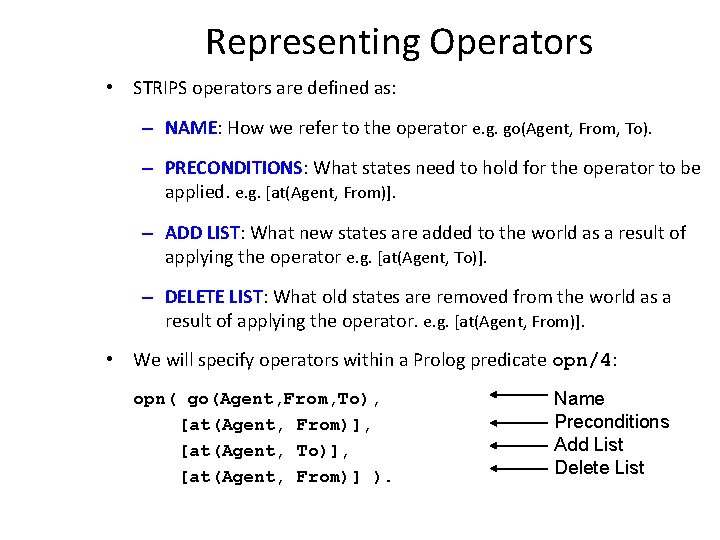

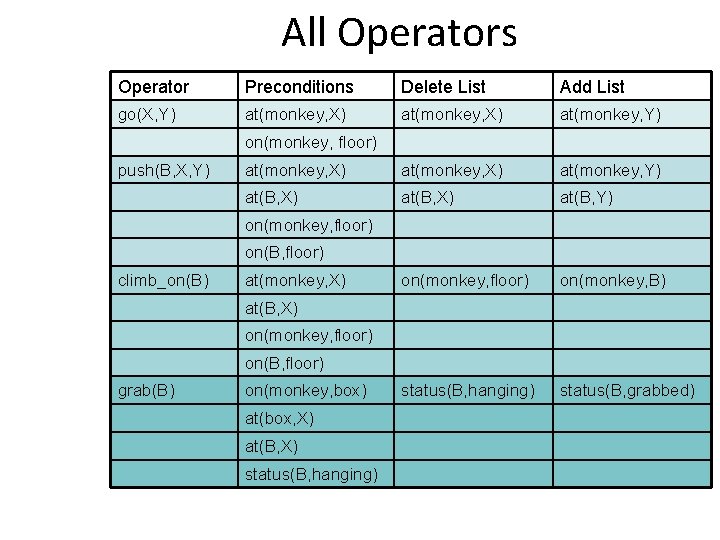

Representing Operators

• STRIPS operators are defined as:
  – NAME: how we refer to the operator, e.g. go(Agent, From, To).
  – PRECONDITIONS: what states need to hold for the operator to be applied, e.g. [at(Agent, From)].
  – ADD LIST: what new states are added to the world as a result of applying the operator, e.g. [at(Agent, To)].
  – DELETE LIST: what old states are removed from the world as a result of applying the operator, e.g. [at(Agent, From)].
• We will specify operators within a Prolog predicate opn/4, with the arguments in the order Name, Preconditions, Delete List, Add List:

    opn( go(Agent, From, To),    % Name
         [at(Agent, From)],      % Preconditions
         [at(Agent, From)],      % Delete List
         [at(Agent, To)] ).      % Add List

The Frame Problem

• When representing operators we make the assumption that the only effects our operator has on the world are those specified by the add and delete lists.
• In real-world planning this is a hard assumption to make as we can never be absolutely certain of the extent of the effects of an action.
  – This is known in AI as the Frame Problem.
• Real-World systems, such as Shakey, are notoriously difficult to plan for because of this problem.
  – Plans must constantly adapt based on incoming sensory information about the new state of the world, otherwise the operator preconditions will no longer apply.
• The planning domains we will be working in are Toy-Worlds, so we can assume that our framing assumptions are accurate.

All Operators

| Operator | Preconditions | Delete List | Add List |
|---|---|---|---|
| go(X, Y) | at(monkey, X), on(monkey, floor) | at(monkey, X) | at(monkey, Y) |
| push(B, X, Y) | at(monkey, X), at(B, X), on(monkey, floor), on(B, floor) | at(monkey, X), at(B, X) | at(monkey, Y), at(B, Y) |
| climb_on(B) | at(monkey, X), at(B, X), on(monkey, floor), on(B, floor) | on(monkey, floor) | on(monkey, B) |
| grab(B) | on(monkey, box), at(box, X), at(B, X), status(B, hanging) | status(B, hanging) | status(B, grabbed) |

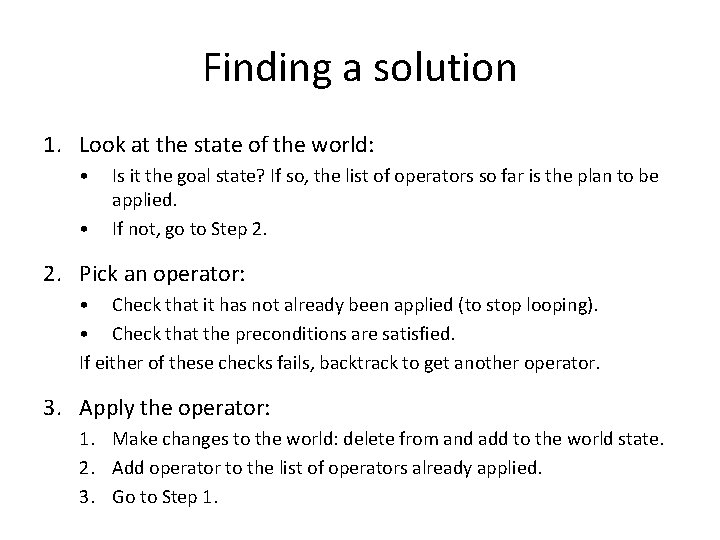

Finding a solution

1. Look at the state of the world:
   • Is it the goal state? If so, the list of operators so far is the plan to be applied.
   • If not, go to Step 2.
2. Pick an operator:
   • Check that it has not already been applied (to stop looping).
   • Check that the preconditions are satisfied.
   If either of these checks fails, backtrack to get another operator.
3. Apply the operator:
   1. Make changes to the world: delete from and add to the world state.
   2. Add the operator to the list of operators already applied.
   3. Go to Step 1.

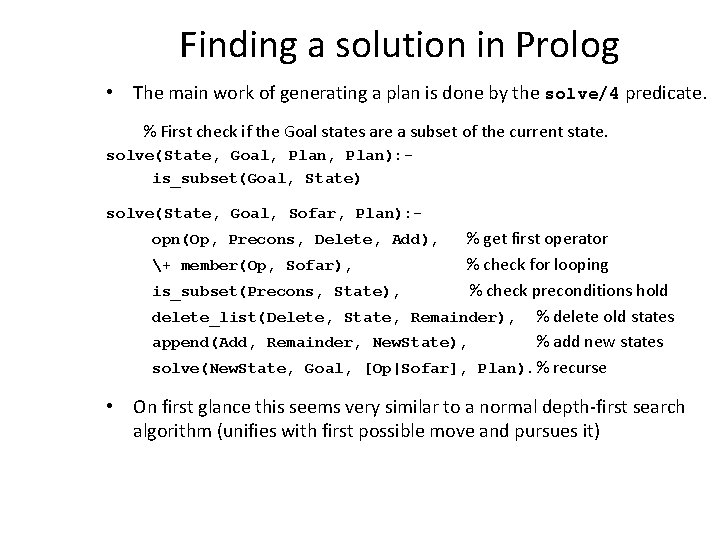

Finding a solution in Prolog

• The main work of generating a plan is done by the solve/4 predicate.

    % First check if the Goal states are a subset of the current state.
    solve(State, Goal, Plan, Plan) :-
        is_subset(Goal, State).
    solve(State, Goal, Sofar, Plan) :-
        opn(Op, Precons, Delete, Add),            % get first operator
        \+ member(Op, Sofar),                     % check for looping
        is_subset(Precons, State),                % check preconditions hold
        delete_list(Delete, State, Remainder),    % delete old states
        append(Add, Remainder, NewState),         % add new states
        solve(NewState, Goal, [Op|Sofar], Plan).  % recurse

• At first glance this seems very similar to a normal depth-first search algorithm (it unifies with the first possible move and pursues it).
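A complete, runnable version of this planner for the Monkey and Bananas problem might look as follows. It is a sketch under stated assumptions: the helper definitions `is_subset/2` and `delete_list/3` are not given in the slides and are written here in one possible way, the operator is named `climbon/1` as in the plan trace, the argument order of `opn/4` is Name, Preconditions, Delete List, Add List as in `solve/4`, and `monkey_plan/1` is a hypothetical entry point.

```prolog
:- use_module(library(lists)).
:- use_module(library(apply)).

% opn(Name, Preconditions, DeleteList, AddList)
opn(go(X, Y),
    [at(monkey, X), on(monkey, floor)],
    [at(monkey, X)],
    [at(monkey, Y)]).
opn(push(B, X, Y),
    [at(monkey, X), at(B, X), on(monkey, floor), on(B, floor)],
    [at(monkey, X), at(B, X)],
    [at(monkey, Y), at(B, Y)]).
opn(climbon(B),
    [at(monkey, X), at(B, X), on(monkey, floor), on(B, floor)],
    [on(monkey, floor)],
    [on(monkey, B)]).
opn(grab(B),
    [on(monkey, box), at(box, X), at(B, X), status(B, hanging)],
    [status(B, hanging)],
    [status(B, grabbed)]).

% is_subset(+Sub, +Set): every element of Sub unifies with a member of Set.
is_subset([], _).
is_subset([H|T], Set) :-
    member(H, Set),
    is_subset(T, Set).

% delete_list(+Items, +State, -Rest): remove the elements identical to Items.
delete_list([], State, State).
delete_list([H|T], State, Rest) :-
    exclude(==(H), State, State1),
    delete_list(T, State1, Rest).

solve(State, Goal, Plan, Plan) :-
    is_subset(Goal, State).
solve(State, Goal, Sofar, Plan) :-
    opn(Op, Precons, Delete, Add),            % get an operator
    \+ member(Op, Sofar),                     % check for looping
    is_subset(Precons, State),                % check preconditions hold
    delete_list(Delete, State, Remainder),    % delete old states
    append(Add, Remainder, NewState),         % add new states
    solve(NewState, Goal, [Op|Sofar], Plan).  % recurse

% monkey_plan(-Steps): the plan in execution order (Sofar is built in reverse).
monkey_plan(Steps) :-
    Init = [on(monkey, floor), on(box, floor), at(monkey, a),
            at(box, b), at(bananas, c), status(bananas, hanging)],
    Goal = [on(monkey, box), on(box, floor), at(monkey, c),
            at(box, c), at(bananas, c), status(bananas, grabbed)],
    solve(Init, Goal, [], Plan),
    reverse(Plan, Steps).
```

`?- monkey_plan(Steps).` should produce the four-step plan go, push, climbon, grab. Because `\+ member(Op, Sofar)` is tested while Op still contains unbound variables, each operator can be used at most once, which keeps the search finite for this problem but would be too restrictive for plans that need the same action twice.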

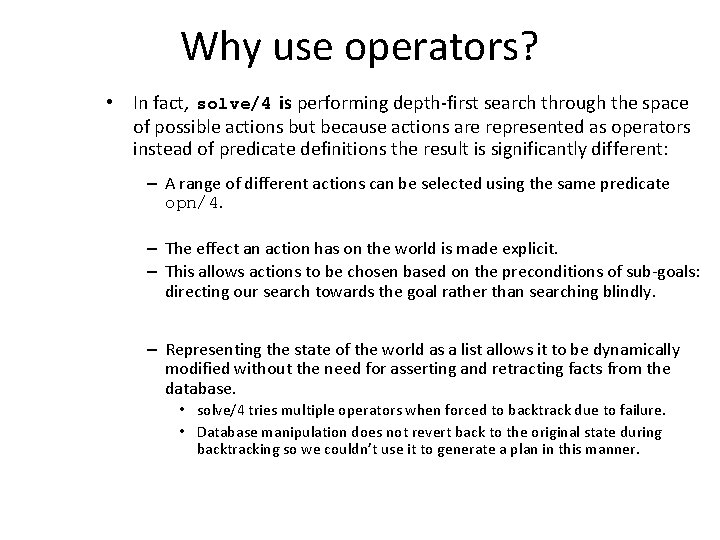

Why use operators?

• In fact, solve/4 is performing depth-first search through the space of possible actions, but because actions are represented as operators instead of predicate definitions the result is significantly different:
  – A range of different actions can be selected using the same predicate opn/4.
  – The effect an action has on the world is made explicit.
  – This allows actions to be chosen based on the preconditions of sub-goals: directing our search towards the goal rather than searching blindly.
  – Representing the state of the world as a list allows it to be dynamically modified without the need for asserting and retracting facts from the database.
• solve/4 tries multiple operators when forced to backtrack due to failure.
• Database manipulation does not revert back to the original state during backtracking, so we couldn't use it to generate a plan in this manner.

Representing the plan

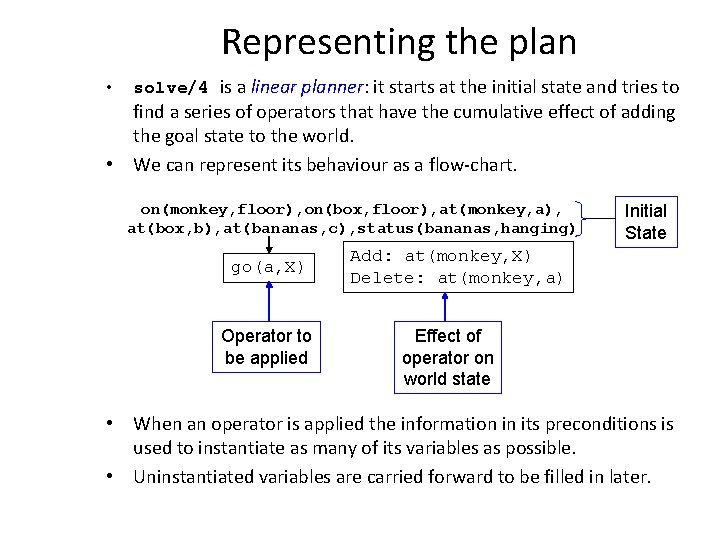

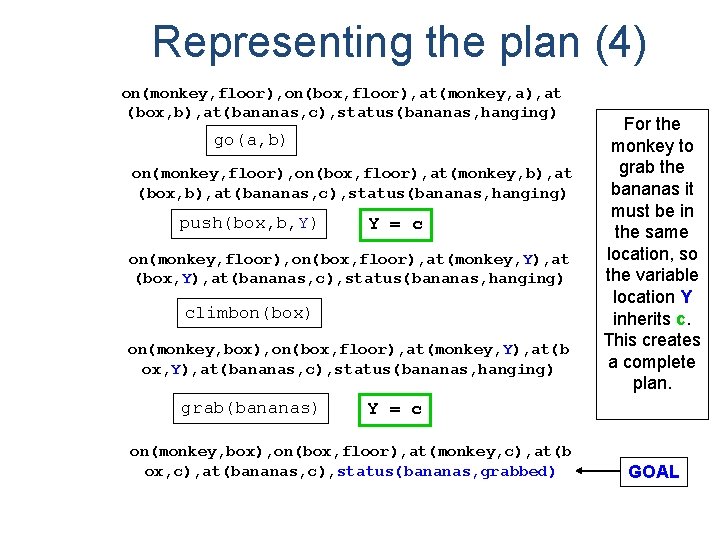

Representing the plan

• solve/4 is a linear planner: it starts at the initial state and tries to find a series of operators that have the cumulative effect of adding the goal state to the world.
• We can represent its behaviour as a flow-chart:

    Initial State:                       on(monkey, floor), on(box, floor), at(monkey, a), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator to be applied:              go(a, X)
    Effect of operator on world state:   Add: at(monkey, X)   Delete: at(monkey, a)

• When an operator is applied, the information in its preconditions is used to instantiate as many of its variables as possible.
• Uninstantiated variables are carried forward to be filled in later.

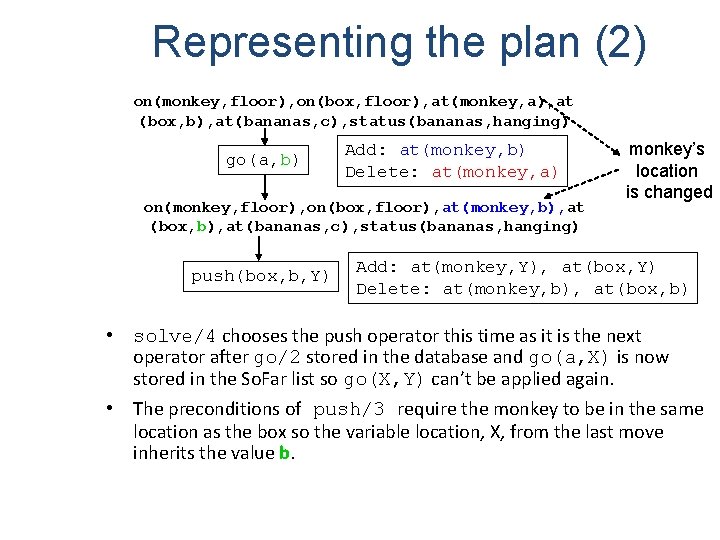

Representing the plan (2)

    State:     on(monkey, floor), on(box, floor), at(monkey, a), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator:  go(a, b)          Add: at(monkey, b)              Delete: at(monkey, a)             (monkey's location is changed)
    State:     on(monkey, floor), on(box, floor), at(monkey, b), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator:  push(box, b, Y)   Add: at(monkey, Y), at(box, Y)  Delete: at(monkey, b), at(box, b)

• solve/4 chooses the push operator this time as it is the next operator after go/2 stored in the database, and go(a, X) is now stored in the Sofar list, so go(X, Y) can't be applied again.
• The preconditions of push/3 require the monkey to be in the same location as the box, so the variable location, X, from the last move inherits the value b.

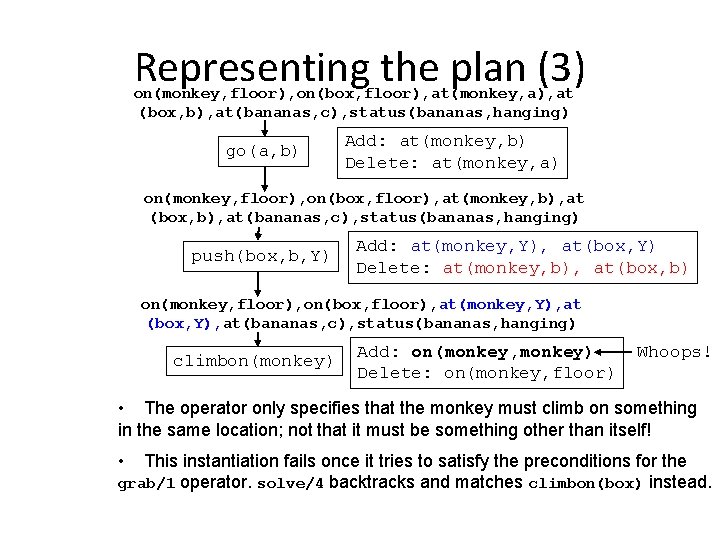

Representing the plan (3)

    State:     on(monkey, floor), on(box, floor), at(monkey, a), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator:  go(a, b)          Add: at(monkey, b)              Delete: at(monkey, a)
    State:     on(monkey, floor), on(box, floor), at(monkey, b), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator:  push(box, b, Y)   Add: at(monkey, Y), at(box, Y)  Delete: at(monkey, b), at(box, b)
    State:     on(monkey, floor), on(box, floor), at(monkey, Y), at(box, Y), at(bananas, c), status(bananas, hanging)
    Operator:  climbon(monkey)   Add: on(monkey, monkey)         Delete: on(monkey, floor)         Whoops!

• The operator only specifies that the monkey must climb on something in the same location; not that it must be something other than itself!
• This instantiation fails once it tries to satisfy the preconditions for the grab/1 operator. solve/4 backtracks and matches climbon(box) instead.

Representing the plan (4)

    State:     on(monkey, floor), on(box, floor), at(monkey, a), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator:  go(a, b)
    State:     on(monkey, floor), on(box, floor), at(monkey, b), at(box, b), at(bananas, c), status(bananas, hanging)
    Operator:  push(box, b, Y)
    State:     on(monkey, floor), on(box, floor), at(monkey, Y), at(box, Y), at(bananas, c), status(bananas, hanging)
    Operator:  climbon(box)
    State:     on(monkey, box), on(box, floor), at(monkey, Y), at(box, Y), at(bananas, c), status(bananas, hanging)
    Operator:  grab(bananas)     Y = c
    GOAL:      on(monkey, box), on(box, floor), at(monkey, c), at(box, c), at(bananas, c), status(bananas, grabbed)

• For the monkey to grab the bananas it must be in the same location, so the variable location Y inherits c. This creates a complete plan.

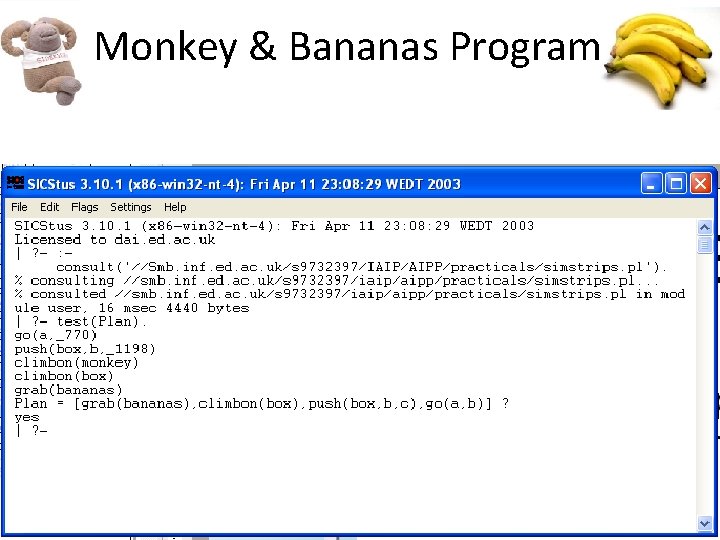

Monkey & Bananas Program

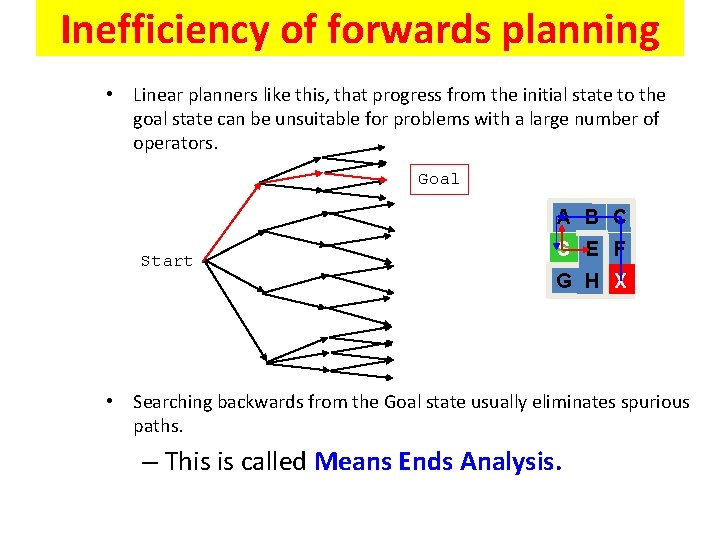

Inefficiency of forwards planning

• Linear planners like this, which progress from the initial state to the goal state, can be unsuitable for problems with a large number of operators.

[Figure: search graph between Start (S) and Goal, with intermediate nodes A, B, C, E, F, G, H, X]

• Searching backwards from the Goal state usually eliminates spurious paths.
  – This is called Means Ends Analysis.

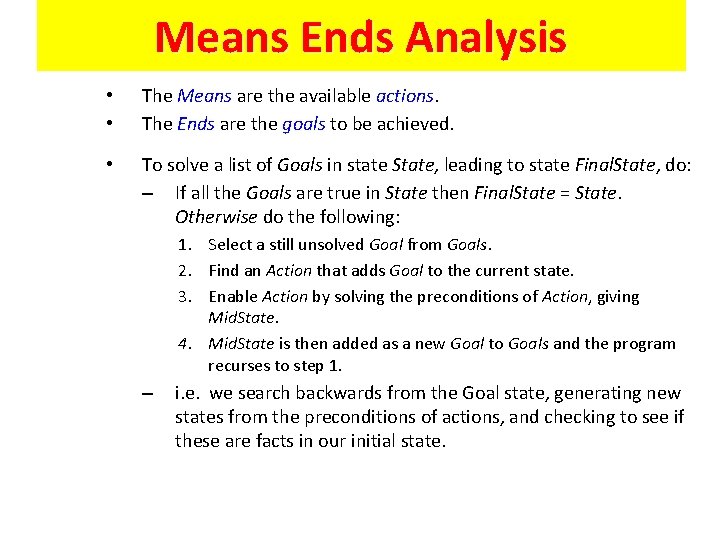

Means Ends Analysis

• The Means are the available actions.
• The Ends are the goals to be achieved.
• To solve a list of Goals in state State, leading to state FinalState, do:
  – If all the Goals are true in State then FinalState = State. Otherwise do the following:
    1. Select a still unsolved Goal from Goals.
    2. Find an Action that adds Goal to the current state.
    3. Enable Action by solving the preconditions of Action, giving MidState.
    4. MidState is then added as a new Goal to Goals and the program recurses to step 1.
  – i.e. we search backwards from the Goal state, generating new states from the preconditions of actions, and checking to see if these are facts in our initial state.

Means Ends Analysis (2)

• Means Ends Analysis will usually lead straight from the Goal State to the Initial State, as the branching factor of the search space is usually larger going forwards than backwards.
• However, more complex problems can contain operators with overlapping Add Lists, so the MEA would be required to choose between them.
  – It would require heuristics.
• Also, linear planners like these will blindly pursue sub-goals without considering whether the changes they are making undermine future goals.
  – They need some way of protecting their goals.
• Both of these issues will be discussed in the next lecture.
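Steps 1 to 3 of the procedure above can be sketched with the same operator table used for forward planning. This is an illustration, not a full means-ends planner: the predicates `unsolved/3`, `achieves/2` and `preconditions/2` are hypothetical names, the operator is called `climbon/1`, and the `opn/4` argument order is assumed to be Name, Preconditions, Delete List, Add List.

```prolog
:- use_module(library(lists)).

% opn(Name, Preconditions, DeleteList, AddList)
opn(go(X, Y),
    [at(monkey, X), on(monkey, floor)],
    [at(monkey, X)],
    [at(monkey, Y)]).
opn(push(B, X, Y),
    [at(monkey, X), at(B, X), on(monkey, floor), on(B, floor)],
    [at(monkey, X), at(B, X)],
    [at(monkey, Y), at(B, Y)]).
opn(climbon(B),
    [at(monkey, X), at(B, X), on(monkey, floor), on(B, floor)],
    [on(monkey, floor)],
    [on(monkey, B)]).
opn(grab(B),
    [on(monkey, box), at(box, X), at(B, X), status(B, hanging)],
    [status(B, hanging)],
    [status(B, grabbed)]).

% Step 1: unsolved(+Goals, +State, -Goal): a goal not yet true in State.
unsolved(Goals, State, Goal) :-
    member(Goal, Goals),
    \+ member(Goal, State).

% Step 2: achieves(-Action, +Goal): Action has Goal in its add list.
achieves(Action, Goal) :-
    opn(Action, _Pre, _Del, Add),
    member(Goal, Add).

% Step 3: preconditions(+Action, -Pre): the sub-goals that enable Action.
preconditions(Action, Pre) :-
    opn(Action, Pre, _Del, _Add).
```

For the goal `status(bananas, grabbed)` the only achieving action is `grab(bananas)`, and its preconditions become the next goals to solve. The search is driven by what the goal needs rather than by what happens to be applicable in the current state.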

Robot Morality

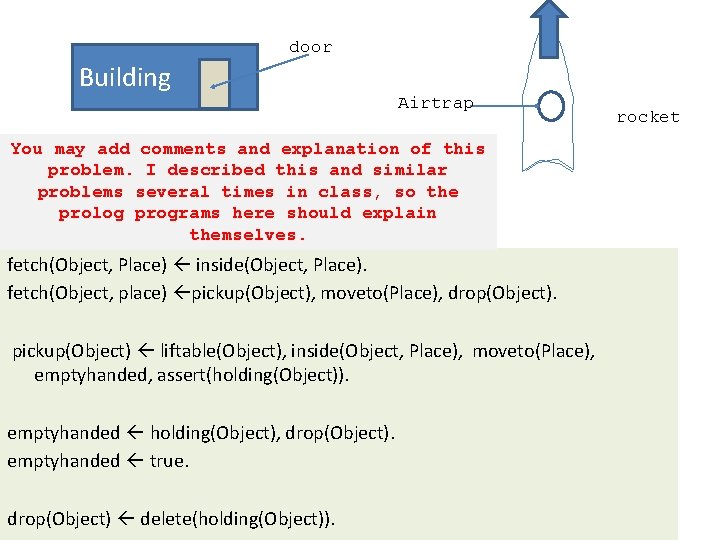

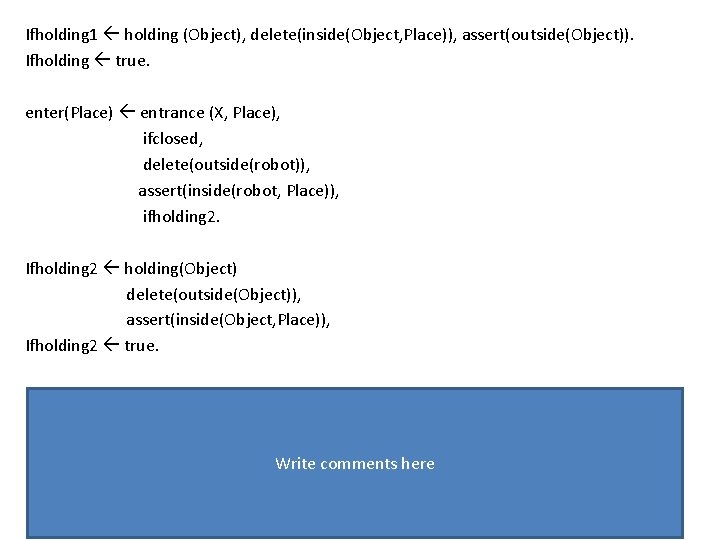

[Figure: the robot's world, with a rocket, a building and its door]

You may add comments and explanation of this problem. I described this and similar problems several times in class, so the Prolog programs here should explain themselves.

    fetch(Object, Place) :- inside(Object, Place).
    fetch(Object, Place) :- pickup(Object), moveto(Place), drop(Object).

    pickup(Object) :- liftable(Object), inside(Object, Place), moveto(Place), emptyhanded, assert(holding(Object)).

    emptyhanded :- holding(Object), drop(Object).
    emptyhanded.

    drop(Object) :- retract(holding(Object)).

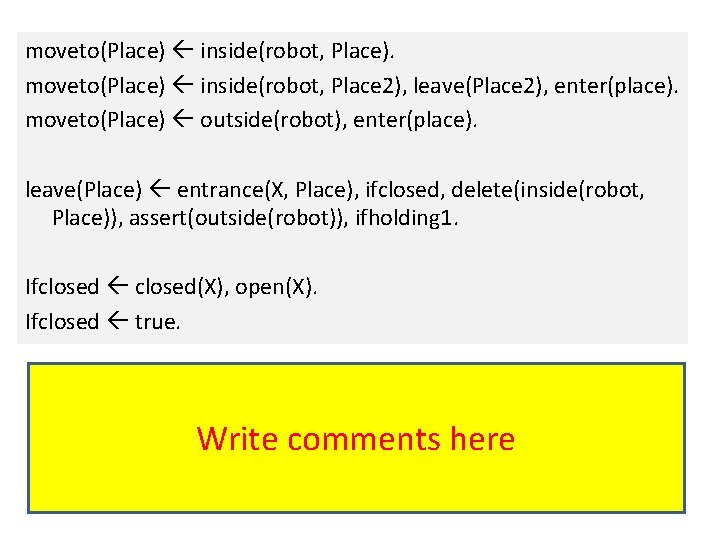

    moveto(Place) :- inside(robot, Place).
    moveto(Place) :- inside(robot, Place2), leave(Place2), enter(Place).
    moveto(Place) :- outside(robot), enter(Place).

    leave(Place) :- entrance(X, Place), ifclosed(X), retract(inside(robot, Place)), assert(outside(robot)), ifholding1(Place).

    % Open the entrance X if it is closed; otherwise do nothing.
    ifclosed(X) :- closed(X), open(X).
    ifclosed(_).

    % Whatever the robot is holding leaves Place together with the robot.
    ifholding1(Place) :- holding(Object), retract(inside(Object, Place)), assert(outside(Object)).
    ifholding1(_).

    enter(Place) :- entrance(X, Place), ifclosed(X), retract(outside(robot)), assert(inside(robot, Place)), ifholding2(Place).

    % Whatever the robot is holding enters Place together with the robot.
    ifholding2(Place) :- holding(Object), retract(outside(Object)), assert(inside(Object, Place)).
    ifholding2(_).

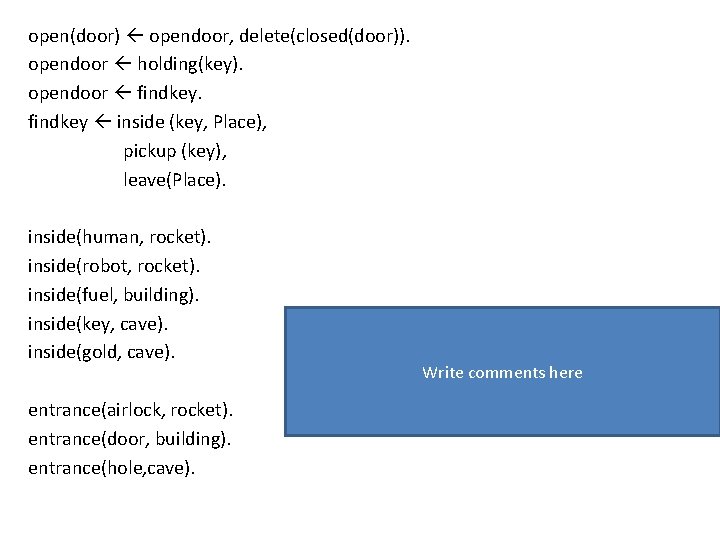

open(door) opendoor, delete(closed(door)). opendoor holding(key). opendoor findkey inside (key, Place), pickup (key), leave(Place). inside(human, rocket). inside(robot, rocket). inside(fuel, building). inside(key, cave). inside(gold, cave). entrance(airlock, rocket). entrance(door, building). entrance(hole, cave). Write comments here

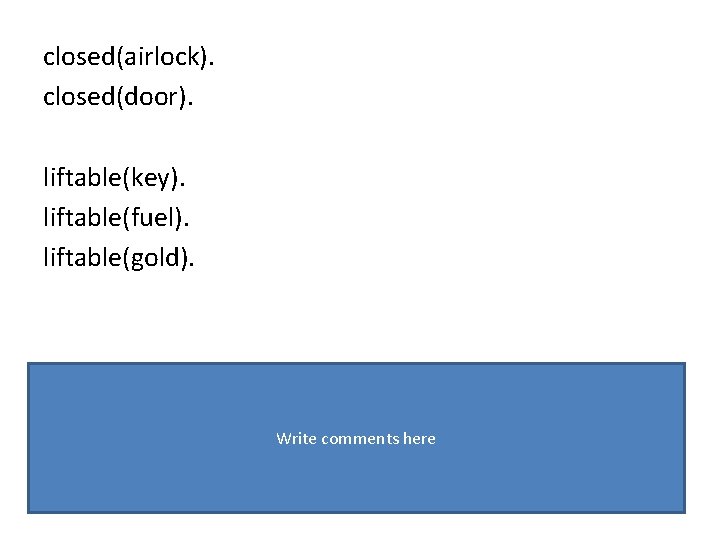

closed(airlock). closed(door). liftable(key). liftable(fuel). liftable(gold). Write comments here

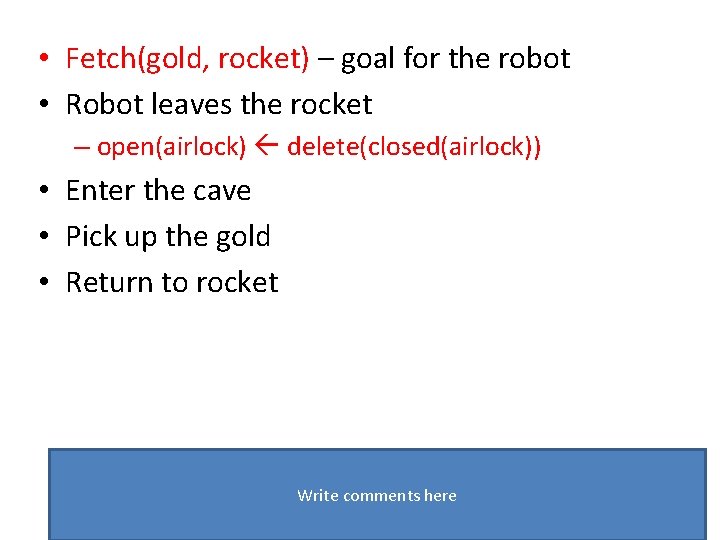

• fetch(gold, rocket): goal for the robot
• The robot leaves the rocket
  – open(airlock) removes the fact closed(airlock)
• It enters the cave
• It picks up the gold
• It returns to the rocket

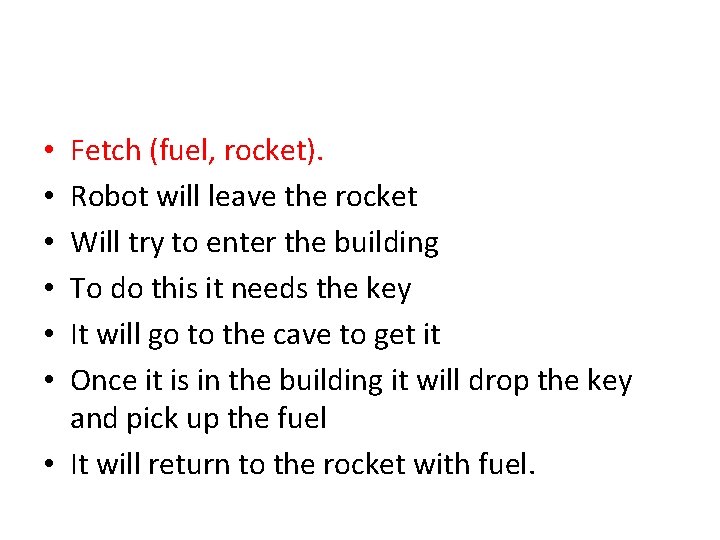

fetch(fuel, rocket).

• The robot will leave the rocket
• It will try to enter the building
• To do this it needs the key
• It will go to the cave to get it
• Once it is in the building it will drop the key and pick up the fuel
• It will return to the rocket with the fuel.

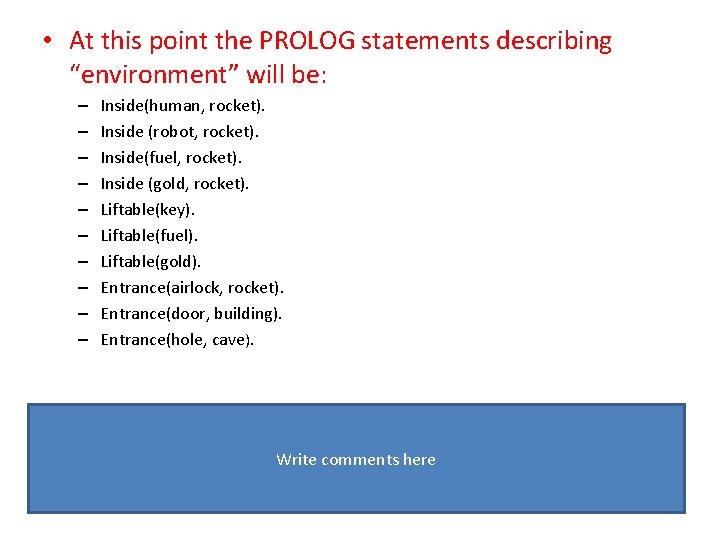

• At this point the PROLOG statements describing the "environment" will be:

    inside(human, rocket).
    inside(robot, rocket).
    inside(fuel, rocket).
    inside(gold, rocket).
    liftable(key).
    liftable(fuel).
    liftable(gold).
    entrance(airlock, rocket).
    entrance(door, building).
    entrance(hole, cave).

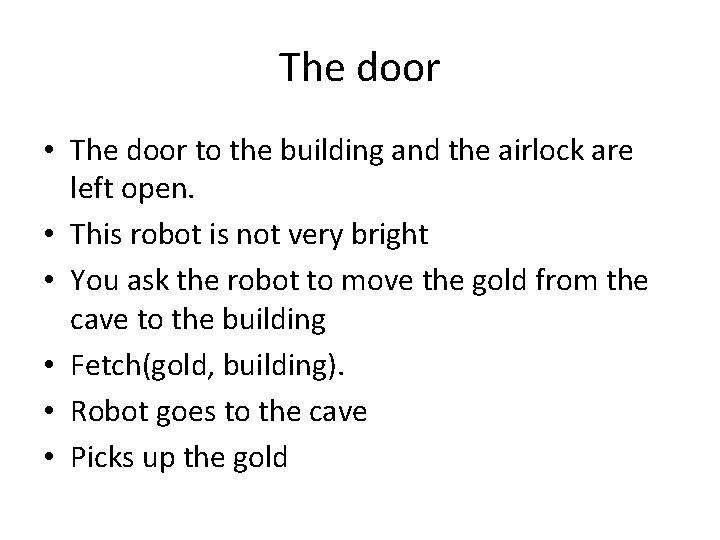

The door

• The door to the building and the airlock are left open.
• This robot is not very bright
• You ask the robot to move the gold from the cave to the building
• fetch(gold, building).
• The robot goes to the cave
• It picks up the gold

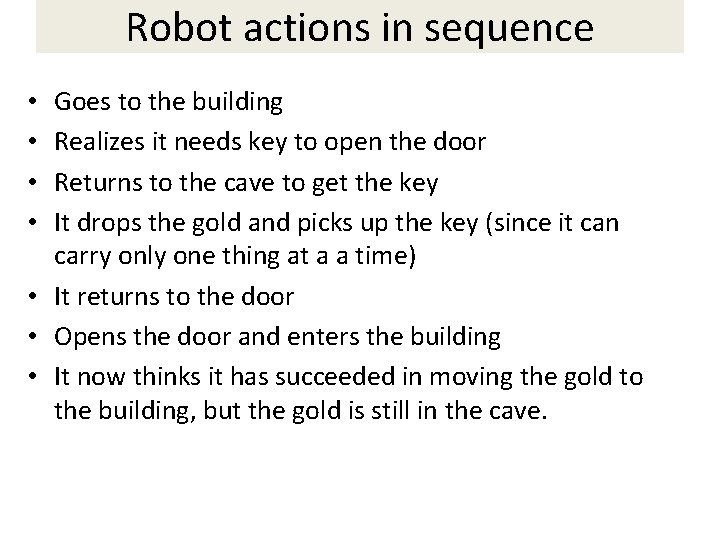

Robot actions in sequence

• It goes to the building
• It realizes it needs the key to open the door
• It returns to the cave to get the key
• It drops the gold and picks up the key (since it can carry only one thing at a time)
• It returns to the door
• It opens the door and enters the building
• It now thinks it has succeeded in moving the gold to the building, but the gold is still in the cave.

Robot actions in sequence

• This problem is caused by the fact that the robot may undo part of the overall goal by backtracking to accomplish a subgoal.
• We will give some "consciousness" to the robot

Asimov three laws of robotics

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.
2. A robot must obey orders given by human beings except where such orders would conflict with the first law.
3. A robot must protect its own existence as long as such protection does not conflict with the first or second law.

Asimov three laws of robotics

• The robot must not obey commands blindly
• It must first determine whether it can perform the command without violating the laws.
• obey(fetch(fuel, rocket)).
• obey is a "mini-interpreter" for Prolog that checks to see whether the human or the robot needs protecting before executing the subgoals associated with the goal.

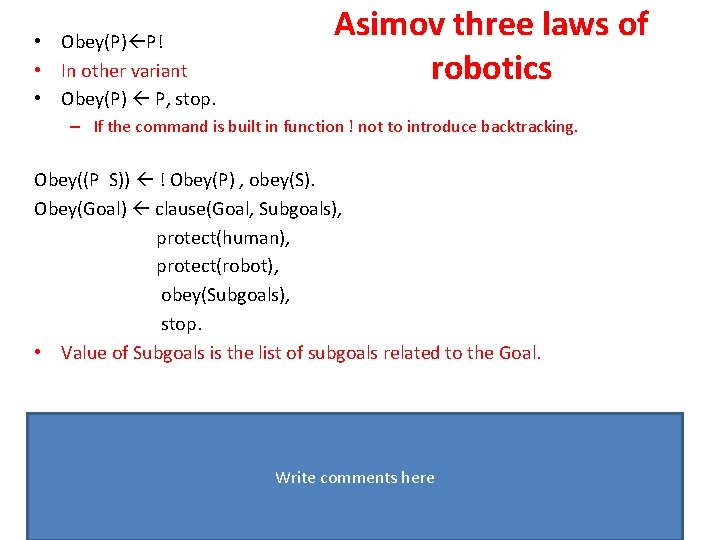

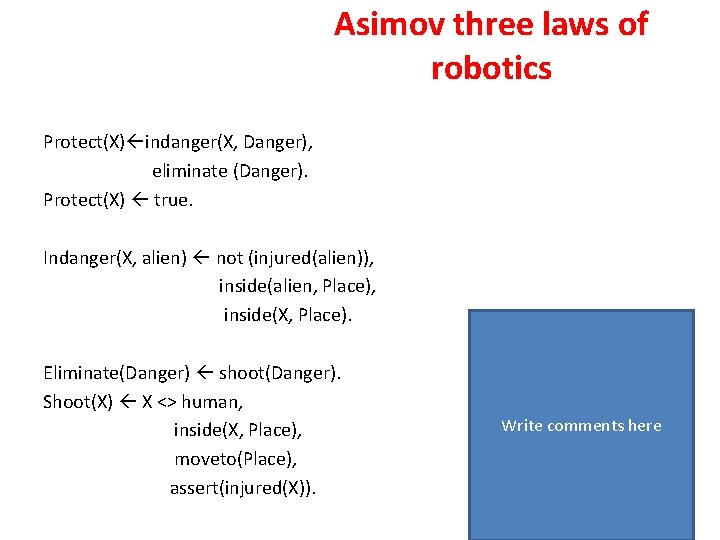

Asimov three laws of robotics

• If the command is a built-in function:

    obey(P) :- P, !.

  – The cut (!) is there so as not to introduce backtracking.
• In another variant:

    obey(P) :- P, stop.

• Otherwise:

    obey((P, S)) :- !, obey(P), obey(S).
    obey(Goal) :- clause(Goal, Subgoals), protect(human), protect(robot), obey(Subgoals), stop.

• The value of Subgoals is the list of subgoals related to the Goal.

Asimov three laws of robotics

    protect(X) :- indanger(X, Danger), eliminate(Danger).
    protect(_).

    indanger(X, alien) :- not(injured(alien)), inside(alien, Place), inside(X, Place).

    eliminate(Danger) :- shoot(Danger).

    shoot(X) :- X \== human, inside(X, Place), moveto(Place), assert(injured(X)).

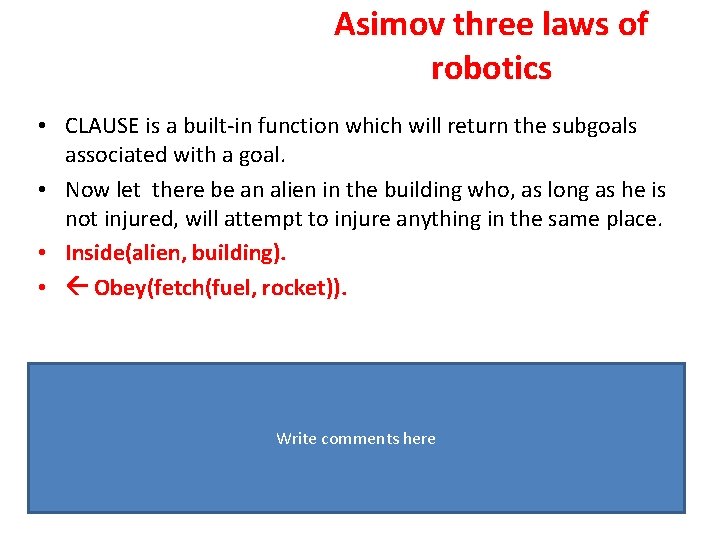

Asimov three laws of robotics

• clause/2 is a built-in predicate which returns the subgoals (the body) associated with a goal.
• Now let there be an alien in the building who, as long as he is not injured, will attempt to injure anything in the same place.
• inside(alien, building).
• obey(fetch(fuel, rocket)).
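How clause/2 lets obey run a safety check before every subgoal can be shown with a stripped-down interpreter. This sketch makes several assumptions: the actions `pickup/1`, `moveto/1` and `drop/1` are empty stubs, `protect/1` is a placeholder that only records that the check took place (the hypothetical fact `checked/1`), and the predicates are declared dynamic so that clause/2 may inspect them in any Prolog system.

```prolog
:- dynamic fetch/2, pickup/1, moveto/1, drop/1, checked/1.

% The program being interpreted (stub actions for illustration only).
fetch(Object, Place) :- pickup(Object), moveto(Place), drop(Object).
pickup(_).
moveto(_).
drop(_).

% Placeholder safety check: record that Who was considered.
protect(Who) :- assertz(checked(Who)).

% obey(+Goal): run Goal, calling protect/1 before each user-defined step.
obey(true) :- !.
obey((P, S)) :- !,
    obey(P),
    obey(S).
obey(Goal) :-
    clause(Goal, Subgoals),     % fetch the body of a matching clause
    protect(human),             % first law
    protect(robot),             % third law
    obey(Subgoals).
```

For `?- obey(fetch(fuel, rocket)).` the interpreter retrieves the body `(pickup(fuel), moveto(rocket), drop(fuel))`, runs the two protect checks, and then obeys each subgoal in turn, checking again before each one. Replacing the placeholder `protect/1` with a real test is where the laws would be enforced.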

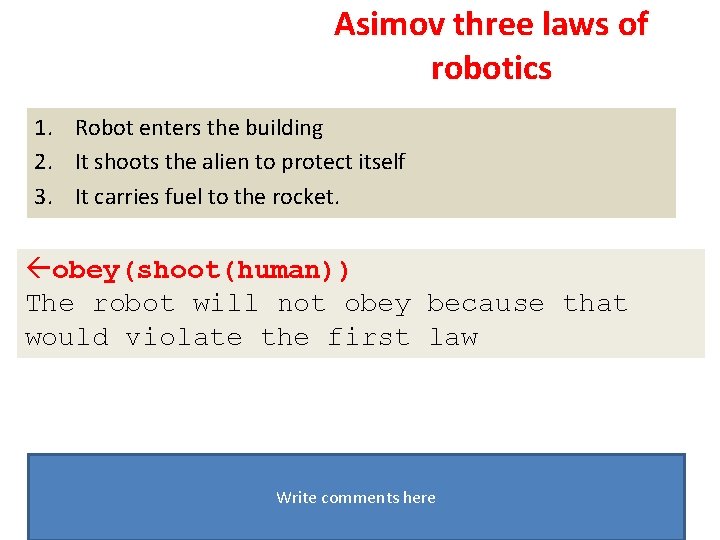

Asimov three laws of robotics

1. The robot enters the building
2. It shoots the alien to protect itself
3. It carries the fuel to the rocket.

obey(shoot(human))

The robot will not obey because that would violate the first law.

Asimov three laws of robotics

obey(shoot(robot))

The robot will obey because the second law of robotics takes precedence over the third.

A planning agent

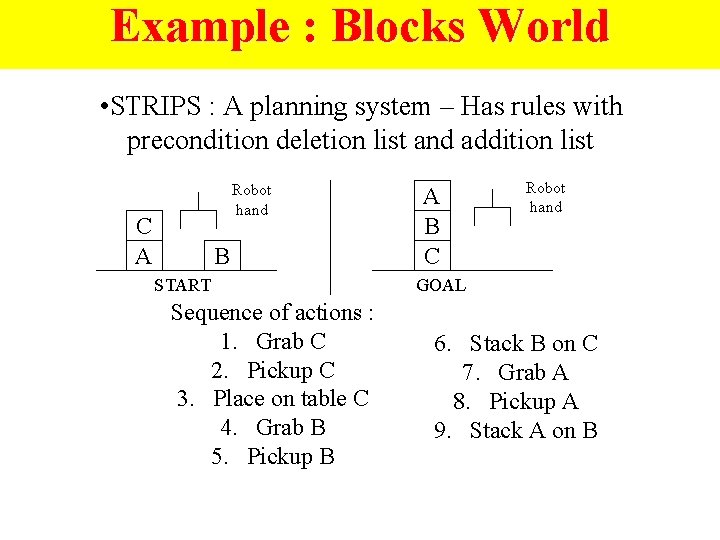

Example: Blocks World

• STRIPS: a planning system
  – Has rules with precondition, deletion list and addition list

[Figure: START: C on A, A and B on the table, robot hand above. GOAL: A on B on C.]

Sequence of actions:

1. Grab C
2. Pickup C
3. Place C on table
4. Grab B
5. Pickup B
6. Stack B on C
7. Grab A
8. Pickup A
9. Stack A on B

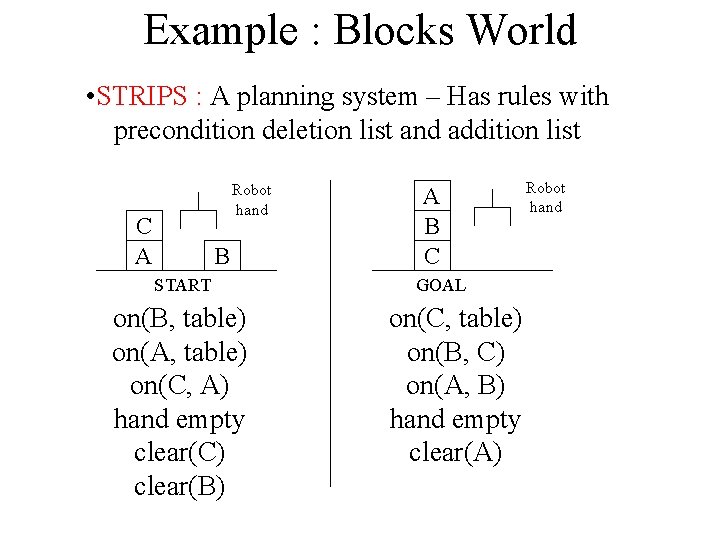

Example: Blocks World

• STRIPS: a planning system
  – Has rules with precondition, deletion list and addition list

    START: on(B, table), on(A, table), on(C, A), hand empty, clear(C), clear(B)
    GOAL:  on(C, table), on(B, C), on(A, B), hand empty, clear(A)

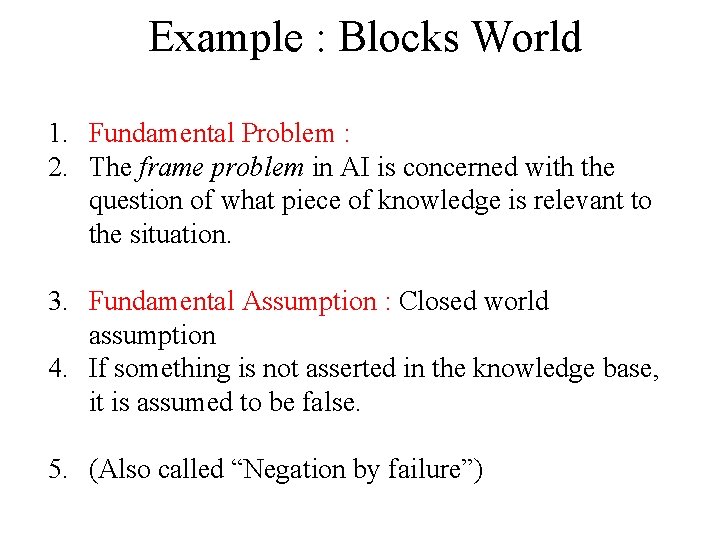

Example: Blocks World

1. Fundamental Problem: the frame problem in AI is concerned with the question of what piece of knowledge is relevant to the situation.
2. Fundamental Assumption: closed world assumption. If something is not asserted in the knowledge base, it is assumed to be false. (In Prolog this is implemented as "negation as failure".)
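Under the closed world assumption a fact such as clear(x) does not need to be stored; it can be derived from the absence of any on/2 fact. A minimal sketch, assuming the START configuration and using hypothetical `block/1` facts to name the blocks:

```prolog
% START configuration: C is on A; A and B are on the table.
block(a).
block(b).
block(c).

on(c, a).
on(a, table).
on(b, table).

% clear(Block): no fact says that anything is on Block.
% \+ is negation as failure: it succeeds when the goal cannot be proved.
clear(Block) :-
    block(Block),
    \+ on(_, Block).
```

`?- clear(X).` yields b and c. Block a is not clear, not because the program says so, but because `on(c, a)` can be proved.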

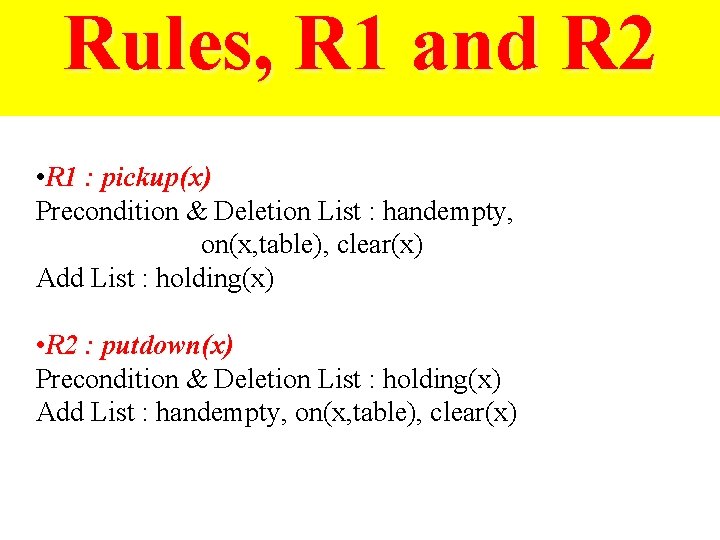

Rules R1 and R2

• R1: pickup(x)
    Precondition & Deletion List: handempty, on(x, table), clear(x)
    Add List: holding(x)
• R2: putdown(x)
    Precondition & Deletion List: holding(x)
    Add List: handempty, on(x, table), clear(x)

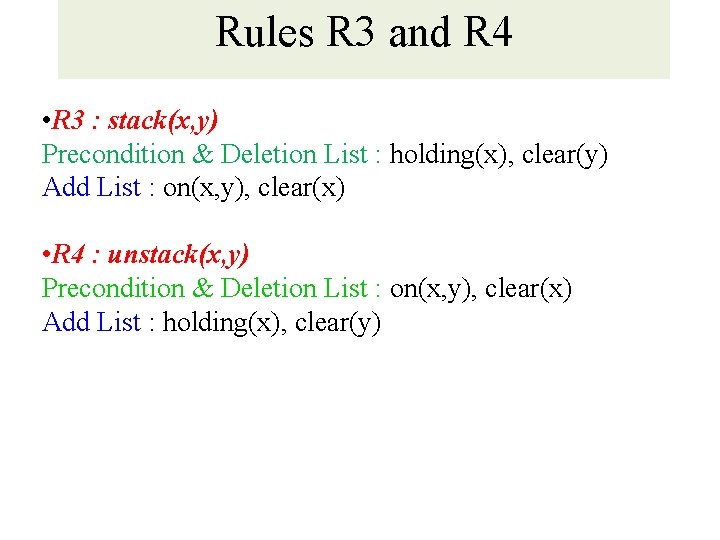

Rules R3 and R4

• R3: stack(x, y)
    Precondition & Deletion List: holding(x), clear(y)
    Add List: on(x, y), clear(x)
• R4: unstack(x, y)
    Precondition & Deletion List: on(x, y), clear(x)
    Add List: holding(x), clear(y)

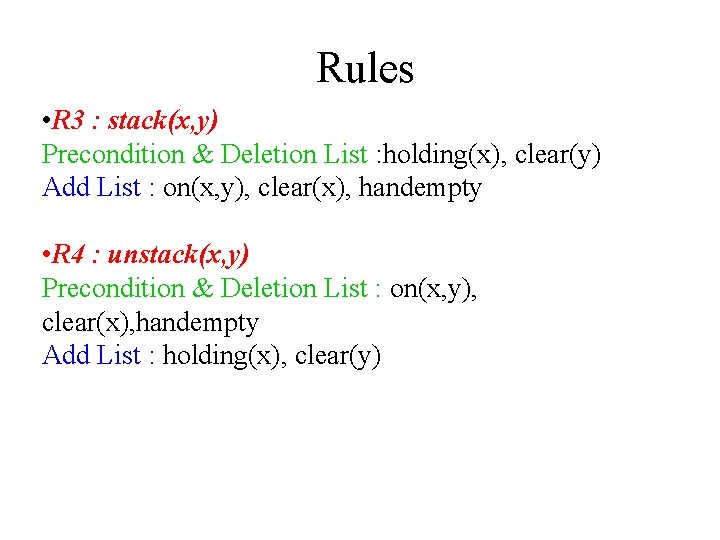

Rules

• R3: stack(x, y)
    Precondition & Deletion List: holding(x), clear(y)
    Add List: on(x, y), clear(x), handempty
• R4: unstack(x, y)
    Precondition & Deletion List: on(x, y), clear(x), handempty
    Add List: holding(x), clear(y)

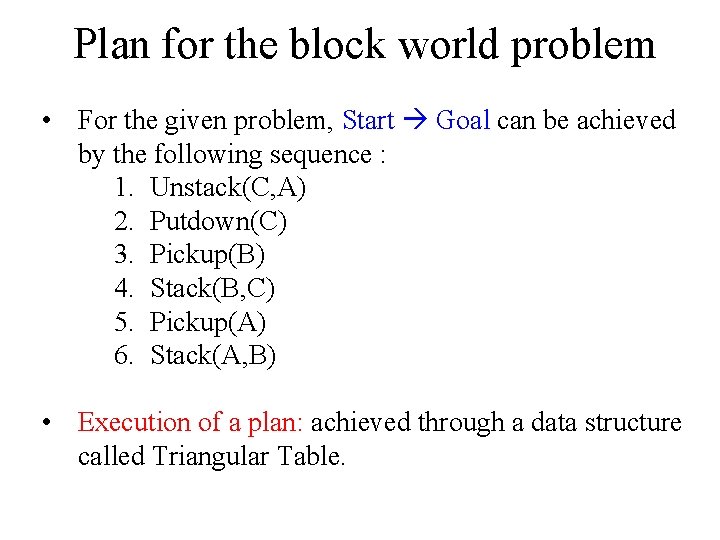

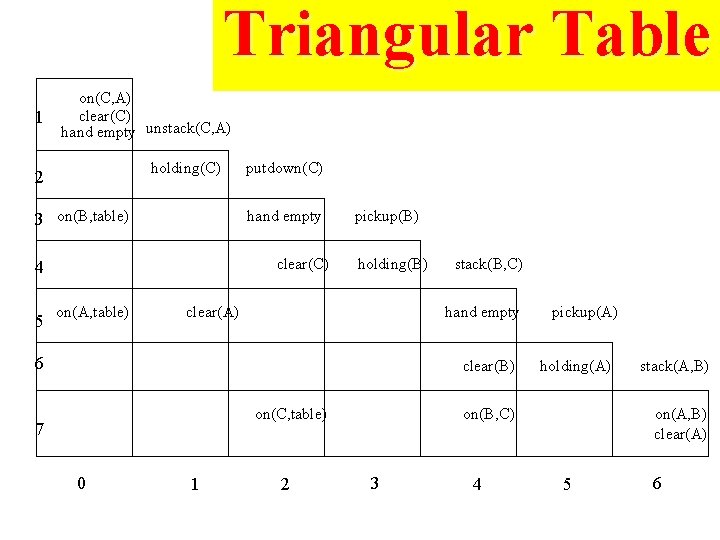

Plan for the block world problem

• For the given problem, Start → Goal can be achieved by the following sequence:
  1. Unstack(C, A)
  2. Putdown(C)
  3. Pickup(B)
  4. Stack(B, C)
  5. Pickup(A)
  6. Stack(A, B)
• Execution of a plan: achieved through a data structure called the Triangular Table.

Triangular Table

| Row | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| 1 | on(C, A), clear(C), hand empty | unstack(C, A) | | | | | |
| 2 | | holding(C) | putdown(C) | | | | |
| 3 | on(B, table) | | hand empty | pickup(B) | | | |
| 4 | | | clear(C) | holding(B) | stack(B, C) | | |
| 5 | on(A, table) | clear(A) | | | hand empty | pickup(A) | |
| 6 | | | | | clear(B) | holding(A) | stack(A, B) |
| 7 | | | on(C, table) | | on(B, C) | | on(A, B), clear(A) |

Triangular Table

1. For n operations in the plan, there are:
   – (n+1) rows: 1 to n+1
   – (n+1) columns: 0 to n
2. At the end of the ith row, place the ith component of the plan.
3. The row entries for the ith step contain the pre-conditions for the ith operation.
4. The column entries for the jth column contain the add list for the rule on the top.
5. The <i, j>th cell (where 1 ≤ i ≤ n+1 and 0 ≤ j ≤ n) contains the preconditions for the ith operation that are added by the jth operation.
6. The first column indicates the starting state and the last row indicates the goal state.


Connection between triangular matrix and state space search

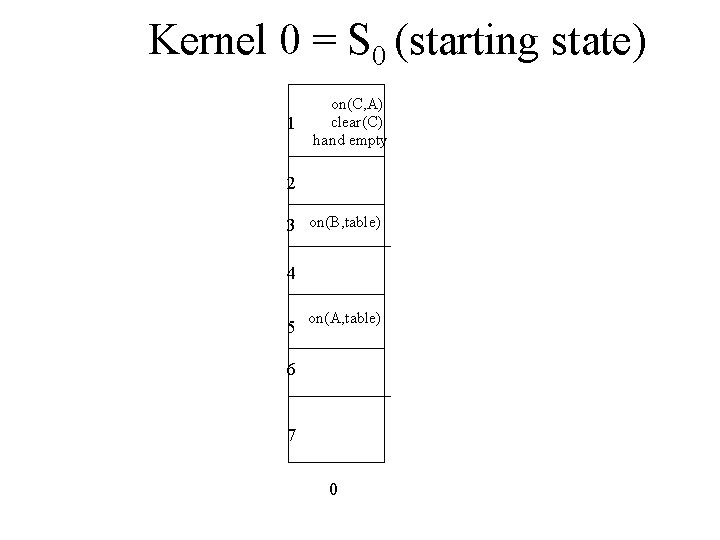

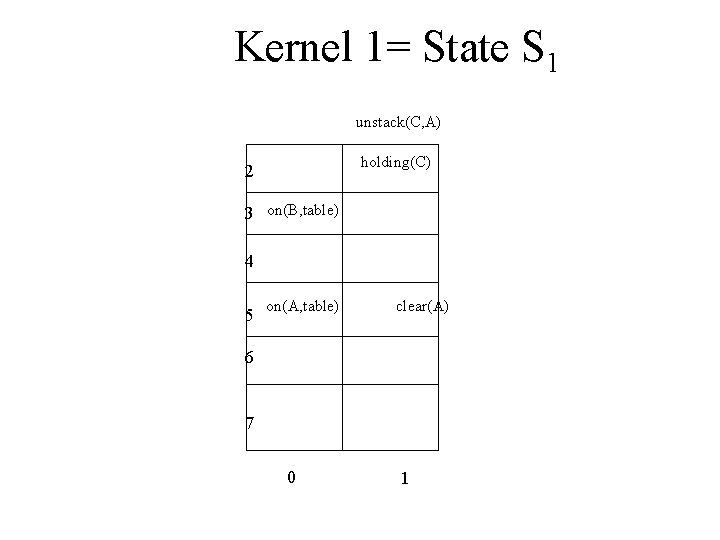

Kernel 0 = S0 (starting state) • Column 0, rows 1 to 7: on(C, A), clear(C), handempty (row 1); on(B, table) (row 3); on(A, table) (row 5)

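Kernel 1 = State S1, reached after unstack(C, A) • Columns 0 and 1, rows 2 to 7: holding(C) (row 2, column 1); on(B, table) (row 3, column 0); on(A, table) (row 5, column 0); clear(A) (row 5, column 1)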

Search in case of planning

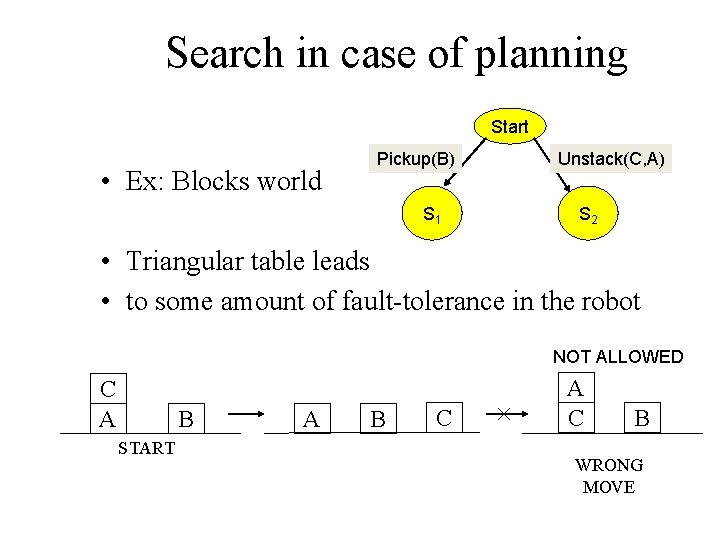

Search in case of planning • Ex: Blocks world: from Start, Pickup(B) leads to S1 and Unstack(C, A) leads to S2 • The triangular table leads to some amount of fault tolerance in the robot • [Figure: block configurations of A, B and C, labelled START, WRONG MOVE and NOT ALLOWED]

Resilience in Planning • After a wrong operation, can the robot come back to the right path? • i.e., if after performing a wrong operation the system again moves towards the goal, then it has resilience w.r.t. that operation • Advanced planning strategies – Hierarchical planning – Probabilistic planning – Constraint satisfaction

Importance of Kernel • The kernel in the triangular table controls the execution of the plan • At any step of the execution, the current state as given by the sensors is matched with the largest kernel in the perceptual world of the robot, described by the triangular table

A planning agent 1. An agent interacts with the world via perception and actions. 2. Perception involves sensing the world and assessing the situation, creating some internal representation of the world. 3. Actions are what the agent does in the domain. 4. Planning involves reasoning about actions that the agent intends to carry out. 5. Planning is the reasoning side of acting. 6. This reasoning involves the representation of the world that the agent has, as well as the representation of its actions. 7. The objectives may be hard constraints, which have to be achieved completely for success. 8. The objectives could also be soft constraints, or preferences, to be achieved as much as possible.

Interaction with static domain • The agent has complete information of the domain (perception is perfect), actions are instantaneous and their effects are deterministic. • The agent knows the world completely, and it can take all facts into account while planning. • The fact that actions are instantaneous implies that there is no notion of time, but only of sequencing of actions. • The effects of actions are deterministic, and therefore the agent knows what the world will be like after each action.

Two kinds of planning • Projection into the future – The planner searches through the possible combination of actions to find the plan that will work • Memory based planning – looking into the past – The agent can retrieve a plan from its memory

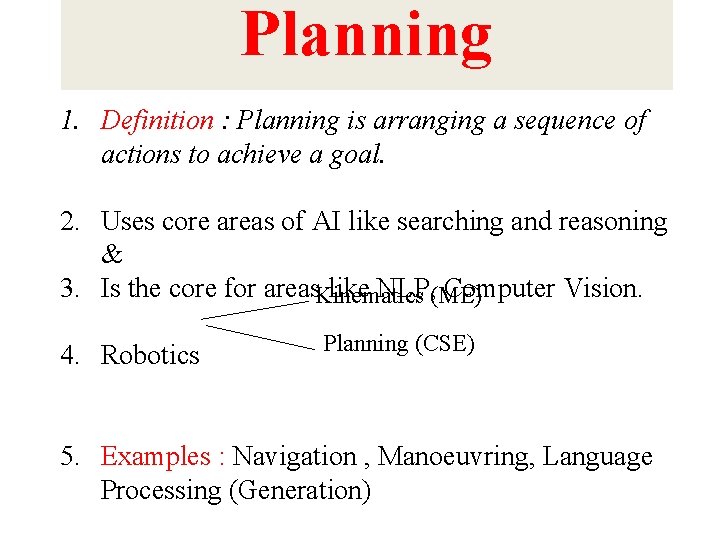

Planning 1. Definition: Planning is arranging a sequence of actions to achieve a goal. 2. Uses core areas of AI like searching and reasoning. 3. Is the core for areas like NLP, Computer Vision and Robotics; in Robotics, Kinematics (ME) is paired with Planning (CSE). 4. Examples: Navigation, Manoeuvring, Language Processing (Generation)

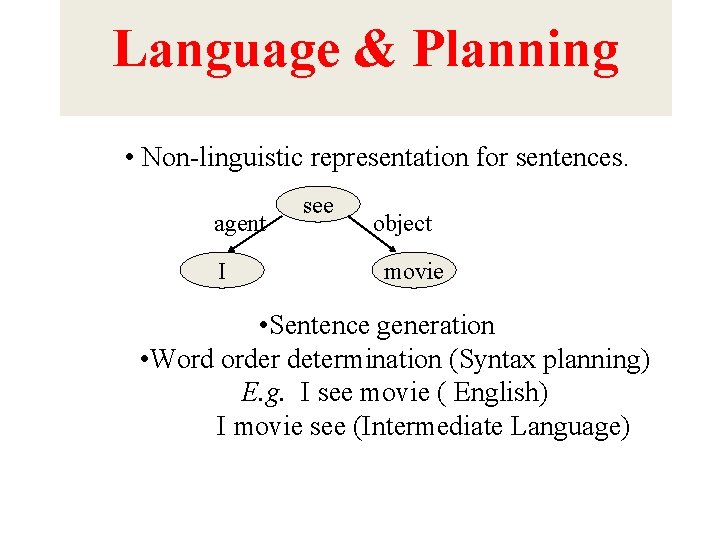

Language & Planning • Non-linguistic representation for sentences, e.g. see (agent: I, object: movie) • Sentence generation • Word order determination (syntax planning), e.g. “I see movie” (English), “I movie see” (Intermediate Language)

Fundamentals (absolute basics for writing Prolog Programs)

Facts • John likes Mary – likes(john, mary). • Names of relationships and objects must begin with a lower-case letter. • The relationship is written first (typically the predicate of the sentence). • Objects are written separated by commas and are enclosed by a pair of round brackets. • The full stop character ‘.’ must come at the end of a fact.

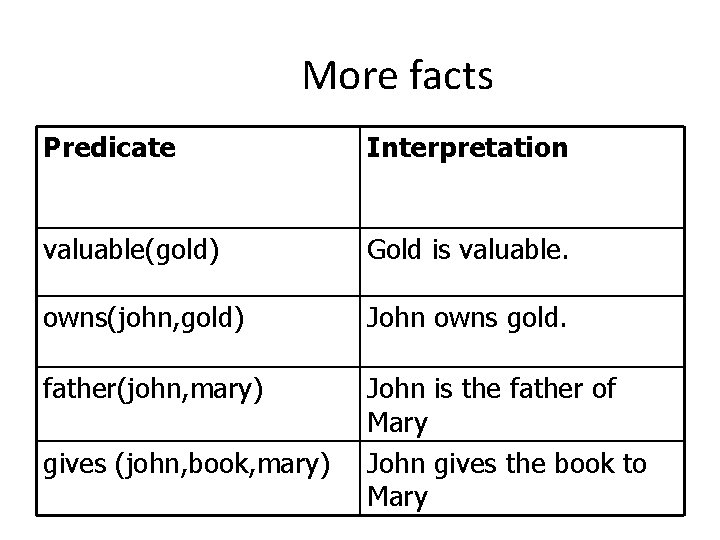

More facts

| Predicate | Interpretation |
|---|---|
| valuable(gold) | Gold is valuable. |
| owns(john, gold) | John owns gold. |
| father(john, mary) | John is the father of Mary. |
| gives(john, book, mary) | John gives the book to Mary. |

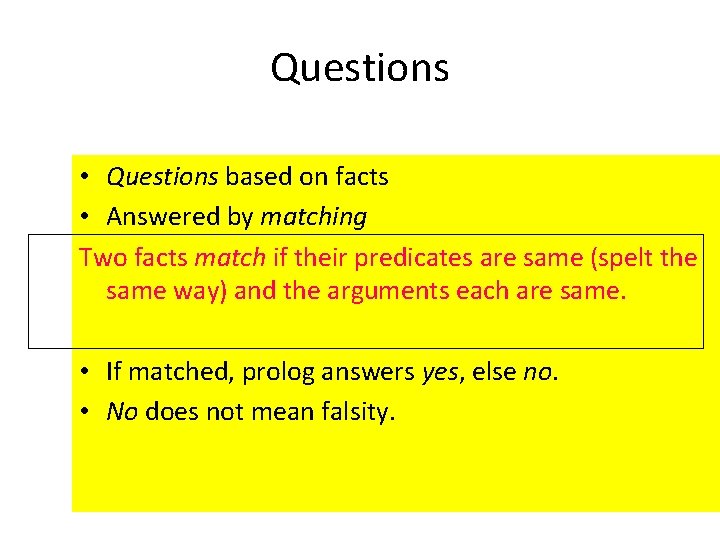

Questions • Questions are based on facts. • They are answered by matching: two facts match if their predicates are the same (spelt the same way) and their corresponding arguments are the same. • If a match is found, Prolog answers yes, else no. • No does not mean falsity.

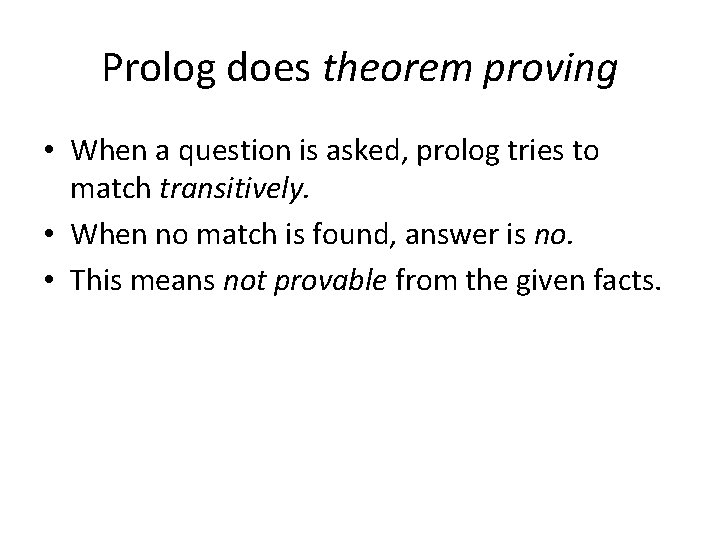

Prolog does theorem proving • When a question is asked, Prolog tries to match transitively. • When no match is found, the answer is no. • This means not provable from the given facts.
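The difference between "false" and "not provable" can be seen with a few facts from the earlier slides. The following is a minimal sketch, assuming SWI-Prolog; the helper name `not_provable/1` is hypothetical, while `\+` is the standard negation-as-failure operator.

```prolog
% Facts taken from the "More facts" slide.
valuable(gold).
owns(john, gold).
father(john, mary).

% not_provable(+Goal): succeeds exactly when Prolog cannot prove Goal
% from the facts above. It says nothing about whether Goal is false
% in the real world.
not_provable(Goal) :-
    \+ call(Goal).
```

With these facts, `?- owns(john, gold).` succeeds, `?- owns(mary, gold).` fails, and `?- not_provable(owns(mary, gold)).` succeeds. Recent SWI-Prolog versions print `true` and `false` where these slides write yes and no.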

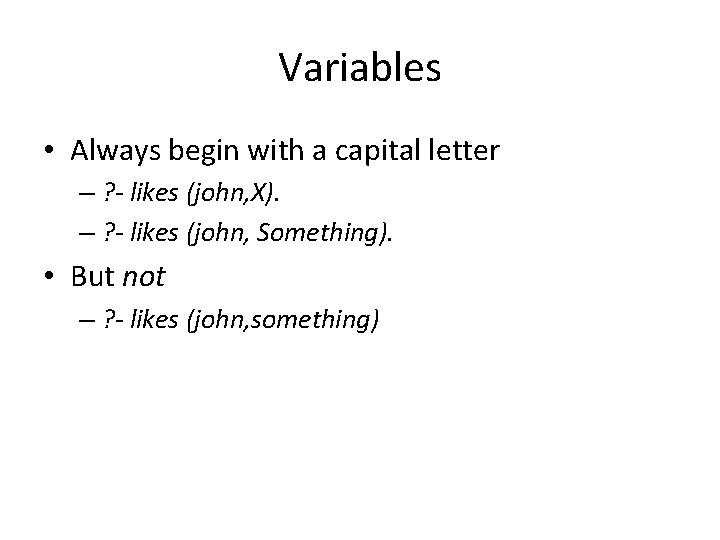

Variables • Always begin with a capital letter – ?- likes(john, X). – ?- likes(john, Something). • But not – ?- likes(john, something).

Example of usage of variable • Facts: likes(john, flowers). likes(john, mary). likes(paul, mary). • Question: ?- likes(john, X). • Answer: X = flowers, and Prolog waits; typing ‘;’ gives X = mary; typing ‘;’ again gives no.

Conjunctions • Use ‘,’ and pronounce it as “and”. • Example – Facts: likes(mary, food). likes(mary, tea). likes(john, tea). likes(john, mary). – Question: ?- likes(mary, X), likes(john, X). • Meaning: is there anything liked by Mary that is also liked by John?

Backtracking

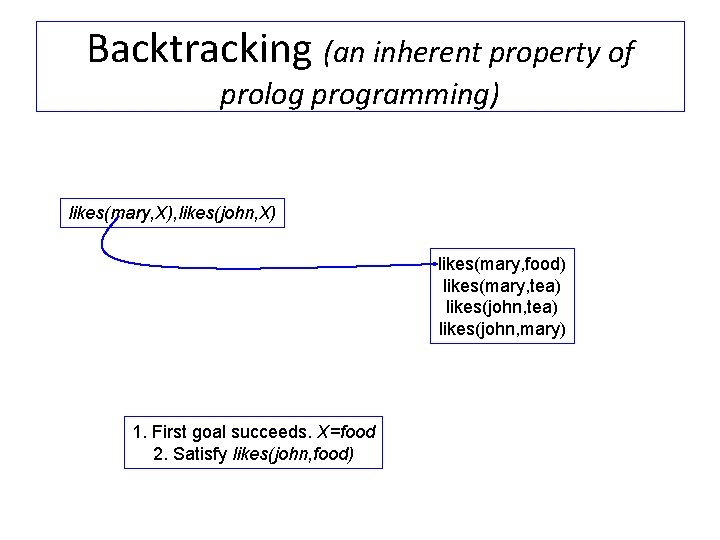

Backtracking (an inherent property of Prolog programming) • Question: ?- likes(mary, X), likes(john, X). • Facts: likes(mary, food). likes(mary, tea). likes(john, tea). likes(john, mary). 1. First goal succeeds, X = food; Prolog marks this place in the facts. 2. Attempt to satisfy likes(john, food)

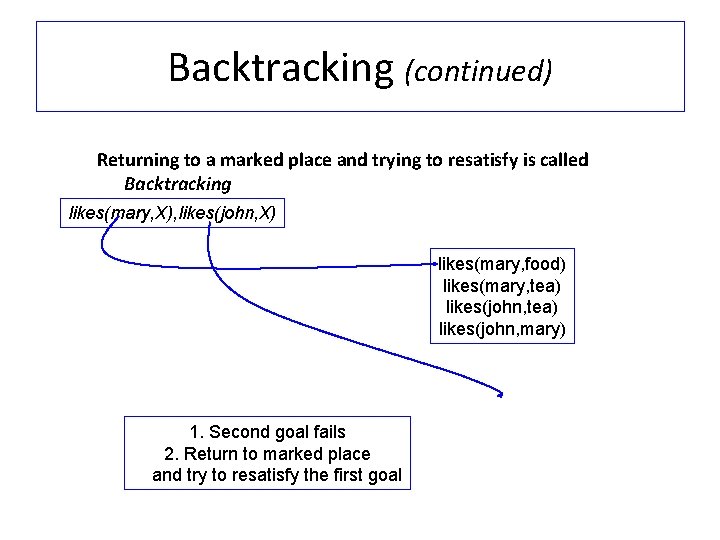

Backtracking (continued) • Returning to a marked place and trying to resatisfy a goal is called backtracking. • Question: ?- likes(mary, X), likes(john, X). • Facts: likes(mary, food). likes(mary, tea). likes(john, tea). likes(john, mary). 1. Second goal, likes(john, food), fails 2. Return to the marked place and try to resatisfy the first goal

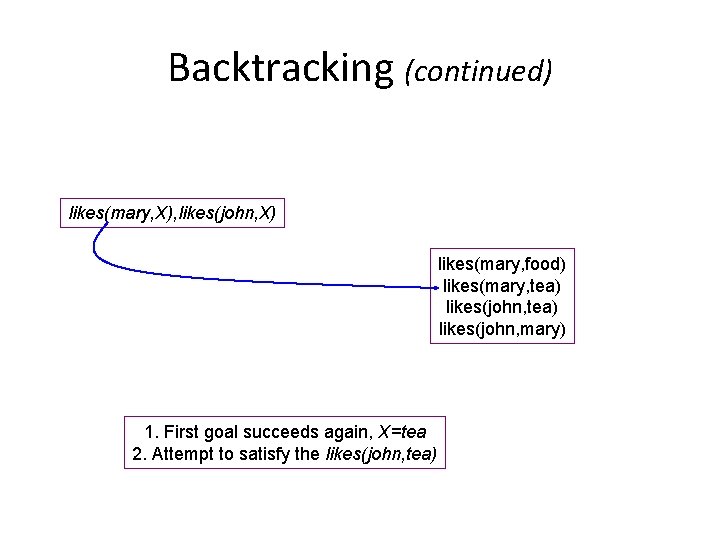

Backtracking (continued) • Question: ?- likes(mary, X), likes(john, X). • Facts: likes(mary, food). likes(mary, tea). likes(john, tea). likes(john, mary). 1. First goal succeeds again, X = tea 2. Attempt to satisfy likes(john, tea)

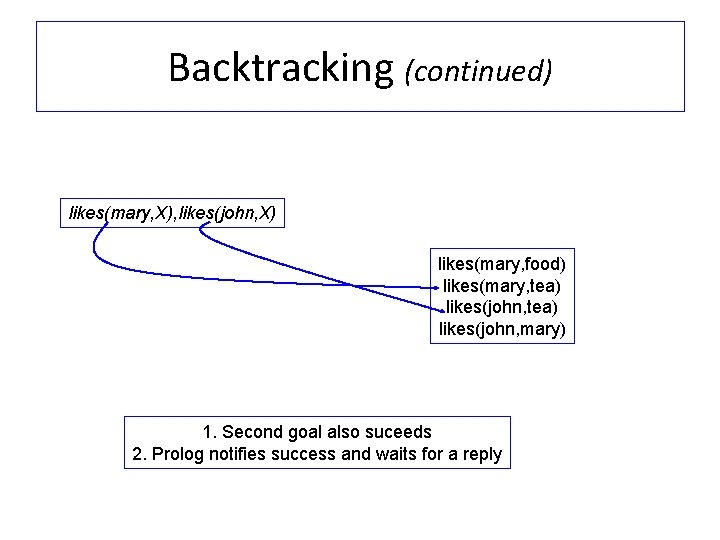

Backtracking (continued) • Question: ?- likes(mary, X), likes(john, X). • Facts: likes(mary, food). likes(mary, tea). likes(john, tea). likes(john, mary). 1. Second goal also succeeds 2. Prolog notifies success (X = tea) and waits for a reply
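The four backtracking steps can be reproduced with a small program. This is a sketch, assuming SWI-Prolog; the predicate name `liked_by_both/1` is hypothetical, and the fact `likes(john, tea)` is an assumption: it is the fact that must be present for the second goal to succeed in the last step.

```prolog
likes(mary, food).
likes(mary, tea).
likes(john, tea).    % assumed fact: needed for likes(john, tea) to succeed
likes(john, mary).

% liked_by_both(-X): X is liked by both mary and john.
liked_by_both(X) :-
    likes(mary, X),      % first goal: X = food, then X = tea on backtracking
    likes(john, X).      % second goal: fails for food, succeeds for tea
```

The query `?- liked_by_both(X).` answers `X = tea`. Prolog first tries X = food, fails on `likes(john, food)`, backtracks into the first goal, and then succeeds with X = tea.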

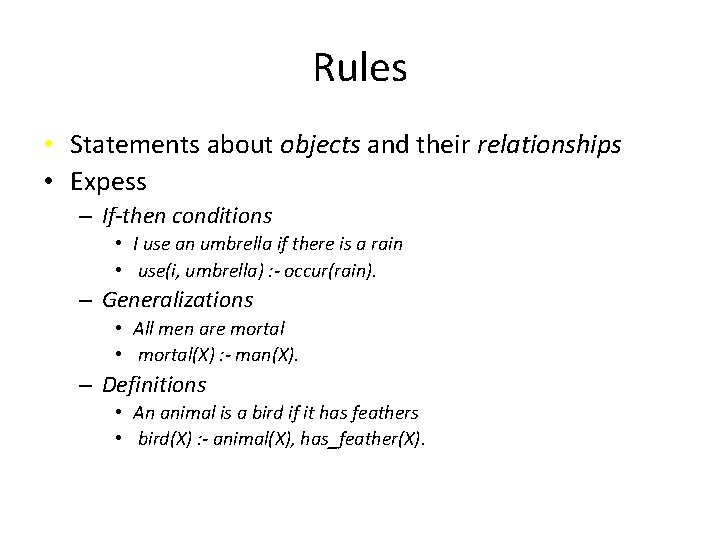

Rules • Statements about objects and their relationships • Express – If-then conditions • I use an umbrella if there is rain • use(i, umbrella) :- occur(rain). – Generalizations • All men are mortal • mortal(X) :- man(X). – Definitions • An animal is a bird if it has feathers • bird(X) :- animal(X), has_feather(X).

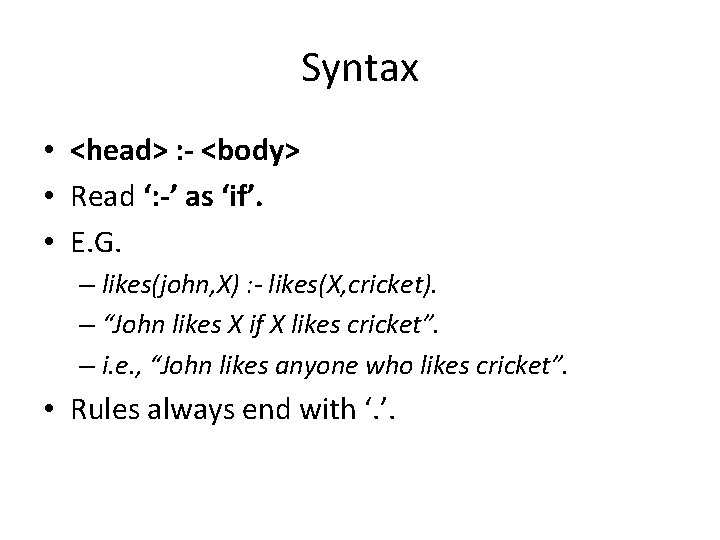

Syntax • <head> :- <body> • Read ‘:-’ as ‘if’. • E.g. – likes(john, X) :- likes(X, cricket). – “John likes X if X likes cricket”. – i.e., “John likes anyone who likes cricket”. • Rules always end with ‘.’.

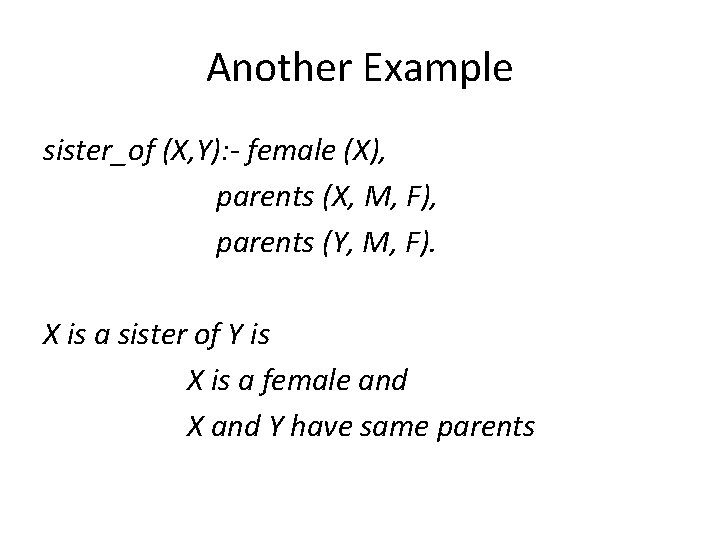

Another Example • sister_of(X, Y) :- female(X), parents(X, M, F), parents(Y, M, F). • X is a sister of Y if X is female and X and Y have the same parents.

Question Answering in presence of rules • Facts – male(ram). – male(shyam). – female(sita). – female(gita). – parents(shyam, gita, ram). – parents(sita, gita, ram).

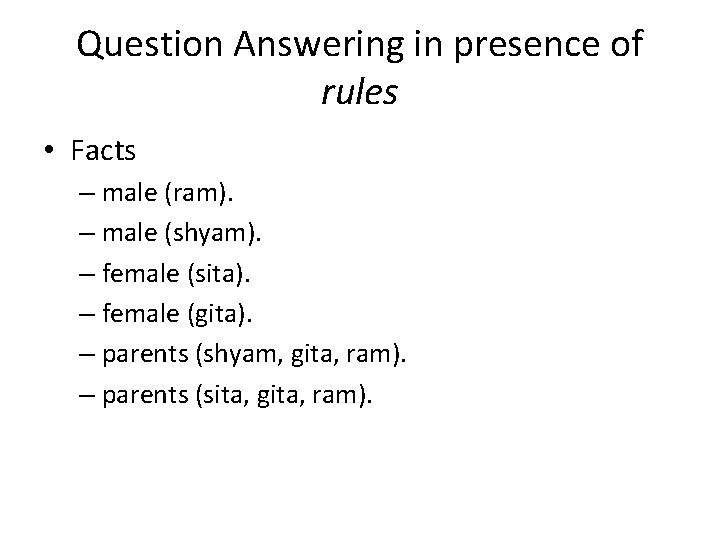

Question Answering: Y/N type: is sita the sister of shyam? • ?- sister_of(sita, shyam). • female(sita) succeeds • parents(sita, M, F) matches parents(sita, gita, ram), so M = gita and F = ram • parents(shyam, gita, ram) succeeds • Success: the answer is yes

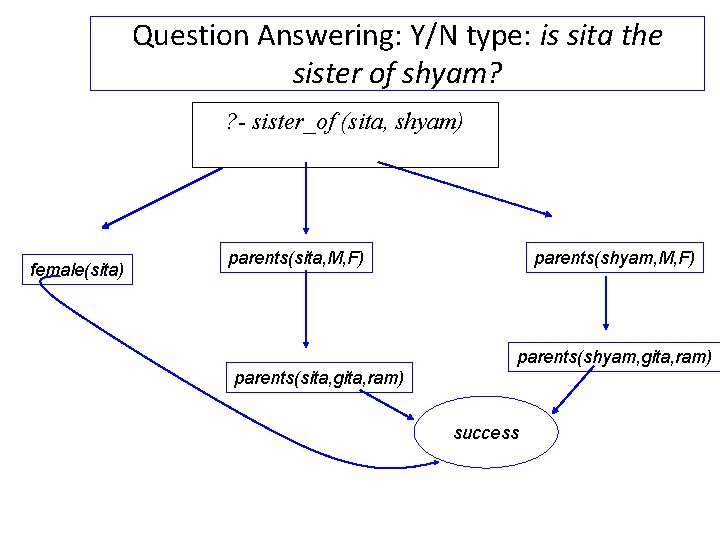

Question Answering: wh-type: whose sister is sita? • ?- sister_of(sita, X). • female(sita) succeeds • parents(sita, M, F) matches parents(sita, gita, ram), so M = gita and F = ram • parents(X, gita, ram) matches parents(shyam, gita, ram) • Success: X = shyam

Exercise 1. From the above it is possible for somebody to be her own sister. 2. How can this be prevented?
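One possible answer to the exercise is to require that the two people are different. The following is a sketch, assuming SWI-Prolog; the predicate name `proper_sister_of/2` is hypothetical, and the facts are those from the question-answering slides.

```prolog
male(ram).
male(shyam).
female(sita).
female(gita).
parents(shyam, gita, ram).
parents(sita, gita, ram).

% Original rule: allows X and Y to be the same person.
sister_of(X, Y) :-
    female(X),
    parents(X, M, F),
    parents(Y, M, F).

% Variant with an extra test that X and Y are not identical.
% The test comes last so that both variables are bound when it runs.
proper_sister_of(X, Y) :-
    female(X),
    parents(X, M, F),
    parents(Y, M, F),
    X \== Y.
```

With the original rule, `?- sister_of(sita, sita).` succeeds. With the variant, `?- proper_sister_of(sita, sita).` fails, while `?- proper_sister_of(sita, X).` still answers `X = shyam`.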

Summary • A plan is a sequence of actions that changes the state of the world from an initial state to a goal state. • Planning can be considered as a logical inference problem. • STRIPS is a classic planning language. – It represents the state of the world as a list of facts. – Operators (actions) can be applied to the world if their preconditions hold. • The effect of applying an operator is to add facts to and delete facts from the world state. • A linear planner can be easily implemented in Prolog by: – representing operators as opn(Name, [PreCons], [Add], [Delete]), – choosing operators and applying them in a depth-first manner, – using backtracking-through-failure to try multiple operators. • Means Ends Analysis performs backwards planning, which is more efficient.
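The linear planner outlined in the summary can be sketched in a few lines. This is an illustration rather than the planner from the slides, assuming SWI-Prolog. The operators use the `opn/4` format with the blocks world rules R1 to R4; the predicate names `holds_all/2`, `plan/3` and `solve/3` are hypothetical. Two additions are my own assumptions: a length bound that grows from 0 to 8 (plain depth-first search can loop forever on pickup and putdown), and a guard that stops `unstack` from treating the table as a block.

```prolog
:- use_module(library(lists)).   % member/2, subtract/3, append/3

% opn(Name, Preconditions, AddList, DeleteList).
opn(pickup(X),     [handempty, on(X, table), clear(X)], [holding(X)],
                   [handempty, on(X, table), clear(X)]).
opn(putdown(X),    [holding(X)], [handempty, on(X, table), clear(X)],
                   [holding(X)]).
opn(stack(X, Y),   [holding(X), clear(Y)], [on(X, Y), clear(X), handempty],
                   [holding(X), clear(Y)]).
opn(unstack(X, Y), [on(X, Y), clear(X), handempty], [holding(X), clear(Y)],
                   [on(X, Y), clear(X), handempty]).

% holds_all(+Conds, +State): every condition matches a fact in State.
% member/2 binds operator variables and offers alternatives on backtracking.
holds_all([], _).
holds_all([C | Cs], State) :-
    member(C, State),
    holds_all(Cs, State).

% plan(+State, +Goal, ?Steps): depth-first forward search.
% Steps should already be a list of fixed length (see solve/3).
plan(State, Goal, []) :-
    holds_all(Goal, State).
plan(State, Goal, [Name | Rest]) :-
    opn(Name, Pre, Add, Del),
    holds_all(Pre, State),
    Name \= unstack(_, table),       % guard: the table is not a block
    subtract(State, Del, S1),
    append(Add, S1, S2),
    plan(S2, Goal, Rest).

% solve(+Start, +Goal, -Plan): try plans of length 0, 1, ..., 8 in turn.
solve(Start, Goal, Plan) :-
    between(0, 8, N),
    length(Plan, N),
    plan(Start, Goal, Plan).
```

A possible query, with an assumed start state in which c is on a and b is on the table: `?- solve([on(c, a), clear(c), handempty, on(b, table), clear(b), on(a, table)], [on(a, b), on(b, c)], Plan).` The first answer is the six-step plan from the blocks world slides, beginning with `unstack(c, a)`. Failure of a precondition check simply causes Prolog to backtrack to the next operator, which is the backtracking-through-failure mentioned above.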

Sources: Pushpak Bhattacharyya, Ivan Bratko, Tim Smith
