
Progress Report 2016/12/28

ICPADS '16 Keynotes
- Database Meets Deep Learning: Challenges and Opportunities
- IoT: Towards a Connected Era - Research Direction and Social Impacts
- On Sensorless Sensing for the Internet of Everything
- Theory and Optimization of Multi-core Memory Performance

Database Meets Deep Learning: Challenges and Opportunities
- Deep learning (DL) is difficult to:
  - Understand (its effectiveness)
  - Tune (the hyper-parameters)
  - Optimize (the training speed and memory usage)
- Apply database techniques to DL:
  - For efficiency optimization
  - For memory optimization
- Why?
  - Database operations are "predictable".

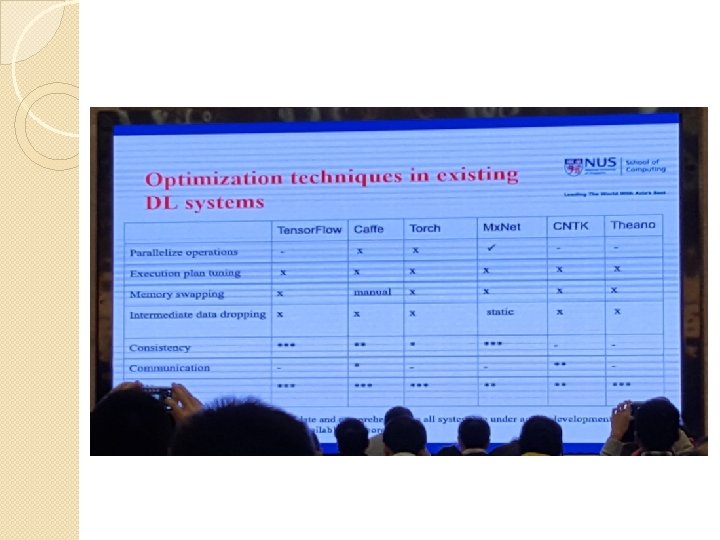

Efficiency Optimization
- To improve the training speed of DL on a single device (GPU or CPU):
  - All operations of one iteration form a dataflow graph.
  - Existing systems use either static (Theano and TensorFlow) or dynamic (MXNet) dependency analysis to parallelize operations that have no data dependencies.
  - Possible improvements:
    - When resources are limited, e.g. executors (CUDA streams), there can be multiple ways of placing the operations onto the executors.
    - Runtime optimization by 1) collecting the cost (i.e. FLOPS) of each operation and hardware statistics, and 2) estimating the total cost of all plans.
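The "estimate the total cost of all plans" idea can be sketched as follows. This is a minimal illustration, not the method from the keynote: the function names, the cost numbers, and the exhaustive enumeration are all assumptions, and a real system would use measured operation costs and a smarter search.

```python
from itertools import product


def plan_cost(ops, deps, placement):
    """Estimate the finish time (makespan) of one placement plan.

    ops:       dict op name -> estimated cost (hypothetical units)
    deps:      dict op name -> list of ops it depends on
    placement: dict op name -> executor index
    Assumes `ops` is listed in topological order; ops placed on the
    same executor run one after another in that order.
    """
    finish = {}
    executor_free = {}
    for op, cost in ops.items():
        # An op can start once its inputs are ready and its executor is idle.
        ready = max((finish[d] for d in deps.get(op, [])), default=0.0)
        start = max(ready, executor_free.get(placement[op], 0.0))
        finish[op] = start + cost
        executor_free[placement[op]] = finish[op]
    return max(finish.values())


def best_plan(ops, deps, num_executors):
    """Enumerate every placement (only feasible for tiny graphs)."""
    names = list(ops)
    best = None
    for assignment in product(range(num_executors), repeat=len(names)):
        placement = dict(zip(names, assignment))
        cost = plan_cost(ops, deps, placement)
        if best is None or cost < best[0]:
            best = (cost, placement)
    return best


# Hypothetical dataflow graph: c needs a; d needs b and c.
ops = {"a": 2.0, "b": 3.0, "c": 1.0, "d": 2.0}
deps = {"c": ["a"], "d": ["b", "c"]}
cost, placement = best_plan(ops, deps, num_executors=2)
serial_cost = plan_cost(ops, deps, {name: 0 for name in ops})
```

With two executors the best plan overlaps `a` and `b`, so its estimated cost is lower than running everything on one executor.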

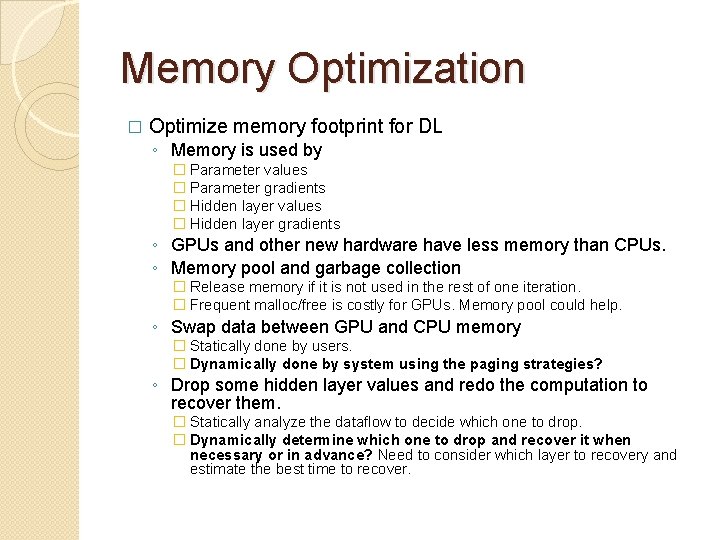

Memory Optimization
- Optimize the memory footprint for DL
  - Memory is used by:
    - Parameter values
    - Parameter gradients
    - Hidden layer values
    - Hidden layer gradients
  - GPUs and other new hardware have less memory than CPUs.
  - Memory pool and garbage collection
    - Release memory if it is not used in the rest of one iteration.
    - Frequent malloc/free is costly for GPUs; a memory pool could help.
  - Swap data between GPU and CPU memory
    - Statically done by users.
    - Dynamically done by the system using paging strategies?
  - Drop some hidden layer values and redo the computation to recover them.
    - Statically analyze the dataflow to decide which ones to drop.
    - Dynamically determine which ones to drop, and recover them when necessary or in advance? This requires deciding which layer to recover and estimating the best time to recover it.
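The "release memory if it is not used in the rest of one iteration" point can be illustrated with a small liveness analysis. This is a sketch under assumptions: the schedule format, tensor names, and sizes are hypothetical, and a tensor that no later operation reads is treated as dead right after it is produced.

```python
def peak_memory(schedule, sizes, release_dead=True):
    """Peak memory of one iteration.

    schedule: list of (output tensor, [input tensors]) in execution order
    sizes:    dict tensor name -> size (hypothetical units)
    """
    # Last step at which each tensor is produced or read.
    last_use = {}
    for step, (out, inputs) in enumerate(schedule):
        last_use[out] = step
        for t in inputs:
            last_use[t] = step

    live, current, peak = set(), 0, 0
    for step, (out, inputs) in enumerate(schedule):
        live.add(out)
        current += sizes[out]
        peak = max(peak, current)
        if release_dead:
            # Free every tensor whose last use was this step.
            for t in [t for t in live if last_use[t] == step]:
                live.remove(t)
                current -= sizes[t]
    return peak


# Hypothetical 3-layer net: forward pass h1..h3, backward pass g3..g1.
schedule = [
    ("h1", []),
    ("h2", ["h1"]),
    ("h3", ["h2"]),
    ("g3", ["h3"]),
    ("g2", ["g3", "h2"]),
    ("g1", ["g2", "h1"]),
]
sizes = {name: 4 for name, _ in schedule}
with_release = peak_memory(schedule, sizes)
without_release = peak_memory(schedule, sizes, release_dead=False)
```

Hidden layer values stay alive until the backward pass reads them, which is why dropping and recomputing them (the last bullet above) can reduce the peak further.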

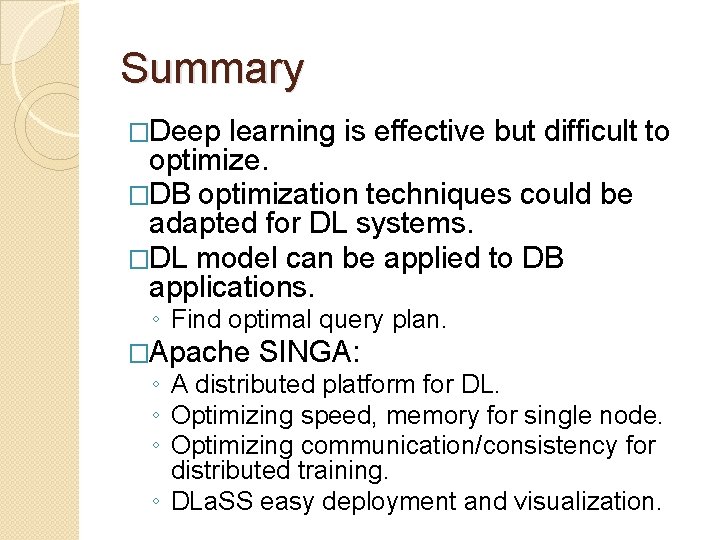

Summary
- Deep learning is effective but difficult to optimize.
- DB optimization techniques could be adapted for DL systems.
- DL models can be applied to DB applications.
  - Find the optimal query plan.
- Apache SINGA:
  - A distributed platform for DL.
  - Optimizing speed and memory for a single node.
  - Optimizing communication/consistency for distributed training.
  - DLaaS: easy deployment and visualization.

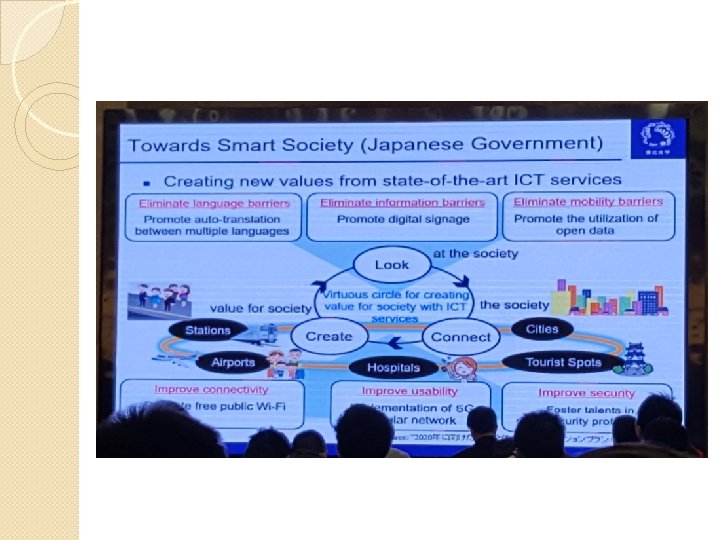

IoT: Towards a Connected Era - Research Direction and Social Impacts
- Network society = Ubiquitous society
  - From vertical integration to horizontal integration.
- IoT can help:
  - Elderly care, traffic for the elderly, local industry, agricultural revolution, smart cities, ...
  - Toward a better society with open data.

Core Technology
- Big data processing
- Edge computing
  - Pushes the frontier of computing applications, data, and services away from centralized nodes to the logical extremes of a network.
  - Enables analytics and knowledge generation to occur at the source of the data.
- Edge access network construction

Relay-by-Smartphone
- Delivering messages using only Wi-Fi, without cellular coverage
  - 1) https://www.youtube.com/watch?v=JbxKPrPF6JQ
  - 2) https://www.youtube.com/watch?v=dVC4RLWMBng
  - 3) https://www.youtube.com/watch?v=KXvphDBr5kE
  - 4) https://www.youtube.com/watch?v=X7usb2ldHBs

On Sensorless Sensing for the Internet of Everything
- Barriers to long-term, large-scale sensor networks and IoT:
  - 1) Hardware cost and maintenance
  - 2) Energy
- Possible solutions:
  - 1) Crowdsourcing and participatory sensing.
  - 2) Backscatter to solve the energy problem.

Sensorless Sensing
- Applications:
  - Intrusion detection
  - Healthcare monitoring
  - Human-computer interaction
- Benefits:
  - Wireless sensing without wires.
  - Contactless sensing without wearable sensors.
  - Sensorless sensing without dedicated sensors.

Projects
- CitySee, 2011-ongoing
- 签信通, 2011-ongoing
- GreenOrbs (绿野千传), 2009-ongoing
  - http://www.greenorbs.org/
- Trustworthy RFID (签若磐石), 2007-ongoing
- OceanSense, Sensor Network for Sea Monitoring, 2007-2009
  - http://www.cse.ust.hk/~liu/Ocean/index.html
- SASA, Coal Mine Monitoring with Sensors, 2005-2008

Theory and Optimization of Multicore Memory Performance
- Memory is multi-layered, hierarchical and shared.
- Caching is dynamic sharing of fast memory.
  - Parallel programs / workloads
  - Large cache
  - Run-time mediation between demand and supply
- Data-ranking / priority allocation
  - Similar to the role of an OS scheduler for a CPU
    - But the cache intervenes at every load/store

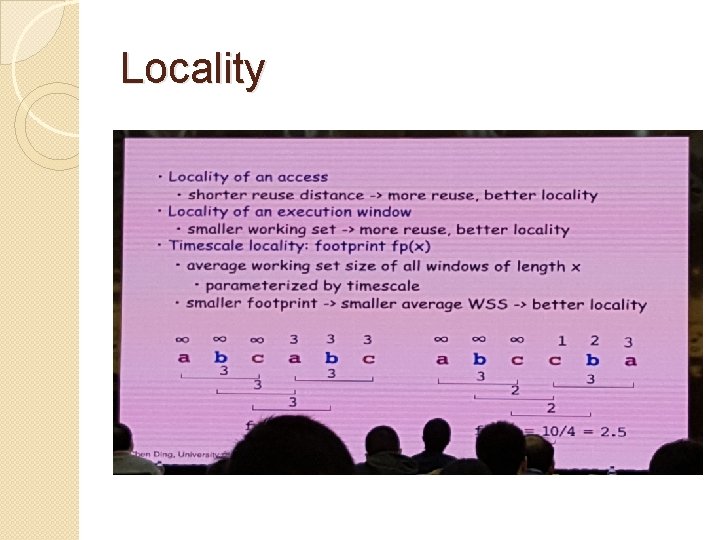

Locality

Rochester Elastic Cache Utility (RECU)
- Trade-off between efficiency and fairness.
- Provides an algorithm to choose a cache partitioning for a set of programs in order to optimize the predicted cache performance.
  - With some fairness concessions relative to a baseline in which each program has an equal partition.
    - Strict baseline.

Miss Ratio Elasticity and Cache Space Elasticity
- Elastic miss ratio baseline (EMB)
  - The program's predicted miss ratio may increase by up to some percentage compared to what it would be with the strict baseline.
- Elastic cache space baseline (ECB)
  - The program may yield at most a given percentage of its cache space to peer programs.
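The two elastic baselines can be read as constraints on a partition search. The sketch below is only an illustration of that reading, not the RECU algorithm itself: the brute-force search, the objective (sum of predicted miss ratios), the function name, and the example miss ratio curves are all assumptions.

```python
from itertools import product


def choose_partition(miss_curves, total_units, emb=None, ecb=None):
    """Pick a cache partition that minimizes the summed predicted miss ratio.

    miss_curves[p][c]: predicted miss ratio of program p with c cache units
    emb: allowed relative miss ratio increase over the equal-partition
         (strict) baseline, e.g. 0.1 for 10%
    ecb: largest fraction of its equal share a program may give up
    Returns (total miss ratio, partition) or None if nothing is feasible.
    """
    n = len(miss_curves)
    equal = total_units // n  # strict baseline: equal partition
    best = None
    for part in product(range(total_units + 1), repeat=n):
        if sum(part) != total_units:
            continue
        feasible = True
        for p, c in enumerate(part):
            baseline = miss_curves[p][equal]
            if emb is not None and miss_curves[p][c] > baseline * (1 + emb):
                feasible = False  # miss ratio grew more than EMB allows
            if ecb is not None and c < equal * (1 - ecb):
                feasible = False  # program yielded more space than ECB allows
        if not feasible:
            continue
        total = sum(miss_curves[p][c] for p, c in enumerate(part))
        if best is None or total < best[0]:
            best = (total, part)
    return best


# Hypothetical miss ratio curves for two programs, indexed by cache units 0..4.
curves = [
    [1.0, 0.8, 0.6, 0.3, 0.1],     # program A benefits a lot from more cache
    [1.0, 0.5, 0.4, 0.38, 0.37],   # program B benefits little beyond 1 unit
]
unconstrained = choose_partition(curves, 4)
tight = choose_partition(curves, 4, emb=0.1)
loose = choose_partition(curves, 4, emb=0.3)
```

In this example a tight EMB keeps the equal partition, while a looser one lets program B give one unit to program A, which lowers the total predicted miss ratio.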

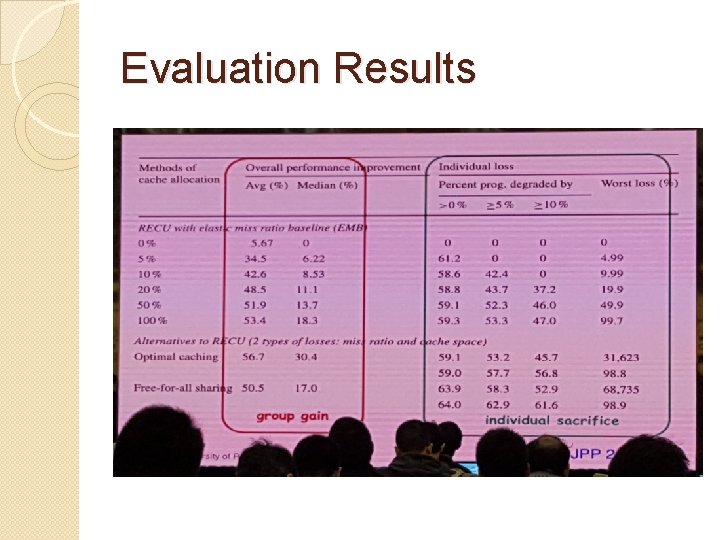

Evaluation Results

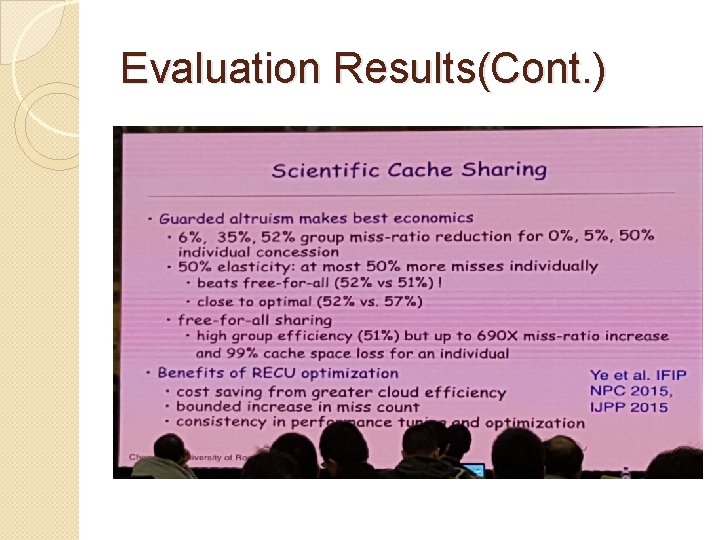

Evaluation Results (Cont.)

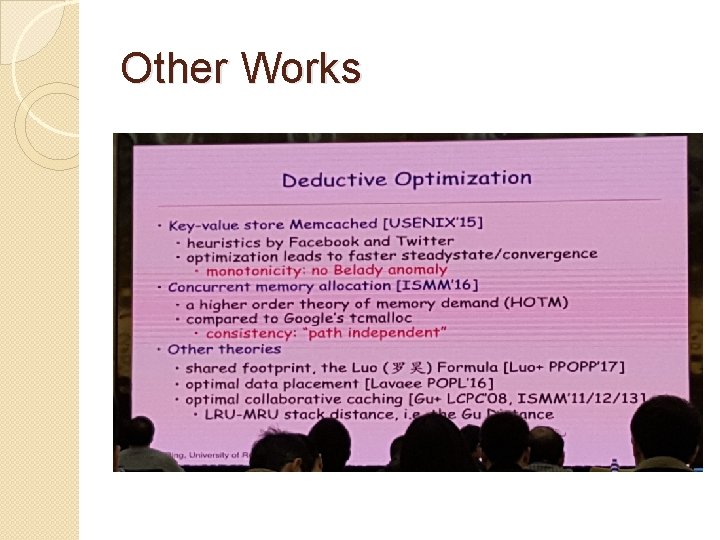

Other Works

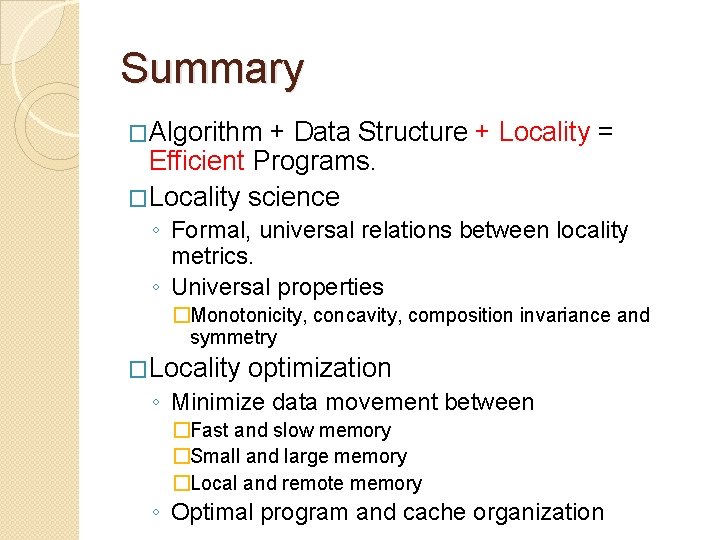

Summary
- Algorithm + Data Structure + Locality = Efficient Programs.
- Locality science
  - Formal, universal relations between locality metrics.
  - Universal properties
    - Monotonicity, concavity, composition invariance and symmetry
- Locality optimization
  - Minimize data movement between
    - Fast and slow memory
    - Small and large memory
    - Local and remote memory
  - Optimal program and cache organization
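As one concrete example of a locality metric and the monotonicity property, the sketch below derives an LRU miss ratio curve from reuse (stack) distances. The slides do not say which metrics the talk used, so the choice of metric, the function name, and the trace are assumptions made for illustration.

```python
def lru_miss_ratio_curve(trace, max_size):
    """Miss ratio of a fully associative LRU cache for sizes 1..max_size."""
    stack = []                   # LRU stack, most recently used at the end
    hist = [0] * (max_size + 1)  # hist[d]: accesses with stack distance d
    for addr in trace:
        if addr in stack:
            # Distance 1 means the address was the most recently used one.
            dist = len(stack) - stack.index(addr)
            if dist <= max_size:
                hist[dist] += 1
            stack.remove(addr)
        stack.append(addr)

    curve = []
    hits = 0
    for size in range(1, max_size + 1):
        # An access hits in a cache of `size` if its distance is <= size.
        hits += hist[size]
        curve.append(1 - hits / len(trace))
    return curve


trace = ["a", "b", "c", "a", "b", "c"]
curve = lru_miss_ratio_curve(trace, max_size=3)
is_monotone = all(curve[i] >= curve[i + 1] for i in range(len(curve) - 1))
```

Because a larger LRU cache always holds everything a smaller one holds, the curve never increases as the cache grows.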
