Program Analysis Techniques for Memory Disambiguation Radu Rugina

Program Analysis Techniques for Memory Disambiguation Radu Rugina and Martin Rinard Laboratory for Computer Science Massachusetts Institute of Technology

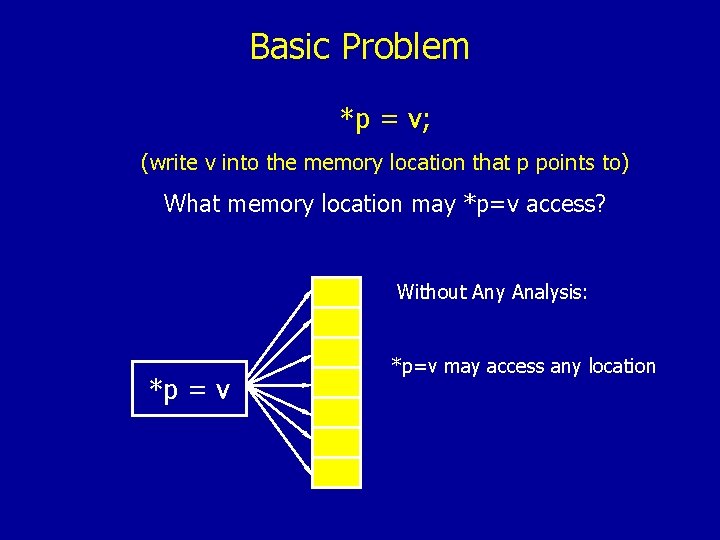

Basic Problem: *p = v; (write v into the memory location that p points to). What memory location may *p = v access? Without any analysis, *p = v may access any location.

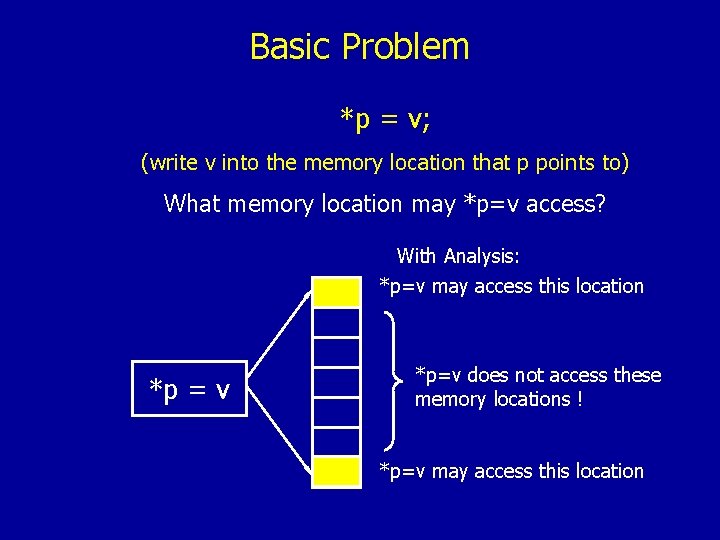

Basic Problem: *p = v; (write v into the memory location that p points to). What memory location may *p = v access? With analysis: the figure marks the locations that *p = v may access and the locations that *p = v does not access.

Static Memory Disambiguation Analyze the program to characterize the memory locations that statements in the program read and write Fundamental problem in program analysis with many applications

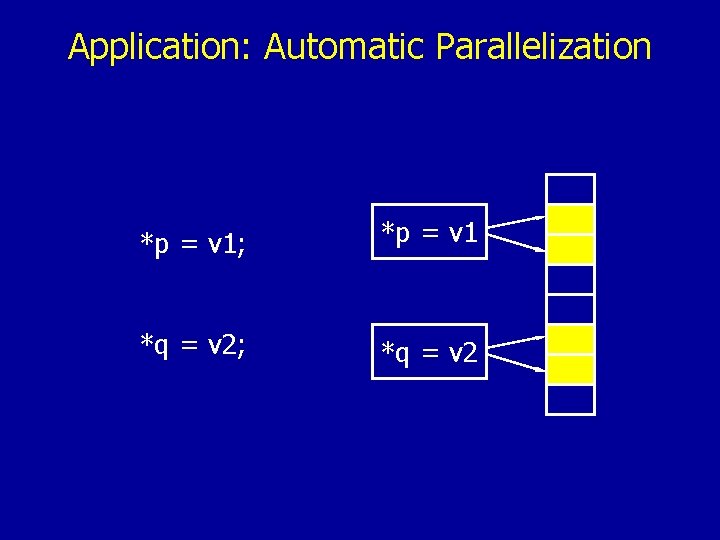

Application: Automatic Parallelization. If *p = v1; and *q = v2; access different locations, the two statements can execute in parallel.

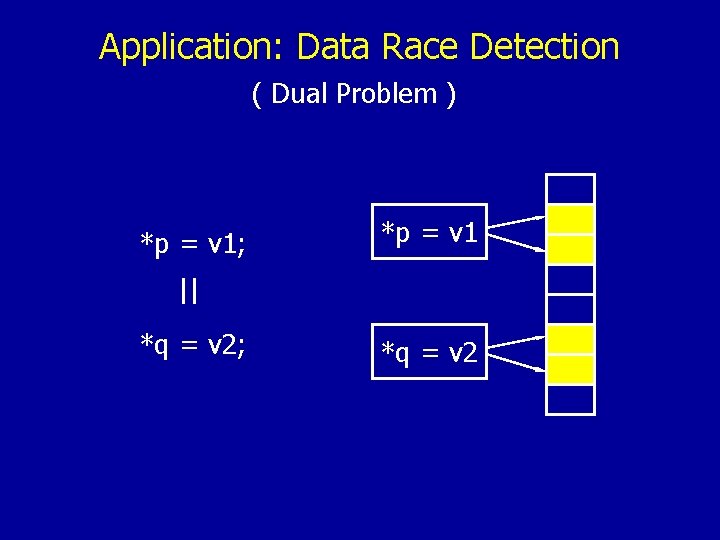

Application: Data Race Detection (Dual Problem). Given *p = v1; || *q = v2; executing in parallel, check that the two statements do not access the same location.

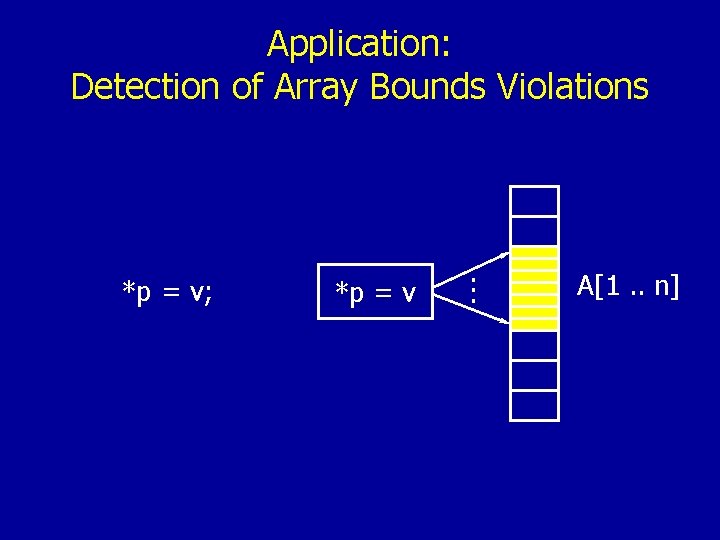

Application: Detection of Array Bounds Violations. Given an array A[1..n] and a statement *p = v;, check that the write stays within the bounds of A.

Many Other Applications • Virtually all program analyses, transformations, and validations require information about how the program accesses memory • Foundation for other analyses and transformations • Understand, maintain, debug programs • Give security guarantees

Analysis Techniques for Memory Disambiguation Pointer Analysis Disambiguates memory accesses via pointers Symbolic Analysis Characterizes accessed subregions within dynamically allocated memory blocks

1. Pointer Analysis

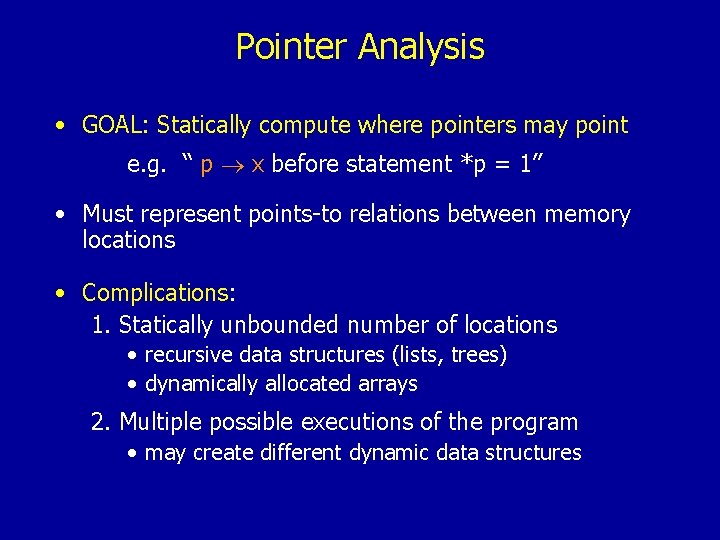

Pointer Analysis • GOAL: Statically compute where pointers may point, e.g. “p → x before statement *p = 1” • Must represent points-to relations between memory locations • Complications: 1. Statically unbounded number of locations • recursive data structures (lists, trees) • dynamically allocated arrays 2. Multiple possible executions of the program • may create different dynamic data structures

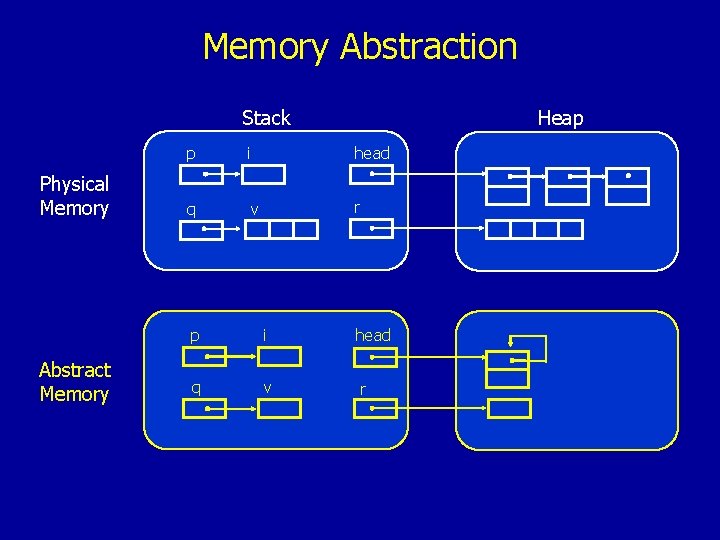

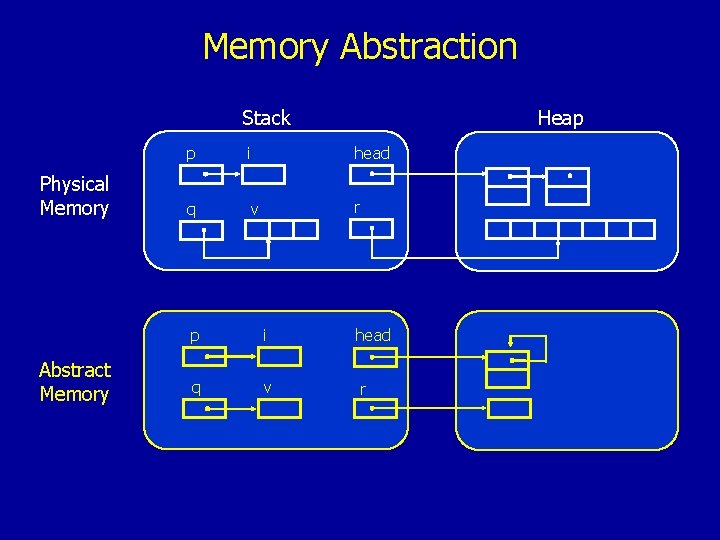

Memory Abstraction Stack Physical Memory Abstract Memory Heap p i head q v r


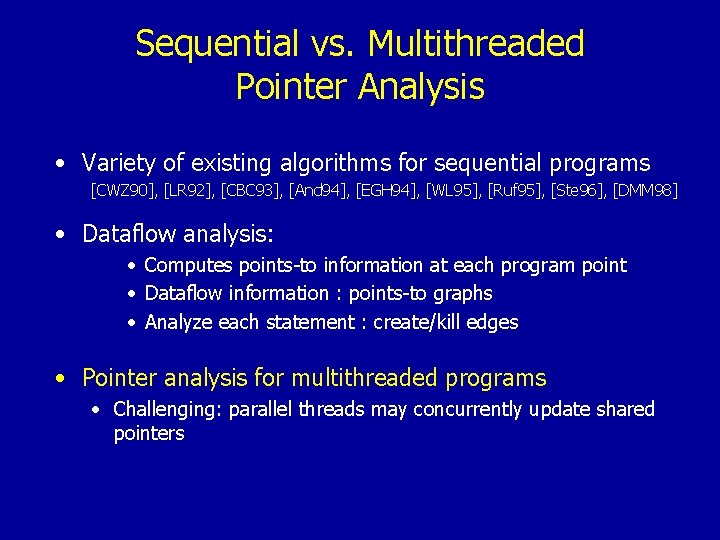

Sequential vs. Multithreaded Pointer Analysis • Variety of existing algorithms for sequential programs [CWZ 90], [LR 92], [CBC 93], [And 94], [EGH 94], [WL 95], [Ruf 95], [Ste 96], [DMM 98] • Dataflow analysis: • Computes points-to information at each program point • Dataflow information : points-to graphs • Analyze each statement : create/kill edges • Pointer analysis for multithreaded programs • Challenging: parallel threads may concurrently update shared pointers

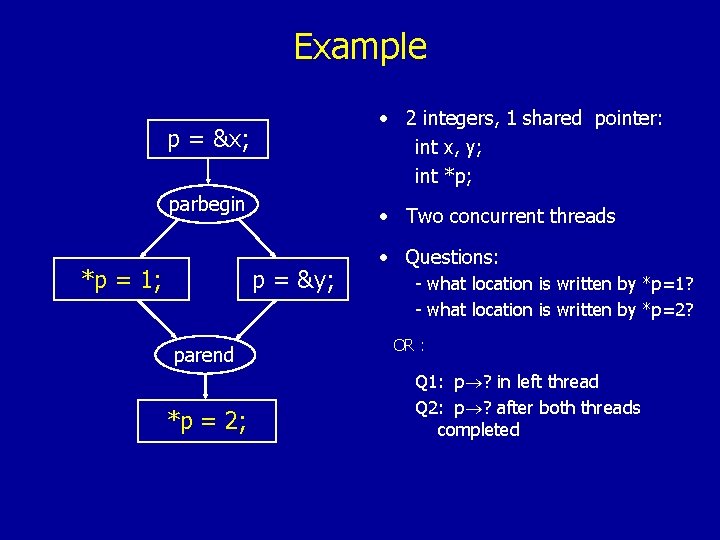

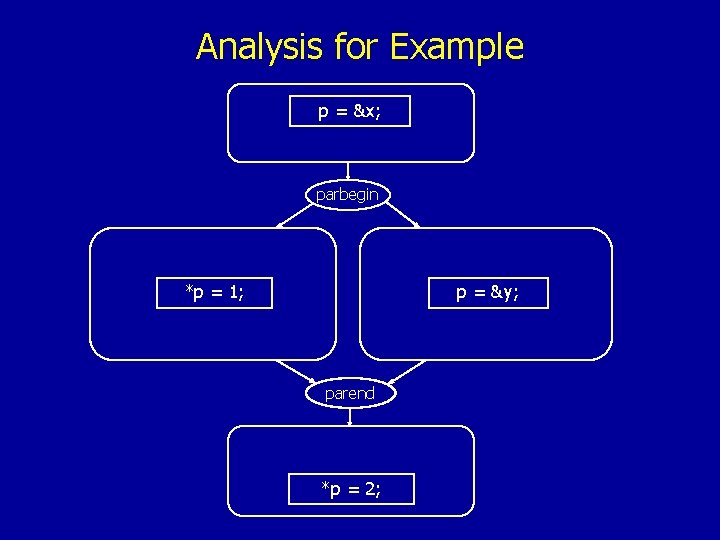

Example
• 2 integers, 1 shared pointer: int x, y; int *p;
• Program: p = &x; parbegin (left thread: *p = 1; right thread: p = &y;) parend *p = 2;
• Two concurrent threads
• Questions: what location is written by *p = 1? What location is written by *p = 2?
• OR: Q1: p → ? in left thread; Q2: p → ? after both threads completed

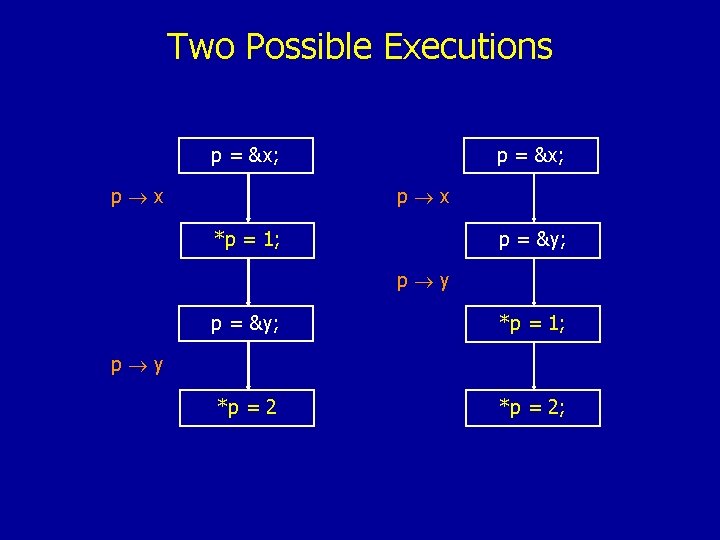

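Two Possible Executions
• Execution 1: p = &x; *p = 1; p = &y; *p = 2; (p → x at *p = 1, p → y at *p = 2)
• Execution 2: p = &x; p = &y; *p = 1; *p = 2; (p → y at both *p = 1 and *p = 2)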
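The two executions can be replayed sequentially. The following is a minimal sketch, assuming the two threads each consist of the single statement shown on the slide; the helper name `run_example` and the `outcome` struct are hypothetical and not part of the original slides.

```c
#include <assert.h>

/* Final values of x and y after one interleaving of the example. */
struct outcome { int x; int y; };

/* Hypothetical helper: left_first != 0 runs "*p = 1" before "p = &y";
   otherwise the right thread's assignment happens first. */
struct outcome run_example(int left_first) {
    int x = 0, y = 0;
    int *p = &x;              /* p = &x;  (before parbegin) */
    if (left_first) {
        *p = 1;               /* left thread: writes x */
        p = &y;               /* right thread */
    } else {
        p = &y;               /* right thread */
        *p = 1;               /* left thread: writes y */
    }
    assert(p == &y);          /* after parend, p points to y either way */
    *p = 2;
    struct outcome r = { x, y };
    return r;
}
```

In the first interleaving `*p = 1` writes `x`; in the second it writes `y`. In both, `*p = 2` writes `y`, which is why a sound analysis must report both targets inside the left thread but only `y` after the join.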

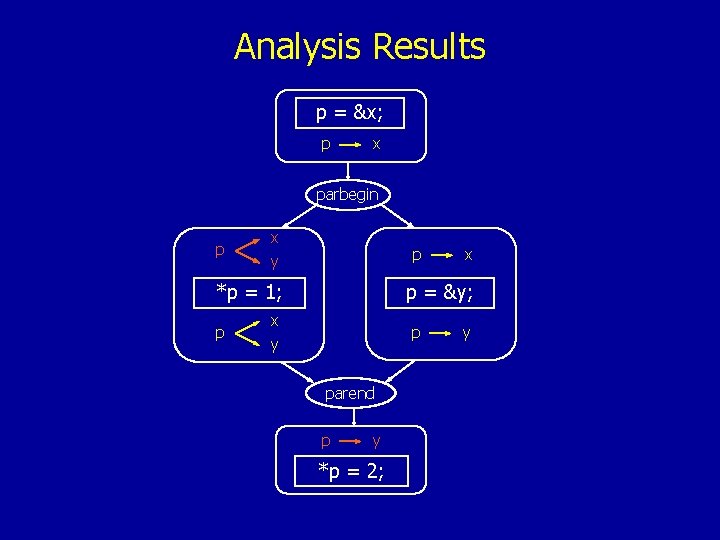

Analysis Results
• After p = &x: p → x
• Left thread, before and after *p = 1: p → x or p → y
• Right thread: p → x before p = &y, p → y after p = &y
• After parend, at *p = 2: p → y

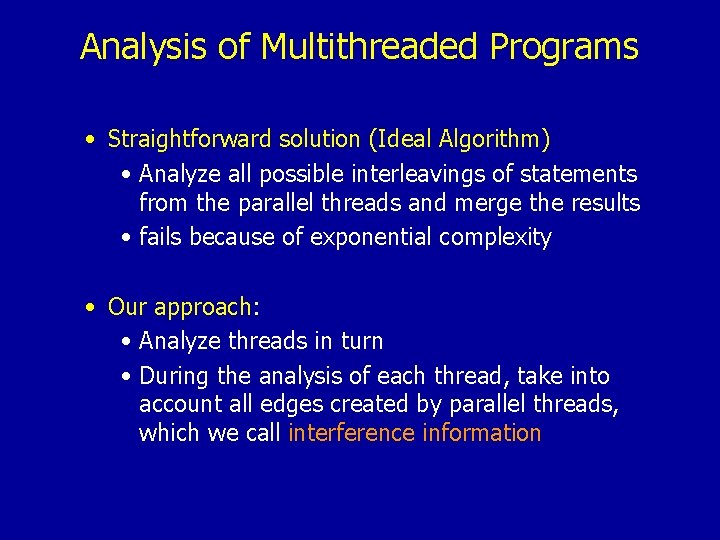

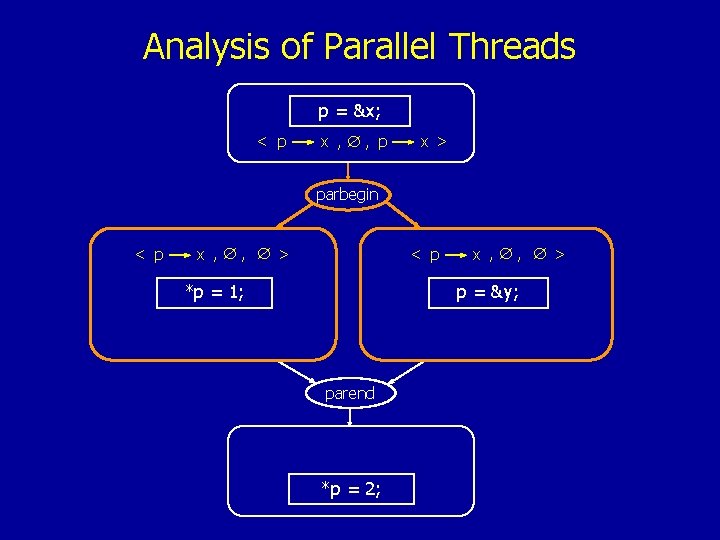

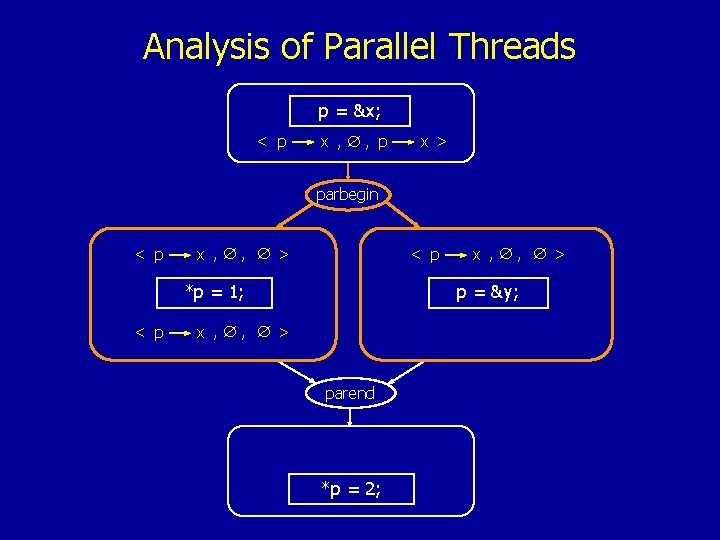

Analysis of Multithreaded Programs • Straightforward solution (Ideal Algorithm) • Analyze all possible interleavings of statements from the parallel threads and merge the results • fails because of exponential complexity • Our approach: • Analyze threads in turn • During the analysis of each thread, take into account all edges created by parallel threads, which we call interference information

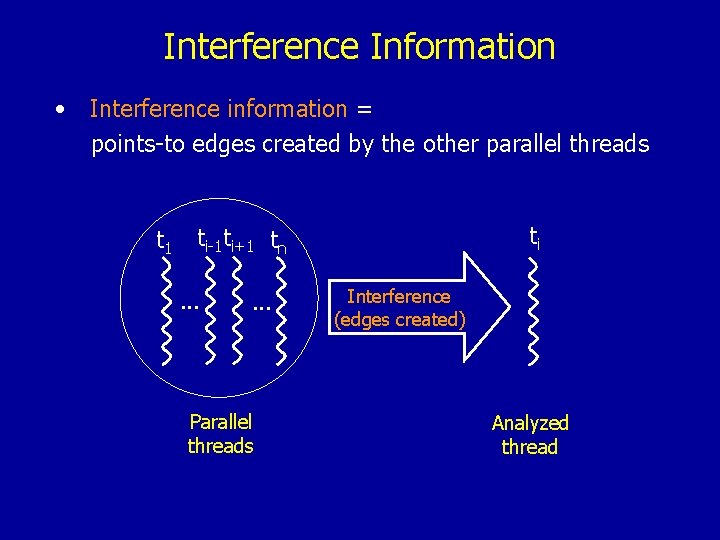

Interference Information • Interference information = points-to edges created by the other parallel threads • For parallel threads t1 … tn, the interference for the analyzed thread ti consists of the edges created by t1 … ti-1 and ti+1 … tn

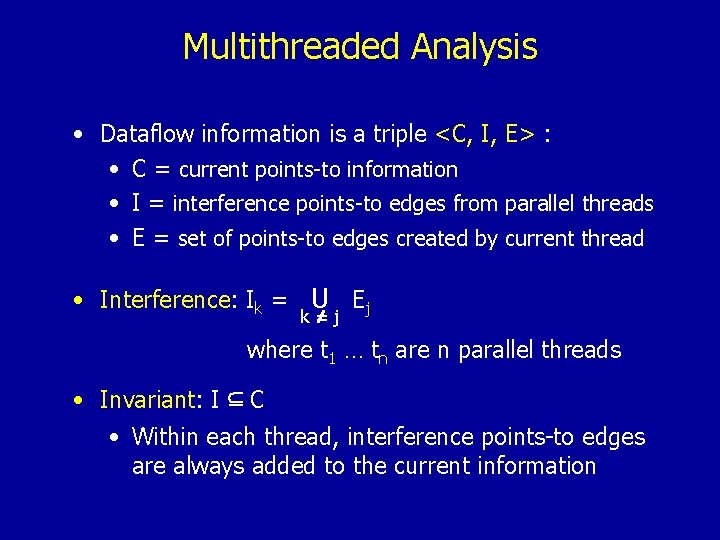

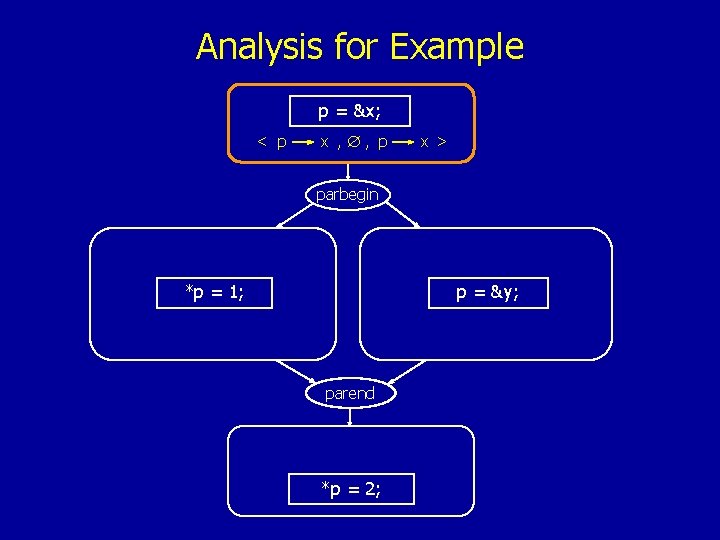

Multithreaded Analysis • Dataflow information is a triple $\langle C, I, E \rangle$: • C = current points-to information • I = interference points-to edges from parallel threads • E = set of points-to edges created by current thread • Interference: $I_k = \bigcup_{j \neq k} E_j$, where t1 … tn are n parallel threads • Invariant: $I \subseteq C$ • Within each thread, interference points-to edges are always added to the current information
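The interference formula can be sketched with bitmask sets. This is an illustrative sketch only: the `edge_set` type, the edge numbering, and the function name `interference` are assumptions, not the representation used in the original implementation.

```c
#include <assert.h>

/* A set of points-to edges as a bitmask: bit i set = edge i present.
   The edge numbering is an assumption of this sketch. */
typedef unsigned int edge_set;

/* I_k = union of E_j over all j != k, for n parallel threads.
   created[j] holds E_j, the edges created by thread j. */
edge_set interference(const edge_set *created, int n, int k) {
    assert(0 <= k && k < n);
    edge_set result = 0;
    for (int j = 0; j < n; j++)
        if (j != k)
            result |= created[j];
    return result;
}
```

Each thread sees every edge created by its siblings but not its own, so the analysis of thread k only has to fold `interference(created, n, k)` into its current information C.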

Analysis for Example
• Program: p = &x; parbegin (left thread: *p = 1; right thread: p = &y;) parend *p = 2;
• After p = &x: $\langle \{p \to x\},\ \emptyset,\ \{p \to x\} \rangle$

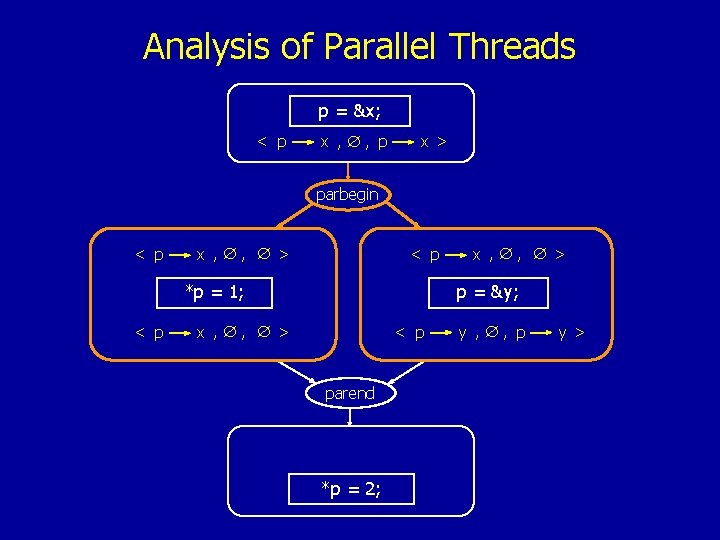

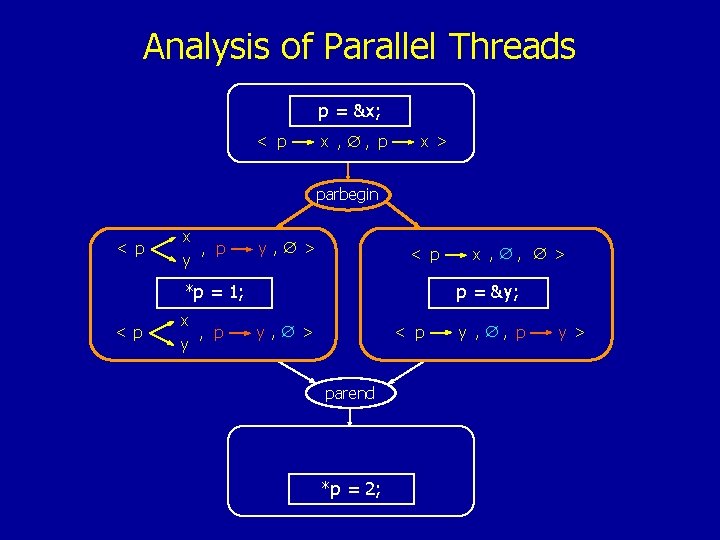

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , , > < p *p = 1; x , , > p = &y; parend *p = 2;

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , , > < p *p = 1; < p x , , > p = &y; x , , > parend *p = 2;

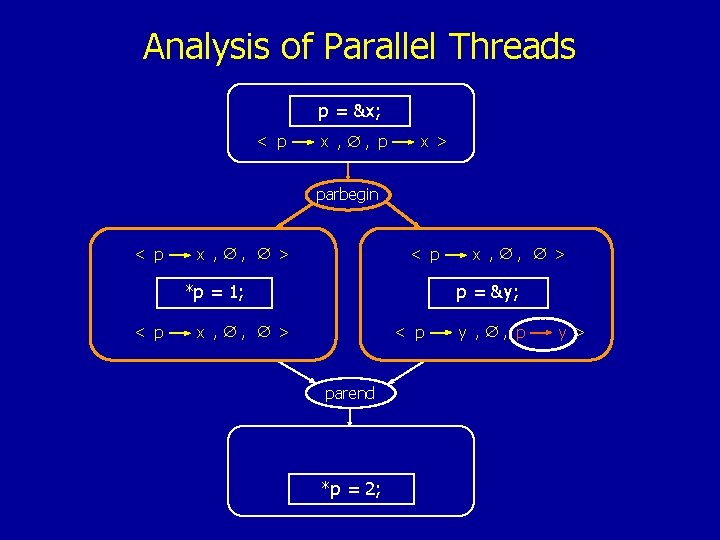

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , , > < p *p = 1; < p x , , > p = &y; x , , > < p parend *p = 2; y , , p y >

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , , > < p *p = 1; < p x , , > p = &y; x , , > < p parend *p = 2; y , , p y >

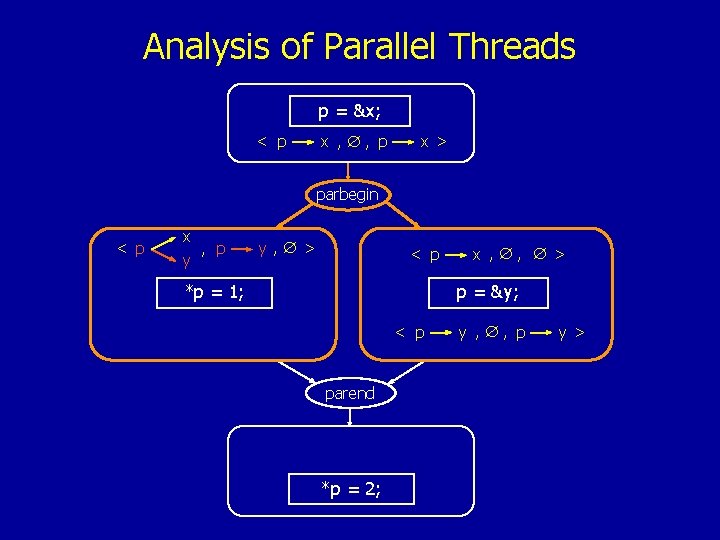

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , p y y, > < p *p = 1; x , , > p = &y; < p parend *p = 2; y , , p y >

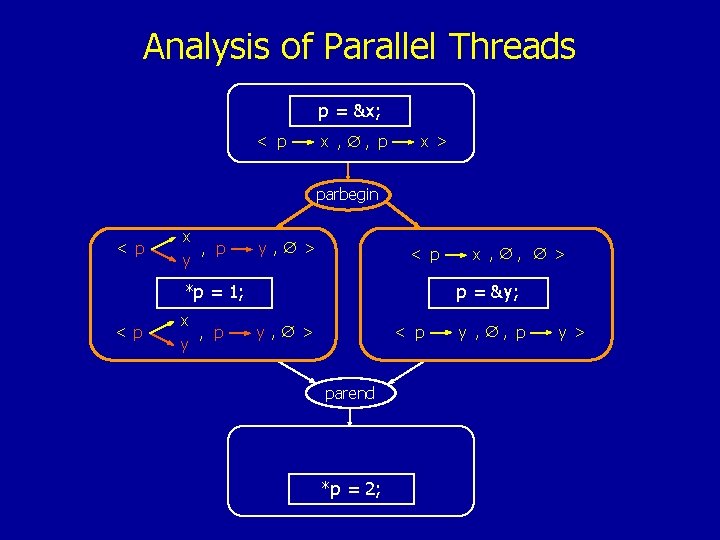

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , p y y, > < p *p = 1; < p x , p y x , , > p = &y; y, > < p parend *p = 2; y , , p y >

Analysis of Parallel Threads p = &x; < p x , , p x > parbegin < p x , p y y, > < p *p = 1; < p x , p y x , , > p = &y; y, > < p parend *p = 2; y , , p y >

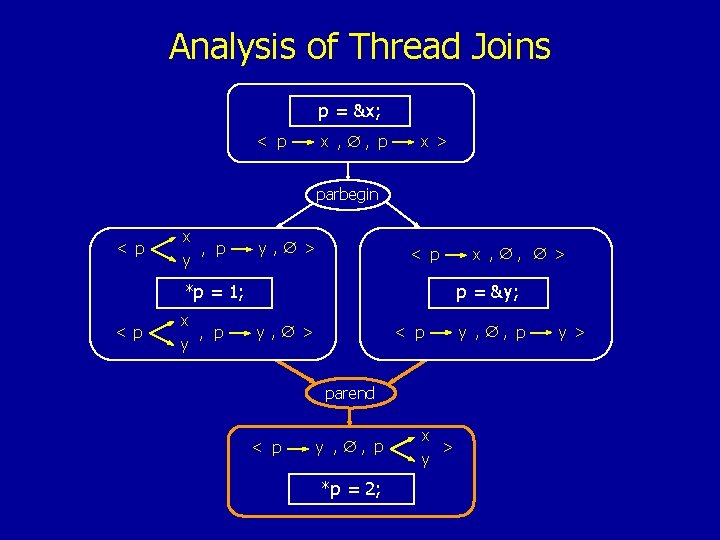

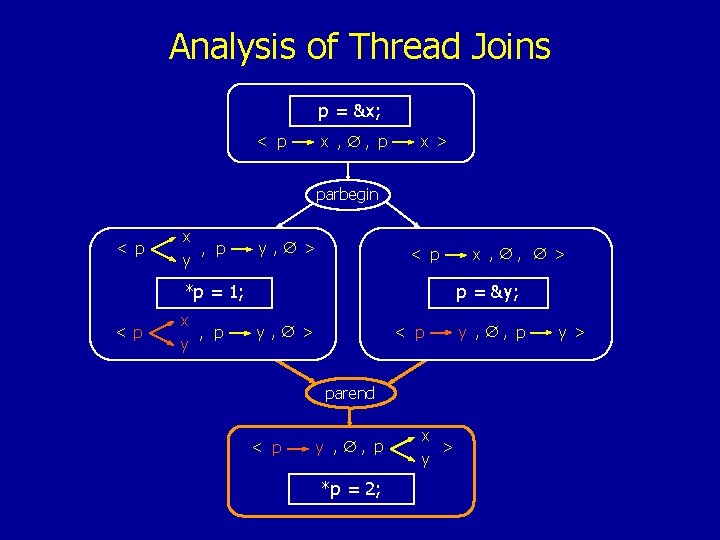

Analysis of Thread Joins p = &x; < p x , , p x > parbegin < p x , p y y, > < p *p = 1; < p x , p y x , , > p = &y; y, > < p parend < p y , , p *p = 2; x > y y , , p y >

Analysis of Thread Joins p = &x; < p x , , p x > parbegin < p x , p y y, > < p *p = 1; < p x , p y x , , > p = &y; y, > < p parend < p y , , p *p = 2; x > y y , , p y >

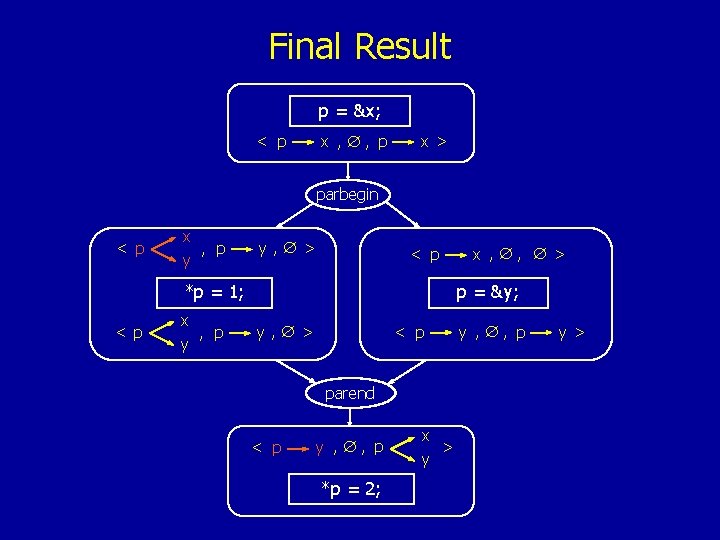

Final Result p = &x; < p x , , p x > parbegin < p x , p y y, > < p *p = 1; < p x , p y x , , > p = &y; y, > < p parend < p y , , p *p = 2; x > y y , , p y >

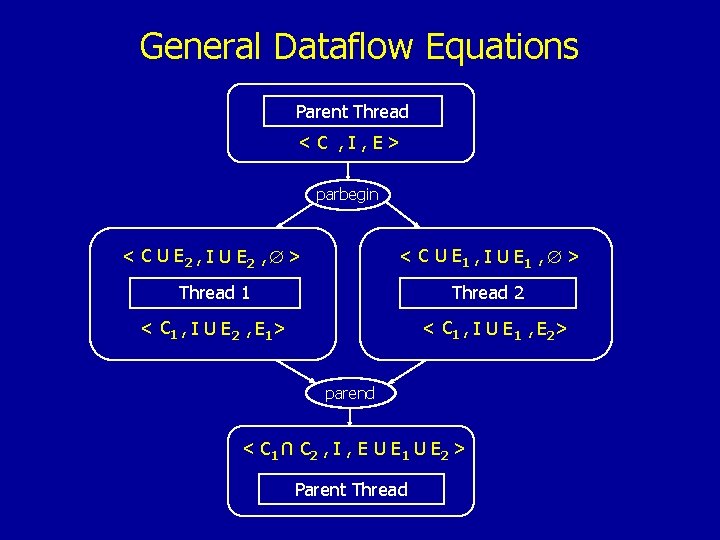

General Dataflow Equations Parent Thread < C , I , E> parbegin < C U E 2 , I U E 2 , > < C U E 1 , I U E 1 , > Thread 1 Thread 2 < C 1 , I U E 2 , E 1 > < C 1 , I U E 1 , E 2 > parend U < C 1 C 2 , I , E U E 1 U E 2 > Parent Thread

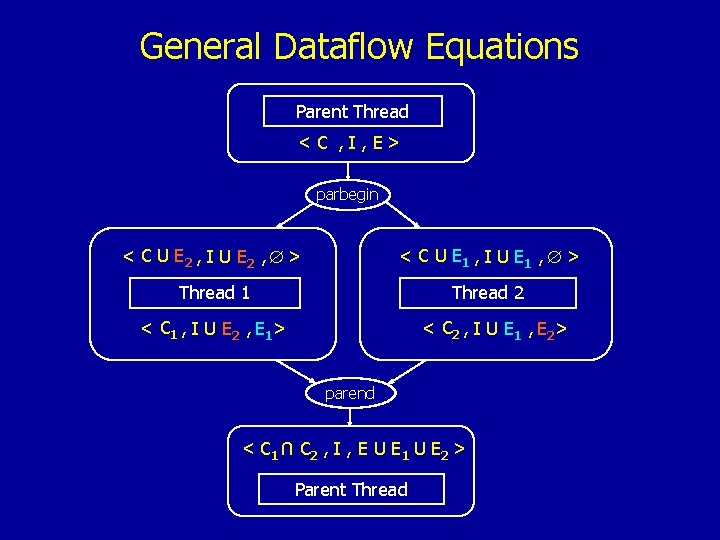

General Dataflow Equations Parent Thread < C , I , E> parbegin < C U E 2 , I U E 2 , > < C U E 1 , I U E 1 , > Thread 1 Thread 2 < C 1 , I U E 2 , E 1 > < C 2 , I U E 1 , E 2 > parend U < C 1 C 2 , I , E U E 1 U E 2 > Parent Thread

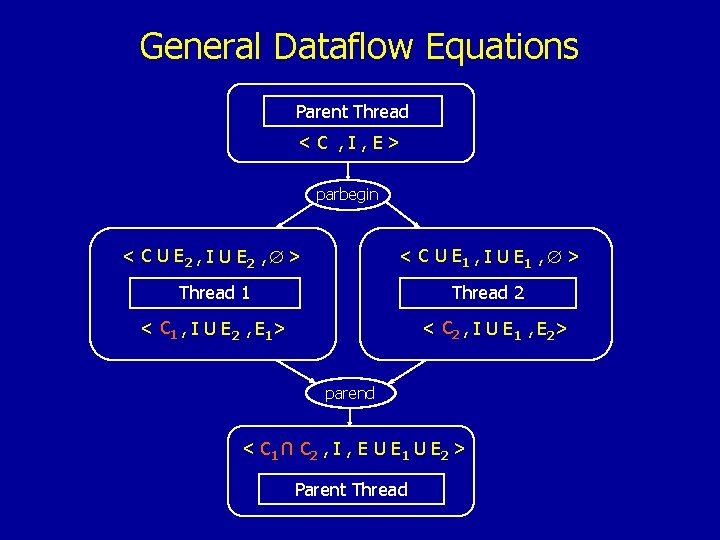

General Dataflow Equations Parent Thread < C , I , E> parbegin < C U E 2 , I U E 2 , > < C U E 1 , I U E 1 , > Thread 1 Thread 2 < C 1 , I U E 2 , E 1 > < C 2 , I U E 1 , E 2 > parend U < C 1 C 2 , I , E U E 1 U E 2 > Parent Thread
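The equations can be exercised on the two-thread example. The sketch below is a simplified illustration under stated assumptions: the only edges are p → x and p → y (encoded as the hypothetical constants `P_X` and `P_Y`), the two thread bodies are hard-coded transfer functions, and the join uses the intersection of the threads' current information. It is not the SUIF implementation.

```c
#include <assert.h>

enum { P_X = 1, P_Y = 2 };              /* edges p->x and p->y (bit flags) */

typedef struct { unsigned C, I, E; } triple;

/* Left thread: "*p = 1" stores an integer, so no points-to edge changes. */
static triple left_thread(triple in) { return in; }

/* Right thread: "p = &y" kills p's old edges, creates p->y,
   and re-adds the interference edges to keep I a subset of C. */
static triple right_thread(triple in) {
    triple out;
    out.C = P_Y | in.I;
    out.I = in.I;
    out.E = in.E | P_Y;
    return out;
}

/* Iterate the parbegin/parend equations until E1 and E2 stabilize. */
triple analyze_par(triple parent) {
    unsigned e1 = 0, e2 = 0;
    triple o1, o2;
    for (;;) {
        triple in1 = { parent.C | e2, parent.I | e2, 0 };
        triple in2 = { parent.C | e1, parent.I | e1, 0 };
        o1 = left_thread(in1);
        o2 = right_thread(in2);
        if (o1.E == e1 && o2.E == e2) break;
        e1 = o1.E;
        e2 = o2.E;
    }
    triple join = { o1.C & o2.C, parent.I, parent.E | e1 | e2 };
    assert((join.I & ~join.C) == 0);    /* invariant: I is a subset of C */
    return join;
}
```

Starting from the triple after `p = &x`, the loop needs two passes: the first discovers that the right thread creates p → y, and the second feeds that edge to the left thread as interference. The join then yields C = {p → y} and E = {p → x, p → y}, matching the final result on the slides.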

Overall Algorithm • Extensions: • Parallel loops • Conditionally spawned threads • Recursively generated concurrency • Flow-sensitive at intra-procedural level • Context-sensitive at inter-procedural level

Algorithm Evaluation • Soundness: • the multithreaded algorithm conservatively approximates all possible interleavings of statements from the parallel threads • Termination of fixed-point algorithms: • follows from the monotonicity of the transfer functions • Complexity of fixed-point algorithms: • worst-case polynomial complexity: $O(n^4)$, where n = number of statements • Precision of analysis: • if the concurrent threads do not (pointer-)interfere then this algorithm gives the same result as the Ideal Algorithm

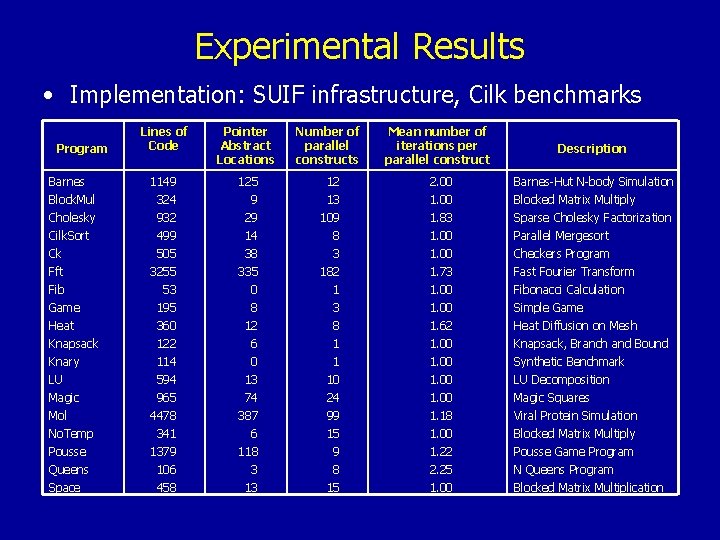

Experimental Results • Implementation: SUIF infrastructure, Cilk benchmarks

| Program | Description | Lines of Code | Pointer Abstract Locations | Number of parallel constructs |
|---|---|---|---|---|
| Barnes | Barnes-Hut N-body Simulation | 1149 | 125 | 12 |
| BlockMul | Blocked Matrix Multiply | 324 | 9 | 13 |
| Cholesky | Sparse Cholesky Factorization | 932 | 29 | 109 |
| CilkSort | Parallel Mergesort | 499 | 14 | 8 |
| Ck | Checkers Program | 505 | 38 | 3 |
| Fft | Fast Fourier Transform | 3255 | 335 | 182 |
| Fib | Fibonacci Calculation | 53 | 0 | 1 |
| Game | Simple Game | 195 | 8 | 3 |
| Heat | Heat Diffusion on Mesh | 360 | 12 | 8 |
| Knapsack | Knapsack, Branch and Bound | 122 | 6 | 1 |
| Knary | Synthetic Benchmark | 114 | 0 | 1 |
| LU | LU Decomposition | 594 | 13 | 10 |
| Magic | Magic Squares | 965 | 74 | 24 |
| Mol | Viral Protein Simulation | 4478 | 387 | 99 |
| NoTemp | Blocked Matrix Multiply | 341 | 6 | 15 |
| Pousse | Pousse Game Program | 1379 | 118 | 9 |
| Queens | N Queens Program | 106 | 3 | 8 |
| Space | Blocked Matrix Multiplication Program | 458 | 13 | 15 |

Mean number of iterations per parallel construct: Pousse 1.22, Queens 2.25, Space 1.00. For the first fifteen programs only nine values survive (2.00, 1.83, 1.00, 1.73, 1.00, 1.62, 1.00, 1.18, 1.00) and their row alignment is not recoverable.

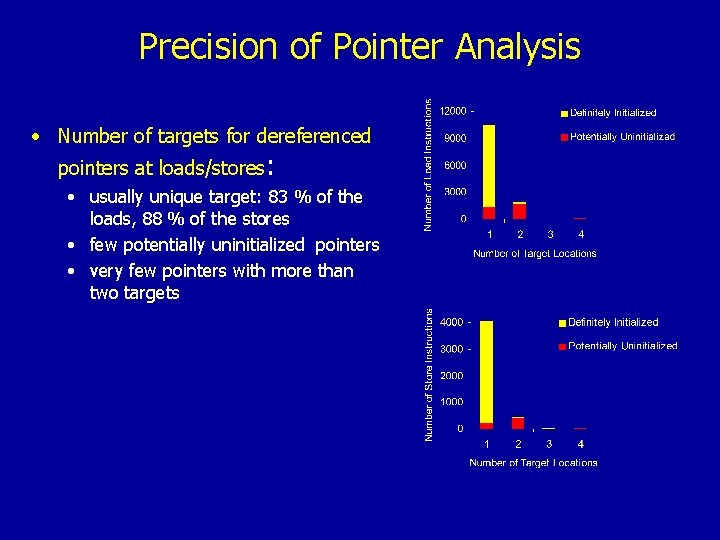

Precision of Pointer Analysis • Number of targets for dereferenced pointers at loads/stores: • usually unique target: 83 % of the loads, 88 % of the stores • few potentially uninitialized pointers • very few pointers with more than two targets

What Pointer Analysis Gives Us • Disambiguation of Memory Accesses Via Pointers • Pointer-based loads and stores: use pointer analysis results to derive the memory locations that each pointer-based load or store statement accesses • MOD-REF or READ-WRITE SETS Analysis: • All loads and stores • Procedures: use the memory access information for loads and stores to compute what locations each procedure accesses

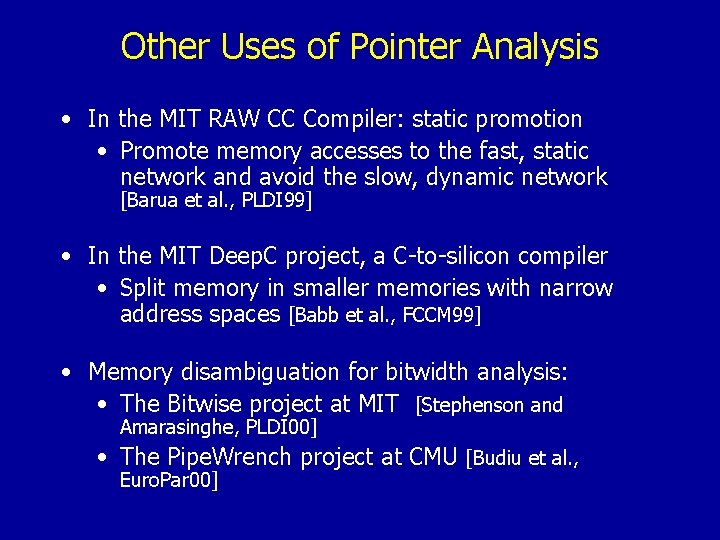

Other Uses of Pointer Analysis • In the MIT RAW CC Compiler: static promotion • Promote memory accesses to the fast, static network and avoid the slow, dynamic network [Barua et al., PLDI 99] • In the MIT DeepC project, a C-to-silicon compiler • Split memory into smaller memories with narrow address spaces [Babb et al., FCCM 99] • Memory disambiguation for bitwidth analysis: • The Bitwise project at MIT [Stephenson and Amarasinghe, PLDI 00] • The PipeWrench project at CMU [Budiu et al., Euro-Par 00]

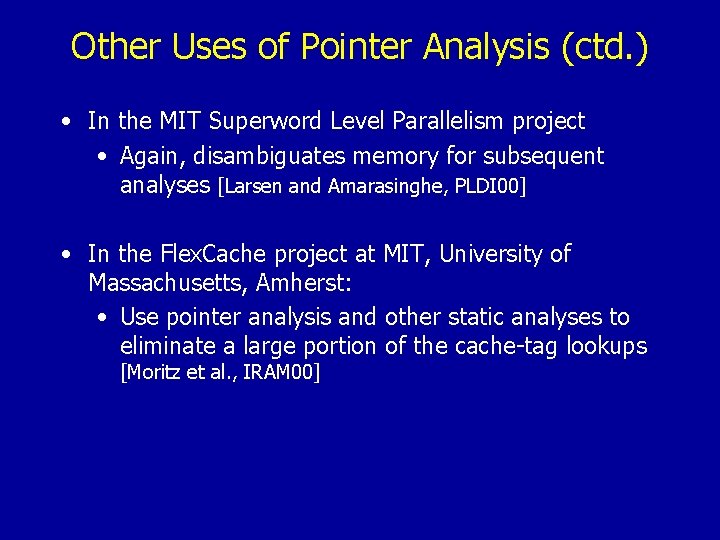

Other Uses of Pointer Analysis (ctd.) • In the MIT Superword Level Parallelism project • Again, disambiguates memory for subsequent analyses [Larsen and Amarasinghe, PLDI 00] • In the FlexCache project at MIT and the University of Massachusetts, Amherst: • Use pointer analysis and other static analyses to eliminate a large portion of the cache-tag lookups [Moritz et al., IRAM 00]

Is Pointer Analysis Always Enough to Disambiguate Memory?

Is Pointer Analysis Always Enough to Disambiguate Memory? No

Is Pointer Analysis Always Enough to Disambiguate Memory? • Pointer analysis uses a memory abstraction that merges together all elements within allocated memory blocks • Sometimes need more sophisticated techniques to characterize accessed regions within allocated memory blocks

Motivating Example

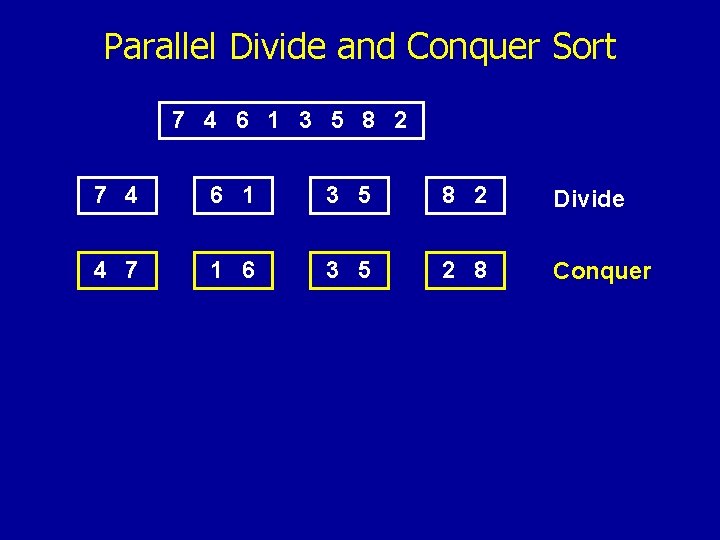

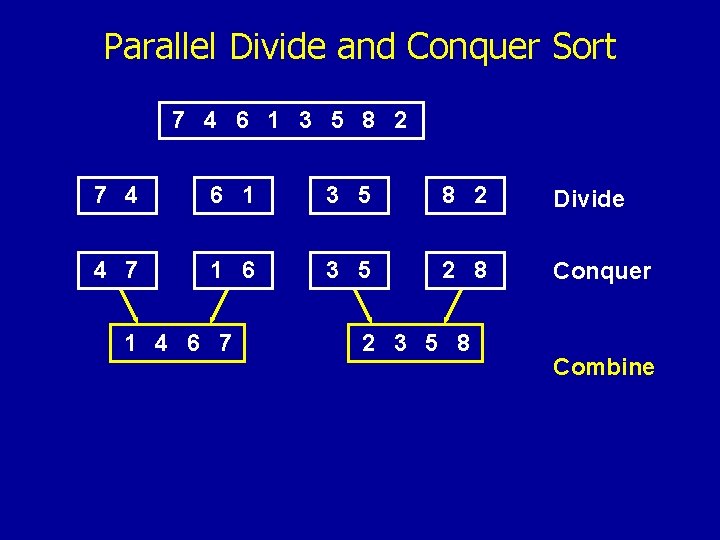

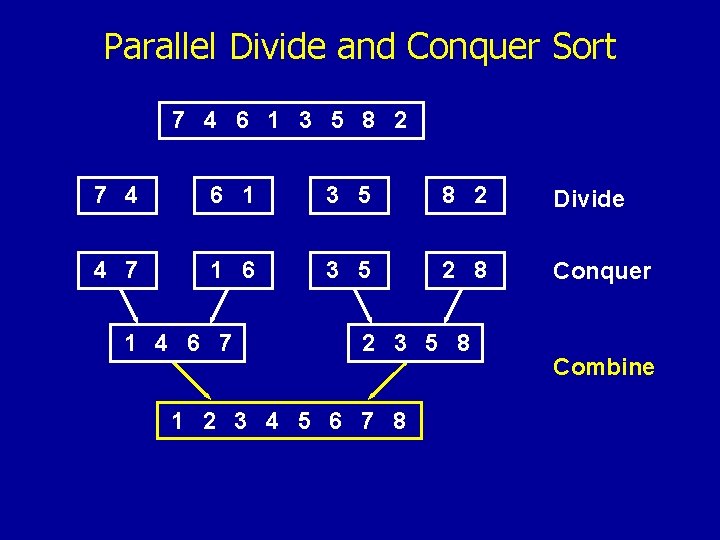

Parallel Divide and Conquer Sort 7 4 6 1 3 5 8 2

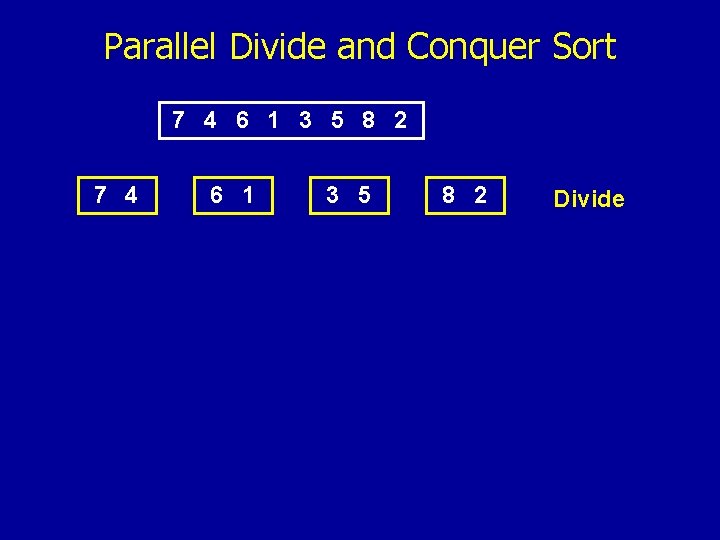

Parallel Divide and Conquer Sort
• Input: 7 4 6 1 3 5 8 2
• Divide: 7 4 | 6 1 | 3 5 | 8 2
• Conquer: 4 7 | 1 6 | 3 5 | 2 8
• Combine: 1 4 6 7 | 2 3 5 8
• Combine: 1 2 3 4 5 6 7 8

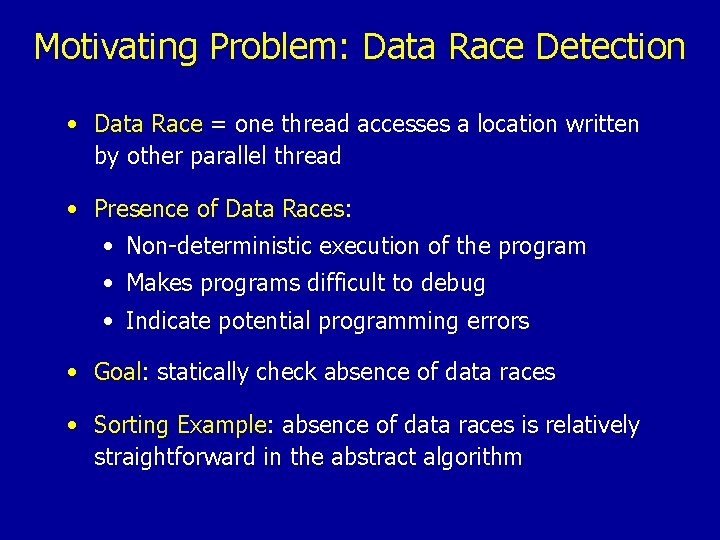

Motivating Problem: Data Race Detection • Data Race = one thread accesses a location written by another parallel thread • Presence of Data Races: • Non-deterministic execution of the program • Makes programs difficult to debug • Indicates potential programming errors • Goal: statically check absence of data races • Sorting Example: absence of data races is relatively straightforward in the abstract algorithm

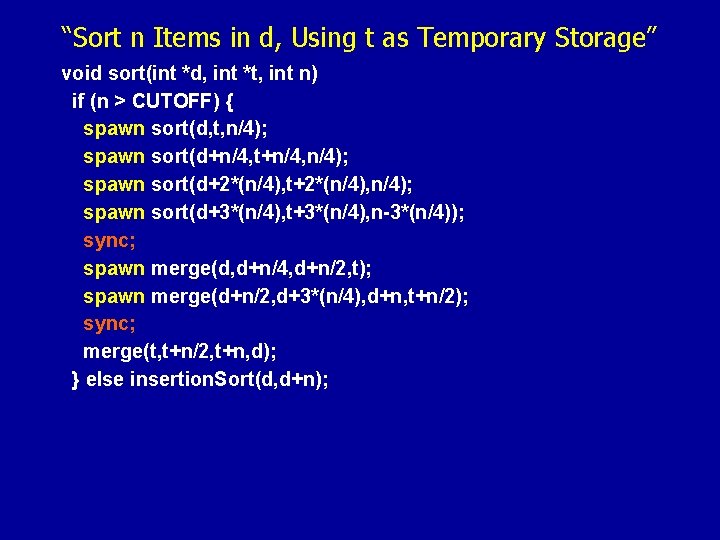

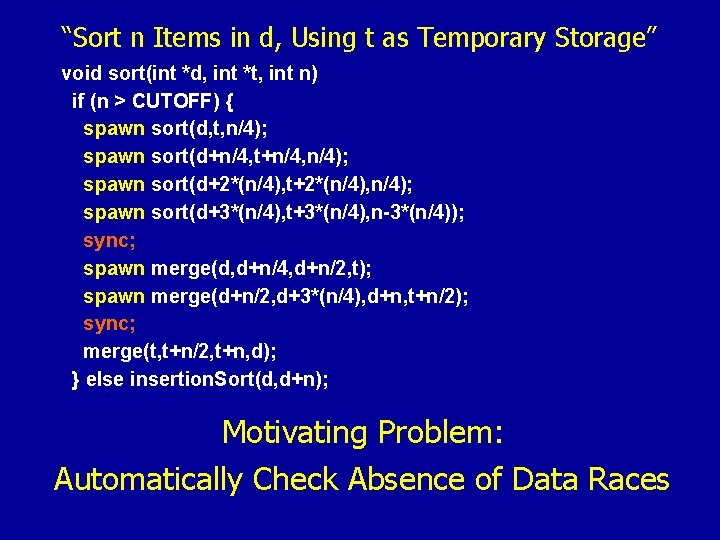

“Sort n Items in d, Using t as Temporary Storage”

    void sort(int *d, int *t, int n) {
      if (n > CUTOFF) {
        spawn sort(d, t, n/4);
        spawn sort(d+n/4, t+n/4, n/4);
        spawn sort(d+2*(n/4), t+2*(n/4), n/4);
        spawn sort(d+3*(n/4), t+3*(n/4), n-3*(n/4));
        sync;
        spawn merge(d, d+n/4, d+n/2, t);
        spawn merge(d+n/2, d+3*(n/4), d+n, t+n/2);
        sync;
        merge(t, t+n/2, t+n, d);
      } else insertionSort(d, d+n);
    }

Motivating Problem: Automatically Check Absence of Data Races

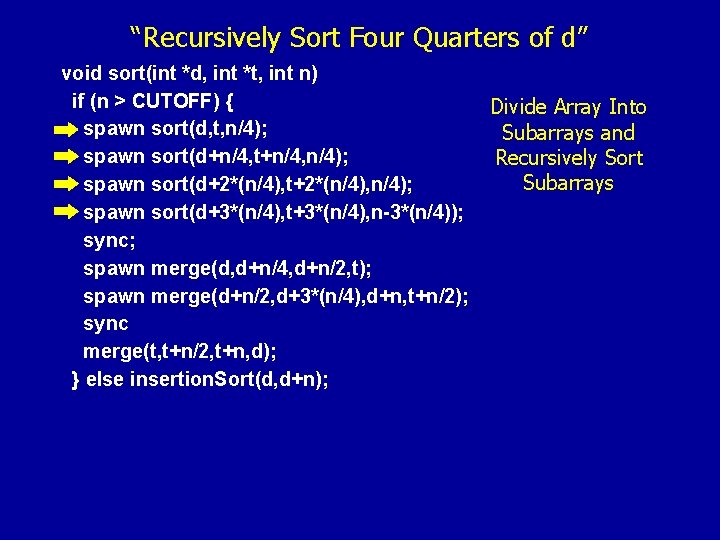

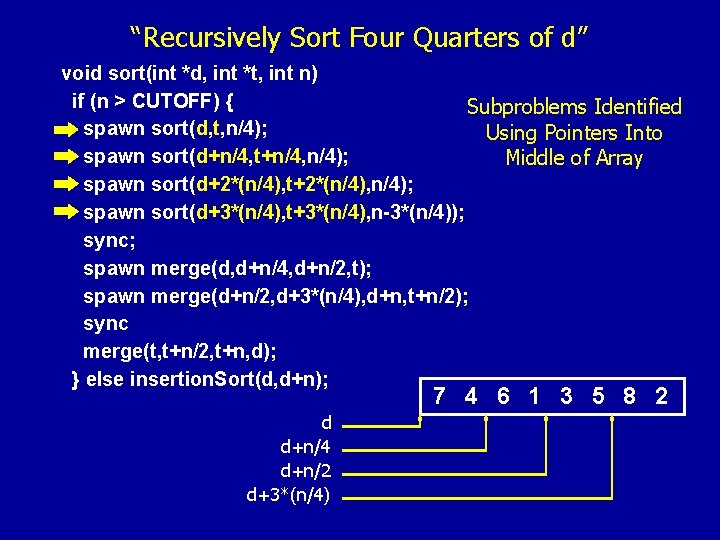

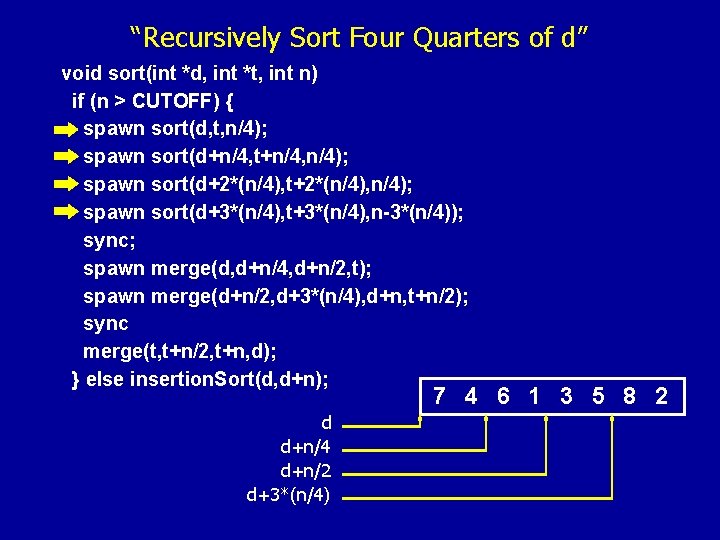

“Recursively Sort Four Quarters of d” void sort(int *d, int *t, int n) if (n > CUTOFF) { spawn sort(d, t, n/4); spawn sort(d+n/4, t+n/4, n/4); spawn sort(d+2*(n/4), t+2*(n/4), n/4); spawn sort(d+3*(n/4), t+3*(n/4), n-3*(n/4)); sync; spawn merge(d, d+n/4, d+n/2, t); spawn merge(d+n/2, d+3*(n/4), d+n, t+n/2); sync merge(t, t+n/2, t+n, d); } else insertion. Sort(d, d+n); Divide Array Into Subarrays and Recursively Sort Subarrays

“Recursively Sort Four Quarters of d” void sort(int *d, int *t, int n) if (n > CUTOFF) { Subproblems Identified spawn sort(d, t, n/4); Using Pointers Into spawn sort(d+n/4, t+n/4, n/4); Middle of Array spawn sort(d+2*(n/4), t+2*(n/4), n/4); spawn sort(d+3*(n/4), t+3*(n/4), n-3*(n/4)); sync; spawn merge(d, d+n/4, d+n/2, t); spawn merge(d+n/2, d+3*(n/4), d+n, t+n/2); sync merge(t, t+n/2, t+n, d); } else insertion. Sort(d, d+n); 7 4 6 1 3 5 8 2 d d+n/4 d+n/2 d+3*(n/4)

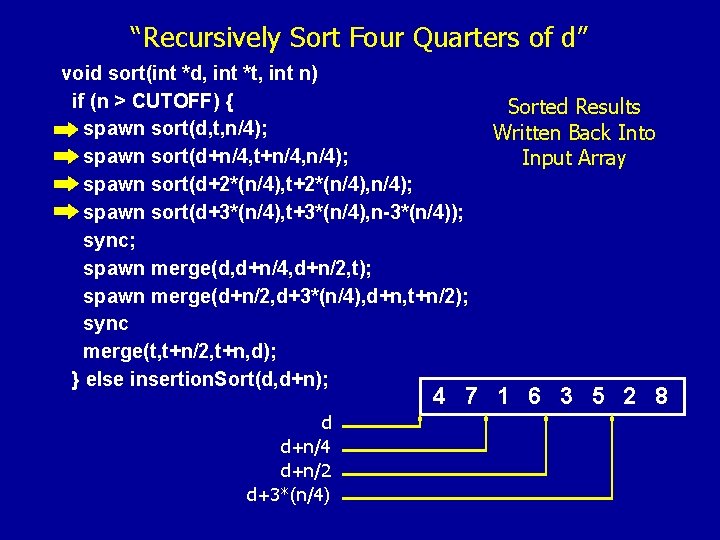

“Recursively Sort Four Quarters of d” void sort(int *d, int *t, int n) if (n > CUTOFF) { spawn sort(d, t, n/4); spawn sort(d+n/4, t+n/4, n/4); spawn sort(d+2*(n/4), t+2*(n/4), n/4); spawn sort(d+3*(n/4), t+3*(n/4), n-3*(n/4)); sync; spawn merge(d, d+n/4, d+n/2, t); spawn merge(d+n/2, d+3*(n/4), d+n, t+n/2); sync merge(t, t+n/2, t+n, d); } else insertion. Sort(d, d+n); 7 4 6 1 3 5 8 2 d d+n/4 d+n/2 d+3*(n/4)

“Recursively Sort Four Quarters of d” void sort(int *d, int *t, int n) if (n > CUTOFF) { spawn sort(d, t, n/4); spawn sort(d+n/4, t+n/4, n/4); spawn sort(d+2*(n/4), t+2*(n/4), n/4); spawn sort(d+3*(n/4), t+3*(n/4), n-3*(n/4)); sync; spawn merge(d, d+n/4, d+n/2, t); spawn merge(d+n/2, d+3*(n/4), d+n, t+n/2); sync merge(t, t+n/2, t+n, d); } else insertion. Sort(d, d+n); Sorted Results Written Back Into Input Array 4 7 1 6 3 5 2 8 d d+n/4 d+n/2 d+3*(n/4)

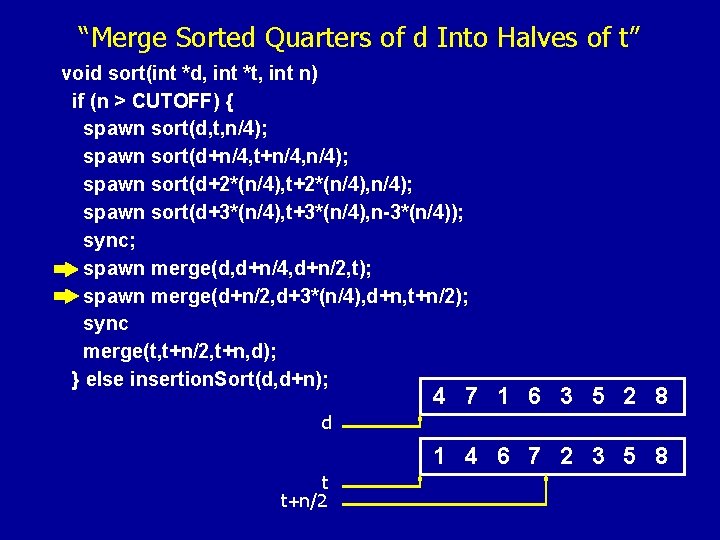

“Merge Sorted Quarters of d Into Halves of t”
Relevant statements in sort:

    spawn merge(d, d+n/4, d+n/2, t);
    spawn merge(d+n/2, d+3*(n/4), d+n, t+n/2);
    sync;

• d: 4 7 | 1 6 | 3 5 | 2 8
• t and t+n/2: 1 4 6 7 | 2 3 5 8

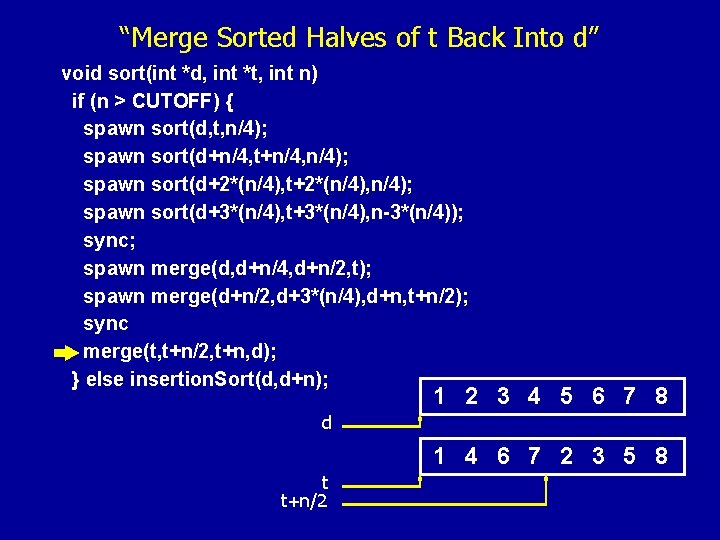

“Merge Sorted Halves of t Back Into d”
Relevant statement in sort:

    merge(t, t+n/2, t+n, d);

• t and t+n/2: 1 4 6 7 | 2 3 5 8
• d: 1 2 3 4 5 6 7 8

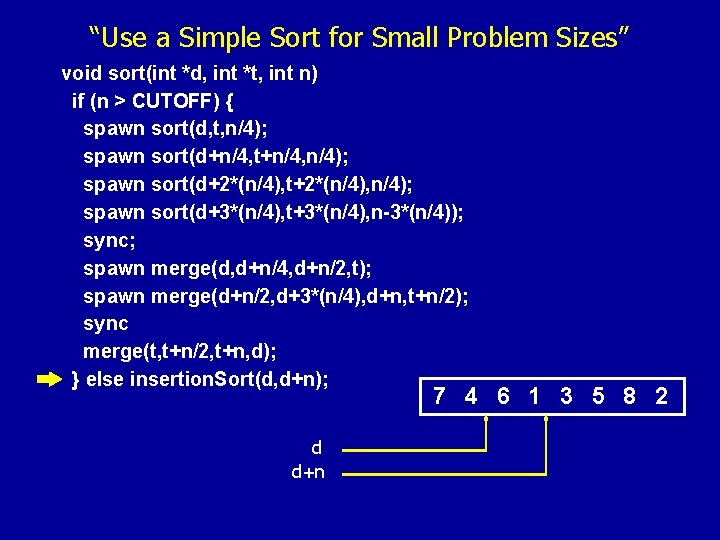

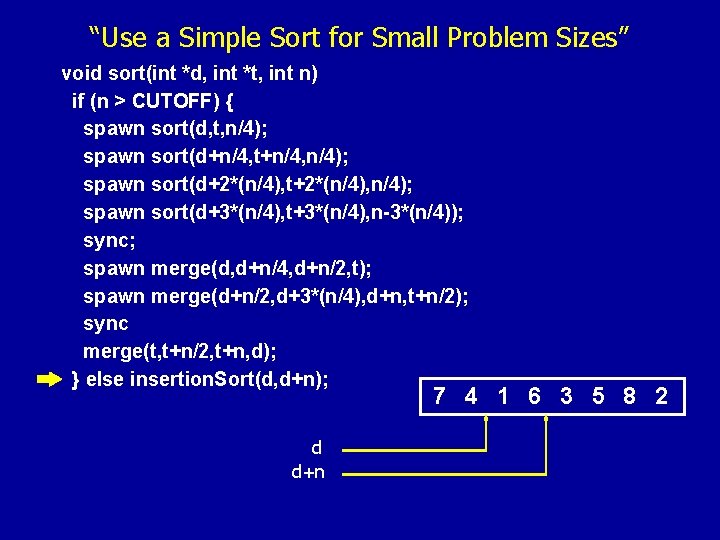

“Use a Simple Sort for Small Problem Sizes”
Relevant statement in sort:

    } else insertionSort(d, d+n);

• Array between d and d+n: 7 4 6 1 3 5 8 2, then 7 4 1 6 3 5 8 2

What Do You Need To Know To Check the Absence of Data Races?

What Do You Need To Know To Check the Absence of Data Races? Points-To Information Is Not Enough ! Parallel Threads Access The Same Array

What Do You Need To Know To Check the Absence of Data Races? Key Piece of Information: Symbolic Information About Accessed Memory Regions

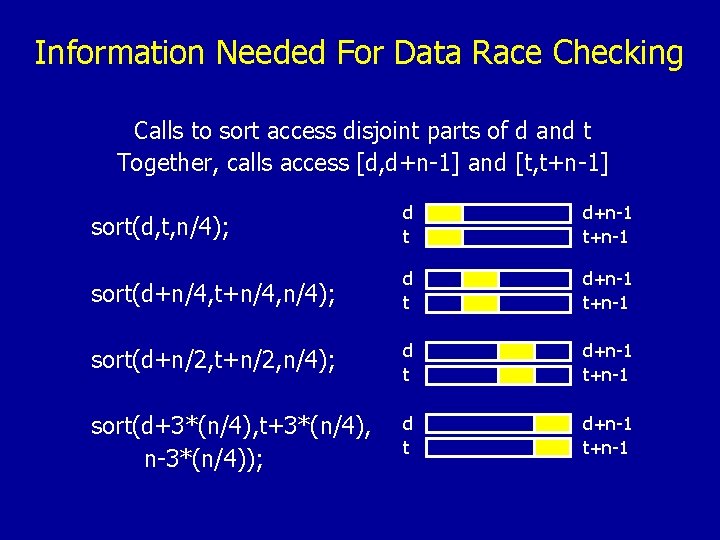

Information Needed For Data Race Checking
• Calls to sort access disjoint parts of d and t
• Together, the calls access [d, d+n-1] and [t, t+n-1]
• Calls: sort(d, t, n/4); sort(d+n/4, t+n/4, n/4); sort(d+n/2, t+n/2, n/4); sort(d+3*(n/4), t+3*(n/4), n-3*(n/4));

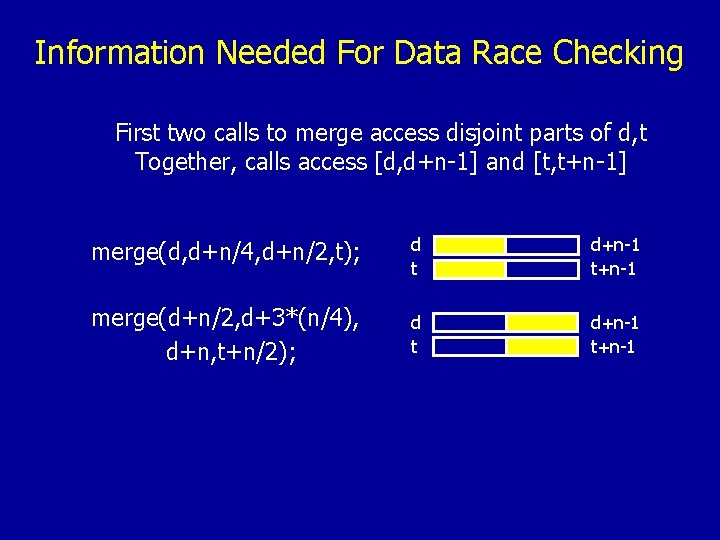

Information Needed For Data Race Checking
• First two calls to merge access disjoint parts of d and t
• Together, the calls access [d, d+n-1] and [t, t+n-1]
• Calls: merge(d, d+n/4, d+n/2, t); merge(d+n/2, d+3*(n/4), d+n, t+n/2);


Information Needed For Data Race Checking • Calls to insertionSort access [d, d+n-1] • Call: insertionSort(d, d+n);

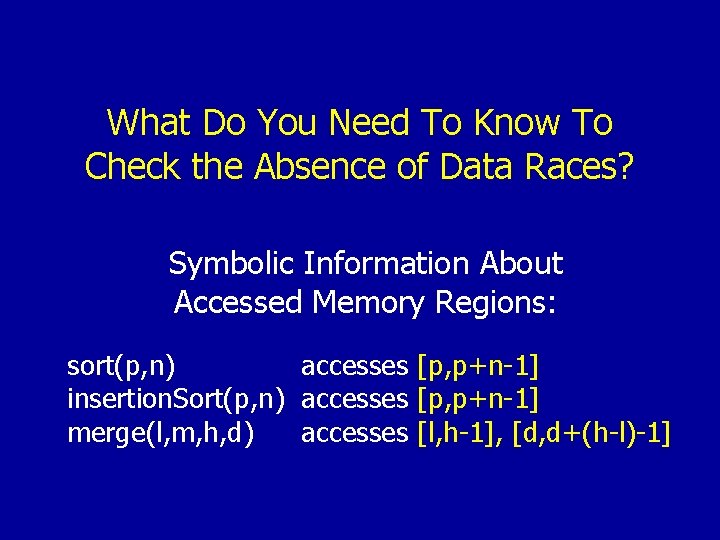

What Do You Need To Know To Check the Absence of Data Races? Symbolic Information About Accessed Memory Regions: • sort(p, n) accesses [p, p+n-1] • insertionSort(p, n) accesses [p, p+n-1] • merge(l, m, h, d) accesses [l, h-1], [d, d+(h-l)-1]

How Hard Is It To Figure These Things Out?

How Hard Is It To Figure These Things Out? Challenging

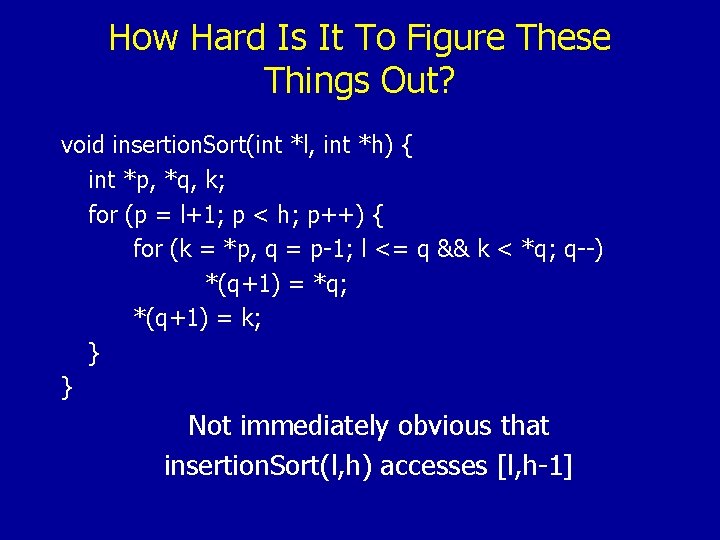

How Hard Is It To Figure These Things Out?

    void insertionSort(int *l, int *h) {
      int *p, *q, k;
      for (p = l+1; p < h; p++) {
        for (k = *p, q = p-1; l <= q && k < *q; q--)
          *(q+1) = *q;
        *(q+1) = k;
      }
    }

Not immediately obvious that insertionSort(l, h) accesses [l, h-1]
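One way to build confidence in the claimed region is to instrument the routine and record the lowest and highest addresses it touches. This is a dynamic check on one input, not the static analysis the slides describe; the names `insertionSortTraced`, `note`, `lo_seen`, and `hi_seen` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Lowest and highest addresses touched so far (hypothetical instrumentation). */
static int *lo_seen, *hi_seen;

static void note(int *a) {
    if (lo_seen == NULL || a < lo_seen) lo_seen = a;
    if (hi_seen == NULL || a > hi_seen) hi_seen = a;
}

/* Same algorithm as insertionSort(l, h), with every load and store noted. */
void insertionSortTraced(int *l, int *h) {
    int *p, *q, k;
    assert(l <= h);
    lo_seen = hi_seen = NULL;
    for (p = l + 1; p < h; p++) {
        note(p);                                  /* read *p */
        for (k = *p, q = p - 1; l <= q && (note(q), k < *q); q--) {
            note(q + 1);                          /* write *(q+1) */
            *(q + 1) = *q;
        }
        note(q + 1);                              /* write *(q+1) */
        *(q + 1) = k;
    }
}
```

Sorting a four-element slice in the middle of a larger array leaves the guard elements on either side untouched and reports `lo_seen == l` and `hi_seen == h - 1`, consistent with the region [l, h-1].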

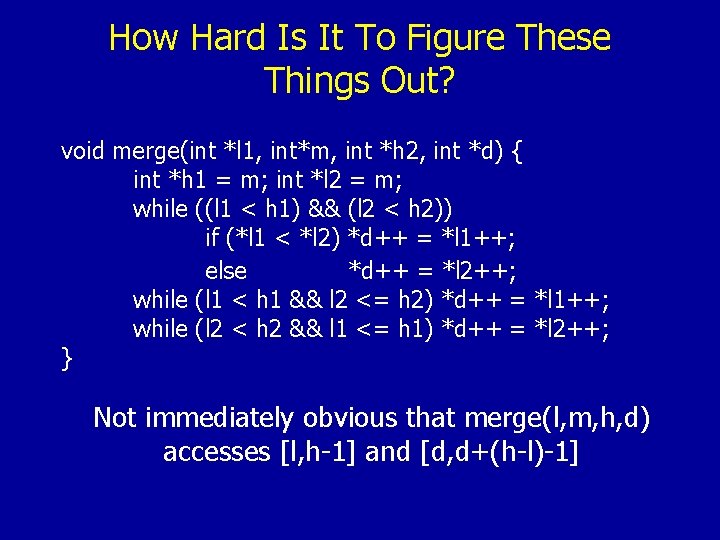

How Hard Is It To Figure These Things Out?

    void merge(int *l1, int *m, int *h2, int *d) {
      int *h1 = m;
      int *l2 = m;
      while ((l1 < h1) && (l2 < h2))
        if (*l1 < *l2) *d++ = *l1++;
        else *d++ = *l2++;
      while (l1 < h1 && l2 <= h2) *d++ = *l1++;
      while (l2 < h2 && l1 <= h1) *d++ = *l2++;
    }

Not immediately obvious that merge(l, m, h, d) accesses [l, h-1] and [d, d+(h-l)-1]

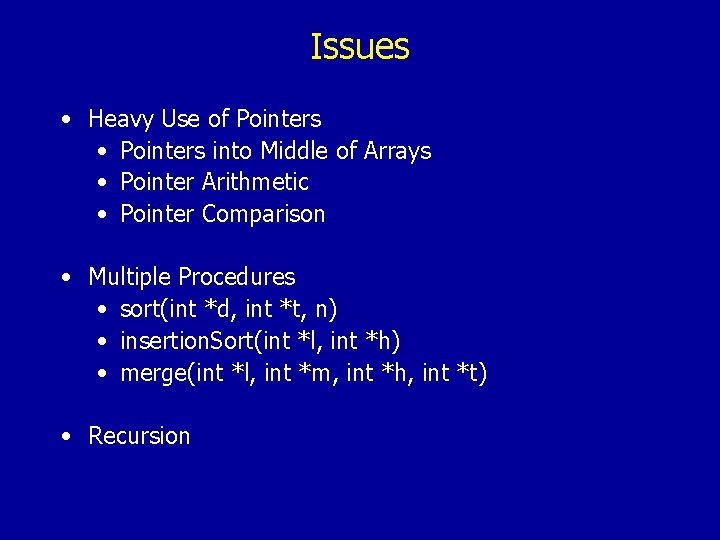

Issues • Heavy Use of Pointers • Pointers into Middle of Arrays • Pointer Arithmetic • Pointer Comparison • Multiple Procedures • sort(int *d, int *t, int n) • insertionSort(int *l, int *h) • merge(int *l, int *m, int *h, int *t) • Recursion

2. Symbolic Bounds Analysis Algorithm

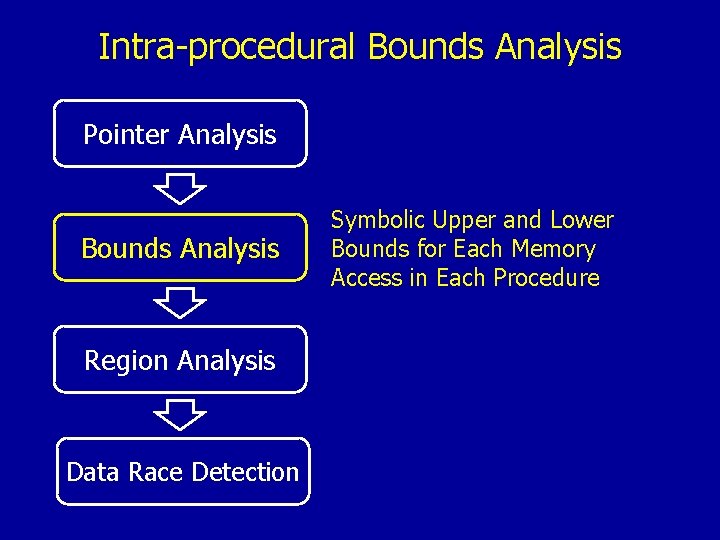

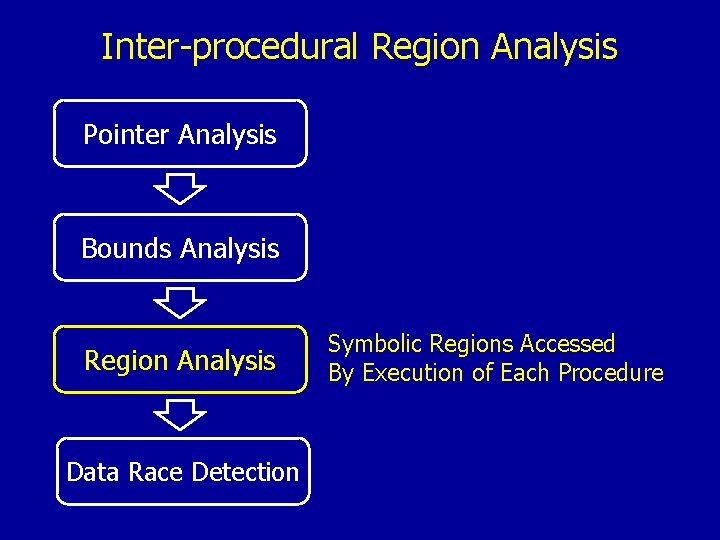

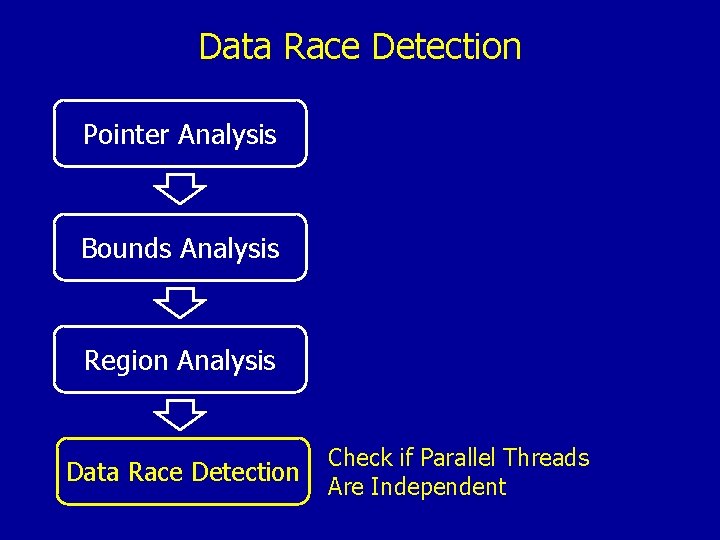

Overall Compiler Structure Pointer Analysis Disambiguate Memory at the Granularity of Abstract Locations Bounds Analysis Symbolic Upper and Lower Bounds for Each Memory Access in Each Procedure Region Analysis Symbolic Regions Accessed By Execution of Each Procedure Data Race Detection Check if Parallel Threads Are Independent

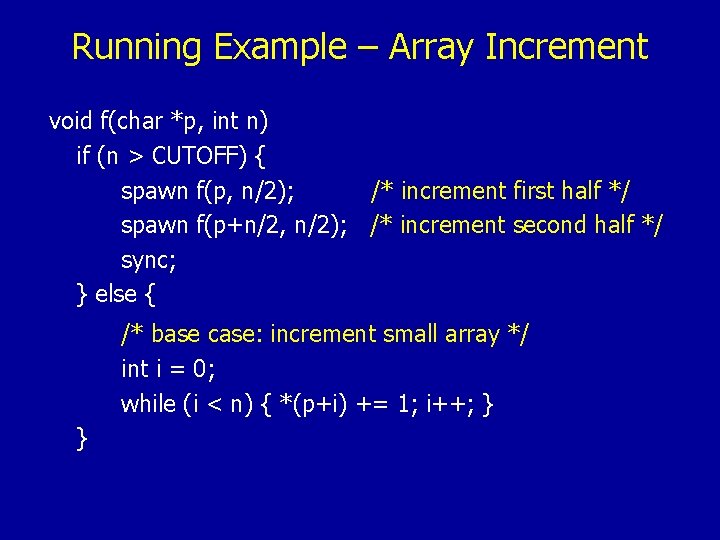

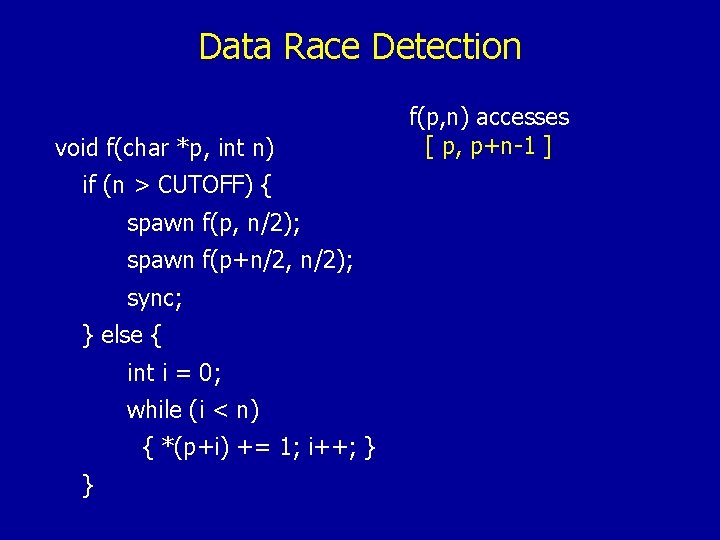

Running Example: Array Increment

    void f(char *p, int n) {
      if (n > CUTOFF) {
        spawn f(p, n/2);       /* increment first half */
        spawn f(p+n/2, n/2);   /* increment second half */
        sync;
      } else {
        /* base case: increment small array */
        int i = 0;
        while (i < n) { *(p+i) += 1; i++; }
      }
    }

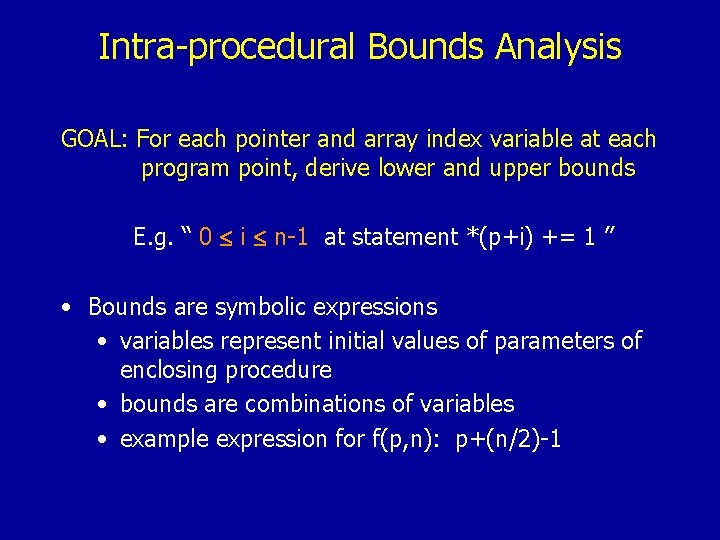

Intra-procedural Bounds Analysis Pointer Analysis Bounds Analysis Region Analysis Data Race Detection Symbolic Upper and Lower Bounds for Each Memory Access in Each Procedure

Intra-procedural Bounds Analysis GOAL: For each pointer and array index variable at each program point, derive lower and upper bounds, e.g. “$0 \le i \le n-1$ at statement *(p+i) += 1” • Bounds are symbolic expressions • variables represent initial values of parameters of enclosing procedure • bounds are combinations of variables • example expression for f(p, n): p+(n/2)-1

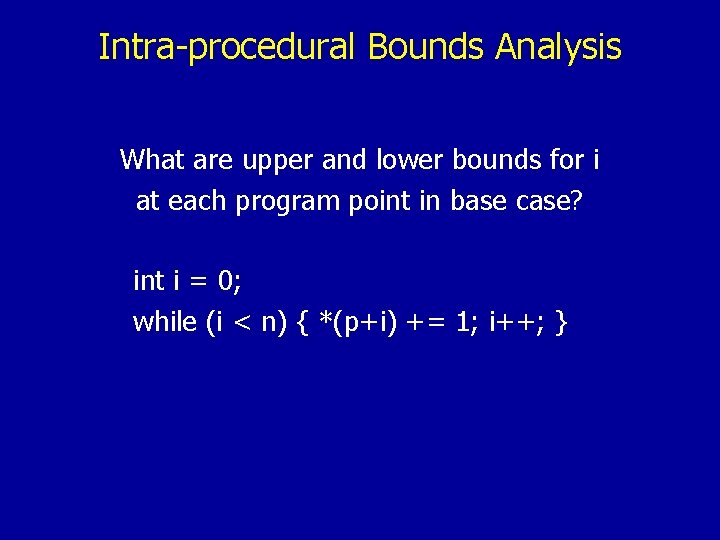

Intra-procedural Bounds Analysis What are upper and lower bounds for i at each program point in base case? int i = 0; while (i < n) { *(p+i) += 1; i++; }

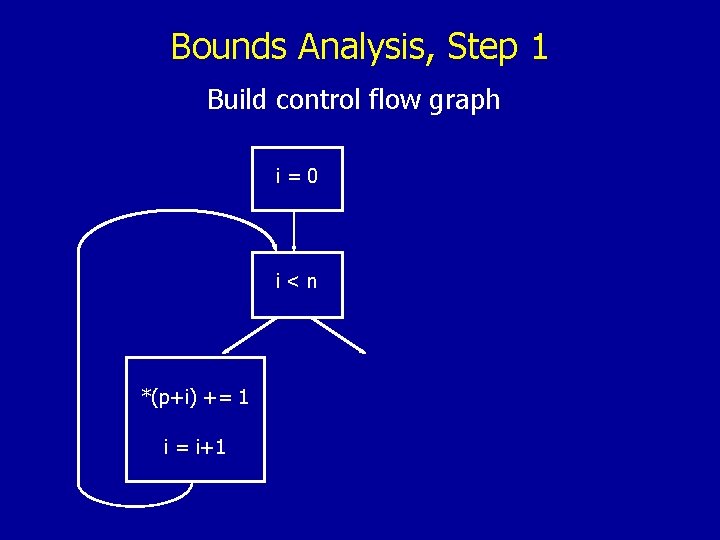

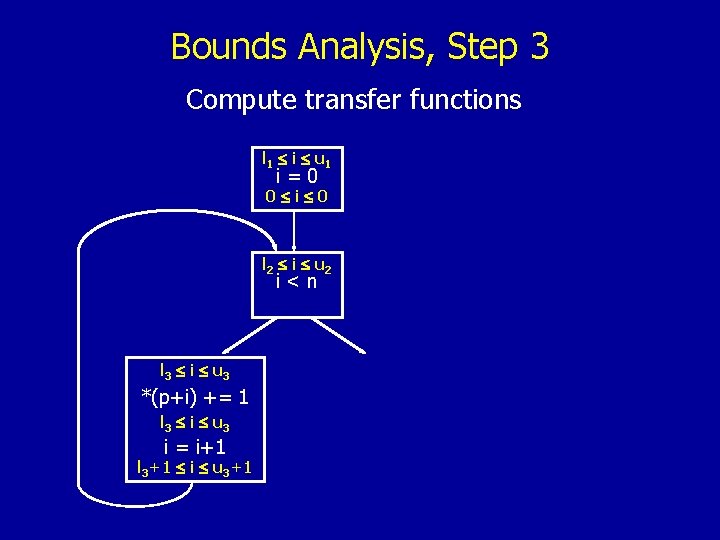

Bounds Analysis, Step 1 Build control flow graph i=0 i<n *(p+i) += 1 i = i+1

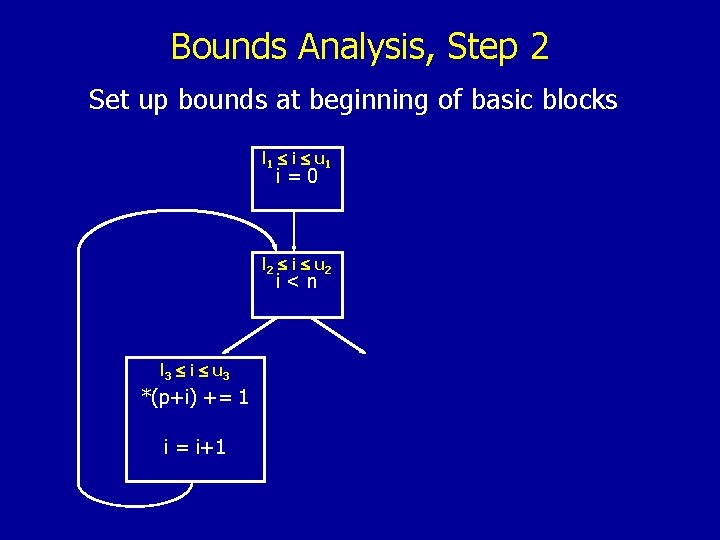

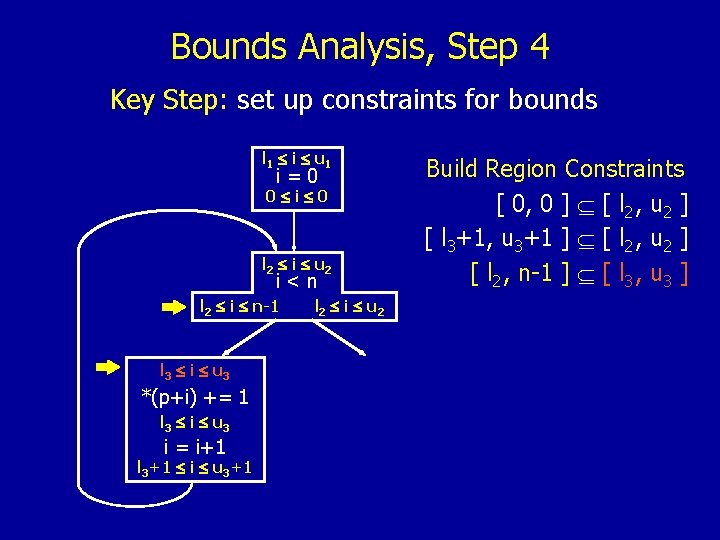

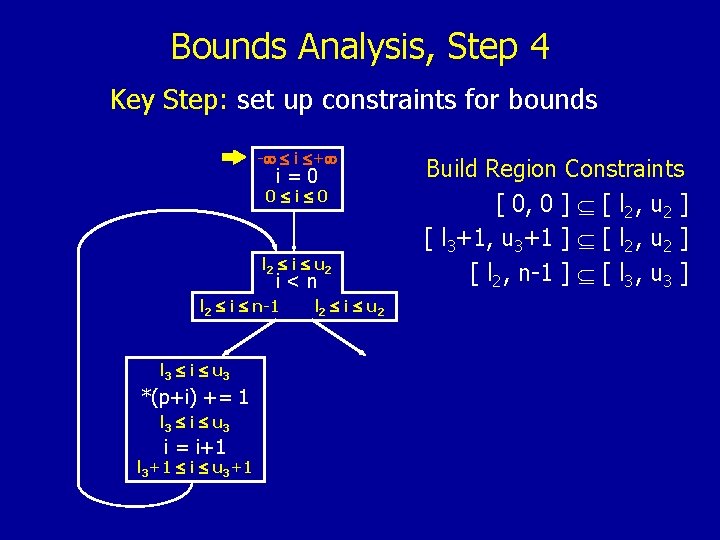

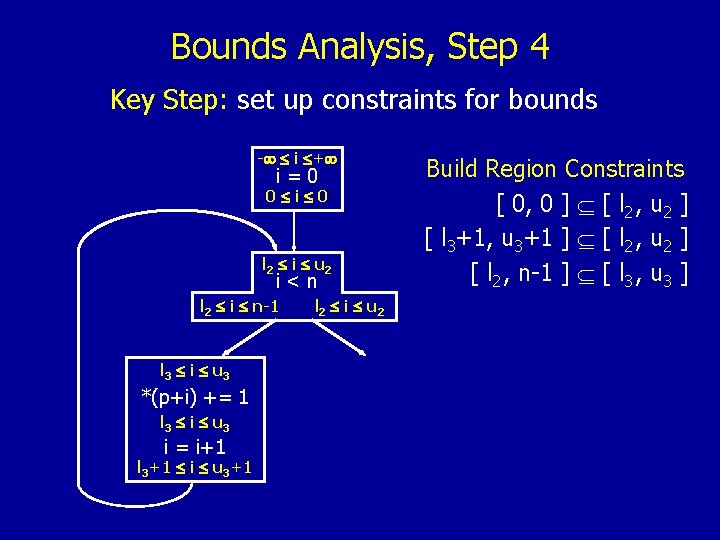

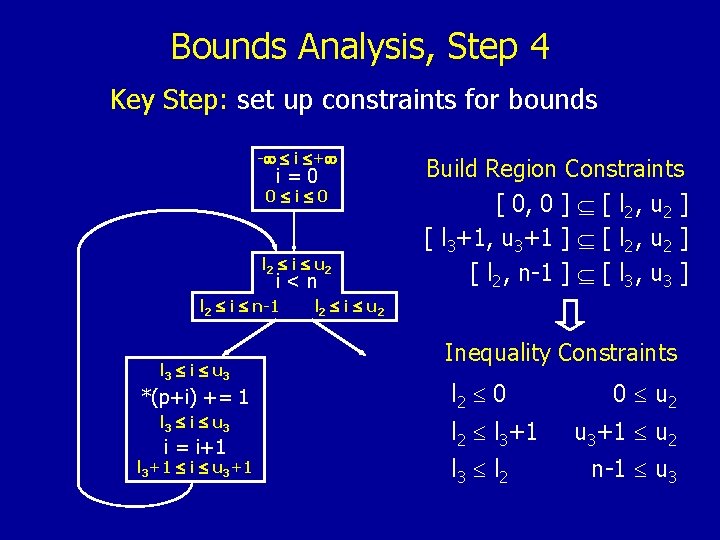

Bounds Analysis, Step 2
Set up bounds at beginning of basic blocks
• Before i = 0: $l_1 \le i \le u_1$
• Before i < n: $l_2 \le i \le u_2$
• Before *(p+i) += 1; i = i+1: $l_3 \le i \le u_3$

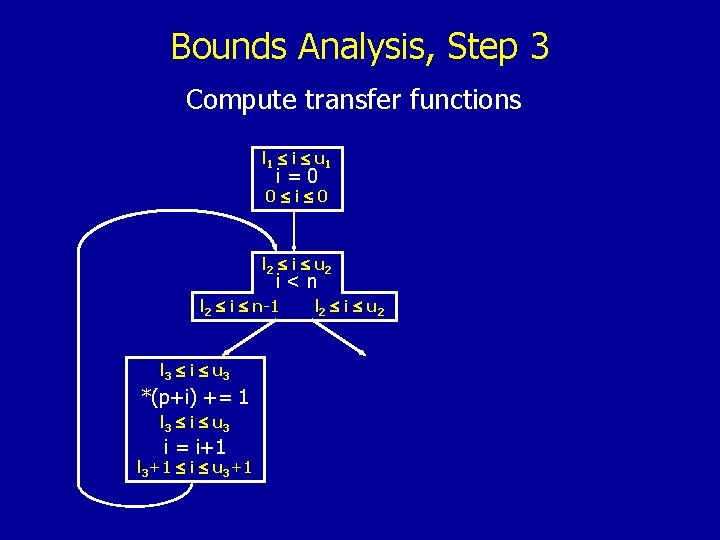

Bounds Analysis, Step 3
Compute transfer functions
• i = 0: $l_1 \le i \le u_1$ becomes $0 \le i \le 0$
• i < n, loop body taken: $l_2 \le i \le u_2$ becomes $l_2 \le i \le n-1$
• i < n, loop exit: $l_2 \le i \le u_2$ is unchanged
• *(p+i) += 1: $l_3 \le i \le u_3$ is unchanged
• i = i+1: $l_3 \le i \le u_3$ becomes $l_3+1 \le i \le u_3+1$

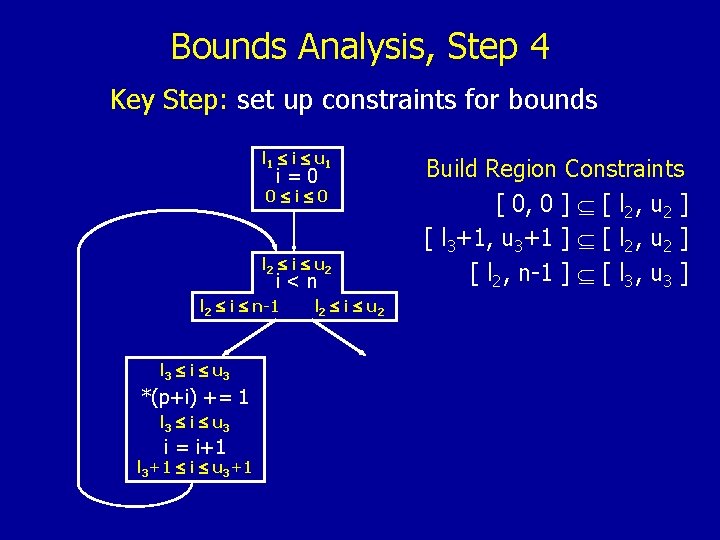

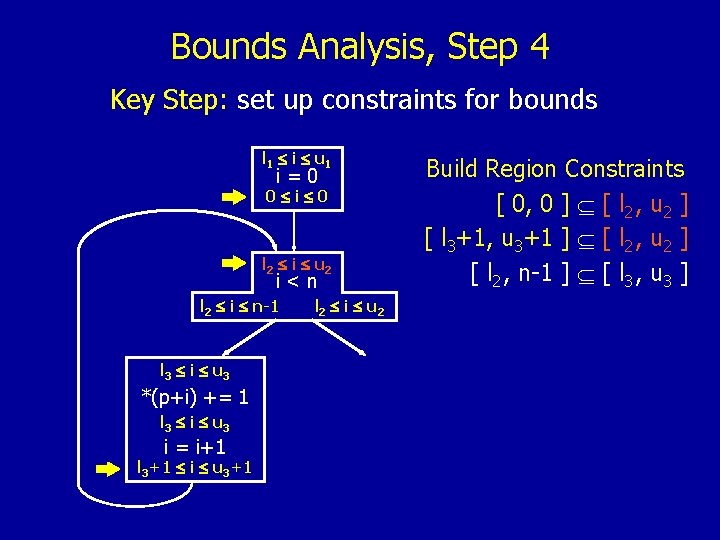

Bounds Analysis, Step 4
Key Step: set up constraints for bounds
• At procedure entry: $-\infty \le i \le +\infty$
• Region constraints: $[0, 0] \subseteq [l_2, u_2]$; $[l_3+1, u_3+1] \subseteq [l_2, u_2]$; $[l_2, n-1] \subseteq [l_3, u_3]$
• Inequality constraints on lower bounds: $l_2 \le 0$; $l_2 \le l_3+1$; $l_3 \le l_2$
• Inequality constraints on upper bounds: $0 \le u_2$; $u_3+1 \le u_2$; $n-1 \le u_3$

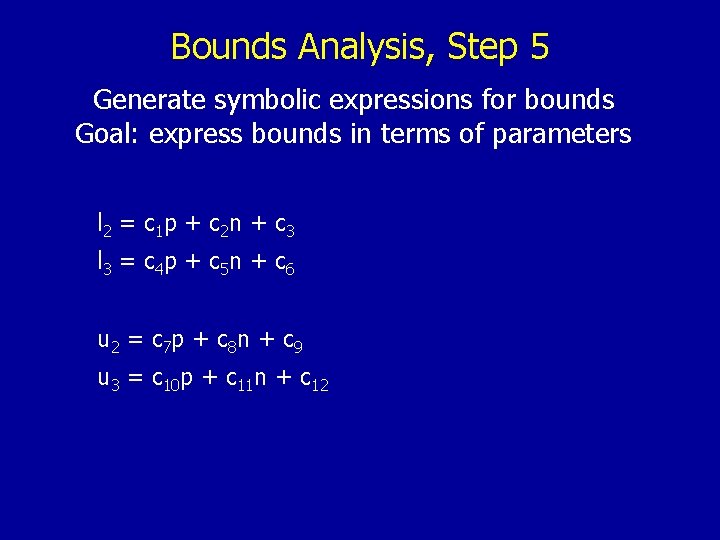

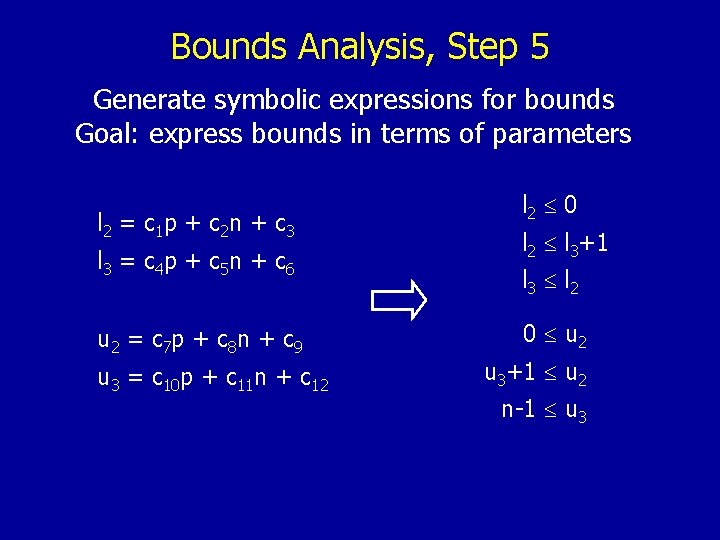

Bounds Analysis, Step 5
Generate symbolic expressions for bounds. Goal: express bounds in terms of parameters
• $l_2 = c_1 p + c_2 n + c_3$
• $l_3 = c_4 p + c_5 n + c_6$
• $u_2 = c_7 p + c_8 n + c_9$
• $u_3 = c_{10} p + c_{11} n + c_{12}$
• Constraints from Step 4: $l_2 \le 0$; $l_2 \le l_3+1$; $l_3 \le l_2$; $0 \le u_2$; $u_3+1 \le u_2$; $n-1 \le u_3$

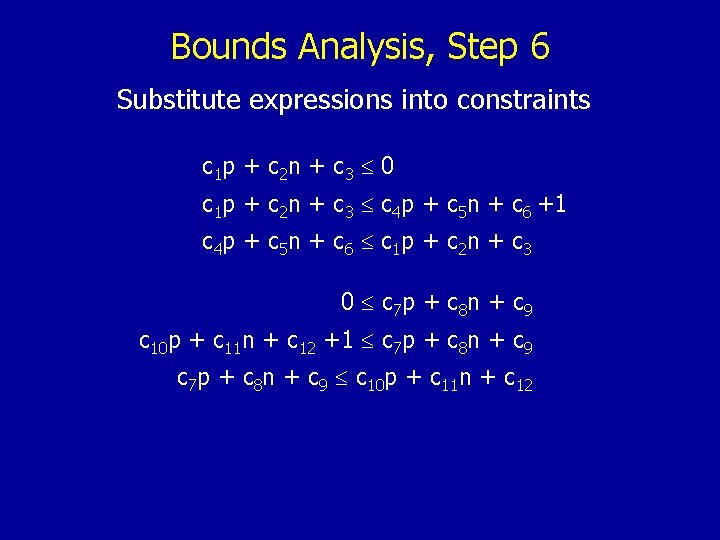

Bounds Analysis, Step 6
Substitute expressions into constraints
• $c_1 p + c_2 n + c_3 \le 0$
• $c_1 p + c_2 n + c_3 \le c_4 p + c_5 n + c_6 + 1$
• $c_4 p + c_5 n + c_6 \le c_1 p + c_2 n + c_3$
• $0 \le c_7 p + c_8 n + c_9$
• $c_{10} p + c_{11} n + c_{12} + 1 \le c_7 p + c_8 n + c_9$
• $n - 1 \le c_{10} p + c_{11} n + c_{12}$

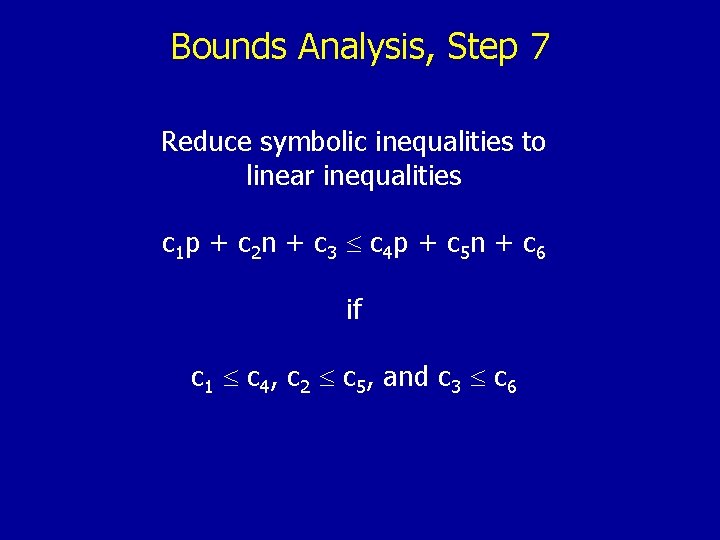

Bounds Analysis, Step 7
Reduce symbolic inequalities to linear inequalities: $c_1 p + c_2 n + c_3 \le c_4 p + c_5 n + c_6$ if $c_1 \le c_4$, $c_2 \le c_5$, and $c_3 \le c_6$
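The reduction compares two linear forms coefficient by coefficient. The sketch below assumes, as a condition of this illustration, that p and n are non-negative; under that assumption the coefficient test is sufficient but not necessary. The type `linform` and both function names are hypothetical.

```c
#include <assert.h>

/* A symbolic bound cp*p + cn*n + c0 (field names are hypothetical). */
typedef struct { int cp, cn, c0; } linform;

/* Sufficient test for a <= b, valid when p >= 0 and n >= 0. */
int leq_by_coefficients(linform a, linform b) {
    return a.cp <= b.cp && a.cn <= b.cn && a.c0 <= b.c0;
}

/* Evaluate a bound for concrete non-negative parameter values. */
long eval_linform(linform f, long p, long n) {
    assert(p >= 0 && n >= 0);
    return f.cp * p + f.cn * n + f.c0;
}
```

For example, with u3 = n - 1 and u2 = n the test succeeds, and any concrete evaluation agrees. The test is conservative: it rejects p ≤ n even for inputs where p happens to be smaller, which is the price of turning symbolic inequalities into linear constraints on the coefficients.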

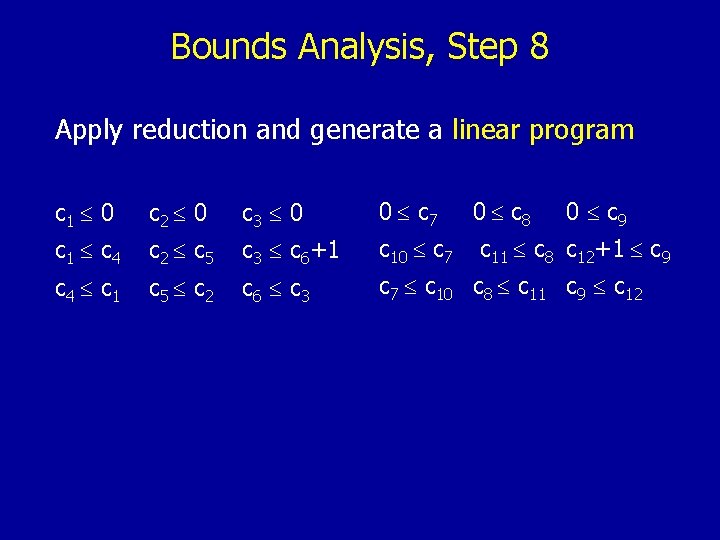

Bounds Analysis, Step 8 Apply reduction and generate a linear program c 1 0 c 2 0 c 3 0 0 c 7 0 c 8 0 c 9 c 1 c 4 c 2 c 5 c 3 c 6+1 c 10 c 7 c 4 c 1 c 5 c 2 c 6 c 3 c 7 c 10 c 8 c 11 c 9 c 12 c 11 c 8 c 12+1 c 9

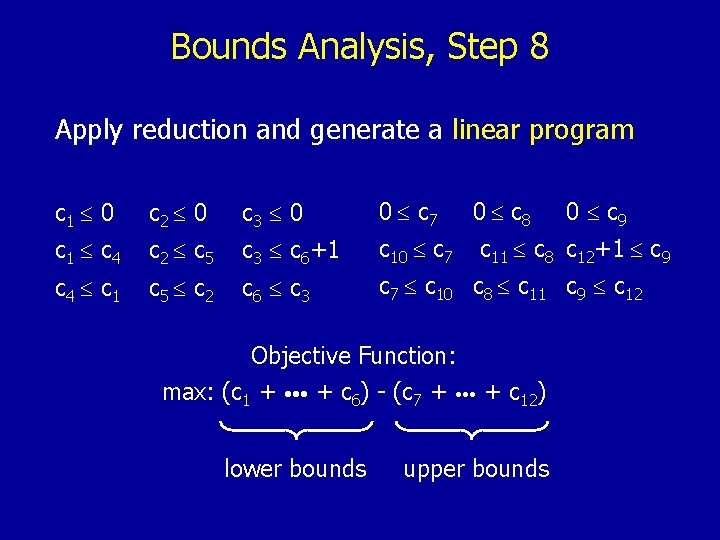

Bounds Analysis, Step 8 Apply reduction and generate a linear program c 1 0 c 2 0 c 3 0 0 c 7 0 c 8 c 1 c 4 c 2 c 5 c 3 c 6+1 c 10 c 7 c 4 c 1 c 5 c 2 c 6 c 3 c 7 c 10 c 8 c 11 c 9 c 12 c 11 c 8 c 12+1 c 9 Objective Function: max: (c 1 + • • • + c 6) - (c 7 + • • • + c 12) lower bounds 0 c 9 upper bounds
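The linear program can be sanity-checked against the coefficient values reported in Step 9 of the slides. The sketch below only tests feasibility and evaluates the objective; it does not solve the program (the slides use lp_solve for that). The constraint list is derived from the Step 4 inequalities through the Step 7 reduction, and the function names are hypothetical.

```c
#include <assert.h>

/* c[1]..c[12] are the coefficients; c[0] is unused. */
int satisfies_constraints(const int c[13]) {
    return
        /* l2 <= 0      */ c[1] <= 0 && c[2] <= 0 && c[3] <= 0 &&
        /* l2 <= l3 + 1 */ c[1] <= c[4] && c[2] <= c[5] && c[3] <= c[6] + 1 &&
        /* l3 <= l2     */ c[4] <= c[1] && c[5] <= c[2] && c[6] <= c[3] &&
        /* 0 <= u2      */ 0 <= c[7] && 0 <= c[8] && 0 <= c[9] &&
        /* u3 + 1 <= u2 */ c[10] <= c[7] && c[11] <= c[8] && c[12] + 1 <= c[9] &&
        /* n - 1 <= u3  */ 0 <= c[10] && 1 <= c[11] && -1 <= c[12];
}

/* Objective: (c1 + ... + c6) - (c7 + ... + c12). */
int objective(const int c[13]) {
    int lower = 0, upper = 0;
    for (int i = 1; i <= 6; i++) lower += c[i];
    for (int i = 7; i <= 12; i++) upper += c[i];
    return lower - upper;
}
```

Maximizing the objective pushes lower bounds up and upper bounds down, so the optimum is the tightest set of bounds that still satisfies every constraint. A looser but still feasible choice such as u2 = n + 1 scores strictly worse.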

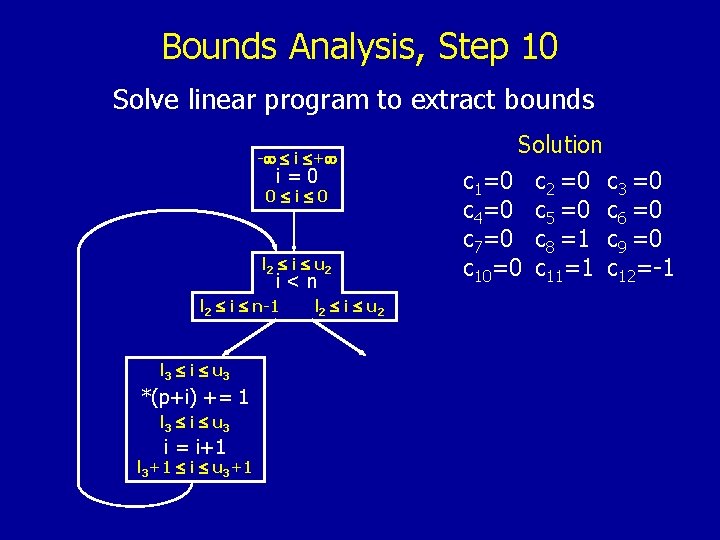

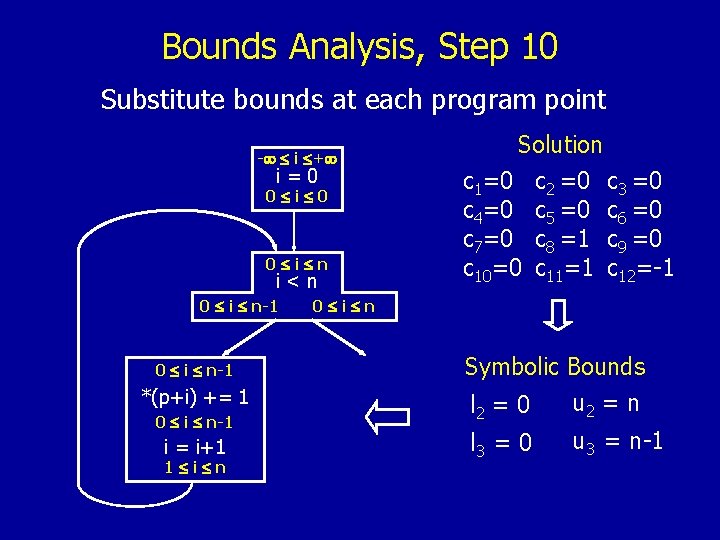

Bounds Analysis, Step 10 Solve linear program to extract bounds - i + i=0 0 i 0 l 2 i u 2 i<n l 2 i n-1 l 3 i u 3 *(p+i) += 1 l 3 i u 3 i = i+1 l 3+1 i u 3+1 l 2 i u 2 Solution c 1=0 c 2 =0 c 3 =0 c 4=0 c 5 =0 c 6 =0 c 7=0 c 8 =1 c 9 =0 c 10=0 c 11=1 c 12=-1

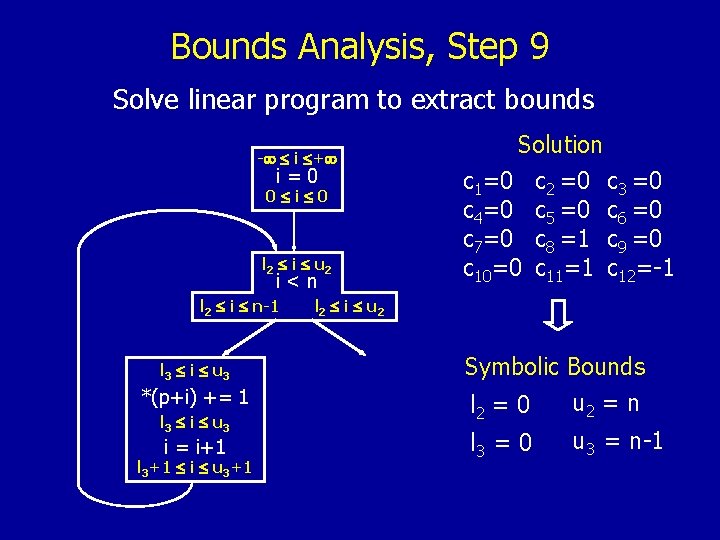

Bounds Analysis, Step 9 Solve linear program to extract bounds - i + i=0 0 i 0 l 2 i u 2 i<n l 2 i n-1 l 3 i u 3 *(p+i) += 1 l 3 i u 3 i = i+1 l 3+1 i u 3+1 Solution c 1=0 c 2 =0 c 3 =0 c 4=0 c 5 =0 c 6 =0 c 7=0 c 8 =1 c 9 =0 c 10=0 c 11=1 c 12=-1 l 2 i u 2 Symbolic Bounds u 2 = n l 2 = 0 l 3 = 0 u 3 = n-1

Bounds Analysis, Step 10
Substitute bounds at each program point
• At procedure entry: $-\infty \le i \le +\infty$
• Before i < n: $0 \le i \le n$
• Before *(p+i) += 1: $0 \le i \le n-1$
• Before i = i+1: $0 \le i \le n-1$
• After i = i+1: $1 \le i \le n$
• At loop exit: $0 \le i \le n$
• Symbolic bounds used: $l_2 = 0$, $u_2 = n$, $l_3 = 0$, $u_3 = n-1$

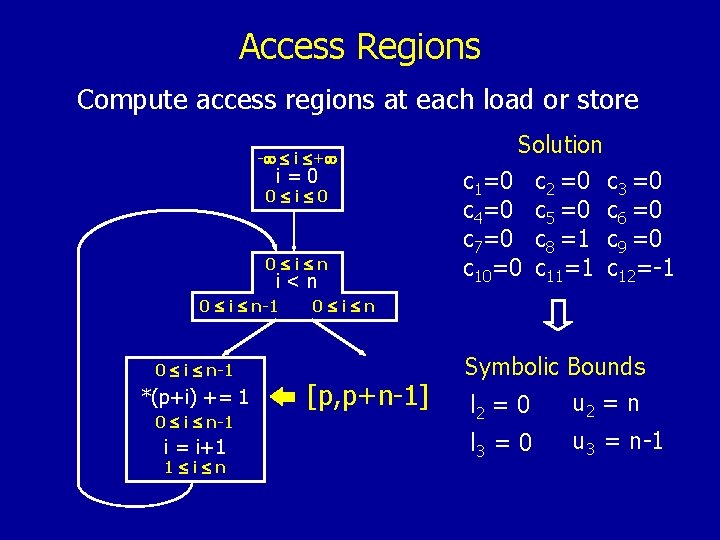

Access Regions
Compute access regions at each load or store
• At *(p+i) += 1 the bounds are $0 \le i \le n-1$, so the statement accesses [p, p+n-1]

Inter-procedural Region Analysis Pointer Analysis Bounds Analysis Region Analysis Data Race Detection Symbolic Regions Accessed By Execution of Each Procedure

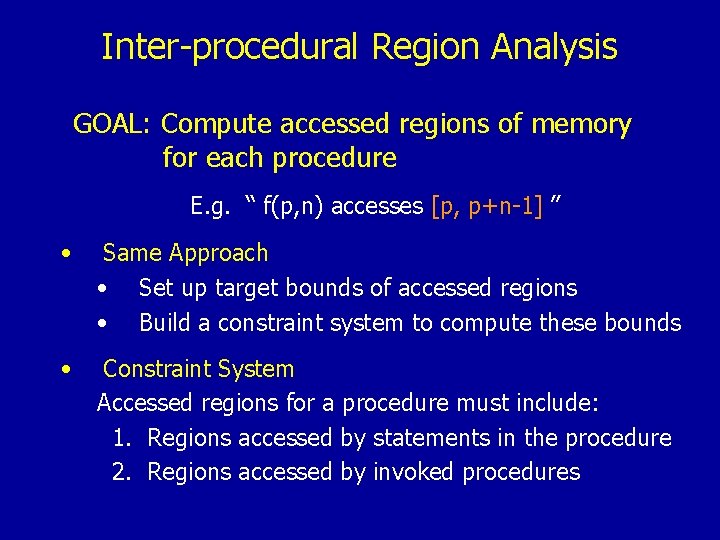

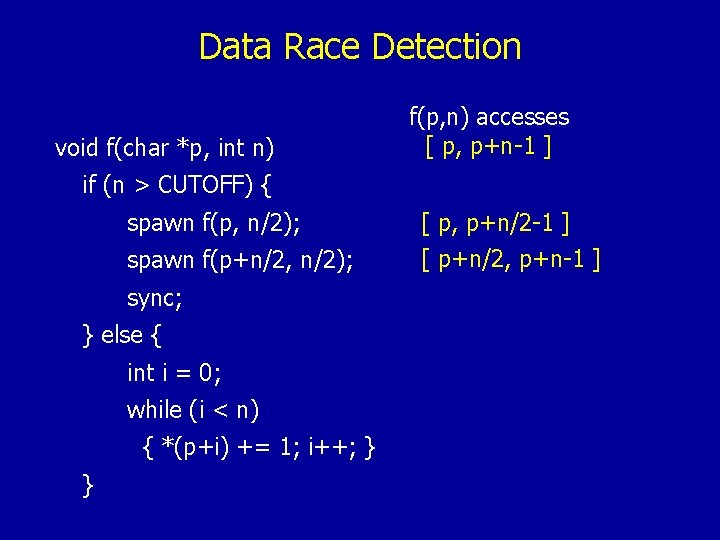

Inter-procedural Region Analysis GOAL: Compute accessed regions of memory for each procedure, e.g. “f(p, n) accesses [p, p+n-1]” • Same Approach • Set up target bounds of accessed regions • Build a constraint system to compute these bounds • Constraint System: accessed regions for a procedure must include: 1. Regions accessed by statements in the procedure 2. Regions accessed by invoked procedures

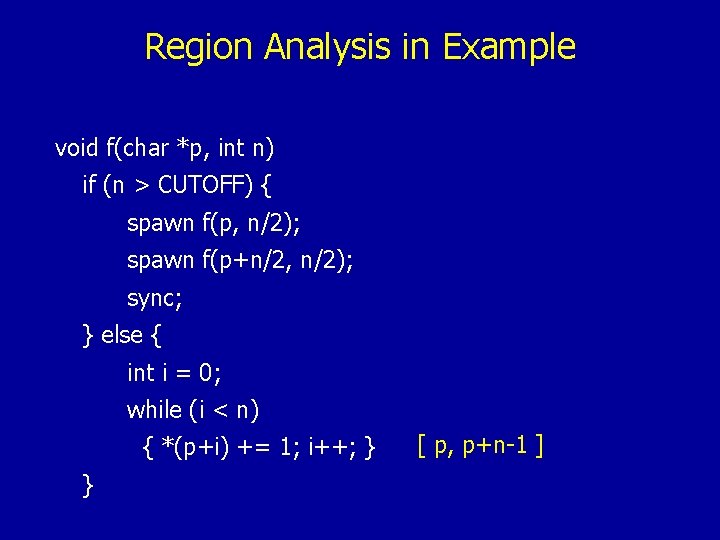

Region Analysis in Example void f(char *p, int n) if (n > CUTOFF) { spawn f(p, n/2); spawn f(p+n/2, n/2); sync; } else { int i = 0; while (i < n) { *(p+i) += 1; i++; } } [ p, p+n-1 ]

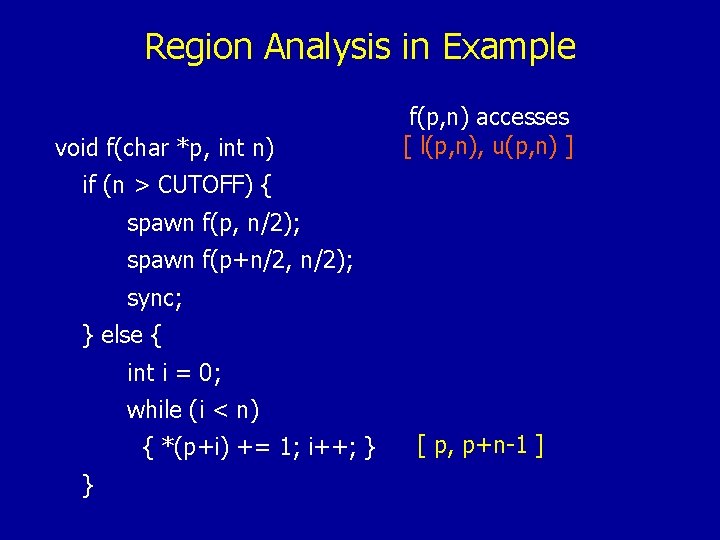

Region Analysis in Example void f(char *p, int n) f(p, n) accesses [ l(p, n), u(p, n) ] if (n > CUTOFF) { spawn f(p, n/2); spawn f(p+n/2, n/2); sync; } else { int i = 0; while (i < n) { *(p+i) += 1; i++; } } [ p, p+n-1 ]

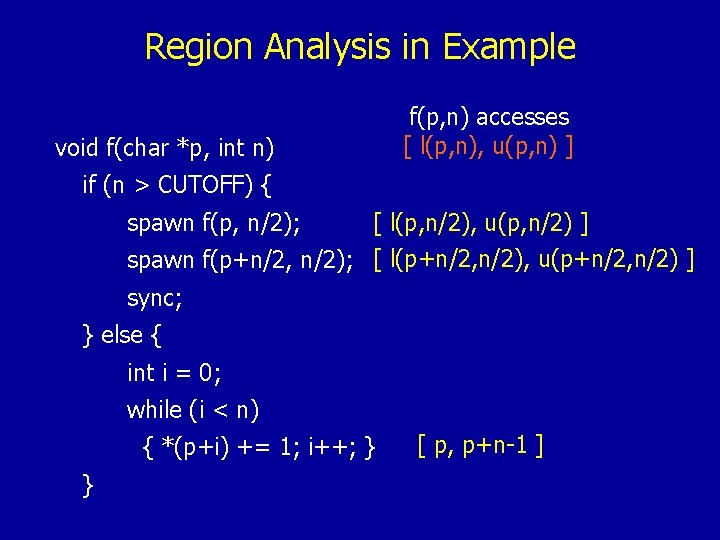

Region Analysis in Example void f(char *p, int n) f(p, n) accesses [ l(p, n), u(p, n) ] if (n > CUTOFF) { spawn f(p, n/2); [ l(p, n/2), u(p, n/2) ] spawn f(p+n/2, n/2); [ l(p+n/2, n/2), u(p+n/2, n/2) ] sync; } else { int i = 0; while (i < n) { *(p+i) += 1; i++; } } [ p, p+n-1 ]


Derive Constraint System
• Region constraints: $[\,l(p, n/2),\ u(p, n/2)\,] \subseteq [\,l(p, n),\ u(p, n)\,]$; $[\,l(p+n/2, n/2),\ u(p+n/2, n/2)\,] \subseteq [\,l(p, n),\ u(p, n)\,]$; $[\,p,\ p+n-1\,] \subseteq [\,l(p, n),\ u(p, n)\,]$
• Reduce to inequalities between lower/upper bounds
• Further reduce to a linear program and solve: l(p, n) = p, u(p, n) = p+n-1
• Access region for f(p, n): [p, p+n-1]
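The reported solution can be checked against the three region constraints for concrete values. The sketch below plugs l(p, n) = p and u(p, n) = p+n-1 into each constraint, treating addresses as plain integers; the type `region` and the function names are hypothetical, and a spot check over sample values is of course weaker than the symbolic argument.

```c
#include <assert.h>

typedef struct { long lo, hi; } region;     /* inclusive address range */

/* Solution from the slide: f(p, n) accesses [p, p+n-1]. */
region f_region(long p, long n) {
    region r = { p, p + n - 1 };
    return r;
}

int contains(region outer, region inner) {
    return outer.lo <= inner.lo && inner.hi <= outer.hi;
}

/* Checks the three region constraints for one concrete (p, n). */
int constraints_hold(long p, long n) {
    assert(n >= 1);
    region whole = f_region(p, n);
    region base = { p, p + n - 1 };          /* base-case loop */
    return contains(whole, f_region(p, n / 2)) &&
           contains(whole, f_region(p + n / 2, n / 2)) &&
           contains(whole, base);
}
```

Integer division matters here: for odd n the two recursive calls together cover one element fewer than n, so their regions are strictly inside [p, p+n-1] and the constraints still hold.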

Data Race Detection Pointer Analysis Bounds Analysis Region Analysis Data Race Detection Check if Parallel Threads Are Independent

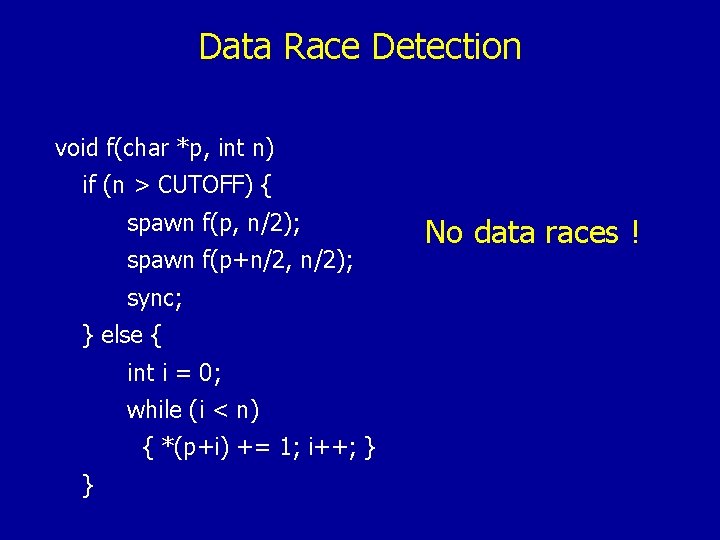

Data Race Detection • Dependence testing of two statements • Do accessed regions intersect? • Based on comparing upper and lower bounds of accessed regions • Absence of data races • Check if all the statements that execute in parallel are independent
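The intersection test on accessed regions reduces to comparing bounds. The following sketch uses concrete integer addresses in place of symbolic expressions and assumes the access region [q, q+m-1] for a call f(q, m); the type `interval` and both function names are hypothetical.

```c
#include <assert.h>

typedef struct { long lo, hi; } interval;   /* inclusive bounds */

/* Two inclusive intervals intersect iff each starts no later than the other ends. */
int intervals_intersect(interval a, interval b) {
    return a.lo <= b.hi && b.lo <= a.hi;
}

/* The two spawned calls of f(p, n): f(p, n/2) and f(p+n/2, n/2). */
int spawned_calls_independent(long p, long n) {
    assert(n >= 2);
    interval first  = { p, p + n / 2 - 1 };
    interval second = { p + n / 2, p + n / 2 + n / 2 - 1 };
    return !intervals_intersect(first, second);
}
```

The first region ends at p+n/2-1 and the second begins at p+n/2, so the upper bound of one is always below the lower bound of the other and the two threads are independent.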

Data Race Detection
• f(p, n) accesses [ p, p+n-1 ]
• spawn f(p, n/2) accesses [ p, p+n/2-1 ]
• spawn f(p+n/2, n/2) accesses [ p+n/2, p+n-1 ]
• The two regions do not intersect: no data races!

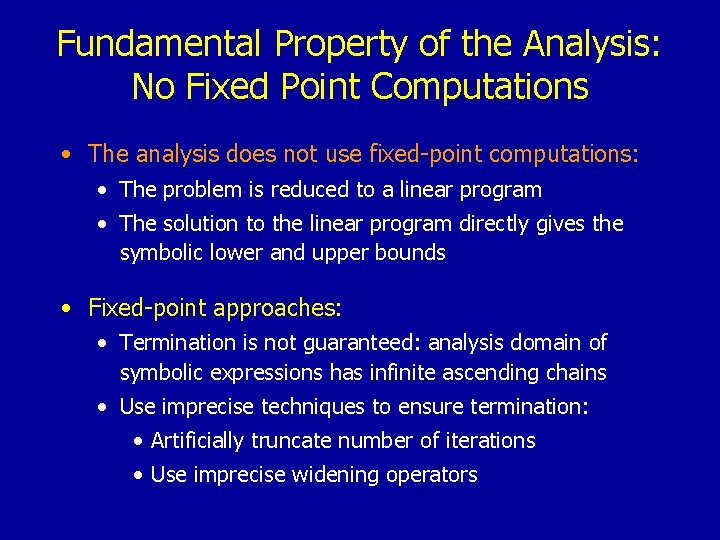

Fundamental Property of the Analysis: No Fixed Point Computations • The analysis does not use fixed-point computations: • The problem is reduced to a linear program • The solution to the linear program directly gives the symbolic lower and upper bounds • Fixed-point approaches: • Termination is not guaranteed: analysis domain of symbolic expressions has infinite ascending chains • Use imprecise techniques to ensure termination: • Artificially truncate number of iterations • Use imprecise widening operators

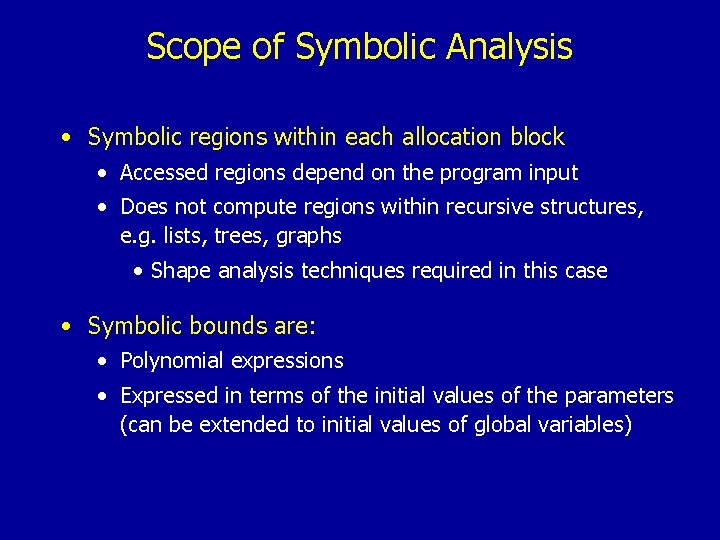

Scope of Symbolic Analysis • Symbolic regions within each allocation block • Accessed regions depend on the program input • Does not compute regions within recursive structures, e. g. lists, trees, graphs • Shape analysis techniques required in this case • Symbolic bounds are: • Polynomial expressions • Expressed in terms of the initial values of the parameters (can be extended to initial values of global variables)

3. Uses of Pointer Analysis and Symbolic Analysis

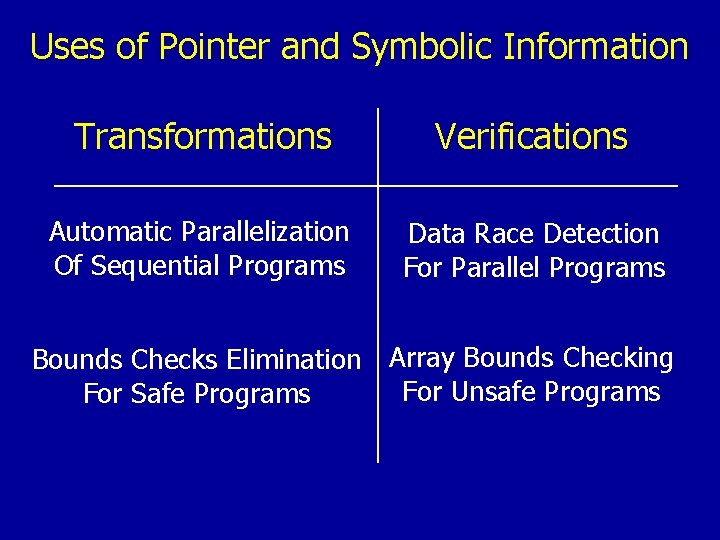

Uses of Pointer and Symbolic Information
• Transformations: Automatic Parallelization of Sequential Programs; Bounds Checks Elimination for Safe Programs
• Verifications: Data Race Detection for Parallel Programs; Array Bounds Checking for Unsafe Programs

Experimental Results • Implementation • SUIF Infrastructure • lp_solve linear programming solver • Cilk multithreaded language • Benchmarks: • Sorting programs: QuickSort, MergeSort • Dense matrix programs: Matrix Multiplication, LU • Stencil computation: Heat • Branch and Bound: Knapsack

Experimental Results • Two versions of each benchmark • Sequential version written in C • Multithreaded version written in Cilk • Experiments: 1. Data Race Detection for the multithreaded versions 2. Array Bounds Violation Detection for both sequential and multithreaded versions 3. Automatic Parallelization for the sequential version

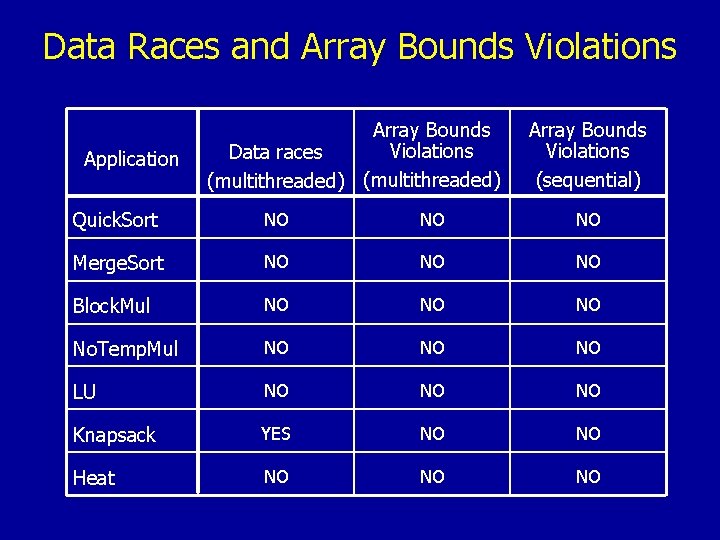

Data Races and Array Bounds Violations
Columns, in the order listed on the slide: Array Bounds Violations, Data races (multithreaded), Array Bounds Violations (sequential)
• QuickSort: NO, NO, NO
• MergeSort: NO, NO, NO
• BlockMul: NO, NO, NO
• NoTempMul: NO, NO, NO
• LU: NO, NO, NO
• Knapsack: YES, NO, NO
• Heat: NO, NO, NO

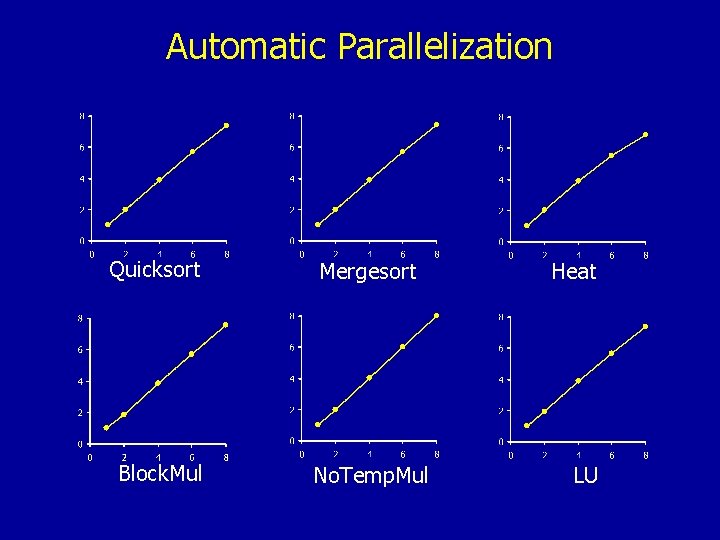

Automatic Parallelization (plots for Quicksort, Mergesort, BlockMul, NoTempMul, Heat, LU)

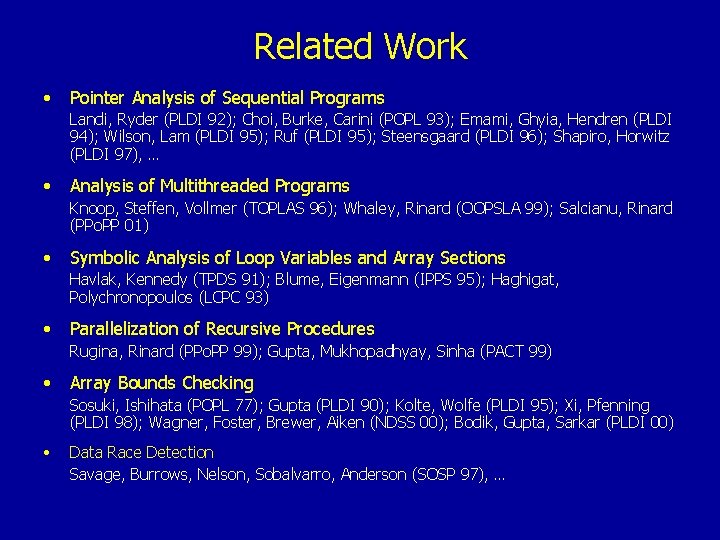

Related Work
• Pointer Analysis of Sequential Programs: Landi, Ryder (PLDI 92); Choi, Burke, Carini (POPL 93); Emami, Ghiya, Hendren (PLDI 94); Wilson, Lam (PLDI 95); Ruf (PLDI 95); Steensgaard (PLDI 96); Shapiro, Horwitz (PLDI 97), …
• Analysis of Multithreaded Programs: Knoop, Steffen, Vollmer (TOPLAS 96); Whaley, Rinard (OOPSLA 99); Salcianu, Rinard (PPoPP 01)
• Symbolic Analysis of Loop Variables and Array Sections: Havlak, Kennedy (TPDS 91); Blume, Eigenmann (IPPS 95); Haghighat, Polychronopoulos (LCPC 93)
• Parallelization of Recursive Procedures: Rugina, Rinard (PPoPP 99); Gupta, Mukhopadhyay, Sinha (PACT 99)
• Array Bounds Checking: Suzuki, Ishihata (POPL 77); Gupta (PLDI 90); Kolte, Wolfe (PLDI 95); Xi, Pfenning (PLDI 98); Wagner, Foster, Brewer, Aiken (NDSS 00); Bodik, Gupta, Sarkar (PLDI 00)
• Data Race Detection: Savage, Burrows, Nelson, Sobalvarro, Anderson (SOSP 97), …

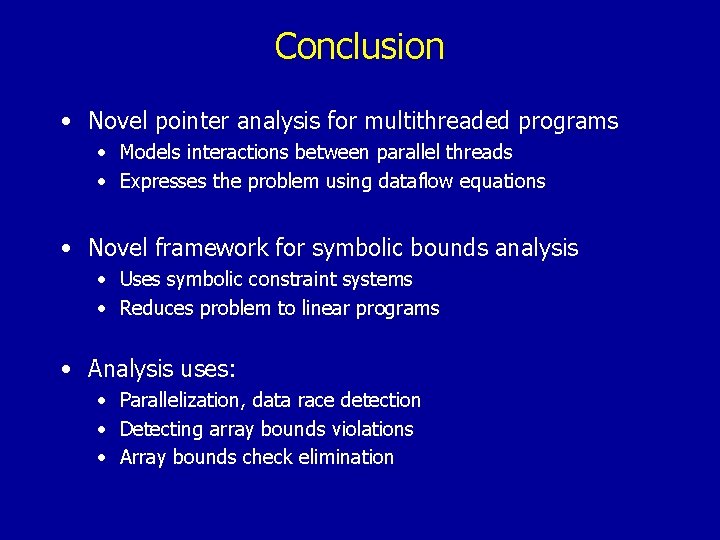

Conclusion • Novel pointer analysis for multithreaded programs • Models interactions between parallel threads • Expresses the problem using dataflow equations • Novel framework for symbolic bounds analysis • Uses symbolic constraint systems • Reduces problem to linear programs • Analysis uses: • Parallelization, data race detection • Detecting array bounds violations • Array bounds check elimination

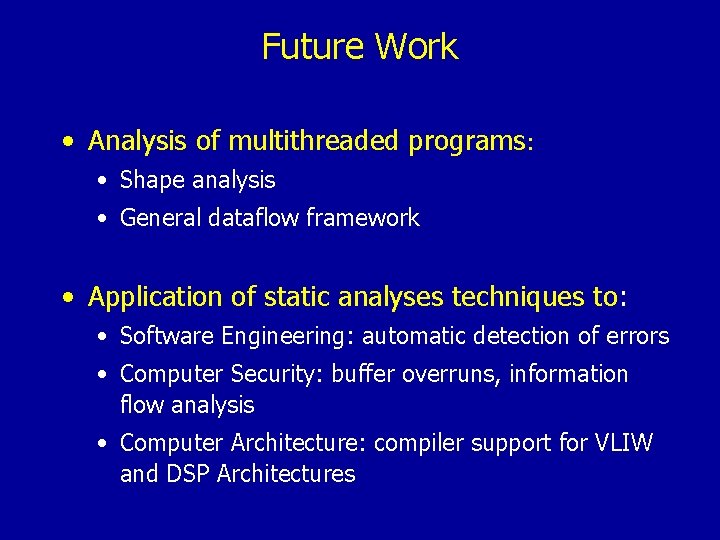

Future Work • Analysis of multithreaded programs: • Shape analysis • General dataflow framework • Application of static analysis techniques to: • Software Engineering: automatic detection of errors • Computer Security: buffer overruns, information flow analysis • Computer Architecture: compiler support for VLIW and DSP architectures
