Profile of HDF-EOS 5 Files

Abe Taaheri, Raytheon Information Systems
Larry Klein, RS Information Systems
HDF-EOS Workshop X, November 2006

General HDF-EOS 5 File Structure

• An HDF-EOS 5 file is any valid HDF5 file that contains:
  – a family of global attributes called coremetadata.X
  – optional data objects:
    § a family of global attributes called archivemetadata.X
    § any number of Swath, Grid, Point, ZA, and Profile data structures
  – another family of global attributes called StructMetadata.X
• The global attributes provide information on the structure of the HDF-EOS 5 file or on the data granule that the file contains.
• Other optional user-added global attributes, such as “PGEVersion” and “OrbitNumber”, are written as HDF5 attributes into a group called “FILE_ATTRIBUTES”.

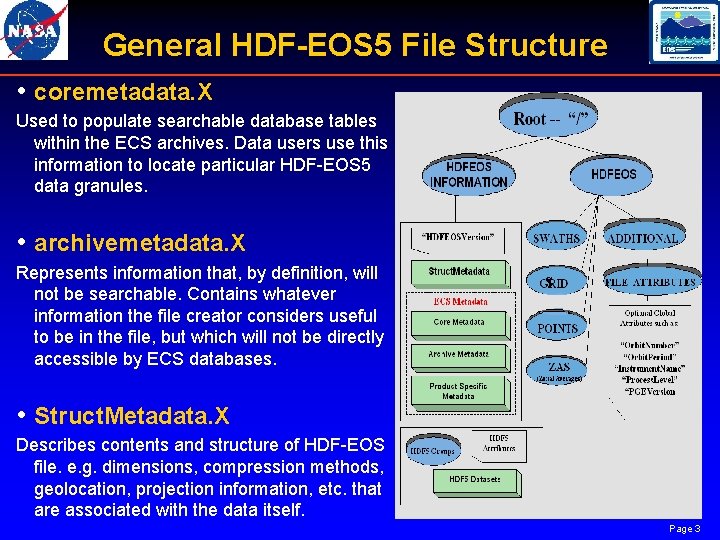

General HDF-EOS 5 File Structure

• coremetadata.X
  Used to populate searchable database tables within the ECS archives. Data users use this information to locate particular HDF-EOS 5 data granules.
• archivemetadata.X
  Represents information that, by definition, will not be searchable. Contains whatever information the file creator considers useful to have in the file, but which will not be directly accessible by ECS databases.
• StructMetadata.X
  Describes the contents and structure of the HDF-EOS file, e.g. dimensions, compression methods, geolocation, and projection information associated with the data itself.

General HDF-EOS 5 File Structure

• An HDF-EOS 5 file
  – can contain any number of Grid, Point, Swath, Zonal Average, and Profile data structures
  – has no size limits
    § A file containing thousands of objects could cause program execution slowdowns.
  – can be hybrid, containing plain HDF5 objects for special purposes
    § Plain HDF5 objects must be accessed through the HDF5 library and not through the HDF-EOS 5 extensions.
    § A hybrid file requires more knowledge of the file contents on the part of an applications developer or data user.

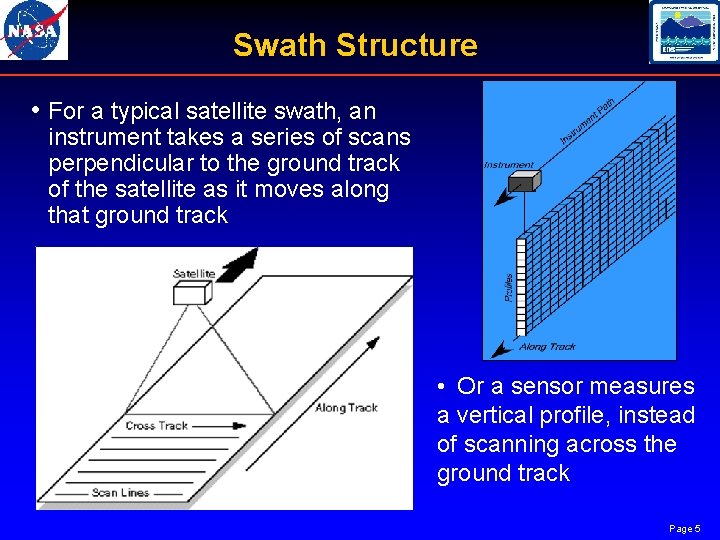

Swath Structure

• For a typical satellite swath, an instrument takes a series of scans perpendicular to the ground track of the satellite as it moves along that ground track.
• Alternatively, a sensor measures a vertical profile instead of scanning across the ground track.

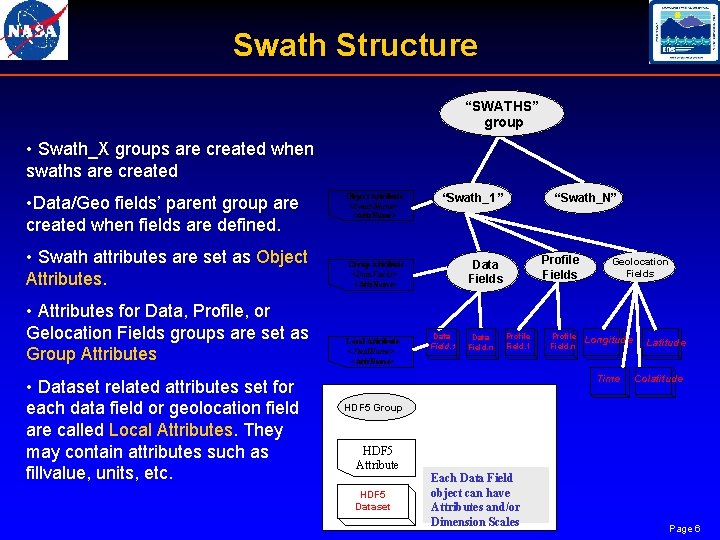

Swath Structure

• Swath_X groups are created under the “SWATHS” group when swaths are created.
• Swath attributes are set as Object Attributes: <SwathName>:<AttrName>
• Attributes for the Data Fields, Profile Fields, or Geolocation Fields groups are set as Group Attributes: <DataFields>:<AttrName>
• The parent groups of data and geolocation fields are created when the fields are defined.
• Dataset-related attributes set for each data field or geolocation field are called Local Attributes: <FieldName>:<AttrName>. They may contain attributes such as fill value, units, etc.
• Each Data Field object can have Attributes and/or Dimension Scales.

[Diagram: hierarchy of HDF5 groups, HDF5 attributes, and HDF5 datasets]
“SWATHS” group
  “Swath_1” … “Swath_N”
    Data Fields: Data Field.1 … Data Field.n
    Profile Fields: Profile Field.1 … Profile Field.n
    Geolocation Fields: Longitude, Latitude, Colatitude, Time

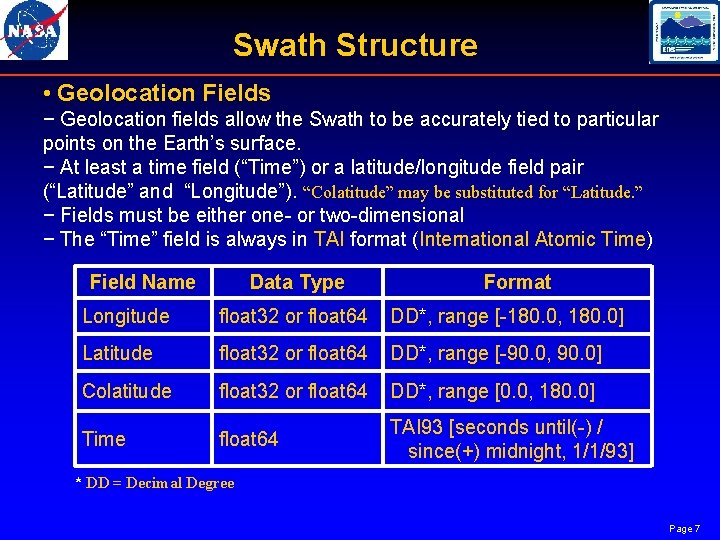

Swath Structure

• Geolocation Fields
  − Geolocation fields allow the Swath to be accurately tied to particular points on the Earth’s surface.
  − A Swath needs at least a time field (“Time”) or a latitude/longitude field pair (“Latitude” and “Longitude”). “Colatitude” may be substituted for “Latitude”.
  − Fields must be either one- or two-dimensional.
  − The “Time” field is always in TAI format (International Atomic Time).

| Field Name | Data Type | Format |
|---|---|---|
| Longitude | float32 or float64 | DD*, range [-180.0, 180.0] |
| Latitude | float32 or float64 | DD*, range [-90.0, 90.0] |
| Colatitude | float32 or float64 | DD*, range [0.0, 180.0] |
| Time | float64 | TAI93 [seconds until (-) / since (+) midnight, 1/1/93] |

* DD = Decimal Degrees
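
The ranges and the TAI93 convention in the table can be sketched in a few lines of Python. This is only an illustration, not part of the HDF-EOS 5 library: the function names are hypothetical, and the time conversion deliberately ignores leap seconds, so the result is an offset on the TAI93 timeline rather than a corrected UTC time.

```python
from datetime import datetime, timedelta

# Valid ranges in decimal degrees, taken from the table above.
RANGES = {
    "Longitude": (-180.0, 180.0),
    "Latitude": (-90.0, 90.0),
    "Colatitude": (0.0, 180.0),
}

# TAI93 counts seconds from midnight, 1 January 1993.
TAI93_EPOCH = datetime(1993, 1, 1)


def in_range(field_name, value):
    """Return True if value lies inside the documented range for the field."""
    low, high = RANGES[field_name]
    return low <= value <= high


def colatitude_to_latitude(colatitude):
    """Colatitude is measured from the north pole, so latitude = 90 - colatitude."""
    return 90.0 - colatitude


def tai93_to_datetime(seconds):
    """Offset the 1993 epoch by a TAI93 value (assumption: no leap-second correction)."""
    return TAI93_EPOCH + timedelta(seconds=seconds)


if __name__ == "__main__":
    print(in_range("Longitude", 181.0))
    print(colatitude_to_latitude(30.0))
    print(tai93_to_datetime(86400.0))
```

Negative TAI93 values fall before the epoch, matching the "until (-) / since (+)" wording in the table.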

Swath Structure

• Data Fields
  − Fields may have up to 8 dimensions.
  − An “unlimited” dimension must be the first dimension (in C order).
  − For all multi-dimensional fields in scan- or profile-oriented Swaths, the dimension representing the “along track” dimension must precede the dimension representing the scan or profile dimension(s) (in C order).
  − Compression is selectable at the field level within a Swath. All HDF5-supported compression methods are available through the HDF-EOS 5 library. The compression method is stored within the file, and subsequent use of the library will uncompress the file. As in HDF5, the data must be chunked before compression is applied.
  − Field names:
    * may be up to 64 characters in length
    * may use any character except the comma (","), the semicolon (";"), the double quote, and the slash ("/")
    * are case sensitive
    * must be unique within a particular Swath structure
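
The field-name rules above lend themselves to a small check. The sketch below is illustrative and not taken from the HDF-EOS 5 library: `field_name_problems` is a hypothetical helper, and the set of forbidden characters (comma, semicolon, double quote, slash) is an assumption based on reading the slide's exception list.

```python
MAX_FIELD_NAME_LENGTH = 64
# Assumed reading of the exception list: comma, semicolon, double quote, slash.
FORBIDDEN_CHARACTERS = set(',;"/')


def field_name_problems(name, existing_names):
    """Return a list of rule violations for a proposed field name (empty if none)."""
    problems = []
    if not name:
        problems.append("name is empty")
    if len(name) > MAX_FIELD_NAME_LENGTH:
        problems.append("name is longer than 64 characters")
    bad = sorted(set(name) & FORBIDDEN_CHARACTERS)
    if bad:
        problems.append("name contains forbidden characters: " + " ".join(bad))
    # Names are case sensitive, so only an exact match counts as a duplicate.
    if name in existing_names:
        problems.append("name already exists in this structure")
    return problems


if __name__ == "__main__":
    existing = {"Voltage", "Temperature"}
    for candidate in ("Pressure", "Voltage", "voltage", "Wind/Speed"):
        print(candidate, field_name_problems(candidate, existing))
```

Because the comparison is exact, "voltage" passes next to an existing "Voltage", which mirrors the case-sensitivity rule.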

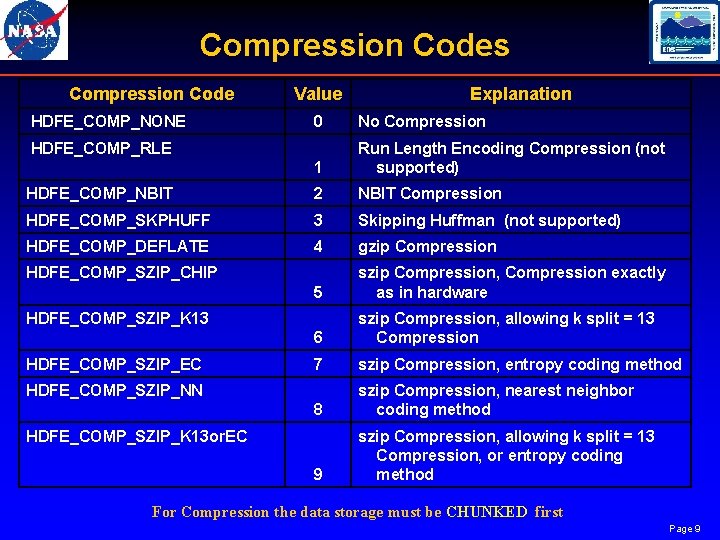

Compression Codes

| Compression Code | Value | Explanation |
|---|---|---|
| HDFE_COMP_NONE | 0 | No Compression |
| HDFE_COMP_RLE | 1 | Run Length Encoding Compression (not supported) |
| HDFE_COMP_NBIT | 2 | NBIT Compression |
| HDFE_COMP_SKPHUFF | 3 | Skipping Huffman (not supported) |
| HDFE_COMP_DEFLATE | 4 | gzip Compression |
| HDFE_COMP_SZIP_CHIP | 5 | szip Compression, Compression exactly as in hardware |
| HDFE_COMP_SZIP_K13 | 6 | szip Compression, allowing k split = 13 Compression |
| HDFE_COMP_SZIP_EC | 7 | szip Compression, entropy coding method |
| HDFE_COMP_SZIP_NN | 8 | szip Compression, nearest neighbor coding method |
| HDFE_COMP_SZIP_K13orEC | 9 | szip Compression, allowing k split = 13 Compression, or entropy coding method |

For compression, the data storage must be CHUNKED first.

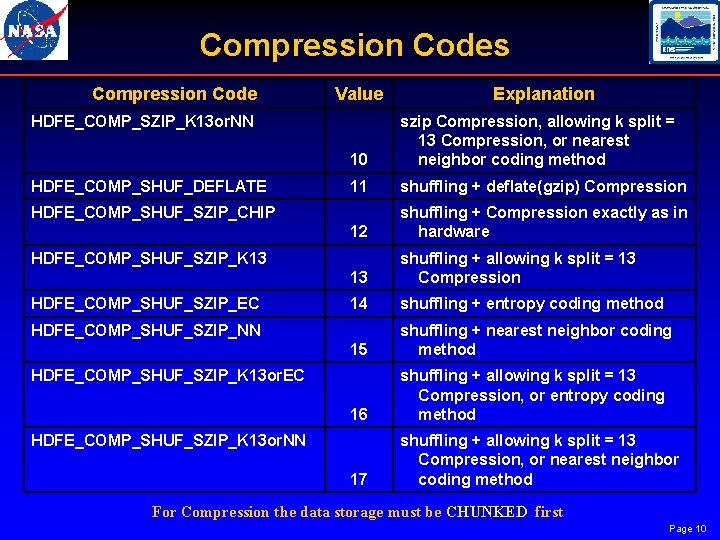

Compression Codes

| Compression Code | Value | Explanation |
|---|---|---|
| HDFE_COMP_SZIP_K13orNN | 10 | szip Compression, allowing k split = 13 Compression, or nearest neighbor coding method |
| HDFE_COMP_SHUF_DEFLATE | 11 | shuffling + deflate (gzip) Compression |
| HDFE_COMP_SHUF_SZIP_CHIP | 12 | shuffling + Compression exactly as in hardware |
| HDFE_COMP_SHUF_SZIP_K13 | 13 | shuffling + allowing k split = 13 Compression |
| HDFE_COMP_SHUF_SZIP_EC | 14 | shuffling + entropy coding method |
| HDFE_COMP_SHUF_SZIP_NN | 15 | shuffling + nearest neighbor coding method |
| HDFE_COMP_SHUF_SZIP_K13orEC | 16 | shuffling + allowing k split = 13 Compression, or entropy coding method |
| HDFE_COMP_SHUF_SZIP_K13orNN | 17 | shuffling + allowing k split = 13 Compression, or nearest neighbor coding method |

For compression, the data storage must be CHUNKED first.
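
As a quick reference, the codes from the two tables can be collected into one enumeration. This is a Python sketch for illustration only: the names and values are copied from the slides, while `check_compression` and `uses_shuffle` are hypothetical helpers that encode the "not supported" notes and the chunking requirement, not functions of the HDF-EOS 5 library.

```python
from enum import IntEnum


class CompressionCode(IntEnum):
    """Compression codes as listed on the slides."""
    HDFE_COMP_NONE = 0
    HDFE_COMP_RLE = 1
    HDFE_COMP_NBIT = 2
    HDFE_COMP_SKPHUFF = 3
    HDFE_COMP_DEFLATE = 4
    HDFE_COMP_SZIP_CHIP = 5
    HDFE_COMP_SZIP_K13 = 6
    HDFE_COMP_SZIP_EC = 7
    HDFE_COMP_SZIP_NN = 8
    HDFE_COMP_SZIP_K13orEC = 9
    HDFE_COMP_SZIP_K13orNN = 10
    HDFE_COMP_SHUF_DEFLATE = 11
    HDFE_COMP_SHUF_SZIP_CHIP = 12
    HDFE_COMP_SHUF_SZIP_K13 = 13
    HDFE_COMP_SHUF_SZIP_EC = 14
    HDFE_COMP_SHUF_SZIP_NN = 15
    HDFE_COMP_SHUF_SZIP_K13orEC = 16
    HDFE_COMP_SHUF_SZIP_K13orNN = 17


# Marked "not supported" in the table.
UNSUPPORTED = {CompressionCode.HDFE_COMP_RLE, CompressionCode.HDFE_COMP_SKPHUFF}


def check_compression(code, chunked):
    """Validate a code against the table notes and the chunking requirement."""
    code = CompressionCode(code)
    if code in UNSUPPORTED:
        raise ValueError(code.name + " is listed as not supported")
    if code != CompressionCode.HDFE_COMP_NONE and not chunked:
        raise ValueError("data storage must be chunked before compression")
    return code


def uses_shuffle(code):
    """True for the shuffling variants (values 11 to 17)."""
    return CompressionCode(code).name.startswith("HDFE_COMP_SHUF_")


if __name__ == "__main__":
    print(check_compression(4, chunked=True).name)
    print(uses_shuffle(11))
```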

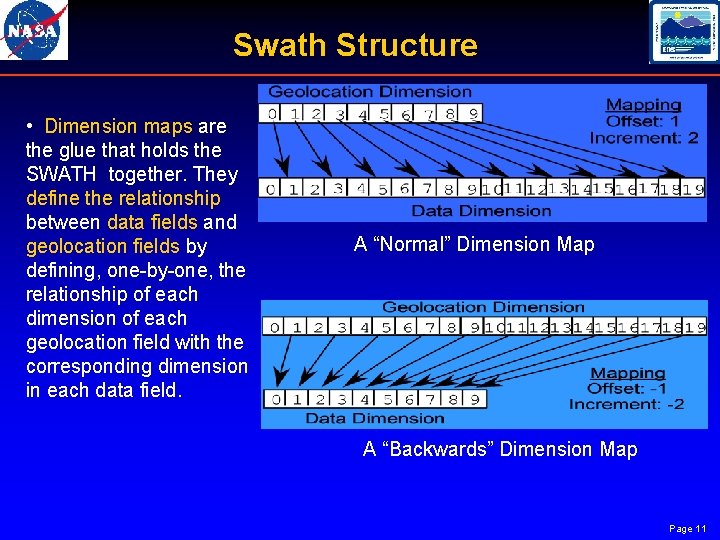

Swath Structure

• Dimension maps are the glue that holds the Swath together. They define the relationship between data fields and geolocation fields by defining, one by one, the relationship of each dimension of each geolocation field with the corresponding dimension in each data field.

[Figures: A “Normal” Dimension Map; A “Backwards” Dimension Map]
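
The slide does not give the numeric parameters of a dimension map. The Python sketch below assumes the usual offset/increment convention for a "normal" map: the offset is the data index matching the first geolocation entry, and the increment is the number of data points between successive geolocation entries. Function names and sizes are hypothetical, and the "backwards" case is not covered.

```python
def data_index(geo_index, offset, increment):
    """Data-dimension index that corresponds to a geolocation-dimension index.

    Assumes a "normal" map: positive increment, geolocation dimension
    sampled more coarsely than the data dimension.
    """
    return offset + increment * geo_index


def mapped_indices(geo_size, data_size, offset, increment):
    """List (geo_index, data_index) pairs that fall inside the data dimension."""
    pairs = []
    for geo in range(geo_size):
        data = data_index(geo, offset, increment)
        if 0 <= data < data_size:
            pairs.append((geo, data))
    return pairs


if __name__ == "__main__":
    # Example sizes: 10 geolocation points tied to a 20-element data dimension.
    for geo, data in mapped_indices(geo_size=10, data_size=20, offset=1, increment=2):
        print("geolocation", geo, "-> data", data)
```

With an offset of 1 and an increment of 2, every second data element, starting at the second one, has its own geolocation entry; the remaining elements would have to be interpolated.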

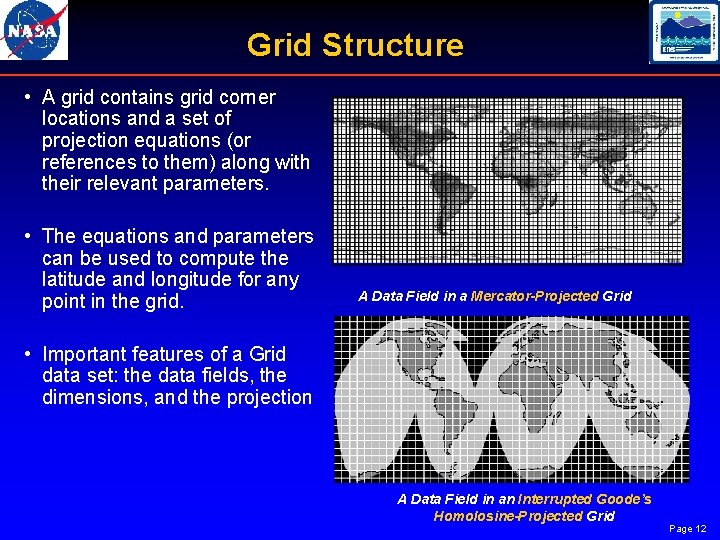

Grid Structure

• A grid contains grid corner locations and a set of projection equations (or references to them) along with their relevant parameters.
• The equations and parameters can be used to compute the latitude and longitude for any point in the grid.
• Important features of a Grid data set: the data fields, the dimensions, and the projection.

[Figures: A Data Field in a Mercator-Projected Grid; A Data Field in an Interrupted Goode’s Homolosine-Projected Grid]
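
The first step of that computation, locating a grid cell in projected coordinates from the corner locations, is plain linear interpolation. The Python sketch below assumes cell-center registration and a hypothetical function name; converting the resulting x/y to latitude and longitude would additionally require the inverse projection equations, which are not shown.

```python
def cell_center(row, col, upper_left, lower_right, xdim, ydim):
    """Projected (x, y) of the center of cell (row, col).

    upper_left and lower_right are (x, y) corner coordinates of the grid;
    xdim and ydim are the number of cells along x and y.
    Assumption: values refer to cell centers.
    """
    ul_x, ul_y = upper_left
    lr_x, lr_y = lower_right
    cell_width = (lr_x - ul_x) / xdim
    cell_height = (lr_y - ul_y) / ydim  # negative when y decreases downward
    x = ul_x + (col + 0.5) * cell_width
    y = ul_y + (row + 0.5) * cell_height
    return x, y


if __name__ == "__main__":
    # Toy grid: 5 x 5 cells covering x in [0, 10] and y in [10, 0].
    print(cell_center(0, 0, (0.0, 10.0), (10.0, 0.0), xdim=5, ydim=5))
    print(cell_center(4, 4, (0.0, 10.0), (10.0, 0.0), xdim=5, ydim=5))
```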

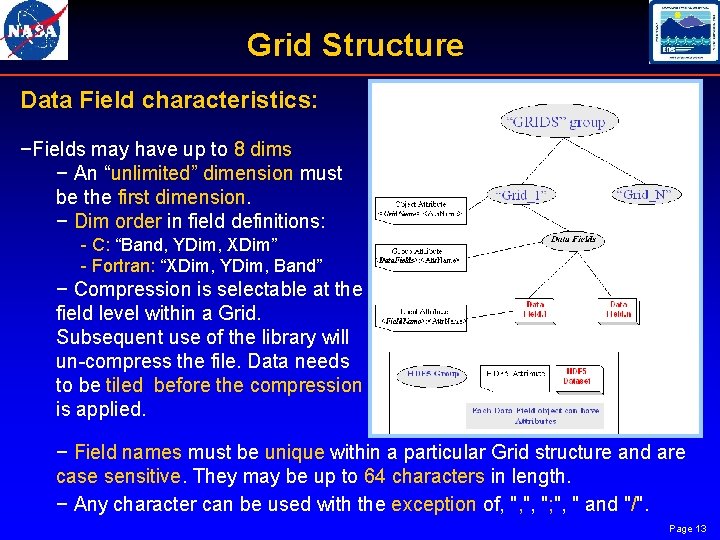

Grid Structure

Data Field characteristics:
− Fields may have up to 8 dimensions.
− An “unlimited” dimension must be the first dimension.
− Dimension order in field definitions:
    C: “Band,YDim,XDim”
    Fortran: “XDim,YDim,Band”
− Compression is selectable at the field level within a Grid. Subsequent use of the library will uncompress the file. Data must be tiled before compression is applied.
− Field names must be unique within a particular Grid structure and are case sensitive. They may be up to 64 characters in length.
− Any character can be used in a field name except the comma (","), the semicolon (";"), the double quote, and the slash ("/").
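
The C and Fortran dimension lists above are the same list written in opposite order. A minimal Python sketch of the conversion, using a hypothetical helper name:

```python
def reverse_dim_order(dim_list):
    """Convert a comma-separated dimension list between C and Fortran order."""
    names = [name.strip() for name in dim_list.split(",")]
    return ",".join(reversed(names))


if __name__ == "__main__":
    c_order = "Band,YDim,XDim"
    fortran_order = reverse_dim_order(c_order)
    print(fortran_order)
    print(reverse_dim_order(fortran_order))
```

Applying the function twice returns the original list, so the same helper serves both directions.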

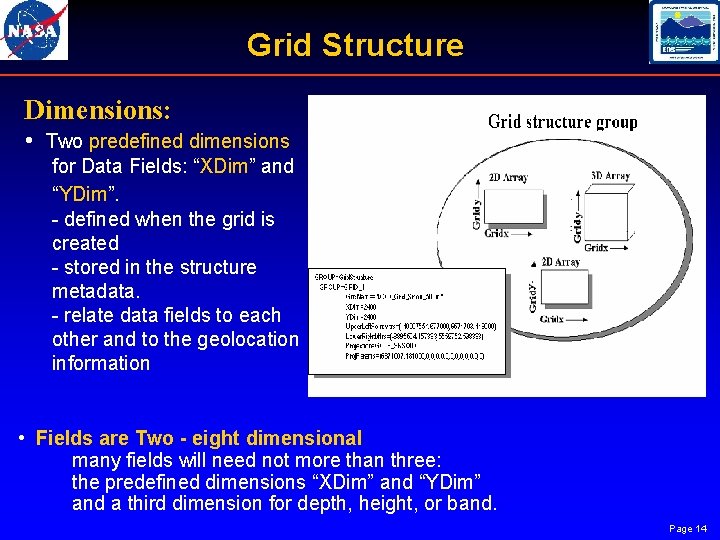

Grid Structure

Dimensions:
• There are two predefined dimensions for Data Fields, “XDim” and “YDim”. They
  − are defined when the grid is created
  − are stored in the structure metadata
  − relate data fields to each other and to the geolocation information
• Fields are two- to eight-dimensional. Many fields will need no more than three dimensions: the predefined dimensions “XDim” and “YDim” and a third dimension for depth, height, or band.

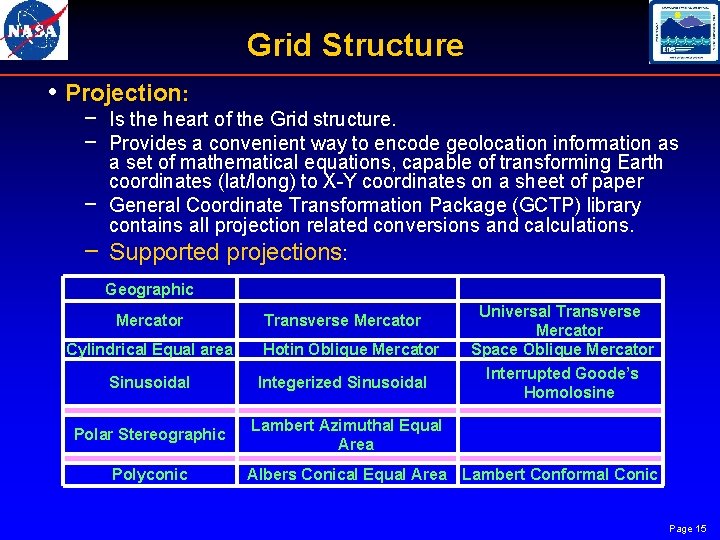

Grid Structure

• Projection:
  − Is the heart of the Grid structure.
  − Provides a convenient way to encode geolocation information as a set of mathematical equations, capable of transforming Earth coordinates (lat/long) to X-Y coordinates on a sheet of paper.
  − The General Coordinate Transformation Package (GCTP) library contains all projection-related conversions and calculations.
  − Supported projections:
    Geographic
    Mercator
    Cylindrical Equal Area
    Transverse Mercator
    Hotine Oblique Mercator
    Sinusoidal
    Integerized Sinusoidal
    Polar Stereographic
    Lambert Azimuthal Equal Area
    Polyconic
    Universal Transverse Mercator
    Space Oblique Mercator
    Interrupted Goode’s Homolosine
    Albers Conical Equal Area
    Lambert Conformal Conic
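
To make "a set of mathematical equations" concrete, here is a sketch of the textbook Mercator projection on a sphere in Python. It is not GCTP's implementation: GCTP also handles ellipsoids and many projection parameters, and the radius used here is an assumed mean Earth radius.

```python
import math

EARTH_RADIUS_M = 6371000.0  # assumed spherical mean radius, in meters


def mercator_forward(lat_deg, lon_deg, lon0_deg=0.0, radius=EARTH_RADIUS_M):
    """Spherical Mercator: (lat, lon) in degrees to (x, y) in meters."""
    lat = math.radians(lat_deg)
    x = radius * math.radians(lon_deg - lon0_deg)
    y = radius * math.log(math.tan(math.pi / 4.0 + lat / 2.0))
    return x, y


def mercator_inverse(x, y, lon0_deg=0.0, radius=EARTH_RADIUS_M):
    """Inverse spherical Mercator: (x, y) in meters to (lat, lon) in degrees."""
    lat = 2.0 * math.atan(math.exp(y / radius)) - math.pi / 2.0
    lon = math.degrees(x / radius) + lon0_deg
    return math.degrees(lat), lon


if __name__ == "__main__":
    x, y = mercator_forward(45.0, -75.0)
    print(x, y)
    print(mercator_inverse(x, y))
```

The inverse function is what turns a projected grid location back into latitude and longitude.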

Point Structure

• Made up of a series of data records taken at (possibly) irregular time intervals and at scattered geographic locations.
• A loosely organized form of geolocated data supported by HDF-EOS.
• Levels are linked by a common field called the LinkField.
• Usually, shared information is stored in the Parent level, while data values are stored in the Child level.
• The values of the LinkField in the Parent level must be unique.
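
The parent/child relationship can be pictured with ordinary dictionaries. The Python sketch below is only a conceptual illustration of linking two levels through a shared field; the record layout, field names, and `link_levels` helper are hypothetical and unrelated to how the library stores levels.

```python
from collections import defaultdict


def link_levels(parent_records, child_records, link_field):
    """Group child records under the parent record that shares their link value.

    Raises ValueError if a link value occurs twice in the parent level,
    since parent link values must be unique.
    """
    seen = set()
    for record in parent_records:
        key = record[link_field]
        if key in seen:
            raise ValueError("duplicate link value in parent level: %r" % (key,))
        seen.add(key)

    children = defaultdict(list)
    for record in child_records:
        children[record[link_field]].append(record)

    return {
        record[link_field]: (record, children.get(record[link_field], []))
        for record in parent_records
    }


if __name__ == "__main__":
    stations = [
        {"StationID": "A", "Latitude": 38.9, "Longitude": -76.9},
        {"StationID": "B", "Latitude": 40.0, "Longitude": -105.3},
    ]
    observations = [
        {"StationID": "A", "Time": 0.0, "Temperature": 281.2},
        {"StationID": "A", "Time": 3600.0, "Temperature": 282.0},
        {"StationID": "B", "Time": 0.0, "Temperature": 275.4},
    ]
    linked = link_levels(stations, observations, "StationID")
    for station_id, (station, obs) in linked.items():
        print(station_id, station["Latitude"], len(obs))
```

Shared information (the station location) appears once in the parent level, while the repeated measurements live in the child level.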

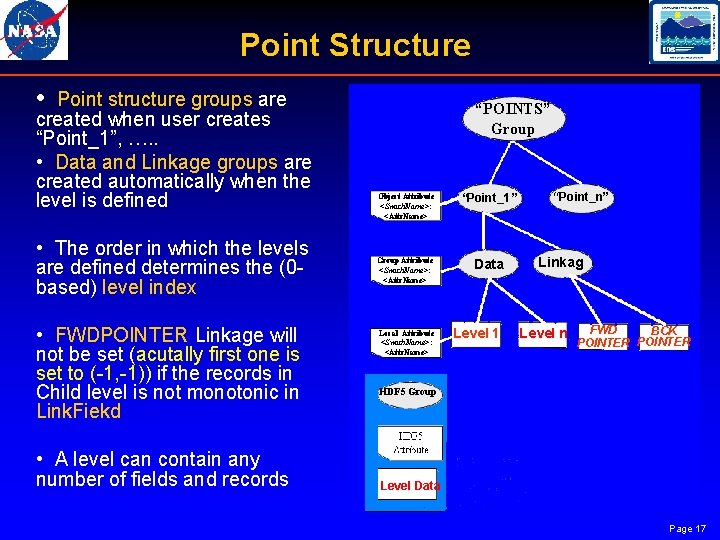

Point Structure

• Point structure groups (“Point_1”, …, “Point_n”) are created under the “POINTS” group when the user creates points.
• Data and Linkage groups are created automatically when a level is defined.
• The order in which the levels are defined determines the (0-based) level index.
• The FWDPOINTER linkage will not be set (actually, the first one is set to (-1, -1)) if the records in the Child level are not monotonic in the LinkField.
• A level can contain any number of fields and records.

[Diagram: hierarchy of HDF5 groups]
“POINTS” group
  “Point_1” … “Point_n”
    Data: Level 1 … Level n (level data)
    Linkage: FWDPOINTER, BCKPOINTER
Attribute labels in the diagram: Object Attribute <SwathName>:<AttrName>; Group Attribute <SwathName>:<AttrName>; Local Attribute <SwathName>:<AttrName>

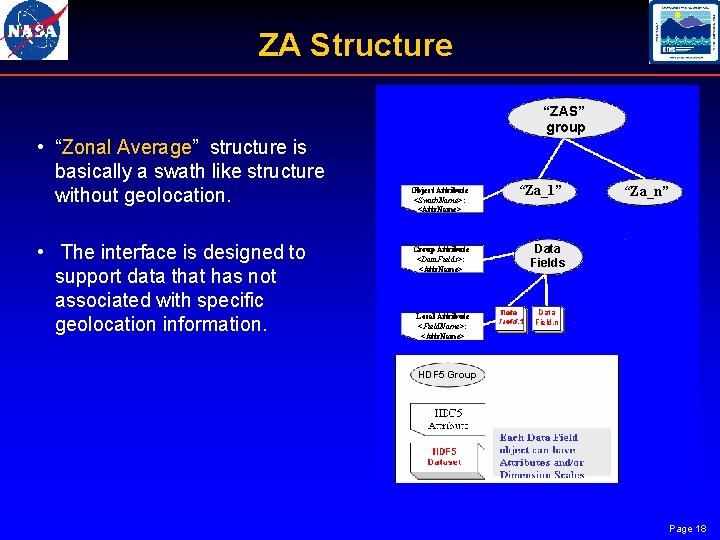

ZA Structure

• The “Zonal Average” structure is basically a swath-like structure without geolocation.
• The interface is designed to support data that is not associated with specific geolocation information.

[Diagram: hierarchy of HDF5 groups]
“ZAS” group
  “Za_1” … “Za_n”
    Data Fields: Data Field.1 … Data Field.n
Attribute labels in the diagram: Object Attribute <SwathName>:<AttrName>; Group Attribute <DataFields>:<AttrName>; Local Attribute <FieldName>:<AttrName>

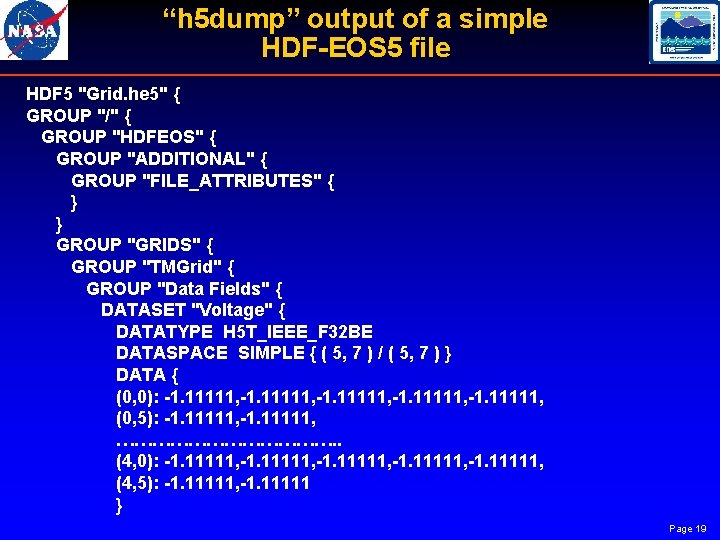

“h5dump” output of a simple HDF-EOS 5 file

    HDF5 "Grid.he5" {
    GROUP "/" {
       GROUP "HDFEOS" {
          GROUP "ADDITIONAL" {
             GROUP "FILE_ATTRIBUTES" {
             }
          }
          GROUP "GRIDS" {
             GROUP "TMGrid" {
                GROUP "Data Fields" {
                   DATASET "Voltage" {
                      DATATYPE  H5T_IEEE_F32BE
                      DATASPACE  SIMPLE { ( 5, 7 ) / ( 5, 7 ) }
                      DATA {
                      (0,0): -1.11111,
                      (0,5): -1.11111,
                      ………………..
                      (4,0): -1.11111,
                      (4,5): -1.11111, -1.11111
                      }

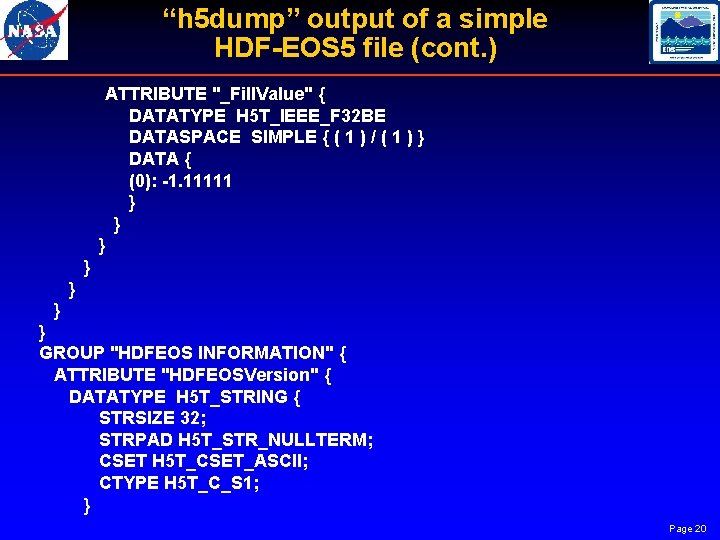

“h5dump” output of a simple HDF-EOS 5 file (cont.)

                      ATTRIBUTE "_FillValue" {
                         DATATYPE  H5T_IEEE_F32BE
                         DATASPACE  SIMPLE { ( 1 ) / ( 1 ) }
                         DATA {
                         (0): -1.11111
                         }
                      }
                   }
                }
          GROUP "HDFEOS INFORMATION" {
             ATTRIBUTE "HDFEOSVersion" {
                DATATYPE  H5T_STRING {
                   STRSIZE 32;
                   STRPAD H5T_STR_NULLTERM;
                   CSET H5T_CSET_ASCII;
                   CTYPE H5T_C_S1;
                }

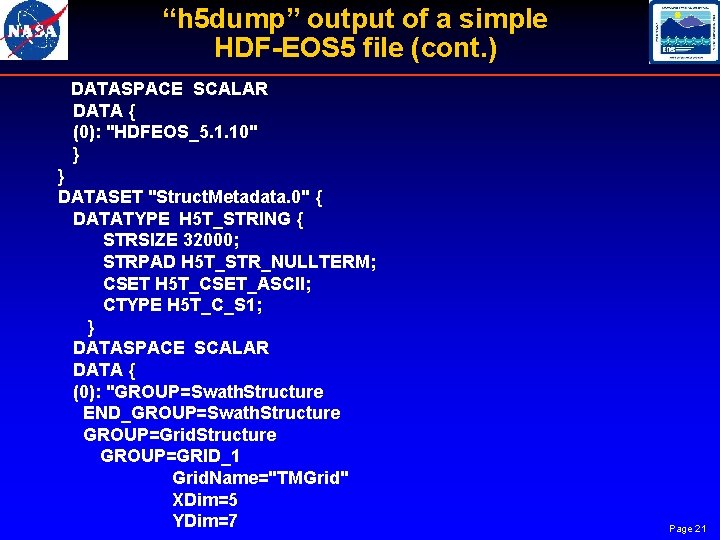

“h5dump” output of a simple HDF-EOS 5 file (cont.)

                DATASPACE  SCALAR
                DATA {
                (0): "HDFEOS_5.1.10"
                }
             }
             DATASET "StructMetadata.0" {
                DATATYPE  H5T_STRING {
                   STRSIZE 32000;
                   STRPAD H5T_STR_NULLTERM;
                   CSET H5T_CSET_ASCII;
                   CTYPE H5T_C_S1;
                }
                DATASPACE  SCALAR
                DATA {
                (0): "GROUP=SwathStructure
                      END_GROUP=SwathStructure
                      GROUP=GridStructure
                        GROUP=GRID_1
                          GridName="TMGrid"
                          XDim=5
                          YDim=7

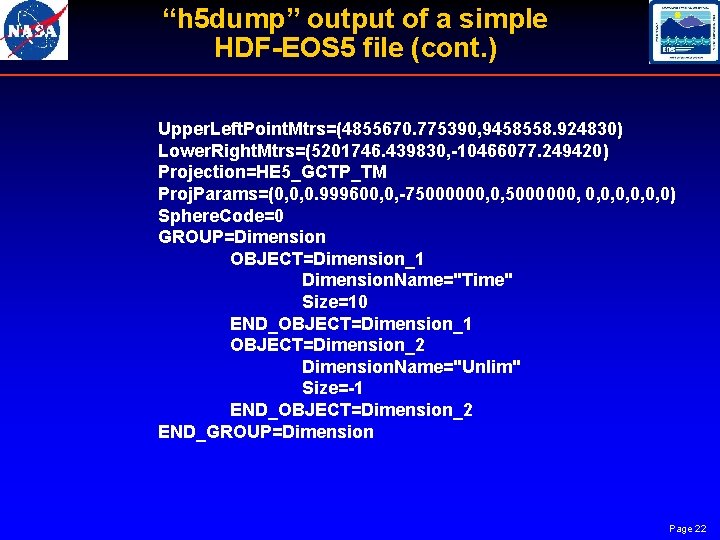

“h5dump” output of a simple HDF-EOS 5 file (cont.)

                          UpperLeftPointMtrs=(4855670.775390,9458558.924830)
                          LowerRightMtrs=(5201746.439830,-10466077.249420)
                          Projection=HE5_GCTP_TM
                          ProjParams=(0,0,0.999600,0,-75000000,0,0)
                          SphereCode=0
                          GROUP=Dimension
                            OBJECT=Dimension_1
                              DimensionName="Time"
                              Size=10
                            END_OBJECT=Dimension_1
                            OBJECT=Dimension_2
                              DimensionName="Unlim"
                              Size=-1
                            END_OBJECT=Dimension_2
                          END_GROUP=Dimension

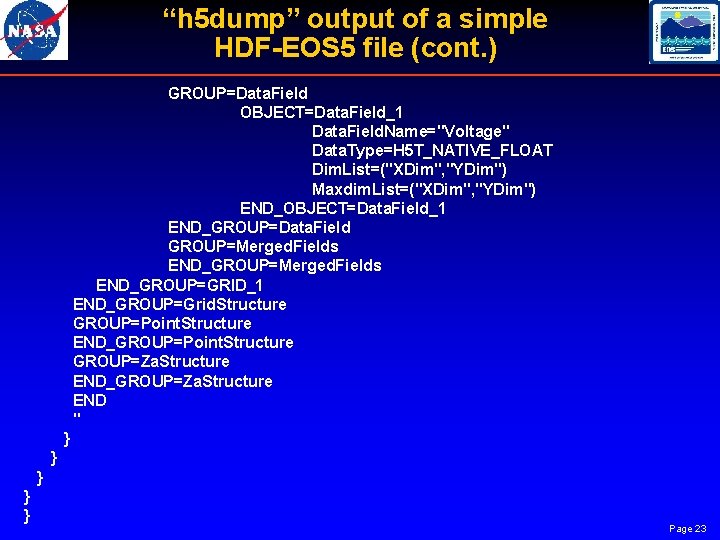

“h5dump” output of a simple HDF-EOS 5 file (cont.)

                          GROUP=DataField
                            OBJECT=DataField_1
                              DataFieldName="Voltage"
                              DataType=H5T_NATIVE_FLOAT
                              DimList=("XDim","YDim")
                              MaxdimList=("XDim","YDim")
                            END_OBJECT=DataField_1
                          END_GROUP=DataField
                          GROUP=MergedFields
                        END_GROUP=GRID_1
                      END_GROUP=GridStructure
                      GROUP=PointStructure
                      END_GROUP=PointStructure
                      GROUP=ZaStructure
                      END_GROUP=ZaStructure
                      END
                      "
                }
             }
          }
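
The structure metadata string in the dump is a nested GROUP/OBJECT text. As a rough illustration of how it could be read without the HDF-EOS or SDP Toolkit libraries, the Python sketch below parses a fragment copied from the Dimension group above. It is a simplification, not an ODL parser: it assumes one statement per line, balanced END_ markers, and no multi-line values, and `parse_odl` is a hypothetical name.

```python
SAMPLE = """\
GROUP=Dimension
    OBJECT=Dimension_1
        DimensionName="Time"
        Size=10
    END_OBJECT=Dimension_1
    OBJECT=Dimension_2
        DimensionName="Unlim"
        Size=-1
    END_OBJECT=Dimension_2
END_GROUP=Dimension
END
"""


def parse_odl(text):
    """Parse GROUP/OBJECT blocks and KEY=VALUE lines into nested dicts.

    Values are kept as strings; surrounding double quotes are removed.
    """
    root = {}
    stack = [root]
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line == "END":
            continue
        key, _, value = line.partition("=")
        key, value = key.strip(), value.strip()
        if key in ("GROUP", "OBJECT"):
            child = {}
            stack[-1][value] = child
            stack.append(child)
        elif key in ("END_GROUP", "END_OBJECT"):
            if len(stack) == 1:
                raise ValueError("unbalanced " + key + "=" + value)
            stack.pop()
        else:
            stack[-1][key] = value.strip('"')
    return root


if __name__ == "__main__":
    metadata = parse_odl(SAMPLE)
    for name, dim in metadata["Dimension"].items():
        print(name, dim["DimensionName"], dim["Size"])
```

A size of -1 marks the unlimited dimension in the dump, so a caller would treat that value specially rather than as a length.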

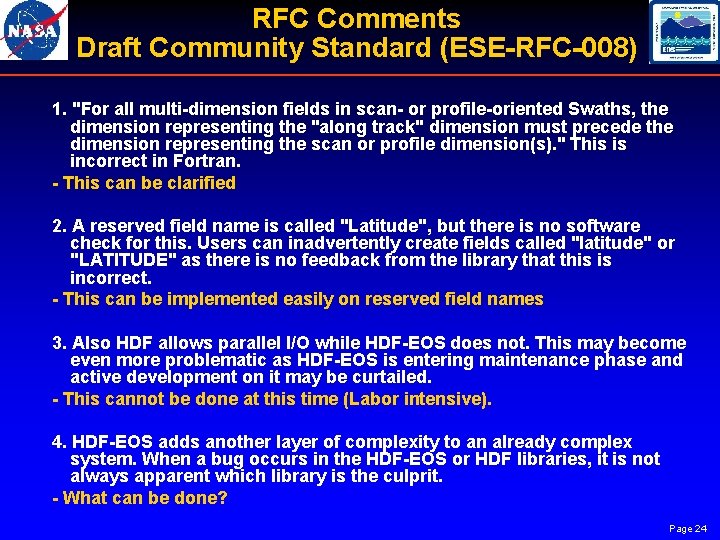

RFC Comments: Draft Community Standard (ESE-RFC-008)

1. "For all multi-dimension fields in scan- or profile-oriented Swaths, the dimension representing the "along track" dimension must precede the dimension representing the scan or profile dimension(s)." This is incorrect in Fortran.
   - This can be clarified.
2. A reserved field name is called "Latitude", but there is no software check for this. Users can inadvertently create fields called "latitude" or "LATITUDE" as there is no feedback from the library that this is incorrect.
   - This can be implemented easily on reserved field names.
3. Also HDF allows parallel I/O while HDF-EOS does not. This may become even more problematic as HDF-EOS is entering maintenance phase and active development on it may be curtailed.
   - This cannot be done at this time (labor intensive).
4. HDF-EOS adds another layer of complexity to an already complex system. When a bug occurs in the HDF-EOS or HDF libraries, it is not always apparent which library is the culprit.
   - What can be done?
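
A check of the kind described in comment 2 could look roughly like the Python sketch below. It is a hypothetical illustration, not library code: the list of reserved names is assumed from the geolocation field names given earlier in the presentation, and `reserved_name_warning` is an invented helper.

```python
# Assumed reserved names, taken from the geolocation field table.
RESERVED_FIELD_NAMES = ("Latitude", "Longitude", "Colatitude", "Time")


def reserved_name_warning(field_name):
    """Return a warning if field_name differs from a reserved name only by case."""
    for reserved in RESERVED_FIELD_NAMES:
        if field_name != reserved and field_name.lower() == reserved.lower():
            return 'field name "%s" looks like reserved name "%s"' % (field_name, reserved)
    return None


if __name__ == "__main__":
    for name in ("Latitude", "latitude", "LATITUDE", "Voltage"):
        print(name, "->", reserved_name_warning(name))
```

The exact spelling "Latitude" passes silently, while "latitude" and "LATITUDE" produce the feedback the commenter asked for.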

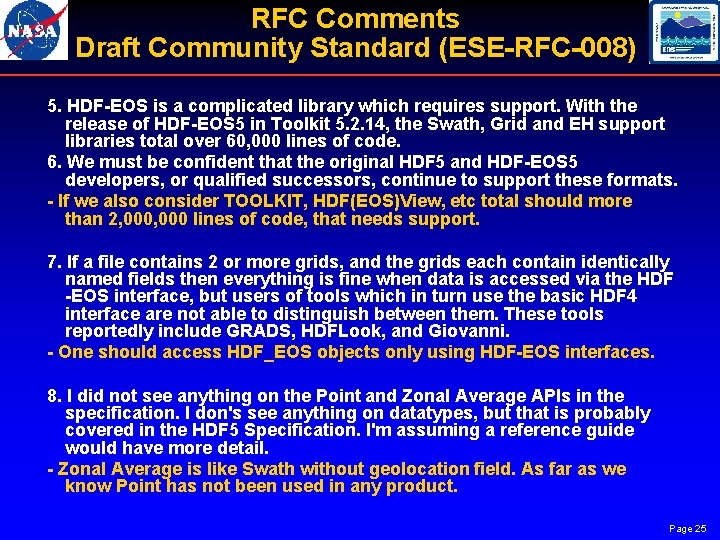

RFC Comments: Draft Community Standard (ESE-RFC-008)

5. HDF-EOS is a complicated library which requires support. With the release of HDF-EOS 5 in Toolkit 5.2.14, the Swath, Grid and EH support libraries total over 60,000 lines of code.
6. We must be confident that the original HDF5 and HDF-EOS 5 developers, or qualified successors, continue to support these formats.
   - If we also consider TOOLKIT, HDF(EOS)View, etc., the total should be more than 2,000 lines of code that need support.
7. If a file contains 2 or more grids, and the grids each contain identically named fields, then everything is fine when data is accessed via the HDF-EOS interface, but users of tools which in turn use the basic HDF 4 interface are not able to distinguish between them. These tools reportedly include GRADS, HDFLook, and Giovanni.
   - One should access HDF-EOS objects only using HDF-EOS interfaces.
8. I did not see anything on the Point and Zonal Average APIs in the specification. I don't see anything on datatypes, but that is probably covered in the HDF5 Specification. I'm assuming a reference guide would have more detail.
   - Zonal Average is like Swath without a geolocation field. As far as we know, Point has not been used in any product.

RFC Comments: Draft Community Standard (ESE-RFC-008)

9. I have never tried to develop an implementation of HDF-EOS 5. However, the standard doesn't provide sufficient information to either reproduce or parse a correct coremetadata string. The standard stipulates the syntax of the structural metadata string description language (ODL), but contains no discussion of the lexicon or semantics to be used when creating these strings.
   - This is in the SDP Toolkit and is too much to bring into the HDF-EOS standard.
10. The description of the standard should be completed so that all components of the metadata are well defined.
   - Metadata is covered in the SDP Toolkit with ample examples. It was left out to concentrate on Swath and Grid structures in the Draft Community Standard (ESE-RFC-008).
11. Additionally, the Draft Community Standard (ESE-RFC-008) states in section 7.2.3 that the SDP toolkit contains the tools for parsing the coremetadata strings. Thus, the HDF-EOS(5) format is based not only upon HDF(5) but also upon the SDP toolkit library. In this way the claim that HDF-EOS(5) is based upon HDF(5) is incomplete: it is also based on the SDP toolkit, or a library of similar functionality.
   - HDF-EOS objects depend only on the HDF5 library. Only ECS metadata is handled using the Toolkit.

RFC Comments: Draft Community Standard (ESE-RFC-008)

12. Handling HDF-EOS in a high-level language without HDF-EOS support would be difficult. This is because the standard relies on an obscure, obsolete syntax (Jet Propulsion Laboratory's ODL syntax) for its data serialization. Parsers for ODL are rare and not widely supported in different programming languages. The metadata stored with a dataset is effectively dumped into a metaphorical "black hole".
   - XML implementation was abandoned because of high cost.
13. The API documentation describes input variables to functions as being either IN or OUT (or perhaps both). In the case of variables which are passed as IN type parameters, it has never been clear whether the functions will modify the referent or not. Are IN type parameter referents constants?
   - IN type parameters are not modified in functions (if that is the case, then it is explained in the Users Guide).
14. The primary failing of HDF-EOS as a useful product was made clear by a participant in the 2004 HDF/HDF-EOS meeting in Aurora, CO. The participant pointed out that HDF-EOS was not a standard but an implementation effectively "locked" to the particular programming languages which have been supported by the HDF-EOS(5) developers. This inhibits use of HDF-EOS(5) format data in programming languages other than C or Fortran.
   - We can support any language HDF can, but it would be labor intensive to do so.

RFC Comments: Draft Community Standard (ESE-RFC-008)

15. For all practical purposes it is impossible to read or write HDF-EOS 5 datasets completely without using the existing libraries. Much of the high-level software used for scientific data analysis doesn't support HDF-EOS 5 libraries completely or in a timely fashion. This lack of support (in contrast to HDF) makes it a less than desirable form in which to store data.
   - What can be done?
16. SDP Toolkit, which contains the recommended ODL parser, is not supported under Mac OS X or Windows.
   - The SDP Toolkit parser is supported under Mac OS X. A shorter version of SDP TOOLKIT, called MTD Toolkit, supports metadata handling tools in Windows.
17. ODL should be abandoned as a data serialization syntax. Indeed, the need for data serialization should be reconsidered altogether, as the underlying HDF(5) data structure contains the necessary components to maintain structured data.
   - Redesigning HDF-EOS 5 would be too much work.
18. A set of Quality Assurance (QA) tools should be developed which analyze a target dataset to verify that it is a lexically and syntactically correct HDF-EOS formatted dataset. The QA tools should be both distributed as open source and made available as a Web-based service.
   - Good idea, but very labor intensive.

RFC Comments: Draft Community Standard (ESE-RFC-008)

19. Example codes should be provided in different programming languages which produce and read the most commonly used HDF-EOS data structures.
   - Requires more work than can be done in the maintenance phase.