Problem Solving through Exhaustive State Space Search

Jacques Robin

Ontologies, Reasoning, Components, Agents, Simulations

Outline

- Search Agent
- Formulating Decision Problems as Navigating State Space to Find Goal State
- Generic Search Algorithm
- Specific Algorithms
  - Breadth-First Search
  - Uniform Cost Search
  - Depth-First Search
  - Backtracking Search
  - Iterative Deepening Search
  - Bi-Directional Search
  - Comparative Table
- Limitations and Difficulties
  - Repeated States
  - Partial Information

Search Agents

- Generic decision problem to be solved by an agent:
  - Among all the possible action sequences that I can execute,
  - which ones will change the environment from its current state
  - to another state that matches my goal?
- Additional optimization problem:
  - Among the action sequences that change the environment from its current state to a goal state,
  - which one can be executed at minimum cost?
  - or which one leads to a state with maximum utility?
- A search agent solves this decision problem by a generate-and-test approach:
  - Given the environment model,
  - generate one by one (all) the possible states (the state space) of the environment reachable through all possible action sequences from the current state,
  - test each generated state to determine whether it satisfies the goal or maximizes utility
- Navigation metaphor:
  - The order of state generation is viewed as navigating the entire environment state space

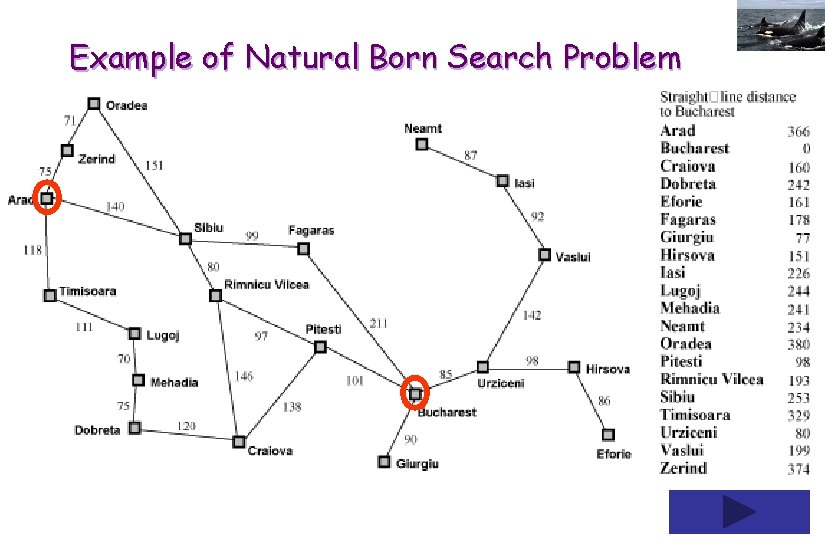

Example of Natural Born Search Problem

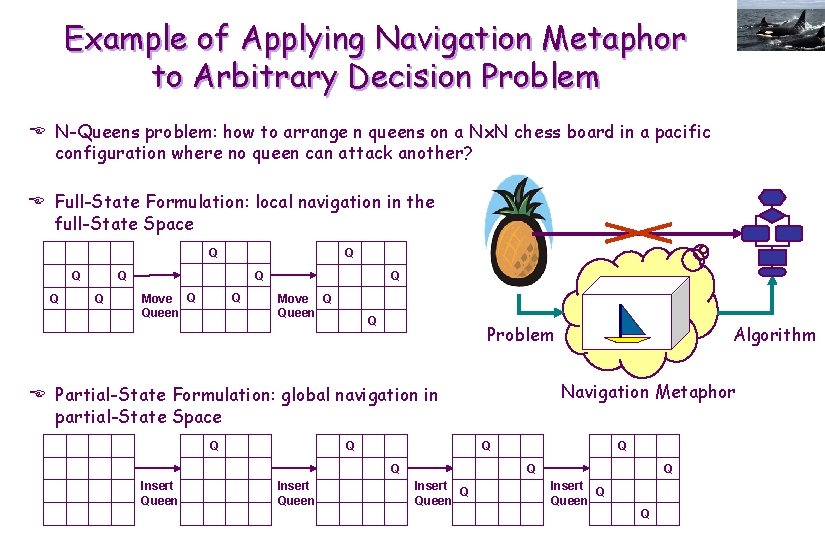

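Example of Applying Navigation Metaphor to Arbitrary Decision Problem

- N-Queens problem: how to arrange N queens on an N×N chessboard in a peaceful configuration where no queen can attack another?
- Full-state formulation: local navigation in the full-state space
  - Every state has all N queens on the board; the action "move queen" leads from one full state to a neighboring one
- Partial-state formulation: global navigation in the partial-state space
  - A state holds only some of the queens; the action "insert queen" refines a partial state toward a full one

[Figure: the N-Queens problem and its solving algorithm seen through the navigation metaphor, with "move queen" and "insert queen" steps on example boards]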
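The two formulations can be contrasted with a minimal sketch. The board encoding (a tuple giving the row of the queen in each column) and all function names are assumptions made for illustration; they do not come from the slides.

```python
from itertools import combinations

def attacks(q1, q2):
    """True if two queens, given as (row, col) squares, attack each other."""
    (r1, c1), (r2, c2) = q1, q2
    return r1 == r2 or c1 == c2 or abs(r1 - r2) == abs(c1 - c2)

def as_squares(rows):
    """Convert the assumed encoding rows[col] = row into (row, col) squares."""
    return [(row, col) for col, row in enumerate(rows)]

def is_peaceful(rows):
    """Goal test: no pair of queens attacks each other."""
    return not any(attacks(a, b) for a, b in combinations(as_squares(rows), 2))

def move_queen_successors(rows):
    """Full-state formulation: all queens are placed; move one within its column."""
    n = len(rows)
    for col in range(n):
        for new_row in range(n):
            if new_row != rows[col]:
                yield rows[:col] + (new_row,) + rows[col + 1:]

def insert_queen_successors(rows, n):
    """Partial-state formulation: insert a queen in the next empty column."""
    if len(rows) < n:
        for new_row in range(n):
            yield rows + (new_row,)

full_neighbors = list(move_queen_successors((0, 1, 2, 3)))
partial_children = list(insert_queen_successors((), 4))
```

In the full-state version every successor is again a complete board, so the search moves locally between candidate solutions; in the partial-state version each successor is one step closer to a complete board.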

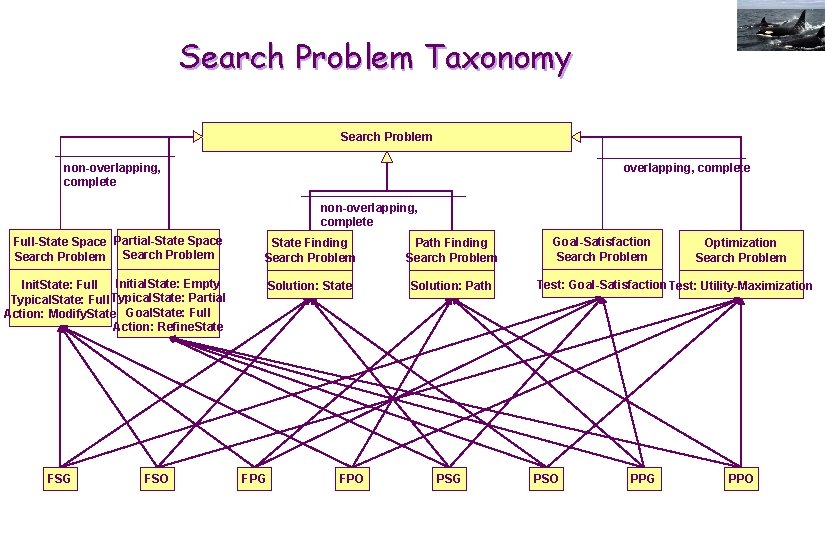

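Search Problem Taxonomy

Search problems are classified along three dimensions; each classification is non-overlapping and complete:

- State space:
  - Full-State Space Search Problem: the initial state is full; an action modifies the state
  - Partial-State Space Search Problem: the initial state is empty, a typical state is partial, the goal state is full; an action refines the state
- Solution:
  - State Finding Search Problem
  - Path Finding Search Problem: the solution is a path
- Test:
  - Goal-Satisfaction Search Problem: the test is goal satisfaction
  - Optimization Search Problem: the test is utility maximization

Combining the three dimensions gives eight leaf classes: FSG, FSO, FPG, FPO, PSG, PSO, PPG, PPO.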

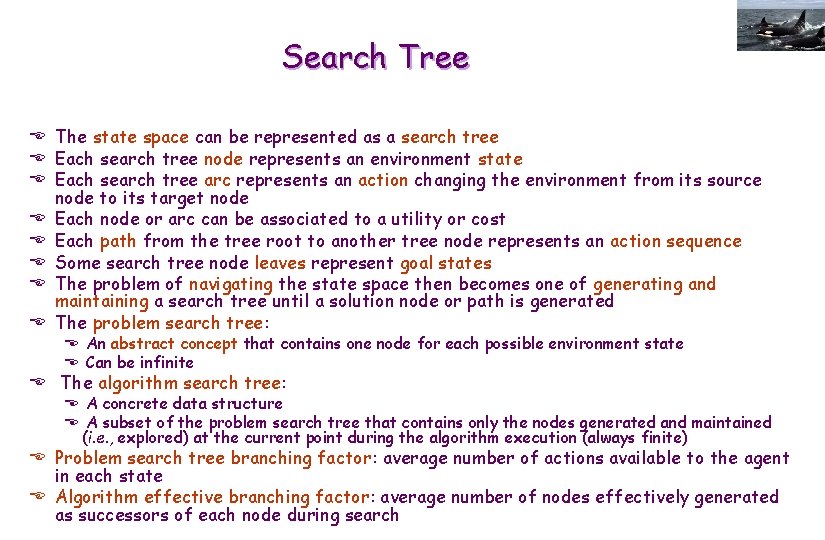

Search Tree

- The state space can be represented as a search tree
  - Each search tree node represents an environment state
  - Each search tree arc represents an action changing the environment from its source node to its target node
  - Each node or arc can be associated with a utility or cost
  - Each path from the tree root to another tree node represents an action sequence
  - Some search tree leaf nodes represent goal states
- The problem of navigating the state space then becomes one of generating and maintaining a search tree until a solution node or path is generated
- The problem search tree:
  - An abstract concept that contains one node for each possible environment state
  - Can be infinite
- The algorithm search tree:
  - A concrete data structure
  - A subset of the problem search tree that contains only the nodes generated and maintained (i.e., explored) at the current point of the algorithm execution (always finite)
- Problem search tree branching factor: average number of actions available to the agent in each state
- Algorithm effective branching factor: average number of nodes effectively generated as successors of each node during search
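A node of the algorithm search tree can be sketched as a small record that points back to its parent, so that the path from the root (the action sequence) can be read off any node. The class and field names below are hypothetical, and the default step cost of 1 is an assumption.

```python
from dataclasses import dataclass
from typing import Any, Optional

@dataclass(frozen=True)
class Node:
    state: Any                        # environment state represented by this node
    parent: Optional["Node"] = None   # node this one was generated from
    action: Any = None                # action on the arc from parent to this node
    path_cost: float = 0.0            # accumulated cost from the root

    def child(self, action, state, step_cost=1.0):
        """Generate a successor node reached by `action`."""
        return Node(state, self, action, self.path_cost + step_cost)

    def actions_from_root(self):
        """Action sequence represented by the path from the root to this node."""
        node, actions = self, []
        while node.parent is not None:
            actions.append(node.action)
            node = node.parent
        return actions[::-1]

root = Node("A")
leaf = root.child("go-B", "B").child("go-D", "D", step_cost=2.0)
```

Only the nodes actually created this way belong to the algorithm search tree; the problem search tree is never materialized as a whole.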

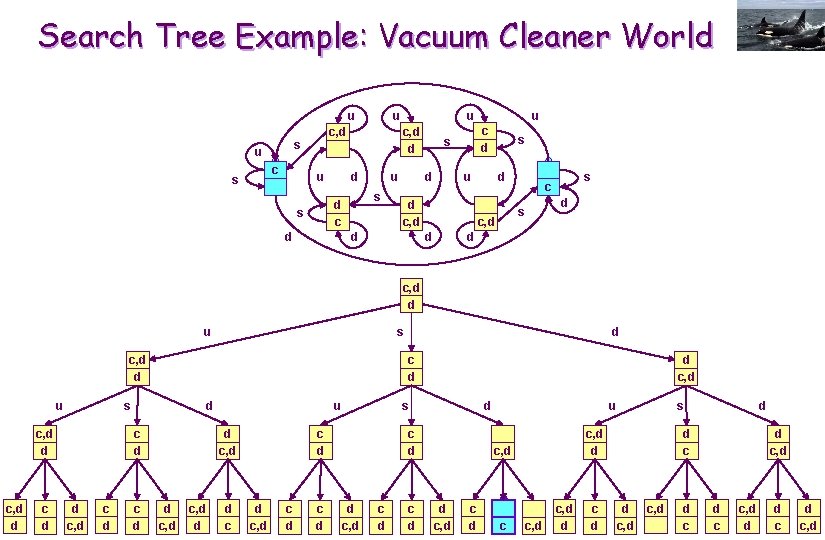

Search Tree Example: Vacuum Cleaner World

[Figure: search tree for the vacuum cleaner world, with arcs labelled u, d and s and nodes labelled c and d]

Search Methods

- Searching the entire space of environment states reachable through action sequences is called exhaustive search, systematic search, blind search or uninformed search
- Searching a restricted subset of that state space, based on knowledge about the specific characteristics of the problem or problem class, is called partial search
- A heuristic is an insight or approximate knowledge about a problem class or problem class family on which a search algorithm can rely to improve its run time and/or space requirement
- An ordering heuristic defines:
  - In which order to generate the problem search tree nodes,
  - Where to go next while navigating the state space (to get closer to a solution point faster)
- A pruning heuristic defines:
  - Which branches of the problem search tree to avoid generating altogether,
  - Which subspaces of the state space not to explore (for they cannot or are very unlikely to contain a solution point)
- Non-heuristic, exhaustive search does not scale to large problem instances (worst-case exponential in time and/or space)
- Heuristic, partial search offers no guarantee of finding a solution if one exists, or of finding the best solution if several exist

Formulating an Agent Decision Problem as a Search Problem

- Define the abstract format of a generic environment state, e.g., a class C
- Define the initial state, e.g., a specific object of class C
- Define the successor operation:
  - Takes as input a state or state set S and an action A
  - Returns the state or state set R resulting from the agent executing A in S
- Together, these 3 elements constitute an intensional representation of the state space
  - The search algorithm transforms this intensional representation into an extensional one, by repeatedly applying the successor operation starting from the initial state
- Define a boolean operation testing whether a state is a goal, e.g., a method of C
- For optimization problems: additionally define an operation that returns the cost or utility of an action or state
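The elements listed above can be sketched on a deliberately tiny, hypothetical problem (reach a target integer from 1 using the actions "inc" and "double"). The class, its method names and the toy problem itself are assumptions chosen for illustration only.

```python
class CountingProblem:
    """Hypothetical toy problem: reach `target` from 1 using +1 or *2."""

    def __init__(self, target):
        self.initial_state = 1          # the initial state
        self.target = target

    def actions(self, state):
        return ["inc", "double"]

    def successor(self, state, action):
        """State resulting from executing `action` in `state`."""
        return state + 1 if action == "inc" else state * 2

    def is_goal(self, state):
        """Boolean goal test."""
        return state == self.target

    def step_cost(self, state, action):
        """Cost operation, needed only for optimization problems."""
        return 1

def reachable_states(problem, max_depth):
    """Extensional view: enumerate the states reachable within max_depth actions."""
    layer = {problem.initial_state}
    seen = set(layer)
    for _ in range(max_depth):
        layer = {problem.successor(s, a)
                 for s in layer for a in problem.actions(s)} - seen
        seen |= layer
    return seen

problem = CountingProblem(target=10)
states_within_two_steps = reachable_states(problem, 2)
```

The class is the intensional description; `reachable_states` makes part of the state space explicit by repeatedly applying the successor operation, which is what a search algorithm does incrementally.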

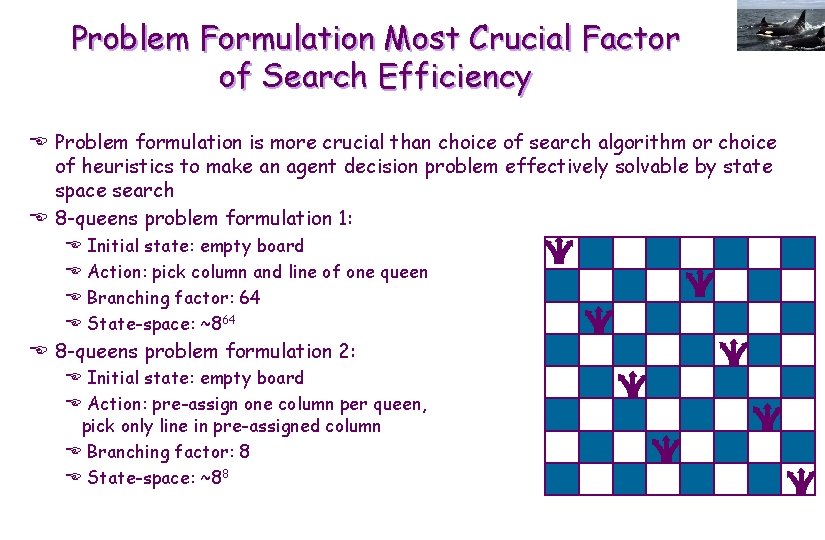

Problem Formulation Most Crucial Factor of Search Efficiency

- Problem formulation is more crucial than the choice of search algorithm or the choice of heuristics in making an agent decision problem effectively solvable by state space search
- 8-queens problem formulation 1:
  - Initial state: empty board
  - Action: pick the column and row of one queen
  - Branching factor: 64
  - State space: ~$64^8$
- 8-queens problem formulation 2:
  - Initial state: empty board
  - Action: pre-assign one column per queen, pick only the row in the pre-assigned column
  - Branching factor: 8
  - State space: ~$8^8$
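The gap between the two formulations can be made concrete with a short calculation. It assumes that each formulation makes eight successive choices (one per queen) with the stated branching factor, so the count is the branching factor raised to the power 8.

```python
QUEENS = 8

# Formulation 1: any of the 64 squares for each of the 8 queens.
formulation_1 = 64 ** QUEENS
# Formulation 2: one of 8 rows in the pre-assigned column of each queen.
formulation_2 = 8 ** QUEENS

ratio = formulation_1 // formulation_2

print(f"formulation 1: {formulation_1:,} action sequences")
print(f"formulation 2: {formulation_2:,} action sequences")
print(f"formulation 2 is smaller by a factor of {ratio:,}")
```

Under these assumptions, the second formulation shrinks the space from about $2.8 \times 10^{14}$ to about $1.7 \times 10^{7}$ sequences without changing the search algorithm at all.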

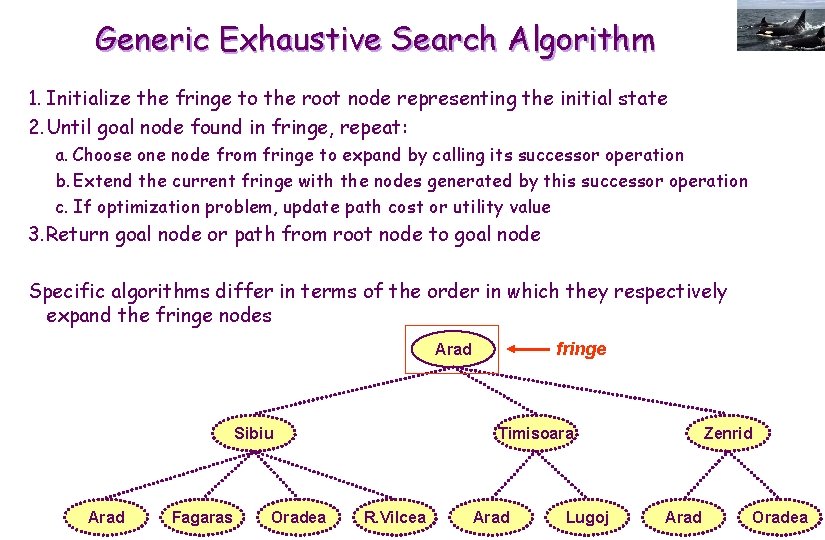

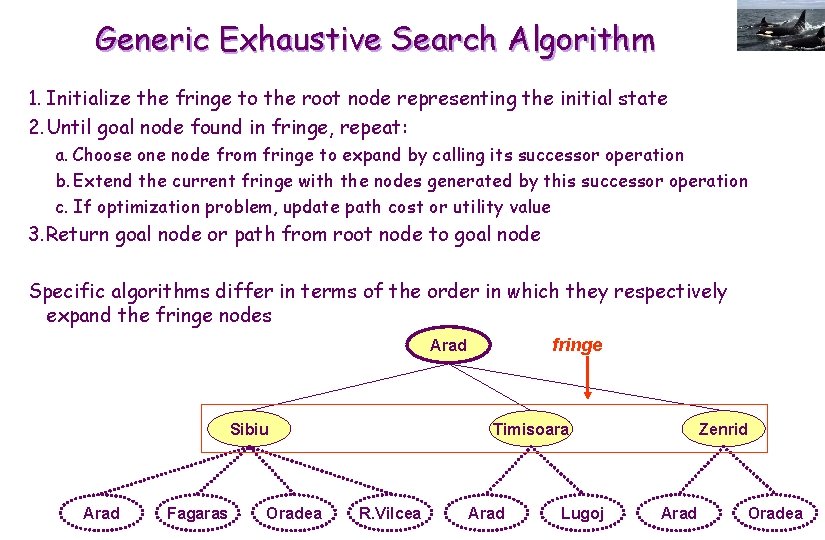

Generic Exhaustive Search Algorithm

1. Initialize the fringe (also called the open list) to the root node representing the initial state
2. Until a goal node is found in the fringe, repeat:
   a. Choose one node from the fringe to expand by calling its successor operation
   b. Extend the current fringe with the nodes generated by this successor operation
   c. If it is an optimization problem, update the path cost or utility value
3. Return the goal node or the path from the root node to the goal node

Specific algorithms differ in the order in which they expand the fringe nodes.

[Figure: fringe of the search tree rooted at Arad; Arad expands to Sibiu, Timisoara and Zerind, whose successors are Arad, Fagaras, Oradea, R. Vilcea; Arad, Lugoj; and Arad, Oradea]

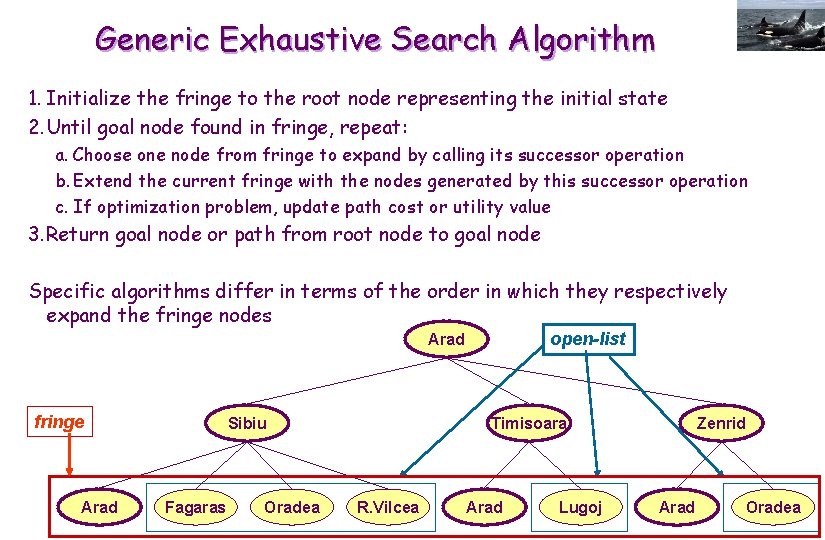


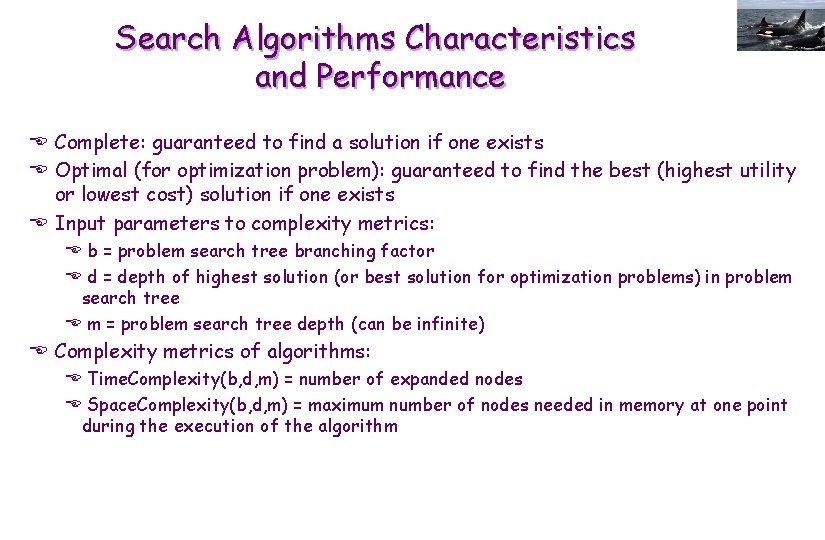


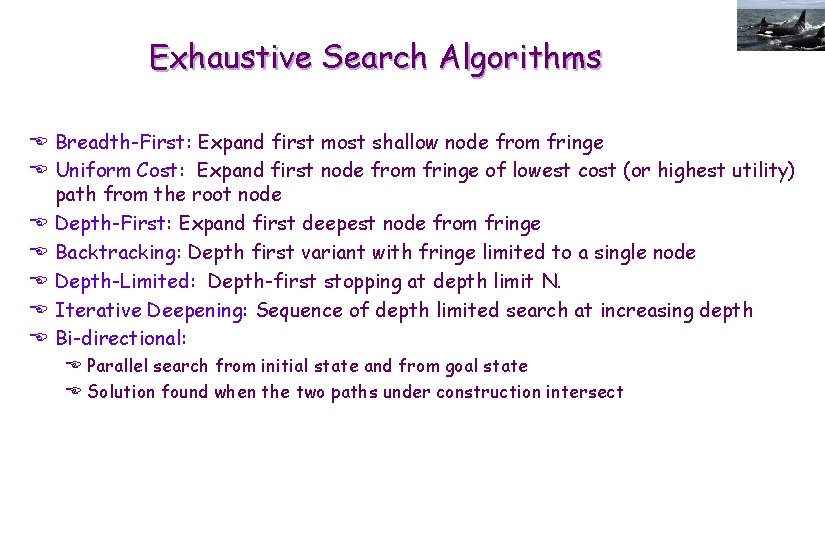
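The generic algorithm can be sketched with the node-selection policy passed in as a parameter. Two liberties are taken here and should be read as assumptions: the goal test is applied when a node is chosen rather than when it enters the fringe, and the function returns `None` if the fringe runs empty. The road fragment is the part of the map that can be read off the figure; the names `ROADS`, `generic_search` and `choose` are hypothetical.

```python
from collections import deque

# Fragment of the road map; cities without an entry have no listed successors.
ROADS = {
    "Arad": ["Sibiu", "Timisoara", "Zerind"],
    "Sibiu": ["Arad", "Fagaras", "Oradea", "Rimnicu Vilcea"],
    "Timisoara": ["Arad", "Lugoj"],
    "Zerind": ["Arad", "Oradea"],
}

def generic_search(initial_state, successors, is_goal, choose):
    """Tree search; `choose(fringe)` removes and returns the node to expand next."""
    fringe = deque([(initial_state, [initial_state])])    # step 1
    while fringe:
        state, path = choose(fringe)                      # step 2a
        if is_goal(state):
            return path                                   # step 3
        for nxt in successors(state):                     # step 2b
            fringe.append((nxt, path + [nxt]))
    return None

# First-in-first-out choice: the oldest fringe node is expanded first.
path = generic_search(
    "Arad",
    successors=lambda city: ROADS.get(city, []),
    is_goal=lambda city: city == "Fagaras",
    choose=lambda fringe: fringe.popleft(),
)
```

Swapping `choose` for a last-in-first-out policy (`fringe.pop()`) turns the same loop into a depth-first search, which on this map can cycle between Arad and Zerind forever because nothing prevents repeated states.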

Search Algorithms Characteristics and Performance

- Complete: guaranteed to find a solution if one exists
- Optimal (for optimization problems): guaranteed to find the best (highest utility or lowest cost) solution if one exists
- Input parameters to complexity metrics:
  - b = problem search tree branching factor
  - d = depth of the shallowest solution (or of the best solution for optimization problems) in the problem search tree
  - m = problem search tree depth (can be infinite)
- Complexity metrics of algorithms:
  - TimeComplexity(b, d, m) = number of expanded nodes
  - SpaceComplexity(b, d, m) = maximum number of nodes needed in memory at one point during the execution of the algorithm
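As a worked illustration of how these parameters combine, assume a uniform branching factor $b > 1$. The tree then has $b^i$ nodes at depth $i$, so exploring every node down to depth $d$ touches:

```latex
\sum_{i=0}^{d} b^{i} \;=\; \frac{b^{d+1} - 1}{b - 1} \;=\; O(b^{d})
\qquad\text{e.g. } b = 10,\ d = 5:\quad
1 + 10 + 10^{2} + 10^{3} + 10^{4} + 10^{5} = 111{,}111 \text{ nodes}
```

The last level dominates the sum, which is why the bounds for exhaustive algorithms are exponential in $d$ or $m$.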

Exhaustive Search Algorithms

- Breadth-First: expand the shallowest fringe node first
- Uniform Cost: expand first the fringe node with the lowest-cost (or highest-utility) path from the root node
- Depth-First: expand the deepest fringe node first
- Backtracking: depth-first variant with the fringe limited to a single node
- Depth-Limited: depth-first search stopping at depth limit N
- Iterative Deepening: sequence of depth-limited searches at increasing depth limits
- Bi-Directional:
  - Parallel search from the initial state and from the goal state
  - Solution found when the two paths under construction intersect

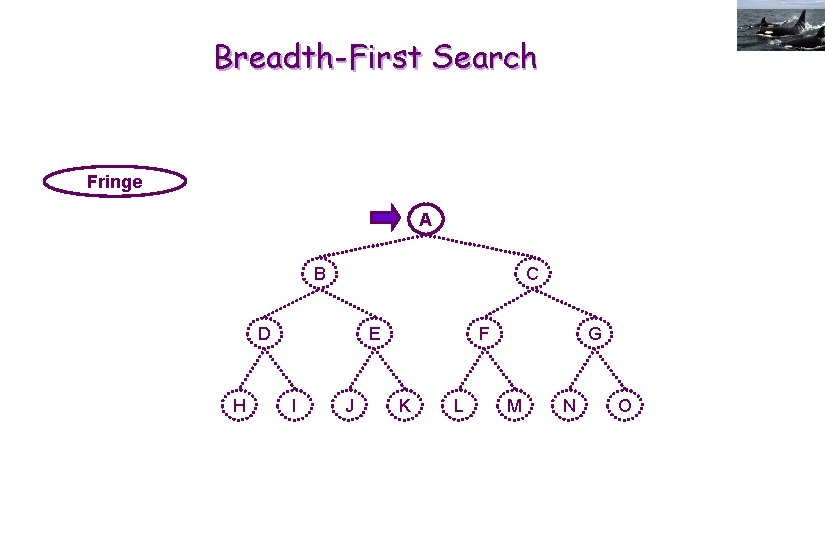

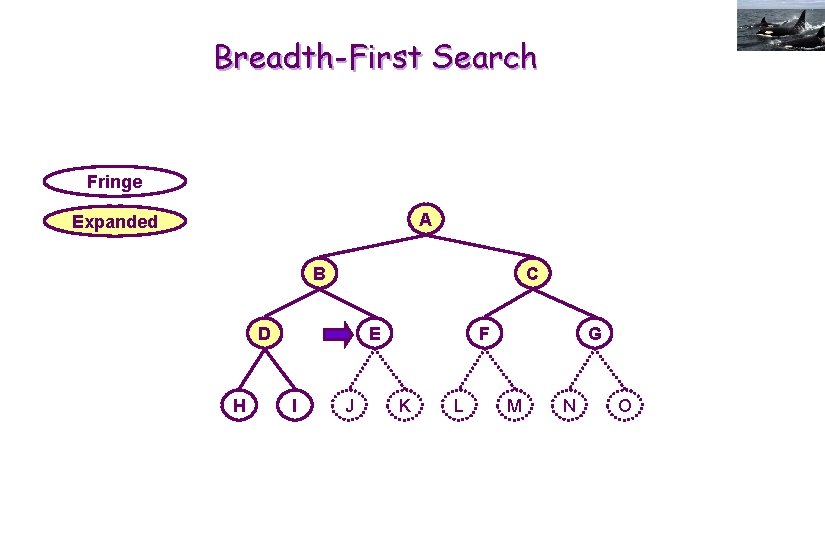

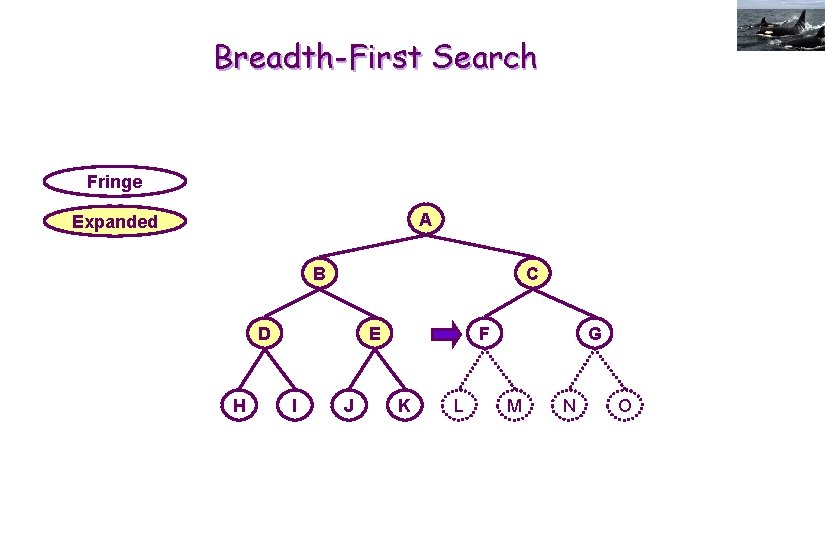

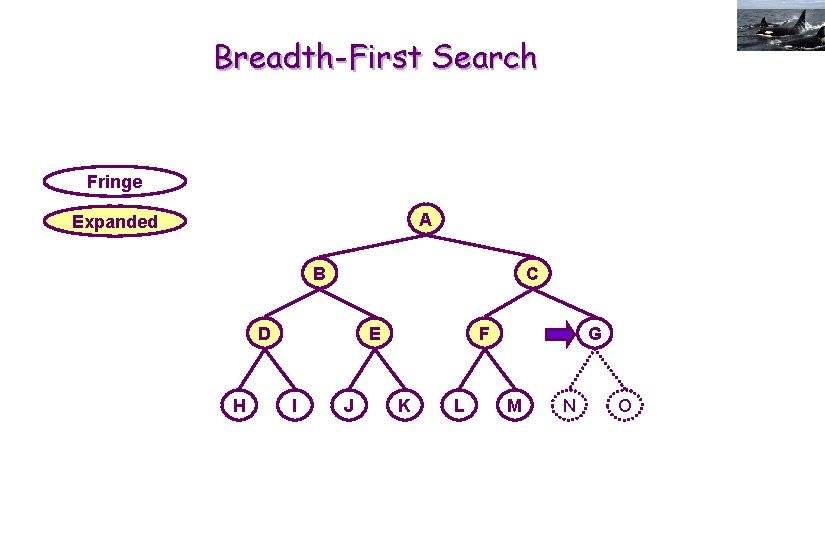

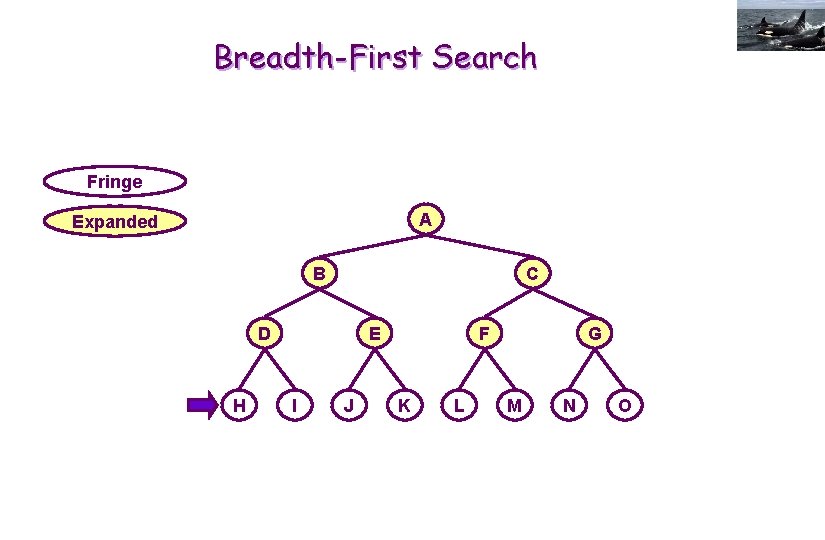

Breadth-First Search

[Figure: breadth-first search on a tree with nodes A to O, shown step by step with the fringe and the expanded nodes marked]

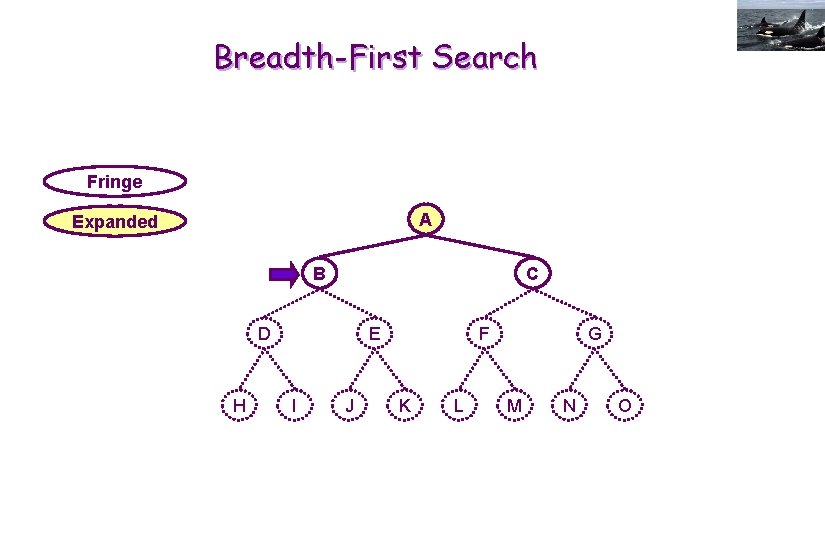

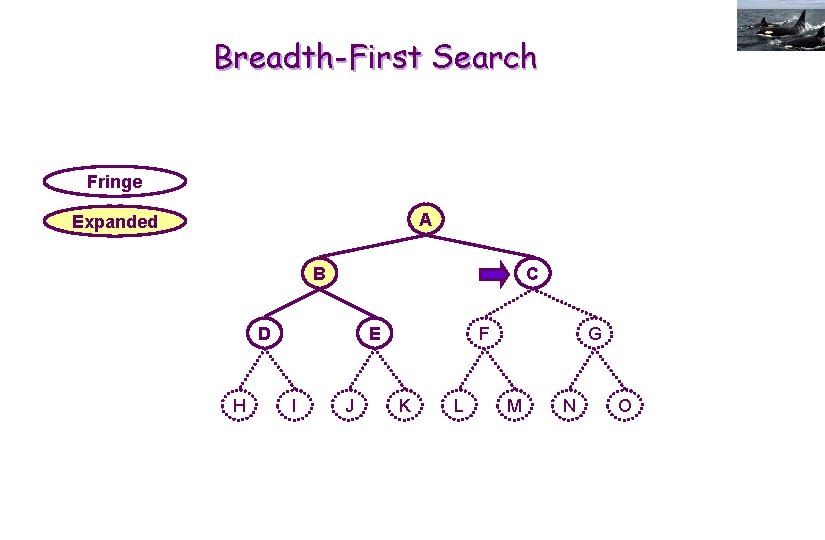

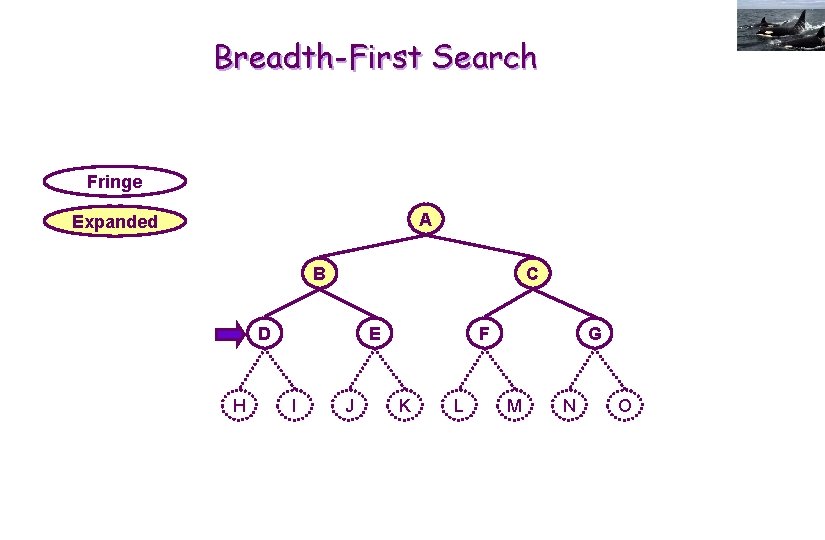


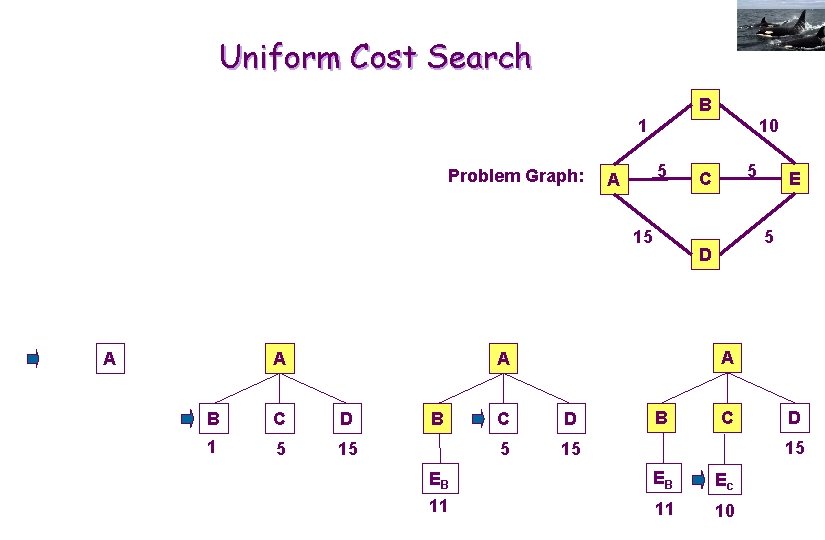
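Breadth-first search amounts to managing the fringe as a first-in-first-out queue. The sketch below assumes that the tree in the figures is the complete binary tree over the labels A to O (A has children B and C, and so on); that layout and the names `TREE` and `breadth_first_search` are assumptions.

```python
from collections import deque

# Assumed layout of the example tree: a complete binary tree over A..O.
TREE = {
    "A": ["B", "C"], "B": ["D", "E"], "C": ["F", "G"],
    "D": ["H", "I"], "E": ["J", "K"], "F": ["L", "M"], "G": ["N", "O"],
}

def breadth_first_search(root, goal, children):
    """Return (path to goal, nodes expanded in order); path is None on failure."""
    fringe = deque([[root]])          # FIFO queue of paths
    expanded = []
    while fringe:
        path = fringe.popleft()       # shallowest node first
        node = path[-1]
        if node == goal:
            return path, expanded
        expanded.append(node)
        for child in children.get(node, []):
            fringe.append(path + [child])
    return None, expanded

bfs_path, bfs_expanded = breadth_first_search("A", "F", TREE)
```

Every node of depth 0 and 1, and the depth-2 nodes to the left of F, are expanded before the goal is reached, which is the level-by-level behavior shown in the figure.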

Uniform Cost Search

[Figure: problem graph over nodes A to E with weighted edges, and the corresponding uniform-cost search tree expanded step by step]

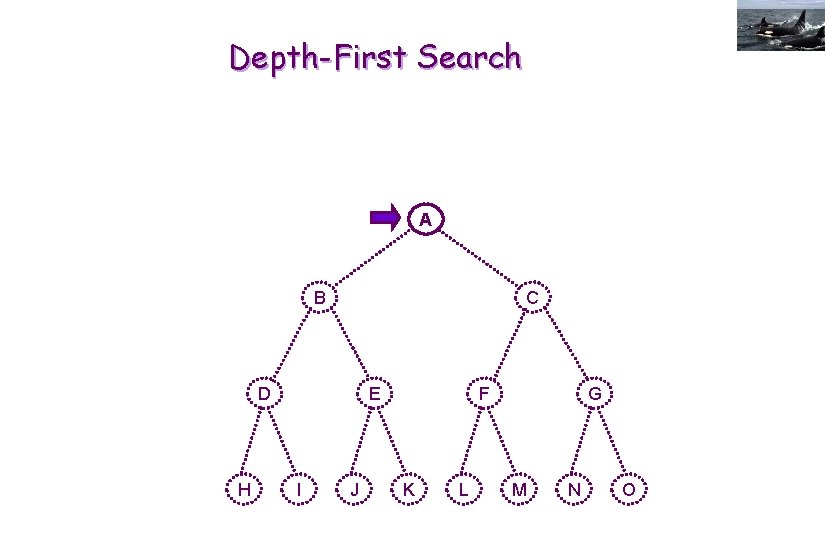

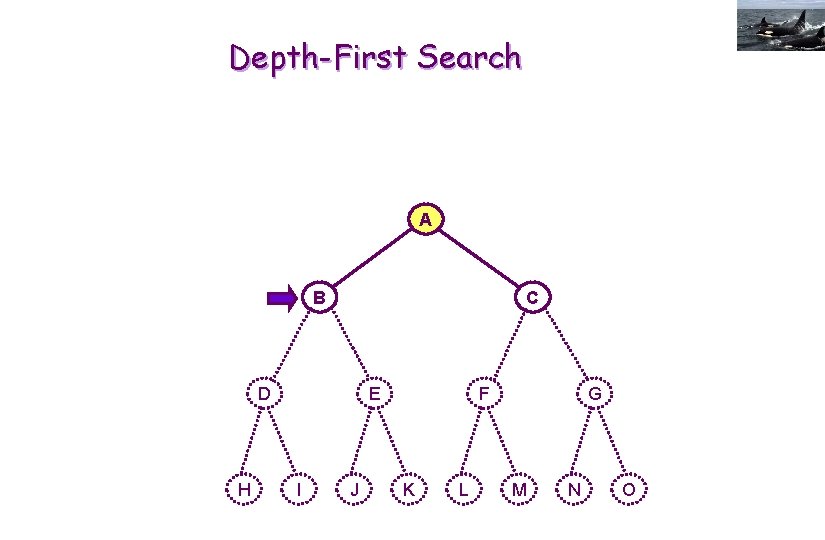

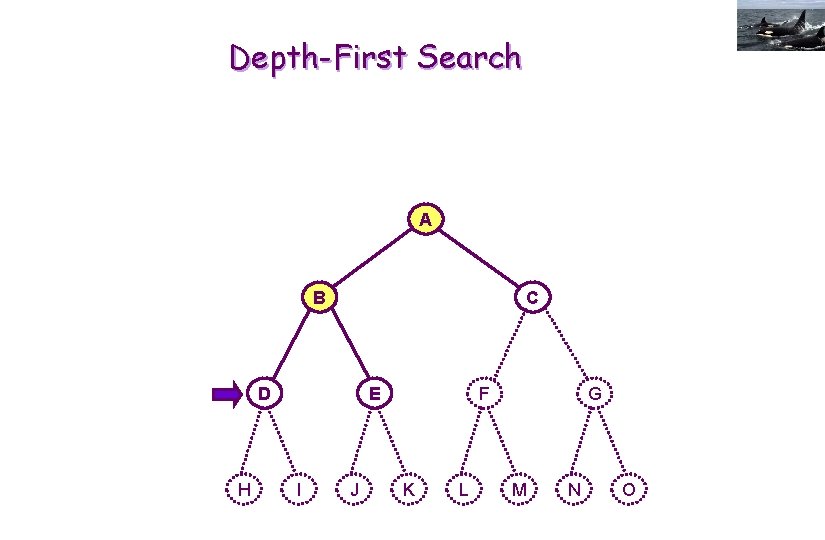

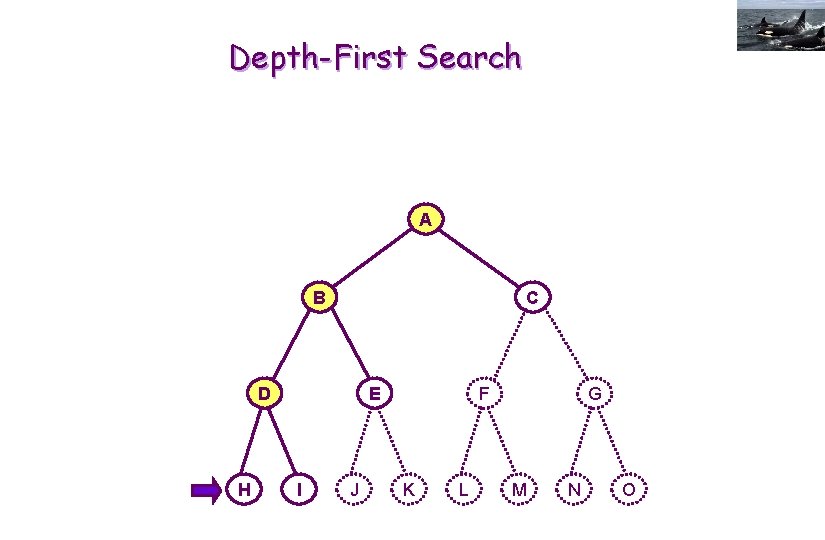

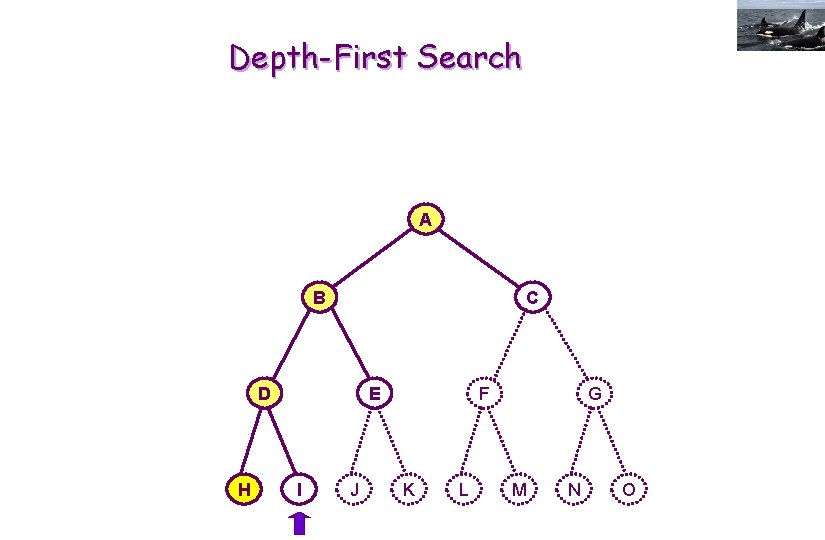

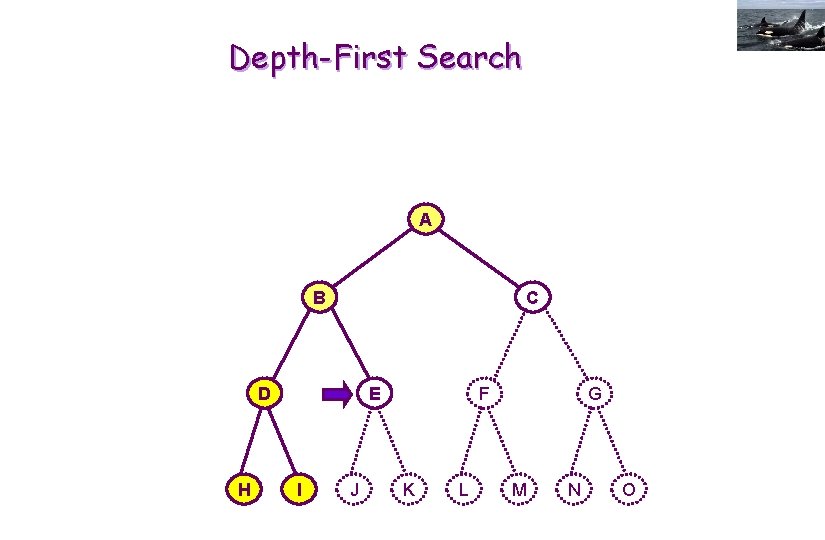

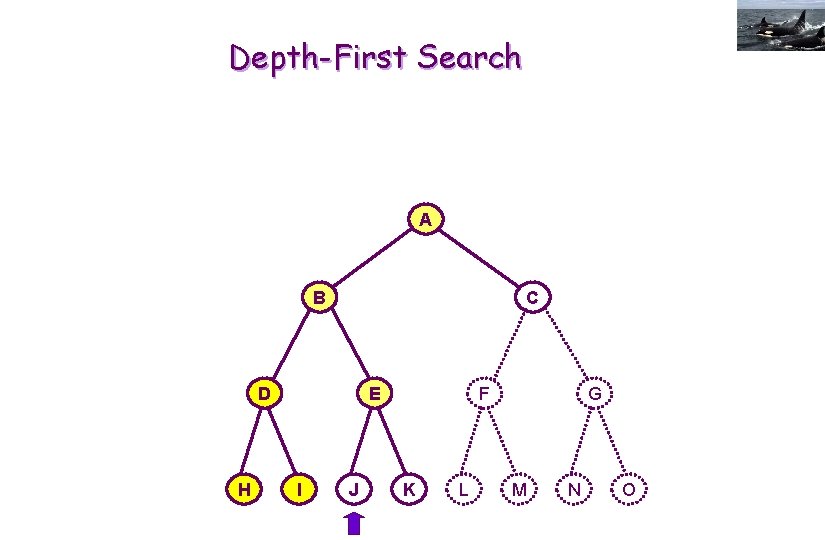

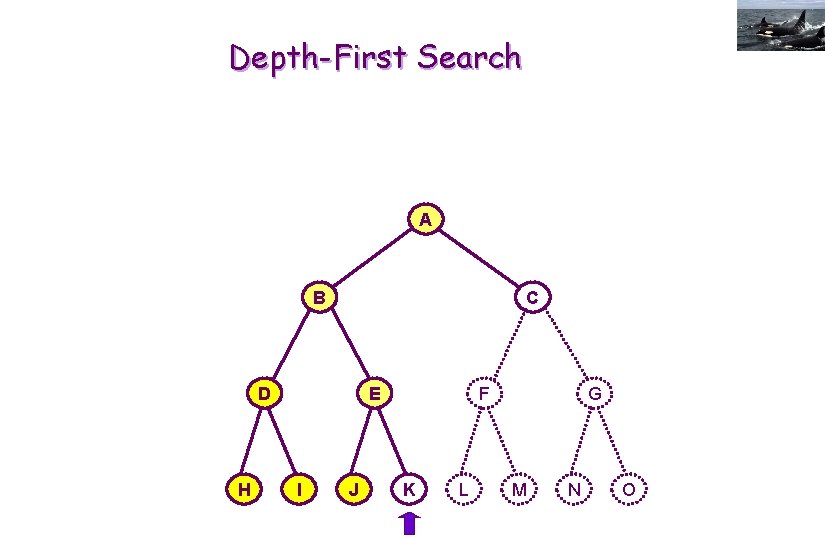

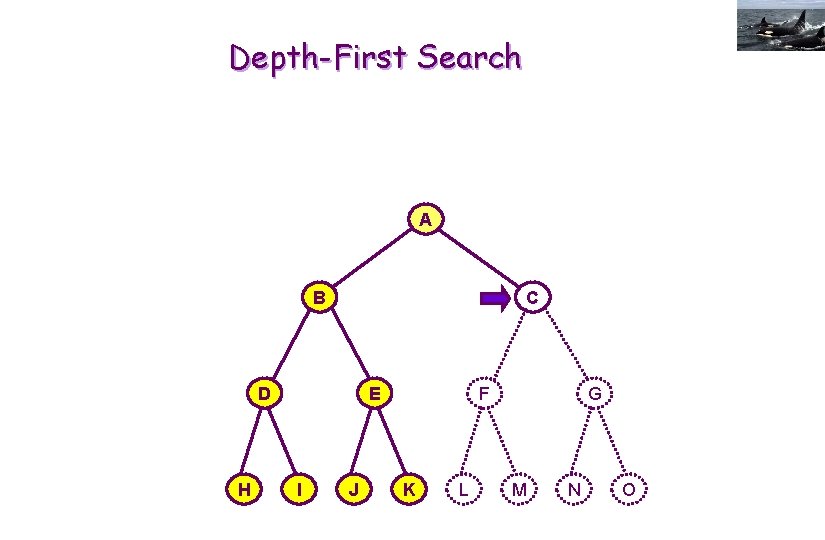

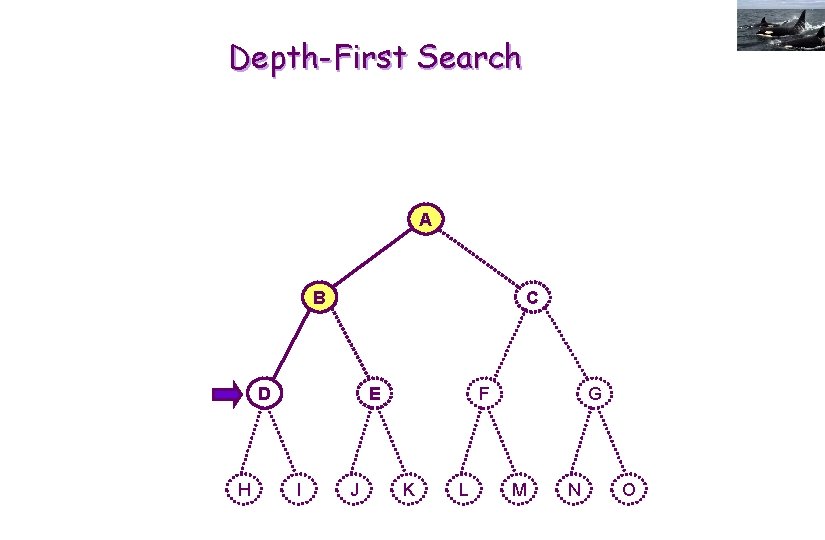
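Uniform cost search can be sketched by keeping the fringe in a priority queue ordered by path cost. The weighted graph below is an assumption chosen for illustration (edge costs A-B 1, A-C 5, A-D 15, B-E 10, C-E 5, D-E 5); it is not claimed to reproduce the figure exactly.

```python
import heapq

# Assumed weighted graph: GRAPH[node][successor] = step cost.
GRAPH = {
    "A": {"B": 1, "C": 5, "D": 15},
    "B": {"E": 10},
    "C": {"E": 5},
    "D": {"E": 5},
    "E": {},
}

def uniform_cost_search(start, goal, graph):
    """Return (cost, path) of a lowest-cost path, or None if there is none."""
    fringe = [(0, [start])]                       # priority queue keyed on path cost
    while fringe:
        cost, path = heapq.heappop(fringe)        # cheapest path so far
        node = path[-1]
        if node == goal:                          # test on expansion, not on generation
            return cost, path
        for nxt, step in graph[node].items():
            heapq.heappush(fringe, (cost + step, path + [nxt]))
    return None

ucs_result = uniform_cost_search("A", "E", GRAPH)
```

The goal E is first generated through B at cost 11, but it is only returned once it is the cheapest entry in the fringe, by which time the cost-10 path through C has been found.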

Depth-First Search

[Figure: depth-first search on a tree with nodes A to O, shown step by step]


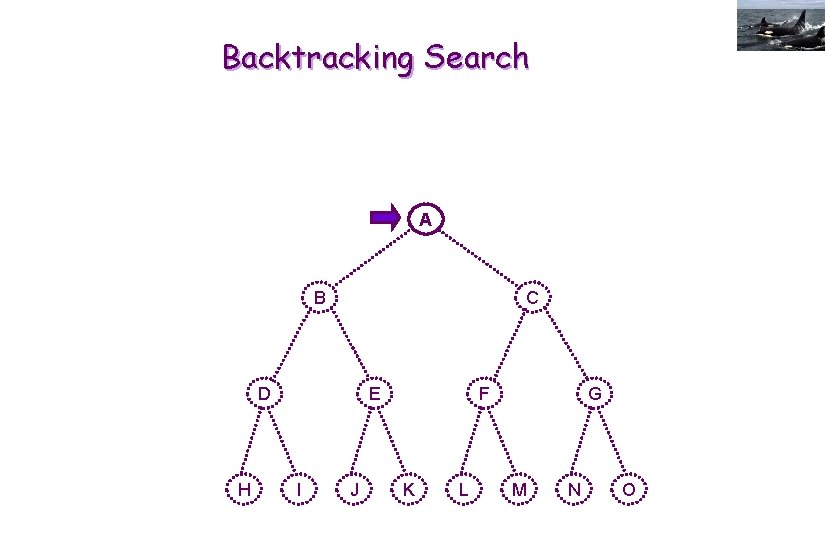

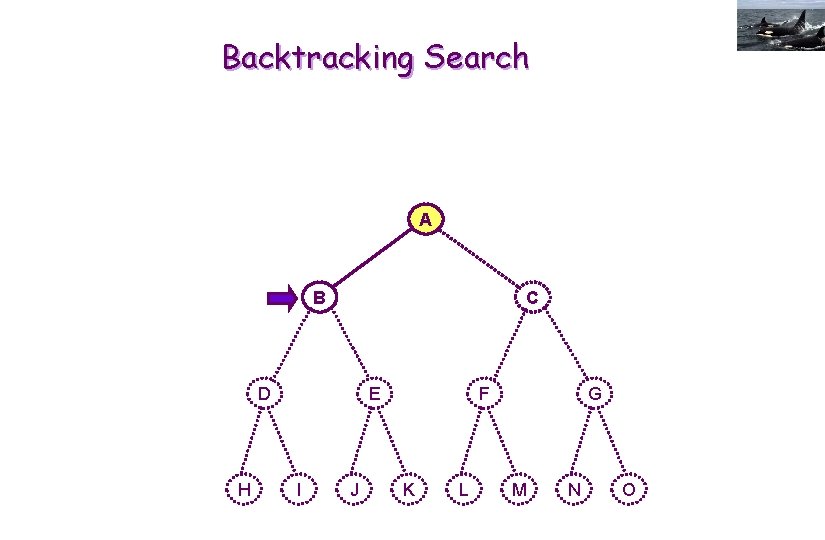

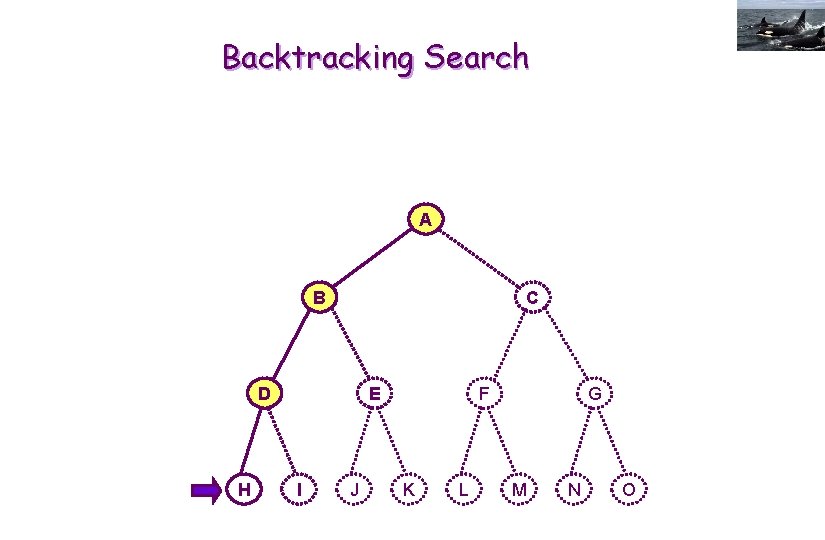

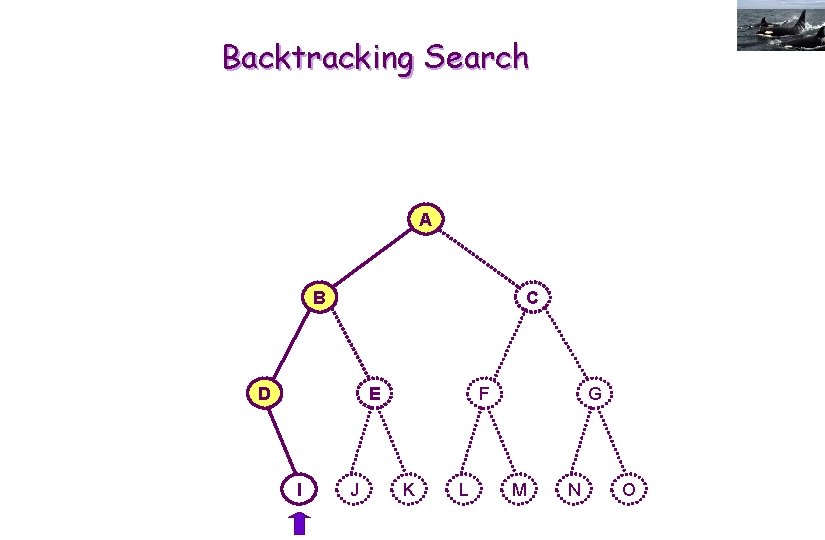

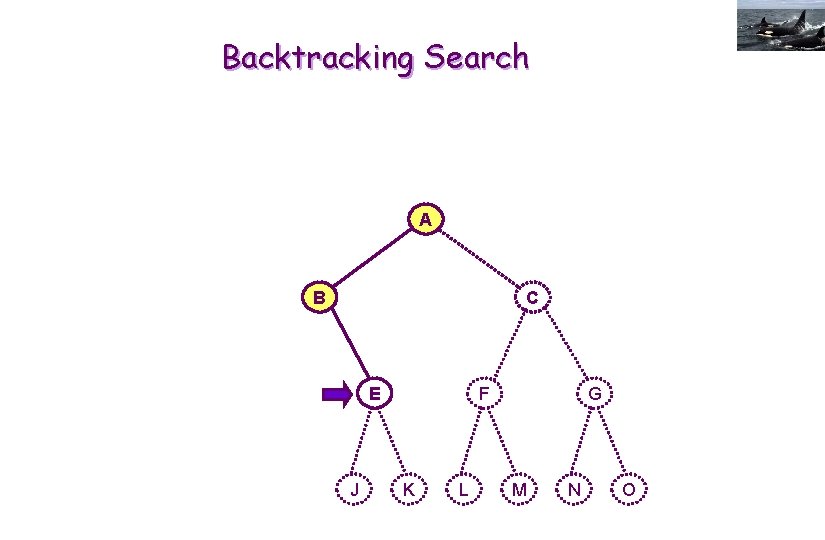

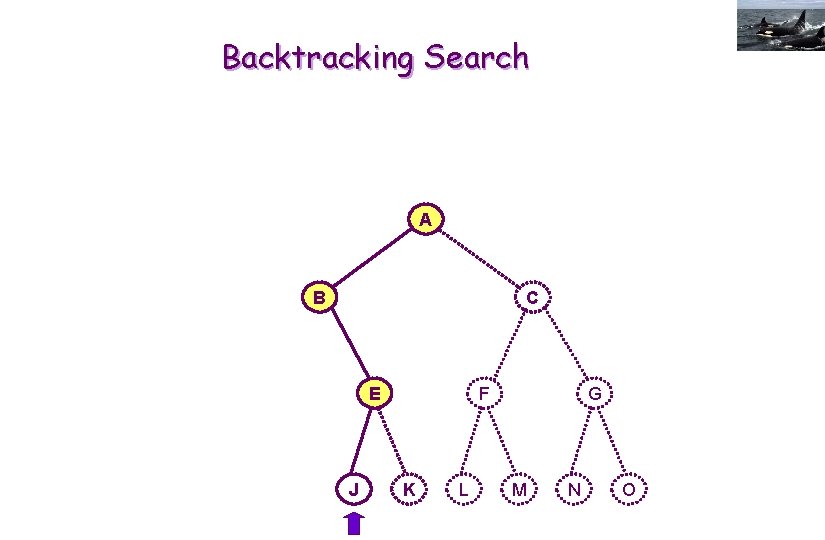
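Depth-first search uses the fringe as a last-in-first-out stack. As before, the complete binary tree over A to O is an assumed layout, and the names are hypothetical.

```python
# Assumed layout of the example tree: a complete binary tree over A..O.
TREE = {
    "A": ["B", "C"], "B": ["D", "E"], "C": ["F", "G"],
    "D": ["H", "I"], "E": ["J", "K"], "F": ["L", "M"], "G": ["N", "O"],
}

def depth_first_search(root, goal, children):
    """Return (path to goal, nodes expanded in order); path is None on failure."""
    fringe = [[root]]                 # LIFO stack of paths
    expanded = []
    while fringe:
        path = fringe.pop()           # deepest node first
        node = path[-1]
        if node == goal:
            return path, expanded
        expanded.append(node)
        # Push children in reverse so the leftmost child is expanded first.
        for child in reversed(children.get(node, [])):
            fringe.append(path + [child])
    return None, expanded

dfs_path, dfs_expanded = depth_first_search("A", "M", TREE)
```

The whole left subtree under B is exhausted before the search ever looks at C, even though the goal M lies under C.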

Backtracking Search

[Figure: backtracking search on a tree with nodes A to O, shown step by step; nodes that have been fully explored are dropped from memory as the search backtracks]

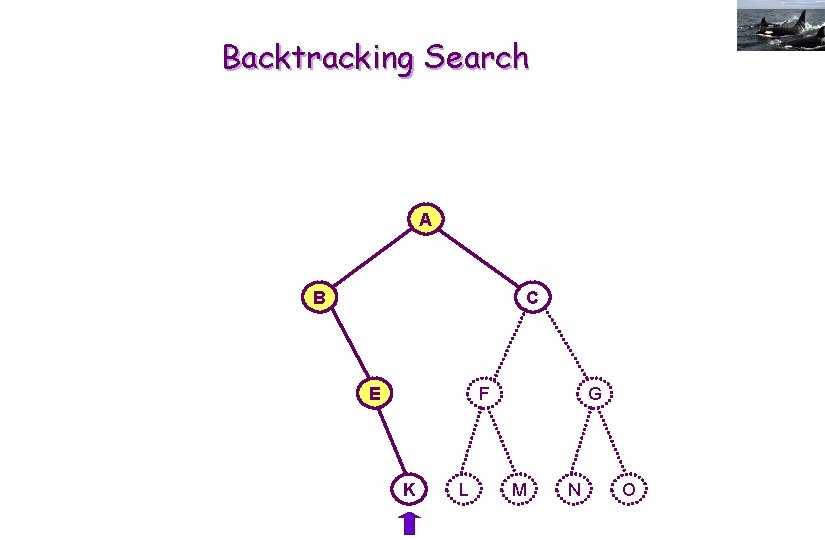


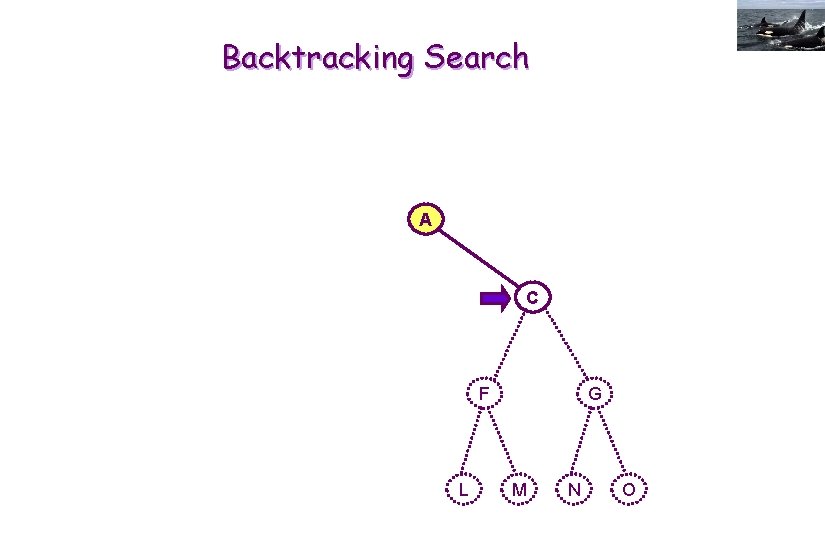


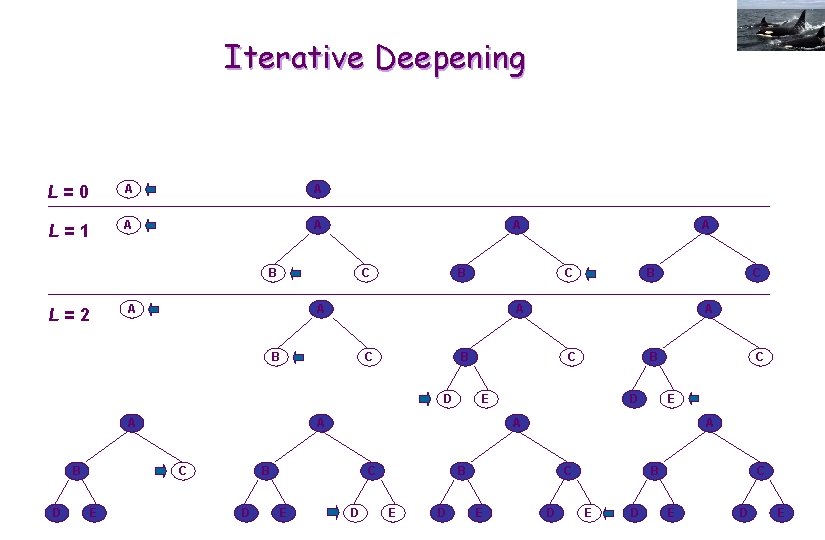
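Backtracking can be sketched as a recursive depth-first search that keeps only the current path and tries one successor at a time, undoing the last step when a branch fails. The tree layout (complete binary tree over A to O) and the function name are assumptions.

```python
# Assumed layout of the example tree: a complete binary tree over A..O.
TREE = {
    "A": ["B", "C"], "B": ["D", "E"], "C": ["F", "G"],
    "D": ["H", "I"], "E": ["J", "K"], "F": ["L", "M"], "G": ["N", "O"],
}

def backtracking_search(node, goal, children, path=None):
    """Return the path to `goal`, or None; only the current path is stored."""
    if path is None:
        path = []
    path.append(node)                       # extend the single current path
    if node == goal:
        return list(path)
    for child in children.get(node, []):    # successors are tried one at a time
        found = backtracking_search(child, goal, children, path)
        if found is not None:
            return found
    path.pop()                              # dead end: undo the step and backtrack
    return None

bt_path = backtracking_search("A", "M", TREE)
```

No sibling nodes are kept waiting in a fringe, so memory grows with the depth of the current path rather than with depth times branching factor.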

Iterative Deepening

[Figure: iterative deepening on a tree with nodes A to E, showing the successive depth-limited searches for depth limits L = 0, L = 1 and L = 2]

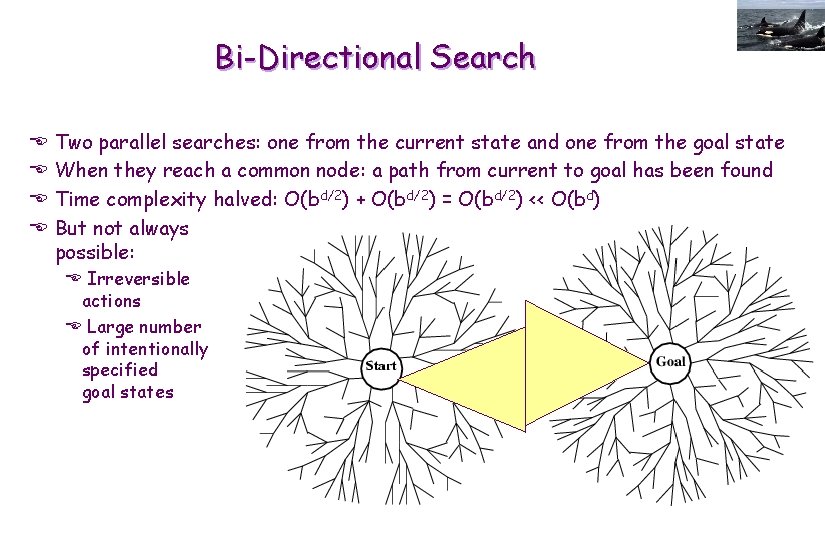
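Iterative deepening can be sketched as a loop of depth-limited searches with an increasing limit. The five-node tree below (A with children B and C, B with children D and E) is an assumed layout, and `max_depth` is a hypothetical safety cap that is not part of the method itself.

```python
# Assumed small tree over the labels A..E.
SMALL_TREE = {"A": ["B", "C"], "B": ["D", "E"]}

def depth_limited_search(node, goal, children, limit):
    """Depth-first search that never goes more than `limit` arcs below `node`."""
    if node == goal:
        return [node]
    if limit == 0:
        return None
    for child in children.get(node, []):
        rest = depth_limited_search(child, goal, children, limit - 1)
        if rest is not None:
            return [node] + rest
    return None

def iterative_deepening_search(root, goal, children, max_depth=50):
    """Return (path, depth limit at which it was found), or (None, None)."""
    for limit in range(max_depth + 1):      # L = 0, 1, 2, ...
        path = depth_limited_search(root, goal, children, limit)
        if path is not None:
            return path, limit
    return None, None

ids_path, ids_limit = iterative_deepening_search("A", "E", SMALL_TREE)
```

The shallow levels are regenerated at every iteration; since most nodes of a tree sit in its deepest level, this repetition changes the total work only by a constant factor.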

Bi-Directional Search

- Two parallel searches: one from the current state and one from the goal state
- When they reach a common node, a path from current to goal has been found
- The exponent of the time complexity is halved: $O(b^{d/2}) + O(b^{d/2}) = O(b^{d/2}) \ll O(b^d)$
- But not always possible:
  - Irreversible actions
  - Large number of intensionally specified goal states

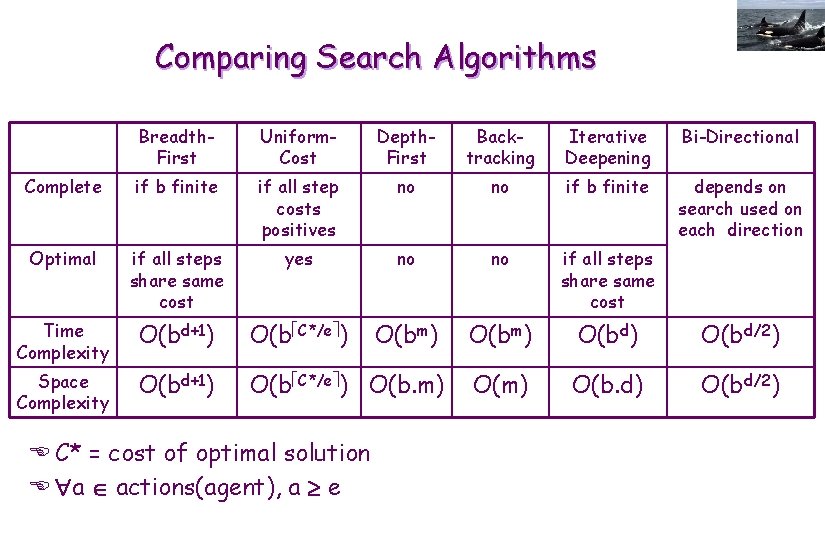
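A minimal sketch of bi-directional search, assuming reversible actions (an undirected graph), a single explicit goal state, and breadth-first search in both directions, expanded one layer at a time. The function names and the line-shaped example graph are hypothetical.

```python
from collections import deque

def bidirectional_search(start, goal, neighbors):
    """Return a path from start to goal, or None if the two searches never meet."""
    if start == goal:
        return [start]
    parents_f, parents_b = {start: None}, {goal: None}
    frontier_f, frontier_b = deque([start]), deque([goal])

    def expand_layer(frontier, parents, other_parents):
        """Expand one full layer; return a node known to both searches, if any."""
        for _ in range(len(frontier)):
            node = frontier.popleft()
            for nxt in neighbors(node):
                if nxt not in parents:
                    parents[nxt] = node
                    if nxt in other_parents:
                        return nxt
                    frontier.append(nxt)
        return None

    def join(meet):
        """Stitch the two half-paths together at the meeting node."""
        left, node = [], meet
        while node is not None:
            left.append(node)
            node = parents_f[node]
        right, node = [], parents_b[meet]
        while node is not None:
            right.append(node)
            node = parents_b[node]
        return left[::-1] + right

    while frontier_f and frontier_b:
        meet = expand_layer(frontier_f, parents_f, parents_b)
        if meet is None:
            meet = expand_layer(frontier_b, parents_b, parents_f)
        if meet is not None:
            return join(meet)
    return None

# Hypothetical example: states 0..6 on a line, each linked to its neighbors.
line = lambda n: [m for m in (n - 1, n + 1) if 0 <= m <= 6]
bidir_path = bidirectional_search(0, 6, line)
```

Each search only has to reach about half the solution depth, which is where the $O(b^{d/2})$ bound comes from. The backward search needs predecessors of a state, which is why irreversible actions rule the method out.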

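Comparing Search Algorithms

| | Breadth-First | Uniform-Cost | Depth-First | Backtracking | Iterative Deepening | Bi-Directional |
|---|---|---|---|---|---|---|
| Complete | if $b$ finite | if all step costs positive | no | no | if $b$ finite | depends on the search used in each direction |
| Optimal | if all steps share the same cost | yes | no | no | if all steps share the same cost | depends on the search used in each direction |
| Time complexity | $O(b^{d+1})$ | $O(b^{C^*/\epsilon})$ | $O(b^m)$ | $O(b^m)$ | $O(b^d)$ | $O(b^{d/2})$ |
| Space complexity | $O(b^{d+1})$ | $O(b^{C^*/\epsilon})$ | $O(b \cdot m)$ | $O(m)$ | $O(b \cdot d)$ | $O(b^{d/2})$ |

- $C^*$ = cost of the optimal solution
- $\epsilon$ = lower bound on the cost of a single action: $\forall a \in \text{actions(agent)},\ \text{cost}(a) \geq \epsilon$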

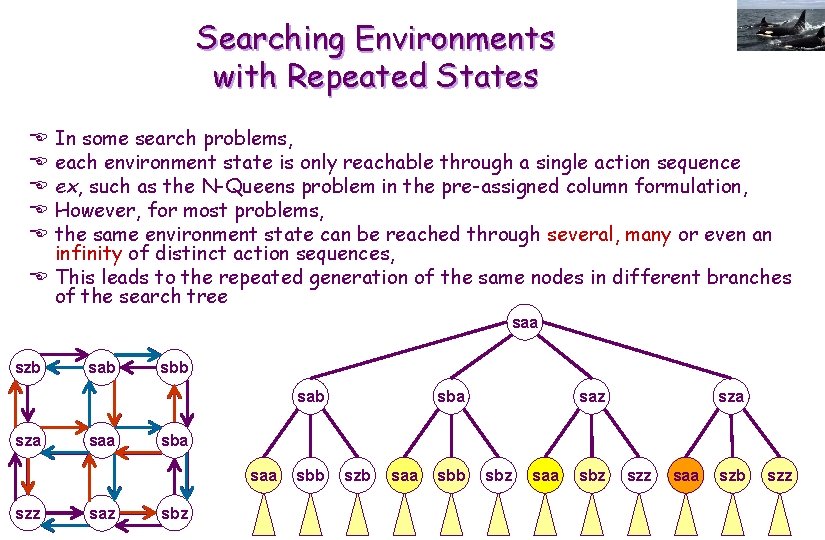

Searching Environments with Repeated States

- In some search problems, each environment state is reachable through only a single action sequence, e.g., the N-Queens problem in the pre-assigned column formulation
- However, for most problems, the same environment state can be reached through several, many, or even infinitely many distinct action sequences
- This leads to the repeated generation of the same nodes in different branches of the search tree

[Figure: search tree in which the same states (saa, sab, sba, ...) recur in several branches]

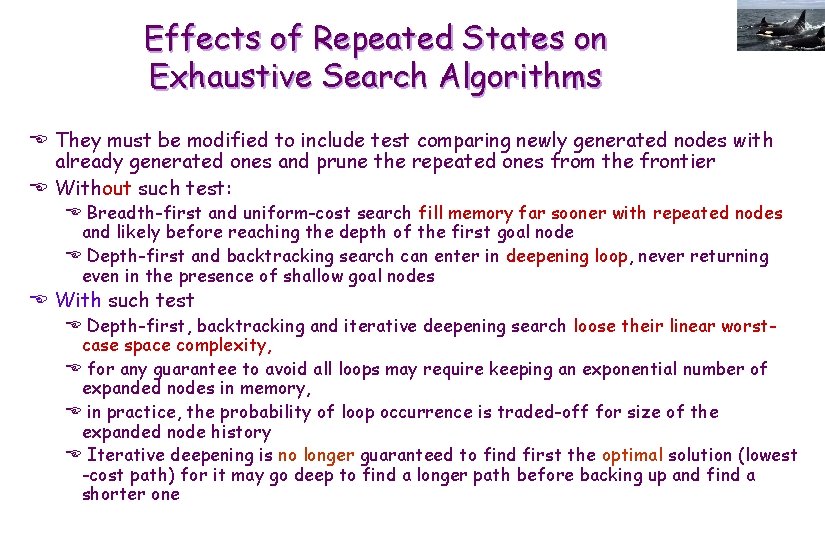

Effects of Repeated States on Exhaustive Search Algorithms

- The algorithms must be modified to include a test that compares newly generated nodes with already generated ones and prunes the repeated ones from the fringe
- Without such a test:
  - Breadth-first and uniform-cost search fill memory far sooner with repeated nodes, likely before reaching the depth of the first goal node
  - Depth-first and backtracking search can enter an ever-deepening loop, never returning even in the presence of shallow goal nodes
- With such a test:
  - Depth-first, backtracking and iterative deepening search lose their linear worst-case space complexity,
  - for any guarantee to avoid all loops may require keeping an exponential number of expanded nodes in memory;
  - in practice, the probability of loop occurrence is traded off against the size of the expanded-node history
  - Iterative deepening is no longer guaranteed to find the optimal solution (lowest-cost path) first, for it may go deep and find a longer path before backing up and finding a shorter one
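The repeated-state test can be sketched by adding a set of already expanded states to depth-first search. The three-node cyclic graph and the names below are assumptions made for illustration; without the `explored` set, the same loop would bounce between A and B forever.

```python
# Hypothetical graph with a cycle: A and B lead back to each other.
CYCLIC = {"A": ["B"], "B": ["A", "C"], "C": []}

def depth_first_graph_search(root, goal, children):
    """Depth-first search that prunes states it has already expanded."""
    fringe = [[root]]
    explored = set()                        # history of expanded states
    while fringe:
        path = fringe.pop()
        node = path[-1]
        if node == goal:
            return path
        if node in explored:
            continue                        # repeated state: prune
        explored.add(node)
        for child in reversed(children.get(node, [])):
            if child not in explored:
                fringe.append(path + [child])
    return None

graph_path = depth_first_graph_search("A", "C", CYCLIC)
```

The price is visible in the data structure: `explored` can grow to the size of the reachable state space, so the linear space bound of plain depth-first search no longer holds.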

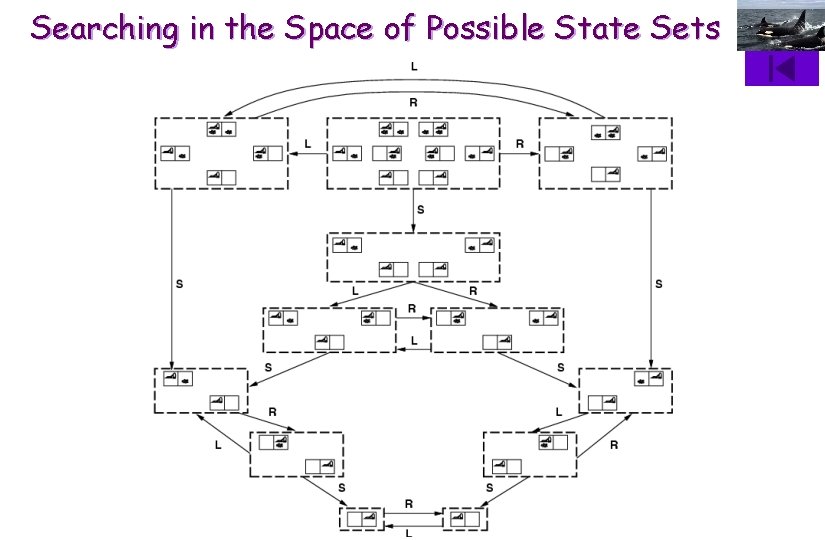

In which Environments can a State Space Searching Agent (S3A) Act Successfully?

- Fully observable? Partially observable? Sensorless?
  - An S3A can act successfully in an environment that is partially observable or even sensorless, provided that the environment is deterministic, small and of low diversity, and that the agent possesses an action model
  - Instead of searching in the space of states, it can search in the space of possible state sets (beliefs) to know which actions are potentially available
- Deterministic or stochastic?
  - An S3A can act successfully in a stochastic environment provided that it is small and at least partially observable
  - Instead of searching offline in the space of states, it can search offline in the space of possible state sets (beliefs) resulting from an action with stochastic effects
  - It can also search online and generate action programs or conditional plans instead of mere action sequences, e.g., [suck, right, {if right.dirty then suck}]
- Discrete or continuous?
  - An S3A using a global search algorithm can only act successfully in a discrete environment, for continuous variables imply an infinite branching factor

In which Environments can a State Space Searching Agent (S3A) Act Successfully?

- Episodic or non-episodic?
  - An S3A is only necessary in a non-episodic environment, which requires choosing action sequences where the choice of one action conditions the range of actions subsequently available
- Static? Sequential? Concurrent synchronous or concurrent asynchronous?
  - An S3A can only act successfully in a static or sequential environment, for there is no point in searching for goal-achieving or optimal action sequences in an environment that changes due to factors outside the control of the agent
- Small or large?
  - An S3A can only act successfully in a medium-sized environment if it is deterministic and at least partially observable, or in a small environment if it is stochastic or sensorless
- Lowly or highly diverse?
  - An S3A can only act successfully in a low-diversity environment, for diversity directly affects the branching factor of the problem search tree

Searching in the Space of Possible State Sets
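Searching over state sets can be sketched for a sensorless agent in a two-square vacuum world. Everything about the encoding is an assumption made for illustration: a state is (location, left dirty, right dirty), actions are deterministic, a belief is the set of states the agent might be in, and the goal is reached when every state in the belief is clean.

```python
from collections import deque
from itertools import product

ACTIONS = ("left", "right", "suck")

def result(state, action):
    """Deterministic action model for one state (assumed encoding)."""
    loc, dirt_l, dirt_r = state
    if action == "left":
        return ("L", dirt_l, dirt_r)
    if action == "right":
        return ("R", dirt_l, dirt_r)
    # "suck" cleans the square the agent is standing on
    return (loc, False, dirt_r) if loc == "L" else (loc, dirt_l, False)

def predict(belief, action):
    """Successor operation lifted from states to state sets."""
    return frozenset(result(s, action) for s in belief)

def is_goal(belief):
    """Goal holds only if it holds in every state the agent might be in."""
    return all(not dirt_l and not dirt_r for _, dirt_l, dirt_r in belief)

def sensorless_plan(initial_belief):
    """Breadth-first search in belief space with a repeated-belief test."""
    fringe = deque([(initial_belief, [])])
    explored = {initial_belief}
    while fringe:
        belief, plan = fringe.popleft()
        if is_goal(belief):
            return plan
        for action in ACTIONS:
            nxt = predict(belief, action)
            if nxt not in explored:
                explored.add(nxt)
                fringe.append((nxt, plan + [action]))
    return None

# With no sensors, the agent starts out believing any of the 8 states is possible.
ALL_STATES = frozenset(product("LR", (True, False), (True, False)))
plan = sensorless_plan(ALL_STATES)
```

The search algorithm itself is unchanged; only the successor operation and the goal test are lifted to sets, which is why the approach stays practical only for small environments.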

- Slides: 51