Problem Diagnosis Distributed Problem Diagnosis Sherlock Xtrace Troubleshooting

Problem Diagnosis
• Distributed Problem Diagnosis
• Sherlock
• X-Trace

Troubleshooting Networked Systems
• Hard to develop, debug, deploy, and troubleshoot
• No standard way to integrate debugging, monitoring, and diagnostics

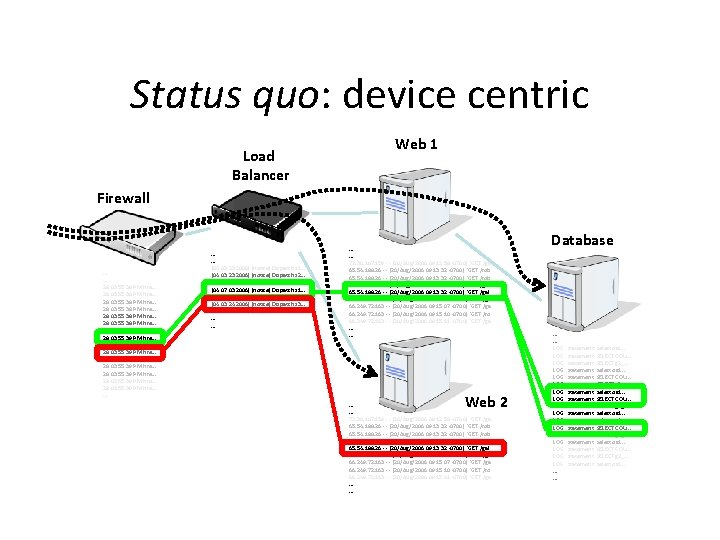

Status quo: device centric
[Figure: separate log excerpts from the Load Balancer, Firewall, Web 1, Web 2, and Database, each in its own format with its own timestamps, including dispatch notices, a "[crit] Server s 3 down" entry, HTTP GET access-log lines, and SQL statement lines.]

Status quo: device centric
• Determining paths:
 – Join logs on time and ad-hoc identifiers
• Relies on
 – well-synchronized clocks
 – extensive application knowledge
• Requires all operations to be logged to guarantee complete paths
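The reliance on synchronized clocks can be illustrated with a minimal sketch. The function `join_on_time`, the tolerance value, and the log entries are all hypothetical, made up for this illustration; they are not taken from any real tool or from the logs shown on the previous slide.

```python
def join_on_time(upstream, downstream, tolerance_ms):
    """Pair each upstream entry with downstream entries that were logged
    at most `tolerance_ms` milliseconds later (a naive time-based join)."""
    pairs = []
    for t_up, msg_up in upstream:
        for t_down, msg_down in downstream:
            if 0 <= t_down - t_up <= tolerance_ms:
                pairs.append((msg_up, msg_down))
    return pairs


# Hypothetical log entries: (timestamp in ms, message).
load_balancer = [(10_000, "Dispatch s1"), (20_000, "Dispatch s2")]
web_server = [(10_200, "GET /a"), (20_100, "GET /b")]

# Same web log, but the web server's clock runs one second behind.
skewed_web_server = [(t - 1_000, msg) for t, msg in web_server]

print(join_on_time(load_balancer, web_server, 500))
print(join_on_time(load_balancer, skewed_web_server, 500))
```

With aligned clocks the join recovers both request paths; with one second of skew it recovers none, even though nothing about the requests changed.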

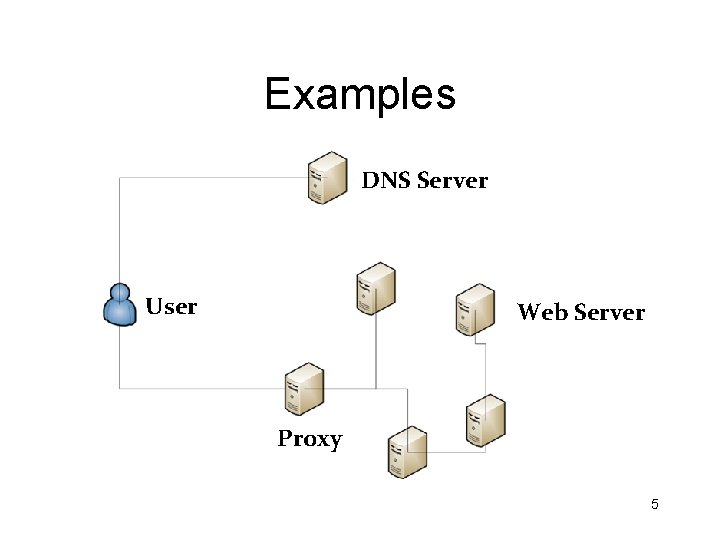

Examples
[Figure: User, DNS Server, Web Server, Proxy]

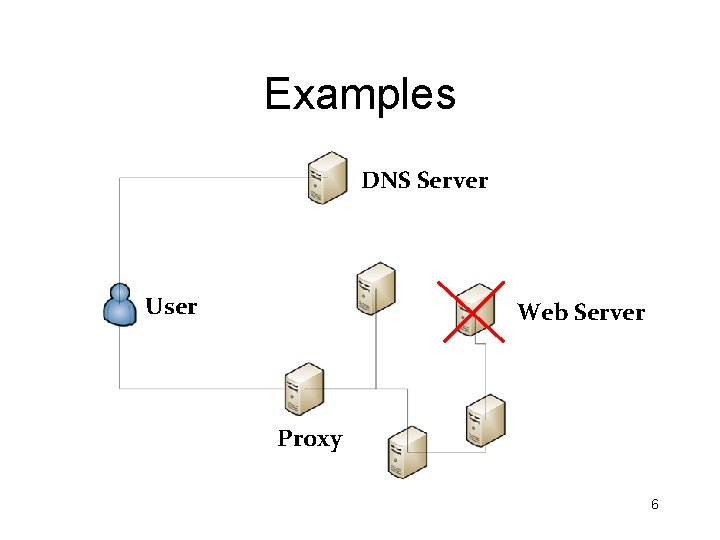


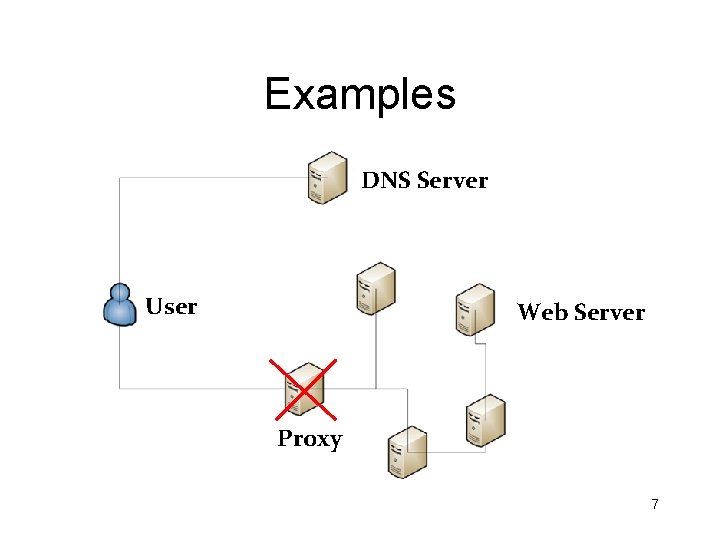


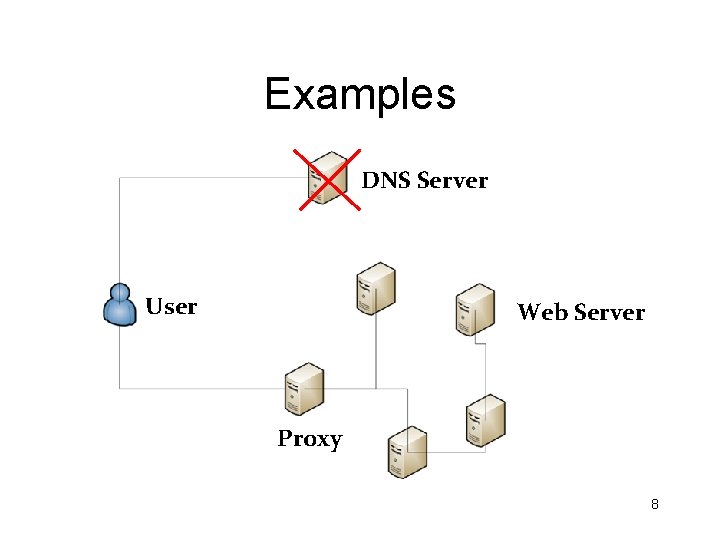


Approaches to Diagnosis
• Passively learn the relationships
 – Infer problems as deviations from the norm
• Actively instrument the stack to learn relationships
 – Infer problems as deviations from the norm

Sherlock – Diagnosing Problems in the Enterprise Srikanth Kandula

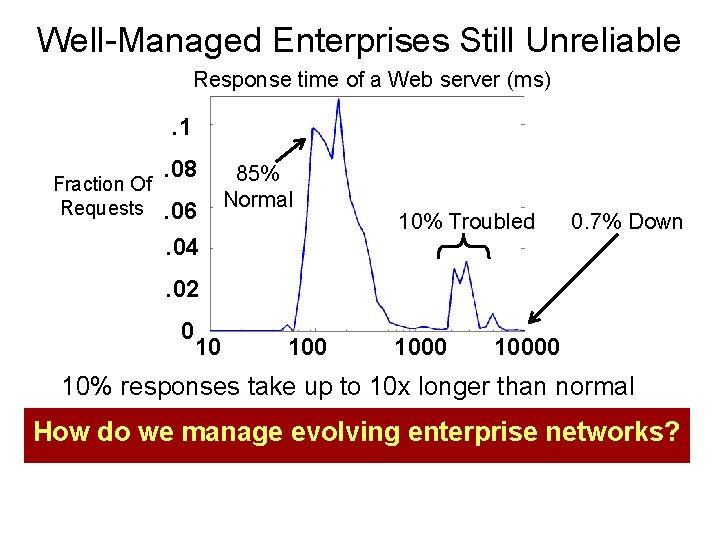

Well-Managed Enterprises Still Unreliable
[Figure: response time of a Web server (ms, axis from 10 to 10000) against fraction of requests; regions labeled 85% Normal, 10% Troubled, 0.7% Down.]
10% of responses take up to 10x longer than normal.
How do we manage evolving enterprise networks?

Sherlock
Instead of looking at the nitty-gritty of individual components, use an end-to-end approach that focuses on user problems.

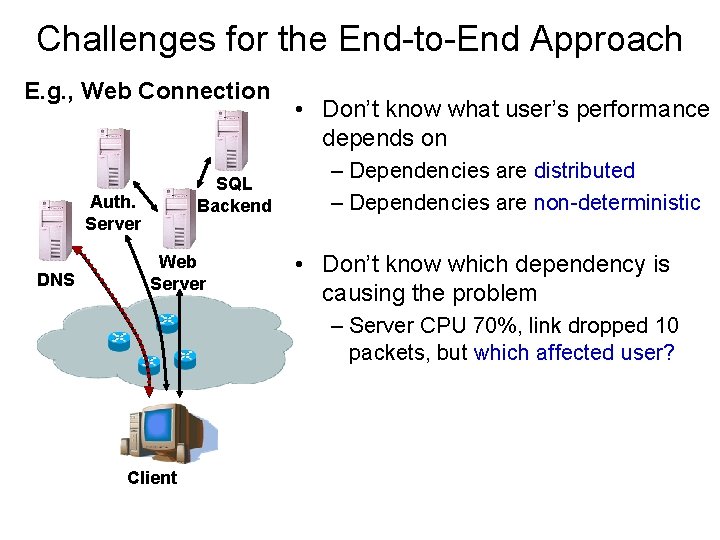


Challenges for the End-to-End Approach
• Don’t know what a user’s performance depends on
 – Dependencies are distributed
 – Dependencies are non-deterministic
• Don’t know which dependency is causing the problem
 – Server CPU at 70%, link dropped 10 packets, but which affected the user?
[Figure: e.g., a Web connection from the Client involves DNS, Auth. Server, Web Server, and SQL Backend.]

Sherlock’s Contributions
• Passively infers dependencies from logs
• Builds a unified dependency graph incorporating network, server, and application dependencies
• Diagnoses user problems in the enterprise
• Deployed in a part of the Microsoft enterprise

Sherlock’s Architecture

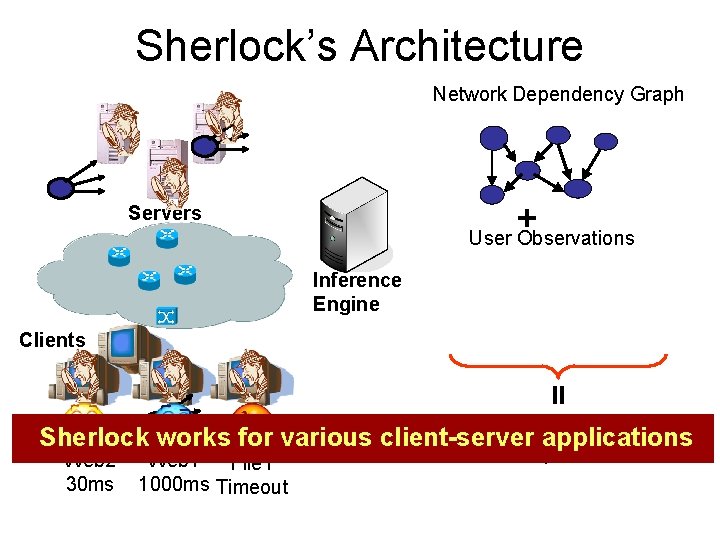

[Figure: Network Dependency Graph + User Observations (from Servers and Clients) feed the Inference Engine, which outputs a List of Troubled Components. Example user observations shown: response times of 30 ms and 1000 ms and a timeout, across Web 1, Web 2, and File 1.]
Sherlock works for various client-server applications.

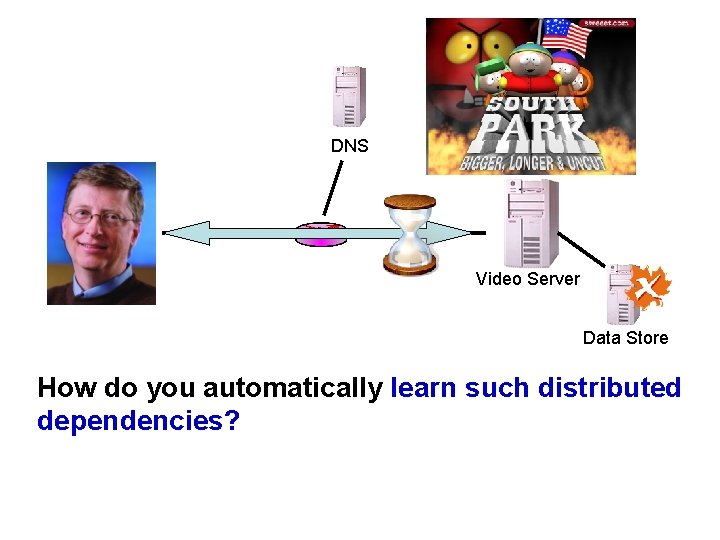

[Figure labels: DNS, Video Server, Data Store]
How do you automatically learn such distributed dependencies?

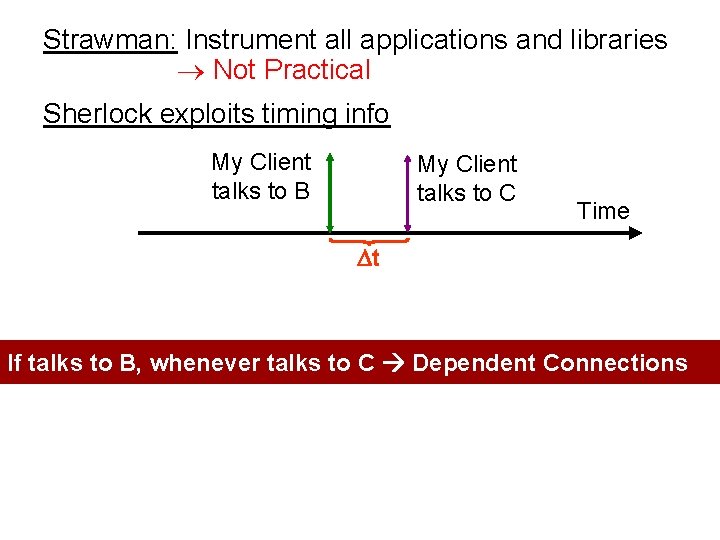

Strawman: instrument all applications and libraries. Not practical.
Sherlock exploits timing information instead.
[Figure: timeline on which "My Client talks to B" and "My Client talks to C" occur within time t of each other.]
Dependent connections: the client talks to B whenever it talks to C.

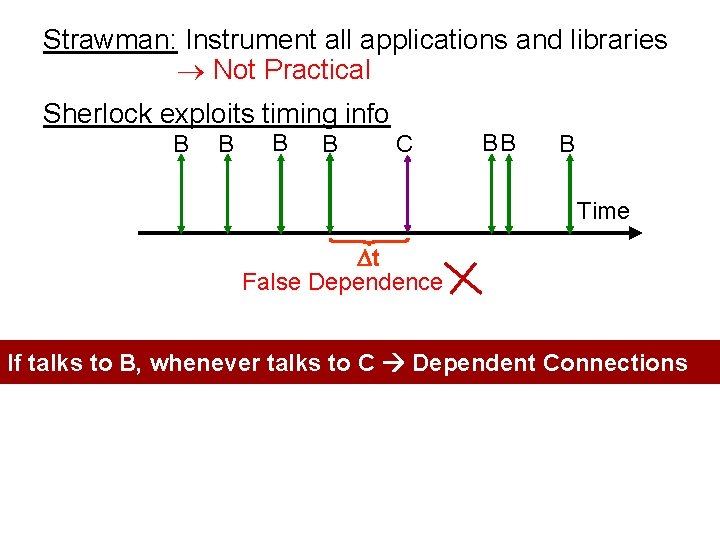

False dependence
[Figure: timeline B B C B B B. The client talks to B so often that an access to B falls within time t of the access to C by coincidence.]

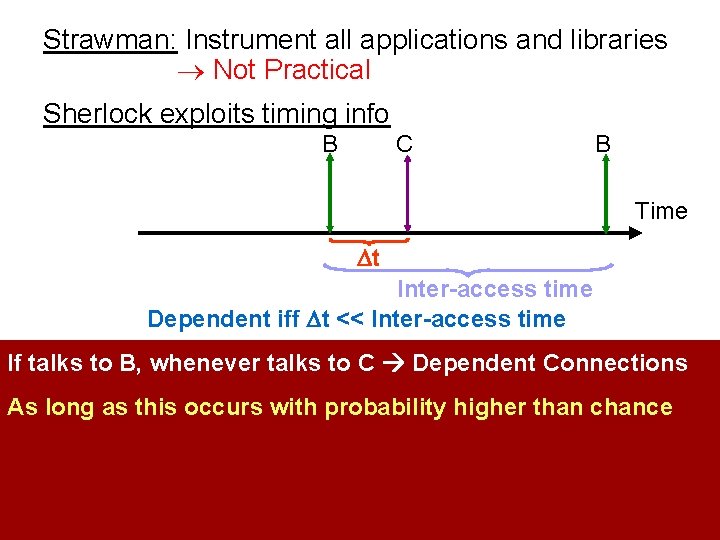

Inter-access time
[Figure: timeline B C B, with t the gap between the paired accesses and the inter-access time the gap between successive accesses to the same service.]
Connections are dependent iff t << inter-access time, as long as the co-occurrence happens with probability higher than chance.
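The timing rule can be sketched as follows. This is a simplified illustration, not Sherlock's actual algorithm: the function names, the `margin` threshold, and the way "chance" is estimated (the fraction of the trace covered by windows around accesses to the other service) are all assumptions made for this example.

```python
from bisect import bisect_left


def cooccurrence_probability(events, target, other, window):
    """Fraction of accesses to `target` that had an access to `other`
    in the `window` time units just before them."""
    other_times = sorted(t for t, s in events if s == other)
    target_times = [t for t, s in events if s == target]
    if not target_times:
        return 0.0
    hits = 0
    for t in target_times:
        i = bisect_left(other_times, t - window)
        if i < len(other_times) and other_times[i] <= t:
            hits += 1
    return hits / len(target_times)


def chance_probability(events, other, window):
    """Rough chance that an arbitrary window contains an access to `other`."""
    times = [t for t, _ in events]
    span = max(times) - min(times)
    if span <= 0:
        return 1.0
    n_other = sum(1 for _, s in events if s == other)
    return min(1.0, n_other * window / span)


def is_dependent(events, target, other, window, margin=3.0):
    """Call the connections dependent only if they co-occur clearly more
    often than chance; `margin` is an arbitrary illustrative threshold."""
    observed = cooccurrence_probability(events, target, other, window)
    return observed > margin * chance_probability(events, other, window)


# Hypothetical trace, timestamps in ms: every Video access follows a DNS
# lookup by 50 ms, while the unrelated service B is contacted every 700 ms.
video = [(100_000 * j, "Video") for j in range(1, 10)]
dns = [(t - 50, "DNS") for t, _ in video]
chatty = [(700 * k, "B") for k in range(1429)]
events = sorted(video + dns + chatty)

print(is_dependent(events, "Video", "DNS", window=100))
print(is_dependent(events, "Video", "B", window=100))
```

In this trace B does land inside the window before a Video access a couple of times, but no more often than its high access rate predicts, so it is not reported as a dependency.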

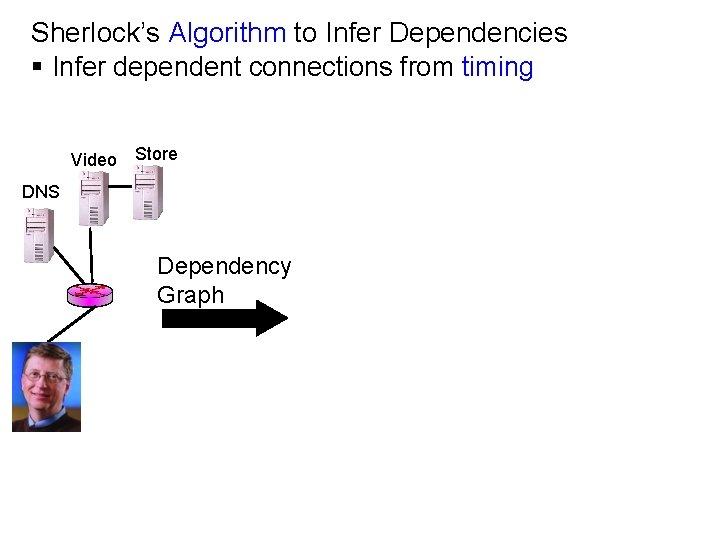

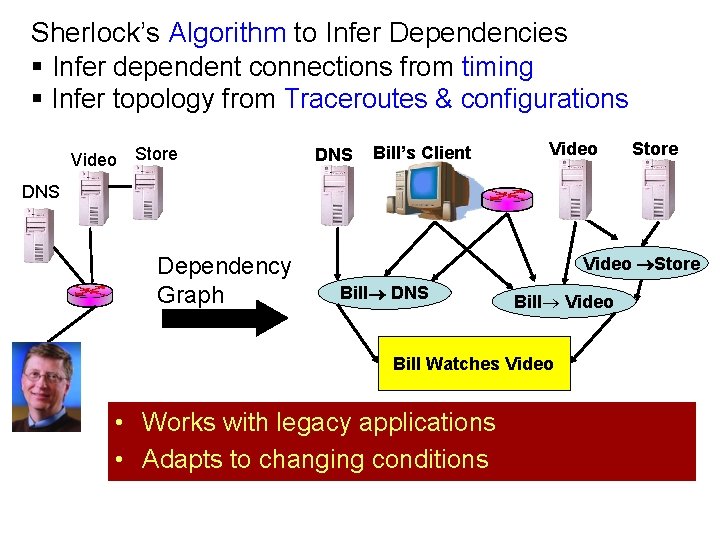


Sherlock’s Algorithm to Infer Dependencies
§ Infer dependent connections from timing
§ Infer topology from traceroutes and configurations
[Figure: dependency graph for "Bill Watches Video"; labels: Bill’s Client, DNS, Video, Store, Bill–DNS, Bill–Video, Video–Store.]
• Works with legacy applications
• Adapts to changing conditions

But hard dependencies are not enough…

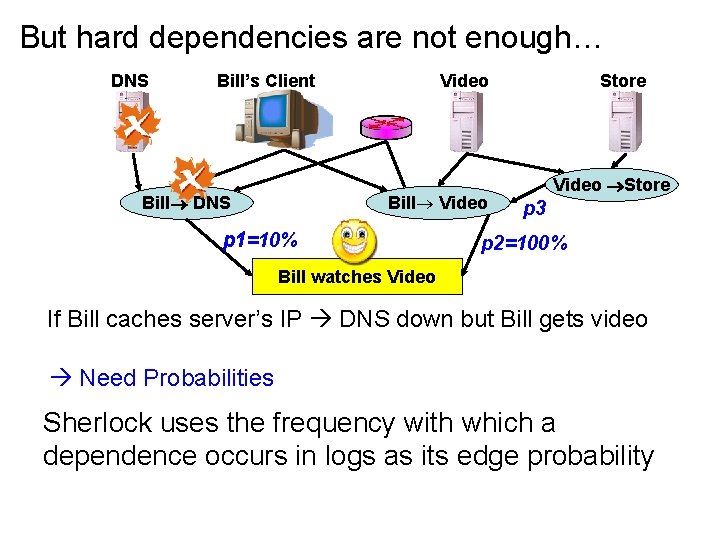

[Figure: dependency graph for "Bill watches Video" (Bill’s Client, DNS, Video, Store) with edge probabilities p1 = 10%, p2 = 100%, and p3.]
If Bill caches the server’s IP, DNS can be down but Bill still gets the video.
Need probabilities: Sherlock uses the frequency with which a dependence occurs in logs as its edge probability.
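A frequency-based edge probability can be sketched in a few lines. The input format (one set of contacted services per user-level access) and the sample data are assumptions for this illustration; the slides do not say how Sherlock represents its logs.

```python
from collections import Counter


def edge_probabilities(accesses):
    """For each service, the fraction of user-level accesses in which it
    was contacted. `accesses` is a list of sets of service names."""
    if not accesses:
        return {}
    counts = Counter()
    for contacted in accesses:
        counts.update(contacted)
    return {service: n / len(accesses) for service, n in counts.items()}


# Hypothetical log: Bill watches a video ten times; the name is usually
# cached, so DNS is contacted in only one of the ten accesses.
bill_watches_video = [{"DNS", "Video"}] + [{"Video"}] * 9

print(edge_probabilities(bill_watches_video))
```

An edge with probability well below 1, like the DNS edge here, captures that a failed DNS server would affect only a small share of Bill's video accesses.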

How do we use the dependency graph to diagnose user problems?

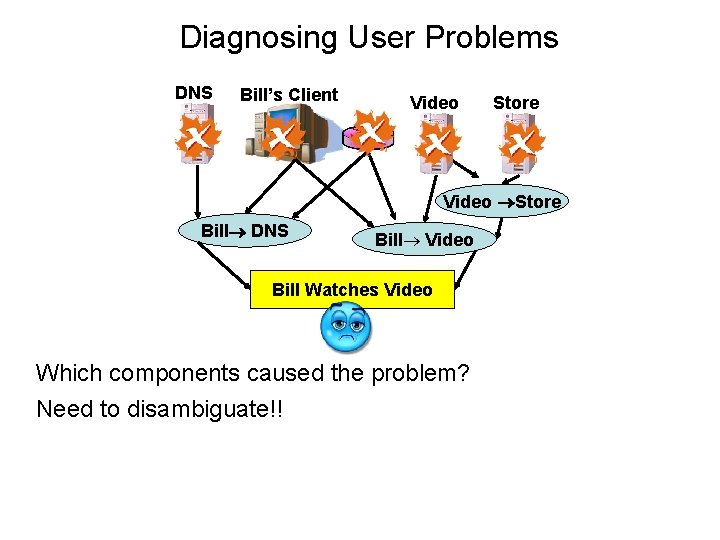

Diagnosing User Problems
[Figure: dependency graph for "Bill Watches Video"; labels: Bill’s Client, DNS, Video, Store, Bill–DNS, Bill–Video.]
Which components caused the problem? Need to disambiguate!

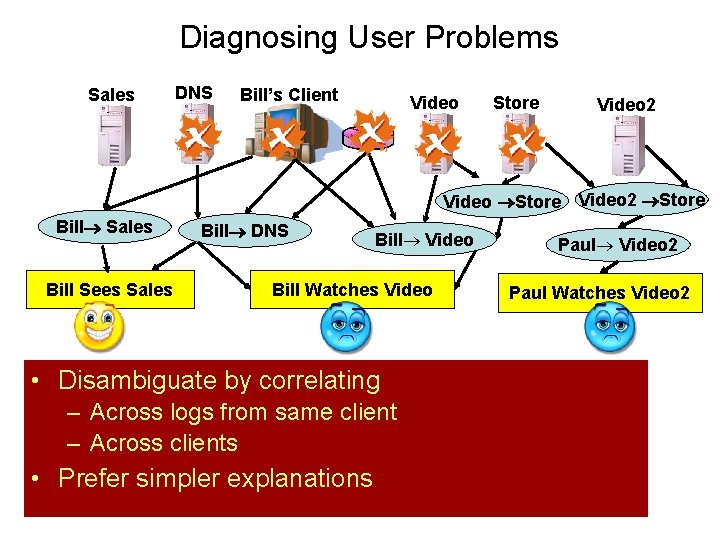

[Figure: the graph extended with two more observations, "Bill Sees Sales" (Sales, Bill–Sales) and "Paul Watches Video 2" (Video 2, Store, Paul–Video 2), alongside "Bill Watches Video".]
Which components caused the problem? Use correlation to disambiguate!
• Disambiguate by correlating
 – Across logs from the same client
 – Across clients
• Prefer simpler explanations
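Correlating observations and preferring simpler explanations can be sketched as a small search. This is a deterministic simplification that ignores edge probabilities, so it is not Sherlock's inference engine. The function `explain`, the dependency sets, and the troubled/fine status of each observation are assumptions chosen for this example.

```python
from itertools import combinations


def explain(observations, components, max_faults=2):
    """Return the smallest fault sets consistent with every observation.

    `observations` is a list of (depends_on, troubled) pairs. A fault set
    is consistent when each troubled observation depends on at least one
    failed component and no healthy observation depends on any."""
    for size in range(max_faults + 1):
        matches = []
        for faults in combinations(sorted(components), size):
            failed = set(faults)
            if all(bool(failed & deps) == troubled
                   for deps, troubled in observations):
                matches.append(failed)
        if matches:  # stop at the simplest explanation that fits
            return matches
    return []


# Hypothetical statuses: both video accesses are troubled, Sales is fine.
observations = [
    ({"DNS", "Video", "Store"}, True),   # Bill watches Video
    ({"DNS", "Sales"}, False),           # Bill sees Sales
    ({"Video 2", "Store"}, True),        # Paul watches Video 2
]
components = {"DNS", "Sales", "Store", "Video", "Video 2"}

print(explain(observations, components))
```

Bill's healthy Sales access clears DNS, and Paul's troubled access points at the one component shared by both video paths, so a single fault in Store explains everything.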

Will Correlation Scale?

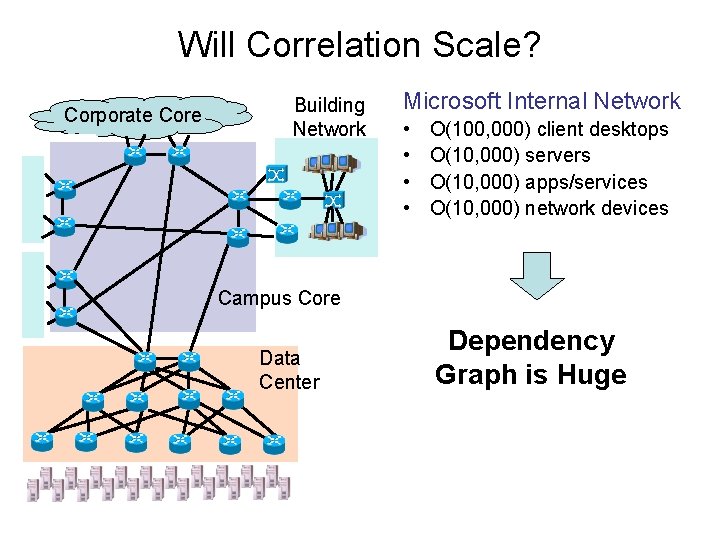

[Figure: Microsoft internal network, with Corporate Core, Campus Core, Building Network, and Data Center.]
• O(100,000) client desktops
• O(10,000) servers
• O(10,000) apps/services
• O(10,000) network devices
The dependency graph is huge.

Can we evaluate all combinations of component failures?
The number of fault combinations is exponential! Impossible to compute!

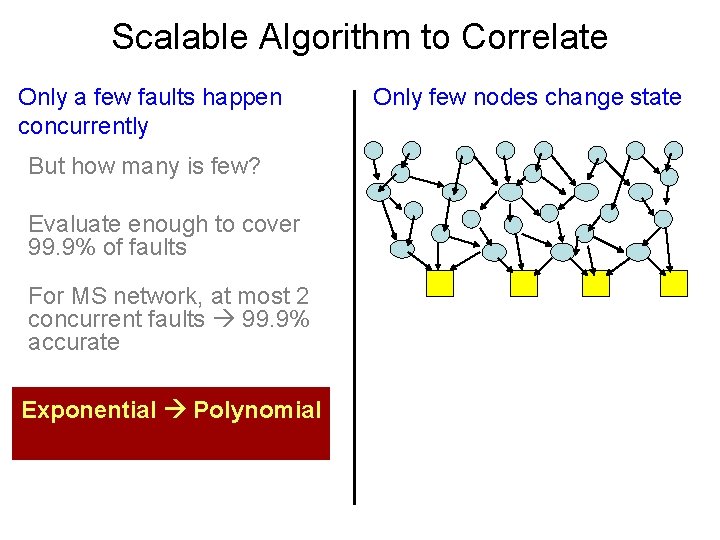

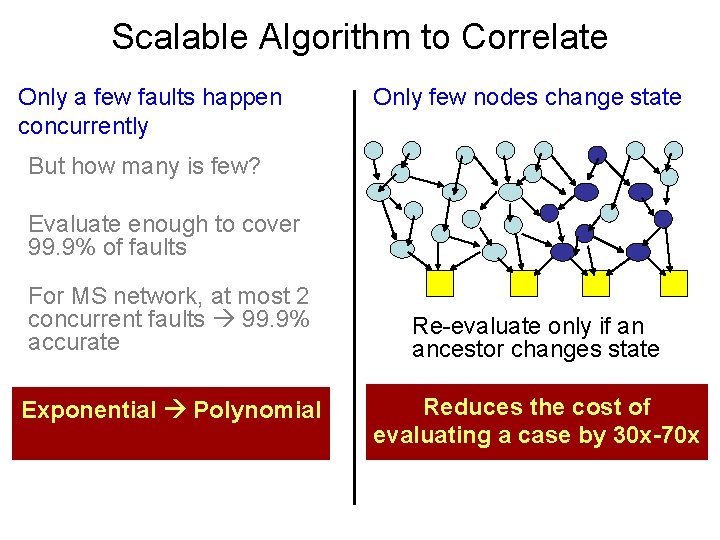



Scalable Algorithm to Correlate
• Only a few faults happen concurrently
 – But how many is few? Evaluate enough to cover 99.9% of faults
 – For the MS network, at most 2 concurrent faults is 99.9% accurate
 – Search goes from exponential to polynomial
• Only a few nodes change state
 – Re-evaluate only if an ancestor changes state
 – Reduces the cost of evaluating a case by 30x to 70x
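The saving from bounding the number of concurrent faults is easy to check numerically. The sketch below uses the 358 failable components reported in the results section of these slides; the helper name `fault_sets_up_to` is made up for this illustration.

```python
from math import comb


def fault_sets_up_to(n_components, max_faults):
    """Number of candidate fault sets with at most `max_faults` failed
    components, counting the empty (no fault) set."""
    return sum(comb(n_components, k) for k in range(max_faults + 1))


n = 358  # components that can fail, as reported in the results

print(fault_sets_up_to(n, 2))   # polynomial: O(n^2) candidates
print(2 ** n > 10 ** 100)       # all subsets: far beyond enumeration
```

Capping the search at two concurrent faults leaves tens of thousands of cases to score instead of 2^358.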

Results

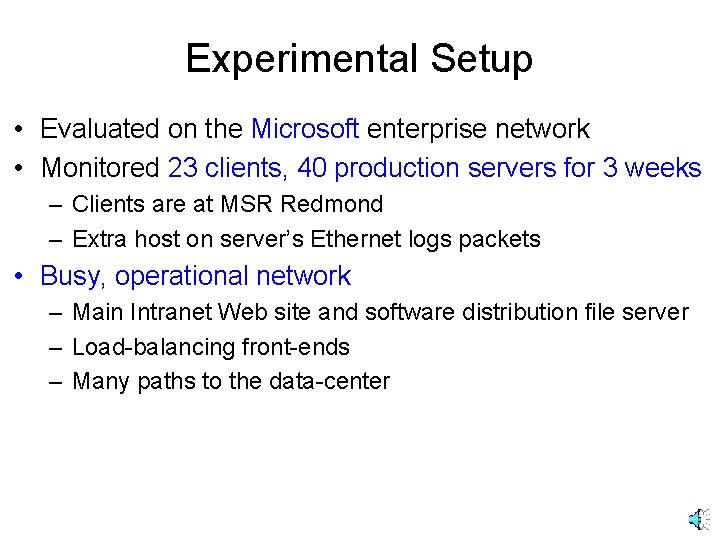

Experimental Setup
• Evaluated on the Microsoft enterprise network
• Monitored 23 clients and 40 production servers for 3 weeks
 – Clients are at MSR Redmond
 – An extra host on each server’s Ethernet logs packets
• Busy, operational network
 – Main intranet Web site and software distribution file server
 – Load-balancing front-ends
 – Many paths to the data center

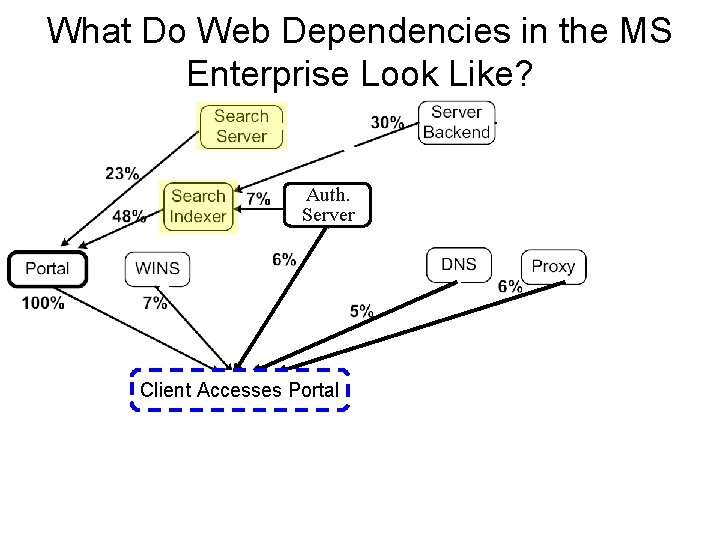

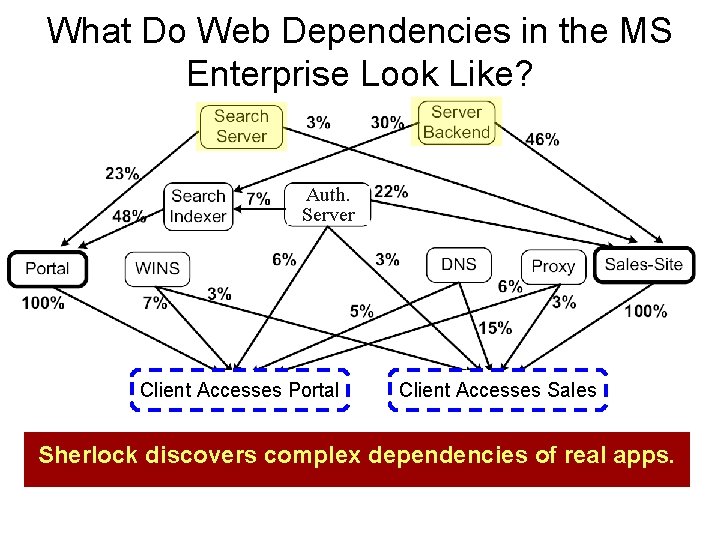

What Do Web Dependencies in the MS Enterprise Look Like?

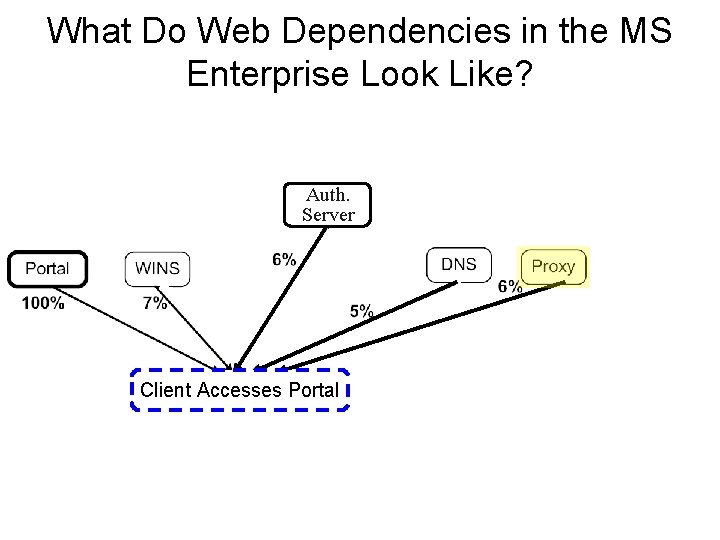



[Figure labels: Auth. Server; Client Accesses Portal; Client Accesses Sales]
Sherlock discovers complex dependencies of real apps.

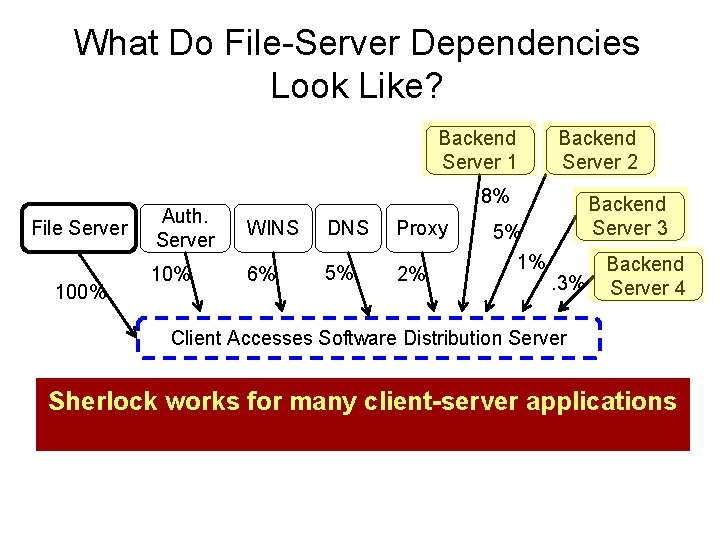

What Do File-Server Dependencies Look Like?
[Figure: dependency graph for "Client Accesses Software Distribution Server" with nodes File Server, Auth. Server, WINS, DNS, Proxy, and Backend Servers 1 to 4; edge probabilities shown: 100%, 10%, 8%, 6%, 5%, 5%, 2%, 1%, .3%.]
Sherlock works for many client-server applications.

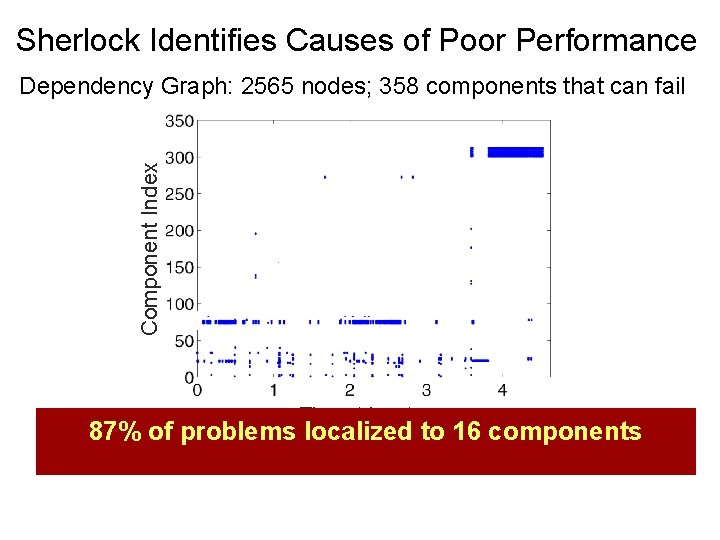

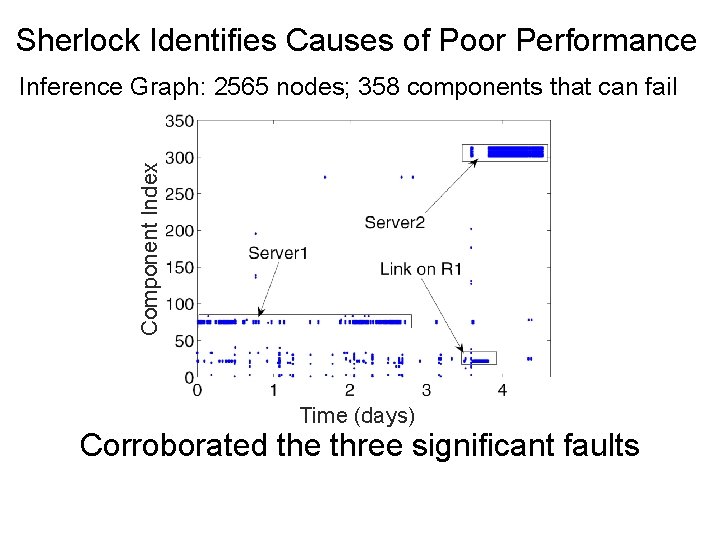

Sherlock Identifies Causes of Poor Performance
[Figure: component index against time (days).]
Dependency graph: 2565 nodes; 358 components that can fail.
87% of problems localized to 16 components.

Sherlock Identifies Causes of Poor Performance
[Figure: component index against time (days).]
Inference graph: 2565 nodes; 358 components that can fail.
Corroborated the three significant faults.

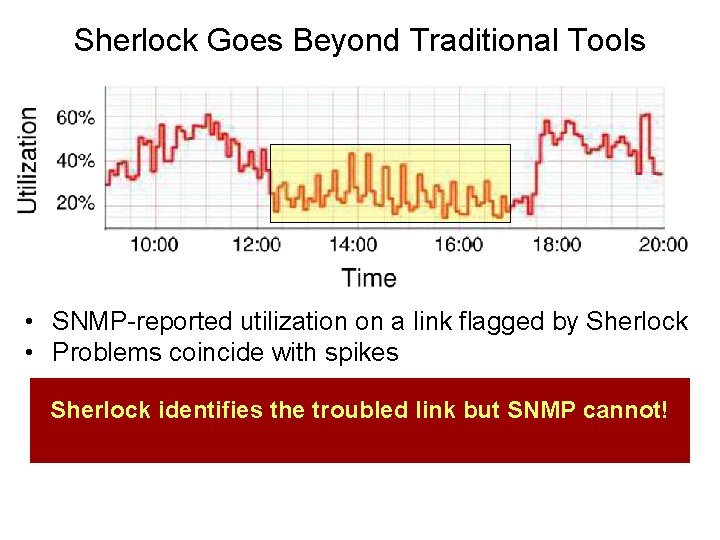

Sherlock Goes Beyond Traditional Tools
• SNMP-reported utilization on a link flagged by Sherlock
• Problems coincide with spikes
Sherlock identifies the troubled link but SNMP cannot!

X-Trace
• X-Trace records events in a distributed execution and their causal relationships
• Events are grouped into tasks
 – A well-defined starting event and all that is causally related
• Each event generates a report, binding it to one or more preceding events
• Captures the full happens-before relation

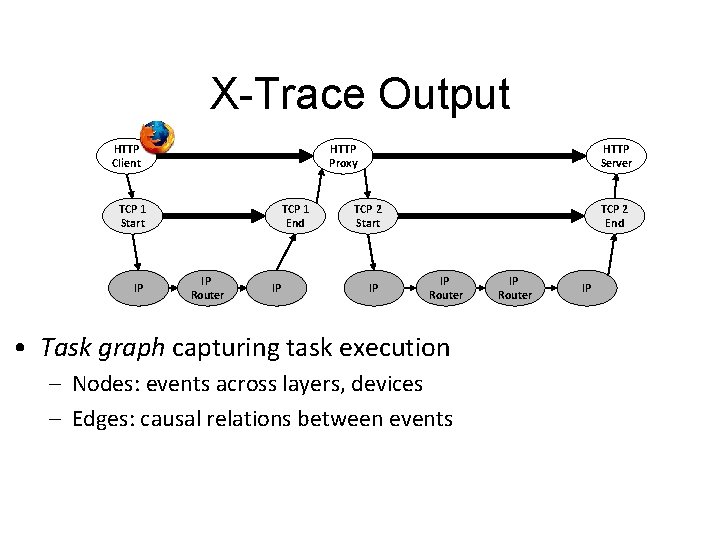

X-Trace Output
[Figure: task graph for a request from HTTP Client through HTTP Proxy to HTTP Server, with TCP 1 and TCP 2 start/end events and IP and IP Router events beneath.]
• Task graph capturing task execution
 – Nodes: events across layers, devices
 – Edges: causal relations between events

![Basic Mechanism](http://slidetodoc.com/presentation_image_h/873722a666ddfccddbdb0b65198d3cfe/image-48.jpg)

Basic Mechanism
[Figure: events a through n across the HTTP Client, HTTP Proxy, HTTP Server, TCP 1, TCP 2, IP, and IP Router; metadata such as [T, a] and [T, g] travels with the request. Example X-Trace report: TaskID: T, EventID: g, Edge: from a, f.]
• Each event is uniquely identified within a task: [TaskId, EventId]
• [TaskId, EventId] is propagated along the execution path
• For each event, create and log an X-Trace report
 – Enough info to reconstruct the task graph
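The mechanism can be sketched in a few lines. This is an illustration only and not the X-Trace library: the class `XTraceSketch`, its `log_event` method, the report dictionary layout, and the generated event ids are all hypothetical.

```python
import itertools


class XTraceSketch:
    """Toy tracer: every event gets a fresh id, and its report records the
    preceding events it is causally bound to."""

    def __init__(self):
        self.reports = []
        self._counter = itertools.count(1)

    def log_event(self, task_id, label, parents=()):
        """Log one event and return its [TaskId, EventId] metadata, which
        the caller propagates along the execution path."""
        event_id = f"e{next(self._counter)}"
        self.reports.append({
            "task_id": task_id,
            "event_id": event_id,
            "label": label,
            "edges_from": [parent_event for _, parent_event in parents],
        })
        return (task_id, event_id)


def task_graph_edges(reports, task_id):
    """Rebuild the causal edges of one task from the collected reports."""
    return [(src, r["event_id"])
            for r in reports if r["task_id"] == task_id
            for src in r["edges_from"]]


tracer = XTraceSketch()
sent = tracer.log_event("T", "HTTP client sends request")
tcp_done = tracer.log_event("T", "TCP 1 end", parents=[sent])
# Like event g in the figure, this event is bound to two preceding events.
received = tracer.log_event("T", "HTTP proxy receives request",
                            parents=[sent, tcp_done])

print(task_graph_edges(tracer.reports, "T"))
```

Because each report names its predecessors, the reports can arrive in any order and from any device and still yield the same graph.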

X-Trace Library API
• Handles propagation within the app
• Threads / event-based (e.g., libasync)
• Akin to a logging API:
 – Main call is logEvent(message)
• Library takes care of event id creation, binding, reporting, etc.
• Implementations in C++, Java, Ruby, JavaScript

Task Tree
• X-Trace tags all network operations resulting from a particular task with the same task identifier
• The task tree is the set of network operations connected with an initial task
• The task tree can be reconstructed after collecting trace data with reports

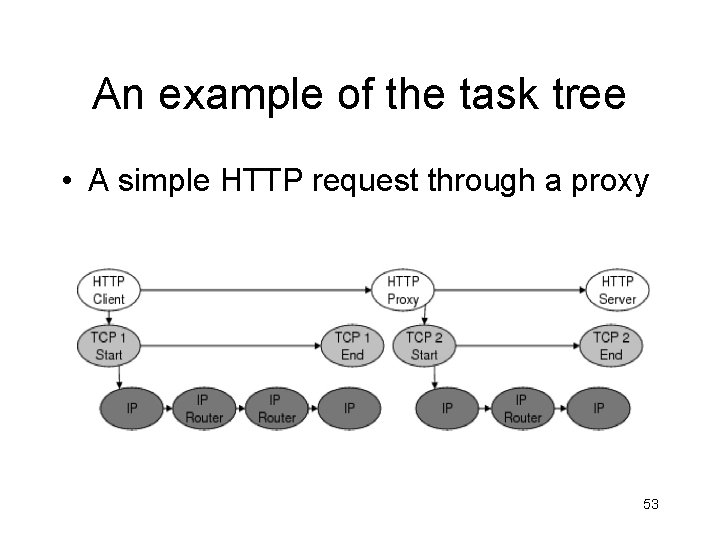

An example of the task tree
• A simple HTTP request through a proxy
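Reconstructing a task tree from collected reports can be sketched as follows. The report fields mirror the task identifier and the ParentID, OpID, and EdgeType fields of the X-Trace metadata, but the dictionary layout, the edge-type strings, and the sample reports are assumptions for this illustration.

```python
from collections import defaultdict


def build_task_tree(reports, task_id):
    """Group one task's reports into root operations and a mapping from
    each operation id to its child reports."""
    children = defaultdict(list)
    roots = []
    for report in reports:
        if report["task_id"] != task_id:
            continue
        if report["parent_id"] is None:
            roots.append(report)
        else:
            children[report["parent_id"]].append(report)
    return roots, children


def render(nodes, children, depth=0):
    """Return the tree as indented text lines, parents before children."""
    lines = []
    for node in nodes:
        lines.append("  " * depth + f'{node["label"]} ({node["edge_type"]})')
        lines.extend(render(children.get(node["op_id"], []), children, depth + 1))
    return lines


# Hypothetical reports for an HTTP request through a proxy, collected out
# of order and mixed with a report from an unrelated task U.
reports = [
    {"task_id": "T", "op_id": "c", "parent_id": "a", "edge_type": "down", "label": "TCP 1"},
    {"task_id": "T", "op_id": "a", "parent_id": None, "edge_type": "root", "label": "HTTP Client"},
    {"task_id": "U", "op_id": "x", "parent_id": None, "edge_type": "root", "label": "Other task"},
    {"task_id": "T", "op_id": "b", "parent_id": "a", "edge_type": "next", "label": "HTTP Proxy"},
    {"task_id": "T", "op_id": "d", "parent_id": "b", "edge_type": "next", "label": "HTTP Server"},
]

roots, children = build_task_tree(reports, "T")
print("\n".join(render(roots, children)))
```

Filtering on the task identifier is what separates task T's operations from those of other tasks that share the same devices.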

X-Trace Components
• Data
 – X-Trace metadata
• Network path
 – Task tree
• Report
 – Reconstruct task tree

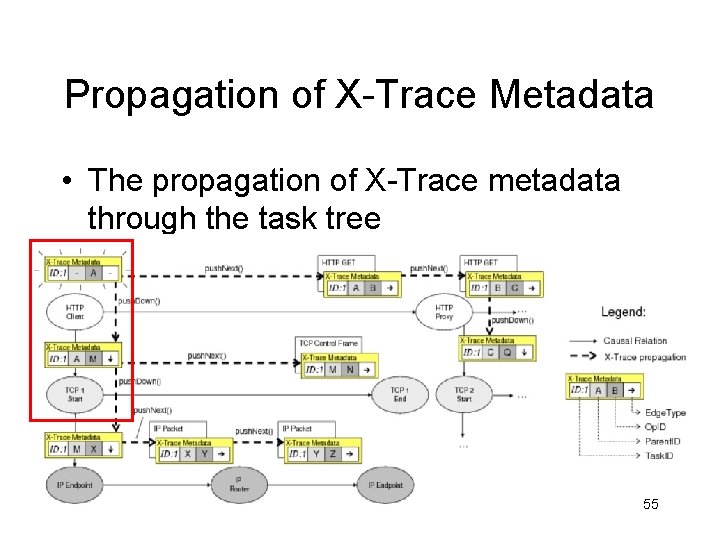

Propagation of X-Trace Metadata
• The propagation of X-Trace metadata through the task tree

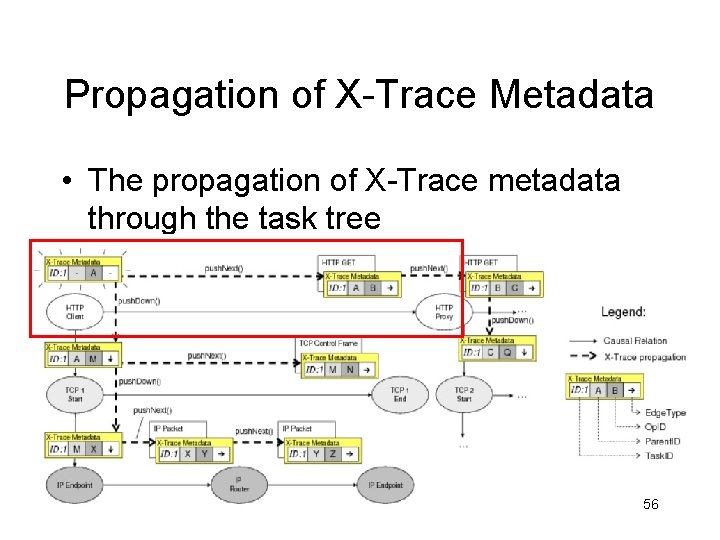


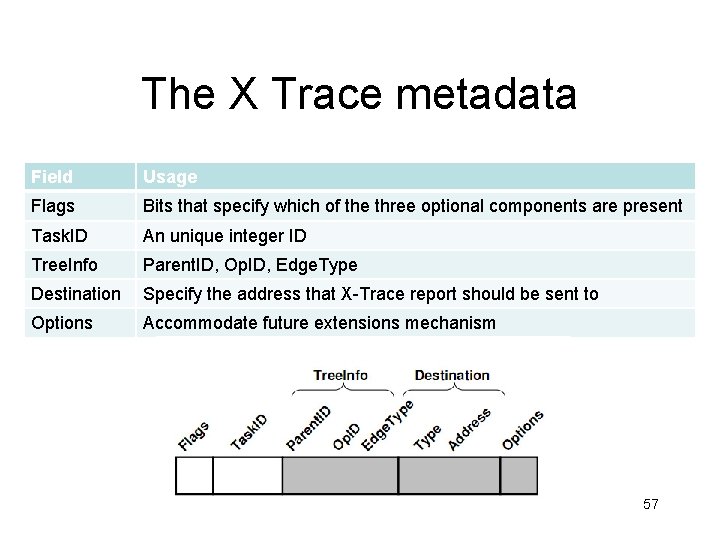

The X-Trace metadata

| Field | Usage |
| --- | --- |
| Flags | Bits that specify which of the three optional components are present |
| TaskID | A unique integer ID |
| TreeInfo | ParentID, OpID, EdgeType |
| Destination | Specifies the address that X-Trace reports should be sent to |
| Options | Mechanism to accommodate future extensions |

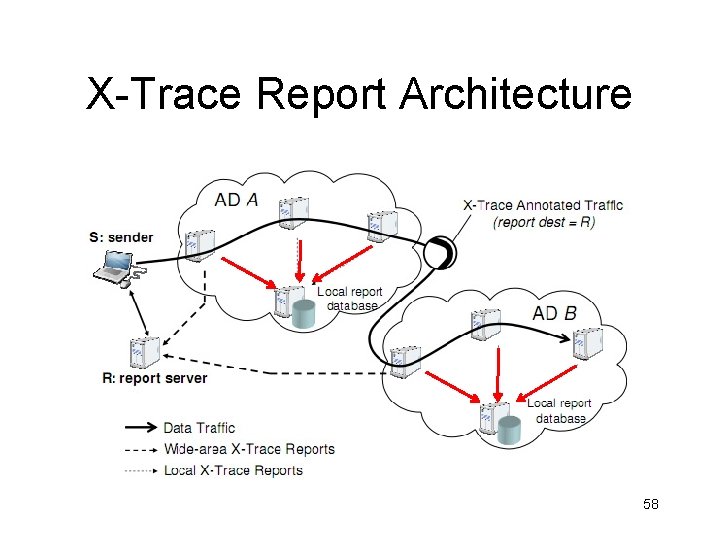

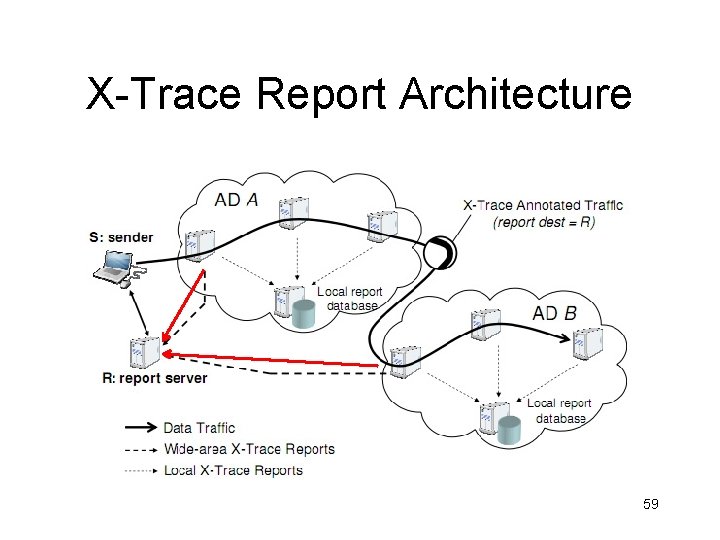

X-Trace Report Architecture

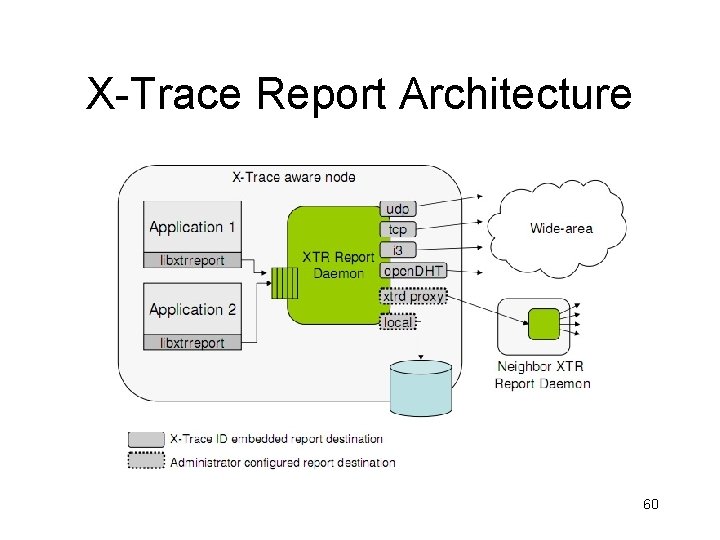



X-Trace-like systems at Google/Bing/Yahoo
• Why?
 – Own a large portion of the ecosystem
 – Use RPC for communication
 – Need to understand
  • Time for a user request
  • Resource utilization by request

Sherlock vs. X-Trace
• Overhead vs. accuracy
• Deployment issues
 – Invasiveness
 – Code modification

Conclusions
• Sherlock passively infers network-wide dependencies from logs and traceroutes
• It diagnoses faults by correlating user observations
• X-Trace actively discovers network-wide dependencies
