Probability Review
CS 479/679 Pattern Recognition
Dr. George Bebis

Why Bother About Probabilities?
• Accounting for uncertainty is a crucial component of decision making (e.g., classification) because our measurements are ambiguous.
• Probability theory is the proper mechanism for accounting for uncertainty.
• It also lets us take a priori knowledge into account, for example: "If the fish was caught in the Atlantic Ocean, then it is more likely to be salmon than sea bass."

Definitions
• Random experiment – An experiment whose result is not certain in advance (e.g., throwing a die)
• Outcome – The result of a random experiment
• Sample space – The set of all possible outcomes (e.g., {1, 2, 3, 4, 5, 6})
• Event – A subset of the sample space (e.g., obtaining an odd number when throwing a die = {1, 3, 5})

Formulation of Probability
• Intuitively, the probability of an event a could be defined as:
$$P(a) = \lim_{n \to \infty} \frac{N(a)}{n}$$
where N(a) is the number of times that event a happens in n trials
• Assumes that all outcomes are equally likely (Laplacian definition)

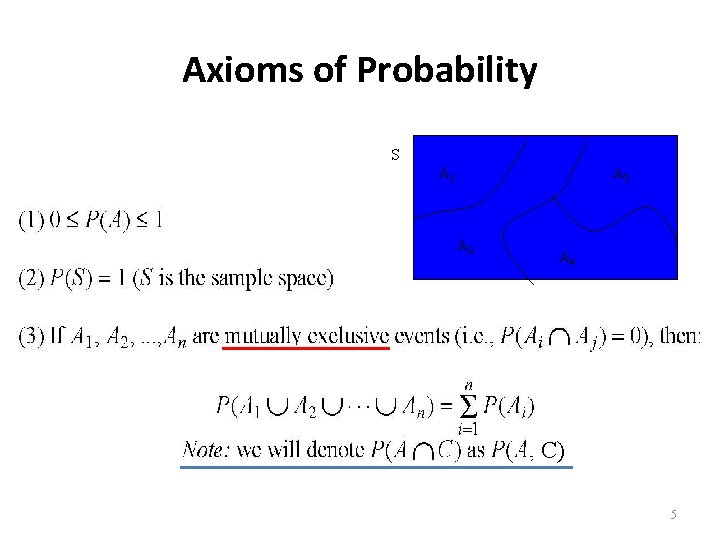

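Axioms of Probability
• A) $P(A) \ge 0$ for every event A
• B) $P(S) = 1$
• C) If $A_1, A_2, \dots, A_n$ are mutually exclusive events, then $P(A_1 \cup A_2 \cup \dots \cup A_n) = \sum_{i=1}^{n} P(A_i)$
[Figure: sample space S containing the events A1, A2, A3, A4]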

Unconditional (Prior) Probability
• This is the probability of an event in the absence of any evidence.
Example: P(Cavity) = 0.1 means that "in the absence of any other information, there is a 10% chance that the patient has a cavity".

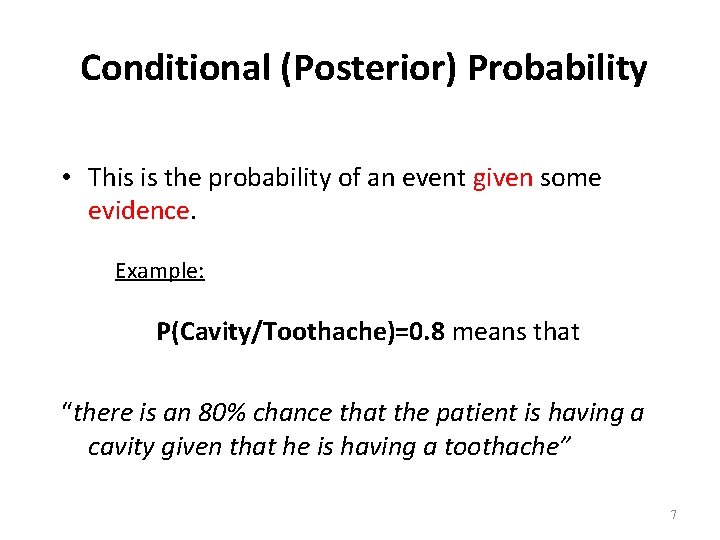

Conditional (Posterior) Probability
• This is the probability of an event given some evidence.
Example: P(Cavity/Toothache) = 0.8 means that "there is an 80% chance that the patient has a cavity given that he has a toothache".

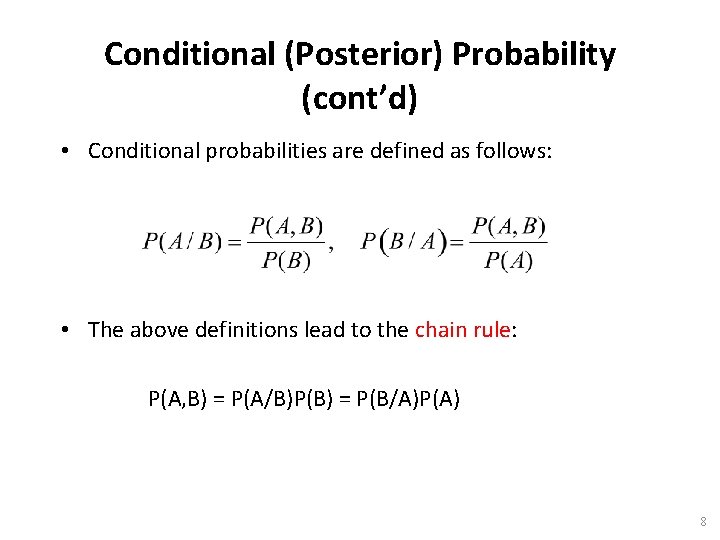

Conditional (Posterior) Probability (cont’d)
• Conditional probabilities are defined as follows:
$$P(A/B) = \frac{P(A, B)}{P(B)}, \qquad P(B/A) = \frac{P(A, B)}{P(A)}$$
• The above definitions lead to the chain rule: P(A, B) = P(A/B)P(B) = P(B/A)P(A)

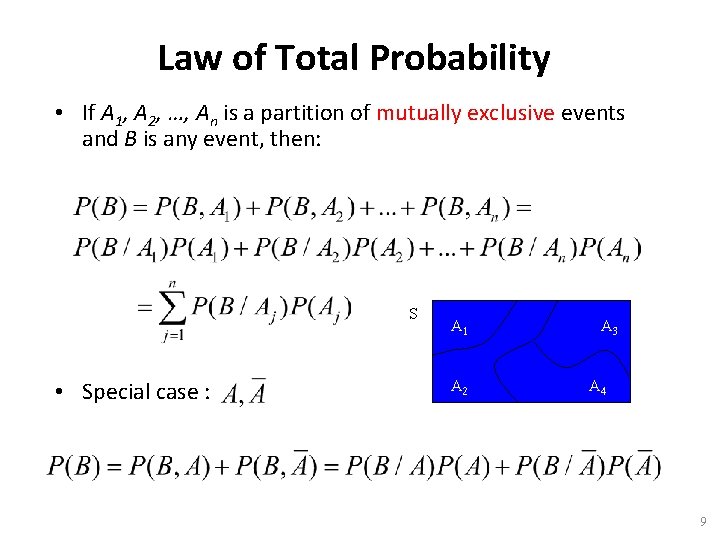

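Law of Total Probability
• If $A_1, A_2, \dots, A_n$ is a partition of mutually exclusive events and B is any event, then:
$$P(B) = \sum_{j=1}^{n} P(B/A_j)\,P(A_j)$$
• Special case (partition into A and its complement ¬A):
$$P(B) = P(B/A)\,P(A) + P(B/\neg A)\,P(\neg A)$$
[Figure: sample space S partitioned into A1, A2, A3, A4]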

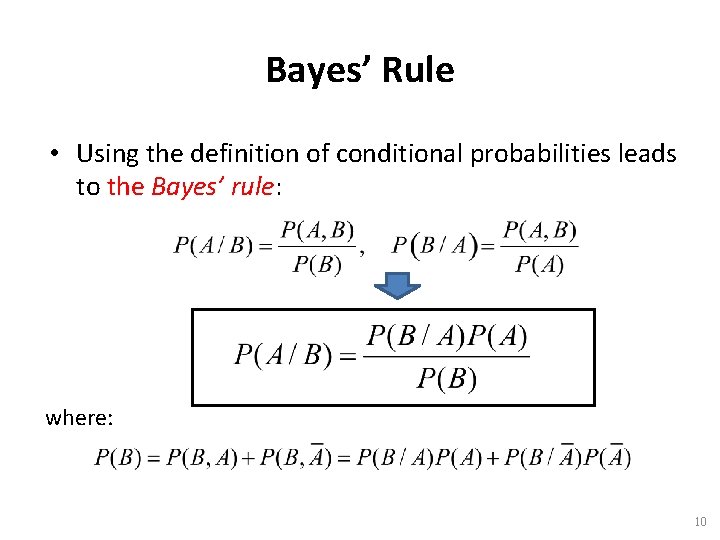

Bayes’ Rule
• Using the definition of conditional probabilities leads to Bayes’ rule:
$$P(A/B) = \frac{P(B/A)\,P(A)}{P(B)}$$
where:
$$P(B) = P(B/A)\,P(A) + P(B/\neg A)\,P(\neg A)$$

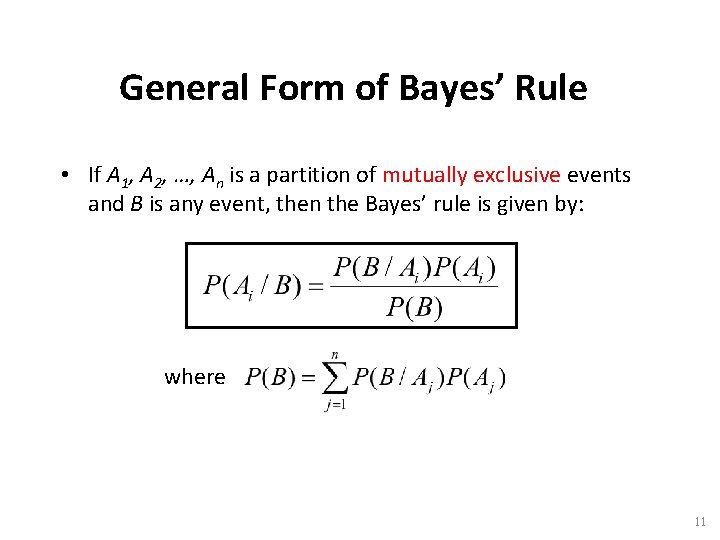

General Form of Bayes’ Rule
• If $A_1, A_2, \dots, A_n$ is a partition of mutually exclusive events and B is any event, then Bayes’ rule is given by:
$$P(A_i/B) = \frac{P(B/A_i)\,P(A_i)}{P(B)}$$
where
$$P(B) = \sum_{j=1}^{n} P(B/A_j)\,P(A_j)$$

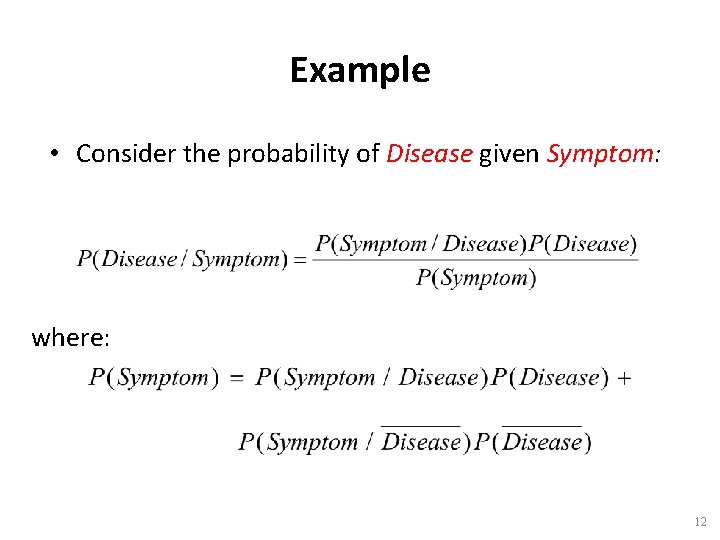

Example
• Consider the probability of Disease (D) given Symptom (S):
$$P(D/S) = \frac{P(S/D)\,P(D)}{P(S)}$$
where:
$$P(S) = P(S/D)\,P(D) + P(S/\neg D)\,P(\neg D)$$

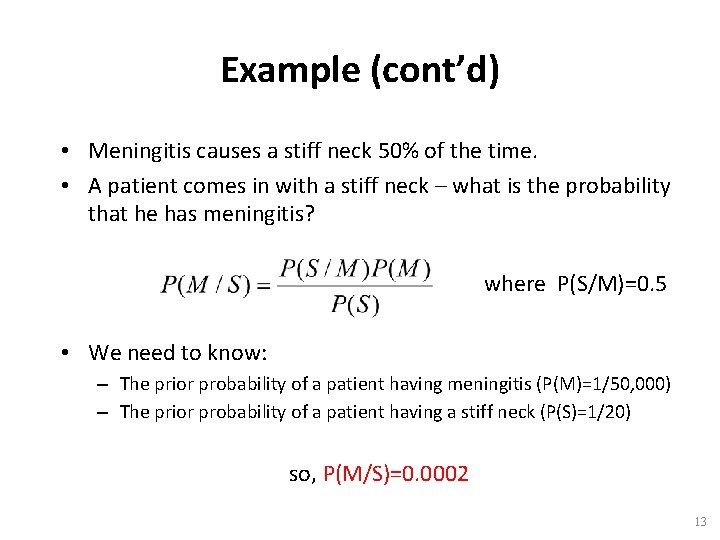

Example (cont’d)
• Meningitis causes a stiff neck 50% of the time, so P(S/M) = 0.5.
• A patient comes in with a stiff neck. What is the probability that he has meningitis?
$$P(M/S) = \frac{P(S/M)\,P(M)}{P(S)}$$
• We need to know:
– The prior probability of a patient having meningitis: P(M) = 1/50,000
– The prior probability of a patient having a stiff neck: P(S) = 1/20
• So P(M/S) = 0.5 × (1/50,000) / (1/20) = 0.0002
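The arithmetic in the meningitis example can be checked with a minimal sketch. The function name `posterior` is a hypothetical helper introduced only for illustration; the three input values are the ones quoted on the slide.

```python
def posterior(likelihood, prior, evidence):
    """Bayes' rule: P(H/E) = P(E/H) * P(H) / P(E)."""
    return likelihood * prior / evidence


p_s_given_m = 0.5      # P(S/M): meningitis causes a stiff neck 50% of the time
p_m = 1 / 50_000       # P(M): prior probability of meningitis
p_s = 1 / 20           # P(S): prior probability of a stiff neck

p_m_given_s = posterior(p_s_given_m, p_m, p_s)
print(f"P(M/S) = {p_m_given_s:.4f}")
```

Even though a stiff neck is a common symptom of meningitis, the posterior stays small because the prior P(M) is tiny compared with P(S).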

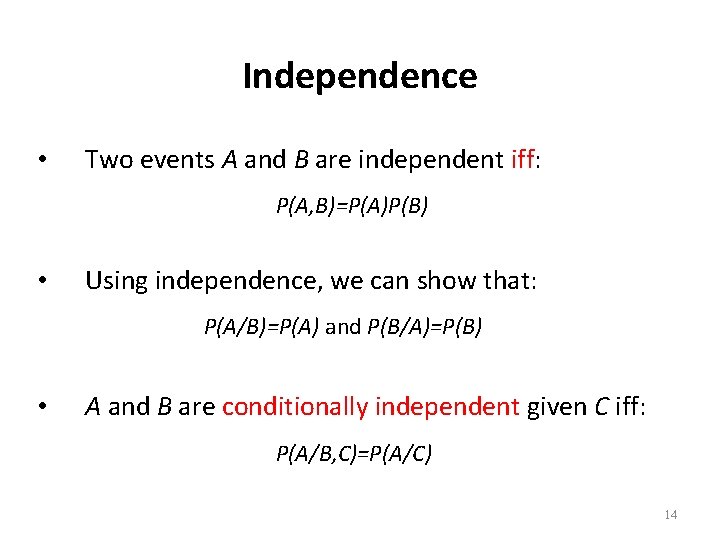

Independence
• Two events A and B are independent iff: P(A, B) = P(A)P(B)
• Using independence, we can show that: P(A/B) = P(A) and P(B/A) = P(B)
• A and B are conditionally independent given C iff: P(A/B, C) = P(A/C)

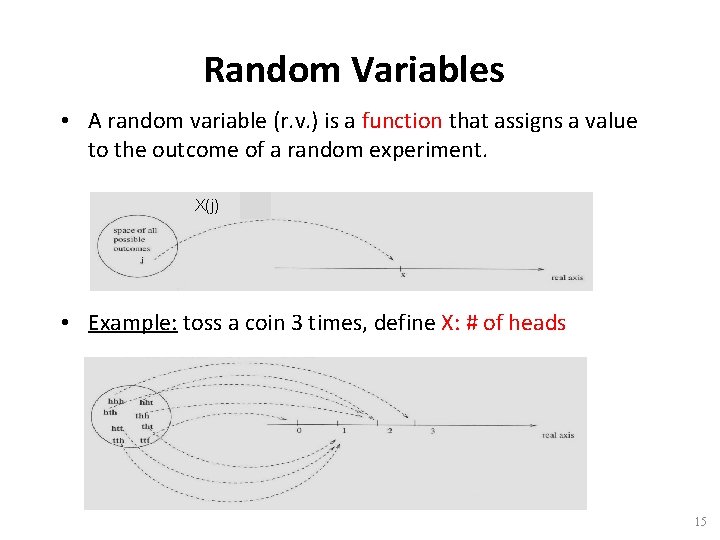

Random Variables
• A random variable (r.v.) is a function X that assigns a value X(s) to each outcome s of a random experiment.
• Example: toss a coin 3 times, define X: # of heads

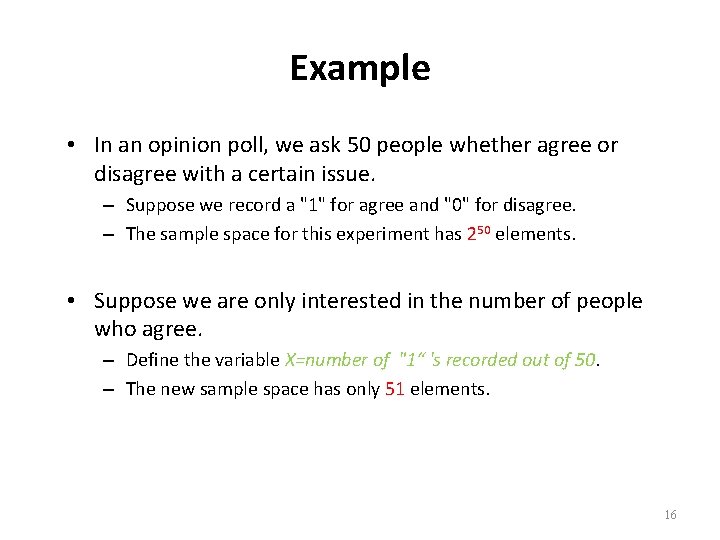

Example
• In an opinion poll, we ask 50 people whether they agree or disagree with a certain issue.
– Suppose we record a "1" for agree and a "0" for disagree.
– The sample space for this experiment has $2^{50}$ elements.
• Suppose we are only interested in the number of people who agree.
– Define the variable X = number of "1"s recorded out of 50.
– The new sample space has only 51 elements (the values 0, 1, …, 50).

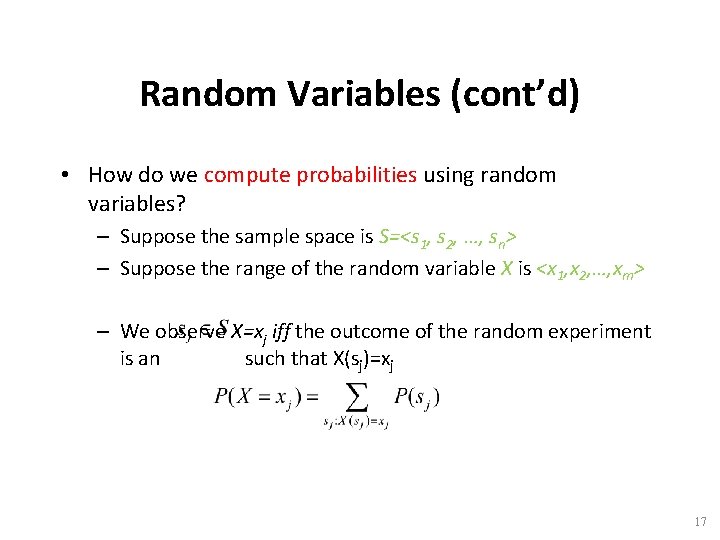

Random Variables (cont’d)
• How do we compute probabilities using random variables?
– Suppose the sample space is $S = \{s_1, s_2, \dots, s_n\}$
– Suppose the range of the random variable X is $\{x_1, x_2, \dots, x_m\}$
– We observe $X = x_j$ iff the outcome of the random experiment is an $s_i \in S$ such that $X(s_i) = x_j$, so $P(X = x_j) = P(\{s_i \in S : X(s_i) = x_j\})$

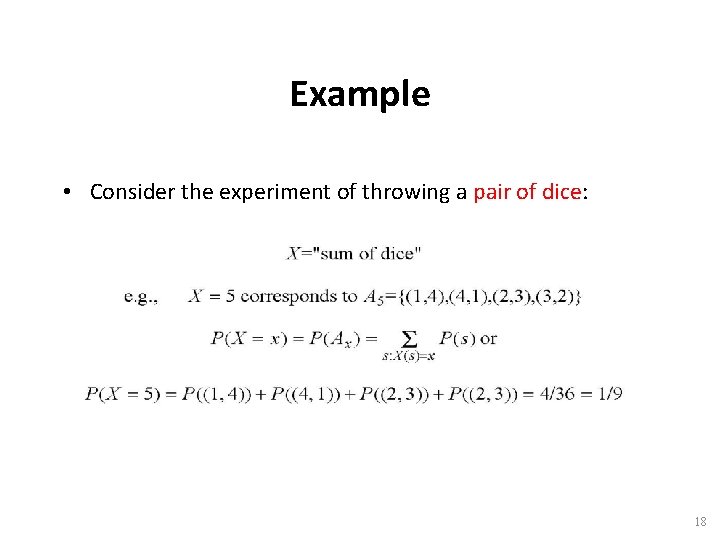

Example
• Consider the experiment of throwing a pair of dice:

Discrete/Continuous Random Variables
• A random variable (r.v.) can be:
– Discrete: assumes only discrete values.
– Continuous: assumes continuous values (e.g., sensor readings).
• Random variables can be characterized by:
– Probability mass/density function (pmf/pdf)
– Probability distribution function (PDF), i.e., the cumulative distribution

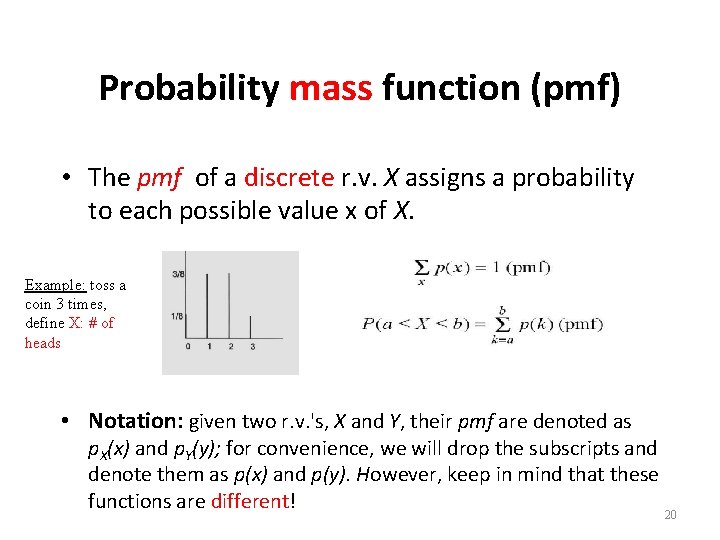

Probability mass function (pmf)
• The pmf of a discrete r.v. X assigns a probability to each possible value x of X.
Example: toss a coin 3 times, define X: # of heads
• Notation: given two r.v.'s, X and Y, their pmfs are denoted as $p_X(x)$ and $p_Y(y)$; for convenience, we will drop the subscripts and denote them as p(x) and p(y). However, keep in mind that these functions are different!
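The pmf for the coin example can be obtained by enumerating the sample space, as in the following minimal sketch. It assumes a fair coin (all 8 outcomes equally likely), which the slide does not state explicitly; the variable names are illustrative.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Sample space: all sequences of 3 tosses, e.g. ('H', 'T', 'H').
outcomes = list(product("HT", repeat=3))

# X maps each outcome to its number of heads; count how often each value occurs.
counts = Counter(seq.count("H") for seq in outcomes)

# Assuming a fair coin, every outcome has probability 1/8.
pmf = {x: Fraction(c, len(outcomes)) for x, c in sorted(counts.items())}

for x, p in pmf.items():
    print(f"p({x}) = {p}")
```

Using `Fraction` keeps the probabilities exact, so they sum to exactly 1.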

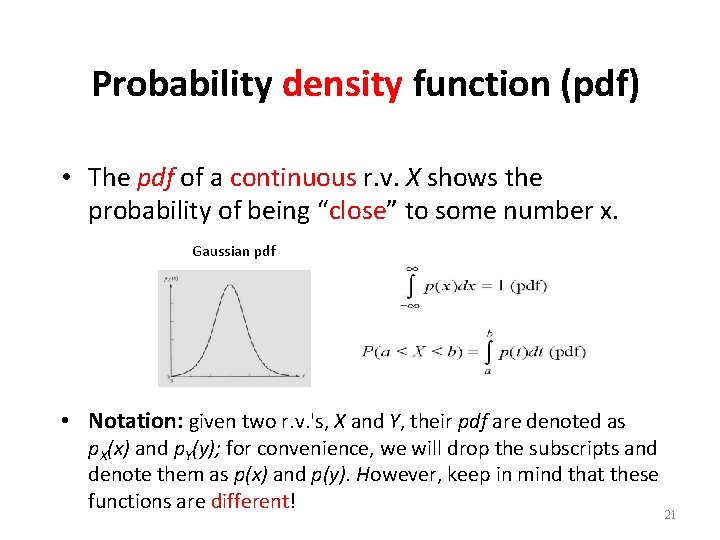

Probability density function (pdf)
• The pdf of a continuous r.v. X shows the probability of being “close” to some number x.
[Figure: Gaussian pdf]
• Notation: given two r.v.'s, X and Y, their pdfs are denoted as $p_X(x)$ and $p_Y(y)$; for convenience, we will drop the subscripts and denote them as p(x) and p(y). However, keep in mind that these functions are different!

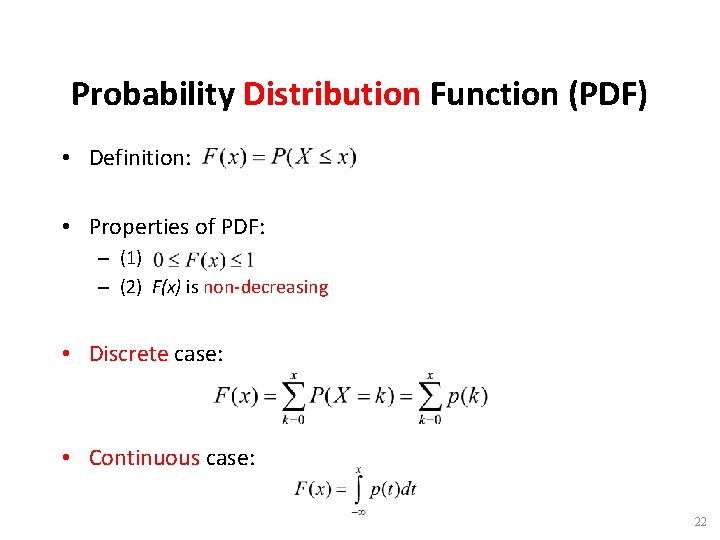

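Probability Distribution Function (PDF)
• Definition: $F(x) = P(X \le x)$
• Properties of PDF:
– (1) $0 \le F(x) \le 1$
– (2) F(x) is non-decreasing
• Discrete case: $F(x) = \sum_{k \le x} p(k)$
• Continuous case: $F(x) = \int_{-\infty}^{x} p(t)\,dt$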

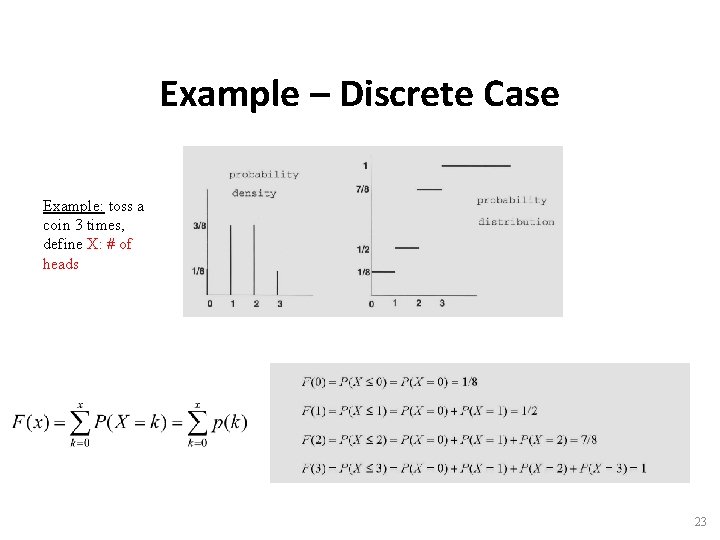

Example – Discrete Case
• Toss a coin 3 times, define X: # of heads
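For the discrete case, the PDF is a running sum of the pmf. The sketch below assumes the standard definition F(x) = P(X ≤ x) and a fair coin, so the pmf values 1/8, 3/8, 3/8, 1/8 are hard-coded as an assumption.

```python
from fractions import Fraction
from itertools import accumulate

# pmf of X = number of heads in 3 tosses of a fair coin (assumed).
pmf = {0: Fraction(1, 8), 1: Fraction(3, 8), 2: Fraction(3, 8), 3: Fraction(1, 8)}

# F(x) = sum of p(k) over all k <= x, computed as a cumulative sum.
values = sorted(pmf)
cdf = dict(zip(values, accumulate(pmf[v] for v in values)))

for x, f in cdf.items():
    print(f"F({x}) = {f}")
```

The result is non-decreasing and reaches 1 at the largest value of X, matching the properties of the PDF.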

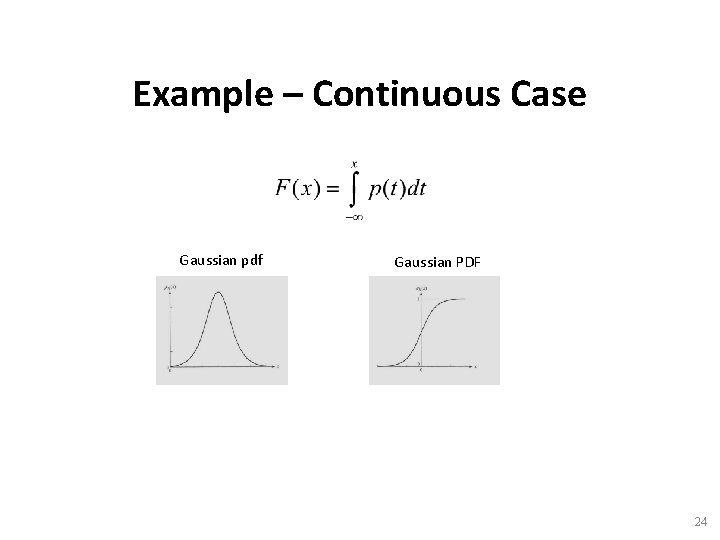

Example – Continuous Case
[Figure panels: Gaussian pdf; Gaussian PDF]

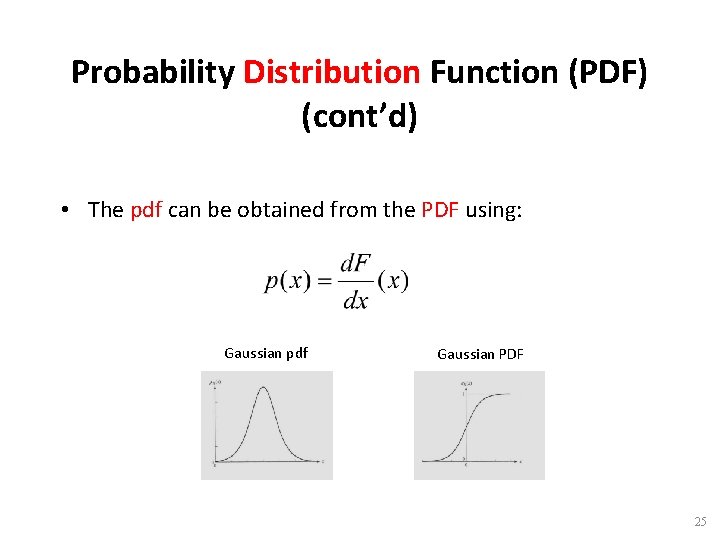

Probability Distribution Function (PDF) (cont’d)
• The pdf can be obtained from the PDF using:
$$p(x) = \frac{dF(x)}{dx}$$
[Figure panels: Gaussian pdf; Gaussian PDF]

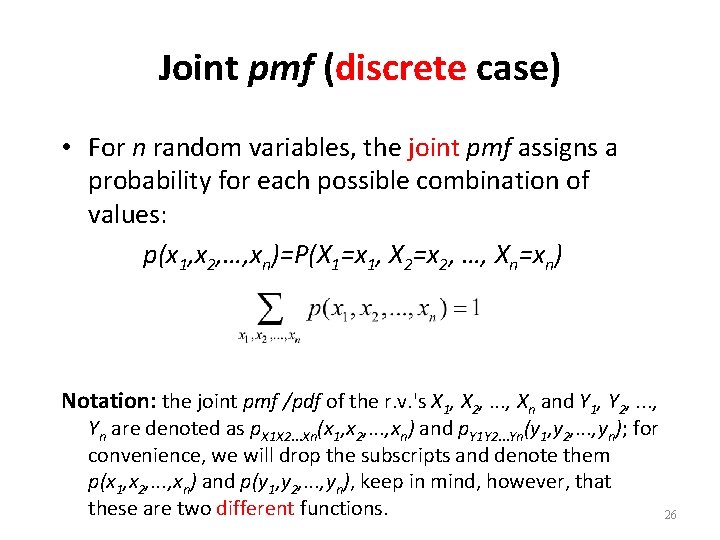

Joint pmf (discrete case)
• For n random variables, the joint pmf assigns a probability to each possible combination of values:
$$p(x_1, x_2, \dots, x_n) = P(X_1 = x_1, X_2 = x_2, \dots, X_n = x_n)$$
• Notation: the joint pmf/pdf of the r.v.'s $X_1, X_2, \dots, X_n$ and $Y_1, Y_2, \dots, Y_n$ are denoted as $p_{X_1 X_2 \dots X_n}(x_1, x_2, \dots, x_n)$ and $p_{Y_1 Y_2 \dots Y_n}(y_1, y_2, \dots, y_n)$; for convenience, we will drop the subscripts and denote them as $p(x_1, x_2, \dots, x_n)$ and $p(y_1, y_2, \dots, y_n)$. Keep in mind, however, that these are two different functions.

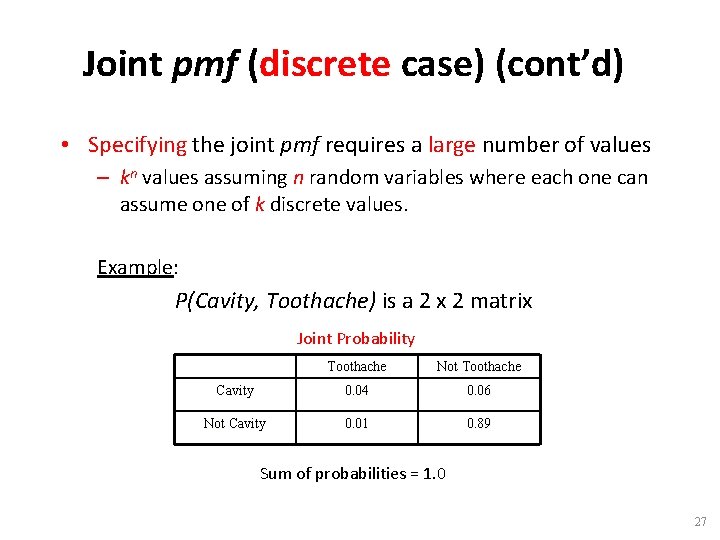

Joint pmf (discrete case) (cont’d)
• Specifying the joint pmf requires a large number of values: $k^n$ values, assuming n random variables where each one can assume one of k discrete values.
Example: P(Cavity, Toothache) is a 2 x 2 matrix

| Joint Probability | Toothache | Not Toothache |
|---|---|---|
| Cavity | 0.04 | 0.06 |
| Not Cavity | 0.01 | 0.89 |

Sum of probabilities = 1.0

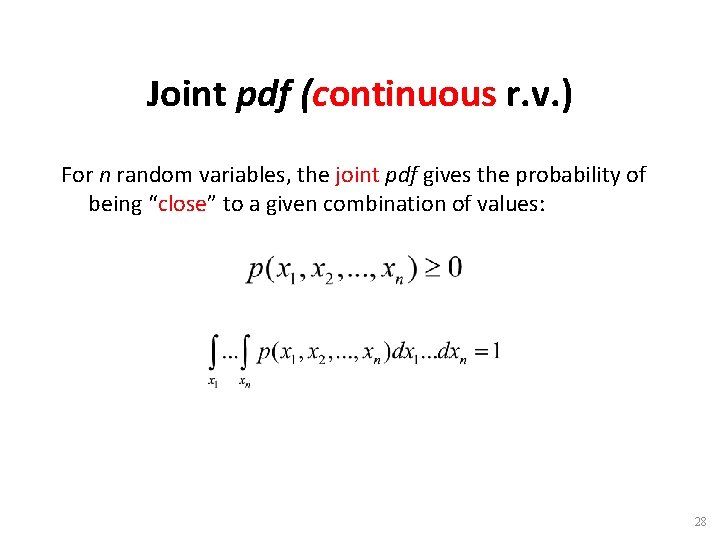

Joint pdf (continuous r.v.)
• For n random variables, the joint pdf gives the probability of being “close” to a given combination of values:
$$p(x_1, x_2, \dots, x_n)$$

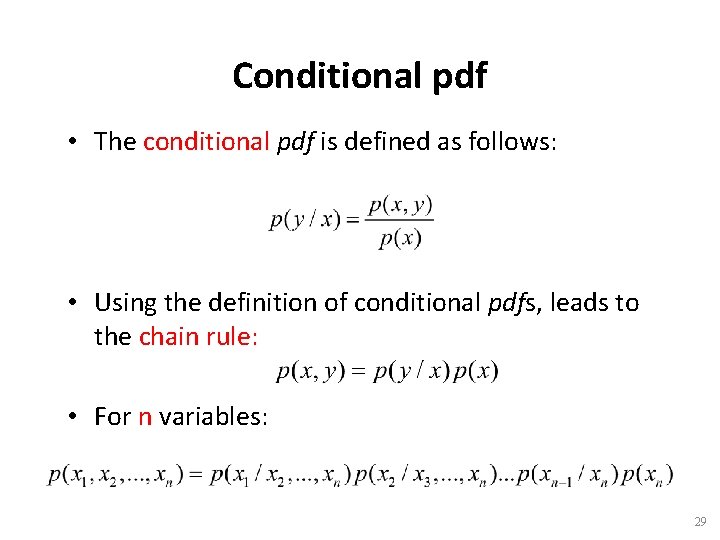

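Conditional pdf
• The conditional pdf is defined as follows:
$$p(y/x) = \frac{p(x, y)}{p(x)}$$
• Using the definition of conditional pdfs leads to the chain rule:
$$p(x, y) = p(y/x)\,p(x)$$
• For n variables:
$$p(x_1, x_2, \dots, x_n) = p(x_1/x_2, \dots, x_n)\,p(x_2/x_3, \dots, x_n) \cdots p(x_{n-1}/x_n)\,p(x_n)$$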

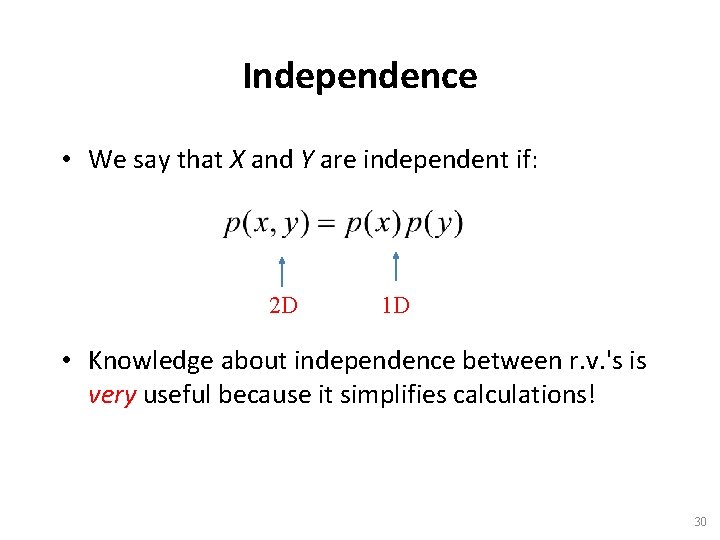

Independence
• We say that X and Y are independent if:
$$p(x, y) = p(x)\,p(y)$$
(the 2D joint function factors into two 1D functions)
• Knowledge about independence between r.v.'s is very useful because it simplifies calculations!

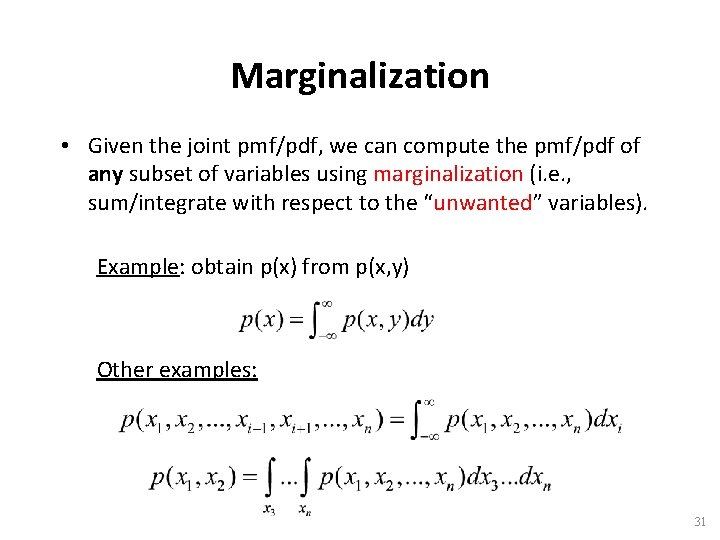

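Marginalization
• Given the joint pmf/pdf, we can compute the pmf/pdf of any subset of variables using marginalization (i.e., sum/integrate with respect to the “unwanted” variables).
Example: obtain p(x) from p(x, y)
$$p(x) = \sum_{y} p(x, y) \quad \text{(discrete)}, \qquad p(x) = \int_{-\infty}^{\infty} p(x, y)\,dy \quad \text{(continuous)}$$
Other examples:
$$p(x_1) = \sum_{x_2} \cdots \sum_{x_n} p(x_1, x_2, \dots, x_n)$$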
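Marginalization can be illustrated with the 2 x 2 joint table P(Cavity, Toothache) given earlier in these slides. The sketch below is a minimal illustration; the dictionary layout and the helper name `marginal` are hypothetical choices, not part of the original material.

```python
# Joint pmf P(Cavity, Toothache) from the earlier slide.
joint = {
    ("cavity", "toothache"): 0.04,
    ("cavity", "no toothache"): 0.06,
    ("no cavity", "toothache"): 0.01,
    ("no cavity", "no toothache"): 0.89,
}


def marginal(joint, axis):
    """Sum the joint pmf over every variable except the one at position `axis`."""
    result = {}
    for key, p in joint.items():
        result[key[axis]] = result.get(key[axis], 0.0) + p
    return result


p_cavity = marginal(joint, 0)      # sum with respect to Toothache
p_toothache = marginal(joint, 1)   # sum with respect to Cavity

# Conditional probability from the joint and the marginal.
p_cavity_given_toothache = joint[("cavity", "toothache")] / p_toothache["toothache"]

print(p_cavity)
print(p_toothache)
print(p_cavity_given_toothache)
```

Up to floating-point rounding, the marginal P(Cavity) comes out as 0.1 and P(Cavity/Toothache) as 0.8, which agree with the prior and posterior quoted in the earlier cavity examples.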

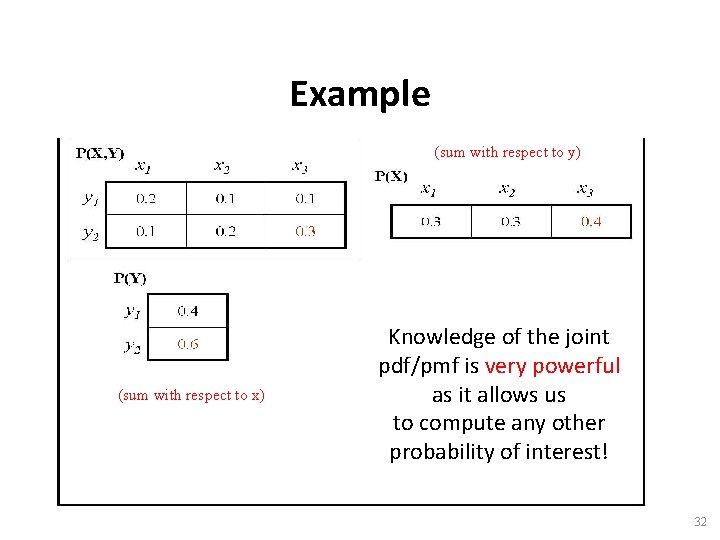

Example
[Figure: joint table with marginals obtained by summing with respect to y and by summing with respect to x]
• Knowledge of the joint pdf/pmf is very powerful, as it allows us to compute any other probability of interest!

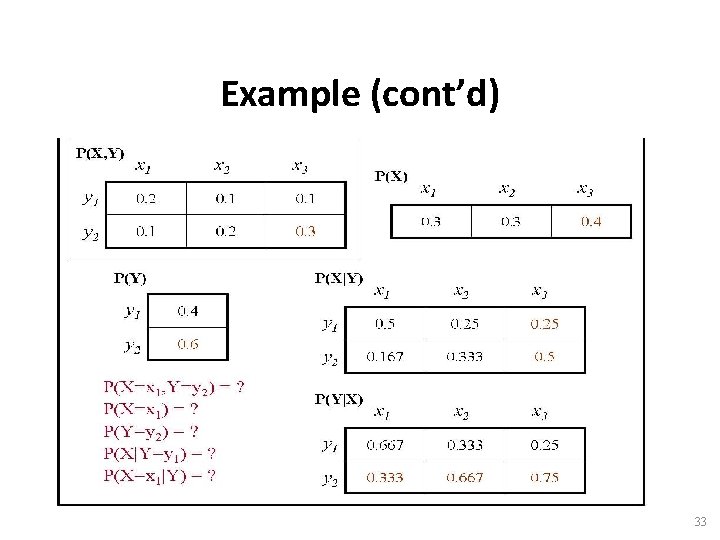

Example (cont’d)

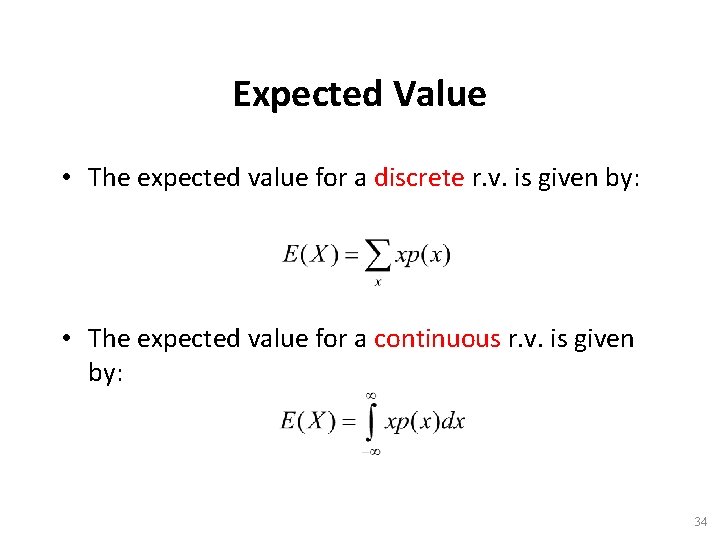

Expected Value
• The expected value for a discrete r.v. is given by:
$$E(X) = \sum_{x} x\,p(x)$$
• The expected value for a continuous r.v. is given by:
$$E(X) = \int_{-\infty}^{\infty} x\,p(x)\,dx$$

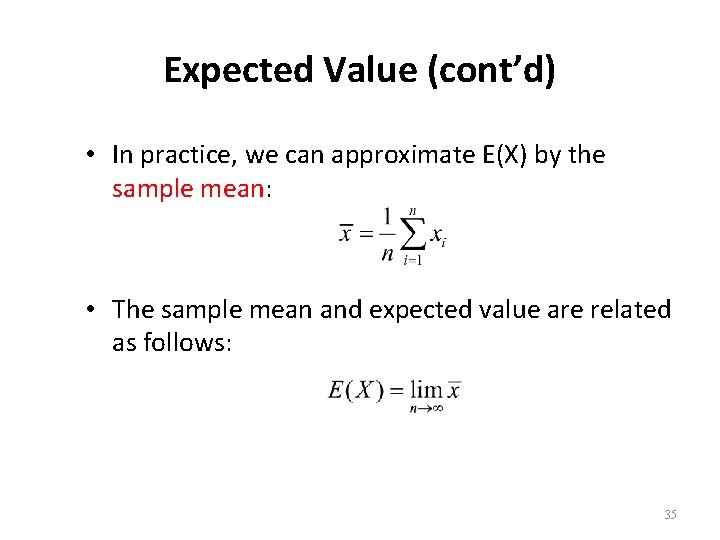

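Expected Value (cont’d)
• In practice, we can approximate E(X) by the sample mean:
$$\bar{x} = \frac{1}{n} \sum_{i=1}^{n} x_i$$
• The sample mean and expected value are related as follows: for independent samples, $\bar{x} \to E(X)$ as $n \to \infty$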

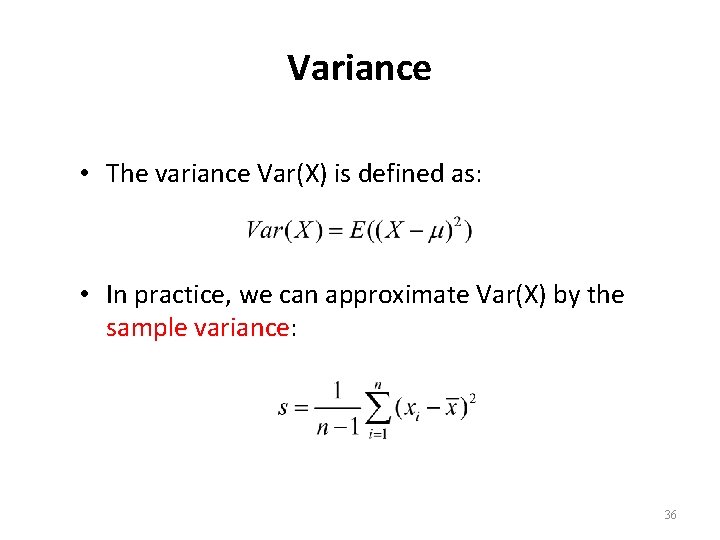

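Variance
• The variance Var(X) is defined as:
$$Var(X) = E[(X - \mu)^2], \quad \text{where } \mu = E(X)$$
• In practice, we can approximate Var(X) by the sample variance:
$$\frac{1}{n-1} \sum_{i=1}^{n} (x_i - \bar{x})^2$$
(some texts normalize by n instead of n − 1)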

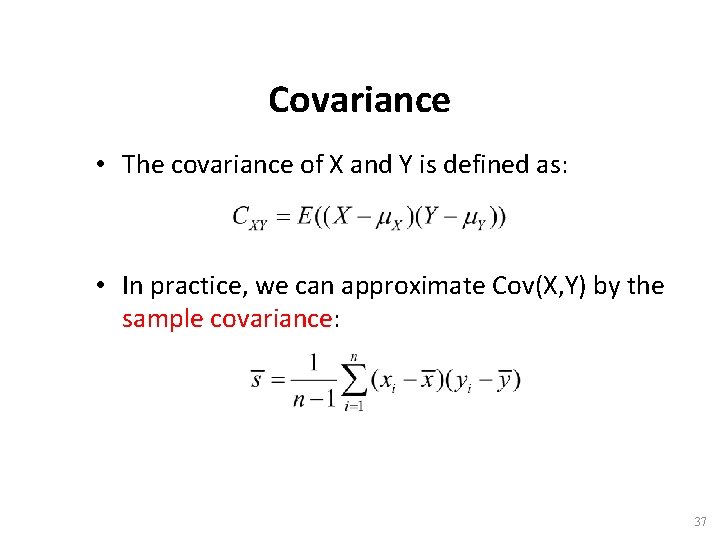

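Covariance
• The covariance of X and Y is defined as:
$$Cov(X, Y) = E[(X - \mu_X)(Y - \mu_Y)], \quad \text{where } \mu_X = E(X),\ \mu_Y = E(Y)$$
• In practice, we can approximate Cov(X, Y) by the sample covariance:
$$\frac{1}{n-1} \sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})$$
(some texts normalize by n instead of n − 1)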

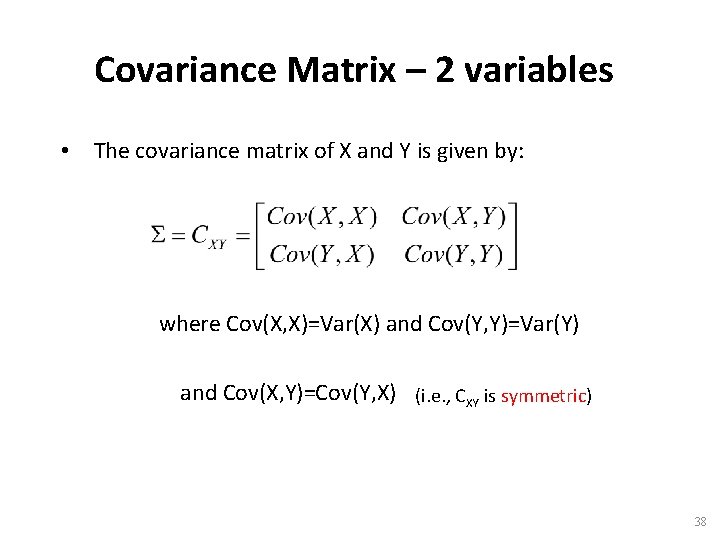

Covariance Matrix – 2 variables
• The covariance matrix of X and Y is given by:
$$C_{XY} = \begin{pmatrix} Cov(X, X) & Cov(X, Y) \\ Cov(Y, X) & Cov(Y, Y) \end{pmatrix}$$
where Cov(X, X) = Var(X), Cov(Y, Y) = Var(Y), and Cov(X, Y) = Cov(Y, X) (i.e., $C_{XY}$ is symmetric)

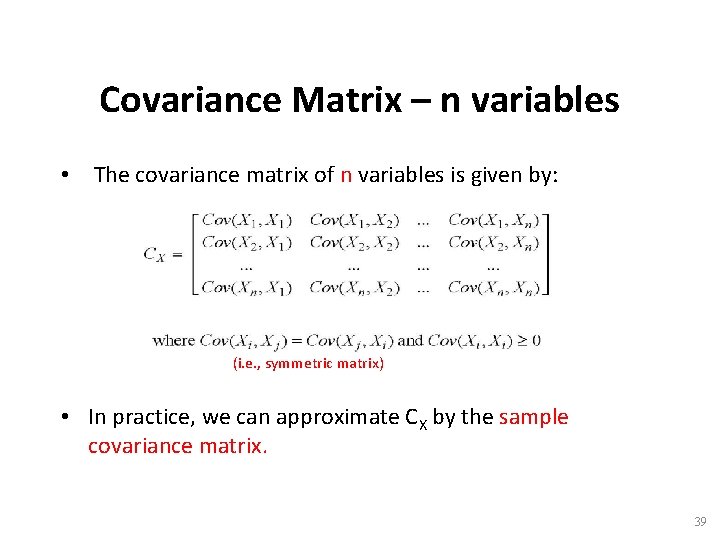

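Covariance Matrix – n variables
• The covariance matrix of n variables $X_1, X_2, \dots, X_n$ is given by:
$$C_X = \begin{pmatrix} Cov(X_1, X_1) & Cov(X_1, X_2) & \cdots & Cov(X_1, X_n) \\ Cov(X_2, X_1) & Cov(X_2, X_2) & \cdots & Cov(X_2, X_n) \\ \vdots & \vdots & \ddots & \vdots \\ Cov(X_n, X_1) & Cov(X_n, X_2) & \cdots & Cov(X_n, X_n) \end{pmatrix}$$
(i.e., symmetric matrix)
• In practice, we can approximate $C_X$ by the sample covariance matrix.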
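A sample covariance matrix can be computed directly from data, as in the following minimal sketch. The data values are made up for illustration, the helper names are hypothetical, and the n − 1 normalization is an assumption (normalizing by n is also common).

```python
def sample_mean(xs):
    """Sample mean: (1/n) * sum of x_i."""
    return sum(xs) / len(xs)


def sample_cov(xs, ys):
    """Sample covariance, normalized by n - 1 (assumed convention)."""
    mx, my = sample_mean(xs), sample_mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (len(xs) - 1)


# Hypothetical measurements of two variables X and Y.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

# 2 x 2 sample covariance matrix; the diagonal holds the sample variances.
C = [
    [sample_cov(xs, xs), sample_cov(xs, ys)],
    [sample_cov(ys, xs), sample_cov(ys, ys)],
]

for row in C:
    print(row)
```

The off-diagonal entries are equal, which reflects the symmetry Cov(X, Y) = Cov(Y, X).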

Uncorrelated r.v.’s
• X and Y are uncorrelated if Cov(X, Y) = 0
• If $X_1, X_2, \dots, X_n$ are uncorrelated, then $C_X$ is diagonal.

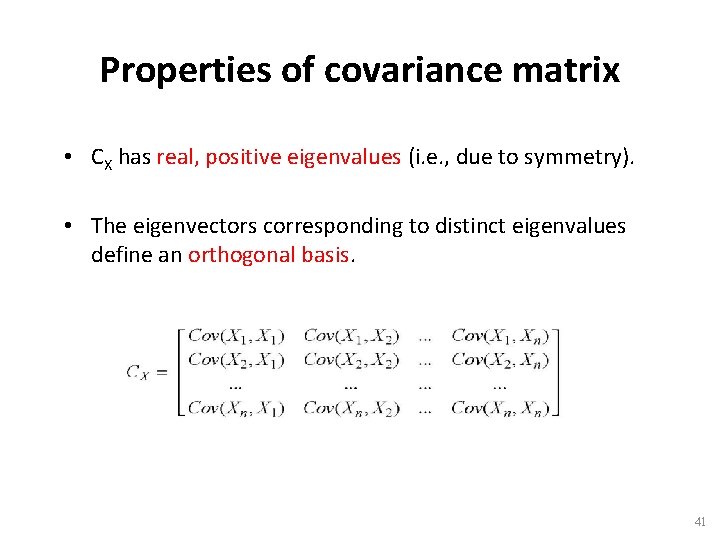

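Properties of covariance matrix
• $C_X$ has real, non-negative eigenvalues: real because $C_X$ is symmetric, non-negative because $C_X$ is positive semi-definite (strictly positive when $C_X$ is non-singular).
• Eigenvectors corresponding to distinct eigenvalues are orthogonal, so the eigenvectors can be chosen to form an orthonormal basis.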

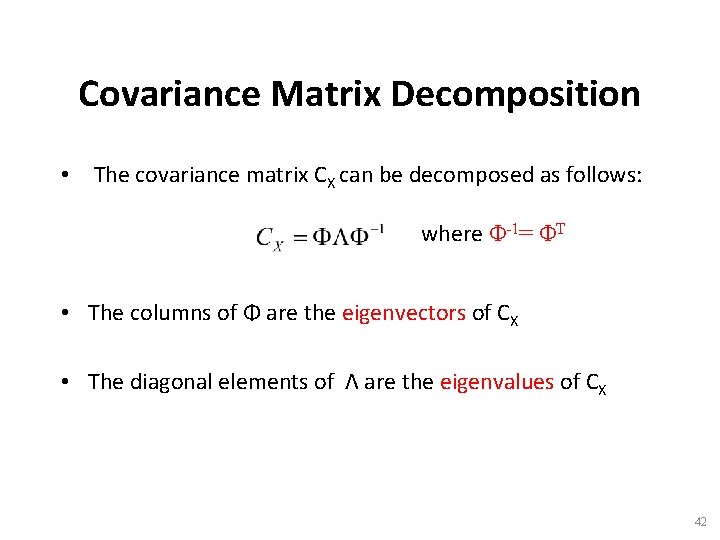

Covariance Matrix Decomposition
• The covariance matrix $C_X$ can be decomposed as follows:
$$C_X = \Phi \Lambda \Phi^T$$
where $\Phi^{-1} = \Phi^T$
• The columns of Φ are the eigenvectors of $C_X$
• The diagonal elements of Λ are the eigenvalues of $C_X$
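The decomposition can be verified numerically. This minimal sketch assumes NumPy is available and uses a made-up 2 x 2 covariance matrix; it relies on `numpy.linalg.eigh`, which is intended for symmetric matrices and returns eigenvalues in ascending order with orthonormal eigenvectors as columns.

```python
import numpy as np

# Hypothetical symmetric, positive-definite covariance matrix.
C = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# Columns of Phi are eigenvectors; eigvals holds the matching eigenvalues.
eigvals, Phi = np.linalg.eigh(C)
Lambda = np.diag(eigvals)

# Rebuild C from its eigenvectors and eigenvalues: C = Phi Lambda Phi^T.
reconstructed = Phi @ Lambda @ Phi.T

print("eigenvalues:", eigvals)
print("Phi^T Phi is identity:", np.allclose(Phi.T @ Phi, np.eye(2)))
print("reconstruction matches:", np.allclose(reconstructed, C))
```

Because Φ is orthogonal, its transpose acts as its inverse, which is why no explicit matrix inversion is needed.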

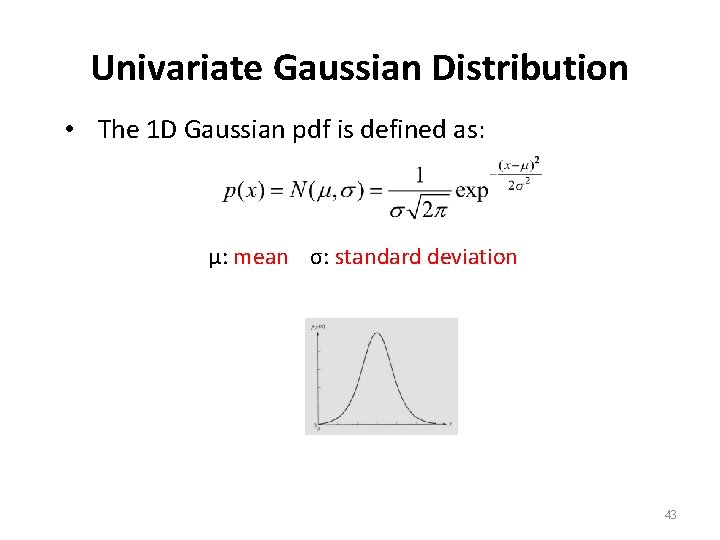

Univariate Gaussian Distribution
• The 1D Gaussian pdf is defined as:
$$p(x) = \frac{1}{\sigma\sqrt{2\pi}} \exp\left[-\frac{(x - \mu)^2}{2\sigma^2}\right]$$
μ: mean
σ: standard deviation

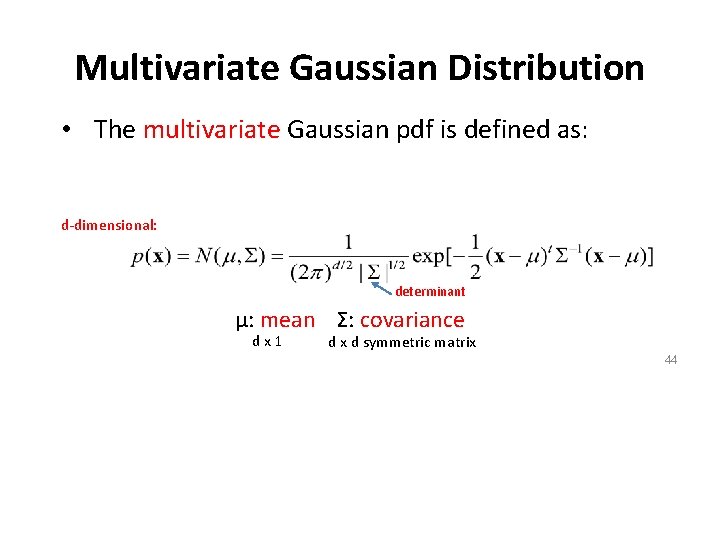

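Multivariate Gaussian Distribution
• The multivariate Gaussian pdf (d-dimensional) is defined as:
$$p(\mathbf{x}) = \frac{1}{(2\pi)^{d/2}\,|\Sigma|^{1/2}} \exp\left[-\frac{1}{2} (\mathbf{x} - \mu)^T \Sigma^{-1} (\mathbf{x} - \mu)\right]$$
μ: mean (d x 1 vector)
Σ: covariance (d x d symmetric matrix)
|Σ|: determinant of Σ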

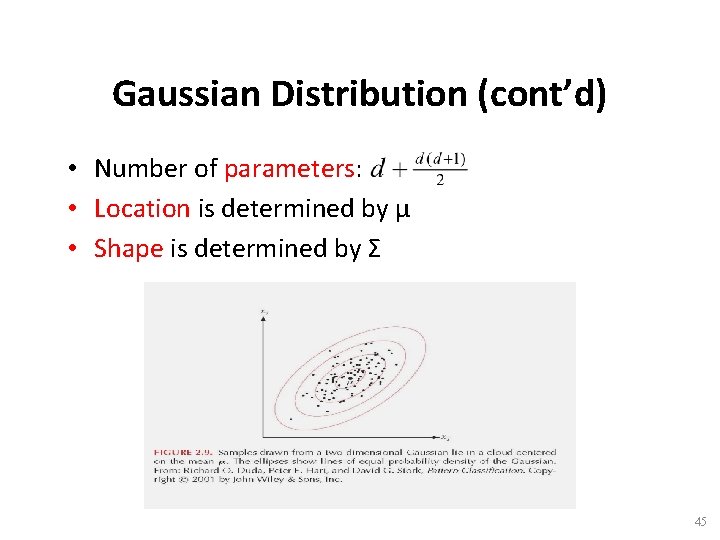

Gaussian Distribution (cont’d)
• Number of parameters: $d + \frac{d(d+1)}{2}$ (d for μ, d(d+1)/2 for the symmetric Σ)
• Location is determined by μ
• Shape is determined by Σ

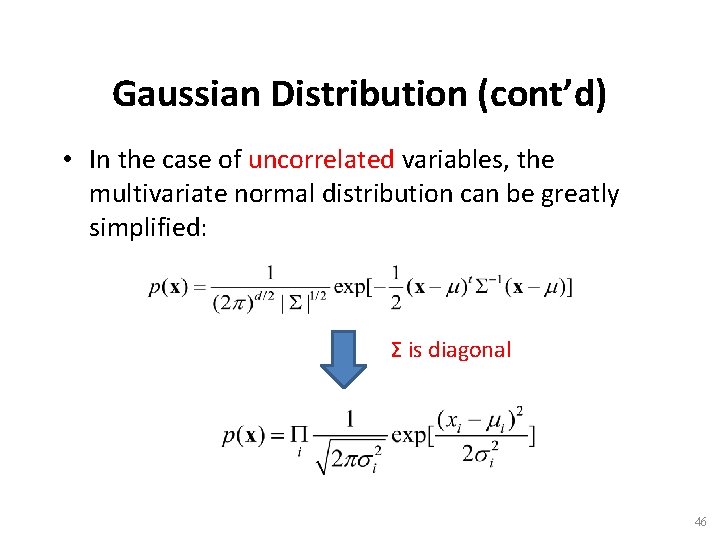

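Gaussian Distribution (cont’d)
• In the case of uncorrelated variables (Σ is diagonal), the multivariate normal distribution can be greatly simplified:
$$p(\mathbf{x}) = \prod_{i=1}^{d} \frac{1}{\sigma_i\sqrt{2\pi}} \exp\left[-\frac{(x_i - \mu_i)^2}{2\sigma_i^2}\right]$$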
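The simplification for diagonal Σ can be checked numerically: the multivariate density should equal the product of 1D Gaussian densities. The sketch below assumes NumPy and the standard Gaussian formulas; the function names and the test point are hypothetical.

```python
import numpy as np


def gaussian_pdf(x, mu, sigma):
    """1D Gaussian pdf with mean mu and standard deviation sigma."""
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2 * np.pi))


def mvn_pdf(x, mu, Sigma):
    """d-dimensional Gaussian pdf with mean vector mu and covariance Sigma."""
    d = len(mu)
    diff = x - mu
    norm = (2 * np.pi) ** (d / 2) * np.sqrt(np.linalg.det(Sigma))
    # solve(Sigma, diff) computes Sigma^{-1} diff without forming the inverse.
    return np.exp(-0.5 * diff @ np.linalg.solve(Sigma, diff)) / norm


# Hypothetical parameters: two uncorrelated variables.
mu = np.array([1.0, -2.0])
sigmas = np.array([0.5, 2.0])
Sigma = np.diag(sigmas ** 2)      # diagonal covariance matrix
x = np.array([1.2, -1.0])

joint_density = mvn_pdf(x, mu, Sigma)
product_density = np.prod(gaussian_pdf(x, mu, sigmas))

print(joint_density, product_density)
```

The two printed values agree up to floating-point error, so with a diagonal Σ each dimension can be evaluated on its own.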

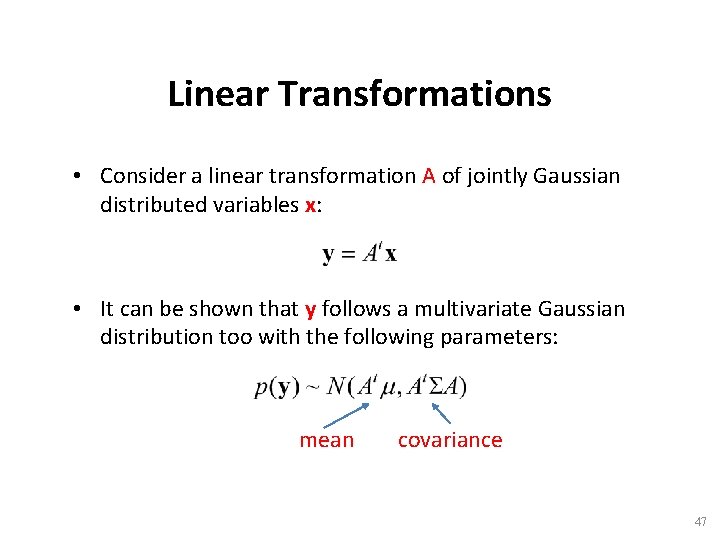

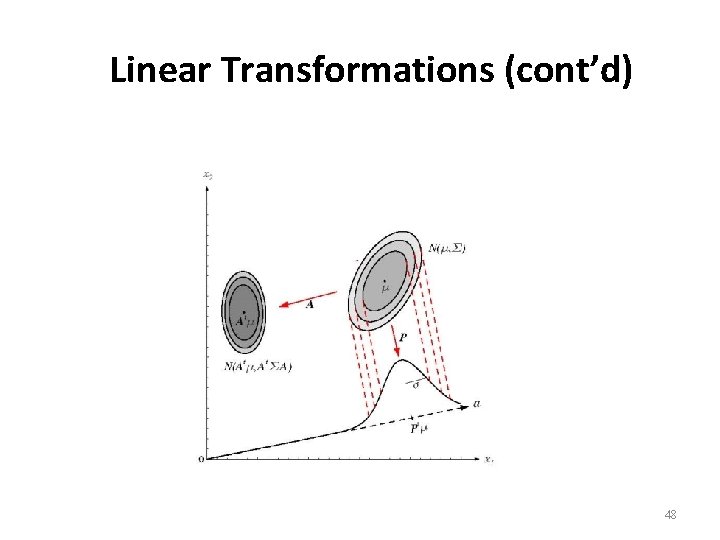

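Linear Transformations
• Consider a linear transformation A of jointly Gaussian distributed variables x, with $\mathbf{x} \sim N(\mu, \Sigma)$:
$$\mathbf{y} = A^T \mathbf{x}$$
• It can be shown that y follows a multivariate Gaussian distribution too, with the following parameters:
$$\mathbf{y} \sim N(A^T \mu,\ A^T \Sigma A)$$
mean: $A^T \mu$
covariance: $A^T \Sigma A$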

Linear Transformations (cont’d)

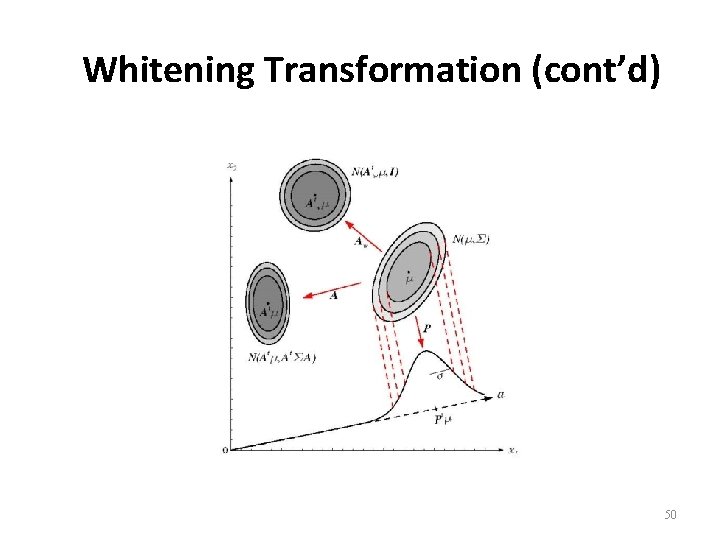

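Whitening Transformation
• Consider the following transformation:
$$\mathbf{y} = A_w^T \mathbf{x}, \quad \text{where } A_w = \Phi \Lambda^{-1/2}$$
(Φ and Λ hold the eigenvectors and eigenvalues of Σ)
• It can be shown that if $\mathbf{x} \sim N(\mu, \Sigma)$ then $\mathbf{y} \sim N(A_w^T \mu, I)$, that is, the components of y are uncorrelated!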
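The whitening property can be checked numerically with a minimal sketch. It assumes NumPy, the standard form A_w = Φ Λ^(-1/2) with y = A_w^T x, and the rule that a linear map y = A^T x turns covariance Σ into A^T Σ A; the covariance matrix is made up for illustration.

```python
import numpy as np

# Hypothetical positive-definite covariance matrix of x.
Sigma = np.array([[4.0, 1.5],
                  [1.5, 1.0]])

# Eigendecomposition Sigma = Phi Lambda Phi^T (eigh is meant for symmetric matrices).
eigvals, Phi = np.linalg.eigh(Sigma)

# Whitening matrix A_w = Phi Lambda^{-1/2}.
A_w = Phi @ np.diag(eigvals ** -0.5)

# Covariance of y = A_w^T x is A_w^T Sigma A_w, which should be the identity.
cov_y = A_w.T @ Sigma @ A_w

print(np.round(cov_y, 10))
```

This only works when every eigenvalue of Σ is strictly positive; a singular covariance matrix would require dropping or regularizing the zero-variance directions first.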

Whitening Transformation (cont’d)
