Probabilistically Accurate Program Transformations

Sasa Misailovic, with Daniel Roy and Martin Rinard
MIT CSAIL
SAS 2011, Venice, Italy

Candidate Transformation

    for (i = 0; i < n; i++) { … }

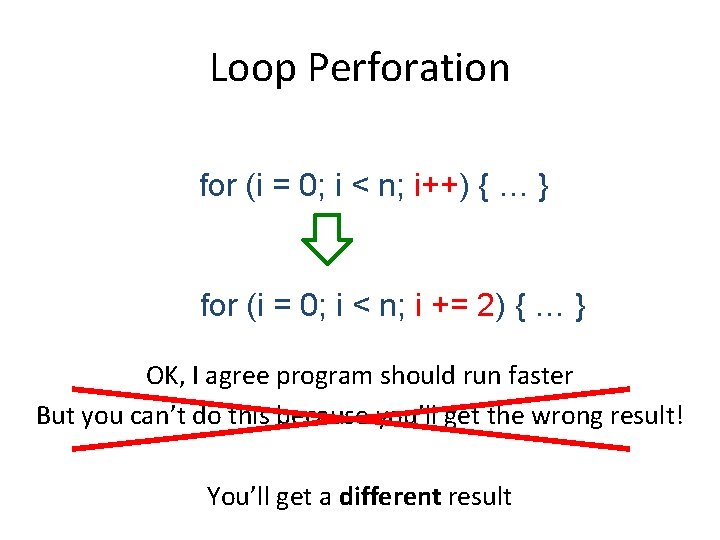

Loop Perforation

Original loop:

    for (i = 0; i < n; i++) { … }

Perforated loop, which executes only every second iteration:

    for (i = 0; i < n; i += 2) { … }

Objection: "OK, I agree the program should run faster. But you can't do this because you'll get the wrong result!"

Response: you'll get a different result.

![Our Experience (ICSE ’10, FSE ’11): experimental evaluation on PARSEC benchmarks](http://slidetodoc.com/presentation_image/e445f8760fa11d3a3e7520a2f60fb673/image-6.jpg)

Our Experience [ICSE ’10, FSE ’11]

Experimental evaluation on PARSEC benchmarks:
• Video encoding
• Financial analysis
• Similarity search
• Machine learning
• …

Results:
• Multiple perforated loops per application
• Overall performance increase of over 2 times
• At the same time, only a small accuracy loss

![Our Experience: PARSEC speedup, original vs. perforated program](http://slidetodoc.com/presentation_image/e445f8760fa11d3a3e7520a2f60fb673/image-7.jpg)

Our Experience [ICSE ’10, FSE ’11]: experimental evaluation on PARSEC benchmarks. Speedup: 1.2x (original program vs. perforated program)

![Our Experience: PARSEC speedup, original vs. perforated program](http://slidetodoc.com/presentation_image/e445f8760fa11d3a3e7520a2f60fb673/image-8.jpg)

Our Experience [ICSE ’10, FSE ’11]: experimental evaluation on PARSEC benchmarks. Speedup: 1.1x (original program vs. perforated program)

![Our Experience: PARSEC speedup, original vs. perforated program](http://slidetodoc.com/presentation_image/e445f8760fa11d3a3e7520a2f60fb673/image-9.jpg)

Our Experience [ICSE ’10, FSE ’11]: experimental evaluation on PARSEC benchmarks. Speedup: 1.1x (original program vs. perforated program)

![Our Experience: PARSEC speedup, original vs. perforated program](http://slidetodoc.com/presentation_image/e445f8760fa11d3a3e7520a2f60fb673/image-10.jpg)

Our Experience [ICSE ’10, FSE ’11]: experimental evaluation on PARSEC benchmarks. Speedup: 1.5x (original program vs. perforated program)

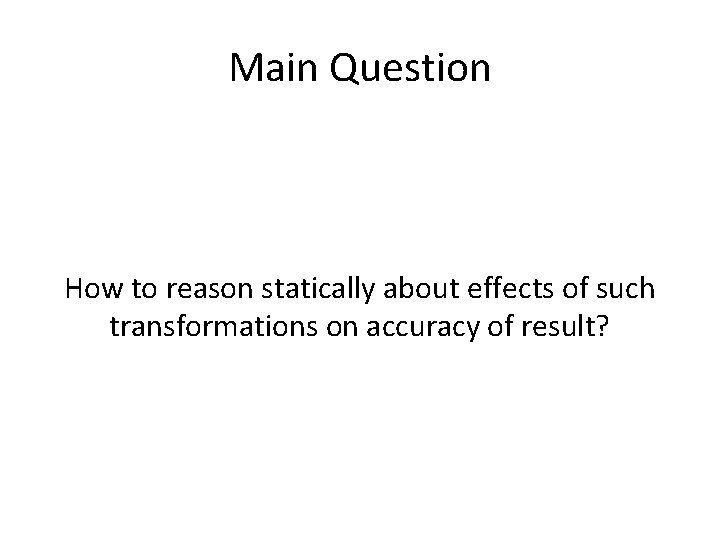

Main Question

How can we reason statically about the effects of such transformations on the accuracy of the result?

Computation difference

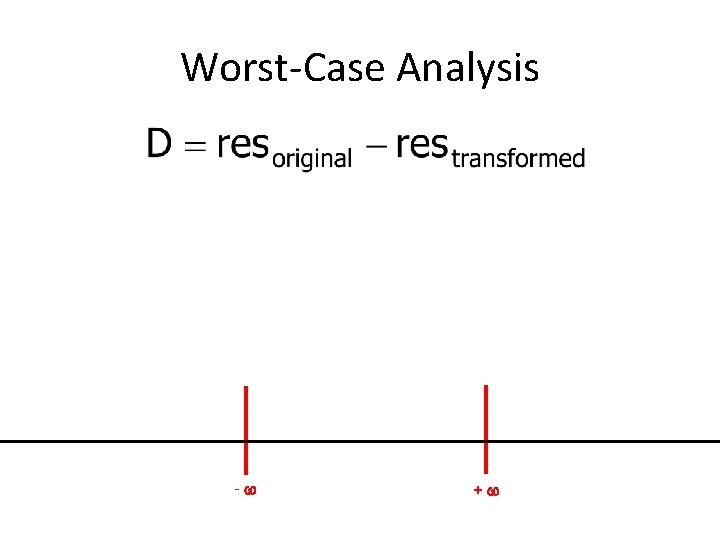

Worst-Case Analysis [figure: result difference on an axis from − to +]

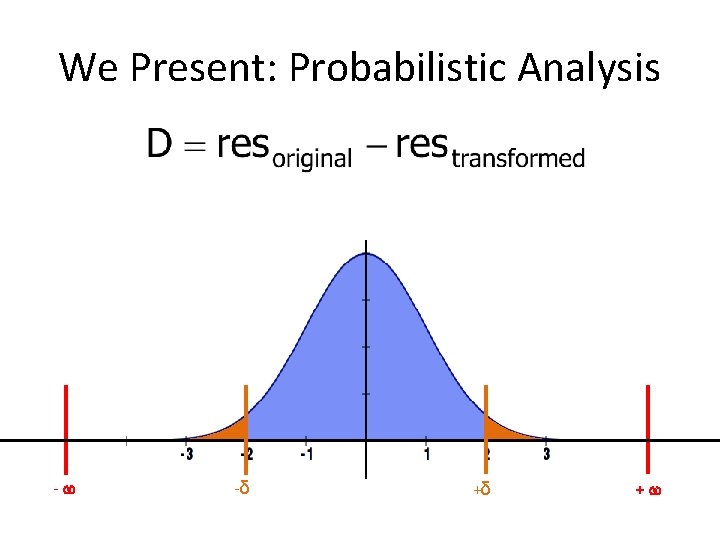

We Present: Probabilistic Analysis [figure: result difference on an axis from − to +, with −δ and +δ marked]

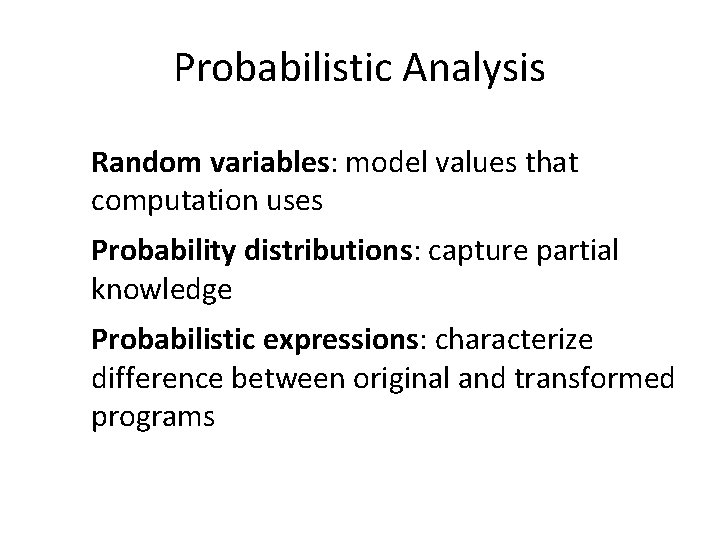

Probabilistic Analysis
• Random variables: model the values that the computation uses
• Probability distributions: capture partial knowledge
• Probabilistic expressions: characterize the difference between the original and transformed programs

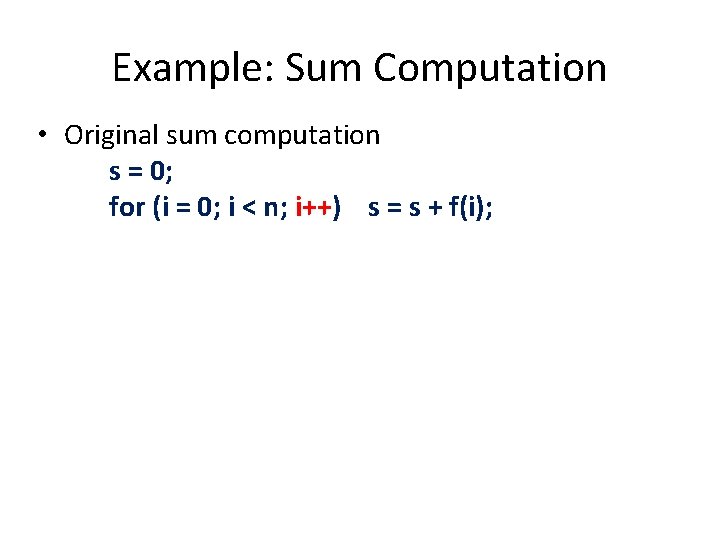

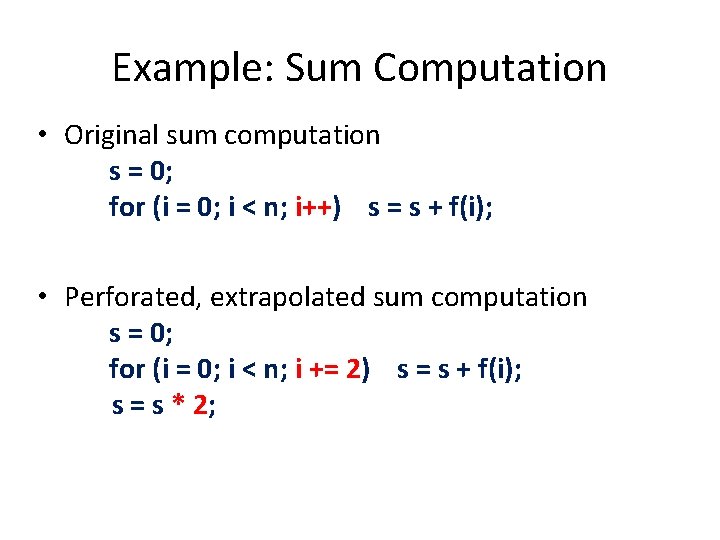

Example: Sum Computation

• Original sum computation:

    s = 0;
    for (i = 0; i < n; i++) s = s + f(i);

• Perforated, extrapolated sum computation:

    s = 0;
    for (i = 0; i < n; i += 2) s = s + f(i);
    s = s * 2;
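The two computations above can be sketched as a pair of C functions. This is a minimal illustration, not code from the benchmarks: the names `term_fn`, `sum_original`, `sum_perforated`, `constant_term`, and `index_term` are hypothetical and chosen only for this example.

```c
#include <stddef.h>

/* Hypothetical signature for the per-iteration term f(i). */
typedef double (*term_fn)(size_t i);

/* Original computation: sums f(i) for every i in [0, n). */
double sum_original(term_fn f, size_t n) {
    double s = 0.0;
    for (size_t i = 0; i < n; i++)
        s += f(i);
    return s;
}

/* Perforated computation: visits every second iteration, then
 * extrapolates by doubling the partial sum. */
double sum_perforated(term_fn f, size_t n) {
    double s = 0.0;
    for (size_t i = 0; i < n; i += 2)
        s += f(i);
    return s * 2.0;
}

/* Illustrative terms only: a constant term and a linearly growing term. */
double constant_term(size_t i) { (void)i; return 1.5; }
double index_term(size_t i) { return (double)i; }
```

With `constant_term` and n = 8 both versions return 12. With `index_term` and n = 8 the original returns 28 and the perforated version returns 24, so the two results differ by 4.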

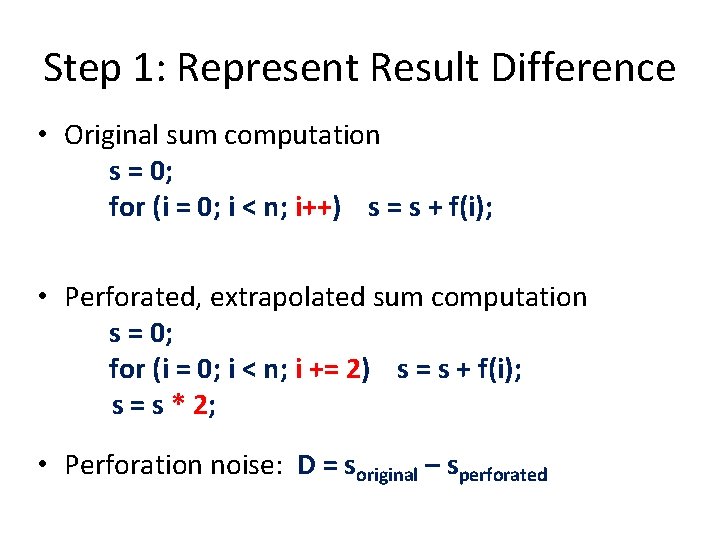

Step 1: Represent Result Difference
• Take the original sum computation and the perforated, extrapolated sum computation
• Perforation noise: $D = s_{\text{original}} - s_{\text{perforated}}$

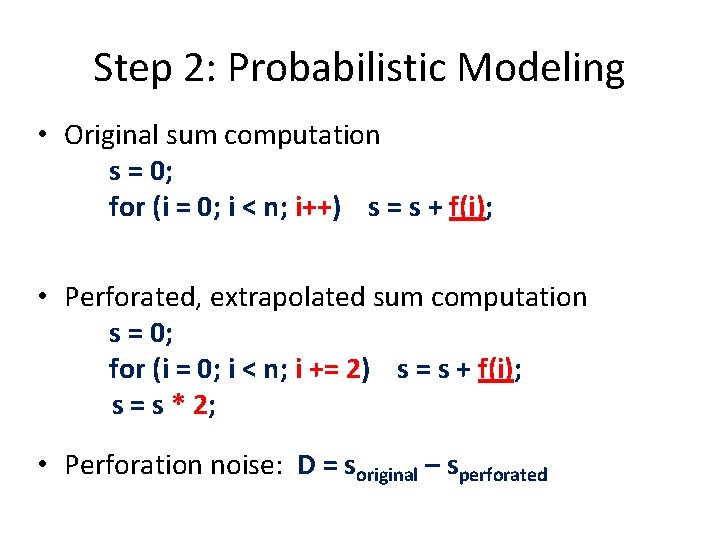

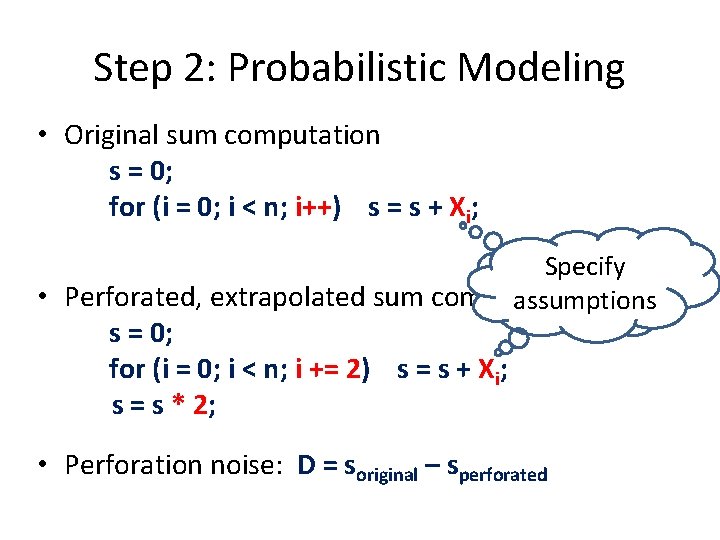

Step 2: Probabilistic Modeling

• Original sum computation, with each value f(i) modeled as a random variable X_i:

    s = 0;
    for (i = 0; i < n; i++) s = s + X_i;

• Perforated, extrapolated sum computation:

    s = 0;
    for (i = 0; i < n; i += 2) s = s + X_i;
    s = s * 2;

• Specify assumptions on the X_i
• Perforation noise: $D = s_{\text{original}} - s_{\text{perforated}}$

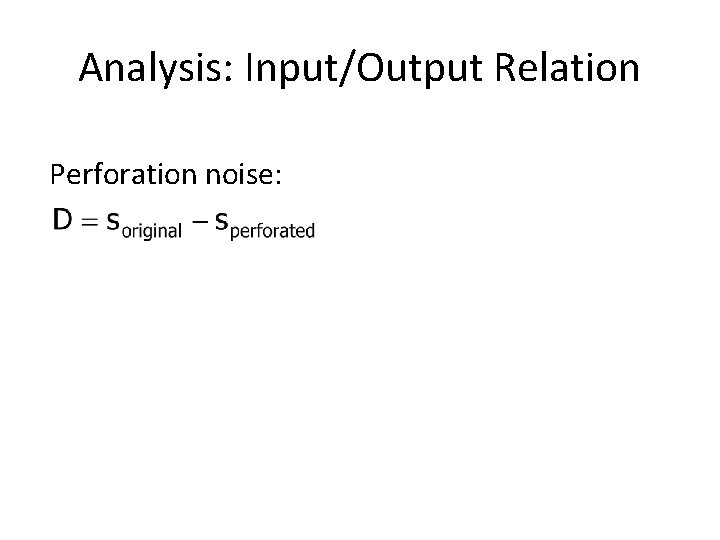

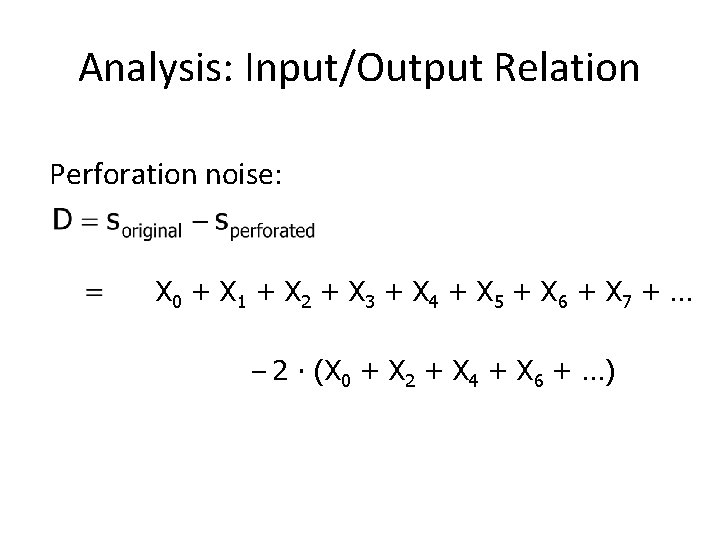

Analysis: Input/Output Relation

Perforation noise:

$$D = (X_0 + X_1 + X_2 + X_3 + X_4 + X_5 + X_6 + X_7 + \dots) - 2 \cdot (X_0 + X_2 + X_4 + X_6 + \dots)$$

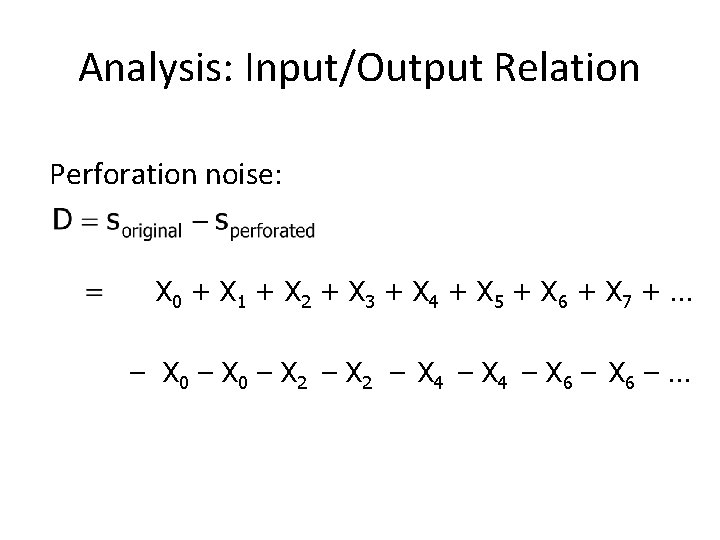

Analysis: Input/Output Relation

Perforation noise, after one copy of each even-indexed term cancels:

$$D = (X_1 + X_3 + X_5 + X_7 + \dots) - (X_0 + X_2 + X_4 + X_6 + \dots)$$
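The cancellation can be checked numerically. The sketch below computes the noise both ways on a small integer array; the function names and the `sample_inputs` array are hypothetical and serve only as an illustration.

```c
#include <stddef.h>

/* Perforation noise computed directly: D = s_original - s_perforated. */
long noise_direct(const long *x, size_t n) {
    long s_original = 0, s_perforated = 0;
    for (size_t i = 0; i < n; i++)
        s_original += x[i];
    for (size_t i = 0; i < n; i += 2)
        s_perforated += x[i];
    s_perforated *= 2;
    return s_original - s_perforated;
}

/* Same noise in simplified form: odd-indexed terms minus even-indexed terms. */
long noise_simplified(const long *x, size_t n) {
    long d = 0;
    for (size_t i = 0; i < n; i++)
        d += (i % 2 == 1) ? x[i] : -x[i];
    return d;
}

/* Arbitrary sample inputs, for illustration only. */
static const long sample_inputs[8] = {3, 1, 4, 1, 5, 9, 2, 6};
```

For `sample_inputs` the odd-indexed terms sum to 17 and the even-indexed terms to 14, so both functions return 3.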

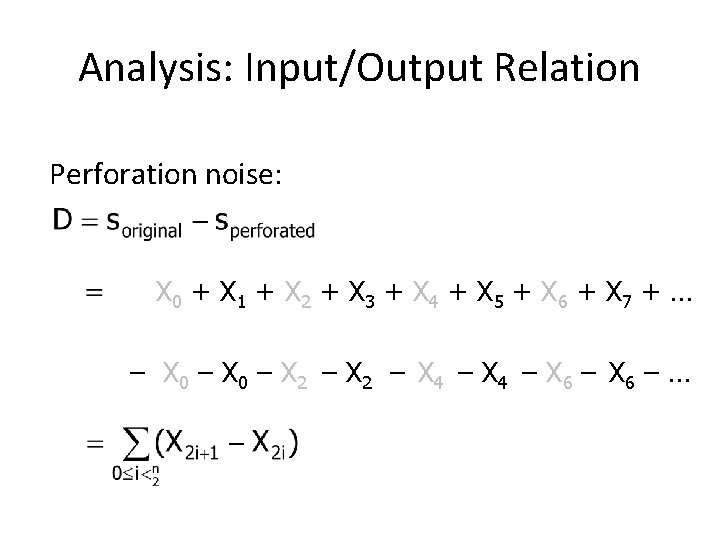

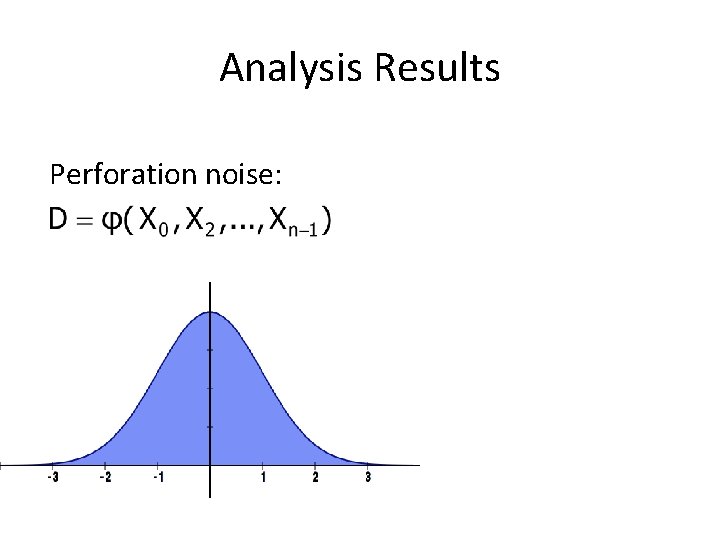

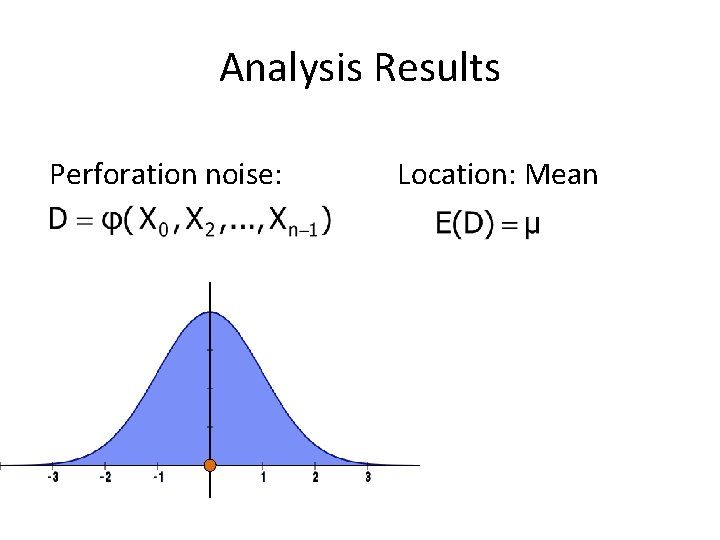

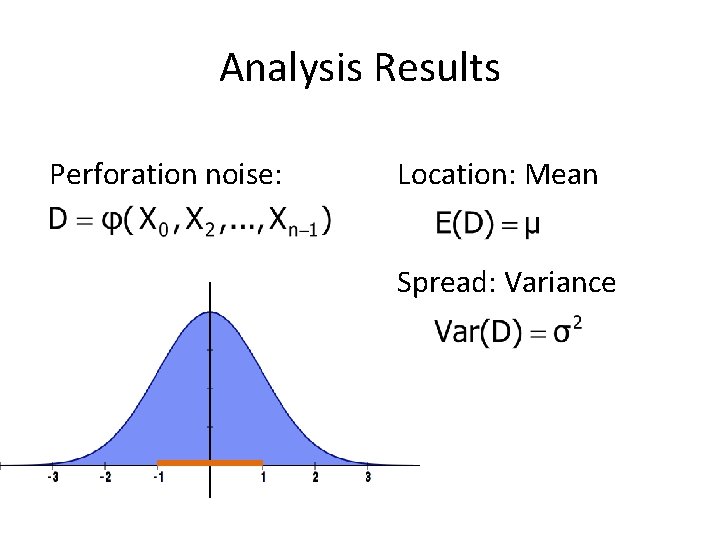

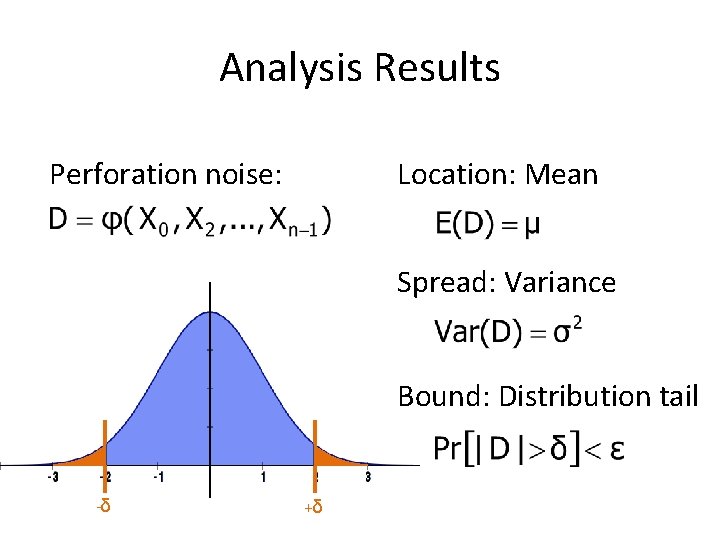

Analysis Results

Perforation noise is characterized by:
• Location: mean
• Spread: variance
• Bound: distribution tail beyond −δ and +δ

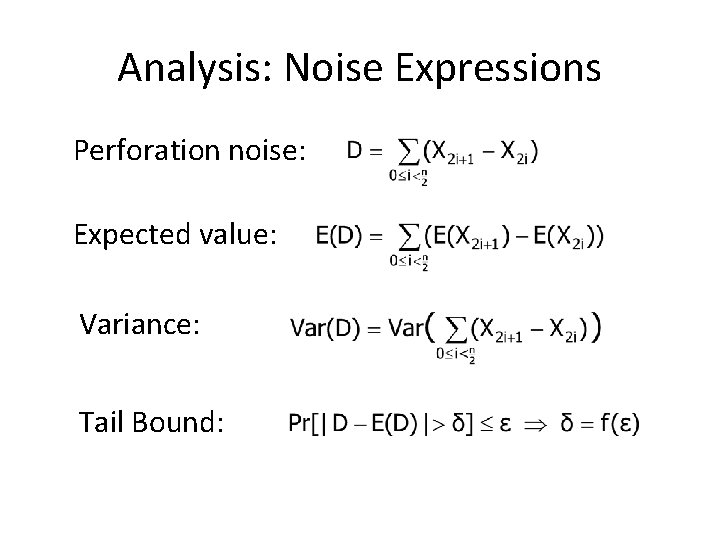

Analysis: Noise Expressions
• Perforation noise
• Expected value
• Variance
• Tail bound
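As a sketch of what such expressions can look like for the sum example, write the noise as the odd-indexed terms minus the even-indexed terms. The expected value and variance below follow from linearity of expectation and the variance of a linear combination. The tail bound shown is Chebyshev's inequality, a generic choice assumed here for illustration, which may differ from the bound used in the talk.

```latex
\begin{align*}
D &= \sum_{i \text{ odd}} X_i - \sum_{i \text{ even}} X_i \\
\mathbb{E}[D] &= \sum_{i \text{ odd}} \mathbb{E}[X_i] - \sum_{i \text{ even}} \mathbb{E}[X_i] \\
\operatorname{Var}(D) &= \sum_{i} \operatorname{Var}(X_i)
    + 2 \sum_{i < j} (-1)^{i+j} \operatorname{Cov}(X_i, X_j) \\
\Pr\bigl(|D - \mathbb{E}[D]| \ge \delta\bigr) &\le \frac{\operatorname{Var}(D)}{\delta^2}
\end{align*}
```

The sign $(-1)^{i+j}$ is positive when $i$ and $j$ have the same parity and negative otherwise, because odd-indexed terms enter $D$ with coefficient $+1$ and even-indexed terms with coefficient $-1$.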

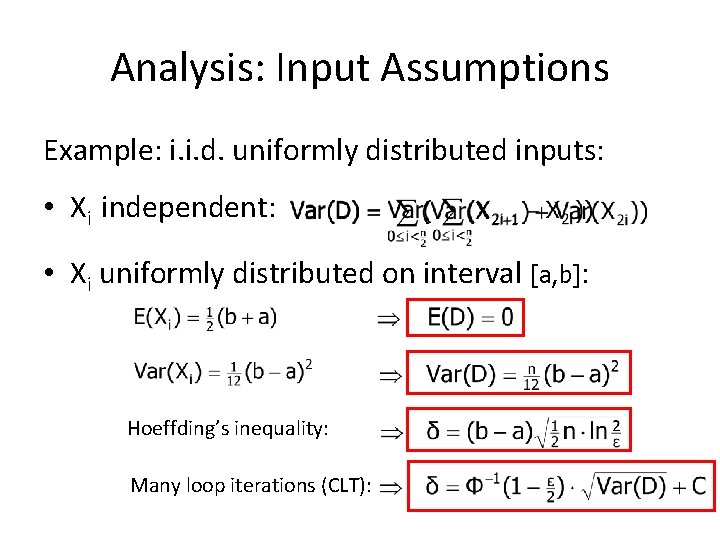

Analysis: Input Assumptions

Example: i.i.d. uniformly distributed inputs
• $X_i$ independent
• $X_i$ uniformly distributed on the interval $[a, b]$

Resulting bounds:
• Hoeffding's inequality
• Many loop iterations: central limit theorem (CLT)
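Under these assumptions the two bounds can be worked out as follows. This is a sketch that additionally assumes $n$ is even, so that the noise $D = \sum_{i \text{ odd}} X_i - \sum_{i \text{ even}} X_i$ has mean zero; the symbol $\varepsilon$ for the allowed failure probability is introduced here for illustration.

```latex
\begin{align*}
% Hoeffding: D is a sum of n independent terms, each confined to an
% interval of length (b - a).
\Pr\bigl(|D| \ge \delta\bigr) &\le 2 \exp\!\left( -\frac{2\delta^2}{n (b-a)^2} \right)
\quad\Longrightarrow\quad
\delta_\varepsilon = (b-a) \sqrt{\frac{n \ln(2/\varepsilon)}{2}} \\
% CLT: each X_i has variance (b - a)^2 / 12.
\operatorname{Var}(D) &= \frac{n (b-a)^2}{12},
\qquad
D \approx \mathcal{N}\!\left(0, \frac{n (b-a)^2}{12}\right) \text{ for large } n
\end{align*}
```

The Hoeffding bound holds for every $n$, while the normal approximation is only asymptotic but gives a tighter interval when many iterations are executed.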

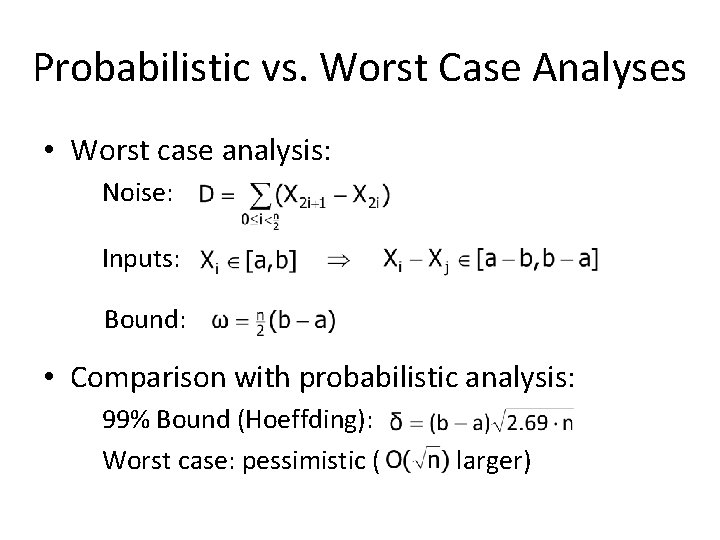

Probabilistic vs. Worst-Case Analyses
• Worst-case analysis: noise, inputs, bound
• Comparison with probabilistic analysis: 99% bound (Hoeffding)
• Worst case: pessimistic (larger)
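A small worked comparison makes the gap concrete. It assumes the sum example with $n$ even and inputs in $[a, b]$, where the noise is a sum of $n/2$ differences $X_{2k+1} - X_{2k}$; the numbers $n = 1000$, $[a, b] = [0, 1]$ are chosen only for illustration.

```latex
\begin{align*}
\text{Worst case:}\quad
|D| &\le \frac{n}{2}\,(b-a) = 500 \\
\text{99\% Hoeffding bound } (\varepsilon = 0.01)\text{:}\quad
|D| &\le (b-a)\sqrt{\frac{n \ln(2/\varepsilon)}{2}}
      = \sqrt{500 \cdot \ln 200} \approx 51.5
\end{align*}
```

The worst-case bound grows linearly in $n$, while the probabilistic bound grows as $\sqrt{n}$, so the gap widens as the loop executes more iterations.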

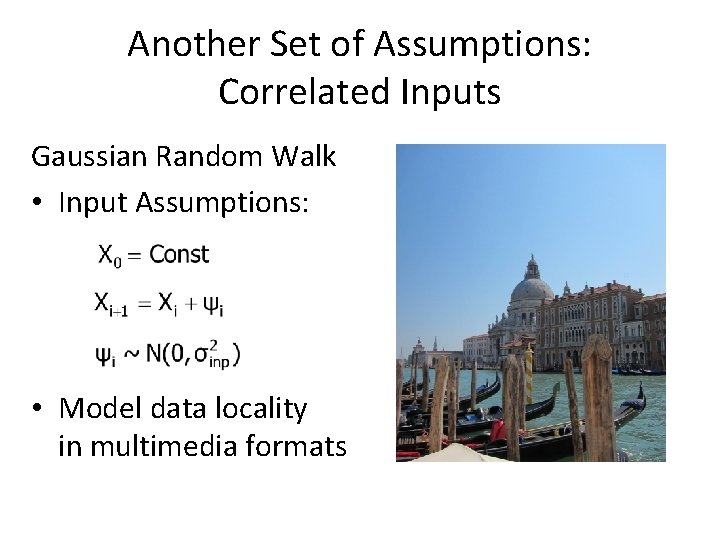

Another Set of Assumptions: Correlated Inputs
• Input assumptions: Gaussian random walk
• Models data locality in multimedia formats

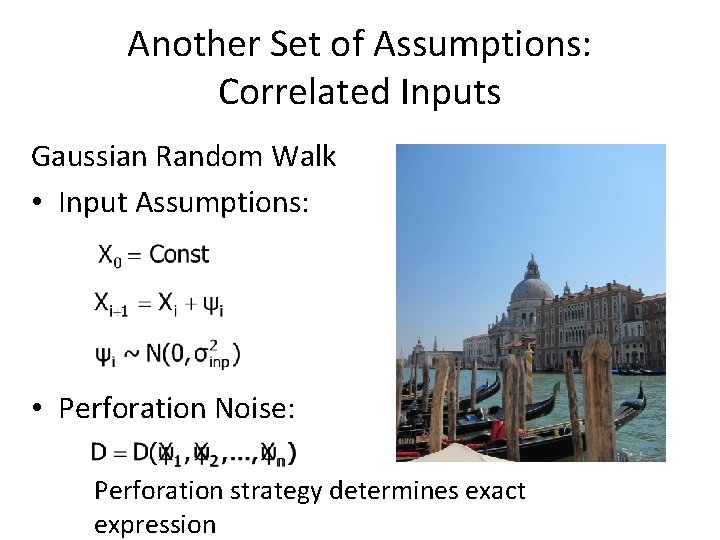

Another Set of Assumptions: Correlated Inputs
• Input assumptions: Gaussian random walk
• Perforation noise: the perforation strategy determines the exact expression
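One common way to write a Gaussian random walk is shown below. The exact formulation used in the talk is not recoverable from the extracted text, so the increment symbol $\varepsilon_i$ and the variance $\sigma^2$ are assumptions made for this sketch.

```latex
\begin{align*}
X_{i+1} &= X_i + \varepsilon_i,
\qquad \varepsilon_i \sim \mathcal{N}(0, \sigma^2) \text{ independent} \\
X_j - X_i &= \sum_{k=i}^{j-1} \varepsilon_k \sim \mathcal{N}\bigl(0, (j-i)\,\sigma^2\bigr)
\quad \text{for } i < j
\end{align*}
```

Nearby inputs differ by little and distant inputs may differ by a lot, which is why the choice of which iterations to skip changes the noise expression.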

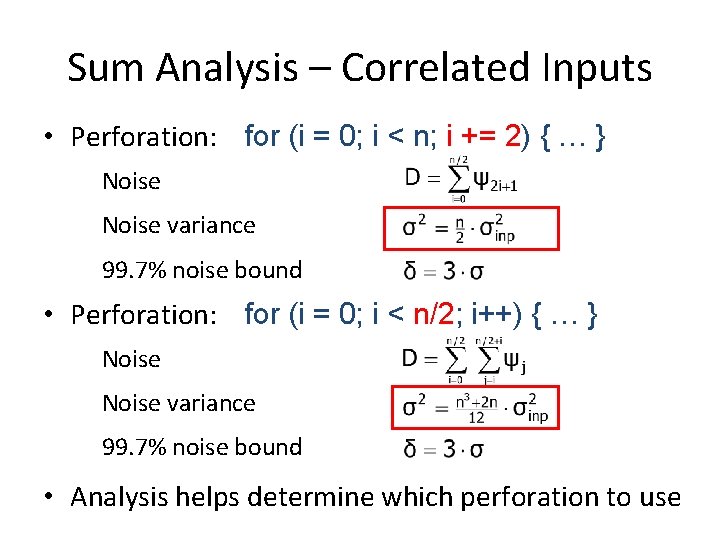

Sum Analysis: Correlated Inputs

• Perforation that skips every second iteration (noise variance and 99.7% noise bound):

    for (i = 0; i < n; i += 2) { … }

• Perforation that truncates the loop to its first half (noise variance and 99.7% noise bound):

    for (i = 0; i < n/2; i++) { … }

• The analysis helps determine which perforation to use
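The difference between the two strategies can be sketched numerically. The code below assumes a random walk with independent increments e[i] of variance `sigma2` (X[i+1] = X[i] + e[i]), an even n, and extrapolation by doubling in both strategies; these assumptions and the function names are illustrative and are not taken from the slides.

```c
#include <stddef.h>

/* Assumed model: X[i+1] = X[i] + e[i], with independent increments e[i]
 * of variance sigma2. The noise D is a linear combination of the e[j],
 * so Var(D) = sigma2 * (sum of squared coefficients). */

/* Interleaved perforation (i += 2), n even:
 * D = sum over k of (X[2k+1] - X[2k]) = sum over k of e[2k],
 * so n/2 increments appear, each with coefficient 1. */
double noise_variance_interleaved(size_t n, double sigma2) {
    return sigma2 * (double)(n / 2);
}

/* Truncation perforation (i < n/2), n even:
 * D = sum over i < n/2 of (X[i + n/2] - X[i]). The coefficient of e[j]
 * is the number of those differences that span increment j. */
double noise_variance_truncated(size_t n, double sigma2) {
    size_t m = n / 2;
    double sum_sq = 0.0;
    for (size_t j = 0; j + 1 < n; j++) {
        size_t coeff = (j < m) ? j + 1 : n - 1 - j;
        sum_sq += (double)(coeff * coeff);
    }
    return sigma2 * sum_sq;
}
```

For n = 8 and unit increment variance, the interleaved strategy gives variance 4 and the truncated strategy gives 44. Since D is Gaussian under this model, three standard deviations give a bound that holds with probability of about 99.7%, so the interleaved strategy has the much tighter bound here.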

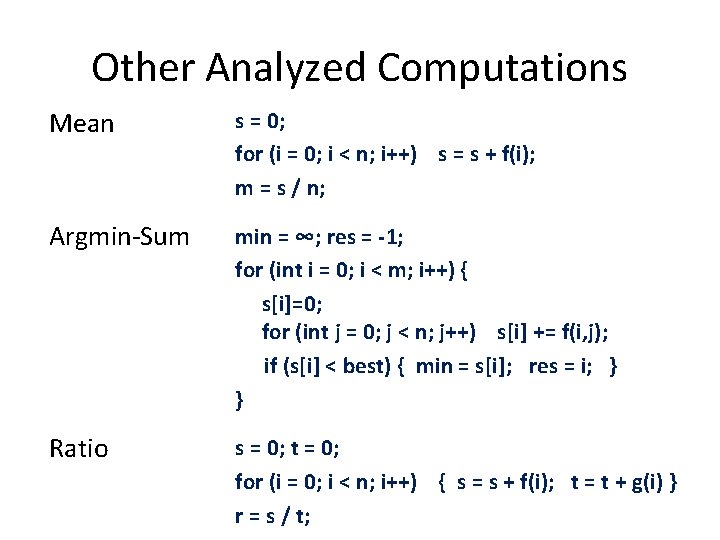

Other Analyzed Computations (original)

Mean:

    s = 0;
    for (i = 0; i < n; i++) s = s + f(i);
    m = s / n;

Argmin-Sum:

    min = ∞; res = -1;
    for (int i = 0; i < m; i++) {
      s[i] = 0;
      for (int j = 0; j < n; j++) s[i] += f(i, j);
      if (s[i] < min) { min = s[i]; res = i; }
    }

Ratio:

    s = 0; t = 0;
    for (i = 0; i < n; i++) { s = s + f(i); t = t + g(i); }
    r = s / t;

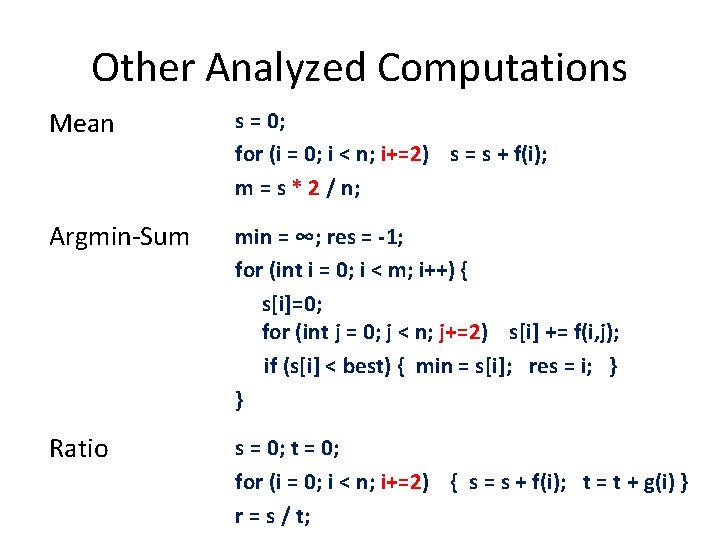

Other Analyzed Computations (perforated)

Mean:

    s = 0;
    for (i = 0; i < n; i += 2) s = s + f(i);
    m = s * 2 / n;

Argmin-Sum:

    min = ∞; res = -1;
    for (int i = 0; i < m; i++) {
      s[i] = 0;
      for (int j = 0; j < n; j += 2) s[i] += f(i, j);
      if (s[i] < min) { min = s[i]; res = i; }
    }

Ratio:

    s = 0; t = 0;
    for (i = 0; i < n; i += 2) { s = s + f(i); t = t + g(i); }
    r = s / t;

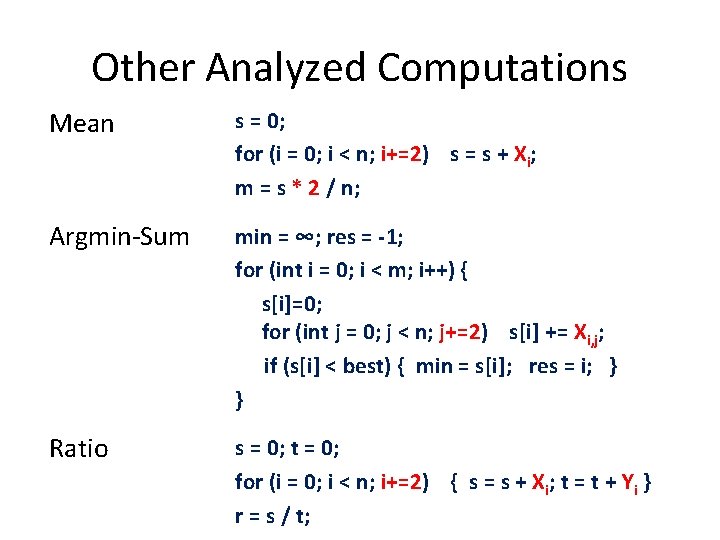

Other Analyzed Computations (perforated, with inputs modeled as random variables)

Mean:

    s = 0;
    for (i = 0; i < n; i += 2) s = s + X_i;
    m = s * 2 / n;

Argmin-Sum:

    min = ∞; res = -1;
    for (int i = 0; i < m; i++) {
      s[i] = 0;
      for (int j = 0; j < n; j += 2) s[i] += X_{i,j};
      if (s[i] < min) { min = s[i]; res = i; }
    }

Ratio:

    s = 0; t = 0;
    for (i = 0; i < n; i += 2) { s = s + X_i; t = t + Y_i; }
    r = s / t;
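The Argmin-Sum pattern can be sketched as a C function with the perforation stride as a parameter. The names `term2_fn`, `argmin_sum`, and `sample_term` are hypothetical, and the sample values are chosen only to show that perforation can change the selected index. A stride of at least 1 is assumed.

```c
#include <stddef.h>
#include <math.h>

/* Hypothetical signature for the inner term f(i, j). */
typedef double (*term2_fn)(size_t i, size_t j);

/* Argmin-Sum with the inner loop perforated by `stride` (stride >= 1):
 * returns the index i in [0, m) whose partial sum over j is smallest,
 * or -1 if no sum is below infinity. stride == 1 is the original loop. */
long argmin_sum(term2_fn f, size_t m, size_t n, size_t stride) {
    double min = INFINITY;
    long res = -1;
    for (size_t i = 0; i < m; i++) {
        double s = 0.0;
        for (size_t j = 0; j < n; j += stride)
            s += f(i, j);
        if (s < min) { min = s; res = (long)i; }
    }
    return res;
}

/* Illustrative term: row 1 is cheap at even j and expensive at odd j. */
double sample_term(size_t i, size_t j) {
    if (i == 0) return 3.0;
    if (i == 1) return (j % 2 == 0) ? 1.0 : 10.0;
    return 2.0;
}
```

With m = 3 and n = 4, the original loop selects index 2 (sums 12, 22, 8), while the perforated loop selects index 1 (partial sums 6, 2, 4). No extrapolation step is needed in this pattern, because scaling every partial sum by the same factor does not change which one is smallest.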

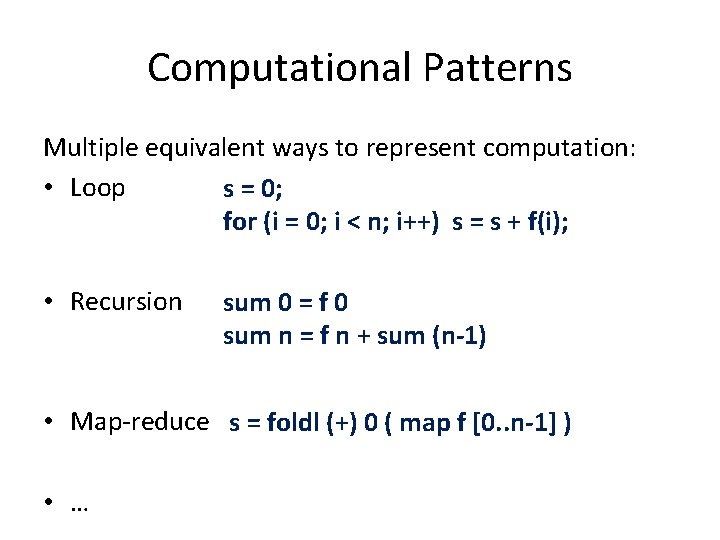

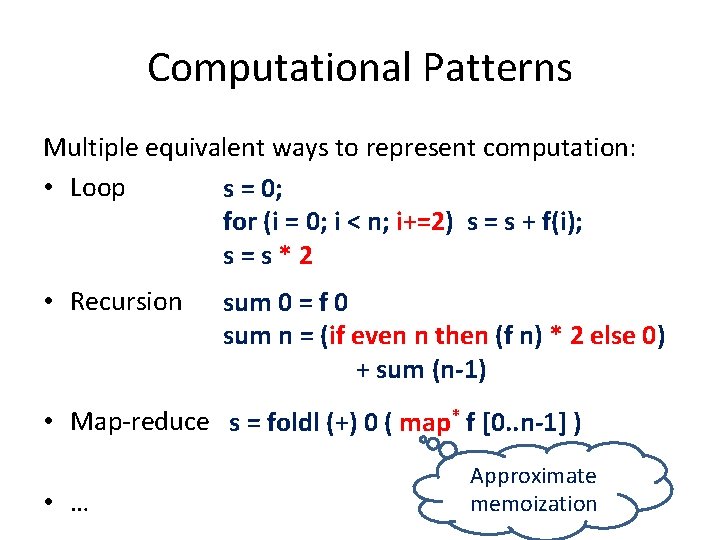

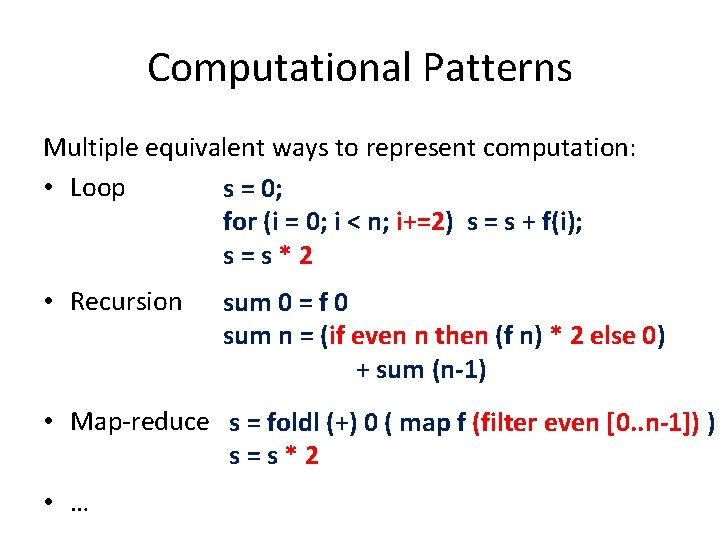

Computational Patterns

Multiple equivalent ways to represent the computation:

• Loop:

    s = 0; for (i = 0; i < n; i++) s = s + f(i);

• Recursion:

    sum 0 = f 0
    sum n = f n + sum (n-1)

• Map-reduce:

    s = foldl (+) 0 (map f [0..n-1])

• …

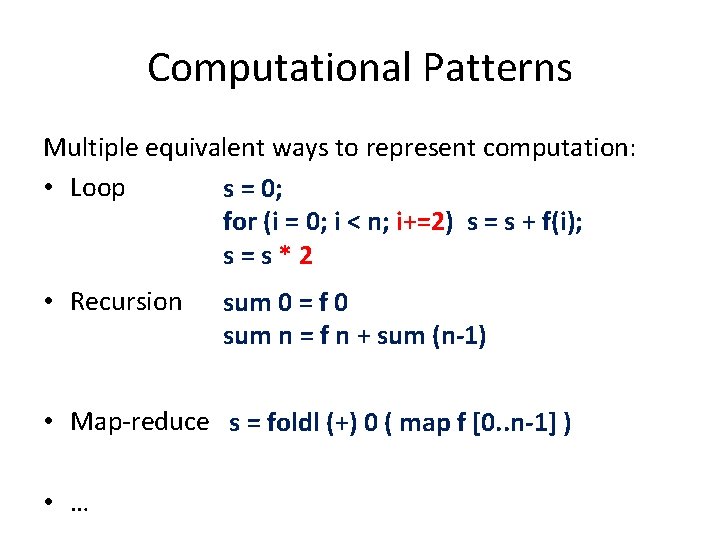

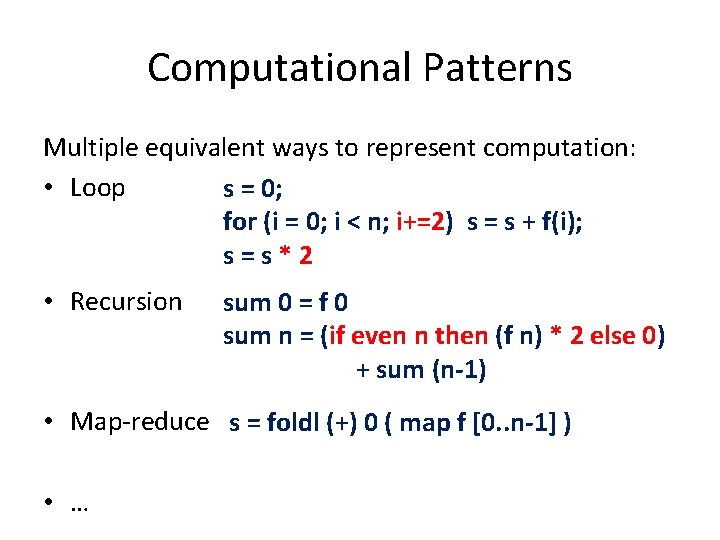

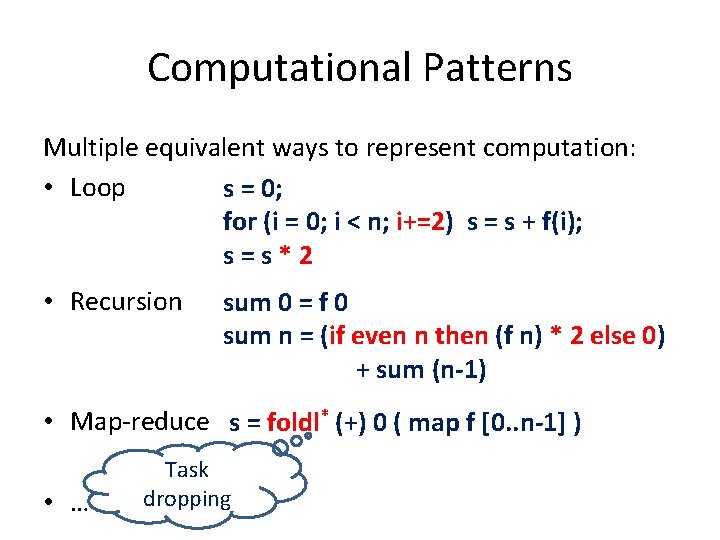

Computational Patterns

The transformed versions of each representation:

• Loop, perforated and extrapolated:

    s = 0; for (i = 0; i < n; i += 2) s = s + f(i); s = s * 2;

• Recursion:

    sum 0 = (f 0) * 2
    sum n = (if even n then (f n) * 2 else 0) + sum (n-1)

• Map-reduce with task dropping (foldl* in place of foldl):

    s = foldl* (+) 0 (map f [0..n-1])

• Map-reduce with approximate memoization (map* in place of map):

    s = foldl (+) 0 (map* f [0..n-1])

• Map-reduce, perforated and extrapolated:

    s = foldl (+) 0 (map f (filter even [0..n-1])); s = s * 2

• …

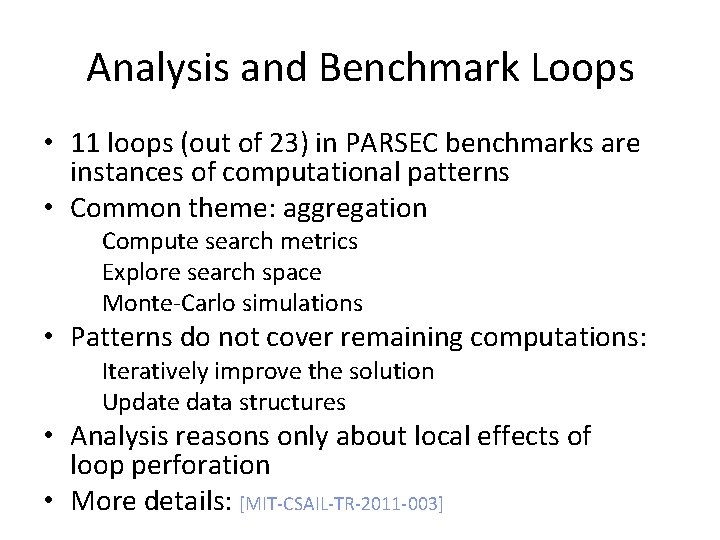

Analysis and Benchmark Loops
• 11 loops (out of 23) in the PARSEC benchmarks are instances of the computational patterns
• Common theme: aggregation
  - Compute search metrics
  - Explore search space
  - Monte-Carlo simulations
• The patterns do not cover the remaining computations:
  - Iteratively improve the solution
  - Update data structures
• The analysis reasons only about local effects of loop perforation
• More details: [MIT-CSAIL-TR-2011-003]

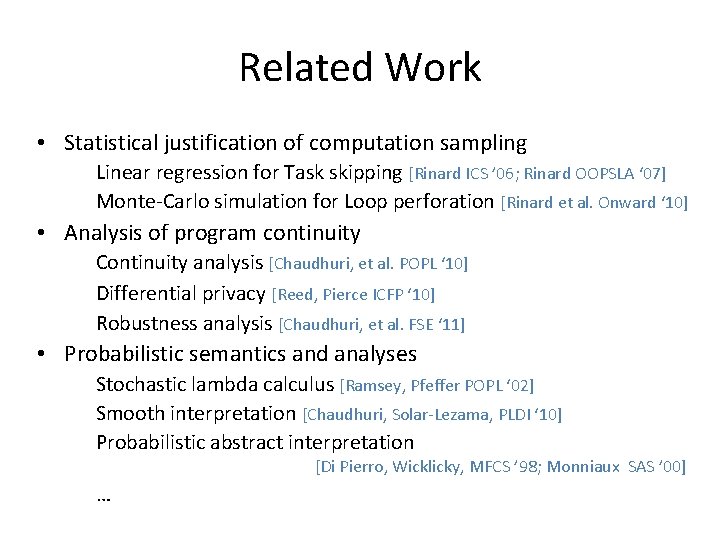

Related Work
• Statistical justification of computation sampling
  - Linear regression for task skipping [Rinard ICS ’06; Rinard OOPSLA ’07]
  - Monte-Carlo simulation for loop perforation [Rinard et al. Onward ’10]
• Analysis of program continuity
  - Continuity analysis [Chaudhuri et al. POPL ’10]
  - Differential privacy [Reed, Pierce ICFP ’10]
  - Robustness analysis [Chaudhuri et al. FSE ’11]
• Probabilistic semantics and analyses
  - Stochastic lambda calculus [Ramsey, Pfeffer POPL ’02]
  - Smooth interpretation [Chaudhuri, Solar-Lezama PLDI ’10]
  - Probabilistic abstract interpretation [Di Pierro, Wiklicky MFCS ’98; Monniaux SAS ’00]
  - …

Conclusion
• Focus of the analysis
  - Transformations that change program semantics
  - Computations that trade off accuracy for performance
• New foundation for justifying transformations
  - Probabilistic modeling of uncertainty
  - Probabilistic bounds on the result difference
  - Pattern-based analysis
• Benefits of the analysis
  - Understand the effect of a transformation
  - Predict the behavior of a computation on a wide set of inputs

Thanks!

Related publications:
• S. Sidiroglou, S. Misailovic, H. Hoffmann, M. Rinard. "Managing Performance vs. Accuracy Trade-offs With Loop Perforation" [ESEC/FSE 2011]
• S. Misailovic, S. Sidiroglou, H. Hoffmann, M. Rinard. "Quality of Service Profiling" [ICSE 2010]
• S. Misailovic, D. Roy, M. Rinard. "Probabilistic and Statistical Analysis of Perforated Patterns" [MIT-CSAIL-TR-2011-003]

Website: http://groups.csail.mit.edu/cag/codeperf/
