
Probabilistic Topic Models for Text Mining. ChengXiang Zhai (翟成祥), Department of Computer Science, Graduate School of Library & Information Science, Institute for Genomic Biology, Statistics, University of Illinois, Urbana-Champaign. http://www-faculty.cs.uiuc.edu/~czhai, czhai@cs.uiuc.edu. 2008 © ChengXiang Zhai, China-US-France Summer School, Lotus Hill Inst., 2008

What Is Text Mining? “The objective of Text Mining is to exploit information contained in textual documents in various ways, including …discovery of patterns and trends in data, associations among entities, predictive rules, etc.” (Grobelnik et al., 2001) “Another way to view text data mining is as a process of exploratory data analysis that leads to heretofore unknown information, or to answers for questions for which the answer is not currently known.” (Hearst, 1999) (Slide from Rebecca Hwa’s “Intro to Text Mining”)

Two Different Views of Text Mining • Data Mining View (shallow mining): Explore patterns in textual data – Find latent topics – Find topical trends – Find outliers and other hidden patterns • Natural Language Processing View (deep mining): Make inferences based on partial understanding of natural language text – Information extraction – Question answering

Applications of Text Mining • Direct applications: Go beyond search to find knowledge – Question-driven (bioinformatics, business intelligence, etc.): We have specific questions; how can we exploit data mining to answer the questions? – Data-driven (WWW, literature, email, customer reviews, etc.): We have a lot of data; what can we do with it? • Indirect applications – Assist information access (e.g., discover latent topics to better summarize search results) – Assist information organization (e.g., discover hidden structures)

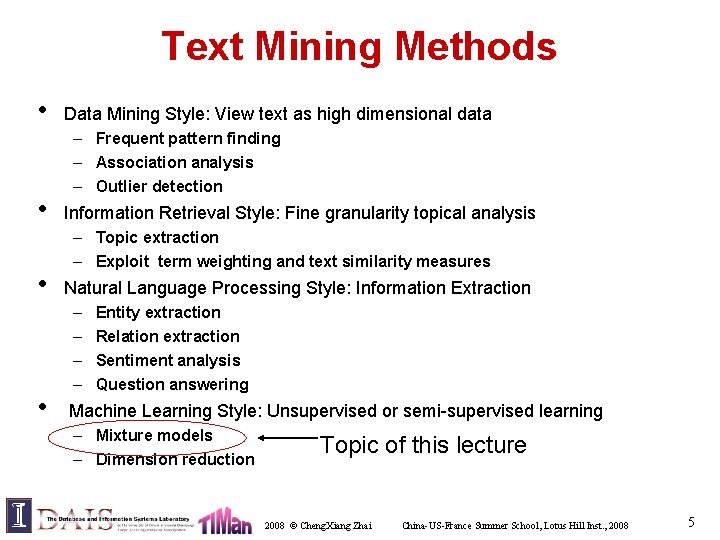

Text Mining Methods • Data Mining Style: View text as high-dimensional data – Frequent pattern finding – Association analysis – Outlier detection • Information Retrieval Style: Fine-granularity topical analysis – Topic extraction – Exploit term weighting and text similarity measures • Natural Language Processing Style: Information extraction – Entity extraction – Relation extraction – Sentiment analysis – Question answering • Machine Learning Style (topic of this lecture): Unsupervised or semi-supervised learning – Mixture models – Dimension reduction


Outline • The Basic Topic Models: – Probabilistic Latent Semantic Analysis (PLSA) [Hofmann 99] – Latent Dirichlet Allocation (LDA) [Blei et al. 02] • Extensions – Contextual Probabilistic Latent Semantic Analysis (CPLSA) [Mei & Zhai 06]

Basic Topic Model: PLSA

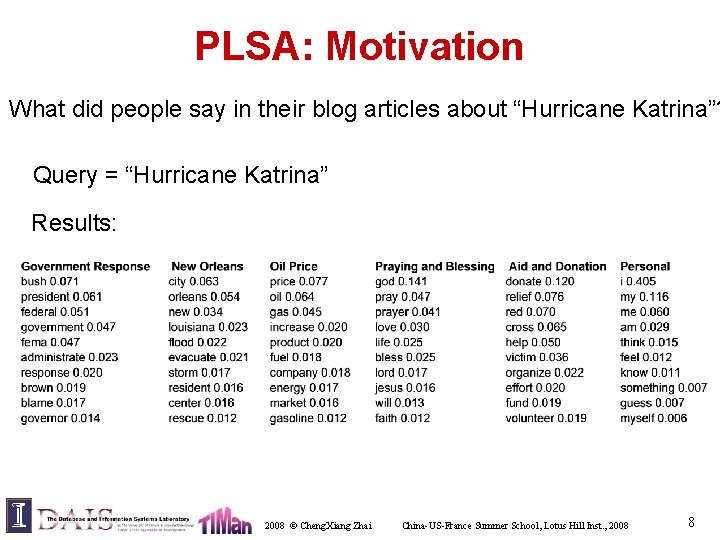

PLSA: Motivation What did people say in their blog articles about “Hurricane Katrina”? Query = “Hurricane Katrina” Results:


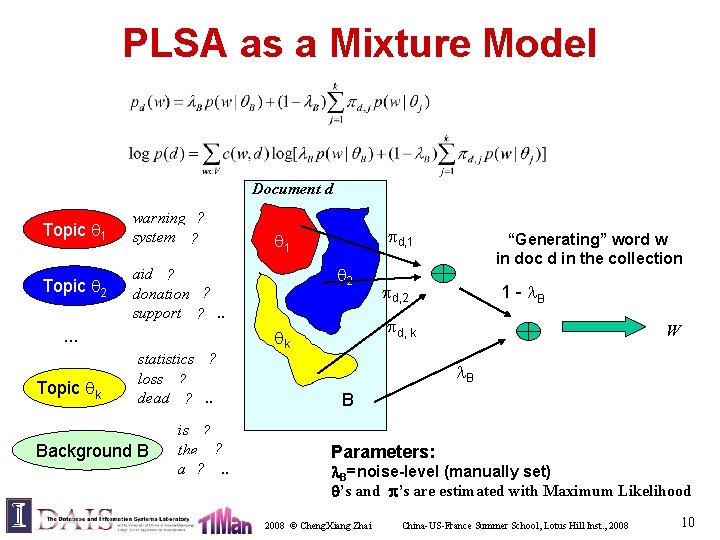

Probabilistic Latent Semantic Analysis/Indexing (PLSA/PLSI) [Hofmann 99] • Mix k multinomial distributions to generate a document • Each document has a potentially different set of mixing weights, which captures the topic coverage • When generating words in a document, each word may be generated using a DIFFERENT multinomial distribution (this is in contrast with the document clustering model where, once a multinomial distribution is chosen, all the words in a document would be generated using the same model) • We may add a background distribution to “attract” background words

PLSA as a Mixture Model: “Generating” word w in doc d in the collection. Topic 1 (θ_1): warning 0.3, system 0.2, … Topic 2 (θ_2): aid 0.1, donation 0.05, support 0.02, … … Topic k (θ_k): statistics 0.2, loss 0.1, dead 0.05, … Background (θ_B): is 0.05, the 0.04, a 0.03, … With probability λ_B, w is drawn from the background θ_B; with probability 1 − λ_B, a topic θ_j is chosen according to document d’s mixing weights π_{d,1}, …, π_{d,k} and w is drawn from θ_j. Parameters: λ_B = noise level (manually set); the θ’s and π’s are estimated with Maximum Likelihood.
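Written as formulas (a reconstruction from the slide’s labels, using its symbols: λ_B is the background noise level, π_{d,j} the weight of topic θ_j in document d, θ_B the background model, and c(w, d) the count of word w in document d), the mixture gives the word probability and the collection log-likelihood that Maximum Likelihood estimation maximizes, subject to Σ_j π_{d,j} = 1:

$$p_d(w) = \lambda_B\, p(w \mid \theta_B) + (1 - \lambda_B) \sum_{j=1}^{k} \pi_{d,j}\, p(w \mid \theta_j)$$

$$\log p(C \mid \Lambda) = \sum_{d \in C} \sum_{w \in V} c(w, d) \log \Big[ \lambda_B\, p(w \mid \theta_B) + (1 - \lambda_B) \sum_{j=1}^{k} \pi_{d,j}\, p(w \mid \theta_j) \Big]$$

Here Λ denotes all estimated parameters, {θ_j} and {π_{d,j}}; λ_B and θ_B are held fixed.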

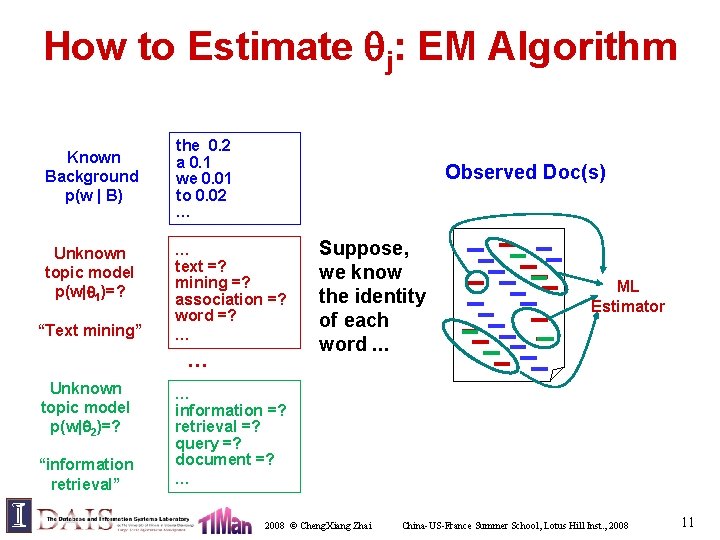

How to Estimate θ_j: EM Algorithm. Known background p(w|θ_B): the 0.2, a 0.1, we 0.01, to 0.02, … Unknown topic model p(w|θ_1) = ? (“text mining”): text = ?, mining = ?, association = ?, word = ?, … Unknown topic model p(w|θ_2) = ? (“information retrieval”): information = ?, retrieval = ?, query = ?, document = ?, … Observed doc(s): suppose we knew the identity (generating model) of each word... then we could simply apply the ML estimator.

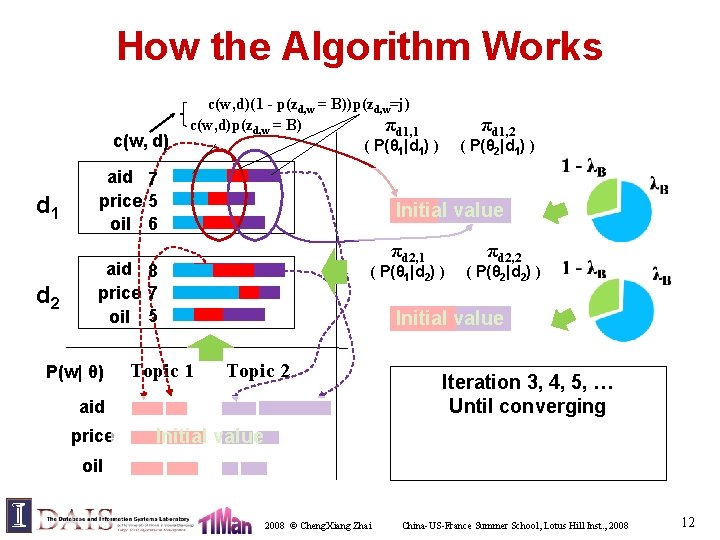

How the Algorithm Works. Toy example: word counts c(w, d) for d1: aid 7, price 5, oil 6; d2: aid 8, price 7, oil 5. Unknowns: π_{d1,1} (P(θ_1|d1)), π_{d1,2} (P(θ_2|d1)), π_{d2,1} (P(θ_1|d2)), π_{d2,2} (P(θ_2|d2)), and P(w|θ_j) for aid, price, oil in Topic 1 and Topic 2. • Initializing: set π_{d,j} and P(w|θ_j) to random values • Iteration 1, E-step: split the word counts among the topics (by computing the z’s): c(w, d)p(z_{d,w} = B) goes to the background and c(w, d)(1 − p(z_{d,w} = B))p(z_{d,w} = j) goes to topic j • Iteration 1, M-step: re-estimate π_{d,j} and P(w|θ_j) by adding and normalizing the split word counts • Iterations 2, 3, 4, 5, …: repeat until converging

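Parameter Estimation

E-step: is word w in doc d generated from cluster j or from the background? (application of Bayes’ rule)

$$p(z_{d,w} = j) = \frac{\pi_{d,j}^{(n)}\, p^{(n)}(w \mid \theta_j)}{\sum_{j'=1}^{k} \pi_{d,j'}^{(n)}\, p^{(n)}(w \mid \theta_{j'})}$$

$$p(z_{d,w} = B) = \frac{\lambda_B\, p(w \mid \theta_B)}{\lambda_B\, p(w \mid \theta_B) + (1 - \lambda_B) \sum_{j=1}^{k} \pi_{d,j}^{(n)}\, p^{(n)}(w \mid \theta_j)}$$

M-step: re-estimate the mixing weights and the cluster LMs from the fractional counts c(w,d)(1 − p(z_{d,w} = B)) p(z_{d,w} = j), which contribute to using cluster j in generating d and to generating w from cluster j:

$$\pi_{d,j}^{(n+1)} = \frac{\sum_{w \in V} c(w,d)\,(1 - p(z_{d,w} = B))\, p(z_{d,w} = j)}{\sum_{j'=1}^{k} \sum_{w \in V} c(w,d)\,(1 - p(z_{d,w} = B))\, p(z_{d,w} = j')}$$

$$p^{(n+1)}(w \mid \theta_j) = \frac{\sum_{i=1}^{m} \sum_{d \in C_i} c(w,d)\,(1 - p(z_{d,w} = B))\, p(z_{d,w} = j)}{\sum_{w' \in V} \sum_{i=1}^{m} \sum_{d \in C_i} c(w',d)\,(1 - p(z_{d,w'} = B))\, p(z_{d,w'} = j)}$$

The sums run over all docs in multiple collections C_1, …, C_m (m = 1 if there is only one collection).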
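The E-step and M-step on this slide can be sketched in Python as below. This is a minimal illustration under stated assumptions, not the lecture’s implementation: one collection (m = 1), a fixed and known background model, a manually set λ_B, and random initialization of the topics. The function name `plsa_em` and the background probabilities in the usage lines are hypothetical; the word counts are the toy counts from the “How the Algorithm Works” slide.

```python
import math
import random


def plsa_em(docs, vocab, k, p_bg, lambda_b=0.5, iters=50, seed=0):
    """EM for PLSA with a fixed background model (illustrative sketch).

    docs:     list of dicts, one per document, mapping word -> c(w, d)
    vocab:    list of all words appearing in docs
    p_bg:     dict word -> p(w | theta_B), assumed known and held fixed
    lambda_b: background noise level, set manually (not estimated)
    Returns (pi, theta, history): coverages pi[d][j], topics theta[j][w],
    and the log-likelihood computed at the start of each iteration.
    """
    rng = random.Random(seed)
    # Initialize p(w | theta_j) with random values and pi_{d,j} uniformly.
    theta = []
    for _ in range(k):
        raw = {w: rng.random() + 0.01 for w in vocab}
        norm = sum(raw.values())
        theta.append({w: v / norm for w, v in raw.items()})
    pi = [[1.0 / k] * k for _ in docs]
    history = []
    for _ in range(iters):
        topic_word = [dict.fromkeys(vocab, 0.0) for _ in range(k)]
        new_pi, loglik = [], 0.0
        for d, doc in enumerate(docs):
            doc_topic = [0.0] * k
            for w, c in doc.items():
                mix = [pi[d][j] * theta[j][w] for j in range(k)]
                topic_sum = sum(mix)
                total = lambda_b * p_bg[w] + (1 - lambda_b) * topic_sum
                loglik += c * math.log(total)
                # E-step (Bayes' rule): p(z_{d,w} = B), then p(z_{d,w} = j).
                p_b = lambda_b * p_bg[w] / total
                for j in range(k):
                    # Fractional count c(w,d) (1 - p(z=B)) p(z=j).
                    frac = c * (1 - p_b) * mix[j] / topic_sum
                    doc_topic[j] += frac
                    topic_word[j][w] += frac
            norm = sum(doc_topic)
            new_pi.append([x / norm for x in doc_topic])
        # M-step: normalize the fractional counts.
        pi = new_pi
        for j in range(k):
            norm = sum(topic_word[j].values())
            theta[j] = {w: v / norm for w, v in topic_word[j].items()}
        history.append(loglik)
    return pi, theta, history


docs = [{"aid": 7, "price": 5, "oil": 6}, {"aid": 8, "price": 7, "oil": 5}]
vocab = ["aid", "price", "oil"]
p_bg = {"aid": 0.2, "price": 0.3, "oil": 0.5}  # hypothetical background
pi, theta, history = plsa_em(docs, vocab, k=2, p_bg=p_bg, lambda_b=0.3, iters=30)
```

Because the background component is fixed, only the topic share (1 − p(z_{d,w} = B)) of each count is redistributed among the topics, which is exactly the fractional count on the slide. The recorded log-likelihood should never decrease from one iteration to the next.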

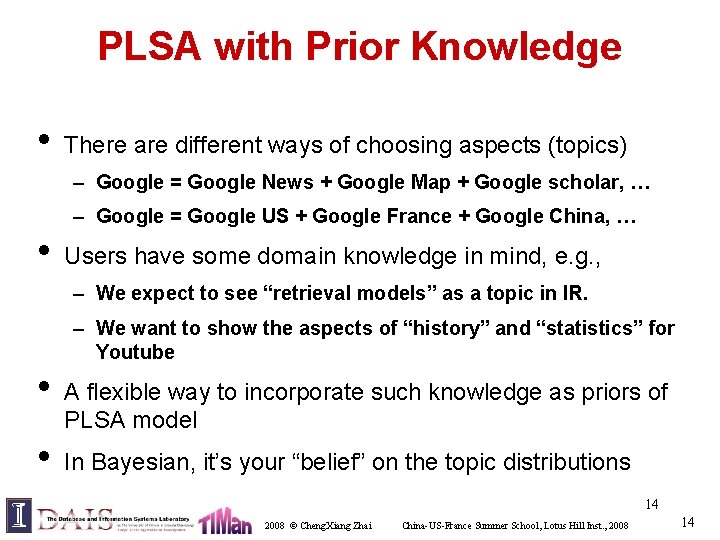

PLSA with Prior Knowledge • There are different ways of choosing aspects (topics) – Google = Google News + Google Maps + Google Scholar, … – Google = Google US + Google France + Google China, … • Users have some domain knowledge in mind, e.g., – We expect to see “retrieval models” as a topic in IR. – We want to show the aspects of “history” and “statistics” for YouTube • A flexible way to incorporate such knowledge is as priors of the PLSA model • In Bayesian terms, the prior is your “belief” about the topic distributions

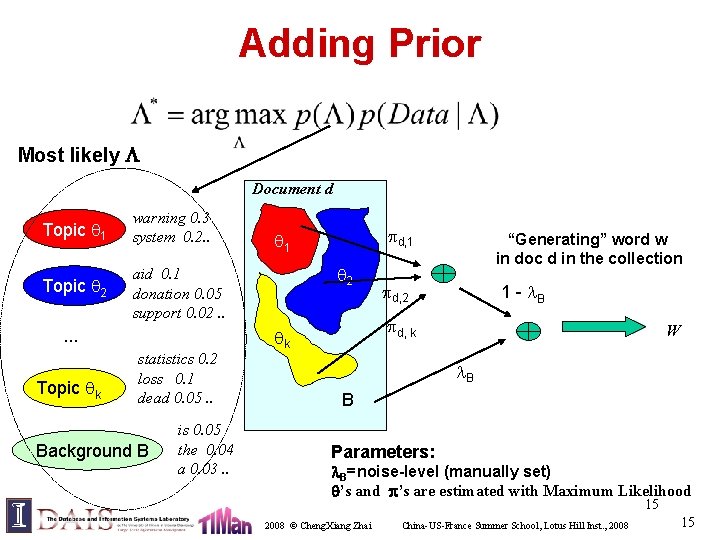

Adding Prior: we now look for the most likely θ given both the data and the prior. The mixture is the same as before: Topic 1: warning 0.3, system 0.2, … Topic 2: aid 0.1, donation 0.05, support 0.02, … … Topic k: statistics 0.2, loss 0.1, dead 0.05, … Background θ_B: is 0.05, the 0.04, a 0.03, … With probability λ_B, word w in doc d is “generated” from the background; otherwise from topic θ_j with weight π_{d,j}. Parameters: λ_B = noise level (manually set); the θ’s and π’s are now estimated as the most likely values under the prior (MAP) rather than by Maximum Likelihood alone.

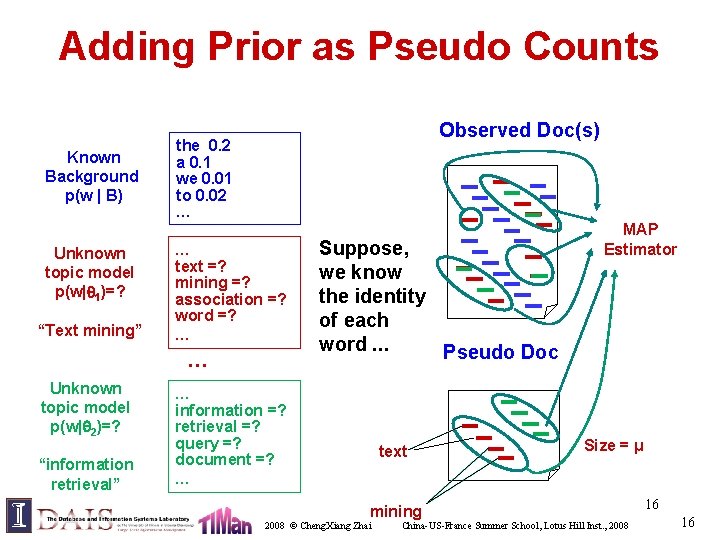

Adding Prior as Pseudo Counts. Known background p(w|θ_B): the 0.2, a 0.1, we 0.01, to 0.02, … Unknown topic model p(w|θ_1) = ? (“text mining”): text = ?, mining = ?, association = ?, word = ?, … Unknown topic model p(w|θ_2) = ? (“information retrieval”): information = ?, retrieval = ?, query = ?, document = ?, … Observed doc(s), plus a pseudo doc containing “text mining” of size μ. Suppose we knew the identity of each word... then we could apply the MAP estimator.

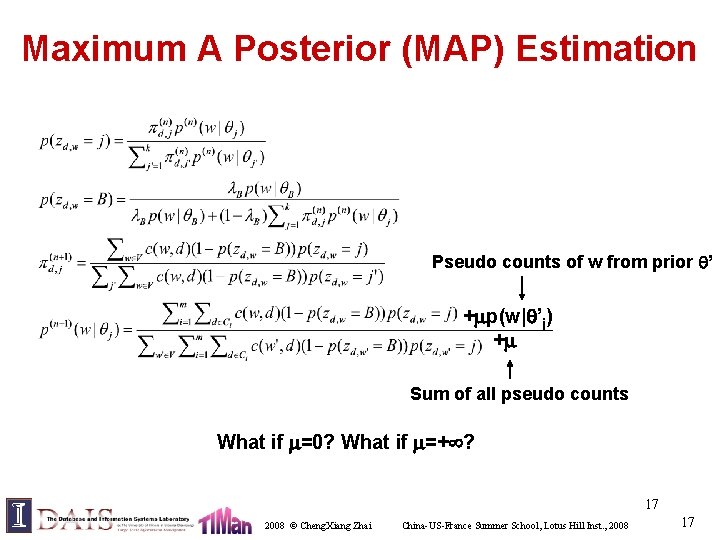

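Maximum A Posteriori (MAP) Estimation

$$p^{(n+1)}(w \mid \theta_j) = \frac{\sum_{i=1}^{m} \sum_{d \in C_i} c(w,d)\,(1 - p(z_{d,w} = B))\, p(z_{d,w} = j) + \mu\, p(w \mid \theta'_j)}{\sum_{w' \in V} \sum_{i=1}^{m} \sum_{d \in C_i} c(w',d)\,(1 - p(z_{d,w'} = B))\, p(z_{d,w'} = j) + \mu}$$

Here μ p(w|θ'_j) is the pseudo count of w from the prior θ'_j, and μ is the sum of all pseudo counts. What if μ = 0? What if μ = +∞?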
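The pseudo-count update described on this slide is easy to try numerically, and doing so answers the two questions: with μ = 0 the estimate is the plain ML estimate, and as μ grows the estimate is pulled toward the prior topic θ'_j. The sketch below assumes the fractional counts from the E-step are already summed per word; the function name and the toy numbers are hypothetical.

```python
def map_topic_estimate(frac_counts, prior, mu):
    """MAP re-estimate of p(w | theta_j) using pseudo counts from a prior.

    frac_counts: dict word -> sum over docs of c(w,d)(1 - p(z_{d,w}=B)) p(z_{d,w}=j)
    prior:       dict word -> p(w | theta'_j), the user's prior topic
    mu:          total pseudo-count mass (size of the pseudo document), mu >= 0
    """
    vocab = set(frac_counts) | set(prior)
    denom = sum(frac_counts.values()) + mu
    return {w: (frac_counts.get(w, 0.0) + mu * prior.get(w, 0.0)) / denom
            for w in vocab}


counts = {"text": 6.0, "mining": 2.0, "query": 2.0}
prior = {"text": 0.5, "mining": 0.5}  # hypothetical "text mining" prior topic
ml = map_topic_estimate(counts, prior, mu=0.0)      # mu = 0: plain ML estimate
mixed = map_topic_estimate(counts, prior, mu=10.0)  # data and prior both matter
strong = map_topic_estimate(counts, prior, mu=1e9)  # mu -> infinity: the prior
```

With these numbers, ml gives text 0.6, mining 0.2, query 0.2; mixed gives text 0.55, mining 0.35, query 0.1; and strong is essentially the prior (text and mining near 0.5, query near 0).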

Basic Topic Model: LDA. The following slides about LDA are taken from Michael C. Mozer’s course lecture http://www.cs.colorado.edu/~mozer/courses/ProbabilisticModels/

LDA: Motivation (limitations of pLSI) – “Documents have no generative probabilistic semantics” • i.e., a document is just a symbol – Model has many parameters • linear in the number of documents • needs heuristic methods to prevent overfitting – Cannot generalize to new documents

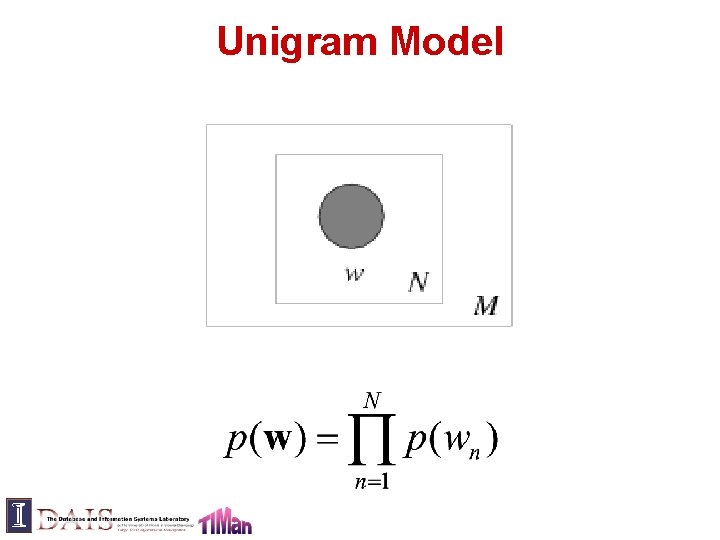

Unigram Model

Mixture of Unigrams

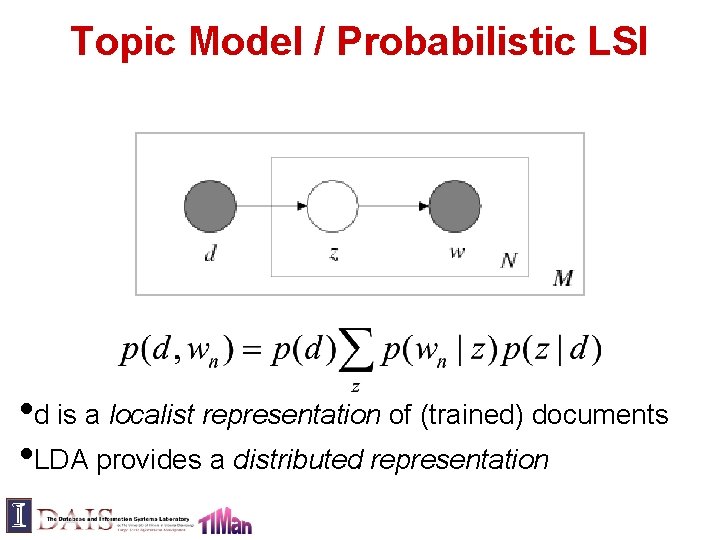

Topic Model / Probabilistic LSI • d is a localist representation of (trained) documents • LDA provides a distributed representation

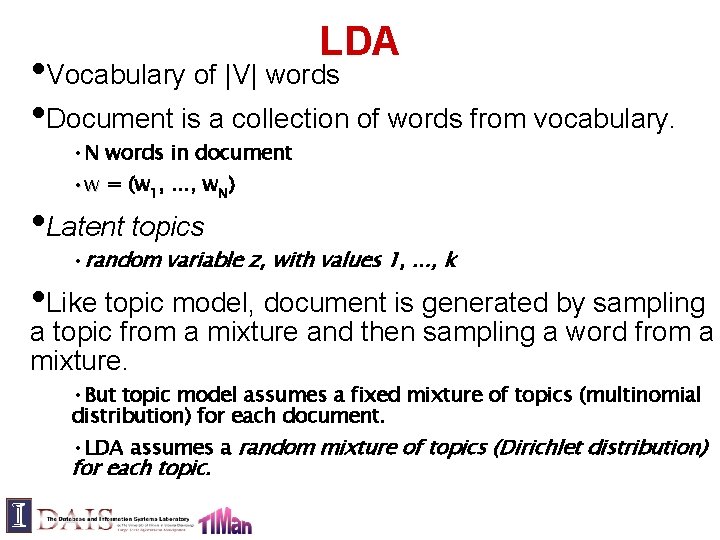

LDA • Vocabulary of |V| words • A document is a collection of words from the vocabulary • N words in a document • w = (w_1, ..., w_N) • Latent topics • random variable z, with values 1, ..., k • Like the topic model, a document is generated by sampling a topic from a mixture and then sampling a word from that topic’s word distribution • But the topic model assumes a fixed mixture of topics (multinomial distribution) for each document • LDA assumes a random mixture of topics (drawn from a Dirichlet distribution) for each document
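To make the contrast concrete, here is a minimal sketch of the LDA generative process for one document, assuming a small hypothetical topic-word matrix. The Dirichlet draw is done by normalizing independent Gamma draws, a standard construction; only Python’s standard `random` module is used, and the helper names are illustrative.

```python
import random


def sample_dirichlet(alpha, rng):
    """Draw from Dirichlet(alpha) by normalizing independent Gamma(a, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [x / total for x in draws]


def generate_document(alpha, beta, n_words, rng):
    """Sample one document from the LDA generative process.

    alpha: Dirichlet parameter, one positive entry per topic (length k)
    beta:  k rows; row i is p(w | z = i) over vocabulary indices 0..|V|-1
    Returns (theta, topics, words).
    """
    theta = sample_dirichlet(alpha, rng)  # random topic mixture for this document
    topics, words = [], []
    for _ in range(n_words):
        z = rng.choices(range(len(alpha)), weights=theta)[0]  # pick a topic
        w = rng.choices(range(len(beta[z])), weights=beta[z])[0]  # pick a word
        topics.append(z)
        words.append(w)
    return theta, topics, words


rng = random.Random(42)
beta = [[0.7, 0.2, 0.1, 0.0],   # hypothetical topic 0
        [0.0, 0.1, 0.2, 0.7]]   # hypothetical topic 1
theta, topics, words = generate_document([0.5, 0.5], beta, 20, rng)
```

A PLSA-style model would instead look up a fixed, per-training-document theta; drawing theta from the Dirichlet is what lets LDA assign probability to documents it has never seen.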

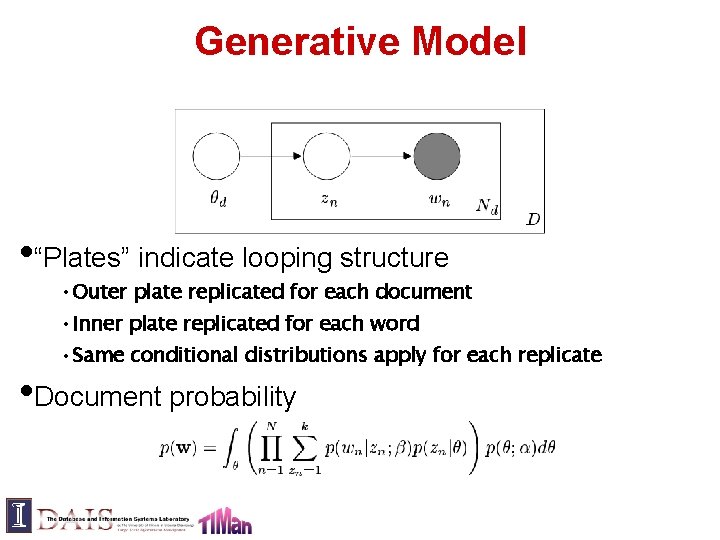

Generative Model • “Plates” indicate looping structure • Outer plate replicated for each document • Inner plate replicated for each word • Same conditional distributions apply for each replicate • Document probability
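For reference, the document probability that the plate diagram encodes (as given by Blei, Ng & Jordan 2003, where θ is the document’s topic mixture, z_n the topic of word w_n, and β the k × |V| topic-word matrix) is

$$p(\mathbf{w} \mid \alpha, \beta) = \int p(\theta \mid \alpha) \left( \prod_{n=1}^{N} \sum_{z_n} p(z_n \mid \theta)\, p(w_n \mid z_n, \beta) \right) d\theta$$

and a corpus D of M documents has probability p(D | α, β) = ∏_{d=1}^{M} p(**w**_d | α, β), since the outer plate replicates this for each document.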

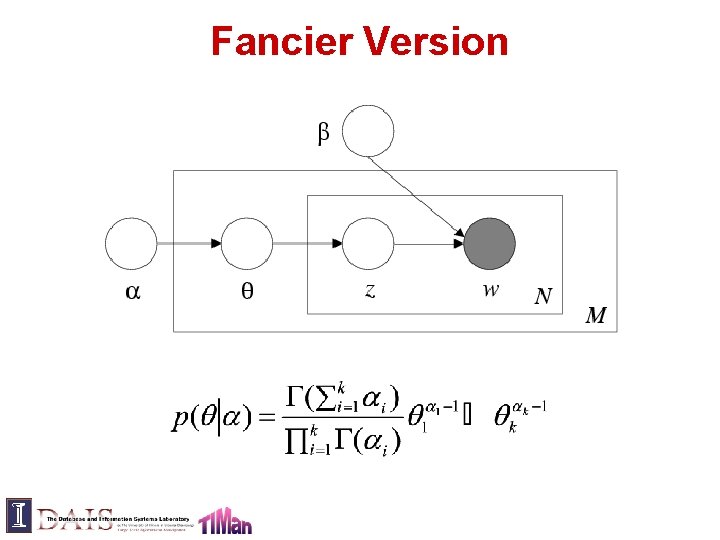

Fancier Version

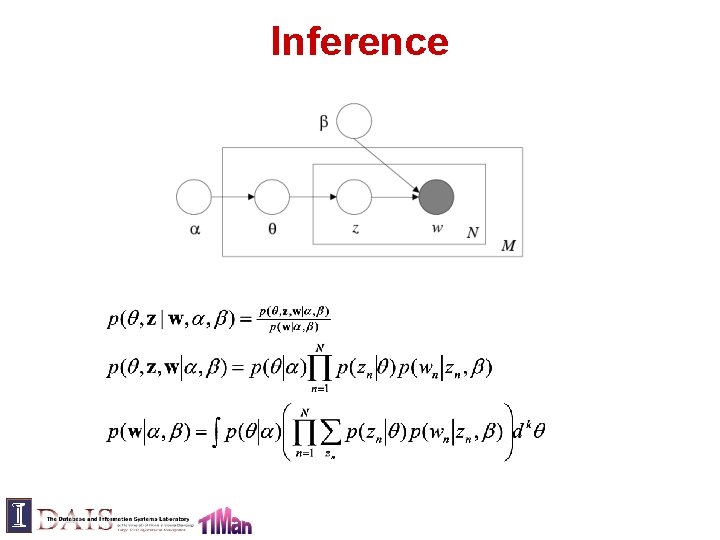

Inference

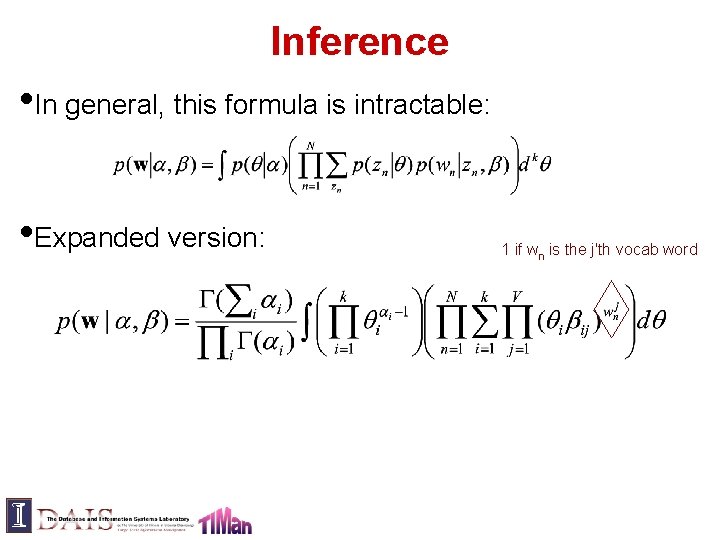

Inference • In general, the posterior over the hidden variables is intractable to compute • Expanded version: w_n^j = 1 if w_n is the j'th vocabulary word, and 0 otherwise
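The formulas this slide refers to can be written, following Blei, Ng & Jordan (2003), as the posterior over the hidden variables θ and **z**, and the expansion of its normalizer:

$$p(\theta, \mathbf{z} \mid \mathbf{w}, \alpha, \beta) = \frac{p(\theta, \mathbf{z}, \mathbf{w} \mid \alpha, \beta)}{p(\mathbf{w} \mid \alpha, \beta)}$$

$$p(\mathbf{w} \mid \alpha, \beta) = \frac{\Gamma\left(\sum_{i} \alpha_i\right)}{\prod_{i} \Gamma(\alpha_i)} \int \left( \prod_{i=1}^{k} \theta_i^{\alpha_i - 1} \right) \left( \prod_{n=1}^{N} \sum_{i=1}^{k} \prod_{j=1}^{V} (\theta_i \beta_{ij})^{w_n^j} \right) d\theta$$

The coupling between θ and β inside the sum over topics is what makes this integral intractable, which motivates the variational approximation.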


Variational Approximation • Compute the log likelihood and introduce Jensen's inequality: log(E[x]) ≥ E[log(x)] • Find a variational distribution q such that the resulting lower bound is computable – q is parameterized by γ and φ_n – Maximize the bound with respect to γ and φ_n to obtain the best approximation to p(w | α, β) – This leads to a variational EM algorithm • Sampling algorithms (e.g., Gibbs sampling) are also common
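A minimal sketch of the per-document variational E-step, assuming α and β are held fixed (the M-step that re-estimates them is omitted). The update rules are the mean-field updates from Blei, Ng & Jordan (2003): φ_{n,i} ∝ β_{i,w_n} exp(ψ(γ_i)) and γ_i = α_i + Σ_n φ_{n,i}. The digamma function ψ is hand-rolled here only because Python’s standard library lacks one; `scipy.special.digamma` would be the usual choice. The toy β is hypothetical.

```python
import math


def digamma(x):
    """Digamma function for x > 0: recurrence, then an asymptotic series."""
    result = 0.0
    while x < 6.0:
        result -= 1.0 / x  # psi(x) = psi(x + 1) - 1/x
        x += 1.0
    f = 1.0 / (x * x)
    series = f * (1 / 12 - f * (1 / 120 - f * (1 / 252 - f * (1 / 240 - f / 132))))
    return result + math.log(x) - 0.5 / x - series


def variational_inference(words, alpha, beta, iters=50):
    """Mean-field updates for one document, with alpha and beta fixed.

    words: list of vocabulary indices w_1..w_N
    alpha: Dirichlet parameter (length k)
    beta:  k x |V| topic-word probabilities
    Returns (gamma, phi): the variational Dirichlet parameter and the
    per-word topic distributions phi[n][i].
    """
    k, n = len(alpha), len(words)
    phi = [[1.0 / k] * k for _ in range(n)]
    gamma = [a + n / k for a in alpha]
    for _ in range(iters):
        # The common factor exp(-digamma(sum(gamma))) cancels in normalization.
        weights = [math.exp(digamma(g)) for g in gamma]
        for t, w in enumerate(words):
            # phi_{n,i} is proportional to beta_{i,w_n} * exp(digamma(gamma_i)).
            row = [beta[i][w] * weights[i] for i in range(k)]
            norm = sum(row)
            phi[t] = [r / norm for r in row]
        # gamma_i = alpha_i + sum_n phi_{n,i}
        gamma = [alpha[i] + sum(p[i] for p in phi) for i in range(k)]
    return gamma, phi


beta = [[0.9, 0.1], [0.1, 0.9]]  # hypothetical 2-topic, 2-word model
gamma, phi = variational_inference([0, 0, 0, 0], alpha=[0.1, 0.1], beta=beta)
```

For a document made only of word 0, almost all of the posterior mass should move to topic 0, so gamma[0] ends up much larger than gamma[1].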

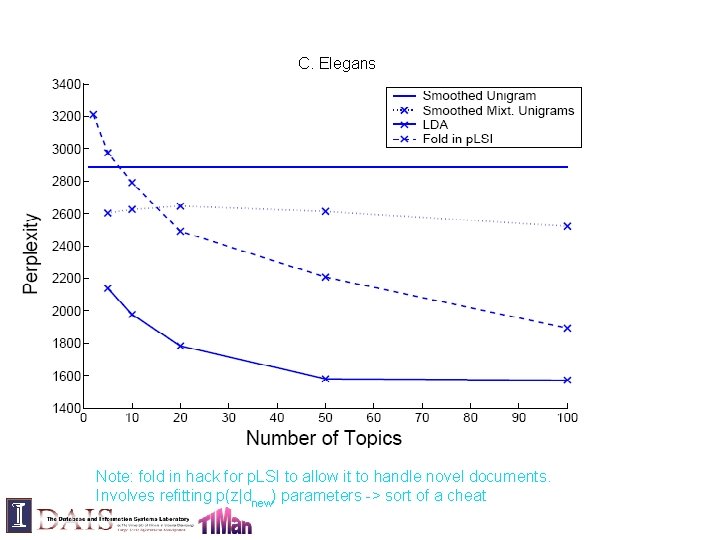

Data Sets • C. Elegans Community abstracts: 5,225 abstracts, 28,414 unique terms • TREC AP corpus (subset): 16,333 newswire articles, 23,075 unique terms • Held-out data: 10% • Removed terms: 50 stop words, words appearing once

C. Elegans. Note: a “fold-in” hack lets pLSI handle novel documents. It involves refitting the p(z|d_new) parameters, which is sort of a cheat.

AP

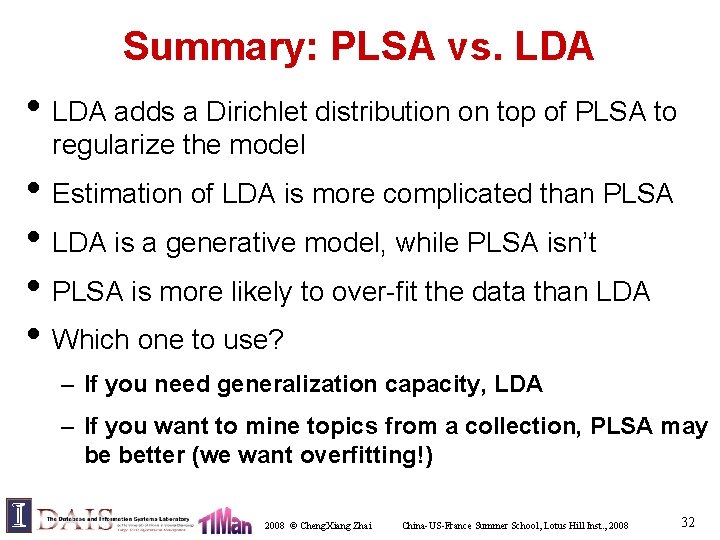

Summary: PLSA vs. LDA • LDA adds a Dirichlet distribution on top of PLSA to regularize the model • Estimation of LDA is more complicated than PLSA • LDA is a generative model of new documents, while PLSA isn’t • PLSA is more likely to overfit the data than LDA • Which one to use? – If you need generalization capacity, LDA – If you want to mine topics from a collection, PLSA may be better (we want overfitting!)

Extension of PLSA: Contextual Probabilistic Latent Semantic Analysis (CPLSA)

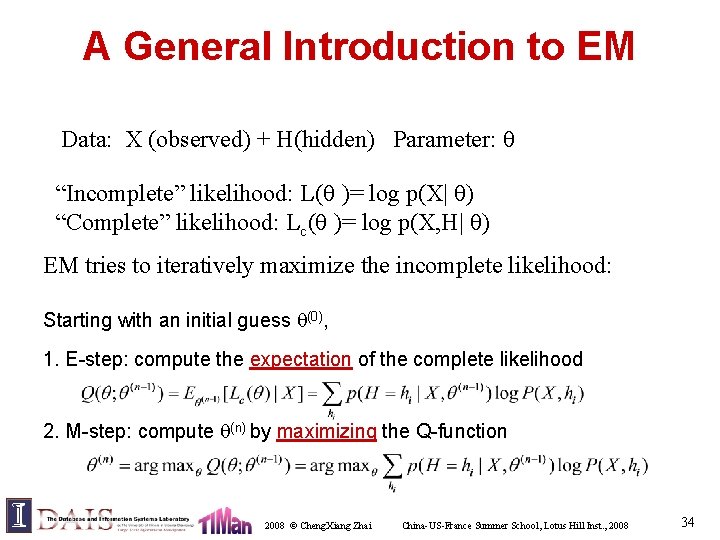

A General Introduction to EM

Data: X (observed) + H (hidden); parameter: θ

“Incomplete” likelihood: L(θ) = log p(X|θ)

“Complete” likelihood: L_c(θ) = log p(X, H|θ)

EM tries to iteratively maximize the incomplete likelihood. Starting with an initial guess θ^(0):

1. E-step: compute the expectation of the complete likelihood (the Q-function)

$$Q(\theta; \theta^{(n-1)}) = E_{p(H \mid X, \theta^{(n-1)})}\left[L_c(\theta)\right] = \sum_{H} p(H \mid X, \theta^{(n-1)}) \log p(X, H \mid \theta)$$

2. M-step: compute θ^(n) by maximizing the Q-function

$$\theta^{(n)} = \arg\max_{\theta} Q(\theta; \theta^{(n-1)})$$

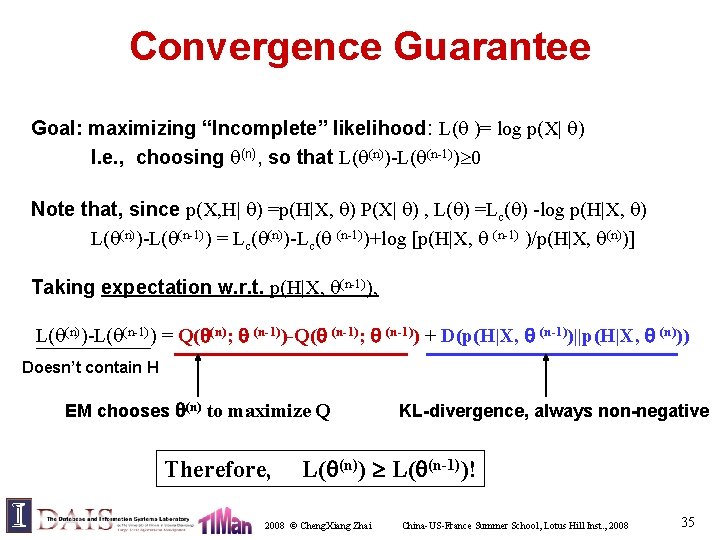

Convergence Guarantee

Goal: maximize the “incomplete” likelihood L(θ) = log p(X|θ), i.e., choose θ^(n) so that L(θ^(n)) − L(θ^(n−1)) ≥ 0.

Note that, since p(X, H|θ) = p(H|X, θ) p(X|θ), we have L(θ) = L_c(θ) − log p(H|X, θ), so

$$L(\theta^{(n)}) - L(\theta^{(n-1)}) = L_c(\theta^{(n)}) - L_c(\theta^{(n-1)}) + \log \frac{p(H \mid X, \theta^{(n-1)})}{p(H \mid X, \theta^{(n)})}$$

The left-hand side doesn’t contain H. Taking the expectation w.r.t. p(H|X, θ^(n−1)):

$$L(\theta^{(n)}) - L(\theta^{(n-1)}) = Q(\theta^{(n)}; \theta^{(n-1)}) - Q(\theta^{(n-1)}; \theta^{(n-1)}) + D\big(p(H \mid X, \theta^{(n-1)}) \,\|\, p(H \mid X, \theta^{(n)})\big)$$

EM chooses θ^(n) to maximize Q, so the difference of Q terms is ≥ 0, and the KL-divergence is always non-negative. Therefore L(θ^(n)) ≥ L(θ^(n−1))!

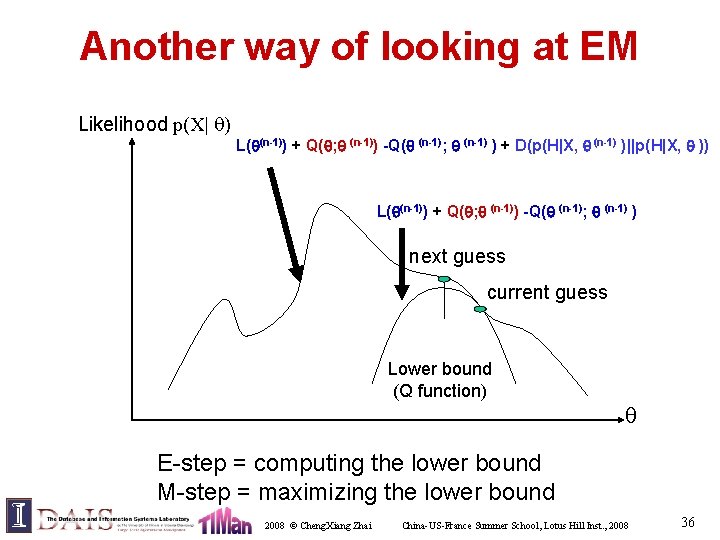

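Another Way of Looking at EM

For the likelihood p(X|θ), viewed as a function of θ around the current guess θ^(n−1):

$$L(\theta) = L(\theta^{(n-1)}) + Q(\theta; \theta^{(n-1)}) - Q(\theta^{(n-1)}; \theta^{(n-1)}) + D\big(p(H \mid X, \theta^{(n-1)}) \,\|\, p(H \mid X, \theta)\big)$$

$$L(\theta) \ge L(\theta^{(n-1)}) + Q(\theta; \theta^{(n-1)}) - Q(\theta^{(n-1)}; \theta^{(n-1)})$$

The right-hand side is a lower bound (the Q function plus a constant) that touches the likelihood at the current guess; the next guess is its maximizer. • E-step = computing the lower bound • M-step = maximizing the lower bound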
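The monotonicity argument can be checked numerically on the smallest interesting case: estimating only the mixing weight λ of two known unigram models, where the hidden variable H records which model produced each word. This is a hypothetical toy example (names and numbers are illustrative), not an example from the lecture; it records L(λ) before every update so one can see that it never decreases.

```python
import math


def em_mixing_weight(counts, p1, p2, lam=0.5, iters=20):
    """EM for lam in p(w) = lam * p1(w) + (1 - lam) * p2(w).

    counts: dict word -> count (the observed data X)
    p1, p2: known unigram models; the hidden H is which one generated w
    Returns the final lam and L(lam) = log p(X | lam) before each update.
    """
    total = sum(counts.values())
    history = []
    for _ in range(iters):
        loglik, expected_1 = 0.0, 0.0
        for w, c in counts.items():
            mix = lam * p1[w] + (1 - lam) * p2[w]
            loglik += c * math.log(mix)
            # E-step: expected number of occurrences of w generated by p1.
            expected_1 += c * lam * p1[w] / mix
        history.append(loglik)
        # M-step: this lam maximizes Q(lam; previous lam).
        lam = expected_1 / total
    return lam, history


counts = {"the": 5, "text": 3, "mining": 2}
p1 = {"the": 0.1, "text": 0.5, "mining": 0.4}   # hypothetical topic model
p2 = {"the": 0.8, "text": 0.1, "mining": 0.1}   # hypothetical background
lam, history = em_mixing_weight(counts, p1, p2)
```

Each M-step here has a closed form because Q is a weighted sum of log λ and log(1 − λ); the recorded history is non-decreasing, as the KL-divergence argument predicts.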

Why Contextual PLSA?

Motivating Example: Comparing Product Reviews (IBM, APPLE, and DELL laptop reviews)

| Common Themes | “IBM” specific | “APPLE” specific | “DELL” specific |
|---|---|---|---|
| Battery Life | Long, 3-4 hrs | Medium, 2-3 hrs | Short, 1-2 hrs |
| Hard disk | Large, 80-100 GB | Small, 5-10 GB | Medium, 20-50 GB |
| Speed | Slow, 100-200 MHz | Very Fast, 3-4 GHz | Moderate, 1-2 GHz |

Unsupervised discovery of common topics and their variations

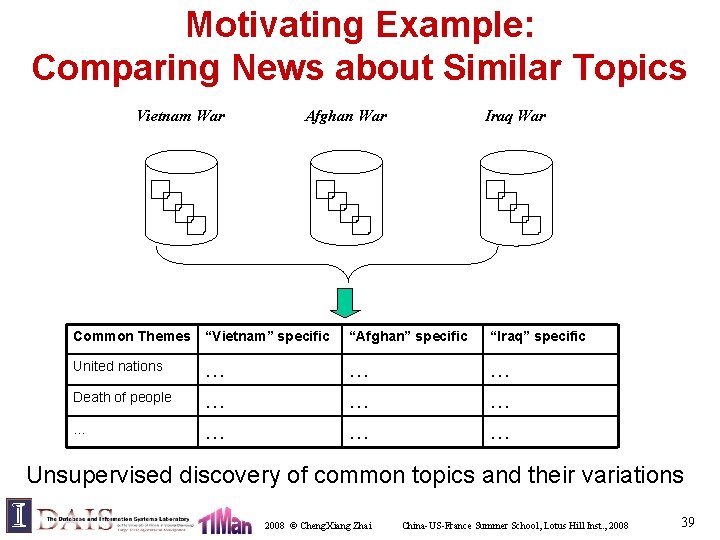

Motivating Example: Comparing News about Similar Topics (Vietnam War, Afghan War, Iraq War)

| Common Themes | “Vietnam” specific | “Afghan” specific | “Iraq” specific |
|---|---|---|---|
| United nations | … | … | … |
| Death of people | … | … | … |
| … | … | … | … |

Unsupervised discovery of common topics and their variations

Motivating Example: Discovering Topical Trends in Literature [Figure: theme strength over time, from 1980 to 2003 with 1998 marked, for the themes TF-IDF Retrieval, Language Model, IR Applications, and Text Categorization] • Unsupervised discovery of topics and their temporal variations

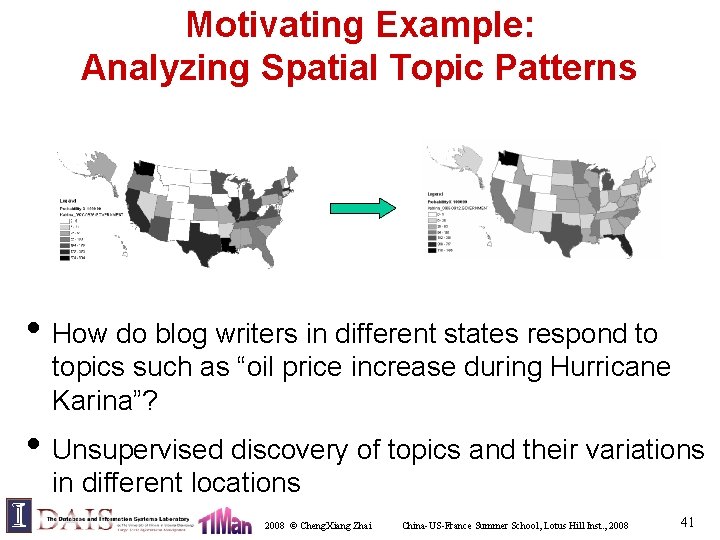

Motivating Example: Analyzing Spatial Topic Patterns • How do blog writers in different states respond to topics such as “oil price increase during Hurricane Katrina”? • Unsupervised discovery of topics and their variations in different locations

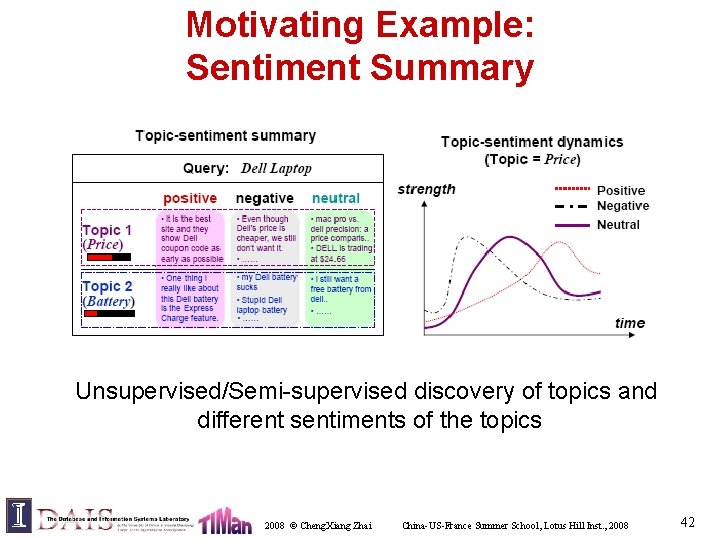

Motivating Example: Sentiment Summary • Unsupervised/semi-supervised discovery of topics and different sentiments of the topics

Research Questions • Can we model all these problems generally? • Can we solve these problems with a unified approach? • How can we bring humans into the loop?

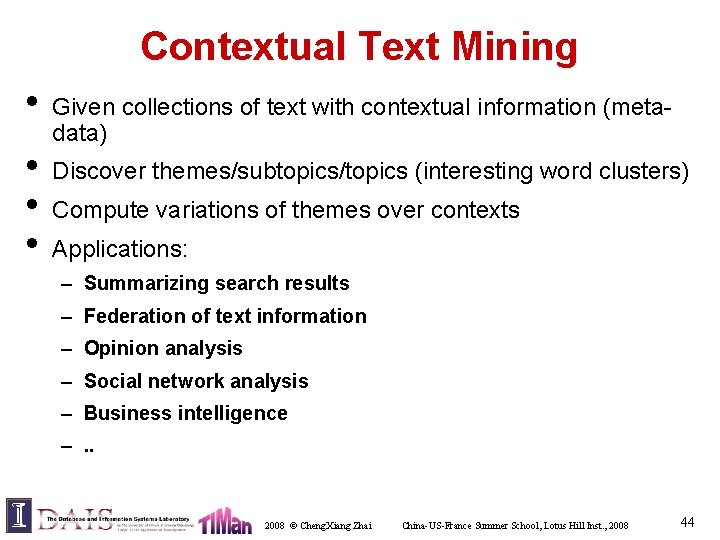

Contextual Text Mining • Given collections of text with contextual information (metadata) • Discover themes/subtopics/topics (interesting word clusters) • Compute variations of themes over contexts • Applications: – Summarizing search results – Federation of text information – Opinion analysis – Social network analysis – Business intelligence – …

Context Features of Text (Metadata) [Figure: a weblog article with its context features: communities, author, source, author’s occupation, time, location]

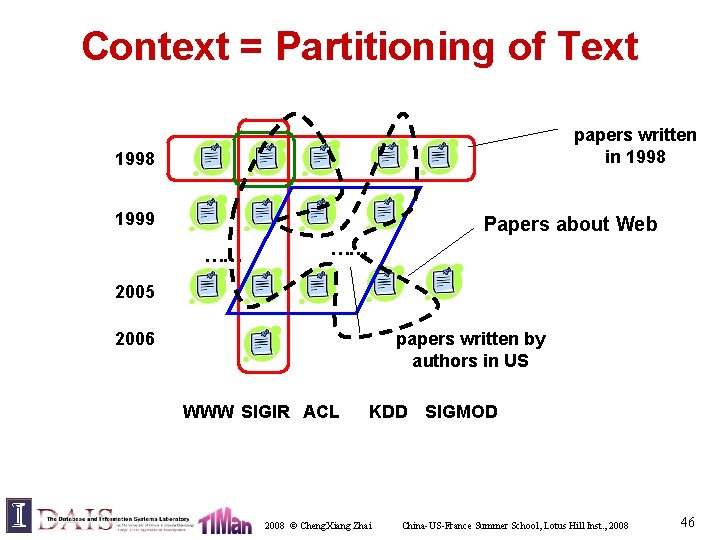

Context = Partitioning of Text [Figure: one collection partitioned by different contexts: papers written in 1998, 1999, …, 2005, 2006; papers about the Web; papers written by authors in the US; papers from venues such as WWW, SIGIR, ACL, KDD, SIGMOD]

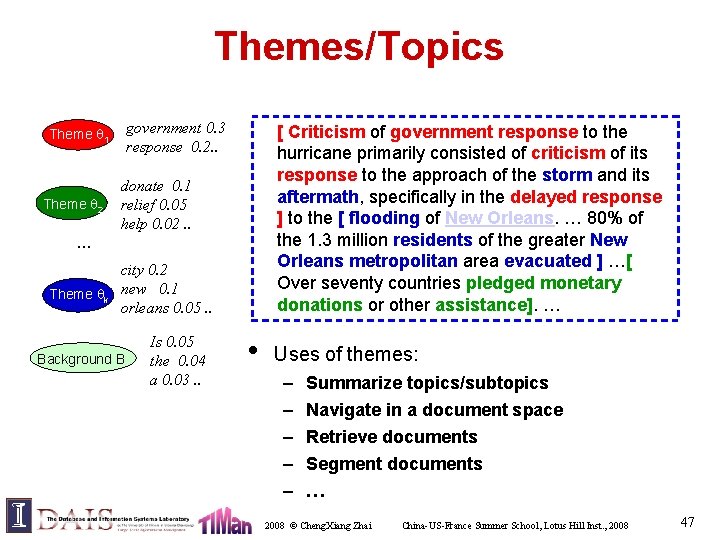

Themes/Topics [Figure: a passage about Hurricane Katrina whose bracketed segments are generated by different themes. Theme 1: government 0.3, response 0.2, … Theme 2: donate 0.1, relief 0.05, help 0.02, … … Theme k: city 0.2, new 0.1, orleans 0.05, … Background θ_B: is 0.05, the 0.04, a 0.03, … Passage: “[Criticism of government response to the hurricane primarily consisted of criticism of its response to the approach of the storm and its aftermath, specifically in the delayed response] to the [flooding of New Orleans. … 80% of the 1.3 million residents of the greater New Orleans metropolitan area evacuated] … [Over seventy countries pledged monetary donations or other assistance]. …”] • Uses of themes: – Summarize topics/subtopics – Navigate in a document space – Retrieve documents – Segment documents – …

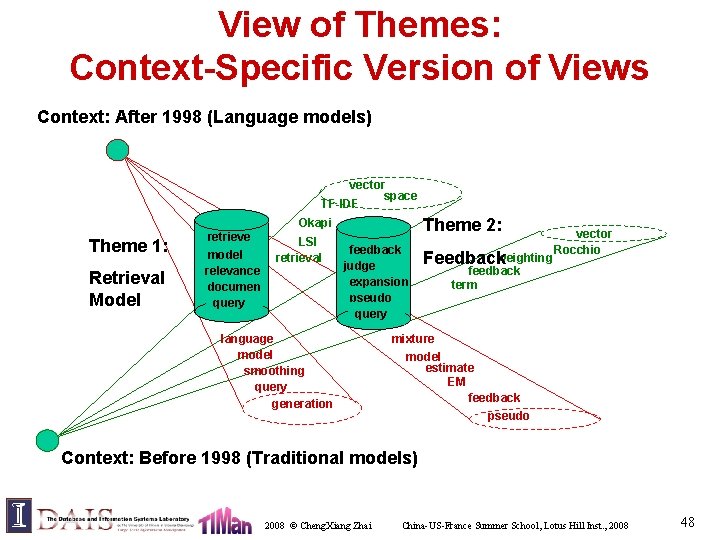

View of Themes: Context-Specific Version of Views [Figure: Theme 1 (Retrieval Model) and Theme 2 (Feedback), each shown in two contexts, Before 1998 (traditional models) and After 1998 (language models). Words shown include vector space, TF-IDF, Okapi, LSI, Rocchio, weighting, retrieve, model, relevance, document, query, judge, expansion, pseudo, term, language model, smoothing, query generation, mixture model, estimate, EM, feedback.]

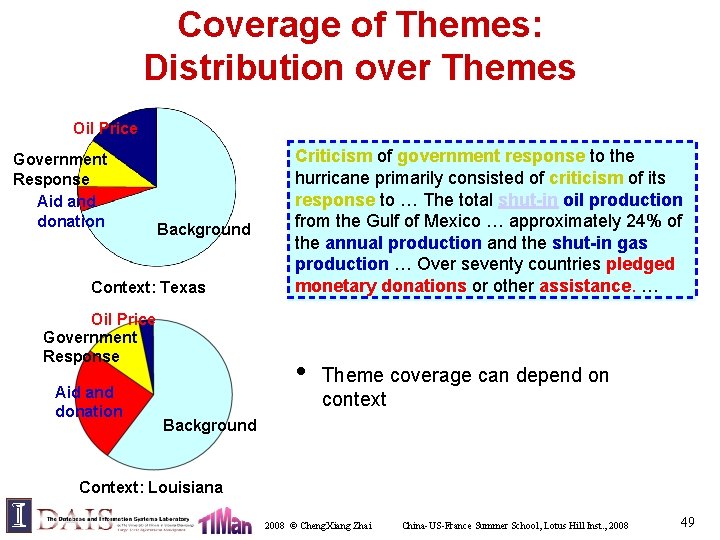

Coverage of Themes: Distribution over Themes [Figure: coverage of the themes Oil Price, Government Response, Aid and donation, and Background in two contexts, Texas and Louisiana, next to the text: “Criticism of government response to the hurricane primarily consisted of criticism of its response to … The total shut-in oil production from the Gulf of Mexico … approximately 24% of the annual production and the shut-in gas production … Over seventy countries pledged monetary donations or other assistance. …”] • Theme coverage can depend on context

General Tasks of Contextual Text Mining • Theme Extraction: Extract the global salient themes – Common information shared over all contexts • View Comparison: Compare a theme from different views – Analyze the content variation of themes over contexts • Coverage Comparison: Compare theme coverage of different contexts – Reveal how closely a theme is associated with a context • Others: – Causal analysis – Correlation analysis

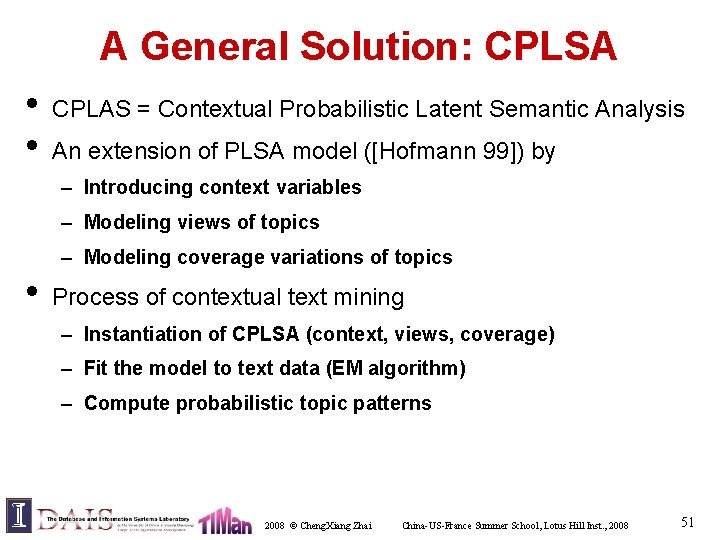

A General Solution: CPLSA • CPLSA = Contextual Probabilistic Latent Semantic Analysis • An extension of the PLSA model ([Hofmann 99]) by – Introducing context variables – Modeling views of topics – Modeling coverage variations of topics • Process of contextual text mining – Instantiation of CPLSA (context, views, coverage) – Fit the model to text data (EM algorithm) – Compute probabilistic topic patterns

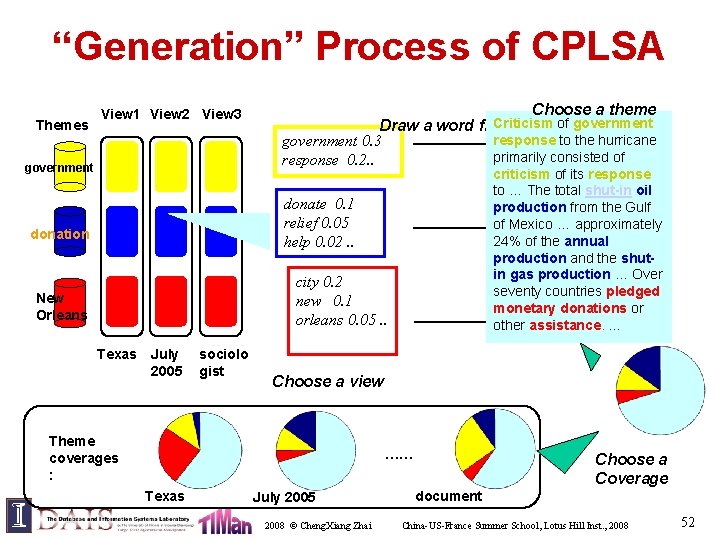

“Generation” Process of CPLSA [Figure: a document with context Time = July 2005, Location = Texas, Author = xxx, Occup. = Sociologist, Age Group = 45+ is generated word by word: choose a view (View 1, View 2, View 3, associated with contexts such as Texas, July 2005, sociologist); choose a coverage (theme coverages associated with contexts such as Texas, July 2005, or the document); choose a theme; draw a word from θ_i. Themes shown: government 0.3, response 0.2, …; donate 0.1, relief 0.05, help 0.02, …; city 0.2, new 0.1, orleans 0.05, … Example text: “Criticism of government response to the hurricane primarily consisted of criticism of its response to … The total shut-in oil production from the Gulf of Mexico … approximately 24% of the annual production and the shut-in gas production … Over seventy countries pledged monetary donations or other assistance. …”]

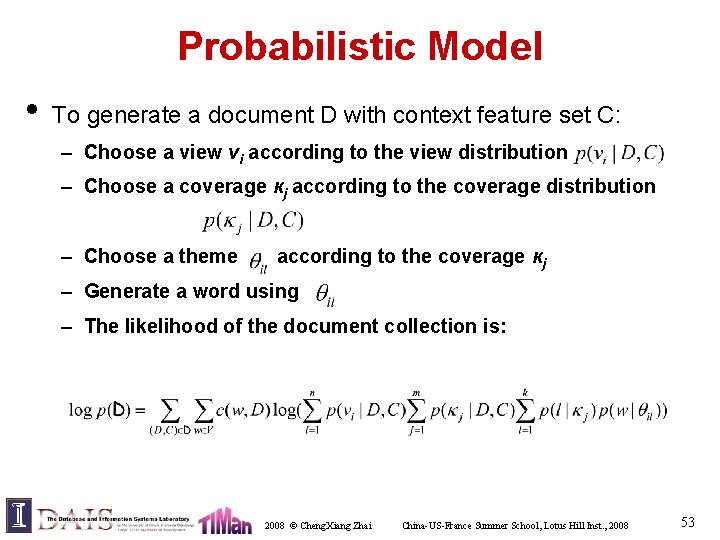

Probabilistic Model • To generate a document D with context feature set C: – Choose a view v_i according to the view distribution p(v_i | D, C) – Choose a coverage κ_j according to the coverage distribution p(κ_j | D, C) – Choose a theme θ_il according to the coverage κ_j – Generate a word using θ_il – The likelihood of the document collection is:

$$\log p(\mathcal{C}) = \sum_{(D, C) \in \mathcal{C}} \sum_{w \in V} c(w, D) \log \sum_{i=1}^{n} p(v_i \mid D, C) \sum_{j=1}^{m} p(\kappa_j \mid D, C) \sum_{l=1}^{k} p(l \mid \kappa_j)\, p(w \mid \theta_{il})$$
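The generation steps on this slide (choose a view, choose a coverage, choose a theme, generate a word) translate directly into a nested sum for p(w | D, C). The sketch below is illustrative only: it assumes all distributions are given as plain Python lists and dicts, the parameter names are hypothetical, and no estimation (EM) is performed.

```python
import math


def cplsa_word_prob(w, view_p, cov_p, cov_theme, theme_word):
    """p(w | D, C) under the CPLSA generation process (illustrative sketch).

    view_p[i]        : p(v_i | D, C), probability of choosing view i
    cov_p[j]         : p(kappa_j | D, C), probability of choosing coverage j
    cov_theme[j][l]  : p(l | kappa_j), weight of theme l under coverage j
    theme_word[i][l] : dict word -> p(w | theta_il), theme l as seen in view i
    """
    prob = 0.0
    for i, p_view in enumerate(view_p):
        for j, p_cov in enumerate(cov_p):
            for l, p_theme in enumerate(cov_theme[j]):
                prob += p_view * p_cov * p_theme * theme_word[i][l].get(w, 0.0)
    return prob


def cplsa_log_likelihood(collection):
    """Sum over (counts, params) pairs of c(w, D) * log p(w | D, C)."""
    return sum(c * math.log(cplsa_word_prob(w, *params))
               for counts, params in collection
               for w, c in counts.items())


# Hypothetical toy setup: one theme seen through two views, one coverage.
views = [[{"oil": 0.6, "price": 0.4}],   # view 1 of the theme
         [{"oil": 0.2, "price": 0.8}]]   # view 2 of the theme
params = ([0.5, 0.5], [1.0], [[1.0]], views)
p_oil = cplsa_word_prob("oil", *params)
ll = cplsa_log_likelihood([({"oil": 2, "price": 1}, params)])
```

With a single view and a single coverage this reduces to the PLSA mixture; the view and coverage layers are what let the same theme have context-specific word distributions and context-specific strengths. Here p_oil is 0.5 × 0.6 + 0.5 × 0.2 = 0.4.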

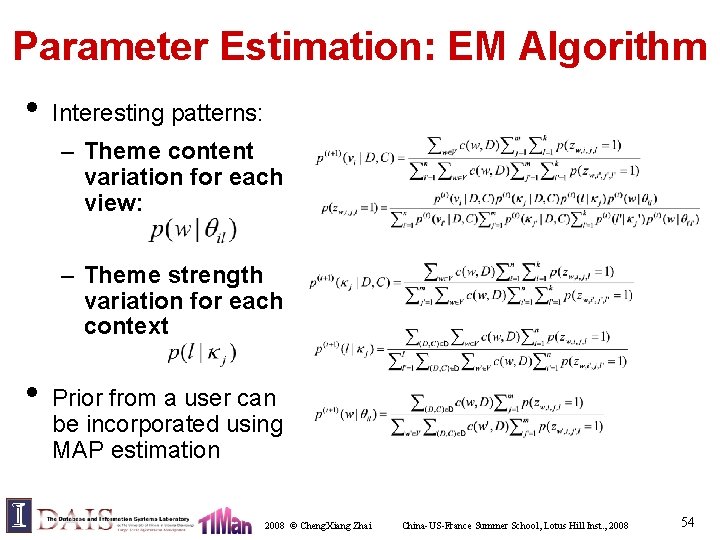

Parameter Estimation: EM Algorithm • Interesting patterns: – Theme content variation for each view – Theme strength variation for each context • Prior from a user can be incorporated using MAP estimation

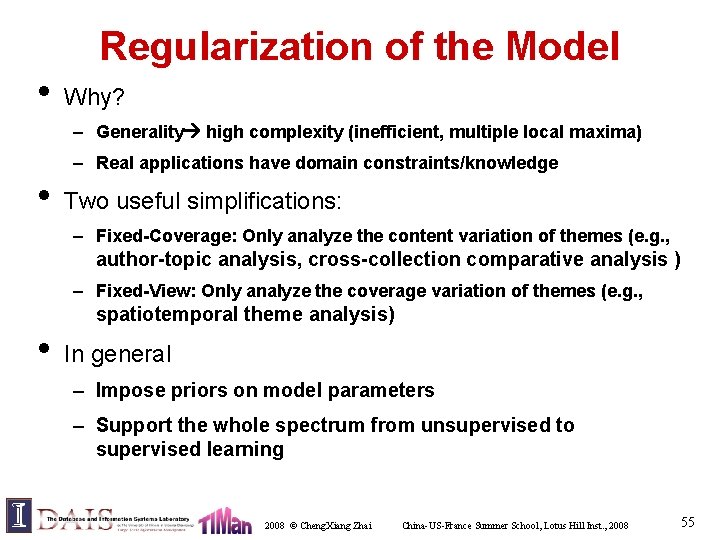

Regularization of the Model • Why? – Generality → high complexity (inefficient, multiple local maxima) – Real applications have domain constraints/knowledge • Two useful simplifications: – Fixed-Coverage: Only analyze the content variation of themes (e.g., author-topic analysis, cross-collection comparative analysis) – Fixed-View: Only analyze the coverage variation of themes (e.g., spatiotemporal theme analysis) • In general – Impose priors on model parameters – Support the whole spectrum from unsupervised to supervised learning

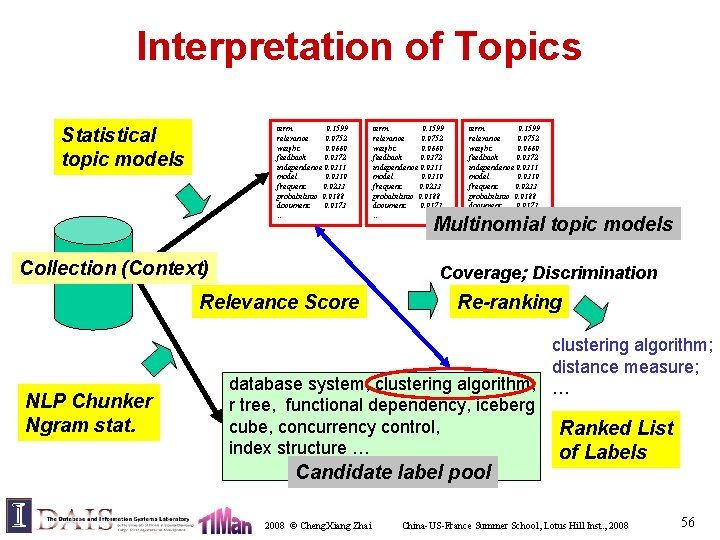

Interpretation of Topics [Figure: a topic-labeling pipeline. Statistical topic models produce multinomial topic models, e.g., term 0.1599, relevance 0.0752, weight 0.0660, feedback 0.0372, independence 0.0311, model 0.0310, frequent 0.0233, probabilistic 0.0188, document 0.0173, … A candidate label pool (e.g., clustering algorithm; distance measure; database system; r tree; functional dependency; iceberg cube; concurrency control; index structure; …) is extracted from the collection (context) with an NLP chunker or n-gram statistics. Candidates receive a relevance score and are re-ranked for coverage and discrimination, yielding a ranked list of labels.]

Relevance: the Zero-Order Score • Intuition: prefer phrases covering high-probability words [Figure: p(w|θ) for a latent topic θ over words such as clustering, dimensional, algorithm, birch, shape, body; good label (l1): “clustering algorithm”; bad label (l2): “body shape”]
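The slide gives only the intuition. One simple way to turn it into a number, assumed here rather than taken from the slide, is to sum log[p(u | θ) / p(u)] over the label’s words u, with p(u) taken from a reference collection, so that words the topic favors more than usual raise the score. All names and numbers below are hypothetical.

```python
import math


def zero_order_score(label, topic, ref, eps=1e-12):
    """Sum over label words u of log[p(u | theta) / p(u)].

    label: words of a candidate label, e.g. ["clustering", "algorithm"]
    topic: dict word -> p(w | theta)
    ref:   dict word -> p(w) from a reference collection (assumed given)
    eps:   floor that avoids log(0) for unseen words
    """
    return sum(math.log(max(topic.get(u, 0.0), eps) / max(ref.get(u, 0.0), eps))
               for u in label)


# Hypothetical numbers, loosely following the slide's example.
topic = {"clustering": 0.3, "algorithm": 0.2, "dimensional": 0.1,
         "birch": 0.05, "shape": 0.01, "body": 0.001}
ref = dict.fromkeys(topic, 0.01)
good = zero_order_score(["clustering", "algorithm"], topic, ref)
bad = zero_order_score(["body", "shape"], topic, ref)
```

Here good equals log(30) + log(20) = log(600), while bad is negative, so “clustering algorithm” outranks “body shape”.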

Relevance: the First-Order Score • Intuition: prefer phrases with similar context (distribution) [Figure: the topic distribution P(w|θ) over words such as clustering, dimension, partition, algorithm, hash, compared with the context distributions P(w|l1) and P(w|l2) estimated from a collection C (SIGMOD Proceedings); good label (l1): “clustering algorithm”, bad label (l2): “hash join”; Score(l, θ) prefers l1 because D(θ || l1) < D(θ || l2)]
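A rough sketch of the first-order idea under loudly stated assumptions: p(w | l) is approximated by pooling the words of documents that contain every word of the label (a hypothetical stand-in for however the context distribution is actually estimated), and labels are ranked by −D(θ || l), so a smaller divergence means a better label, matching the inequality on the slide. The toy collection and topic are hypothetical.

```python
import math


def kl_divergence(p, q, eps=1e-12):
    """D(p || q) = sum_w p(w) log(p(w) / q(w)); q(w) is floored at eps."""
    return sum(pw * math.log(pw / max(q.get(w, 0.0), eps))
               for w, pw in p.items() if pw > 0)


def context_distribution(label, docs):
    """Hypothetical p(w | l): pooled word frequencies of docs containing the label."""
    counts = {}
    for doc in docs:
        if all(u in doc for u in label):
            for w in doc:
                counts[w] = counts.get(w, 0) + 1
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


def first_order_score(label, topic, docs):
    """Higher is better: -D(topic || p(w | label))."""
    return -kl_divergence(topic, context_distribution(label, docs))


# Hypothetical toy collection (each doc is a list of tokens) and topic.
docs = [["clustering", "algorithm", "dimension", "partition"],
        ["clustering", "algorithm", "dimension"],
        ["hash", "join", "algorithm"],
        ["hash", "join", "index"]]
topic = {"clustering": 0.4, "algorithm": 0.3, "dimension": 0.2, "partition": 0.1}
good = first_order_score(["clustering", "algorithm"], topic, docs)
bad = first_order_score(["hash", "join"], topic, docs)
```

The contexts of “clustering algorithm” share the topic’s high-probability words, so its divergence is small; the contexts of “hash join” miss most of them, so its score is far lower.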

Sample Results • Comparative text mining • Spatiotemporal pattern mining • Sentiment summary • Event impact analysis • Temporal author-topic analysis

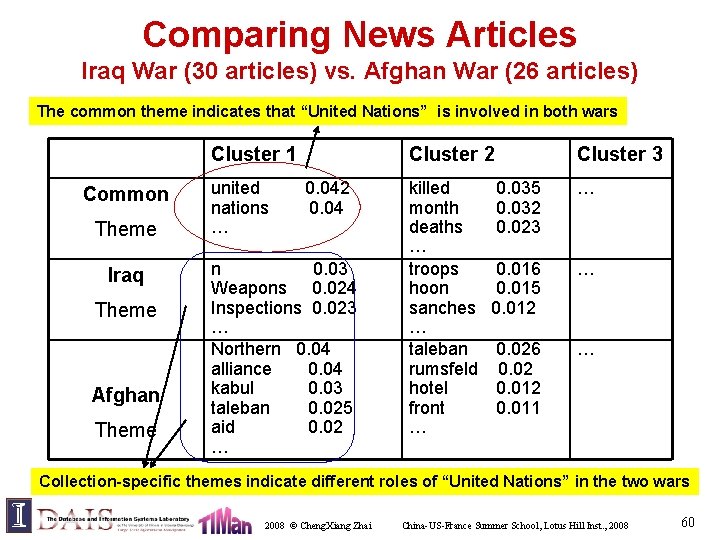

Comparing News Articles: Iraq War (30 articles) vs. Afghan War (26 articles)

| | Common Theme | Iraq Theme | Afghan Theme |
|---|---|---|---|
| Cluster 1 | united, nations, … | n 0.03, weapons 0.024, inspections 0.023, … | northern 0.04, alliance 0.04, kabul 0.03, taleban 0.025, aid 0.02, … |
| Cluster 2 | killed, month, deaths, … (0.035, 0.032, 0.023, …) | troops, hoon, sanches, … (0.016, 0.015, 0.012, …) | taleban, rumsfeld, hotel, front, … (0.026, 0.02, 0.011, …) |
| Cluster 3 | … | … | … |

The common theme indicates that “United Nations” is involved in both wars; the collection-specific themes indicate different roles of “United Nations” in the two wars.

Comparing Laptop Reviews: Top words serve as “labels” for common themes (e.g., [sound, speakers], [battery, hours], [cd, drive]). These word distributions can be used to segment text and add hyperlinks between documents.

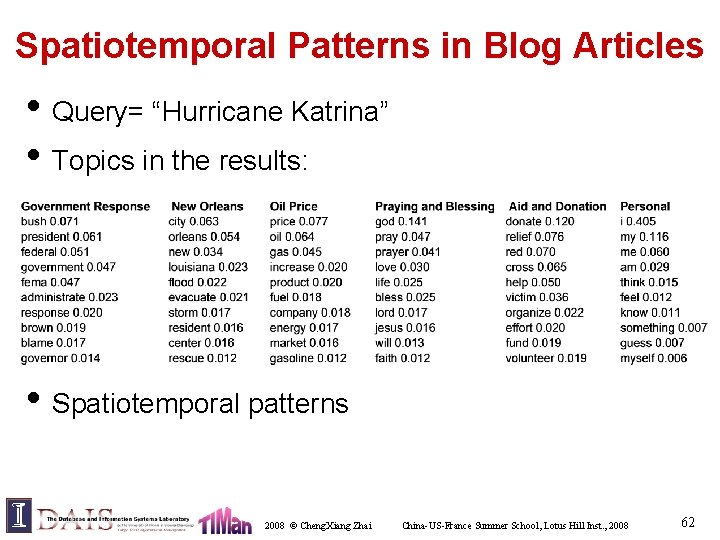

Spatiotemporal Patterns in Blog Articles • Query = “Hurricane Katrina” • Topics in the results: • Spatiotemporal patterns

Theme Life Cycles for Hurricane Katrina • Oil Price: price 0.0772, oil 0.0643, gas 0.0454, increase 0.0210, product 0.0203, fuel 0.0188, company 0.0182, … • New Orleans: city 0.0634, orleans 0.0541, new 0.0342, louisiana 0.0235, flood 0.0227, evacuate 0.0211, storm 0.0177, …

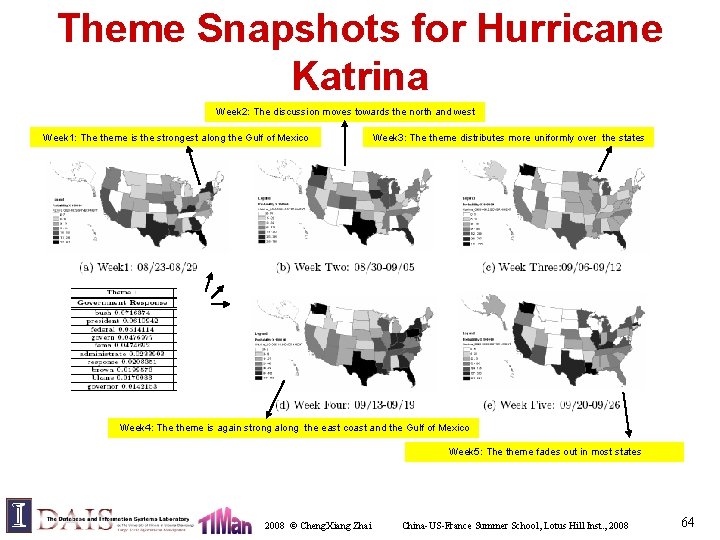

Theme Snapshots for Hurricane Katrina • Week 1: The theme is the strongest along the Gulf of Mexico • Week 2: The discussion moves towards the north and west • Week 3: The theme distributes more uniformly over the states • Week 4: The theme is again strong along the east coast and the Gulf of Mexico • Week 5: The theme fades out in most states

Theme Life Cycles: KDD [Figure: life cycles of the global themes in KDD abstracts] Themes: gene 0.0173, expressions 0.0096, probability 0.0081, microarray 0.0038, … • marketing 0.0087, customer 0.0086, model 0.0079, business 0.0048, … • rules 0.0142, association 0.0064, support 0.0053, …

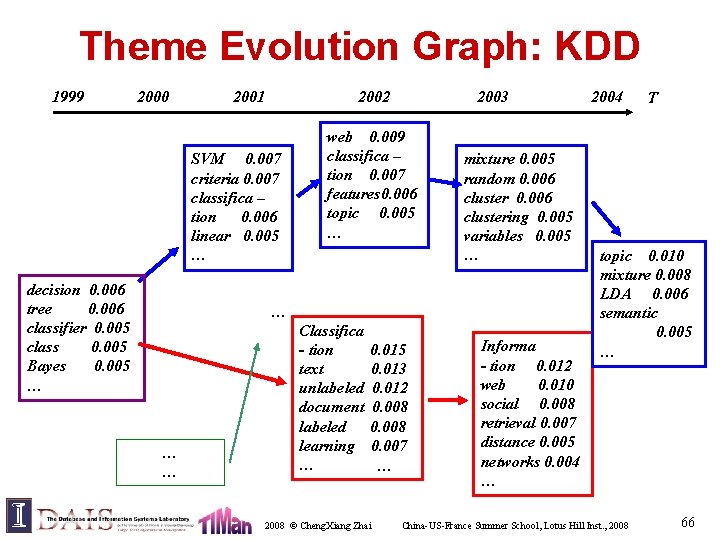

Theme Evolution Graph: KDD [Figure: KDD themes linked across the years 1999 to 2004. Themes shown: SVM 0.007, criteria 0.007, classification 0.006, linear 0.005, … • decision 0.006, tree 0.006, classifier 0.005, class 0.005, Bayes 0.005, … • web 0.009, classification 0.007, features 0.006, topic 0.005, … • mixture 0.005, random 0.006, clustering 0.005, variables 0.005, … • classification, text, unlabeled, document, labeled, learning, … (0.015, 0.013, 0.012, 0.008, 0.007, …) • information 0.012, web 0.010, social 0.008, retrieval 0.007, distance 0.005, networks 0.004, … • topic 0.010, mixture 0.008, LDA 0.006, semantic 0.005, …]

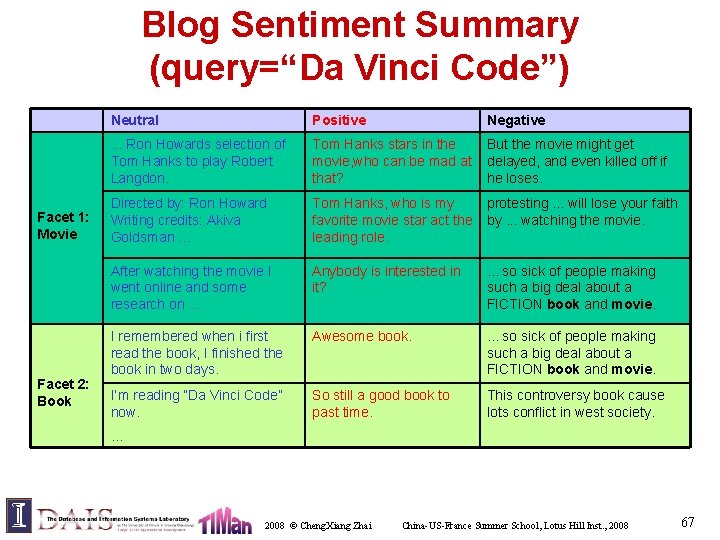

Blog Sentiment Summary (query = “Da Vinci Code”) [Table: blog snippets organized by facet (Facet 1: Movie, Facet 2: Book) and sentiment (Neutral, Positive, Negative). Snippets: “... Ron Howards selection of Tom Hanks to play Robert Langdon.” “Tom Hanks stars in the movie, who can be mad at that?” “But the movie might get delayed, and even killed off if he loses.” “Directed by: Ron Howard Writing credits: Akiva Goldsman ...” “Tom Hanks, who is my favorite movie star act the leading role.” “protesting ... will lose your faith by ... watching the movie.” “After watching the movie I went online and some research on ... Anybody is interested in it?” “... so sick of people making such a big deal about a FICTION book and movie.” “I remembered when i first read the book, I finished the book in two days.” “Awesome book.” “... so sick of people making such a big deal about a FICTION book and movie.” “I’m reading “Da Vinci Code” now.” “So still a good book to past time.” “This controversy book cause lots conflict in west society.” …]

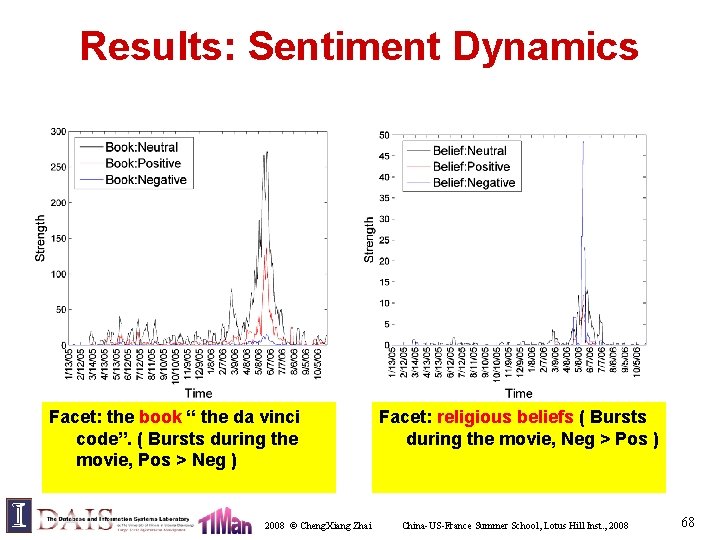

Results: Sentiment Dynamics • Facet: the book “the da vinci code” (bursts during the movie, Pos > Neg) • Facet: religious beliefs (bursts during the movie, Neg > Pos)

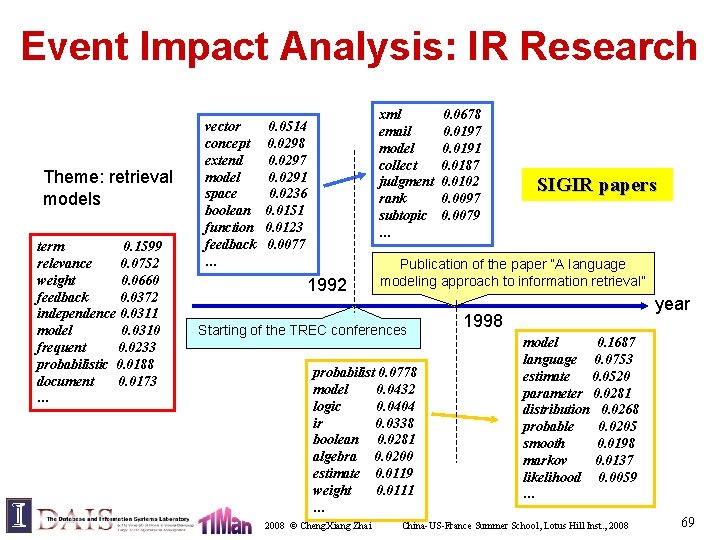

Event Impact Analysis: IR Research [Figure: variations of the theme “retrieval models” in SIGIR papers around two events: the start of the TREC conferences (1992) and the publication of the paper “A language modeling approach to information retrieval” (1998). Global theme: term 0.1599, relevance 0.0752, weight 0.0660, feedback 0.0372, independence 0.0311, model 0.0310, frequent 0.0233, probabilistic 0.0188, document 0.0173, … Variants: vector 0.0514, concept 0.0298, extend 0.0297, model 0.0291, space 0.0236, boolean 0.0151, function 0.0123, feedback 0.0077, … • xml, email, model, collect, judgment, rank, subtopic, … (0.0678, 0.0197, 0.0191, 0.0187, 0.0102, 0.0097, 0.0079) • probabilist 0.0778, model 0.0432, logic 0.0404, ir 0.0338, boolean 0.0281, algebra 0.0200, estimate 0.0119, weight 0.0111, … • model 0.1687, language 0.0753, estimate 0.0520, parameter 0.0281, distribution 0.0268, probable 0.0205, smooth 0.0198, markov 0.0137, likelihood 0.0059, …]

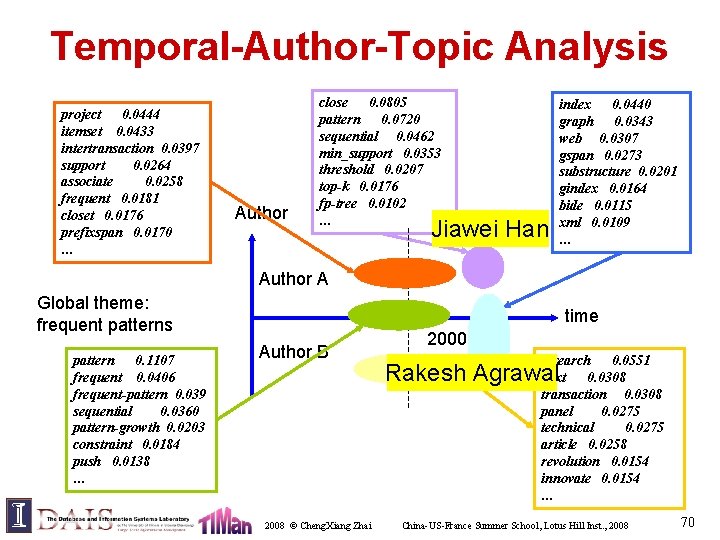

Temporal-Author-Topic Analysis [Figure: the global theme “frequent patterns” (pattern 0.1107, frequent 0.0406, frequent-pattern 0.039, sequential 0.0360, pattern-growth 0.0203, constraint 0.0184, push 0.0138, …) and its variations over time (around 2000) for Author A (Jiawei Han) and Author B (Rakesh Agrawal). Variant word distributions: project 0.0444, itemset 0.0433, intertransaction 0.0397, support 0.0264, associate 0.0258, frequent 0.0181, closet 0.0176, prefixspan 0.0170, … • close 0.0805, pattern 0.0720, sequential 0.0462, min_support 0.0353, threshold 0.0207, top-k 0.0176, fp-tree 0.0102, … • index 0.0440, graph 0.0343, web 0.0307, gspan 0.0273, substructure 0.0201, gindex 0.0164, bide 0.0115, xml 0.0109, … • research 0.0551, next 0.0308, transaction 0.0308, panel 0.0275, technical 0.0275, article 0.0258, revolution 0.0154, innovate 0.0154, …]

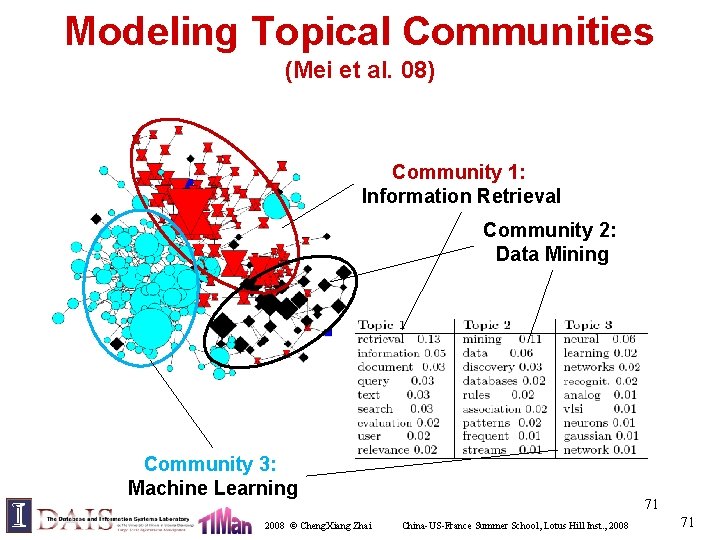

Modeling Topical Communities (Mei et al. 08) • Community 1: Information Retrieval • Community 2: Data Mining • Community 3: Machine Learning

Other Extensions (LDA Extensions) • Many extensions of LDA, mostly done by David Blei, Andrew McCallum and their co-authors • Some examples: – Hierarchical topic models [Blei et al. 03] – Modeling annotated data [Blei & Jordan 03] – Dynamic topic models [Blei & Lafferty 06] – Pachinko allocation [Li & McCallum 06] • Also, some specific context extensions of PLSA, e.g., author-topic model [Steyvers et al. 04]

Future Research Directions • Topic models for text mining – Evaluation of topic models – Improve the efficiency of estimation and inference – Incorporate linguistic knowledge – Applications in new domains and for new tasks • Text mining in general – Combination of NLP-style and DM-style mining algorithms – Integrated mining of text (unstructured) and structured data (e.g., Text OLAP) – Interactive mining: • Incorporate user constraints and support iterative mining • Design and implement mining languages

Lecture 5: Key Points • Topic models coupled with topic labeling are quite useful for extracting and modeling subtopics in text • Adding context variables significantly increases a topic model’s capacity for text mining – Enables interpretation of topics in context – Accommodates variation analysis and correlation analysis of topics over context • Users’ preferences and domain knowledge can be added as priors or soft constraints

Readings • PLSA: – http://www.cs.brown.edu/~th/papers/Hofmann-UAI99.pdf • LDA: – http://www.cs.princeton.edu/~blei/papers/BleiNgJordan2003.pdf – Many recent extensions, mostly done by David Blei and Andrew McCallum • CPLSA: – http://sifaka.cs.uiuc.edu/czhai/pub/kdd06-mix.pdf – http://sifaka.cs.uiuc.edu/czhai/pub/www08-net.pdf

Discussion • Topic models for mining multimedia data – Simultaneous modeling of text and images • Cross-media analysis – Text provides context to analyze images and vice versa

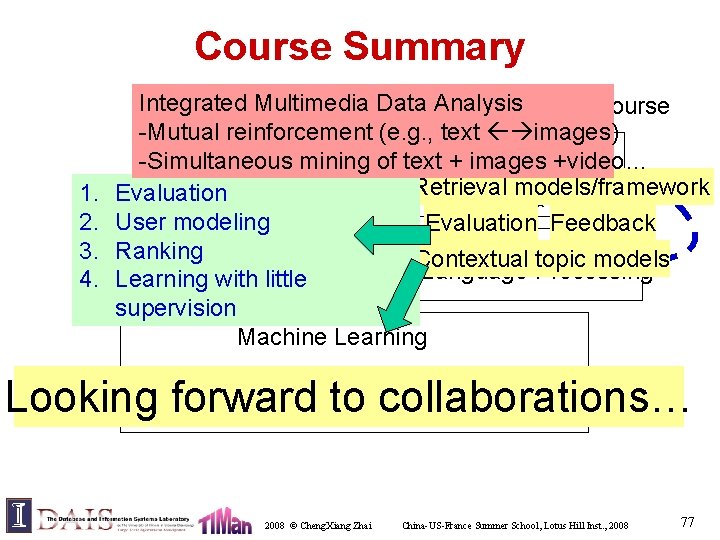

Course Summary [Figure: scope of the course, spanning text data and multimedia data. Information retrieval: retrieval models/framework, evaluation, user modeling, feedback, ranking. Contextual topic models; learning with little supervision. Integrated multimedia data analysis: mutual reinforcement (e.g., text ↔ images), simultaneous mining of text + images + video… Related fields: computer vision, natural language processing, machine learning, statistics.] Looking forward to collaborations…

Thank You!
