Probabilistic Robotics Bayes Filter Implementations Gaussian filters Kalman


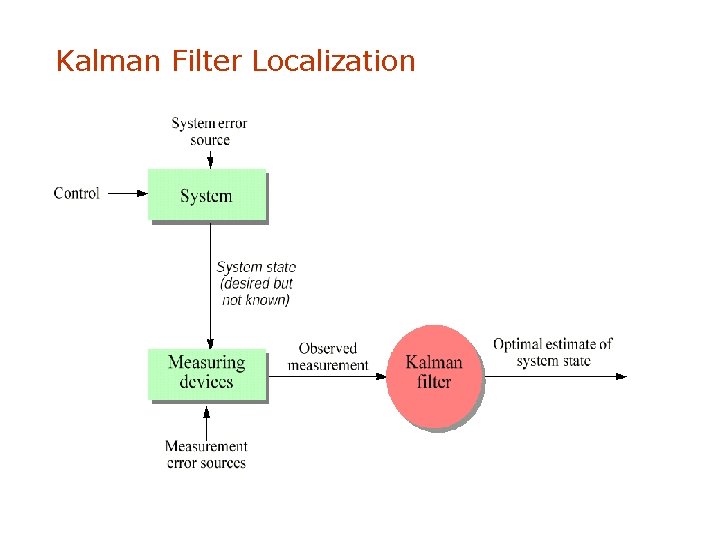

Kalman Filter Localization

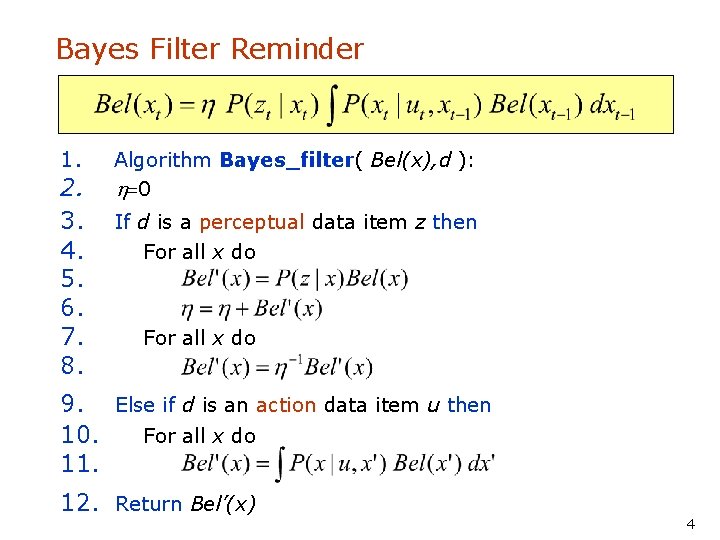

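Bayes Filter Reminder

1. Algorithm Bayes_filter($Bel(x)$, $d$):
2. $\eta = 0$
3. If $d$ is a perceptual data item $z$ then
4.     For all $x$ do
5.         $Bel'(x) = P(z \mid x)\,Bel(x)$
6.         $\eta = \eta + Bel'(x)$
7.     For all $x$ do
8.         $Bel'(x) = \eta^{-1}\,Bel'(x)$
9. Else if $d$ is an action data item $u$ then
10.     For all $x$ do
11.         $Bel'(x) = \int P(x \mid u, x')\,Bel(x')\,dx'$
12. Return $Bel'(x)$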
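As a rough illustration, the two branches of the Bayes filter can be sketched for a finite state space, where the integral becomes a sum. The door example, its probabilities, and the names `correct`, `predict`, `sensor_model`, and `motion_model` are assumptions chosen for illustration; they do not come from the slides.

```python
def correct(belief, z, sensor_model):
    """Perceptual update: Bel'(x) = eta^-1 * P(z | x) * Bel(x)."""
    unnormalized = {x: sensor_model(z, x) * p for x, p in belief.items()}
    eta = sum(unnormalized.values())
    if eta == 0.0:
        raise ValueError("measurement has zero probability under every state")
    return {x: p / eta for x, p in unnormalized.items()}


def predict(belief, u, motion_model):
    """Action update: Bel'(x) = sum over x' of P(x | u, x') * Bel(x')."""
    return {
        x: sum(motion_model(x, u, x_prev) * p for x_prev, p in belief.items())
        for x in belief
    }


# Hypothetical two-state world: a door that is either open or closed.
def sensor_model(z, x):
    table = {("sense_open", "open"): 0.6, ("sense_open", "closed"): 0.3,
             ("sense_closed", "open"): 0.4, ("sense_closed", "closed"): 0.7}
    return table[(z, x)]


def motion_model(x, u, x_prev):
    # Assumed effect of "push": a closed door opens with probability 0.8.
    table = {("open", "open"): 1.0, ("closed", "open"): 0.0,
             ("open", "closed"): 0.8, ("closed", "closed"): 0.2}
    if u == "push":
        return table[(x, x_prev)]
    return float(x == x_prev)  # any other action leaves the state unchanged


prior = {"open": 0.5, "closed": 0.5}
after_z = correct(prior, "sense_open", sensor_model)
after_u = predict(after_z, "push", motion_model)
```

The correction step sharpens the belief toward states that explain the measurement, while the prediction step redistributes probability mass according to the motion model.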

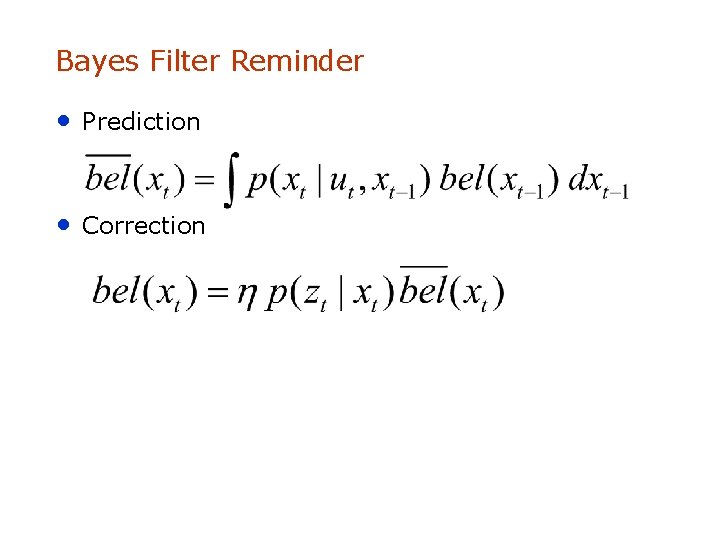

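Bayes Filter Reminder

• Prediction: $\overline{bel}(x_t) = \int p(x_t \mid u_t, x_{t-1})\,bel(x_{t-1})\,dx_{t-1}$
• Correction: $bel(x_t) = \eta\,p(z_t \mid x_t)\,\overline{bel}(x_t)$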

Kalman Filter

• Bayes filter with Gaussians
• Developed in the late 1950s
• Most relevant Bayes filter variant in practice
• Applications range from economics, weather forecasting, and satellite navigation to robotics and many more.
• The Kalman filter "algorithm" is a couple of matrix multiplications!

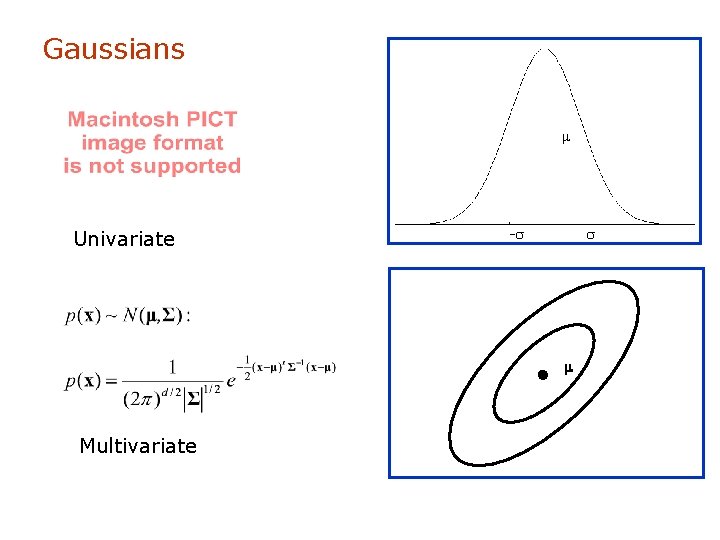

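Gaussians

• Univariate: $p(x) \sim N(\mu, \sigma^2)$, with $p(x) = \frac{1}{\sqrt{2\pi}\,\sigma}\, e^{-\frac{1}{2}\frac{(x-\mu)^2}{\sigma^2}}$
• Multivariate: $p(\mathbf{x}) \sim N(\boldsymbol{\mu}, \Sigma)$, with $p(\mathbf{x}) = \frac{1}{(2\pi)^{d/2}\,|\Sigma|^{1/2}}\, e^{-\frac{1}{2}(\mathbf{x}-\boldsymbol{\mu})^T \Sigma^{-1} (\mathbf{x}-\boldsymbol{\mu})}$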

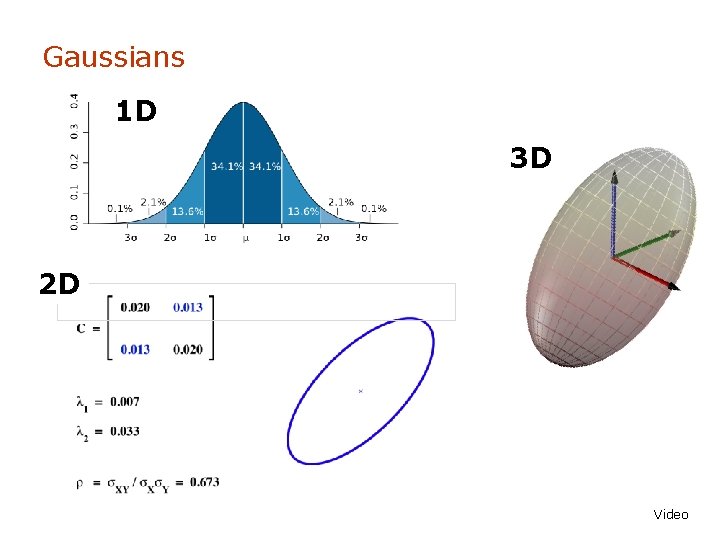

Gaussians: 1D, 2D, and 3D examples (video)

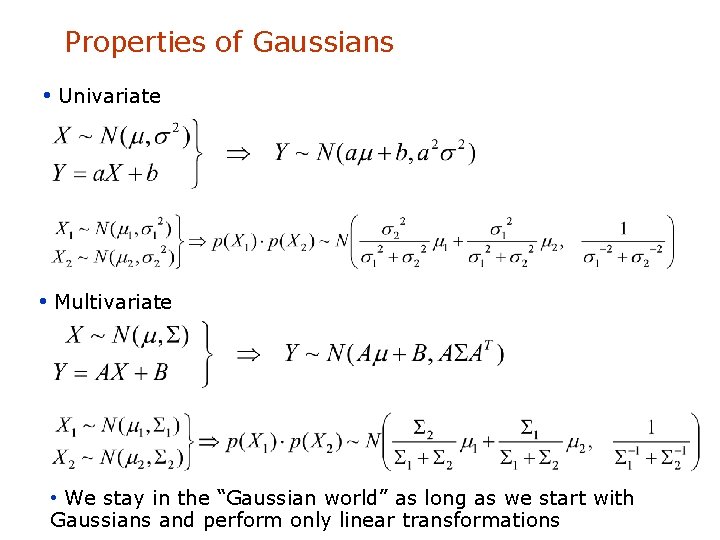

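Properties of Gaussians

• Univariate: if $X \sim N(\mu, \sigma^2)$ and $Y = aX + b$, then $Y \sim N(a\mu + b,\ a^2\sigma^2)$.
• Multivariate: if $X \sim N(\mu, \Sigma)$ and $Y = AX + B$, then $Y \sim N(A\mu + B,\ A\Sigma A^T)$.
• We stay in the “Gaussian world” as long as we start with Gaussians and perform only linear transformations.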
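The closure of Gaussians under linear transformations can be checked numerically. The sketch below, with hypothetical helper names `linear_transform` and `empirical_moments`, compares the closed-form moments of $Y = aX + b$ with moments estimated from samples; the specific numbers are arbitrary.

```python
import random


def linear_transform(mu, var, a, b):
    """Closed-form moments of Y = a*X + b when X ~ N(mu, var)."""
    return a * mu + b, a * a * var


def empirical_moments(mu, var, a, b, n=20000, seed=0):
    """Estimate mean and variance of Y = a*X + b from n random samples."""
    rng = random.Random(seed)
    ys = [a * rng.gauss(mu, var ** 0.5) + b for _ in range(n)]
    mean = sum(ys) / n
    variance = sum((y - mean) ** 2 for y in ys) / n
    return mean, variance


# X ~ N(1, 1.5^2), Y = 2X - 1
mean_y, var_y = linear_transform(1.0, 2.25, 2.0, -1.0)
sample_mean, sample_var = empirical_moments(1.0, 2.25, 2.0, -1.0)
```

The sampled moments should agree with the closed-form values up to sampling noise, which is why a Kalman filter only needs to track a mean and a covariance.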

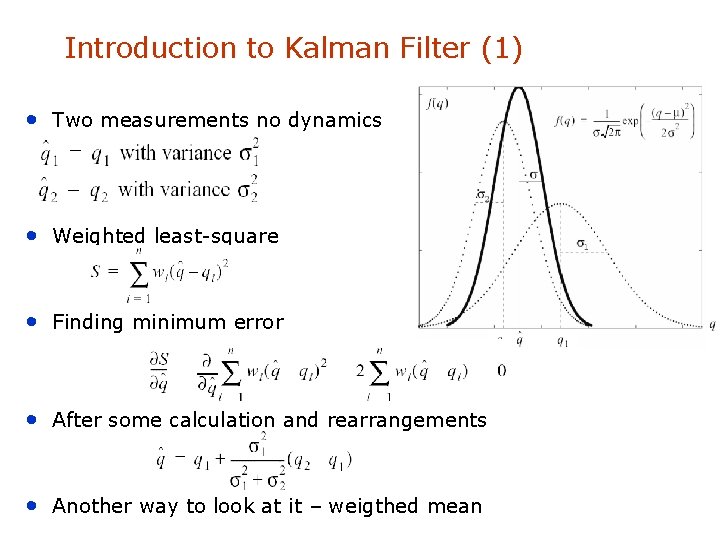

Introduction to Kalman Filter (1)

• Two measurements, no dynamics
• Weighted least squares
• Finding the minimum error
• After some calculation and rearrangement
• Another way to look at it: weighted mean
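A minimal sketch of this idea, assuming two independent scalar measurements $z_1$, $z_2$ of the same quantity with variances $\sigma_1^2$, $\sigma_2^2$: minimizing the weighted squared error $\sum_i (x - z_i)^2 / \sigma_i^2$ gives a variance-weighted mean, which can equivalently be written as a correction of $z_1$ by a gain. The function names are illustrative only.

```python
def fuse(z1, var1, z2, var2):
    """Weighted least-squares estimate from two scalar measurements."""
    x_hat = (var2 * z1 + var1 * z2) / (var1 + var2)
    var_hat = (var1 * var2) / (var1 + var2)
    return x_hat, var_hat


def fuse_gain_form(z1, var1, z2, var2):
    """Same estimate, written as z1 corrected by a gain K."""
    K = var1 / (var1 + var2)
    return z1 + K * (z2 - z1), (1.0 - K) * var1


estimate, variance = fuse(10.0, 4.0, 12.0, 1.0)
estimate_k, variance_k = fuse_gain_form(10.0, 4.0, 12.0, 1.0)
```

The fused variance is smaller than either input variance, and the estimate lies closer to the more certain measurement.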

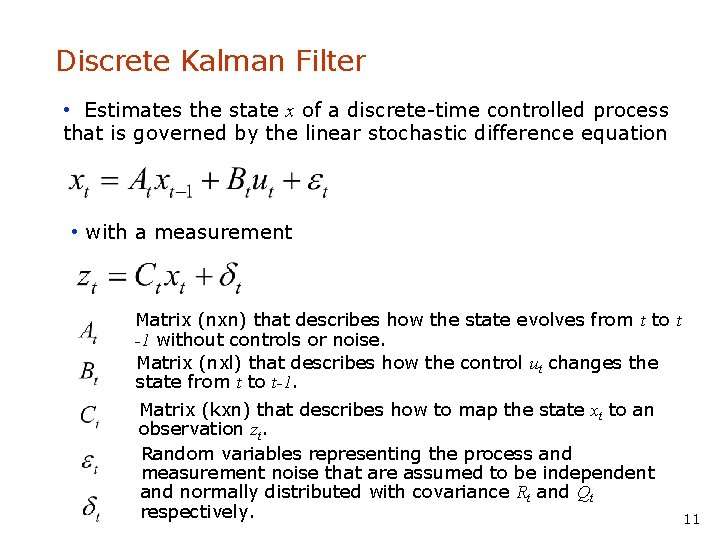

Discrete Kalman Filter

• Estimates the state $x$ of a discrete-time controlled process that is governed by the linear stochastic difference equation $x_t = A_t x_{t-1} + B_t u_t + \varepsilon_t$
• with a measurement $z_t = C_t x_t + \delta_t$

• $A_t$: Matrix ($n \times n$) that describes how the state evolves from $t-1$ to $t$ without controls or noise.
• $B_t$: Matrix ($n \times l$) that describes how the control $u_t$ changes the state from $t-1$ to $t$.
• $C_t$: Matrix ($k \times n$) that describes how to map the state $x_t$ to an observation $z_t$.
• $\varepsilon_t$, $\delta_t$: Random variables representing the process and measurement noise that are assumed to be independent and normally distributed with covariance $R_t$ and $Q_t$ respectively.

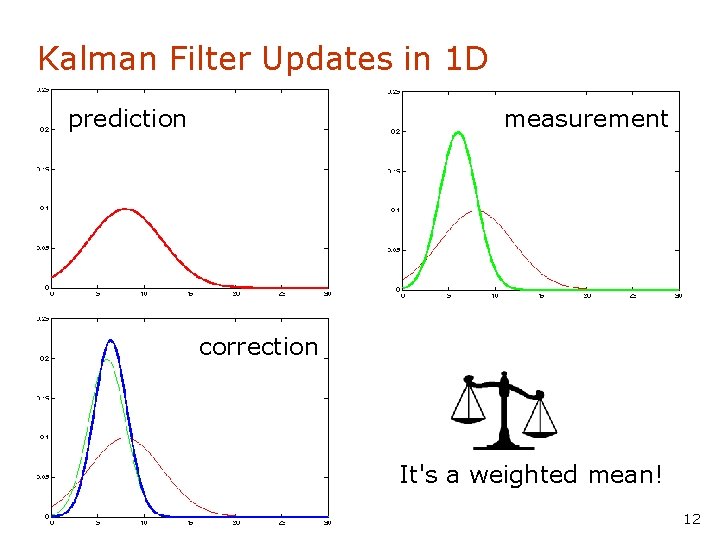

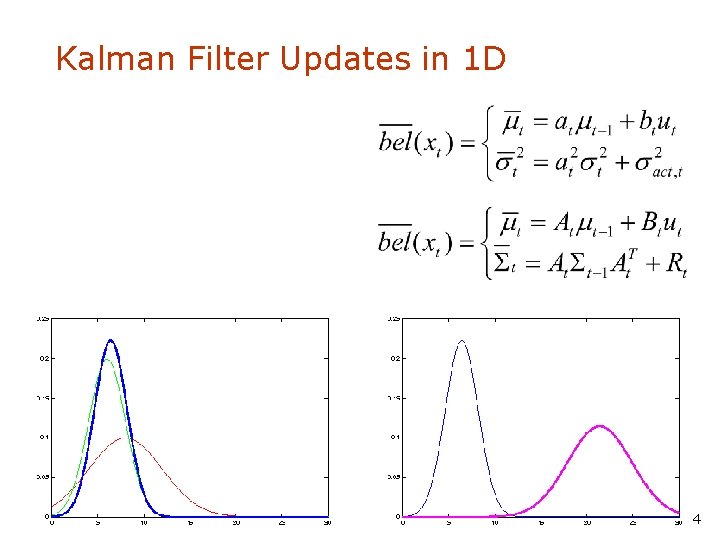

Kalman Filter Updates in 1D: prediction, measurement, correction. It's a weighted mean!

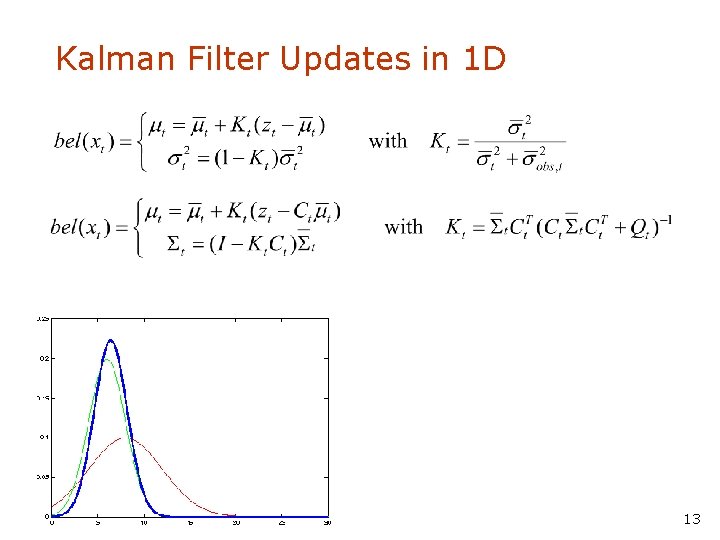

Kalman Filter Updates in 1D
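The one-dimensional updates can be sketched as two small functions. The scalar model $x_t = a\,x_{t-1} + b\,u_t + \varepsilon_t$, $z_t = x_t + \delta_t$ and the parameter names (`var_act` for the motion noise variance, `var_obs` for the measurement noise variance) are assumptions made for this illustration.

```python
def predict_1d(mu, var, u, a=1.0, b=1.0, var_act=0.0):
    """Prediction: push the belief through the linear motion model."""
    mu_bar = a * mu + b * u
    var_bar = a * a * var + var_act
    return mu_bar, var_bar


def correct_1d(mu_bar, var_bar, z, var_obs):
    """Correction: blend prediction and measurement with the Kalman gain."""
    K = var_bar / (var_bar + var_obs)
    mu = mu_bar + K * (z - mu_bar)   # weighted mean of mu_bar and z
    var = (1.0 - K) * var_bar        # never larger than var_bar
    return mu, var


mu_bar, var_bar = predict_1d(0.0, 1.0, u=2.0, var_act=1.0)
mu, var = correct_1d(mu_bar, var_bar, z=3.0, var_obs=2.0)
```

Prediction increases the variance because motion noise is added, while correction reduces it; with equal prediction and measurement variances the gain is 0.5 and the estimate lands halfway between them.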

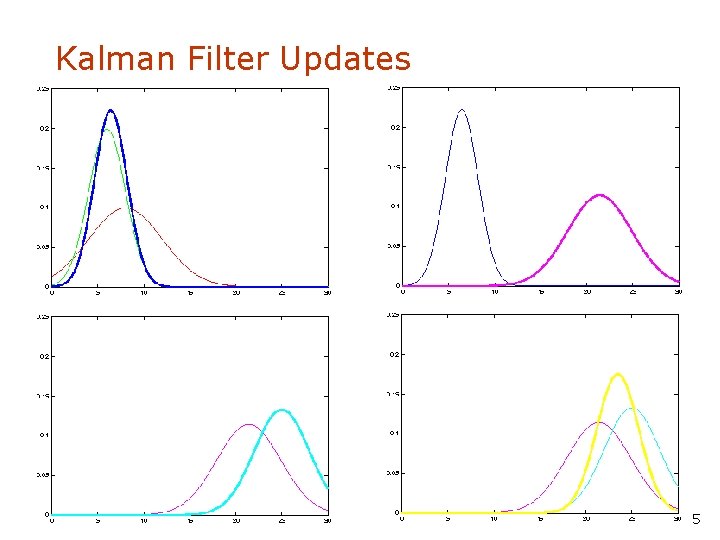

Kalman Filter Updates

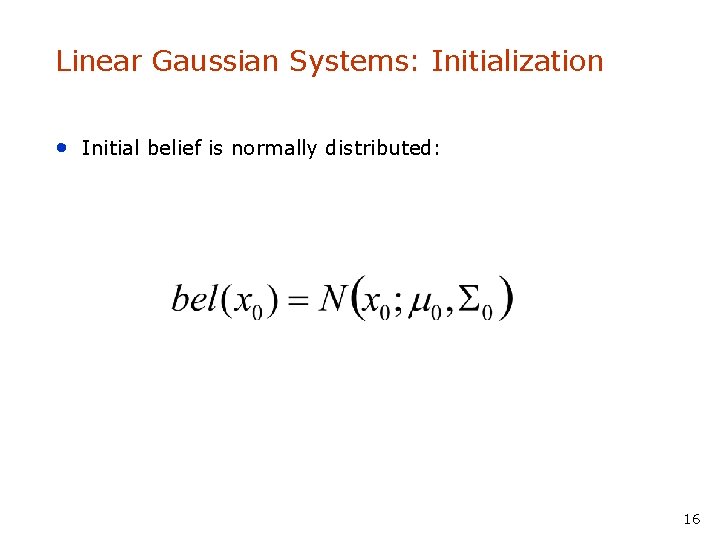

Linear Gaussian Systems: Initialization

• Initial belief is normally distributed: $bel(x_0) = N(x_0;\ \mu_0, \Sigma_0)$

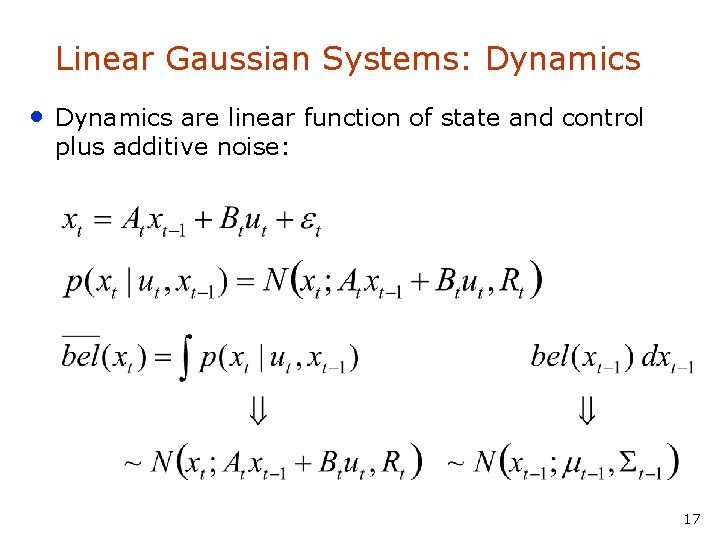

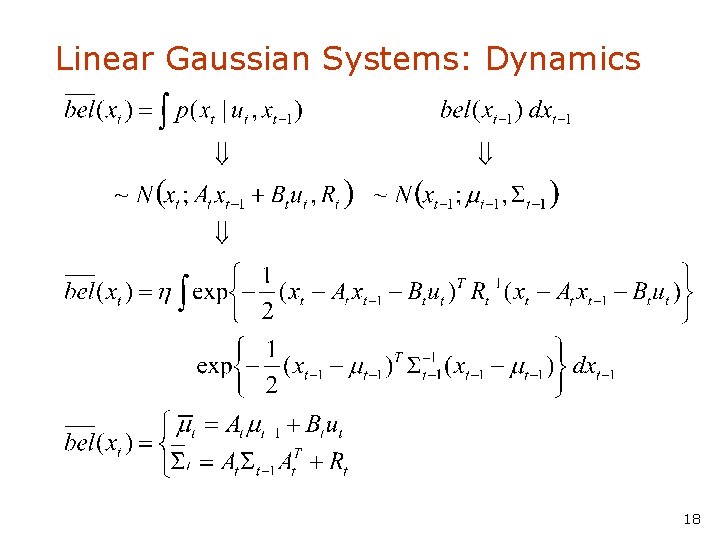

Linear Gaussian Systems: Dynamics

• Dynamics are a linear function of state and control plus additive noise: $x_t = A_t x_{t-1} + B_t u_t + \varepsilon_t$

Linear Gaussian Systems: Dynamics

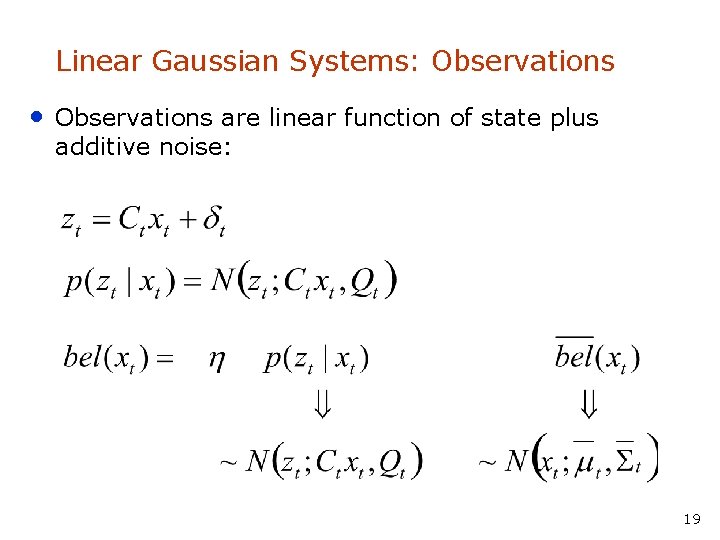

Linear Gaussian Systems: Observations

• Observations are a linear function of state plus additive noise: $z_t = C_t x_t + \delta_t$

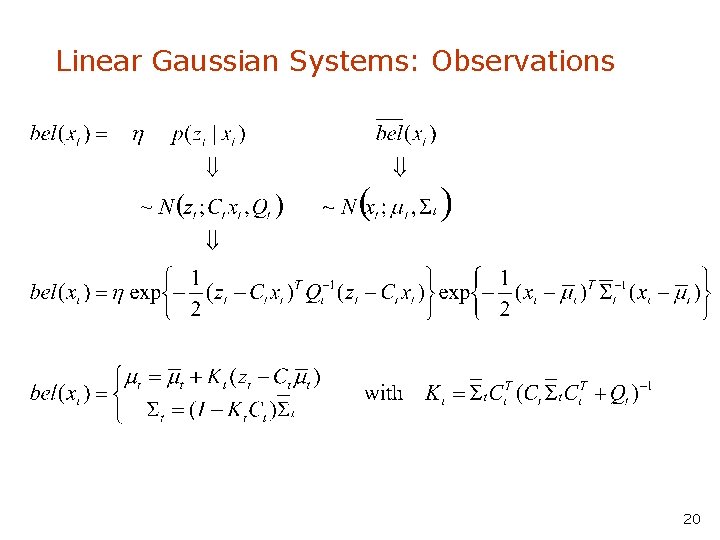

Linear Gaussian Systems: Observations

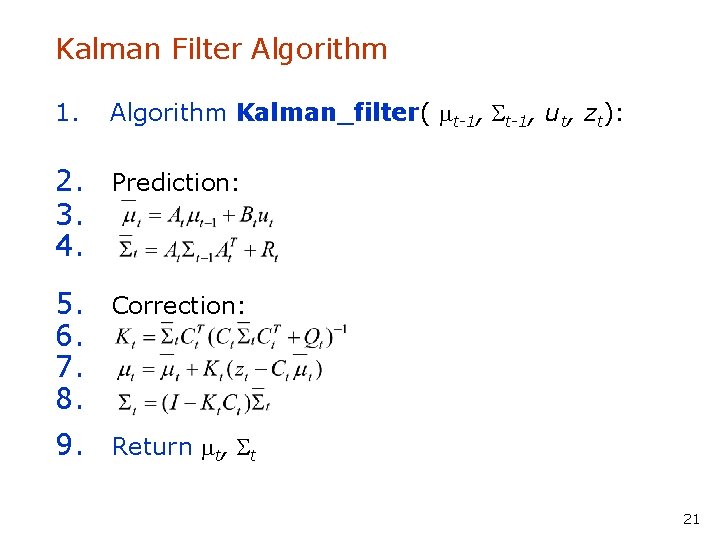

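Kalman Filter Algorithm

1. Algorithm Kalman_filter($\mu_{t-1}$, $\Sigma_{t-1}$, $u_t$, $z_t$):
2. Prediction:
3.     $\bar{\mu}_t = A_t \mu_{t-1} + B_t u_t$
4.     $\bar{\Sigma}_t = A_t \Sigma_{t-1} A_t^T + R_t$
5. Correction:
6.     $K_t = \bar{\Sigma}_t C_t^T (C_t \bar{\Sigma}_t C_t^T + Q_t)^{-1}$
7.     $\mu_t = \bar{\mu}_t + K_t (z_t - C_t \bar{\mu}_t)$
8.     $\Sigma_t = (I - K_t C_t)\,\bar{\Sigma}_t$
9. Return $\mu_t$, $\Sigma_t$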
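One way to sketch the Kalman filter step in code, using only the standard library, is shown below. Matrices are nested lists and vectors are column matrices; the helper functions and the constant-velocity example values are assumptions for illustration, and a numerical library would normally replace the hand-written matrix routines.

```python
def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def add(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def sub(A, B):
    return [[a - b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def inverse(A):
    """Gauss-Jordan inverse with partial pivoting (adequate for small matrices)."""
    n = len(A)
    aug = [list(map(float, row)) + unit for row, unit in zip(A, identity(n))]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [v - f * w for v, w in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def kalman_filter(mu, Sigma, u, z, A, B, C, R, Q):
    # Prediction: propagate mean and covariance through the linear dynamics.
    mu_bar = add(matmul(A, mu), matmul(B, u))
    Sigma_bar = add(matmul(matmul(A, Sigma), transpose(A)), R)
    # Correction: weigh the innovation z - C*mu_bar by the Kalman gain.
    S = add(matmul(matmul(C, Sigma_bar), transpose(C)), Q)
    K = matmul(matmul(Sigma_bar, transpose(C)), inverse(S))
    mu_new = add(mu_bar, matmul(K, sub(z, matmul(C, mu_bar))))
    Sigma_new = matmul(sub(identity(len(mu)), matmul(K, C)), Sigma_bar)
    return mu_new, Sigma_new


# Hypothetical constant-velocity model: state = [position, velocity],
# control = acceleration, measurement = position only.
A = [[1.0, 1.0], [0.0, 1.0]]
B = [[0.5], [1.0]]
C = [[1.0, 0.0]]
R = [[0.1, 0.0], [0.0, 0.1]]
Q = [[1.0]]
mu2, Sigma2 = kalman_filter([[0.0], [0.0]], identity(2), [[0.0]], [[1.0]], A, B, C, R, Q)
```

Although only the position is measured, the velocity estimate is also corrected, because the predicted covariance couples the two state components.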

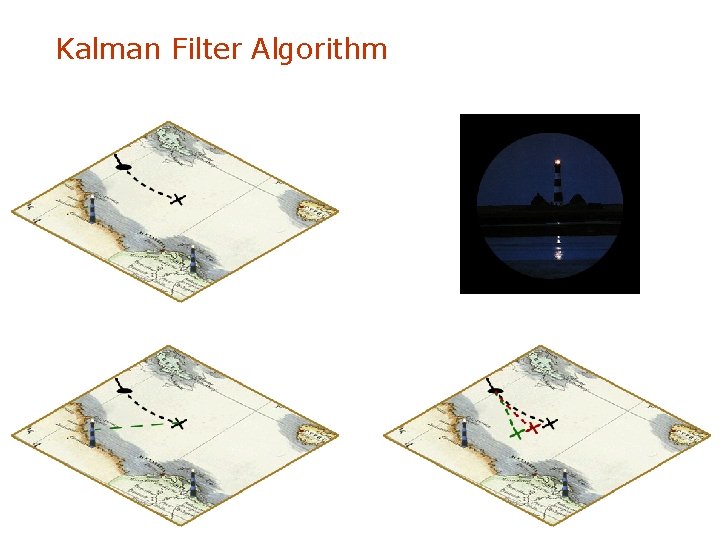

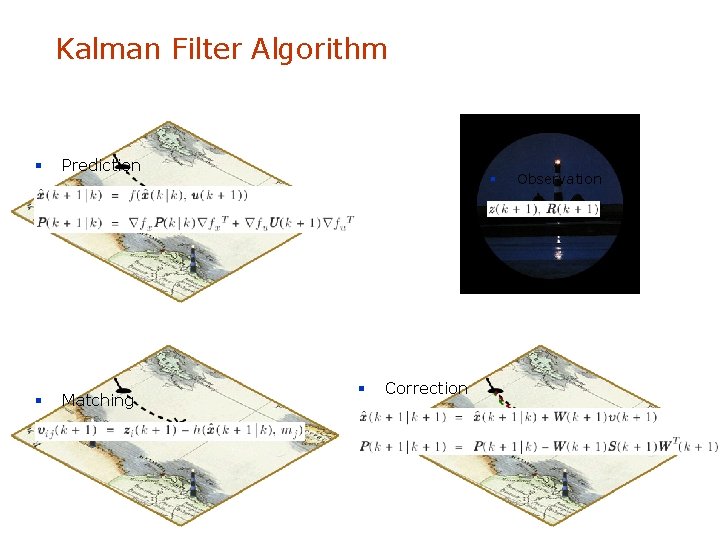

Kalman Filter Algorithm

• Prediction
• Observation
• Matching
• Correction

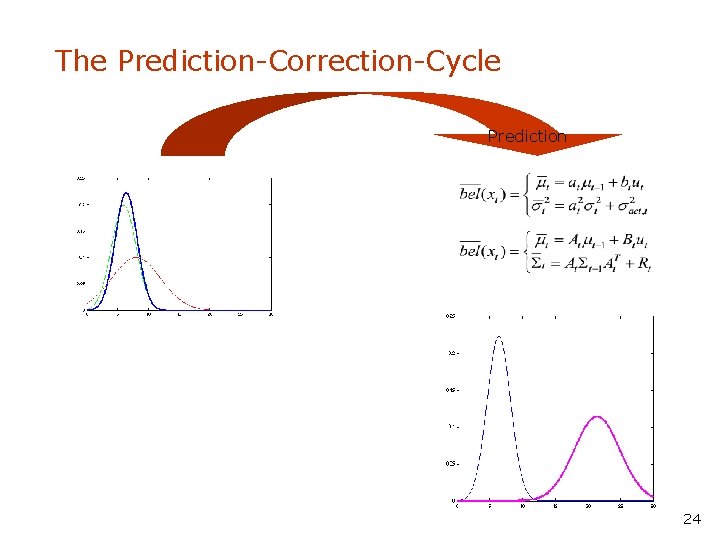

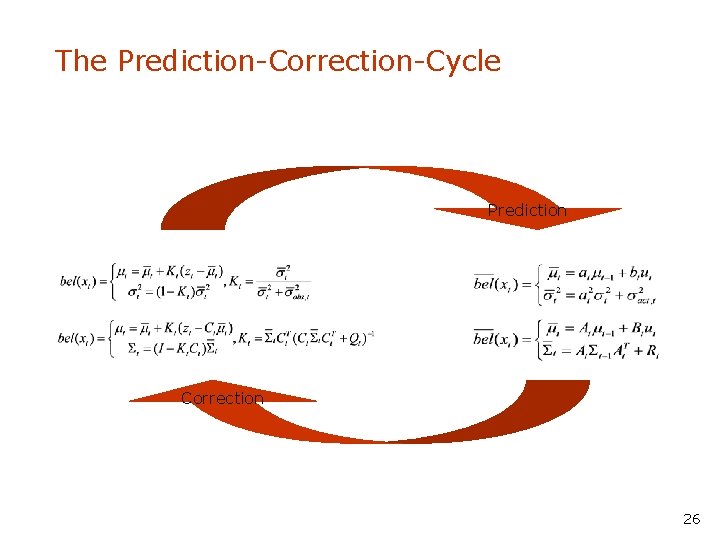

The Prediction-Correction-Cycle Prediction 24

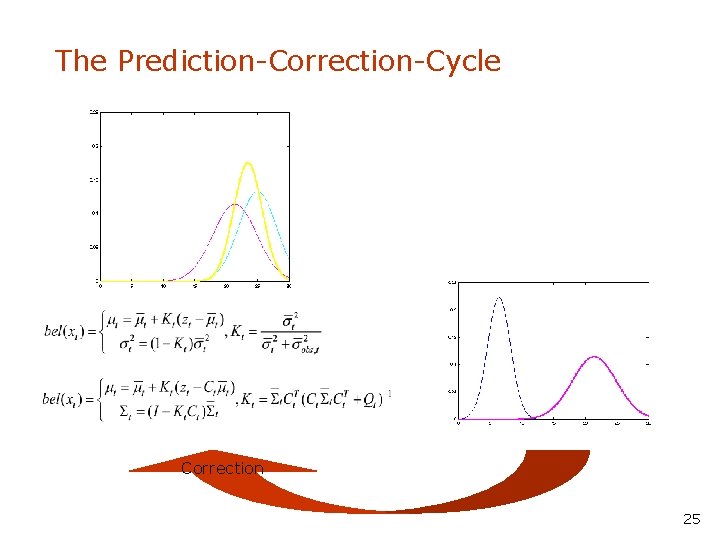

The Prediction-Correction-Cycle Correction 25

The Prediction-Correction-Cycle Prediction Correction 26

Kalman Filter Summary

• Highly efficient: polynomial in measurement dimensionality $k$ and state dimensionality $n$: $O(k^{2.376} + n^2)$
• Optimal for linear Gaussian systems!
• Most robotics systems are nonlinear!

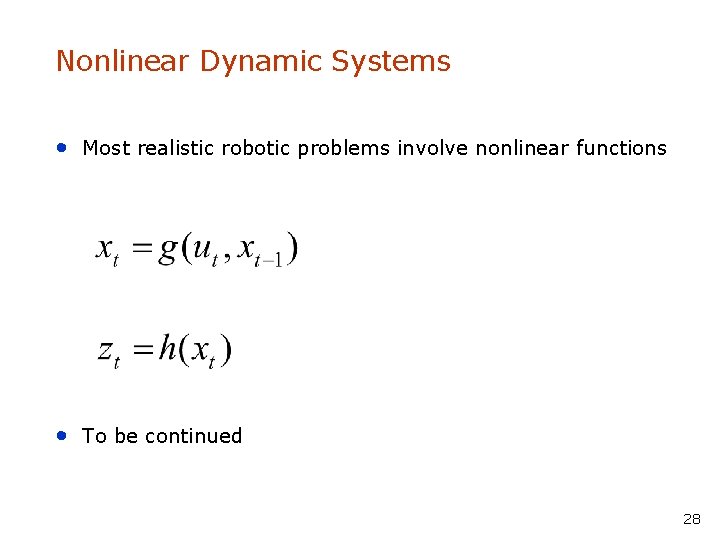

Nonlinear Dynamic Systems

• Most realistic robotic problems involve nonlinear functions
• To be continued

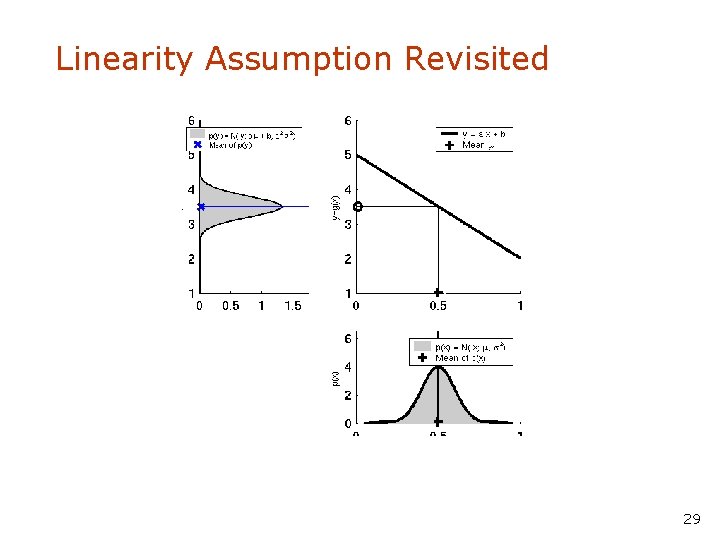

Linearity Assumption Revisited 29

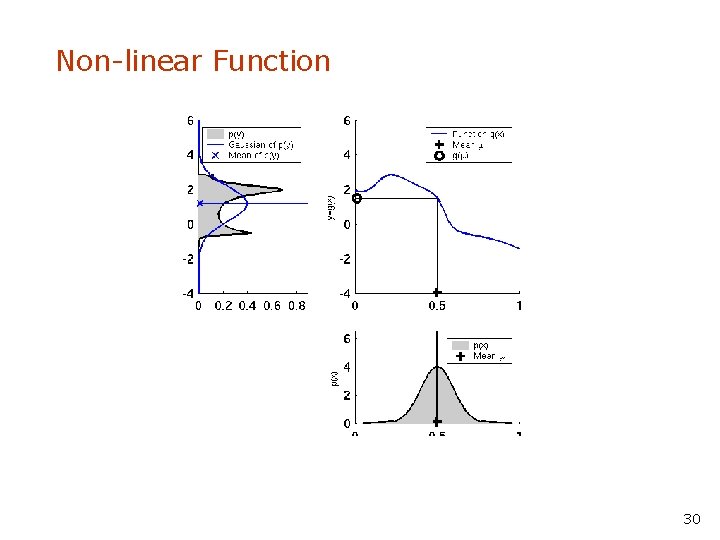

Non-linear Function 30

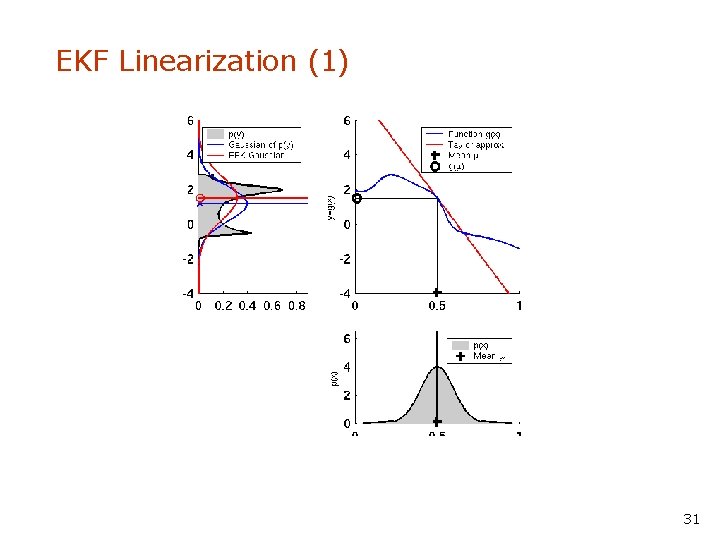

EKF Linearization (1) 31

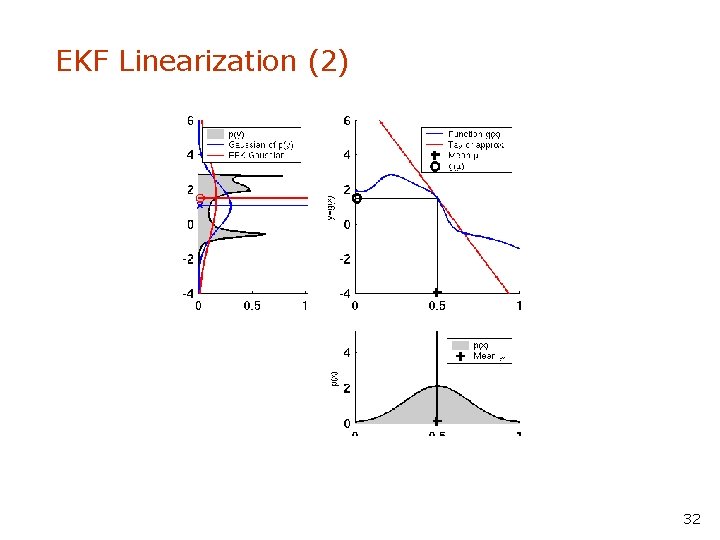

EKF Linearization (2) 32

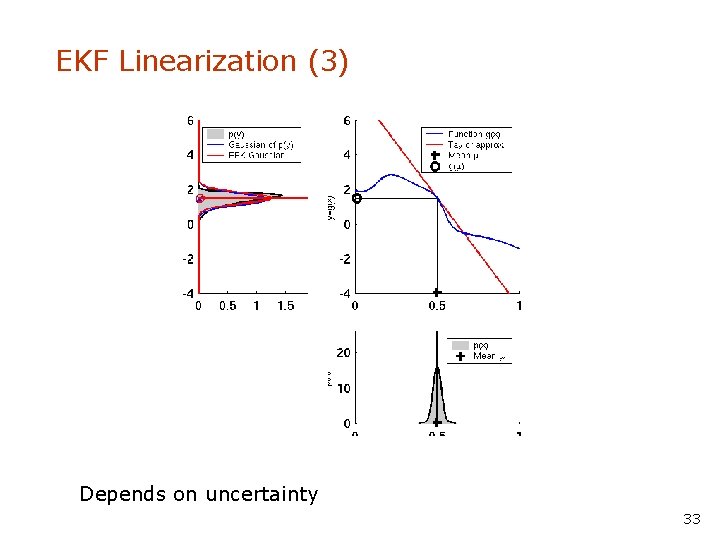

EKF Linearization (3) Depends on uncertainty 33

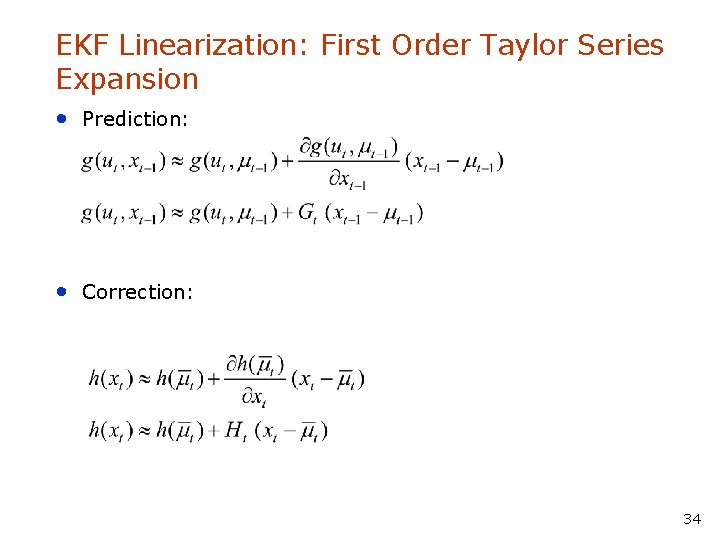

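EKF Linearization: First Order Taylor Series Expansion

• Prediction: $g(u_t, x_{t-1}) \approx g(u_t, \mu_{t-1}) + G_t\,(x_{t-1} - \mu_{t-1})$, with $G_t = \frac{\partial g(u_t, \mu_{t-1})}{\partial x_{t-1}}$
• Correction: $h(x_t) \approx h(\bar{\mu}_t) + H_t\,(x_t - \bar{\mu}_t)$, with $H_t = \frac{\partial h(\bar{\mu}_t)}{\partial x_t}$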

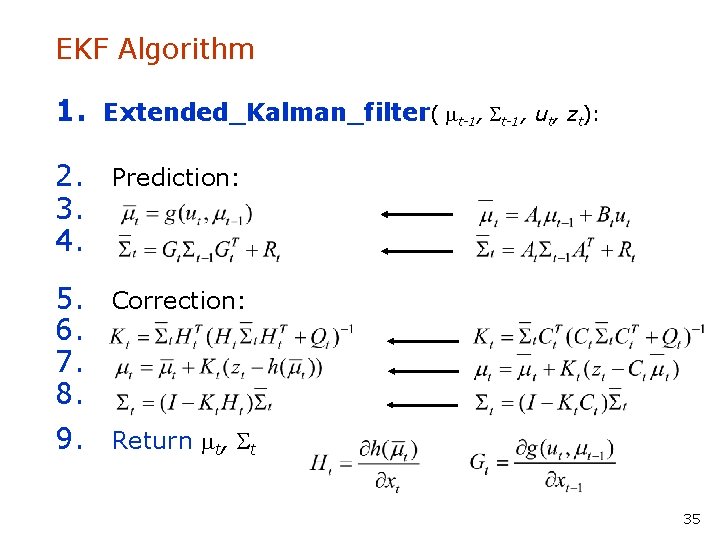

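EKF Algorithm

1. Extended_Kalman_filter($\mu_{t-1}$, $\Sigma_{t-1}$, $u_t$, $z_t$):
2. Prediction:
3.     $\bar{\mu}_t = g(u_t, \mu_{t-1})$
4.     $\bar{\Sigma}_t = G_t \Sigma_{t-1} G_t^T + R_t$
5. Correction:
6.     $K_t = \bar{\Sigma}_t H_t^T (H_t \bar{\Sigma}_t H_t^T + Q_t)^{-1}$
7.     $\mu_t = \bar{\mu}_t + K_t (z_t - h(\bar{\mu}_t))$
8.     $\Sigma_t = (I - K_t H_t)\,\bar{\Sigma}_t$
9. Return $\mu_t$, $\Sigma_t$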
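For a scalar state, the EKF step reduces to a few lines. The sketch below assumes the caller supplies the motion model `g`, the measurement model `h`, and their derivatives `G` and `H`; the example models ($g(u, x) = x + u$, $h(x) = x^2$) and all numbers are hypothetical.

```python
def ekf_step(mu, var, u, z, g, G, h, H, R, Q):
    """One scalar EKF iteration; R and Q are motion and measurement noise variances."""
    # Prediction: nonlinear mean propagation, linearized covariance propagation.
    mu_bar = g(u, mu)
    G_t = G(u, mu)
    var_bar = G_t * var * G_t + R
    # Correction: linearize h around the predicted mean.
    H_t = H(mu_bar)
    K = var_bar * H_t / (H_t * var_bar * H_t + Q)
    mu_new = mu_bar + K * (z - h(mu_bar))
    var_new = (1.0 - K * H_t) * var_bar
    return mu_new, var_new


# Hypothetical models: additive motion, quadratic measurement.
def g(u, x):
    return x + u


def G(u, x):
    return 1.0  # dg/dx


def h(x):
    return x * x


def H(x):
    return 2.0 * x  # dh/dx


mu_t, var_t = ekf_step(1.0, 0.5, u=1.0, z=4.2, g=g, G=G, h=h, H=H, R=0.1, Q=0.4)
```

The only differences from the linear filter are that the mean is propagated through `g` and `h` directly, while the covariance uses the derivatives evaluated at the current estimate.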

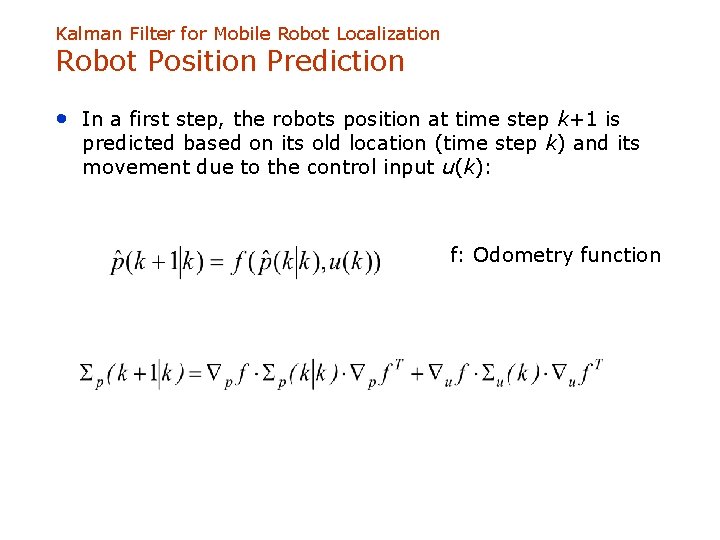

Kalman Filter for Mobile Robot Localization: Robot Position Prediction

• In a first step, the robot's position at time step $k+1$ is predicted based on its old location (time step $k$) and its movement due to the control input $u(k)$, using the odometry function $f$.

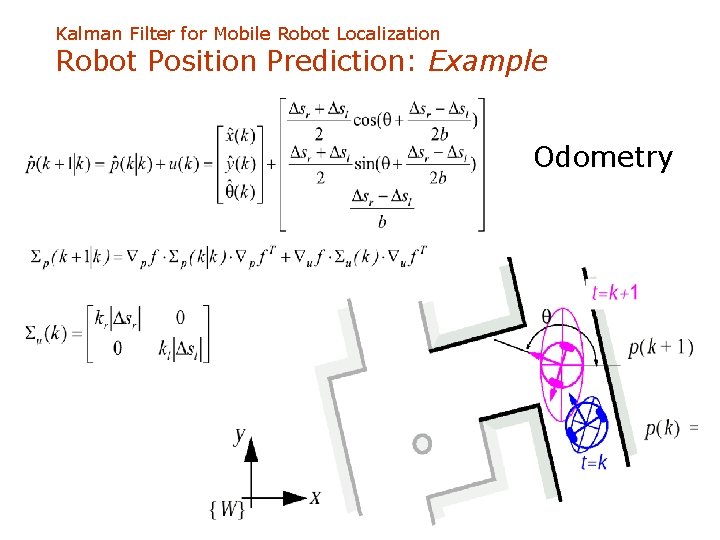

Kalman Filter for Mobile Robot Localization: Robot Position Prediction, Odometry Example
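As an illustration of an odometry function $f$, the sketch below assumes a differential-drive robot whose control input is the pair of wheel displacements; the slides do not confirm this model, and the names `odometry`, `odometry_pose_jacobian`, and `wheel_base` are hypothetical. The pose Jacobian is included because it is needed to propagate the pose covariance.

```python
import math


def odometry(pose, ds_left, ds_right, wheel_base):
    """Predict the new pose (x, y, theta) from left/right wheel displacements."""
    x, y, theta = pose
    ds = (ds_right + ds_left) / 2.0             # distance travelled by the center
    dtheta = (ds_right - ds_left) / wheel_base  # change in heading
    heading = theta + dtheta / 2.0              # average heading during the step
    return (x + ds * math.cos(heading),
            y + ds * math.sin(heading),
            theta + dtheta)


def odometry_pose_jacobian(pose, ds_left, ds_right, wheel_base):
    """Jacobian of the predicted pose with respect to the previous pose."""
    _, _, theta = pose
    ds = (ds_right + ds_left) / 2.0
    heading = theta + (ds_right - ds_left) / (2.0 * wheel_base)
    return [[1.0, 0.0, -ds * math.sin(heading)],
            [0.0, 1.0, ds * math.cos(heading)],
            [0.0, 0.0, 1.0]]


straight = odometry((0.0, 0.0, 0.0), 1.0, 1.0, wheel_base=0.5)
turn_in_place = odometry((0.0, 0.0, 0.0), -0.1, 0.1, wheel_base=0.5)
```

Equal wheel displacements move the robot straight ahead, and opposite displacements rotate it on the spot.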

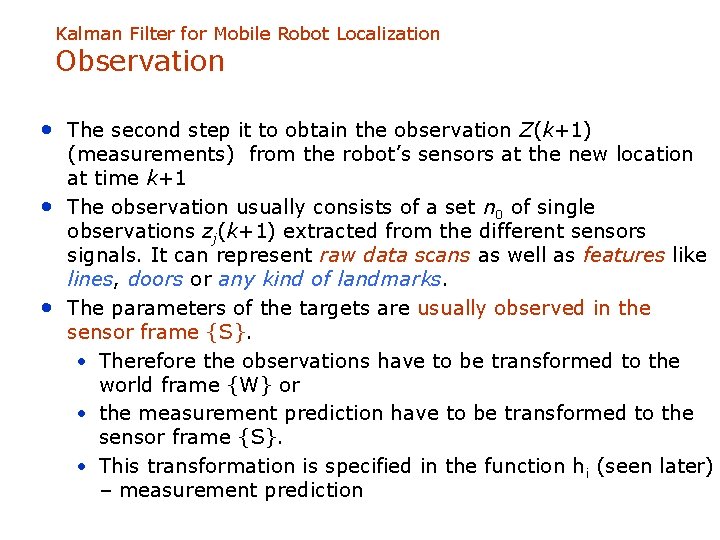

Kalman Filter for Mobile Robot Localization: Observation

• The second step is to obtain the observation $Z(k+1)$ (measurements) from the robot’s sensors at the new location at time $k+1$.
• The observation usually consists of a set of $n_0$ single observations $z_j(k+1)$ extracted from the different sensor signals. It can represent raw data scans as well as features like lines, doors, or any kind of landmarks.
• The parameters of the targets are usually observed in the sensor frame {S}.
  • Therefore the observations have to be transformed to the world frame {W}, or
  • the measurement predictions have to be transformed to the sensor frame {S}.
• This transformation is specified in the function $h_i$ (seen later, in the measurement prediction).

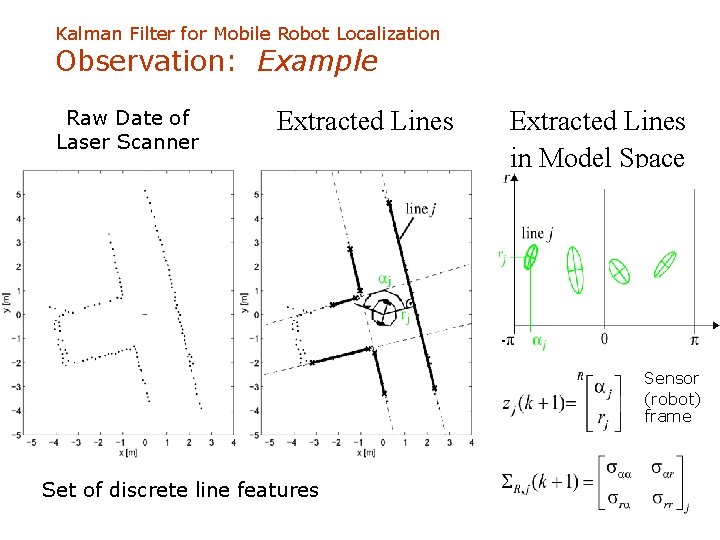

Kalman Filter for Mobile Robot Localization: Observation Example

Figure labels: raw data of laser scanner; extracted lines in model space; sensor (robot) frame; set of discrete line features.

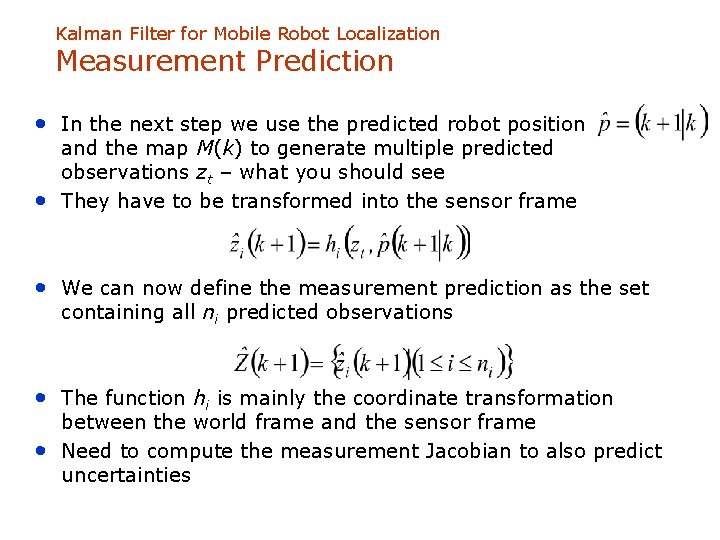

Kalman Filter for Mobile Robot Localization: Measurement Prediction

• In the next step we use the predicted robot position and the map $M(k)$ to generate multiple predicted observations $z_t$ (what you should see).
• They have to be transformed into the sensor frame.
• We can now define the measurement prediction as the set containing all $n_i$ predicted observations.
• The function $h_i$ is mainly the coordinate transformation between the world frame and the sensor frame.
• We need to compute the measurement Jacobian to also predict uncertainties.

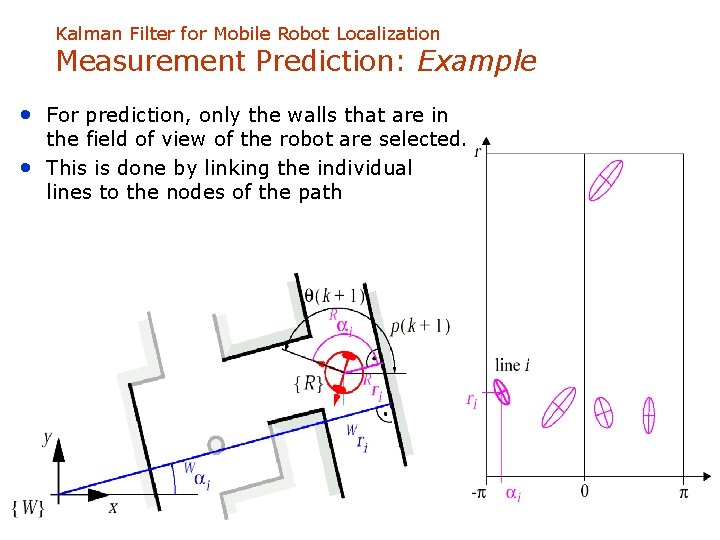

Kalman Filter for Mobile Robot Localization: Measurement Prediction Example

• For prediction, only the walls that are in the field of view of the robot are selected.
• This is done by linking the individual lines to the nodes of the path.

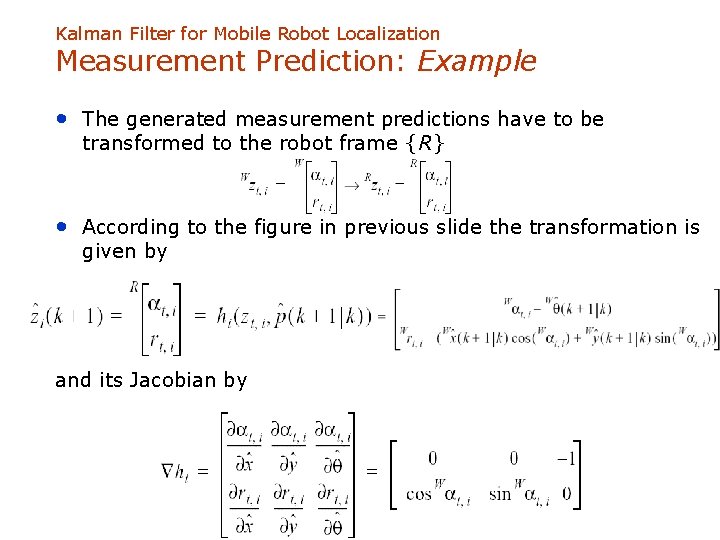

Kalman Filter for Mobile Robot Localization: Measurement Prediction Example

• The generated measurement predictions have to be transformed to the robot frame {R}.
• According to the figure on the previous slide, this transformation and its Jacobian follow from the predicted robot pose and the line parameters stored in the map.
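The slide's own equations are not preserved in this text, so the following is only a sketch under stated assumptions: each wall is stored in the map as a line in normal form $(\alpha, r)$ in the world frame (angle of the normal and distance from the origin), the robot pose is $(x, y, \theta)$, and the sensor frame coincides with the robot frame. The function names are hypothetical.

```python
import math


def normalize_angle(a):
    """Wrap an angle to the interval (-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def predict_line(pose, line_world):
    """Transform a world-frame line (alpha, r) into the robot frame."""
    x, y, theta = pose
    alpha_w, r_w = line_world
    alpha_r = normalize_angle(alpha_w - theta)
    # Subtract the projection of the robot position onto the line normal.
    # A negative result means the robot is on the far side of the line.
    r_r = r_w - (x * math.cos(alpha_w) + y * math.sin(alpha_w))
    return alpha_r, r_r


def line_jacobian(line_world):
    """Jacobian of (alpha_r, r_r) with respect to the robot pose (x, y, theta)."""
    alpha_w, _ = line_world
    return [[0.0, 0.0, -1.0],
            [-math.cos(alpha_w), -math.sin(alpha_w), 0.0]]


# A wall along x = 5 seen from a robot at (2, 1) facing the +y direction.
alpha_r, r_r = predict_line((2.0, 1.0, math.pi / 2.0), (0.0, 5.0))
H_line = line_jacobian((0.0, 5.0))
```

In this example the wall ends up 3 m away at an angle of -90 degrees in the robot frame, that is, directly to the robot's right.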

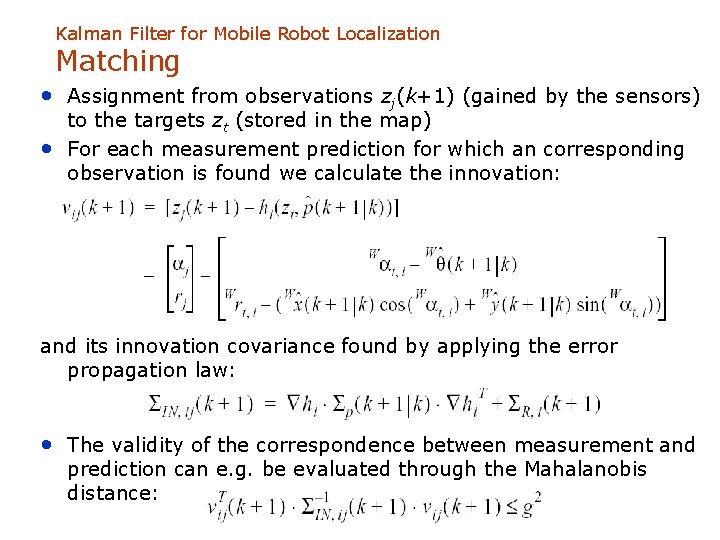

Kalman Filter for Mobile Robot Localization: Matching

• Assignment from observations $z_j(k+1)$ (gained by the sensors) to the targets $z_t$ (stored in the map).
• For each measurement prediction for which a corresponding observation is found, we calculate the innovation and its innovation covariance, found by applying the error propagation law.
• The validity of the correspondence between measurement and prediction can, e.g., be evaluated through the Mahalanobis distance.
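The matching step can be sketched as a validation gate on the squared Mahalanobis distance of the innovation. The sketch assumes two-parameter features, an innovation covariance of the form $S = H P H^T + R$ (with $P$ the predicted pose covariance and $R$ the observation noise), and a gate of 5.99, which is the 95% quantile of a chi-square distribution with two degrees of freedom. All names and numbers are illustrative.

```python
def mat_mul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def innovation_covariance(H, P, R):
    """Error propagation: S = H P H^T + R."""
    HPHt = mat_mul(mat_mul(H, P), transpose(H))
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(HPHt, R)]


def mahalanobis_sq(v, S):
    """v^T S^-1 v for a 2-vector v and a 2x2 covariance S."""
    (a, b), (c, d) = S
    det = a * d - b * c
    return (v[0] * (d * v[0] - b * v[1]) + v[1] * (-c * v[0] + a * v[1])) / det


def match(observations, predictions, covariances, gate_sq=5.99):
    """Pair each prediction with its closest observation inside the gate."""
    pairs = []
    for i, (z_hat, S) in enumerate(zip(predictions, covariances)):
        best = None
        for j, z in enumerate(observations):
            # Innovation; angular components would need wrapping in practice.
            v = (z[0] - z_hat[0], z[1] - z_hat[1])
            d2 = mahalanobis_sq(v, S)
            if d2 <= gate_sq and (best is None or d2 < best[1]):
                best = (j, d2)
        if best is not None:
            pairs.append((i, best[0]))
    return pairs


H = [[0.0, 0.0, -1.0], [-1.0, 0.0, 0.0]]
P = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.0025]]
R = [[0.0075, 0.0], [0.0, 0.03]]
S = innovation_covariance(H, P, R)
pairs = match([(0.05, 3.1), (1.0, 5.0)], [(0.0, 3.0)], [S])
```

Observations that fall outside the gate for every prediction are left unmatched, which keeps spurious features from corrupting the update.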

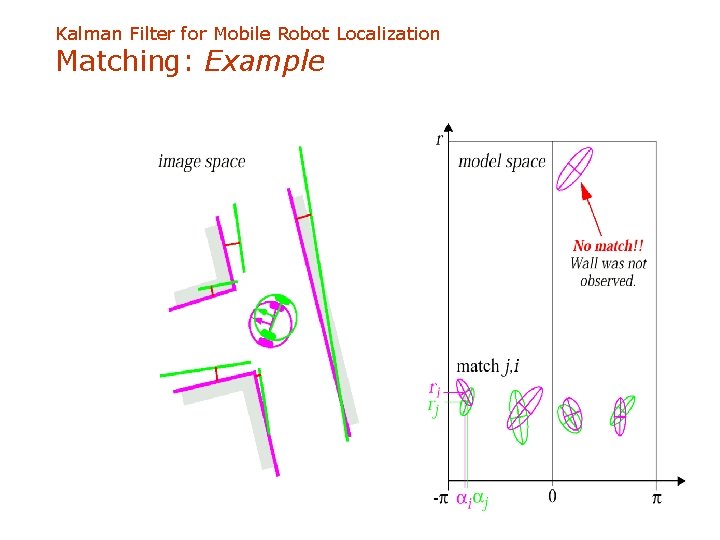

Kalman Filter for Mobile Robot Localization: Matching Example

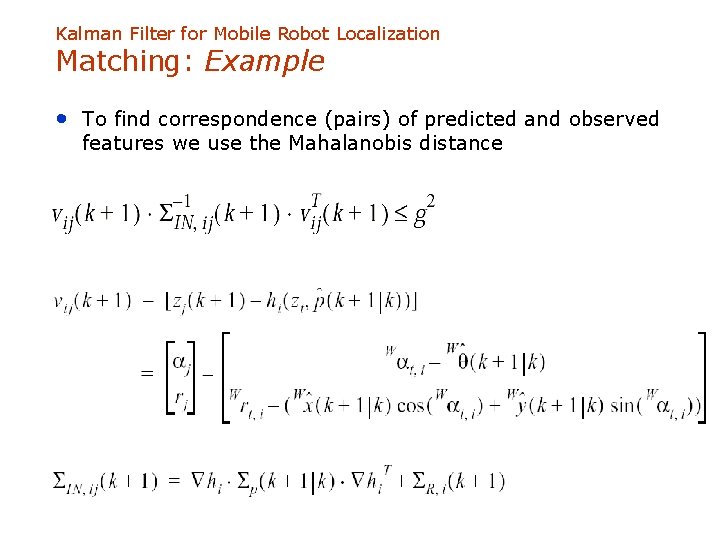

Kalman Filter for Mobile Robot Localization: Matching Example

• To find correspondences (pairs) of predicted and observed features, we use the Mahalanobis distance.

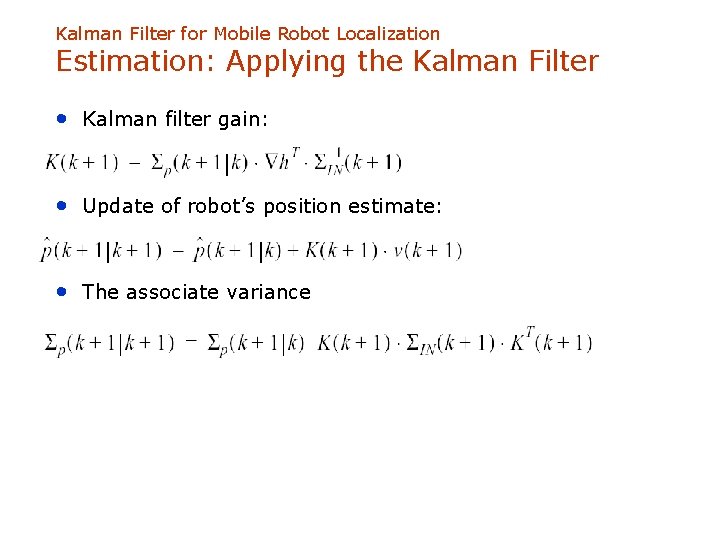

Kalman Filter for Mobile Robot Localization: Estimation (Applying the Kalman Filter)

• Kalman filter gain
• Update of the robot’s position estimate
• The associated variance

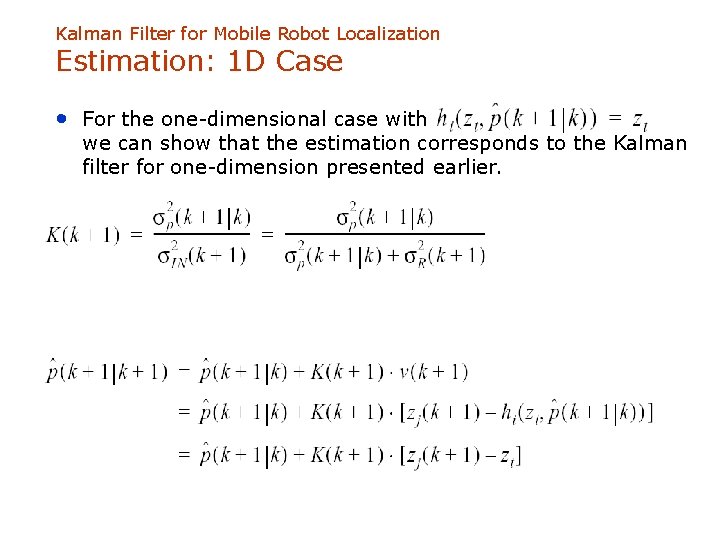

Kalman Filter for Mobile Robot Localization: Estimation (1D Case)

• For the one-dimensional case, we can show that the estimation corresponds to the one-dimensional Kalman filter presented earlier.

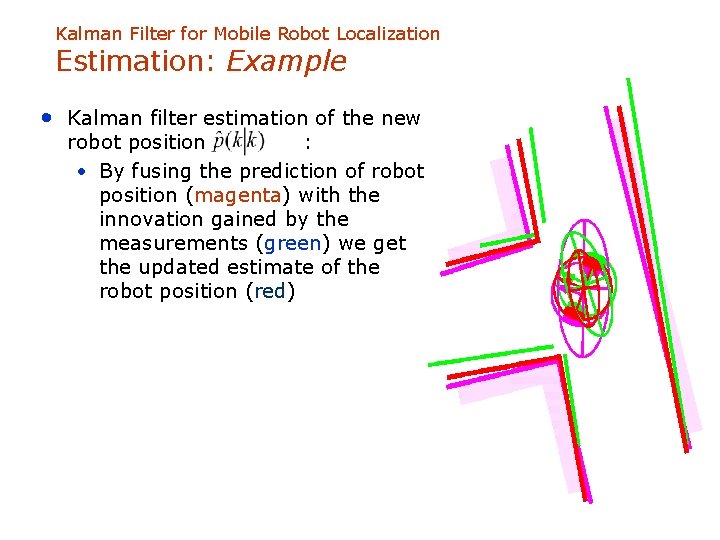

Kalman Filter for Mobile Robot Localization: Estimation Example

• Kalman filter estimation of the new robot position: by fusing the prediction of the robot position (magenta) with the innovation gained by the measurements (green), we get the updated estimate of the robot position (red).

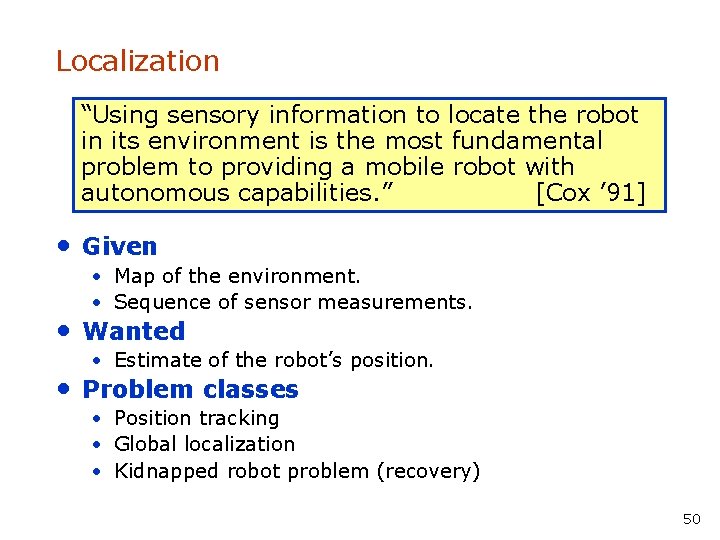

Localization

“Using sensory information to locate the robot in its environment is the most fundamental problem to providing a mobile robot with autonomous capabilities.” [Cox ’91]

• Given
  • Map of the environment.
  • Sequence of sensor measurements.
• Wanted
  • Estimate of the robot’s position.
• Problem classes
  • Position tracking
  • Global localization
  • Kidnapped robot problem (recovery)

Landmark-based Localization

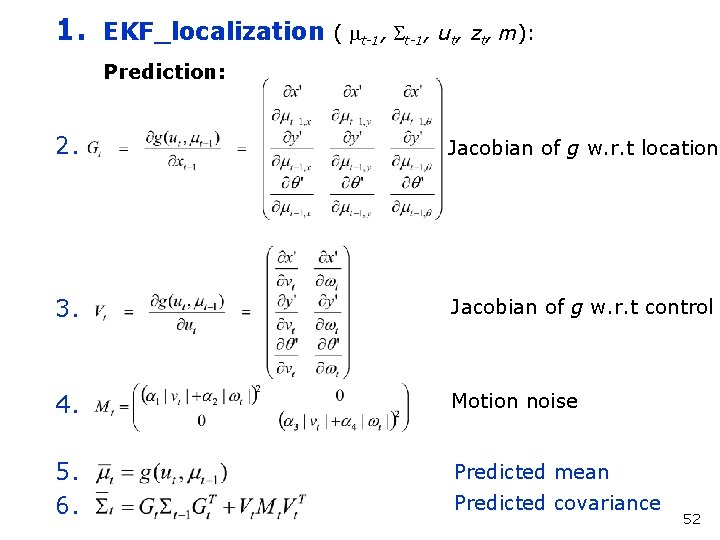

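1. EKF_localization($\mu_{t-1}$, $\Sigma_{t-1}$, $u_t$, $z_t$, $m$):

Prediction:

2. $G_t = \frac{\partial g(u_t, \mu_{t-1})}{\partial x_{t-1}}$ (Jacobian of $g$ w.r.t. location)
3. $V_t = \frac{\partial g(u_t, \mu_{t-1})}{\partial u_t}$ (Jacobian of $g$ w.r.t. control)
4. $M_t$ (motion noise)
5. $\bar{\mu}_t = g(u_t, \mu_{t-1})$ (predicted mean)
6. $\bar{\Sigma}_t = G_t \Sigma_{t-1} G_t^T + V_t M_t V_t^T$ (predicted covariance)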

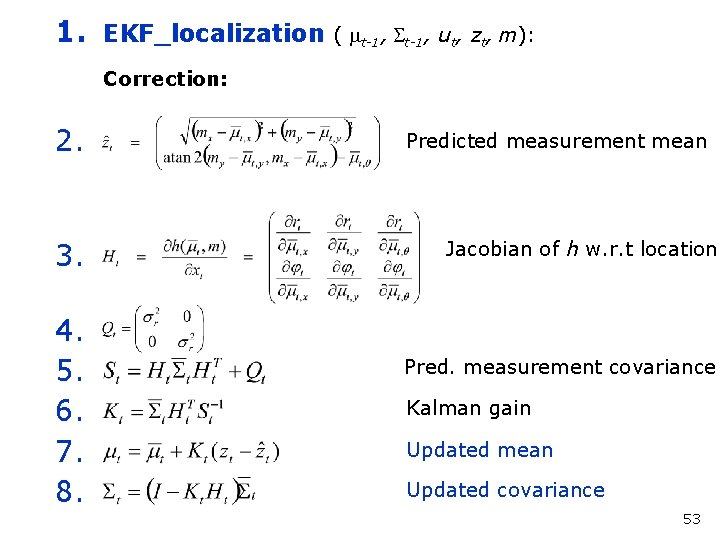

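1. EKF_localization($\mu_{t-1}$, $\Sigma_{t-1}$, $u_t$, $z_t$, $m$):

Correction:

2. $\hat{z}_t = h(\bar{\mu}_t, m)$ (predicted measurement mean)
3. $H_t = \frac{\partial h(\bar{\mu}_t, m)}{\partial x_t}$ (Jacobian of $h$ w.r.t. location)
4. $Q_t$ (measurement noise covariance)
5. $S_t = H_t \bar{\Sigma}_t H_t^T + Q_t$ (pred. measurement covariance)
6. $K_t = \bar{\Sigma}_t H_t^T S_t^{-1}$ (Kalman gain)
7. $\mu_t = \bar{\mu}_t + K_t (z_t - \hat{z}_t)$ (updated mean)
8. $\Sigma_t = (I - K_t H_t)\,\bar{\Sigma}_t$ (updated covariance)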
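For landmark-based localization, a common choice of measurement model is the range and bearing to a point landmark with known map position. The sketch below computes the predicted measurement and the Jacobian of $h$ with respect to the pose under that assumption; the slides do not spell out the model in this text, and the function name is hypothetical.

```python
import math


def predict_landmark_measurement(pose, landmark):
    """Return the predicted (range, bearing) and the 2x3 Jacobian w.r.t. (x, y, theta)."""
    x, y, theta = pose
    dx = landmark[0] - x
    dy = landmark[1] - y
    q = dx * dx + dy * dy
    if q == 0.0:
        raise ValueError("robot is exactly at the landmark; bearing is undefined")
    rng = math.sqrt(q)
    bearing = math.atan2(dy, dx) - theta
    bearing = math.atan2(math.sin(bearing), math.cos(bearing))  # wrap to (-pi, pi]
    H = [[-dx / rng, -dy / rng, 0.0],
         [dy / q, -dx / q, -1.0]]
    return (rng, bearing), H


z_hat, H_t = predict_landmark_measurement((0.0, 0.0, 0.0), (3.0, 4.0))
```

The first Jacobian row says that moving toward the landmark shortens the range; the second says that rotating the robot changes the bearing one-for-one with opposite sign.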

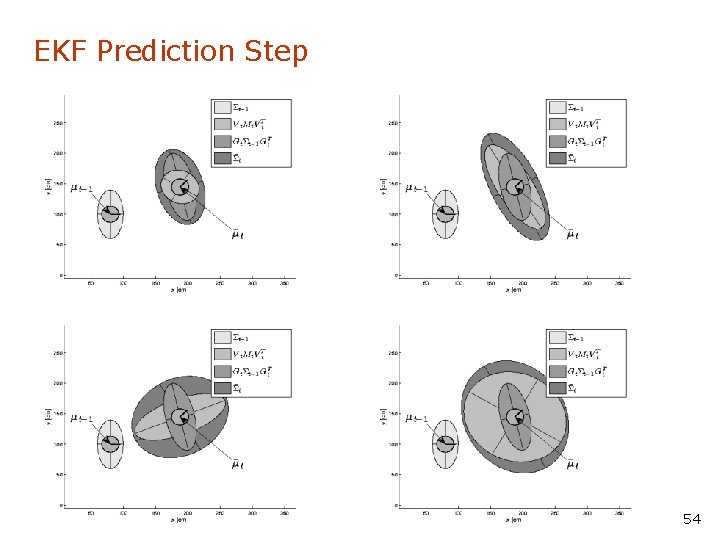

EKF Prediction Step 54

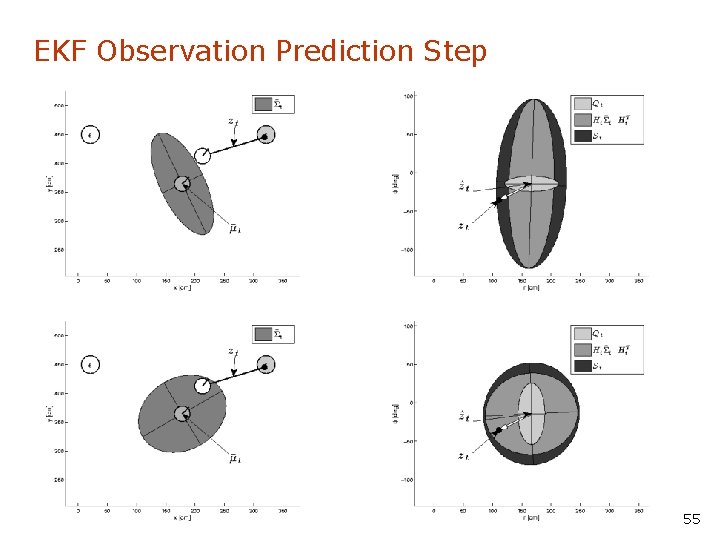

EKF Observation Prediction Step 55

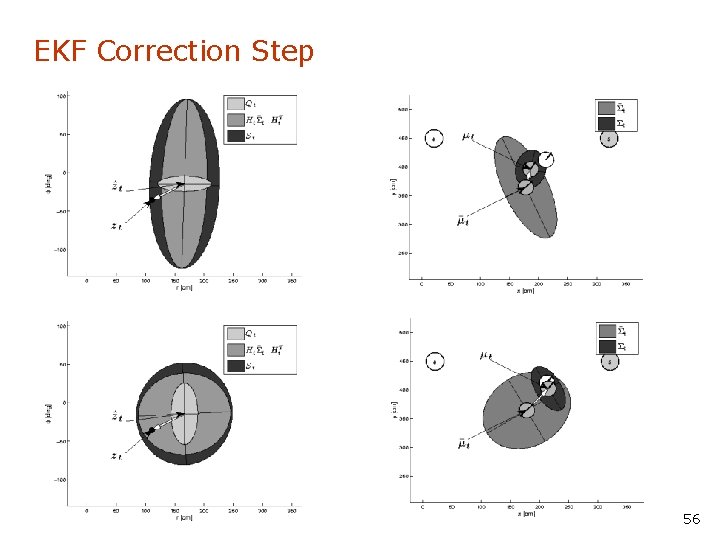

EKF Correction Step 56

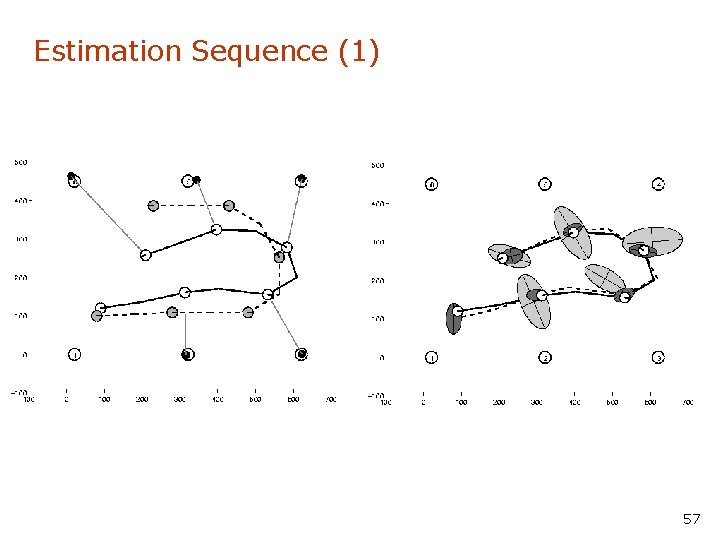

Estimation Sequence (1) 57

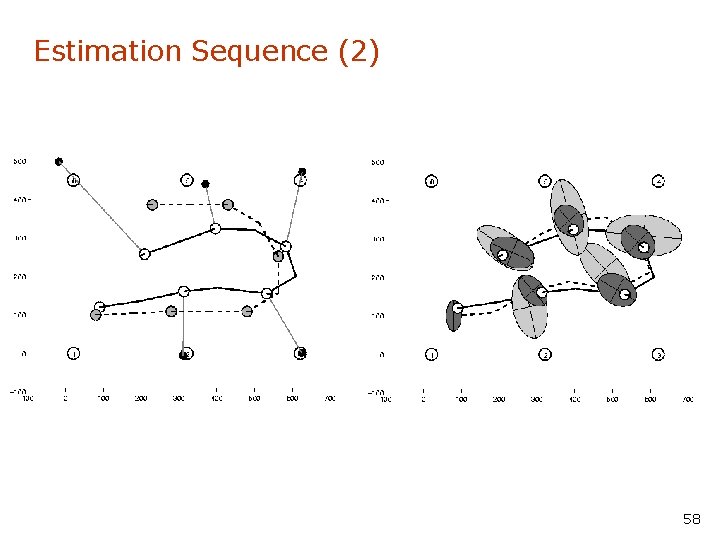

Estimation Sequence (2) 58

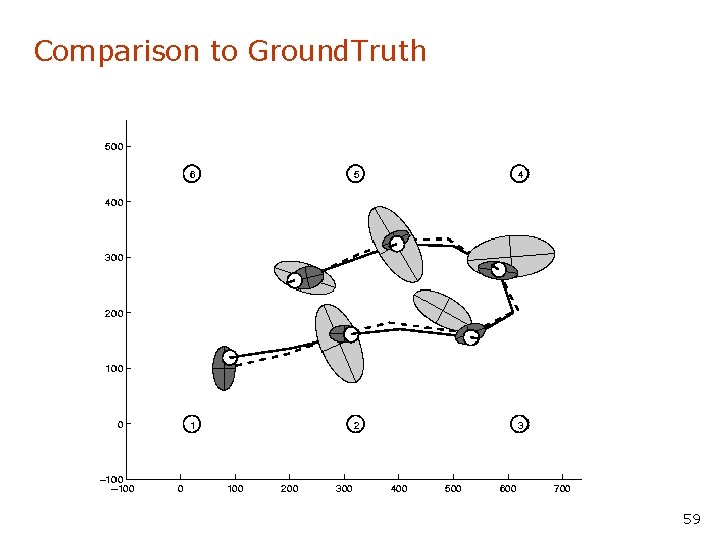

Comparison to Ground. Truth 59

EKF Summary

• Highly efficient: polynomial in measurement dimensionality $k$ and state dimensionality $n$: $O(k^{2.376} + n^2)$
• Not optimal!
• Can diverge if nonlinearities are large!
• Works surprisingly well even when all assumptions are violated!

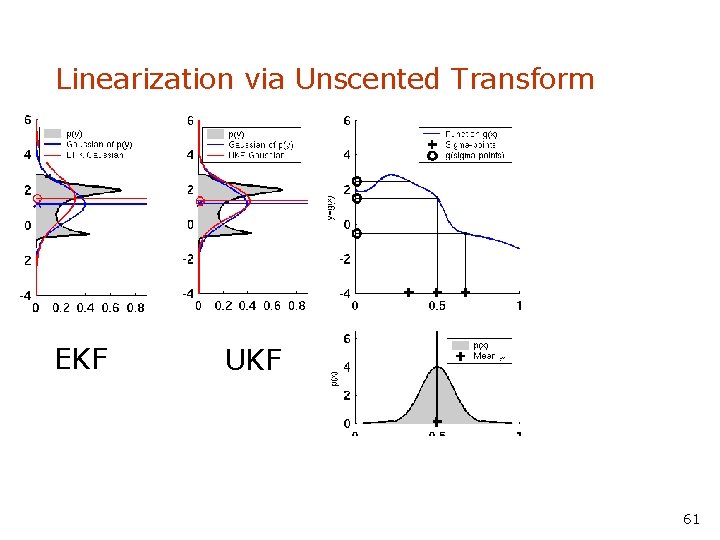

Linearization via Unscented Transform: EKF vs. UKF

![Kalman Filter-based System](http://slidetodoc.com/presentation_image_h2/eb18809864b0231de561af4aa2b6560c/image-60.jpg)

Kalman Filter-based System

• [Arras et al. 98]:
  • Laser range-finder and vision
  • High precision (<1 cm accuracy)

[Courtesy of Kai Arras]

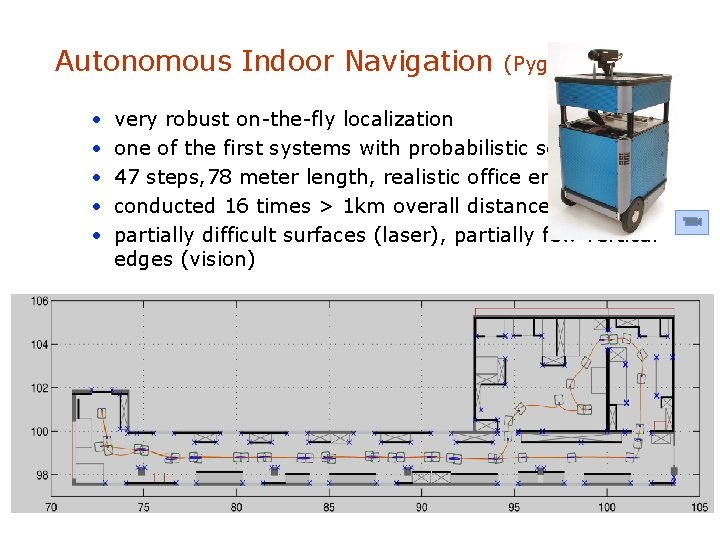

Autonomous Indoor Navigation (Pygmalion EPFL)

• very robust on-the-fly localization
• one of the first systems with probabilistic sensor fusion
• 47 steps, 78 meter length, realistic office environment
• conducted 16 times, > 1 km overall distance
• partially difficult surfaces (laser), partially few vertical edges (vision)

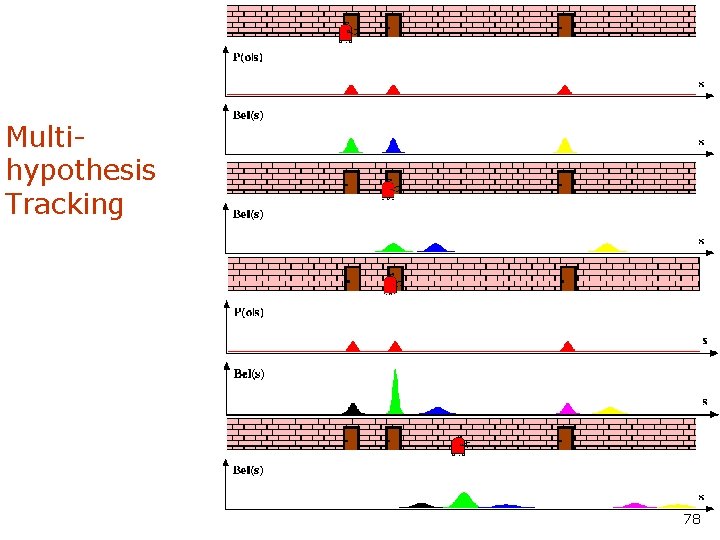

Multihypothesis Tracking

Localization With MHT

• Belief is represented by multiple hypotheses
• Each hypothesis is tracked by a Kalman filter
• Additional problems:
  • Data association: Which observation corresponds to which hypothesis?
  • Hypothesis management: When to add / delete hypotheses?
• Huge body of literature on target tracking, motion correspondence, etc.

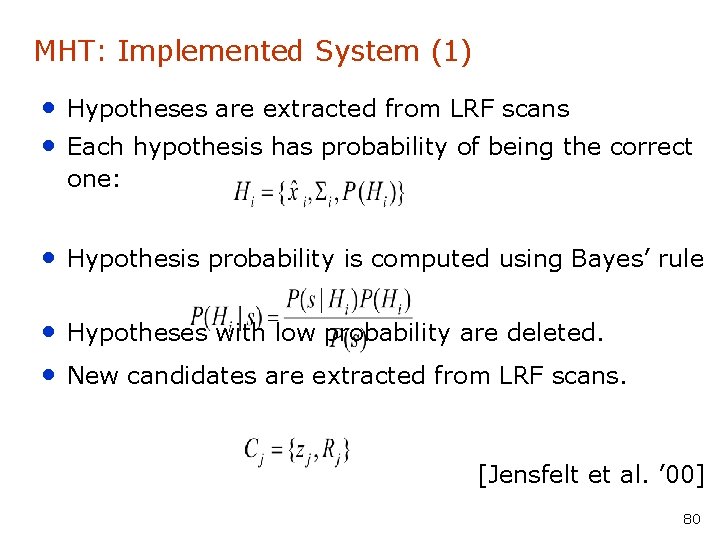

MHT: Implemented System (1)

• Hypotheses are extracted from LRF scans
• Each hypothesis has a probability of being the correct one
• Hypothesis probability is computed using Bayes’ rule
• Hypotheses with low probability are deleted.
• New candidates are extracted from LRF scans.

[Jensfelt et al. ’00]
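The hypothesis bookkeeping described above can be sketched as a Bayes-rule reweighting followed by pruning. This is not the implementation of Jensfelt et al.; the function name, the pruning threshold, the renormalization after pruning, and the example numbers are all assumptions made for illustration.

```python
def update_hypotheses(priors, likelihoods, min_probability=0.01):
    """Apply Bayes' rule to each hypothesis, then drop unlikely ones.

    priors:      {hypothesis_id: P(H_i)}
    likelihoods: {hypothesis_id: P(z | H_i)} for the current observation z
    """
    unnormalized = {h: likelihoods[h] * p for h, p in priors.items()}
    total = sum(unnormalized.values())
    if total == 0.0:
        raise ValueError("observation is impossible under every hypothesis")
    posterior = {h: w / total for h, w in unnormalized.items()}

    # Delete hypotheses with low probability, but always keep the best one.
    best = max(posterior, key=posterior.get)
    kept = {h: p for h, p in posterior.items()
            if p >= min_probability or h == best}

    # Renormalize so the surviving hypotheses sum to one (an assumed convention).
    norm = sum(kept.values())
    return {h: p / norm for h, p in kept.items()}


priors = {"a": 0.5, "b": 0.3, "c": 0.2}
likelihoods = {"a": 0.9, "b": 0.1, "c": 0.01}
hypotheses = update_hypotheses(priors, likelihoods)
```

In a full system each surviving hypothesis would carry its own Kalman filter state, and new candidate hypotheses extracted from the scans would be added with some initial probability.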

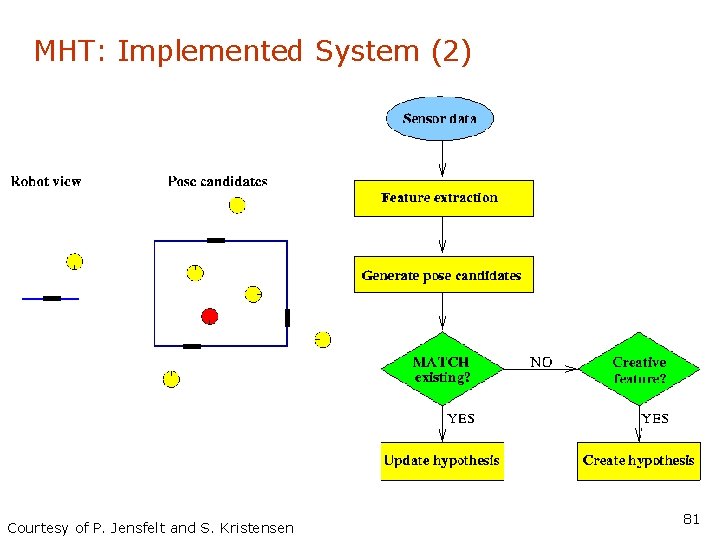

MHT: Implemented System (2)

Courtesy of P. Jensfelt and S. Kristensen

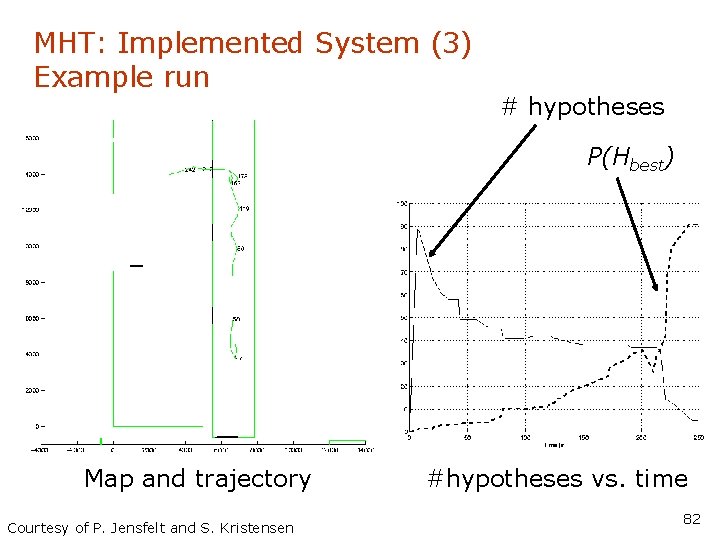

MHT: Implemented System (3)

Example run. Figure panels: map and trajectory; number of hypotheses vs. time; $P(H_{best})$.

Courtesy of P. Jensfelt and S. Kristensen
