Probabilistic Reasoning with Uncertain Data Yun Peng and Zhongli Ding, Rong Pan, Shenyong Zhang

Uncertain Evidences
• Causes for uncertainty of evidence
  – Observation error
  – Unable to observe the precise state the world is in
• Two types of uncertain evidence
  – Virtual evidence: evidence with uncertainty
    "I'm not sure about my observation that A = a1"
  – Soft evidence: evidence of uncertainty
    "I cannot observe the state of A but have observed the distribution of A as P(A) = (0.7, 0.3)"

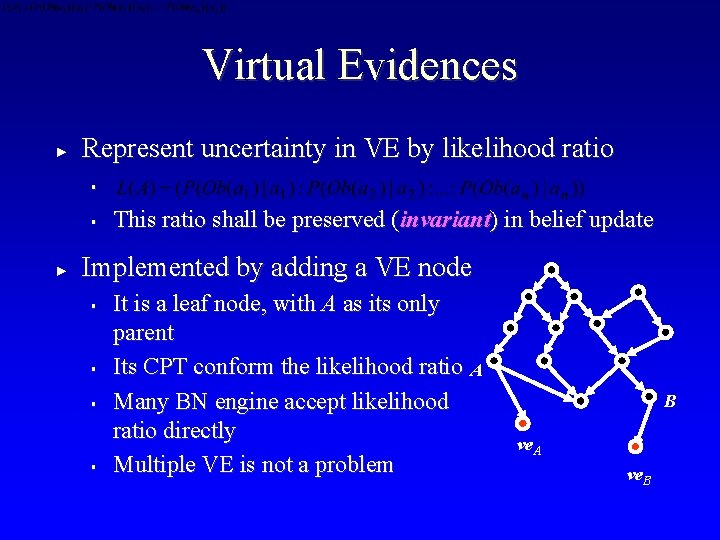

Virtual Evidences
► Represent uncertainty in VE by a likelihood ratio
  § This ratio shall be preserved (invariant) in belief update
► Implemented by adding a VE node
  § It is a leaf node, with A as its only parent
  § Its CPT conforms to the likelihood ratio
► Many BN engines accept a likelihood ratio directly
► Multiple VEs are not a problem
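The effect of a single virtual evidence on its parent variable can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the function name `apply_virtual_evidence` and all numbers are hypothetical, and the likelihood vector is assumed to be given in the usual form where only the ratios between its entries matter.

```python
def apply_virtual_evidence(prior, likelihood):
    """Posterior P(A | ve) is proportional to P(A) * L(A); only ratios in L matter."""
    unnormalized = [p * l for p, l in zip(prior, likelihood)]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

prior = [0.6, 0.4]        # hypothetical P(A)
likelihood = [0.8, 0.2]   # hypothetical likelihood ratio 4 : 1
posterior = apply_virtual_evidence(prior, likelihood)

# The ratio is preserved: posterior odds = prior odds * likelihood ratio.
prior_odds = prior[0] / prior[1]
posterior_odds = posterior[0] / posterior[1]
print(posterior, posterior_odds / prior_odds)
```

Rescaling the likelihood vector (for example to `[4, 1]`) gives the same posterior, which is why the evidence is specified as a ratio rather than as probabilities.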

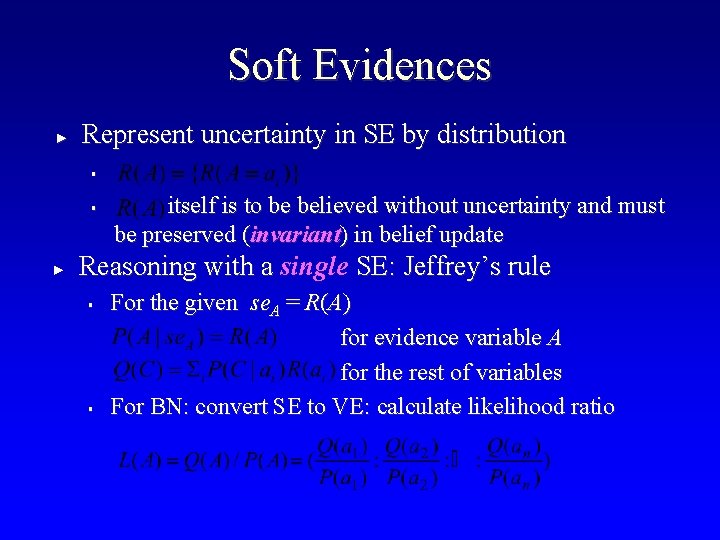

Soft Evidences
► Represent uncertainty in SE by a distribution R(A)
  § The distribution itself is to be believed without uncertainty and must be preserved (invariant) in belief update
► Reasoning with a single SE: Jeffrey's rule
  § For the given se_A = R(A):
    Q(A) = R(A) for evidence variable A
    Q(C) = Σ_i P(C | a_i) R(a_i) for the rest of the variables
  § For BN: convert SE to VE by calculating the likelihood ratio

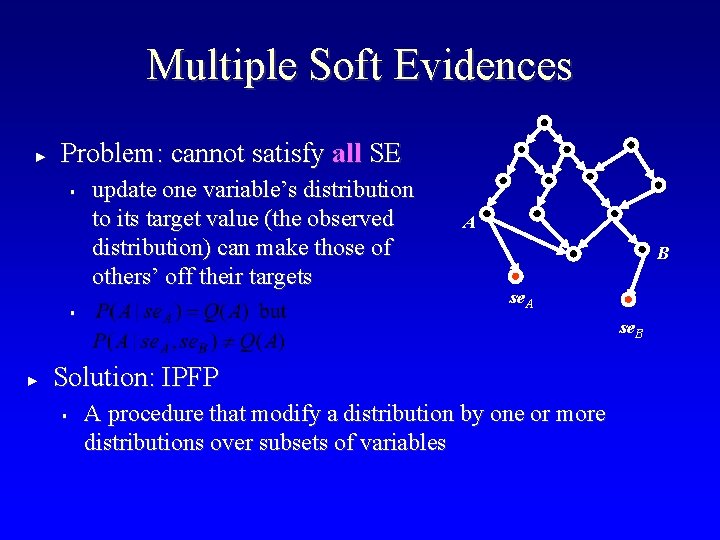

Multiple Soft Evidences
► Problem: cannot satisfy all SE at once
  § Updating one variable's distribution to its target value (the observed distribution) can push those of the others off their targets
► Solution: IPFP
  § A procedure that modifies a distribution by one or more distributions over subsets of variables

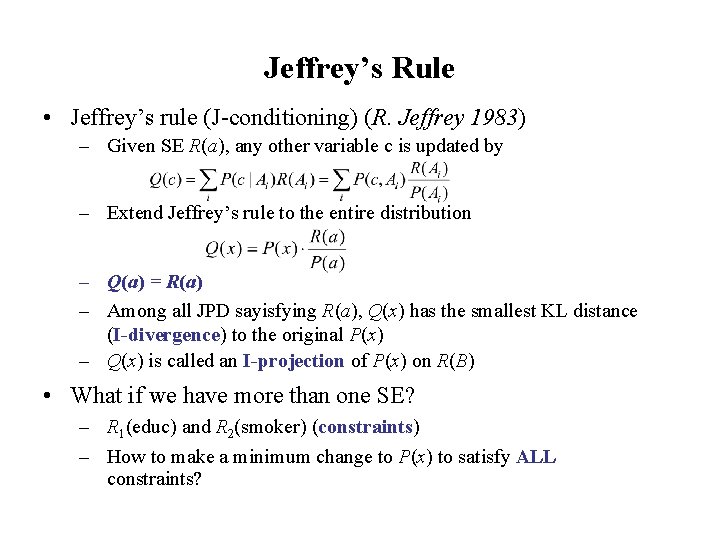

Jeffrey's Rule
• Jeffrey's rule (J-conditioning) (R. Jeffrey 1983)
  – Given SE R(a), any other variable c is updated by Q(c) = Σ_i P(c | a_i) R(a_i)
  – Extend Jeffrey's rule to the entire distribution: Q(x) = P(x) R(a) / P(a)
  – Q(a) = R(a)
  – Among all JPDs satisfying R(a), Q(x) has the smallest KL distance (I-divergence) to the original P(x)
  – Q(x) is called an I-projection of P(x) on R(a)
• What if we have more than one SE?
  – R1(educ) and R2(smoker) (constraints)
  – How to make a minimum change to P(x) to satisfy ALL constraints?
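Jeffrey's rule on a full joint distribution can be illustrated with a small worked example. The sketch below assumes a two-variable joint stored as a nested list `joint[a][c]` with P(a) > 0 for every state; the function name `jeffrey_update` and the numbers are made up for illustration.

```python
def jeffrey_update(joint, target_a):
    """Return Q(a, c) = P(a, c) * R(a) / P(a) for joint[a][c] = P(a, c)."""
    updated = []
    for row, r in zip(joint, target_a):
        p_a = sum(row)                      # P(a), assumed positive
        updated.append([p * r / p_a for p in row])
    return updated

P = [[0.3, 0.2],    # a = a1
     [0.1, 0.4]]    # a = a2
R = [0.7, 0.3]      # soft evidence R(a)

Q = jeffrey_update(P, R)
q_a = [sum(row) for row in Q]                                  # marginal Q(a)
q_c = [sum(Q[a][c] for a in range(2)) for c in range(2)]       # marginal Q(c)
print(q_a, q_c)
```

Here Q(a) matches R(a) exactly, and Q(c1) = P(c1 | a1) R(a1) + P(c1 | a2) R(a2) = 0.6 × 0.7 + 0.2 × 0.3 = 0.48, which is the per-variable form of the rule.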

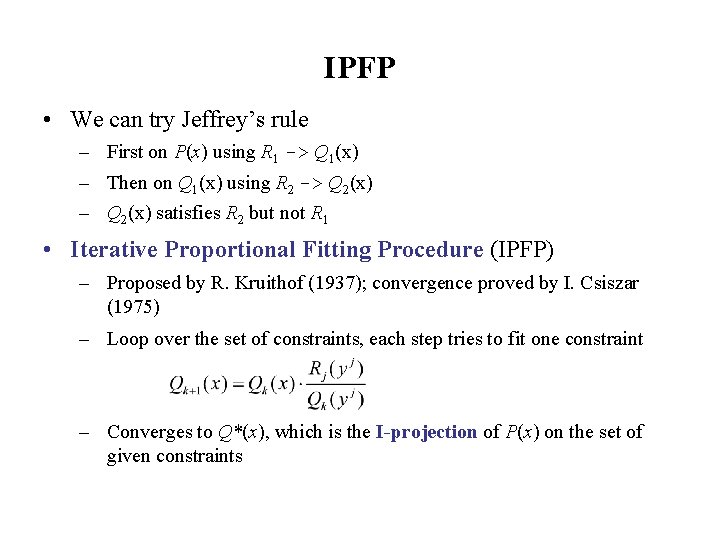

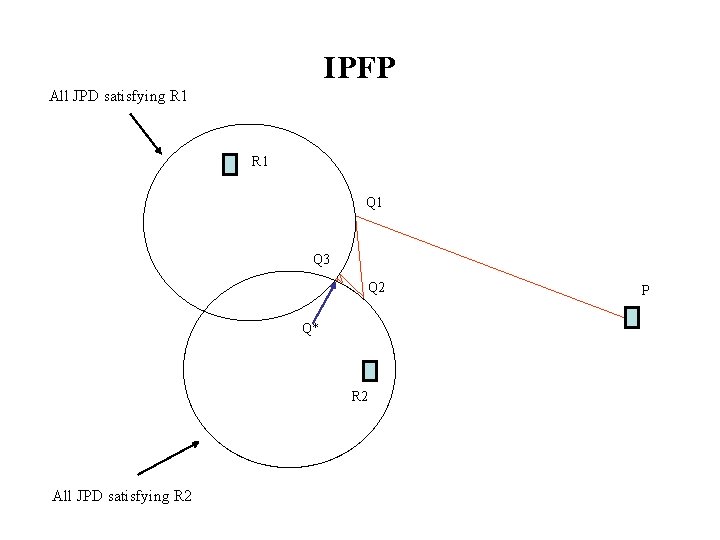

IPFP
• We can try Jeffrey's rule
  – First on P(x) using R1 -> Q1(x)
  – Then on Q1(x) using R2 -> Q2(x)
  – Q2(x) satisfies R2 but, in general, no longer R1
• Iterative Proportional Fitting Procedure (IPFP)
  – Proposed by R. Kruithof (1937); convergence proved by I. Csiszar (1975)
  – Loops over the set of constraints; each step fits one constraint
  – Converges to Q*(x), which is the I-projection of P(x) on the set of given constraints
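The loop described above can be sketched for the simplest case: a joint over two variables with one marginal constraint on each. This is an illustrative sketch in plain Python, assuming strictly positive tables and consistent constraints; the helper names (`fit_rows`, `fit_cols`, `ipfp`), the tolerance, and the numbers are all hypothetical.

```python
def fit_rows(joint, target):
    """One fitting step for a constraint on the row variable: Q(a,b) * R(a) / Q(a)."""
    return [[q * r / sum(row) for q in row] for row, r in zip(joint, target)]

def transpose(table):
    return [list(col) for col in zip(*table)]

def fit_cols(joint, target):
    """Same step for a constraint on the column variable."""
    return transpose(fit_rows(transpose(joint), target))

def ipfp(joint, r_a, r_b, tol=1e-10, max_iter=1000):
    """Alternate the two fitting steps until the row marginal also matches."""
    q = joint
    for k in range(max_iter):
        q = fit_rows(q, r_a)
        q = fit_cols(q, r_b)   # column marginal is now exact
        row_marginal = [sum(row) for row in q]
        if max(abs(m - r) for m, r in zip(row_marginal, r_a)) < tol:
            return q, k + 1
    return q, max_iter

P = [[0.3, 0.2], [0.1, 0.4]]
R1, R2 = [0.7, 0.3], [0.5, 0.5]

Q2 = fit_cols(fit_rows(P, R1), R2)     # one pass of Jeffrey's rule per constraint
q2_rows = [sum(row) for row in Q2]     # no longer equal to R1
Q_star, sweeps = ipfp(P, R1, R2)
print(q2_rows, sweeps)
```

After a single pass the row marginal has drifted away from R1, while repeated sweeps bring both marginals onto their targets.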

IPFP (diagram: starting point P; iterates Q1, Q2, Q3 and limit Q*; the set of all JPDs satisfying R1; the set of all JPDs satisfying R2)

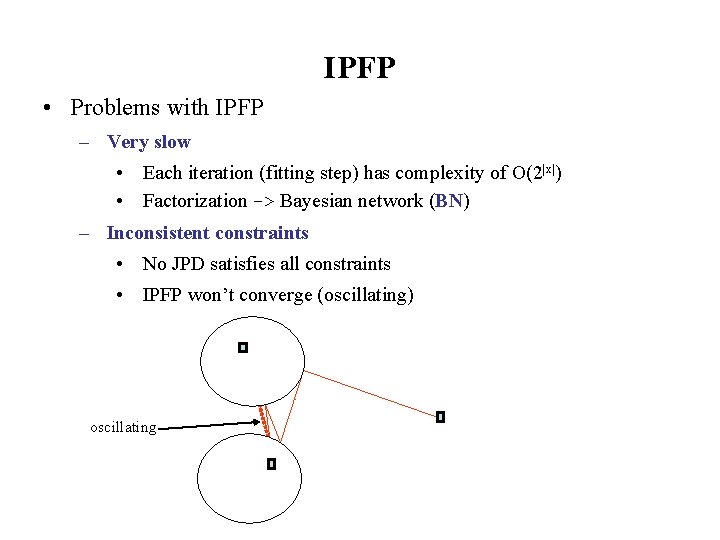

IPFP
• Problems with IPFP
  – Very slow
    • Each iteration (fitting step) has complexity O(2^|x|)
    • Factorization -> Bayesian network (BN)
  – Inconsistent constraints
    • No JPD satisfies all constraints
    • IPFP won't converge (it oscillates)
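The oscillation can be reproduced with a deliberately tiny, hypothetical example: one constraint R1(a) and one constraint R2(a, b) whose marginal on a disagrees with R1. The helper names and numbers below are assumptions chosen for illustration only.

```python
def fit_a(joint, target_a):
    """Fit the marginal constraint R1(a): Q(a,b) * R1(a) / Q(a)."""
    return [[q * r / sum(row) for q in row] for row, r in zip(joint, target_a)]

def fit_ab(joint, target_ab):
    """Fit a constraint over all variables; for positive tables the
    result is simply the target table itself."""
    return [list(row) for row in target_ab]

P = [[0.3, 0.2], [0.1, 0.4]]
R1 = [0.7, 0.3]                     # wants Q(a1) = 0.7
R2 = [[0.2, 0.2], [0.3, 0.3]]       # implies Q(a1) = 0.4, conflicting with R1

history = []                        # Q(a1) after every fitting step
q = P
for _ in range(5):
    q = fit_a(q, R1)
    history.append(sum(q[0]))
    q = fit_ab(q, R2)
    history.append(sum(q[0]))
print([round(h, 3) for h in history])
```

Q(a1) keeps alternating between 0.7 and 0.4, so the iterates never settle. The same tables also hint at the cost issue: a full joint over n binary variables has 2^n cells, and every fitting step touches all of them.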

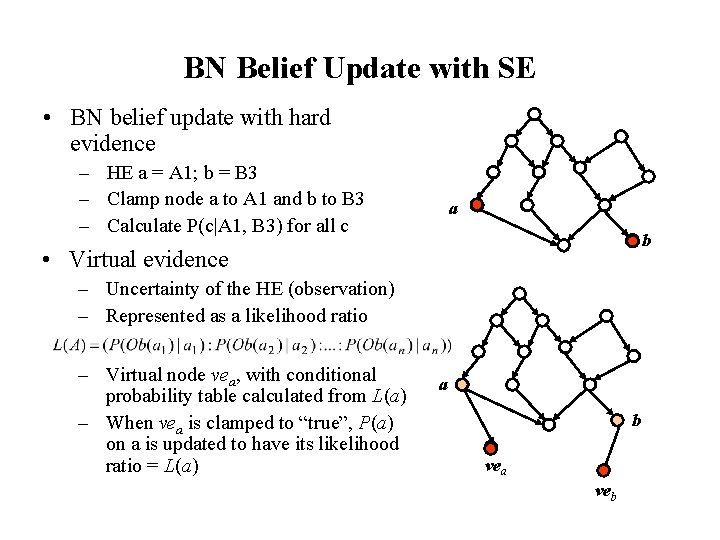

BN Belief Update with SE
• BN belief update with hard evidence
  – HE: a = A1; b = B3
  – Clamp node a to A1 and b to B3
  – Calculate P(c | A1, B3) for all c
• Virtual evidence
  – Uncertainty of the HE (observation)
  – Represented as a likelihood ratio
  – Virtual node ve_a, with conditional probability table calculated from L(a)
  – When ve_a is clamped to "true", P(a) on a is updated so that its likelihood ratio = L(a)

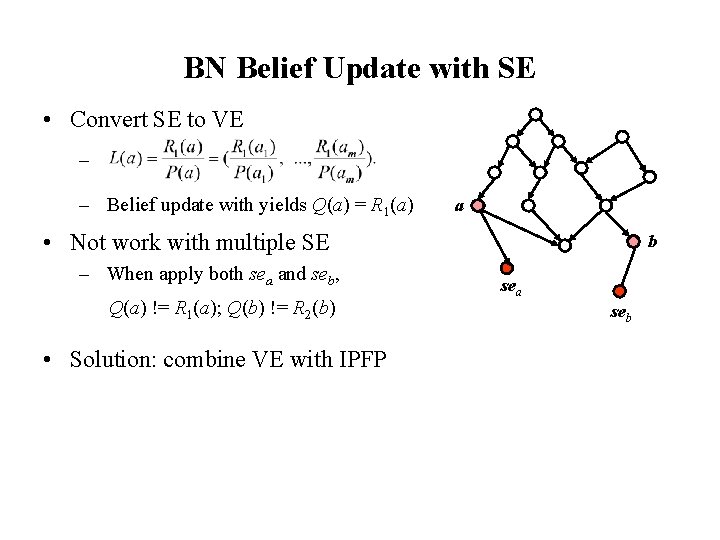

BN Belief Update with SE
• Convert SE to VE
  – Likelihood ratio: L(a) = R1(a1)/P(a1) : R1(a2)/P(a2) : ... : R1(an)/P(an)
  – Belief update with this virtual evidence yields Q(a) = R1(a)
• Does not work with multiple SE
  – When both se_a and se_b are applied, Q(a) != R1(a); Q(b) != R2(b)
• Solution: combine VE with IPFP
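Both points on this slide can be checked numerically. The sketch below assumes the usual conversion in which the likelihood for each state is R(a) / P(a); a brute-force product over a two-variable joint stands in for BN inference, and every name and number is hypothetical.

```python
def soft_to_virtual(prior, target):
    """Likelihood ratio for a soft evidence: R(a_i) / P(a_i) per state."""
    return [r / p for r, p in zip(target, prior)]

def normalize(table):
    total = sum(map(sum, table))
    return [[v / total for v in row] for row in table]

P = [[0.3, 0.2], [0.1, 0.4]]                 # joint P(a, b)
p_a = [sum(row) for row in P]
p_b = [sum(col) for col in zip(*P)]
R1, R2 = [0.7, 0.3], [0.5, 0.5]

L_a = soft_to_virtual(p_a, R1)
L_b = soft_to_virtual(p_b, R2)

# Single soft evidence: applying L_a alone reproduces R1 exactly.
Q_single = normalize([[P[i][j] * L_a[i] for j in range(2)] for i in range(2)])
q_single_a = [sum(row) for row in Q_single]

# Two soft evidences applied together: neither target is met.
Q_both = normalize([[P[i][j] * L_a[i] * L_b[j] for j in range(2)] for i in range(2)])
q_both_a = [sum(row) for row in Q_both]
q_both_b = [sum(col) for col in zip(*Q_both)]
print(q_single_a, q_both_a, q_both_b)
```

With a and b dependent, applying both converted evidences at once overshoots both targets, which is the motivation for iterating.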

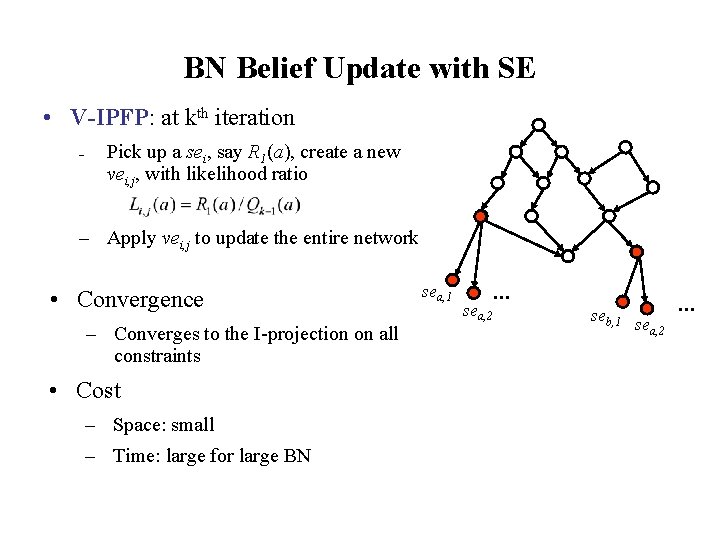

BN Belief Update with SE
• V-IPFP: at the kth iteration
  – Pick an se_i, say R1(a), and create a new ve_{i,j} with likelihood ratio L(a) = R1(a1)/Q_{k-1}(a1) : ... : R1(an)/Q_{k-1}(an)
  – Apply ve_{i,j} to update the entire network
• Convergence
  – Converges to the I-projection on all constraints
• Cost
  – Space: small
  – Time: large for large BN
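A rough sketch of the V-IPFP idea follows: the original distribution is never modified, and each iteration only appends one virtual evidence whose likelihood is the target divided by the current marginal. It assumes that likelihood form, uses a brute-force product over a two-variable joint as a stand-in for a BN inference engine, and all names (`posterior`, `marginal`, `v_ipfp`) and numbers are hypothetical.

```python
def posterior(joint, ve_nodes):
    """Stand-in for BN inference: P(a,b) times every VE likelihood, normalized.
    ve_nodes is a list of (axis, likelihood) pairs; axis 0 = a, axis 1 = b."""
    table = [list(row) for row in joint]
    for axis, lik in ve_nodes:
        for i, row in enumerate(table):
            for j in range(len(row)):
                row[j] *= lik[i] if axis == 0 else lik[j]
    total = sum(map(sum, table))
    return [[v / total for v in row] for row in table]

def marginal(table, axis):
    if axis == 0:
        return [sum(row) for row in table]
    return [sum(col) for col in zip(*table)]

def v_ipfp(joint, soft_evidence, sweeps=50):
    """soft_evidence is a list of (axis, target distribution) pairs."""
    ve_nodes = []
    for _ in range(sweeps):
        for axis, target in soft_evidence:
            current = marginal(posterior(joint, ve_nodes), axis)
            # New virtual evidence with likelihood R(.) / Q_{k-1}(.)
            ve_nodes.append((axis, [r / q for r, q in zip(target, current)]))
    return posterior(joint, ve_nodes), ve_nodes

P = [[0.3, 0.2], [0.1, 0.4]]
Q, ve_nodes = v_ipfp(P, [(0, [0.7, 0.3]), (1, [0.5, 0.5])])
print(marginal(Q, 0), marginal(Q, 1), len(ve_nodes))
```

Only the small likelihood vectors are stored, which reflects the low space cost, while every iteration requires a full belief update, which is where the time cost comes from.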

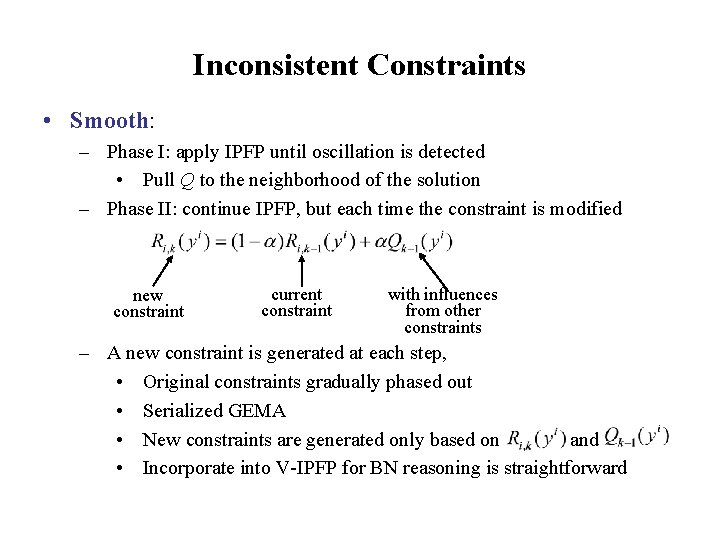

Inconsistent Constraints
• Smooth:
  – Phase I: apply IPFP until oscillation is detected
    • Pulls Q to the neighborhood of the solution
  – Phase II: continue IPFP, but each time the constraint is modified: new constraint = current constraint with influences from other constraints
  – A new constraint is generated at each step
    • Original constraints are gradually phased out
• Serialized GEMA
  – New constraints are generated based only on … and …
• Incorporating these into V-IPFP for BN reasoning is straightforward

BN Learning with Uncertain Data
• Modify BN by a set of low-dimensional PDs (constraints)
  – Approach 1:
    • Compute the JPD P(x) from the BN
    • Modify P(x) to Q*(x) by the constraints using IPFP
    • Construct a new BN from Q*(x) (it may have a different structure than the original BN)
  – Our approach:
    • Keep the BN structure unchanged, only modify the CPTs
    • Developed a localized version of IPFP
  – Next step:
    • Dealing with inconsistency
    • Change structure (minimum necessary)
    • Learning both structure and CPT with mixed data (samples as low-dimensional PDs)

Remarks
• Wide potential applications
  – Probabilistic resources are all over the place (survey data, databases, probabilistic knowledge bases of different kinds)
  – This line of research may lead to effective ways to connect them
• Problems with the IPFP-based approaches
  – Computationally expensive
  – Hard to do mathematical proofs

References:
[1] Peng, Y., Zhang, S., Pan, R.: "Bayesian Network Reasoning with Uncertain Evidences", International Journal of Uncertainty, Fuzziness and Knowledge-Based Systems, 18 (5), 539-564, 2010.
[2] Pan, R., Peng, Y., and Ding, Z.: "Belief Update in Bayesian Networks Using Uncertain Evidence", in Proceedings of the IEEE International Conference on Tools with Artificial Intelligence (ICTAI-2006), Washington, DC, Nov. 13-15, 2006.
[3] Peng, Y. and Ding, Z.: "Modifying Bayesian Networks by Probability Constraints", in Proceedings of the 21st Conference on Uncertainty in Artificial Intelligence (UAI-2005), Edinburgh, Scotland, July 26-29, 2005.
