Probabilistic Reasoning Ch 14 Bayes Networks Part 1

![Probabilistic Reasoning [Ch. 14] • Bayes Networks – Part 1 ◦ Syntax ◦ Semantics](https://slidetodoc.com/presentation_image_h/2865e9fbdc5418a6e5a6ab695b76aa50/image-1.jpg)

Probabilistic Reasoning [Ch. 14]
• Bayes Networks – Part 1
◦ Syntax
◦ Semantics
◦ Parameterized distributions
• Inference – Part 2
◦ Exact inference by enumeration
◦ Exact inference by variable elimination
◦ Approximate inference by stochastic simulation
◦ Approximate inference by Markov chain Monte Carlo

Bayesian networks
A simple, graphical notation for conditional independence assertions, and hence for compact specification of full joint distributions
• Syntax:
◦ a set of nodes, one per variable
◦ a directed, acyclic graph (link ≈ “directly influences”)
◦ a conditional distribution for each node given its parents: $P(X_i \mid \mathrm{Parents}(X_i))$
• In the simplest case, the conditional distribution is represented as a conditional probability table (CPT) giving the distribution over $X_i$ for each combination of parent values

Bayes’ Nets
• A Bayes’ net is an efficient encoding of a probabilistic model of a domain
• Questions we can ask:
◦ Inference: given a fixed BN, what is P(X | e)?
◦ Representation: given a BN graph, what kinds of distributions can it encode?
◦ Modeling: what BN is most appropriate for a given domain?

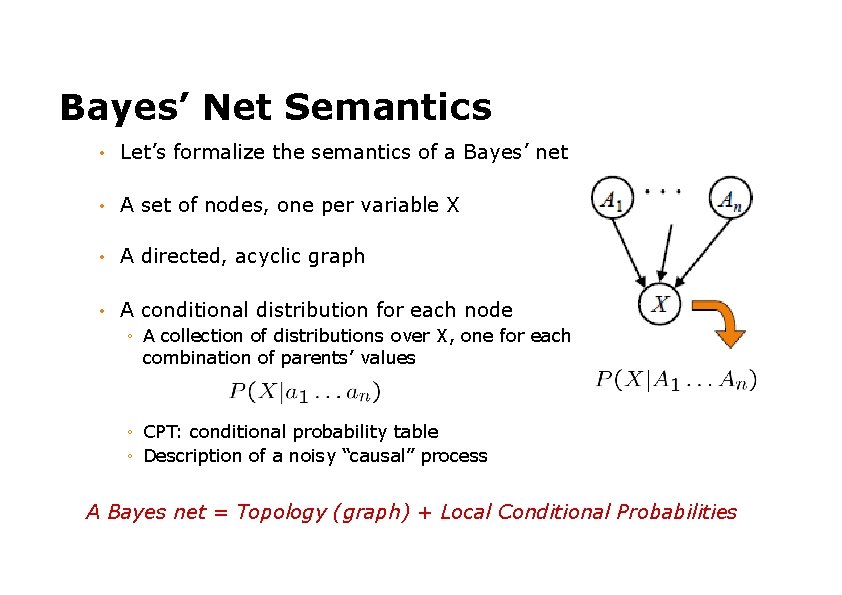

Bayes’ Net Semantics
• Let’s formalize the semantics of a Bayes’ net
• A set of nodes, one per variable X
• A directed, acyclic graph
• A conditional distribution for each node
◦ A collection of distributions over X, one for each combination of parents’ values
◦ CPT: conditional probability table
◦ Description of a noisy “causal” process
A Bayes’ net = Topology (graph) + Local Conditional Probabilities
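As a minimal sketch of "topology + local conditional probabilities", the Python snippet below stores a two-node net R → T as a parent map plus one CPT per node. The variable names and every number in it are made up for illustration; they are not taken from the slides.

```python
from itertools import product

# Topology: each variable maps to the tuple of its parents (hypothetical net R -> T).
parents = {"R": (), "T": ("R",)}

# Local CPTs: P(var = True | parent values). All numbers are illustrative assumptions.
cpt = {
    "R": {(): 0.25},
    "T": {(True,): 0.75, (False,): 0.5},
}

def local_prob(var, value, assignment):
    """P(var = value | parents(var)), looked up from the CPT."""
    key = tuple(assignment[p] for p in parents[var])
    p_true = cpt[var][key]
    return p_true if value else 1.0 - p_true

def joint(assignment):
    """Joint probability of a full assignment as the product of local conditionals."""
    result = 1.0
    for var, value in assignment.items():
        result *= local_prob(var, value, assignment)
    return result

names = list(parents)
total = sum(
    joint(dict(zip(names, values)))
    for values in product([True, False], repeat=len(names))
)
```

Summing `joint` over all four assignments gives 1, which is a quick sanity check that the CPTs define a proper distribution.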

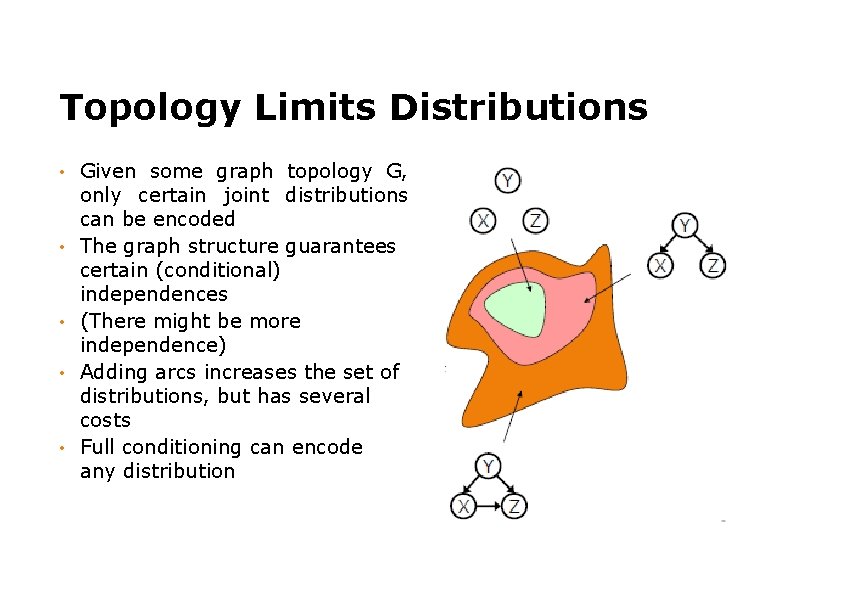

Topology Limits Distributions
• Given some graph topology G, only certain joint distributions can be encoded
• The graph structure guarantees certain (conditional) independences
• (There might be more independence)
• Adding arcs increases the set of distributions, but has several costs
• Full conditioning can encode any distribution

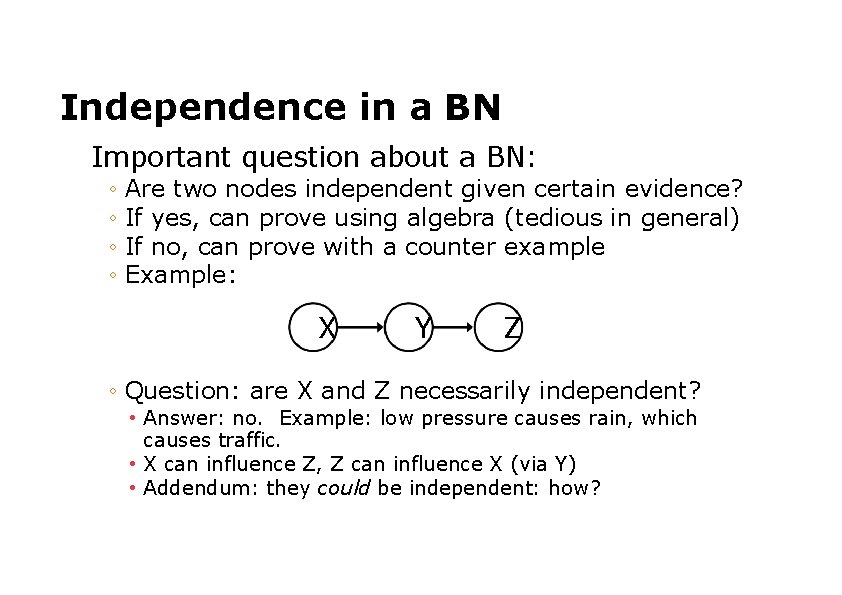

Independence in a BN
• Important question about a BN:
◦ Are two nodes independent given certain evidence?
◦ If yes, can prove using algebra (tedious in general)
◦ If no, can prove with a counterexample
◦ Example: X → Y → Z
◦ Question: are X and Z necessarily independent?
• Answer: no. Example: low pressure causes rain, which causes traffic.
• X can influence Z, Z can influence X (via Y)
• Addendum: they could be independent: how?

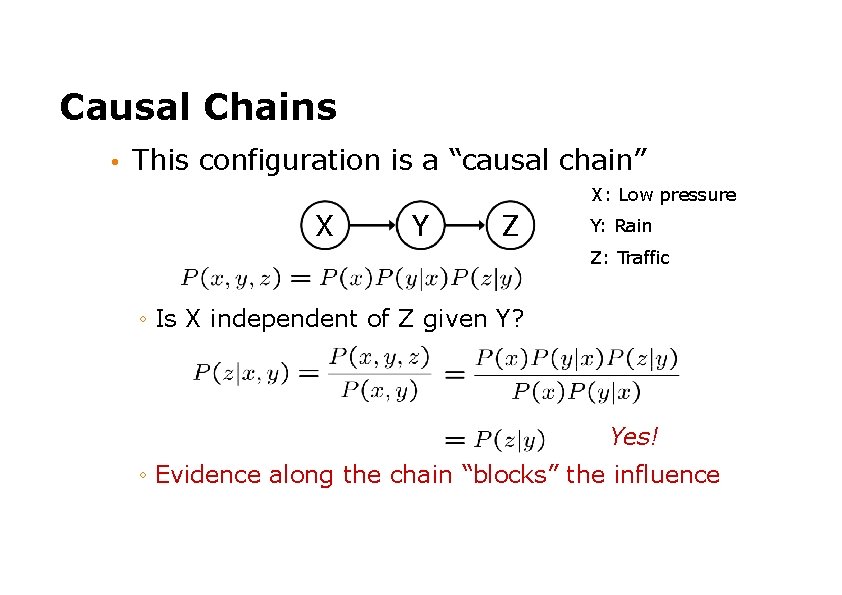

Causal Chains
• This configuration is a “causal chain”: X → Y → Z
◦ X: Low pressure
◦ Y: Rain
◦ Z: Traffic
◦ Is X independent of Z given Y? Yes!
◦ Evidence along the chain “blocks” the influence
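The blocking claim can be checked numerically. The sketch below enumerates the joint of a chain X → Y → Z with made-up CPT values (the numbers are assumptions, not from the slides) and compares P(z | y, x) with P(z | y, ¬x), and P(z | x) with P(z | ¬x).

```python
from itertools import product

# Hypothetical CPTs for the chain X -> Y -> Z (low pressure -> rain -> traffic).
p_x = 0.3
p_y_given_x = {True: 0.8, False: 0.1}
p_z_given_y = {True: 0.9, False: 0.2}

def bern(p_true, value):
    """Probability of a Boolean value when P(True) = p_true."""
    return p_true if value else 1.0 - p_true

def joint(x, y, z):
    """P(x, y, z) = P(x) P(y | x) P(z | y)."""
    return bern(p_x, x) * bern(p_y_given_x[x], y) * bern(p_z_given_y[y], z)

def prob(query, evidence):
    """P(query | evidence) by enumeration; both are dicts over 'x', 'y', 'z'."""
    num = den = 0.0
    for x, y, z in product([True, False], repeat=3):
        world = {"x": x, "y": y, "z": z}
        if all(world[k] == v for k, v in evidence.items()):
            p = joint(x, y, z)
            den += p
            if all(world[k] == v for k, v in query.items()):
                num += p
    return num / den

# With Y observed, X no longer changes the belief in Z.
z_given_y_x = prob({"z": True}, {"y": True, "x": True})
z_given_y_notx = prob({"z": True}, {"y": True, "x": False})

# Without evidence on Y, X does influence Z.
z_given_x = prob({"z": True}, {"x": True})
z_given_notx = prob({"z": True}, {"x": False})
```

The first pair agrees (both equal P(z | y)), while the second pair differs, which is the "evidence along the chain blocks the influence" behaviour.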

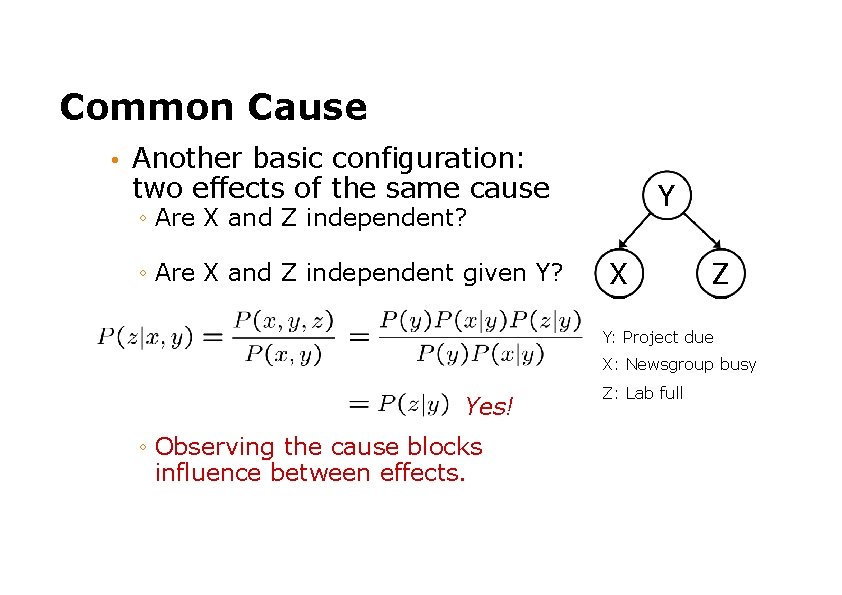

Common Cause
• Another basic configuration: two effects of the same cause, X ← Y → Z
◦ Y: Project due
◦ X: Newsgroup busy
◦ Z: Lab full
◦ Are X and Z independent? In general, no.
◦ Are X and Z independent given Y? Yes!
◦ Observing the cause blocks influence between effects.

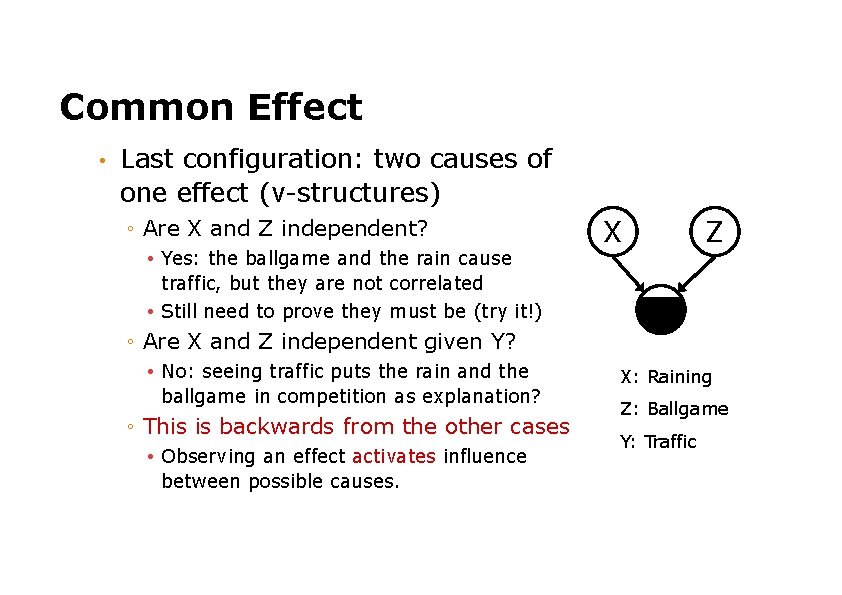

Common Effect
• Last configuration: two causes of one effect (v-structure), X → Y ← Z
◦ X: Raining
◦ Z: Ballgame
◦ Y: Traffic
◦ Are X and Z independent?
• Yes: the ballgame and the rain both cause traffic, but they are not correlated
• Still need to prove they must be (try it!)
◦ Are X and Z independent given Y?
• No: seeing traffic puts the rain and the ballgame in competition as explanations
◦ This is backwards from the other cases
• Observing an effect activates influence between possible causes.
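The "competition between explanations" can be illustrated with a small enumeration. This is a sketch with invented probabilities for rain, ballgame, and traffic; only the v-structure itself comes from the slide.

```python
from itertools import product

# Hypothetical numbers for the v-structure Rain -> Traffic <- Ballgame.
p_rain, p_game = 0.1, 0.2
p_traffic = {
    (True, True): 0.95,
    (True, False): 0.8,
    (False, True): 0.6,
    (False, False): 0.1,
}

def weight(rain, game, traffic):
    """P(rain, game, traffic) = P(rain) P(game) P(traffic | rain, game)."""
    p = (p_rain if rain else 1 - p_rain) * (p_game if game else 1 - p_game)
    p_t = p_traffic[(rain, game)]
    return p * (p_t if traffic else 1 - p_t)

def p_rain_given(**evidence):
    """P(rain = True | evidence); evidence may fix `game` and/or `traffic`."""
    num = den = 0.0
    for rain, game, traffic in product([True, False], repeat=3):
        world = {"rain": rain, "game": game, "traffic": traffic}
        if any(world[k] != v for k, v in evidence.items()):
            continue
        w = weight(rain, game, traffic)
        den += w
        if rain:
            num += w
    return num / den

prior = p_rain_given()
given_game = p_rain_given(game=True)                # unchanged: causes are independent
given_traffic = p_rain_given(traffic=True)          # raised: traffic is evidence for rain
given_traffic_and_game = p_rain_given(traffic=True, game=True)  # lowered again
```

Learning about the ballgame leaves the belief in rain untouched until traffic is observed; once it is, the ballgame "explains away" part of the evidence for rain.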

The General Case
• Any complex example can be analyzed using these three canonical cases
• General question: in a given BN, are two variables independent (given evidence)?
• Solution: analyze the graph

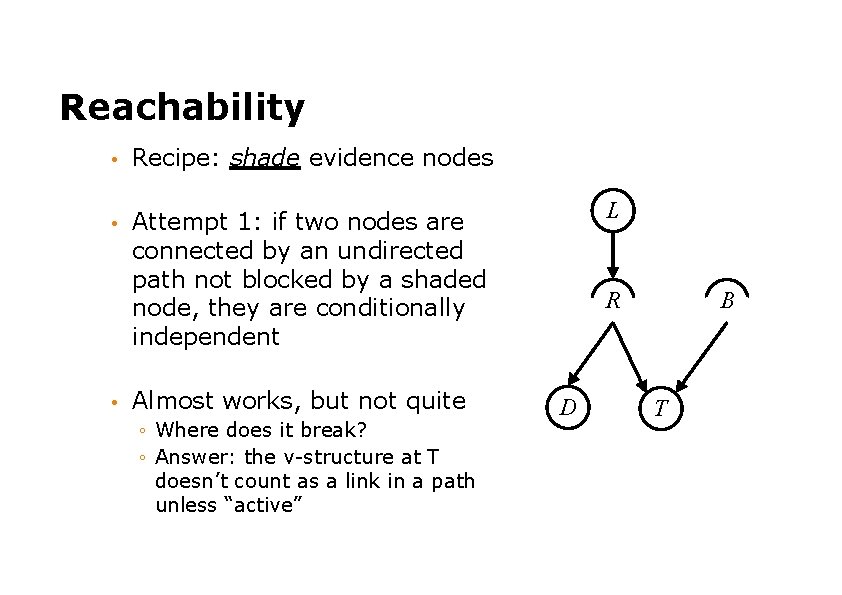

Reachability
• Recipe: shade evidence nodes
• Attempt 1: two nodes are conditionally independent if every undirected path between them is blocked by a shaded node
• Almost works, but not quite
◦ Where does it break?
◦ Answer: the v-structure at T doesn’t count as a link in a path unless “active”
[Figure: example network with nodes L, R, D, B, T]

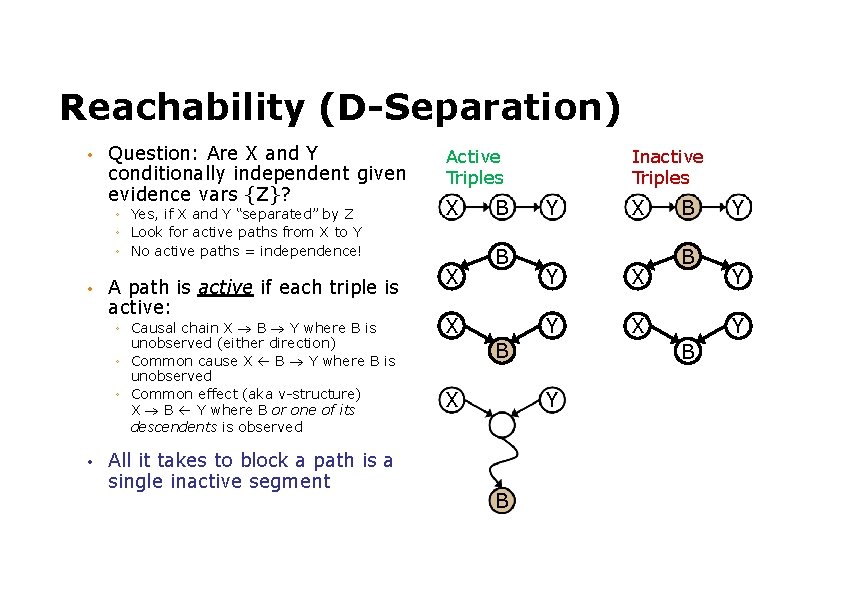

Reachability (D-Separation)
• Question: Are X and Y conditionally independent given evidence variables {Z}?
◦ Yes, if X and Y are “separated” by Z
◦ Look for active paths from X to Y
◦ No active paths = independence!
• A path is active if each triple along it is active:
◦ Causal chain X → B → Y where B is unobserved (either direction)
◦ Common cause X ← B → Y where B is unobserved
◦ Common effect (aka v-structure) X → B ← Y where B or one of its descendants is observed
• All it takes to block a path is a single inactive segment
[Figure: legend of active and inactive triples]
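The active-triple rules translate fairly directly into code. The following is a minimal sketch that enumerates simple undirected paths and checks every consecutive triple, which is fine for small graphs but not an efficient algorithm. The function names are hypothetical, and the example edges R → T, R → D, T → S, D → S are an assumption about the Raining/Traffic/Roof drips/Sad network, since the figure is not reproduced in the text.

```python
def descendants(children, node):
    """All nodes reachable from `node` by following directed edges."""
    seen, stack = set(), [node]
    while stack:
        for child in children.get(stack.pop(), ()):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen

def triple_active(edges, children, a, b, c, evidence):
    """Is the consecutive triple a - b - c active given the evidence set?"""
    if (a, b) in edges and (c, b) in edges:
        # Common effect a -> b <- c: active only if b or a descendant is observed.
        return b in evidence or bool(descendants(children, b) & evidence)
    # Causal chain or common cause: active only if b is unobserved.
    return b not in evidence

def d_separated(edge_list, x, y, evidence):
    """True if every undirected path between x and y contains an inactive triple."""
    edges = set(edge_list)
    evidence = set(evidence)
    children, neighbors = {}, {}
    for parent, child in edges:
        children.setdefault(parent, set()).add(child)
        neighbors.setdefault(parent, set()).add(child)
        neighbors.setdefault(child, set()).add(parent)

    def active_path_exists(path):
        last = path[-1]
        if last == y:
            return True
        for nxt in neighbors.get(last, ()):
            if nxt in path:
                continue  # simple paths only
            if len(path) >= 2 and not triple_active(
                edges, children, path[-2], last, nxt, evidence
            ):
                continue  # this triple blocks the path
            if active_path_exists(path + [nxt]):
                return True
        return False

    return not active_path_exists([x])

# Assumed structure: Raining causes Traffic and Roof drips; both make me Sad.
edges = [("R", "T"), ("R", "D"), ("T", "S"), ("D", "S")]
```

Under these assumed edges, `d_separated(edges, "T", "D", set())` is `False` (common cause R is unobserved), `d_separated(edges, "T", "D", {"R"})` is `True`, and adding S to the evidence activates the v-structure so the answer becomes `False` again.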

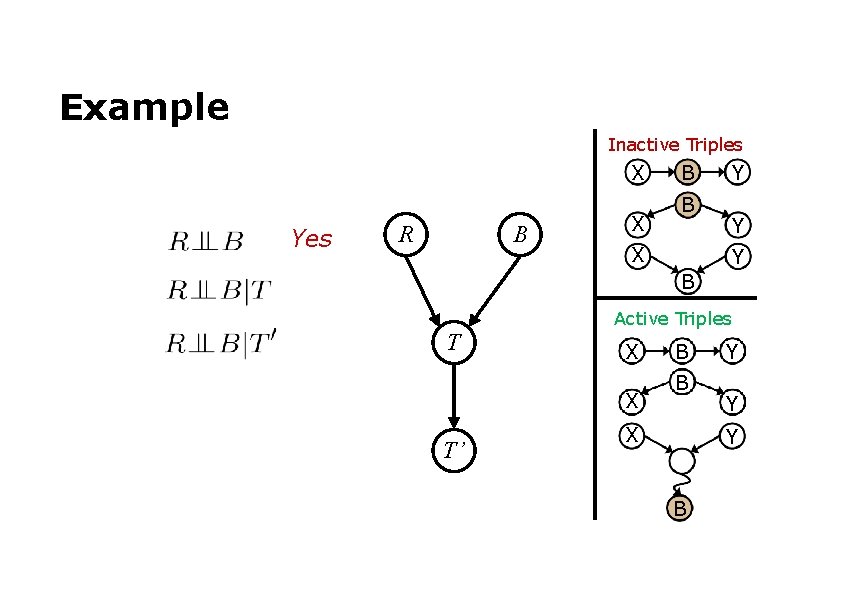

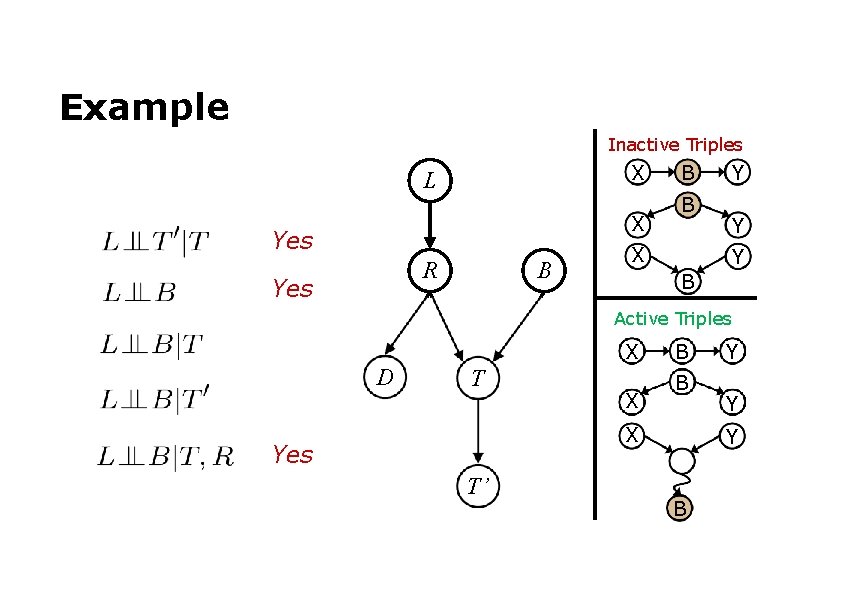

Example
[Figure: network over R, B, T, T’ with the active/inactive triples legend; the independence query shown is answered “Yes”]

Example
[Figure: network over L, R, B, D, T, T’ with the active/inactive triples legend; the three independence queries shown are answered “Yes”]

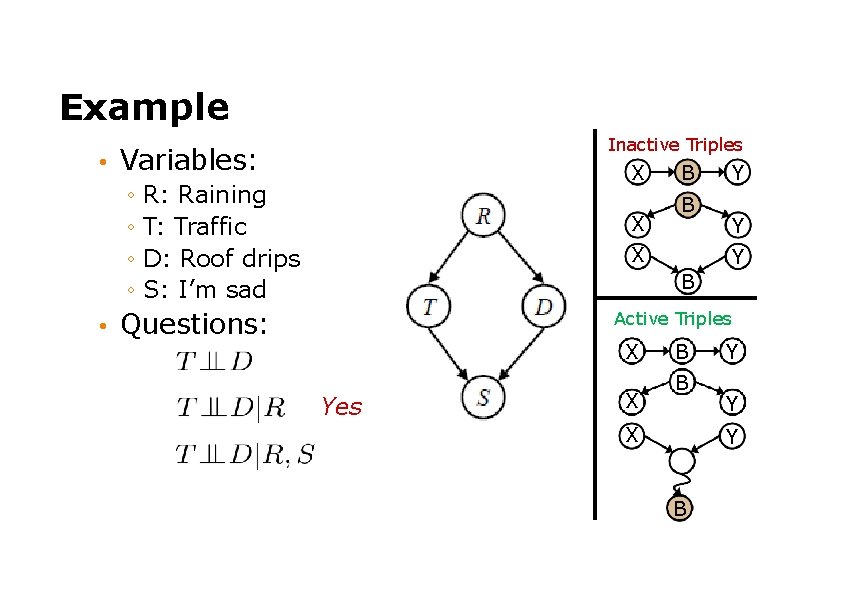

Example
• Variables:
◦ R: Raining
◦ T: Traffic
◦ D: Roof drips
◦ S: I’m sad
• Questions: [Figure: independence queries over R, T, D, S with the active/inactive triples legend; one query is marked “Yes”]

Causality?
• When Bayes’ nets reflect the true causal patterns:
◦ Often simpler (nodes have fewer parents)
◦ Often easier to think about
◦ Often easier to elicit from experts
• BNs need not actually be causal
◦ Sometimes no causal net exists over the domain
◦ E.g., consider the variables Traffic and Drips
◦ End up with arrows that reflect correlation, not causation
• What do the arrows really mean?
◦ Topology may happen to encode causal structure
◦ Topology is only guaranteed to encode conditional independence

Changing Bayes’ Net Structure
• The same joint distribution can be encoded in many different Bayes’ nets
◦ Causal structure tends to be the simplest
• Analysis question: given some edges, what other edges do you need to add?
◦ One answer: fully connect the graph
◦ Better answer: don’t make any false conditional independence assumptions

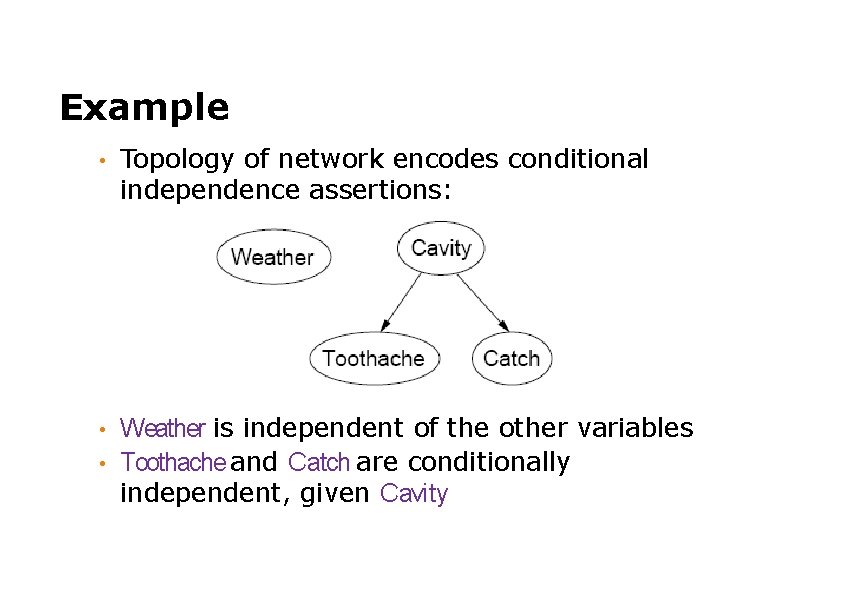

Example
• Topology of network encodes conditional independence assertions
• Weather is independent of the other variables
• Toothache and Catch are conditionally independent, given Cavity

Example
I'm at work, neighbor John calls to say my alarm is ringing, but neighbor Mary doesn't call. Sometimes it's set off by minor earthquakes. Is there a burglar?
• Variables: Burglar, Earthquake, Alarm, JohnCalls, MaryCalls
• Network topology reflects “causal” knowledge:
◦ A burglar can set the alarm off
◦ An earthquake can set the alarm off
◦ The alarm can cause Mary to call
◦ The alarm can cause John to call

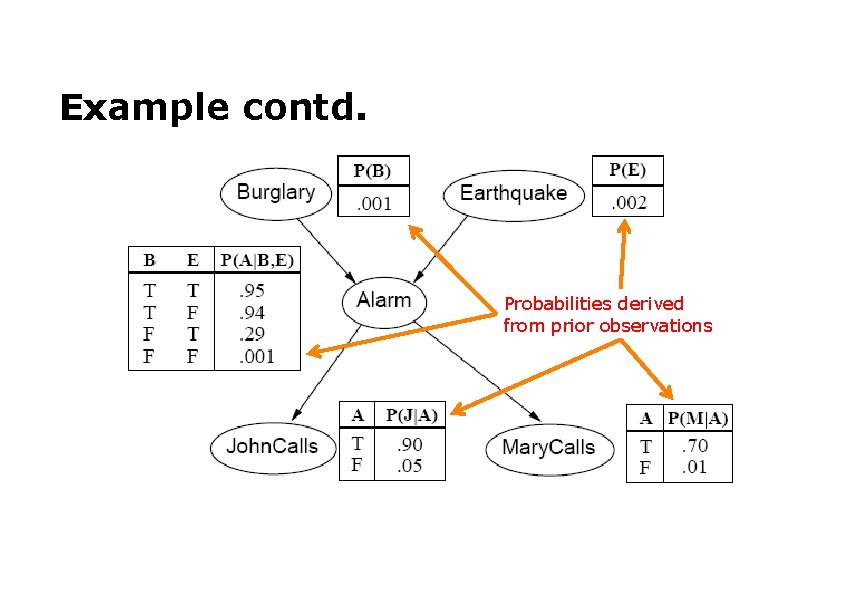

Example contd.
Probabilities derived from prior observations

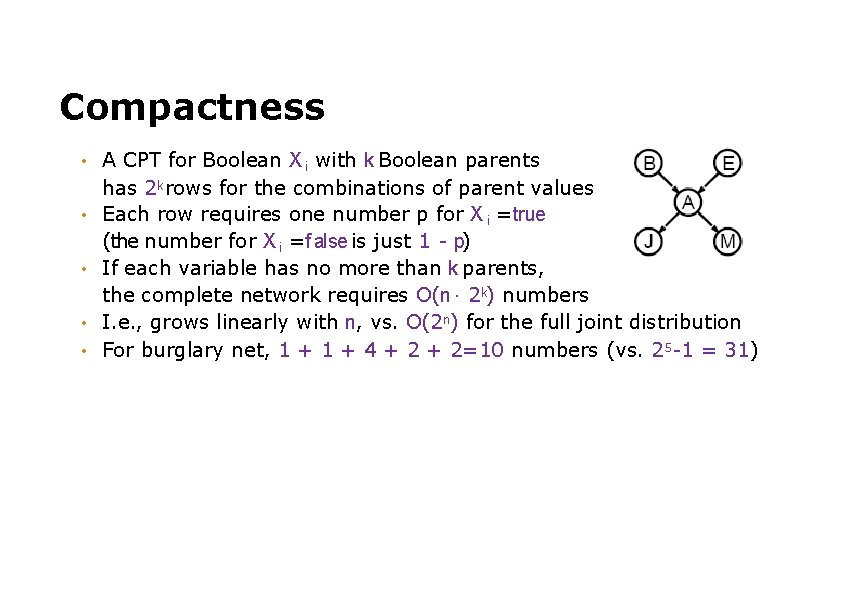

Compactness
• A CPT for Boolean $X_i$ with $k$ Boolean parents has $2^k$ rows for the combinations of parent values
• Each row requires one number $p$ for $X_i = true$ (the number for $X_i = false$ is just $1 - p$)
• If each variable has no more than $k$ parents, the complete network requires $O(n \cdot 2^k)$ numbers
• I.e., grows linearly with $n$, vs. $O(2^n)$ for the full joint distribution
• For burglary net, $1 + 1 + 4 + 2 + 2 = 10$ numbers (vs. $2^5 - 1 = 31$)
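The count for the burglary network can be reproduced with a few lines of Python. This sketch assumes all variables are Boolean, so a node with k parents contributes 2^k numbers; the helper name `cpt_numbers` is made up for illustration.

```python
def cpt_numbers(num_parents_per_node):
    """Independent numbers needed when every variable is Boolean: 2**k per node."""
    return sum(2 ** k for k in num_parents_per_node)

# Burglary network: Burglary and Earthquake have no parents, Alarm has two,
# JohnCalls and MaryCalls have one each.
burglary_net = cpt_numbers([0, 0, 2, 1, 1])

# A full joint table over 5 Boolean variables needs one number per row,
# minus one because the rows must sum to 1.
full_joint = 2 ** 5 - 1
```

Here `burglary_net` evaluates to 10 and `full_joint` to 31, matching the figures on the slide.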

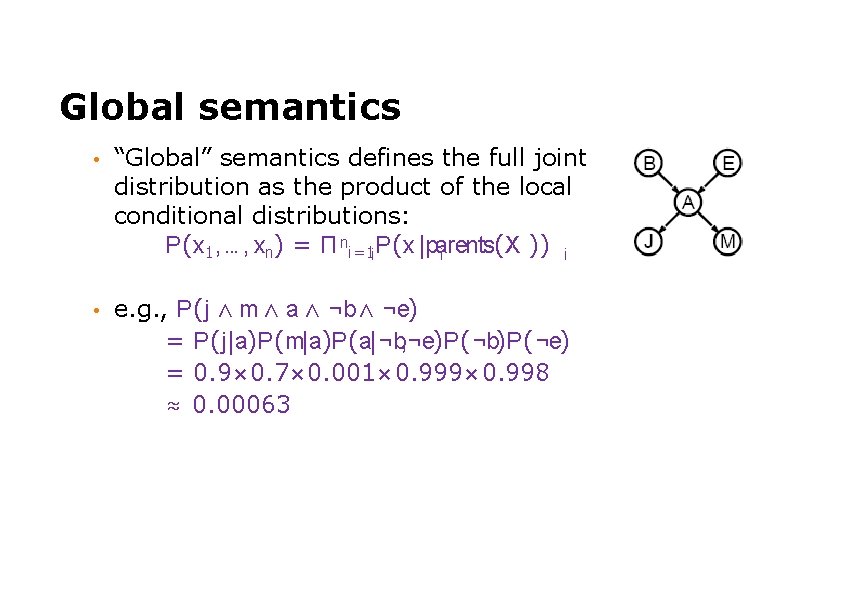

Global semantics
• “Global” semantics defines the full joint distribution as the product of the local conditional distributions: $P(x_1, \ldots, x_n) = \prod_{i=1}^{n} P(x_i \mid \mathrm{parents}(X_i))$
• e.g., $P(j \land m \land a \land \lnot b \land \lnot e) = P(j \mid a)\,P(m \mid a)\,P(a \mid \lnot b, \lnot e)\,P(\lnot b)\,P(\lnot e) = 0.9 \times 0.7 \times 0.001 \times 0.999 \times 0.998 \approx 0.00063$
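The arithmetic in this example is easy to check. The snippet below multiplies the five local probabilities quoted on the slide; the dictionary keys are just labels chosen for readability.

```python
import math

# Local conditional probabilities quoted in the example above.
factors = {
    "P(j|a)": 0.9,
    "P(m|a)": 0.7,
    "P(a|~b,~e)": 0.001,
    "P(~b)": 0.999,
    "P(~e)": 0.998,
}

# Global semantics: the joint probability is the product of the local factors.
joint = math.prod(factors.values())
```

The product comes out at roughly 0.000628, which rounds to the 0.00063 stated on the slide.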

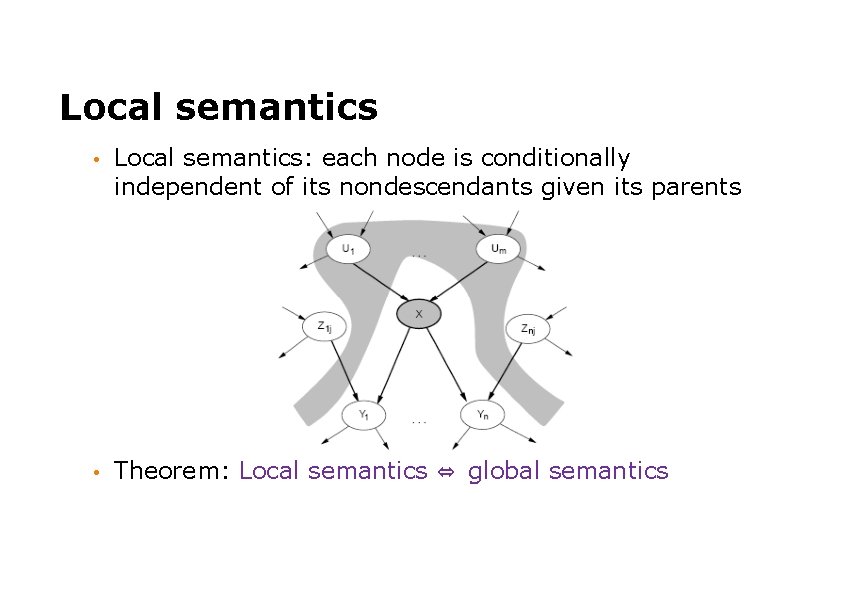

Local semantics
• Local semantics: each node is conditionally independent of its nondescendants given its parents
• Theorem: Local semantics ⇔ global semantics

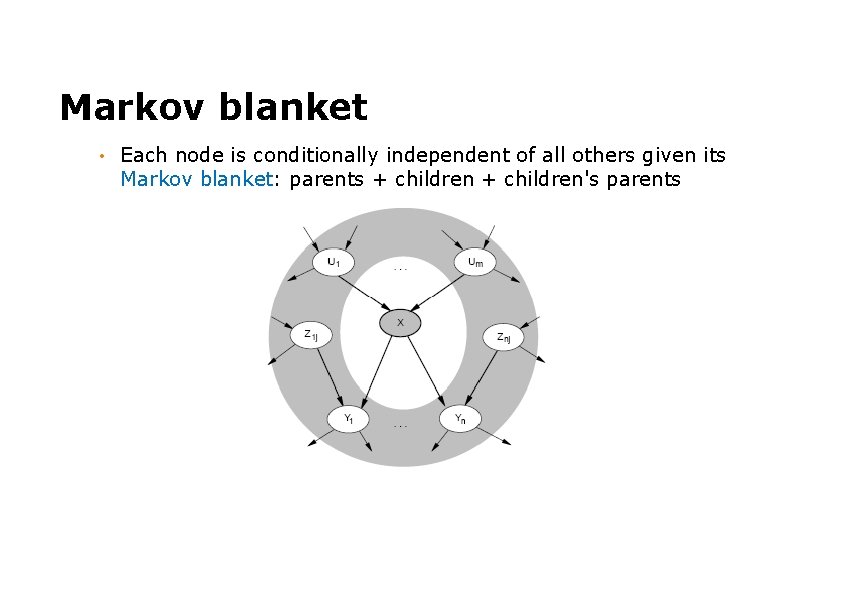

Markov blanket
• Each node is conditionally independent of all others given its Markov blanket: parents + children + children's parents
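Reading a Markov blanket off a graph is mechanical: collect the node's parents, its children, and the children's other parents. The sketch below does this for the burglary network described earlier; the function name `markov_blanket` and the edge-list representation are illustrative choices.

```python
def markov_blanket(edges, node):
    """Parents, children, and children's other parents of `node`."""
    parents = {p for p, c in edges if c == node}
    children = {c for p, c in edges if p == node}
    co_parents = {p for p, c in edges if c in children and p != node}
    return parents | children | co_parents

# Burglary network as (parent, child) pairs.
edges = [
    ("Burglary", "Alarm"),
    ("Earthquake", "Alarm"),
    ("Alarm", "JohnCalls"),
    ("Alarm", "MaryCalls"),
]

blanket_of_burglary = markov_blanket(edges, "Burglary")
blanket_of_alarm = markov_blanket(edges, "Alarm")
```

For Burglary the blanket is {Alarm, Earthquake}: Earthquake enters only because it shares the child Alarm.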

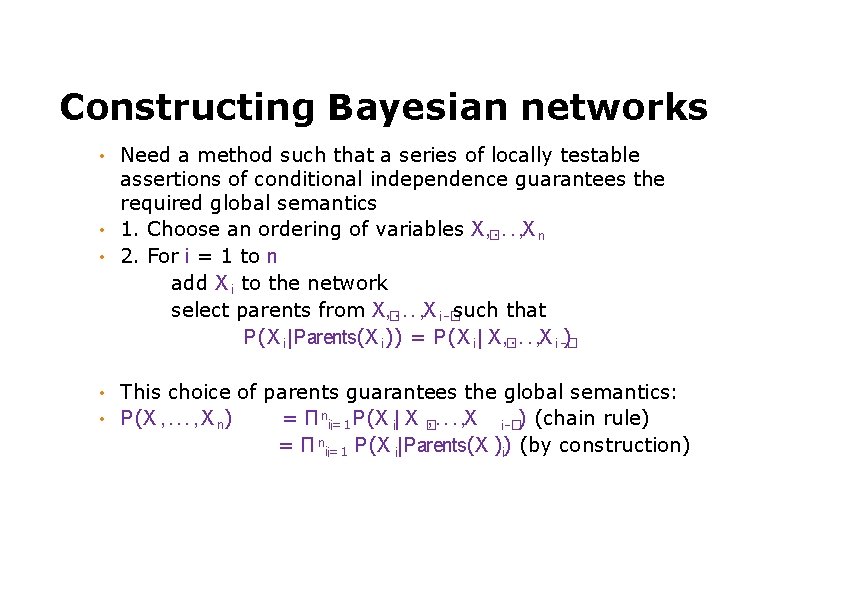

Constructing Bayesian networks
Need a method such that a series of locally testable assertions of conditional independence guarantees the required global semantics
1. Choose an ordering of variables $X_1, \ldots, X_n$
2. For $i = 1$ to $n$:
◦ add $X_i$ to the network
◦ select parents from $X_1, \ldots, X_{i-1}$ such that $P(X_i \mid \mathrm{Parents}(X_i)) = P(X_i \mid X_1, \ldots, X_{i-1})$
This choice of parents guarantees the global semantics:
$P(X_1, \ldots, X_n) = \prod_{i=1}^{n} P(X_i \mid X_1, \ldots, X_{i-1})$ (chain rule)
$= \prod_{i=1}^{n} P(X_i \mid \mathrm{Parents}(X_i))$ (by construction)
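The procedure can be sketched in Python when the full joint is small enough to enumerate. The code below is an illustration under strong assumptions: three Boolean variables (Rain, Ballgame, Traffic) with invented probabilities, and a brute-force search for a smallest parent set among the predecessors. In practice the conditional independence assertions come from domain knowledge, not from a known joint.

```python
from itertools import combinations, product

names = ["Rain", "Ballgame", "Traffic"]
p_traffic = {(True, True): 0.95, (True, False): 0.8,
             (False, True): 0.6, (False, False): 0.1}

# Full joint over (Rain, Ballgame, Traffic), built from hypothetical numbers.
joint = {}
for r, b, t in product([True, False], repeat=3):
    p = (0.1 if r else 0.9) * (0.2 if b else 0.8)
    joint[(r, b, t)] = p * (p_traffic[(r, b)] if t else 1 - p_traffic[(r, b)])

def marginal(fixed):
    """Total probability of all worlds consistent with the partial assignment."""
    return sum(p for world, p in joint.items()
               if all(world[names.index(v)] == val for v, val in fixed.items()))

def conditional(var, given):
    """P(var = True | given), or None if the conditioning event is impossible."""
    den = marginal(given)
    return None if den == 0 else marginal({**given, var: True}) / den

def screens_off(var, cand, preds, tol=1e-9):
    """Does P(var | cand) equal P(var | all predecessors) for every assignment?"""
    for vals in product([True, False], repeat=len(preds)):
        full = dict(zip(preds, vals))
        p_full = conditional(var, full)
        if p_full is None:
            continue
        p_sub = conditional(var, {v: full[v] for v in cand})
        if abs(p_full - p_sub) > tol:
            return False
    return True

def select_parents(ordering):
    """Step 2 of the construction: a smallest sufficient parent set per variable."""
    parents = {}
    for i, var in enumerate(ordering):
        preds = ordering[:i]
        parents[var] = next(
            cand
            for size in range(len(preds) + 1)
            for cand in combinations(preds, size)
            if screens_off(var, cand, preds)
        )
    return parents

causal = select_parents(["Rain", "Ballgame", "Traffic"])
non_causal = select_parents(["Traffic", "Rain", "Ballgame"])
```

With the causal ordering the search finds two edges (both into Traffic); with Traffic first it needs three, because Ballgame then depends on both Traffic and Rain. The ordering affects how compact the resulting network is.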

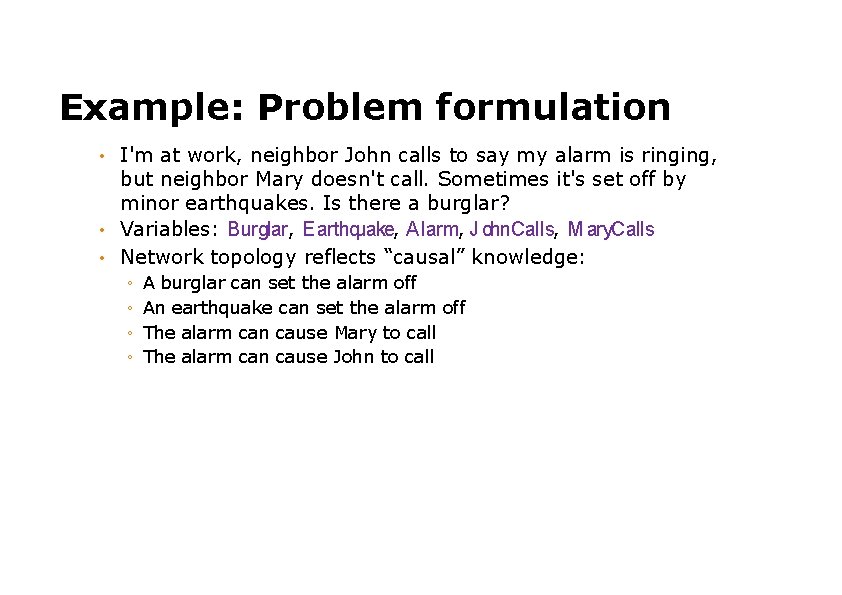

Example: Problem formulation
I'm at work, neighbor John calls to say my alarm is ringing, but neighbor Mary doesn't call. Sometimes it's set off by minor earthquakes. Is there a burglar?
• Variables: Burglar, Earthquake, Alarm, JohnCalls, MaryCalls
• Network topology reflects “causal” knowledge:
◦ A burglar can set the alarm off
◦ An earthquake can set the alarm off
◦ The alarm can cause Mary to call
◦ The alarm can cause John to call

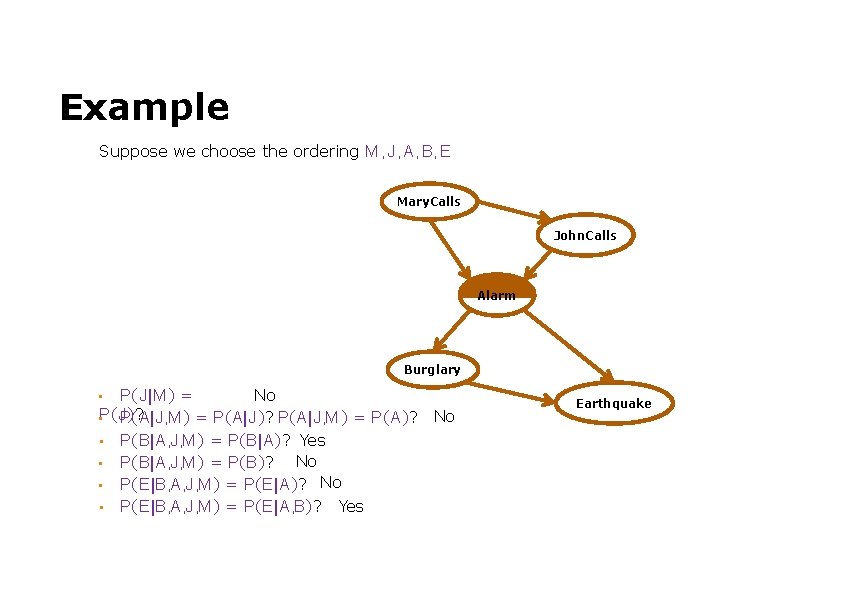

Example
Suppose we choose the ordering M, J, A, B, E (MaryCalls, JohnCalls, Alarm, Burglary, Earthquake)
• $P(J \mid M) = P(J)$? No
• $P(A \mid J, M) = P(A \mid J)$? $P(A \mid J, M) = P(A)$? No
• $P(B \mid A, J, M) = P(B \mid A)$? Yes
• $P(B \mid A, J, M) = P(B)$? No
• $P(E \mid B, A, J, M) = P(E \mid A)$? No
• $P(E \mid B, A, J, M) = P(E \mid A, B)$? Yes

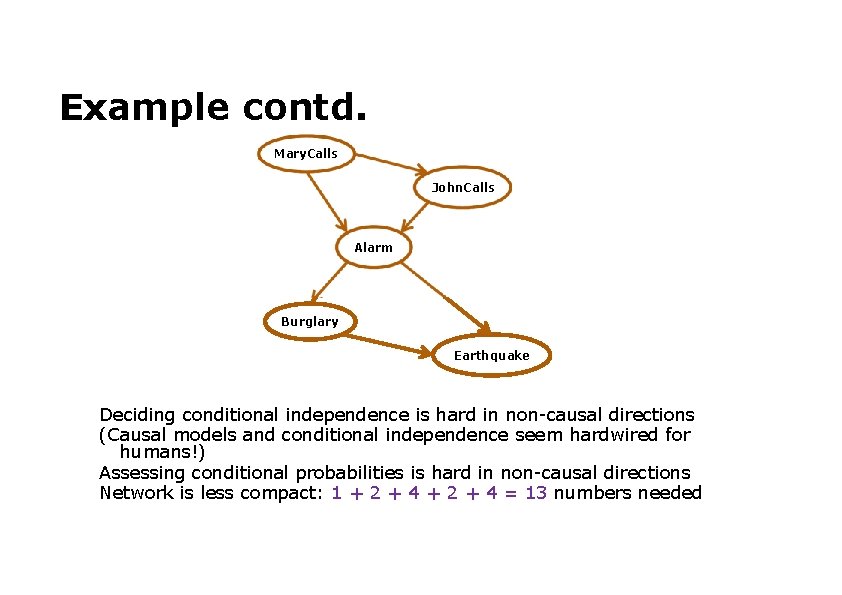

Example contd.
[Figure: resulting network over MaryCalls, JohnCalls, Alarm, Burglary, Earthquake]
• Deciding conditional independence is hard in non-causal directions
• (Causal models and conditional independence seem hardwired for humans!)
• Assessing conditional probabilities is hard in non-causal directions
• Network is less compact: 1 + 2 + 4 + 2 + 4 = 13 numbers needed

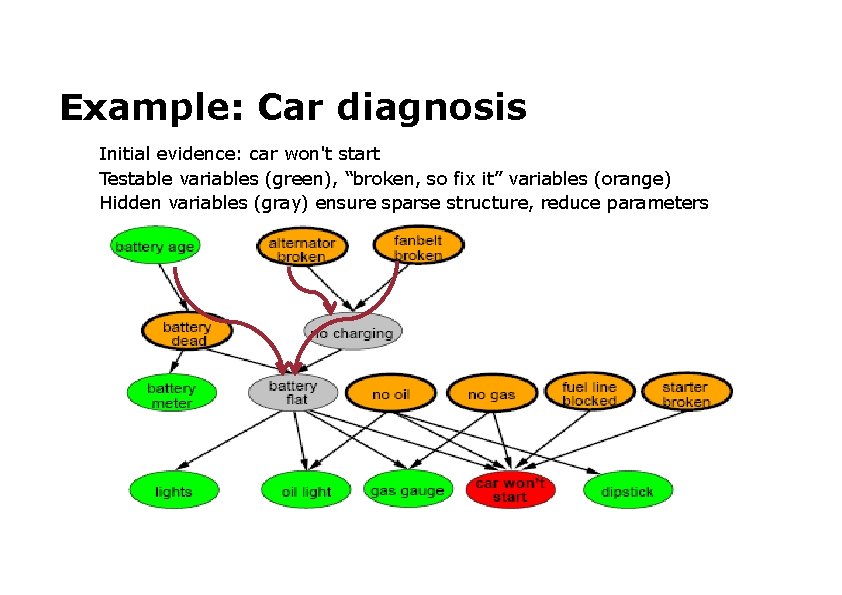

Example: Car diagnosis
• Initial evidence: car won't start
• Testable variables (green), “broken, so fix it” variables (orange)
• Hidden variables (gray) ensure sparse structure, reduce parameters

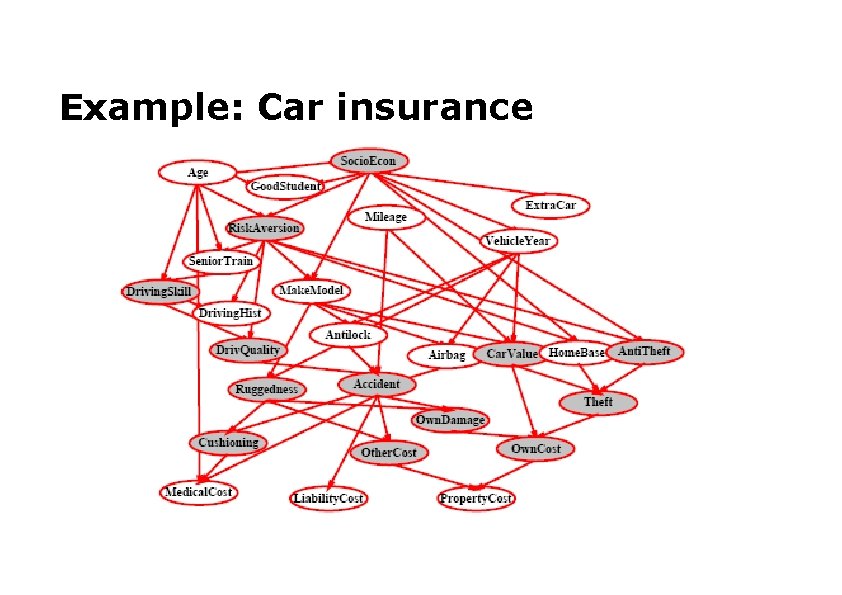

Example: Car insurance

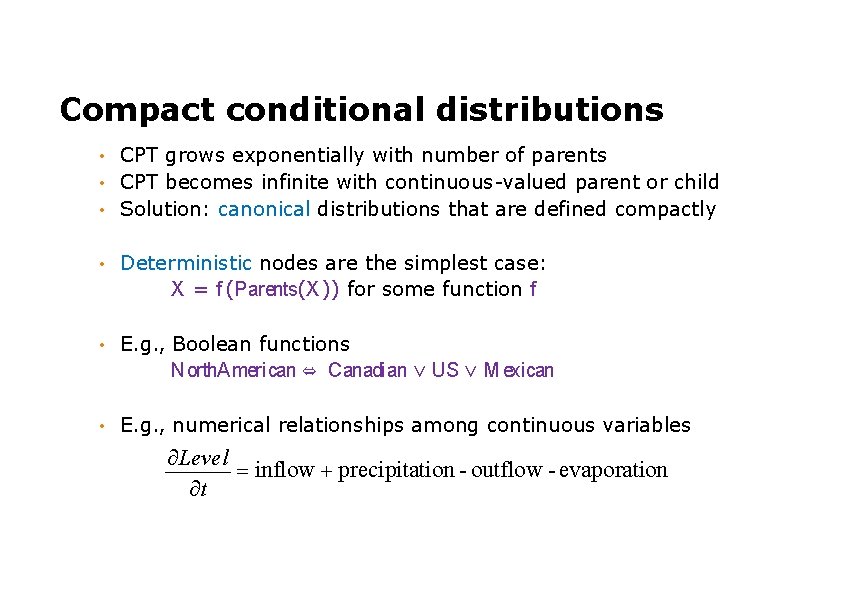

Compact conditional distributions
• CPT grows exponentially with number of parents
• CPT becomes infinite with continuous-valued parent or child
• Solution: canonical distributions that are defined compactly
• Deterministic nodes are the simplest case: $X = f(\mathrm{Parents}(X))$ for some function $f$
• E.g., Boolean functions: $\mathit{NorthAmerican} \Leftrightarrow \mathit{Canadian} \lor \mathit{US} \lor \mathit{Mexican}$
• E.g., numerical relationships among continuous variables: $\frac{\partial \mathit{Level}}{\partial t} = \mathit{inflow} + \mathit{precipitation} - \mathit{outflow} - \mathit{evaporation}$

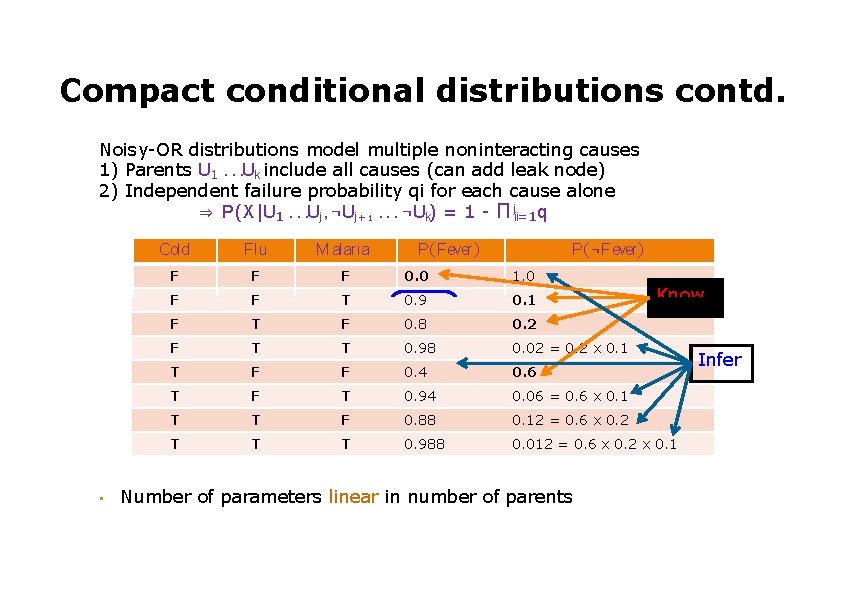

Compact conditional distributions contd.
Noisy-OR distributions model multiple noninteracting causes
1) Parents $U_1 \ldots U_k$ include all causes (can add leak node)
2) Independent failure probability $q_i$ for each cause alone
⇒ $P(X \mid U_1 \ldots U_j, \lnot U_{j+1} \ldots \lnot U_k) = 1 - \prod_{i=1}^{j} q_i$

| Cold | Flu | Malaria | P(Fever) | P(¬Fever) |
|------|-----|---------|----------|-----------|
| F | F | F | 0.0 | 1.0 |
| F | F | T | 0.9 | 0.1 |
| F | T | F | 0.8 | 0.2 |
| F | T | T | 0.98 | 0.02 = 0.2 × 0.1 |
| T | F | F | 0.4 | 0.6 |
| T | F | T | 0.94 | 0.06 = 0.6 × 0.1 |
| T | T | F | 0.88 | 0.12 = 0.6 × 0.2 |
| T | T | T | 0.988 | 0.012 = 0.6 × 0.2 × 0.1 |

Number of parameters linear in number of parents
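The whole fever table follows from the three single-cause failure probabilities. The sketch below regenerates it from q = 0.6 (Cold), 0.2 (Flu), 0.1 (Malaria), the values in the single-cause rows above; the function and variable names are illustrative.

```python
from itertools import product

# Failure probabilities q_i for each cause acting alone, taken from the table above.
q = {"Cold": 0.6, "Flu": 0.2, "Malaria": 0.1}

def noisy_or(active_causes):
    """P(Fever | exactly these causes are present) = 1 - product of their q_i."""
    p_no_fever = 1.0
    for cause in active_causes:
        p_no_fever *= q[cause]
    return 1.0 - p_no_fever

# Build the full CPT: keys are (Cold, Flu, Malaria) truth values.
table = {}
for values in product([False, True], repeat=len(q)):
    present = [cause for cause, value in zip(q, values) if value]
    table[values] = noisy_or(present)
```

Three parameters are enough to fill all eight rows, which is the linear-in-parents saving the slide refers to.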

- Slides: 32