Probabilistic Latent Preference Analysis

Nathan Liu, Min Zhao, Qiang Yang

Presented by Zachary

Outline

- Motivation
- Probabilistic Latent Semantic Analysis
- Bradley-Terry Model
- Probabilistic Latent Preference Analysis
- Experiments and evaluation

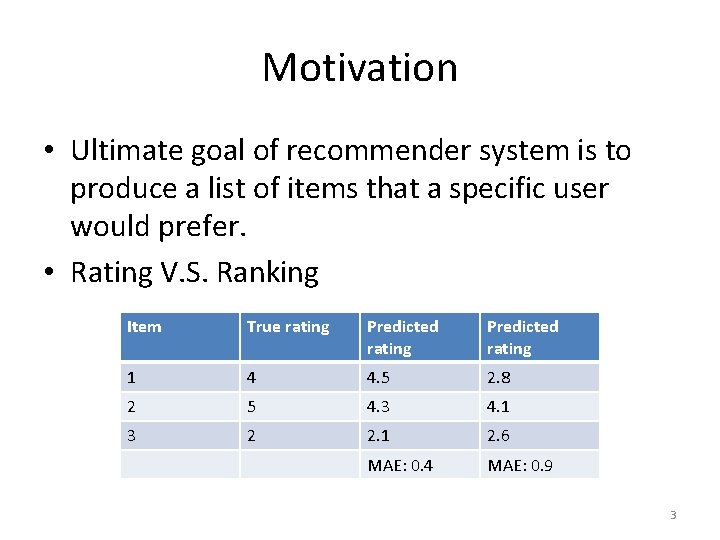

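Motivation

- The ultimate goal of a recommender system is to produce a list of items that a specific user would prefer.
- Rating vs. ranking: the first set of predictions has the lower MAE but swaps items 1 and 2, while the second has the higher MAE and orders all three items correctly.

| Item | True rating | Predicted rating (first predictor) | Predicted rating (second predictor) |
| --- | --- | --- | --- |
| 1 | 4 | 4.5 | 2.8 |
| 2 | 5 | 4.3 | 4.1 |
| 3 | 2 | 2.1 | 2.6 |
| MAE | | 0.4 | 0.9 |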
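
The rating-versus-ranking point can be checked directly from the numbers on this slide. The sketch below recomputes the MAE of both prediction columns and the item order each one implies; the names `pred_first`, `pred_second`, `mae`, and `ranking` are illustrative and not from the slides.

```python
# Ratings taken from the slide: items 1-3, true ratings, two prediction columns.
true_ratings = {1: 4.0, 2: 5.0, 3: 2.0}
pred_first = {1: 4.5, 2: 4.3, 3: 2.1}   # column with the lower MAE
pred_second = {1: 2.8, 2: 4.1, 3: 2.6}  # column with the higher MAE


def mae(truth, pred):
    """Mean absolute error over the items in `truth`."""
    return sum(abs(truth[i] - pred[i]) for i in truth) / len(truth)


def ranking(scores):
    """Item ids sorted by decreasing score."""
    return sorted(scores, key=scores.get, reverse=True)


for name, pred in [("first", pred_first), ("second", pred_second)]:
    print(name, "MAE:", round(mae(true_ratings, pred), 2), "ranking:", ranking(pred))
print("true ranking:", ranking(true_ratings))
```

The first column is closer in rating error yet places item 1 above item 2, which is the mismatch that motivates modeling preferences rather than ratings.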

Probabilistic Latent Semantic Analysis

- A model-based approach to collaborative filtering (CF)
- Also known as the aspect model

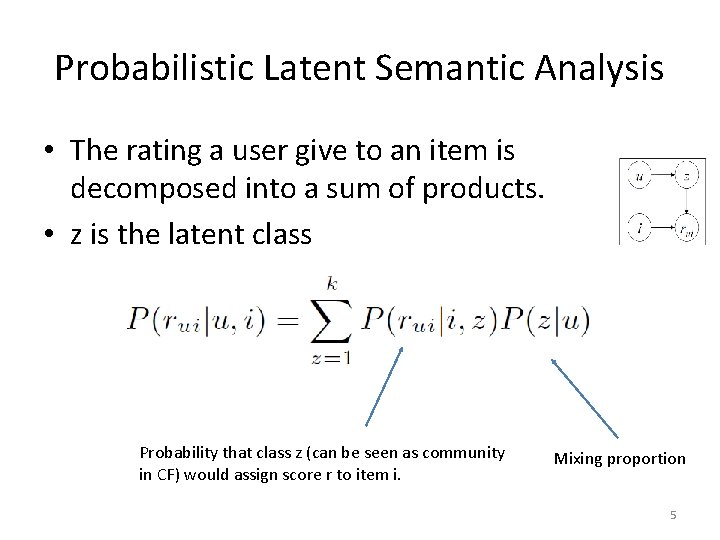

Probabilistic Latent Semantic Analysis

- The probability of the rating a user gives to an item is decomposed into a sum of products over latent classes.
- z is the latent class.
- Formula annotations: $p(r \mid z, i)$ is the probability that class z (which can be seen as a community in CF) would assign score r to item i; $p(z \mid u)$ is the mixing proportion.

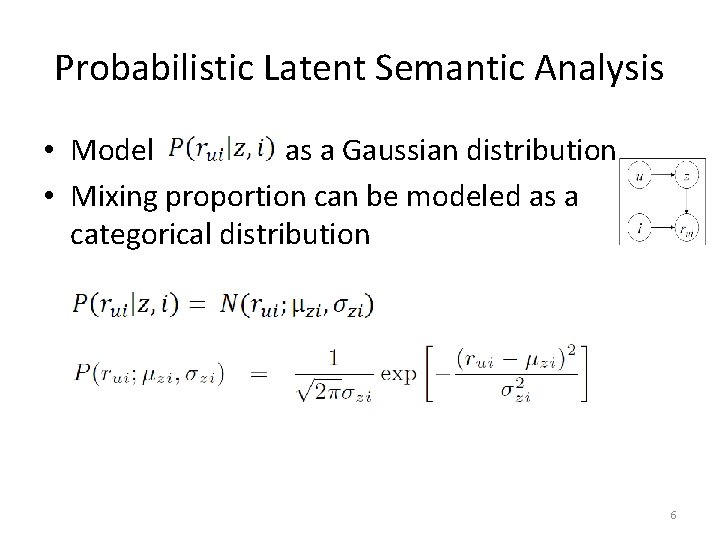

Probabilistic Latent Semantic Analysis

- The class-conditional rating distribution $p(r \mid z, i)$ is modeled as a Gaussian distribution.
- The mixing proportion $p(z \mid u)$ can be modeled as a categorical distribution.

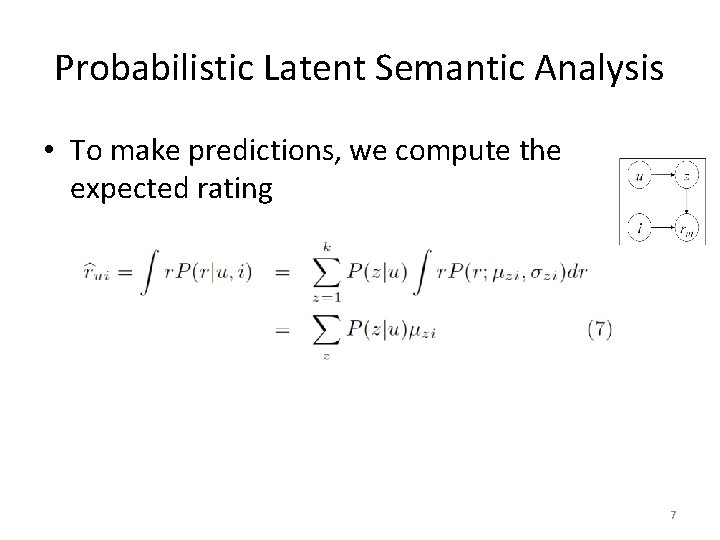

Probabilistic Latent Semantic Analysis

- To make predictions, we compute the expected rating.

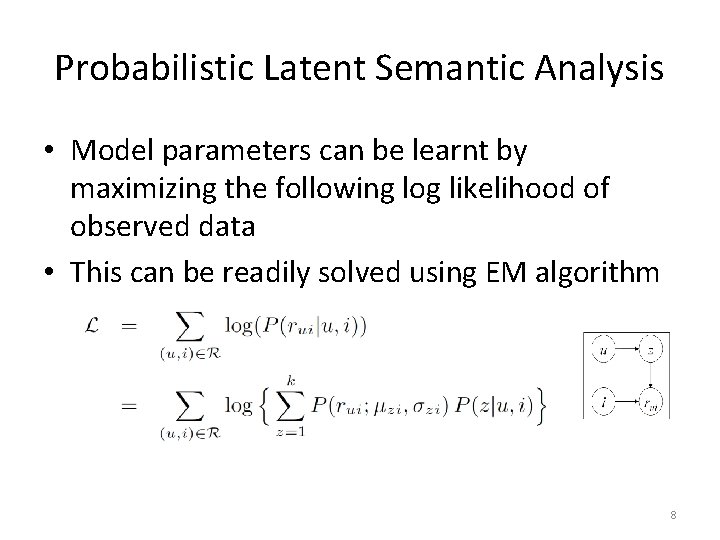
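
As a rough illustration of the prediction step, the expected rating of a mixture of Gaussians is the mixing-weighted average of the per-class means. The sketch below assumes that form; `expected_rating`, `p_z_given_u`, and `mu_item` are hypothetical names, and the numbers are made up.

```python
def expected_rating(mixing, means):
    """Expected rating under a Gaussian mixture.

    mixing: p(z|u) for each latent class z (must sum to 1).
    means:  mean of the Gaussian p(r|z, i) for the item, one per class.
    """
    if abs(sum(mixing) - 1.0) > 1e-9:
        raise ValueError("mixing proportions must sum to 1")
    return sum(p_z * mu for p_z, mu in zip(mixing, means))


# Hypothetical user who belongs mostly to class 0, which likes the item.
p_z_given_u = [0.7, 0.3]
mu_item = [4.0, 2.0]
print(expected_rating(p_z_given_u, mu_item))
```

The Gaussian variances do not enter the expectation, so only the class means are needed at prediction time.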

Probabilistic Latent Semantic Analysis

- Model parameters can be learnt by maximizing the following log likelihood of the observed data.
- This can be readily solved using the EM algorithm.

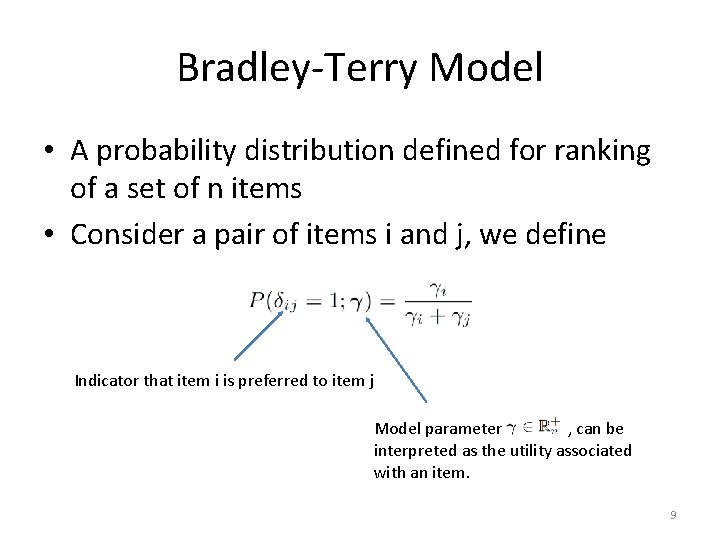

Bradley-Terry Model

- A probability distribution defined over rankings of a set of n items.
- For a pair of items i and j, we define the probability that item i is preferred to item j.
- Formula annotations: the indicator that item i is preferred to item j; the model parameter, which can be interpreted as the utility associated with an item.

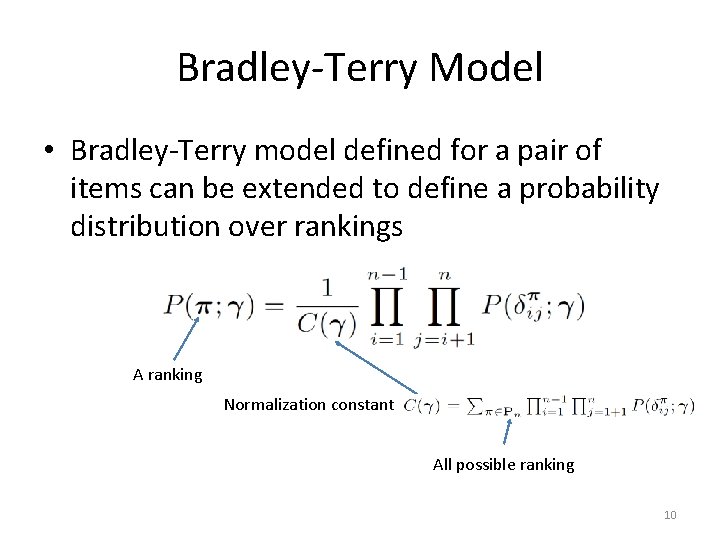
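
For reference, the standard Bradley-Terry pairwise probability is the utility of item i divided by the sum of the two utilities. The sketch below uses that standard form; the function name `bt_prefers` and the symbol `gamma` for the utility are naming assumptions, since the slide's own notation is not recoverable from the text.

```python
def bt_prefers(gamma_i, gamma_j):
    """Probability that item i is preferred to item j under Bradley-Terry.

    gamma_i, gamma_j: positive utility parameters of the two items.
    """
    if gamma_i <= 0 or gamma_j <= 0:
        raise ValueError("utilities must be positive")
    return gamma_i / (gamma_i + gamma_j)


# An item with three times the utility wins three out of four comparisons.
print(bt_prefers(3.0, 1.0))
print(bt_prefers(1.0, 3.0))
```

The two directions always sum to 1, and only the ratio of utilities matters, so the parameters are identifiable only up to a common scale.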

Bradley-Terry Model

- The Bradley-Terry model defined for a pair of items can be extended to define a probability distribution over rankings.
- Formula annotations: a ranking; the normalization constant; the set of all possible rankings.

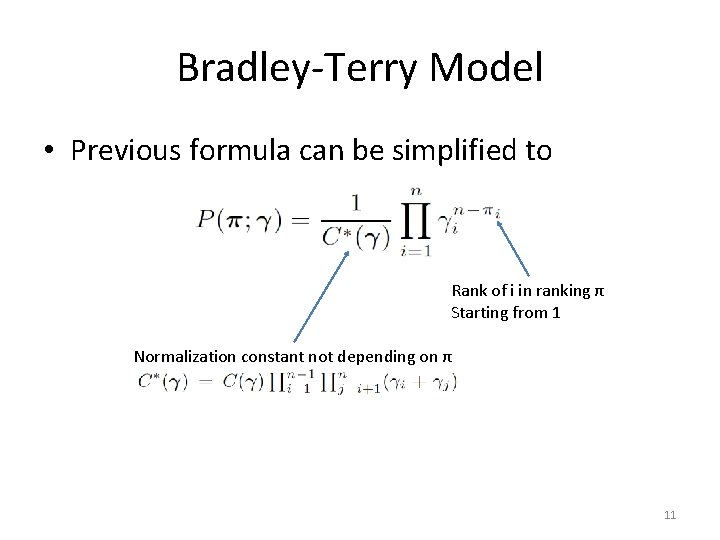

Bradley-Terry Model

- The previous formula can be simplified.
- Formula annotations: the rank of item i in ranking π, starting from 1; a normalization constant that does not depend on π.

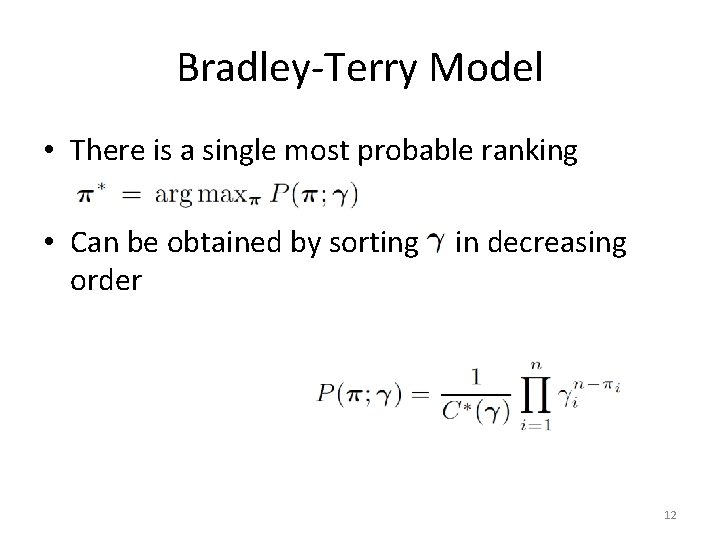

Bradley-Terry Model

- There is a single most probable ranking.
- It can be obtained by sorting the items in decreasing order of their utility parameters.
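
The sorting claim can be checked by brute force on a small item set. The sketch below assumes the ranking distribution is proportional to the product of pairwise Bradley-Terry probabilities over all ordered pairs in the ranking, and that the simplified form weights each utility by the exponent n minus the item's rank; both are reconstructions from the slide annotations, and all names and numbers are illustrative.

```python
from itertools import permutations
from math import prod


def ranking_weight(order, gamma):
    """Unnormalized probability: product of pairwise BT terms for every
    pair (i, j) where i is ranked above j."""
    w = 1.0
    for a in range(len(order)):
        for b in range(a + 1, len(order)):
            i, j = order[a], order[b]
            w *= gamma[i] / (gamma[i] + gamma[j])
    return w


def simplified_weight(order, gamma):
    """Simplified unnormalized form: each utility raised to (n - rank),
    with ranks starting from 1."""
    n = len(order)
    return prod(gamma[item] ** (n - rank) for rank, item in enumerate(order, start=1))


# Hypothetical utilities for four items.
gamma = {"a": 0.5, "b": 2.0, "c": 1.2, "d": 3.0}

best = max(permutations(gamma), key=lambda o: ranking_weight(o, gamma))
by_sort = tuple(sorted(gamma, key=gamma.get, reverse=True))
print(best, by_sort)
```

The two weight functions differ only by a factor that is the same for every ranking, which is why the normalization constant in the simplified form does not depend on π.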

Probabilistic Latent Preference Analysis

- A model similar to pLSA
  - Latent class mixture model
  - With the Bradley-Terry model incorporated

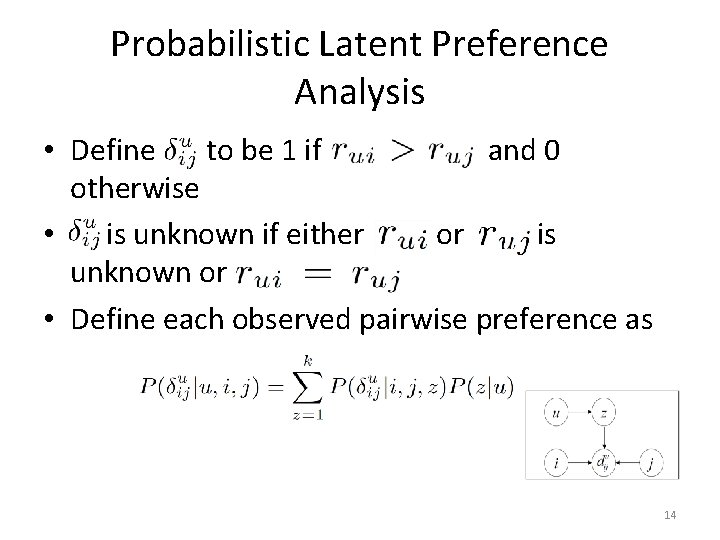

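Probabilistic Latent Preference Analysis

- Define the pairwise preference indicator to be 1 if user u rates item i higher than item j, and 0 otherwise.
- The indicator is unknown if either of the two ratings is unknown, or if the two ratings are equal.
- Define each observed pairwise preference as: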

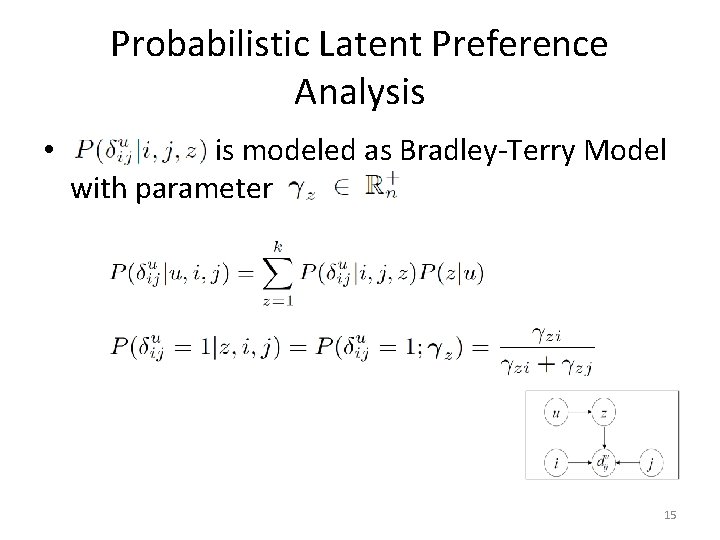

Probabilistic Latent Preference Analysis

- Within each latent class, the pairwise preference is modeled by a Bradley-Terry model with class-specific parameters.

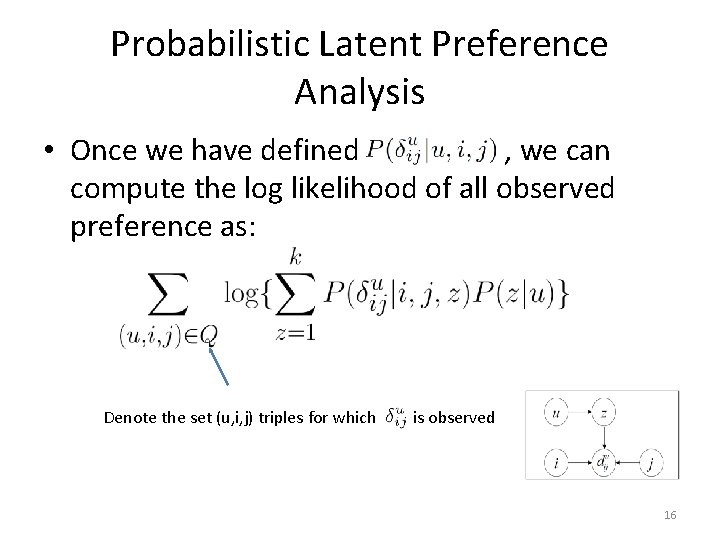
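
Combining the latent class mixture with the Bradley-Terry model suggests a preference probability of the following shape: a mixing-weighted sum of per-class Bradley-Terry probabilities. This is a sketch under that assumption, not the slide's exact formula; `preference_prob`, `mixing`, and `gamma` are hypothetical names.

```python
def preference_prob(mixing, gamma, i, j):
    """Probability that a user prefers item i to item j.

    mixing: p(z|u) for each latent class z.
    gamma:  one dict per class mapping item -> positive BT utility.
    """
    return sum(
        p_z * g[i] / (g[i] + g[j])
        for p_z, g in zip(mixing, gamma)
    )


# Two hypothetical classes with opposite tastes for items "x" and "y".
gamma = [{"x": 3.0, "y": 1.0}, {"x": 1.0, "y": 3.0}]
print(preference_prob([0.9, 0.1], gamma, "x", "y"))
print(preference_prob([0.5, 0.5], gamma, "x", "y"))
```

A user split evenly between the two classes ends up indifferent, while a user concentrated in the first class inherits most of that class's preference for "x".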

Probabilistic Latent Preference Analysis

- Once the preference probability is defined, we can compute the log likelihood of all observed preferences.
- Formula annotation: the set of (u, i, j) triples for which the pairwise preference is observed.
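
A minimal sketch of the log likelihood over observed triples, assuming each triple (u, i, j) records that user u prefers item i to item j and that the preference probability is a mixing-weighted sum of per-class Bradley-Terry terms. The data layout and names are assumptions made for illustration.

```python
import math


def log_likelihood(triples, mixing, gamma):
    """Sum of log preference probabilities over observed (u, i, j) triples.

    triples: iterable of (u, i, j), meaning user u prefers item i to item j.
    mixing:  dict user -> list of p(z|u), one entry per latent class.
    gamma:   list with one dict per class, mapping item -> positive utility.
    """
    total = 0.0
    for u, i, j in triples:
        p = sum(p_z * g[i] / (g[i] + g[j]) for p_z, g in zip(mixing[u], gamma))
        total += math.log(p)
    return total


# Hypothetical toy data: one user, two classes, two observed preferences.
gamma = [{"x": 3.0, "y": 1.0, "w": 1.0}, {"x": 1.0, "y": 3.0, "w": 1.0}]
mixing = {"u1": [1.0, 0.0]}
observed = [("u1", "x", "y"), ("u1", "x", "w")]
print(log_likelihood(observed, mixing, gamma))
```

Only observed preferences contribute, so pairs with a missing or tied rating are simply absent from `triples`.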

Probabilistic Latent Preference Analysis

- Parameters can, again, be learnt using the EM algorithm.
- A closed-form solution exists for the mixing proportions.
- However, no closed-form solution exists for the Bradley-Terry model parameters.
  - There is an efficient numerical algorithm (refer to the paper).

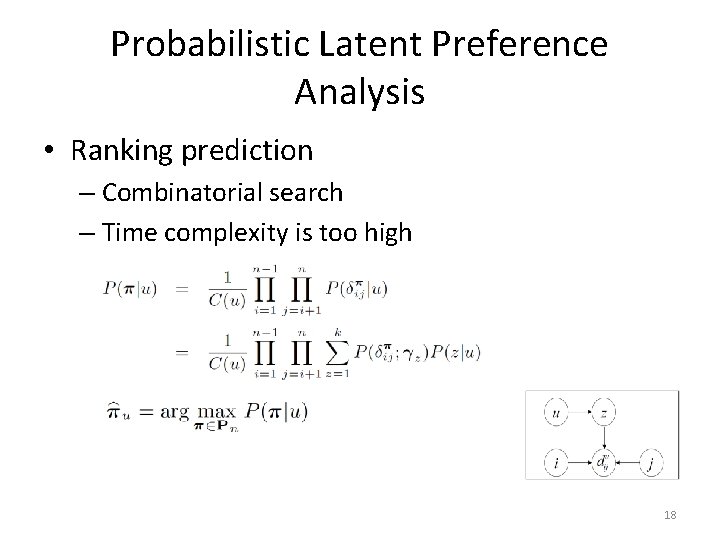
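
To make the closed-form part concrete, here is a sketch of one EM pass that re-estimates only the mixing proportions while the Bradley-Terry utilities are held fixed. It assumes the closed-form update referred to on the slide is the usual mixture-model one (average posterior responsibility per user); the numerical update of the utilities is left to the paper and is not shown. All names are hypothetical.

```python
from collections import defaultdict


def em_update_mixing(triples, mixing, gamma):
    """One EM pass over observed (u, i, j) triples, updating p(z|u) only.

    E-step: posterior of class z for each triple, proportional to
            p(z|u) * gamma[z][i] / (gamma[z][i] + gamma[z][j]).
    M-step: new p(z|u) is the average posterior over the user's triples.
    """
    k = len(gamma)
    resp_sum = defaultdict(lambda: [0.0] * k)
    counts = defaultdict(int)
    for u, i, j in triples:
        joint = [
            mixing[u][z] * gamma[z][i] / (gamma[z][i] + gamma[z][j])
            for z in range(k)
        ]
        norm = sum(joint)
        for z in range(k):
            resp_sum[u][z] += joint[z] / norm
        counts[u] += 1
    new_mixing = dict(mixing)  # users without observations keep old values
    for u, n in counts.items():
        new_mixing[u] = [s / n for s in resp_sum[u]]
    return new_mixing


# Hypothetical toy data: the user prefers "x" to "y", which class 0 explains better.
gamma = [{"x": 3.0, "y": 1.0}, {"x": 1.0, "y": 3.0}]
mixing = {"u1": [0.5, 0.5]}
print(em_update_mixing([("u1", "x", "y")], mixing, gamma))
```

In a full fit this step would alternate with a numerical update of the utilities until the log likelihood stops improving.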

Probabilistic Latent Preference Analysis

- Ranking prediction
  - Combinatorial search
  - Time complexity is too high

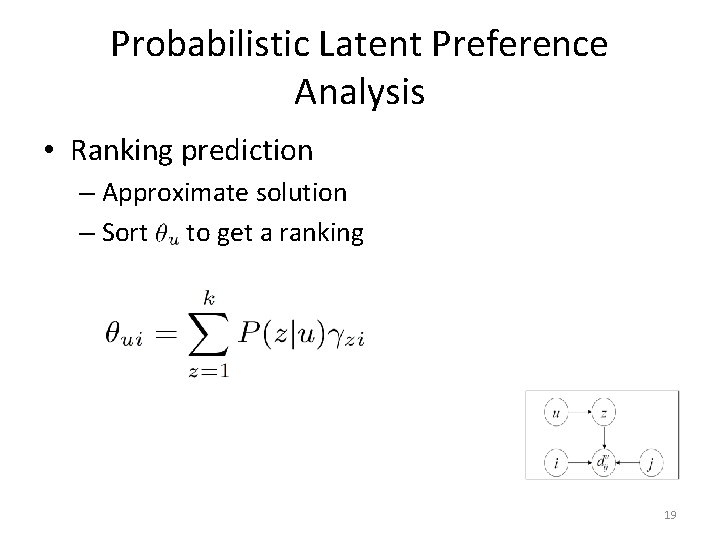

Probabilistic Latent Preference Analysis

- Ranking prediction
  - Approximate solution
  - Sort the items by a per-item score to get a ranking

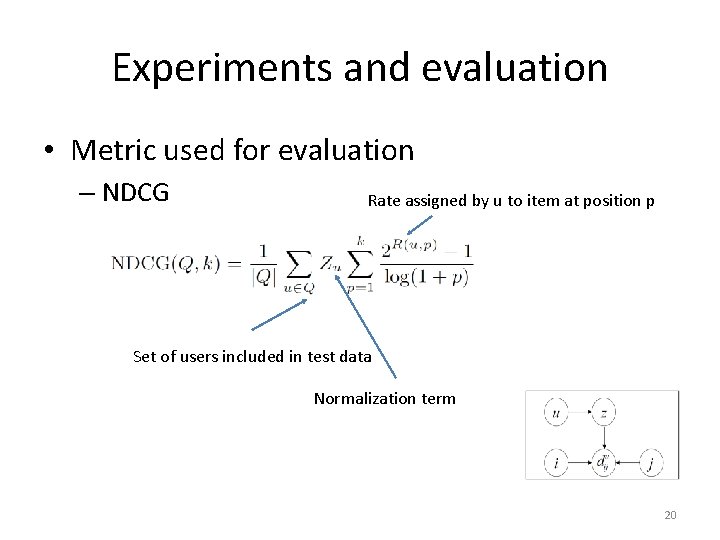

Experiments and evaluation

- Metric used for evaluation: NDCG
- Formula annotations: the rating assigned by user u to the item at position p; the set of users included in the test data; the normalization term.

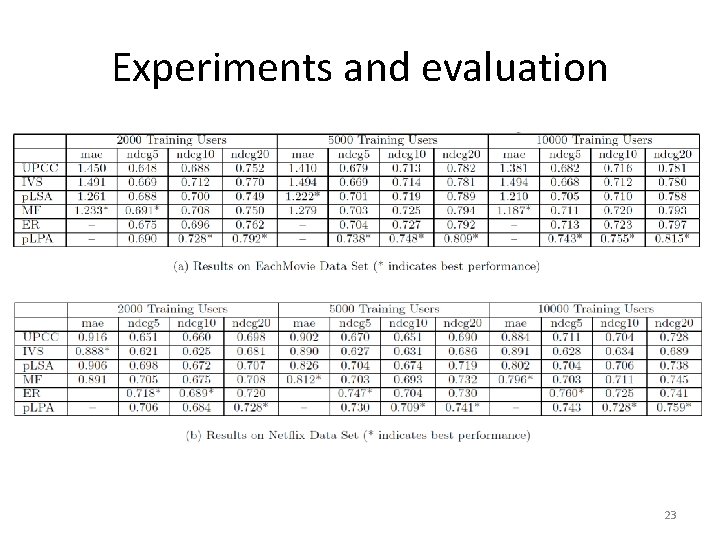
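
A small sketch of NDCG averaged over test users, using the common gain `2^r - 1` and a logarithmic position discount. The exact gain and discount on the slide are not recoverable from the text, so treat both as assumptions; the function names are illustrative.

```python
import math


def dcg_at_k(ratings_in_ranked_order, k):
    """Discounted cumulative gain of the top-k positions (positions start at 1)."""
    return sum(
        (2 ** r - 1) / math.log2(1 + p)
        for p, r in enumerate(ratings_in_ranked_order[:k], start=1)
    )


def ndcg_at_k(ratings_in_ranked_order, k):
    """DCG divided by the DCG of the ideal ordering (the normalization term)."""
    ideal = dcg_at_k(sorted(ratings_in_ranked_order, reverse=True), k)
    return dcg_at_k(ratings_in_ranked_order, k) / ideal if ideal > 0 else 0.0


def mean_ndcg(per_user_ratings, k):
    """Average NDCG@k over the users in the test set."""
    return sum(ndcg_at_k(r, k) for r in per_user_ratings.values()) / len(per_user_ratings)


# True ratings listed in the order each predictor ranked the items
# (taken from the motivation slide's three-item example).
print(ndcg_at_k([5, 4, 2], 3))  # ranking that matches the true order
print(ndcg_at_k([4, 5, 2], 3))  # ranking that swaps the top two items
```

Because the metric depends only on the order of the true ratings, a predictor with poor rating accuracy can still score a perfect NDCG.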

Experiments and evaluation

- Data sets used for evaluation
  - EachMovie (randomly picked partial data)
  - Netflix (randomly picked partial data)
  - 10,600 users picked randomly
    - 10,000 users for training
    - 100 users for parameter tuning (k)
    - 500 active users, 80% training, 20% testing

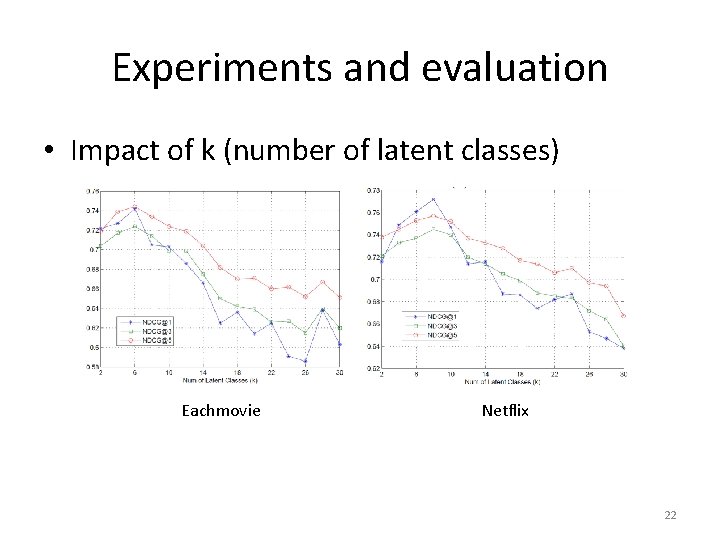

Experiments and evaluation

- Impact of k (number of latent classes) on EachMovie and Netflix

Experiments and evaluation

- Slides: 23