
Probabilistic Graphical Models, Inference, Message Passing: BP in Practice

Daphne Koller

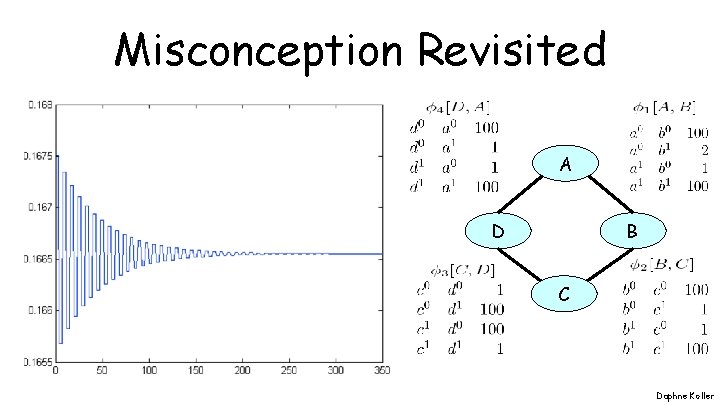

Misconception Revisited (network over the variables A, B, C, D)

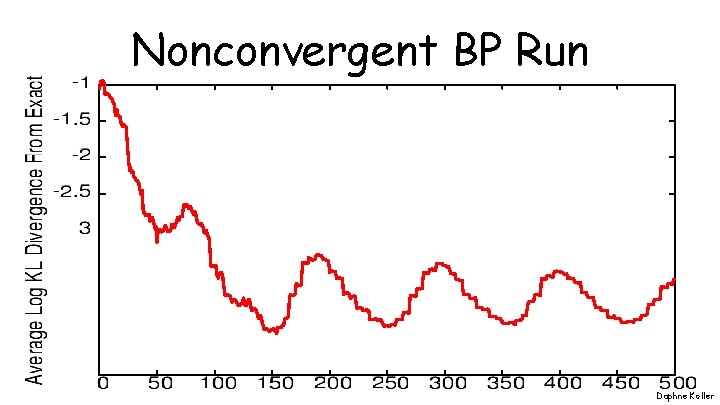

Nonconvergent BP Run

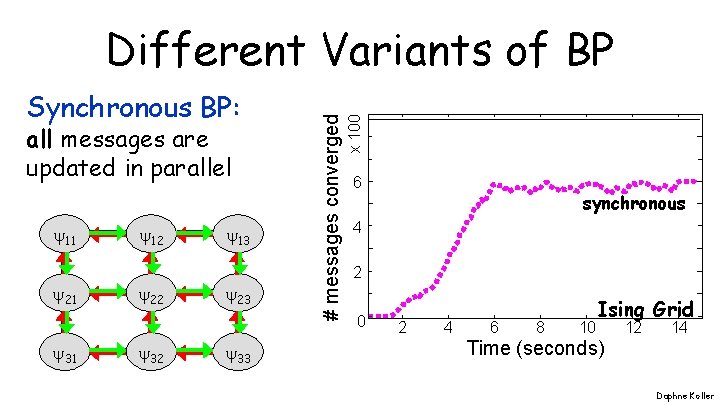

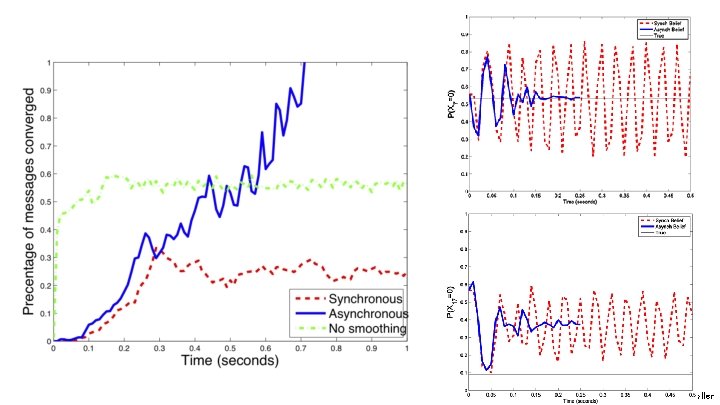

Synchronous BP: all messages are updated in parallel

[Figure: 3×3 Ising grid with nodes labeled 11 to 33. Plot "Different Variants of BP": # messages converged (x 100) against time (seconds), showing the synchronous curve.]

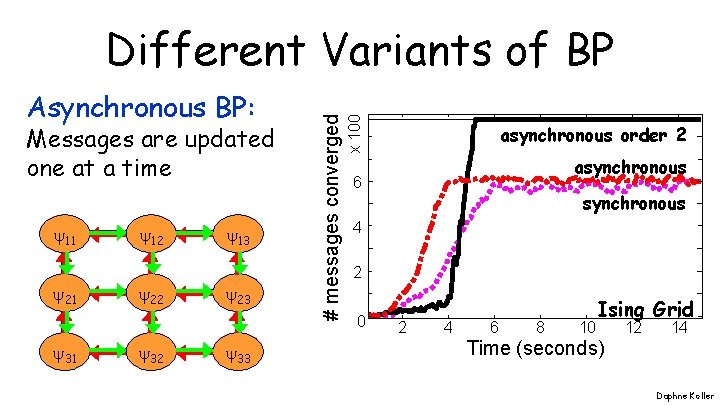

Asynchronous BP: messages are updated one at a time

[Figure: 3×3 Ising grid with nodes labeled 11 to 33. Plot "Different Variants of BP": # messages converged (x 100) against time (seconds), with curves for synchronous, asynchronous, and asynchronous order 2.]

Observations

- Convergence is a local property:
  - some messages converge soon
  - others may never converge
- Synchronous BP converges considerably worse than asynchronous BP
- Message passing order affects both the extent and the rate of convergence
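To make the two schedules concrete, here is a minimal sketch of loopy BP on a small Ising grid that differs only in whether messages are read from a frozen snapshot (synchronous) or from the live values (asynchronous). It is an illustration under stated assumptions, not the code behind the plots: the names `grid_edges` and `run_bp`, the coupling strength, and the random node fields are all hypothetical.

```python
import numpy as np

def grid_edges(n):
    """Undirected edges of an n x n grid; nodes are numbered row-major."""
    edges = []
    for r in range(n):
        for c in range(n):
            i = r * n + c
            if c + 1 < n:
                edges.append((i, i + 1))
            if r + 1 < n:
                edges.append((i, i + n))
    return edges

def run_bp(n, coupling, field, synchronous, max_sweeps=200, tol=1e-6):
    """Loopy BP on a binary pairwise grid; returns (sweeps, converged, beliefs)."""
    edges = grid_edges(n)
    directed = edges + [(j, i) for i, j in edges]
    nbrs = {v: [] for v in range(n * n)}
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)
    spins = np.array([-1.0, 1.0])
    # Assumed Ising form: one symmetric pairwise potential shared by all edges.
    psi = np.exp(coupling * np.outer(spins, spins))
    phi = np.exp(np.outer(field, spins))  # node potentials, shape (n*n, 2)
    msgs = {e: np.full(2, 0.5) for e in directed}

    def new_message(i, j, source):
        incoming = phi[i].copy()
        for k in nbrs[i]:
            if k != j:
                incoming = incoming * source[(k, i)]
        m = psi.T @ incoming  # sum out x_i
        return m / m.sum()

    for sweep in range(1, max_sweeps + 1):
        # Synchronous reads a frozen snapshot; asynchronous reads live values.
        source = dict(msgs) if synchronous else msgs
        delta = 0.0
        for (i, j) in directed:
            m = new_message(i, j, source)
            delta = max(delta, float(np.abs(m - msgs[(i, j)]).max()))
            msgs[(i, j)] = m
        if delta < tol:
            break

    beliefs = phi.copy()
    for (k, i) in directed:
        beliefs[i] *= msgs[(k, i)]
    beliefs /= beliefs.sum(axis=1, keepdims=True)
    return sweep, delta < tol, beliefs

field = np.random.default_rng(0).normal(size=9)
for sync in (True, False):
    sweeps, converged, _ = run_bp(3, 0.2, field, synchronous=sync)
    print("synchronous" if sync else "asynchronous", sweeps, converged)
```

With a weak coupling such as the 0.2 used here, both schedules should settle on the same beliefs. Raising the coupling or mixing its sign across edges is the regime where the choice of schedule is expected to matter.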

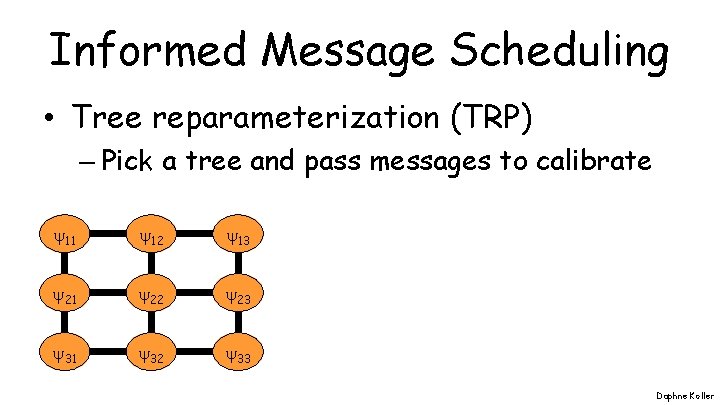

Informed Message Scheduling

- Tree reparameterization (TRP)
  - Pick a tree and pass messages to calibrate

[Figure: 3×3 grid with nodes labeled 11 to 33.]

- Residual belief propagation (RBP)
  - Pass messages between two clusters whose beliefs over the sepset disagree the most
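The residual idea can be sketched as a greedy loop: recompute every candidate message, then apply only the one that would change the most. The sketch below uses the size of the pending message change as a stand-in for sepset disagreement, which is an assumption on my part, and it recomputes all candidates at every step for clarity rather than keeping a priority queue. The function name `residual_bp` and the four-variable loop are hypothetical.

```python
import numpy as np

def residual_bp(edges, phi, psi, max_updates=5000, tol=1e-8):
    """Greedy residual scheduling on a binary pairwise model.

    psi is assumed symmetric and shared by all edges.
    Returns (messages, updates_applied, converged).
    """
    directed = edges + [(j, i) for i, j in edges]
    nbrs = {v: [] for v in range(len(phi))}
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)
    msgs = {e: np.full(2, 0.5) for e in directed}

    def candidate(i, j):
        incoming = phi[i].copy()
        for k in nbrs[i]:
            if k != j:
                incoming = incoming * msgs[(k, i)]
        m = psi.T @ incoming  # sum out x_i
        return m / m.sum()

    for step in range(max_updates):
        cands = {e: candidate(*e) for e in directed}
        residuals = {e: float(np.abs(cands[e] - msgs[e]).max()) for e in directed}
        worst = max(residuals, key=residuals.get)
        if residuals[worst] < tol:
            return msgs, step, True   # no message wants to move any more
        msgs[worst] = cands[worst]    # apply only the largest pending change
    return msgs, max_updates, False

# Hypothetical example: a single loop over four binary variables.
spins = np.array([-1.0, 1.0])
psi = np.exp(0.5 * np.outer(spins, spins))
phi = np.exp(np.outer([0.3, -0.2, 0.1, 0.4], spins))
msgs, steps, converged = residual_bp([(0, 1), (1, 2), (2, 3), (3, 0)], phi, psi)
print(steps, converged)
```

A practical implementation would likely keep residuals in a priority queue and refresh only the messages affected by the last update, since recomputing everything per step is wasteful on large graphs.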

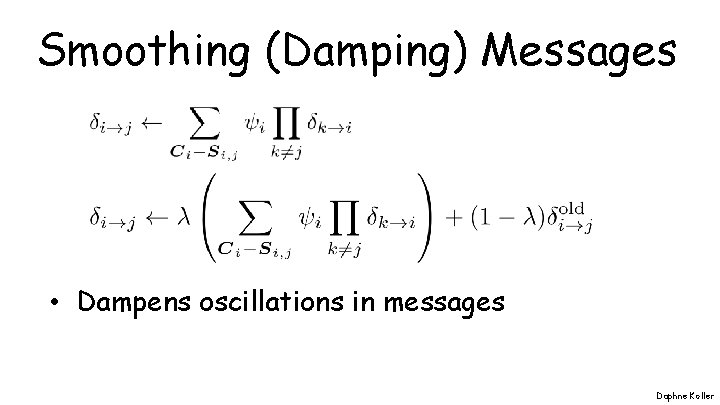

Smoothing (Damping) Messages

- Dampens oscillations in messages
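The update rule on this slide did not survive extraction. A commonly used form, assumed here, replaces each message with a weighted average: the newly computed message weighted by λ plus the previous message weighted by 1 − λ. The sketch below applies that rule to a toy update that merely swaps the two entries of a message, a stand-in for a BP update that overshoots. The names `damped_update`, `flip`, and `iterate` are hypothetical.

```python
import numpy as np

def damped_update(old_msg, new_msg, lam=0.5):
    """Blend the new message with the old one, then renormalize."""
    old_msg = np.asarray(old_msg, dtype=float)
    new_msg = np.asarray(new_msg, dtype=float)
    mixed = lam * new_msg + (1.0 - lam) * old_msg
    return mixed / mixed.sum()

def flip(msg):
    """Toy stand-in for an overshooting update: swap the two entries."""
    return msg[::-1]

def iterate(lam, steps=20):
    """Repeat the damped toy update starting from the message [0.9, 0.1]."""
    msg = np.array([0.9, 0.1])
    for _ in range(steps):
        msg = damped_update(msg, flip(msg), lam)
    return msg

print(iterate(1.0))  # lam = 1 is the undamped update
print(iterate(0.7))
```

With `lam=1.0` the toy message keeps swapping between two values, while any `lam` strictly between 0 and 1 shrinks the swing at each step. On a real model the useful value of λ is problem dependent, and heavy damping slows convergence.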


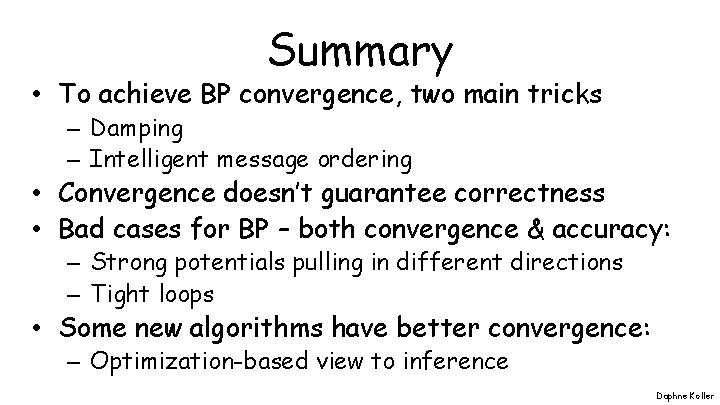

Summary

- To achieve BP convergence, two main tricks:
  - Damping
  - Intelligent message ordering
- Convergence doesn't guarantee correctness
- Bad cases for BP, for both convergence and accuracy:
  - Strong potentials pulling in different directions
  - Tight loops
- Some new algorithms have better convergence:
  - Optimization-based view of inference


- Slides: 12