Presentation on Inferential Statistics: Basic Inferential Statistical Terms


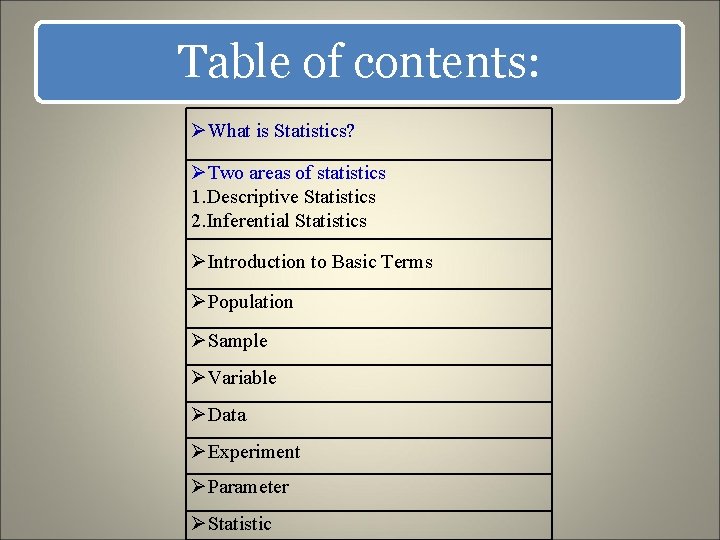

Table of contents:
• What is Statistics?
• Two areas of statistics
  1. Descriptive Statistics
  2. Inferential Statistics
• Introduction to Basic Terms
• Population
• Sample
• Variable
• Data
• Experiment
• Parameter
• Statistic

Statistics

What is Statistics?
Statistics: The science of collecting, describing, and interpreting data.
Two areas of statistics:
• Descriptive Statistics: collection, presentation, and description of sample data.
• Inferential Statistics: making decisions and drawing conclusions about populations.

Descriptive Statistics:
• Collection, presentation, and description of sample data.
• We use descriptive statistics simply to describe what's going on in our data.

Inferential Statistics:
• Inferential statistics refer to the use of current information regarding a sample of subjects in order to:
  • make assumptions about the population at large, and/or
  • make predictions about what might happen in the future.

Inferential Statistics (continued):
• The goal of inferential statistics is to take what is known and make assumptions or inferences about what is not known.
• We use inferential statistics to make inferences from our data to more general conditions.
• Inferential statistics are concerned with determining how likely it is that results based on a sample or samples are the same results that would have been obtained for the entire population.

Introduction to Basic Terms
Population:
• A collection, or set, of individuals, objects, or events whose properties are to be analyzed.
• Example: A faculty dean is interested in learning about the average age of faculty. Identify the basic terms in this situation.
• The population is the set of ages of all faculty members at the college.

Sample:
• A subset of the population.
• For example, we might select 10 faculty members and determine their age.

Variable:
• A characteristic of interest about each individual element of a population or sample.
• The variable is the "age" of each faculty member.

Two kinds of variables:
• Qualitative, or Attribute, Variable: A variable that categorizes or describes an element of a population.
  Note: Arithmetic operations, such as addition and averaging, are not meaningful for data resulting from a qualitative variable.
• Quantitative, or Numerical, Variable: A variable that quantifies an element of a population.
  Note: Arithmetic operations, such as addition and averaging, are meaningful for data resulting from a quantitative variable.
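The distinction between the two kinds of variables can be sketched in a few lines of Python. The records below are made-up values used only for illustration, and the field names `department` and `age` are assumptions, not part of the original example.

```python
import statistics
from collections import Counter

# Hypothetical records for five faculty members (made-up values).
faculty = [
    {"department": "Biology", "age": 41},
    {"department": "History", "age": 56},
    {"department": "Biology", "age": 38},
    {"department": "Physics", "age": 62},
    {"department": "Biology", "age": 47},
]

# Quantitative variable: addition and averaging are meaningful.
ages = [person["age"] for person in faculty]
mean_age = statistics.mean(ages)

# Qualitative variable: averaging makes no sense, so count categories instead.
departments = [person["department"] for person in faculty]
department_counts = Counter(departments)
most_common_department = department_counts.most_common(1)[0][0]

print("Mean age:", mean_age)
print("Department counts:", dict(department_counts))
print("Most common department:", most_common_department)
```

Counting categories (or reporting the most frequent one) is a typical summary for a qualitative variable, while the mean is only computed for the quantitative one.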

Data:
• Data (singular), also called a datum: The value of the variable associated with one element of a population or sample. This value may be a number, a word, or a symbol.
• One data value would be the age of a specific faculty member.
• Data (plural): The set of values collected for the variable from each of the elements belonging to the sample.
• The data would be the set of values in the sample.

Experiment:
• A planned activity whose results yield a set of data.
• The experiment would be the method used to select the faculty members forming the sample and to determine the actual age of each faculty member in the sample.

Parameter:
• A numerical value summarizing all the data of an entire population.
• The parameter of interest is the "average" age of all faculty at the college.

Statistic:
• A numerical value summarizing the sample data.
• The statistic is the "average" age for all faculty in the sample.

Example: A faculty dean is interested in learning about the average age of faculty. Identify the basic terms in this situation.
• The population is the set of ages of all faculty members at the college.
• A sample is any subset of that population. For example, we might select 10 faculty members and determine their age.
• The variable is the "age" of each faculty member.
• One data value would be the age of a specific faculty member.
• The data would be the set of values in the sample.
• The experiment would be the method used to select the faculty members forming the sample and to determine the actual age of each faculty member in the sample.
• The parameter of interest is the "average" age of all faculty at the college.
• The statistic is the "average" age for all faculty in the sample.
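The faculty example can be sketched in Python as follows. This is only an illustration: the population of 200 ages is randomly generated (an assumption, since the slides give no actual data), and the variable names are hypothetical.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is reproducible

# Population: ages of all faculty members (200 made-up values).
population_ages = [random.randint(28, 70) for _ in range(200)]

# Parameter: a numerical value summarizing the entire population.
parameter_mean_age = statistics.mean(population_ages)

# Experiment: randomly select 10 faculty members and record their ages.
sample_ages = random.sample(population_ages, 10)

# Statistic: a numerical value summarizing only the sample.
statistic_mean_age = statistics.mean(sample_ages)

print("Parameter (population mean age):", parameter_mean_age)
print("Statistic (sample mean age):", statistic_mean_age)
```

In practice the parameter is usually unknown because the whole population cannot be measured; the statistic computed from the sample is used to estimate it.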

Remember: Responsible use of statistical methodology is very important. The burden is on the user to ensure that the appropriate methods are correctly applied and that accurate conclusions are drawn and communicated to others.

Null hypothesis: In inferential statistics on observational data, the null hypothesis is a general statement or default position that there is no relationship between two measured phenomena. Rejecting or disproving the null hypothesis, and thus concluding that there are grounds for believing that a relationship exists between the two phenomena (e.g., that a potential treatment has a measurable effect), is a central task in the modern practice of science, and it gives a precise sense in which a claim is capable of being proven false. The null hypothesis is generally assumed to be true until evidence indicates otherwise. In statistics, it is often denoted H₀ (read "H-nought", "H-null", or "H-zero").

Statistical inference can also be done without a null hypothesis. One such approach is the following: for each candidate hypothesis, specify a statistical model that corresponds to the hypothesis; then use model selection techniques to choose the most appropriate model. (The most common selection techniques are based on either the Akaike information criterion or the Bayes factor.)
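A minimal sketch of model selection with the Akaike information criterion (AIC = 2k − 2 ln L, where k is the number of estimated parameters and L the maximized likelihood) is given below. It assumes two candidate Gaussian models for the same data, one with the mean fixed at zero and one with the mean estimated; the function name `gaussian_aic` and the data values are hypothetical.

```python
import math

def gaussian_aic(data, mean=None):
    """AIC of a Gaussian model fitted by maximum likelihood.

    If `mean` is given, it is held fixed and only the variance is estimated
    (k = 1). If `mean` is None, both mean and variance are estimated (k = 2).
    """
    n = len(data)
    k = 1  # the variance is always estimated
    if mean is None:
        mean = sum(data) / n
        k += 1
    # Maximum-likelihood variance (divides by n, not n - 1).
    variance = sum((x - mean) ** 2 for x in data) / n
    # Maximized Gaussian log-likelihood at the fitted parameters.
    log_likelihood = -0.5 * n * (math.log(2 * math.pi * variance) + 1)
    return 2 * k - 2 * log_likelihood

# Made-up measurements, e.g. observed differences between two conditions.
data = [0.8, 1.1, 1.3, 0.9, 1.2, 1.0, 0.7, 1.4]

aic_zero_mean = gaussian_aic(data, mean=0.0)  # candidate 1: no effect
aic_free_mean = gaussian_aic(data)            # candidate 2: some effect

# The model with the lower AIC is preferred.
best = "free mean" if aic_free_mean < aic_zero_mean else "zero mean"
print("AIC (zero mean):", aic_zero_mean)
print("AIC (free mean):", aic_free_mean)
print("Preferred model:", best)
```

Neither model is treated as a default here: both are fitted, and the criterion trades goodness of fit against the number of parameters.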

Tests of Significance: Once sample data has been gathered through an observational study or experiment, statistical inference allows analysts to assess evidence in favor of some claim about the population from which the sample has been drawn. The methods of inference used to support or reject claims based on sample data are known as tests of significance. Every test of significance begins with a null hypothesis H₀. H₀ represents a theory that has been put forward, either because it is believed to be true or because it is to be used as a basis for argument, but that has not been proved. For example, in a clinical trial of a new drug, the null hypothesis might be that the new drug is no better, on average, than the current drug. We would write H₀: there is no difference between the two drugs on average.
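One way to test the drug example is a permutation test, sketched below. This is only one kind of significance test, chosen here because it needs nothing beyond the standard library; the slides do not specify a particular test. The outcome values and the function name `permutation_test` are made up for illustration.

```python
import random

def permutation_test(group_a, group_b, n_permutations=5000, seed=0):
    """Two-sided permutation test for a difference in means.

    Under H0 (no difference between the groups) the group labels are
    exchangeable, so we shuffle them and count how often the shuffled
    difference is at least as extreme as the observed one.
    """
    rng = random.Random(seed)
    n_a = len(group_a)
    observed = sum(group_a) / n_a - sum(group_b) / len(group_b)
    pooled = list(group_a) + list(group_b)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        shuffled_a = pooled[:n_a]
        shuffled_b = pooled[n_a:]
        diff = sum(shuffled_a) / n_a - sum(shuffled_b) / len(shuffled_b)
        if abs(diff) >= abs(observed):
            extreme += 1
    return observed, extreme / n_permutations

# Made-up outcome scores for patients on each drug.
new_drug = [12, 15, 11, 14, 13, 16, 12, 15]
current_drug = [10, 11, 9, 12, 10, 13, 11, 10]

observed_diff, p_value = permutation_test(new_drug, current_drug)
print("Observed difference in means:", observed_diff)
print("Approximate p-value:", p_value)
```

A small p-value means that a difference this large would rarely arise if H₀ were true, which counts as evidence against H₀; a large p-value means the data are compatible with H₀.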

Degrees of freedom: In statistics, the number of degrees of freedom is the number of values in the final calculation of a statistic that are free to vary. The term is also used in mechanics, where it denotes the number of independent ways in which a dynamic system can move without violating any constraint imposed on it, or equivalently the minimum number of independent coordinates needed to specify the position of the system completely.

Mathematically, degrees of freedom is the number of dimensions of the domain of a random vector, or essentially the number of "free" components (how many components need to be known before the vector is fully determined). The term is most often used in the context of linear models (linear regression, analysis of variance), where certain random vectors are constrained to lie in linear subspaces, and the number of degrees of freedom is the dimension of the subspace. The degrees of freedom are also commonly associated with the squared lengths (or "sum of squares" of the coordinates) of such vectors, and the parameters of chi-squared and other distributions that arise in associated statistical testing problems.
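A small sketch of the idea, assuming the familiar case of the sample variance: the deviations from the sample mean must sum to zero, so only n − 1 of them are free to vary, and the sum of squares is divided by n − 1. The four data values are made up.

```python
import math
import statistics

sample = [4.0, 7.0, 1.0, 8.0]
n = len(sample)
mean = statistics.mean(sample)

# Deviations from the sample mean form a vector constrained to sum to zero.
residuals = [x - mean for x in sample]
constraint = sum(residuals)

# Because of that constraint, the last deviation is determined by the others.
last_from_others = -sum(residuals[:-1])

# Only n - 1 components are free, so the sum of squares is divided by n - 1.
degrees_of_freedom = n - 1
sum_of_squares = sum(r ** 2 for r in residuals)
sample_variance = sum_of_squares / degrees_of_freedom

print("Residuals:", residuals)
print("Sum of residuals:", constraint)
print("Last residual recovered from the others:", last_from_others)
print("Degrees of freedom:", degrees_of_freedom)
print("Sample variance:", sample_variance)
```

The result agrees with `statistics.variance`, which uses the same n − 1 divisor.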

Standard error: The standard error (SE) is the standard deviation of the sampling distribution of a statistic, most commonly of the mean. For example, the sample mean is the usual estimator of a population mean. However, different samples drawn from that same population would in general have different values of the sample mean, so there is a distribution of sample means (with its own mean and variance). The standard error of the mean (SEM), i.e., the standard error of using the sample mean to estimate the population mean, is the standard deviation of those sample means over all possible samples (of a given size) drawn from the population. The term may also refer to an estimate of that standard deviation, computed from the particular sample of data being analyzed.
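Both senses of the standard error of the mean can be illustrated with a small simulation. This sketch assumes a made-up population of 10,000 normally distributed values and approximates "all possible samples" with 2,000 random samples of size 25; the relation SEM = σ / √n used for comparison is the standard formula for independent observations.

```python
import math
import random
import statistics

rng = random.Random(42)

# Made-up population: 10,000 values with mean about 50 and SD about 10.
population = [rng.gauss(50, 10) for _ in range(10_000)]
n = 25  # sample size

# Sense 1: standard deviation of the sample means over many samples.
sample_means = [sum(rng.sample(population, n)) / n for _ in range(2_000)]
empirical_sem = statistics.stdev(sample_means)

# Value predicted from the population standard deviation: sigma / sqrt(n).
sigma = statistics.pstdev(population)
theoretical_sem = sigma / math.sqrt(n)

# Sense 2: estimate computed from the single sample actually at hand.
one_sample = rng.sample(population, n)
estimated_sem = statistics.stdev(one_sample) / math.sqrt(n)

print("Empirical SEM (from many samples):", empirical_sem)
print("Theoretical SEM (sigma / sqrt(n)):", theoretical_sem)
print("Estimated SEM (from one sample):", estimated_sem)
```

The first two values should be close to each other, while the single-sample estimate varies more from sample to sample because it relies on only 25 observations.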


Any questions?

Thank you.
