Prefetch-Aware DRAM Controllers: Chang Joo Lee, Onur Mutlu

- Slides: 43

Prefetch-Aware DRAM Controllers
Chang Joo Lee, Onur Mutlu*, Veynu Narasiman, Yale N. Patt
Electrical and Computer Engineering, The University of Texas at Austin
*Microsoft Research and Carnegie Mellon University

Outline
- Motivation
- Mechanism
- Experimental Evaluation
- Conclusion

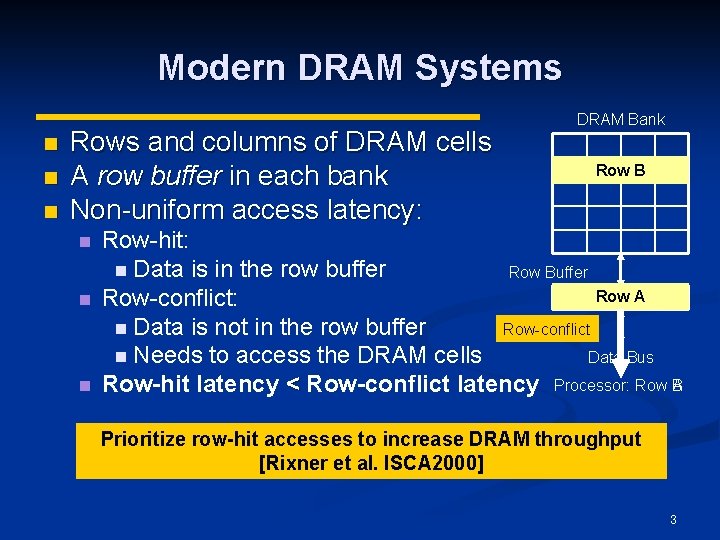

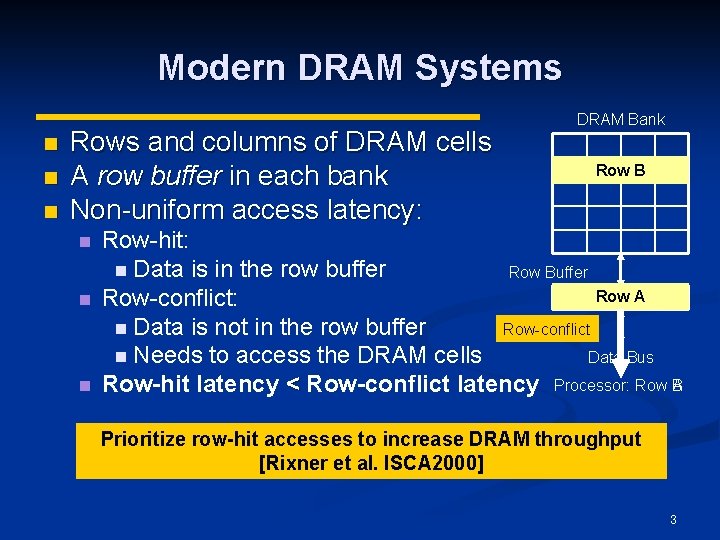

Modern DRAM Systems
- Rows and columns of DRAM cells
- A row buffer in each bank
- Non-uniform access latency:
  - Row-hit: the data is in the row buffer
  - Row-conflict: the data is not in the row buffer, so the DRAM cells must be accessed
  - Row-hit latency < row-conflict latency
- Prioritize row-hit accesses to increase DRAM throughput [Rixner et al., ISCA 2000]
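The row-hit-first rule can be sketched for a single bank as below. This is a minimal illustration, assuming one bank, no DRAM timing constraints, and an integer arrival order; the names `Request`, `is_row_hit` and `pick_fr_fcfs` are hypothetical, not part of any real controller.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Request:
    row: int      # row targeted in this (single, hypothetical) bank
    arrival: int  # arrival order; smaller means older


def is_row_hit(req: Request, open_row: Optional[int]) -> bool:
    """A row-hit finds its row already latched in the bank's row buffer."""
    return open_row is not None and req.row == open_row


def pick_fr_fcfs(queue: List[Request], open_row: Optional[int]) -> Request:
    """Row-hit-first scheduling: prefer row-hits, then the oldest request."""
    # Tuples compare left to right and False < True, so row-hits sort first.
    return min(queue, key=lambda r: (not is_row_hit(r, open_row), r.arrival))


queue = [Request(row=2, arrival=0), Request(row=5, arrival=1)]
print(pick_fr_fcfs(queue, open_row=5))     # Request(row=5, arrival=1)
print(pick_fr_fcfs(queue, open_row=None))  # Request(row=2, arrival=0)
```

A younger request wins whenever it hits the open row, which is how row-hit prioritization raises throughput at the cost of strict arrival order.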

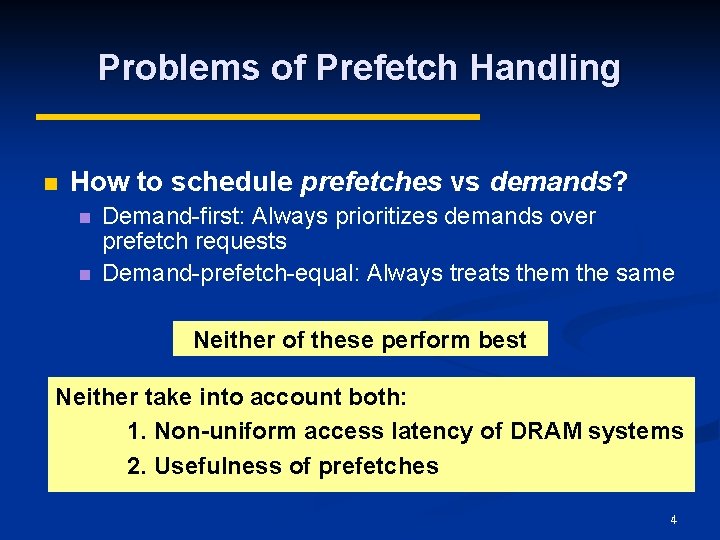

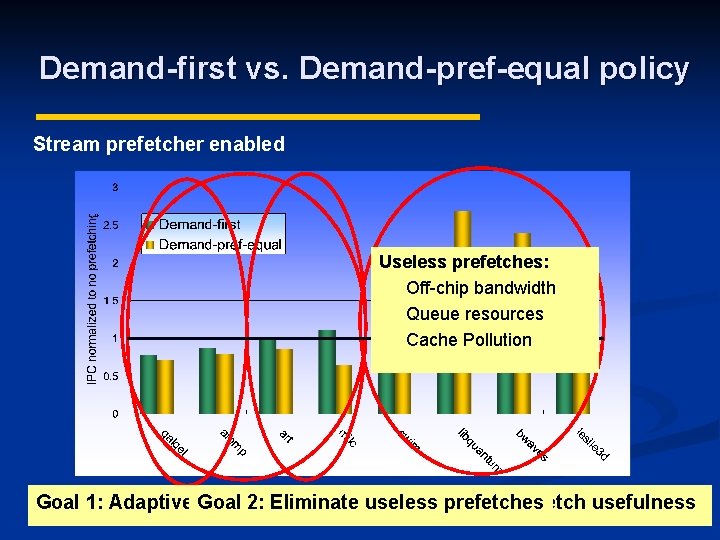

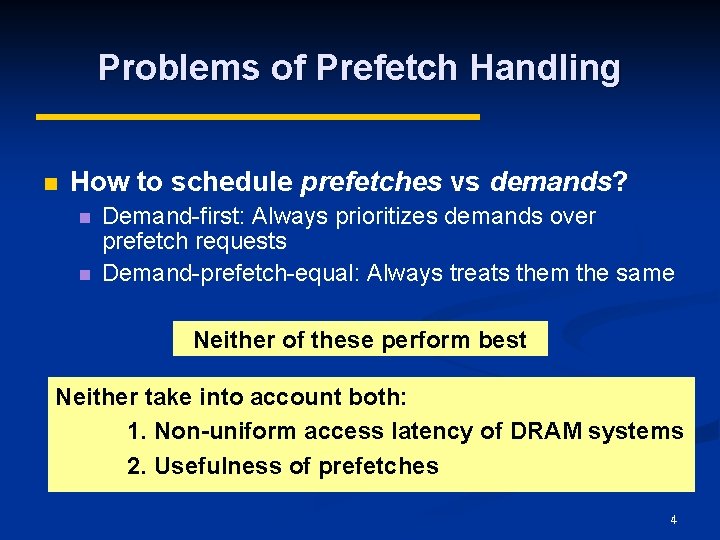

Problems of Prefetch Handling
- How should prefetches be scheduled relative to demands?
  - Demand-first: always prioritizes demands over prefetch requests
  - Demand-prefetch-equal: always treats them the same
- Neither of these performs best
- Neither takes into account both:
  1. Non-uniform access latency of DRAM systems
  2. Usefulness of prefetches

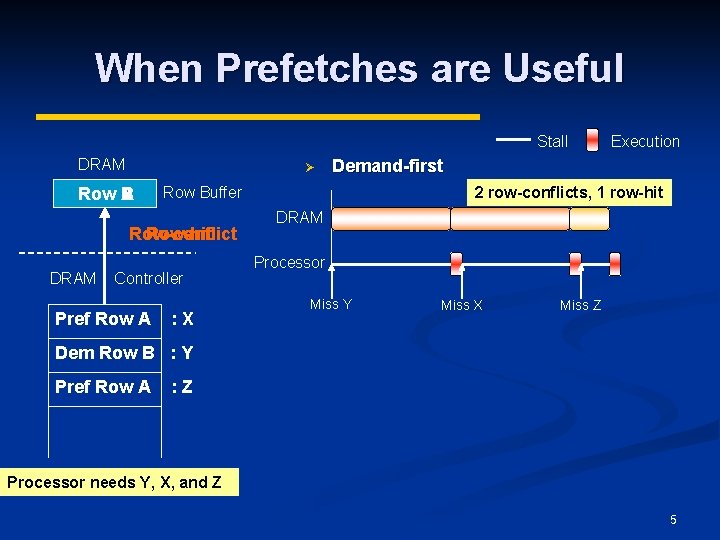

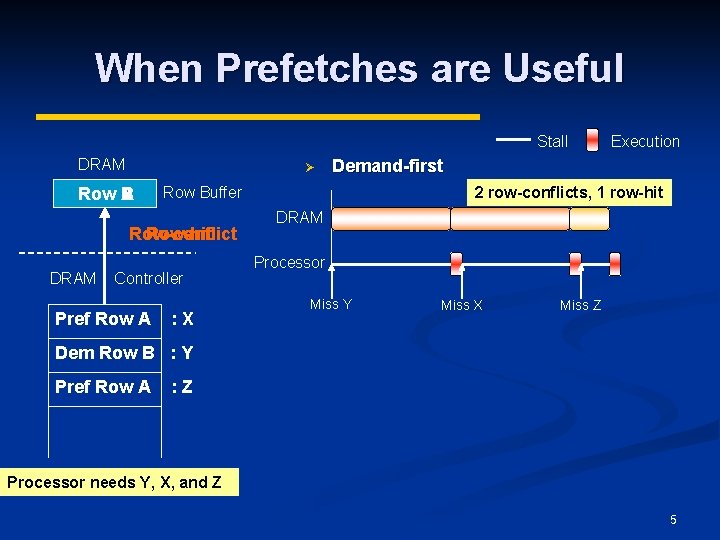

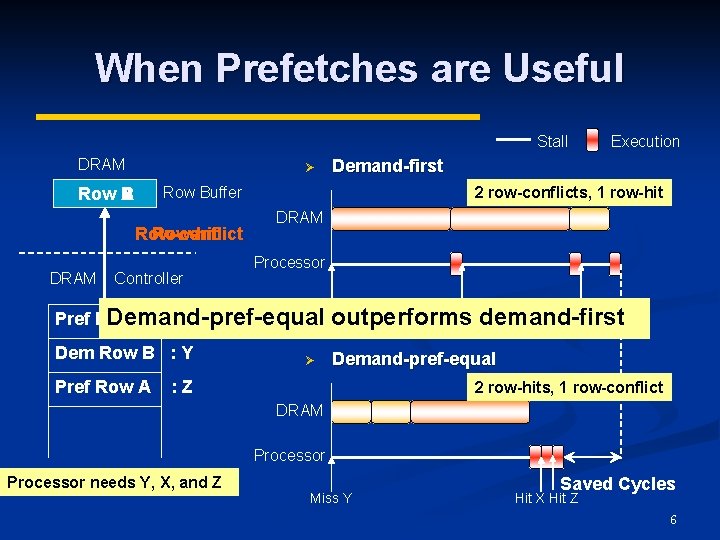

When Prefetches are Useful
- Memory request buffer, oldest first: Pref Row A: X, Dem Row B: Y, Pref Row A: Z
- Row A is open in the row buffer; the processor needs Y, X, and Z
- Demand-first services Y, then X, then Z: 2 row-conflicts, 1 row-hit
- The processor stalls on misses to Y, X, and Z

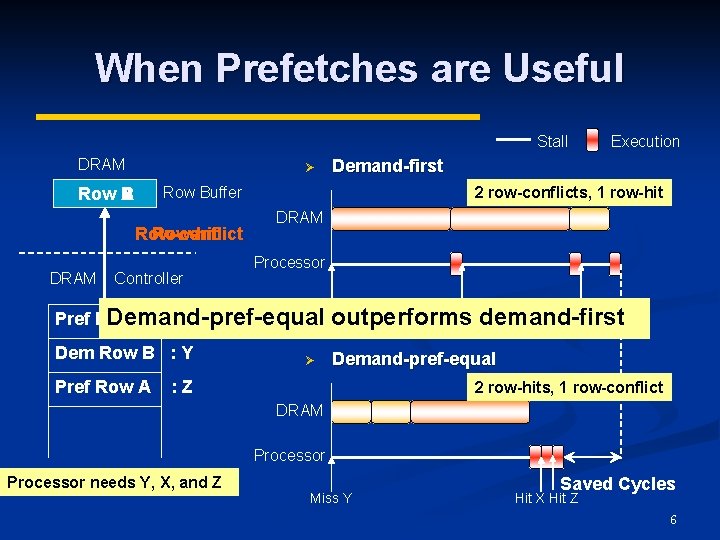

When Prefetches are Useful
- Demand-first: 2 row-conflicts, 1 row-hit; the processor misses on Y, X, and Z
- Demand-pref-equal services X and Z (row-hits in Row A) before Y: 2 row-hits, 1 row-conflict
- The processor misses on Y but hits on X and Z, saving cycles
- Demand-pref-equal outperforms demand-first
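The example above can be replayed with a small single-bank model. This is a sketch under stated assumptions: latencies are simplified from the DDR3-1333 15-15-15 ns timing in the methodology (row-hit = tCL, row-conflict = tRP + tRCD + tCL), requests are served back to back, and `Req`, `priority` and `schedule` are hypothetical names.

```python
from dataclasses import dataclass

# Simplified latencies (assumption), from 15-15-15 ns DDR3 timing.
ROW_HIT_NS = 15        # column access only
ROW_CONFLICT_NS = 45   # precharge + activate + column access


@dataclass
class Req:
    name: str
    row: str
    is_prefetch: bool
    arrival: int  # smaller means older


def priority(r, open_row, demand_first):
    row_hit = r.row == open_row
    # Under demand-first, every demand (is_prefetch False) outranks every prefetch.
    demand_rank = r.is_prefetch if demand_first else False
    # min() picks: demands first (if enabled), then row-hits, then the oldest.
    return (demand_rank, not row_hit, r.arrival)


def schedule(queue, open_row, demand_first):
    """Serve all requests; return order, row-hit count, row-conflict count, finish times."""
    pending = list(queue)
    order, hits, conflicts, now, done_at = [], 0, 0, 0, {}
    while pending:
        r = min(pending, key=lambda q: priority(q, open_row, demand_first))
        pending.remove(r)
        if r.row == open_row:
            hits += 1
            now += ROW_HIT_NS
        else:
            conflicts += 1
            now += ROW_CONFLICT_NS
            open_row = r.row  # the conflicting access opens its own row
        order.append(r.name)
        done_at[r.name] = now
    return order, hits, conflicts, done_at


queue = [Req("X", "A", True, 0), Req("Y", "B", False, 1), Req("Z", "A", True, 2)]
print(schedule(queue, open_row="A", demand_first=True)[:3])   # (['Y', 'X', 'Z'], 1, 2)
print(schedule(queue, open_row="A", demand_first=False)[:3])  # (['X', 'Z', 'Y'], 2, 1)
```

Under these assumed latencies all three requests finish at 105 ns with demand-first and at 75 ns with demand-pref-equal, which is the saving the slide illustrates when X and Z are actually needed.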

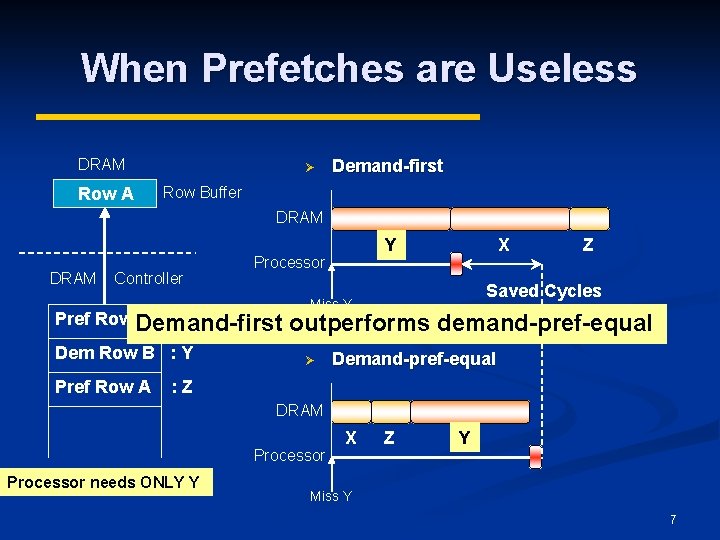

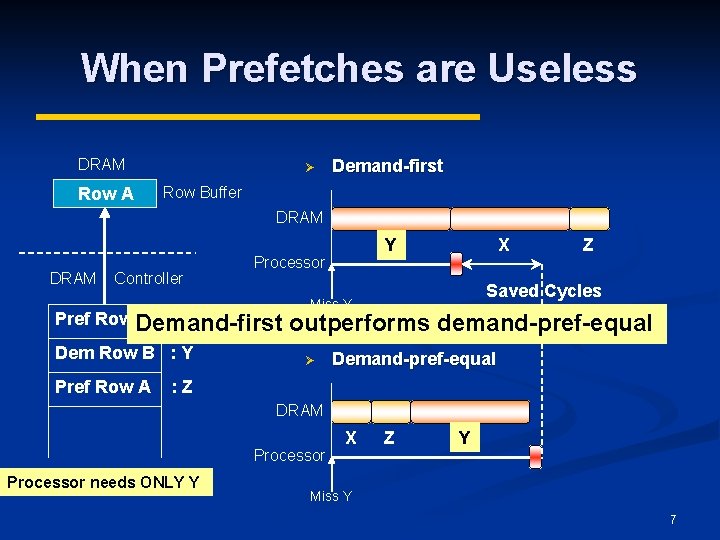

When Prefetches are Useless
- Same request buffer: Pref Row A: X, Dem Row B: Y, Pref Row A: Z; Row A is open
- The processor needs ONLY Y
- Demand-first services Y first, so the processor's miss on Y is resolved sooner
- Demand-pref-equal services the useless prefetches X and Z (row-hits) before Y
- Demand-first outperforms demand-pref-equal (saved cycles)
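When only Y is needed, the comparison reduces to when Y completes under each service order. The sketch below reuses the service orders from the two slides. It assumes the same simplified latencies as before (15 ns row-hit, 45 ns row-conflict, back-to-back service); `completion_time` is a hypothetical helper.

```python
# Simplified per-access latencies (assumption): row-hit = tCL, row-conflict = tRP + tRCD + tCL
ROW_HIT_NS = 15
ROW_CONFLICT_NS = 45


def completion_time(service_order, target, latency_ns):
    """Return the time at which `target` completes when requests are served back to back."""
    t = 0
    for name in service_order:
        t += latency_ns[name]
        if name == target:
            return t
    raise ValueError(f"{target!r} is never serviced")


# Row A starts open; X and Z target Row A, Y targets Row B.
y_demand_first = completion_time(
    ["Y", "X", "Z"], "Y",
    {"Y": ROW_CONFLICT_NS, "X": ROW_CONFLICT_NS, "Z": ROW_HIT_NS})
y_pref_equal = completion_time(
    ["X", "Z", "Y"], "Y",
    {"X": ROW_HIT_NS, "Z": ROW_HIT_NS, "Y": ROW_CONFLICT_NS})
print(y_demand_first, y_pref_equal)  # 45 75
```

In this model the two row-hits that helped in the useful case now only delay the one request the processor waits for. The model also ignores the bandwidth and cache pollution those useless prefetches cause.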

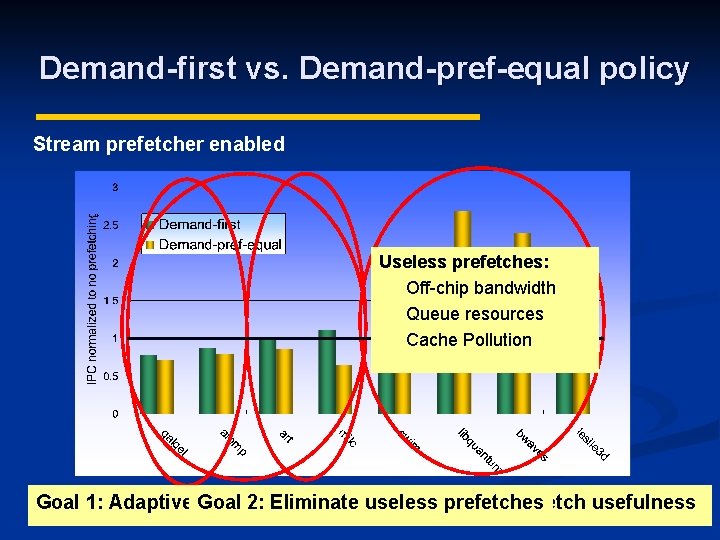

Demand-first vs. Demand-pref-equal Policy
- Stream prefetcher enabled
- Demand-pref-equal is better for some benchmarks; demand-first is better for others
- Useless prefetches consume off-chip bandwidth and queue resources and cause cache pollution
- Goal 1: Adaptively schedule prefetches based on prefetch usefulness
- Goal 2: Eliminate useless prefetches

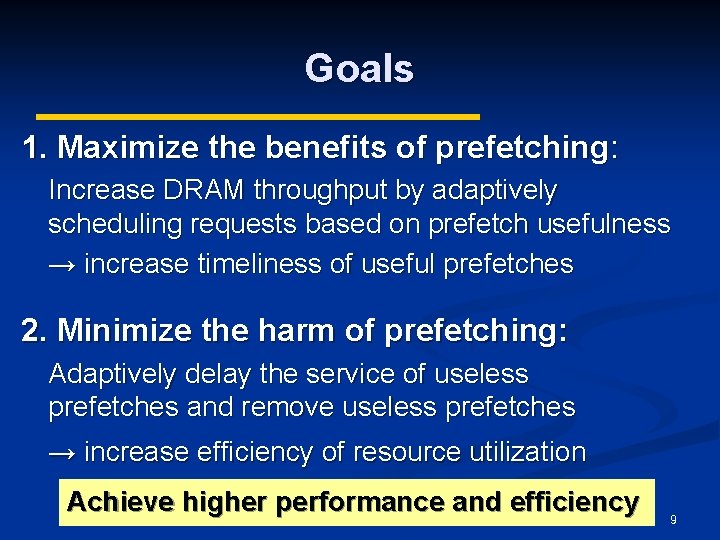

Goals
1. Maximize the benefits of prefetching: increase DRAM throughput by adaptively scheduling requests based on prefetch usefulness → increase timeliness of useful prefetches
2. Minimize the harm of prefetching: adaptively delay the service of useless prefetches and remove useless prefetches → increase efficiency of resource utilization

Achieve higher performance and efficiency

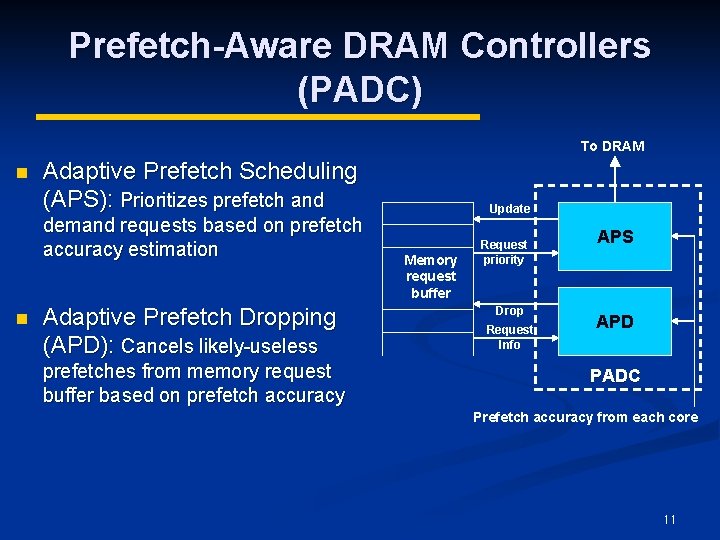

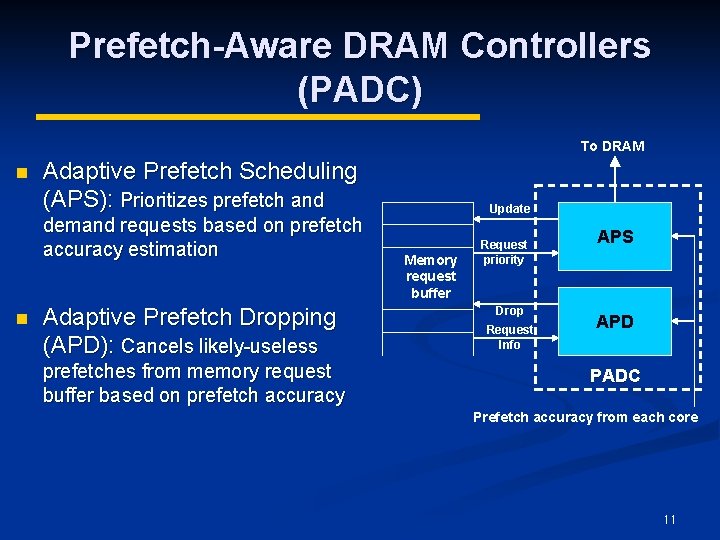

Prefetch-Aware DRAM Controllers (PADC)
- Adaptive Prefetch Scheduling (APS): prioritizes prefetch and demand requests based on prefetch accuracy estimation
- Adaptive Prefetch Dropping (APD): cancels likely-useless prefetches from the memory request buffer based on prefetch accuracy
- Block diagram: PADC receives prefetch accuracy from each core; APS updates request priorities and APD drops requests in the memory request buffer, which issues requests to DRAM

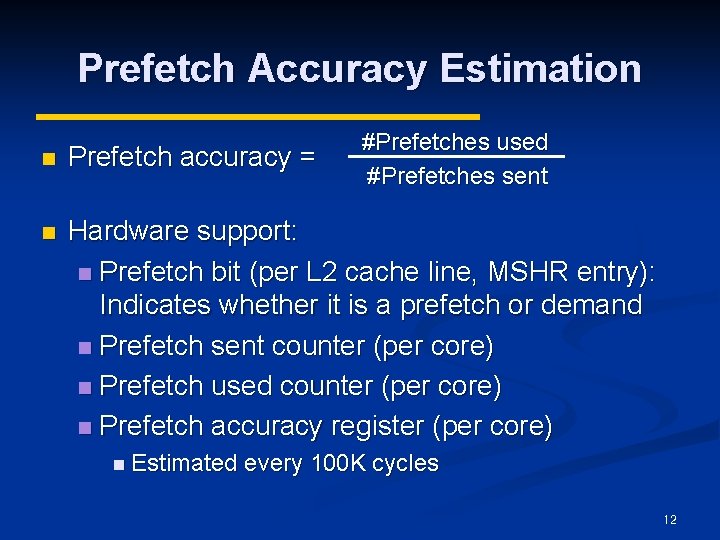

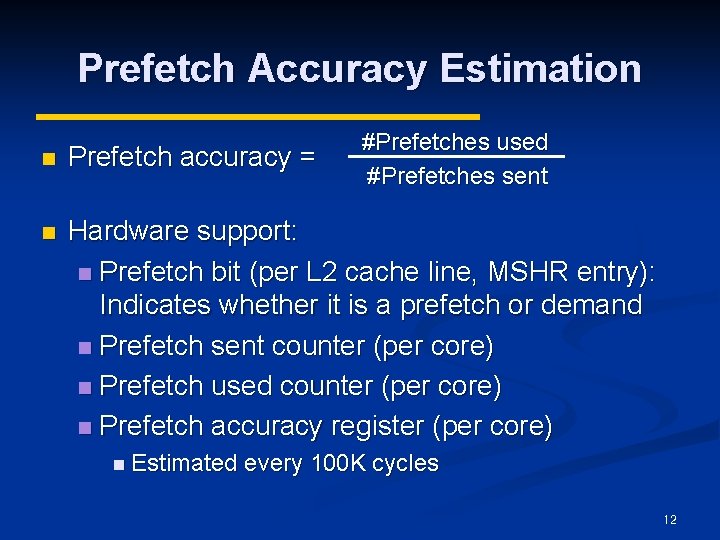

Prefetch Accuracy Estimation
- Prefetch accuracy = #Prefetches used / #Prefetches sent
- Hardware support:
  - Prefetch bit (per L2 cache line and MSHR entry): indicates whether it is a prefetch or demand
  - Prefetch sent counter (per core)
  - Prefetch used counter (per core)
  - Prefetch accuracy register (per core)
- Estimated every 100K cycles
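A behavioral sketch of the per-core counters and accuracy register follows. It is a software model, not the hardware design. Two policies in it are assumptions not stated on the slide: counters reset at the end of each interval, and accuracy keeps its old value when a core sent no prefetches. The class and method names are hypothetical.

```python
class PrefetchAccuracyEstimator:
    """Per-core prefetch sent/used counters plus an accuracy register (hypothetical model)."""

    def __init__(self, num_cores: int, interval_cycles: int = 100_000):
        self.interval_cycles = interval_cycles  # estimation period from the slide
        self.sent = [0] * num_cores             # prefetch sent counter
        self.used = [0] * num_cores             # prefetch used counter
        self.accuracy = [0.0] * num_cores       # prefetch accuracy register

    def on_prefetch_sent(self, core: int) -> None:
        self.sent[core] += 1

    def on_prefetch_used(self, core: int) -> None:
        # Called when a demand hits an L2 line or MSHR entry whose prefetch bit is set;
        # hardware would then clear that bit so the same prefetch is counted once.
        self.used[core] += 1

    def end_interval(self) -> None:
        """Invoke once every `interval_cycles`: latch accuracy, then reset the counters."""
        for c in range(len(self.sent)):
            if self.sent[c] > 0:  # assumption: keep the old value if nothing was sent
                self.accuracy[c] = self.used[c] / self.sent[c]
            self.sent[c] = 0      # assumption: counters restart each interval
            self.used[c] = 0


est = PrefetchAccuracyEstimator(num_cores=2)
for _ in range(10):
    est.on_prefetch_sent(0)
    est.on_prefetch_sent(1)
for _ in range(9):
    est.on_prefetch_used(0)
for _ in range(2):
    est.on_prefetch_used(1)
est.end_interval()
print(est.accuracy)  # [0.9, 0.2]
```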

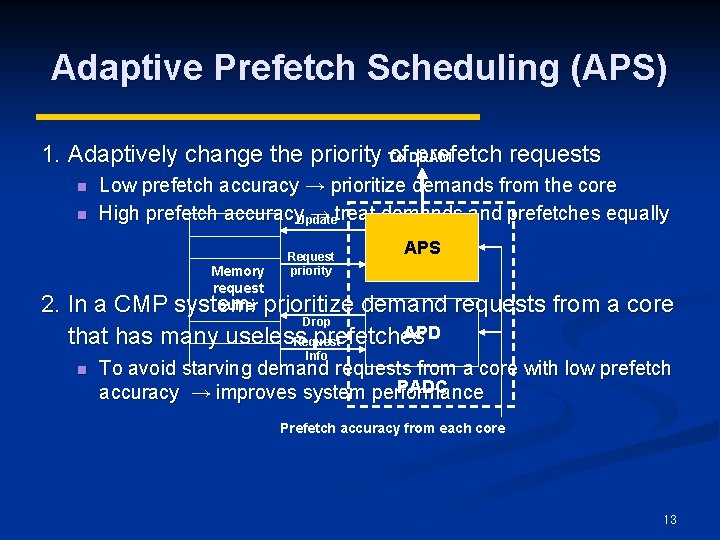

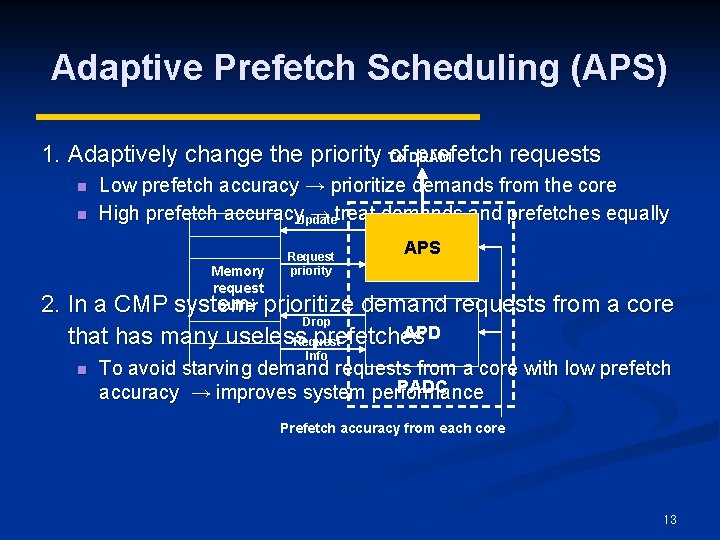

Adaptive Prefetch Scheduling (APS)
1. Adaptively change the priority of prefetch requests
   - Low prefetch accuracy → prioritize demands from the core
   - High prefetch accuracy → treat demands and prefetches equally
2. In a CMP system: prioritize demand requests from a core that has many useless prefetches
   - This avoids starving demand requests from a core with low prefetch accuracy → improves system performance

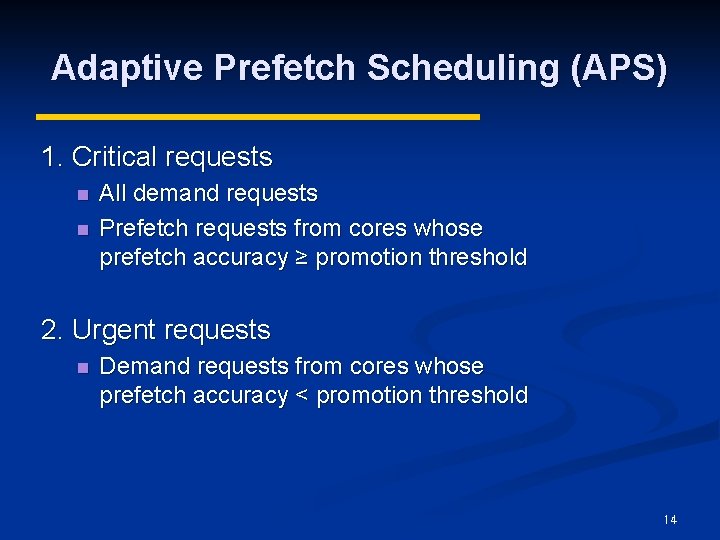

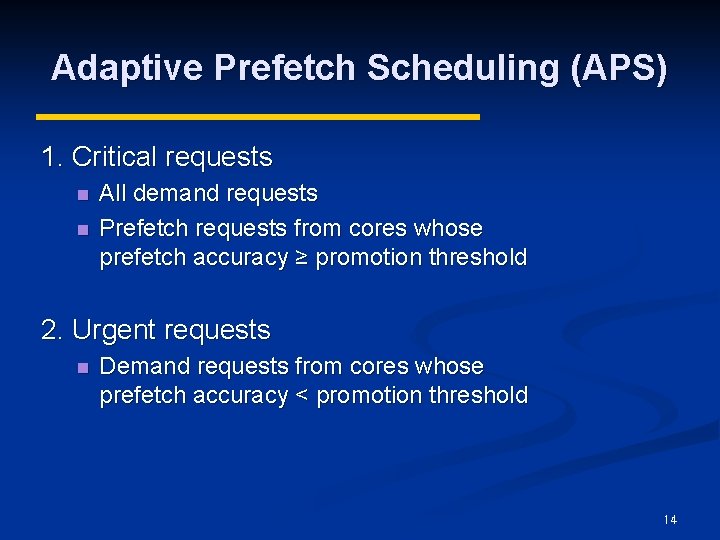

Adaptive Prefetch Scheduling (APS)
1. Critical requests
   - All demand requests
   - Prefetch requests from cores whose prefetch accuracy ≥ promotion threshold
2. Urgent requests
   - Demand requests from cores whose prefetch accuracy < promotion threshold

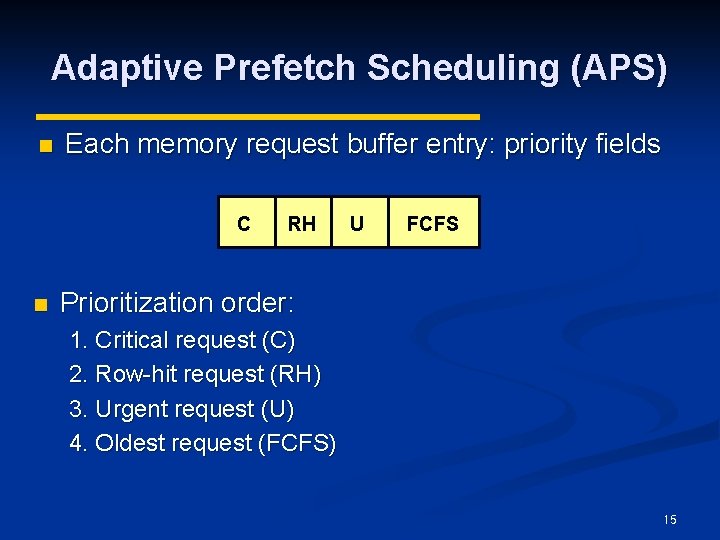

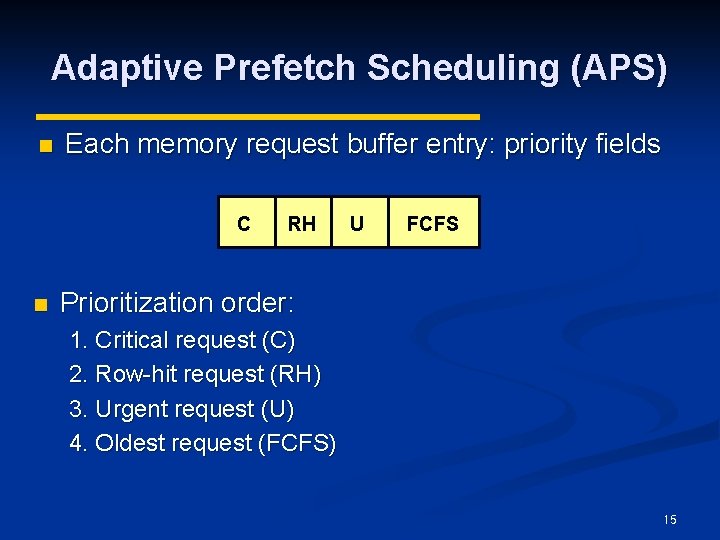

Adaptive Prefetch Scheduling (APS)
- Each memory request buffer entry has priority fields: C, RH, U, FCFS
- Prioritization order:
  1. Critical request (C)
  2. Row-hit request (RH)
  3. Urgent request (U)
  4. Oldest request (FCFS)

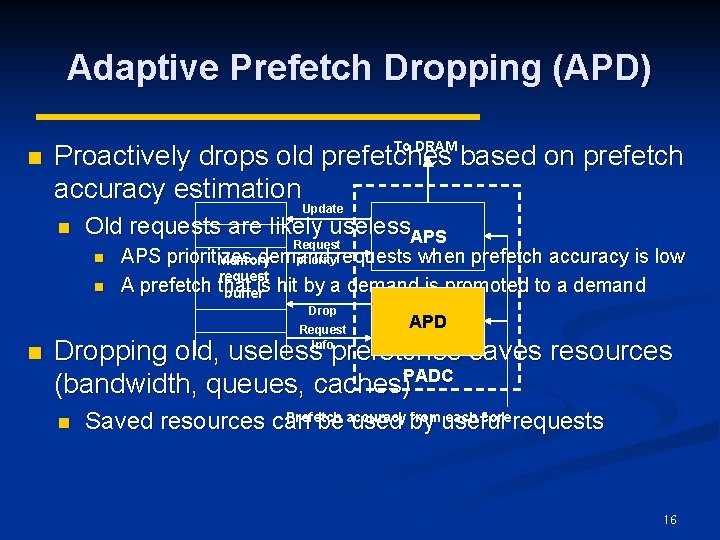

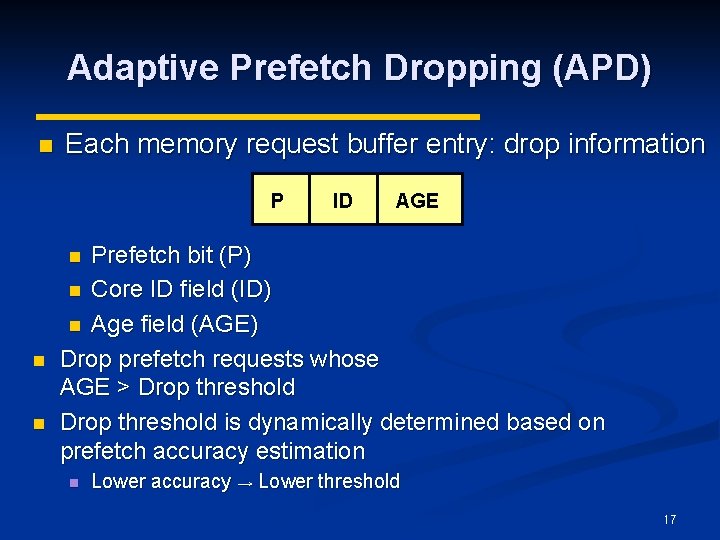

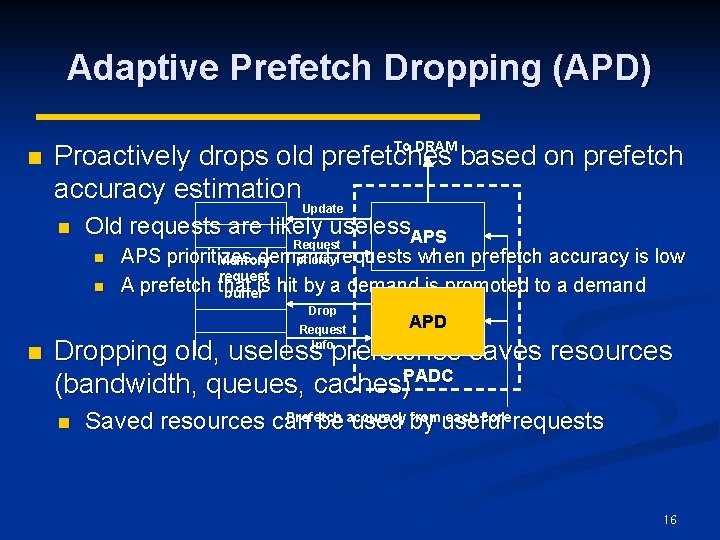

Adaptive Prefetch Dropping (APD)
- Proactively drops old prefetches based on prefetch accuracy estimation
- Old prefetch requests are likely useless:
  - APS prioritizes demand requests when prefetch accuracy is low
  - A prefetch that is hit by a demand is promoted to a demand
- Dropping old, useless prefetches saves resources (bandwidth, queues, caches)
  - Saved resources can be used by useful requests

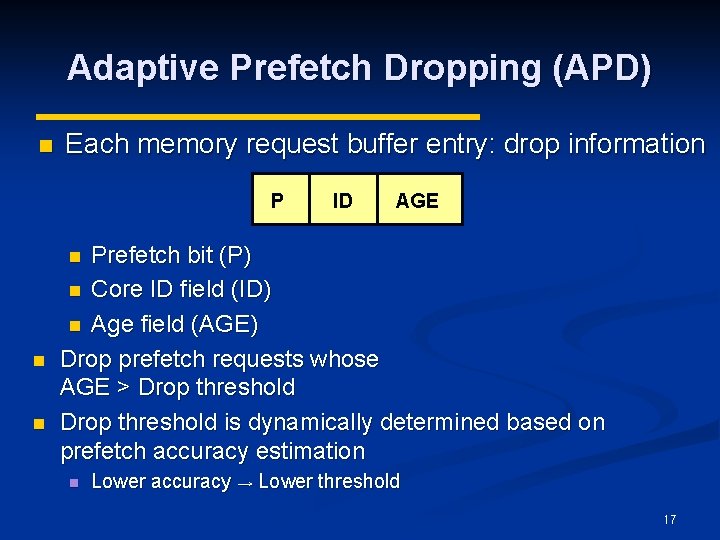

Adaptive Prefetch Dropping (APD)
- Each memory request buffer entry holds drop information: P, ID, AGE
  - Prefetch bit (P)
  - Core ID field (ID)
  - Age field (AGE)
- Drop prefetch requests whose AGE > drop threshold
- The drop threshold is dynamically determined based on prefetch accuracy estimation
  - Lower accuracy → lower threshold
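The drop rule and the promotion of demanded prefetches can be sketched over the P, ID and AGE fields. This is a simplified model under stated assumptions: AGE is counted in core cycles, per-core thresholds are supplied by the caller, and matching a demand to a pending prefetch by address is omitted. `BufferEntry`, `promote_on_demand_hit` and `apd_drop` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class BufferEntry:
    is_prefetch: bool  # P
    core: int          # ID
    age: int           # AGE, core cycles spent in the buffer (assumption)


def promote_on_demand_hit(entry: BufferEntry) -> None:
    """A demand matching a pending prefetch turns it into a demand (P cleared)."""
    entry.is_prefetch = False


def apd_drop(buffer, drop_threshold):
    """Return (kept, dropped); only prefetches older than their core's threshold are dropped."""
    kept, dropped = [], []
    for e in buffer:
        if e.is_prefetch and e.age > drop_threshold[e.core]:
            dropped.append(e)
        else:
            kept.append(e)
    return kept, dropped


buffer = [
    BufferEntry(is_prefetch=True, core=0, age=200),
    BufferEntry(is_prefetch=False, core=0, age=5_000),
    BufferEntry(is_prefetch=True, core=1, age=200),
]
thresholds = {0: 100, 1: 1_500}  # e.g. a low-accuracy core 0, a higher-accuracy core 1
kept, dropped = apd_drop(buffer, thresholds)
print(len(kept), len(dropped))  # 2 1
```

Demands are never dropped regardless of age. After `promote_on_demand_hit(buffer[0])`, the same call keeps all three entries. That is why a prefetch that is still old and unpromoted is a good candidate for being useless.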

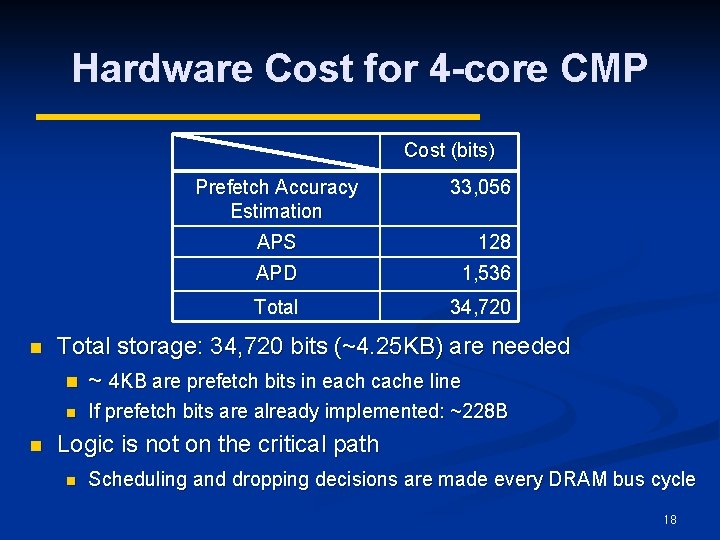

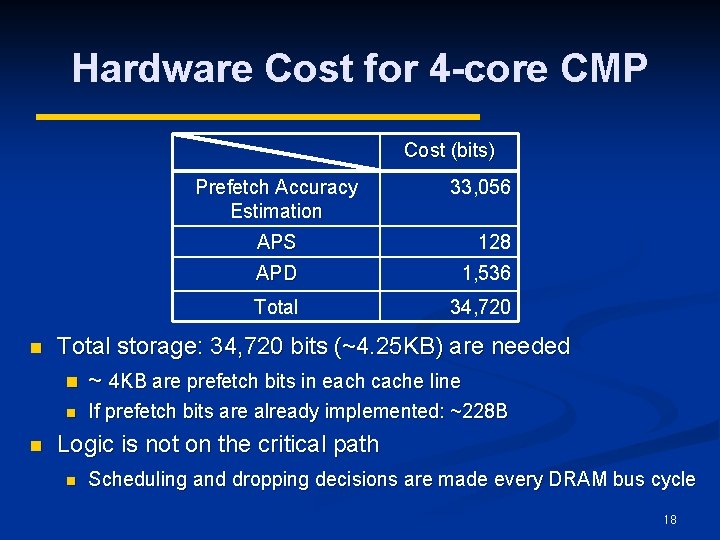

Hardware Cost for 4-core CMP

| Component | Cost (bits) |
|---|---|
| Prefetch Accuracy Estimation | 33,056 |
| APS | 128 |
| APD | 1,536 |
| Total | 34,720 |

- Total storage: 34,720 bits (~4.25 KB) are needed
  - ~4 KB are prefetch bits in each cache line
  - If prefetch bits are already implemented: ~228 B
- Logic is not on the critical path
  - Scheduling and dropping decisions are made every DRAM bus cycle
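The cost figures can be cross-checked with simple arithmetic. One reading reproduces both the ~4 KB and ~228 B figures. It assumes 64-byte L2 lines (not stated on the slide), a private 512 KB L2 per core, and one prefetch bit per entry of the 128 MSHRs used for the 4-core configuration. These assumptions are not confirmed as the authors' breakdown.

```python
# Assumptions (not stated on the cost slide): 64-byte lines, private 512 KB L2 per core,
# and one prefetch bit per L2 line and per MSHR entry.
CORES = 4
L2_BYTES_PER_CORE = 512 * 1024
LINE_BYTES = 64
MSHRS = 128  # 4-core configuration from the methodology slide

accuracy_estimation = 33_056
aps = 128
apd = 1_536
total_bits = accuracy_estimation + aps + apd

prefetch_bits_in_lines = CORES * L2_BYTES_PER_CORE // LINE_BYTES  # one bit per L2 line
remaining_bits = total_bits - prefetch_bits_in_lines - MSHRS      # also excludes MSHR bits

print(total_bits, total_bits / 8 / 1024)  # 34720 4.23828125
print(prefetch_bits_in_lines / 8 / 1024)  # 4.0
print(remaining_bits / 8)                 # 228.0
```

The total comes to about 4.24 KB, which the slide rounds to ~4.25 KB.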

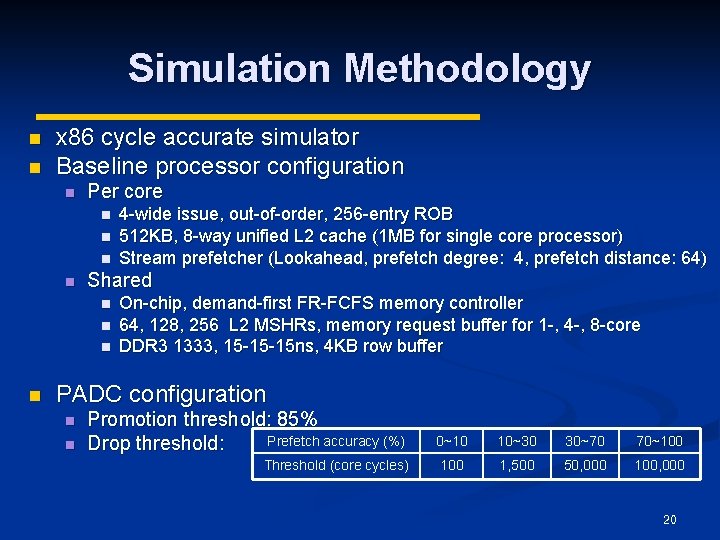

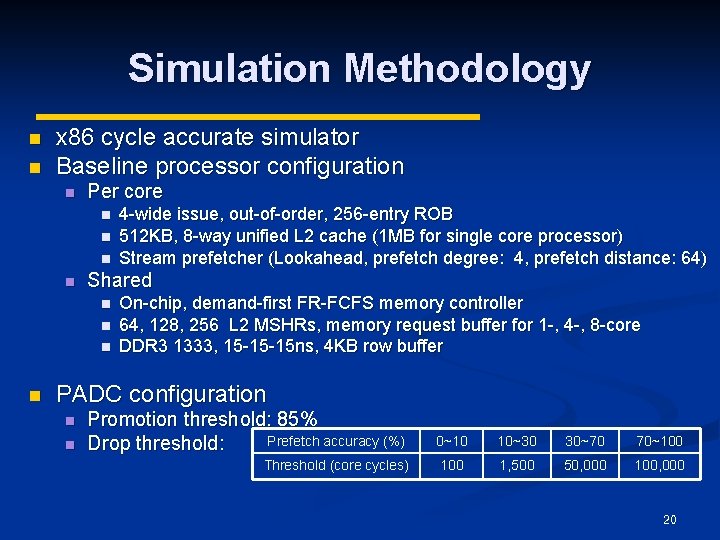

Simulation Methodology
- x86 cycle-accurate simulator
- Baseline processor configuration
  - Per core:
    - 4-wide issue, out-of-order, 256-entry ROB
    - 512 KB, 8-way unified L2 cache (1 MB for the single-core processor)
    - Stream prefetcher (lookahead, prefetch degree: 4, prefetch distance: 64)
  - Shared:
    - On-chip, demand-first FR-FCFS memory controller
    - 64, 128, 256 L2 MSHRs and memory request buffer for 1-, 4-, 8-core systems, respectively
    - DDR3 1333, 15-15-15 ns, 4 KB row buffer
- PADC configuration
  - Promotion threshold: 85%
  - Drop threshold:

| Prefetch accuracy (%) | 0~10 | 10~30 | 30~70 | 70~100 |
|---|---|---|---|---|
| Threshold (core cycles) | 100 | 1,500 | 50,000 | 100,000 |
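The drop-threshold table maps directly onto a range lookup. The boundary handling is an assumption, since the table does not say which side of each range is inclusive. Here each range includes its lower bound, so exactly 10% maps to 10~30. `drop_threshold` is a hypothetical helper.

```python
import bisect

# Drop-threshold table from the PADC configuration (accuracy in %, threshold in core cycles).
# Assumption: each accuracy range includes its lower bound.
ACCURACY_BOUNDS = [10, 30, 70]
THRESHOLDS = [100, 1_500, 50_000, 100_000]


def drop_threshold(accuracy_pct: float) -> int:
    """Lower prefetch accuracy gives a lower age threshold, so prefetches are dropped sooner."""
    return THRESHOLDS[bisect.bisect_right(ACCURACY_BOUNDS, accuracy_pct)]


print([drop_threshold(a) for a in (5, 10, 50, 90)])  # [100, 1500, 50000, 100000]
```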

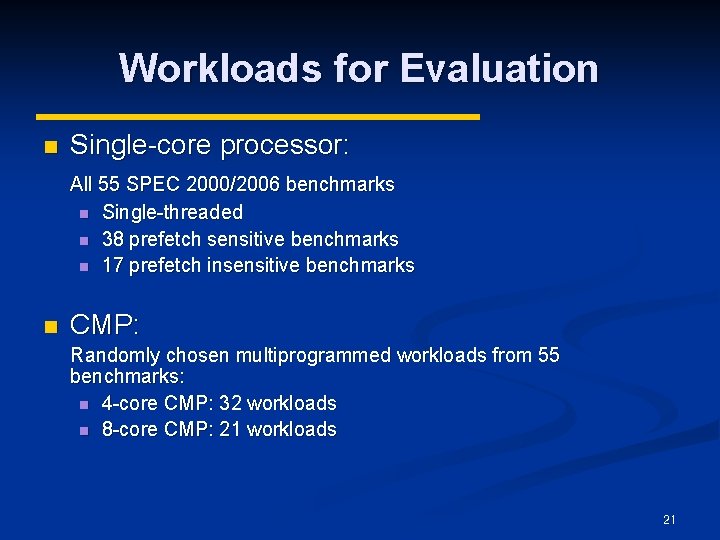

Workloads for Evaluation
- Single-core processor: all 55 SPEC 2000/2006 benchmarks (single-threaded)
  - 38 prefetch-sensitive benchmarks
  - 17 prefetch-insensitive benchmarks
- CMP: randomly chosen multiprogrammed workloads from the 55 benchmarks
  - 4-core CMP: 32 workloads
  - 8-core CMP: 21 workloads

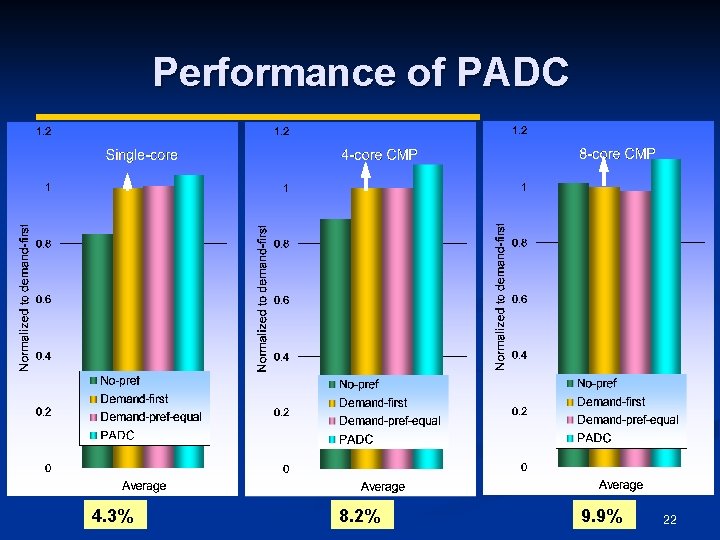

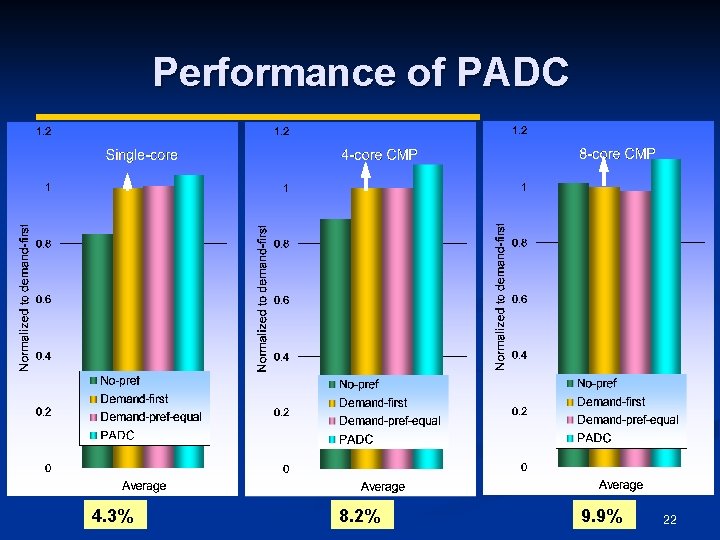

Performance of PADC: 4.3%, 8.2%, 9.9%

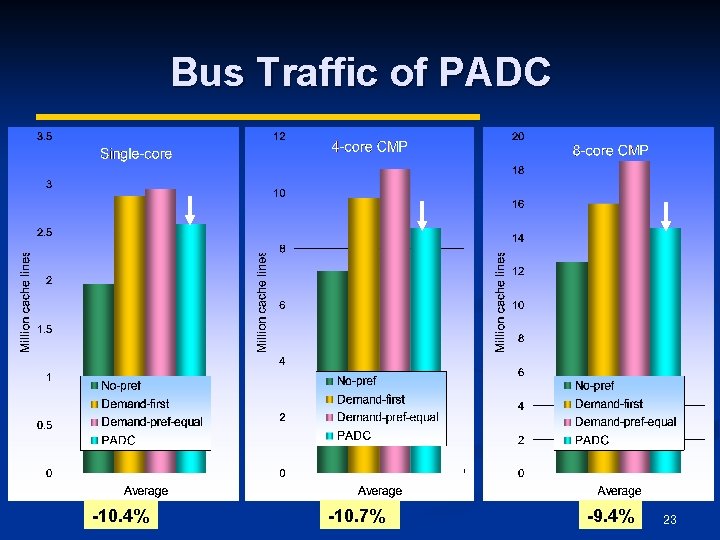

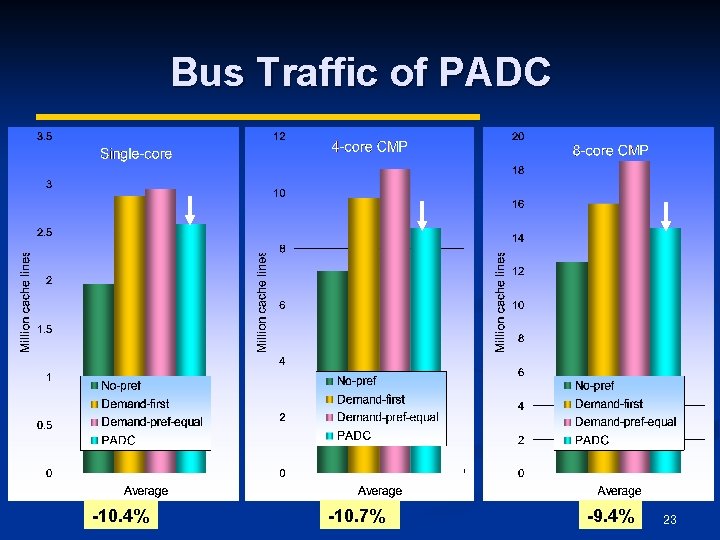

Bus Traffic of PADC: -10.4%, -10.7%, -9.4%

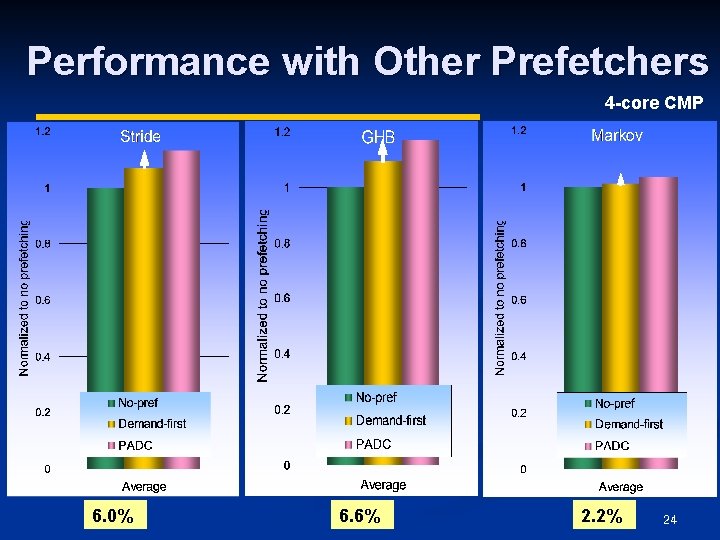

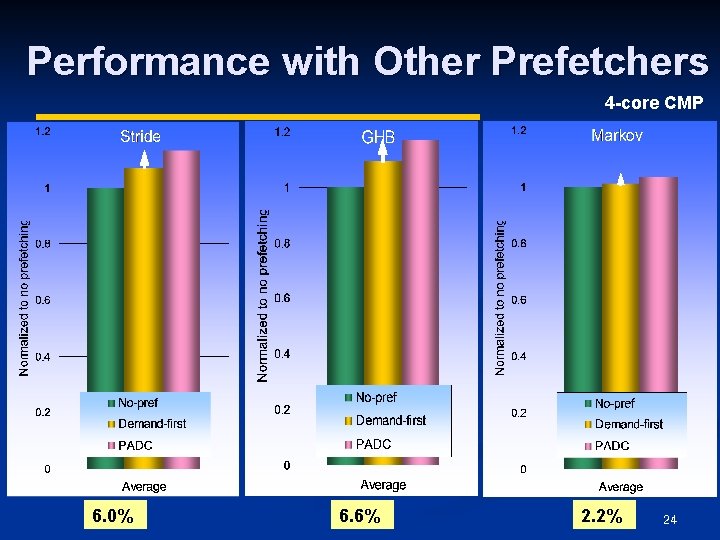

Performance with Other Prefetchers (4-core CMP): 6.0%, 6.6%, 2.2%

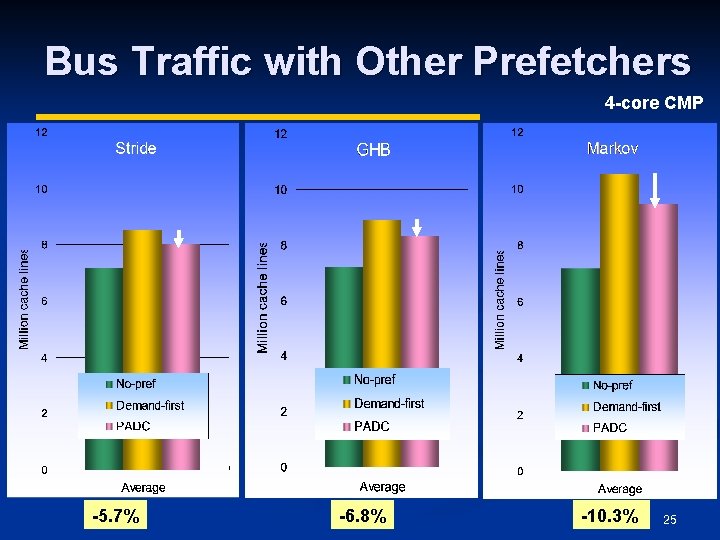

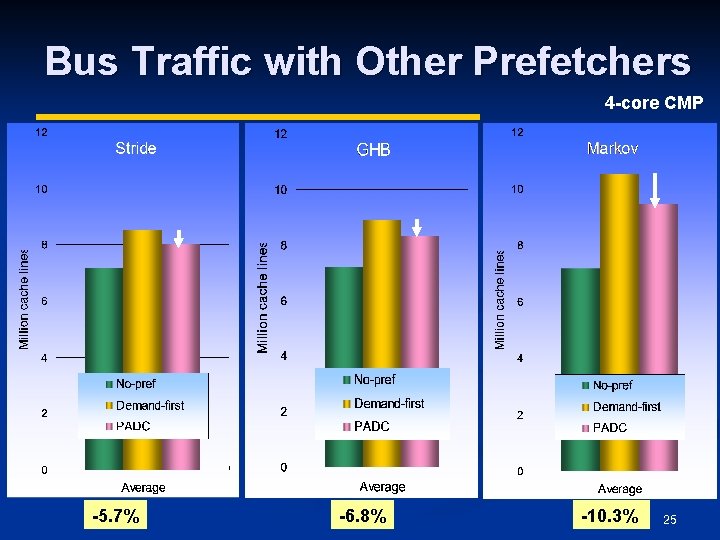

Bus Traffic with Other Prefetchers (4-core CMP): -5.7%, -6.8%, -10.3%

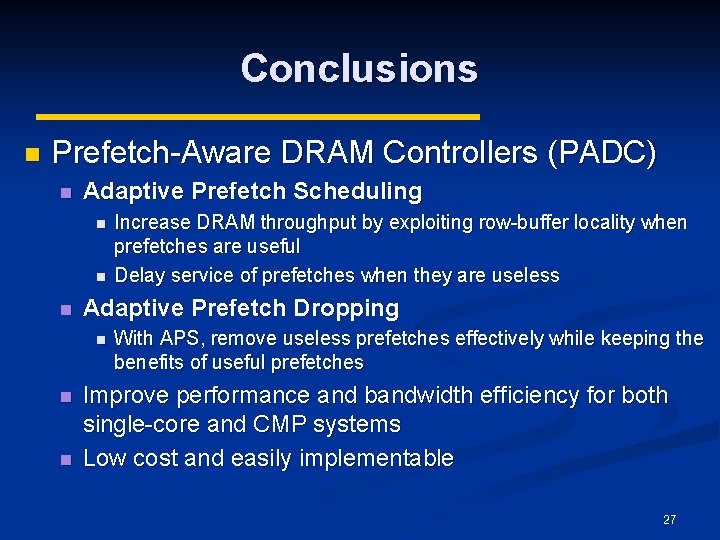

Conclusions
- Prefetch-Aware DRAM Controllers (PADC)
  - Adaptive Prefetch Scheduling
    - Increases DRAM throughput by exploiting row-buffer locality when prefetches are useful
    - Delays service of prefetches when they are useless
  - Adaptive Prefetch Dropping
    - Together with APS, removes useless prefetches effectively while keeping the benefits of useful prefetches
- Improves performance and bandwidth efficiency for both single-core and CMP systems
- Low cost and easily implementable

Questions?

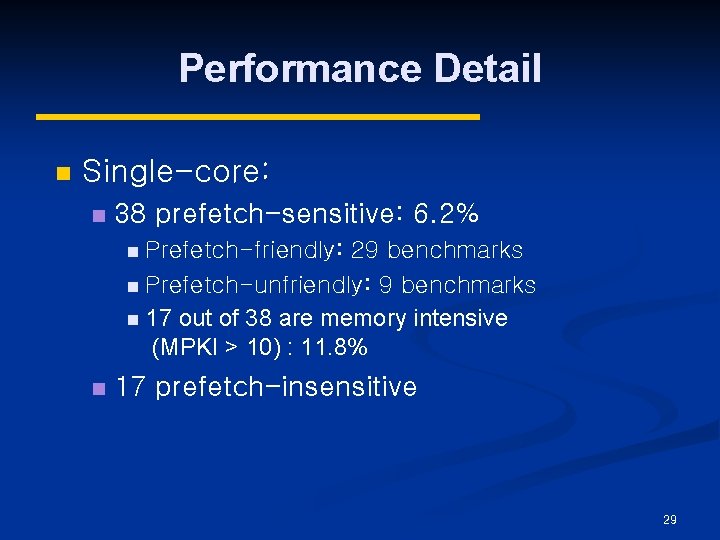

Performance Detail
- Single-core:
  - 38 prefetch-sensitive: 6.2%
    - Prefetch-friendly: 29 benchmarks
    - Prefetch-unfriendly: 9 benchmarks
    - 17 out of 38 are memory intensive (MPKI > 10): 11.8%
  - 17 prefetch-insensitive

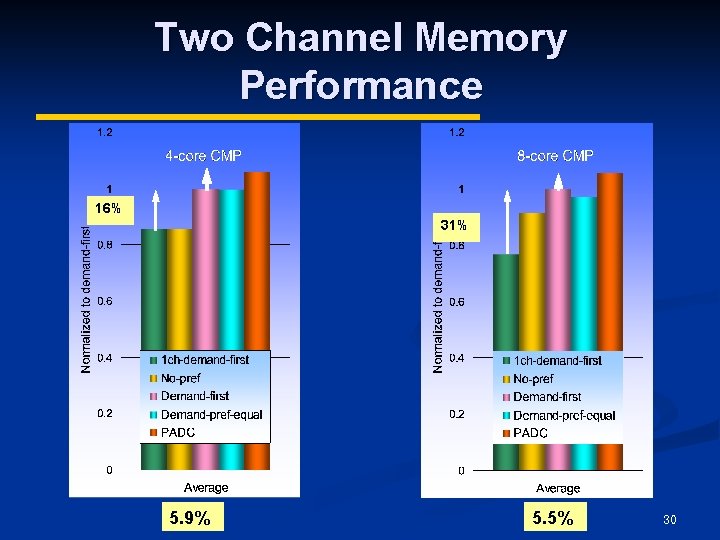

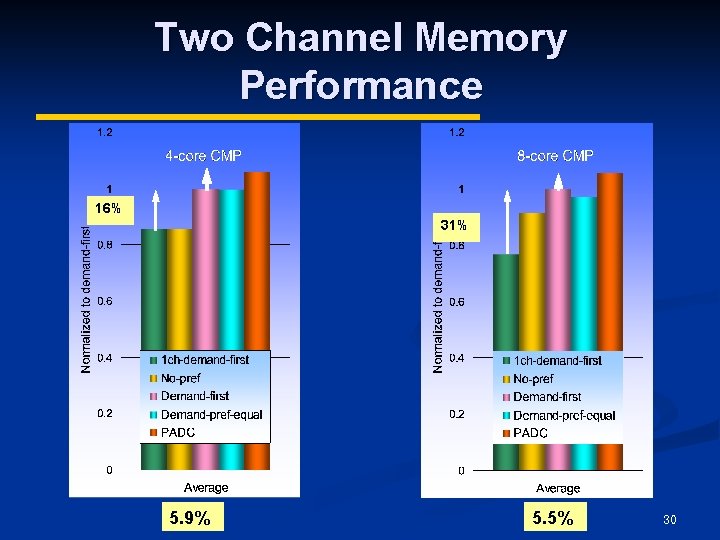

Two-Channel Memory Performance: 16%, 31%, 5.9%, 5.5%

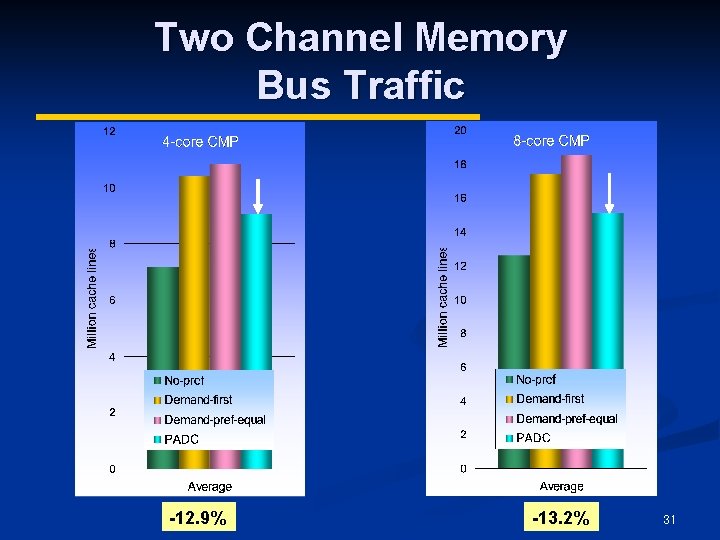

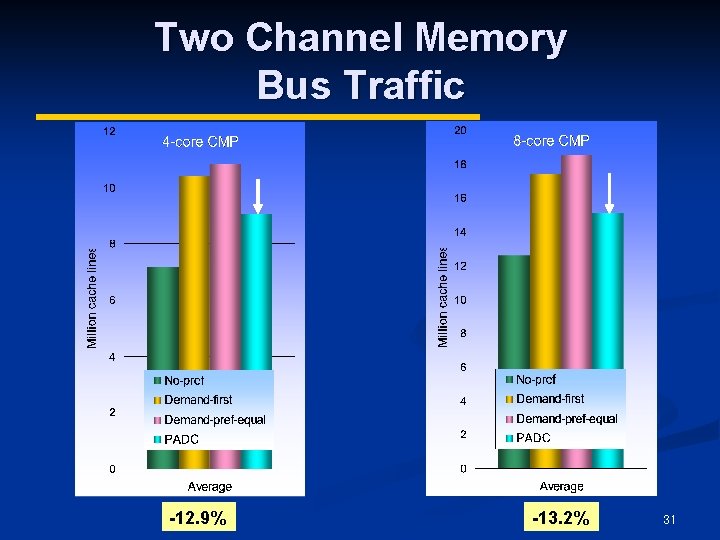

Two-Channel Memory Bus Traffic: -12.9%, -13.2%

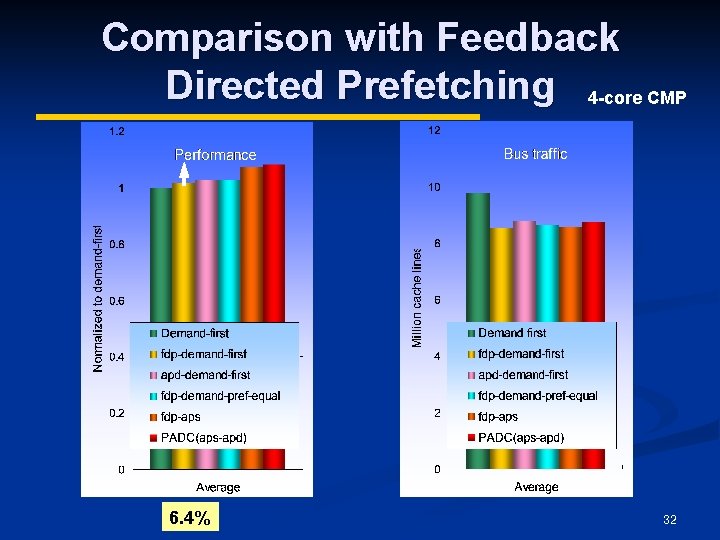

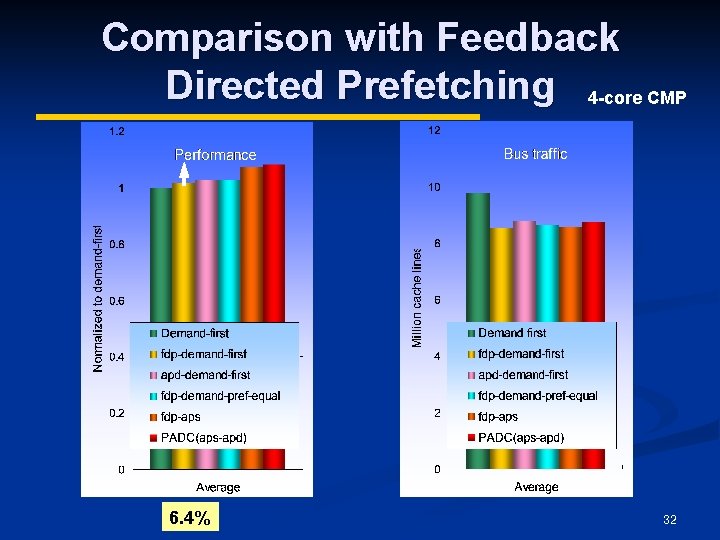

Comparison with Feedback Directed Prefetching (4-core CMP): 6.4%

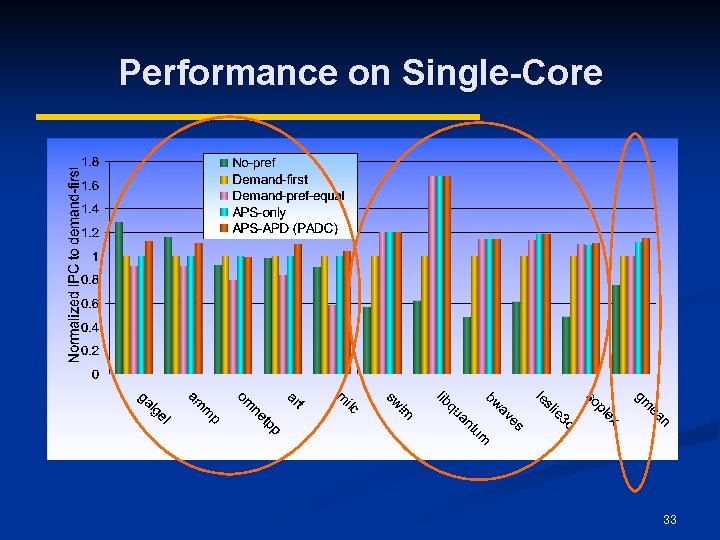

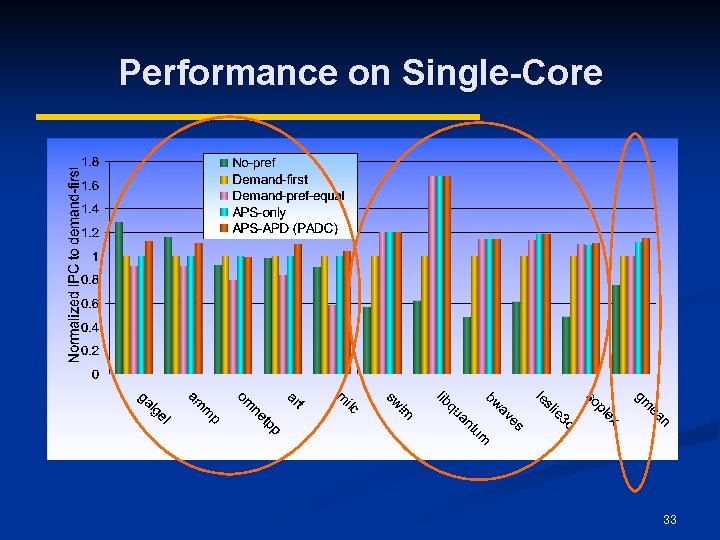

Performance on Single-Core

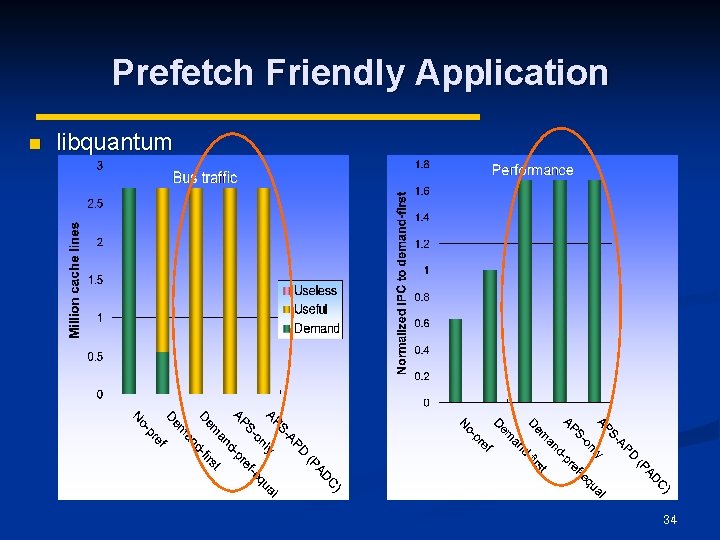

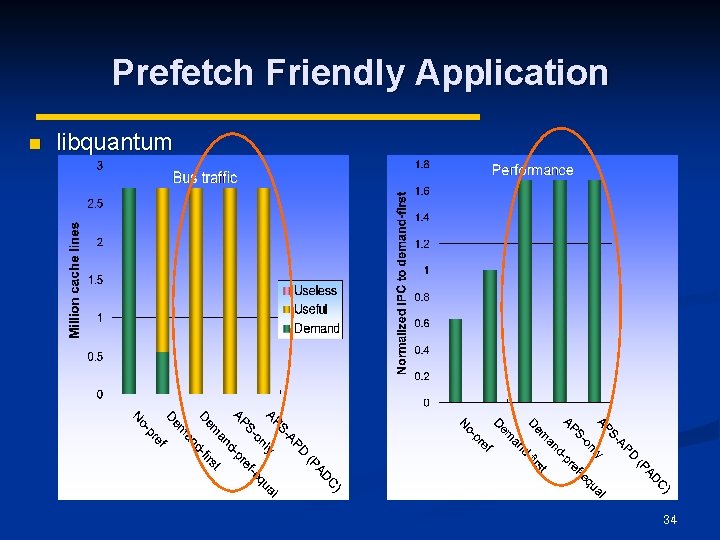

Prefetch-Friendly Application: libquantum

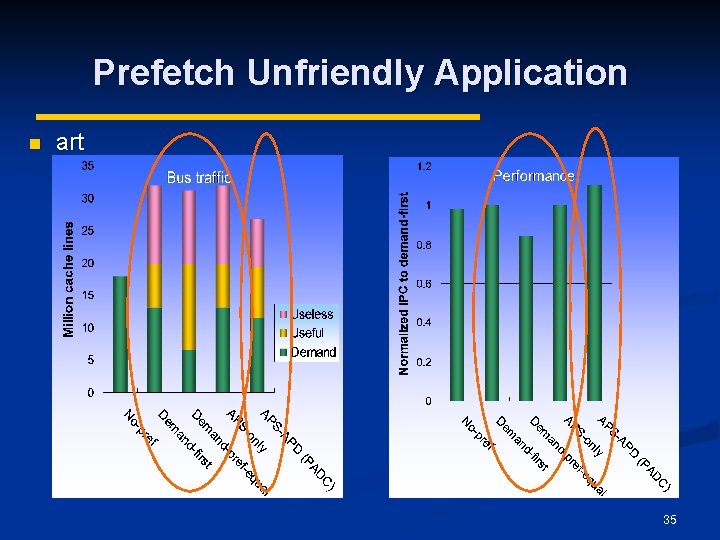

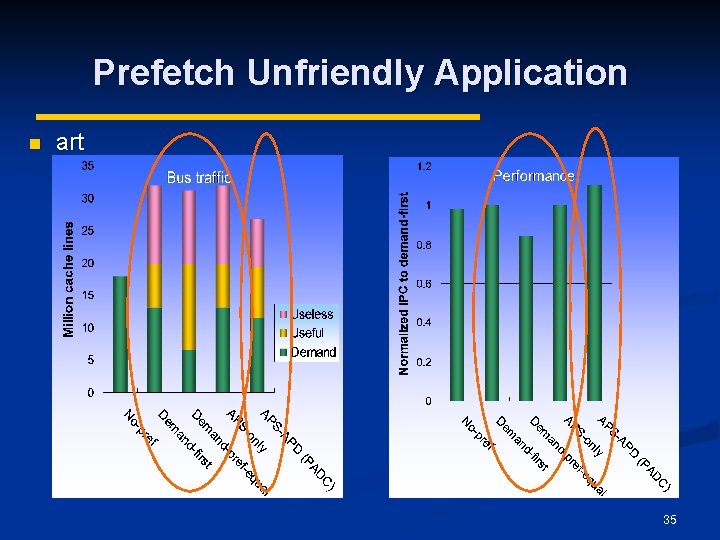

Prefetch-Unfriendly Application: art

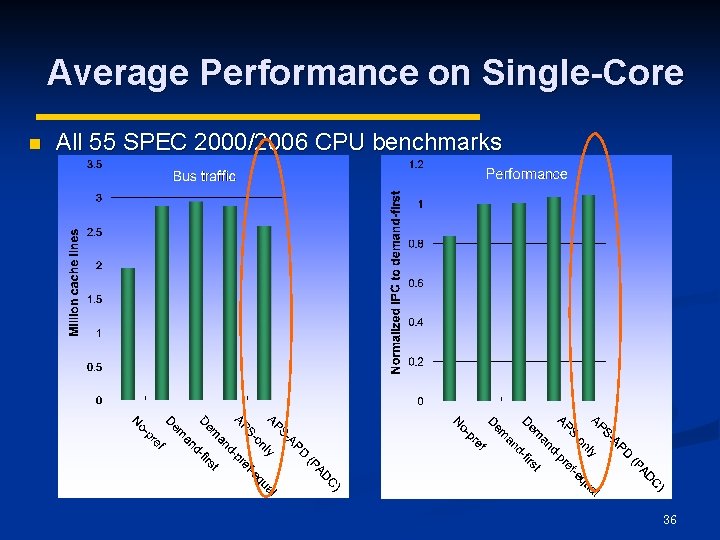

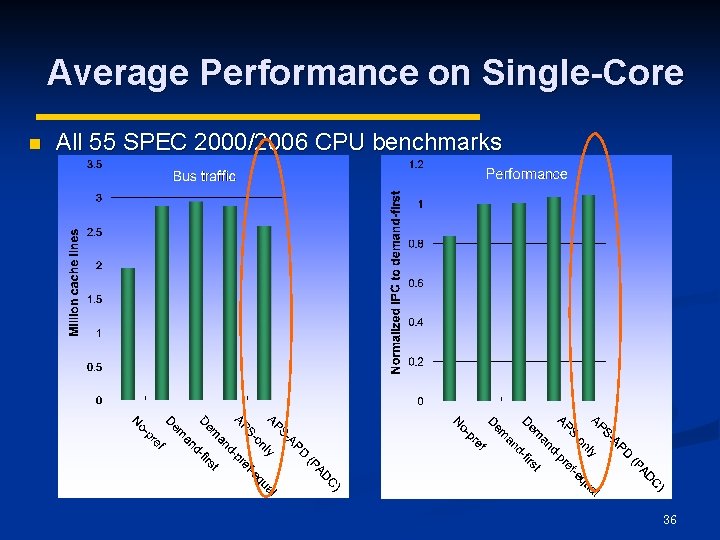

Average Performance on Single-Core: all 55 SPEC 2000/2006 CPU benchmarks

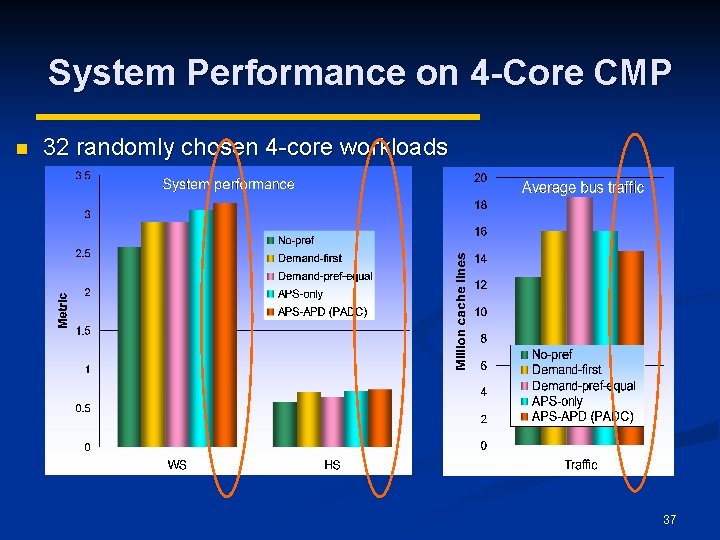

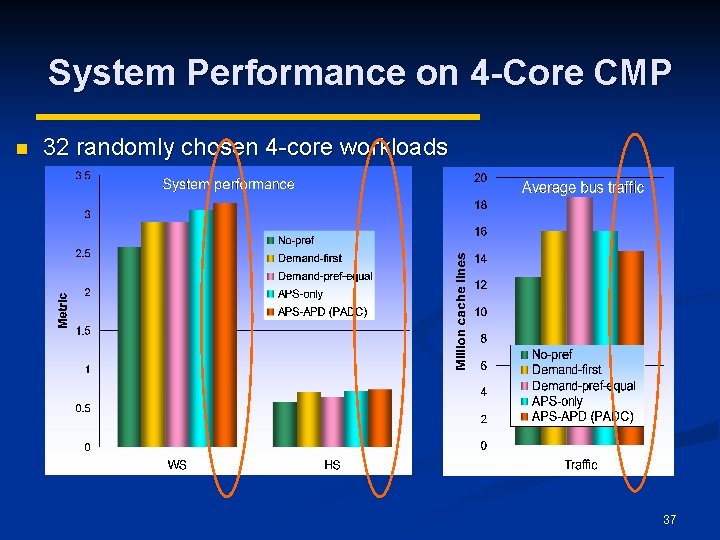

System Performance on 4-Core CMP: 32 randomly chosen 4-core workloads

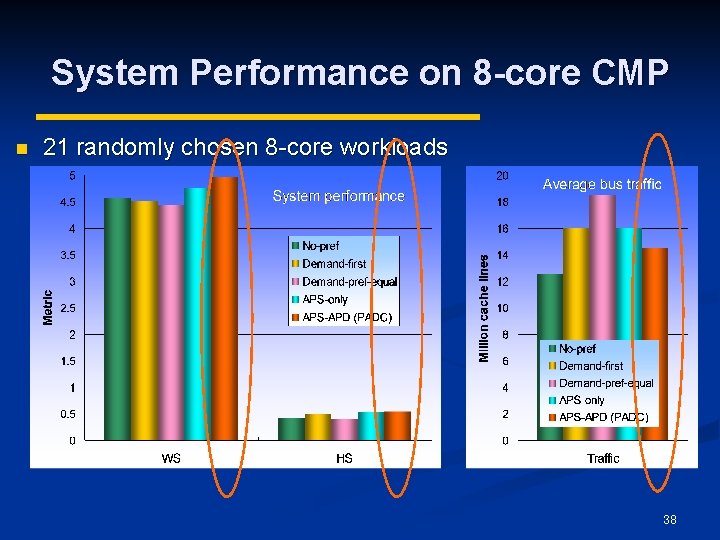

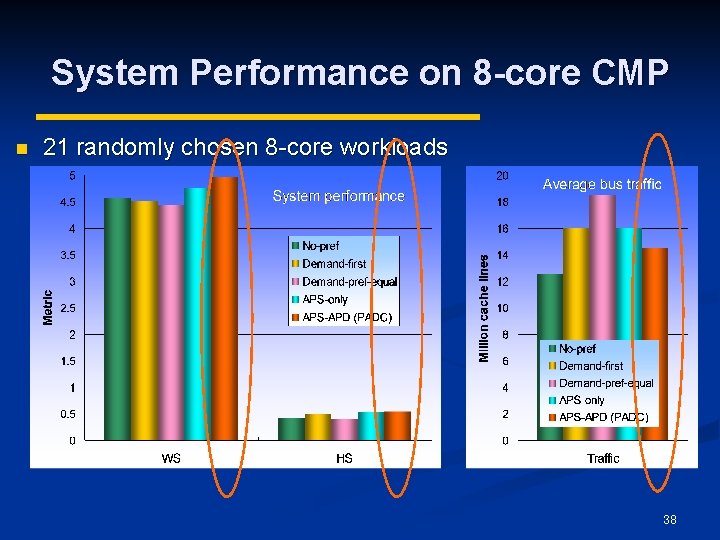

System Performance on 8-Core CMP: 21 randomly chosen 8-core workloads

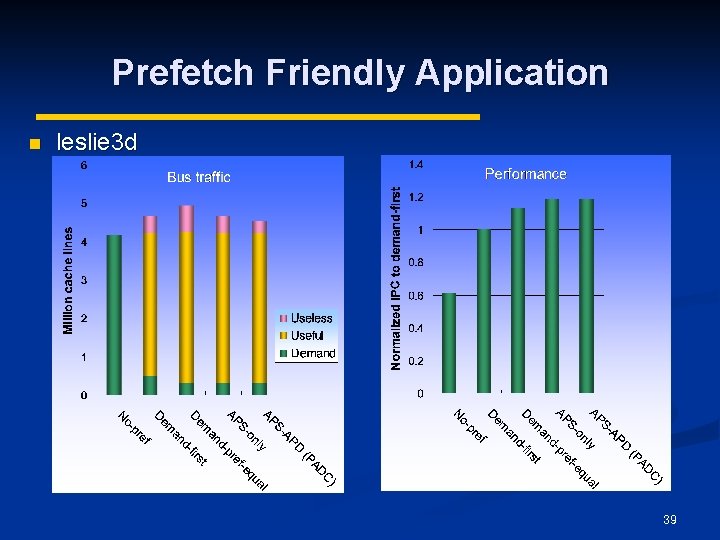

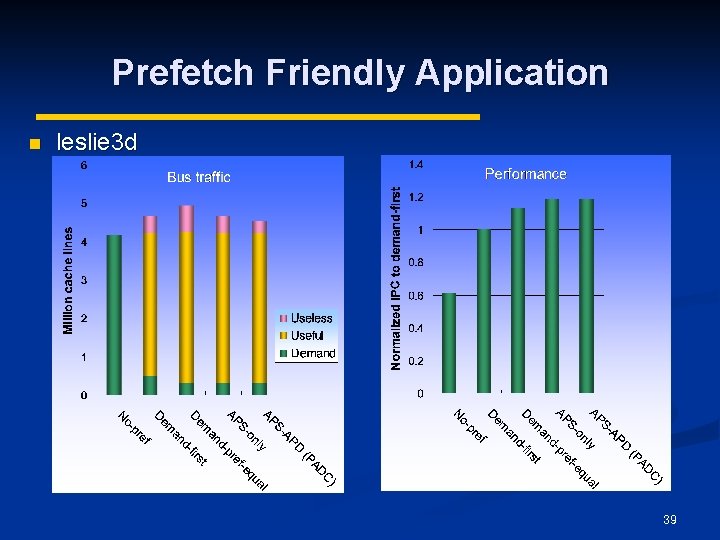

Prefetch-Friendly Application: leslie3d

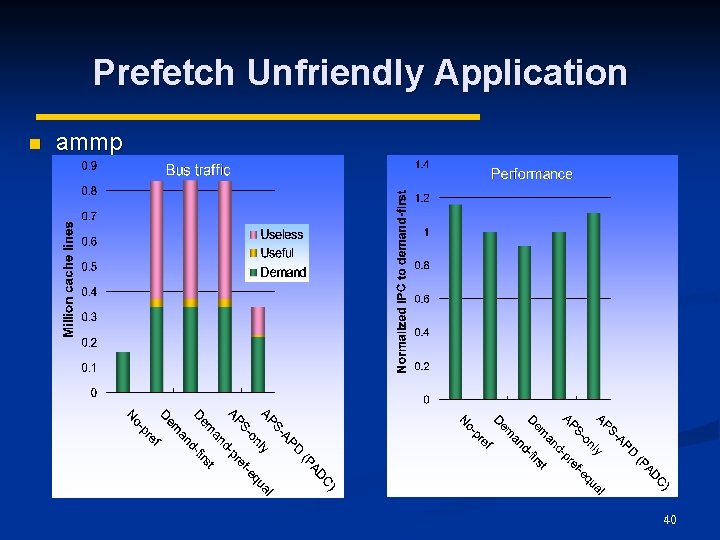

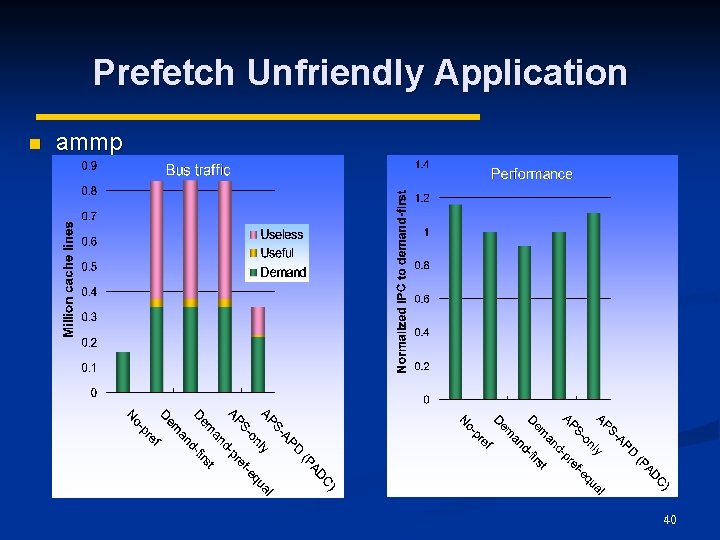

Prefetch-Unfriendly Application: ammp

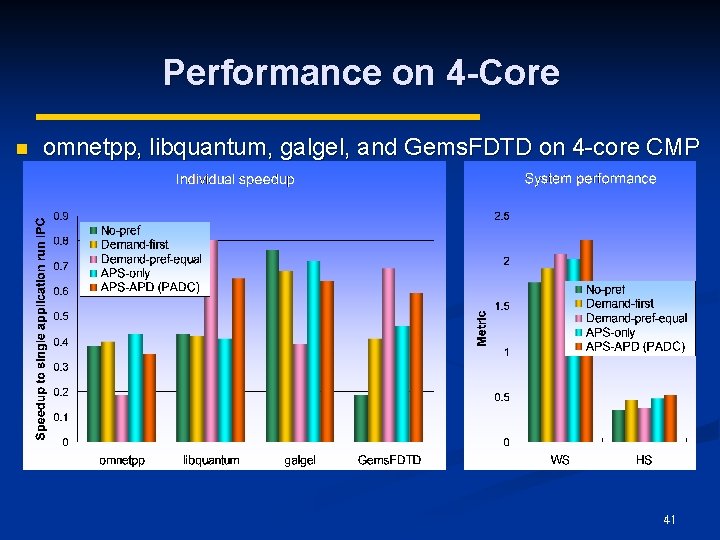

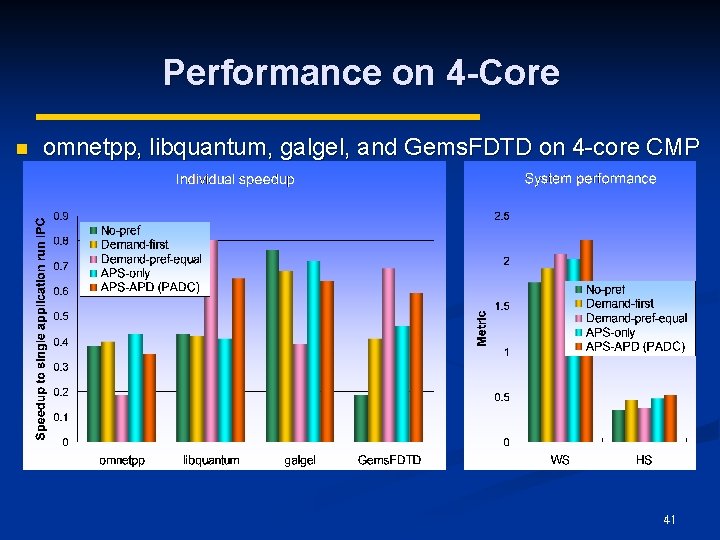

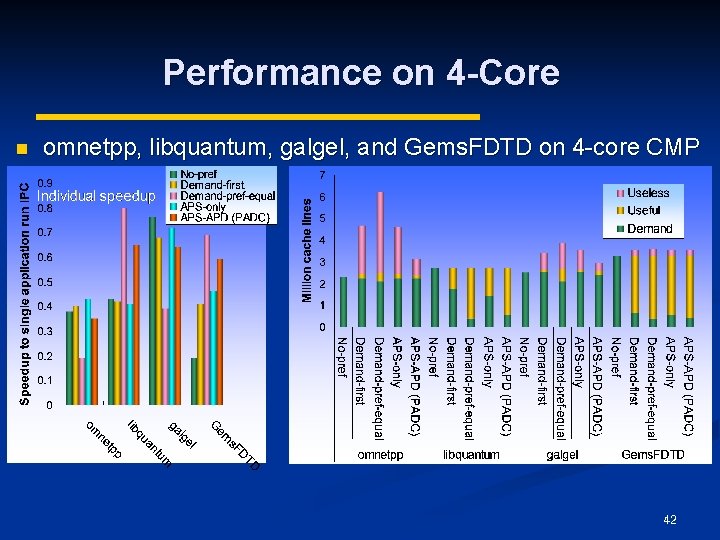

Performance on 4-Core: omnetpp, libquantum, galgel, and GemsFDTD on a 4-core CMP

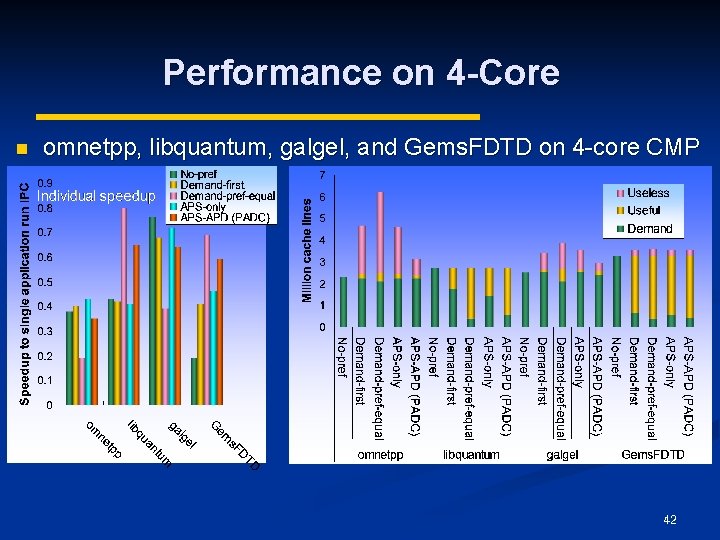

Performance on 4-Core: omnetpp, libquantum, galgel, and GemsFDTD on a 4-core CMP

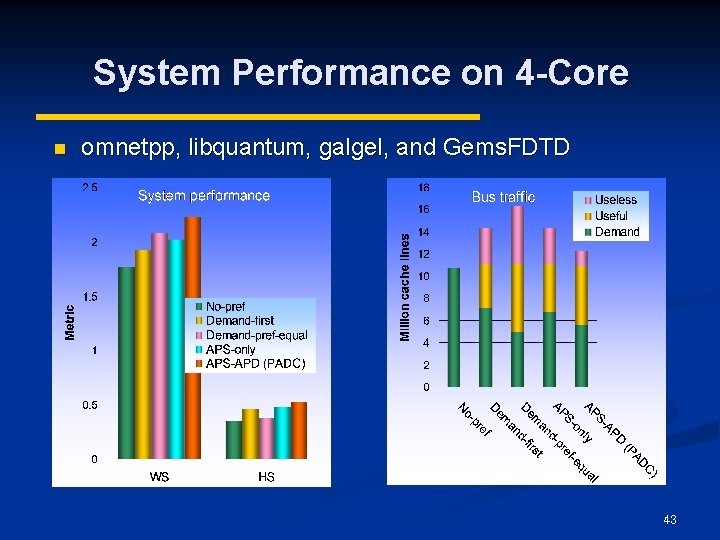

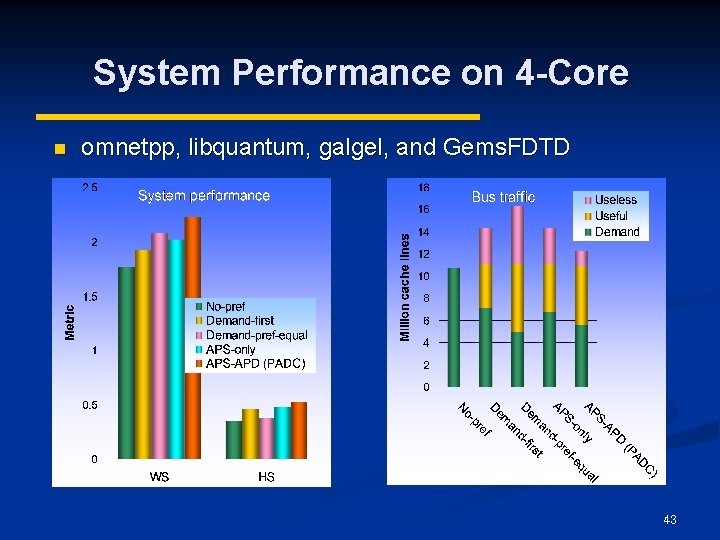

System Performance on 4-Core: omnetpp, libquantum, galgel, and GemsFDTD