Prediction of Diabetes by Employing a Meta-Heuristic Which Can Optimize the Performance of Existing Data Mining Approaches

by Huy Nguyen Anh Pham and Evangelos Triantaphyllou

ICIS 2008, Portland, Oregon, May 14-16, 2008

Department of Computer Science, Louisiana State University, Baton Rouge, LA 70803
Emails: hpham15@lsu.edu and trianta@lsu.edu

6/12/2021

Outline
- Diabetes and the Pima Indian Diabetes (PID) dataset
- Selected current work
- Motivation
- The Homogeneity Based Algorithm (HBA)
- Rationale for the HBA
- Some computational results
- Conclusions

Diabetes and the PID dataset
- Diabetes: if the body does not produce or properly use insulin, the excess sugar is driven out through urination. This condition is called diabetes.
- 20.8 million children and adults in the United States (approximately 7% of the population) were diagnosed with diabetes (American Diabetes Association, 11/2007).
- The Pima Indian Diabetes (PID) dataset: 768 records describing female patients of Pima Indian heritage who are at least 21 years old and live near Phoenix, Arizona, USA (UCI Machine Learning Repository, 2007).

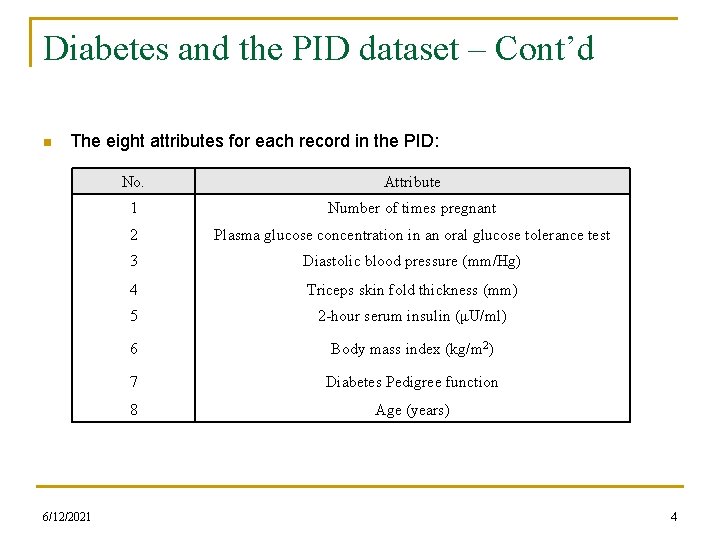

Diabetes and the PID dataset – Cont’d
- The eight attributes for each record in the PID:

| No. | Attribute |
|---|---|
| 1 | Number of times pregnant |
| 2 | Plasma glucose concentration in an oral glucose tolerance test |
| 3 | Diastolic blood pressure (mm Hg) |
| 4 | Triceps skin fold thickness (mm) |
| 5 | 2-hour serum insulin (μU/ml) |
| 6 | Body mass index (kg/m²) |
| 7 | Diabetes pedigree function |
| 8 | Age (years) |

Selected current work
- 76.0% diagnosis accuracy by Smith et al. (1988) when using an early neural network.
- 77.6% diagnosis accuracy by Jankowski and Kadirkamanathan (1997) when using IncNet.
- 77.6% diagnosis accuracy by Au and Chan (2001) when using a fuzzy approach.
- 78.6% diagnosis accuracy by Rutkowski and Cpalka (2003) when using a flexible neural-fuzzy inference system (FLEXNFIS).
- 81.8% diagnosis accuracy by Davis (2006) when using a fuzzy neural network.
- Less than 78% diagnosis accuracy by the Statlog project (1994) when using different classification algorithms.

Motivation
- In medical diagnosis there are three different types of possible errors:
  - The false-negative type, in which a patient who in reality has the disease is diagnosed as disease free.
  - The false-positive type, in which a patient who in reality does not have the disease is diagnosed as having it.
  - The unclassifiable type, in which the diagnostic system cannot diagnose a given case. This happens when insufficient knowledge has been extracted from the historic data.
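The three error types can be counted from a list of diagnoses. The sketch below is only an illustration: the function name `count_error_types` and the convention of using `None` for a case the system cannot diagnose are assumptions, not part of the original slides.

```python
def count_error_types(actual, predicted):
    """Count false positives, false negatives, and unclassifiable cases.

    `actual` holds True (has the disease) or False (disease free).
    `predicted` holds True, False, or None; None is an assumed marker
    meaning the system could not diagnose the case.
    """
    counts = {"false_positive": 0, "false_negative": 0, "unclassifiable": 0}
    for has_disease, diagnosis in zip(actual, predicted):
        if diagnosis is None:
            counts["unclassifiable"] += 1
        elif diagnosis and not has_disease:
            counts["false_positive"] += 1
        elif has_disease and not diagnosis:
            counts["false_negative"] += 1
    return counts


# Five made-up patients, purely for illustration.
actual = [True, True, False, False, True]
predicted = [True, False, True, None, None]
print(count_error_types(actual, predicted))
```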

Motivation – Cont’d
- Current medical data mining approaches often assign equal penalty costs to the false-positive and the false-negative types:
  - A false-positive diagnosis of a new patient:
    - Makes the patient worry unnecessarily.
    - Leads to unnecessary treatments and expenses.
    - Is not life-threatening.
  - A false-negative diagnosis of a new patient:
    - Means treatment comes late or not at all.
    - Means conditions may deteriorate and the patient’s life may be at risk.

=> The two penalty costs for the false-positive and the false-negative types may be significantly different.

Motivation – Cont’d
- Current medical data mining approaches ignore the penalty cost for the unclassifiable type:
  - When insufficient knowledge has been extracted from the historic data, a given patient should be predicted as unclassifiable.
  - However, in practice current approaches often predict the patient as either having diabetes or being disease free.
  - Such misdiagnosis may lead to either unnecessary treatments or no treatment when one is needed.

=> Consideration for the unclassifiable type is required.

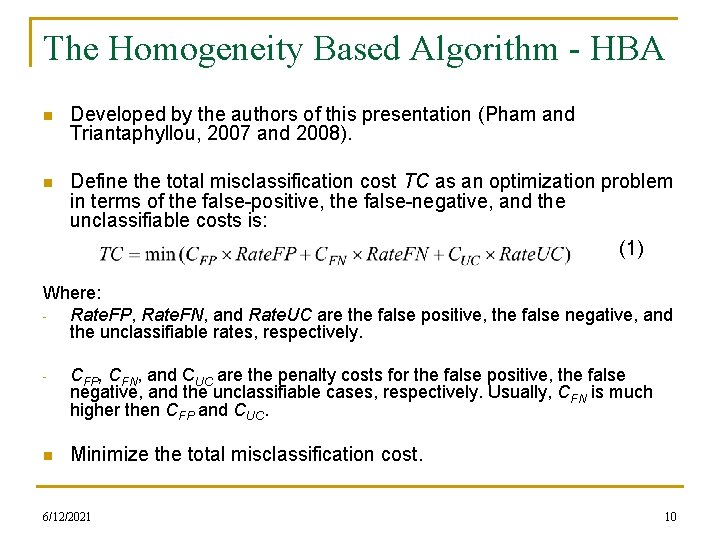

The Homogeneity Based Algorithm - HBA
- Developed by the authors of this presentation (Pham and Triantaphyllou, 2007 and 2008).
- Define the total misclassification cost TC as an optimization problem in terms of the false-positive, the false-negative, and the unclassifiable costs:

$$TC = \min\left(C_{FP} \times Rate_{FP} + C_{FN} \times Rate_{FN} + C_{UC} \times Rate_{UC}\right) \qquad (1)$$

  where:
  - $Rate_{FP}$, $Rate_{FN}$, and $Rate_{UC}$ are the false-positive, the false-negative, and the unclassifiable rates, respectively.
  - $C_{FP}$, $C_{FN}$, and $C_{UC}$ are the penalty costs for the false-positive, the false-negative, and the unclassifiable cases, respectively. Usually, $C_{FN}$ is much higher than $C_{FP}$ and $C_{UC}$.
- Minimize the total misclassification cost.
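The weighted sum inside the total misclassification cost can be evaluated directly. The following is a minimal sketch; the function and parameter names are illustrative assumptions. The example values are the SVM rates and the Case 3 penalty costs reported later in these slides, which give the TC of 1,807 listed there.

```python
def total_misclassification_cost(rate_fp, rate_fn, rate_uc, c_fp, c_fn, c_uc):
    """Weighted sum of the three error rates for one classification system.

    The HBA searches for the system that minimizes this value; this
    function only evaluates the cost of a single candidate.
    """
    return c_fp * rate_fp + c_fn * rate_fn + c_uc * rate_uc


# SVM rates (FP=0, FN=74, UC=109) with Case 3 costs (1, 20, 3).
tc_svm_case3 = total_misclassification_cost(0, 74, 109, c_fp=1, c_fn=20, c_uc=3)
print(tc_svm_case3)
```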

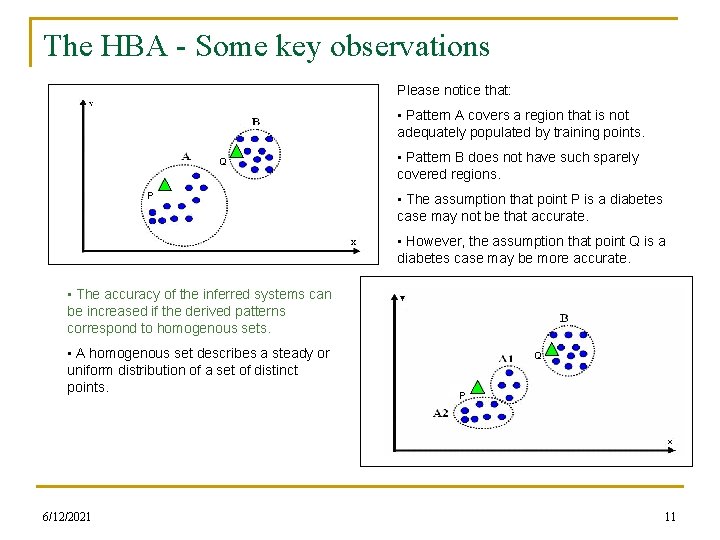

The HBA - Some key observations
Please notice that:
- Pattern A covers a region that is not adequately populated by training points.
- Pattern B does not have such sparsely covered regions.
- The assumption that point P is a diabetes case may not be that accurate.
- However, the assumption that point Q is a diabetes case may be more accurate.
- The accuracy of the inferred systems can be increased if the derived patterns correspond to homogenous sets.
- A homogenous set describes a steady or uniform distribution of a set of distinct points.

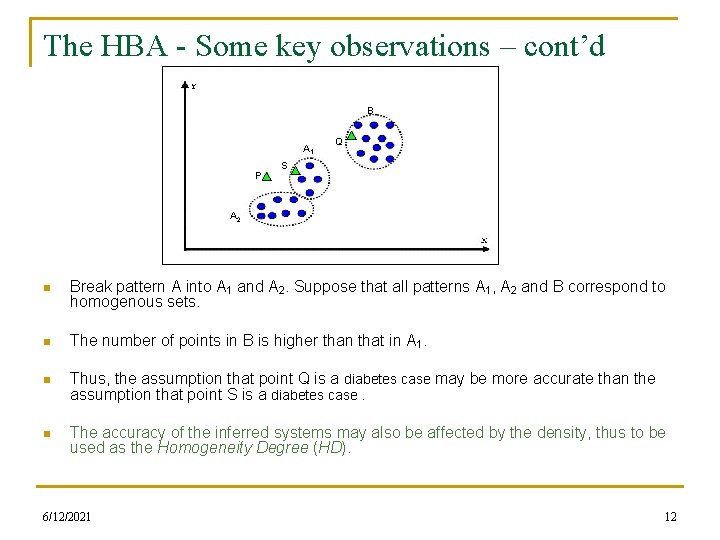

The HBA - Some key observations – cont’d
- Break pattern A into A1 and A2. Suppose that all patterns A1, A2, and B correspond to homogenous sets.
- The number of points in B is higher than that in A1.
- Thus, the assumption that point Q is a diabetes case may be more accurate than the assumption that point S is a diabetes case.
- The accuracy of the inferred systems may also be affected by the density, which is therefore used as the Homogeneity Degree (HD).
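The role of density can be illustrated with a toy calculation. The slides do not give the formula for the Homogeneity Degree, so the sketch below uses an assumed stand-in: the number of training points per unit area inside a circle (a two-dimensional hypersphere). The coordinates are made up and the names `density`, `pattern_b`, and `pattern_a1` are hypothetical.

```python
import math


def density(points, center, radius):
    """Training points per unit area inside a circle.

    This is an assumed proxy for the Homogeneity Degree, not the
    authors' definition.
    """
    inside = sum(1 for p in points if math.dist(p, center) <= radius)
    return inside / (math.pi * radius ** 2)


# Made-up training points: B is densely covered, A1 is sparsely covered.
pattern_b = [(0.1, 0.0), (-0.2, 0.1), (0.0, 0.3), (0.2, -0.2), (-0.1, -0.3), (0.3, 0.2)]
pattern_a1 = [(5.1, 5.0), (4.8, 5.2)]

hd_b = density(pattern_b, center=(0.0, 0.0), radius=0.5)
hd_a1 = density(pattern_a1, center=(5.0, 5.0), radius=0.5)

# A new point falling in B would be trusted more than one falling in A1.
print(hd_b > hd_a1)
```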

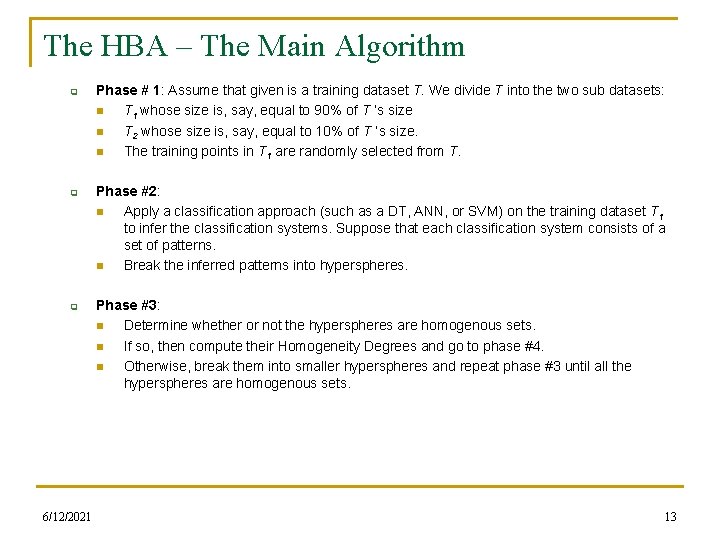

The HBA – The Main Algorithm
- Phase #1: Assume that a training dataset T is given. Divide T into two sub-datasets:
  - T1, whose size is, say, equal to 90% of T’s size.
  - T2, whose size is, say, equal to 10% of T’s size.
  - The training points in T1 are randomly selected from T.
- Phase #2:
  - Apply a classification approach (such as a DT, ANN, or SVM) on the training dataset T1 to infer the classification systems. Suppose that each classification system consists of a set of patterns.
  - Break the inferred patterns into hyperspheres.
- Phase #3:
  - Determine whether or not the hyperspheres are homogenous sets.
  - If so, then compute their Homogeneity Degrees and go to Phase #4.
  - Otherwise, break them into smaller hyperspheres and repeat Phase #3 until all the hyperspheres are homogenous sets.
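Phase #1 amounts to a random split of the training data. A minimal sketch follows, assuming the records are held in a Python list; the function name, the `calibration_fraction` parameter, and the fixed seed are illustrative choices rather than details from the slides.

```python
import random


def split_training_set(records, calibration_fraction=0.1, seed=0):
    """Randomly split T into T1 (about 90%) and T2 (about 10%)."""
    rng = random.Random(seed)      # fixed seed so the split is repeatable
    shuffled = list(records)       # copy, so the caller's list is untouched
    rng.shuffle(shuffled)
    n_calibration = round(len(shuffled) * calibration_fraction)
    t2 = shuffled[:n_calibration]  # calibration dataset used in Phase #5
    t1 = shuffled[n_calibration:]  # dataset used to infer the patterns
    return t1, t2


# Stand-in records; real use would pass the PID training records.
records = list(range(100))
t1, t2 = split_training_set(records)
print(len(t1), len(t2))
```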

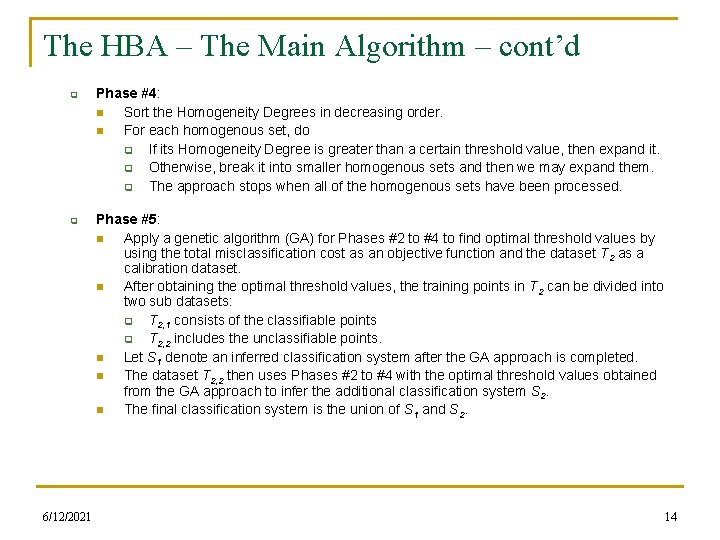

The HBA – The Main Algorithm – cont’d
- Phase #4:
  - Sort the Homogeneity Degrees in decreasing order.
  - For each homogenous set:
    - If its Homogeneity Degree is greater than a certain threshold value, then expand it.
    - Otherwise, break it into smaller homogenous sets, which may then be expanded.
    - The approach stops when all of the homogenous sets have been processed.
- Phase #5:
  - Apply a genetic algorithm (GA) to Phases #2 to #4 to find optimal threshold values, using the total misclassification cost as the objective function and the dataset T2 as a calibration dataset.
  - After obtaining the optimal threshold values, the training points in T2 can be divided into two sub-datasets:
    - T2,1 consists of the classifiable points.
    - T2,2 includes the unclassifiable points.
  - Let S1 denote the classification system inferred after the GA approach is completed.
  - The dataset T2,2 is then processed by Phases #2 to #4, with the optimal threshold values obtained from the GA approach, to infer an additional classification system S2.
  - The final classification system is the union of S1 and S2.
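The control flow of Phase #4 can be sketched as a sort followed by a threshold test. This is only an outline under stated assumptions: the `HomogenousSet` class, the `plan_phase4` function, and the single `threshold` argument are hypothetical, and the actual expansion and breaking of hyperspheres is not implemented here.

```python
from dataclasses import dataclass


@dataclass
class HomogenousSet:
    name: str
    homogeneity_degree: float


def plan_phase4(sets, threshold):
    """Return (name, action) pairs in the order Phase #4 would process them."""
    # Sets with the highest Homogeneity Degree are handled first.
    ordered = sorted(sets, key=lambda s: s.homogeneity_degree, reverse=True)
    plan = []
    for s in ordered:
        action = "expand" if s.homogeneity_degree > threshold else "break"
        plan.append((s.name, action))
    return plan


# Made-up Homogeneity Degrees for the three patterns from the earlier slides.
example_sets = [
    HomogenousSet("A1", 1.0),
    HomogenousSet("B", 3.0),
    HomogenousSet("A2", 2.0),
]
plan = plan_phase4(example_sets, threshold=1.5)
print(plan)
```

In Phase #5 the threshold would not be fixed by hand as it is here; the slides state that a genetic algorithm chooses it by minimizing the total misclassification cost on T2.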

Rationale for the HBA
- Consider the problem as an optimization formulation in terms of the false-positive, the false-negative, and the unclassifiable costs.
- The HBA optimally adjusts the inferred classification systems.
- We use the Homogeneity Degree in the control conditions for both expansion (to control generalization) and breaking (to control fitting).
- Homogenous sets are expanded in decreasing order of their Homogeneity Degrees.

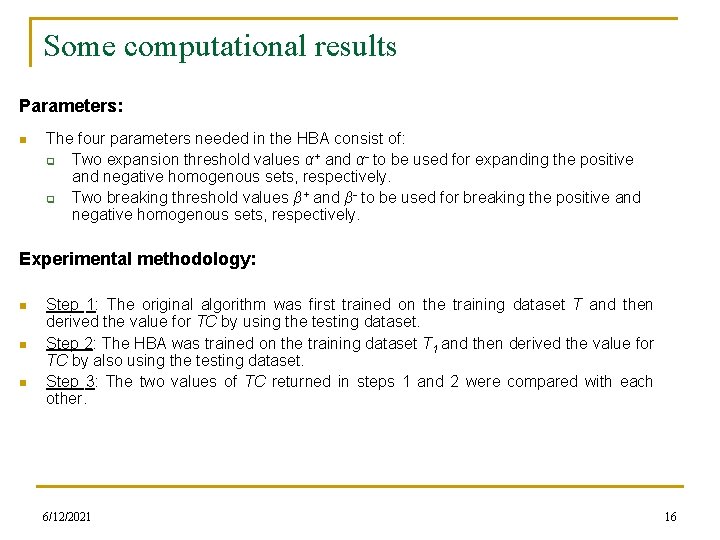

Some computational results

Parameters:
- The four parameters needed in the HBA consist of:
  - Two expansion threshold values, α+ and α-, used for expanding the positive and negative homogenous sets, respectively.
  - Two breaking threshold values, β+ and β-, used for breaking the positive and negative homogenous sets, respectively.

Experimental methodology:
- Step 1: The original algorithm was first trained on the training dataset T, and the value of TC was then derived by using the testing dataset.
- Step 2: The HBA was trained on the training dataset T1, and the value of TC was then derived by also using the testing dataset.
- Step 3: The two values of TC returned in Steps 1 and 2 were compared with each other.
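The comparison in Step 3 can be expressed as a relative reduction in TC. That this is how the reported improvement percentages are computed is an assumption, but it agrees with the results in these slides: for example, a TC that drops from 74 to 10 corresponds to the 86.49% reported for SVM-HBA in Case 1. The function name is illustrative.

```python
def percent_improvement(tc_original, tc_hba):
    """Relative reduction in total misclassification cost, in percent."""
    return 100.0 * (tc_original - tc_hba) / tc_original


# Case 1: SVM (TC = 74) versus SVM-HBA (TC = 10).
improvement = round(percent_improvement(74, 10), 2)
print(improvement)
```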

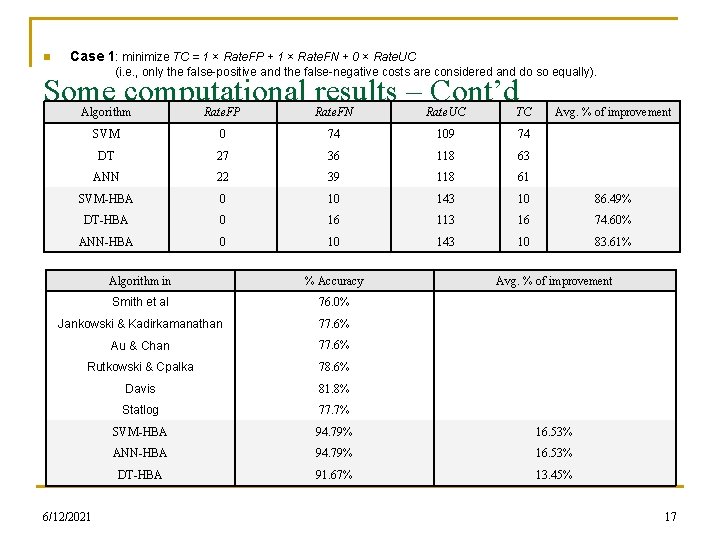

Some computational results – Cont’d
- Case 1: minimize $TC = 1 \times Rate_{FP} + 1 \times Rate_{FN} + 0 \times Rate_{UC}$ (i.e., only the false-positive and the false-negative costs are considered, and they are weighted equally).

| Algorithm | RateFP | RateFN | RateUC | TC | % of improvement |
|---|---|---|---|---|---|
| SVM | 0 | 74 | 109 | 74 | |
| DT | 27 | 36 | 118 | 63 | |
| ANN | 22 | 39 | 118 | 61 | |
| SVM-HBA | 0 | 10 | 143 | 10 | 86.49% |
| DT-HBA | 0 | 16 | 113 | 16 | 74.60% |
| ANN-HBA | 0 | 10 | 143 | 10 | 83.61% |

- The HBA, on the average, decreased the total misclassification cost by about 81.57%.

| Algorithm | Accuracy in % | Avg. % of improvement |
|---|---|---|
| Smith et al. | 76.0% | |
| Jankowski & Kadirkamanathan | 77.6% | |
| Au & Chan | 77.6% | |
| Rutkowski & Cpalka | 78.6% | |
| Davis | 81.8% | |
| Statlog | 77.7% | |
| SVM-HBA | 94.79% | 16.53% |
| ANN-HBA | 94.79% | 16.53% |
| DT-HBA | 91.67% | 13.45% |

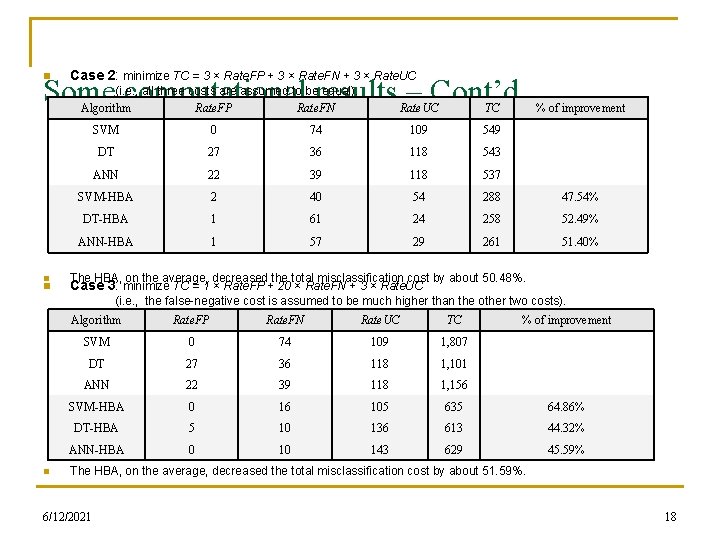

Some computational results – Cont’d
- Case 2: minimize $TC = 3 \times Rate_{FP} + 3 \times Rate_{FN} + 3 \times Rate_{UC}$ (i.e., all three costs are assumed to be equal).

| Algorithm | RateFP | RateFN | RateUC | TC | % of improvement |
|---|---|---|---|---|---|
| SVM | 0 | 74 | 109 | 549 | |
| DT | 27 | 36 | 118 | 543 | |
| ANN | 22 | 39 | 118 | 537 | |
| SVM-HBA | 2 | 40 | 54 | 288 | 47.54% |
| DT-HBA | 1 | 61 | 24 | 258 | 52.49% |
| ANN-HBA | 1 | 57 | 29 | 261 | 51.40% |

- The HBA, on the average, decreased the total misclassification cost by about 50.48%.
- Case 3: minimize $TC = 1 \times Rate_{FP} + 20 \times Rate_{FN} + 3 \times Rate_{UC}$ (i.e., the false-negative cost is assumed to be much higher than the other two costs).

| Algorithm | RateFP | RateFN | RateUC | TC | % of improvement |
|---|---|---|---|---|---|
| SVM | 0 | 74 | 109 | 1,807 | |
| DT | 27 | 36 | 118 | 1,101 | |
| ANN | 22 | 39 | 118 | 1,156 | |
| SVM-HBA | 0 | 16 | 105 | 635 | 64.86% |
| DT-HBA | 5 | 10 | 136 | 613 | 44.32% |
| ANN-HBA | 0 | 10 | 143 | 629 | 45.59% |

- The HBA, on the average, decreased the total misclassification cost by about 51.59%.

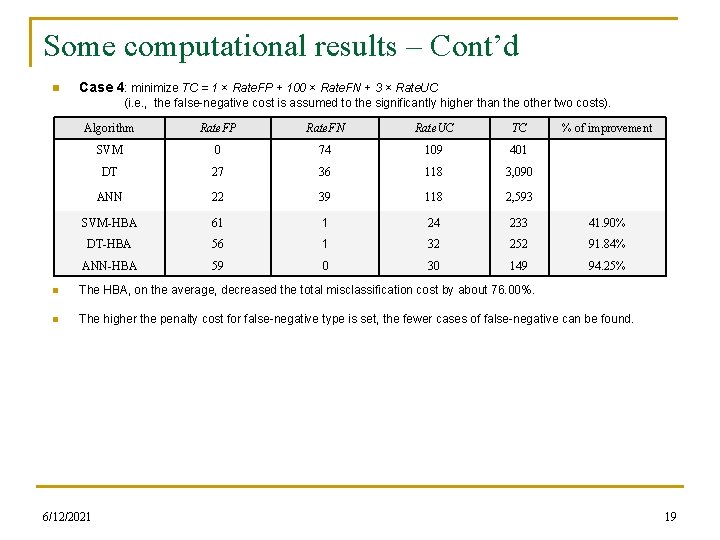

Some computational results – Cont’d
- Case 4: minimize $TC = 1 \times Rate_{FP} + 100 \times Rate_{FN} + 3 \times Rate_{UC}$ (i.e., the false-negative cost is assumed to be significantly higher than the other two costs).

| Algorithm | RateFP | RateFN | RateUC | TC | % of improvement |
|---|---|---|---|---|---|
| SVM | 0 | 74 | 109 | 401 | |
| DT | 27 | 36 | 118 | 3,090 | |
| ANN | 22 | 39 | 118 | 2,593 | |
| SVM-HBA | 61 | 1 | 24 | 233 | 41.90% |
| DT-HBA | 56 | 1 | 32 | 252 | 91.84% |
| ANN-HBA | 59 | 0 | 30 | 149 | 94.25% |

- The HBA, on the average, decreased the total misclassification cost by about 76.00%.
- The higher the penalty cost for the false-negative type is set, the fewer false-negative cases are found.
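The last observation can be checked against the reported numbers. The sketch below collects the false-negative counts of the three HBA variants from Cases 2, 3, and 4 (false-negative penalty costs of 3, 20, and 100, with the unclassifiable cost fixed at 3) and tests that they never increase as the penalty grows. The dictionary layout and the function name are illustrative assumptions.

```python
# False-negative counts reported for each HBA variant, keyed by the
# false-negative penalty cost used in Cases 2, 3, and 4.
false_negatives = {
    "SVM-HBA": {3: 40, 20: 16, 100: 1},
    "DT-HBA": {3: 61, 20: 10, 100: 1},
    "ANN-HBA": {3: 57, 20: 10, 100: 0},
}


def never_increases_with_cost(fn_by_cost):
    """True if the false-negative count does not rise as the penalty rises."""
    ordered = [fn_by_cost[cost] for cost in sorted(fn_by_cost)]
    return all(a >= b for a, b in zip(ordered, ordered[1:]))


trend_holds = {name: never_increases_with_cost(v) for name, v in false_negatives.items()}
print(trend_holds)
```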

Conclusions
- Millions of people in the United States and in the world have diabetes.
- The ability to predict diabetes early plays an important role in the patient’s treatment process.
- The correct prediction percentage of current algorithms may oftentimes be coincidental.
- This study identified the need for different penalty costs for the false-positive, the false-negative, and the unclassifiable types of errors in medical data mining.
- This study applied a meta-heuristic approach, called the Homogeneity-Based Algorithm (HBA), for enhancing the diabetes prediction.
- The HBA first defines the desired goal as an optimization problem in terms of the false-positive, the false-negative, and the unclassifiable costs.
- The HBA is then used in conjunction with traditional classification algorithms (such as SVMs, DTs, ANNs, etc.) to enhance the diabetes prediction.
- The Pima Indian diabetes dataset has been used for evaluating the performance of the HBA.
- The obtained results appear to be very important both for accurately predicting diabetes and for the medical data mining community in general.

These slides are also available at: http://www.csc.lsu.edu/trianta

References
- Asuncion A. and D. J. Newman, “UCI Machine Learning Repository,” University of California, Irvine, California, USA, School of Information and Computer Sciences, 2007.
- Smith J. W., J. E. Everhart, W. C. Dickson, W. C. Knowler, and R. S. Johannes, “Using the ADAP learning algorithm to forecast the onset of diabetes mellitus,” Proceedings of the 12th Symposium on Computer Applications and Medical Care, Los Angeles, California, USA, 1988, pp. 261-265.
- Jankowski N. and V. Kadirkamanathan, “Statistical control of RBF-like networks for classification,” Proceedings of the 7th International Conference on Artificial Neural Networks (ICANN), Lausanne, Switzerland, 1997, pp. 385-390.
- Au W. H. and K. C. C. Chan, “Classification with degree of membership: A fuzzy approach,” Proceedings of the 1st IEEE Int'l Conference on Data Mining, San Jose, California, USA, 2001, pp. 35-42.
- Rutkowski L. and K. Cpalka, “Flexible neuro-fuzzy systems,” IEEE Transactions on Neural Networks, Vol. 14, 2003, pp. 554-574.
- Davis W. L. IV, “Enhancing Pattern Classification with Relational Fuzzy Neural Networks and Square BK-Products,” Ph.D. Dissertation in Computer Science, 2006, pp. 71-74.
- Michie D., D. J. Spiegelhalter, and C. C. Taylor, “Machine Learning, Neural and Statistical Classification,” Englewood Cliffs in Series Artificial Intelligence, Prentice Hall, Chapter 9, 1994, pp. 157-160.
- Pham H. N. A. and E. Triantaphyllou, “The Impact of Overfitting and Overgeneralization on the Classification Accuracy in Data Mining,” in Soft Computing for Knowledge Discovery and Data Mining (O. Maimon and L. Rokach, Editors), Springer, New York, USA, 2007, Part 4, Chapter 5, pp. 391-431.
- Pham H. N. A. and E. Triantaphyllou, “An Optimization Approach for Improving Accuracy by Balancing Overfitting and Overgeneralization in Data Mining,” submitted for publication, January 2008.

Thank you. Any questions?
