
Prediction Basic concepts

Scope

Prediction of:

- Resources
- Calendar time
- Quality (or lack of quality)
- Change impact
- Process performance

Often confounded with the decision process

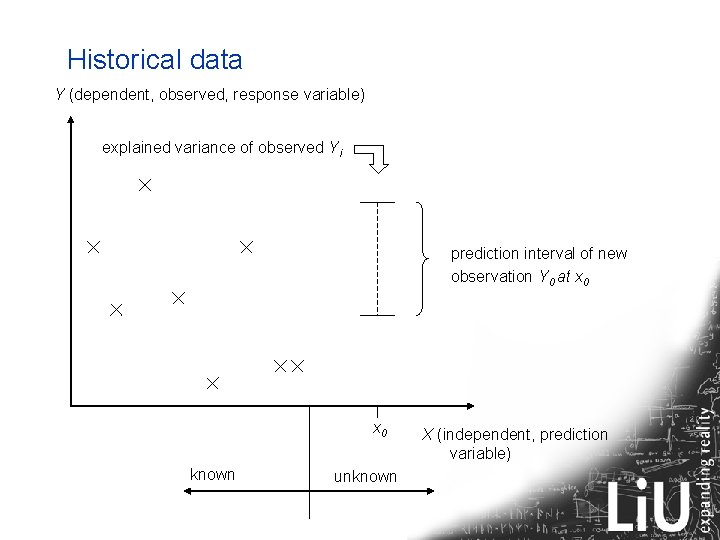

Historical data

[Figure: observed data plotted as Y (dependent, observed, response variable) against X (independent, prediction variable). Annotations: explained variance of the observed Y_i; prediction interval of a new observation Y_0 at x_0; known and unknown regions.]
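The quantities named on this slide can be illustrated with a small sketch. It assumes simple least-squares linear regression with one predictor, which the slide does not state explicitly; the function names and the sample data are hypothetical. The Student-t critical value is passed in by the caller (2.306 is the two-sided 95% value for 8 degrees of freedom) rather than computed.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def residual_sum_of_squares(xs, ys, intercept, slope):
    """Sum of squared differences between observed and fitted Y_i."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


def explained_variance(xs, ys):
    """R^2: share of the variance of the observed Y_i explained by the line."""
    intercept, slope = fit_line(xs, ys)
    y_bar = sum(ys) / len(ys)
    sse = residual_sum_of_squares(xs, ys, intercept, slope)
    sst = sum((y - y_bar) ** 2 for y in ys)
    return 1.0 - sse / sst


def prediction_interval(xs, ys, x0, t_crit):
    """Interval for a new observation Y_0 at x_0.

    t_crit is the Student-t critical value for n - 2 degrees of freedom,
    supplied by the caller (for example from a table).
    """
    n = len(xs)
    intercept, slope = fit_line(xs, ys)
    x_bar = sum(xs) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sse = residual_sum_of_squares(xs, ys, intercept, slope)
    s = math.sqrt(sse / (n - 2))  # residual standard error
    half_width = t_crit * s * math.sqrt(1 + 1 / n + (x0 - x_bar) ** 2 / sxx)
    y0_hat = intercept + slope * x0
    return y0_hat - half_width, y0_hat + half_width


if __name__ == "__main__":
    # Made-up historical data, for illustration only.
    xs = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
    ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1, 18.0, 19.9]
    print("explained variance:", explained_variance(xs, ys))
    print("95% interval at x0 = 12:", prediction_interval(xs, ys, 12, 2.306))
```

The interval widens as x_0 moves away from the mean of the historical X values, which is why predictions far outside the observed range deserve extra caution.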

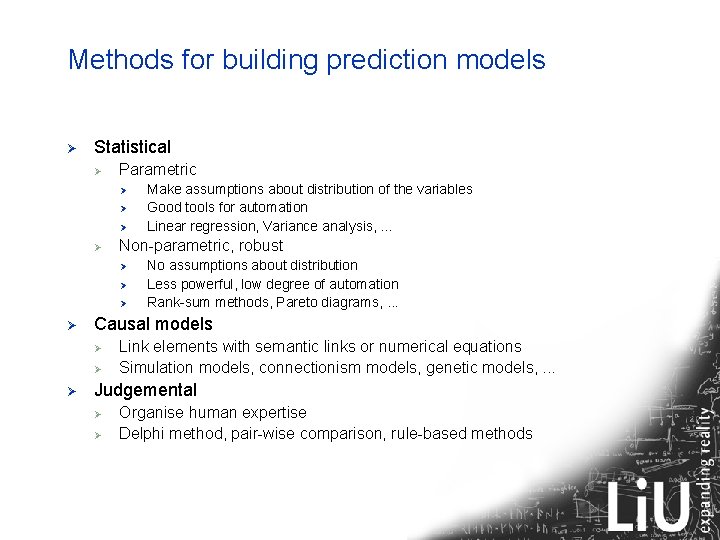

Methods for building prediction models

- Statistical
  - Parametric
    - Make assumptions about distribution of the variables
    - Good tools for automation
    - Linear regression, variance analysis, ...
  - Non-parametric, robust
    - No assumptions about distribution
    - Less powerful, low degree of automation
    - Rank-sum methods, Pareto diagrams, ...
- Causal models
  - Link elements with semantic links or numerical equations
  - Simulation models, connectionism models, genetic models, ...
- Judgemental
  - Organise human expertise
  - Delphi method, pair-wise comparison, rule-based methods
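As one illustration of the non-parametric family, the sketch below computes a rank-sum (Mann-Whitney U) statistic for two samples. It uses only ranks, so it makes no assumption about the distribution of the values. The function names are hypothetical, and the sketch stops at the statistic itself; turning U into a p-value needs a table or a statistics library.

```python
def midranks(values):
    """Rank values from 1 to n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j to the end of the run of values tied with position i.
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    return ranks


def rank_sum_u(sample_a, sample_b):
    """Return (U_a, U_b), the Mann-Whitney U statistics of the two samples."""
    combined = list(sample_a) + list(sample_b)
    ranks = midranks(combined)
    n_a, n_b = len(sample_a), len(sample_b)
    rank_sum_a = sum(ranks[:n_a])
    u_a = rank_sum_a - n_a * (n_a + 1) / 2
    u_b = n_a * n_b - u_a
    return u_a, u_b


if __name__ == "__main__":
    # Made-up metric values for modules without and with reported faults.
    fault_free = [3, 5, 4, 6, 2]
    faulty = [9, 7, 8, 6, 10]
    print(rank_sum_u(fault_free, faulty))
```

A U value close to 0 for one sample means its values almost always rank below those of the other sample, which would suggest the metric separates the two groups.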

Common SE-predictions

- Detecting fault-prone modules
- Project effort estimation
- Change impact analysis
- Ripple effect analysis
- Process improvement models
- Model checking
- Consistency checking

Introduction

- There are many faults in software
- Faults are costly to find and repair
- The later we find faults, the more costly they are
- We want to find faults early
- We want to have automated ways of finding faults

Our approach

- Automatic measurements on models
- Use metrics to predict fault-prone modules

Related work

- Niclas Ohlsson, Ph.D. work, 1993
  - AXE, fault prediction, introduced Pareto diagrams
  - Predictor: number of new and changed signals
- Lionel Briand, Khaled El Emam, et al.
  - Numerous contributions exploring relations between fault-proneness and object-oriented metrics
- Piotr Tomaszewski, Ph.D., Karlskrona, 2006
  - Studies fault density
  - Comparison of statistical methods and expert judgement
- Jeanette Heidenberg, Andreas Nåls
  - Discover weak design and propose changes

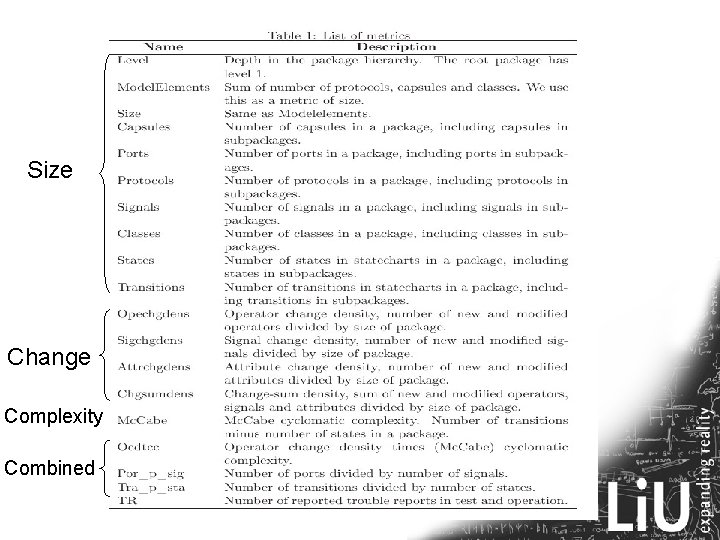

Approach

- Find metrics (independent variables)
  - Number of model elements (size)
  - Number of changed methods (change)
  - Transitions per state (complexity)
  - Changed operations * transitions per state (combinations)
  - ...
- Use metrics to predict (dependent variable)
  - Number of TRs
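The four metric categories above could be computed per module roughly as follows. This is a minimal sketch: the `ModuleCounts` record, its field names, and the choice to report 0.0 for a module without states are assumptions made for illustration, not the project's actual scripts.

```python
from dataclasses import dataclass


@dataclass
class ModuleCounts:
    """Raw counts for one module (hypothetical record layout)."""
    name: str
    model_elements: int
    changed_methods: int
    changed_operations: int
    states: int
    transitions: int


def transitions_per_state(module):
    """Complexity metric; defined as 0.0 for a module without states."""
    if module.states == 0:
        return 0.0
    return module.transitions / module.states


def metric_row(module):
    """Independent variables for one module, keyed by metric category."""
    complexity = transitions_per_state(module)
    return {
        "size": module.model_elements,
        "change": module.changed_methods,
        "complexity": complexity,
        "combined": module.changed_operations * complexity,
    }


if __name__ == "__main__":
    # Made-up counts, for illustration only.
    example = ModuleCounts("example_capsule", 120, 4, 5, 4, 12)
    print(metric_row(example))
```

Each row would then be paired with the number of TRs recorded for the same module, which serves as the dependent variable.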

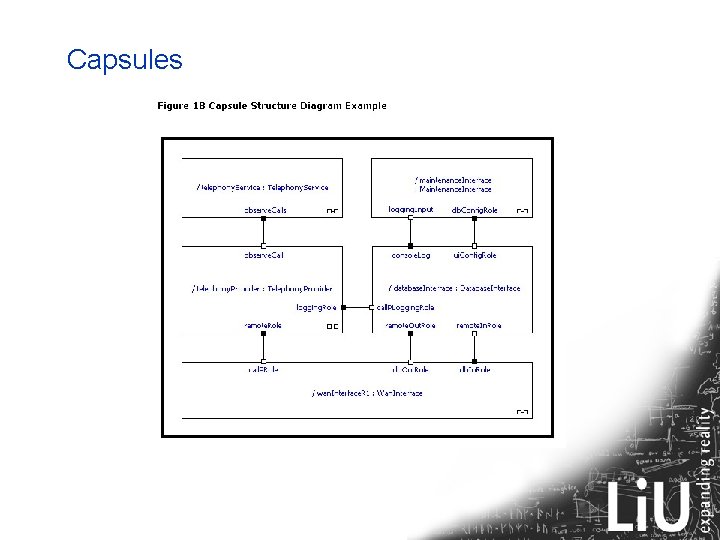

Capsules

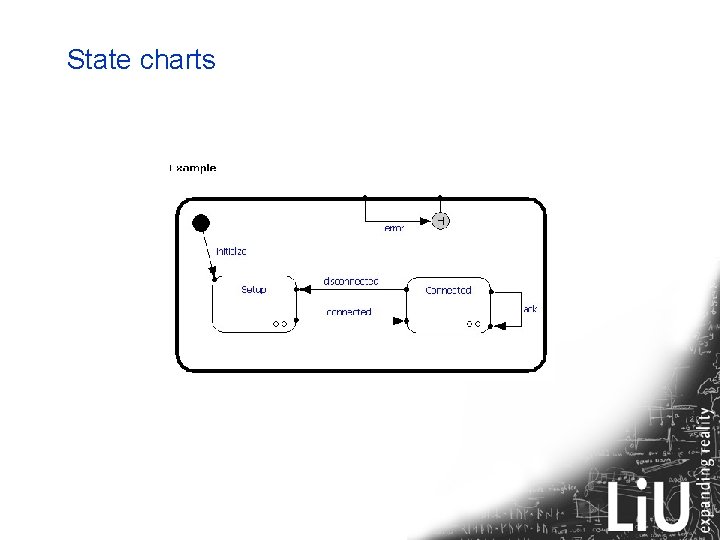

State charts

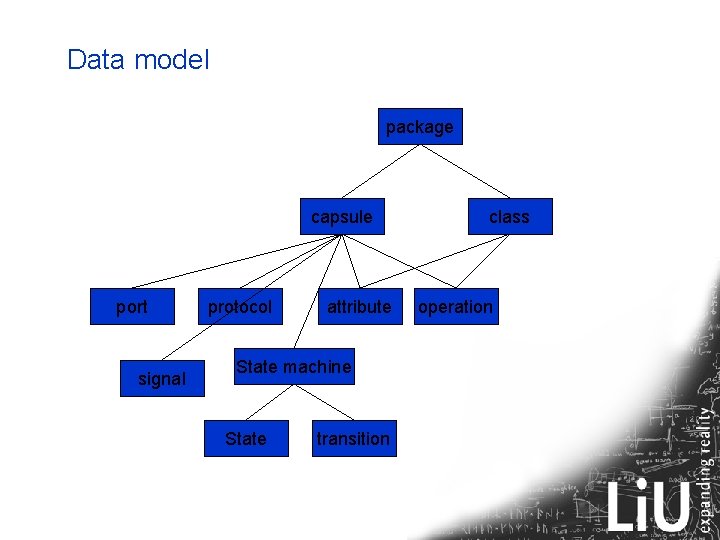

Data model

[Figure: data model relating package, capsule, port, signal, protocol, attribute, state machine, state, transition, class, operation.]

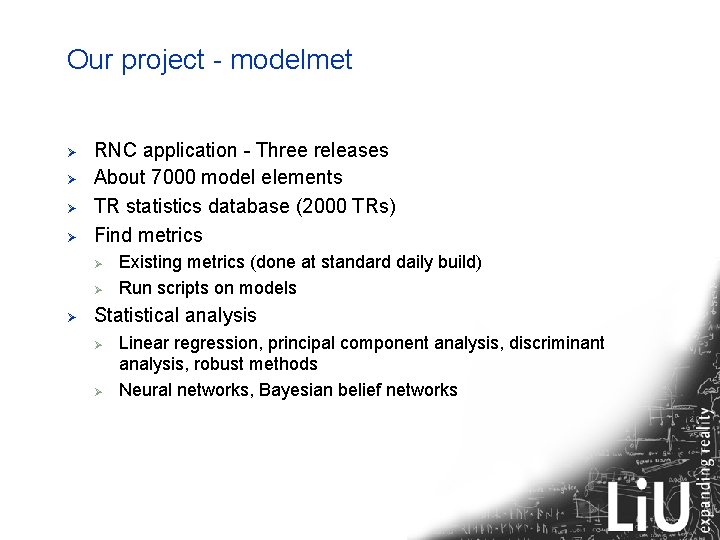

Our project - modelmet

- RNC application
  - Three releases
  - About 7000 model elements
- TR statistics database (2000 TRs)
- Find metrics
  - Existing metrics (done at standard daily build)
  - Run scripts on models
- Statistical analysis
  - Linear regression, principal component analysis, discriminant analysis, robust methods
  - Neural networks, Bayesian belief networks

Metric categories: size, change, complexity, combined

Metrics based on change, system A

Metrics based on change, system B

Complexity and size metrics, system A

Complexity and Size metrics, system B

Other metrics, system A

TRD = C + 0.034 states - 0.9 modeleleme

Other metrics, system B

How to use predictions

- Uneven distribution of faults is common (80/20 rule)
- Perform special treatment on selected parts
  - Select experienced designers
  - Provide good working conditions
  - Parallel teams
  - Inspections
  - Static and dynamic analysis tools
  - ...
- Perform root-cause analysis and make corrections
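The selection step can be sketched as a Pareto-style ranking: sort modules by predicted fault count, take the top fraction, and check what share of all predicted faults they cover. The function names, the 20% default, and the sample numbers are assumptions for illustration.

```python
def select_for_special_treatment(predicted_faults, fraction=0.2):
    """Names of the modules with the highest predicted fault counts.

    predicted_faults maps module name to predicted number of faults.
    The number selected is fraction * module count, rounded to the
    nearest integer, with at least one module.
    """
    ranked = sorted(predicted_faults, key=predicted_faults.get, reverse=True)
    count = max(1, round(fraction * len(ranked)))
    return ranked[:count]


def fault_share(predicted_faults, selected):
    """Share of all predicted faults that falls in the selected modules."""
    total = sum(predicted_faults.values())
    if total == 0:
        return 0.0
    return sum(predicted_faults[name] for name in selected) / total


if __name__ == "__main__":
    # Made-up predictions, for illustration only.
    predictions = {"a": 50, "b": 30, "c": 10, "d": 5, "e": 5}
    chosen = select_for_special_treatment(predictions, fraction=0.4)
    print(chosen, fault_share(predictions, chosen))
```

If a small fraction of modules covers most of the predicted faults, concentrating inspections and experienced designers on those modules is likely to pay off.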

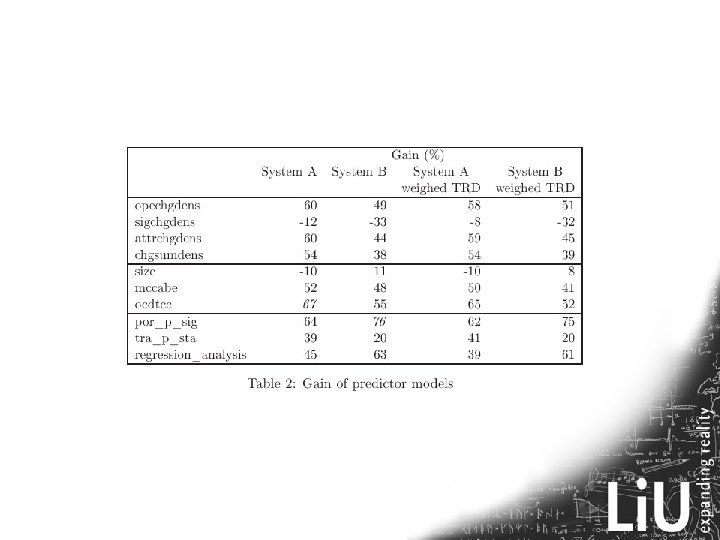

Results

Contributions:

- Valid statistical material:
  - Large models, large number of TRs
  - Two change projects
- Two highly explanatory predictors were found
- State chart metrics are as good as OO metrics

Problems:

- Some difficulty matching modules in the models to TRs
- Effort needed to collect change data
