Practices of Integrating Parallel and Distributed Computing Topics

Practices of Integrating Parallel and Distributed Computing Topics into CS Curriculum at UESTC
Guoming Lu
University of Electronic Science and Technology of China

Introduction to UESTC
• Two majors in the Department of Computer Science and Engineering
  o Information Security (200+ students/year)
  o Computer Science and Technology (300+ students/year)
• Computer Science
• Computer Engineering

Project Goal
• Restructure the department curriculum to fully cover parallel and distributed computing in both breadth and depth
  o Fundamental concepts
  o Full framework of parallel and distributed computing
• Sufficient practical exposure and hands-on development experience
• Integration of PDC topics at an early stage of the curriculum
• Think in parallel

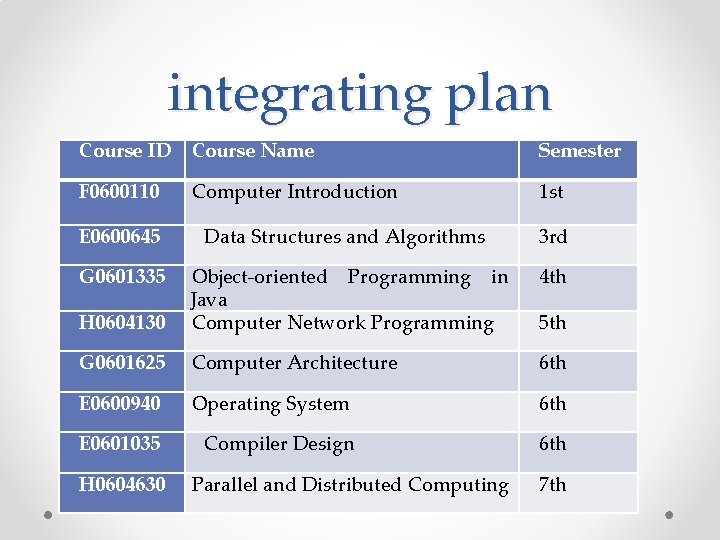

Integration Plan

| Course ID | Course Name | Semester |
| --- | --- | --- |
| F 0600110 | Computer Introduction | 1st |
| E 0600645 | Data Structures and Algorithms | 3rd |
| G 0601335 | Object-oriented Programming in Java | 4th |
| H 0604130 | Computer Network Programming | 5th |
| G 0601625 | Computer Architecture | 6th |
| E 0600940 | Operating System | 6th |
| E 0601035 | Compiler Design | 6th |
| H 0604630 | Parallel and Distributed Computing | 7th |

First Step of Integration, Fall 2013
• Object-oriented Programming in Java (G 0601335, core)
  o Java threads
• Computer Network Programming (H 0604130, elective)
  o Pthreads
• Computer Architecture (G 0601625, core)
  o Most architecture topics
• Parallel and Distributed Computing (H 0604630, core)
  o Algorithms and programming topics
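To make the "Java threads" topic concrete, here is a minimal sketch of thread spawning and joining. It is not taken from the course material: the class name `ThreadedPi`, the method `estimate`, and the choice of a midpoint-rule estimate of pi as the workload are all assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical example class; not taken from the course material.
class ThreadedPi {
    // Midpoint-rule estimate of pi as the integral of 4/(1+x^2) over [0,1].
    // The `steps` slices are divided among `numThreads` worker threads.
    static double estimate(int steps, int numThreads) {
        double[] partial = new double[numThreads]; // one slot per thread, so no locking is needed
        List<Thread> threads = new ArrayList<>();
        double width = 1.0 / steps;
        for (int t = 0; t < numThreads; t++) {
            final int id = t;
            Thread worker = new Thread(() -> {
                double sum = 0.0;
                // Cyclic distribution: thread `id` handles slices id, id+numThreads, ...
                for (int i = id; i < steps; i += numThreads) {
                    double x = (i + 0.5) * width;
                    sum += 4.0 / (1.0 + x * x);
                }
                partial[id] = sum * width;
            });
            threads.add(worker);
            worker.start();
        }
        double pi = 0.0;
        for (int t = 0; t < numThreads; t++) {
            try {
                threads.get(t).join(); // after join, partial[t] is visible to this thread
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while joining", e);
            }
            pi += partial[t];
        }
        return pi;
    }

    public static void main(String[] args) {
        System.out.println(estimate(1_000_000, 4));
    }
}
```

Giving each thread its own slot in `partial` avoids shared mutable state, so the only synchronization point is `join`. Timing `estimate` with 1, 2, and 4 threads is one simple way to observe speedup.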

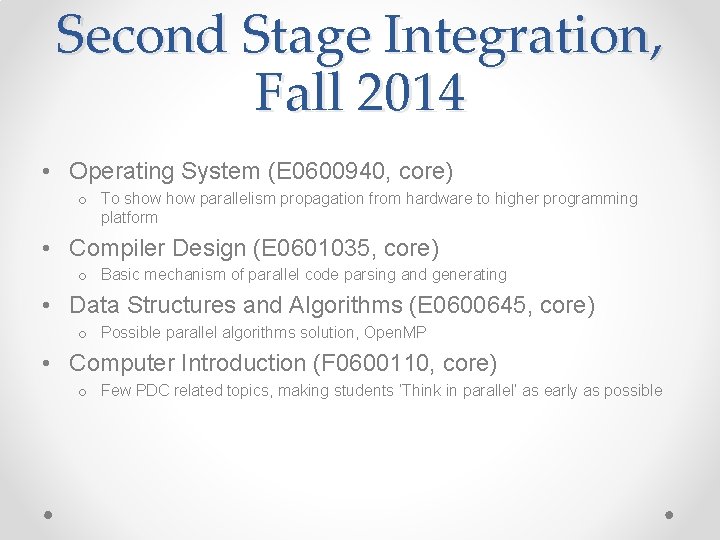

Second Stage of Integration, Fall 2014
• Operating System (E 0600940, core)
  o Show how parallelism propagates from hardware up to higher-level programming platforms
• Compiler Design (E 0601035, core)
  o Basic mechanisms of parsing and generating parallel code
• Data Structures and Algorithms (E 0600645, core)
  o Possible parallel algorithm solutions, OpenMP
• Computer Introduction (F 0600110, core)
  o A few PDC-related topics, to make students ‘think in parallel’ as early as possible
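As an illustration of a parallel algorithm solution of the kind a Data Structures and Algorithms course might discuss, the following sketch parallelizes a divide-and-conquer merge sort. The course itself names OpenMP; this sketch swaps in Java's fork/join framework instead, and the class name `ParallelMergeSort` and the `CUTOFF` value are assumptions, not course material.

```java
import java.util.Arrays;
import java.util.Random;
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveAction;

// Hypothetical illustration: two-way merge sort parallelized with Java fork/join.
class ParallelMergeSort {
    static final int CUTOFF = 4096; // assumed threshold; smaller ranges are sorted sequentially

    static final class SortTask extends RecursiveAction {
        private final int[] data;
        private final int[] scratch;
        private final int lo; // inclusive
        private final int hi; // exclusive

        SortTask(int[] data, int[] scratch, int lo, int hi) {
            this.data = data;
            this.scratch = scratch;
            this.lo = lo;
            this.hi = hi;
        }

        @Override
        protected void compute() {
            if (hi - lo <= CUTOFF) {
                Arrays.sort(data, lo, hi); // sequential base case
                return;
            }
            int mid = (lo + hi) >>> 1;
            // Sort both halves as parallel subtasks and wait for both to finish.
            invokeAll(new SortTask(data, scratch, lo, mid),
                      new SortTask(data, scratch, mid, hi));
            merge(mid);
        }

        // Merge the sorted halves [lo, mid) and [mid, hi) back into data.
        private void merge(int mid) {
            System.arraycopy(data, lo, scratch, lo, hi - lo);
            int i = lo, j = mid, k = lo;
            while (i < mid && j < hi) {
                data[k++] = (scratch[i] <= scratch[j]) ? scratch[i++] : scratch[j++];
            }
            while (i < mid) data[k++] = scratch[i++];
            while (j < hi) data[k++] = scratch[j++];
        }
    }

    static void sort(int[] data) {
        int[] scratch = new int[data.length];
        ForkJoinPool.commonPool().invoke(new SortTask(data, scratch, 0, data.length));
    }

    static boolean isSorted(int[] a) {
        for (int i = 1; i < a.length; i++) {
            if (a[i - 1] > a[i]) return false;
        }
        return true;
    }

    public static void main(String[] args) {
        int[] a = new Random(42).ints(100_000, 0, 1_000_000).toArray();
        sort(a);
        System.out.println("sorted: " + isSorted(a));
    }
}
```

The two recursive calls work on disjoint ranges of `data` and `scratch`, so they need no locking; the merge runs only after both subtasks have completed.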

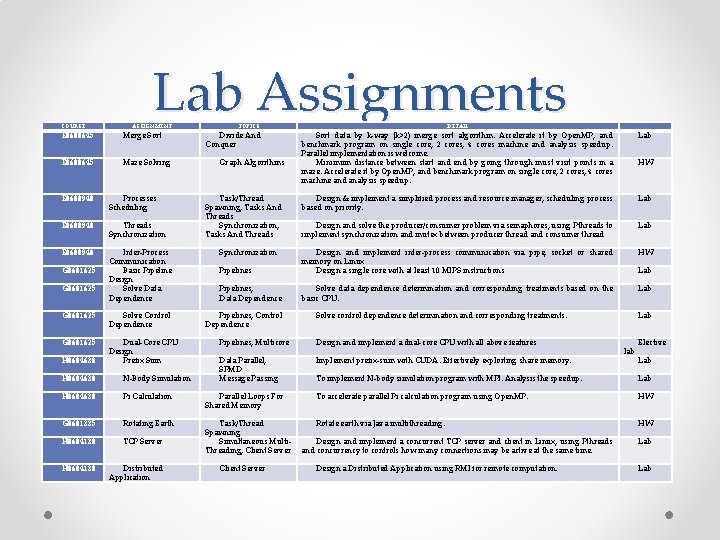

Lab Assignments

| Course | Assignment | Topics | Detail |
| --- | --- | --- | --- |
| E 0600645 | Merge Sort | Divide and Conquer | Sort data with a k-way (k>2) merge sort algorithm. Accelerate it with OpenMP, benchmark the program on 1-, 2-, and 4-core machines, and analyze the speedup. Parallel implementation is welcome. |
| E 0600645 | Maze Solving | Graph Algorithms | Find the minimum distance between the start and the end of a maze while passing through all must-visit points. Accelerate it with OpenMP, benchmark the program on 1-, 2-, and 4-core machines, and analyze the speedup. |
| E 0600940 | Processes Scheduling | Task/Thread Spawning, Tasks and Threads | Design and implement a simplified process and resource manager that schedules processes based on priority. |
| E 0600940 | Threads Synchronization | Synchronization, Tasks and Threads | Design and solve the producer/consumer problem via semaphores, using Pthreads to implement synchronization and mutual exclusion between the producer thread and the consumer thread. |
| E 0600940 | Inter-Process Communication | Synchronization | Design and implement inter-process communication via pipe, socket, or shared memory on Linux. |
| G 0601625 | Basic Pipeline Design | Pipelines | Design a single core supporting at least 10 MIPS instructions. |
| G 0601625 | Solve Data Dependence | Pipelines, Data Dependence | Implement data dependence detection and the corresponding handling on the basic CPU. |
| G 0601625 | Solve Control Dependence | Pipelines, Control Dependence | Implement control dependence detection and the corresponding handling. |
| G 0601625 | Dual-Core CPU Design | Pipelines, Multicore | Design and implement a dual-core CPU with all of the above features. |
| H 0604630 | Prefix Sum | Data Parallel, SPMD | Implement prefix sum with CUDA, exploiting shared memory effectively. |
| H 0604630 | N-Body Simulation | Message Passing | Implement an N-body simulation program with MPI. Analyze the speedup. |
| H 0604630 | Pi Calculation | Parallel Loops for Shared Memory | Accelerate a parallel Pi calculation program using OpenMP. |
| G 0601335 | Rotating Earth | Task/Thread Spawning | Rotate the earth via Java multithreading. |
| H 0604130 | TCP Server | Simultaneous Multithreading, Client Server | Design and implement a concurrent TCP server and client on Linux, using Pthreads and concurrency control to limit how many connections may be active at the same time. |
| H 0604130 | Distributed Application | Client Server | Design a distributed application using RMI for remote computation. |

Each assignment is marked as Lab, HW (homework), or Elective Lab.
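The producer/consumer assignment lends itself to a short sketch. The lab itself uses Pthreads; the version below swaps in Java's `java.util.concurrent.Semaphore` to show the same three-semaphore pattern. The class `BoundedBuffer` and its methods are hypothetical names chosen for illustration, not part of the lab handout.

```java
import java.util.concurrent.Semaphore;

// Hypothetical bounded buffer guarded by counting semaphores.
class BoundedBuffer {
    private final int[] slots;
    private int head = 0; // next slot to read
    private int tail = 0; // next slot to write
    private final Semaphore empty;                    // counts free slots
    private final Semaphore full = new Semaphore(0);  // counts filled slots
    private final Semaphore mutex = new Semaphore(1); // binary semaphore protecting head/tail

    BoundedBuffer(int capacity) {
        slots = new int[capacity];
        empty = new Semaphore(capacity);
    }

    void put(int item) {
        empty.acquireUninterruptibly(); // wait for a free slot
        mutex.acquireUninterruptibly(); // enter critical section
        slots[tail] = item;
        tail = (tail + 1) % slots.length;
        mutex.release();
        full.release();                 // signal one more filled slot
    }

    int take() {
        full.acquireUninterruptibly();  // wait for a filled slot
        mutex.acquireUninterruptibly(); // enter critical section
        int item = slots[head];
        head = (head + 1) % slots.length;
        mutex.release();
        empty.release();                // signal one more free slot
        return item;
    }

    // Runs one producer sending 1..count and one consumer; returns the sum the consumer saw.
    static long run(int capacity, int count) {
        BoundedBuffer buffer = new BoundedBuffer(capacity);
        long[] sum = new long[1];
        Thread producer = new Thread(() -> {
            for (int i = 1; i <= count; i++) buffer.put(i);
        });
        Thread consumer = new Thread(() -> {
            for (int i = 0; i < count; i++) sum[0] += buffer.take();
        });
        producer.start();
        consumer.start();
        try {
            producer.join();
            consumer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while joining", e);
        }
        return sum[0];
    }

    public static void main(String[] args) {
        System.out.println(run(4, 1000)); // sum of 1..1000 = 500500
    }
}
```

The order of acquisition matters: each thread waits on its counting semaphore before taking `mutex`, so a thread never blocks on a full or empty buffer while holding the lock.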

Conclusion
• The TCPP recommended curriculum has been integrated into eight courses; evaluation shows that the integration effort has been successful.
• Practice such as lab and homework assignments effectively improves students’ conceptual understanding and practical skills.
• Contests can significantly stimulate students’ imagination and creativity.

Future Work
• ‘General education’ is leading to a reform of the majors.
• CS and CE will merge into a single CST (Computer Science and Technology) major at UESTC.
  o Courses will be adjusted and condensed.
  o The CST curriculum is very full.
  o Parallel and Distributed Computing will become an elective course.
• Scatter TCPP PDC topics across a large number of elective courses.
• Add more upper-level courses that provide more breadth and depth of coverage of parallel and distributed computing, such as Computer Graphics and Information Security.
