Practical SAT A Tutorial on Applied Satisfiability Solving

Niklas Eén, Cadence Research Laboratories. Presented by Alan Mishchenko, UC Berkeley.

Overview
● Modern SAT solvers
  – Terminology
  – Chaff-like SAT solvers
  – Features of MiniSat
● Encoding problems to be solved by SAT
  – Minimum-area AIG synthesis
  – AIG-to-CNF conversion
  – CNF minimization
  – Incremental SAT interface
● Conclusions

Introduction SAT Terminology

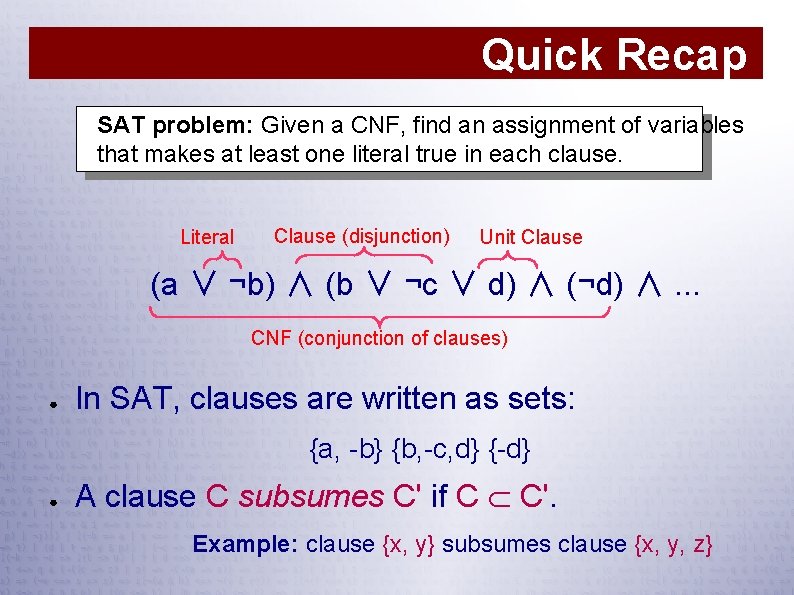

Quick Recap
SAT problem: Given a CNF, find an assignment of variables that makes at least one literal true in each clause.

Example CNF (a conjunction of clauses): (a ∨ ¬b) ∧ (b ∨ ¬c ∨ d) ∧ (¬d) ∧ ...
Here a and ¬b are literals, (a ∨ ¬b) is a clause (a disjunction of literals), and (¬d) is a unit clause.

● In SAT, clauses are written as sets: {a, -b} {b, -c, d} {-d}
● A clause C subsumes C' if C ⊆ C'. Example: clause {x, y} subsumes clause {x, y, z}

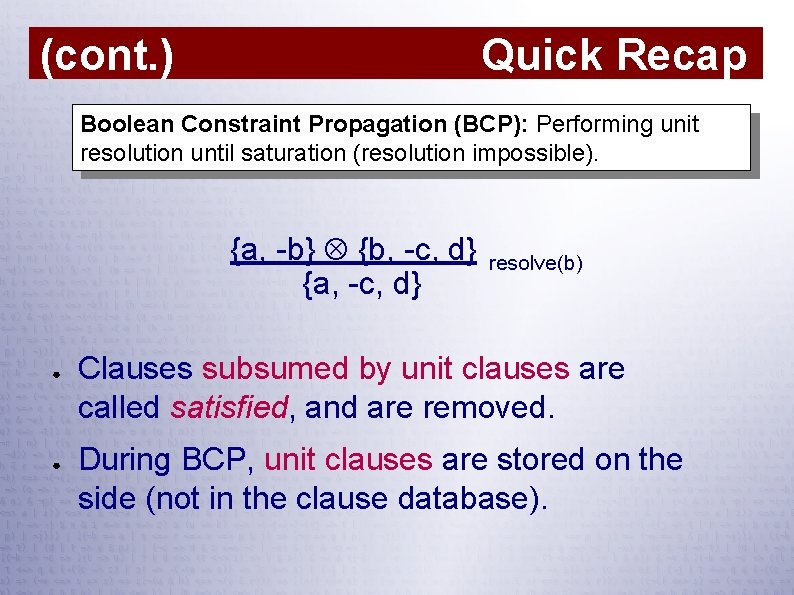

Quick Recap (cont.)
Boolean Constraint Propagation (BCP): Performing unit resolution until saturation (no further resolution is possible).

Resolution example: {a, -b} ⊗ {b, -c, d} = {a, -c, d} (resolve on b)

● Clauses subsumed by unit clauses are called satisfied, and are removed.
● During BCP, unit clauses are stored on the side (not in the clause database).

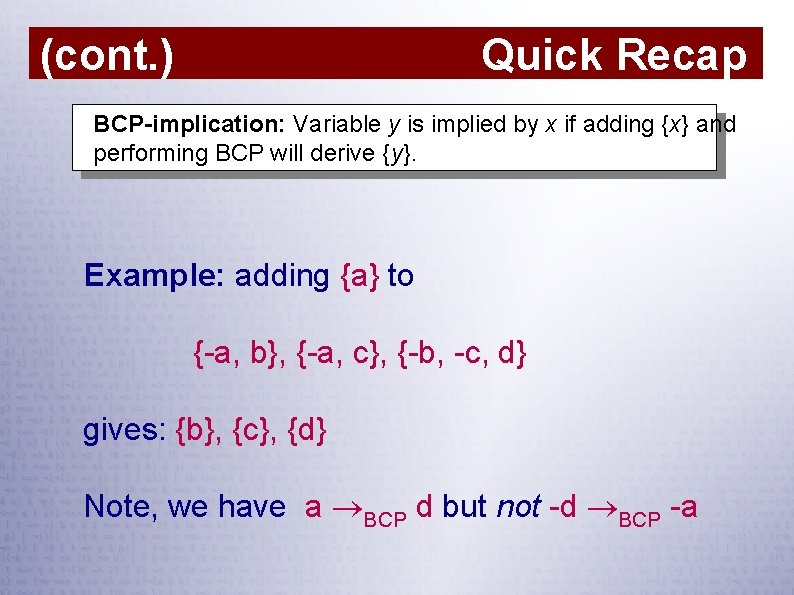

Quick Recap (cont.)
BCP-implication: Literal y is BCP-implied by x if adding {x} and performing BCP derives {y}.

Example: adding {a} to {-a, b}, {-a, c}, {-b, -c, d} gives: {b}, {c}, {d}

Note: we have a →BCP d, but not -d →BCP -a.
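The BCP-implication example can be checked with a small sketch. It is a naive propagation loop, not the watched-literal scheme used by real solvers, and it assumes literals are encoded as non-zero integers (a=1, b=2, c=3, d=4; negation is the arithmetic minus) and that the given unit literals do not contradict each other. The function name `bcp` and its signature are illustrative only.

```python
def bcp(clauses, units):
    """Naive unit propagation.

    Returns the set of literals that are true after saturation,
    or None if some clause becomes empty (conflict).
    """
    assigned = set(units)
    changed = True
    while changed:
        changed = False
        for clause in clauses:
            if any(lit in assigned for lit in clause):
                continue  # clause is satisfied
            # literals that are not yet falsified
            open_lits = [lit for lit in clause if -lit not in assigned]
            if not open_lits:
                return None  # every literal is false: conflict
            if len(open_lits) == 1:
                assigned.add(open_lits[0])  # unit clause: propagate
                changed = True
    return assigned


# a=1, b=2, c=3, d=4
clauses = [[-1, 2], [-1, 3], [-2, -3, 4]]
from_a = bcp(clauses, [1])        # adding {a} derives b, c and d
from_not_d = bcp(clauses, [-4])   # adding {-d} derives nothing new
```

The second call illustrates the asymmetry noted on the slide: -d logically implies -a, but BCP alone does not find it.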

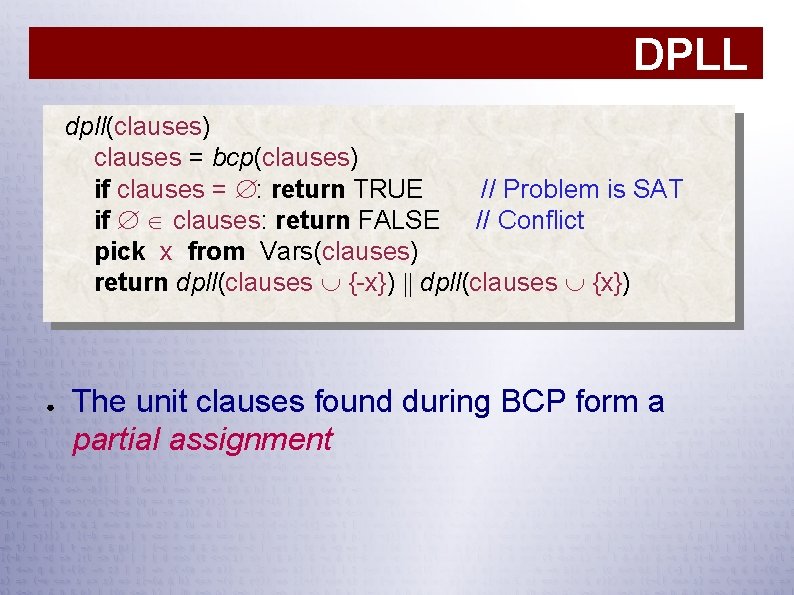

DPLL

    dpll(clauses)
        clauses = bcp(clauses)
        if clauses = ∅: return TRUE       // Problem is SAT
        if ∅ ∈ clauses: return FALSE      // Conflict (empty clause derived)
        pick x from Vars(clauses)
        return dpll(clauses ∪ {-x}) ∨ dpll(clauses ∪ {x})

● The unit clauses found during BCP form a partial assignment
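A minimal runnable sketch of the DPLL recursion, assuming integer literals and clauses stored as frozensets. The helper names (`simplify`, `bcp`) and the choice of returning the partial assignment instead of TRUE are illustrative, not part of the original slides.

```python
def simplify(clauses, lit):
    """Make lit true: drop satisfied clauses, delete the false literal -lit."""
    return [c - {-lit} for c in clauses if lit not in c]


def bcp(clauses, model):
    """Unit resolution until saturation; unit literals are appended to model."""
    while True:
        if any(len(c) == 0 for c in clauses):
            return clauses  # empty clause present: conflict
        unit = next((c for c in clauses if len(c) == 1), None)
        if unit is None:
            return clauses
        (lit,) = unit
        model.append(lit)
        clauses = simplify(clauses, lit)


def dpll(clauses, model=()):
    """Return a (partial) satisfying assignment as a list of literals, or None."""
    model = list(model)
    clauses = bcp([frozenset(c) for c in clauses], model)
    if not clauses:
        return model                      # problem is SAT
    if any(len(c) == 0 for c in clauses):
        return None                       # conflict
    x = abs(next(iter(clauses[0])))       # pick x from Vars(clauses)
    for lit in (-x, x):                   # branch: add {-x}, then {x}
        result = dpll(clauses + [frozenset([lit])], model)
        if result is not None:
            return result
    return None


cnf = [[1, -2], [2, -3, 4], [-4]]         # (a ∨ ¬b) ∧ (b ∨ ¬c ∨ d) ∧ (¬d)
model = dpll(cnf)
unsat = dpll([[1, 2], [1, -2], [-1, 2], [-1, -2]])
```

The returned list is exactly the set of unit clauses found during BCP along the successful branch, which is why it may leave some variables unassigned.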

Modern SAT Solvers Chaff-like SAT solvers

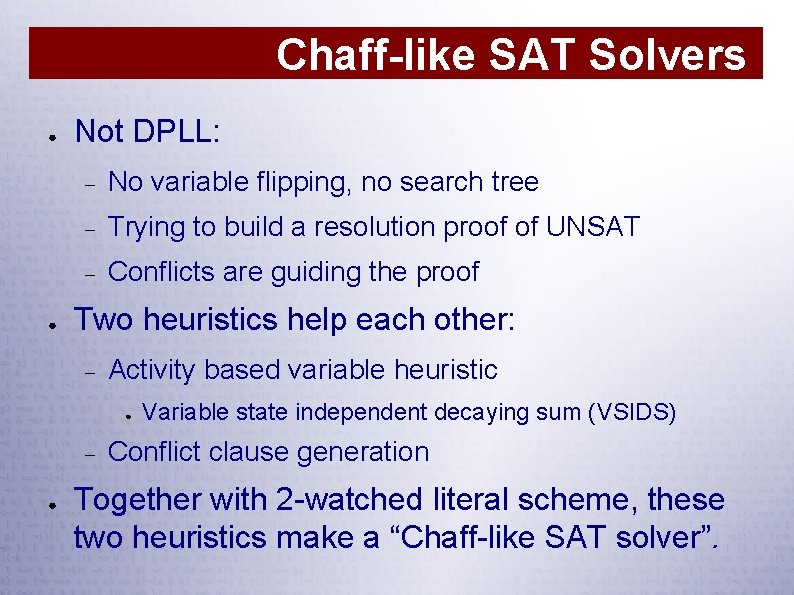

Chaff-like SAT Solvers
● Not DPLL: No variable flipping, no search tree
● Trying to build a resolution proof of UNSAT
● Conflicts are guiding the proof
● Two heuristics help each other:
  – Activity-based variable heuristic: Variable State Independent Decaying Sum (VSIDS)
  – Conflict clause generation

Together with the 2-watched-literal scheme, these two heuristics make a “Chaff-like SAT solver”.

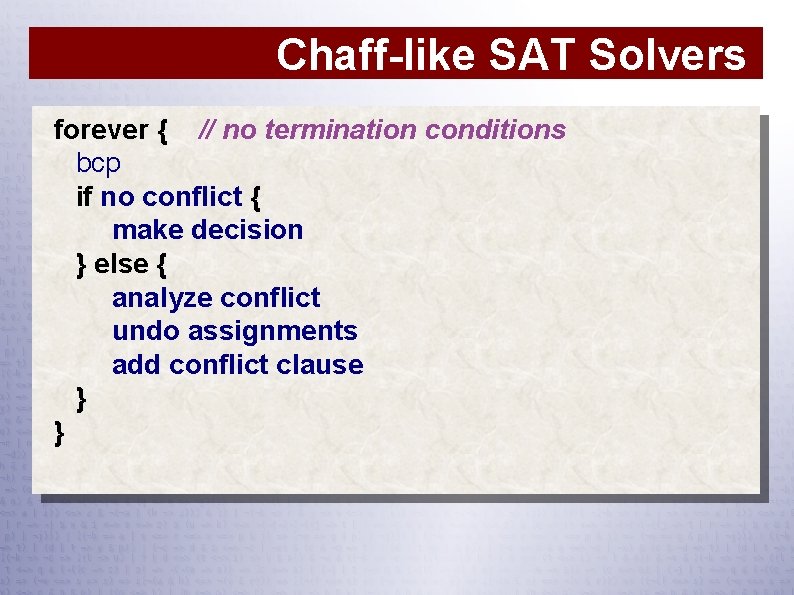

Chaff-like SAT Solvers

    forever {                      // no termination conditions
        bcp
        if no conflict {
            make decision
        } else {
            analyze conflict
            undo assignments
            add conflict clause
        }
    }

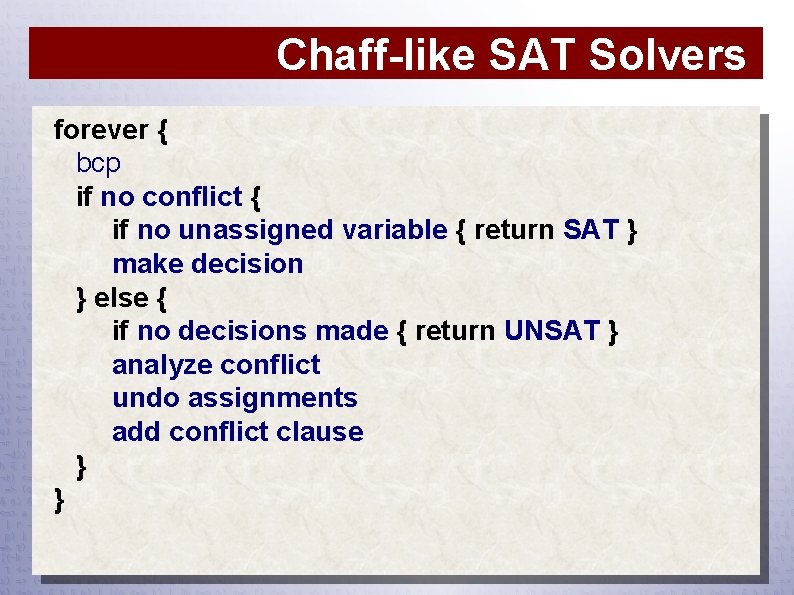

Chaff-like SAT Solvers

    forever {
        bcp
        if no conflict {
            if no unassigned variable { return SAT }
            make decision
        } else {
            if no decisions made { return UNSAT }
            analyze conflict
            undo assignments
            add conflict clause
        }
    }

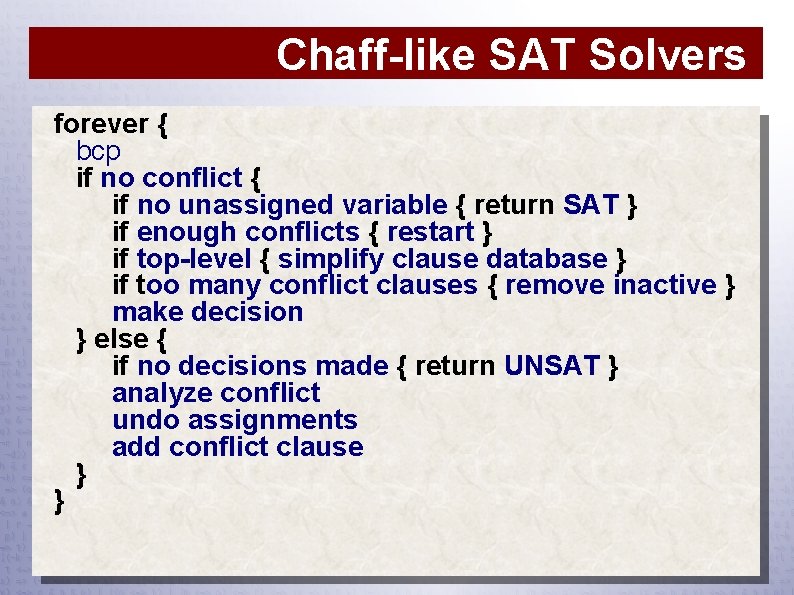

Chaff-like SAT Solvers

    forever {
        bcp
        if no conflict {
            if no unassigned variable { return SAT }
            if enough conflicts { restart }
            if top-level { simplify clause database }
            if too many conflict clauses { remove inactive }
            make decision
        } else {
            if no decisions made { return UNSAT }
            analyze conflict
            undo assignments
            add conflict clause
        }
    }

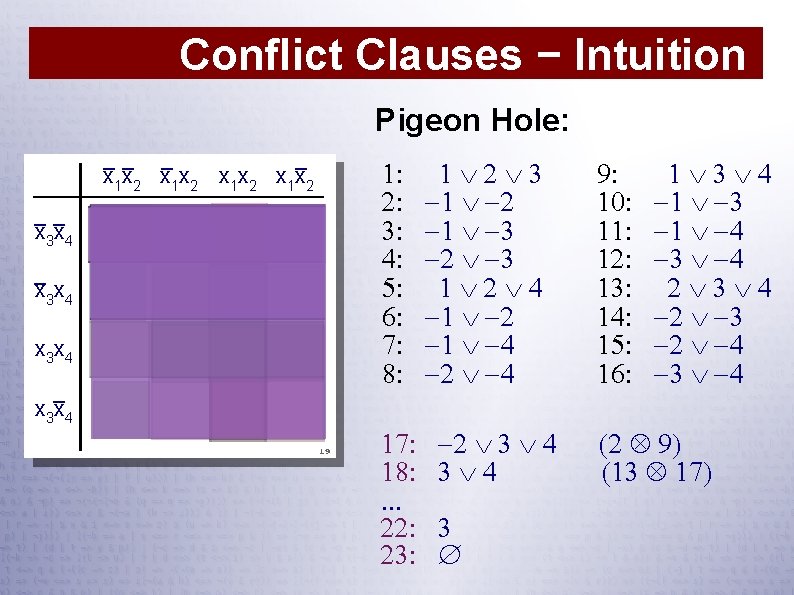

Conflict Clauses − Intuition
[Figure: pigeon-hole example over variables x1..x4, with numbered clauses 1 to 23 and the conflict clauses derived from them]

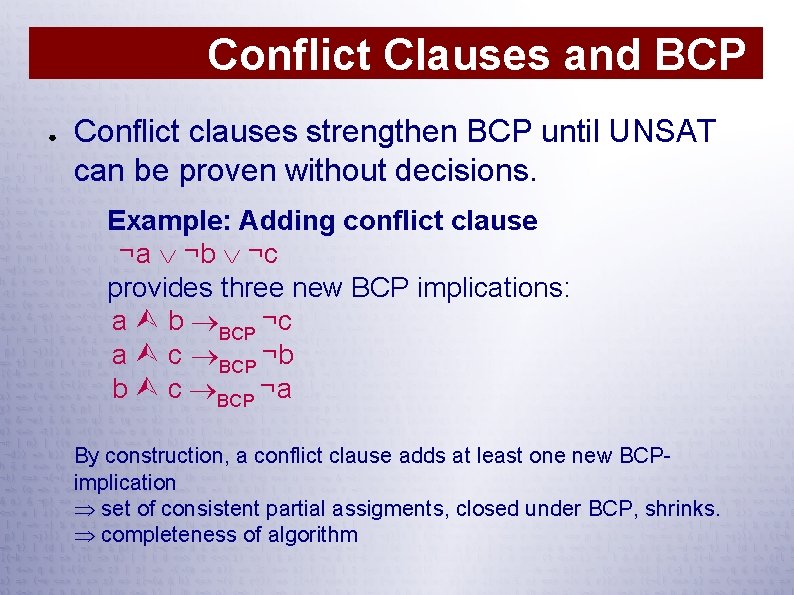

Conflict Clauses and BCP
● Conflict clauses strengthen BCP until UNSAT can be proven without decisions.

Example: Adding conflict clause ¬a ∨ ¬b ∨ ¬c provides three new BCP implications:
  a ∧ b →BCP ¬c
  a ∧ c →BCP ¬b
  b ∧ c →BCP ¬a

● By construction, a conflict clause adds at least one new BCP-implication ⇒ the set of consistent partial assignments, closed under BCP, shrinks ⇒ completeness of the algorithm.

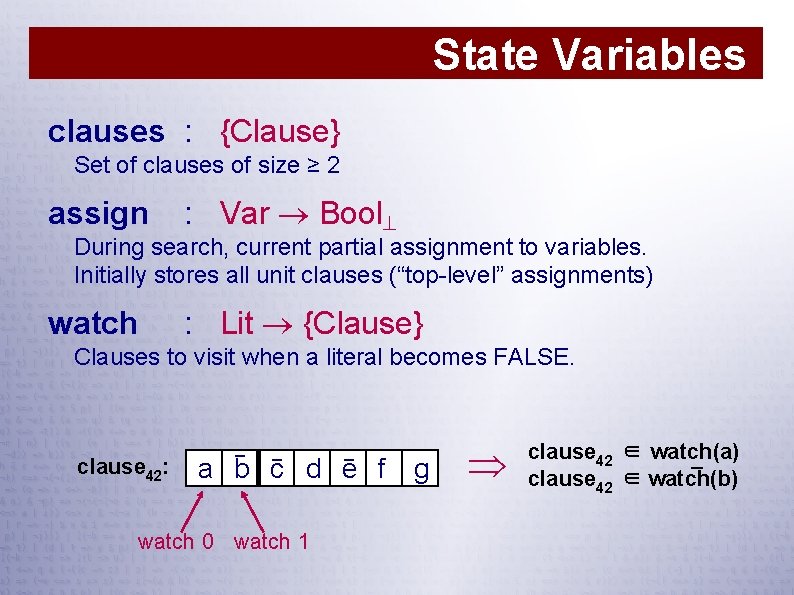

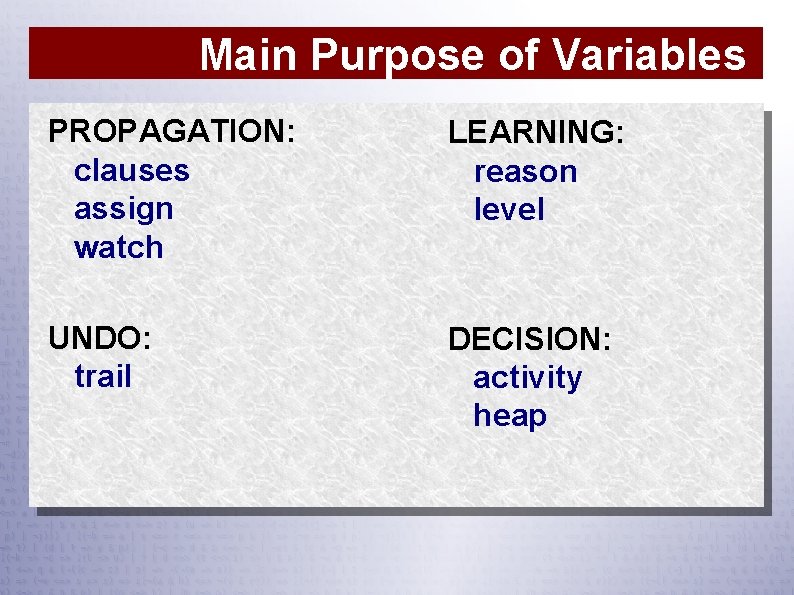

State Variables
● clauses : {Clause}. Set of clauses of size ≥ 2.
● assign : Var → Bool. During search, current partial assignment to variables. Initially stores all unit clauses (“top-level” assignments).
● watch : Lit → {Clause}. Clauses to visit when a literal becomes FALSE.

[Figure: clause 42 over literals a to g, with watch 0 and watch 1 on two of its literals; clause 42 appears in the watch lists of those two literals]

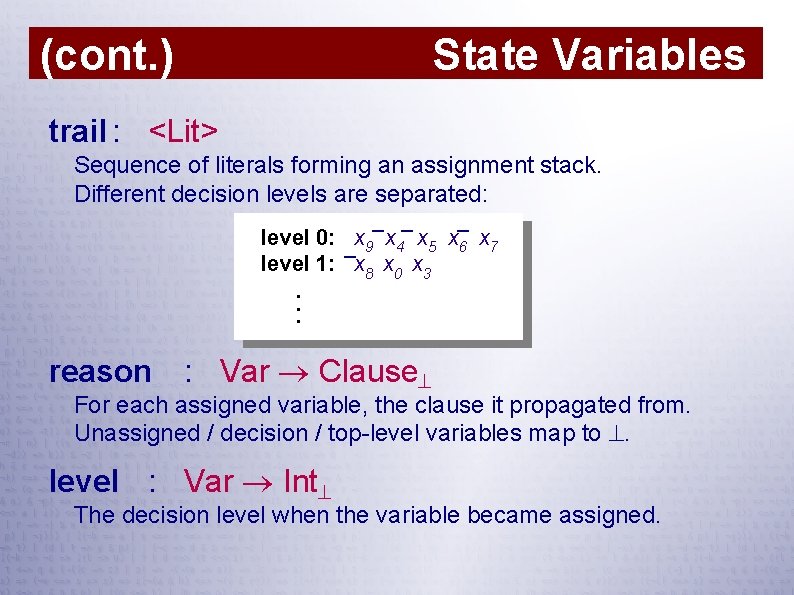

State Variables (cont.)
● trail : <Lit>. Sequence of literals forming an assignment stack. Different decision levels are separated. [Figure: level 0: x9 x4 x5 x6 x7; level 1: x8 x0 x3 ...; literal polarities not shown]
● reason : Var → Clause. For each assigned variable, the clause it propagated from. Unassigned / decision / top-level variables map to no clause (a null value).
● level : Var → Int. The decision level when the variable became assigned.

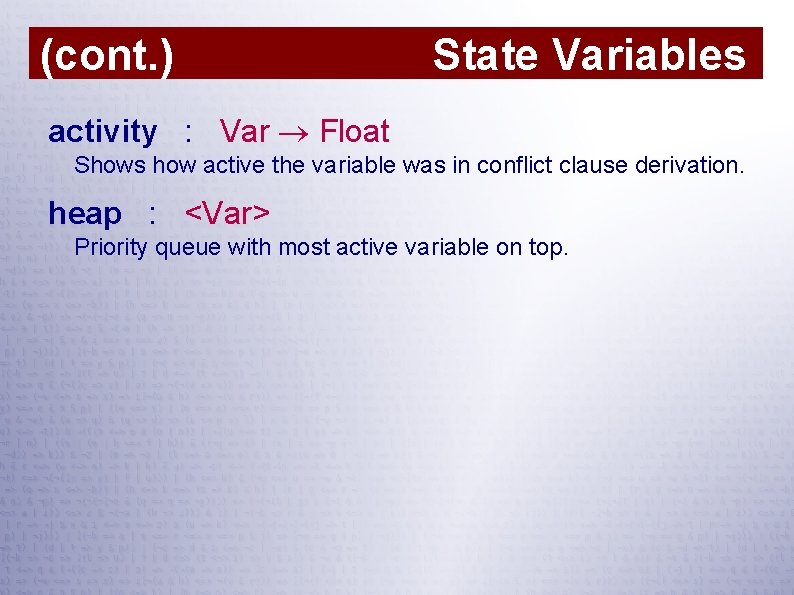

State Variables (cont.)
● activity : Var → Float. Shows how active the variable was in conflict clause derivation.
● heap : <Var>. Priority queue with most active variable on top.

Main Purpose of Variables
● PROPAGATION: clauses, assign, watch
● LEARNING: reason, level
● UNDO: trail
● DECISION: activity, heap

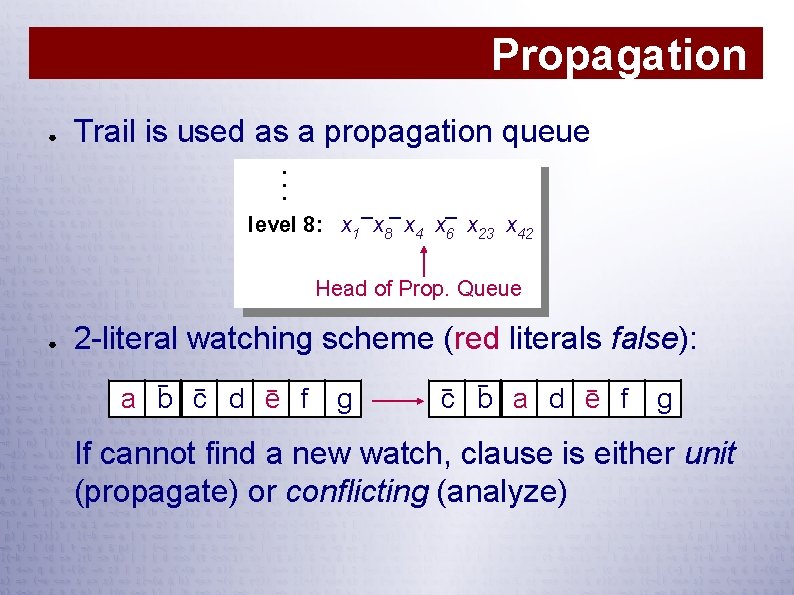

Propagation
● Trail is used as a propagation queue. [Figure: trail at level 8 (x1 x8 x4 x6 x23 x42) with a pointer marking the head of the propagation queue]
● 2-literal watching scheme. [Figure: clause over literals a to g before and after a watch is moved off a false literal]
● If a new watch cannot be found, the clause is either unit (propagate) or conflicting (analyze).
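The propagation scheme can be sketched as follows. This is a simplified illustration under stated assumptions, not MiniSat's code: literals are non-zero integers, every clause has at least two literals, the two watched literals are kept at positions 0 and 1 of each clause, and `watch[lit]` lists the clauses to visit when `lit` becomes false. The class name `Propagator` and its methods are hypothetical.

```python
from collections import defaultdict


class Propagator:
    def __init__(self, clauses):
        self.clauses = [list(c) for c in clauses]  # each of size >= 2
        self.assign = {}                           # var -> bool
        self.trail = []                            # assignment stack
        self.qhead = 0                             # head of propagation queue
        self.watch = defaultdict(list)             # lit -> clause indices
        for i, c in enumerate(self.clauses):
            self.watch[c[0]].append(i)
            self.watch[c[1]].append(i)

    def value(self, lit):
        v = self.assign.get(abs(lit))
        return None if v is None else (v == (lit > 0))

    def enqueue(self, lit):
        self.assign[abs(lit)] = lit > 0
        self.trail.append(lit)

    def propagate(self):
        """Return the index of a conflicting clause, or None."""
        while self.qhead < len(self.trail):
            false_lit = -self.trail[self.qhead]
            self.qhead += 1
            watchers, self.watch[false_lit] = self.watch[false_lit], []
            for n, ci in enumerate(watchers):
                c = self.clauses[ci]
                if c[0] == false_lit:              # keep the false watch at c[1]
                    c[0], c[1] = c[1], c[0]
                if self.value(c[0]) is True:       # clause already satisfied
                    self.watch[false_lit].append(ci)
                    continue
                for k in range(2, len(c)):         # look for a new watch
                    if self.value(c[k]) is not False:
                        c[1], c[k] = c[k], c[1]
                        self.watch[c[1]].append(ci)
                        break
                else:                              # no new watch found
                    self.watch[false_lit].append(ci)
                    if self.value(c[0]) is False:  # conflicting
                        self.watch[false_lit].extend(watchers[n + 1:])
                        return ci
                    self.enqueue(c[0])             # unit: propagate
        return None


p = Propagator([[-1, 2], [-1, 3], [-2, -3, 4]])
p.enqueue(1)
conflict = p.propagate()

q = Propagator([[-1, 2], [-1, -2]])
q.enqueue(1)
conflict_q = q.propagate()
```

Note that nothing in `propagate` needs to be undone on backtracking except the trail and `assign`; the watch lists stay valid, which is the property the later slide "Watches are not moved during backtracking" refers to.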

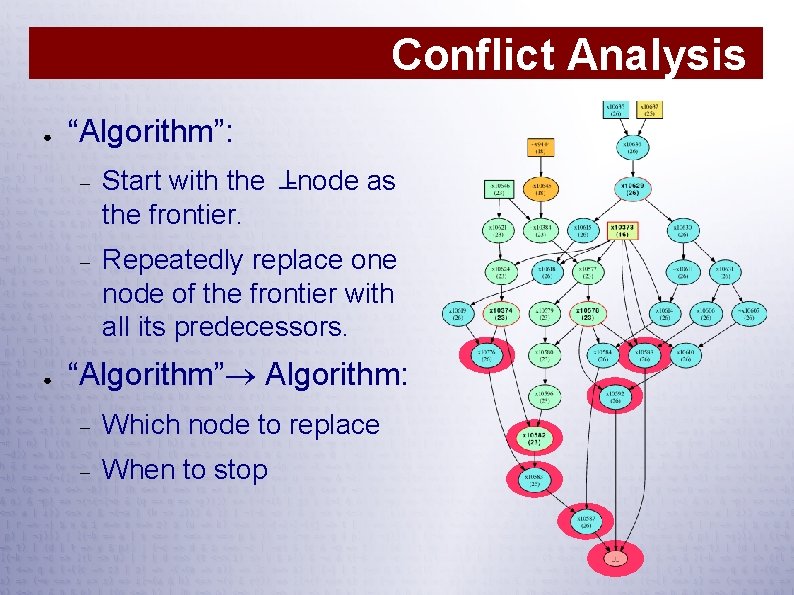

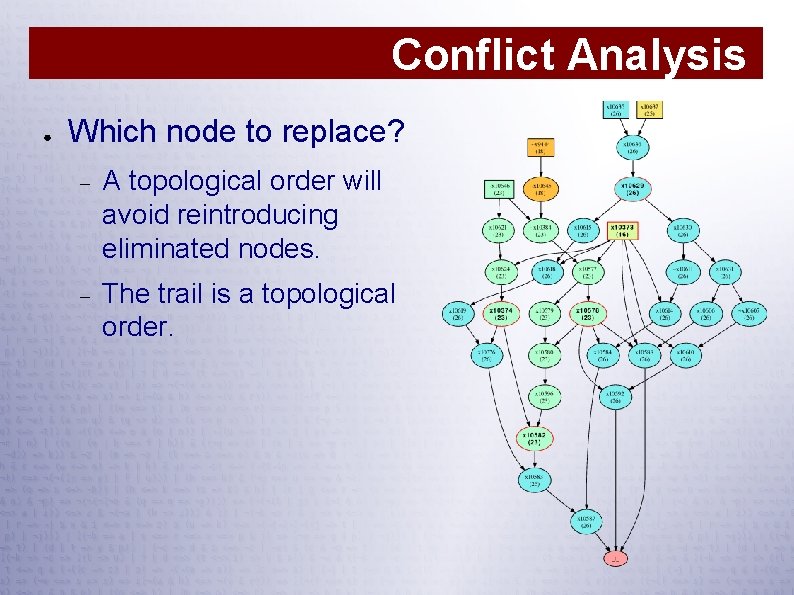

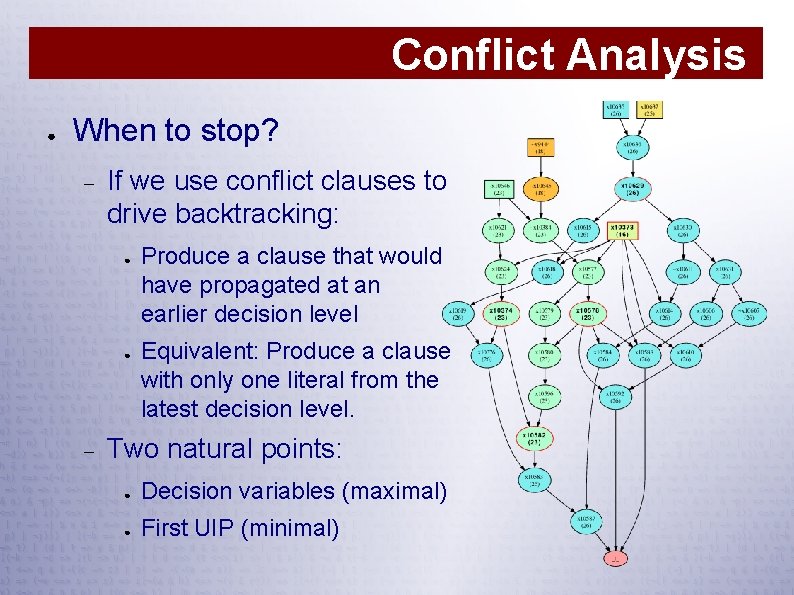

Conflict Analysis
● “Algorithm”: Start with the ⊥-node as the frontier. Repeatedly replace one node of the frontier with all its predecessors.
● To turn the “algorithm” into an algorithm, two questions must be answered:
  – Which node to replace
  – When to stop

Conflict Analysis ● Which node to replace? A topological order will avoid reintroducing eliminated nodes. The trail is a topological order.

Conflict Analysis
● When to stop? If we use conflict clauses to drive backtracking:
  – Produce a clause that would have propagated at an earlier decision level.
  – Equivalent: Produce a clause with only one literal from the latest decision level.
● Two natural points:
  – Decision variables (maximal)
  – First UIP (minimal)

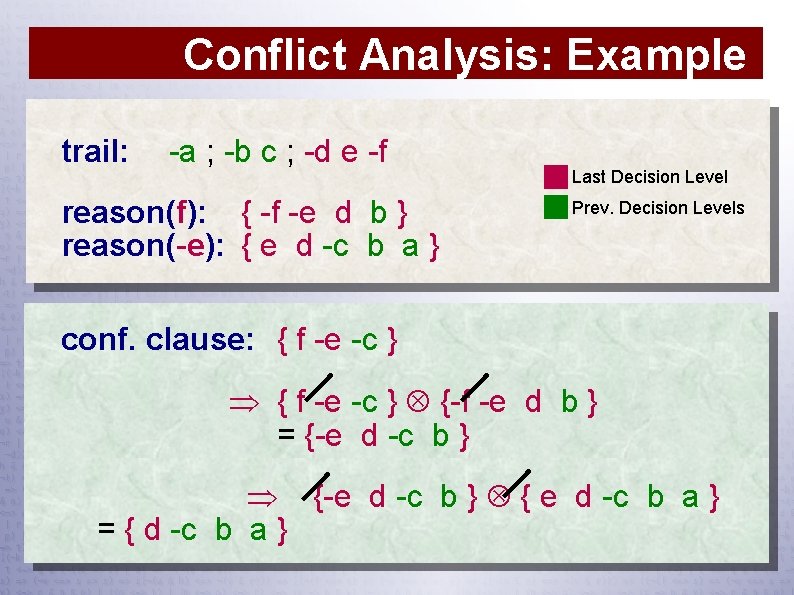

Conflict Analysis: Example
trail: -a ; -b c ; -d e -f   (";" separates decision levels; the last group is the last decision level)
reason(f): { -f -e d b }
reason(-e): { e d -c b a }
conf. clause: { f -e -c }

{ f -e -c } ⊗ { -f -e d b } = { -e d -c b }
{ -e d -c b } ⊗ { e d -c b a } = { d -c b a }
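The two resolution steps of this example can be replayed with a short sketch. It assumes literals are strings such as "-e", that `reason` and `level` are keyed by the literal as it appears on the trail, and that analysis stops as soon as the clause has only one literal from the last decision level. The function names are illustrative.

```python
def neg(lit):
    """Negate a string literal: 'e' <-> '-e'."""
    return lit[1:] if lit.startswith("-") else "-" + lit


def resolve(c1, c2, pivot):
    """Resolve c1 (containing pivot) with c2 (containing neg(pivot))."""
    return (c1 - {pivot}) | (c2 - {neg(pivot)})


def analyze(conflict, trail, reason, level):
    """Walk the trail backwards (a topological order), resolving with reasons
    until only one literal of the clause is from the last decision level."""
    last = max(level.values())
    clause = set(conflict)
    for lit in reversed(trail):
        at_last = [q for q in clause if level[neg(q)] == last]
        if len(at_last) <= 1:
            break
        if neg(lit) in clause:
            clause = resolve(clause, reason[lit], neg(lit))
    return clause


trail = ["-a", "-b", "c", "-d", "e", "-f"]
level = {"-a": 1, "-b": 2, "c": 2, "-d": 3, "e": 3, "-f": 3}
reason = {
    "-f": {"-f", "-e", "d", "b"},
    "e": {"e", "d", "-c", "b", "a"},
}
learnt = analyze({"f", "-e", "-c"}, trail, reason, level)
```

In this particular trail the first clause with a single last-level literal is { d -c b a }, whose last-level literal is the decision variable d.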

Things Worth Noting
● Conflict nests.
● Long clauses are not useless.
● Since we are not flipping variables, we can “branch” on the same variable/value twice.
● Watches are not moved during backtracking.
● Watches tend to migrate to the “silent” literals.
● Industrial SAT problems ≈ UNSAT problems

Things Worth Noting (cont.)
● Very common to visit satisfied clauses.
● Restarts are not “real” restarts. Their main function is to compress the trail. Example: a is active, b inactive, clauses: {a, x} {b, x}.
● A CNF-based solver is "better" than a circuit-based one:
  – Clauses, if long, are efficient for BCP
  – Simple and uniform, which means: easy to improve algorithm, easy to get correct, beautiful

Modern SAT Solvers: Features of MiniSat

Features of MiniSat
● Floating-point based VSIDS heuristic:
  – Moves it closer to BerkMin/Siege
  – Longer memory (“never” decays to zero) [1]
  – Aggressive decay
  – Variable-based, not literal-based
  – Bumps a lot of variables in conflict analysis
● Polarity heuristic: always try FALSE first

[1] With current decay, a floating-point number becomes epsilon after 14468 conflicts.

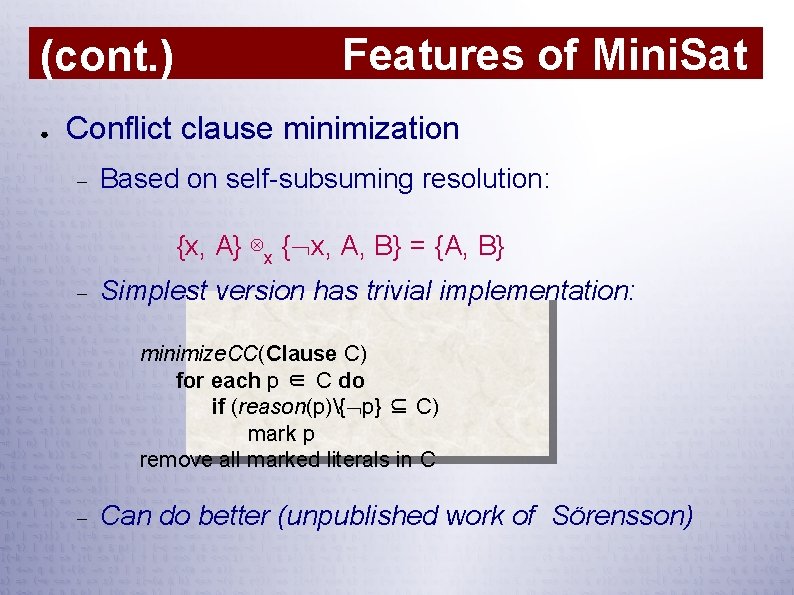

Features of MiniSat (cont.)
● Conflict clause minimization
  – Based on self-subsuming resolution: {x, A} ⊗x {¬x, A, B} = {A, B}
  – Simplest version has trivial implementation:

        minimizeCC(Clause C)
            for each p ∈ C do
                if (reason(¬p) \ {¬p} ⊆ C)
                    mark p
            remove all marked literals in C

  – Can do better (unpublished work of Sörensson)
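A minimal sketch of the simplest minimization, assuming integer literals, a conflict clause whose literals are all false under the current assignment, and a hypothetical `reason` dictionary that maps a variable to the clause that propagated it (decision variables are simply absent). It is an illustration of the idea, not MiniSat's implementation.

```python
def minimize_cc(clause, reason):
    """Remove every literal p whose reason clause, minus the propagated
    literal -p, is already contained in the conflict clause."""
    marked = set()
    for p in clause:
        r = reason.get(abs(p))
        if r is not None and (r - {-p}) <= clause:
            marked.add(p)  # resolving on p would only remove p
    return clause - marked


# Variable 2 was propagated by the clause {-1, 2}; variables 1 and 3 are decisions.
reason = {2: frozenset({-1, 2})}
minimized = minimize_cc(frozenset({-1, -2, -3}), reason)
```

Here {-1, -2, -3} resolved with {-1, 2} on variable 2 gives {-1, -3}, a strict subset of the original clause, which is exactly the self-subsuming resolution step.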

Features of MiniSat (cont.)
● Binary clauses inlined in watcher lists
● Progressive restarts (improved by PicoSAT)
● Activity-based clause deletion
● Handles subsumed clauses better than the original scheme
● Very effective for small, hard SAT problems
● Random decisions are made 2% of the time

Features of MiniSat 2.0
● Improved SATELITE-style preprocessing
  – Faster, better, more features
  – Integrated
● Indeed, what SATELITE was meant to be...

Conflict Clause Minimization
● Continuation of analysis to previous decision levels, but restricted
● Finds a minimal subset of the originally derived conflict clause
● Typically removes about 30% of the literals of the conflict clause

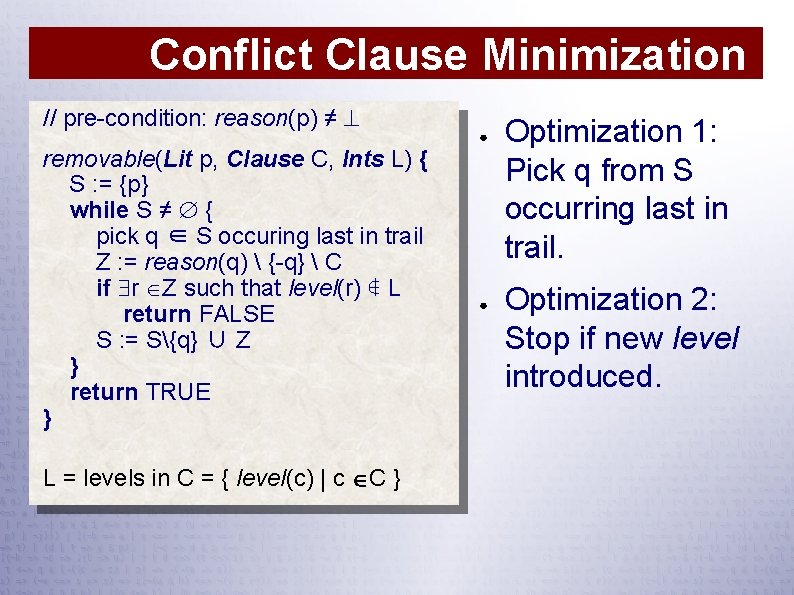

Conflict Clause Minimization

    // pre-condition: p has a reason clause (it is not a decision)
    removable(Lit p, Clause C, Ints L) {
        S := {p}
        while S ≠ ∅ {
            pick q ∈ S occurring last in trail
            Z := reason(q) \ ({-q} ∪ C)
            if ∃r ∈ Z such that level(r) ∉ L
                return FALSE
            S := (S \ {q}) ∪ Z
        }
        return TRUE
    }

    L = levels in C = { level(c) | c ∈ C }

● Optimization 1: Pick q from S occurring last in trail.
● Optimization 2: Stop if new level introduced.

SAT Encoding Example: Optimal circuit synthesis

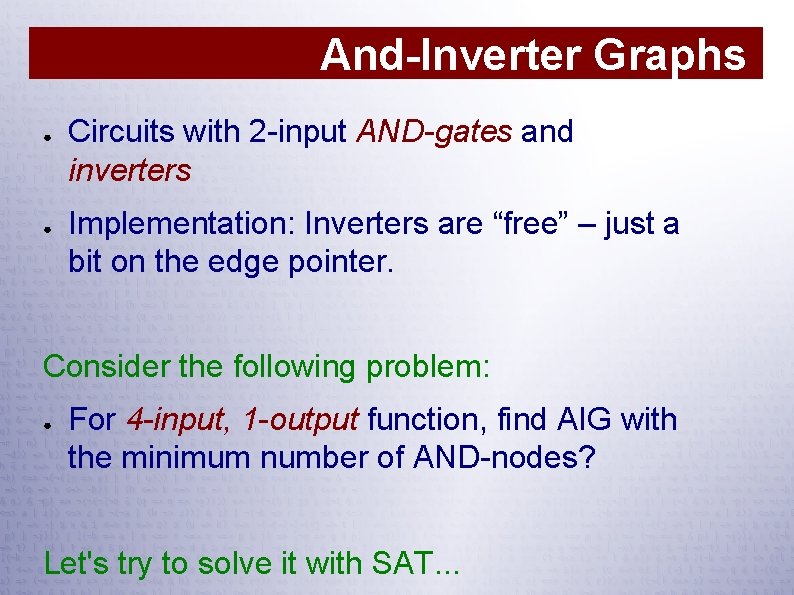

And-Inverter Graphs
● Circuits with 2-input AND-gates and inverters
● Implementation: Inverters are “free”, just a bit on the edge pointer.

Consider the following problem:
● For a 4-input, 1-output function, find an AIG with the minimum number of AND-nodes.

Let's try to solve it with SAT...

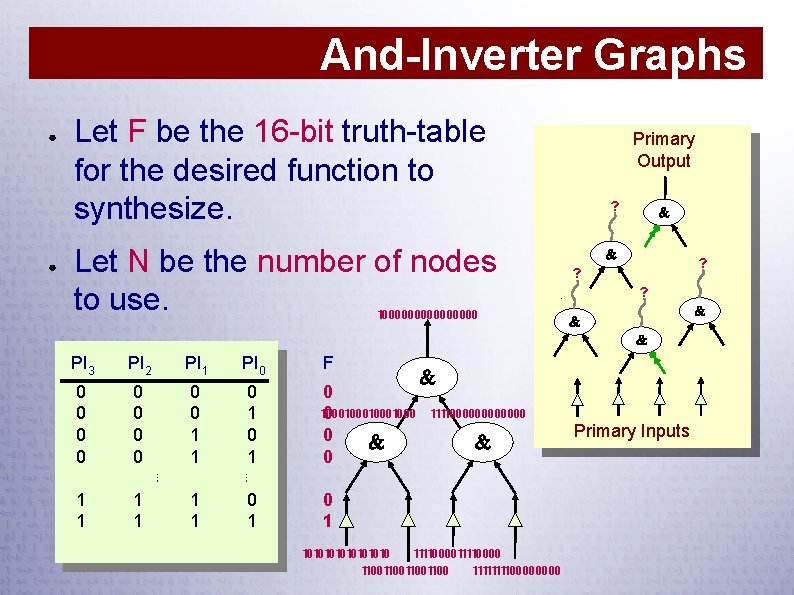

And-Inverter Graphs
● Let F be the 16-bit truth-table for the desired function to synthesize.
● Let N be the number of nodes to use.

[Figure: N unknown AND-nodes ("?") between the primary inputs PI0..PI3, each labeled with its truth-table column, and the primary output, labeled with the truth-table F]

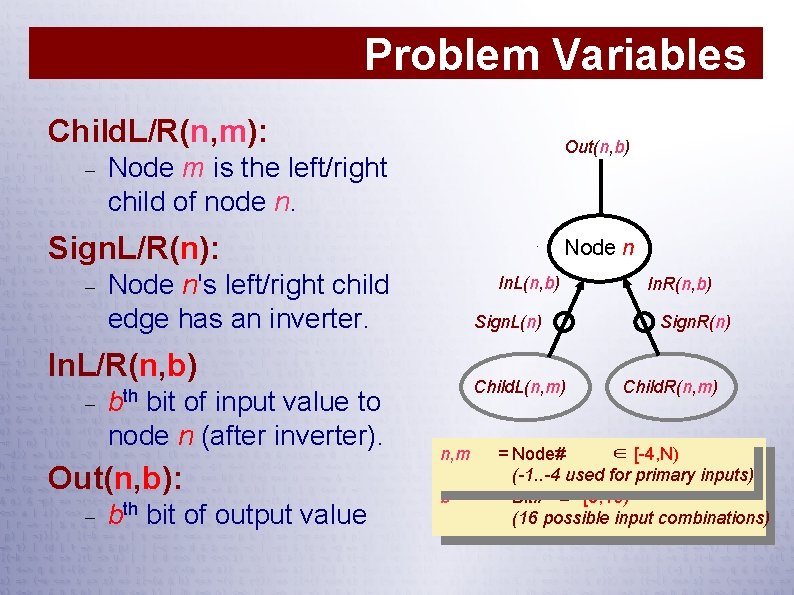

Problem Variables
● ChildL/R(n, m): Node m is the left/right child of node n.
● SignL/R(n): Node n's left/right child edge has an inverter.
● InL/R(n, b): bth bit of input value to node n (after inverter).
● Out(n, b): bth bit of output value of node n.

n, m = Node# ∈ [-4, N) (-1..-4 used for primary inputs)
b = Bit# ∈ [0, 16) (16 possible input combinations)

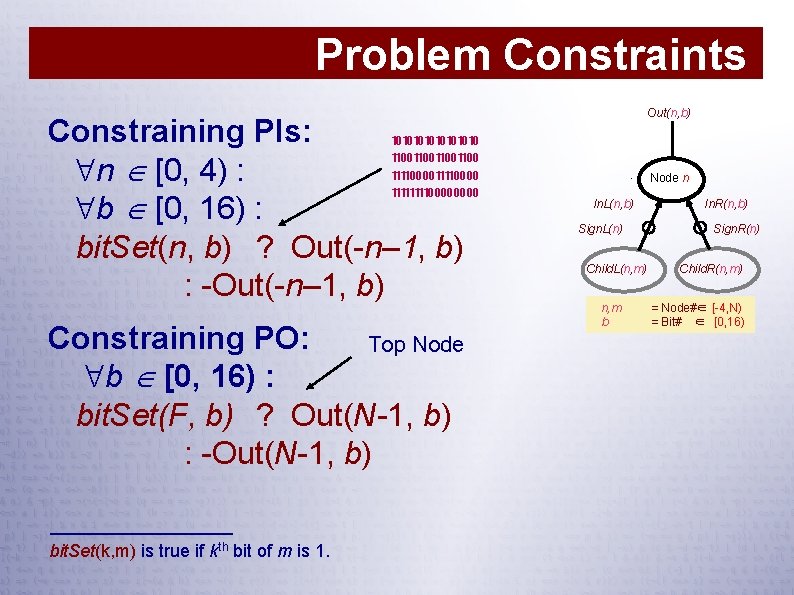

Problem Constraints
Constraining PIs:
  ∀n ∈ [0, 4): ∀b ∈ [0, 16): bitSet(n, b) ? Out(-n-1, b) : -Out(-n-1, b)
  (PI truth-table columns shown in the figure: 10101010, 11001100, 11110000)

Constraining PO (node N-1 is the top node):
  ∀b ∈ [0, 16): bitSet(b, F) ? Out(N-1, b) : -Out(N-1, b)

bitSet(k, m) is true if the kth bit of m is 1.

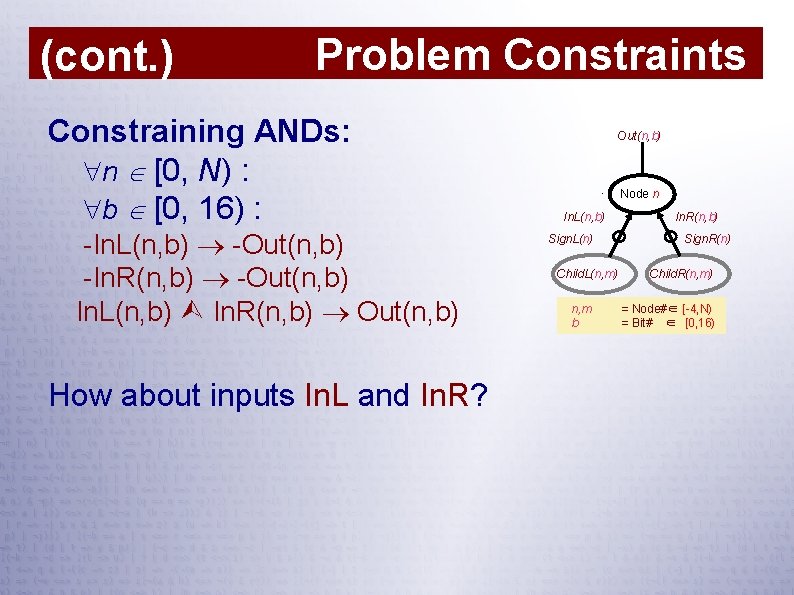

Problem Constraints (cont.)
Constraining ANDs: ∀n ∈ [0, N): ∀b ∈ [0, 16):
  -InL(n, b) → -Out(n, b)
  -InR(n, b) → -Out(n, b)
  InL(n, b) ∧ InR(n, b) → Out(n, b)

How about inputs InL and InR?

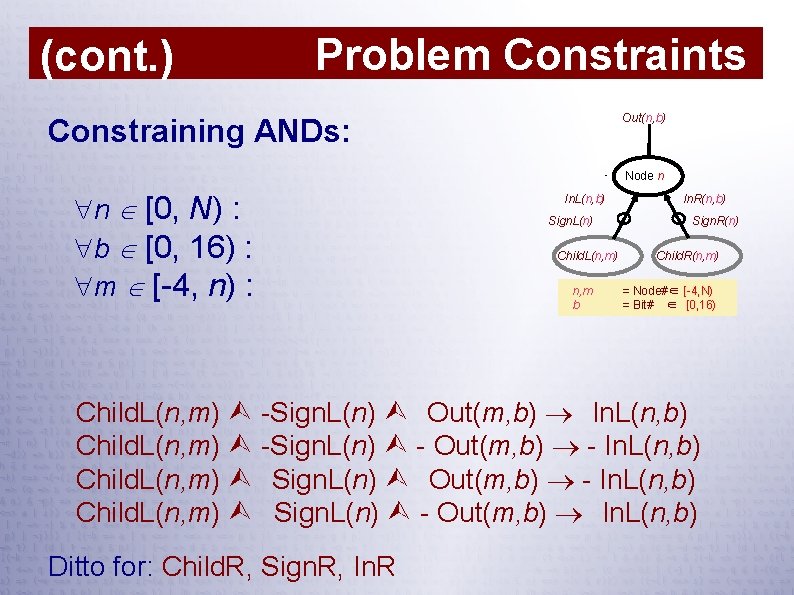

Problem Constraints (cont.)
Constraining ANDs: ∀n ∈ [0, N): ∀b ∈ [0, 16): ∀m ∈ [-4, n):
  ChildL(n, m) ∧ -SignL(n) ∧ Out(m, b) → InL(n, b)
  ChildL(n, m) ∧ -SignL(n) ∧ -Out(m, b) → -InL(n, b)
  ChildL(n, m) ∧ SignL(n) ∧ -Out(m, b) → InL(n, b)

Ditto for: ChildR, SignR, InR

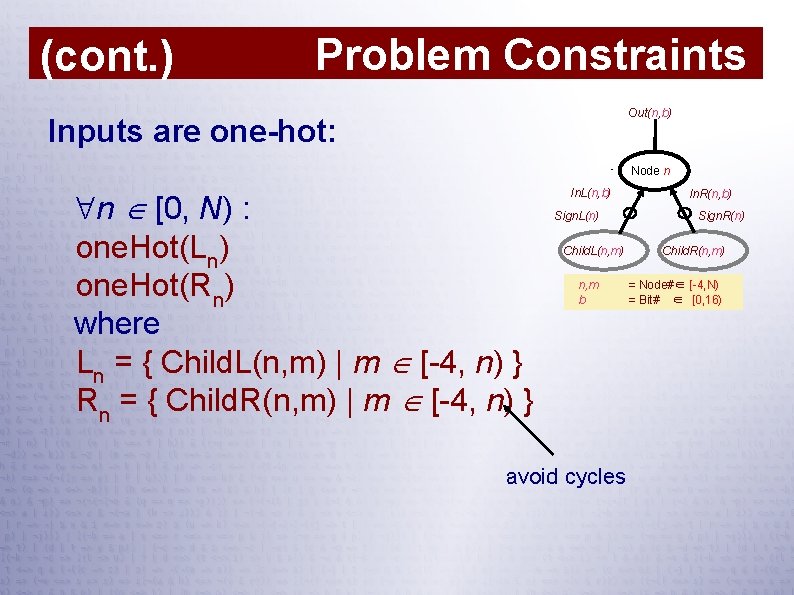

Problem Constraints (cont.)
Inputs are one-hot: ∀n ∈ [0, N): oneHot(Ln), oneHot(Rn)
where Ln = { ChildL(n, m) | m ∈ [-4, n) } and Rn = { ChildR(n, m) | m ∈ [-4, n) }
(restricting m to [-4, n) avoids cycles)

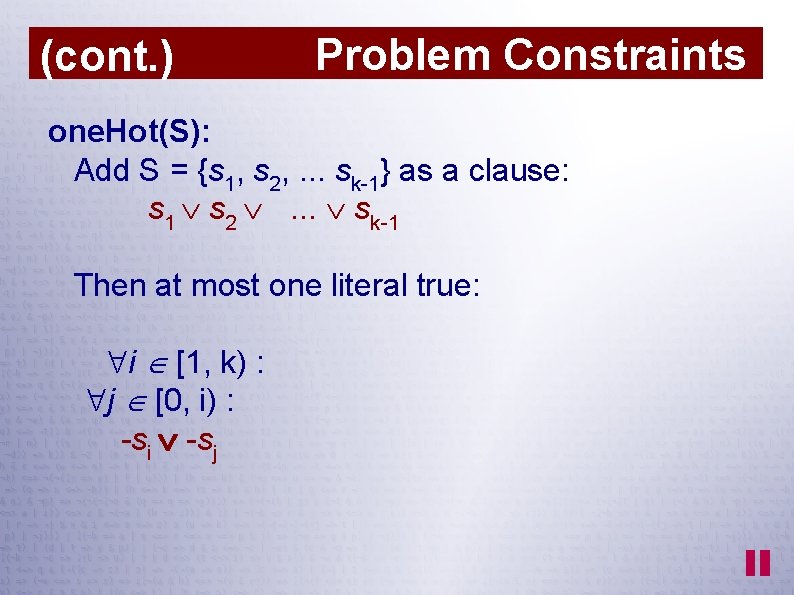

Problem Constraints (cont.)
oneHot(S): Add S = {s0, s1, ..., sk-1} as a clause: s0 ∨ s1 ∨ ... ∨ sk-1
Then at most one literal true: ∀i ∈ [1, k): ∀j ∈ [0, i): -si ∨ -sj
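The oneHot constraint can be generated mechanically. The sketch below assumes integer literals and uses the pairwise encoding described on the slide; the function name `one_hot` and the brute-force checker are illustrative.

```python
from itertools import combinations, product


def one_hot(lits):
    """At least one literal true (one long clause) plus
    at most one true (one binary clause per pair)."""
    clauses = [list(lits)]
    clauses += [[-a, -b] for a, b in combinations(lits, 2)]
    return clauses


def count_models(clauses, nvars):
    """Brute-force model count, only suitable for tiny examples."""
    count = 0
    for bits in product([False, True], repeat=nvars):
        if all(any(bits[abs(l) - 1] == (l > 0) for l in c) for c in clauses):
            count += 1
    return count


cnf = one_hot([1, 2, 3])
n_models = count_models(cnf, 3)
```

The pairwise encoding needs k(k-1)/2 binary clauses for k literals, which is acceptable here because each node has at most N+4 candidate children.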

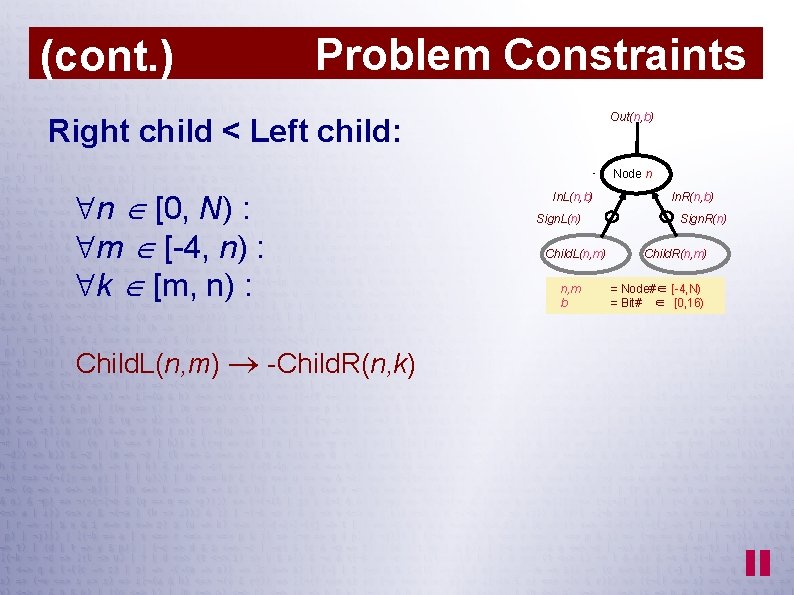

Problem Constraints (cont.)
Right child < Left child: ∀n ∈ [0, N): ∀m ∈ [-4, n): ∀k ∈ [m, n): ChildL(n, m) → -ChildR(n, k)

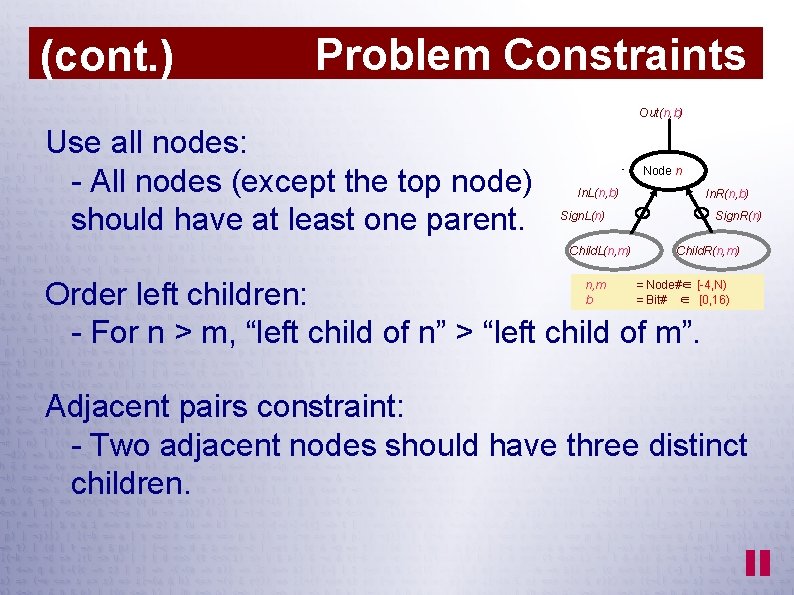

Problem Constraints (cont.)
● Use all nodes: All nodes (except the top node) should have at least one parent.
● Order left children: For n > m, “left child of n” > “left child of m”.
● Adjacent pairs constraint: Two adjacent nodes should have three distinct children.

Problem Constraints (cont.)
Even more constraints:
● Order right children (if left are equal)
● Unique truth-tables
● Non-trivial truth-tables (cheaper)
● Constraints on input permutation/negation (requires different constraints on PO)

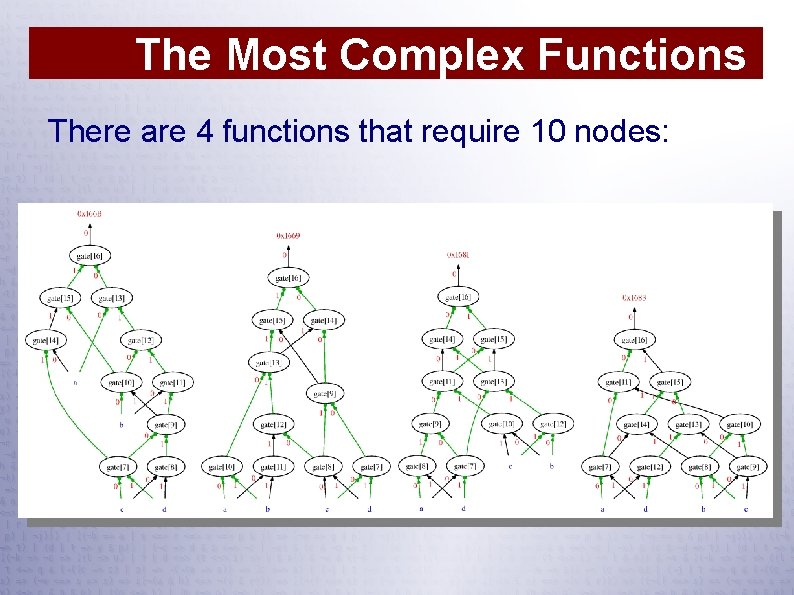

The Most Complex Functions There are 4 functions that require 10 nodes:

Comments on SAT Formulations
● SAT is not magic
● Need to consider:
  – Are there fast specialized algorithms?
  – How often do we need to solve this problem?
  – Shape of search space (is it localizable?)
  – Do variables have symmetries?
  – Are there constraints with no compact CNF encoding?

Rules of Thumb
● Redundant constraints may be important.
● Many encodings possible.
● Effectiveness may vary greatly.
  – Avoid bit-vectors.
  – Avoid XORs.
  – Small is not always better.
  – Need "good" literals.
  – Example: Sorters vs. adders in PB-translation

SAT Encoding Converting circuits to CNF

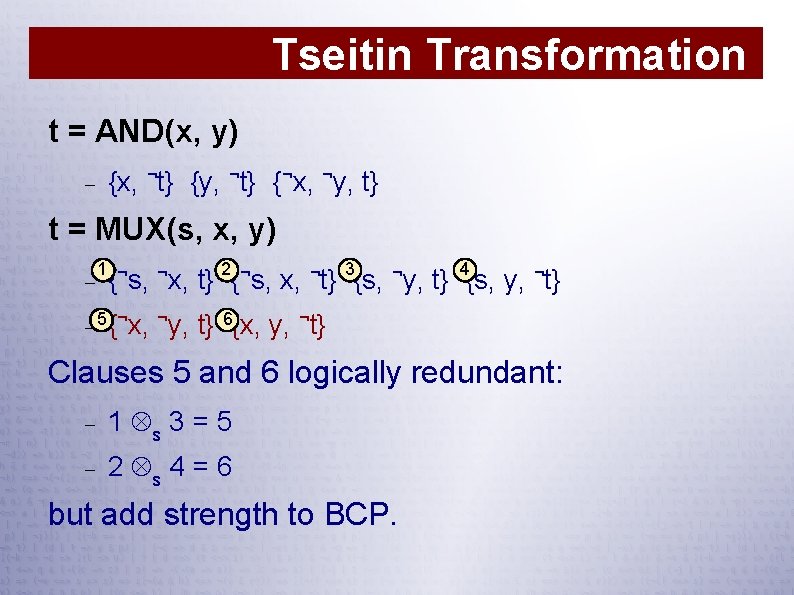

Tseitin Transformation
t = AND(x, y): {x, ¬t} {y, ¬t} {¬x, ¬y, t}

t = MUX(s, x, y):
  1: {¬s, ¬x, t}   2: {¬s, x, ¬t}
  3: {s, ¬y, t}    4: {s, y, ¬t}
  5: {¬x, ¬y, t}   6: {x, y, ¬t}

Clauses 5 and 6 are logically redundant (1 ⊗s 3 = 5, 2 ⊗s 4 = 6) but add strength to BCP.
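The clause sets can be sanity-checked by brute force. The sketch below assumes integer literals and the convention MUX(s, x, y) = (s ∧ x) ∨ (¬s ∧ y); the helper names are illustrative.

```python
from itertools import product


def tseitin_and(t, x, y):
    """Clauses for t <-> (x AND y)."""
    return [[x, -t], [y, -t], [-x, -y, t]]


def tseitin_mux(t, s, x, y, redundant=True):
    """Clauses for t <-> (x if s else y); the last two are implied by the first four."""
    clauses = [[-s, -x, t], [-s, x, -t], [s, -y, t], [s, y, -t]]
    if redundant:
        clauses += [[-x, -y, t], [x, y, -t]]
    return clauses


def models(clauses, nvars):
    """All satisfying assignments as tuples of booleans (brute force)."""
    found = set()
    for bits in product([False, True], repeat=nvars):
        if all(any(bits[abs(l) - 1] == (l > 0) for l in c) for c in clauses):
            found.add(bits)
    return found


# Variables: s=1, x=2, y=3, t=4
mux_expected = {(s, x, y, x if s else y)
                for s, x, y in product([False, True], repeat=3)}
mux_full = models(tseitin_mux(4, 1, 2, 3), 4)
mux_core = models(tseitin_mux(4, 1, 2, 3, redundant=False), 4)

# Variables: x=1, y=2, t=3
and_expected = {(x, y, x and y) for x, y in product([False, True], repeat=2)}
and_models = models(tseitin_and(3, 1, 2), 3)
```

Both MUX variants have the same models, which confirms that clauses 5 and 6 change only what BCP can derive (for example t from x and y alone), not the logical content.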

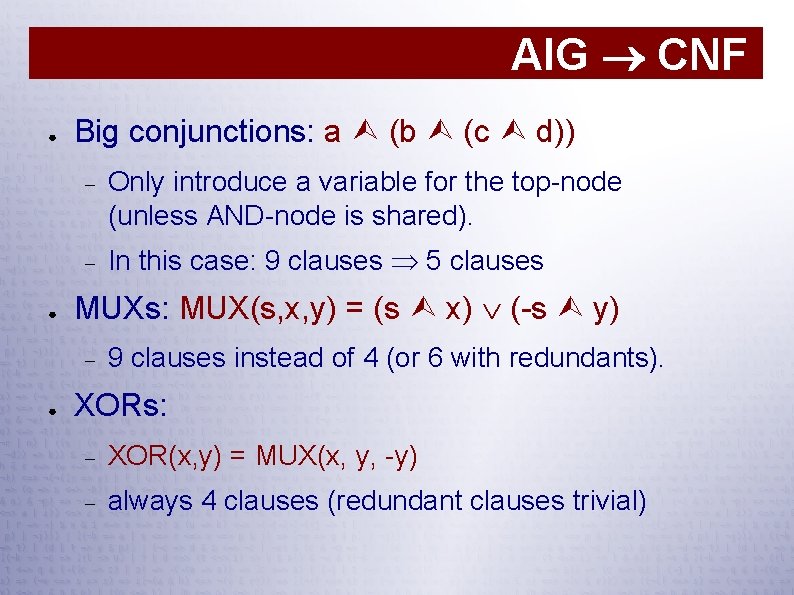

AIG → CNF
● Big conjunctions: a ∧ (b ∧ (c ∧ d)). Only introduce a variable for the top-node (unless an AND-node is shared). In this case: 9 clauses → 5 clauses.
● MUXs: MUX(s, x, y) = (s ∧ x) ∨ (-s ∧ y). Encoded AND by AND this takes 9 clauses, instead of 4 (or 6 with redundants) when treated as a MUX.
● XORs: XOR(x, y) = MUX(x, -y, y): always 4 clauses (redundant clauses trivial).

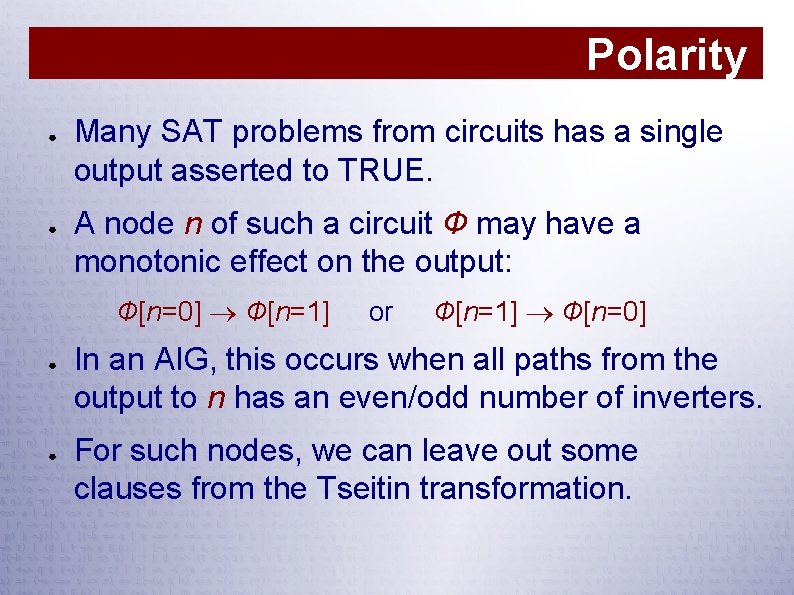

Polarity
● Many SAT problems from circuits have a single output asserted to TRUE.
● A node n of such a circuit Ф may have a monotonic effect on the output: Ф[n=0] → Ф[n=1] or Ф[n=1] → Ф[n=0]
● In an AIG, this occurs when all paths from the output to n have an even/odd number of inverters.
● For such nodes, we can leave out some clauses from the Tseitin transformation.

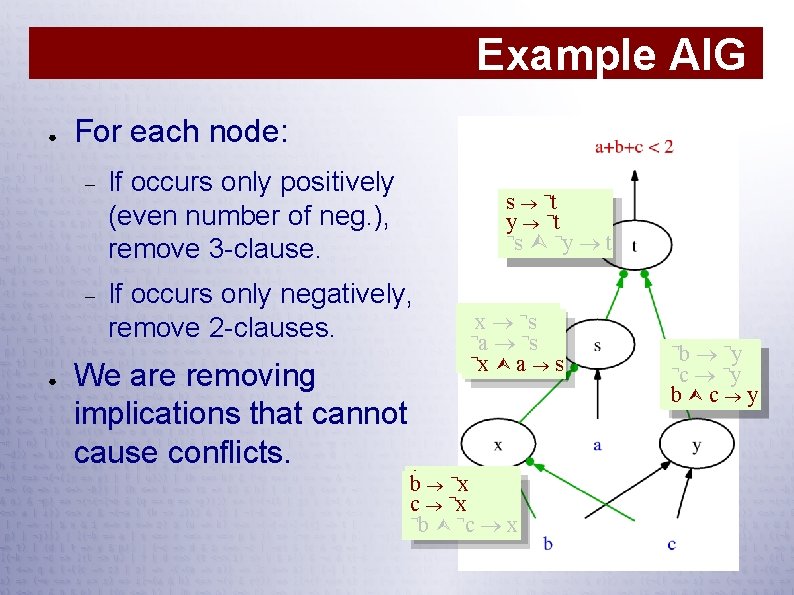

Example AIG
● For each node: If it occurs only positively (even number of negations), remove the 3-clause. If it occurs only negatively, remove the 2-clauses.
● We are removing implications that cannot cause conflicts.

[Figure: example AIG over t, s, y, x, a, b, c with the implications of each node annotated]

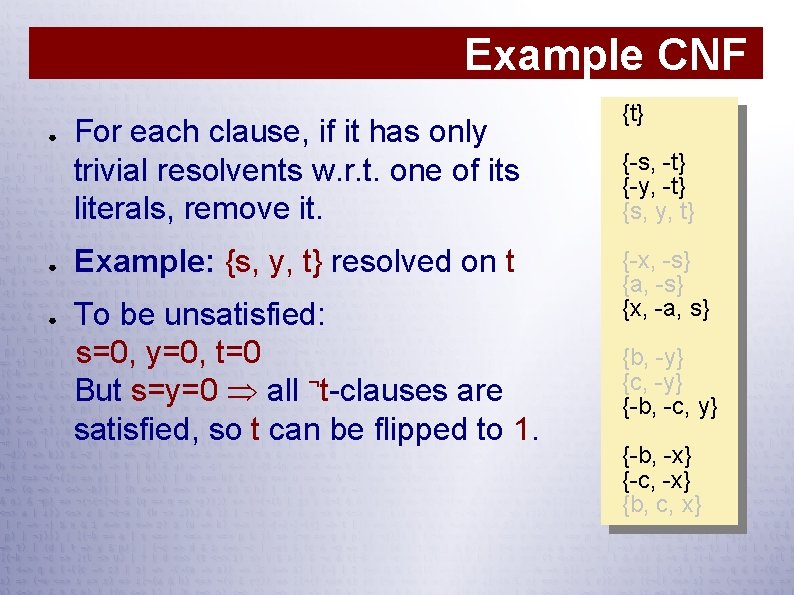

Example CNF
● For each clause, if it has only trivial resolvents w.r.t. one of its literals, remove it.
● Example: {s, y, t} resolved on t. To be unsatisfied: s=0, y=0, t=0. But s=y=0 ⇒ all ¬t-clauses are satisfied, so t can be flipped to 1.

Clauses: {t} {-s, -t} {-y, -t} {s, y, t} {-x, -s} {a, -s} {x, -a, s} {b, -y} {c, -y} {-b, -c, y} {-b, -x} {-c, -x} {b, c, x}

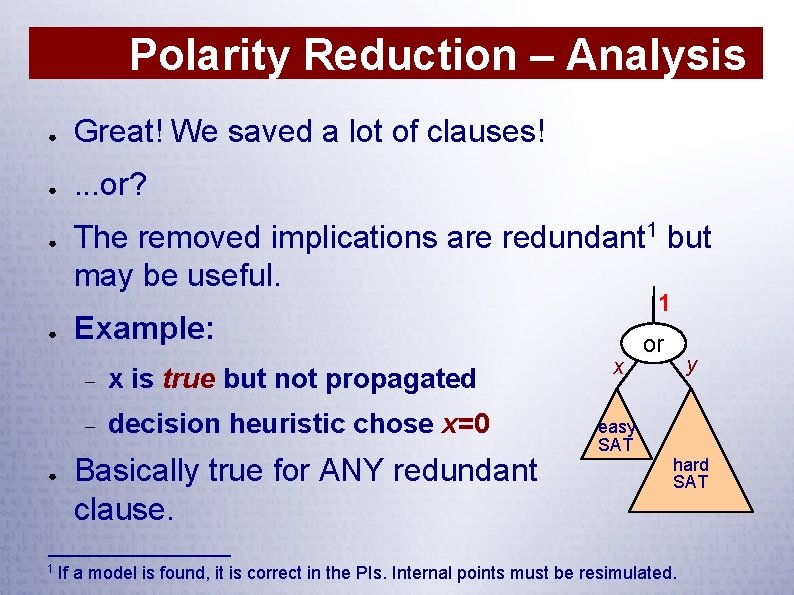

Polarity Reduction – Analysis
● Great! We saved a lot of clauses!
● ...or?
● The removed implications are redundant [1] but may be useful.
● Example: x is true but not propagated ⇒ the decision heuristic chose x=0. [Figure: "x or y", easy SAT vs. hard SAT]
● If a model is found, it is correct in the PIs. Internal points must be resimulated.

[1] Basically true for ANY redundant clause.

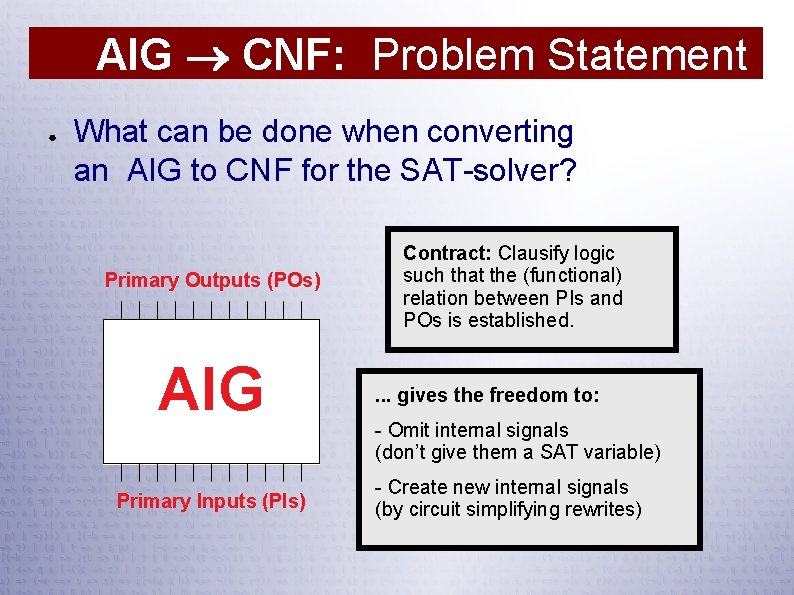

AIG → CNF: Problem Statement
● What can be done when converting an AIG to CNF for the SAT-solver?

[Figure: AIG between Primary Inputs (PIs) and Primary Outputs (POs)]

Contract: Clausify logic such that the (functional) relation between PIs and POs is established. This gives the freedom to:
  – Omit internal signals (don’t give them a SAT variable)
  – Create new internal signals (by circuit simplifying rewrites)

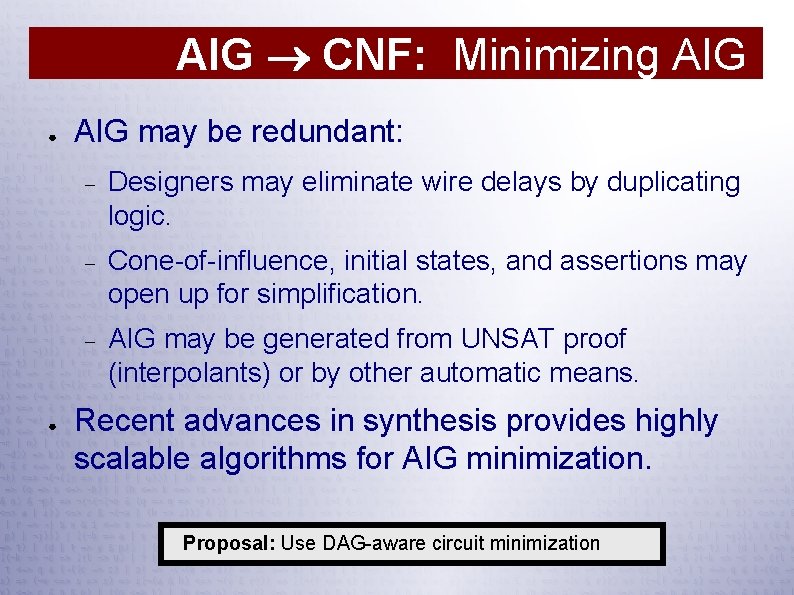

AIG → CNF: Minimizing AIG
● AIG may be redundant:
  – Designers may eliminate wire delays by duplicating logic.
  – Cone-of-influence, initial states, and assertions may open up for simplification.
  – AIG may be generated from UNSAT proof (interpolants) or by other automatic means.
● Recent advances in synthesis provide highly scalable algorithms for AIG minimization.

Proposal: Use DAG-aware circuit minimization

AIG → CNF: Picking Variables
● Clausification in the small (easy): How to produce clauses for 1-output, k-input subgraphs (“super-gates”) of AIG for small k:
  – k ≤ 4: Pre-compute and tabulate exact results
  – 4 < k ≤ 16: Use Minato’s ISOP-algorithm
● Clausification in the large (hard!): How to partition AIG into super-gates (i.e. what variables to introduce)?

Proposal: Use FPGA-style technology mapping!

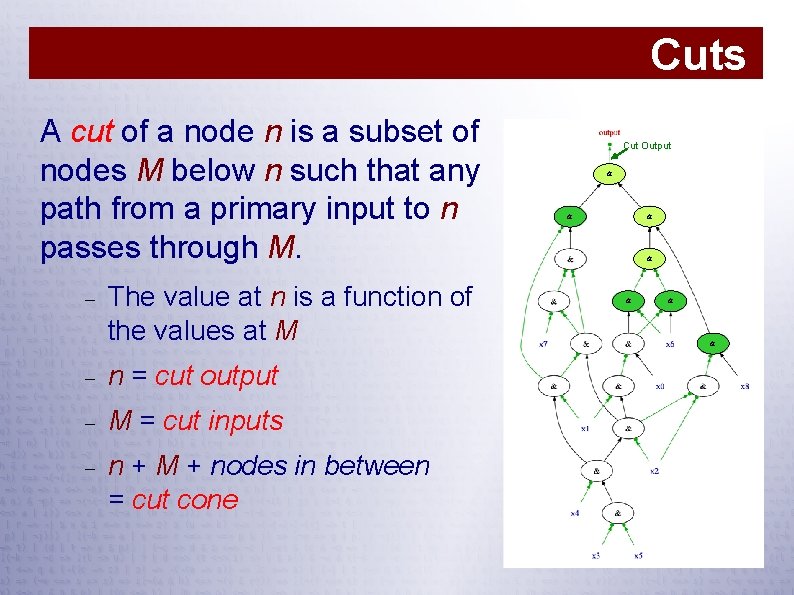

Cuts
A cut of a node n is a subset of nodes M below n such that any path from a primary input to n passes through M. The value at n is a function of the values at M.
● n = cut output
● M = cut inputs
● n + M + nodes in between = cut cone

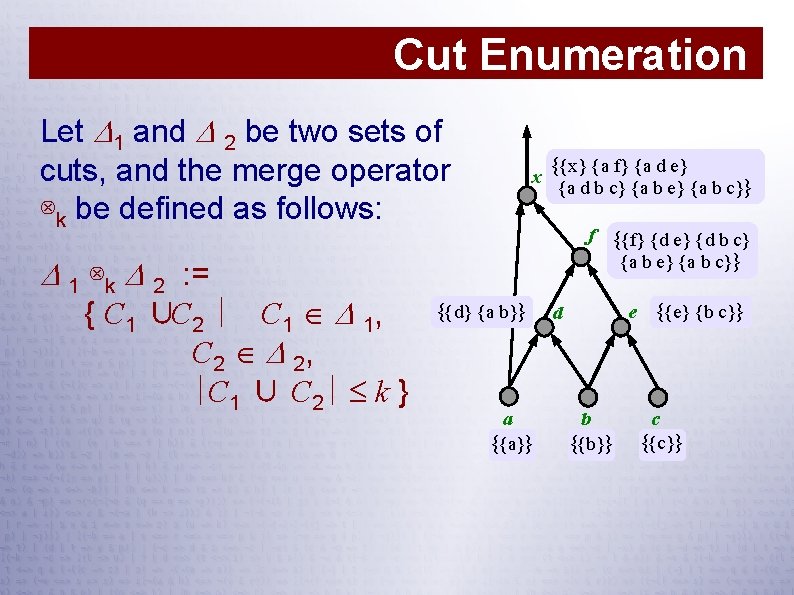

Cut Enumeration
Let S1 and S2 be two sets of cuts, and the merge operator ⊗k be defined as follows:

  S1 ⊗k S2 := { C1 ∪ C2 ∣ C1 ∈ S1, C2 ∈ S2, ∣C1 ∪ C2∣ ≤ k }

Example (from the figure), cuts computed bottom-up for each node:
  a: {{a}}   b: {{b}}   c: {{c}}
  d: {{d} {a b}}   e: {{e} {b c}}
  f: {{f} {d e} {d b c} {a b e} {a b c}}
  x: {{x} {a f} {a d e} {a d b c} {a b e} {a b c}}
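The cut sets in this example can be reproduced with a short sketch. It assumes the AIG structure that the listed cuts imply (d = AND(a, b), e = AND(b, c), f = AND(d, e), x = AND(a, f)), ignores inverters, and uses k = 4. The function names are illustrative.

```python
def merge(cuts1, cuts2, k):
    """All pairwise unions of cuts that have at most k inputs."""
    return {c1 | c2 for c1 in cuts1 for c2 in cuts2 if len(c1 | c2) <= k}


def enumerate_cuts(inputs, gates, k):
    """gates maps each AND-node to its (left, right) children,
    listed in topological order (children before parents)."""
    cuts = {pi: {frozenset([pi])} for pi in inputs}
    for node, (left, right) in gates.items():
        # the trivial cut {node} plus every merged cut of the two children
        cuts[node] = {frozenset([node])} | merge(cuts[left], cuts[right], k)
    return cuts


gates = {"d": ("a", "b"), "e": ("b", "c"), "f": ("d", "e"), "x": ("a", "f")}
cuts = enumerate_cuts(["a", "b", "c"], gates, k=4)
```

Real implementations additionally prune dominated cuts and cap the number of cuts kept per node; this sketch keeps everything so that the result matches the slide exactly.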

DAG-Aware Minimization
● Minimizes an AIG taking sharing into account:
  – Compute “good” AIG representations for each 4-input function.
  – For every 4-input subgraph, see if nodes can be saved by change of representation.

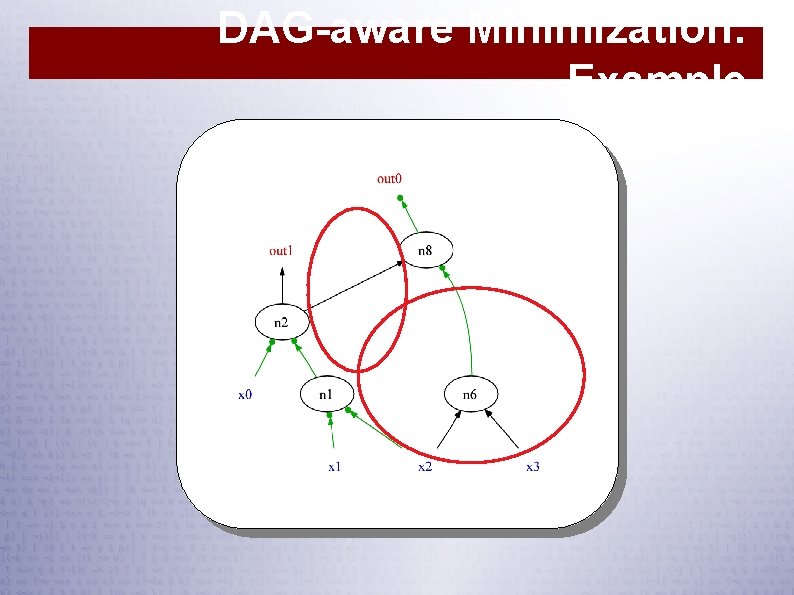

DAG-aware Minimization: Example

Technology Mapping for CNF
● Enumerate all 4-input cuts.
● Select “best” cut for each node.
● Outputs from logic will induce a subgraph.
● Area Flow: Estimate the area increase that would result from including a node:
  area flow = (cost of node + area flow of children) / (estimated number of fanouts)
● In example (next slide): cost of node = 1. In real algorithm: cost of node = #clauses.

![Techmap: Enumerating Cuts](http://slidetodoc.com/presentation_image_h2/57fee86f468c7c52b2af05be374d0e6f/image-63.jpg)

Techmap: Enumerating Cuts
Node 17 [fanout est. = 1.5]
[Table: the cuts of node 17 over nodes 13 to 16 and inputs x1 to x3, grouped by cut size (CS = 2, 3, 4), with area flows AF = 1.44, 1.56, 1.11, 1.44, 1.78, 1.33, 1.67]
AF = Area Flow (estimated area required for introducing node)
CS = Cut Size

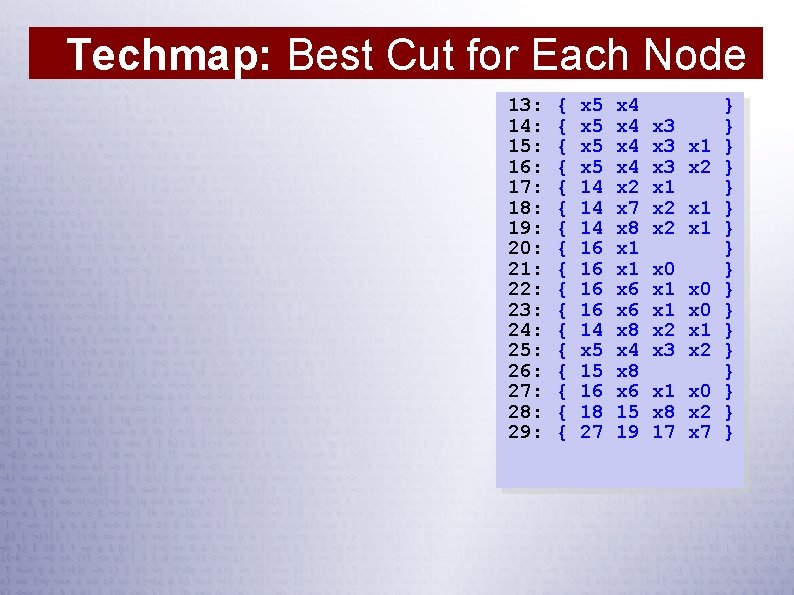

Techmap: Best Cut for Each Node
[Table: the selected cut for each of nodes 13 to 29, over inputs x0 to x8 and previously mapped nodes]

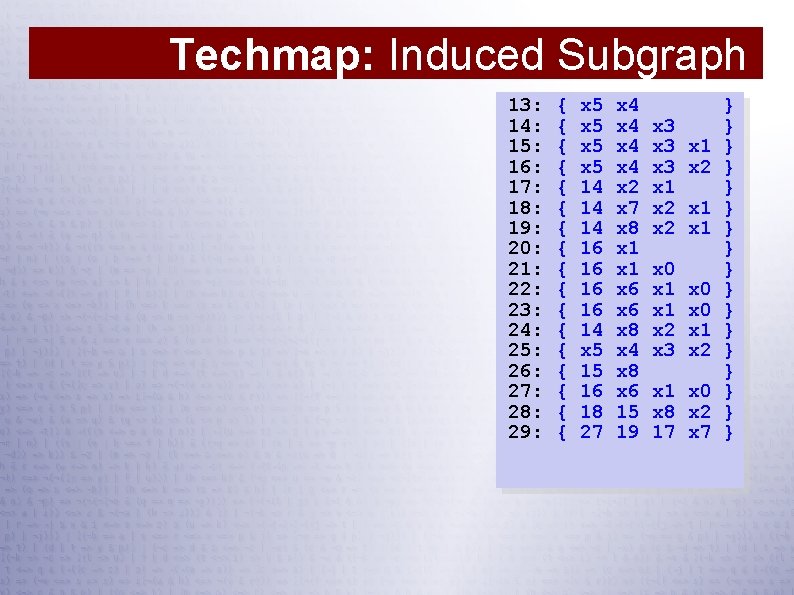

Techmap: Induced Subgraph
[Table: the best-cut list for nodes 13 to 29, with the nodes reachable from the outputs highlighted]

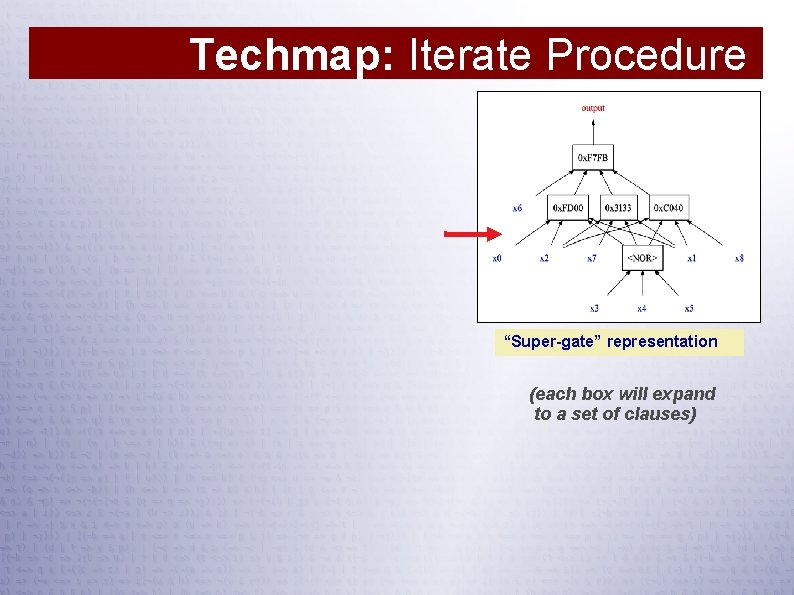

Techmap: Iterate Procedure “Super-gate” representation (each box will expand to a set of clauses)

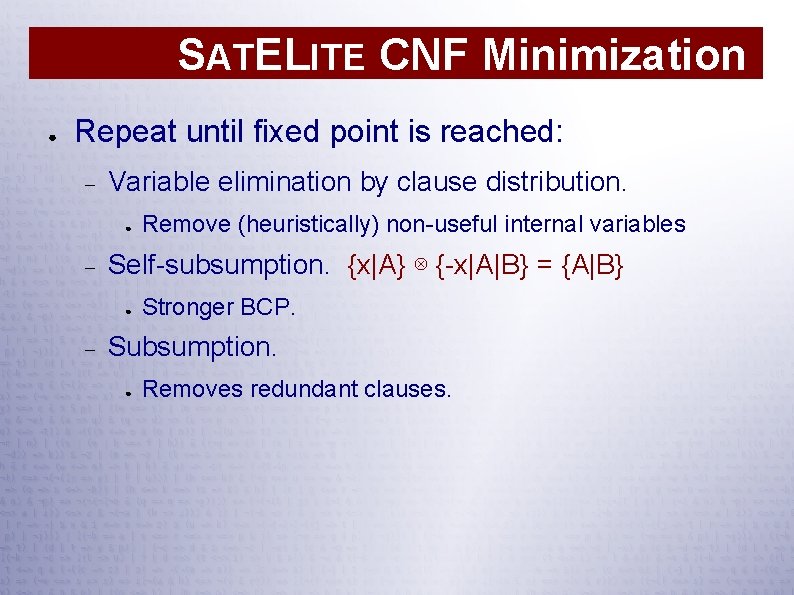

SATELITE CNF Minimization
Repeat until fixed point is reached:
● Variable elimination by clause distribution: Remove (heuristically) non-useful internal variables.
● Self-subsumption: {x|A} ⊗ {-x|A|B} = {A|B}. Stronger BCP.
● Subsumption: Removes redundant clauses.
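Two of these steps can be sketched in a few lines, assuming integer literals and clauses stored as frozensets. This is an illustration of the operations only; it leaves out the heuristics that decide which variables are worth eliminating, and the function names are hypothetical.

```python
def eliminate(clauses, x):
    """Variable elimination by clause distribution: replace all clauses
    containing x or -x by their pairwise resolvents on x."""
    pos = [c for c in clauses if x in c]
    neg = [c for c in clauses if -x in c]
    rest = [c for c in clauses if x not in c and -x not in c]
    resolvents = []
    for p in pos:
        for n in neg:
            r = (p - {x}) | (n - {-x})
            if not any(-lit in r for lit in r):  # drop tautologies
                resolvents.append(r)
    return rest + resolvents


def remove_subsumed(clauses):
    """Drop duplicates and every clause that has a proper subset in the list."""
    unique = list(dict.fromkeys(clauses))
    return [c for c in unique if not any(d < c for d in unique)]


# t = AND(a, b) with a=1, b=2, t=3, plus the clause (t ∨ c) with c=4
cnf = [frozenset(c) for c in ([1, -3], [2, -3], [-1, -2, 3], [3, 4])]
after = eliminate(cnf, 3)
kept = remove_subsumed([frozenset({1, 2}), frozenset({1, 2, 3})])
```

In the example, eliminating t turns four clauses into two, (a ∨ c) and (b ∨ c); a preprocessor would typically eliminate a variable only when the clause count does not grow.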

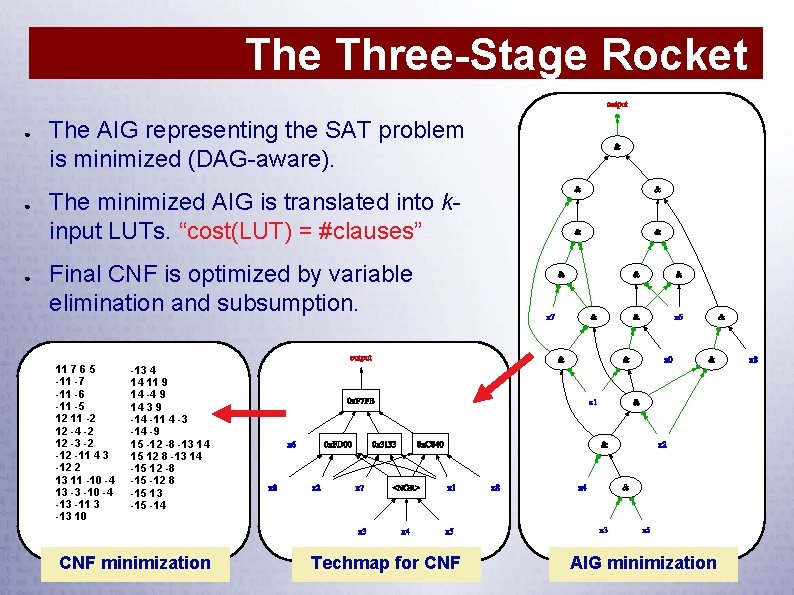

The Three-Stage Rocket
● The AIG representing the SAT problem is minimized (DAG-aware).
● The minimized AIG is translated into k-input LUTs, with “cost(LUT) = #clauses”.
● Final CNF is optimized by variable elimination and subsumption.

[Figure: pipeline AIG minimization → Techmap for CNF → CNF minimization, ending in an example clause list]

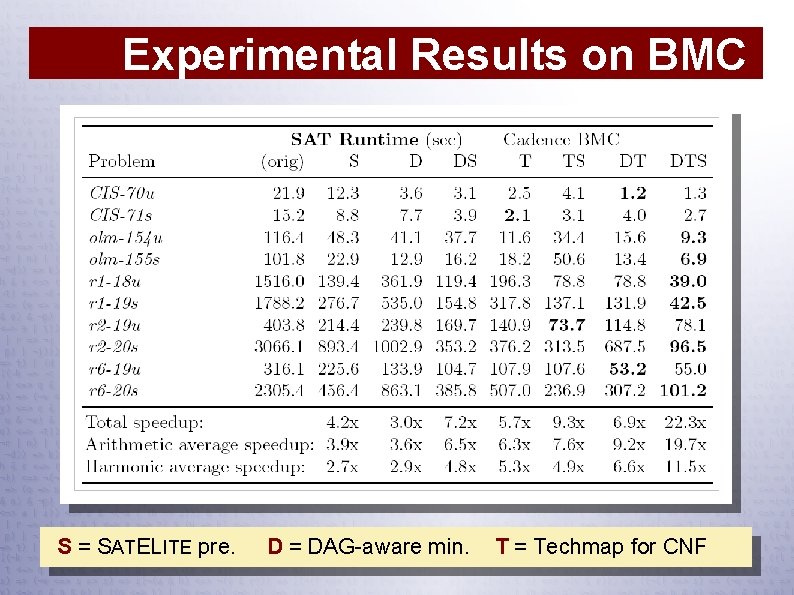

Experimental Results on BMC
● S = SATELITE pre.
● D = DAG-aware min.
● T = Techmap for CNF

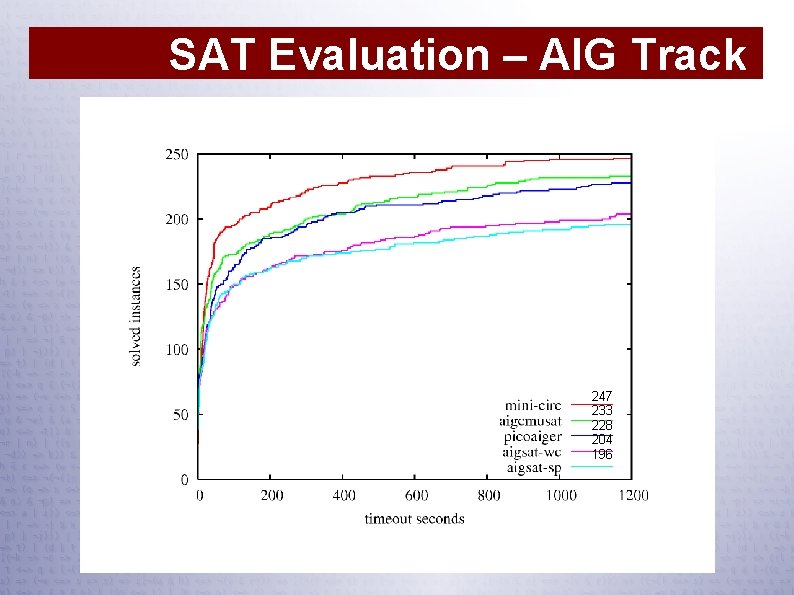

SAT Evaluation – AIG Track
[Figure: results chart; values shown: 247, 233, 228, 204, 196]

Incremental SAT
● Important for integrated use of SAT
● Keeps the solver “warm”: variable heuristic, learnt clauses, clause activity
● May conflict with preprocessing and minimization techniques

MiniSat's interface:

    void addClause(lits c)
    bool solve(lits assumps)
    lits analyzeFinal()

![Incremental SAT: BMC](http://slidetodoc.com/presentation_image_h2/57fee86f468c7c52b2af05be374d0e6f/image-72.jpg)

Incremental SAT: BMC

    for x ∈ [0, #vars)
        addClause({ Init[0][x] })
    for i ∈ [0, ∞) {
        if solve({ ~Prop[i] })
            return counter-example
        addClause({ Prop[i] })
    }

[Figure: unrolled time frames, each labeled with Init and Prop]

● Problems: How to limit CNF minimization to the cone-of-influence?

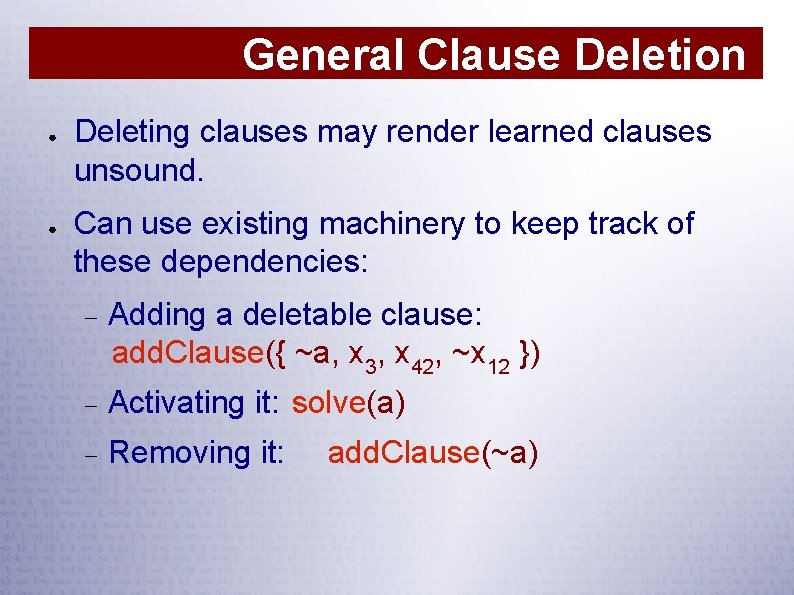

General Clause Deletion
● Deleting clauses may render learned clauses unsound.
● Can use existing machinery to keep track of these dependencies:
  – Adding a deletable clause: addClause({ ~a, x3, x42, ~x12 })
  – Activating it: solve(a)
  – Removing it: addClause(~a)
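The activation-literal trick can be demonstrated with a toy solver. `ToySolver` below is a hypothetical brute-force stand-in that only mimics the shape of the addClause/solve interface; it is not MiniSat's API and is only suitable for a handful of variables.

```python
from itertools import product


class ToySolver:
    """Brute-force SAT with assumptions, for illustration only."""

    def __init__(self, nvars):
        self.nvars = nvars
        self.clauses = []

    def add_clause(self, lits):
        self.clauses.append(list(lits))

    def solve(self, assumps=()):
        for bits in product([False, True], repeat=self.nvars):
            def val(lit):
                return bits[abs(lit) - 1] == (lit > 0)
            if all(val(l) for l in assumps) and \
               all(any(val(l) for l in c) for c in self.clauses):
                return True
        return False


X, A = 1, 2                      # A is the activation literal
s = ToySolver(nvars=2)
s.add_clause([X])                # permanent clause: x
s.add_clause([-A, -X])           # deletable clause (~x), guarded by ~a

with_clause = s.solve([A])       # activated: x and ~x clash
without_clause = s.solve()       # not activated: the solver may set a = FALSE
s.add_clause([-A])               # removed for good
after_removal = s.solve()
```

Any clause learned while the guarded clause was active would contain ~a as well, so it becomes satisfied, and therefore harmless, once the unit clause ~a is added.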

Conclusions

Concluding Remarks
● Research to improve SAT solvers has largely saturated
  – It is hard to improve upon Chaff-style SAT-solving
● However, as SAT methods are now well understood by many, they become building blocks:
  – SMT solvers
  – Theorem provers
  – Model finders
  – etc.
● As SAT gets integrated into many contexts, it is important to keep the core solver simple and small.

...in fact, it should be kept mini!
