
Population-based metaheuristics
• Nature-inspired
– Evolutionary algorithms: genetic algorithm, scatter search, estimation of distribution algorithm (EDA), evolutionary programming, genetic programming
– Swarm intelligence: ant colony, particle swarm optimization (PSO), bee colony
– Artificial immune system (AIS)
• Initialize a population
• A new population of solutions is generated
• Integrate the new population into the current one using one of these methods
– By replacement, which is a selection process applied to the new and current solutions
• Continue until a stopping criterion is reached
• The generation and replacement process can be memoryless, or it can use some search memory

Common concepts of Evolutionary Algorithms
• Main search components are
– Representation. For example, in a genetic algorithm (GA) the encoded solution is called a chromosome. The decision variables within a solution are genes, the possible values of a gene are alleles, and the position of a gene within the chromosome is its locus.
– Example: TSP chromosome = (_, _, _)
– Population initialization
– Objective function, also called fitness in EA terminology
– Selection strategy: which parents are chosen for the next generation, with the objective of better fitness
– Reproduction strategy: mutation, crossover, merge, or from a shared memory
– Replacement strategy: keep the best of the old and new populations (survival of the fittest)
– Stopping criteria

Genetic Algorithm
• Very popular method from the 1970s. Used in optimization and machine learning.
• Representation: binary or non-binary (real-valued vectors)
• Use a crossover rate to choose the parents that will generate offspring.
– Selection is based on proportional fitness assignment
• Applies n-point or uniform crossover to two parent solutions, and a mutation (bit flipping) that randomly modifies the contents of individual solutions to promote diversity.
• Replacement is generational: parents are replaced by the offspring.
• See handout for an example.
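The GA loop described on this slide can be sketched in a few lines. This is a minimal illustration, not the handout's example: the OneMax objective (count the 1 bits), one-point crossover as the n-point variant, and all parameter values (`pop_size`, `crossover_rate`, `mutation_rate`, and so on) are assumptions chosen only to keep the sketch short.

```python
import random

def one_max(bits):
    """Toy fitness (an assumption for illustration): number of 1 bits."""
    return sum(bits)

def roulette_select(population, fitnesses, rng):
    """Fitness-proportional selection of one parent."""
    total = sum(fitnesses)
    if total == 0:
        return rng.choice(population)
    pick = rng.uniform(0, total)
    running = 0.0
    for individual, fit in zip(population, fitnesses):
        running += fit
        if pick < running:
            return individual
    return population[-1]

def one_point_crossover(a, b, rng):
    """Swap the tails of two parents at a random cut point."""
    point = rng.randint(1, len(a) - 1)
    return a[:point] + b[point:], b[:point] + a[point:]

def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]

def run_ga(n_bits=20, pop_size=30, generations=50,
           crossover_rate=0.9, mutation_rate=0.02, seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(n_bits)]
                  for _ in range(pop_size)]
    best = max(population, key=one_max)
    for _ in range(generations):
        fitnesses = [one_max(ind) for ind in population]
        offspring = []
        while len(offspring) < pop_size:
            p1 = roulette_select(population, fitnesses, rng)
            p2 = roulette_select(population, fitnesses, rng)
            if rng.random() < crossover_rate:
                c1, c2 = one_point_crossover(p1, p2, rng)
            else:
                c1, c2 = p1[:], p2[:]
            offspring.append(mutate(c1, mutation_rate, rng))
            offspring.append(mutate(c2, mutation_rate, rng))
        population = offspring[:pop_size]  # generational replacement
        best = max(population + [best], key=one_max)
    return best
```

Calling `run_ga()` returns the best bit string seen in any generation. Tracking `best` separately matters here because generational replacement discards the parents, so a good solution can otherwise be lost.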

Scatter Search
• Combines both P (population-based) and S (single-solution) metaheuristics
• Select an initial population that satisfies both diversity and high quality. This is the reference set, in which useful information about the global optima is stored in a diverse and elite set of solutions
• The reference set is partitioned into subsets, which are linearly recombined to create weighted centroids of sample-based neighborhoods.
• Use both P and S metaheuristics on these centroids: crossover and mutation, followed by S-metaheuristics (local search), to create new populations
– Evaluate the objective function
– Keep diverse and high-quality solutions
• Update the reference set with the best solutions
• Continue the process until the stopping criteria are met.

Estimation of Distribution Algorithm
• Generate a population of solutions
• Evaluate the objective function of the individuals
• Select the m best individuals using any selection method
• Use the m selected individuals to estimate a probability distribution
• New individuals are generated by sampling this distribution to form the next population
– Also called a non-Darwinian evolutionary algorithm
• Continue until a stopping criterion is reached

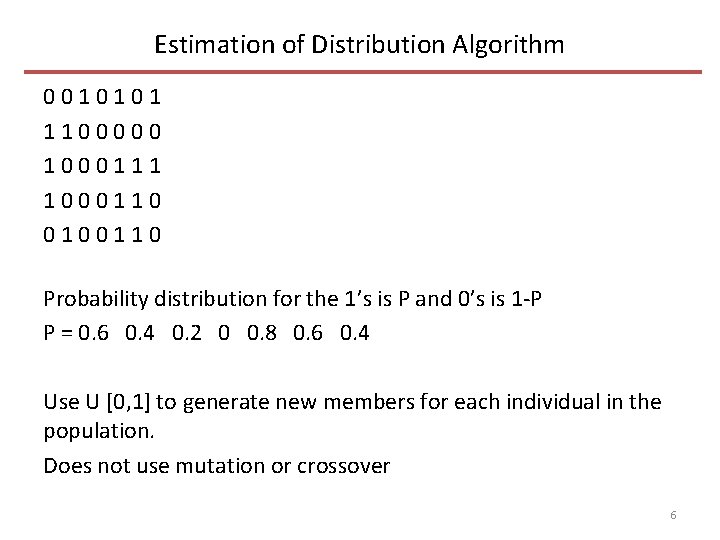

Estimation of Distribution Algorithm
0 0 1 0 1 1 1 0 0 0 1 1 0 0 0 1 1 0
• The probability of a 1 at each position is P, and the probability of a 0 is 1 - P
• P = 0.6 0.4 0.2 0 0.8 0.6 0.4
• Use U[0, 1] draws to generate the bits of each new individual in the population.
• Does not use mutation or crossover
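The estimate-then-sample step can be sketched as below, assuming a binary representation and independent bit positions (a univariate model). The five-individual `selected` set is hypothetical: it was constructed so that its per-position frequencies match the P vector on the slide, since the slide's full population is not recoverable from the text.

```python
import random

def estimate_probabilities(selected):
    """Per-position frequency of 1s among the selected individuals."""
    m = len(selected)
    n_bits = len(selected[0])
    return [sum(ind[j] for ind in selected) / m for j in range(n_bits)]

def sample_individual(probs, rng):
    """Draw u ~ U[0, 1] per position; the bit is 1 when u < P."""
    return [1 if rng.random() < p else 0 for p in probs]

def run_eda(fitness, n_bits=12, pop_size=40, m=10, generations=30, seed=0):
    """Repeat select -> estimate -> sample; no crossover or mutation."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(n_bits)]
                  for _ in range(pop_size)]
    best = max(population, key=fitness)
    for _ in range(generations):
        selected = sorted(population, key=fitness, reverse=True)[:m]
        probs = estimate_probabilities(selected)
        population = [sample_individual(probs, rng) for _ in range(pop_size)]
        best = max(population + [best], key=fitness)
    return best

# Hypothetical selected set (m = 5, 7 bits) matching the slide's P vector.
selected = [
    [1, 1, 1, 0, 1, 1, 1],
    [1, 1, 0, 0, 1, 1, 1],
    [1, 0, 0, 0, 1, 1, 0],
    [0, 0, 0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
probs = estimate_probabilities(selected)
```

Note that a position with P = 0 (the fourth one here) can never produce a 1 again, which is one reason practical variants often keep the probabilities away from 0 and 1.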

Genetic Programming
• More recent approach than GA (Koza, 1992, MIT Press)
• Instead of fixed-length strings as in GA, the individuals in GP are programs: a nonlinear representation based on trees.
• Crossover is based on subtree exchange, and mutation is based on random changes in the tree
• Parent selection is fitness-proportional and replacement is generational.
• One issue is the uncontrolled growth of trees, known as bloat
• Used in machine learning and data mining tasks such as prediction and classification.

Genetic Programming
• GP uses terminal (leaves of a tree) and function (interior nodes) sets
• The objective is to generate programs that perform a task
• The programs grow or shrink in size at each iteration as the search progresses
• Steps
1) Generate an initial population of random compositions of the functions and terminals of the problem (computer programs).
2) Execute each program in the population and assign it a fitness value according to how well it solves the problem.
3) Create a new population of computer programs.
i) Copy the best existing programs
ii) Create new computer programs by mutation
iii) Create new computer programs by crossover
4) The best computer program that appeared in any generation, the best-so-far solution, is designated as the result of genetic programming

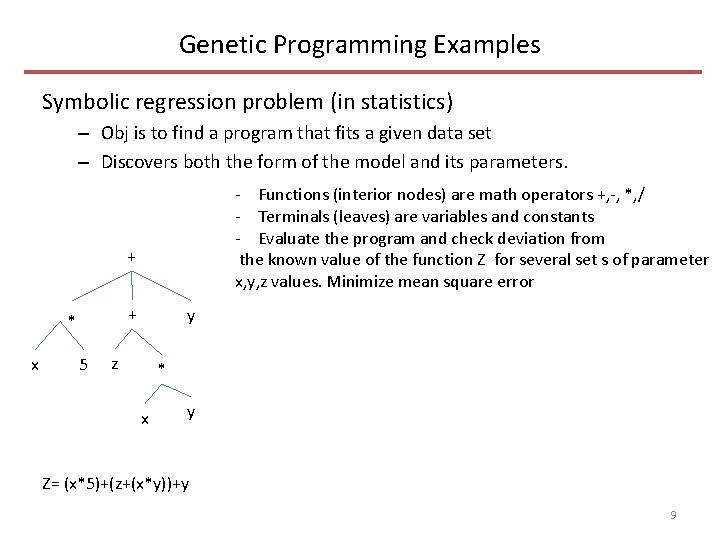

Genetic Programming Examples
Symbolic regression problem (in statistics)
– The objective is to find a program that fits a given data set
– Discovers both the form of the model and its parameters
– Functions (interior nodes) are math operators +, -, *, /
– Terminals (leaves) are variables and constants
– Evaluate the program and check its deviation from the known value of the function Z for several sets of parameter values x, y, z. Minimize the mean square error
– Example program (expression tree): Z = (x*5)+(z+(x*y))+y
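As a rough sketch of how a GP individual for this problem might be stored and scored, the tree for Z = (x*5)+(z+(x*y))+y can be written as nested tuples and evaluated recursively. The tuple encoding, the `evaluate` and `mean_squared_error` helpers, and the sample points are all assumptions for illustration. Division is left out of the function set here to avoid handling division by zero.

```python
import operator

# Function set (interior nodes); "/" omitted in this sketch.
FUNCTIONS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def evaluate(node, env):
    """Evaluate a tree: tuples are function nodes, strings are variables,
    anything else is a constant terminal."""
    if isinstance(node, tuple):
        op, left, right = node
        return FUNCTIONS[op](evaluate(left, env), evaluate(right, env))
    if isinstance(node, str):
        return env[node]
    return node

# Z = (x*5) + (z + (x*y)) + y as a tree
TARGET = ("+", ("+", ("*", "x", 5), ("+", "z", ("*", "x", "y"))), "y")

def mean_squared_error(program, samples):
    """Fitness to minimize: mean squared deviation from the known Z values."""
    errors = [(evaluate(program, env) - z_known) ** 2
              for env, z_known in samples]
    return sum(errors) / len(errors)

# Hypothetical data set generated from the known function Z.
points = [{"x": 1, "y": 2, "z": 3}, {"x": 0, "y": 1, "z": 4}, {"x": 2, "y": 2, "z": 0}]
samples = [(env, evaluate(TARGET, env)) for env in points]

candidate = ("+", ("*", "x", 5), "y")  # a smaller program missing two terms
print(mean_squared_error(TARGET, samples), mean_squared_error(candidate, samples))
```

The target tree scores an error of zero on its own data, while the smaller candidate does not. GP would search over such trees using subtree crossover and mutation.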

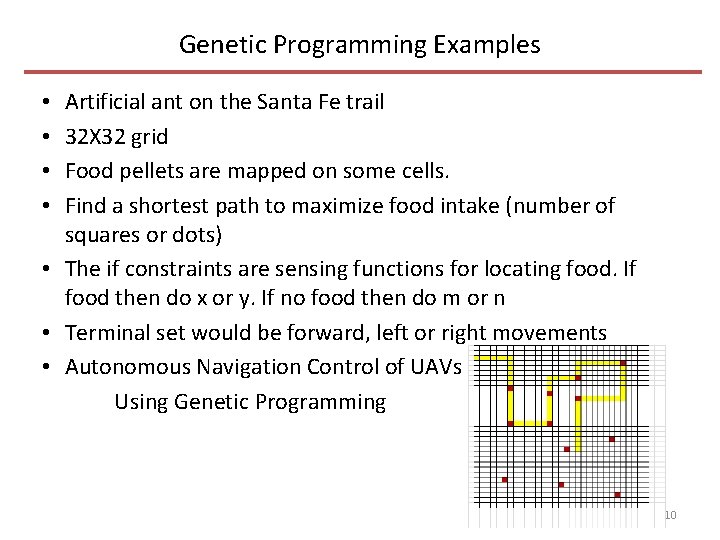

Genetic Programming Examples
Artificial ant on the Santa Fe trail
• 32 x 32 grid. Food pellets are placed on some cells.
• Find a shortest path that maximizes food intake (number of squares or dots)
• The "if" conditions are sensing functions for locating food: if food, then do x or y; if no food, then do m or n
• The terminal set would be forward, left, or right movements
• Autonomous Navigation Control of UAVs Using Genetic Programming

Genetic Programming Examples
• A program to control the flow of water through a network of water sprinklers
• There are several sprinklers on a golf course and several valves that can be turned on or off to let water flow through them.
• The objective (fitness function) is to achieve the correct amount of water output from each sprinkler and a uniform distribution (pressure). Measure fitness by placing measuring devices (e.g., rain gauges) that record the amount of water collected, then minimize the standard deviation among these devices.
• Opening too many valves at a time will drop the pressure in the system (some areas may not get water), and opening too few will result in excess flow due to high pressure.
• The terminal set is the sprinklers (leaves) and the function set (interior nodes) is the valves
• The solution is to generate programs that open and close valves to achieve the above objective.
• There are many solutions to evaluate
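One possible way to turn the gauge readings into a single fitness value is sketched below. The slide only says to minimize the standard deviation among the devices. Adding the distance between the mean reading and a `target` amount, so that "correct amount" is also rewarded, is an assumption of this sketch, as are the function name and the equal weighting of the two terms.

```python
import statistics

def sprinkler_fitness(gauge_readings, target):
    """Lower is better: spread across gauges plus distance from the target amount."""
    spread = statistics.pstdev(gauge_readings)                  # uniformity
    shortfall = abs(statistics.mean(gauge_readings) - target)   # correct amount
    return spread + shortfall

even = sprinkler_fitness([10.0, 10.0, 10.0, 10.0], target=10.0)
uneven = sprinkler_fitness([4.0, 16.0, 9.0, 11.0], target=10.0)
```

Both reading sets have the same mean, so only the spread term separates them, and the evenly watered course scores better (lower).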

Swarm Intelligence
• Algorithms inspired by the collective behavior of species such as ants, bees, and termites
• Inspired by the social behavior of the species as they compete or forage for food
• Particles are
– Simple, non-sophisticated agents
– Agents that cooperate by indirect communication
– Agents that move around in the decision space
• Non-evolutionary; uses shared memory

Ant Colony Optimization
• Ants transport food and find shortest paths
– Use simple communication methods
– Leave a chemical trail that is both olfactive and volatile
– The trail is also called a pheromone trail
– The trail guides the other ants toward the food
– The larger the amount of pheromone, the larger the probability that a trail will be chosen
– The pheromone evaporates over time
• In optimization
– Initialize pheromone trails
– Construct solutions using the pheromone trails
– Update the pheromone using the generated solutions (evaporation and reinforcement)

Ant Colony Optimization
• The pheromone trails memorize the characteristics of good generated solutions, which guides the construction of new solutions
• The trails change dynamically to reflect acquired knowledge
• Evaporation phase: the trail decreases automatically. Each pheromone value is reduced by a fixed proportion
– The goal is to keep all the ants from converging prematurely on a single good solution
– Also helps in diversification
• Reinforcement phase: the pheromone trail is updated according to the generated solutions
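The two update phases, and the "more pheromone, higher probability" rule, can be sketched as follows. The specific forms are assumptions borrowed from the common Ant System formulation rather than taken from these slides: evaporation multiplies every trail by (1 - rho), and reinforcement deposits `q / tour_length` on each edge of a tour. The choice rule below uses pheromone only. ACO for TSP usually also weights by a distance-based heuristic, which is omitted here.

```python
def edge(a, b):
    """Undirected edge key, so (a, b) and (b, a) share one pheromone value."""
    return (a, b) if a < b else (b, a)

def transition_probabilities(pheromone, current, candidates):
    """Probability of moving to each candidate, proportional to pheromone."""
    weights = [pheromone[edge(current, c)] for c in candidates]
    total = sum(weights)
    return [w / total for w in weights]

def evaporate(pheromone, rho):
    """Evaporation phase: reduce every trail by the fixed proportion rho."""
    return {e: (1.0 - rho) * tau for e, tau in pheromone.items()}

def reinforce(pheromone, tour, tour_length, q=1.0):
    """Reinforcement phase: shorter tours deposit more pheromone per edge."""
    updated = dict(pheromone)
    for a, b in zip(tour, tour[1:] + tour[:1]):  # closed tour
        e = edge(a, b)
        updated[e] = updated.get(e, 0.0) + q / tour_length
    return updated

pheromone = {(0, 1): 1.0, (1, 2): 1.0, (0, 2): 1.0}
pheromone = evaporate(pheromone, rho=0.5)
pheromone = reinforce(pheromone, tour=[0, 1, 2], tour_length=4.0)
```

Both functions return a new dictionary rather than mutating the input, which keeps the two phases easy to test separately.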

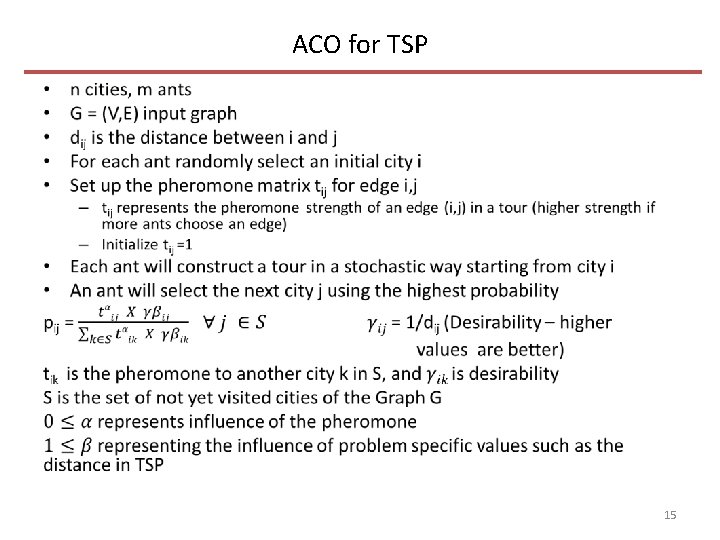

ACO for TSP

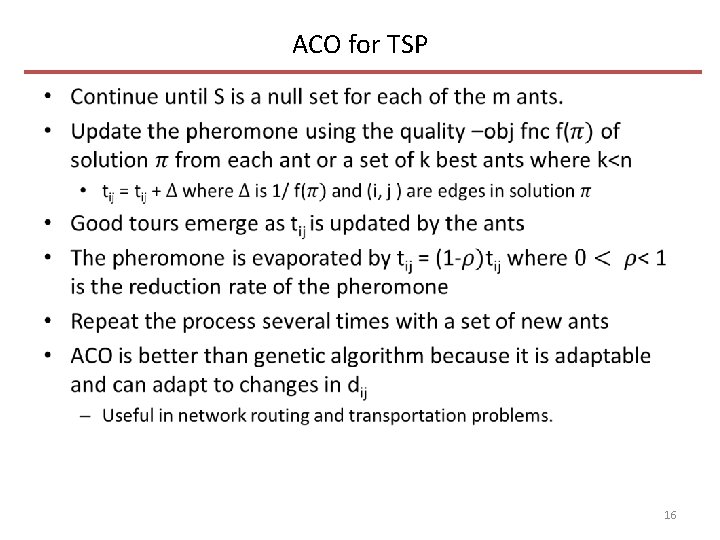

ACO for TSP

Particle Swarm Optimization
• Stochastic P-metaheuristic
• PSO does not use the gradient of the problem being optimized
• Mimics the social behavior of swarms, such as birds or fish that go after food
• A coordinated behavior using local movement emerges without any central control
• A swarm consists of N particles flying in a D-dimensional space, where D is the number of decision variables; the algorithm runs over iterations t = 1, 2, …
• Each particle i is a candidate solution and is represented by a vector xi, its position in the decision space
• Each particle has a velocity vi that gives the direction and size (called the step) of the update to the position xi; the distance travelled is from xi(t-1) to xi(t)
• Each particle successively adjusts its position toward the global optimum using the following two factors

PSO
• 1) The best position visited by itself: pi = best of (xi(1), xi(2), …, xi(t-1))
• 2) The best position visited by the whole swarm: pg = best of (xg(1), …, xg(t-1))
• Neighborhood: a topology is associated with the swarm
– Global: the whole population (complete graph)
– Local: the neighborhood is a set of directly connected particles, for example a ring formation where every particle is connected to two others
• At each iteration t, each particle updates
– Velocity: vi(t) = w vi(t-1) + r1 C1 (pi - xi(t-1)) + r2 C2 (pg - xi(t-1)), where r1 and r2 are two random variables on [0, 1]. The constant C1 is the cognitive learning factor, which represents the attraction that a particle has towards its own success, and C2 is the social learning factor, which represents the attraction that a particle has towards the success of its neighbors. w is a weight on the previous velocity. A large w will encourage diversification and a small value will encourage intensification

PSO
• Position update: xi(t) = xi(t-1) + vi(t)
• Update the best positions found
• For a minimization problem
– If f(xi) < f(pi) then pi = xi
– If f(xi) < f(pg) then pg = xi
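The velocity, position, and best-position updates can be combined into a short minimization loop. This is a sketch under stated assumptions: the global (complete graph) neighborhood, the sphere test function, zero initial velocities, and the values of `w`, `c1`, `c2`, and `bounds` are illustrative choices, not taken from the slides.

```python
import random

def sphere(x):
    """Toy objective (an assumption): minimum value 0 at the origin."""
    return sum(v * v for v in x)

def pso(objective, dim=2, n_particles=20, iterations=100,
        w=0.7, c1=1.5, c2=1.5, bounds=(-5.0, 5.0), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    positions = [[rng.uniform(lo, hi) for _ in range(dim)]
                 for _ in range(n_particles)]
    velocities = [[0.0] * dim for _ in range(n_particles)]
    personal_best = [p[:] for p in positions]                # pi
    personal_best_val = [objective(p) for p in positions]
    g = min(range(n_particles), key=lambda i: personal_best_val[i])
    global_best = personal_best[g][:]                        # pg
    global_best_val = personal_best_val[g]
    for _ in range(iterations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # vi(t) = w vi(t-1) + r1 C1 (pi - xi) + r2 C2 (pg - xi)
                velocities[i][d] = (
                    w * velocities[i][d]
                    + r1 * c1 * (personal_best[i][d] - positions[i][d])
                    + r2 * c2 * (global_best[d] - positions[i][d])
                )
                # xi(t) = xi(t-1) + vi(t)
                positions[i][d] += velocities[i][d]
            value = objective(positions[i])
            if value < personal_best_val[i]:                 # update pi
                personal_best[i] = positions[i][:]
                personal_best_val[i] = value
            if value < global_best_val:                      # update pg
                global_best = positions[i][:]
                global_best_val = value
    return global_best, global_best_val

best_position, best_value = pso(sphere)
```

Raising `w` makes particles overshoot and explore more (diversification), while lowering it makes them settle around the best known positions (intensification). A ring neighborhood would replace `global_best` with the best position among each particle's two neighbors.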
