PLB A Programming Language for the Blues George

PL/B: A Programming Language for the Blues George Almasi, Luiz De. Rose, Jose Moreira, and David Padua

Objective of this Project • To develop a programming system for the Blue Gene series (and distributed-memory machines in general) that is: – Convenient to use for program development and maintenance. Resulting parallel programs should be very readable – easy to modify. – Not too difficult to implement so that the system could be completed in a reasonable time. • Main focus is numerical computing.

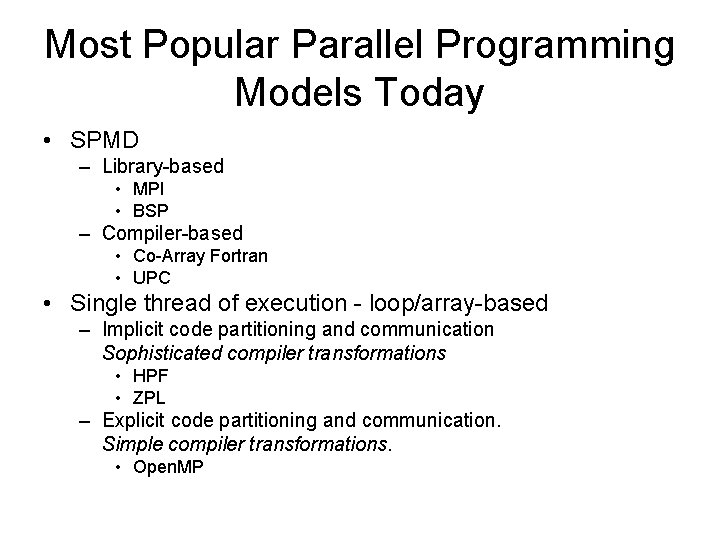

Most Popular Parallel Programming Models Today • SPMD – Library-based • MPI • BSP – Compiler-based • Co-Array Fortran • UPC • Single thread of execution - loop/array-based – Implicit code partitioning and communication Sophisticated compiler transformations • HPF • ZPL – Explicit code partitioning and communication. Simple compiler transformations. • Open. MP

Design Principles (1 of 2) • Wanted to avoid the SPMD programming model. – In its more general form can lead to 4 D spaghetti code. More structure is needed. – The most popular of the SPMD implementations, MPI, is cumbersome to use, in part due to the lack of compiler support. It has been called the “assembly language of parallel computing”. – It is difficult to reason about SPMD programs. When we reason about them, we rely on an (implicit) global view of communication and computation. • Wanted to avoid the compiler complexity and limitations that led to the failure of HPF.

Design Principles (2 of 2) • Open. MP is a good model, but Blue Gene is not a shared -memory machine. Open. MP could be implemented on top of Tread. Marks, but efficient implementations would require untested, sophisticated compilers. • We propose a few language extensions and a programming model based on a single thread of execution. We would like the extensions to require only simple transformations similar to those used by Open. MP compilers. • We would like the extensions to be of a general nature so that they can be applied to any conventional language.

MATLAB Implementation • • We will present next our solution in the context of MATLAB. There at least two reasons why developing a parallel extension to MATLAB is a good idea. 1. MATLAB is an excellent language for prototyping conventional algorithms. There is nothing equivalent for parallel algorithms. • • Programmers of parallel machines should appreciate the MATLAB environment as much as conventional programmers. In fact, during a site visit to TJ Watson, several government representatives requested that a parallel MATLAB be included in the PERCS project. 2. It is relatively simple to develop a prototype of the proposed extensions in MATLAB.

PL/B Data Types and Statements • The constructs of PL/B are – A new type of objects: Hierarchically tiled arrays. – Array (and hierarchical array) operations and assignment statements similar to those found in MATLAB, Fortran 90, and APL. • Hierarchically tiled arrays (HTAs) are used – to specify data distribution in parallel programs and – to facilitate the writing of blocked sequential algorithms (for locality).

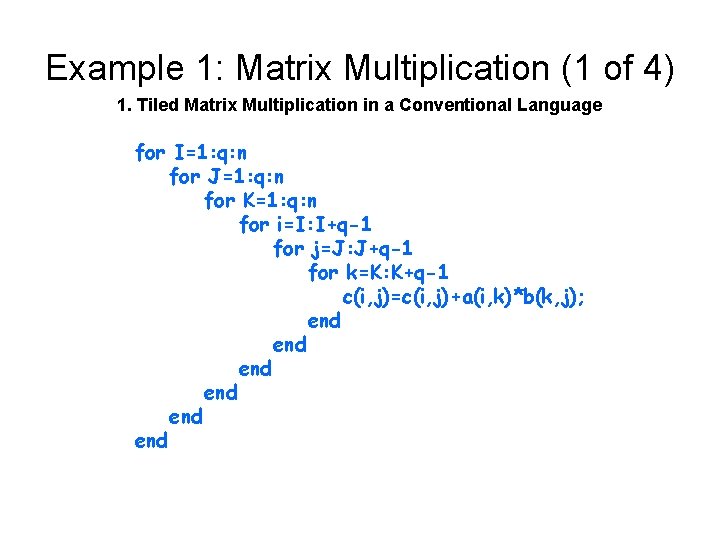

Example 1: Matrix Multiplication (1 of 4) 1. Tiled Matrix Multiplication in a Conventional Language for I=1: q: n for J=1: q: n for K=1: q: n for i=I: I+q-1 for j=J: J+q-1 for k=K: K+q-1 c(i, j)=c(i, j)+a(i, k)*b(k, j); end end end

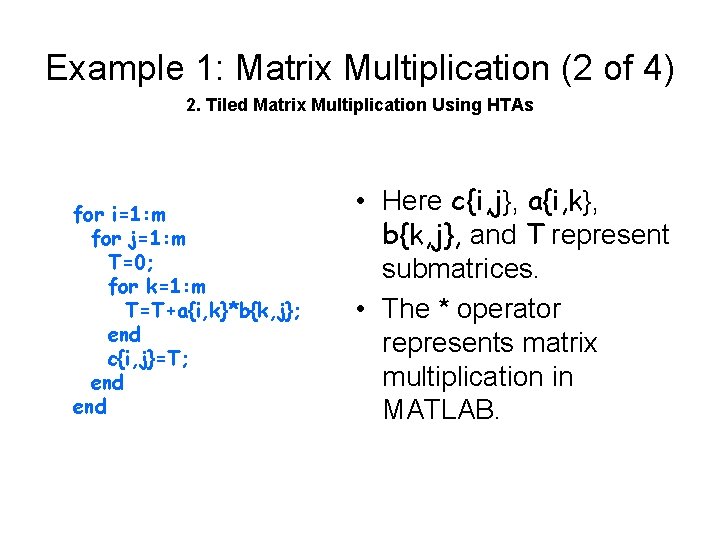

Example 1: Matrix Multiplication (2 of 4) 2. Tiled Matrix Multiplication Using HTAs for i=1: m for j=1: m T=0; for k=1: m T=T+a{i, k}*b{k, j}; end c{i, j}=T; end • Here c{i, j}, a{i, k}, b{k, j}, and T represent submatrices. • The * operator represents matrix multiplication in MATLAB.

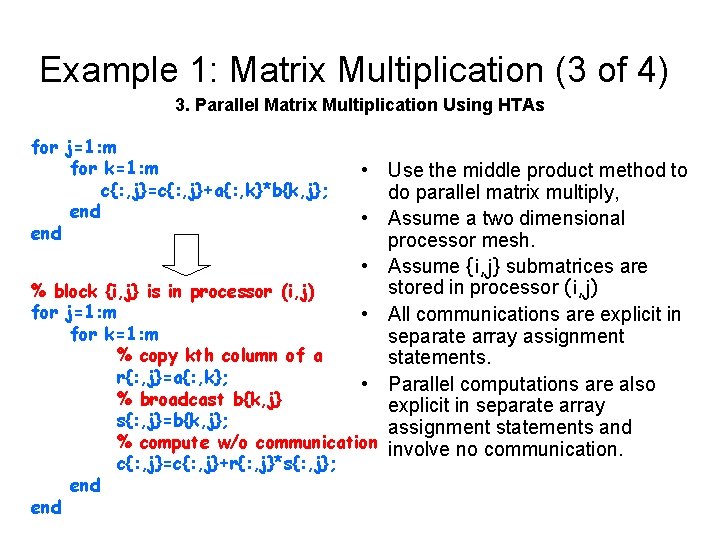

Example 1: Matrix Multiplication (3 of 4) 3. Parallel Matrix Multiplication Using HTAs for j=1: m for k=1: m c{: , j}=c{: , j}+a{: , k}*b{k, j}; end • Use the middle product method to do parallel matrix multiply, • Assume a two dimensional processor mesh. • Assume {i, j} submatrices are stored in processor (i, j) % block {i, j} is in processor (i, j) for j=1: m • All communications are explicit in for k=1: m separate array assignment % copy kth column of a statements. r{: , j}=a{: , k}; • Parallel computations are also % broadcast b{k, j} explicit in separate array s{: , j}=b{k, j}; assignment statements and % compute w/o communication involve no communication. end c{: , j}=c{: , j}+r{: , j}*s{: , j};

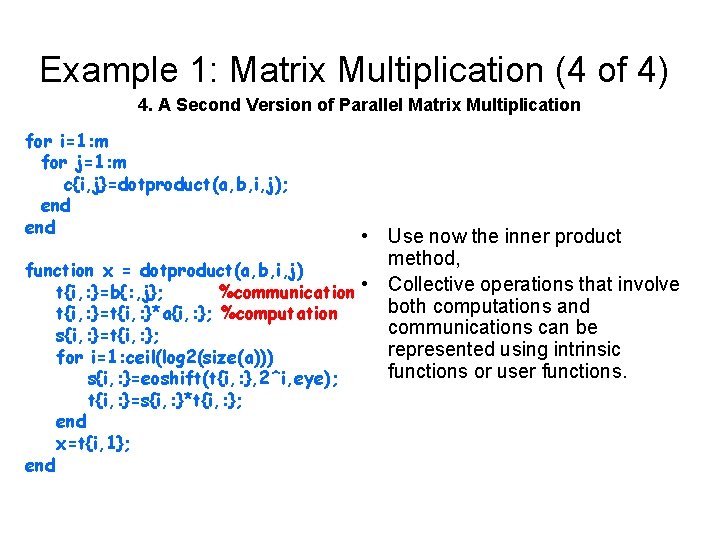

Example 1: Matrix Multiplication (4 of 4) 4. A Second Version of Parallel Matrix Multiplication for i=1: m for j=1: m c{i, j}=dotproduct(a, b, i, j); end • Use now the inner product method, function x = dotproduct(a, b, i, j) t{i, : }=b{: , j}; %communication • Collective operations that involve both computations and t{i, : }=t{i, : }*a{i, : }; %computation communications can be s{i, : }=t{i, : }; represented using intrinsic for i=1: ceil(log 2(size(a))) functions or user functions. s{i, : }=eoshift(t{i, : }, 2^i, eye); t{i, : }=s{i, : }*t{i, : }; end x=t{i, 1};

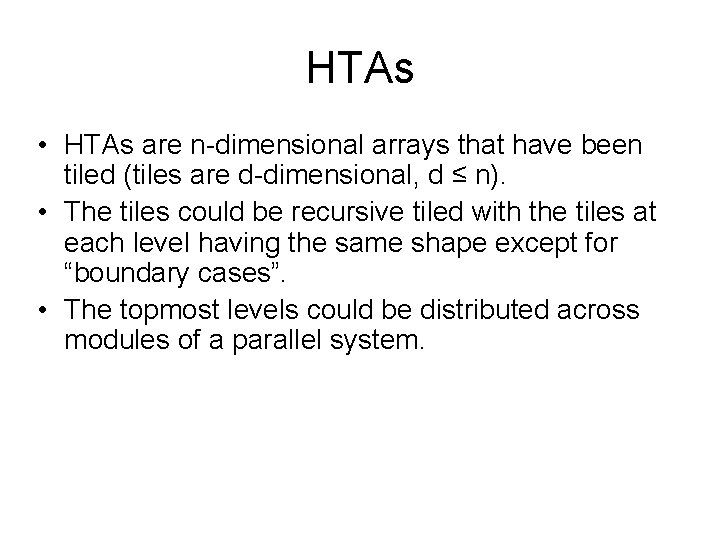

HTAs • HTAs are n-dimensional arrays that have been tiled (tiles are d-dimensional, d ≤ n). • The tiles could be recursive tiled with the tiles at each level having the same shape except for “boundary cases”. • The topmost levels could be distributed across modules of a parallel system.

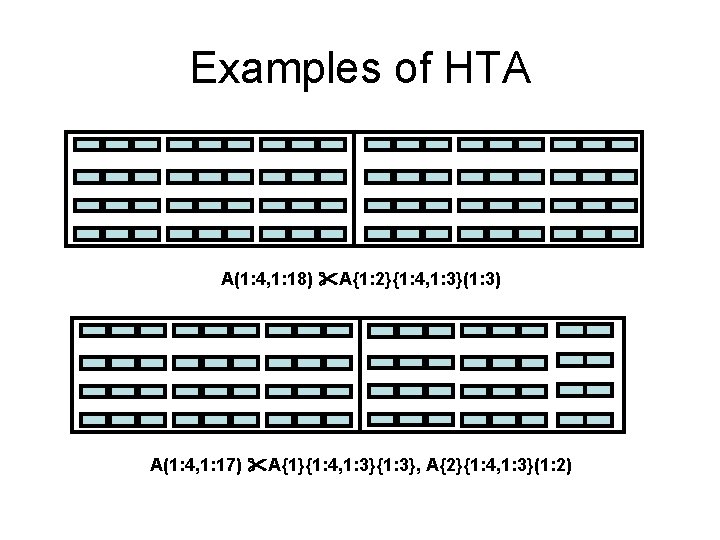

Examples of HTA A(1: 4, 1: 18) A{1: 2}{1: 4, 1: 3}(1: 3) A(1: 4, 1: 17) A{1}{1: 4, 1: 3}{1: 3}, A{2}{1: 4, 1: 3}(1: 2)

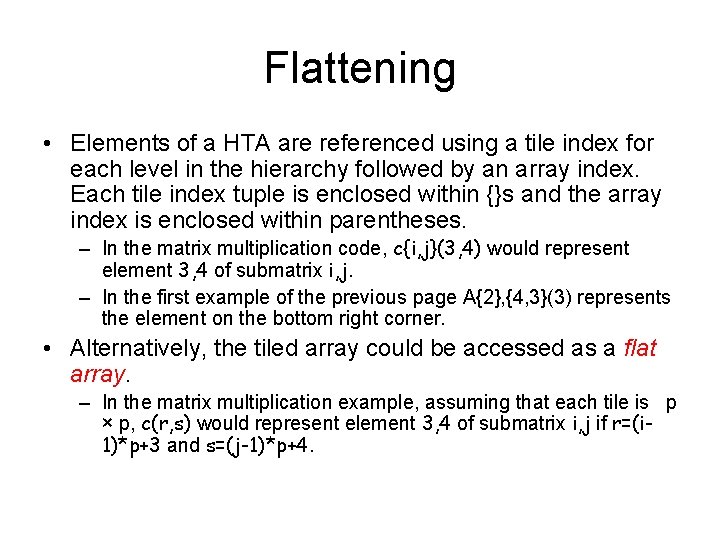

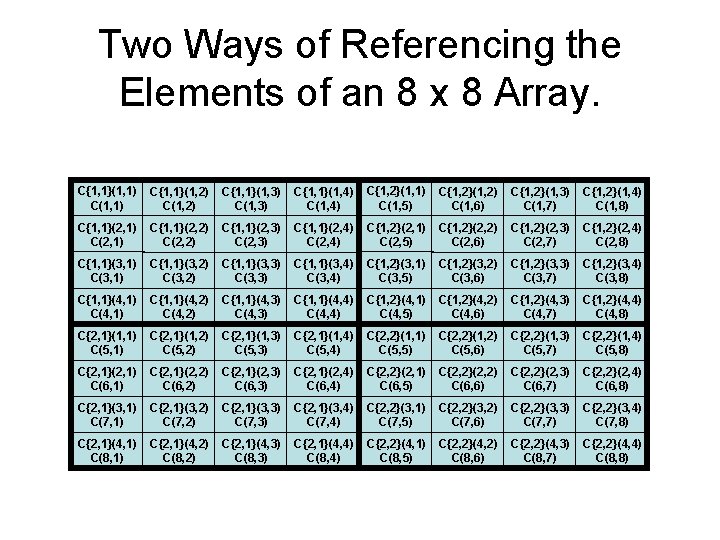

Flattening • Elements of a HTA are referenced using a tile index for each level in the hierarchy followed by an array index. Each tile index tuple is enclosed within {}s and the array index is enclosed within parentheses. – In the matrix multiplication code, c{i, j}(3, 4) would represent element 3, 4 of submatrix i, j. – In the first example of the previous page A{2}, {4, 3}(3) represents the element on the bottom right corner. • Alternatively, the tiled array could be accessed as a flat array. – In the matrix multiplication example, assuming that each tile is p × p, c(r, s) would represent element 3, 4 of submatrix i, j if r=(i 1)*p+3 and s=(j-1)*p+4.

Two Ways of Referencing the Elements of an 8 x 8 Array. C{1, 1}(1, 1) C{1, 1}(1, 2) C{1, 1}(1, 3) C{1, 1}(1, 4) C{1, 2}(1, 1) C(1, 5) C{1, 2}(1, 2) C(1, 6) C{1, 2}(1, 3) C(1, 7) C{1, 2}(1, 4) C(1, 8) C{1, 1}(2, 1) C{1, 1}(2, 2) C{1, 1}(2, 3) C{1, 1}(2, 4) C{1, 2}(2, 1) C(2, 5) C{1, 2}(2, 2) C(2, 6) C{1, 2}(2, 3) C(2, 7) C{1, 2}(2, 4) C(2, 8) C{1, 1}(3, 1) C{1, 1}(3, 2) C{1, 1}(3, 3) C{1, 1}(3, 4) C{1, 2}(3, 1) C(3, 5) C{1, 2}(3, 2) C(3, 6) C{1, 2}(3, 3) C(3, 7) C{1, 2}(3, 4) C(3, 8) C{1, 1}(4, 1) C{1, 1}(4, 2) C{1, 1}(4, 3) C{1, 1}(4, 4) C{1, 2}(4, 1) C(4, 5) C{1, 2}(4, 2) C(4, 6) C{1, 2}(4, 3) C(4, 7) C{1, 2}(4, 4) C(4, 8) C{2, 1}(1, 1) C(5, 1) C{2, 1}(1, 2) C(5, 2) C{2, 1}(1, 3) C(5, 3) C{2, 1}(1, 4) C(5, 4) C{2, 2}(1, 1) C(5, 5) C{2, 2}(1, 2) C(5, 6) C{2, 2}(1, 3) C(5, 7) C{2, 2}(1, 4) C(5, 8) C{2, 1}(2, 1) C(6, 1) C{2, 1}(2, 2) C(6, 2) C{2, 1}(2, 3) C(6, 3) C{2, 1}(2, 4) C(6, 4) C{2, 2}(2, 1) C(6, 5) C{2, 2}(2, 2) C(6, 6) C{2, 2}(2, 3) C(6, 7) C{2, 2}(2, 4) C(6, 8) C{2, 1}(3, 1) C(7, 1) C{2, 1}(3, 2) C(7, 2) C{2, 1}(3, 3) C(7, 3) C{2, 1}(3, 4) C(7, 4) C{2, 2}(3, 1) C(7, 5) C{2, 2}(3, 2) C(7, 6) C{2, 2}(3, 3) C(7, 7) C{2, 2}(3, 4) C(7, 8) C{2, 1}(4, 1) C(8, 1) C{2, 1}(4, 2) C(8, 2) C{2, 1}(4, 3) C(8, 3) C{2, 1}(4, 4) C(8, 4) C{2, 2}(4, 1) C(8, 5) C{2, 2}(4, 2) C(8, 6) C{2, 2}(4, 3) C(8, 7) C{2, 2}(4, 4) C(8, 8)

Parallel Communication and Computation in PL/B (1 of 2) • PL/B programs are single-threaded and contain array operations on HTAs. The sequential part of the program • Like in HPF and MPI processors can be arranged in different forms. In MPF and MPI, processors are arranged into meshes, in PL/B we also consider (virtual) nodes so that processors can be arranged into hierarchical meshes. • The top levels of HTAs can be distributed onto a subset of nodes.

Parallel Communication and Computation in PL/B (1 of 2) • Array operations on HTAs can represent communication or computation. – Assignment statements where all HTA indices are identical are computations executed in the home of each of the HTA elements involved. – Assignment statements where this is not the case represent communication operations.

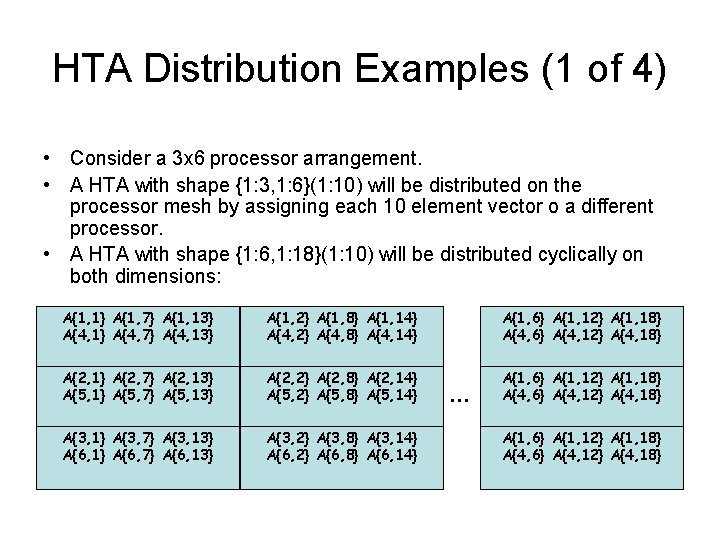

HTA Distribution Examples (1 of 4) • Consider a 3 x 6 processor arrangement. • A HTA with shape {1: 3, 1: 6}(1: 10) will be distributed on the processor mesh by assigning each 10 element vector o a different processor. • A HTA with shape {1: 6, 1: 18}(1: 10) will be distributed cyclically on both dimensions: A{1, 1} A{1, 7} A{1, 13} A{4, 1} A{4, 7} A{4, 13} A{1, 2} A{1, 8} A{1, 14} A{4, 2} A{4, 8} A{4, 14} A{1, 6} A{1, 12} A{1, 18} A{4, 6} A{4, 12} A{4, 18} A{2, 1} A{2, 7} A{2, 13} A{5, 1} A{5, 7} A{5, 13} A{2, 2} A{2, 8} A{2, 14} A{5, 2} A{5, 8} A{5, 14} A{1, 6} A{1, 12} A{1, 18} A{4, 6} A{4, 12} A{4, 18} A{3, 1} A{3, 7} A{3, 13} A{6, 1} A{6, 7} A{6, 13} A{3, 2} A{3, 8} A{3, 14} A{6, 2} A{6, 8} A{6, 14} … A{1, 6} A{1, 12} A{1, 18} A{4, 6} A{4, 12} A{4, 18}

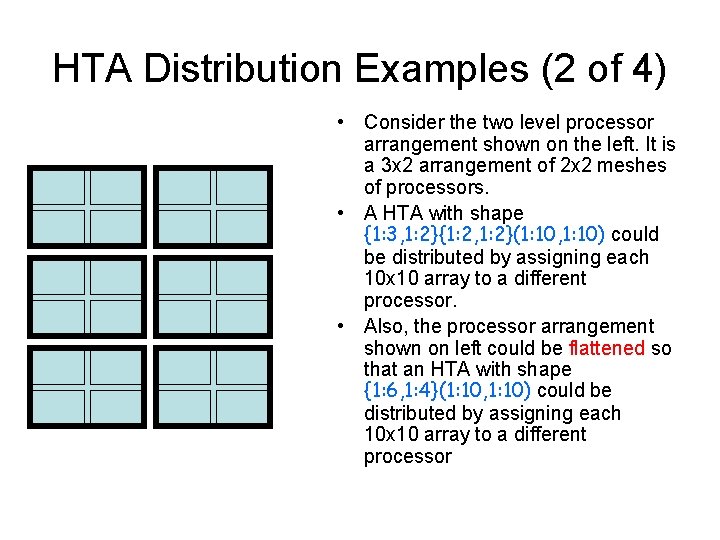

HTA Distribution Examples (2 of 4) • Consider the two level processor arrangement shown on the left. It is a 3 x 2 arrangement of 2 x 2 meshes of processors. • A HTA with shape {1: 3, 1: 2}{1: 2, 1: 2}(1: 10, 1: 10) could be distributed by assigning each 10 x 10 array to a different processor. • Also, the processor arrangement shown on left could be flattened so that an HTA with shape {1: 6, 1: 4}(1: 10, 1: 10) could be distributed by assigning each 10 x 10 array to a different processor

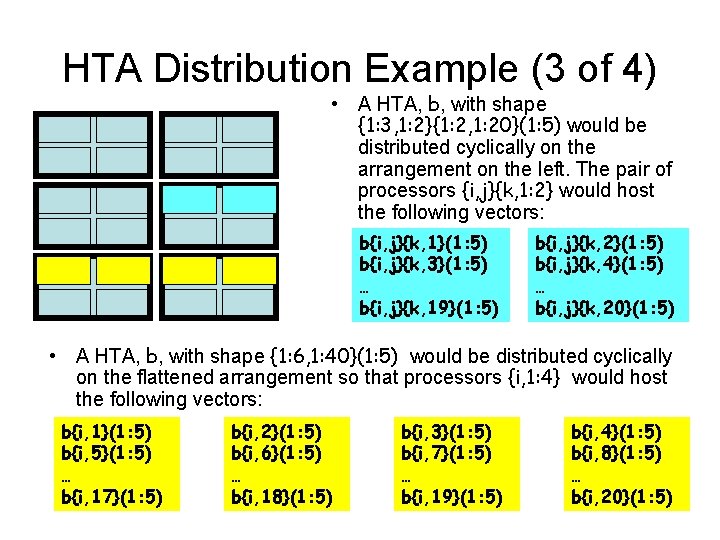

HTA Distribution Example (3 of 4) • A HTA, b, with shape {1: 3, 1: 2}{1: 2, 1: 20}(1: 5) would be distributed cyclically on the arrangement on the left. The pair of processors {i, j}{k, 1: 2} would host the following vectors: b{i, j}{k, 1}(1: 5) b{i, j}{k, 3}(1: 5) … b{i, j}{k, 19}(1: 5) b{i, j}{k, 2}(1: 5) b{i, j}{k, 4}(1: 5) … b{i, j}{k, 20}(1: 5) • A HTA, b, with shape {1: 6, 1: 40}(1: 5) would be distributed cyclically on the flattened arrangement so that processors {i, 1: 4} would host the following vectors: b{i, 1}(1: 5) b{i, 5}(1: 5) … b{i, 17}(1: 5) b{i, 2}(1: 5) b{i, 6}(1: 5) … b{i, 18}(1: 5) b{i, 3}(1: 5) b{i, 7}(1: 5) … b{i, 19}(1: 5) b{i, 4}(1: 5) b{i, 8}(1: 5) … b{i, 20}(1: 5)

Advantages of the PL/B Programming Model • We believe that by relying on a single thread our programming model facilitates the transition from sequential to parallel form. • In fact, a conventional program can be incrementally modified by converting one data structure at a time into distributed cell array form (cf. Open. MP).

Additional Examples • We now present several additional examples of PL/B • Except where indicated, we assume that: – Each HTA has a single level of tiling, – All HTAs have identical sizes and shapes, – The HTAs are identically distributed on a processor mesh that matches in size and shape all HTAs. • Our objective in these examples is to show the expressiveness of the language for both dense and sparse computations.

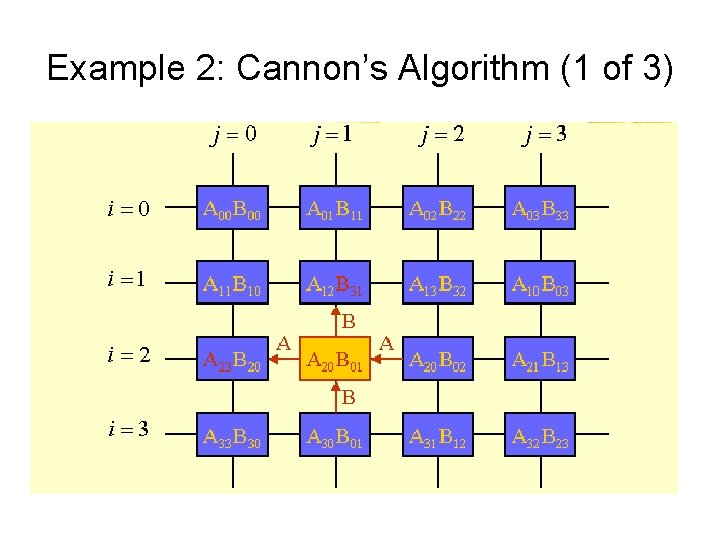

Example 2: Cannon’s Algorithm (1 of 3)

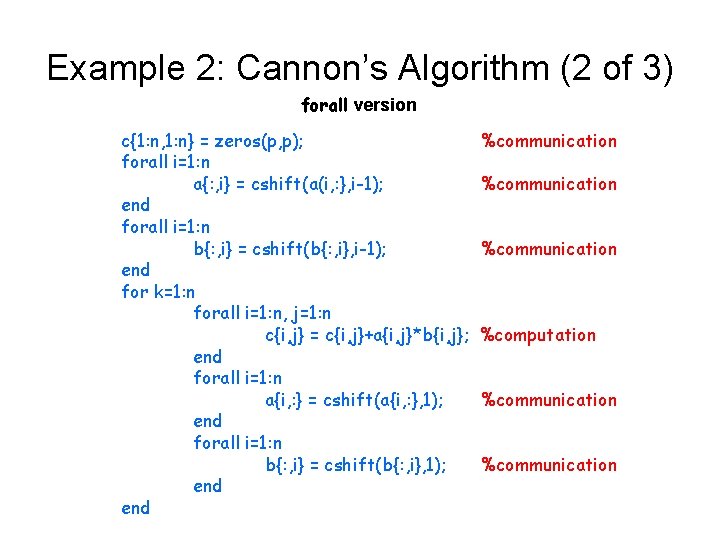

Example 2: Cannon’s Algorithm (2 of 3) forall version c{1: n, 1: n} = zeros(p, p); forall i=1: n a{: , i} = cshift(a(i, : }, i-1); end forall i=1: n b{: , i} = cshift(b{: , i}, i-1); end for k=1: n forall i=1: n, j=1: n c{i, j} = c{i, j}+a{i, j}*b{i, j}; end forall i=1: n a{i, : } = cshift(a{i, : }, 1); end forall i=1: n b{: , i} = cshift(b{: , i}, 1); end %communication %computation %communication

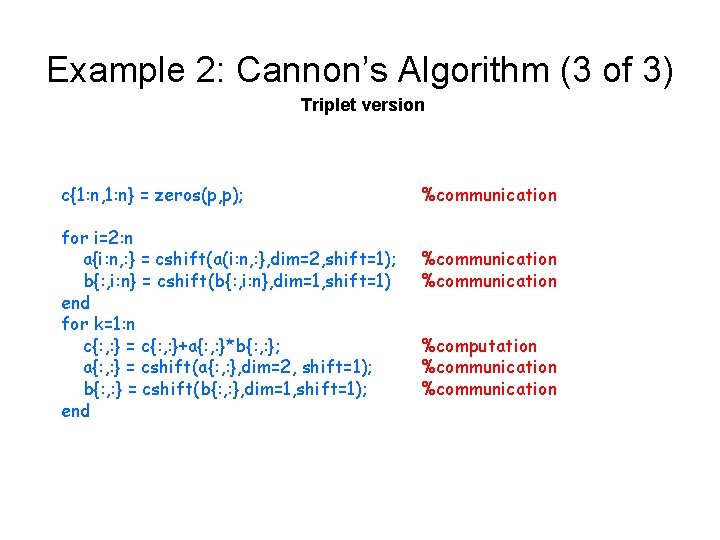

Example 2: Cannon’s Algorithm (3 of 3) Triplet version c{1: n, 1: n} = zeros(p, p); for i=2: n a{i: n, : } = cshift(a(i: n, : }, dim=2, shift=1); b{: , i: n} = cshift(b{: , i: n}, dim=1, shift=1) end for k=1: n c{: , : } = c{: , : }+a{: , : }*b{: , : }; a{: , : } = cshift(a{: , : }, dim=2, shift=1); b{: , : } = cshift(b{: , : }, dim=1, shift=1); end %communication %computation %communication

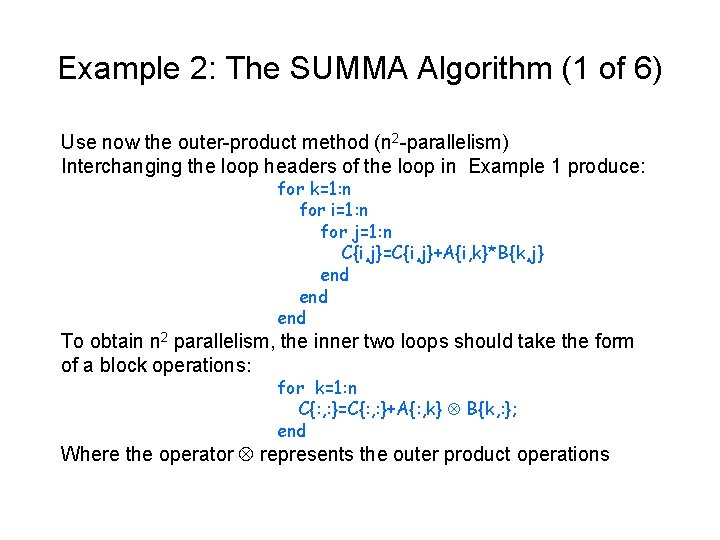

Example 2: The SUMMA Algorithm (1 of 6) Use now the outer-product method (n 2 -parallelism) Interchanging the loop headers of the loop in Example 1 produce: for k=1: n for i=1: n for j=1: n C{i, j}=C{i, j}+A{i, k}*B{k, j} end end To obtain n 2 parallelism, the inner two loops should take the form of a block operations: for k=1: n C{: , : }=C{: , : }+A{: , k} B{k, : }; end Where the operator represents the outer product operations

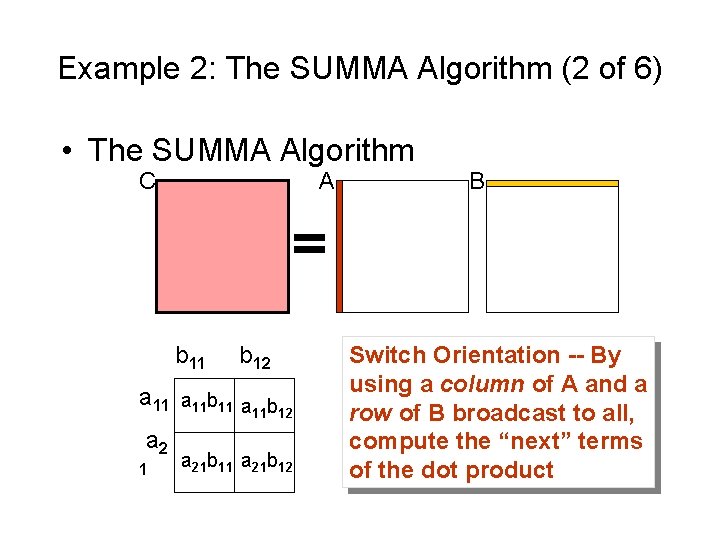

Example 2: The SUMMA Algorithm (2 of 6) • The SUMMA Algorithm C A b 11 b 12 a 11 b 11 a b 11 12 a 2 1 a 21 b 12 B Switch Orientation -- By using a column of A and a row of B broadcast to all, compute the “next” terms of the dot product

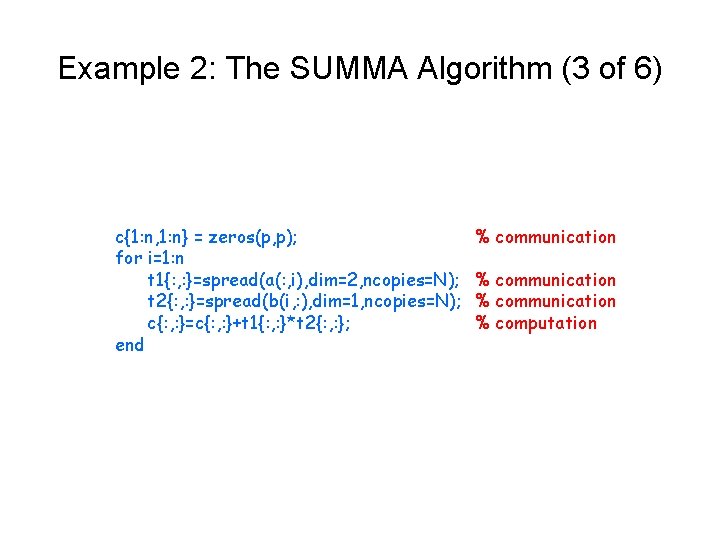

Example 2: The SUMMA Algorithm (3 of 6) c{1: n, 1: n} = zeros(p, p); for i=1: n t 1{: , : }=spread(a(: , i), dim=2, ncopies=N); t 2{: , : }=spread(b(i, : ), dim=1, ncopies=N); c{: , : }=c{: , : }+t 1{: , : }*t 2{: , : }; end % communication % computation

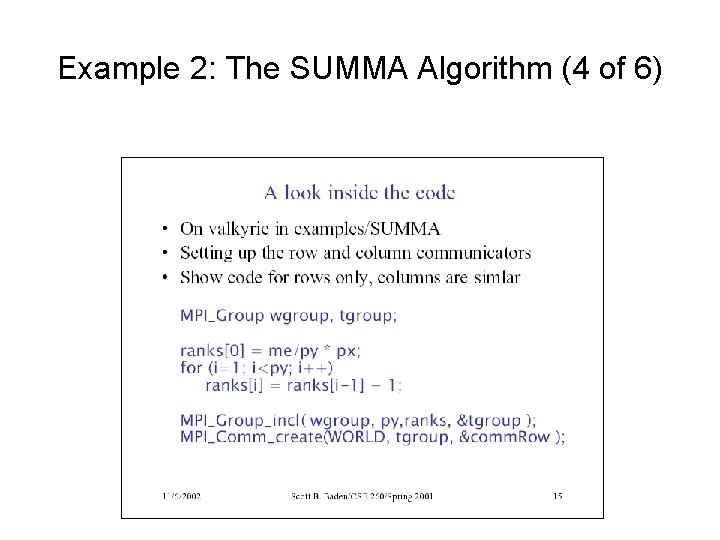

Example 2: The SUMMA Algorithm (4 of 6)

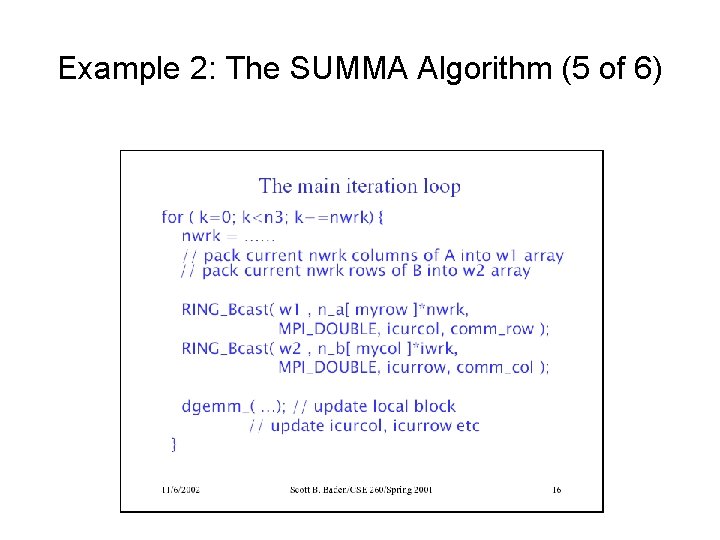

Example 2: The SUMMA Algorithm (5 of 6)

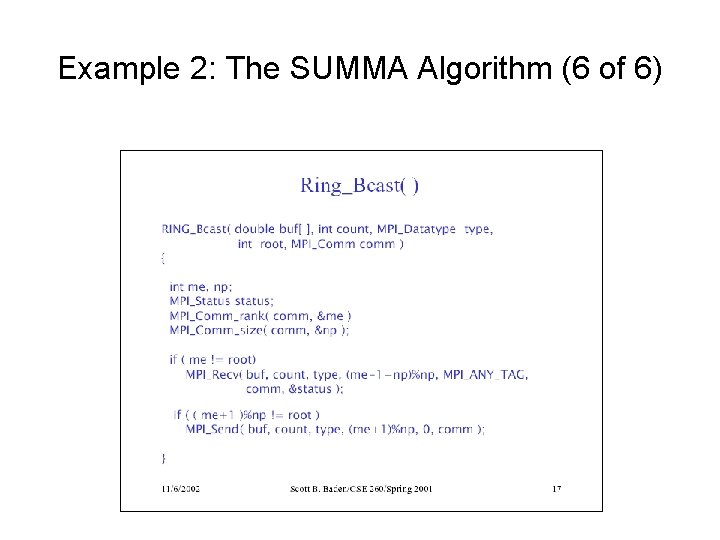

Example 2: The SUMMA Algorithm (6 of 6)

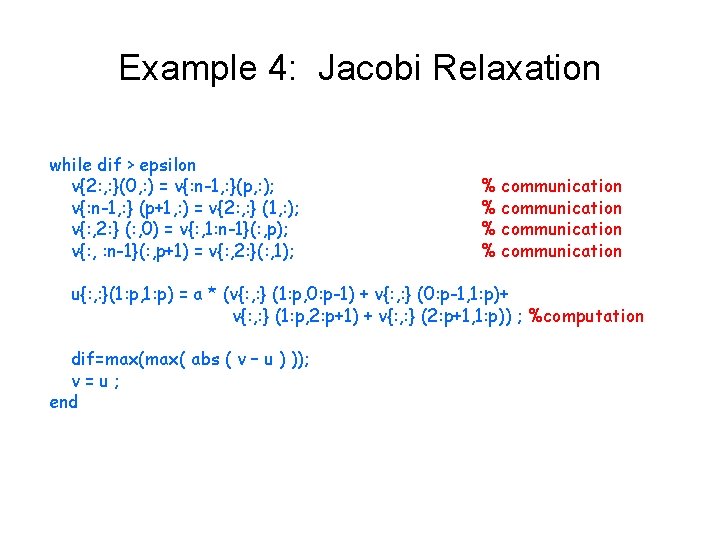

Example 4: Jacobi Relaxation while dif > epsilon v{2: , : }(0, : ) = v{: n-1, : }(p, : ); v{: n-1, : } (p+1, : ) = v{2: , : } (1, : ); v{: , 2: } (: , 0) = v{: , 1: n-1}(: , p); v{: , : n-1}(: , p+1) = v{: , 2: }(: , 1); % communication u{: , : }(1: p, 1: p) = a * (v{: , : } (1: p, 0: p-1) + v{: , : } (0: p-1, 1: p)+ v{: , : } (1: p, 2: p+1) + v{: , : } (2: p+1, 1: p)) ; %computation dif=max( abs ( v – u ) )); v=u; end

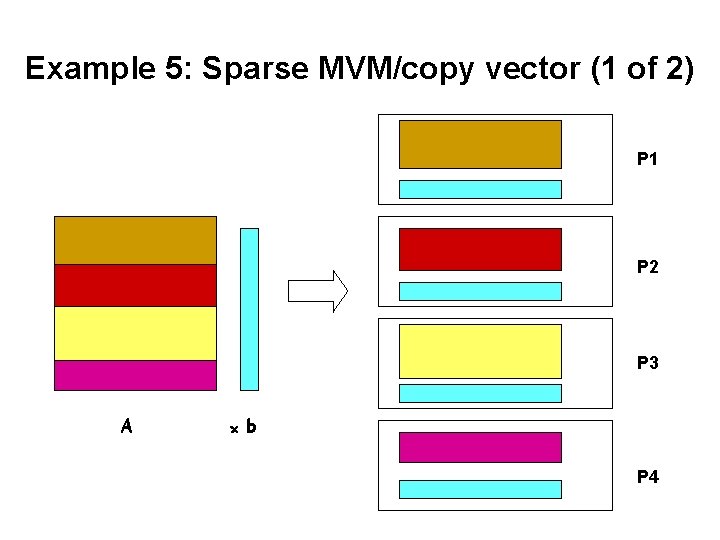

Example 5: Sparse MVM/copy vector (1 of 2) P 1 P 2 P 3 A ×b P 4

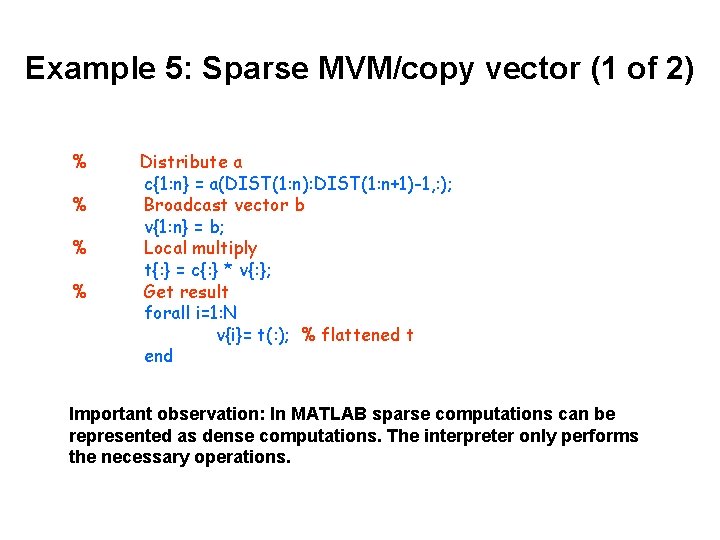

Example 5: Sparse MVM/copy vector (1 of 2) % % Distribute a c{1: n} = a(DIST(1: n): DIST(1: n+1)-1, : ); Broadcast vector b v{1: n} = b; Local multiply t{: } = c{: } * v{: }; Get result forall i=1: N v{i}= t(: ); % flattened t end Important observation: In MATLAB sparse computations can be represented as dense computations. The interpreter only performs the necessary operations.

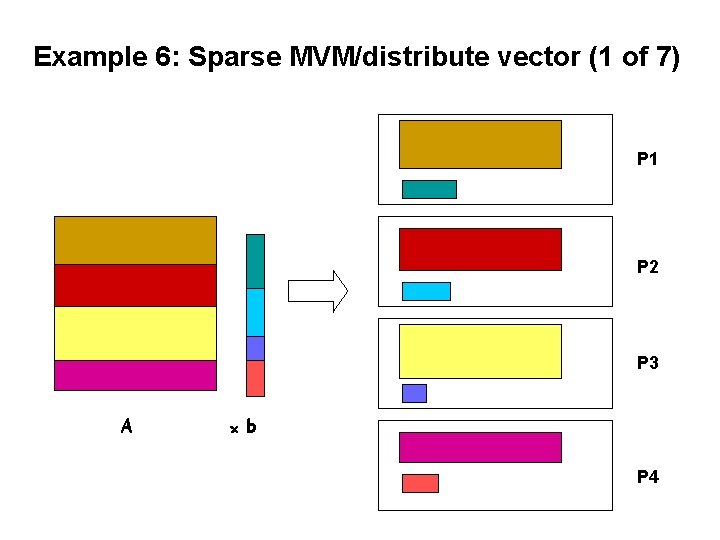

Example 6: Sparse MVM/distribute vector (1 of 7) P 1 P 2 P 3 A ×b P 4

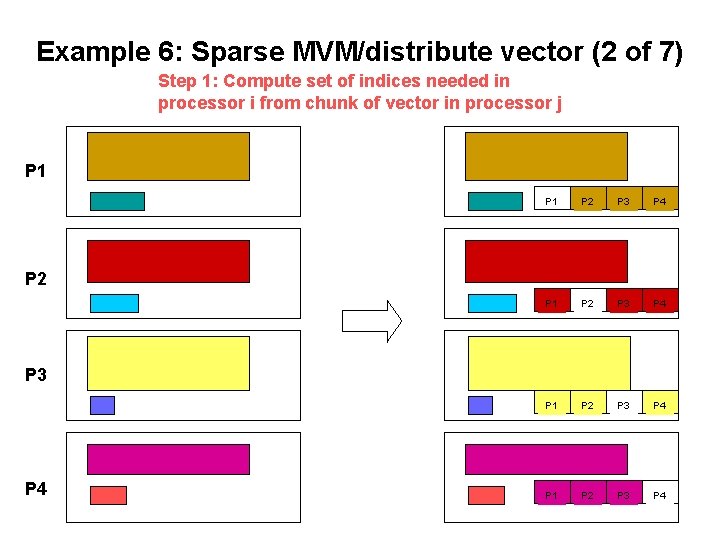

Example 6: Sparse MVM/distribute vector (2 of 7) Step 1: Compute set of indices needed in processor i from chunk of vector in processor j P 1 P 2 P 3 P 4 P 1 P 2 P 3 P 4

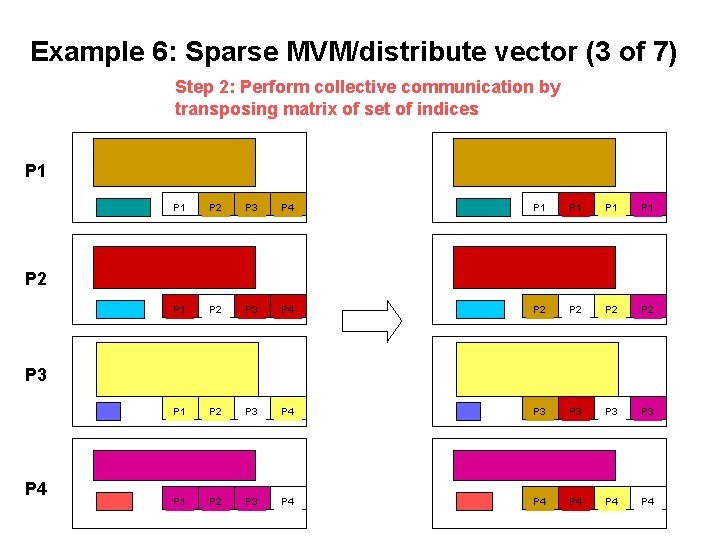

Example 6: Sparse MVM/distribute vector (3 of 7) Step 2: Perform collective communication by transposing matrix of set of indices P 1 P 2 P 3 P 4 P 1 P 1 P 1 P 2 P 3 P 4 P 2 P 2 P 1 P 2 P 3 P 4 P 3 P 3 P 1 P 2 P 3 P 4 P 4 P 4 P 2 P 3 P 4

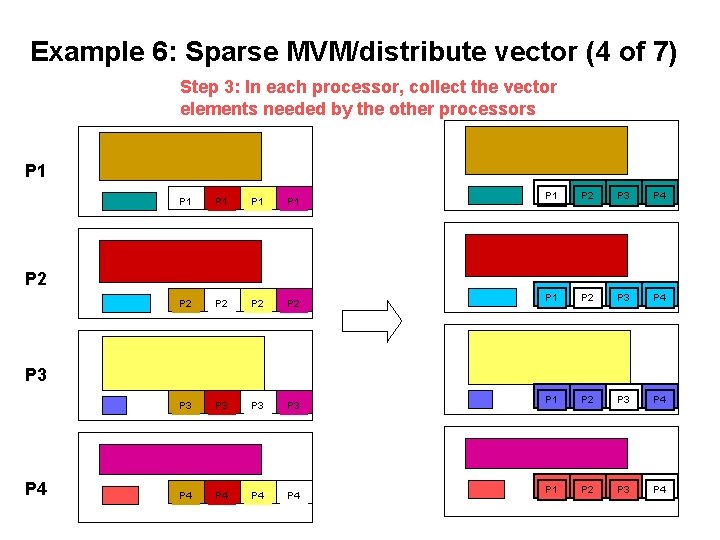

Example 6: Sparse MVM/distribute vector (4 of 7) Step 3: In each processor, collect the vector elements needed by the other processors P 1 P 1 P 1 P 2 P 2 P 3 P 3 P 4 P 4 P 1 P 2 P 3 P 4 P 2 P 3 P 4

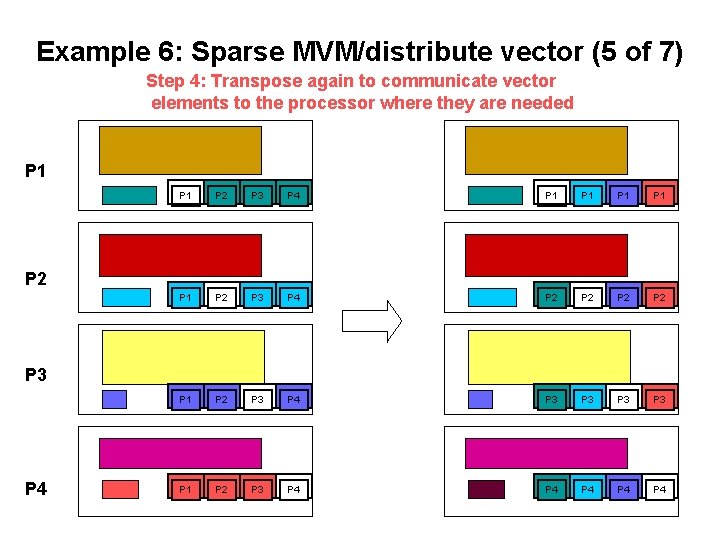

Example 6: Sparse MVM/distribute vector (5 of 7) Step 4: Transpose again to communicate vector elements to the processor where they are needed P 1 P 2 P 3 P 4 P 1 P 1 P 1 P 2 P 3 P 4 P 2 P 2 P 1 P 2 P 3 P 4 P 3 P 3 P 1 P 2 P 3 P 4 P 4 P 4 P 2 P 3 P 4

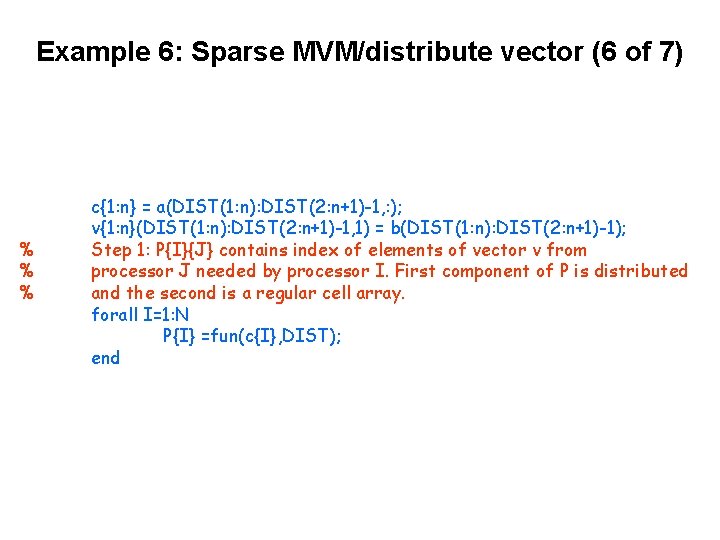

Example 6: Sparse MVM/distribute vector (6 of 7) % % % c{1: n} = a(DIST(1: n): DIST(2: n+1)-1, : ); v{1: n}(DIST(1: n): DIST(2: n+1)-1, 1) = b(DIST(1: n): DIST(2: n+1)-1); Step 1: P{I}{J} contains index of elements of vector v from processor J needed by processor I. First component of P is distributed and the second is a regular cell array. forall I=1: N P{I} =fun(c{I}, DIST); end

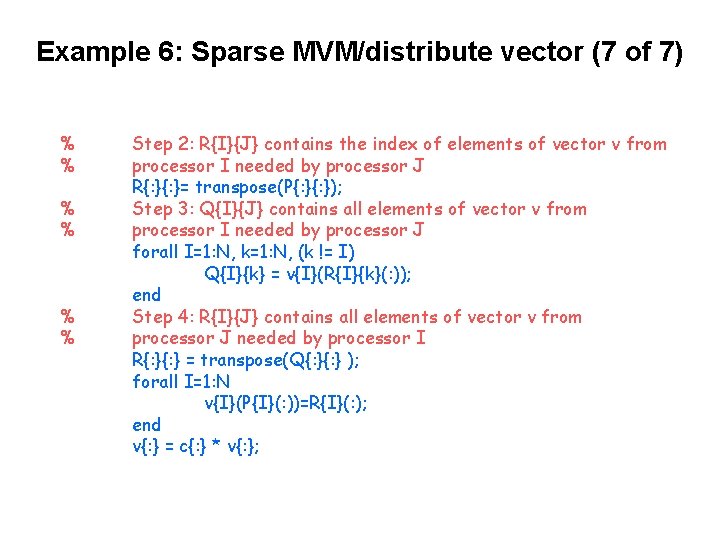

Example 6: Sparse MVM/distribute vector (7 of 7) % % % Step 2: R{I}{J} contains the index of elements of vector v from processor I needed by processor J R{: }= transpose(P{: }); Step 3: Q{I}{J} contains all elements of vector v from processor I needed by processor J forall I=1: N, k=1: N, (k != I) Q{I}{k} = v{I}(R{I}{k}(: )); end Step 4: R{I}{J} contains all elements of vector v from processor J needed by processor I R{: } = transpose(Q{: } ); forall I=1: N v{I}(P{I}(: ))=R{I}(: ); end v{: } = c{: } * v{: };

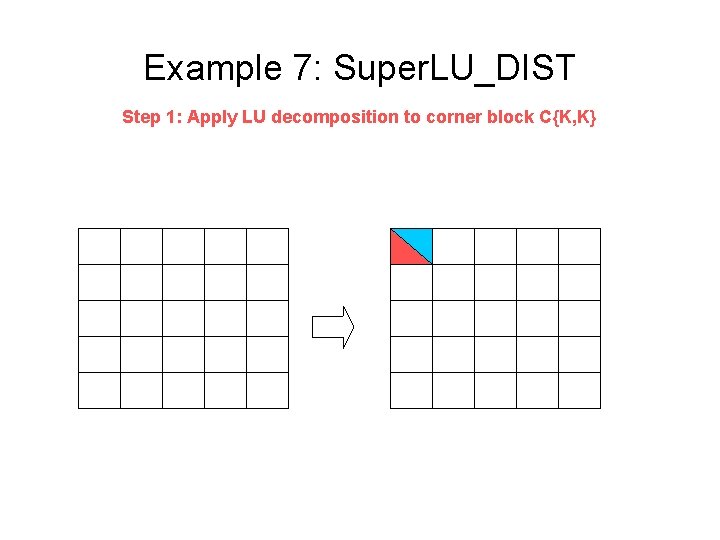

Example 7: Super. LU_DIST Step 1: Apply LU decomposition to corner block C{K, K}

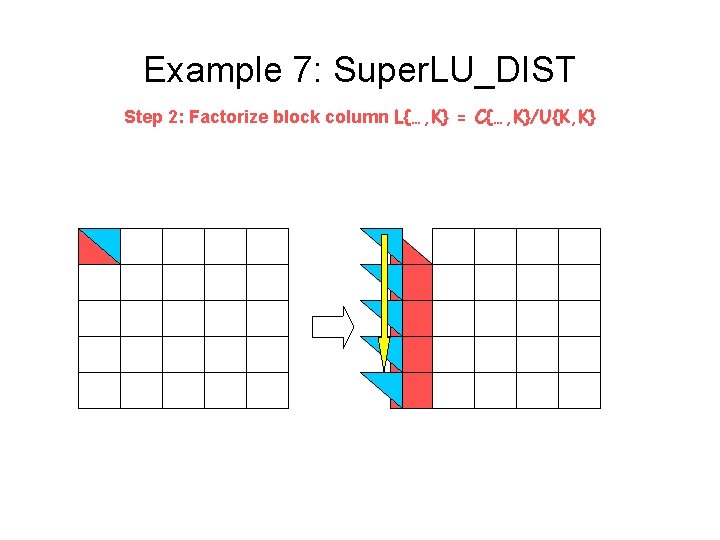

Example 7: Super. LU_DIST Step 2: Factorize block column L{…, K} = C{…, K}/U{K, K}

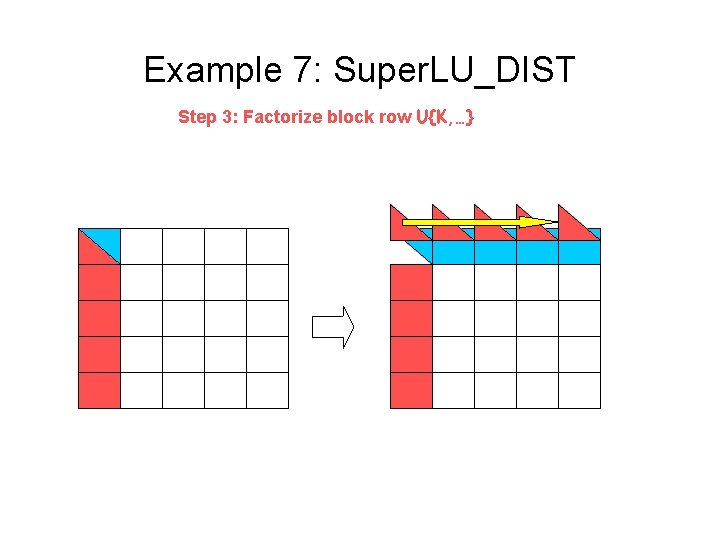

Example 7: Super. LU_DIST Step 3: Factorize block row U{K, …}

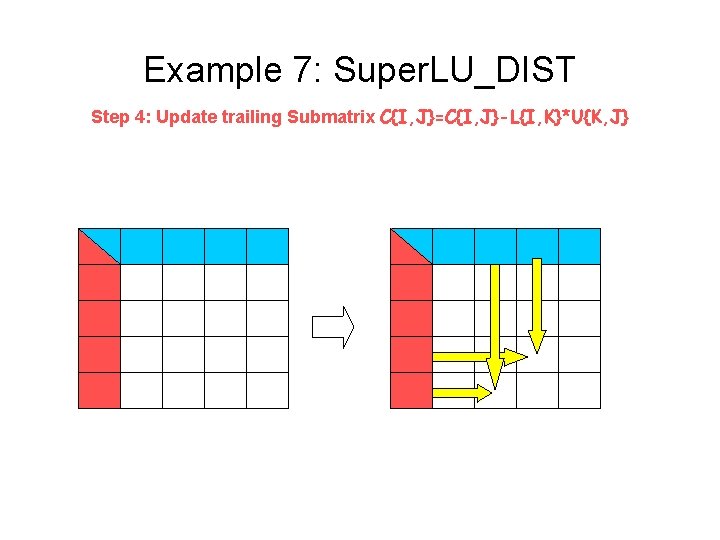

Example 7: Super. LU_DIST Step 4: Update trailing Submatrix C{I, J}=C{I, J}-L{I, K}*U{K, J}

![Example 7: Super. LU_DIST for K=1: N [L{K, K}, U{K, K}]=lu(C{K, K}); L{K+1: N, Example 7: Super. LU_DIST for K=1: N [L{K, K}, U{K, K}]=lu(C{K, K}); L{K+1: N,](http://slidetodoc.com/presentation_image/2b72eda350f19a2b72f1a7ece38c1707/image-46.jpg)

Example 7: Super. LU_DIST for K=1: N [L{K, K}, U{K, K}]=lu(C{K, K}); L{K+1: N, K}=C{K+1: N, K}/U{K, K}; U{K, K+1: N}=L{K, K}C{K, K+1: N}; %Step 1 %Step 2 %Step 3 forall I=K+1: N; L{I, K+1: }=L{I, K}; U{K+1: , I}=U{K+1: , I}; end C{K+1: , K+1: }=C{K+1: , K+1: }-L{K+1: , K+1: }*U{K+1: , K+1: }; %Step 4

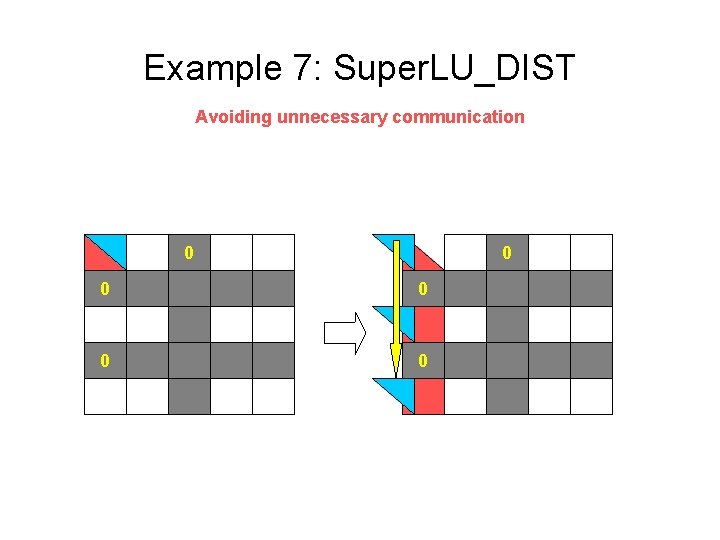

Example 7: Super. LU_DIST Avoiding unnecessary communication 0 0 0

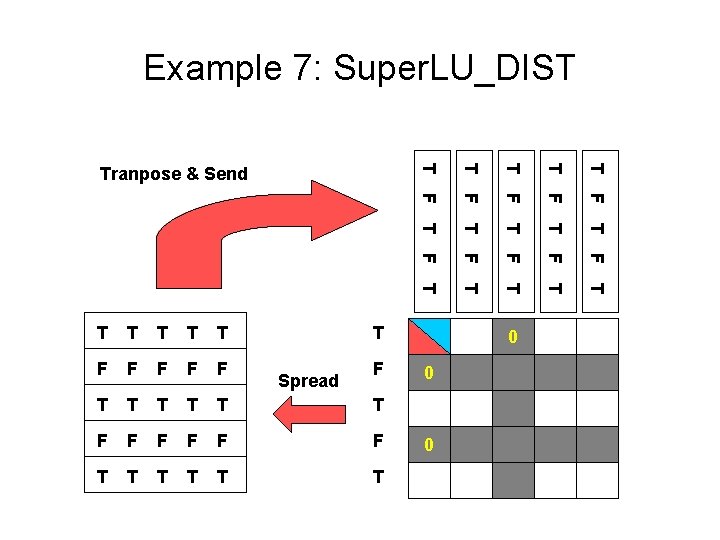

Example 7: Super. LU_DIST T T T F F F T T T F T F F F T T Tranpose & Send T Spread F 0 0 0

![Example 7: Super. LU_DIST for K=1: N [L{K, K}, U{K, K}]=lu(C{K, K}); %Step 1 Example 7: Super. LU_DIST for K=1: N [L{K, K}, U{K, K}]=lu(C{K, K}); %Step 1](http://slidetodoc.com/presentation_image/2b72eda350f19a2b72f1a7ece38c1707/image-49.jpg)

Example 7: Super. LU_DIST for K=1: N [L{K, K}, U{K, K}]=lu(C{K, K}); %Step 1 T{K: N, K} = C{K: N, K} != 0; S{K: N, K}(K: N)=spread(T{K: N, K}, …); R {K, K: N}=transpose(SL{K+1: N, K}{K: N}); L{K+1: N, K}=C{K+1: N, K}/U{K, K}; U{K, K+1: N}=L{K, K}C{K, K+1: N}; %Step 2 %Step 3 forall I=K+1: N; L{I, K+1: }=L{I, K}; end forall I=K=1: N U{R{K, I}=U{K, I}; end C{K+1: , K+1: }=C{K+1: , K+1: }-L{K+1: , K+1: }*U{K+1: , K+1: }; %Step 4

- Slides: 49