
Playing Distributed Systems with Memory-to-Memory Communication Liviu Iftode Department of Computer Science University of Maryland

Outline
- The M2M Game
- M2M Toys: VIA, InfiniBand, DAFS
- Playing with M2M
  - Software DSM
  - Intra-Server Communication
  - Fault-Tolerance and Availability
  - TCP Offloading
- Conclusions

Most of this work has been done in the Distributed Computing (Disco) Lab at Rutgers University, http://discolab.rutgers.edu

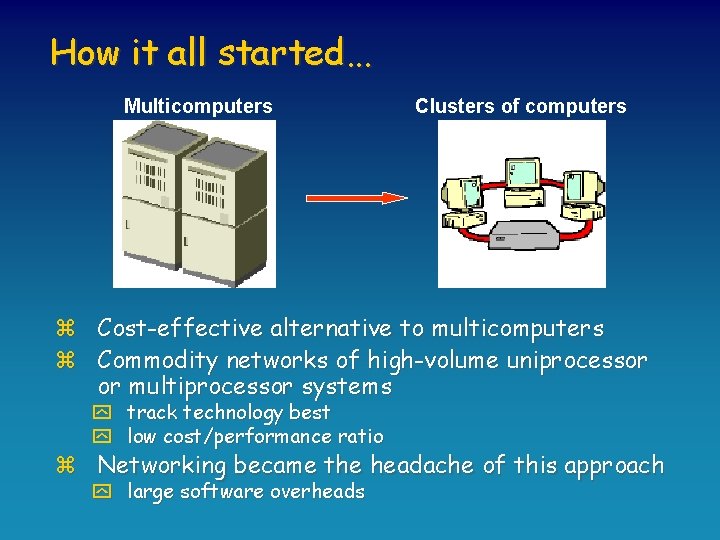

How it all started...
[Figure labels: Multicomputers; Clusters of computers]
- Cost-effective alternative to multicomputers
- Commodity networks of high-volume uniprocessor or multiprocessor systems
  - track technology best
  - low cost/performance ratio
- Networking became the headache of this approach
  - large software overheads

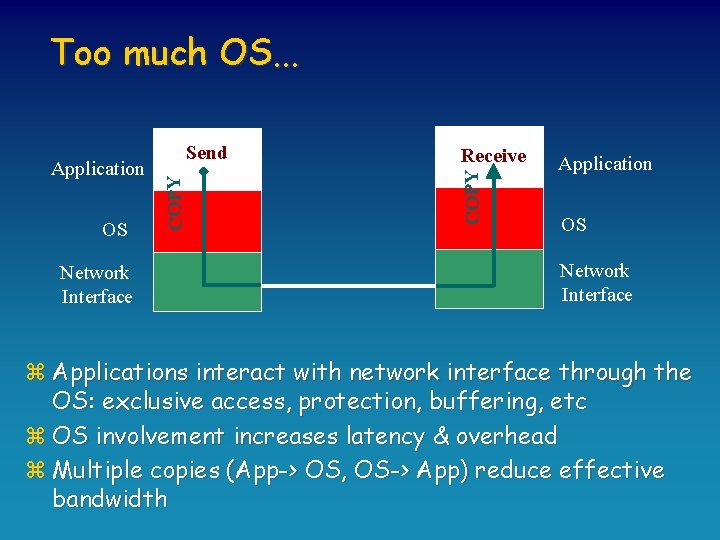

Too much OS...
[Figure labels: Send: Application, OS, Network Interface; Receive: Network Interface, OS, Application; COPY at each OS crossing]
- Applications interact with the network interface through the OS: exclusive access, protection, buffering, etc.
- OS involvement increases latency and overhead
- Multiple copies (App -> OS, OS -> App) reduce effective bandwidth

![User-Level Protected Communication](http://slidetodoc.com/presentation_image/139dbad5e255407882b4dcbfbc63d3fe/image-5.jpg)

User-Level Protected Communication
[Figure labels: Send: Application, OS, NIC; Receive: Application, OS, NIC]
- Application has direct access to the network interface
- OS involved only in connection setup to ensure protection
- Performance benefits: zero-copy, low-overhead
- Special support in the network interface

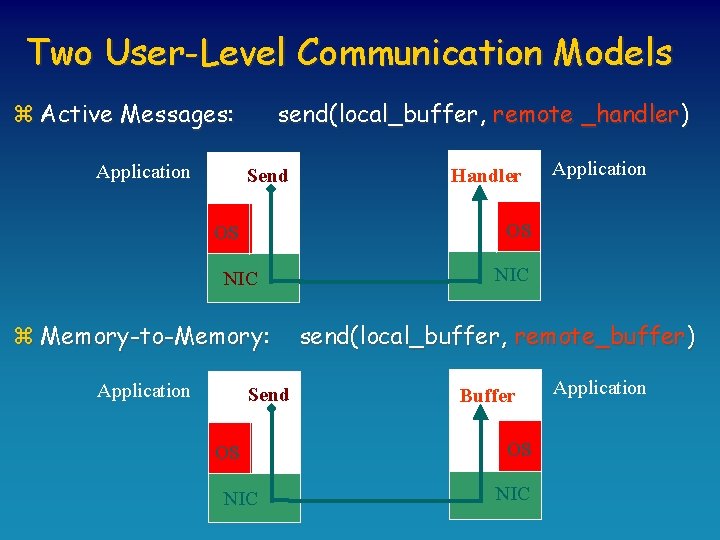

Two User-Level Communication Models
- Active Messages: send(local_buffer, remote_handler)
- Memory-to-Memory: send(local_buffer, remote_buffer)
[Figure labels: Application, Send, OS, NIC, Handler, Buffer]

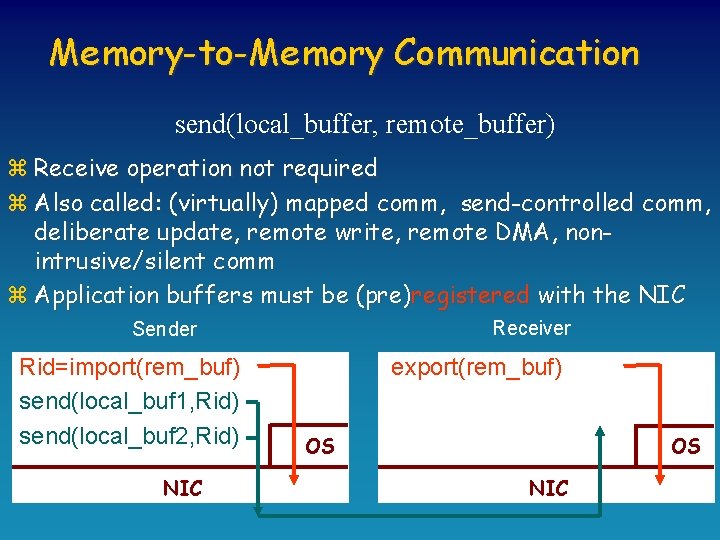

Memory-to-Memory Communication
send(local_buffer, remote_buffer)
- Receive operation not required
- Also called: (virtually) mapped communication, send-controlled communication, deliberate update, remote write, remote DMA, nonintrusive/silent communication
- Application buffers must be (pre)registered with the NIC
Receiver: export(rem_buf)
Sender: Rid = import(rem_buf); send(local_buf1, Rid); send(local_buf2, Rid)
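The export/import/send sequence on this slide can be sketched as a small in-process simulation. This is only an illustration under stated assumptions: the `Nic` class, `import_buffer`, and the `offset` parameter are hypothetical names, and a real NIC would move the data by DMA rather than by a Python slice assignment.

```python
class Nic:
    """Toy receiver-side NIC: it only writes into buffers that were exported."""

    def __init__(self):
        self._exported = {}   # remote id (Rid) -> registered receive buffer
        self._next_rid = 1

    def export(self, buf: bytearray) -> int:
        """Receiver registers a buffer and obtains an id the sender can import."""
        rid = self._next_rid
        self._next_rid += 1
        self._exported[rid] = buf
        return rid

    def write(self, rid: int, data: bytes, offset: int = 0) -> None:
        """Deposit data directly into the exported buffer; no receive call is made."""
        buf = self._exported.get(rid)
        if buf is None:
            raise PermissionError("buffer was not exported")
        if offset < 0 or offset + len(data) > len(buf):
            raise ValueError("write falls outside the exported buffer")
        buf[offset:offset + len(data)] = data


def import_buffer(remote_nic: Nic, rid: int):
    """Sender side: turn an exported id into a handle (hypothetical helper)."""
    return (remote_nic, rid)


def send(local_buf: bytes, handle, offset: int = 0) -> None:
    """send(local_buffer, remote_buffer): the sender alone controls the transfer."""
    remote_nic, rid = handle
    remote_nic.write(rid, bytes(local_buf), offset)


# Receiver exports once; sender imports once and then sends repeatedly.
rem_buf = bytearray(8)
receiver_nic = Nic()
rid = receiver_nic.export(rem_buf)
handle = import_buffer(receiver_nic, rid)
send(b"abcd", handle)
send(b"wxyz", handle, offset=4)
```

The receiver never posts a receive: after `export`, data simply appears in `rem_buf`, which is why the slide calls the model "silent".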

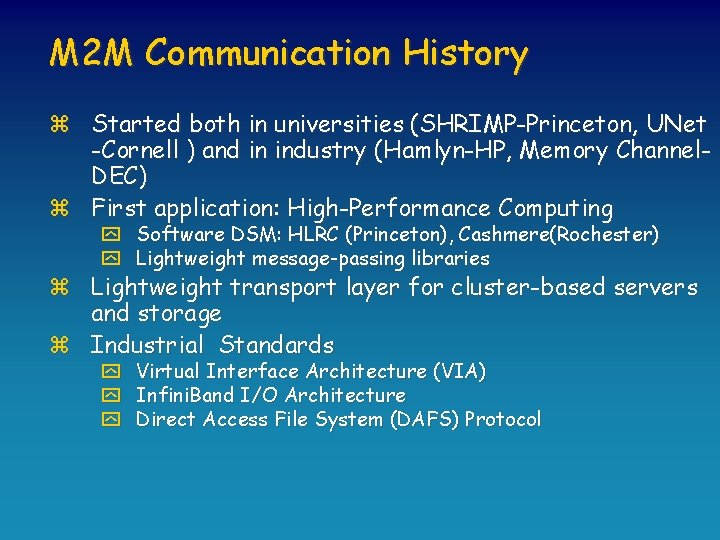

M2M Communication History
- Started both in universities (SHRIMP-Princeton, U-Net-Cornell) and in industry (Hamlyn-HP, Memory Channel-DEC)
- First application: High-Performance Computing
  - Software DSM: HLRC (Princeton), Cashmere (Rochester)
  - Lightweight message-passing libraries
- Lightweight transport layer for cluster-based servers and storage
- Industrial Standards
  - Virtual Interface Architecture (VIA)
  - InfiniBand I/O Architecture
  - Direct Access File System (DAFS) Protocol


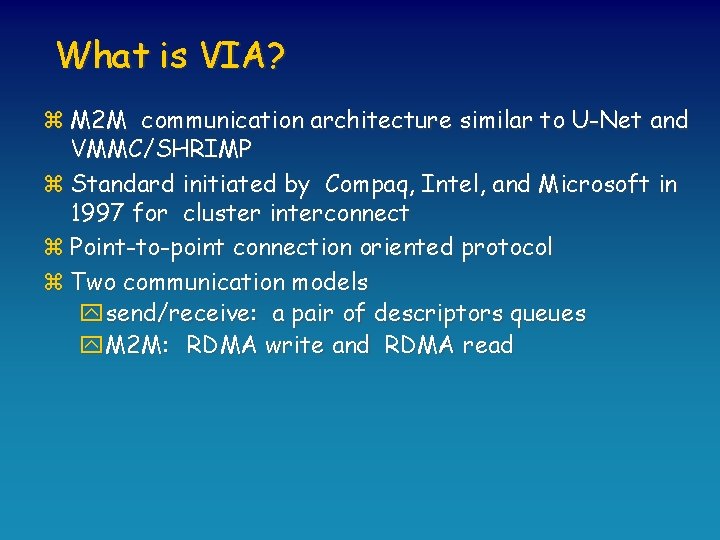

What is VIA?
- M2M communication architecture similar to U-Net and VMMC/SHRIMP
- Standard initiated by Compaq, Intel, and Microsoft in 1997 for cluster interconnect
- Point-to-point, connection-oriented protocol
- Two communication models
  - send/receive: a pair of descriptor queues
  - M2M: RDMA write and RDMA read

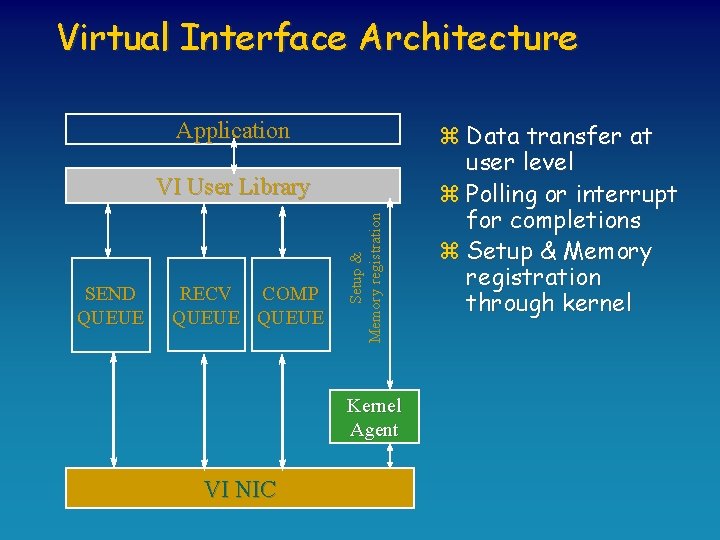

Virtual Interface Architecture
[Figure labels: Application; VI User Library; Kernel Agent; VI NIC; VI with SEND, RECV, and COMP queues; Setup & Memory registration]
- Data transfer at user level
- Polling or interrupt for completions
- Setup and memory registration through the kernel

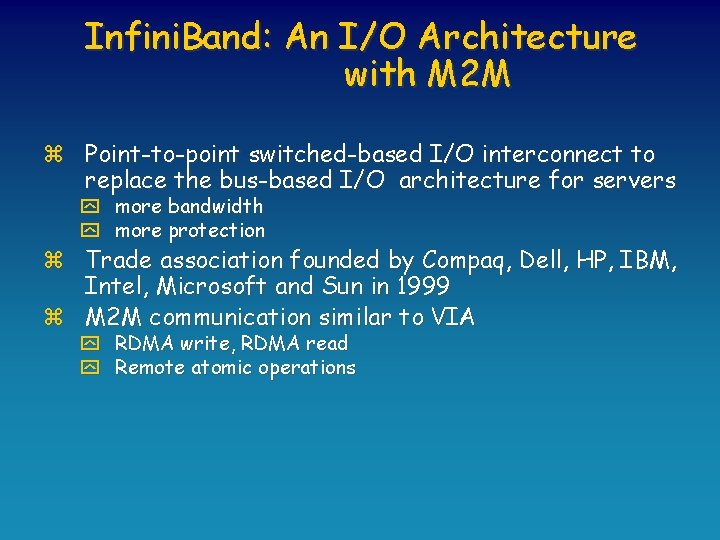

InfiniBand: An I/O Architecture with M2M
- Point-to-point, switch-based I/O interconnect to replace the bus-based I/O architecture for servers
  - more bandwidth
  - more protection
- Trade association founded by Compaq, Dell, HP, IBM, Intel, Microsoft and Sun in 1999
- M2M communication similar to VIA
  - RDMA write, RDMA read
  - Remote atomic operations

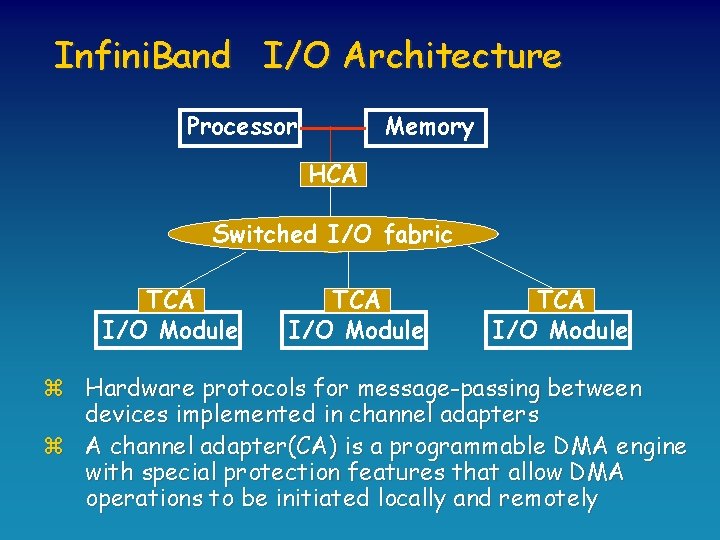

InfiniBand I/O Architecture
[Figure labels: Processor, Memory, HCA, Switched I/O fabric, TCA, I/O Module]
- Hardware protocols for message-passing between devices implemented in channel adapters
- A channel adapter (CA) is a programmable DMA engine with special protection features that allow DMA operations to be initiated locally and remotely

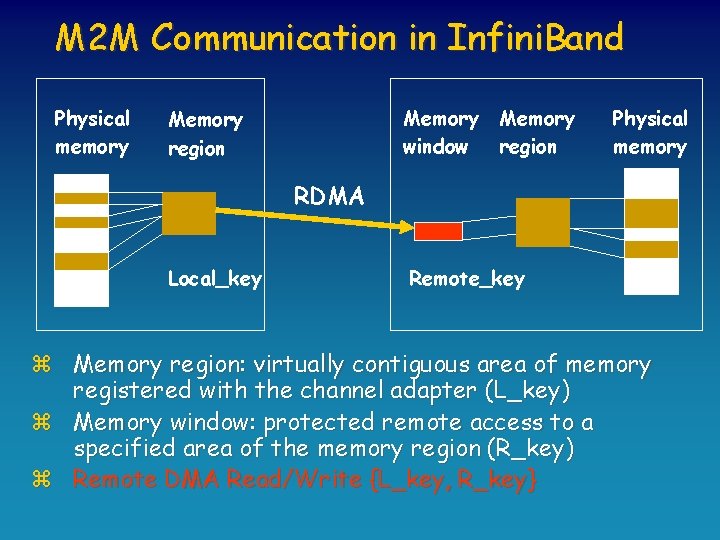

M2M Communication in InfiniBand
[Figure labels: Physical memory, Memory region, Memory window, RDMA, Local_key, Remote_key]
- Memory region: virtually contiguous area of memory registered with the channel adapter (L_key)
- Memory window: protected remote access to a specified area of the memory region (R_key)
- Remote DMA Read/Write {L_key, R_key}
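The region/window protection described here can be illustrated with a toy model. It is a sketch under stated assumptions, not the InfiniBand verbs interface: `ChannelAdapter`, `register_region`, `bind_window`, `rdma_write`, and `rdma_read` are hypothetical names, and the keys are simple counters rather than hardware-generated values.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class MemoryRegion:
    buf: bytearray   # virtually contiguous memory registered with the adapter
    l_key: int       # key used for local access


@dataclass
class MemoryWindow:
    region: MemoryRegion
    start: int       # window start, relative to the region
    length: int
    r_key: int       # key a remote peer must present


class ChannelAdapter:
    """Toy channel adapter that checks the R_key and window bounds on every RDMA."""

    def __init__(self):
        self._keys = count(1)     # illustrative key generator (assumption)
        self._windows = {}        # r_key -> MemoryWindow

    def register_region(self, buf: bytearray) -> MemoryRegion:
        return MemoryRegion(buf, next(self._keys))

    def bind_window(self, region: MemoryRegion, start: int, length: int) -> MemoryWindow:
        if start < 0 or length < 0 or start + length > len(region.buf):
            raise ValueError("window must lie inside the region")
        window = MemoryWindow(region, start, length, next(self._keys))
        self._windows[window.r_key] = window
        return window

    def _check(self, r_key: int, offset: int, length: int) -> MemoryWindow:
        window = self._windows.get(r_key)
        if window is None:
            raise PermissionError("unknown R_key")
        if offset < 0 or length < 0 or offset + length > window.length:
            raise PermissionError("access outside the memory window")
        return window

    def rdma_write(self, r_key: int, offset: int, data: bytes) -> None:
        window = self._check(r_key, offset, len(data))
        base = window.start + offset
        window.region.buf[base:base + len(data)] = data

    def rdma_read(self, r_key: int, offset: int, length: int) -> bytes:
        window = self._check(r_key, offset, length)
        base = window.start + offset
        return bytes(window.region.buf[base:base + length])


ca = ChannelAdapter()
region = ca.register_region(bytearray(16))
window = ca.bind_window(region, start=4, length=8)   # expose bytes 4..11 only
ca.rdma_write(window.r_key, 0, b"hi")
```

The point of the window is that a remote peer holding `r_key` can reach only the bound sub-range, while the rest of the registered region stays private.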

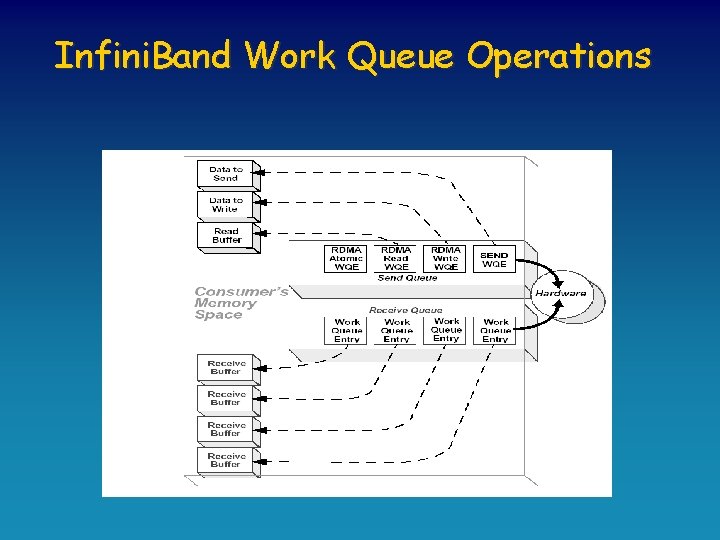

InfiniBand Work Queue Operations

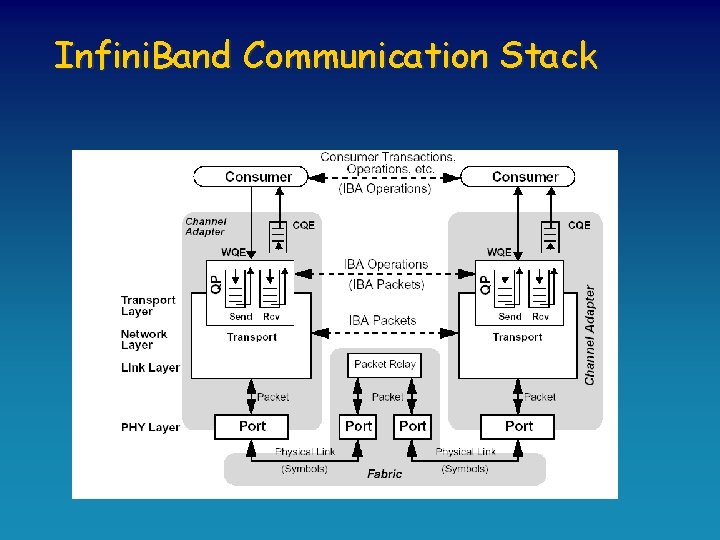

InfiniBand Communication Stack

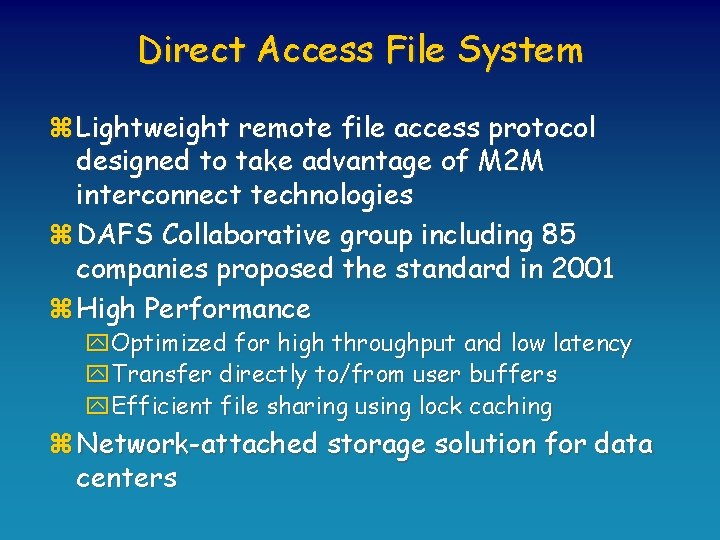

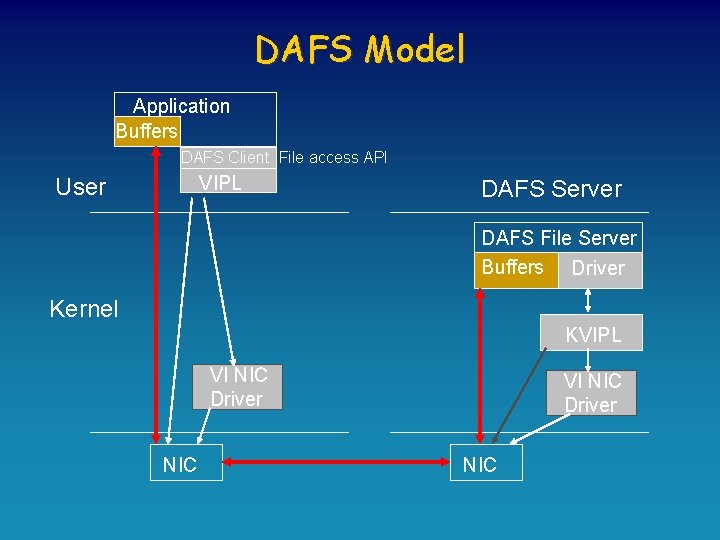

Direct Access File System
- Lightweight remote file access protocol designed to take advantage of M2M interconnect technologies
- The DAFS Collaborative, a group including 85 companies, proposed the standard in 2001
- High Performance
  - Optimized for high throughput and low latency
  - Transfer directly to/from user buffers
  - Efficient file sharing using lock caching
- Network-attached storage solution for data centers

DAFS Model
[Figure labels: DAFS Client: Application, Buffers, File access API, VIPL (User), VI NIC Driver (Kernel), NIC; DAFS Server: DAFS File Server, Buffers, KVIPL, Driver (Kernel), NIC]

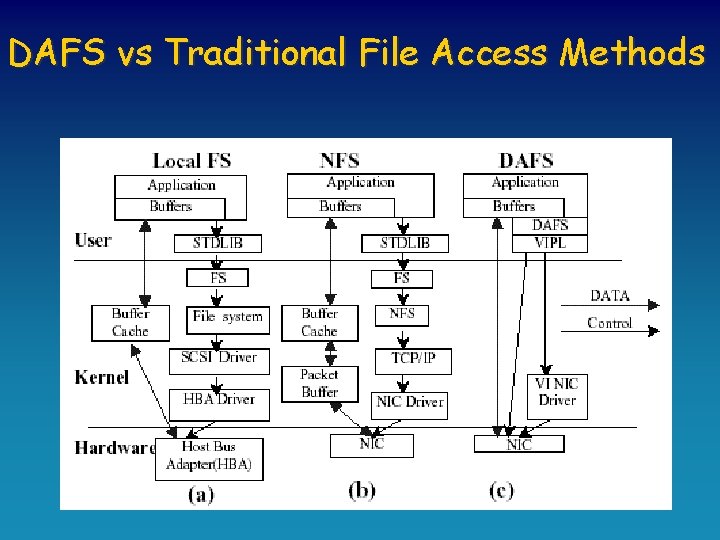

DAFS vs Traditional File Access Methods

M2M Product Market
- VIA: Emulex (formerly Giganet)
- InfiniBand: Mellanox
- DAFS software distribution: Duke, Harvard, British Columbia, Rutgers (soon)


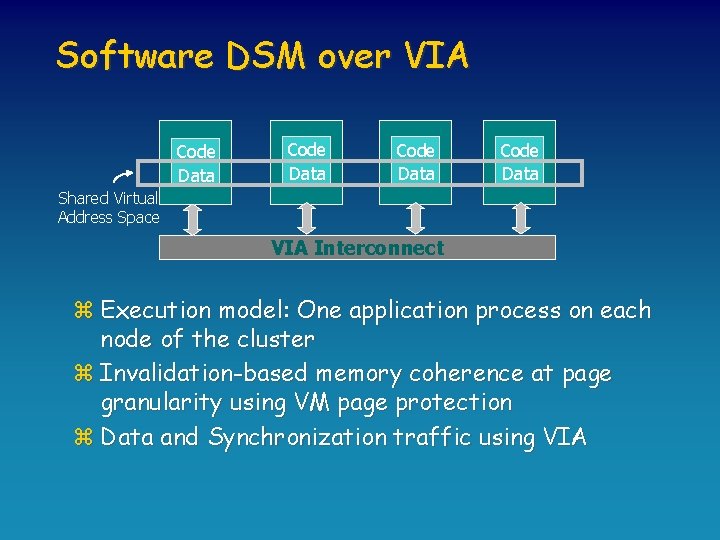

Software DSM over VIA
[Figure labels: Code, Data, Shared Virtual Address Space, VIA Interconnect]
- Execution model: one application process on each node of the cluster
- Invalidation-based memory coherence at page granularity using VM page protection
- Data and synchronization traffic using VIA

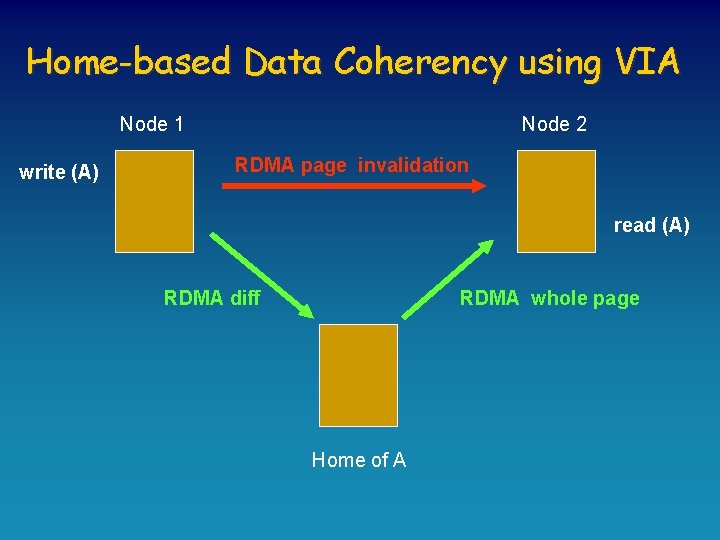

Home-based Data Coherency using VIA
[Figure labels: Node 1: write (A); Node 2: read (A); Home of A; RDMA page invalidation; RDMA diff; RDMA whole page]
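The "RDMA diff" step can be illustrated with a small sketch of diff creation and application. The slide does not show how the diff is computed; the version below assumes the writer keeps an unmodified copy of the page (a "twin", as in home-based lazy release consistency protocols) and encodes the diff as (offset, bytes) runs. The function names and the run encoding are assumptions for illustration only.

```python
def make_diff(twin: bytes, page: bytes):
    """Return a list of (offset, data) runs where the written page differs from its twin."""
    if len(twin) != len(page):
        raise ValueError("twin and page must have the same size")
    diff = []
    i, n = 0, len(page)
    while i < n:
        if page[i] != twin[i]:
            j = i
            # Extend the run while bytes keep differing.
            while j < n and page[j] != twin[j]:
                j += 1
            diff.append((i, page[i:j]))
            i = j
        else:
            i += 1
    return diff


def apply_diff(home_page: bytearray, diff) -> None:
    """At the home node, each run lands at its offset (one remote write per run)."""
    for offset, data in diff:
        home_page[offset:offset + len(data)] = data


# Node 1 writes to page A, then propagates only the modified bytes to A's home.
twin = bytes(16)
page = bytearray(twin)
page[2:4] = b"ab"
page[10] = 0x7F
diff = make_diff(twin, bytes(page))
home = bytearray(twin)
apply_diff(home, diff)
```

Because each run is written straight into the home copy, the home node's processor does not have to run any handler, which is the "silent communication" benefit noted in the lessons that follow. A reader that misses on page A would then fetch the whole page from the home.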

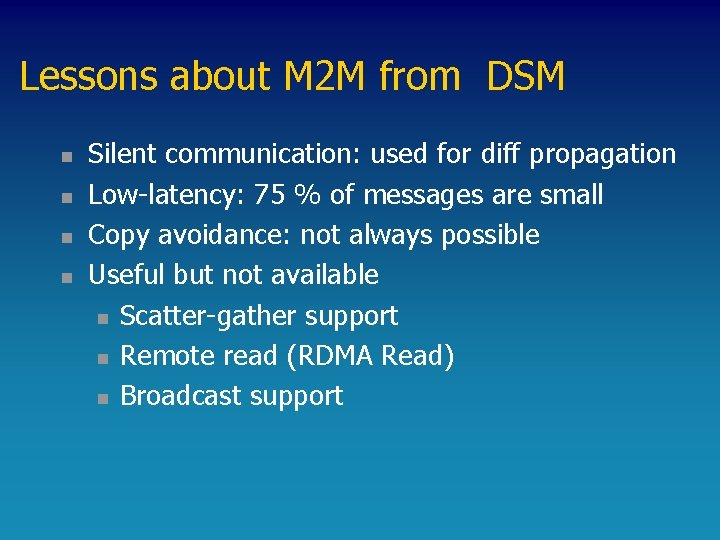

Lessons about M2M from DSM
- Silent communication: used for diff propagation
- Low latency: 75% of messages are small
- Copy avoidance: not always possible
- Useful but not available
  - Scatter-gather support
  - Remote read (RDMA Read)
  - Broadcast support

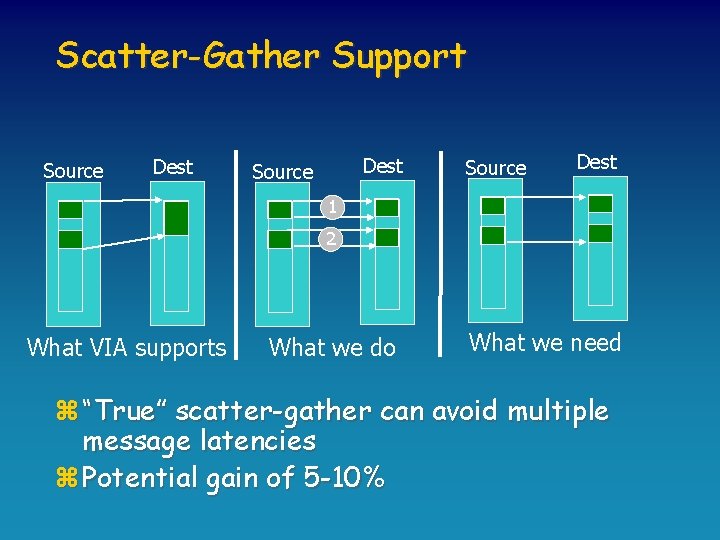

Scatter-Gather Support
[Figure labels: Source, Dest; What VIA supports; What we do; What we need]
- "True" scatter-gather can avoid multiple message latencies
- Potential gain of 5-10%

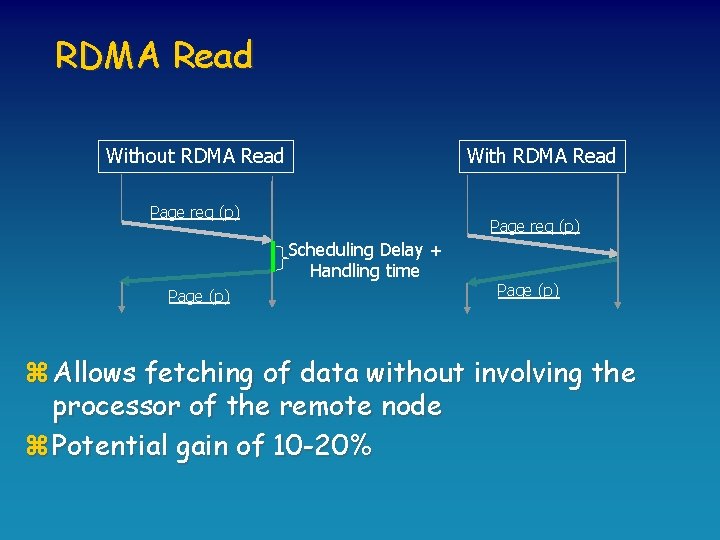

RDMA Read
[Figure labels: Without RDMA Read: Page req (p), Scheduling Delay + Handling time, Page (p); With RDMA Read]
- Allows fetching of data without involving the processor of the remote node
- Potential gain of 10-20%

Broadcast Support
- Useful for the software DSM protocol
  - Eager invalidation propagation
  - Eager update of data
- Previous research (Cashmere'00) speculates a gain of 10-15% from the use of broadcast


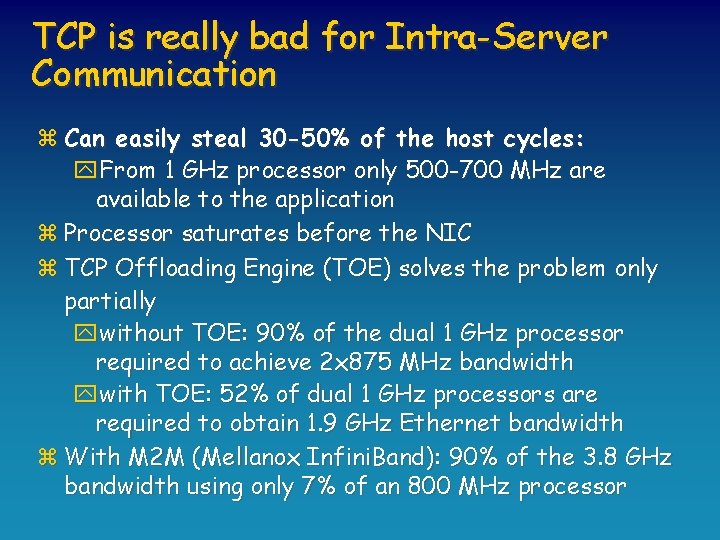

TCP is really bad for Intra-Server Communication
- Can easily steal 30-50% of the host cycles
  - From a 1 GHz processor, only 500-700 MHz are available to the application
- Processor saturates before the NIC
- TCP Offloading Engine (TOE) solves the problem only partially
  - without TOE: 90% of a dual 1 GHz processor is required to achieve 2 x 875 Mb/s bandwidth
  - with TOE: 52% of a dual 1 GHz processor is required to obtain 1.9 Gb/s Ethernet bandwidth
- With M2M (Mellanox InfiniBand): 90% of the 3.8 Gb/s bandwidth using only 7% of an 800 MHz processor
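The first figure on this slide is plain arithmetic and can be checked with a few lines. The helper name is hypothetical; only the 1 GHz clock and the 30 to 50% TCP share come from the slide.

```python
def cycles_left_mhz(clock_mhz: int, tcp_percent: int) -> int:
    """Cycles left for the application after TCP takes tcp_percent of the CPU."""
    if not 0 <= tcp_percent <= 100:
        raise ValueError("tcp_percent must be between 0 and 100")
    return clock_mhz * (100 - tcp_percent) // 100


# A 1 GHz host losing 50% (worst case) or 30% (best case) of its cycles to TCP.
low = cycles_left_mhz(1000, 50)
high = cycles_left_mhz(1000, 30)
print(low, high)  # prints "500 700"
```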

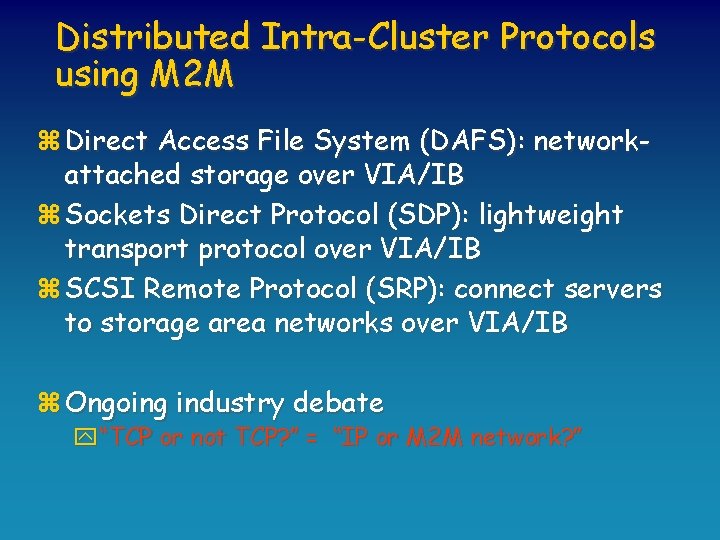

Distributed Intra-Cluster Protocols using M2M
- Direct Access File System (DAFS): network-attached storage over VIA/IB
- Sockets Direct Protocol (SDP): lightweight transport protocol over VIA/IB
- SCSI Remote Protocol (SRP): connect servers to storage area networks over VIA/IB
- Ongoing industry debate
  - "TCP or not TCP?" = "IP or M2M network?"

Distributed Intra-Cluster Server Applications using M2M
- Cluster-Based Web Server
- Storage Servers
- Distributed File Systems

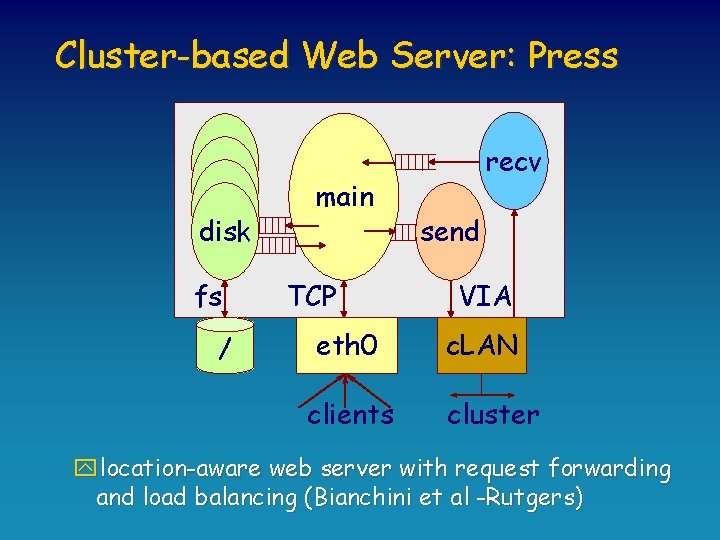

Cluster-based Web Server: Press
[Figure labels: disk, fs, main, TCP, eth0, clients, recv, send, VIA, cLAN, cluster]
- location-aware web server with request forwarding and load balancing (Bianchini et al., Rutgers)

![Performance of VIA-based Press Web Server [Carrera et al., HPCA'02]](http://slidetodoc.com/presentation_image/139dbad5e255407882b4dcbfbc63d3fe/image-33.jpg)

Performance of VIA-based Press Web Server [Carrera et al., HPCA'02]

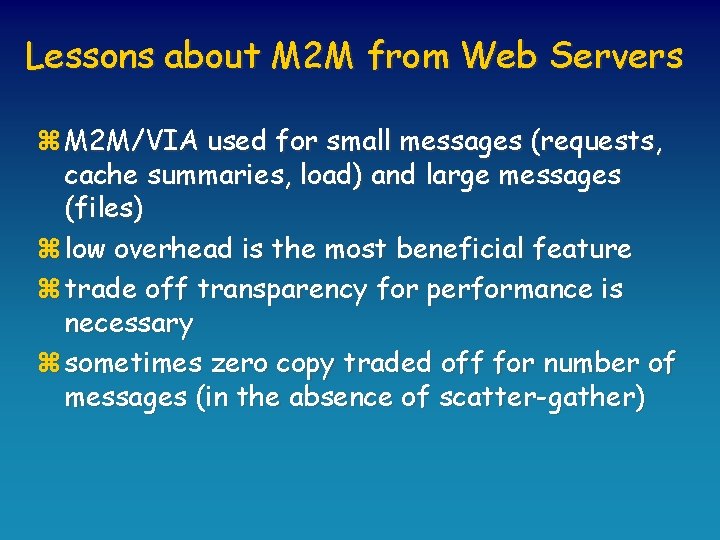

Lessons about M2M from Web Servers
- M2M/VIA used for small messages (requests, cache summaries, load) and large messages (files)
- Low overhead is the most beneficial feature
- Trading off transparency for performance is necessary
- Sometimes zero-copy is traded off for a smaller number of messages (in the absence of scatter-gather)

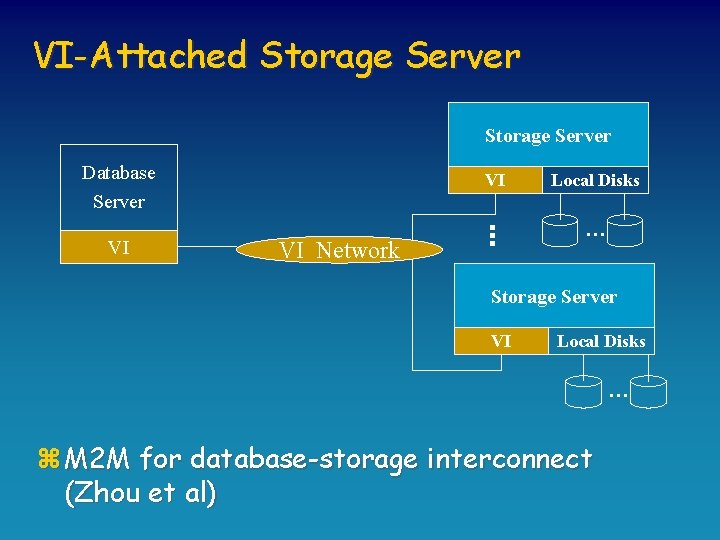

VI-Attached Storage Server
[Figure labels: Database Server, Storage Server, VI, VI Network, Local Disks]
- M2M for database-storage interconnect (Zhou et al.)

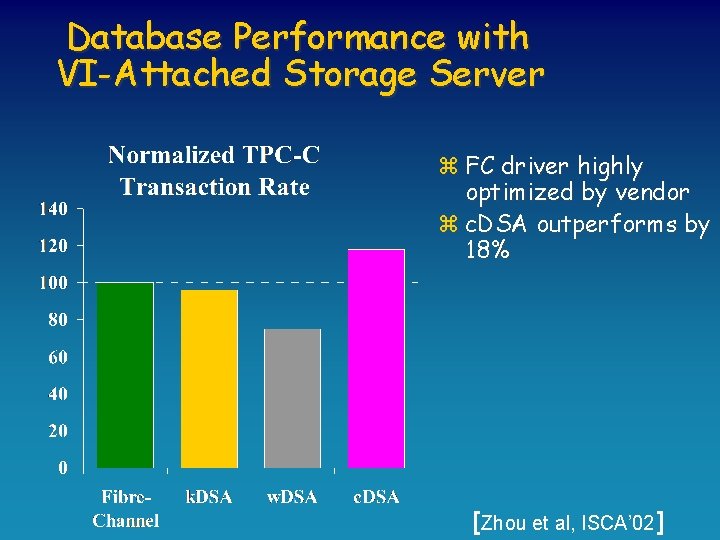

Database Performance with VI-Attached Storage Server
- FC driver highly optimized by vendor
- cDSA outperforms by 18% [Zhou et al., ISCA'02]

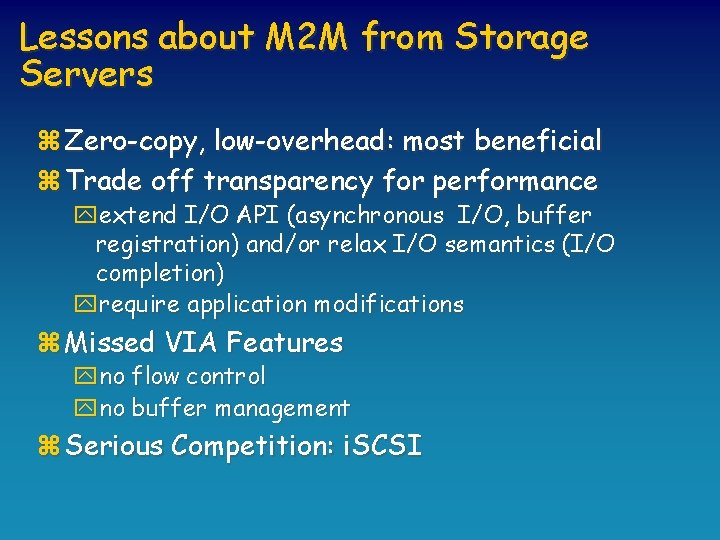

Lessons about M2M from Storage Servers
- Zero-copy, low-overhead: most beneficial
- Trade off transparency for performance
  - extend I/O API (asynchronous I/O, buffer registration) and/or relax I/O semantics (I/O completion)
  - require application modifications
- Missed VIA Features
  - no flow control
  - no buffer management
- Serious Competition: iSCSI

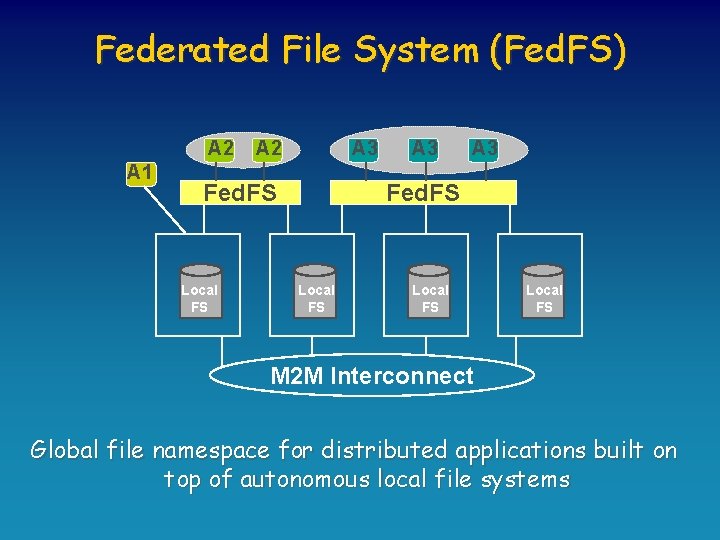

Federated File System (FedFS)
[Figure labels: applications A1, A2, A3; FedFS; Local FS; M2M Interconnect]
Global file namespace for distributed applications built on top of autonomous local file systems

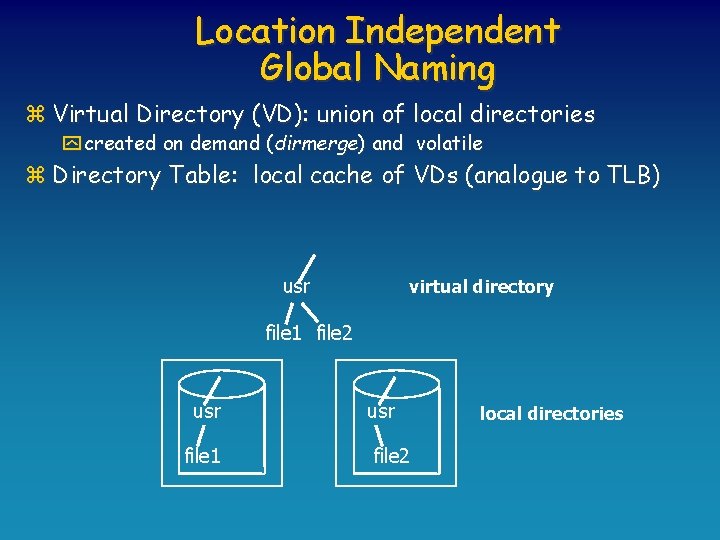

Location Independent Global Naming
- Virtual Directory (VD): union of local directories
  - created on demand (dirmerge) and volatile
- Directory Table: local cache of VDs (analogous to a TLB)
[Figure labels: virtual directory usr (file1, file2); local directories usr (file1) and usr (file2)]
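The virtual directory and directory table ideas can be sketched as follows. This is an illustration under assumptions, not the FedFS implementation: the slide names `dirmerge` but not its signature, so the arguments, the "first node wins" conflict policy, and the `DirectoryTable` class are hypothetical.

```python
def dirmerge(local_dirs):
    """Build a virtual directory: the union of the same-named local directories.

    local_dirs maps a node name to the entries it holds under one path.
    The result maps each entry name to the node that stores it.
    """
    virtual_dir = {}
    for node, names in local_dirs.items():
        for name in names:
            # Conflict policy (first node wins) is an assumption for this sketch.
            virtual_dir.setdefault(name, node)
    return virtual_dir


class DirectoryTable:
    """Local cache of virtual directories, filled on demand (like a TLB)."""

    def __init__(self, fetch_local_dirs):
        self._fetch = fetch_local_dirs   # callback that queries the cluster nodes
        self._cache = {}

    def lookup(self, path):
        if path not in self._cache:              # miss: merge on demand
            self._cache[path] = dirmerge(self._fetch(path))
        return self._cache[path]

    def invalidate(self, path):
        """VDs are volatile: drop the entry when a local directory changes."""
        self._cache.pop(path, None)


# Two nodes each hold part of /usr, as in the slide's figure.
listings = {"/usr": {"node1": ["file1"], "node2": ["file2"]}}
fetch_calls = []


def fetch(path):
    fetch_calls.append(path)
    return listings[path]


table = DirectoryTable(fetch)
usr = table.lookup("/usr")
table.lookup("/usr")   # second lookup is served from the cache
```

Keeping the cached entries coherent across nodes when a local directory changes is the kind of small, frequent update traffic the deck assigns to M2M.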

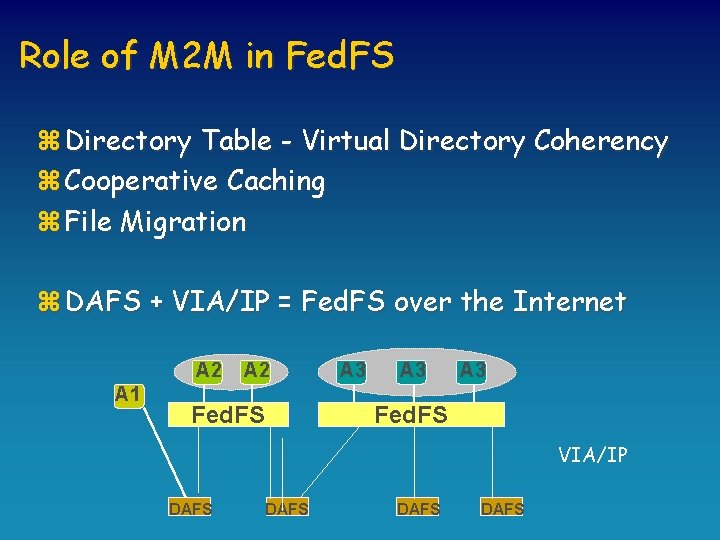

Role of M2M in FedFS
- Directory Table - Virtual Directory Coherency
- Cooperative Caching
- File Migration
- DAFS + VIA/IP = FedFS over the Internet
[Figure labels: applications A1, A2, A3; FedFS; VIA/IP; DAFS]


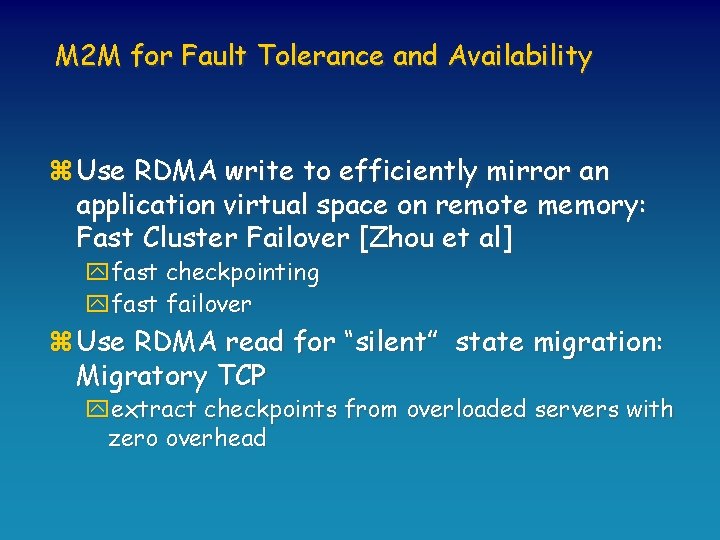

M2M for Fault Tolerance and Availability
- Use RDMA write to efficiently mirror an application virtual space on remote memory: Fast Cluster Failover [Zhou et al.]
  - fast checkpointing
  - fast failover
- Use RDMA read for "silent" state migration: Migratory TCP
  - extract checkpoints from overloaded servers with zero overhead

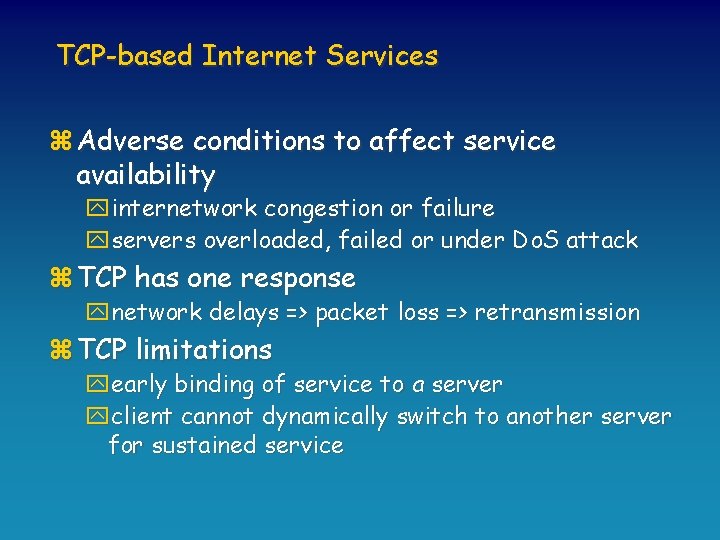

TCP-based Internet Services
- Adverse conditions affect service availability
  - internetwork congestion or failure
  - servers overloaded, failed or under DoS attack
- TCP has one response
  - network delays => packet loss => retransmission
- TCP limitations
  - early binding of service to a server
  - client cannot dynamically switch to another server for sustained service

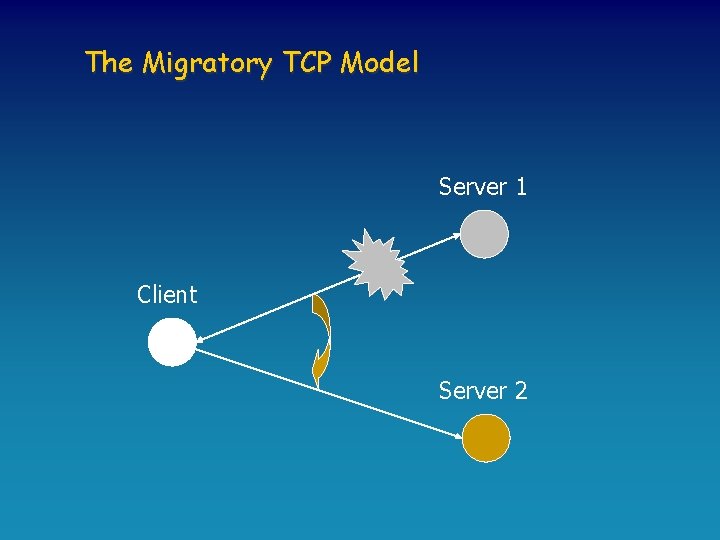

The Migratory TCP Model
[Figure labels: Client, Server 1, Server 2]

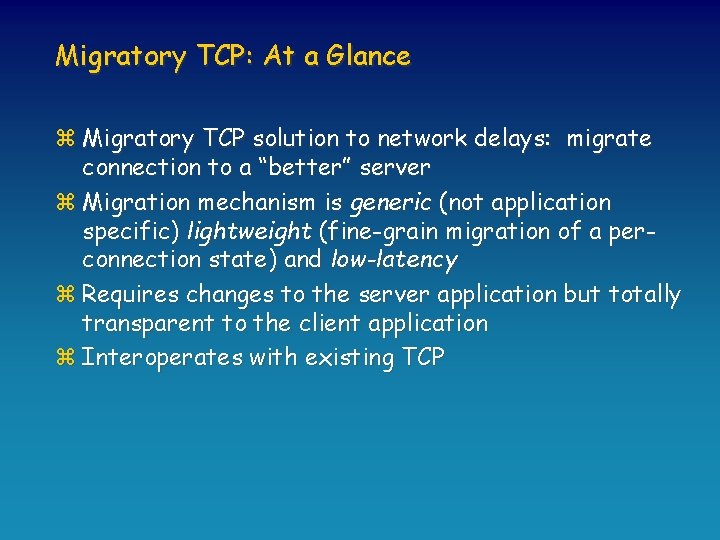

Migratory TCP: At a Glance
- Migratory TCP solution to network delays: migrate the connection to a "better" server
- Migration mechanism is generic (not application specific), lightweight (fine-grain migration of per-connection state) and low-latency
- Requires changes to the server application but is totally transparent to the client application
- Interoperates with existing TCP

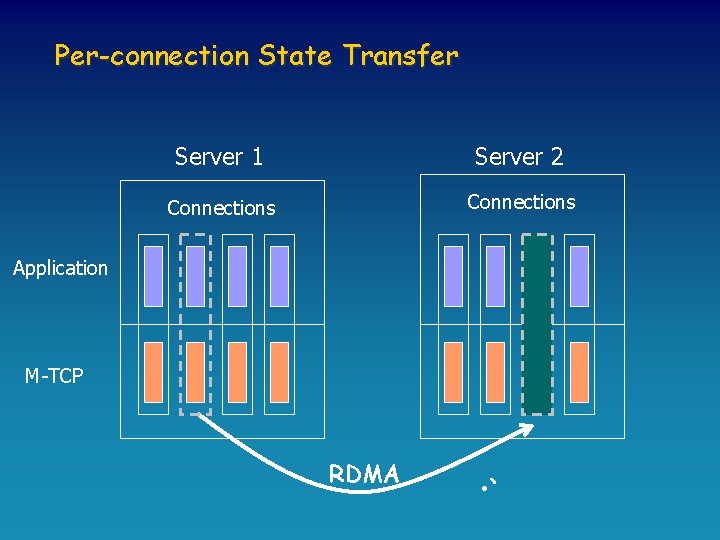

Per-connection State Transfer
[Figure labels: Server 1, Server 2, Connections, Application, M-TCP, RDMA]

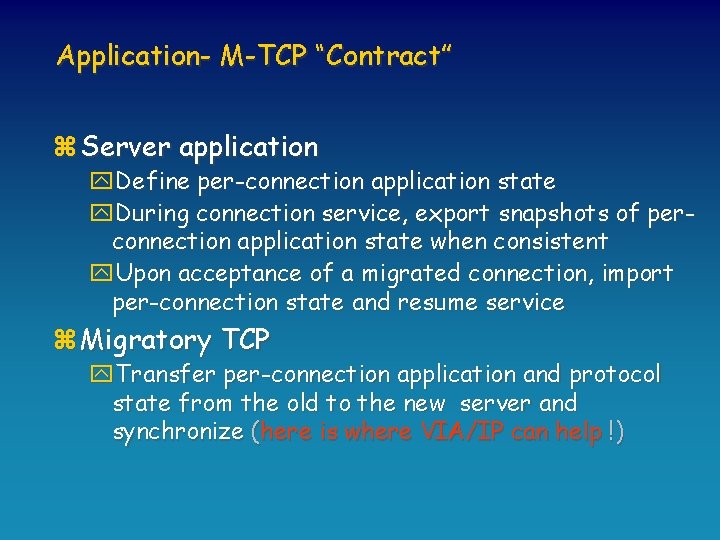

Application - M-TCP "Contract"
- Server application
  - Define per-connection application state
  - During connection service, export snapshots of per-connection application state when consistent
  - Upon acceptance of a migrated connection, import per-connection state and resume service
- Migratory TCP
  - Transfer per-connection application and protocol state from the old to the new server and synchronize (here is where VIA/IP can help!)
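The two sides of this contract can be sketched with a toy connection object. It is a minimal sketch under assumptions, not the M-TCP API: `MigratoryConnection`, `export_state`, `import_state`, `checkpoint`, and `accept_migrated` are hypothetical names, and JSON text stands in for whatever wire format the protocol actually uses.

```python
import json


class MigratoryConnection:
    """Toy model of a connection whose state can move between servers."""

    def __init__(self, conn_id, protocol_state):
        self.conn_id = conn_id
        self.protocol_state = dict(protocol_state)   # e.g. sequence numbers
        self._snapshot = None                        # last consistent app snapshot

    # Application side of the contract.
    def export_state(self, app_state):
        """Called by the server application whenever its per-connection state is consistent."""
        self._snapshot = json.dumps(app_state)

    def import_state(self):
        """Called on the new server after accepting a migrated connection."""
        return None if self._snapshot is None else json.loads(self._snapshot)

    # Protocol side of the contract.
    def checkpoint(self):
        """Bundle protocol state and the latest application snapshot for transfer."""
        return json.dumps({
            "conn_id": self.conn_id,
            "protocol": self.protocol_state,
            "app": self._snapshot,
        })

    @classmethod
    def accept_migrated(cls, blob):
        """Rebuild the connection on the new server from a transferred checkpoint."""
        fields = json.loads(blob)
        conn = cls(fields["conn_id"], fields["protocol"])
        conn._snapshot = fields["app"]
        return conn


# Old server: a streaming application records how far it has served the file.
old = MigratoryConnection(7, {"snd_nxt": 1000, "rcv_nxt": 500})
old.export_state({"file": "movie.mpg", "offset": 4096})

# New server: take over the connection and resume from the snapshot.
new = MigratoryConnection.accept_migrated(old.checkpoint())
resume_from = new.import_state()
```

In this picture, the checkpoint blob is what the old server would expose for the new server to fetch, which is where an RDMA read could avoid loading the old server.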

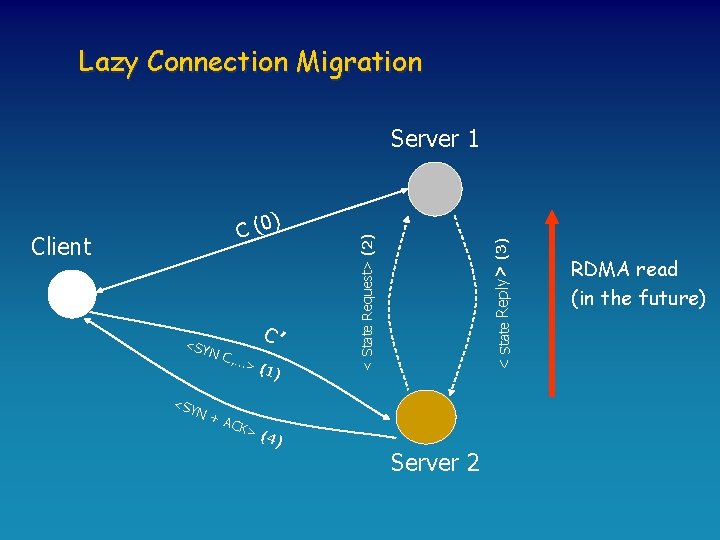

Lazy Connection Migration
[Figure labels: Client, Server 1, Server 2; C (0); <SYN C, …> (1); <State Request> (2); <State Reply> (3); <SYN+ACK> (4); C'; RDMA read (in the future)]

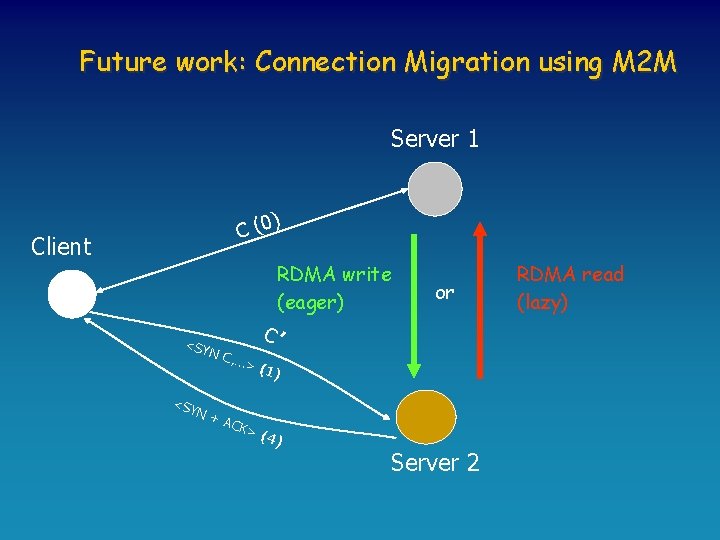

Future work: Connection Migration using M2M
[Figure labels: Client, Server 1, Server 2; C (0); <SYN C, …> (1); <SYN+ACK> (4); C'; RDMA write (eager) or RDMA read (lazy)]

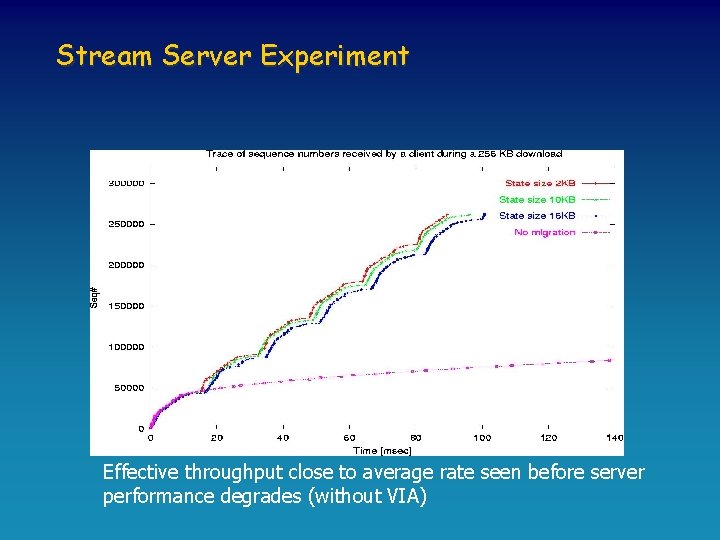

Stream Server Experiment
Effective throughput is close to the average rate seen before server performance degrades (without VIA)


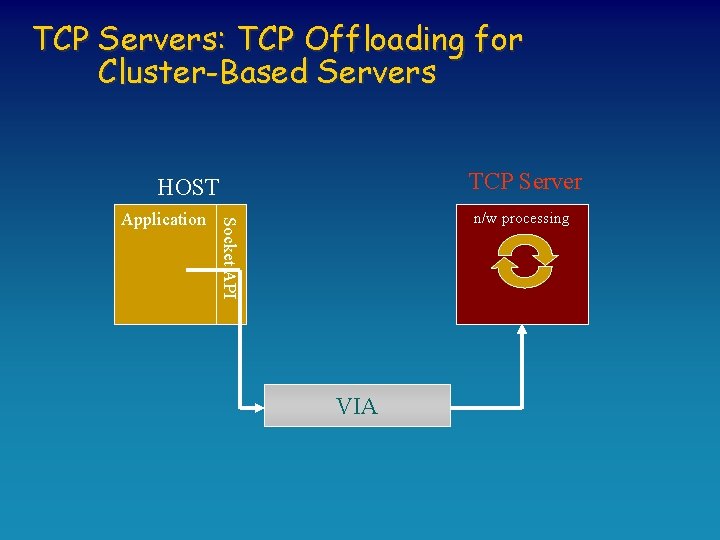

TCP Servers: TCP Offloading for Cluster-Based Servers
[Figure labels: HOST (Application, Socket API); TCP Server (n/w processing); VIA]

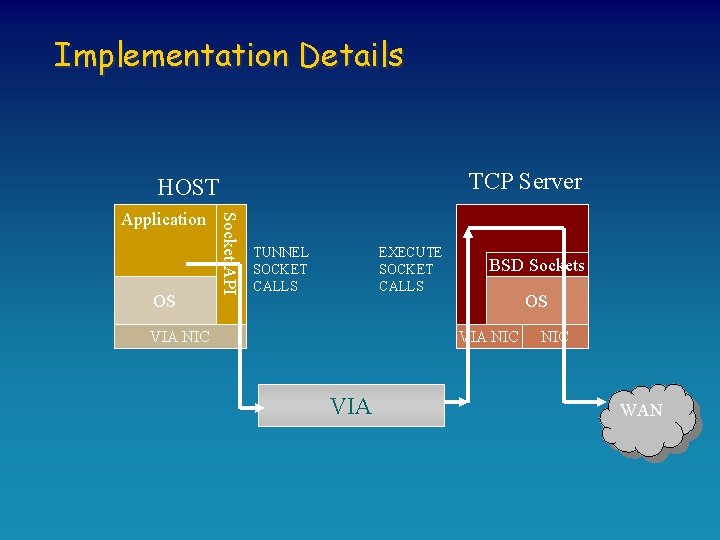

Implementation Details
[Figure labels: HOST: Application, Socket API, OS, VIA NIC, TUNNEL SOCKET CALLS; TCP Server: EXECUTE SOCKET CALLS, BSD Sockets, OS, VIA NIC; WAN]

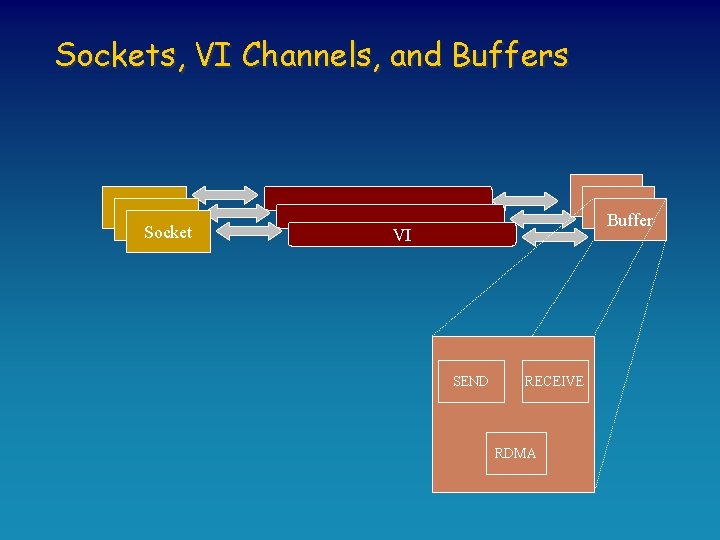

Sockets, VI Channels, and Buffers
[Figure labels: Socket, Buffer, VI, SEND, RECEIVE, RDMA]

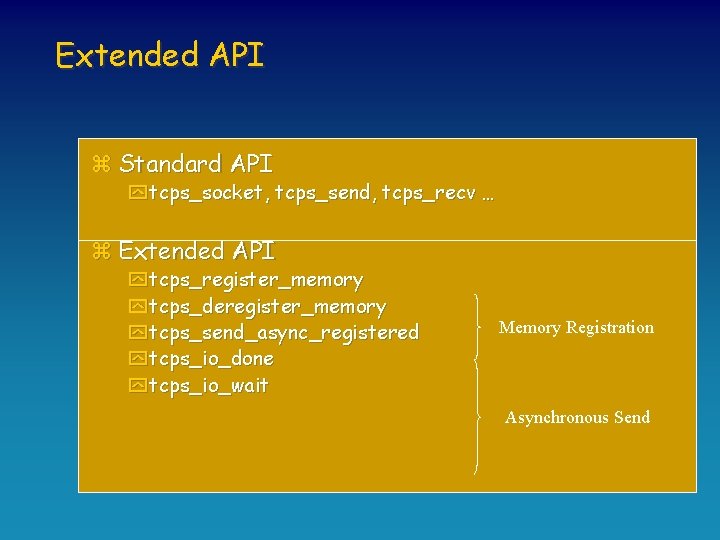

Extended API
- Standard API
  - tcps_socket, tcps_send, tcps_recv, …
- Extended API
  - Memory registration: tcps_register_memory, tcps_deregister_memory
  - Asynchronous send: tcps_send_async_registered, tcps_io_done, tcps_io_wait
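How the extended calls might fit together is sketched below with a mock. Only the function names come from the slide; every signature, argument, and return value here is an assumption, and `TcpsMock` with its `wire` buffer is a hypothetical in-process stand-in for the TCP server node.

```python
from collections import deque


class TcpsMock:
    """In-process stand-in for the host-side library; signatures are assumed."""

    def __init__(self):
        self._regions = {}        # handle -> registered buffer
        self._pending = deque()   # queued sends: (request, handle, offset, length)
        self._done = {}           # request -> bytes sent
        self._next = 1
        self.wire = bytearray()   # stands in for data the TCP server pushed out

    def tcps_register_memory(self, buf):
        handle = self._next
        self._next += 1
        self._regions[handle] = buf
        return handle

    def tcps_deregister_memory(self, handle):
        del self._regions[handle]

    def tcps_send_async_registered(self, handle, offset, length):
        """Queue a send from a registered buffer and return immediately."""
        if handle not in self._regions:
            raise KeyError("buffer is not registered")
        request = self._next
        self._next += 1
        self._pending.append((request, handle, offset, length))
        return request

    def _progress(self):
        """What the offload node would do: complete one queued send."""
        request, handle, offset, length = self._pending.popleft()
        self.wire += self._regions[handle][offset:offset + length]
        self._done[request] = length

    def tcps_io_done(self, request):
        """Non-blocking completion check."""
        return request in self._done

    def tcps_io_wait(self, request):
        """Block (here: drive the mock) until the request completes."""
        while request not in self._done:
            if not self._pending:
                raise KeyError("unknown request")
            self._progress()
        return self._done.pop(request)


tcps = TcpsMock()
reply = bytearray(b"HTTP/1.0 200 OK\r\n")
handle = tcps.tcps_register_memory(reply)          # register once, reuse many times
request = tcps.tcps_send_async_registered(handle, 0, 8)
still_pending = not tcps.tcps_io_done(request)     # host can keep working here
sent = tcps.tcps_io_wait(request)
tcps.tcps_deregister_memory(handle)
```

The design point being illustrated is that registration is paid once up front, while each asynchronous send returns before the data leaves, so the host overlaps request processing with transmission.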

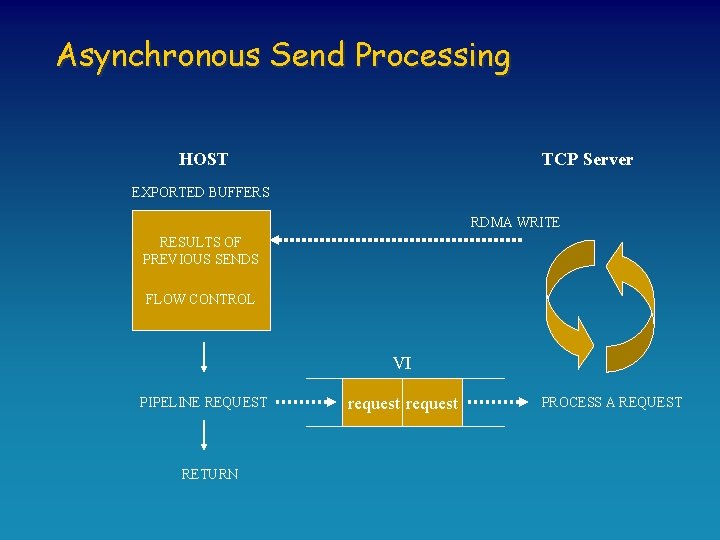

Asynchronous Send Processing
[Figure labels: HOST; TCP Server; EXPORTED BUFFERS; RDMA WRITE RESULTS OF PREVIOUS SENDS; FLOW CONTROL; VI; PIPELINE; REQUEST; RETURN; PROCESS A REQUEST]

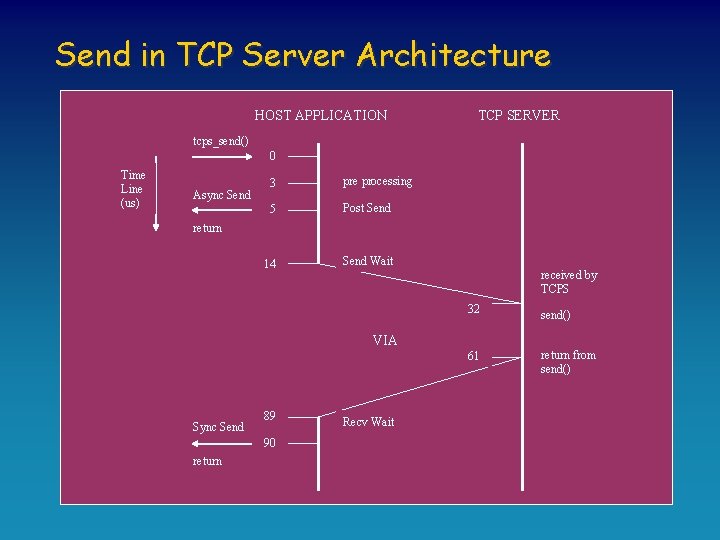

Send in TCP Server Architecture
[Figure: time line (µs) of tcps_send() between HOST APPLICATION and TCP SERVER over VIA, comparing Async Send and Sync Send; labels: pre processing, Post Send, Send Wait, received by TCPS, send(), return from send(), Recv Wait, return; marked times: 0, 3, 5, 14, 32, 61, 89, 90]

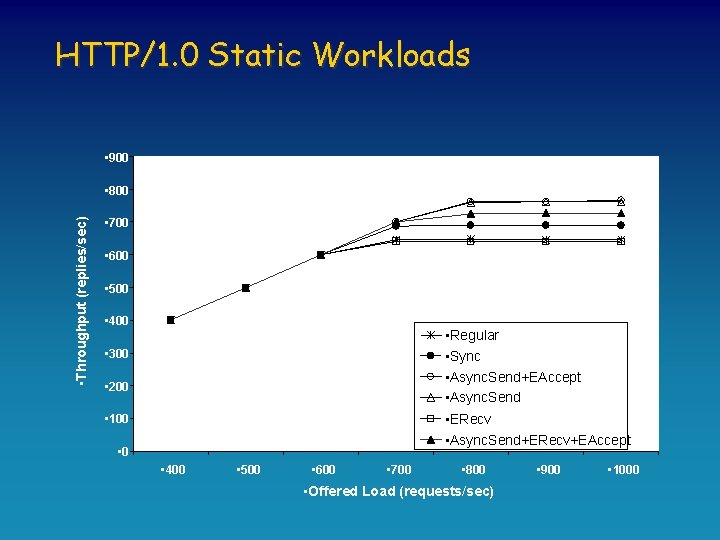

HTTP/1.0 Static Workloads
[Plot: Throughput (replies/sec) vs. Offered Load (requests/sec); series as extracted: Regular, Sync, AsyncSend+EAccept, AsyncSend, ERecv, AsyncSend+ERecv+EAccept]

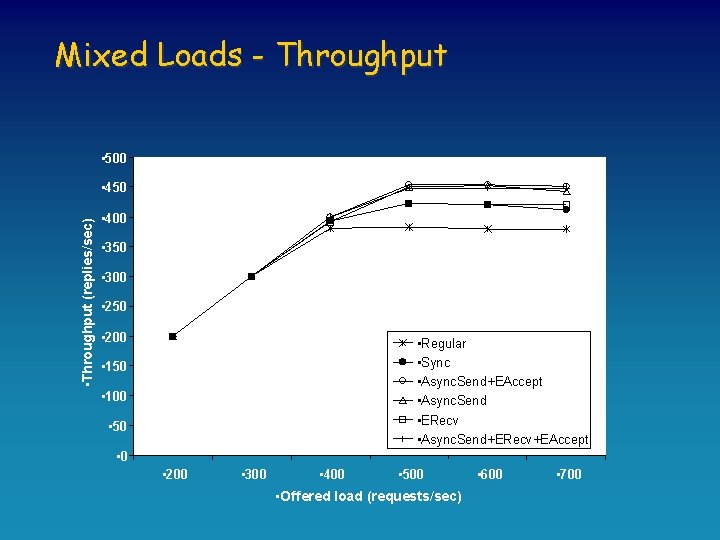

Mixed Loads - Throughput
[Plot: Throughput (replies/sec) vs. Offered load (requests/sec); series as extracted: Regular, Sync, AsyncSend+EAccept, AsyncSend, ERecv, AsyncSend+ERecv+EAccept]

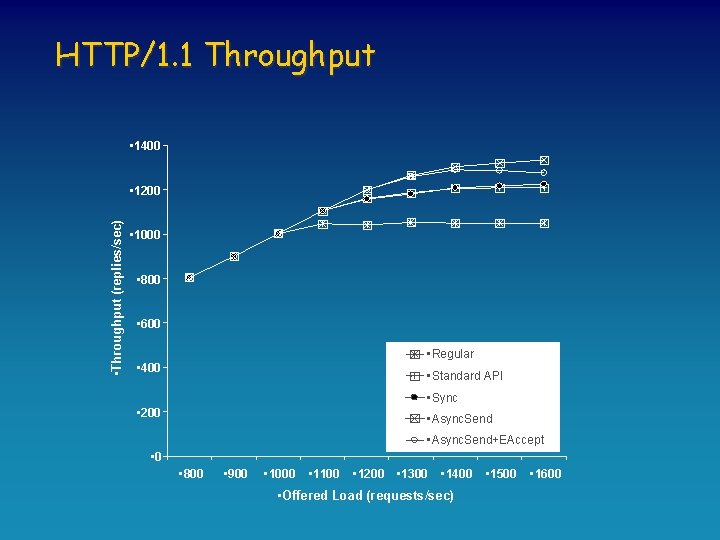

HTTP/1.1 Throughput
[Plot: Throughput (replies/sec) vs. Offered Load (requests/sec); series: Regular, Standard API, Sync, AsyncSend+EAccept]

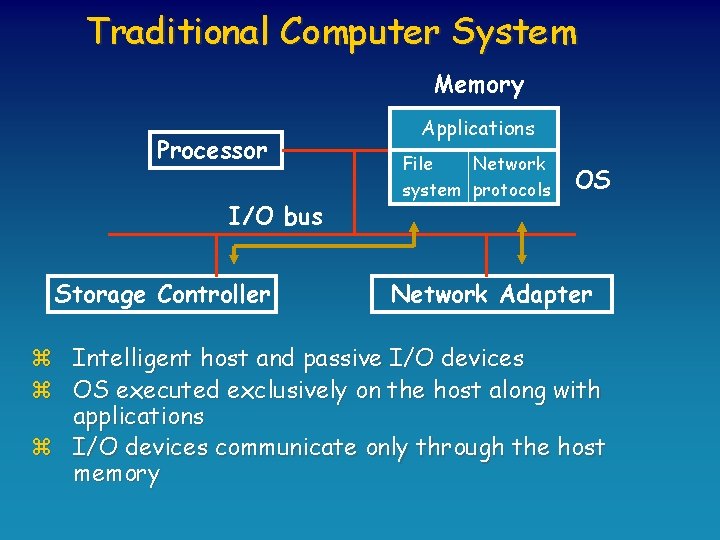

Traditional Computer System
[Figure labels: Processor, Memory, I/O bus, Storage Controller, Network Adapter; host software: Applications, OS, File system, Network protocols]
- Intelligent host and passive I/O devices
- OS executed exclusively on the host along with applications
- I/O devices communicate only through the host memory

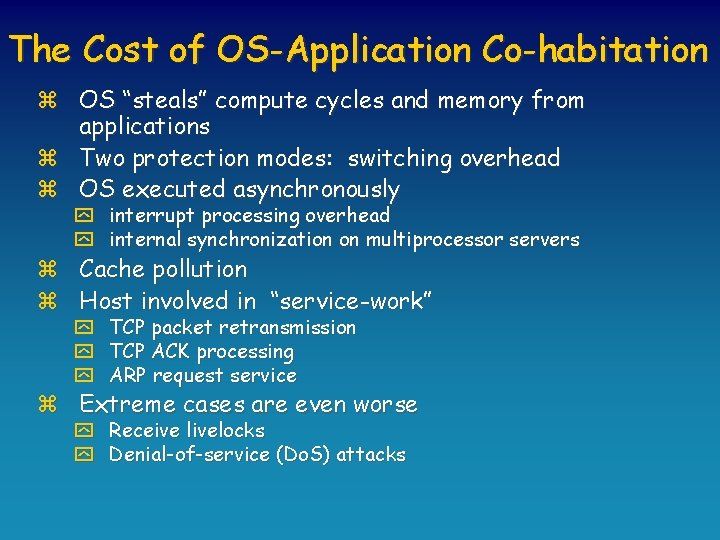

The Cost of OS-Application Co-habitation
- OS "steals" compute cycles and memory from applications
- Two protection modes: switching overhead
- OS executed asynchronously
  - interrupt processing overhead
  - internal synchronization on multiprocessor servers
- Cache pollution
- Host involved in "service-work"
  - TCP packet retransmission
  - TCP ACK processing
  - ARP request service
- Extreme cases are even worse
  - Receive livelocks
  - Denial-of-service (DoS) attacks

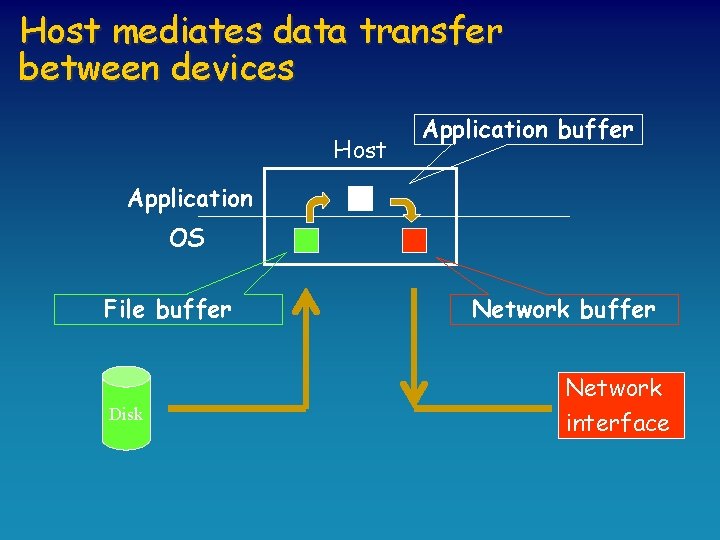

Host mediates data transfer between devices
[Figure labels: Host, Application, Application buffer, OS, File buffer, Network buffer, Disk, Network interface]

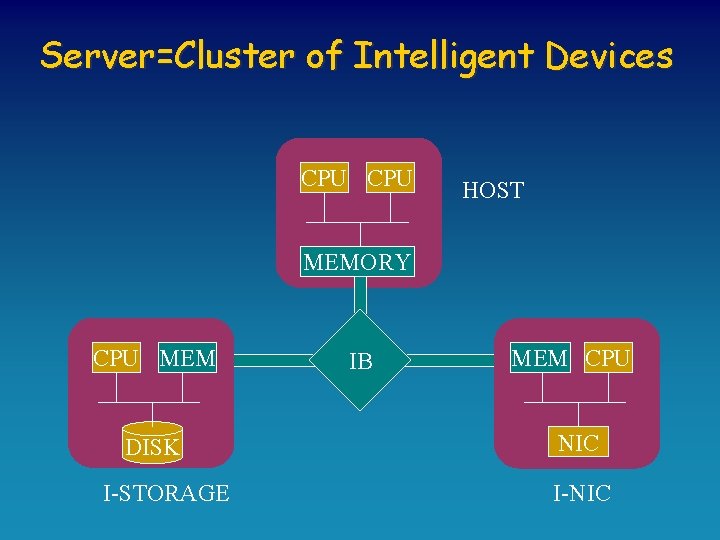

Server = Cluster of Intelligent Devices
[Figure labels: HOST (CPU, MEMORY); I-STORAGE (CPU, MEM, DISK); I-NIC (CPU, MEM, NIC); IB]

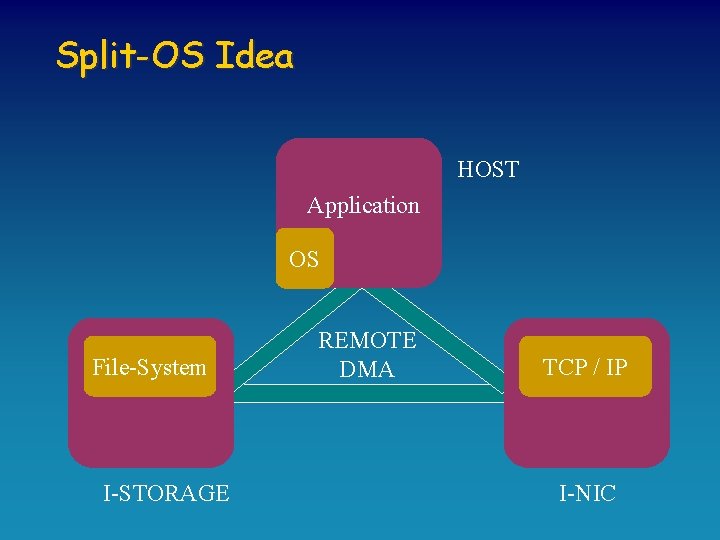

Split-OS Idea
[Figure labels: HOST, Application, OS, File-System, I-STORAGE, REMOTE DMA, TCP/IP, I-NIC]

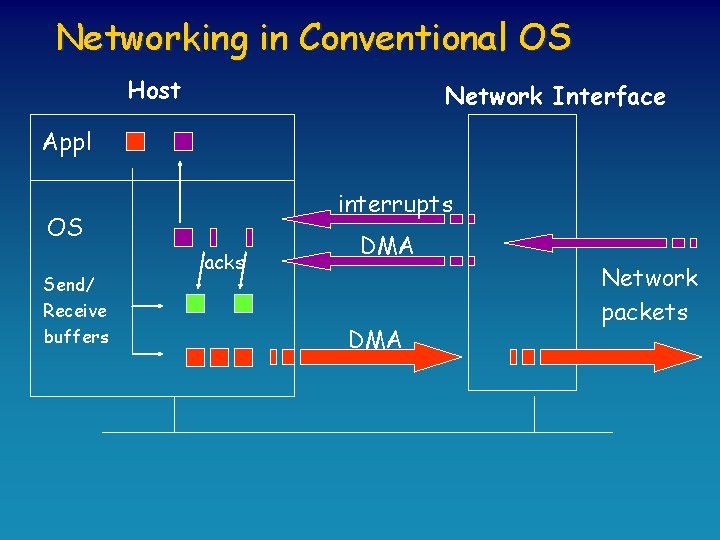

Networking in Conventional OS
[Figure labels: Host, Appl, OS, Send/Receive buffers, Network Interface, interrupts, acks, DMA, Network packets]

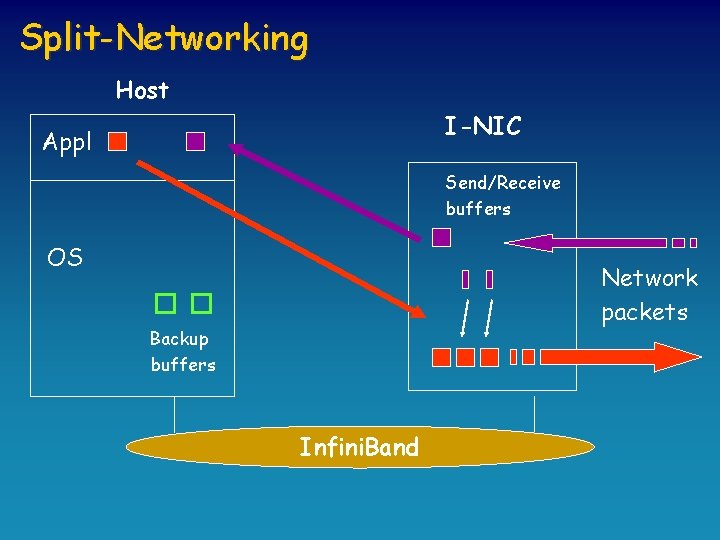

Split-Networking
[Figure labels: Host, Appl, OS, Backup buffers, I-NIC, Send/Receive buffers, Network packets, InfiniBand]

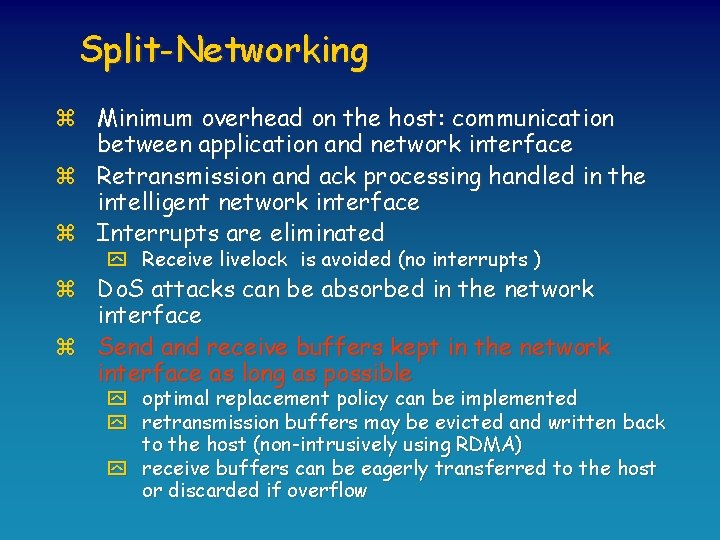

Split-Networking
- Minimum overhead on the host: communication between application and network interface
- Retransmission and ack processing handled in the intelligent network interface
- Interrupts are eliminated
  - Receive livelock is avoided (no interrupts)
- DoS attacks can be absorbed in the network interface
- Send and receive buffers kept in the network interface as long as possible
  - optimal replacement policy can be implemented
  - retransmission buffers may be evicted and written back to the host (non-intrusively using RDMA)
  - receive buffers can be eagerly transferred to the host or discarded on overflow

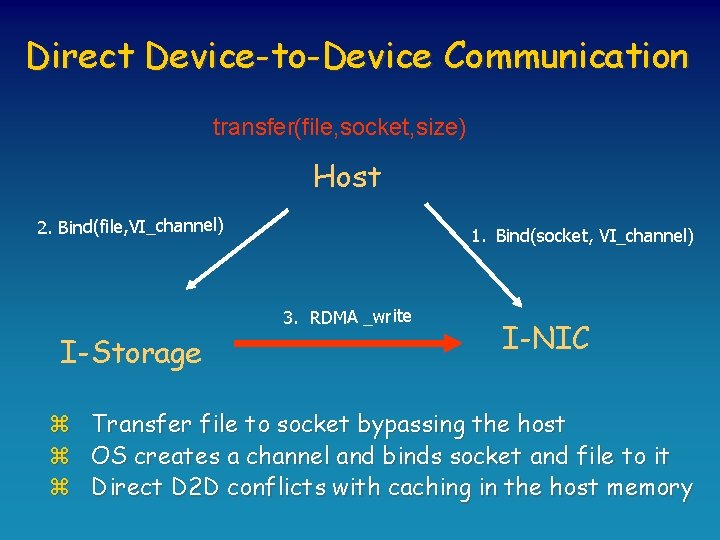

Direct Device-to-Device Communication
transfer(file, socket, size)
1. Bind(socket, VI_channel)
2. Bind(file, VI_channel)
3. RDMA_write
[Figure labels: Host, I-Storage, I-NIC]
- Transfer file to socket bypassing the host
- OS creates a channel and binds socket and file to it
- Direct D2D conflicts with caching in the host memory


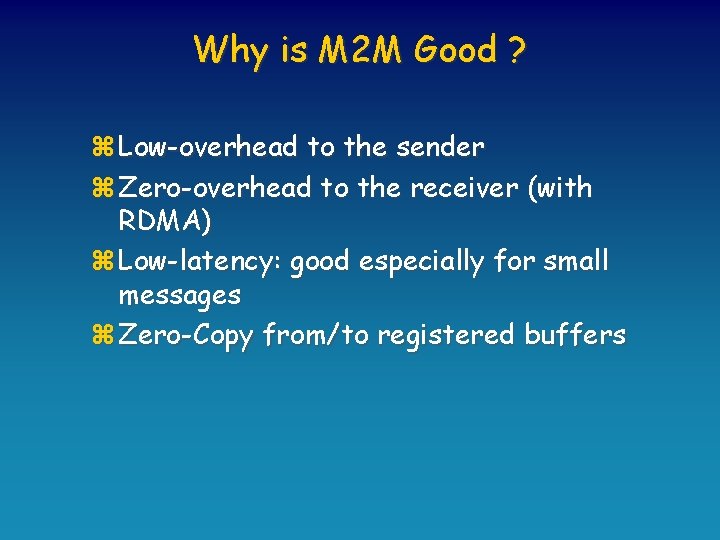

Why is M2M Good?
- Low overhead to the sender
- Zero overhead to the receiver (with RDMA)
- Low latency: good especially for small messages
- Zero-copy from/to registered buffers

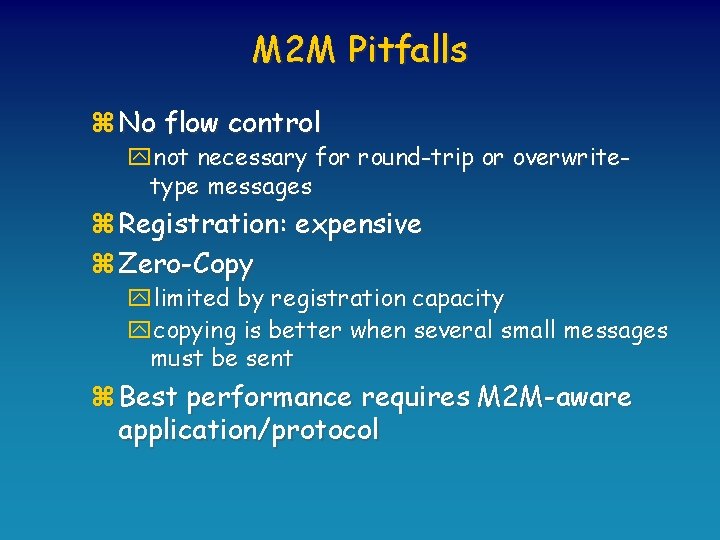

M2M Pitfalls
- No flow control
  - not necessary for round-trip or overwrite-type messages
- Registration: expensive
- Zero-copy
  - limited by registration capacity
  - copying is better when several small messages must be sent
- Best performance requires M2M-aware application/protocol

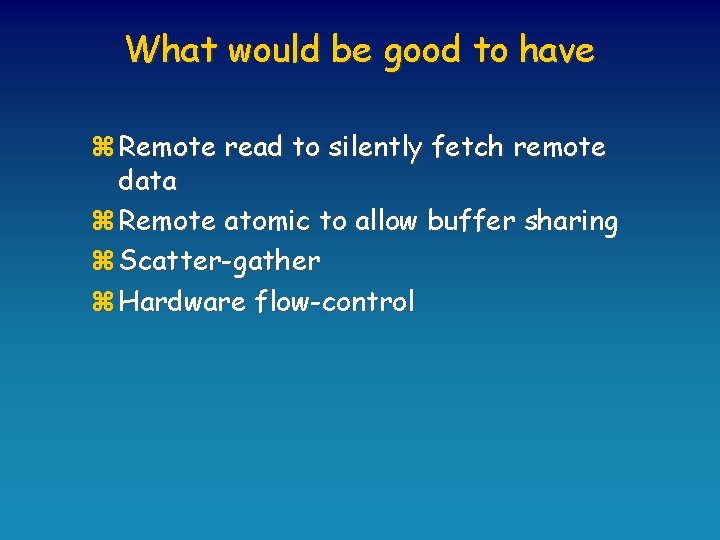

What would be good to have
- Remote read to silently fetch remote data
- Remote atomic to allow buffer sharing
- Scatter-gather
- Hardware flow control

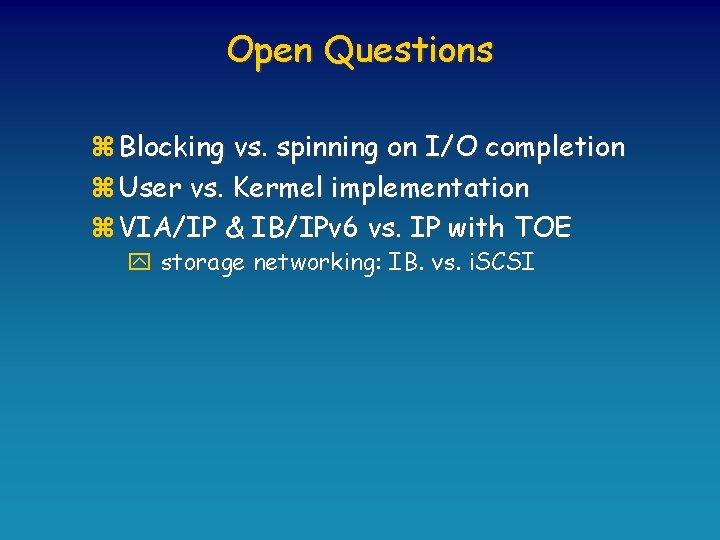

Open Questions
- Blocking vs. spinning on I/O completion
- User vs. kernel implementation
- VIA/IP & IB/IPv6 vs. IP with TOE
  - storage networking: IB vs. iSCSI
